Everything We Know About Intel's Skylake Platform

Intel's Skylake architecture and corresponding platform represent a huge evolution in connectivity, overclocking and, ultimately, system performance. This resource should help answer any questions you have about the company's current desktop PC design.

Memory And Bandwidth

DMI And Bandwidth

Another potential issue is the interface between the CPU and PCH. DMI 3.0 is essentially equivalent to a four-lane PCIe 3.0 link, offering roughly 4 GB/s of bandwidth in each direction. All I/O from your USB-attached thumb drive, SATA-based SSD and gigabit Ethernet network goes through the PCH and across that interface before landing in system memory and eventually the CPU or GPU.
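The "roughly 4 GB/s" figure can be sanity-checked with a little arithmetic. The sketch below assumes DMI 3.0 behaves like four PCIe 3.0 lanes at 8 GT/s with 128b/130b encoding; the function and constant names are illustrative, and packet-level protocol overhead is ignored, so real-world throughput would be somewhat lower.

```python
# Back-of-the-envelope estimate of DMI 3.0 throughput, treating it as a
# four-lane PCIe 3.0 link (the comparison made in the text above).

TRANSFER_RATE_GT_S = 8.0          # PCIe 3.0 signaling rate per lane, in GT/s
ENCODING_EFFICIENCY = 128 / 130   # 128b/130b line encoding
LANES = 4                         # assumed lane count for DMI 3.0


def link_bandwidth_gb_s(lanes: int = LANES) -> float:
    """Return bandwidth per direction in GB/s, before protocol overhead."""
    bits_per_second = TRANSFER_RATE_GT_S * 1e9 * ENCODING_EFFICIENCY * lanes
    return bits_per_second / 8 / 1e9  # bits -> bytes, then bytes -> GB


if __name__ == "__main__":
    print(f"{link_bandwidth_gb_s():.2f} GB/s per direction")
```

Each lane works out to a little under 1 GB/s per direction, which is where the four-lane total of just under 4 GB/s comes from.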

Using several PCH-attached devices at the same time forces them to compete for bandwidth. Intel claims that there shouldn't be as much contention now that the third-gen DMI doubles the previous generation's peak throughput, but it's still a plausible concern. That's one reason you probably wouldn't want to use a multi-GPU configuration on a chipset like H170 that won't divide the CPU's PCIe lanes between multiple graphics cards. It's also one of the reasons why Nvidia doesn't allow SLI across four-lane links.
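To get a feel for when contention might matter, here is a rough sketch that adds up nominal interface rates for devices behind the PCH and compares the total with the DMI budget. The device mix, the USB 3.0 assumption for the thumb drive, the PCH-attached PCIe SSD and all names in the code are hypothetical; the rates are nominal line rates rather than measured throughput.

```python
# Rough contention check for devices sharing the DMI 3.0 link.
# All figures are nominal interface line rates, not measured throughput.

DMI3_BUDGET_GB_S = 3.9  # approx. four PCIe 3.0 lanes, one direction

# Devices of the kind named in the article, at nominal rates (Gb/s -> GB/s).
ARTICLE_DEVICES = {
    "USB 3.0 thumb drive (assumed USB 3.0)": 5 / 8,
    "SATA 6Gb/s SSD": 6 / 8,
    "Gigabit Ethernet": 1 / 8,
}

# Hypothetical addition: an x4 PCIe 3.0 SSD hanging off the PCH.
WITH_PCH_SSD = {**ARTICLE_DEVICES, "PCIe 3.0 x4 SSD (assumed)": 3.9}


def oversubscription(devices: dict[str, float],
                     budget: float = DMI3_BUDGET_GB_S) -> float:
    """Return combined peak demand divided by the link budget.

    A value above 1.0 means the devices could, in principle, ask for
    more bandwidth than the link can carry in one direction.
    """
    return sum(devices.values()) / budget


if __name__ == "__main__":
    for label, devices in (("article devices", ARTICLE_DEVICES),
                           ("plus PCH-attached SSD", WITH_PCH_SSD)):
        print(f"{label}: {oversubscription(devices):.2f}x of DMI budget")
```

Under these assumptions the everyday devices stay well inside the budget, and it takes something like a fast PCIe SSD or a graphics card behind the PCH to push demand past what the link can carry.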

Memory Support

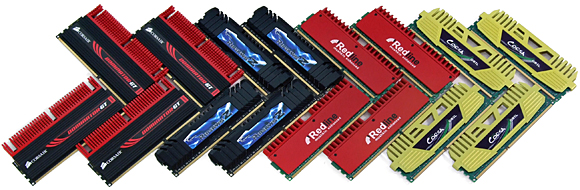

Starting with Skylake, Intel added DDR4 support to its memory controller. But because the technology is still fairly new, the company retained support for DDR3 as well, easing adoption of its most modern platform.

Don't take that to mean any DDR3 module will work with Skylake, though. Only DDR3 operating at or below 1.35V is officially supported, and using DDR3 at higher voltage levels could damage the CPU's integrated memory controller.

Several board vendors list support for RAM operating at higher voltage levels, and you may not run into problems using RAM rated for 1.5 or 1.65V, but Intel doesn't recommend it. Much of the damage materializes slowly over time due to electromigration. As such, you'll want to carefully weigh the risks of dropping older modules into Skylake-based systems, provided you have a motherboard with DDR3 slots at all.

-

Captainawzome

Thanks! Sadly, this page does not explain the nuances of other factors such as overclocking potential, and the probability of getting a Skylake CPU that overclocks to 4.6, 4.8, etc.

Very informational though! :)

-

logainofhades

I suspect that if Zen is in any way successful, Intel will back off a bit on the non-Z overclock stance. If they price a chip that is competitive, say at least on the same single-threaded performance level as Haswell, with a locked i3 or i5, AMD will get a much-needed boost in sales. I honestly hope something like this happens. One side dominating completely is bad for consumers.

-

IInuyasha74

Well you see, it is hard to put a number on that which would hold up reliably. Overclocking chips could land just about anywhere, and without testing dozens of samples we couldn't come up with an average overclock that Skylake seems to be able to hit that would hold up well enough.

-

kunstderfugue

Hopefully the competition later this year makes Intel reconsider the way they're treating their consumers.

-

logainofhades

Yea, I have not been very happy with Intel since Skylake released. The Xeon chipset part, in particular, irked me. The whole launch has been a disaster of confusion. Glad this article was made to clear some things up.

-

TJ Hooker

ASUS, MSI, and Gigabyte all ventured into non-K overclocking on their Z170 boards as well. The BIOSes that enabled it may have been labelled as betas, and I'm not sure if they're available through official channels anymore. But if Biostar gets a mention for releasing and then retracting non-K OC, I don't know why these other manufacturers aren't brought up.

Another drawback of non-K BCLK OC is that CPU core temperature can no longer be read.

Lastly, another potential topic to add is the subject of DDR4 at speeds greater than 2133 MHz. I've seen many forum questions about which CPUs and motherboards support running 2400+ MHz DDR4. I'm under the impression that you need a Z170 mobo (I could be wrong). I've also seen people say you need an unlocked CPU (from personal experience I know this is wrong). It could be handy to add a section to clear this up.

-

josejones

I am far more interested in articles about the soon-to-come Z270 motherboards.

200-Series Union Point Motherboards

http://www.tomshardware.com/forum/id-2983311/200-series-union-point-motherboards.html

-

Jaran Gaarder Heggen

Interesting article, but can you please also add the chipsets for dual-CPU Xeons in the Workstation area?

-

hixbot

You will be lucky to see Kaby Lake mobile before the end of 2016. It will be mid 2017 at the earliest before consumers can get their hands on desktop motherboards with Union Point.

Very hard to expect a detailed breakdown of that platform at this time.