ARM Mali-C71 ISP Brings Ultra-Wide Dynamic Range To Advanced Driver Assistance Systems

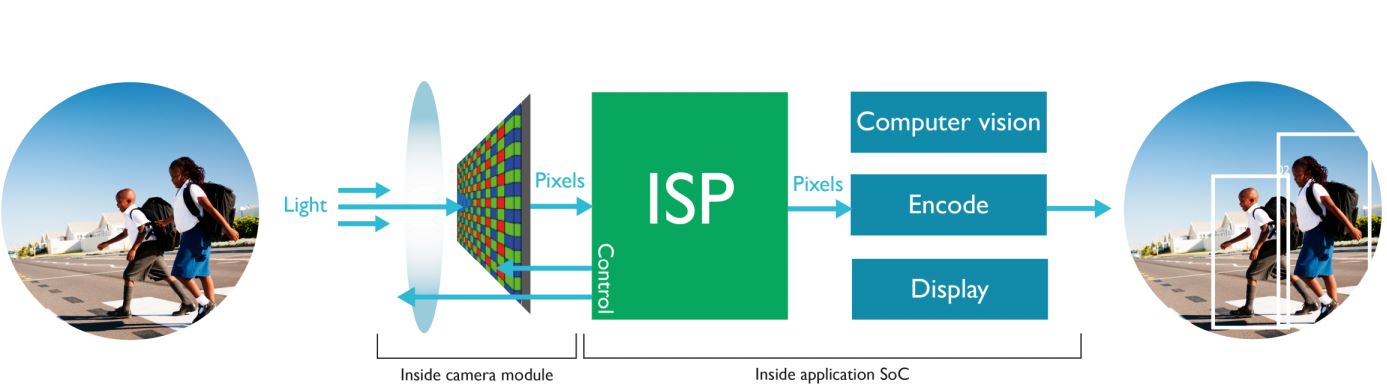

ARM announced the first Image Signal Processor (ISP) for the automotive market with Ultra Wide Dynamic Range (Ultra WDR) capabilities, allowing autonomous driving systems to work in even the most difficult daylight lighting conditions.

ARM To Focus On Computer Vision

Last year, ARM purchased Apical, a company that licensed imaging and embedded computer vision intellectual property (IP). ARM seems to have realized that computer vision will be an important part of the future of embedded chips, considering how many types of products could benefit from it: self-driving cars, drones, robots, surveillance cameras, and any other product that has a camera and needs to analyze what it captures.

"Computer vision is in the early stages of development and the world of devices powered by this exciting technology can only grow from here," said Simon Segars, ARM’s CEO, when the company acquired Apical. "Apical is at the forefront of embedded computer vision technology, building on its leadership in imaging products that already enable intelligent devices to deliver amazing new user experiences. The ARM partnership is solving the technical challenges of next generation products such as driverless cars and sophisticated security systems. These solutions rely on the creation of dedicated image computing solutions and Apical's technologies will play a crucial role in their delivery," he added.

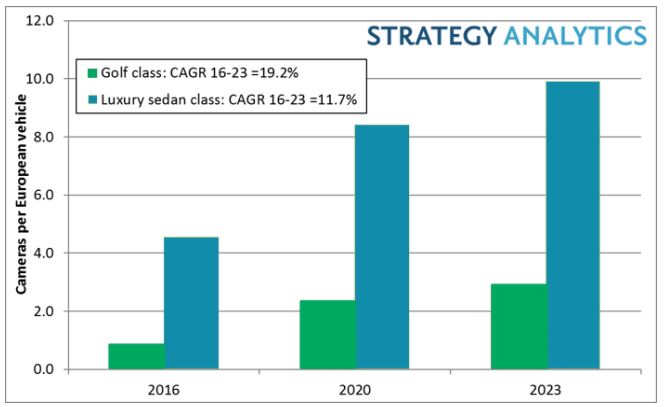

ARM wants to focus on the self-driving car market in particular. According to the market research firm Strategy Analytics, mid-range cars such as the Volkswagen Golf are expected to have at least three cameras by 2023, and luxury cars should be approaching ten.

Not all cameras will be external; some will be internal in order to provide features such as detecting whether the driver has fallen asleep while the car is in cruise control. This could benefit cars that haven’t yet reached Level 4 autonomy or higher and therefore still require a human to stay alert in case something goes wrong.

Cameras on a car could also offer better ways to detect pedestrians or provide night vision for the driver, thus enhancing regular cars that don’t yet have self-driving systems.

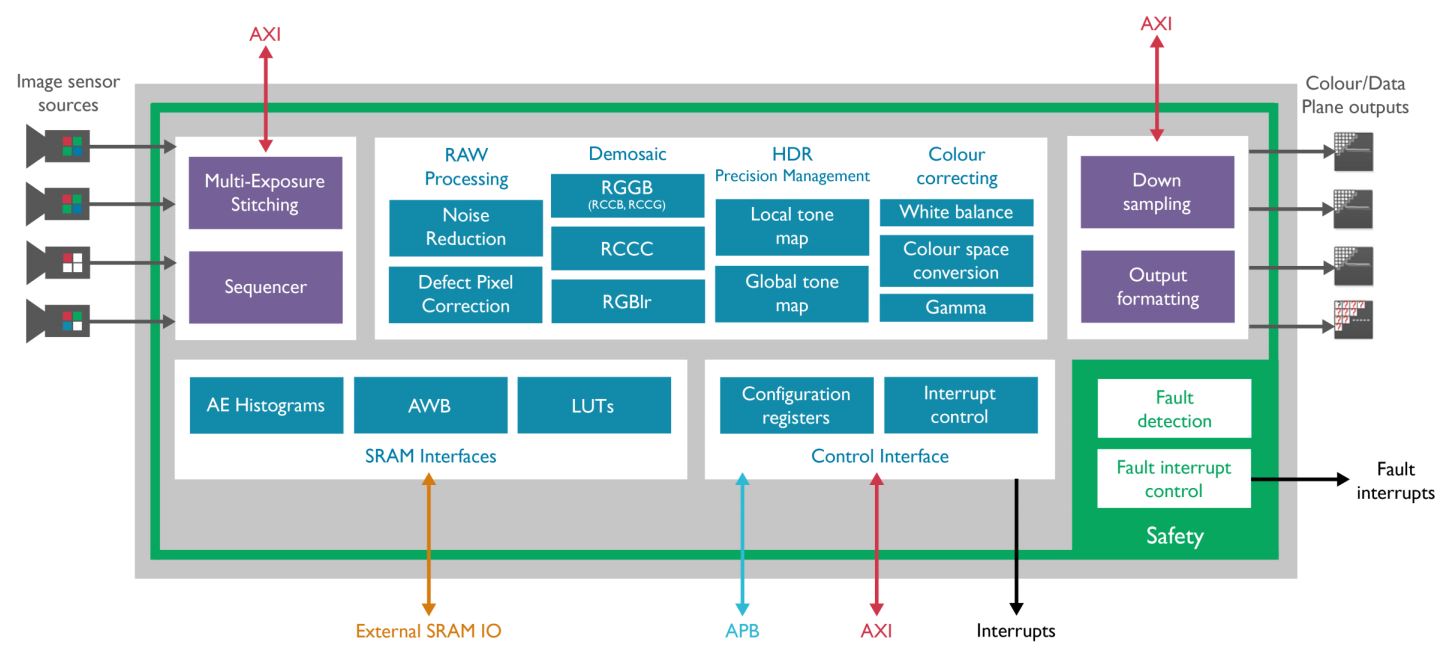

Mali-C71 Ultra Wide Dynamic Range ISP

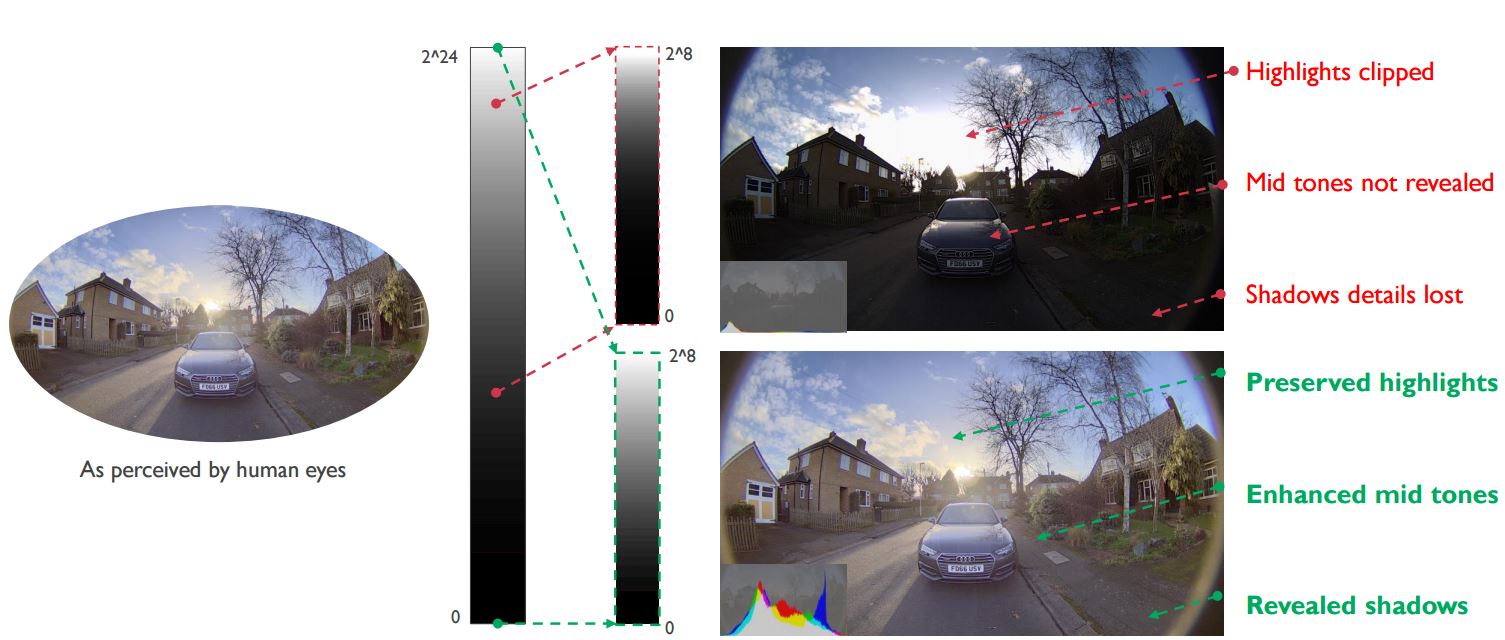

The first product to come from the Apical acquisition is the Mali-C71, a high-performance ISP that’s capable of 24 stops of dynamic range. In photography, a stop is a doubling or halving of the amount of light. The more stops an imaging system can handle, the wider the gap between the darkest and brightest parts of a scene it can record in a single image without crushing shadows to black or blowing highlights out to white. That means an advanced driver assistance system (ADAS), for instance, can more easily distinguish what’s in the shadows on a bright day.
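To put 24 stops in perspective, here is a small arithmetic sketch. Because each stop is a doubling, a range of n stops corresponds to a brightest-to-darkest ratio of 2^n:1. The decibel figure uses the 20·log10 convention commonly applied to image sensor dynamic range. The function names are illustrative only and are not part of any ARM software.

```python
import math

def contrast_ratio(stops: float) -> float:
    """Each stop doubles the light, so n stops span a 2**n : 1 ratio."""
    return 2.0 ** stops

def stops_to_db(stops: float) -> float:
    """Express the same range in decibels (20*log10 convention used for image sensors)."""
    return 20.0 * math.log10(contrast_ratio(stops))

for stops in (12, 24):
    print(f"{stops} stops -> {contrast_ratio(stops):,.0f}:1 (~{stops_to_db(stops):.1f} dB)")
```

So 24 stops corresponds to a contrast ratio of roughly 16.8 million to one, or about 144.5 dB; the 12-stop row is included only for comparison.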


The Mali-C71 can also reduce exposure to make it easier to see objects that are flooded with too much light, as seen in the example below.

ARM’s ISP can drive a display for human viewers and feed a computer vision system simultaneously. This means humans (that's you!) can watch what the cameras see while the computer vision system analyzes every pixel in the background.
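ARM has not detailed how the Mali-C71 produces its two outputs. As a generic illustration only, the sketch below shows why a display path and a computer vision path can want different versions of the same frame: a screen needs the 24-stop range squeezed into 8 bits, while a vision algorithm can keep the full-precision linear data. It assumes integer linear sensor values from 0 to 2^24 - 1 and uses a simple global logarithmic tone curve; real ISPs use far more sophisticated (often local) tone mapping, and every name here is hypothetical.

```python
import math

# Assumption: linear sensor values cover 24 stops, i.e. integers 0 .. 2**24 - 1.
BITS_IN = 24
MAX_IN = (1 << BITS_IN) - 1

def tone_map_log(value: int) -> int:
    """Compress a 24-bit linear value to 8 bits with a global logarithmic curve.

    log2(1 + value) runs from 0 to exactly 24, so each stop gets roughly
    255 / 24 (about 10.6) display levels.
    """
    return round(255 * math.log2(1 + value) / BITS_IN)

def linear_to_8bit(value: int) -> int:
    """Naive linear scaling, shown for comparison: shadows collapse to zero."""
    return round(255 * value / MAX_IN)

def process_frame(frame: list[int]) -> tuple[list[int], list[int]]:
    """Return (display_output, cv_output) for one frame.

    The display path is tone-mapped for a human viewer; the computer vision
    path keeps the full-precision linear values untouched.
    """
    display = [tone_map_log(v) for v in frame]
    cv = list(frame)
    return display, cv

# A dim shadow, a value 12 stops below saturation, and a saturated highlight.
frame = [2**6, 2**12, MAX_IN]
display, cv = process_frame(frame)
print("display:", display)
print("linear 8-bit:", [linear_to_8bit(v) for v in frame])
print("cv keeps:", cv)
```

With naive linear scaling, both darker pixels round to 0 on screen, while the logarithmic curve keeps them distinguishable; the vision path never loses them either way.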

The ISP has a processing performance of 1.2 Gigapixels per second and can support up to four different cameras at the same time, each with up to 4K resolution. Manufacturers that want to put more than four cameras on a car can opt for multiple ISPs.
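As a rough back-of-the-envelope check, the sketch below divides the quoted 1.2-gigapixel-per-second budget among the cameras. It assumes "4K" means 3840 x 2160 (UHD) and that throughput is split evenly with no overhead; ARM has not said how the budget is actually allocated, and the function names are hypothetical. It also computes how many ISPs a ten-camera luxury car, per the Strategy Analytics estimate, would need at four cameras per ISP.

```python
# Assumptions (not from ARM): "4K" means 3840 x 2160 (UHD), and the 1.2 Gpixel/s
# budget is shared evenly across all active cameras with no processing overhead.
ISP_THROUGHPUT_PX_PER_S = 1.2e9
UHD_PIXELS_PER_FRAME = 3840 * 2160  # 8,294,400 pixels

def fps_per_camera(num_cameras: int, pixels_per_frame: int = UHD_PIXELS_PER_FRAME) -> float:
    """Upper-bound frame rate each camera could get if throughput is split evenly."""
    if num_cameras < 1:
        raise ValueError("need at least one camera")
    return ISP_THROUGHPUT_PX_PER_S / (num_cameras * pixels_per_frame)

def isps_needed(num_cameras: int, cameras_per_isp: int = 4) -> int:
    """Ceiling division: each ISP handles at most four cameras."""
    return -(-num_cameras // cameras_per_isp)

for n in (1, 4):
    print(f"{n} camera(s): ~{fps_per_camera(n):.0f} fps each at 3840x2160")
print("ISPs for 10 cameras:", isps_needed(10))
```

Under these assumptions, four 4K cameras would share the budget at roughly 36 frames per second each, while a single camera could in principle run at about 145 fps.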

ARM said that the Mali-C71 ISP has been designed with the highest levels of safety in mind, in accordance with Automotive Safety Integrity Level D (ASIL D), the most stringent level defined by the ISO 26262 functional safety standard. ASIL D applies to components whose failure carries the highest risk of fatal injury, which is why it demands the highest level of assurance.

According to the company, the ISP has 300 fault detection circuits, a built-in continuous self-test, Cyclic Redundancy Checking (CRC) on data paths, and every pixel is tagged for reliability. The software for the ISP is also being developed with ASIL compliance in mind.
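ARM has not published which CRC polynomial or block size the Mali-C71 uses on its data paths. The sketch below only illustrates the general idea, assuming a CRC is attached to each line of pixel data: the sender computes a checksum, the receiver recomputes it, and any mismatch flags corrupted data. It uses the standard CRC-32 from Python's `zlib` module as a stand-in, and the function names are hypothetical.

```python
import zlib

def send_line(pixels: bytes) -> tuple[bytes, int]:
    """Attach a CRC-32 to a line of pixel data before it crosses a data path."""
    return pixels, zlib.crc32(pixels)

def check_line(pixels: bytes, expected_crc: int) -> bool:
    """Recompute the CRC at the receiving end; a mismatch flags corruption."""
    return zlib.crc32(pixels) == expected_crc

line, crc = send_line(bytes(range(256)))
print(check_line(line, crc))              # True: data arrived intact

corrupted = bytearray(line)
corrupted[100] ^= 0x01                    # flip a single bit, as a hardware fault might
print(check_line(bytes(corrupted), crc))  # False: the fault is detected
```

CRC-32 is guaranteed to catch any single-bit error, which makes it a cheap way to detect the kind of transient faults a safety-rated design has to guard against.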

Competition

ARM will be entering a market where the competition is already quite strong. Nvidia has made significant progress in the automotive market over the past few years, and Intel recently acquired Mobileye, the company that used to make Tesla’s "Autopilot" systems.

The market is still young, however, and ARM will likely become an important player by licensing its IP to other chip makers, gaining market share the same way it has in smartphones, networking equipment, and other embedded chip markets.

Lucian Armasu is a Contributing Writer for Tom's Hardware US. He covers software news and the issues surrounding privacy and security.

-

bit_user

19607220 said: All this nanny tech simply because people don't take driving seriously enough.

Yes and no, but the thing I hate about it is that it'll eventually force everyone to forego manual driving. On highways, at least.

With all the money being spent on self-driving cars, we could probably have a pretty awesome high-speed rail network.

bit_user WDR is a given for such applications. However, 24-bit is what's really impressive about this.

I wonder what their effective dynamic range is at highway speeds.

rantoc And in later news, human average IQ is on a steep decline due to tech who makes thinking superfluous!

turbotong Does that night vision picture look photoshopped to anyone? I don't think IR shadows and lighting works the same way as lighting from a streetlamp.

Wisecracker The big deal in this (as I understand it) is in the improvement of "white balance" in the dynamic range --- this was the problem in the fatal Tesla crash last year.

White cars/trucks, white lines, bright/white skies, etc., need extra attention as to prevent conflicting sensors and interpretation.

And, el-oh-el @ the whining. I'll take my chances with a self-driving car long before I trust a distracted knuckle-head behind the wheel of a 2-ton vehicle traveling 70+ MPH ...


blitzkrieg316 Self driving cars will go the way of the dodo once your car bluescreens through a school zone...

nzalog

19607578 said: 19607220 said: All this nanny tech simply because people don't take driving seriously enough.

Yes and no, but the thing I hate about it is that it'll eventually force everyone to forego manual driving. On highways, at least.

With all the money being spent on self-driving cars, we could probably have a pretty awesome high-speed rail network.

Yeah, it's the direction things are headed, and it kind of sucks because I enjoy driving (or at least used to). There are so many oblivious people on the road nowadays that it's kind of hard to enjoy. I do hope that manual driving doesn't become completely outlawed; instead, I hope they just make the test to drive manually much more difficult.

I can imagine getting pulled over: "If you want to drive manually, take it to the track!"

bit_user

19608689 said: And in later news, human average IQ is on a steep decline due to tech who makes thinking superfluous!

It seems that way, but I'm pretty sure thinking has always been optional.

Granting the ability to do more, with less effort or knowledge, is an intrinsic aspect of technology. To me, the really interesting question is whether access to technology should be in any way limited or restricted. Certainly, we accept the need to do this for nuclear technology, but what about certain types of information technologies?

bit_user

19609789 said: Does that night vision picture look photoshopped to anyone? I don't think IR shadows and lighting works the same way as lighting from a streetlamp.

It's obviously a false-color image.

If we were talking about far-IR (AKA thermal imaging), then you're correct that shadows take as long to form as it takes for the surface to cool a measurable amount. But I think cost likely prohibits the use of thermal imaging in automotive applications. And since cars can use active illumination, there's not so much benefit.