Crash Tested: Nvidia Improves RTX 30-Series Stability With New Driver

We tested several RTX 3080 and 3090 cards with the latest drivers, showing before and after performance, clocks, and power.

Nvidia’s launches of the GeForce RTX 3080 and GeForce RTX 3090 have come with more than a few problems, no doubt about it. Our initial look at performance showed great promise, but supply of the GPUs has been limited at best. Compound that with bots scooping up many of the initial sales and things were already dicey. Then more people started getting their hands on cards and the problems escalated, with an increasing number of people reporting persistent crashing issues.

Was the launch rushed? Yes, undoubtedly. There wasn't enough supply, for one, but in an attempt to keep leaks from occurring, it seems the AIB partners also got the drivers later than normal — one rumor says AIBs got drivers at the same time as reviewers, just one or two weeks before launch. That mostly stopped the leaks, but it also meant the AIB cards were rushed, which limited their testing. Now there are concerns about the choice of different capacitors (SP-Caps vs. MLCC) causing instability, and some AIBs have implied as much.

The very short summary is that Nvidia provides multiple capacitor options to its partners, all of which it has validated. SP-Caps are Conductive Polymer Tantalum Solid Capacitors, or Conductive Polymer Aluminum Electrolytic Capacitors — often incorrectly lumped under the specific brand of POSCAPs, though it's not particularly important. These are one option that tends to be less expensive and more tolerant of higher temperatures, while not doing as well with higher clock speeds. The other option is MLCC, Multilayer Ceramic Chip capacitors, where typically ten smaller capacitors are used in parallel in place of a single larger capacitor. MLCC is generally more expensive overall, and better for higher clocks but less tolerant of heat. However, this is greatly simplified — you can watch this Buildzoid video for substantially more information on the topic of capacitors, or alternatively here's another take from Reddit on the subject of capacitors.

Moving on, Nvidia says trying to blame instability on capacitors alone is off target. Graphics cards are incredibly complex, and Nvidia is still working to improve the overall experience of using the new Ampere GPUs. More specifically, the Nvidia 456.55 drivers are supposed to help address the instability problems. Could new drivers be enough, or is this a stopgap solution while the AIBs look to tweak their card designs?

We've had several AIB cards in for review, and at least two of the cards had stability problems that caused us to reach out to the manufacturers. The 3080 Founders Edition and 3090 Founders Edition performed fine in our initial testing, but those are also running the lowest clock speeds of the bunch. They're also using full MLCC solutions. Meanwhile, the AIB cards come with factory overclocks and often use at least a few SP-Caps instead of pure MLCC solutions. All of the AIB cards that showed instability also tended to run fine with a modest drop in clock speeds. Could the new drivers just be tuning clocks and performance down a bit to improve stability, or is there more to it? That's what we wanted to figure out.

First let's introduce the cards we're testing. We've got the RTX 3080 Founders Edition and Asus RTX 3080 TUF Gaming OC, both of which seemed to run fine even during our initial testing. However, we couldn't test every game, let alone play each game for a long stretch, so it's possible we just got 'lucky.' Next, we have the RTX 3090 Founders Edition and Gigabyte’s RTX 3090 Eagle. We haven't posted our full review of the Gigabyte yet, due to — you guessed it — instabilities encountered during our initial testing.

Let's also quickly pause to talk about the blame game. Who's responsible for the AIB cards showing increased instability compared to Nvidia's Founders Edition cards? Did they choose the wrong capacitor solutions? Since the capacitor options are based on Nvidia's documentation, we'd say no. These designs were effectively sanctioned by Nvidia before the AIBs manufactured them. However, testing cards at reference clocks of around 1700-1850 MHz isn't the same as factory overclocking the cards to levels that at times reach beyond 2000 MHz.


Now couple that with various manufacturers trying to bin their GPUs in a short time to put the best chips in the best cards and you have a recipe for… well, it sounds like a recipe for the RTX 30-series launch. Stock-clocked cards generally do fine, but factory overclocked cards might be pushing things too hard, particularly, it seems, on cards that eschew MLCC blocks in favor of generally cheaper SP-Caps. The constant game of one-upmanship waged among the AIBs has caused casualties before, and likely will again. It's like hotrodders trying to squeeze every ounce of performance out of their cars, occasionally going too far.

Again, however, we come to the real question: Can the drivers make a difference? The answer is yes. On a simple level, Nvidia could use new drivers to tune down the boost clocks. If the cards only encounter stability issues at speeds above 2000 MHz, that might be enough. Or the drivers might tweak how quickly boost clocks ramp up and down. It's possible that rapid changes in clock speed are to blame, and Nvidia could for example have the GPU clocks scale up/down over 100ms instead of 10ms to improve stability. We're not saying that's what's happening — it could be something else. There's a lot that can be done with drivers, and so far Nvidia isn't revealing any specific details.
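To make the ramp-rate idea concrete, here is a minimal sketch of a linear clock ramp. It is purely illustrative: the function names, the 1 ms step, and the 1700 to 2000 MHz range are assumptions for the example, and nothing here reflects how Nvidia's boost algorithm actually works.

```python
def ramp_clock(start_mhz, target_mhz, ramp_ms, step_ms=1.0):
    """Return the clock (MHz) at each time step of a linear ramp.

    Hypothetical model: the clock moves from start to target in equal
    increments spread over ramp_ms milliseconds.
    """
    steps = max(1, round(ramp_ms / step_ms))
    delta = (target_mhz - start_mhz) / steps
    return [start_mhz + delta * i for i in range(steps + 1)]


def max_step_change(samples):
    """Largest clock change (MHz) between two consecutive samples."""
    return max(abs(b - a) for a, b in zip(samples, samples[1:]))


# Same 300 MHz swing, spread over 10 ms versus 100 ms.
fast = ramp_clock(1700, 2000, ramp_ms=10)
slow = ramp_clock(1700, 2000, ramp_ms=100)

print(max_step_change(fast))  # 30.0 MHz per 1 ms step
print(max_step_change(slow))  # 3.0 MHz per 1 ms step
```

The slower ramp reaches the same final clock but changes ten times more gently per step, which is the kind of behavior a driver-side tweak could in principle adjust.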

Whatever the approach, changing the boost clock algorithm or other aspects of the drivers might also affect the performance on cards that weren't having stability problems. We've got four cards that we tested with earlier drivers, and we're going to run the same tests with the 456.55 drivers to see what if anything has changed.

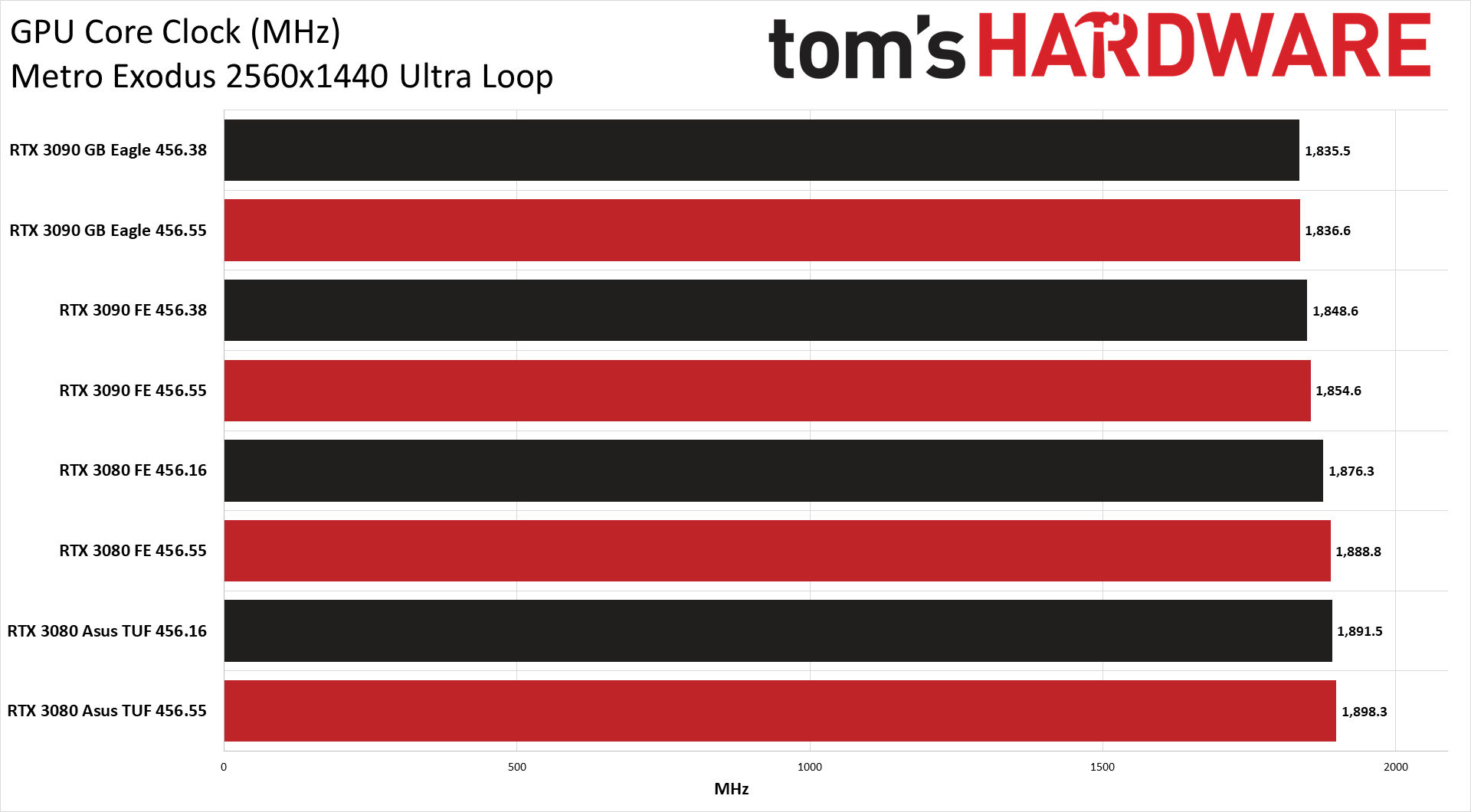

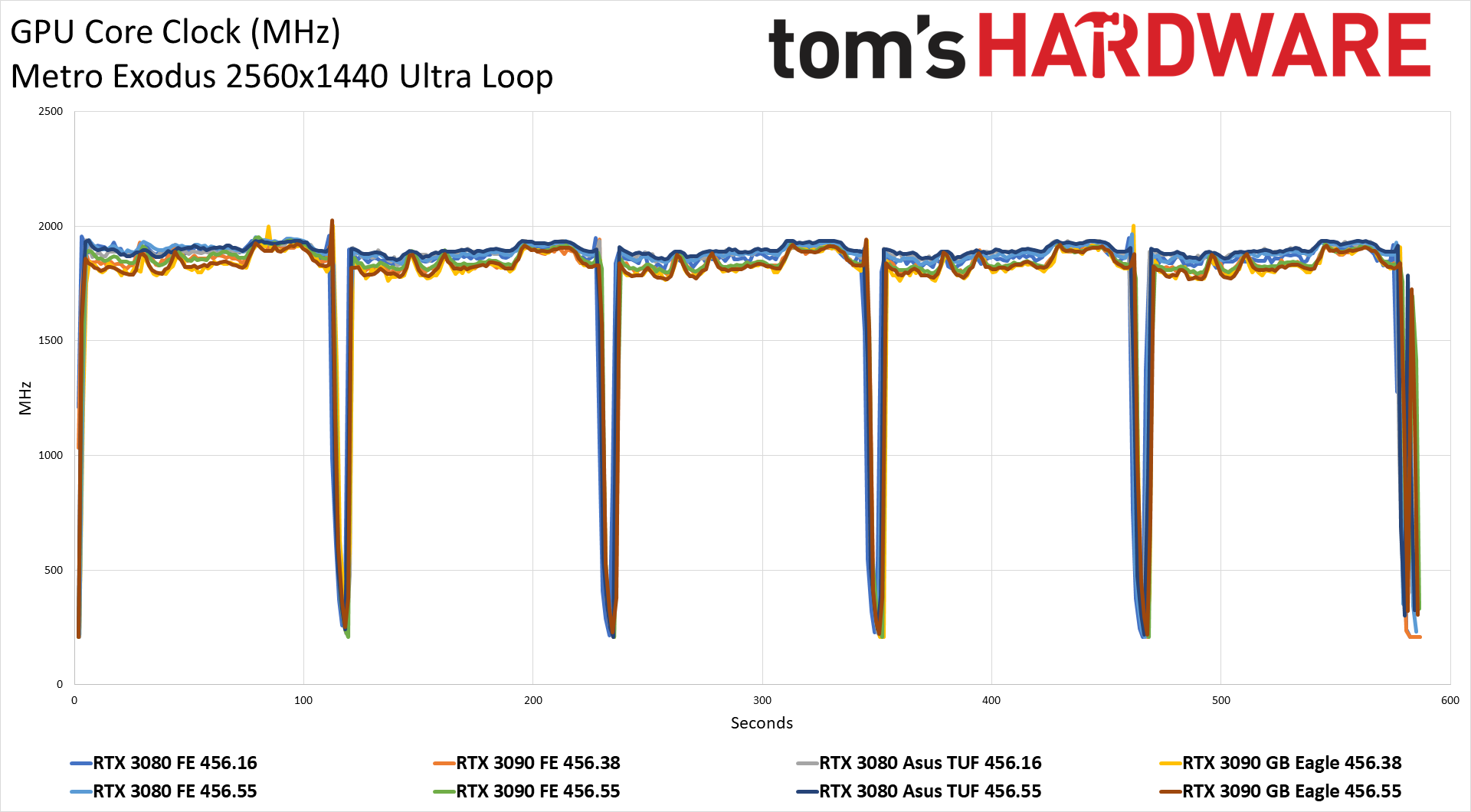

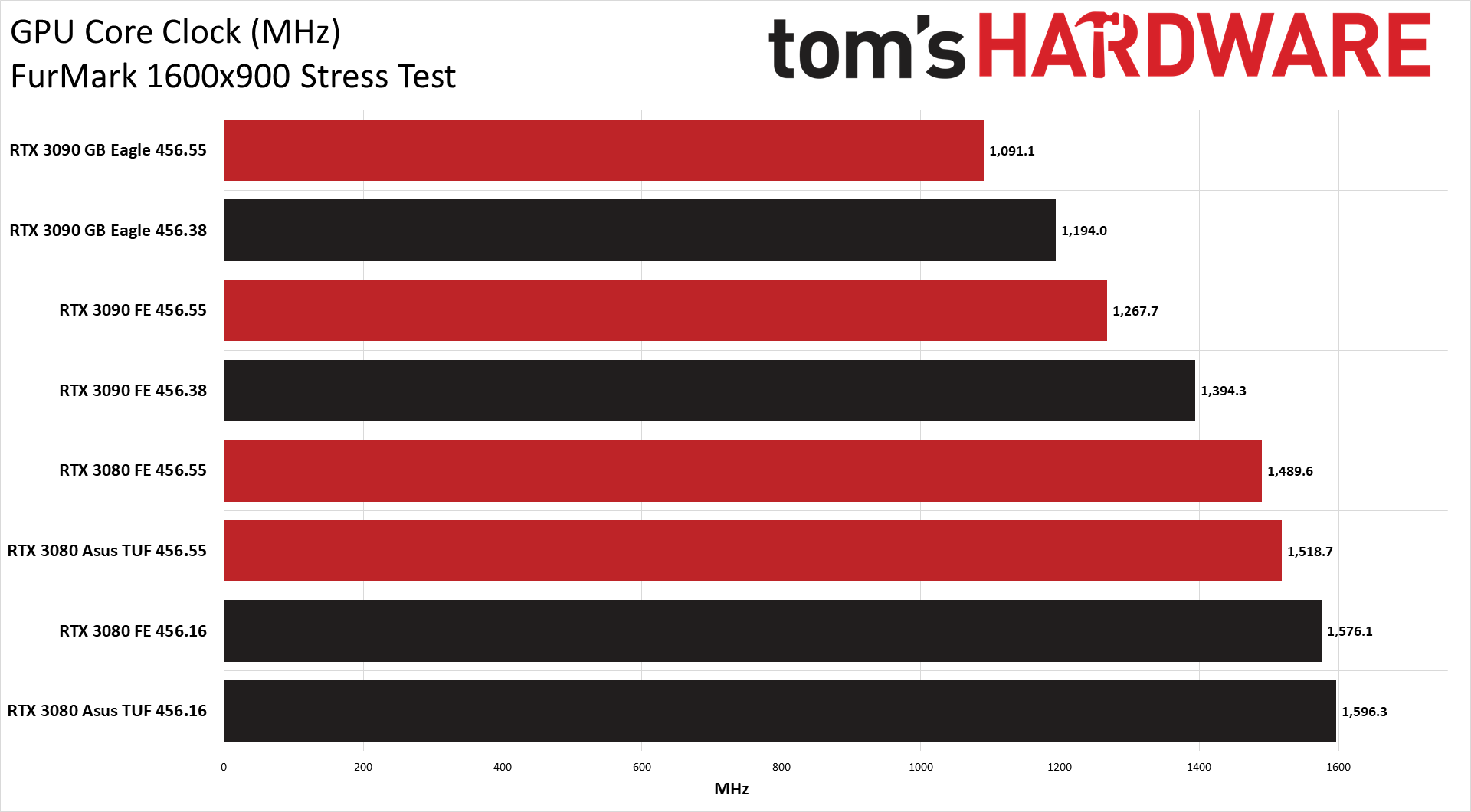

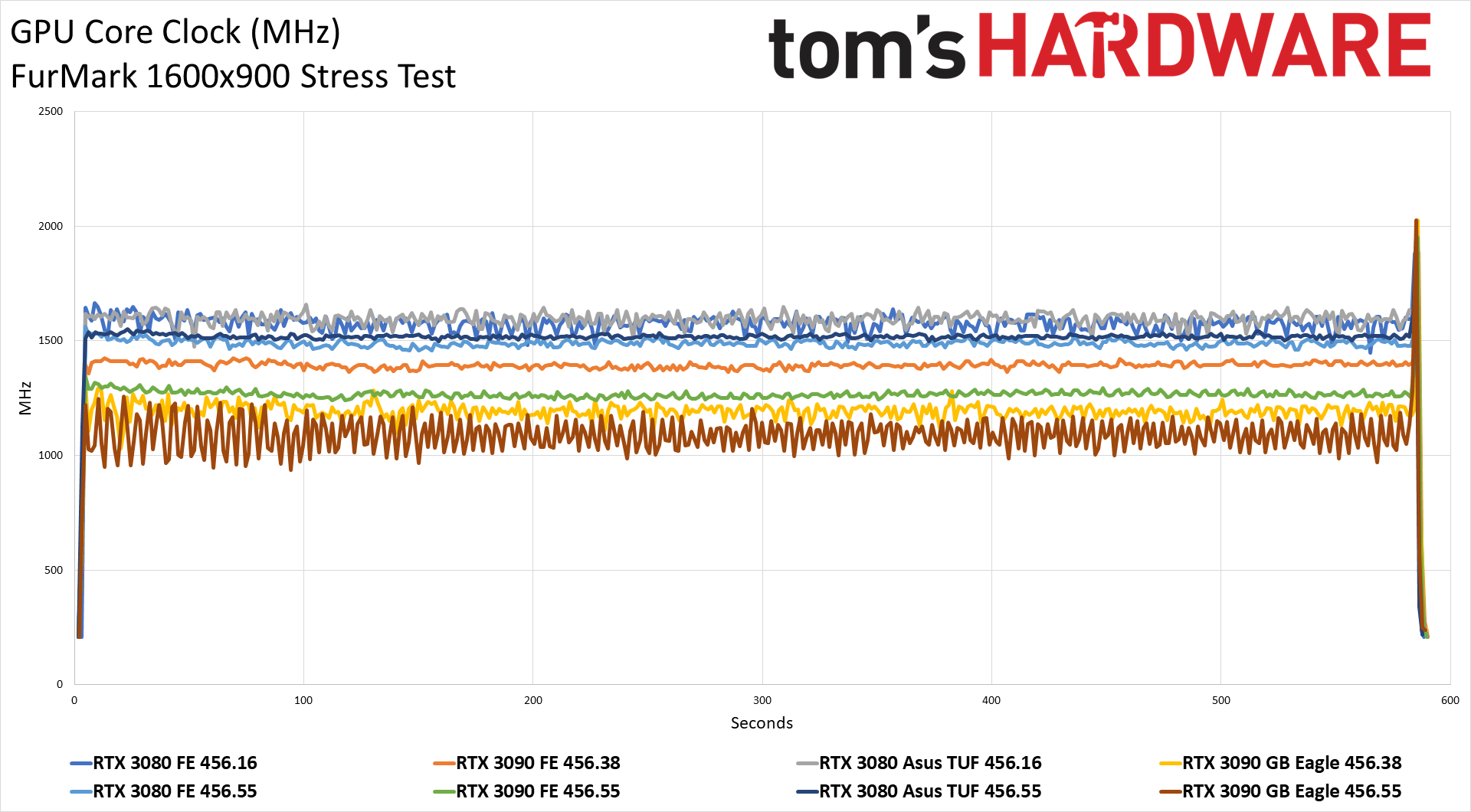

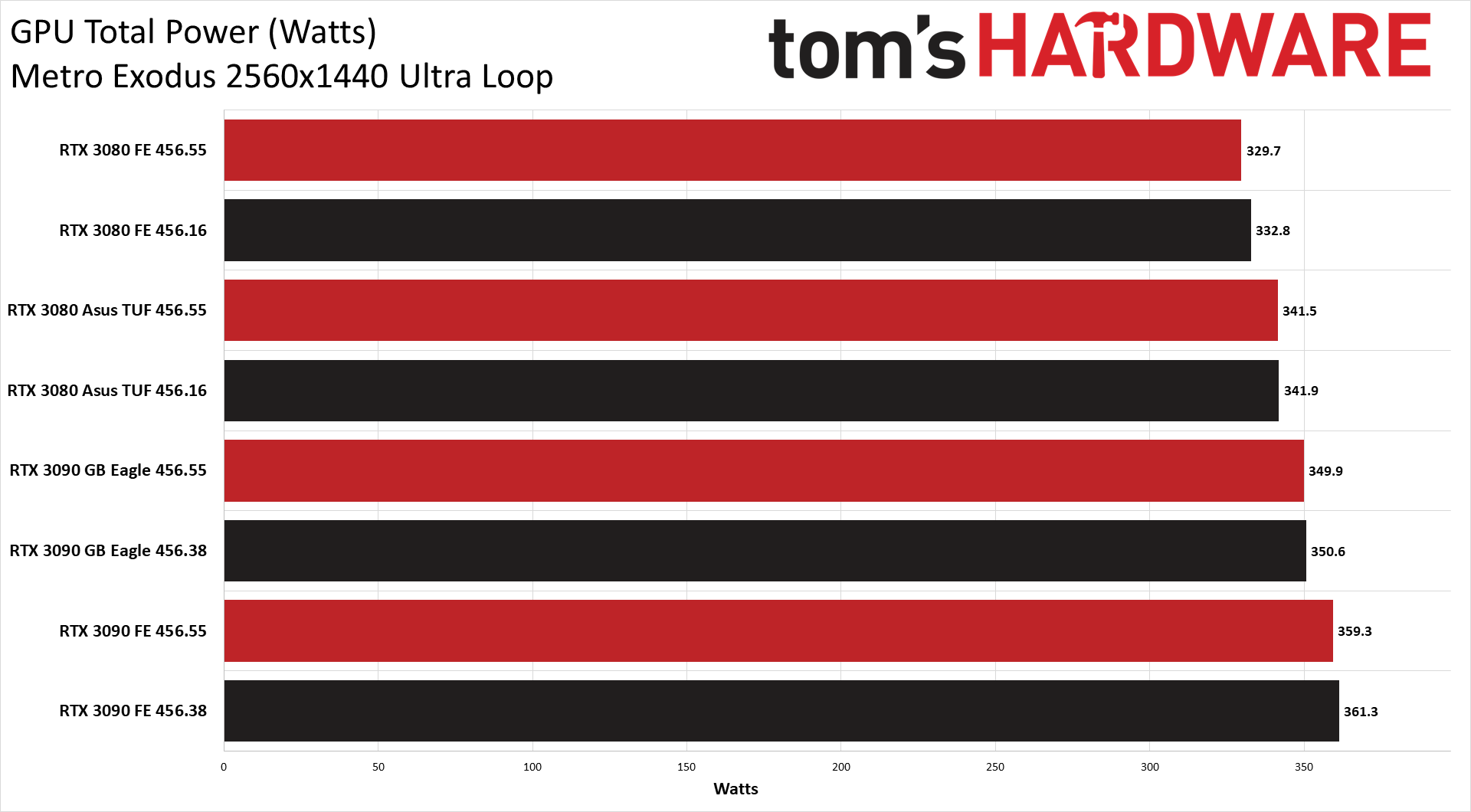

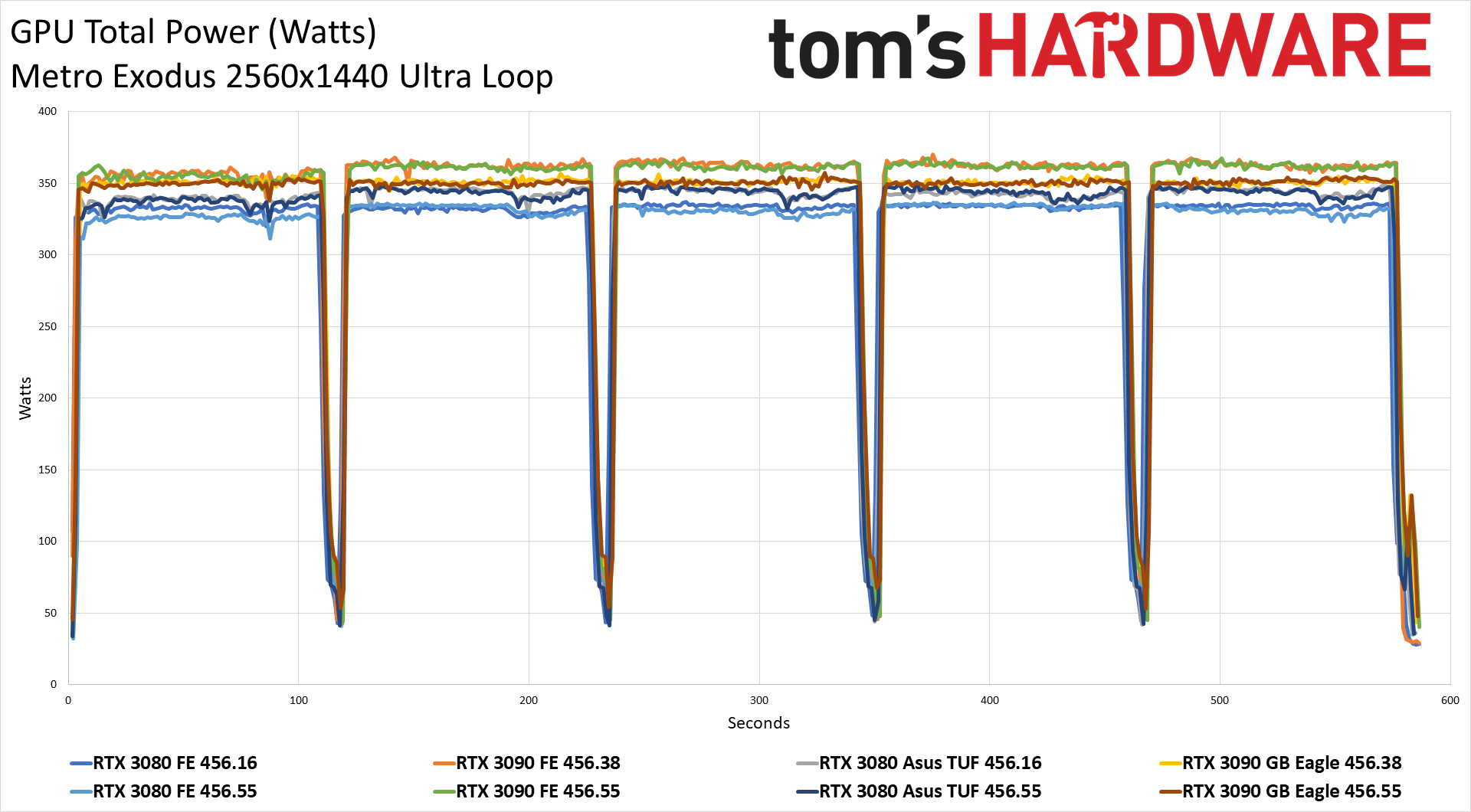

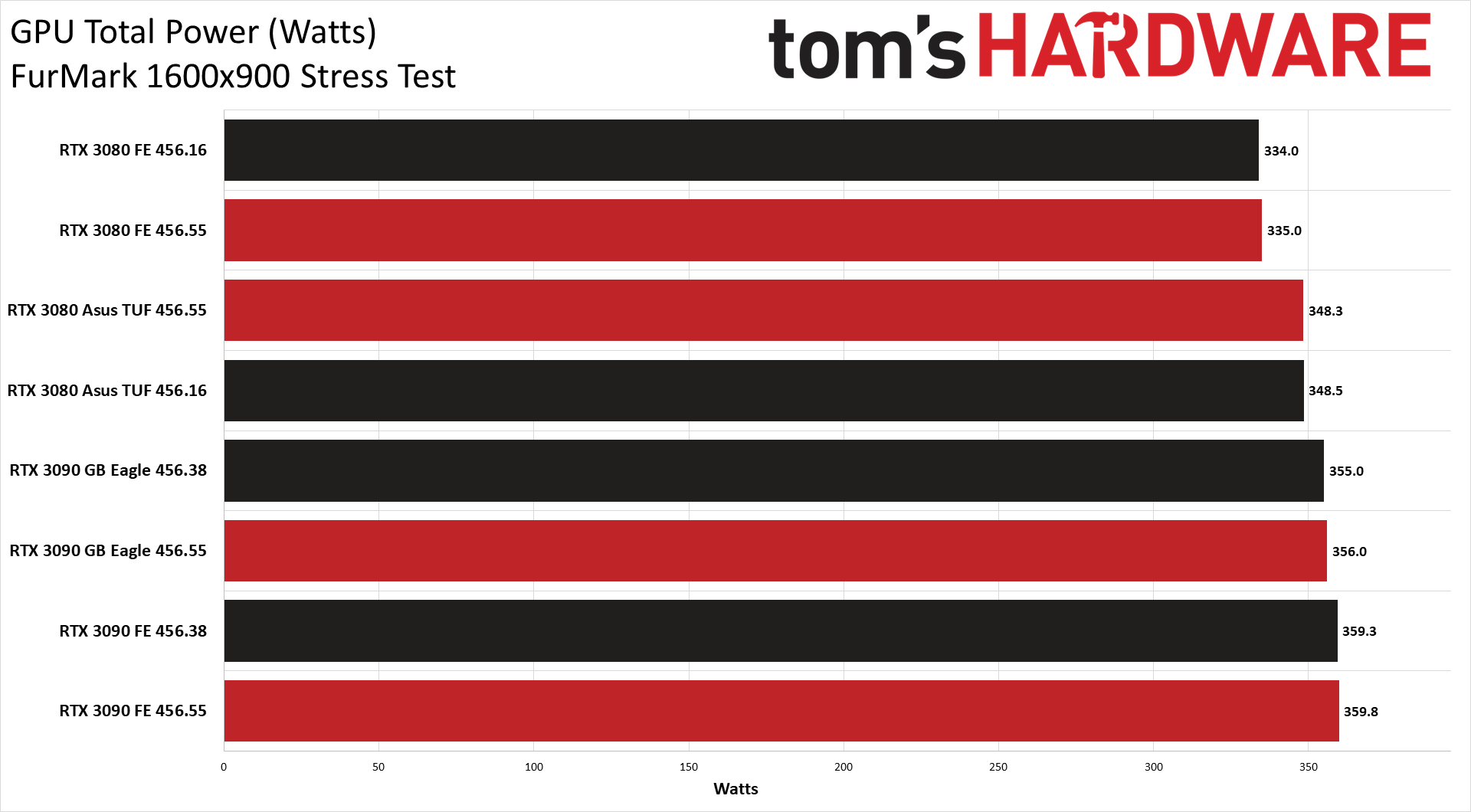

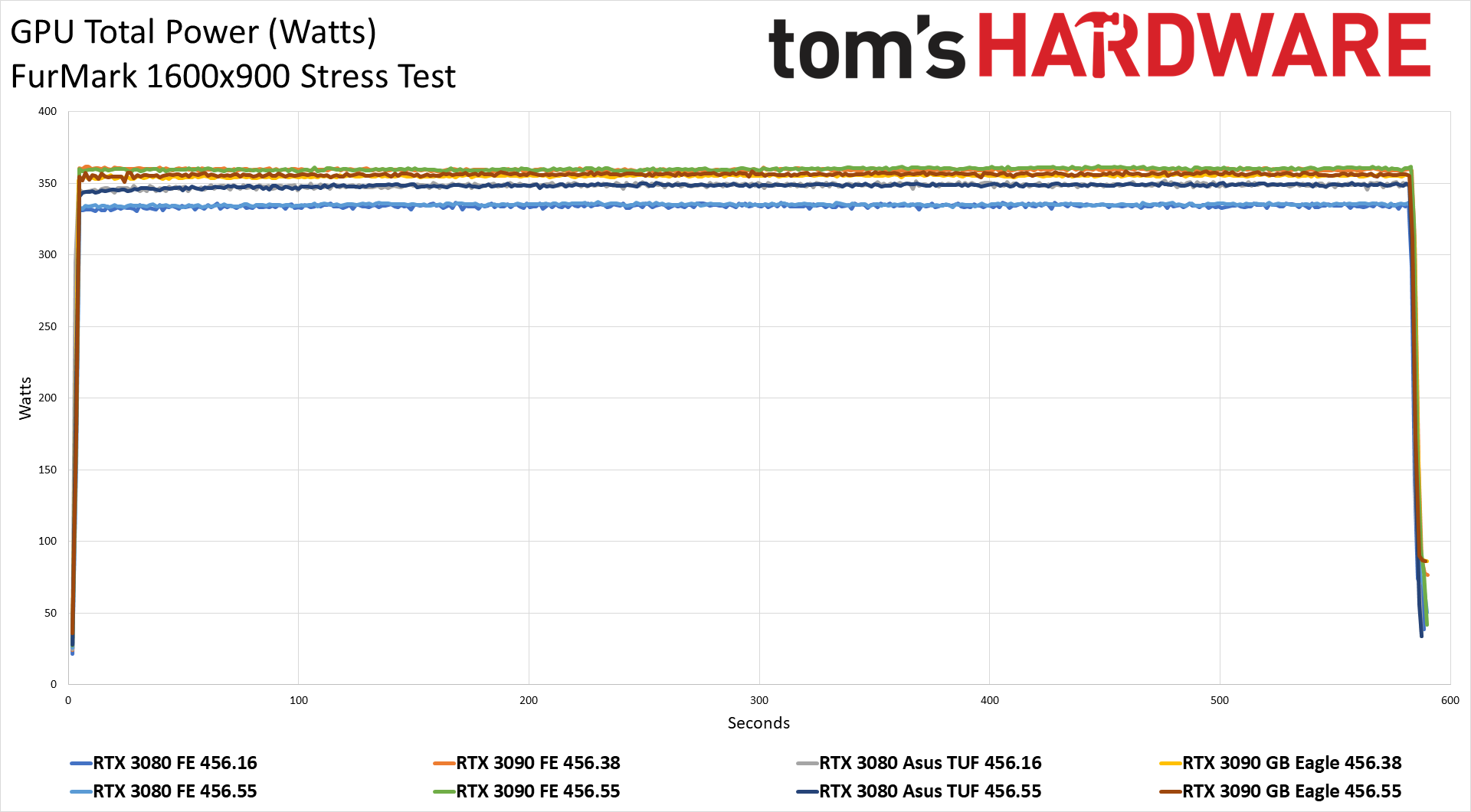

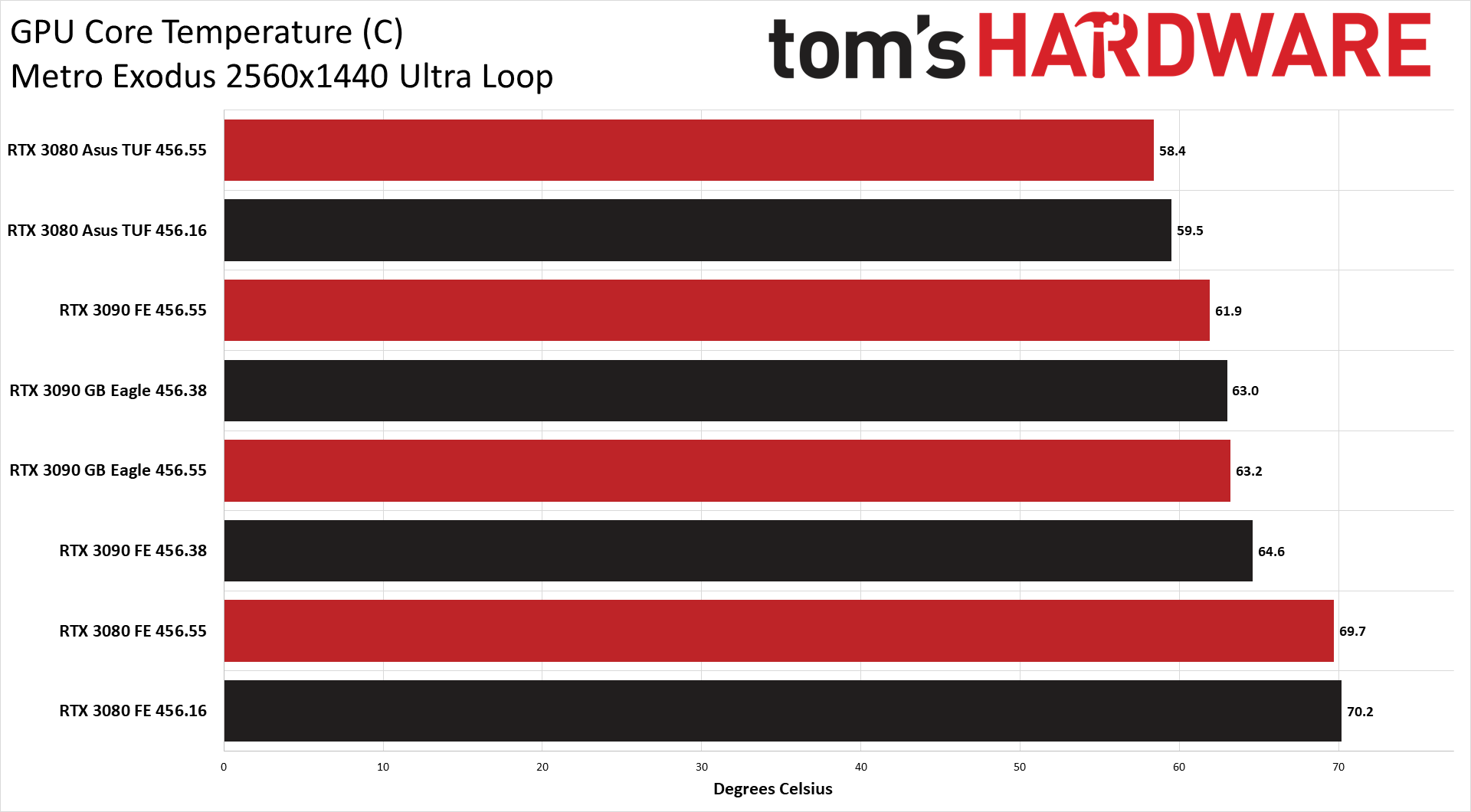

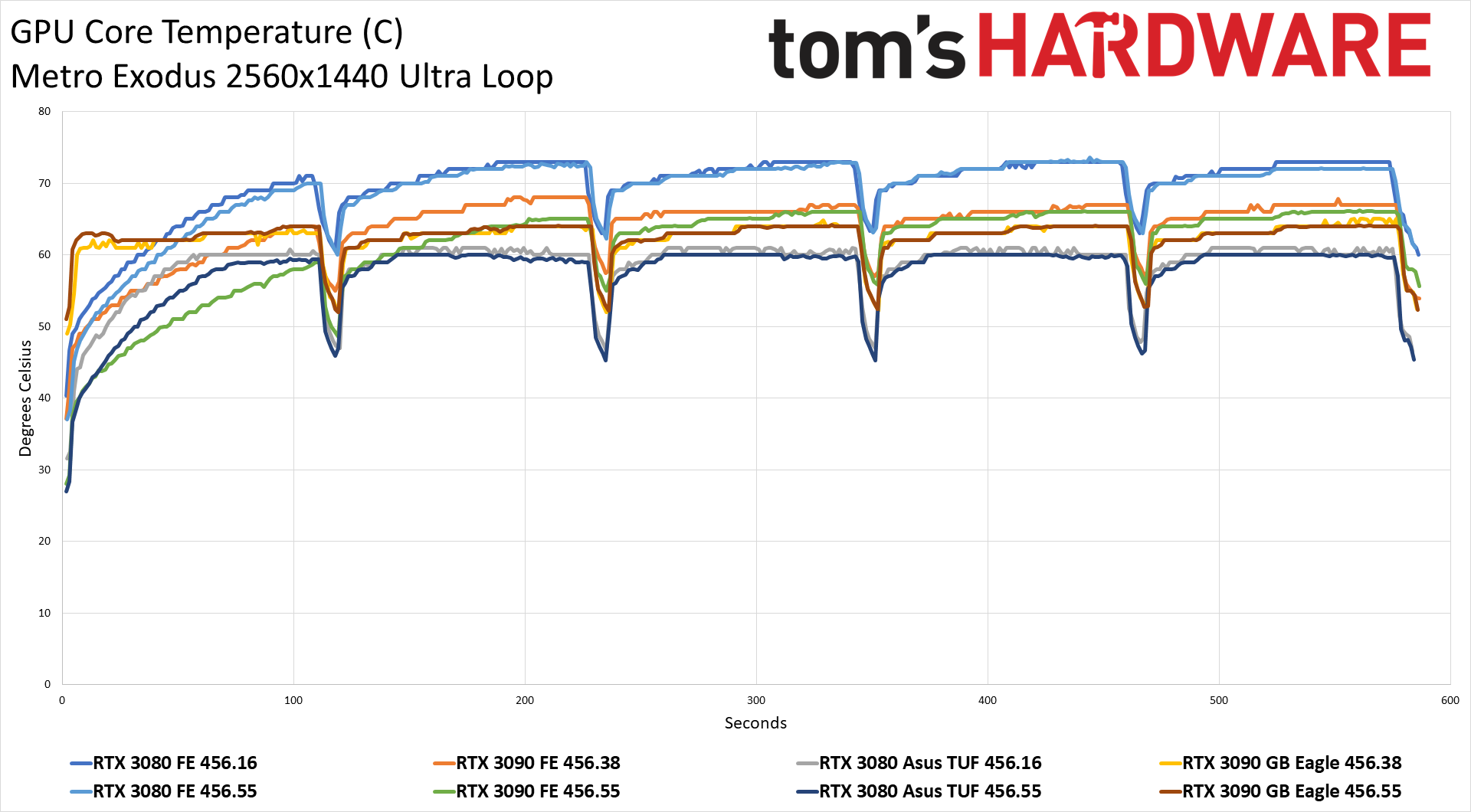

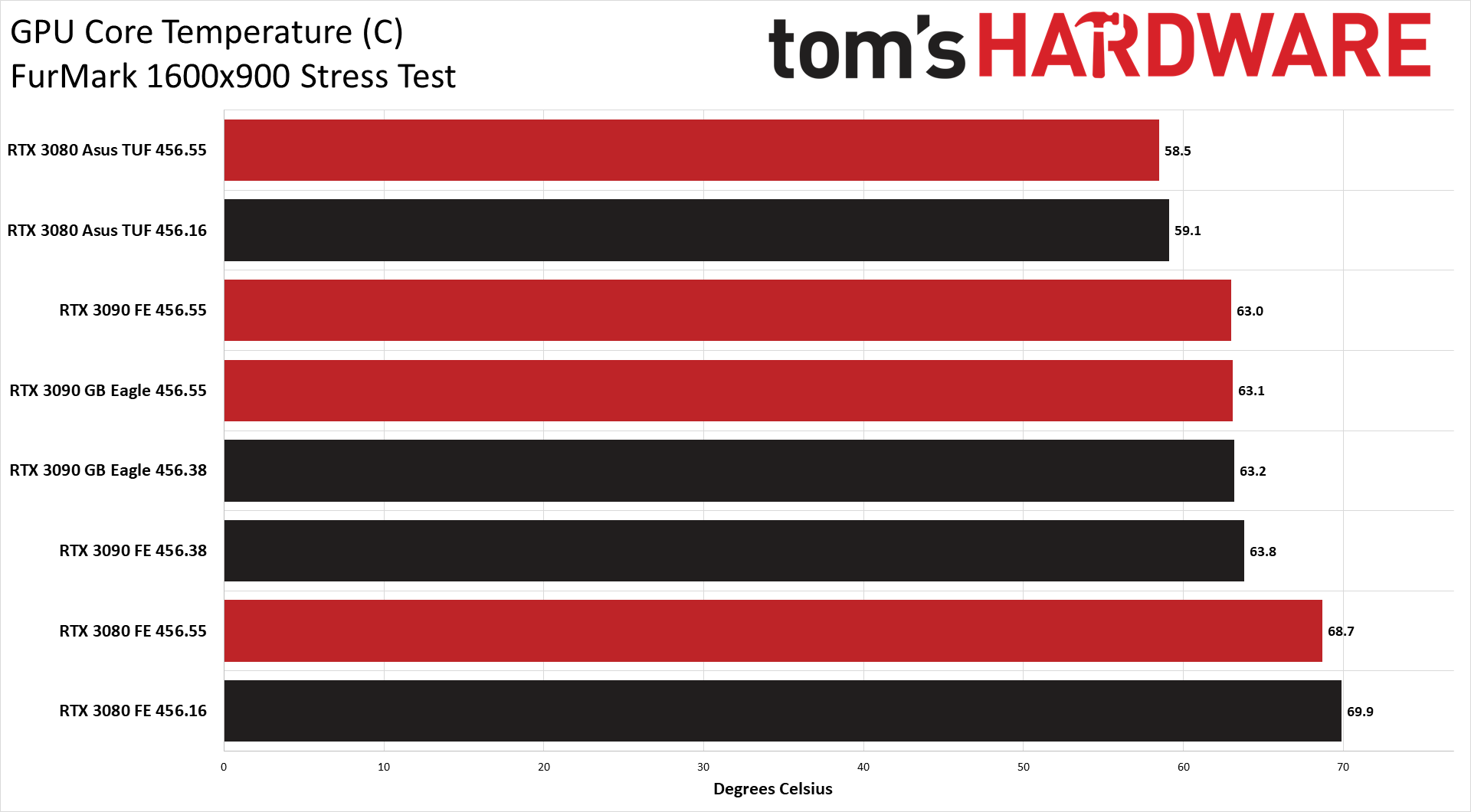

While we have additional data from other games, we only captured detailed clock speeds in our Metro Exodus and FurMark power testing. Since Metro Exodus also happens to be one of the games where we encountered stability issues on at least some of the cards, that should be a good enough starting point. FurMark meanwhile remains an interesting look at worst-case scenarios with a 'power virus.' We're using the same testbed as always, so let's just hit the before and after numbers. Note that the 3080 cards were tested with the reviewer 456.16 drivers, while the 3090 cards used the publicly available 456.38 drivers. We'll indicate the drivers used in the charts.

We don't have extensive clock speed data from every game, but we can immediately see that the latest drivers didn't really impact GPU clocks in a meaningful way. There's some averaging and variance in these charts, as each run is somewhat unique, but in general the GPU clocks are if anything a touch higher with the latest drivers, or at least, the clocks are higher in Metro Exodus specifically.

Swap over to FurMark and the opposite is true. The two 3090 cards dropped average clocks by over 100 MHz; the two RTX 3080 cards run about 80-90 MHz slower. Again, FurMark isn't really a 'normal' workload for a GPU, so changes in its behavior shouldn't be a major problem for most users. However, there are compute workloads (cryptocurrency mining, Folding@Home, and others) where the behavior may end up mirroring FurMark more than games.

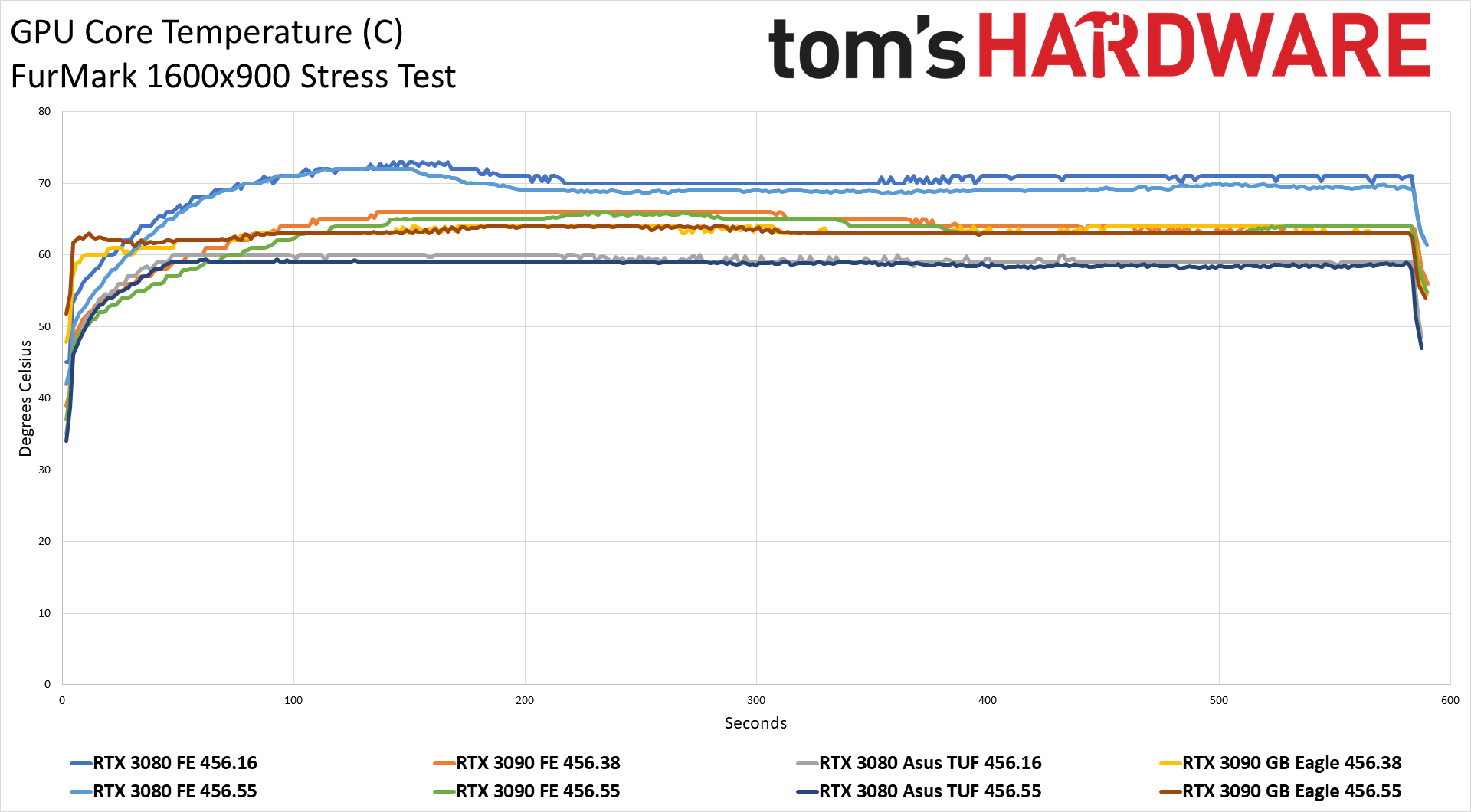

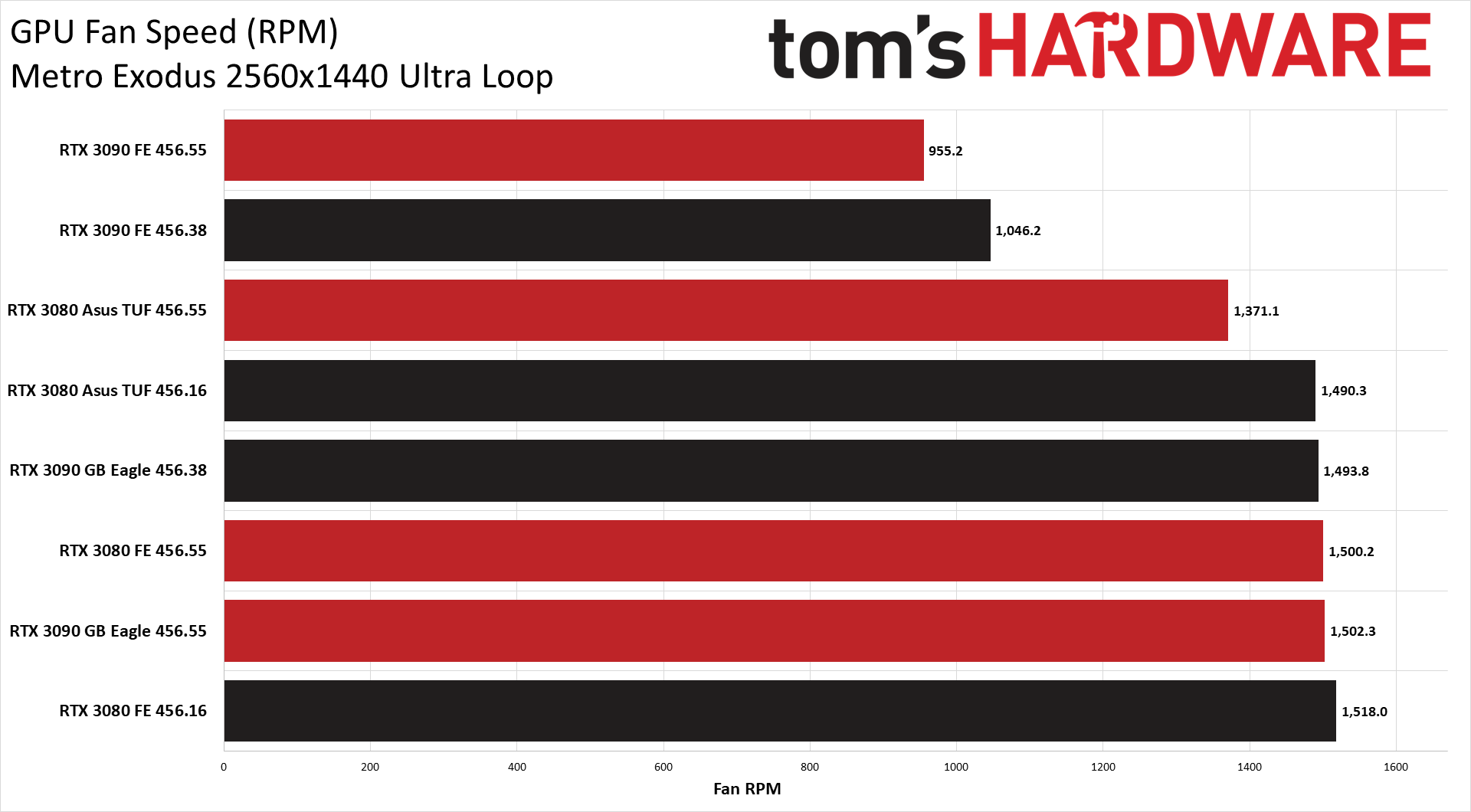

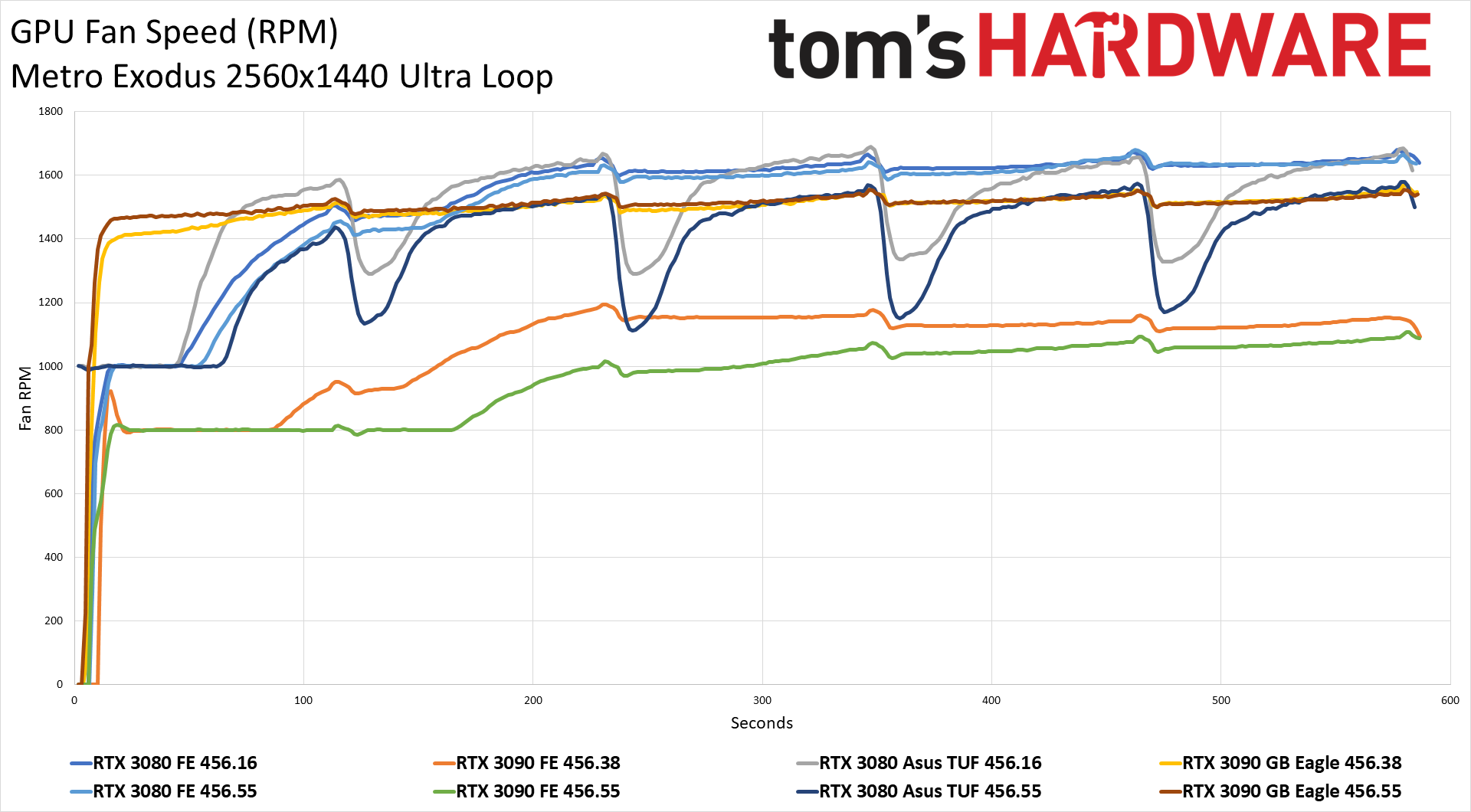

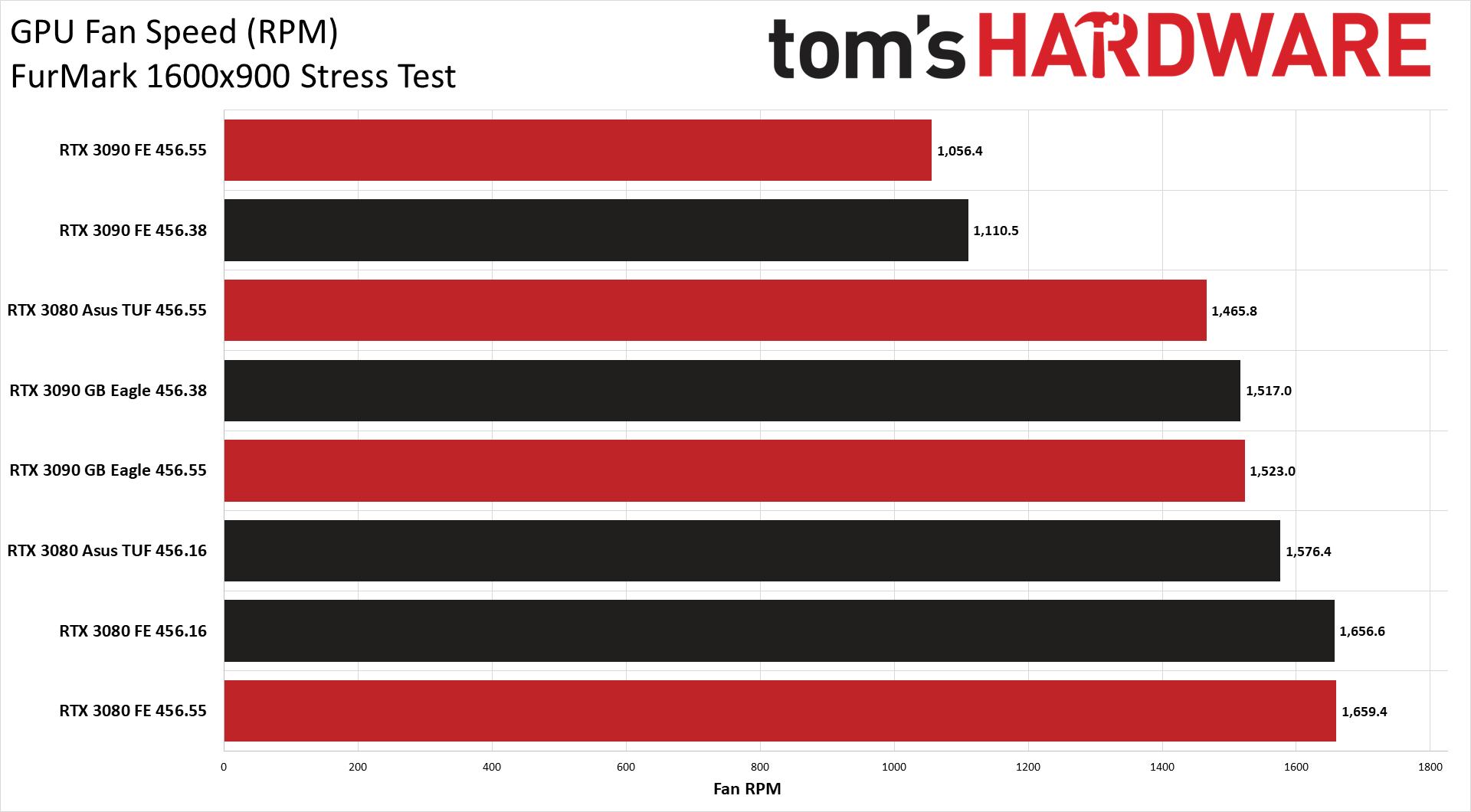

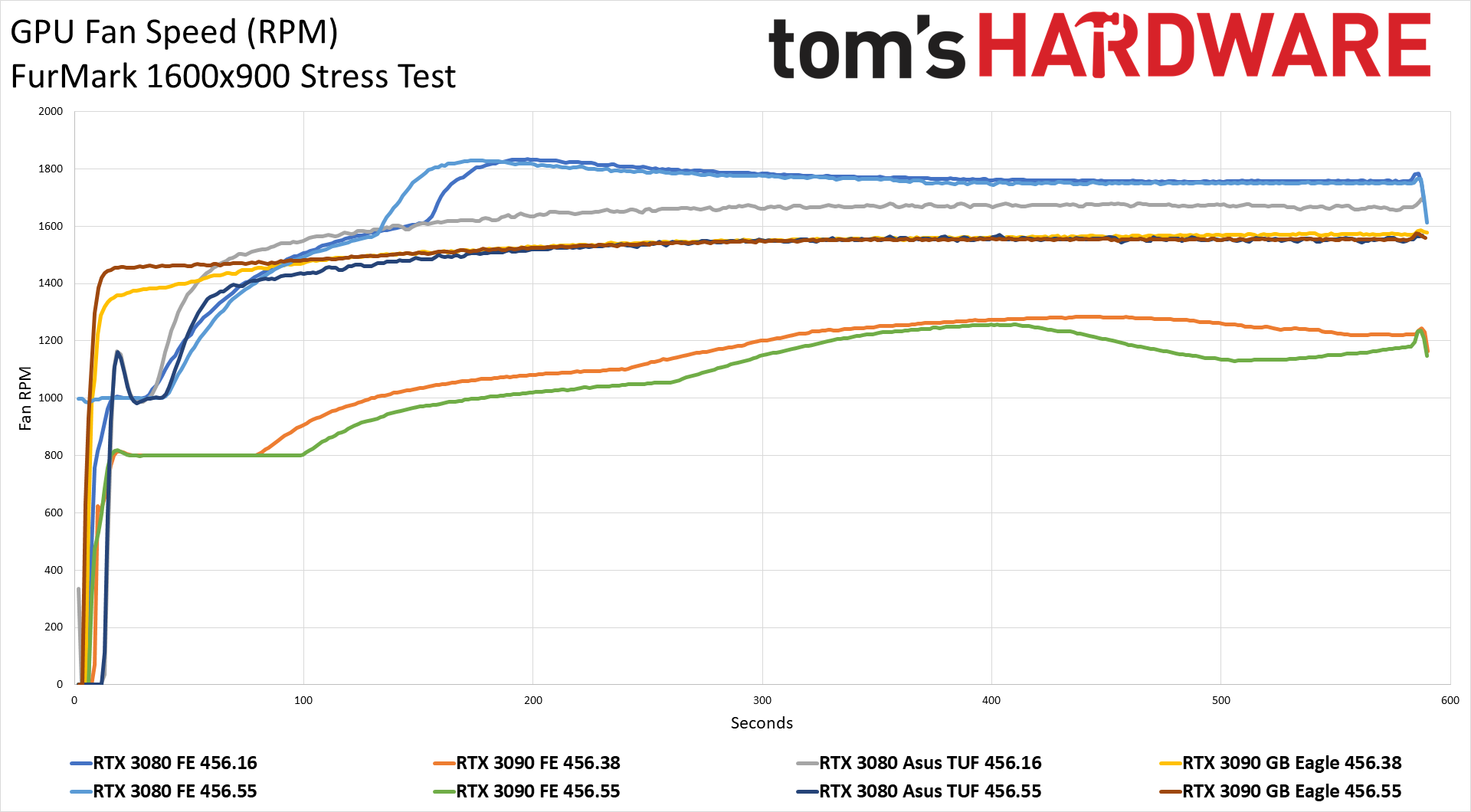

Interestingly, despite higher clocks on average, power use has dropped slightly on each GPU in the gaming workload. FurMark wavers a bit, with slightly higher power on some cards and slightly lower power on others, though all of these changes are extremely small (less than 1W for FurMark, and at most 3W with Metro Exodus). Power use isn't the only thing that generally improved, however. Look at the temperature and fan speed charts and it's clear that the updated drivers have improved things in these areas as well. Temperatures are 1-2°C lower, and fan speeds show a significant drop on the 3090 FE and Asus 3080 cards.
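Before and after comparisons like these come down to averaging logged samples for each driver and taking the difference. The sketch below shows one way to do that, assuming a headerless CSV log with clock, power, temperature, and fan speed columns. The article does not say which tool captured its logs; the `nvidia-smi` command in the comment is just one option, and the sample values are made up for illustration, not measured results.

```python
import csv
import io
from statistics import mean

# Assumed column order, matching a log captured with something like:
#   nvidia-smi --query-gpu=clocks.gr,power.draw,temperature.gpu,fan.speed \
#              --format=csv,noheader,nounits -l 1 > log.csv
FIELDS = ("clock_mhz", "power_w", "temp_c", "fan_pct")


def summarize(log_text):
    """Average each column of a headerless, unitless CSV log."""
    rows = [
        [float(cell) for cell in row]
        for row in csv.reader(io.StringIO(log_text.strip()))
        if row
    ]
    return {name: mean(col) for name, col in zip(FIELDS, zip(*rows))}


def delta(before, after):
    """Per-metric change going from the old driver to the new one."""
    return {name: after[name] - before[name] for name in FIELDS}


# Made-up samples, for illustration only (not data from the article).
old_log = """
1900, 320.0, 72, 60
1880, 322.0, 74, 60
"""
new_log = """
1910, 319.0, 71, 55
1900, 319.0, 71, 57
"""

change = delta(summarize(old_log), summarize(new_log))
for name, value in change.items():
    print(f"{name}: {value:+.1f}")
```

With real logs, longer captures and several runs per driver help separate genuine changes from run-to-run variance.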

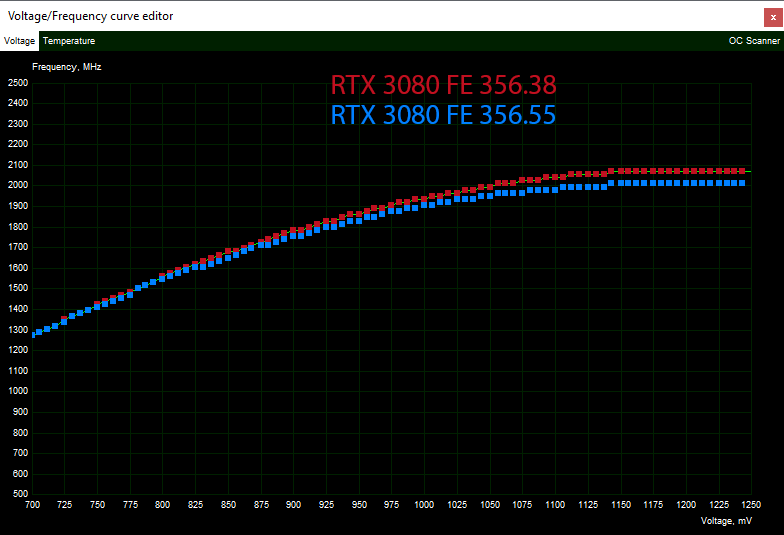

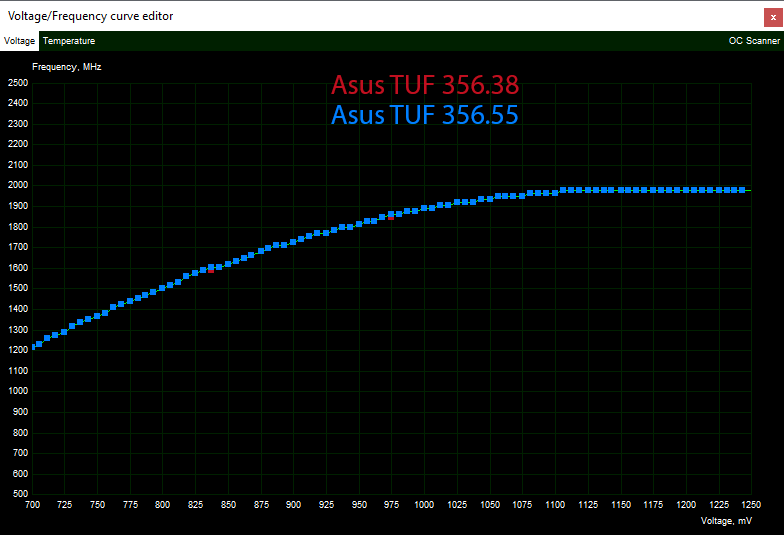

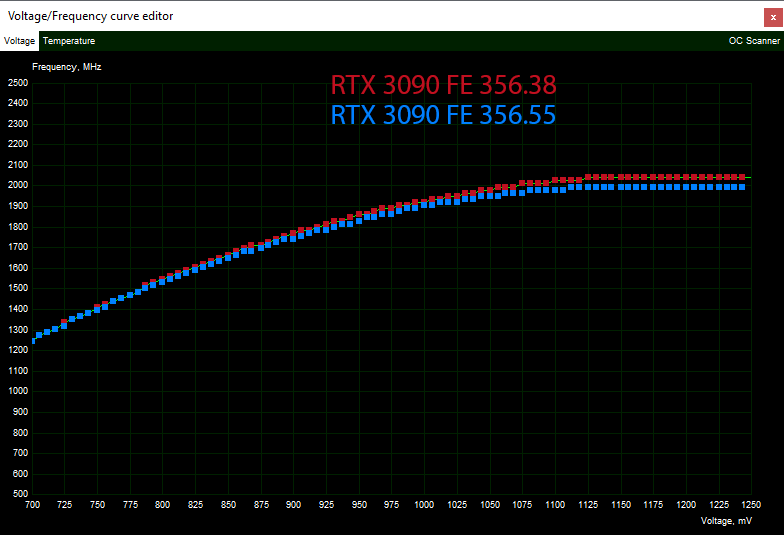

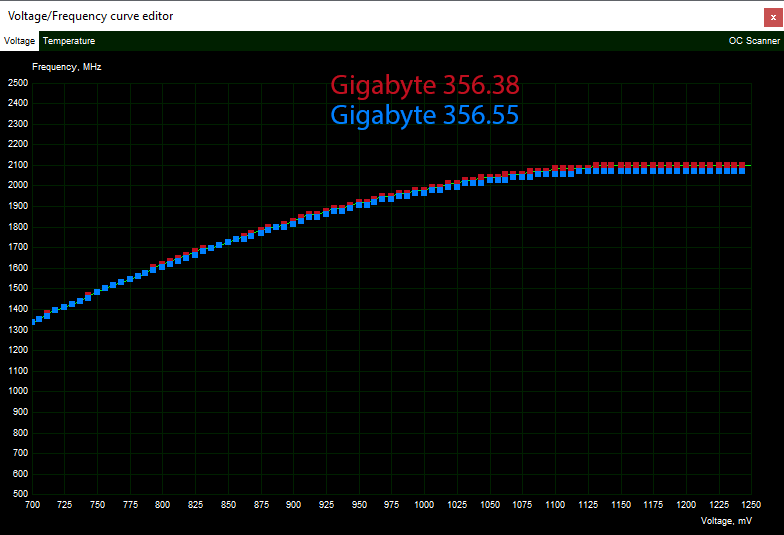

That brings us to the final bit of information: We grabbed the voltage/frequency curves using MSI Afterburner with the 456.38 and 456.55 drivers and overlaid the results for each card. The Asus card is using the default 'Gaming Mode' profile, so it's possible the 'OC Mode' would have shown larger changes; the default mode looks virtually identical. The other cards, though, show a clear and consistent drop in frequencies near the top of the voltage range.

What does that mean? In general, there's no need for a card to run at 1250mV if it can hit the same performance level at 1150mV — it's just using more power and generating more heat, which perhaps means worse stability. That seems to be a big part of reducing the temperatures and fan speeds without sacrificing performance.
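A rough way to see why the lower voltage helps is the first-order rule that dynamic power in CMOS logic scales with voltage squared times frequency. The sketch below applies that approximation to the 1250mV and 1150mV example; it ignores leakage and other board power, so treat the result as a ballpark figure, not a measurement.

```python
def dynamic_power_ratio(v_new_mv, v_old_mv, f_new_mhz=1.0, f_old_mhz=1.0):
    """Relative dynamic power under the approximation P ~ C * V^2 * f.

    Capacitance C is assumed unchanged, so it cancels out of the ratio.
    """
    return (v_new_mv / v_old_mv) ** 2 * (f_new_mhz / f_old_mhz)


# Same clock speed, 1150 mV instead of 1250 mV.
ratio = dynamic_power_ratio(1150, 1250)
print(f"{(1 - ratio) * 100:.1f}% less dynamic power")  # 15.4% less dynamic power
```

Under this simplified model, holding the same frequency at the lower voltage trims dynamic power by roughly 15%, which is consistent in direction with the lower temperatures and fan speeds in the charts.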

That doesn't mean performance didn't change at all. We did run a full suite of tests on the Gigabyte RTX 3090 card, on both 456.38 and 456.55 drivers. The result: Margin of error differences. Technically, the 456.55 drivers are 0.2-0.5% slower overall, but they're slightly faster in a few games as well, and we're not going to argue about a less than 1% difference.
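For readers who want to run the same kind of comparison, here is a minimal sketch of computing per-game and overall percentage changes between two drivers. It assumes the overall score is a geometric mean of per-game frame rates, which the article does not specify, and the game names and fps values are placeholders made up for the example.

```python
from math import prod


def geomean(values):
    """Geometric mean of a sequence of positive numbers."""
    values = list(values)
    return prod(values) ** (1 / len(values))


def percent_change(before, after):
    """Change from before to after, in percent (negative means slower)."""
    return (after / before - 1) * 100


# Placeholder average fps per game (made up, not the article's results).
before = {"game_a": 100.0, "game_b": 80.0, "game_c": 120.0}
after = {"game_a": 99.5, "game_b": 80.4, "game_c": 119.0}

per_game = {g: percent_change(before[g], after[g]) for g in before}
overall = percent_change(geomean(before.values()), geomean(after.values()))

for game, pct in per_game.items():
    print(f"{game}: {pct:+.2f}%")
print(f"overall: {overall:+.2f}%")
```

Differences well under 1% are hard to distinguish from run-to-run variance, so repeating each test several times is advisable before reading anything into them.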

So there you have it: Drivers Matter™. We suspected there was a good chance Nvidia could implement fixes without the need for firmware updates or hardware recalls. At the same time, the 456.55 drivers certainly aren't the end of the road. Nvidia will continue to tweak and tune its drivers, and there are probably still cards and/or games that have issues. As we noted earlier, higher factory overclocks often seem to be the main culprit, so if you do have an RTX 3080 or RTX 3090 that still isn't running fully stable, and you're not ready to return the card, try using MSI Afterburner, EVGA Precision X1, or a similar utility to drop the GPU clocks by 20-50 MHz. That might be all that's required to go from frustrating crashes to stable high-end gaming bliss.

Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.

Comments

spongiemaster (quoting the article: "the 456.55 drivers are 0.2-0.5% slower overall"): I hope Nvidia set aside money for the class action lawsuit.

helper800 (replying to spongiemaster: "I hope Nvidia set aside money for the class action lawsuit."): Thats within the margin of error. I completely doubt any lawyer worth their salt would sue over that specifically.

Kamen Rider Blade: So OCing is largely meaningless when you're this close to the ragged edge of stability.

Tanquen: Still a pretty good slap in the face considering gaming cards cost 2-3-4 times what they should.

ForwardUntoDawn: Tom's, could you please list which cards from which manufacturers do and don't have the MLCC blocks?

drivinfast247 (replying to spongiemaster: "I hope Nvidia set aside money for the class action lawsuit."): Lol. Where did it guarantee a 2ghz clock?

spongiemaster (replying to helper800: "Thats within the margin of error. I completely doubt any lawyer worth their salt would sue over that specifically."): Seriously?

helper800 (replying to spongiemaster: "Seriously?"): Seriously. 0.5-1% is within the margin of error in any testing with many many variables like hardware. Especially since boosting behaviors are never going to be the same between benchmarking runs.

deesider (replying to spongiemaster: "I hope Nvidia set aside money for the class action lawsuit."): They fixed the problem for free - how are you going to sue for that :)