GPU Shortages Hit Nvidia's Data Center Business: Not Enough $15,000+ GPUs

Supply for Nvidia's A100 GPU will take several months to catch up.

Demand for the latest consumer-grade graphics cards from AMD and Nvidia exceeds supply so badly that the scalping business now thrives more than ever. But gamers aren't the only customers who want to buy the latest GPUs. Apparently demand for Nvidia's A100 among data centers, scientists, and the HPC community is so high that it will take several months for the company to catch up, its VP recently admitted.

"It is going to take several months to catch up some of the demand," said Ian Buck, vice president of the Accelerated Computing Business Unit at Nvidia, at the Wells Fargo TMT Broker Conference Call. "What's exciting is the sort of the interest and growth in both training and inference. […] Every time we introduce a new architecture, it's a game changer, right? So A100 is 20x better performance than V100, and with that comes a new wave of demand and interest in our products." (Quote based on SeekingAlpha's transcript.)

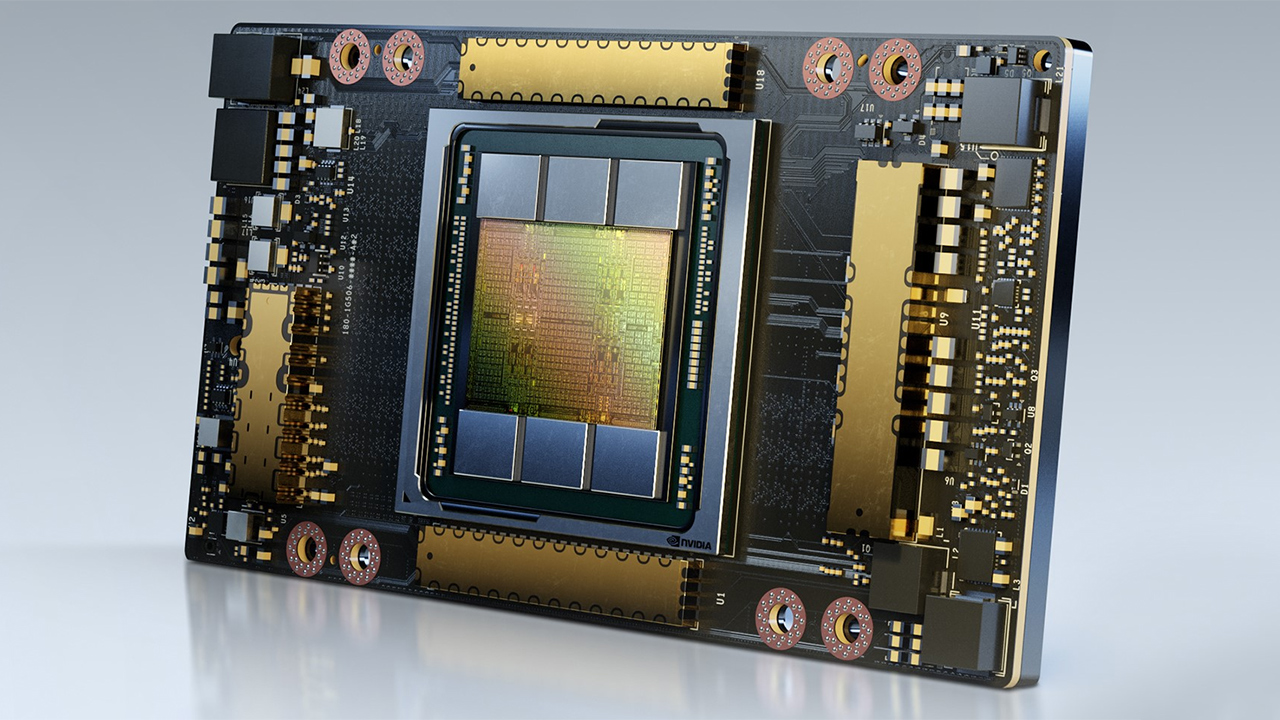

Nvidia's A100 is pretty much a universal GPU that supports a host of new instructions and formats for various compute workloads. The GPU is manufactured by Taiwan Semiconductor Manufacturing Co. (TSMC) using one of its 7nm fabrication processes, one of the most popular advanced technologies today. The 7nm TSMC process is also used to manufacture dozens of chips for the mass market, including SoCs for Microsoft's Xbox Series X and Sony's PlayStation 5.

Meanwhile, Nvidia's A100 is among the largest chips currently produced by TSMC, and it is typically rather challenging to hit great yields with a die this large. In addition to the GPU silicon, the A100 products also consume loads of HBM2 memory: each carries 40GB of HBM2 onboard.

"It is always an exciting time for me to help bring all those platforms to market, whether they are hyperscalers, who are just now bringing online, all the OEMs as they're launching their products, as well as the rest of the market," said Buck. "So, it will take several months to catch up with the demand."

The A100's flexibility allows its use in a wide variety of applications, including AI/ML inference and training, as well as all kinds of high-performance computing (HPC). The A100 also delivers significantly higher performance than the Tesla V100, its predecessor. The A100 is 25% faster in FP32/FP64 computing, up to four times faster in Big Data analytics, and up to 20 times faster in certain edge cases where its architecture can spread its wings. Given the capabilities and performance of Nvidia's latest datacenter GPU, it's not surprising that demand is so high.

Nvidia does not disclose the pricing of its compute accelerators, such as the A100 or its predecessors. Resellers sell Nvidia's new Tesla V100 32GB SXM2 for $14,500, whereas a refurbished card can be obtained for $7,515. The latest A100 may cost more than its predecessor, so Nvidia seems to have a boatload of $15,000+ GPUs (that's a speculative price) on backorder.


Interestingly, Nvidia is actually expanding the addressable market for its A100-based solutions. Recently the company introduced its A100 80GB SXM4 GPU, which doubles the local memory capacity. The monstrous GPU can be used to process workloads with ultra-large datasets, or it can be partitioned into up to seven GPU instances, each with about 10GB of memory. Nvidia expects its partners to ship their A100 80GB-based solutions sometime in the first half of 2021.
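The "about 10GB" per-instance figure can be checked with a rough back-of-the-envelope sketch. It assumes, based on Nvidia's Multi-Instance GPU documentation rather than anything stated in this article, that the A100's memory is divided into eight equal slices of which up to seven back individual GPU instances. The constant and function names are hypothetical and exist only for illustration.

```python
# Illustrative arithmetic only; this does not call any Nvidia API.
# Assumption (not from the article): A100 memory is split into 8 equal
# slices, and up to 7 GPU instances can each be backed by one slice.
MEMORY_SLICES = 8
MAX_INSTANCES = 7


def memory_per_instance_gb(total_memory_gb: float) -> float:
    """Approximate memory available to the smallest GPU instance."""
    return total_memory_gb / MEMORY_SLICES


for total_gb in (40, 80):
    per_instance = memory_per_instance_gb(total_gb)
    print(f"A100 {total_gb}GB: up to {MAX_INSTANCES} instances "
          f"of about {per_instance:g}GB each")
```

Under that assumption, the 80GB card yields roughly 10GB per instance, which matches the figure quoted above, while the original 40GB card yields roughly 5GB.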

Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.


samopa

Why would Nvidia want to produce a $1,500 card or even an $800 card if they are still overwhelmed producing $15,000 cards?

torbjorn.lindgren

samopa said: Why would Nvidia want to produce a $1,500 card or even an $800 card if they are still overwhelmed producing $15,000 cards?

The A100 chip is produced on TSMC 7nm while the gaming cards are produced on Samsung 8nm, and redesigning the A100 for Samsung 8nm would be a massive and long undertaking (many months at best, and it would result in reduced performance and/or higher power). So there's no competition between the gaming cards and the A100 on the chip side, and the same applies to other major components such as memory. Basically there's little or no commonality outside commodity parts, which can often be mixed and matched depending on what is available and are unlikely to be a bottleneck.

Note that this means the Nvidia A100 is in theory competing with TSMC's other 7nm customers for manufacturing capacity, i.e. with the RX 6800/6900, Zen 3, Xbox Series S/X, PlayStation 5 and lots of different mobile SoCs (for phones). Given the price Nvidia gets for the A100, they'd likely be willing to pay more for it, but all that capacity is bound up in iron-clad contracts. Even if they offer TSMC more money, TSMC likely can't produce more for Nvidia right now!

If Nvidia produced gaming GPUs on TSMC 7nm, they likely would have already redirected that production capacity to the A100. But for RTX 30xx Nvidia uses the newer and slightly inferior Samsung 8nm, because they couldn't possibly get enough capacity for RTX 30xx from TSMC given all the existing commitments TSMC had on 7nm. To be fair, RTX 30xx would have required a much larger capacity allocation than an increase in A100 chip production, but it shows that capacity there is tight. TSMC wouldn't leave money on the table if they had a choice.

The other obvious major component that could be the bottleneck for the A100 is the HBM2 memory. There they're not competing with as many customers, but supply is also much more limited, and the other users also tend to put theirs in massively expensive hardware, so Nvidia likely can't go the "we're paying more" route even if the supply is not bound up in contracts (and it may be).

Gomez Addams

I am not aware of any GPU that sells for 15K and, as far as I know, they don't have one that sells for more than 10K.

Here's an example: https://www.thinkmate.com/system/gpx-qt8-12e2-8gpu. That is a page on the site of Thinkmate, an OEM that makes servers and workstations and also resells machines from SuperMicro, ASUS, Tyan, and others. They sell a machine with an EPYC CPU, and the added cost to select an A100 GPU is $9,799. That is considerably less than 15K. The Tesla GPUs are all in the 9K range as well.