Immersed Europe Keynote: Epic's Big VR Buy-In And Upcoming Revolutionary Audio Engine

Unreal Engine 4 Lead Programmer James Golding has spoken extensively about the future of the engine, and he shared more in his keynote address at the Immersed Europe conference.

VR will certainly change the world (it's already doing it), and developers are concerned about the huge number of hardware devices arriving, starting this year, to meet our display and input needs in this new environment. Epic Games wants to help with new advancements, features and hardware support for a device-agnostic engine that allows developers to care only about...well, developing.

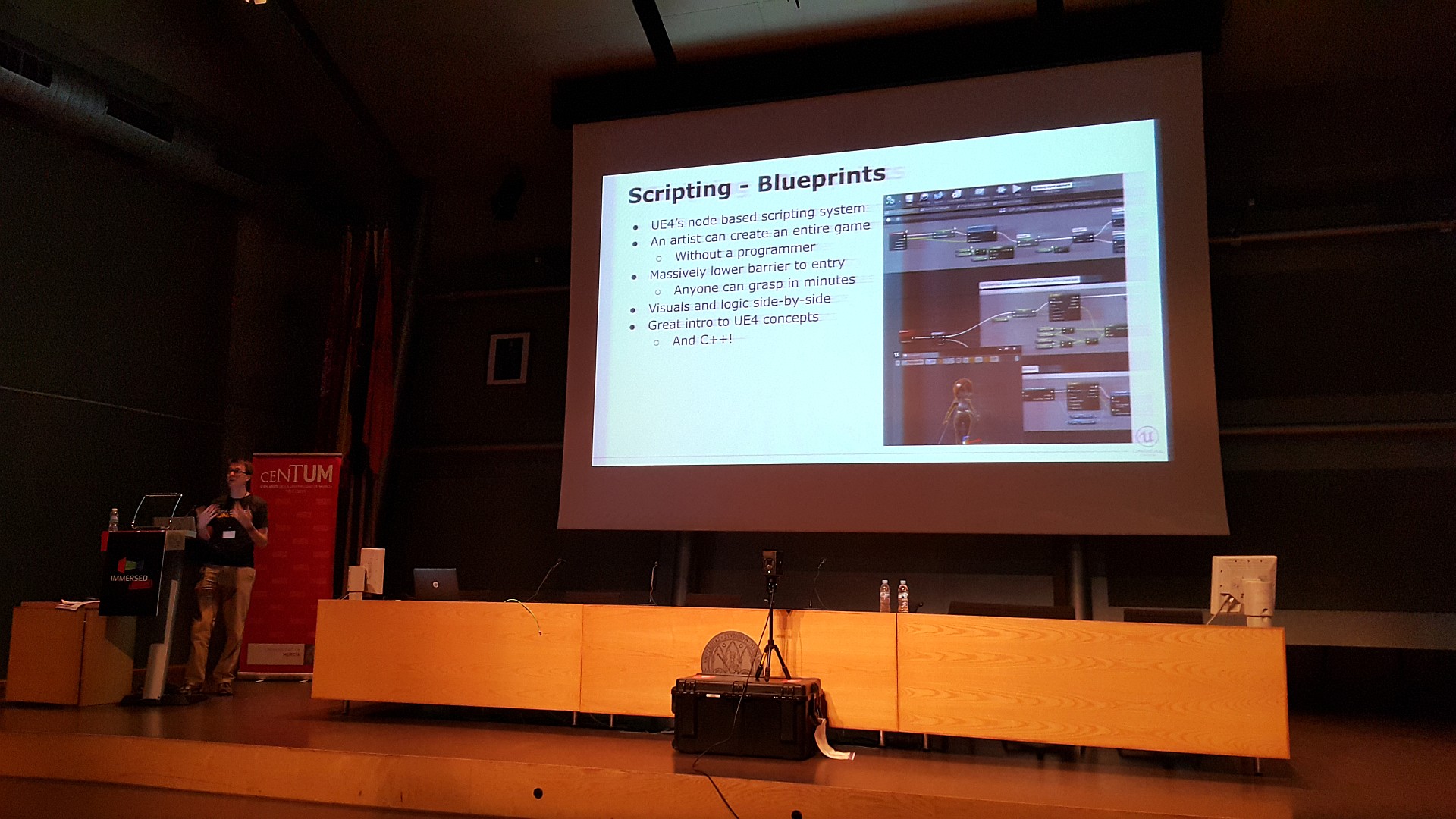

The first thing Golding mentioned was coding. Although blueprints have been known for a while, he also talked about the future of scripting -- what he called "visual scripting" by using wires between objects and natural languages like iKinema INTiMATE or the upcoming VRScript, a platform that John Carmack is building using Scheme that will be revealed at Oculus Connect 2. Epic Games wants graphical artists to create full-fledged experiences without a programmer, and visual scripting will ease the pain of coding and debugging.

Golding continued talking about the latest changes brought by Unreal Engine 4.9, especially the support for motion controllers. Currently, it's compatible with just the HTC Vive controllers, but Epic is already working on PS Move support, and in the future, Oculus Touch will also be integrated.

What does that mean for developers? Well, they won't have to worry about individual device support. They can just drop a Motion Controller component on their projects, and the engine will take care of it, so their apps will work with any supported controller. This could be very interesting for some motion controllers (Sixense STEM, ControlVR and PrioVR to name a few) that were successfully funded on Kickstarter but still haven't been shipped to backers who keep asking whether those devices will be useful at all once HTC Vive controllers and Oculus Touch are released. So, if they are supported under Unreal Engine, they will simply work with any experience created with it.
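To make the idea concrete, here is a minimal sketch of what a device-agnostic controller layer can look like. This is not Unreal Engine's Motion Controller component or its API; the `MotionController` interface, the two fake device classes and the pose values are all hypothetical, written only to illustrate the pattern.

```python
from dataclasses import dataclass
from typing import Protocol, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class Pose:
    position: Vec3
    orientation: Tuple[float, float, float, float]  # quaternion (x, y, z, w)


class MotionController(Protocol):
    """Hypothetical interface: the only thing game code knows about."""

    def poll(self) -> Pose: ...


class FakeViveController:
    """Stand-in for one vendor's driver (returns a fixed, made-up pose)."""

    def poll(self) -> Pose:
        return Pose((0.1, 1.2, -0.3), (0.0, 0.0, 0.0, 1.0))


class FakeMoveController:
    """Stand-in for another vendor's driver (returns a fixed, made-up pose)."""

    def poll(self) -> Pose:
        return Pose((0.2, 1.0, -0.4), (0.0, 0.0, 0.0, 1.0))


def update_hand(controller: MotionController) -> Vec3:
    """Game code depends on the interface, never on a specific device."""
    return controller.poll().position


hands = [update_hand(c) for c in (FakeViveController(), FakeMoveController())]
```

The same `update_hand` function works with either device, which is the property that would let a late-shipping controller "simply work" once someone writes the equivalent of a driver class for it.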

Speaking about motion controllers, Golding asked developers to be careful with their users. And by "careful," he meant that users should be forced neither to make abrupt moves nor to keep their arms up for long periods of time. Motion controllers can leave players physically exhausted, and developers should be very aware of this issue, or users will lose interest in playing that way.

"Gamifying" interactions should be avoided; VR experiences must be created from scratch for VR and not adapted from traditional gaming. For example, shooters will have to be quite different from how they're presented today. Players don't want to be holding their weapon up all the time while playing, so the encounters with enemies can be intense, but afterward the players should be able to rest their arms and recover.

Another interesting feature is the data fusion from multiple sensors or controllers. For example, we could use the HTC Vive controllers in our hands and be tracked by Kinect, or wear a Perception Neuron sensor kit to capture our full body. That info will be translated to the user's avatar and can be useful not only to increase the feeling of presence, but also to interact with the world.
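One simple way to picture this kind of fusion is a confidence-weighted average of each joint's position across all the devices that report it. The sketch below assumes exactly that; the keynote did not describe Epic's actual method, and the function name, the data layout and the confidence numbers are illustrative assumptions.

```python
from collections import defaultdict


def fuse_joints(sources):
    """Fuse joint positions from several trackers.

    sources: list of dicts mapping joint name -> ((x, y, z), confidence).
    Returns a dict mapping joint name -> confidence-weighted mean position.
    """
    # Per joint: weighted sums of x, y, z plus the total confidence.
    sums = defaultdict(lambda: [0.0, 0.0, 0.0, 0.0])
    for source in sources:
        for joint, (pos, conf) in source.items():
            acc = sums[joint]
            for i in range(3):
                acc[i] += pos[i] * conf
            acc[3] += conf
    return {
        joint: tuple(acc[i] / acc[3] for i in range(3))
        for joint, acc in sums.items()
        if acc[3] > 0
    }


# Hypothetical readings: the hand controller is trusted more for the hand,
# while the body tracker is the only source for the head.
hand_controllers = {"right_hand": ((0.5, 1.0, 0.25), 3.0)}
body_tracker = {
    "right_hand": ((0.75, 1.0, 0.25), 1.0),
    "head": ((0.0, 1.5, 0.0), 1.0),
}
avatar = fuse_joints([hand_controllers, body_tracker])
```

Joints seen by only one device pass through unchanged, and joints seen by several are pulled toward the more trusted reading. A production system would more likely use a filter that also accounts for timing and velocity.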

Animation poses can be used to trigger events, and this takes us to physics, which will be extremely important in VR. Today we are accustomed to seeing physics as something "cool" that enhances our experience by creating realistic debris on explosions or making trees move with the wind, but playing games in 1:1 scale in VR must force the game world to react as the real world does, or the magic of "presence" can be broken, just as Neo perceived glitches inside The Matrix. With motion controllers and full body avatars, the player can touch, throw, push, pull, cut, bend -- whatever action you can imagine. Hardware-accelerated physics will be needed to create realistic physics behavior that is really part of the gameplay, and not just cool FX.

Further, the UI must be completely rethought. Most of you will probably have seen that floating HUDs showing our health or ammo count feel a bit strange in VR, and Unreal encourages developers to use icons instead of text, although it will use the layers feature of virtual reality SDKs to render text at higher resolutions than the rest of the experience. This has already been implemented both by Oculus and Valve, and it was a logical step for Unreal Engine to embrace it.

Facial animation will also play a key role in VR, although it's still a ways off because the first wave of consumer products from Oculus, Sony and HTC will completely lack eye tracking or face capture capabilities. It's quite a difficult challenge, because your face will be covered by the HMD, but Golding thinks that facial animation will be instrumental for realistic social experiences. In the future, we should be able to play with our friends or share experiences with people around the globe, so we should see each other's faces and gestures. VR shouldn't be an isolating experience (that goes for you too, Nintendo) but a social one, and our adventure buddies must be able to see our expressions, our mouth, our pose and even what we do with each one of our fingers.

Last but not least, Epic Games is planning a complete overhaul of the audio subsystem in Unreal Engine to bring binaural quality and spatialization to virtual reality apps. People will be using headphones in VR, and we need much more advanced solutions than plain stereo. We have already seen some impressive HRTF demos like those based on the Oculus Audio SDK, and this SDK will be integrated into Unreal Engine, but it won't be tied to Oculus devices, so it should work even with HMDs from other manufacturers.
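For readers wondering what an HRTF captures that plain stereo panning does not, one of the cues is the tiny delay between a sound reaching one ear and the other. The sketch below estimates that interaural time difference with Woodworth's textbook spherical-head approximation. It is not how the Oculus Audio SDK or Unreal Engine computes anything; the head radius and speed of sound are assumed typical values.

```python
import math


def itd_seconds(azimuth_deg, head_radius_m=0.0875, speed_of_sound=343.0):
    """Interaural time difference for a distant source (Woodworth model).

    azimuth_deg: source angle from straight ahead, -90 (left) to 90 (right).
    Returns the delay in seconds; the sign follows the sign of the angle.
    """
    if not -90.0 <= azimuth_deg <= 90.0:
        raise ValueError("azimuth must be within [-90, 90] degrees")
    theta = math.radians(azimuth_deg)
    return (head_radius_m / speed_of_sound) * (theta + math.sin(theta))


straight_ahead = itd_seconds(0.0)
hard_right = itd_seconds(90.0)
```

With these assumed values, a source directly to one side arrives roughly two thirds of a millisecond earlier at the near ear, while a source straight ahead arrives at both ears at once. A full HRTF adds frequency-dependent filtering from the head and outer ear on top of this delay.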

There will be some extremely important audio design changes, and developers should use more and simpler audio sources to create a realistic audio landscape. And if point sources are not enough, Unreal will bring what it calls "area sources," which are useful for huge elements like a powerful dragon, a volcano, or simply a very big explosion.
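The keynote did not explain how area sources will be implemented, but one common way to approximate the idea is to treat the source as a volume and measure distance from the listener to the nearest point on it, rather than to its center. The following sketch assumes an axis-aligned box and a simple inverse-distance falloff; the function names and the `ref_distance` parameter are hypothetical.

```python
import math


def closest_point_on_box(listener, box_min, box_max):
    """Clamp the listener position to the box, axis by axis."""
    return tuple(
        min(max(p, lo), hi) for p, lo, hi in zip(listener, box_min, box_max)
    )


def area_source_gain(listener, box_min, box_max, ref_distance=1.0):
    """Inverse-distance gain measured from the nearest point of the volume.

    The gain stays at 1.0 inside the box and within ref_distance of it.
    """
    nearest = closest_point_on_box(listener, box_min, box_max)
    distance = math.dist(listener, nearest)
    return ref_distance / max(distance, ref_distance)


# A 20 m cube standing in for a very large emitter such as a volcano vent.
box_min, box_max = (-10.0, -10.0, -10.0), (10.0, 10.0, 10.0)
inside_gain = area_source_gain((0.0, 0.0, 0.0), box_min, box_max)
outside_gain = area_source_gain((14.0, 0.0, 0.0), box_min, box_max)
```

A listener standing inside the volume hears it at full level, and one standing 4 m from its surface hears it as if a point source were 4 m away, not 14 m as a center-based point source would suggest.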

But this is only the beginning, because physical interactions will play a key role in sound. 3D-accelerated audio was very important in the past, until Microsoft axed the hardware acceleration with Windows Vista (some hardware manufacturers may be to blame here, but that's another story). Unreal Engine will bring back past concepts like audio waveguides, similar to what Aureal created with its "wavetracing" technology back in 1998. (If you played the original Half-Life with an Aureal Vortex 2-based sound card, you will understand what I'm talking about.)

Basically, the sound is affected by the environment in a more complex way than simply enabling a reverb profile that depends on the area where the player is. The world geometry, the obstacles, the materials -- everything will affect every sound source, as well as the subtle movements from the player's head.

Golding said that realistic audio processing is even more difficult than correct light processing, and Epic still has a lot of R&D work to do before it works as it should. Thanks to today's powerful multicore CPUs, these calculations will be made in software and will debut in a brand new audio engine that will be available in 2016. But the best part is that it will be a "drop in" replacement for the current audio stack in Unreal Engine, so developers won't have to do anything to take advantage of these new and powerful features.

It's clear that Epic is investing heavily in virtual reality with Unreal Engine. Most of the new features and enhancements are a direct result of the special needs that this new medium will bring to the table in the coming years, and at Immersed Europe, we were given a small glimpse of what the future of electronic entertainment will be. 2016 is shaping up to be a truly remarkable year.

Update, 9/9/15, 7:25am PT: Fixed typo.

Epsilon_0EVP Finally we're getting binaural audio in games! I've been seeing a lot of demos of it recently, and I found it astounding that no major player has bothered to implement it for gaming yet when it is so superior in immersion. I was even considering writing my own audio plugin for Unreal Engine just to try to get it to be used more, but thankfully it seems the pros are going to be handling it now.

Kewlx25 I know exactly what you mean. Aureal was the BEST! Even the Vortex1 cards were great. Why did it take 20+ years to bring back something that already existed and was awesome?

Epsilon_0EVP From what I have heard, patents and licenses. Apparently Creative bought out Aureal, and then left the patents in a storage room somewhere. Hopefully that changes soon, though.