Report: Intel in Talks to Acquire AI Company Habana Labs for Over $1 Billion

Intel's AI acquisitions already include Movidius and Nervana.

Intel could be in talks to acquire deep learning chip start-up Habana Labs for a price tag of over $1 billion, according to a report Tuesday. It would be Intel’s third major artificial intelligence (AI) start-up acquisition after Movidius and Nervana in 2016.

The report from CTech, the technology outlet of Israeli publication Calcalist, cited an anonymous source "familiar with the matter." Intel is in “advanced negotiations”, according to the person, and the potential deal is valued at between $1 billion and $2 billion. This would make it Intel’s largest acquisition since the $15.3 billion deal to acquire Israel-based Mobileye in 2017.

Neither Intel nor Habana Labs responded to the rumor.

Habana Labs, one of many AI start-ups, was founded in 2016 by two former executives of 3D sensing company PrimeSense, which was acquired by Apple in 2013 for $360 million. Its goal is to create processors for deep learning training and inference with better performance, scalability, cost and power “from the ground-up.” The company has raised a total of $120 million and employs 120 people. The last funding round, in November 2018, raised $75 million and was led by Intel Capital. At the time, Habana's CEO said Intel had no plans for working in conjunction with Habana.

If the deal goes through, it would be a noteworthy addition to Intel’s portfolio. For the last several years, Intel has been investing in AI capabilities from edge to data center and CPU to ASIC (application-specific integrated circuit), as well as software enablement.

In 2016, Intel bought Movidius for edge and vision AI, and Movidius recently announced the Keem Bay VPU (vision processing unit). Also in 2016, Intel acquired Nervana (reportedly for $350 million) to enter the market for deep learning training chips. Intel also developed the NNP-I for inference in-house. Hence, a potential acquisition of Habana Labs could, surprisingly, indicate that Intel still sees gaps in its AI portfolio.

Gaudi: Habana's Deep Learning Training Chip

Habana’s training chip is called Gaudi (PDF). Similar to Nervana, its architecture is based on Tensor Processor Cores (TPCs). It has 32GB of HBM2 at 1 TBps and supports PCIe 4.0. It also supports popular DL numeric formats, such as INT8 and BF16.


A standout feature of Gaudi is its interconnect for scaling out to hundreds of chips. It is the first AI chip with engines for Remote Direct Memory Access (RDMA) over Converged Ethernet, with a bi-directional throughput of 2 Tbps (via up to ten 100 Gbps Ethernet ports). RDMA means that a chip can access any other chip’s memory directly (without involving the operating system). The advantage of Ethernet is that switches are readily available, and there is no need for PCIe switches or dedicated NICs.

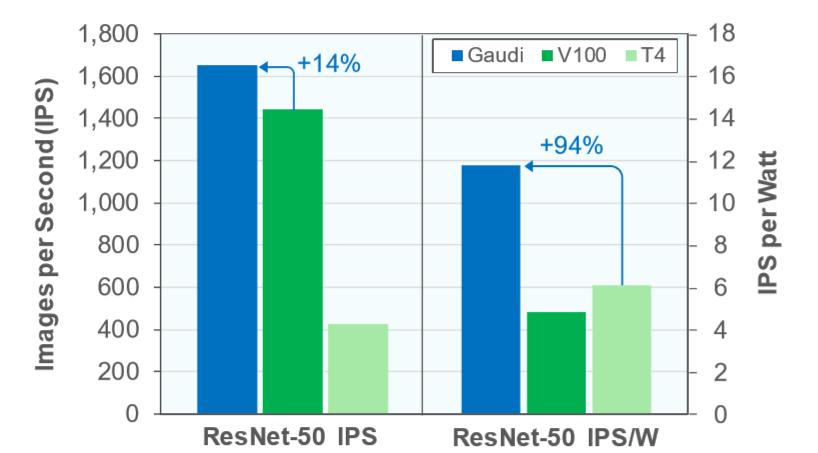

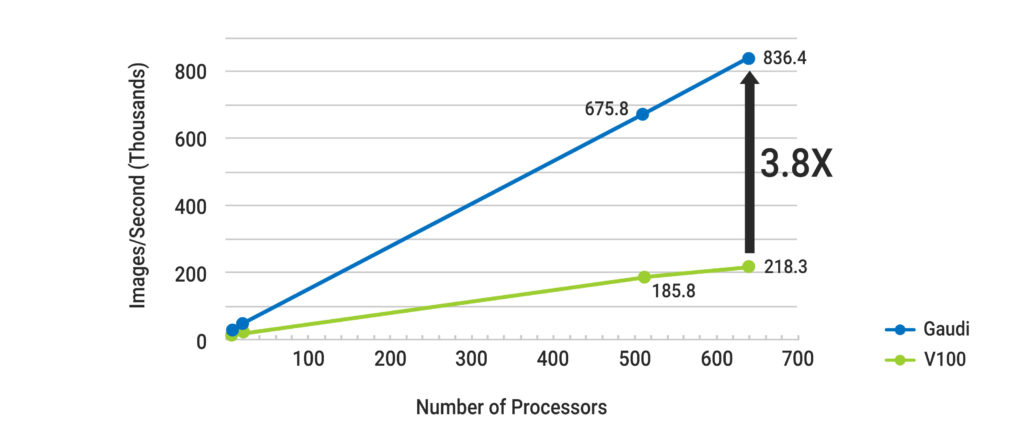

In terms of performance, one 140W TDP chip has a throughput of 1,650 images per second in ResNet-50, 14% higher than Nvidia’s Tesla V100 at half the power consumption. This works out to more than twice the number of images per second per watt. The difference becomes even larger as the number of chips scales. Based on MLPerf v0.5, 640 chips achieve a throughput of 845.4 thousand images per second, whereas the V100-based system with 80 DGX-1s reached 218.3 thousand images per second. A 512-chip Gaudi system has a relative efficiency of 80% compared to under 30% for a V100 system.
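The arithmetic behind those claims can be checked with a rough sketch like the one below. It rests on a few assumptions that are not stated in the article: the V100's power draw is taken as exactly twice Gaudi's 140W (from "half the power consumption"), each DGX-1 is counted as eight V100 GPUs, and the 640-chip Gaudi result is read as 845.4 thousand images per second, since 640 chips at 1,650 images per second each cannot exceed 1,056,000.

```python
# Figures quoted in the article.
GAUDI_IMG_S = 1_650          # ResNet-50 images/s for one Gaudi chip
GAUDI_TDP_W = 140            # Gaudi TDP in watts
GAUDI_VS_V100 = 1.14         # Gaudi is 14% faster than one Tesla V100

# Assumptions (not stated in the article).
V100_POWER_W = 2 * GAUDI_TDP_W   # "half the power consumption" taken literally
GPUS_PER_DGX1 = 8                # a DGX-1 holds eight V100 GPUs
GAUDI_640_IMG_S = 845_400        # 640-chip result read as 845.4 thousand images/s
V100_SYSTEM_IMG_S = 218_300      # 80 DGX-1 systems, as quoted

# Single-chip comparison.
v100_img_s = GAUDI_IMG_S / GAUDI_VS_V100
gaudi_per_watt = GAUDI_IMG_S / GAUDI_TDP_W
v100_per_watt = v100_img_s / V100_POWER_W
per_watt_ratio = gaudi_per_watt / v100_per_watt


def scaling_efficiency(system_img_s, chips, single_chip_img_s):
    """Measured throughput as a fraction of perfect linear scaling."""
    return system_img_s / (chips * single_chip_img_s)


gaudi_eff = scaling_efficiency(GAUDI_640_IMG_S, 640, GAUDI_IMG_S)
v100_eff = scaling_efficiency(V100_SYSTEM_IMG_S, 80 * GPUS_PER_DGX1, v100_img_s)

print(f"Implied V100 throughput: {v100_img_s:.0f} images/s")
print(f"Images/s per watt, Gaudi vs. V100: {per_watt_ratio:.2f}x")
print(f"Gaudi scaling efficiency at 640 chips: {gaudi_eff:.0%}")
print(f"V100 scaling efficiency at 640 GPUs: {v100_eff:.0%}")
```

Under these assumptions, the per-watt advantage comes out at roughly 2.3x, the 640-chip Gaudi system lands at about 80% of linear scaling, and the V100 system at well under 30%, which lines up with the figures quoted above.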

Goya: Deep Learning Inference Chip

Habana also claims to have the first commercially available deep learning inference processor with its HL-1000 Goya (PDF) chip. Exact details are scarce, but it features fully programmable VLIW TPCs and supports all major frameworks.

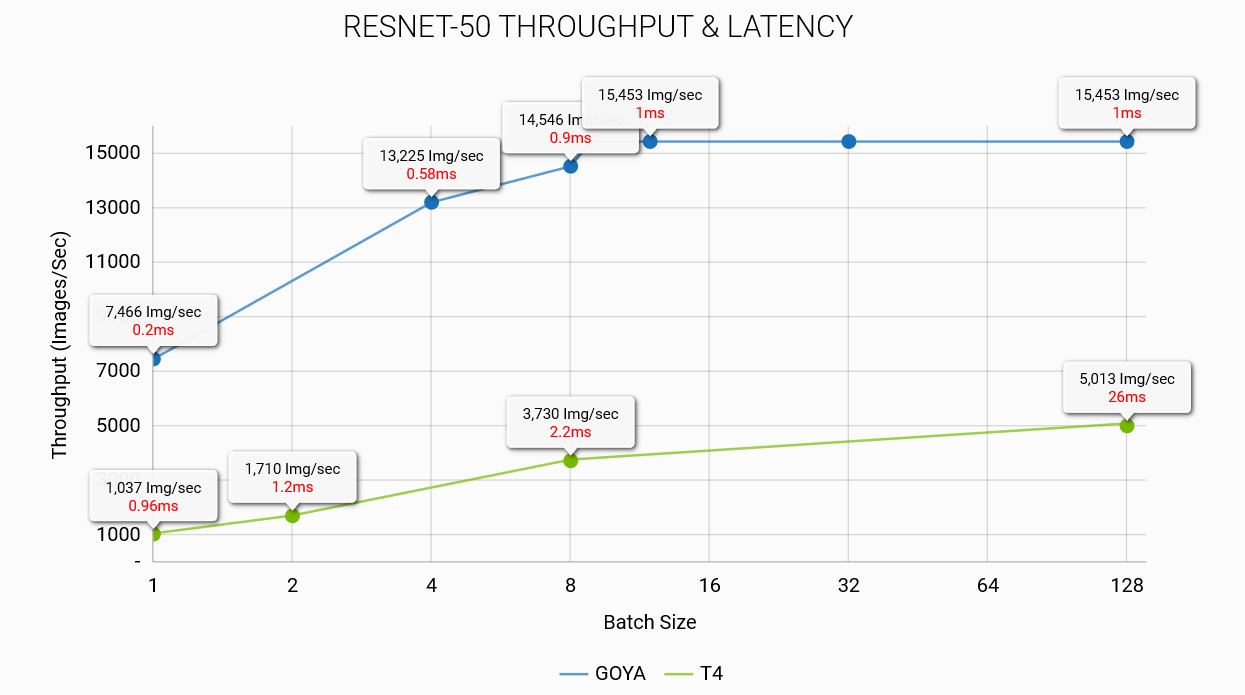

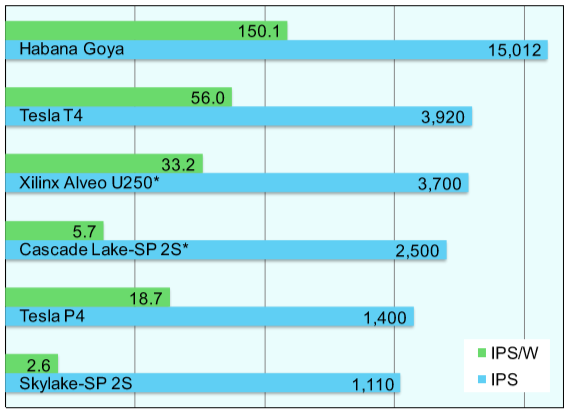

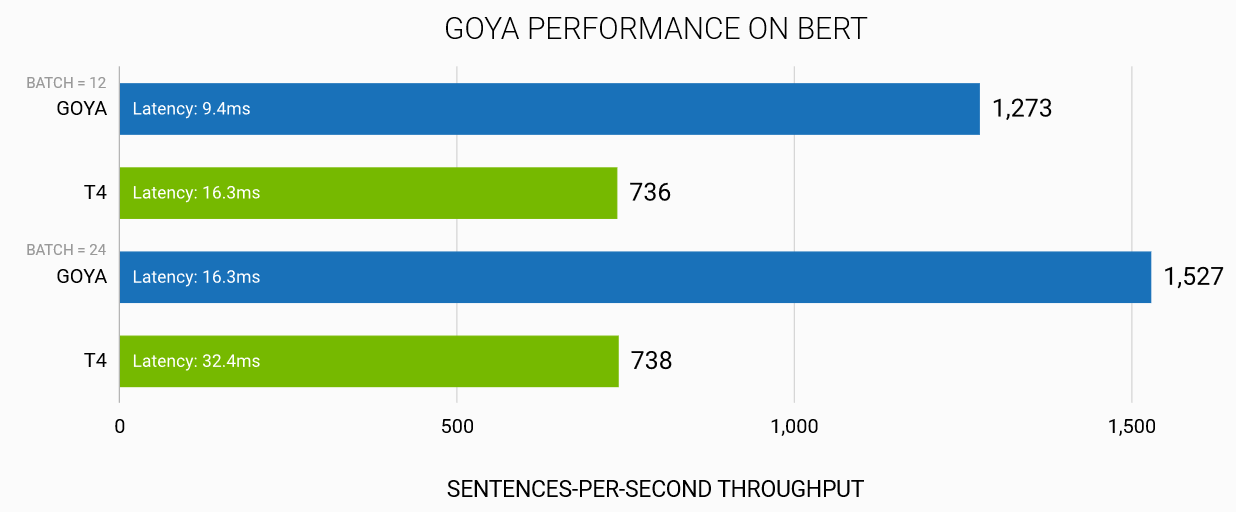

Habana claims Goya has up to three times the performance and twice the efficiency of Nvidia’s Tesla T4 while also having much lower latency.