No, Raja Koduri Didn't Say Intel's Discrete GPUs Will Debut at $200

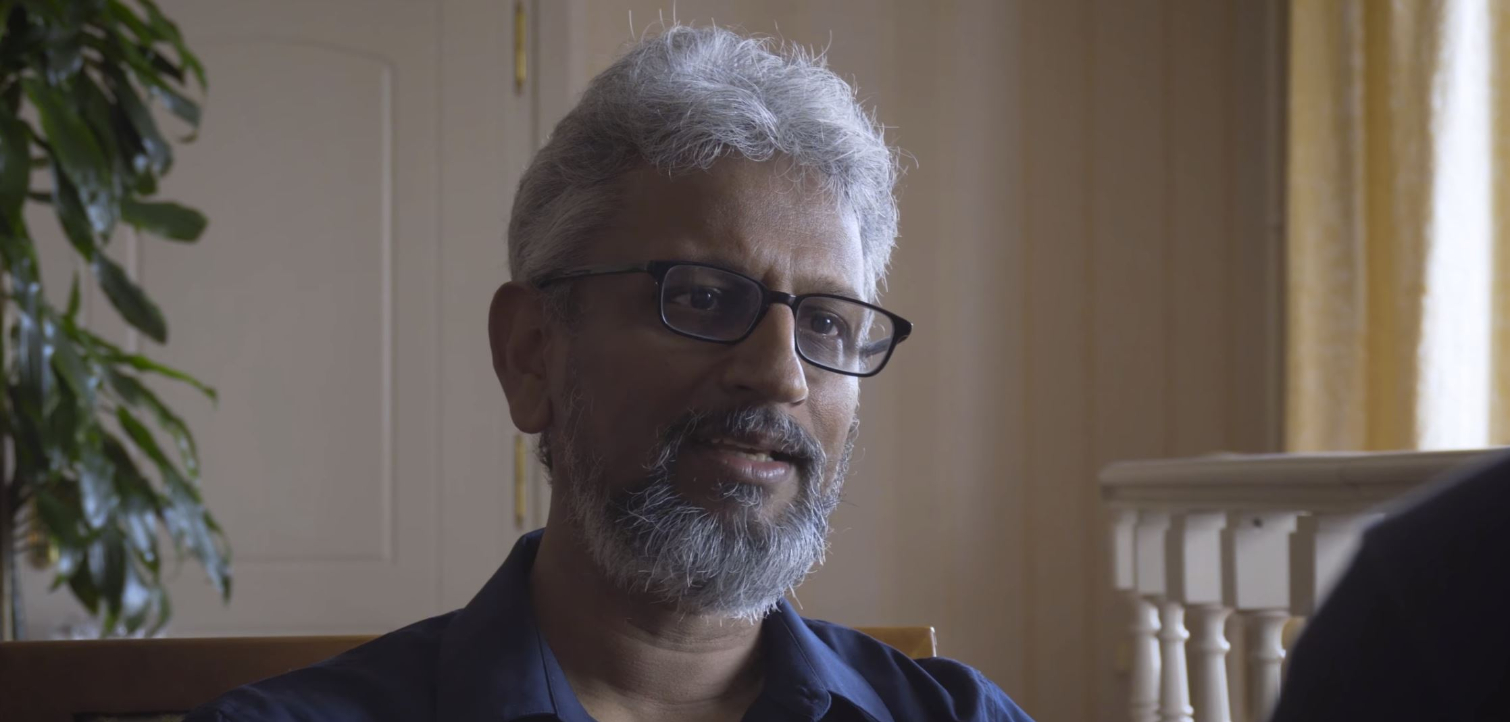

Russian YouTube channel Pro Hi-Tech posted a video claiming that Raja Koduri, Intel's chief architect and senior vice president of Architecture, Software, and Graphics, said that the company would launch its first discrete graphics card at the $200 price point. After a bit of fact-checking, we found that the comments attributed to Koduri are not accurate.

Nvidia and AMD have dominated the discrete graphics card market for the last 20 years, so Intel's announcement last year that it would enter the discrete gaming GPU arena has been met with plenty of excitement. Intel is still holding its cards close to its chest on many of the finer details of its impending Xe graphics architecture, so almost any fresh information is newsworthy, but the information in the interview seemed dubious at best.

Pro Hi-Tech is a Russian outlet, so it isn't surprising that the sit-down interview with Koduri is in Russian, but the channel chose to dub over Koduri's comments with a translation that renders Koduri's actual comments, spoken in English, undecipherable.

YouTube's poor auto-translation doesn't make a lot of sense, but most news coverage of the exchange is based on this translation posted to Reddit:

Our strategy revolves around price, not performance. First are GPUs for everyone at 200$ price, then the same architecture but with the higher amount of HBM memory for data centers. <...> Our strategy in 2-3 years is to release whole family of GPUs from integrated graphics and popular discrete graphics to data centers gpus.

Unfortunately, that translation is based on the translator's dubbed-over audio, not on Koduri's actual statements.

We followed up with Intel to see if Koduri actually made the statements attributed to him, and the company responded that Koduri's comments "had been misconstrued and/or lost in translation." Intel provided us with audio of Koduri's actual comments, which we've transcribed here for reference:

"Not everybody will buy a $500-$600 card, but there are enough people buying those too – so that's a great market. So the strategy we're taking is we're not really worried about the performance range, the cost range and all because eventually our architecture, as I've publicly said, has to hit from mainstream, which starts even around $100, all the way to Data Center-class graphics with HBM memories and all, which will be expensive. We have to hit everything; it's just a matter of where do you start? The First one? The Second one? The Third one? And the strategy that we have within a period of roughly – let's call it 2-3 years – to have the full stack." - Raja Koduri

Koduri's comments mirror Intel's prior disclosures that the Xe graphics architecture, which will scale from integrated graphics chips on processors up to discrete mid-range, enthusiast, and data center/AI cards, will attack every price segment. Intel says it will split these graphics solutions into two distinct architectures, with both integrated and discrete graphics cards for the consumer market (client), and discrete cards for the data center. Intel also says the cards will be built on the 10nm process and arrive in 2020.

As with any new product family, we can expect an extended series of launches to build out the full stack, which Koduri says will take two to three years.

Intel hasn't announced what price ranges the first discrete GPUs will launch at, or which types of GPUs will hit shelves first. It's logical to expect Intel to hit the mid-range price points first, but it could also choose to let the high-end variants out into the wild first. Only time will tell.

Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.

jimmysmitty I would doubt Intel would come out swinging like that but what a market shakeup it would be if Intel were to enter at equal performance for a hundred or so less than the competition.

One can only dream...

paul prochnow

admin said: After a bit of fact-checking, we found that Raja Koduri did not say that Intel's discrete graphics cards will not launch for $200.

Well, no REPLIES. It is better to say nothing, than something at all, in a critical manner, at times.

NightHawkRMX Nvidia has stagnated in GPUs in my mind. The 2080 offers equal performance to the 1080ti while having 3gb less vram and costing more money! What a steal! Sure they have RTX, but by the time this feature is relevant, original 20 series cards won't be relevant.

This leaves AMD and intel room to swoop in and get some market share.

I just watched an interesting video from gamer meld. Gamer meld said Intel did say all of their GPUs for data center, aib cards, and igpus would be based on one architecture.

AMD did this with GCN and it turned out to be a limitation, hence why gaming GPUs are now based on RDNA and everything else is still GCN.

Also, I saw something from Cortex that said (paraphrased) the 5700 die is tiny and can overclock to well over 2ghz with unlocked power. So it makes sense AMD would enlarge the die and add a bunch more stream processors. If they shipped this GPU with the 2ghz we know it can hit, it would have incredible performance.

jimmysmitty

remixislandmusic said: Nvidia has stagnated in GPUs in my mind. The 2080 offers equal performance to the 1080ti while having 3gb less vram and costing more money! What a steal! Sure they have RTX, but by the time this feature is relevant, original 20 series cards won't be relevant.

This leaves AMD and intel room to swoop in and get some market share.

I just watched an interesting video from gamer meld. Gamer meld said Intel did say all of their GPUs for data center, aib cards, and igpus would be based on one architecture.

AMD did this with GCN and it turned out to be a limitation, hence why gaming GPUs are now based on RDNA and everything else is still GCN.

Also, I saw something from Cortex that said (paraphrased) the 5700 die is tiny and can overclock to well over 2ghz with unlocked power. So it makes sense AMD would enlarge the die and add a bunch more stream processors. If they shipped this GPU with the 2ghz we know it can hit, it would have incredible performance.

Most GPUs are based on similar uArchs. Nvidias are typically uArchs in the HPC world trickled down to consumer. We never got Volta, except the Titan V, but RTX cards are similar to Volta just without the high speed interconnects and such.

Also the current RDNA, as far as I can find, is still closely tied to GCN. If that's so, it has an SPU limit of 4096, which is what Vega 64 has currently. Having more is not always the answer either. Nvidia has had fewer SPUs most generations while performing better.

I think the reason Navi is not clocked that high though is due to how much power draw it would take. Wouldn't look good if it sucked vastly more power.

Intel is not a beginner in GPUs either. They have a ton of GPU patents. They just are newish to the discrete GPU market. If they leverage their patents and process technology correctly and get their driver team up to par they have a good shot at competing with both AMD and nVidia. I read somewhere once that Intel has the largest software team, even larger than Microsoft.

I am all for three full on competitors for GPUs. I just want to see top end GPUs back down to $500 bucks like my 9700 Pro and 9800XT were.

NightHawkRMX

jimmysmitty said: I think the reason Navi is not clocked that high though is due to how much power draw it would take.

And AMD couldn't sell the cards with the same old crappy blower. Although I don't think they will for the higher end Navi cards AMD confirmed are in the works.

jimmysmitty

remixislandmusic said: And AMD couldn't sell the cards with the same old crappy blower. Although i dont think they will for the higher end navi cards AMD confirmed are in the works.

My assumption on those cards is going to be more SPUs rather than higher clocks and possibly a Vega 64/56 replacement with less HBM than the Radeon VII instead of GDDR6 like the 5700 has for more memory bandwidth. But that will also up the cost quite a bit if so.

jimmysmitty Yea they jumped the bandwagon on that. As my boss always says you want to be on the leading edge not the bleeding edge. Bleeding edge always adds costs and you are basically the guinea pig.

NightHawkRMX

jimmysmitty said: Yea they jumped the bandwagon on that. As my boss always says you want to be on the leading edge not the bleeding edge. Bleeding edge always adds costs and you are basically the guinea pig.

Reminds me of a little thing Nvidia did. Ya know, that thing called RTX.

Too little, too soon and too much at the same time.

Too little software support and too little performance caused by too soon of a launch. To add insult to injury, they also had too high of a cost.

jimmysmitty Which is why, even though I wanted one, I didn't buy a 2080. My plan is to wait till the next or following release. Although I think we need more than just nVidia in the hardware ray-tracing game before we see good results.