Microsoft Says It's Getting Easier to Buy Nvidia's AI GPUs

No more compute GPU shortages?

Kevin Scott, chief technology officer of Microsoft, recently said at the Code Conference in Dana Point, California, that procuring Nvidia's compute GPUs for artificial intelligence and high-performance computing applications is now less challenging than it was a few months back.

"Demand was far exceeding the supply of GPU capacity that the whole ecosystem could produce," Scott said in an interview with The Verge. "That is resolving. It is still tight, but it is getting better every week, and we have got more good news ahead of us than bad on that front, which is great. […] It is easier now than when we talked last time."

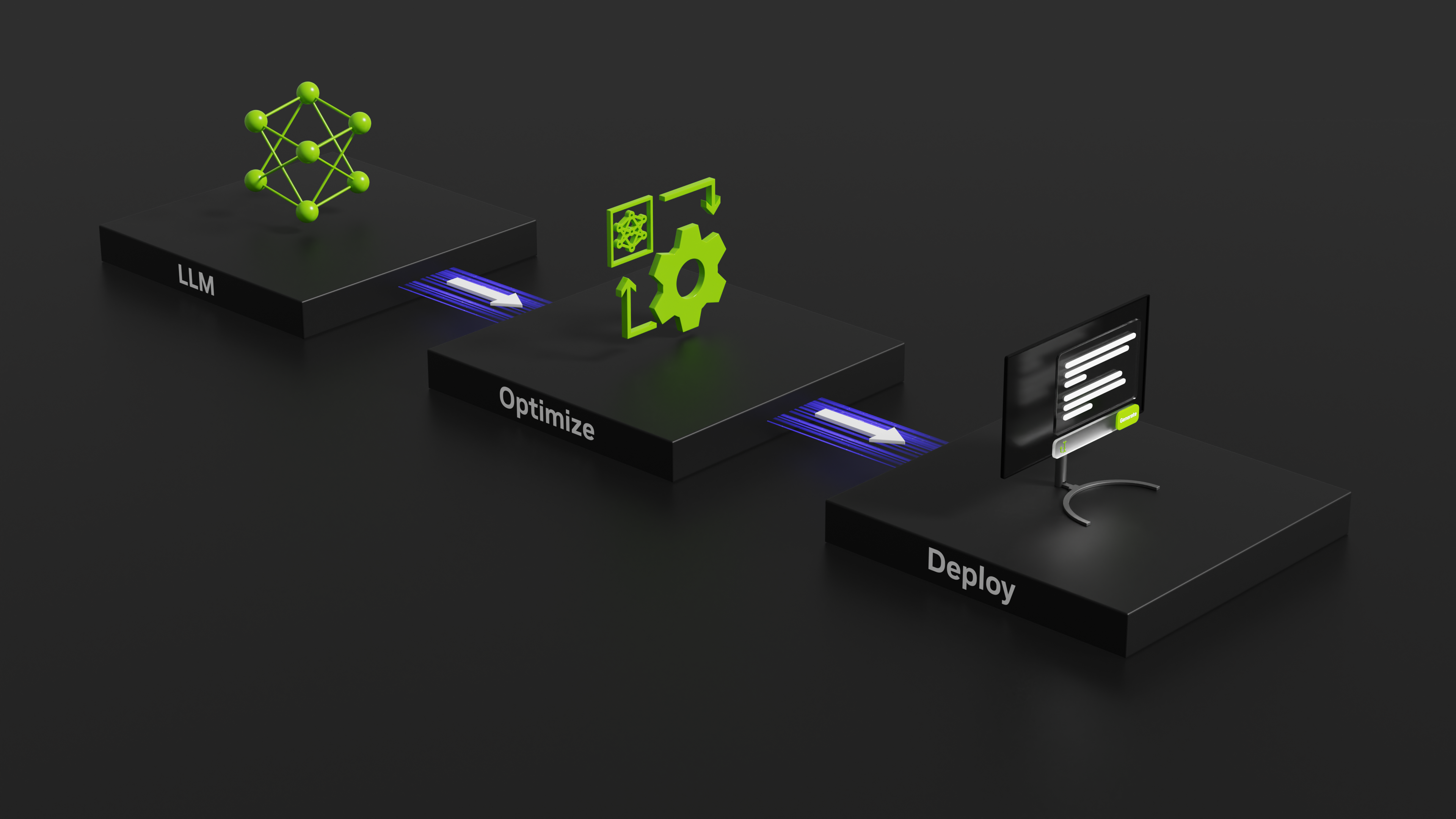

The massive demand for Nvidia's graphics chips was driven by major tech corporations, including Microsoft, integrating generative AI into their offerings. Such services primarily hinged on Nvidia's compute GPUs to train large language models and run inference, leading to a noticeable supply crunch. Microsoft and its counterparts' reliance on these chips led Nvidia to witness a considerable revenue spike and a stock price increase of 190% in 2023.

Scott, who manages GPU allocations at Microsoft, described the role as daunting over the past few quarters. However, he observed that the availability of Nvidia's compute GPUs is steadily improving, which has eased his job somewhat, especially as the AI technology landscape continues to evolve.

On a recent earnings call, Nvidia said it intends to increase supply throughout the coming year, a sentiment echoed by its chief financial officer, Colette Kress. Meanwhile, ChatGPT's user traffic has decreased for three straight months. Microsoft's Azure platform supports OpenAI, the company behind ChatGPT, by providing cloud-computing infrastructure.

On in-house AI processor development, Scott declined to comment on rumors that Microsoft is building its own custom silicon for AI workloads. He did, however, emphasize the company's significant efforts in silicon. For the past several years, Nvidia has remained the top choice for Microsoft's AI needs, the company's CTO admitted.

"I am not confirming anything, but I will say that we have got a pretty substantial silicon investment that we have had for years," Scott said. "And the thing that we will do is we will make sure that we are making the best choices for how we build these systems, using whatever options we have available. And the best option that’s been available during the last handful of years has been Nvidia."


Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.


hannibal: Nvidia has been moving production capacity away from gaming GPUs towards AI GPUs... That is starting to show here!