Nvidia Doubles Down On Embedded AI With New Jetson TX2 Chip

Nvidia unveiled the Jetson TX2, which the company will target at customers who want “AI at the edge,” or in other words, embedded artificial intelligence that can be put into robots, smart cameras, and other IoT devices.

AI At The Edge

Nvidia has reaped many rewards for creating powerful chips that can be used to train advanced neural networks, which are described as “artificial intelligence” (AI) today. However, cloud-provided AI is not always needed or even wanted on most IoT devices that require only basic intelligence and vision capabilities.

As we saw last year with Movidius’ Fathom stick, there are already specialized vision processors that can offer such capabilities, and we’re only going to see more of them. In fact, some of the latest high-end mobile chips we’ve seen announced recently, such as the Samsung Exynos 8895 and MediaTek’s Helio X30, come with embedded vision processors. These chips will start offering enhanced computational photography at first, but developers will likely be able to expand their use beyond that.

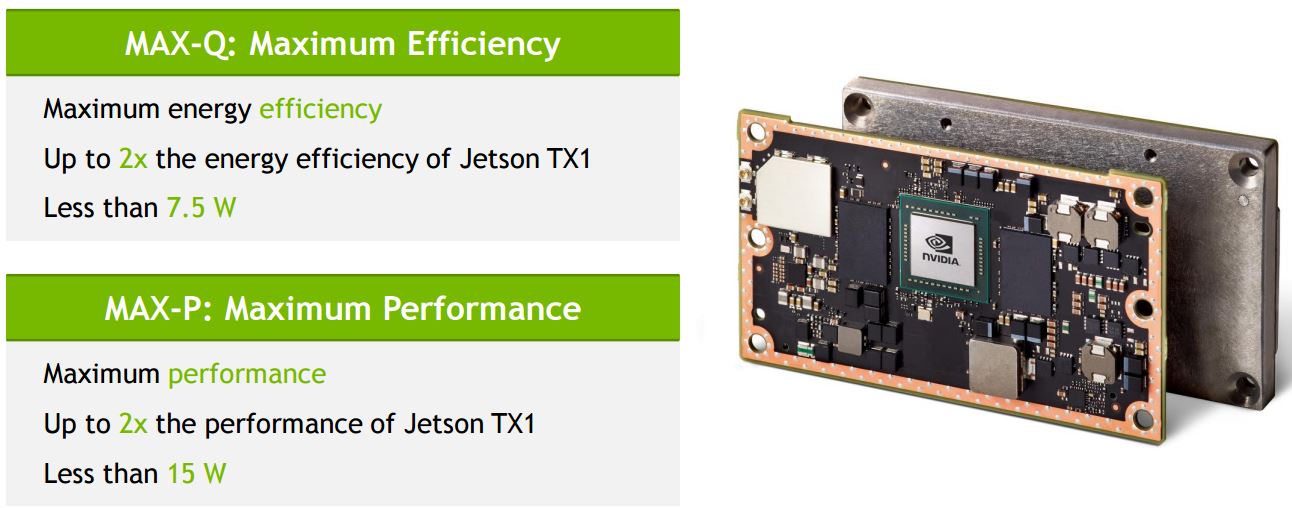

Nvidia is now trying to stay ahead of this competition with its own Jetson TX2 processor, which promises dual-mode operation for embedded devices: a high-performance mode and a high-efficiency mode. The chip can run at either double the performance of the previous-generation Jetson TX1, or at similar performance to the TX1 at half the power (7.5W).

Nvidia seems to have realized that some customers are happy with a lower-power chip, even if it comes with lower performance, so the new Jetson TX2 module should offer them a little more flexibility.

At half the power (and performance similar to the Jetson TX1), the Jetson TX2 can still enable “edge devices” to do smart things such as image classification, navigation, and voice recognition.
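To make the trade-off concrete, here is a rough back-of-the-envelope sketch of the performance-per-watt arithmetic. Only the 7.5W figure comes from Nvidia's announcement. The 15W values are an assumption inferred from the "half the power" claim, performance is normalized so that the Jetson TX1 equals 1.0, and the `Mode` class and its field names are purely illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Mode:
    """One operating point of a module (illustrative, not an Nvidia API)."""
    name: str
    relative_perf: float  # normalized so that Jetson TX1 = 1.0
    watts: float

    @property
    def perf_per_watt(self) -> float:
        return self.relative_perf / self.watts


# ASSUMPTION: 15 W is inferred from "half the power (7.5W)"; it is not a quoted spec.
TX1 = Mode("Jetson TX1", relative_perf=1.0, watts=15.0)
TX2_EFFICIENT = Mode("Jetson TX2 (high efficiency)", relative_perf=1.0, watts=7.5)
TX2_FAST = Mode("Jetson TX2 (high performance)", relative_perf=2.0, watts=15.0)


def efficiency_gain(mode: Mode, baseline: Mode = TX1) -> float:
    """Return how many times more work per watt `mode` does than `baseline`."""
    return mode.perf_per_watt / baseline.perf_per_watt


if __name__ == "__main__":
    for mode in (TX2_EFFICIENT, TX2_FAST):
        print(f"{mode.name}: {efficiency_gain(mode):.1f}x perf/W vs. TX1")
```

Under these assumptions, both modes come out at roughly twice the TX1's performance per watt. The difference is whether the gain is spent on speed or on a smaller power budget.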

Jetson TX2 Specs

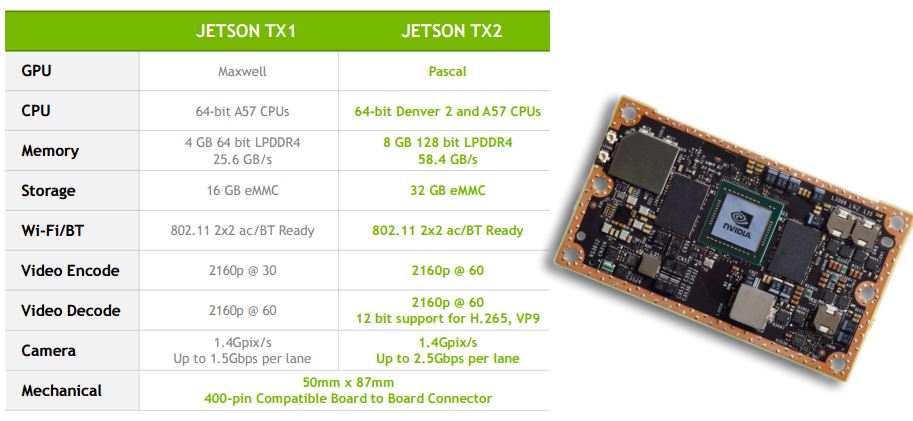

Nvidia seems to have stuck with a Cortex-A57 CPU for this generation, too, just like it did for the Jetson TX1. However, it added two “Denver 2” cores, as well. The company hasn’t given too many details about Denver 2 yet, other than the fact that the whole chip can deliver “50 to 100 percent higher multi-core CPU performance than other mobile processors.”


The configuration seems to be similar to what we saw last year in the “Parker” module, which is at the heart of the company’s autonomous driving systems (Drive PX 2 comes with two Parker modules).

Nvidia has also doubled the amount of RAM (from 4GB to 8GB) and storage (from 16GB to 32GB), and it has increased the bandwidth of the six supported cameras by about two-thirds (from 1.4 Gpix/s/lane, or 1.5 Gbps/lane, to 2.5 Gbps/lane).
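As a quick sanity check on those generational jumps, the ratios can be worked out directly. This is a minimal sketch that takes the figures quoted above at face value. The `SPECS` table and the `upgrade_ratios` helper are illustrative names, not anything provided by Nvidia.

```python
# Figures as quoted in the article: (Jetson TX1 value, Jetson TX2 value).
SPECS = {
    "RAM (GB)": (4, 8),
    "Storage (GB)": (16, 32),
    "Camera bandwidth (Gbps/lane)": (1.5, 2.5),
}


def upgrade_ratios(specs):
    """Map each spec name to its TX2-over-TX1 ratio."""
    return {name: new / old for name, (old, new) in specs.items()}


if __name__ == "__main__":
    for name, ratio in upgrade_ratios(SPECS).items():
        print(f"{name}: {ratio:.2f}x")
```

RAM and storage come out at exactly 2x, while the per-lane camera figure works out to about 1.67x.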

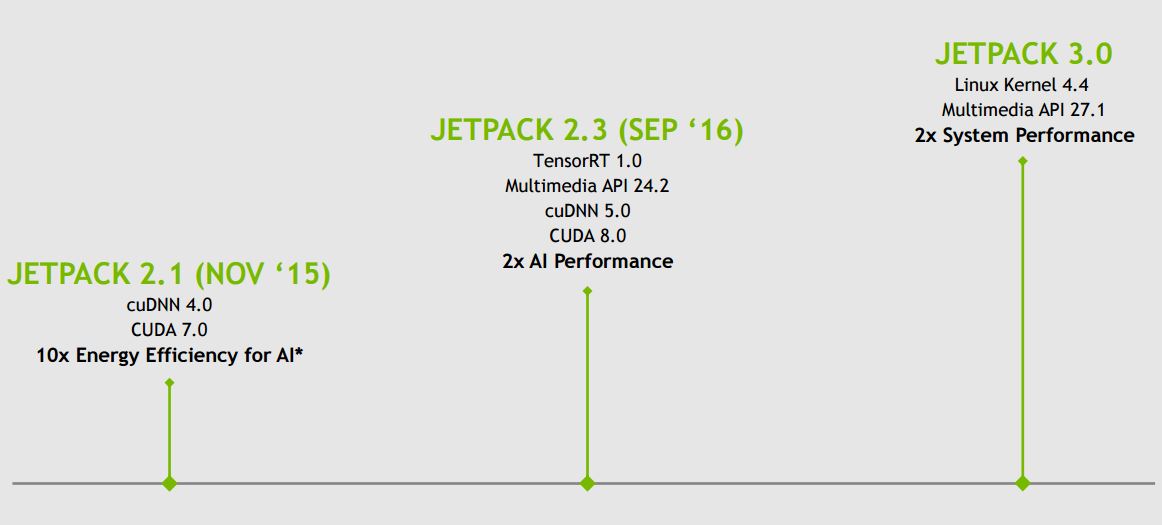

Jetpack 3.0 Doubles System Performance

Nvidia, as the primary beneficiary of the machine learning boom, knows that software can be just as important as the hardware. Last year, the company launched Jetpack 2.3, which promised double the inference performance on the same chip. Nvidia is now also claiming twice the system performance with Jetpack 3.0, due to an optimized Linux 4.4 kernel and a new multimedia API.

The Jetpack 3.0 SDK supports multiple software libraries, frameworks, and APIs that are relevant to machine learning, including:

- TensorRT: Nvidia’s high-performance neural network inference engine
- cuDNN 5.1: a GPU-accelerated library of primitives for deep neural networks
- VisionWorks 1.6: a software development package for computer vision and image processing
- The latest graphics APIs: Vulkan 1.0, OpenGL 4.5, OpenGL ES 3.2, and EGL 1.4
- CUDA 8: Nvidia’s parallel computing platform that allows its GPUs to do general purpose computation

Availability

The Jetson TX2 developer kit, which includes the carrier board and the Jetson TX2 module, can be pre-ordered in the U.S. and Europe for $599, starting today, and it will ship on March 14. The kit will be available in other regions in the coming weeks.

The production-ready Jetson TX2 will be available in Q2 for $399, in quantities of 1,000 units or more, from Nvidia and its distributors.

Lucian Armasu is a Contributing Writer for Tom's Hardware US. He covers software news and the issues surrounding privacy and security.