How Has Nvidia Managed to Push 60Hz 4K Over HDMI 1.4?

Nvidia has used an old trick to compress video images in order to get a 60 Hz 4K signal over the limited HDMI 1.4 interfaces on Kepler cards.

Normally, in order to run a 4K signal over HDMI at 60 Hz, you'd need an HDMI 2.0 connection. 'Normally' isn't really the right word though, as you cannot yet buy any graphics cards with an HDMI 2.0 output. However, it seems that the GeForce 340.43 Beta driver release has made it possible to push 4K at 60 Hz out of Kepler-based cards, even though they only have HDMI 1.4 outputs.

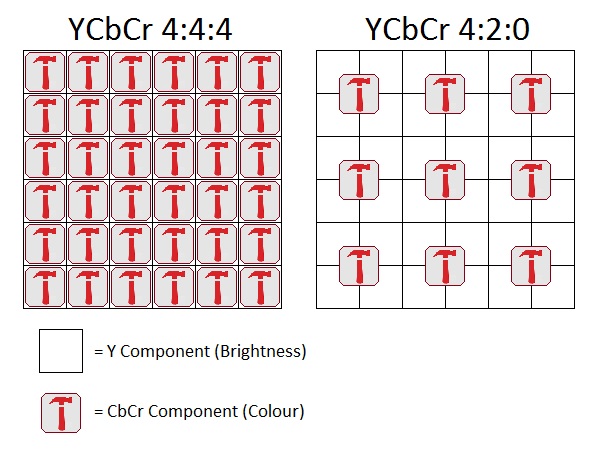

So, what's the trick? The answer is chroma subsampling. Rather than using the standard YCbCr 4:4:4 or RGB sampling, Nvidia set the system to use 4:2:0 sampling. The image retains the same brightness information, but the colour information is reduced by sharing one colour sample across each block of four pixels. With only a quarter of the colour information, the signal carries roughly half as much data overall. As a result, Nvidia has managed to squeeze an entire 4K 60 Hz signal over the 8.2 Gb/s bandwidth available to HDMI 1.4 interfaces.
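To put rough numbers on that, here is a back-of-the-envelope sketch in Python. It assumes 8 bits per sample and ignores blanking intervals, so a real link needs somewhat more than these figures; the function and constant names are purely illustrative.

```python
# Rough data rates for 3840x2160 at 60 Hz with 8 bits per sample.
# Assumption: blanking intervals are ignored, so real requirements are higher.

WIDTH, HEIGHT, REFRESH_HZ, BITS_PER_SAMPLE = 3840, 2160, 60, 8
HDMI_1_4_GBPS = 8.2  # bandwidth figure quoted in the article

# Average samples per pixel: one luma sample for every pixel, plus two
# chroma samples (Cb and Cr) shared by a group of pixels.
SAMPLES_PER_PIXEL = {
    "4:4:4": 1 + 2 / 1,  # chroma stored for every pixel
    "4:2:0": 1 + 2 / 4,  # chroma shared by a 2x2 block of pixels
}


def data_rate_gbps(scheme: str) -> float:
    """Return the active-pixel data rate in Gb/s for a sampling scheme."""
    bits_per_pixel = SAMPLES_PER_PIXEL[scheme] * BITS_PER_SAMPLE
    return WIDTH * HEIGHT * REFRESH_HZ * bits_per_pixel / 1e9


for scheme in SAMPLES_PER_PIXEL:
    rate = data_rate_gbps(scheme)
    verdict = "fits" if rate <= HDMI_1_4_GBPS else "does not fit"
    print(f"{scheme}: {rate:.2f} Gb/s ({verdict} in {HDMI_1_4_GBPS} Gb/s)")
```

Under these assumptions, 4:4:4 comes out at about 11.94 Gb/s and 4:2:0 at about 5.97 Gb/s, which is why only the subsampled signal squeezes through.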

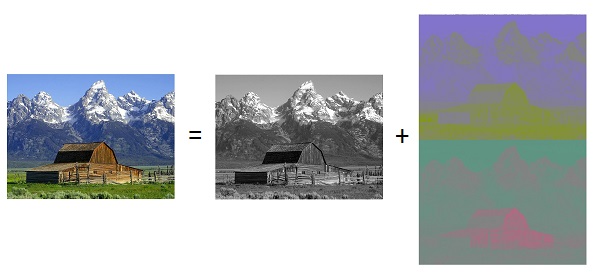

Of course, this does come at a cost. Take a look at the image above. On the left, we've got a 4:4:4 sample with all of the colour and brightness information. On the right, we have the 4:2:0 sample with only a quarter of the colour information. Because all of the brightness information is still present, each pixel in the resulting image still has its own brightness value. However, because the colour resolution is cut to a quarter, neighbouring pixels have to share colour, so there will still be distortions. This isn't as noticeable with moving pictures, but too much information is lost when it comes to fine detail such as text. Text won't appear clearly and a lot of colours will look very distorted, so using this for a normal desktop environment will be awful.
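A small Python sketch makes the effect on thin, text-like detail concrete. It assumes the chroma is reduced by plainly averaging each 2x2 block and rebuilt by repeating each sample; actual GPUs and displays may use different filters, and the helper names here are made up for illustration.

```python
def subsample_420(chroma):
    """Average each 2x2 block of a chroma plane (even width and height assumed)."""
    height, width = len(chroma), len(chroma[0])
    return [
        [
            (chroma[y][x] + chroma[y][x + 1]
             + chroma[y + 1][x] + chroma[y + 1][x + 1]) / 4
            for x in range(0, width, 2)
        ]
        for y in range(0, height, 2)
    ]


def upsample_nearest(chroma, factor=2):
    """Rebuild a full-size plane by repeating each chroma sample over its block."""
    out = []
    for row in chroma:
        wide = [value for value in row for _ in range(factor)]
        out.extend(list(wide) for _ in range(factor))
    return out


# A 4x4 Cr plane: neutral background (128) with a one-pixel-wide red stroke
# in column 1, much like the thin stem of a coloured letter.
cr = [[128, 240, 128, 128] for _ in range(4)]

restored = upsample_nearest(subsample_420(cr))
for row in restored:
    print(row)  # each row prints [184.0, 184.0, 128.0, 128.0]
```

The one-pixel stroke comes back two pixels wide and at half its original strength, which is the kind of smearing that makes coloured text look wrong while leaving video largely unaffected.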

YCbCr is a way of transmitting images where the luminance (Y) and the blue-difference and red-difference chroma components (Cb and Cr) are transmitted separately, as you can see in the image below. Separating brightness from colour doesn't save bandwidth by itself: with 4:4:4 sampling every pixel keeps its full colour information, so you won't notice a difference. The saving is possible because the human eye is a lot more sensitive to brightness than to colour, which allows the chroma to be sent at a lower resolution. When 4:2:0 sampling is used, significantly less colour information is transmitted. Using the two images provided (and maybe some imagination), you can see why that's not that big a deal for video (a lot of video files are even encoded with 4:2:0 in order to reduce the file size, simply because you'll hardly notice a difference), but it will be for text.
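For the curious, this is roughly what the split into Y, Cb and Cr looks like in Python. The sketch assumes the BT.709 luma coefficients and full-range 8-bit values with no clamping; the article does not say which matrix or range Nvidia's driver uses, so treat those choices as assumptions.

```python
# Assumption: BT.709 luma coefficients, full-range 8-bit values, no clamping.
KR, KB = 0.2126, 0.0722
KG = 1 - KR - KB


def rgb_to_ycbcr(r, g, b):
    """Convert one RGB pixel to (Y, Cb, Cr) with chroma centred on 128."""
    y = KR * r + KG * g + KB * b          # weighted brightness
    cb = 128 + (b - y) / (2 * (1 - KB))   # blue-difference chroma
    cr = 128 + (r - y) / (2 * (1 - KR))   # red-difference chroma
    return y, cb, cr


for name, rgb in [("grey", (128, 128, 128)), ("red", (255, 0, 0)), ("blue", (0, 0, 255))]:
    y, cb, cr = rgb_to_ycbcr(*rgb)
    print(f"{name}: Y={y:.1f} Cb={cb:.1f} Cr={cr:.1f}")
```

A neutral grey ends up with both chroma values at the midpoint, so all of its information sits in Y. That is the channel 4:2:0 leaves untouched.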

Despite the loss in quality, this is a fairly effective solution for those in need. If you run the desktop at 30 Hz you’ll keep the real estate and colour accuracy, while running your games and videos at 60 Hz will mean you’ll keep the smoothness and gain 4K sharpness. It won't be perfect, but it'll be a step in the right direction.

Alternatively, you can use DisplayPort, as DisplayPort 1.2 supports a 3840x2160 resolution at 60 Hz. Nvidia's solution really should only be considered if the display you're using does not have a DisplayPort input, as is the case with some TVs.
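As a rough comparison of the two links, the Python sketch below estimates usable bandwidth from lane count and per-lane rate. The lane rates (3.4 Gb/s on each of HDMI 1.4's three data channels, 5.4 Gb/s on each of DisplayPort 1.2's four lanes) and the 8b/10b coding overhead are not from the article. They are assumptions taken from the published specifications, and blanking is again ignored.

```python
def payload_gbps(lanes, gbps_per_lane, data_bits=8, line_bits=10):
    """Usable bandwidth after 8b/10b-style line coding (assumed for both links)."""
    return lanes * gbps_per_lane * data_bits / line_bits


hdmi_1_4 = payload_gbps(lanes=3, gbps_per_lane=3.4)  # three TMDS data channels
dp_1_2 = payload_gbps(lanes=4, gbps_per_lane=5.4)    # four HBR2 lanes

# Full-colour (4:4:4, 8 bits per sample) 4K at 60 Hz, active pixels only.
uhd60_full_colour = 3840 * 2160 * 60 * 24 / 1e9

print(f"HDMI 1.4 payload:       {hdmi_1_4:.2f} Gb/s")
print(f"DisplayPort 1.2 payload: {dp_1_2:.2f} Gb/s")
print(f"4K60 4:4:4 needs about:  {uhd60_full_colour:.2f} Gb/s")
```

On these assumptions, DisplayPort 1.2 has room for the full-colour signal, while HDMI 1.4 lands at the roughly 8.2 Gb/s quoted above and does not.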



Niels Broekhuijsen is a Contributing Writer for Tom's Hardware US. He reviews cases, water cooling and pc builds.

-

Wisecracker So ...

nVidia is compressing the 4k packets 75% ? Wouldn't that be a 1K signal ??

:lol:

Kinda defeats the purpose of 4K, doesn't it?

-

Quarkzquarkz @WiseCracker, no not 75%. Keep in mind compression loss and resolution distortion are two completely different things. In this case, the sample packets are down from 4:4 to about half so you're essentially looking at a very poor 1440p. And not even 2k at that, you would expect a range between 1k and 2k and no one respects downsampling at any rate.

-

Wisecracker :lol: 36 pixels 'compressed' to 9 pixels = 4:1 = 75% reduction

Feel free to rationalize that any way you wish in your defense of pseudo "Voodoo 4k"

-

DarkSable

> :lol: 36 pixels 'compressed' to 9 pixels = 4:1 = 75% reduction
>
> Feel free to rationalize that any way you wish in your defense of pseudo "Voodoo 4k"

If you had read his post you would have seen he was simply correcting your misinformation, not trying to defend anything.

-

Ikepuska I object to the use of "a lot of video files are even encoded with the 4:2:0 preset in order to reduce the file size". In fact the use of chroma subsampling is a STANDARD and MOST video files including the ones on your commercially produced Blu-ray movies were encoded using it.

This is actually a really useful corner case for things like HTPCs or if you have a 50ft HDMI to your TV or projector, because there really is no loss of fidelity. But for desktop use it's just a gimmick.

-

matt_b Well, at least on the surface Nvidia has a superior marketing claim here; no doubt that's all they care about anyway. Just let display port and HDMI v2.0 take over and do it right, no sense in milking the old standard that can't.

-

Keyrock42 Anyone hooking up a 4K TV or monitor really should do their research and make sure it has a display port input (why some 4k TVs or monitors are even manufactured without a display port input is beyond me). That said, it's nice that there is at least a dirty hack like this available for those that need to connect to a 4K TV/Monitor via HDMI. It's far from ideal, but better than nothing, I guess.

-

thundervore

> And so the moral of the story is don't buy a 4k TV / Monitor without display port.

If using a computer monitor then yes, Display Port wins here at 4K, but if connecting to a 4K TV then using Display Port is not an option as I have yet to see a TV with Display Port.

edit:

Looks like they are making TVs with Display Port after all. Didn't think this was going to happen any time soon.

http://www.panasonic.com/au/consumer/tvs-projectors/led-lcd-tvs/th-l65wt600a.html