Nvidia Clears Up 8K DLSS Upscaling With GeForce RTX 3090

You didn't really expect native 8K at 60 fps, right?

During its GeForce RTX 3090 announcement, Nvidia spent some time hyping up 8K gaming. What's it going to take to run 8K at reasonable frame rates? That's a huge can of worms, and besides one of the best graphics cards, there are a few other requirements. Nvidia provided additional details on 8K gaming and DLSS, which is what we're focused on here.

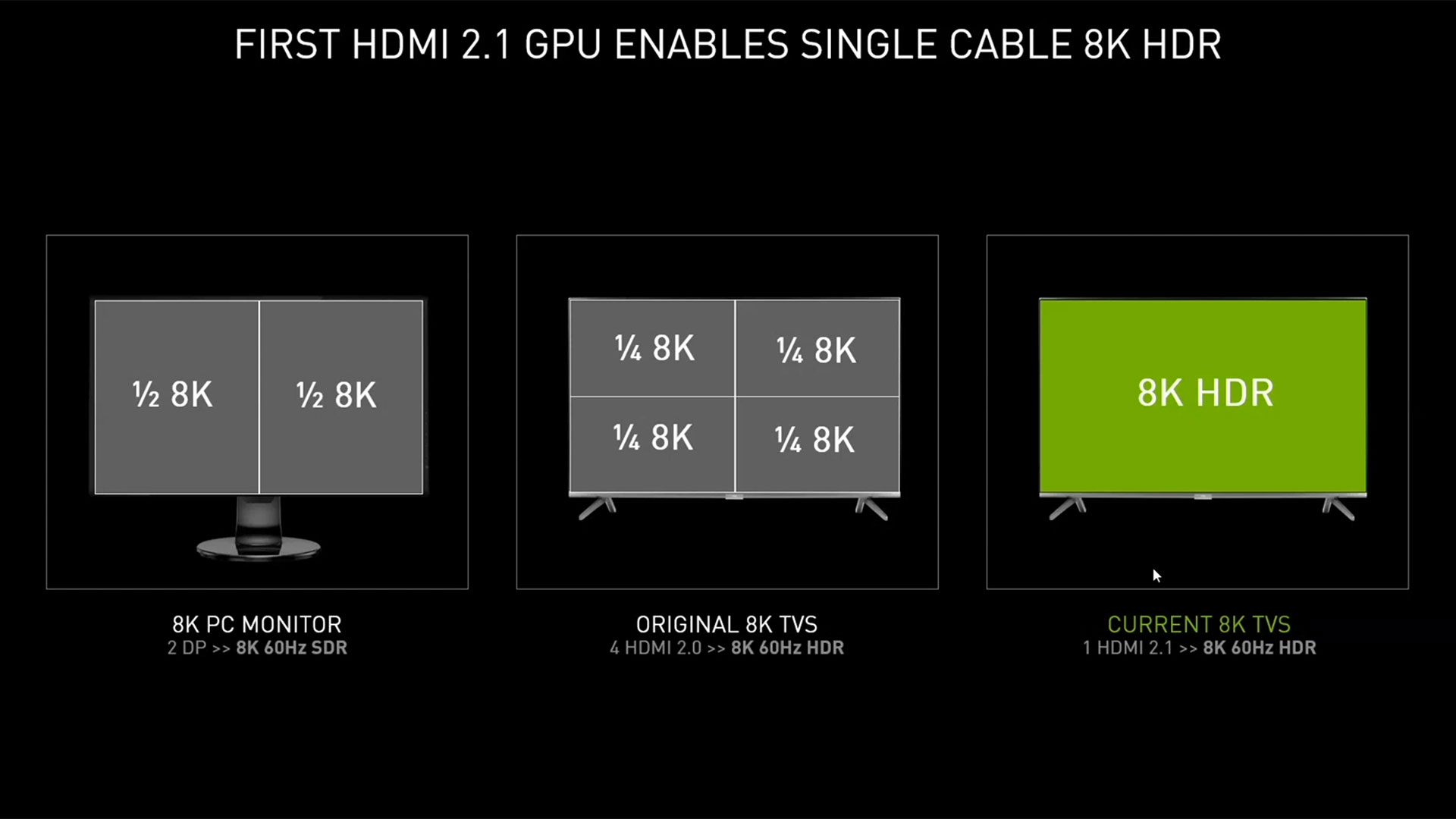

First, let's address the elephant in the room. To play games at 8K, you need an 8K display. Technically you could use one of the existing 8K monitor solutions that use dual DisplayPort inputs, or even one of the original 8K TVs that used quad HDMI 2.0 inputs. That's messy and prone to various issues, but the newer 8K TVs support HDMI 2.1, which allows a single cable to drive 8K at 60Hz in HDR mode.

Such TVs start at around $2,500, and that's not even a proper 8K implementation. There are a bunch of restrictions on which HDMI ports support 2.1, what video codecs can be used, and so forth. Higher-end 8K TVs can easily pass the $5,000 mark. To be blunt: If you can afford an 8K TV, and you're willing to buy one, the price of the RTX 3090 probably isn't a major stumbling block.

Will you actually notice the difference between 4K and 8K, though? Maybe up close, but if you're sitting on a couch, we certainly question whether there will be any true difference to most of our eyes. That's a topic for another day. Let's talk about 8K gaming on Nvidia's new RTX 3090.

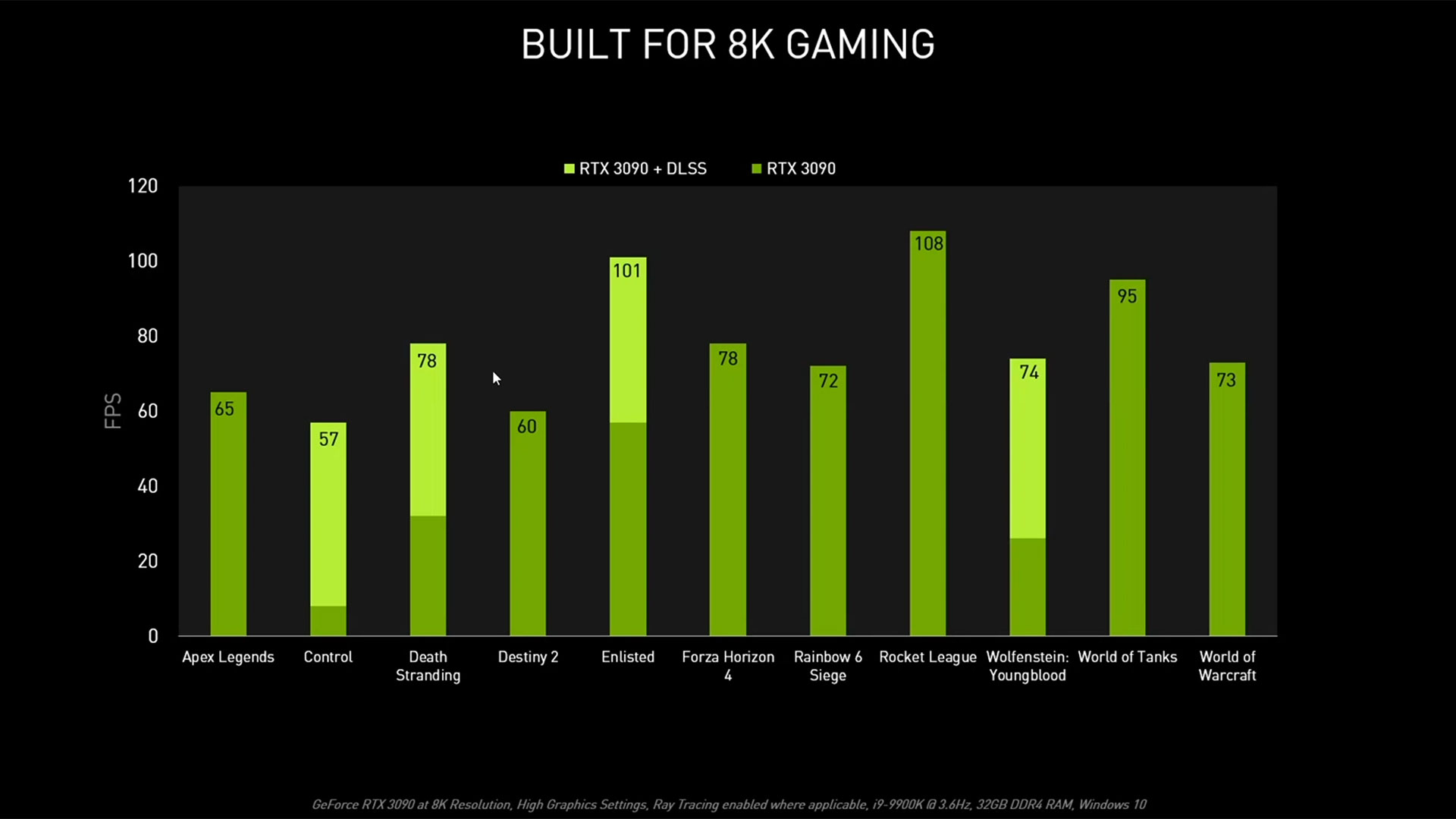

There are games where natively rendering at 8K is actually viable. They're the usual culprits: lighter fare like CSGO, League of Legends, maybe Overwatch. Nvidia also showed Apex Legends, Destiny 2, Rainbow Six Siege, World of Tanks, and World of Warcraft breaking 60 fps without any upscaling tricks, though it lists high graphics settings (i.e., not ultra), and none of those games support ray tracing effects.

What do you need to do for ray tracing at 8K?

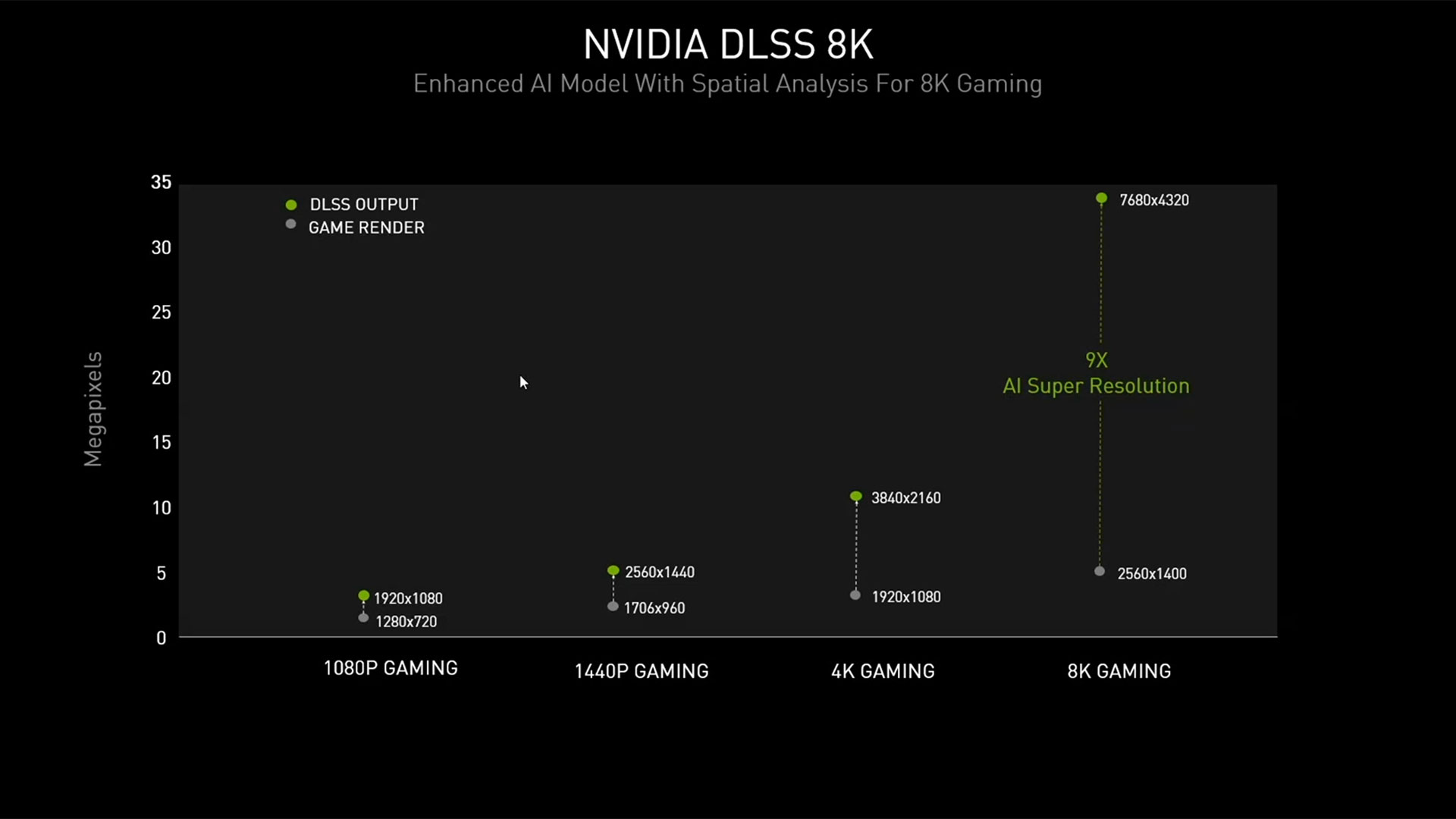

It's simple: You need DLSS. Nvidia also quietly updated DLSS to version 2.1, or at least the DLSS SDK is at 2.1 now. The new features for DLSS 2.1 include an "ultra performance mode" for 8K gaming, with up to 9X scaling. There's also support for DLSS in VR modes, and DLSS now has a dynamic scaling option so that it doesn't have to upscale from a fixed resolution.

The important bit is the 8K support and 9x scaling. To be clear, what Nvidia specifically means is that 8K DLSS gaming will render internally at 2560x1440 and then upscale that result to 7680x4320.

That's a pretty big leap from the 4X scaling in the 'DLSS performance mode' currently used in DLSS 2.0. Control, Death Stranding, Wolfenstein Youngblood, and other DLSS 2.0 games typically upscale 1920x1080 content to 4K when using the 'performance' option. There's a 'quality' option that upscales from a higher resolution (e.g., 2560x1440 to 4K). We've looked at this in a few games, and in Death Stranding with DLSS we could see a difference between the two rendering modes.
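To make the scaling numbers concrete, here's a quick Python sketch of the arithmetic. It's purely illustrative: the function name is made up for this example and has nothing to do with Nvidia's DLSS SDK. It simply divides the output pixel count by the internally rendered pixel count, using the resolutions quoted above.

```python
def pixel_scale_factor(render, output):
    """Ratio of output pixels to internally rendered pixels.

    Hypothetical helper for illustration only, not part of any DLSS API.
    """
    render_w, render_h = render
    output_w, output_h = output
    return (output_w * output_h) / (render_w * render_h)


# (render resolution, output resolution) pairs taken from the article text.
modes = {
    "8K ultra performance": ((2560, 1440), (7680, 4320)),
    "4K performance": ((1920, 1080), (3840, 2160)),
    "4K quality": ((2560, 1440), (3840, 2160)),
}

for name, (render, output) in modes.items():
    print(f"{name}: {pixel_scale_factor(render, output):.2f}x")
```

The 9x and 4x figures are pixel-count ratios, so each axis is only scaled by 3x and 2x, respectively. The 1440p-to-4K 'quality' example works out to 2.25x the pixels, or 1.5x per axis.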

How does 8K DLSS 'ultra performance' upscaling look compared to other possibilities? We haven't tried it ourselves, but circling back to what we said earlier, it probably looks really nice. Better than native 4K? On a couch, from ten feet away? I'm not sure my eyes would notice one way or the other, because the pixels at 4K are already so darn small.

Put another way, I'm using a 28-inch 4K G-Sync display right now. That works out to a pixel size of 0.161mm, and while it's mostly okay, I'd be more comfortable with something closer to 0.242mm (i.e., 2560x1440 on a 28-inch monitor). I have to use 150% DPI scaling to comfortably read most text, and that's from just three feet away. And I don't like using anything other than 100% DPI scaling if possible.

4K on a 65-inch display has a pixel size of 0.374mm, which is far larger, and 8K obviously cuts that in half, to 0.187mm ... but for a 65-inch TV I'm usually sitting ten feet away. For movies and video content, it's going to be hard to see the difference in clarity between 4K and 8K for a typical TV. For gaming content? I sure hope text and UI assets scale properly, or reading them will be quite difficult.
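If you want to check the pixel sizes for your own display, the math is simple. The sketch below assumes square pixels and a flat panel, and the function name is ours, picked for this example. It converts the diagonal to millimeters and divides by the diagonal length in pixels.

```python
import math


def pixel_pitch_mm(diagonal_in, width_px, height_px):
    """Approximate pixel pitch in millimeters, assuming square pixels."""
    diagonal_px = math.hypot(width_px, height_px)  # diagonal measured in pixels
    return diagonal_in * 25.4 / diagonal_px        # 25.4 mm per inch


# Display sizes and resolutions mentioned in the article.
displays = [
    ("28-inch 4K", 28, 3840, 2160),
    ("28-inch 1440p", 28, 2560, 1440),
    ("65-inch 4K", 65, 3840, 2160),
    ("65-inch 8K", 65, 7680, 4320),
]

for name, diagonal, width, height in displays:
    print(f"{name}: {pixel_pitch_mm(diagonal, width, height):.3f} mm")
```

The results should land within rounding of the figures quoted above. Doubling the resolution on the same panel size halves the pitch, which is why the 8K number is exactly half the 4K one.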

I could sit six feet away from a 65-inch 8K TV and get roughly the same field of view experience as sitting three feet away from a 28-inch 4K monitor, but without the ability to read text. Moving a 65-inch display to three feet would make 8K at 100% scaling usable, except then I'd get whiplash trying to take in everything happening on the screen if I tried to play a game.
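The couch comparison is easier to follow with angles than with millimeters. Here's a rough sketch under some stated assumptions: a flat 16:9 panel, the viewer centered on the screen, and helper names that are ours rather than anything standard. It estimates how much of your horizontal field of view the screen fills, and how large a single pixel looks from the seat, in arcminutes.

```python
import math


def screen_width_in(diagonal_in, aspect=(16, 9)):
    """Physical width in inches of a flat panel with the given aspect ratio."""
    w, h = aspect
    return diagonal_in * w / math.hypot(w, h)


def horizontal_fov_deg(diagonal_in, distance_in):
    """Horizontal angle the screen spans for a viewer centered in front of it."""
    half_width = screen_width_in(diagonal_in) / 2
    return math.degrees(2 * math.atan(half_width / distance_in))


def pixel_arcminutes(diagonal_in, width_px, distance_in):
    """Apparent size of one pixel, in arcminutes, at the viewing distance."""
    pitch_in = screen_width_in(diagonal_in) / width_px
    return math.degrees(math.atan(pitch_in / distance_in)) * 60


# 65-inch 8K TV at six feet vs. 28-inch 4K monitor at three feet.
setups = [
    ("65-inch 8K at 6 ft", 65, 7680, 72),
    ("28-inch 4K at 3 ft", 28, 3840, 36),
]

for name, diagonal, width_px, distance in setups:
    fov = horizontal_fov_deg(diagonal, distance)
    arcmin = pixel_arcminutes(diagonal, width_px, distance)
    print(f"{name}: {fov:.1f} deg wide, {arcmin:.2f} arcmin per pixel")
```

Under those assumptions, the two setups fill a broadly similar slice of your vision, but each 8K pixel looks a bit more than half as large as a pixel on the monitor. Text drawn at 100% scaling shrinks accordingly, which is the readability problem described above.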

Don't even get me started on what it would be like to try and use an 8K display at native scaling that has a 30-inch or smaller screen size. 300% scaling seems like it would be okay, except DPI scaling often doesn't work okay.

We're not saying no one wants 8K displays. If that's what you're after, go for it! We're also curious to see how the lesser GeForce RTX 3080 would do with 8K output using DLSS. Theoretically, it's only about 17% slower than the 3090, and certainly it should handle 1440p rendering without any problems. The same goes for the GeForce RTX 3070. Maybe the 8K upscaling requires more Tensor core power?

More likely is that Nvidia figures anyone who's willing to buy an 8K TV probably isn't worried about saving $800 on a graphics card. Meanwhile, the vast majority of gamers are still using 1080p displays, according to the Steam Hardware Survey.

Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.

nofanneeded

Upscaling from lower resolution into 8K resolution ? 5000$TV can do it natively , so why bother upscaling from the card itself ?

HyperMatrix

nofanneeded said: Upscaling from lower resolution into 8K resolution ? 5000$TV can do it natively , so why bother upscaling from the card itself ?

Because it's not upscaling in the way you're thinking. It's using Ai Tensor Cores to recreate the image using DLSS 2.0. Look up some videos. It quite often ends up looking even better than native resolution. Although there are a few bugs to be worked out due to over-sharpening of some elements. DLSS went from being an absolute joke with versions 1.0 through 1.9, to an absolutely amazing piece of tech in version 2.0. Night and day difference.

setx

HyperMatrix said: Because it's not upscaling in the way you're thinking. It's using Ai Tensor Cores to recreate the image using DLSS 2.0.

It's exactly plain old upscaling. Well known in image and video processing for years.

HyperMatrix said: It quite often ends up looking even better than native resolution.

That can only be true if your source is extremely bad.

nofanneeded said: Upscaling from lower resolution into 8K resolution ? 5000$TV can do it natively , so why bother upscaling from the card itself ?

Obviously, so they can claim to do "8k" rendering while doing only 2560x1440.

drivinfast247

nofanneeded said: Upscaling from lower resolution into 8K resolution ? 5000$TV can do it natively , so why bother upscaling from the card itself ?

Introduces additional lag.

HyperMatrix

setx said: It's exactly plain old upscaling. Well known in image and video processing for years.

No. It’s not. I don’t even have time to argue with this level of ignorance and arrogance.

Endymio

"That works out to a pixel size of 0.161mm, and while it's mostly okay, I'd be more comfortable with something closer to 0.242mm...I have to use 150% DPI scaling to comfortably read most text...I could sit six feet away from a 65-inch 8K TV and get roughly the same experience as sitting three feet away from a 28-inch 4K monitor."

The author is conflating two different situations here in a confused manner. Let's take situation 1: Display-constant FOV viewing, which encompasses most gaming and video situations. In this case, assuming equal frame rates of course, the smaller the pixel (the higher the ppi) the better. For these situations, the author's last statement is correct; his first statement incorrect.

The second case encompasses most computer monitor usage, where higher resolution expands display fov, and information content per unit area of display is constant. Here the reverse is true. An 8K display at six foot would render onscreen text or other objects 1/4 the size as a 4K display at 3 feet, for a far different viewing experience.

Endymio

setx said: It's exactly plain old upscaling. Well known in image and video processing for years.

There is no such thing as "plain old" upscaling, in the manner you mean. There are dozens of different algorithms used for upscaling, from a simple nearest-neighbor transform up through various interpolation schemes, ending in one of the newest and perhaps the most sophisticated approach: DLSS.

JarredWaltonGPU

Endymio said: The author is conflating two different situations here in a confused manner. Let's take situation 1: Display-constant FOV viewing, which encompasses most gaming and video situations. In this case, assuming equal frame rates of course, the smaller the pixel (the higher the ppi) the better. For these situations, the author's last statement is correct; his first statement incorrect. The second case encompasses most computer monitor usage, where higher resolution expands display fov, and information content per unit area of display is constant. Here the reverse is true. An 8K display at six foot would render onscreen text or other objects 1/4 the size as a 4K display at 3 feet, for a far different viewing experience.

Calling an opinion 'incorrect' is sort of silly, probably because you're trying to read an opinion as a factual statement. The pixel sizes are facts, yes; the experience of those pixel sizes is opinion. Was the opinion not expressed clearly enough? Probably. I'll go edit it to try and clarify exactly what I'm trying to say. (I need DLSS for my writing, sometimes.)

What I was trying to say is that, having used computers for many years (decades even), with modern LCDs for PC use, I find that once the pixel size drops below around 0.25mm, running at native 100% DPI with no other scaling makes stuff 'too small' for me to comfortably read at monitor viewing distances (of around three feet). And for various reasons, running Windows with DPI scaling at 100% is vastly preferable for me.

So, 4K on 28-inch displays is too small and I don't really see a major difference in clarity between 4K and 1440p on a 28-inch screen size at around three feet (for videos and some games). Obviously for Windows use there's more resolution, which means smaller windows and text, but if I open a movie, or a game that scales UI, assets, and everything else based off the resolution? Yeah, I'm not really going to notice the difference between a 28-inch native 1440p and 28-inch native 4K display. (Unless a game has zero anti-aliasing, in which case I'd definitely notice -- Project CARS 3 is a good example of this, in a bad way, because what game doesn't support post-process AA these days!?)

Take that a step further and give me a 28-inch 8K display. It will be useless at 100% DPI scaling, and I'd end up at 200% scaling, giving the same effective resolution as 4K in a lot of scenarios. But again, DPI scaling still breaks on a lot of apps, which means irritation. Even if the DPI scaling worked perfectly, however, as someone in my mid-40s, I can pretty much guarantee that 8K with 200% DPI scaling vs. 4K with 100% DPI scaling isn't going to matter to me. I really won't see the extra clarity of 8K. Maybe in my 20s that wouldn't have been true. Maybe. But definitely not now.

You're right that the second bit about 8K 65-inch at six feet vs. 4K 28-inch at 3 feet wasn't about resolution. It's about how much of my field of view the screens would occupy. To read text on an 8K display more or less comfortably at 100% scaling, though, it would have to be a 60-inch display with me sitting at three feet away. Or if I were 10 feet away, I'd need about a 180-inch display.

Endymio

JarredWaltonGPU said: Calling an opinion 'incorrect' is sort of silly....

With all due respect, I wasn't taking issue with your opinion, and I believe you've missed my point. You prefaced the pixel size remark with the question, "How does 8K DLSS upscaling look?" In the context of rendering fixed-scale fonts, pixel size does indeed matter, and your opinion is not only valid, but one that I (and most people, I believe) would agree with. But that's not DLSS.

In the context of DLSS or upscaling of any sort, a smaller pixel size never translates into a poorer experience. I may be being presumptuous, but I don't believe your opinion IS your opinion in that case. If you play a fixed-fov video game on a hypothetical 24" screen at 16K resolution, you would not find those microscopic pixels "too small" -- because the objects rendered in them would occupy your same field of view as when rendered at 4K, 2K, or 1080p.

By the way, may I belatedly congratulate you on your move here? I always found your columns in your prior home the highlight of the issue.