'Enhanced' Nvidia A100 GPUs appear in China's second-hand market — new cards surpass sanctioned counterparts with 7,936 CUDA cores and 96GB HBM2 memory

Nvidia's Ampere A100 was previously one of the top AI accelerators, before being dethroned by the newer Hopper H100 — not to mention the H200 and upcoming Blackwell GB200. It looks like the chipmaker may have experimented with an enhanced version that never hit the market, or perhaps companies have clandestinely modified the A100 to make it even faster in the wake of U.S. sanctions against China. X user Jiacheng Liu recently discovered various A100 prototypes in the Chinese second-hand market that flaunt substantially higher specifications than Nvidia's 'regular' A100.

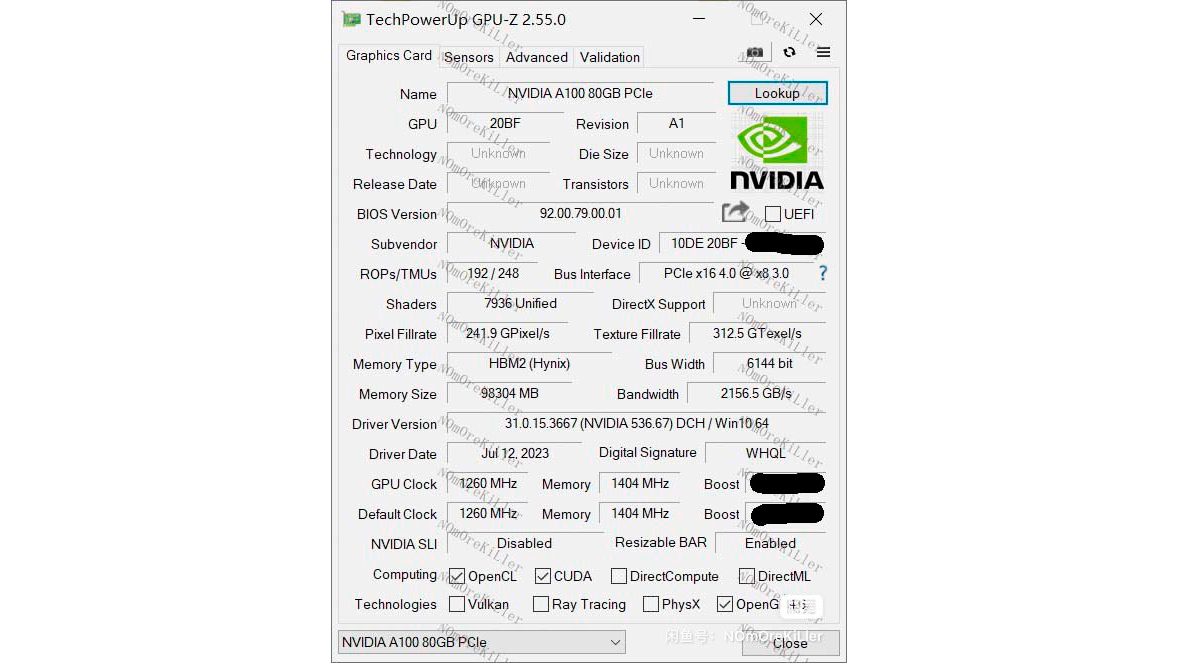

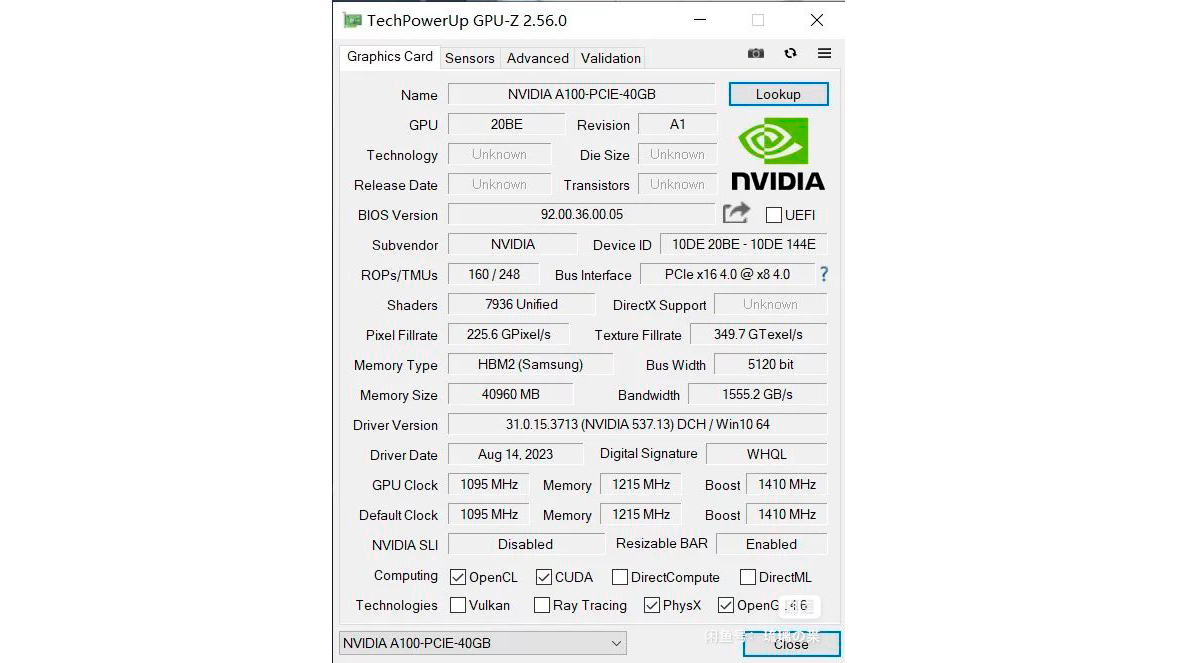

Despite the beefed-up attributes, the A100 7936SP (unofficial name, based on its having 7936 shader processors) shares the same GA100 Ampere die as the regular A100. However, the former has 124 enabled SMs (Streaming Multiprocessors) out of the possible 128 on the GA100 silicon. While it's not the maximum configuration, the A100 7936SP has 15% more CUDA cores than the standard A100, representing a significant performance uplift.

Tensor core counts likewise increase in proportion to the number of SMs. Having more enabled SMs thus means that the A100 7936SP also possesses more Tensor cores. Based on specs alone, the 15% increase in SM, CUDA, and Tensor core counts could similarly boost AI performance by 15%.
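The core-count arithmetic above can be checked with a short sketch. It assumes the GA100 layout of 64 FP32 CUDA cores and 4 Tensor cores per SM, and it scales the standard A100's 312 TFLOPS FP16 figure linearly with SM count at an unchanged boost clock. The function and constant names are illustrative only.

```python
# Assumed GA100 per-SM resources: 64 FP32 CUDA cores and 4 Tensor cores.
CUDA_CORES_PER_SM = 64
TENSOR_CORES_PER_SM = 4


def core_counts(sms):
    """Return CUDA and Tensor core totals for a given number of enabled SMs."""
    return {
        "cuda": sms * CUDA_CORES_PER_SM,
        "tensor": sms * TENSOR_CORES_PER_SM,
    }


def uplift_percent(new, old):
    """Relative increase of new over old, in percent."""
    return (new / old - 1) * 100


prototype = core_counts(124)  # A100 7936SP
standard = core_counts(108)   # regular A100
cuda_uplift = uplift_percent(prototype["cuda"], standard["cuda"])

# Assumption: FP16 throughput scales linearly with SM count at the same boost clock.
scaled_fp16_tflops = 312 * 124 / 108

print(prototype, standard)
print(f"CUDA core uplift: {cuda_uplift:.1f}%")
print(f"Scaled FP16 estimate: {scaled_fp16_tflops:.0f} TFLOPS")
```

Under these assumptions the uplift comes out just under 15%, and the scaled FP16 estimate matches the 358 TFLOPS listed for the 40GB prototype. Real-world gains would also depend on clocks, power limits, and memory bandwidth.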

Nvidia offers the A100 in 40GB and 80GB configurations. The A100 7936SP likewise comes in two variants. The A100 7936SP 40GB model flaunts a 59% higher base clock than the A100 40GB (1,215 MHz versus 765 MHz) while maintaining the same 1,410 MHz boost clock. On the other hand, the A100 7936SP 96GB shows an 18% faster base clock than the A100 80GB (1,260 MHz versus 1,065 MHz), and it also enables the sixth HBM2 stack to get to 96GB of total memory. Sadly, Chinese sellers have censored the 96GB model's boost clock speed from the GPU-Z screenshot.
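As a quick sanity check on the base-clock percentages, the sketch below uses the base clocks from this article's specification table and pairs each 7936SP variant with the standard A100 in the same capacity class. That pairing is an assumption made for the comparison, and the names are illustrative.

```python
# Base clocks in MHz, copied from the article's specification table.
BASE_CLOCK_MHZ = {
    "A100 7936SP 96GB": 1260,
    "A100 80GB": 1065,
    "A100 7936SP 40GB": 1215,
    "A100 40GB": 765,
}


def percent_faster(new_mhz, old_mhz):
    """Relative base-clock increase of new_mhz over old_mhz, in percent."""
    return (new_mhz / old_mhz - 1) * 100


gain_40gb = percent_faster(
    BASE_CLOCK_MHZ["A100 7936SP 40GB"], BASE_CLOCK_MHZ["A100 40GB"]
)
gain_96gb = percent_faster(
    BASE_CLOCK_MHZ["A100 7936SP 96GB"], BASE_CLOCK_MHZ["A100 80GB"]
)

print(f"40GB variant: {gain_40gb:.1f}% higher base clock")
print(f"96GB variant: {gain_96gb:.1f}% higher base clock")
```

The results round to roughly 59% and 18%.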

Nvidia A100 7936SP Specifications

| Graphics Card | A100 7936SP 96GB | A100 80GB | A100 7936SP 40GB | A100 40GB |
|---|---|---|---|---|
| Architecture | GA100 | GA100 | GA100 | GA100 |
| Process Technology | TSMC 7N | TSMC 7N | TSMC 7N | TSMC 7N |
| Transistors (Billion) | 54.2 | 54.2 | 54.2 | 54.2 |
| Die size (mm^2) | 826 | 826 | 826 | 826 |
| SMs | 124 | 108 | 124 | 108 |
| CUDA Cores | 7,936 | 6,912 | 7,936 | 6,912 |
| Tensor / AI Cores | 496 | 432 | 496 | 432 |
| Ray Tracing Cores | N/A | N/A | N/A | N/A |
| Base Clock (MHz) | 1,260 | 1,065 | 1,215 | 765 |
| Boost Clock (MHz) | ? | 1,410 | 1,410 | 1,410 |
| TFLOPS (FP16) | >320 | 312 | 358 | 312 |
| VRAM Speed (Gbps) | 2.8 | 3 | 2.4 | 2.4 |
| VRAM (GB) | 96 | 80 | 40 | 40 |
| VRAM Bus Width (Bit) | 6,144 | 5,120 | 5,120 | 5,120 |
| L2 (MB) | ? | 80 | ? | 40 |
| Render Output Units | 192 | 160 | 160 | 160 |
| Texture Mapping Units | 496 | 432 | 432 | 432 |
| Bandwidth (TB/s) | 2.16 | 1.94 | 1.56 | 1.56 |
| TDP (watts) | ? | 300 | ? | 250 |

The A100 7936SP 40GB memory subsystem is identical to the A100 40GB. The 40GB of HBM2 memory runs at 2.4 Gbps across a 5,120-bit memory interface using five HBM2 stacks. The design contributes to a maximum memory bandwidth of up to 1.56 TB/s. The A100 7936SP 96GB model, however, is the standout here. The graphics card has 20% more HBM2 memory than the 80GB that Nvidia offers, thanks to the sixth enabled HBM2 stack. Training very large language models can be memory intensive, so the added capacity would certainly come in handy for AI work.

The A100 7936SP 96GB appears to sport a revamped memory subsystem compared to the A100 80GB — the HBM2 memory checks in at 2.8 Gbps instead of 3 Gbps but resides on a wider 6144-bit memory bus to help make up the difference. This results in the A100 7936SP 96GB having approximately 11% more memory bandwidth than the A100 80GB.
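The bandwidth comparison can be sketched with the usual peak-bandwidth relation: per-pin data rate times bus width, divided by 8 bits per byte. The helper name below is illustrative, and the inputs are the rounded pin speeds from the table. Those are assumptions about how the listed figures were derived, so the results land close to, but not exactly on, the table's TB/s values.

```python
def peak_bandwidth_gbs(pin_speed_gbps, bus_width_bits):
    """Theoretical peak memory bandwidth in GB/s.

    pin_speed_gbps: per-pin data rate in Gbit/s
    bus_width_bits: total memory interface width in bits
    """
    return pin_speed_gbps * bus_width_bits / 8


bw_96gb = peak_bandwidth_gbs(2.8, 6144)  # A100 7936SP 96GB
bw_80gb = peak_bandwidth_gbs(3.0, 5120)  # A100 80GB
bw_40gb = peak_bandwidth_gbs(2.4, 5120)  # both 40GB models

# Uplift from the rounded pin speeds versus uplift from the table's TB/s values.
uplift_from_pins = (bw_96gb / bw_80gb - 1) * 100
uplift_from_table = (2.16 / 1.94 - 1) * 100

print(f"96GB: {bw_96gb:.0f} GB/s, 80GB: {bw_80gb:.0f} GB/s, 40GB: {bw_40gb:.0f} GB/s")
print(f"Uplift (rounded pin speeds): {uplift_from_pins:.1f}%")
print(f"Uplift (table values): {uplift_from_table:.1f}%")
```

Both routes put the 96GB model's advantage at roughly 11% to 12%. The small gap is most likely due to rounding in the listed pin speeds.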

The A100 40GB and 80GB have TDPs of 250W and 300W, respectively. Given the faster specifications, the A100 7936SP could have a higher TDP. However, the value isn't available from the shared GPU-Z screenshots. The engineering PCB has three 8-pin PCIe power connectors instead of the vanilla A100's single 8-pin PCIe power connector. Being an engineering prototype, the A100 7936SP may not use all three power connectors, but it should draw somewhat more power than the standard A100 due to the extra CUDA cores and HBM2 memory.

Many Chinese sellers are selling the A100 7936SP on eBay. The 96GB model ranges between $18,000 and $19,800. It's unknown if the accelerators are engineering samples that escaped Nvidia's lab, or if they're customized models that the chipmaker developed for a specific client. In any event, while the A100 may be subject to the latest U.S. export sanctions, those restrictions don't affect cards already within China.

Of course, there's no warranty or official driver support. While the A100 7936SP offers better performance than the A100 at the same or potentially lower price, purchasing a retail product or renting a GPU for all your AI needs is safer. But for the Chinese market, which can no longer import A100 GPUs, the added memory and compute are apparently worth considering.


Zhiye Liu is a news editor, memory reviewer, and SSD tester at Tom’s Hardware. Although he loves everything that’s hardware, he has a soft spot for CPUs, GPUs, and RAM.


It's unknown if the accelerators are engineering samples that escaped Nvidia's lab, or if they're customized models that the chipmaker developed for a specific client.

These were early prototype/test samples made for NVIDIA's GRID platform. To be specific, they are similar to the A100B GPU, if we do some digging based on the leaked specs and the GPU-Z screenshot.

https://www.techpowerup.com/gpu-specs/grid-a100b.c3578

https://www.techpowerup.com/gpu-specs/grid-a100a.c3579

Although the specs of these early prototype samples may seem a bit off compared with the A100B, the device ID is the same. It is listed under the GPU entry:

20BF and 20BE, as evident from the GPU-Z screen.

So these are some sort of variants of the A100B/A GPU.

https://devicehunt.com/view/type/pci/vendor/10DE/device/20BE

https://devicehunt.com/view/type/pci/vendor/10DE/device/20BF

Also, something seems a bit off in those GPU-Z specs and in the calculations mentioned in the article. The TMU count in the GPU-Z screenshot says 248! It should have been 432.

It says 20BE and 20BF under the "GPU" name entry, which corresponds to the device ID used by Nvidia. Some entries in GPU-Z are missing, though.

GRID A100B and GRID A100A.

https://devicehunt.com/view/type/pci/vendor/10DE/device/20BE

https://devicehunt.com/view/type/pci/vendor/10DE/device/20BF

https://i.imgur.com/rKPgzw9.jpeg

https://i.imgur.com/7iP7SKS.jpeg

TechyIT223: So I assume these are again sanction-compliant chips for the Chinese market, since, based on the specs, these would definitely be banned for export to China.

Of course, based on the upgraded specs, these are definitely on the 'blacklist' for export to China. But in case you missed it, these chips are already within mainland China, and they are currently selling on a local Chinese online marketplace, Goofish. :p

These are buggy prototype models though, and they don't support all versions of NVIDIA drivers (let alone the latest), and there is a driver bug which reports incorrect GPU TDP and clock speeds, among other things, but the GPU still does the job (based on a report from a user who bought it for his local AI startup).

That wouldn't be surprising, given these are early "validation" boards (judging from the exposed jumpers/voltage points), which have somehow managed to escape the labs, either legally or illegally.

TechyIT223: Wow... That's not entirely unexpected, though. A lot of hardware usually ends up in China, as always. Just wondering why someone would pay so much for a prototype model, though...

Well, the reason might be simple. Since China is banned from accessing the latest and most potent AI accelerators for AI/HPC and similar market segments (from Nvidia and AMD), buyers there need to rely on existing tech, cut-down variants, and/or whatever hardware they can get their hands on.

And this A100 GPU despite being a prototype board is still capable of doing the job, so the demand is quite high in China, regardless of the price tag.

The graphics processor might lack full software/driver support, but they are already working on workarounds and fixes.