23 Years Of Supercomputer Evolution

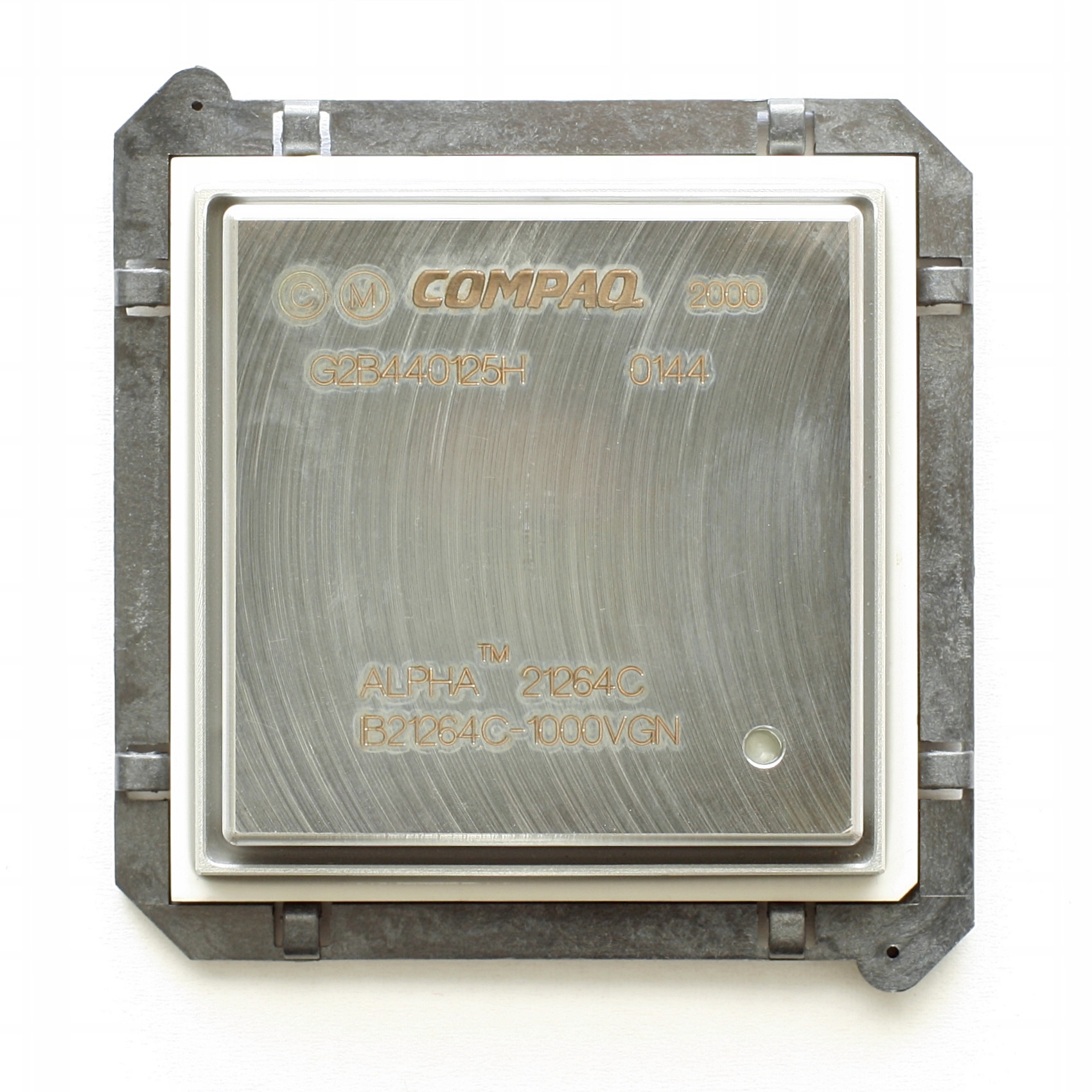

June 2003: ASCI Q And Alpha EV6

The Earth Simulator was so far ahead that it continued to lead the TOP500 until June 2004. Meanwhile, competitors fought over the number two spot. In June 2003, that spot belonged to ASCI Q, a system built by HP at the Los Alamos National Laboratory.

Plans for the ASCI Q originally included three segments, each containing 1,024 HP AlphaServer SC45 servers. The TOP500, however, only lists the machine with two segments. Each server contained four Alpha 21264 processors clocked at 1.25 GHz. The total theoretical capacity of the system was 20.5 TFlops, which translated into 13.9 TFlops in Linpack.
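The gap between those two figures is usually expressed as Linpack efficiency: the measured result divided by the theoretical peak. Here is a minimal Python sketch using the numbers quoted above (the function name is ours, purely for illustration):

```python
def linpack_efficiency(rmax_tflops: float, rpeak_tflops: float) -> float:
    """Return measured Linpack performance as a fraction of theoretical peak."""
    if rpeak_tflops <= 0:
        raise ValueError("theoretical peak must be positive")
    return rmax_tflops / rpeak_tflops


# ASCI Q figures quoted in the text: 13.9 TFlops measured, 20.5 TFlops peak.
asci_q = linpack_efficiency(13.9, 20.5)
print(f"ASCI Q Linpack efficiency: {asci_q:.1%}")
```

By this measure, ASCI Q delivered roughly two-thirds of its theoretical capacity.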

The Intruder: System X, AKA Big Mac

During the summer of 2003, Virginia Tech decided to build a "low-price" supercomputer from off-the-shelf consumer machines. System X (or Big Mac, as it was called) comprised 1,100 Apple Power Mac G5 systems, each equipped with two PowerPC 970 CPUs clocked at 2.0 GHz, working as a single system. Construction of the Big Mac took only three months and cost 5.2 million dollars, significantly less than the 400 million dollar Earth Simulator. In November 2003, Big Mac ranked as the third fastest supercomputer on the TOP500, with 10.3 TFlops demonstrated on Linpack. The Big Mac was updated in 2004 by replacing its Power Macs with Xserve servers, which boosted its processing power to 12.25 TFlops.

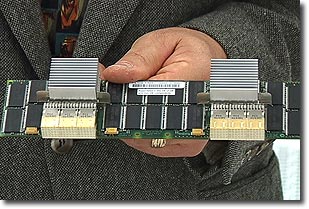

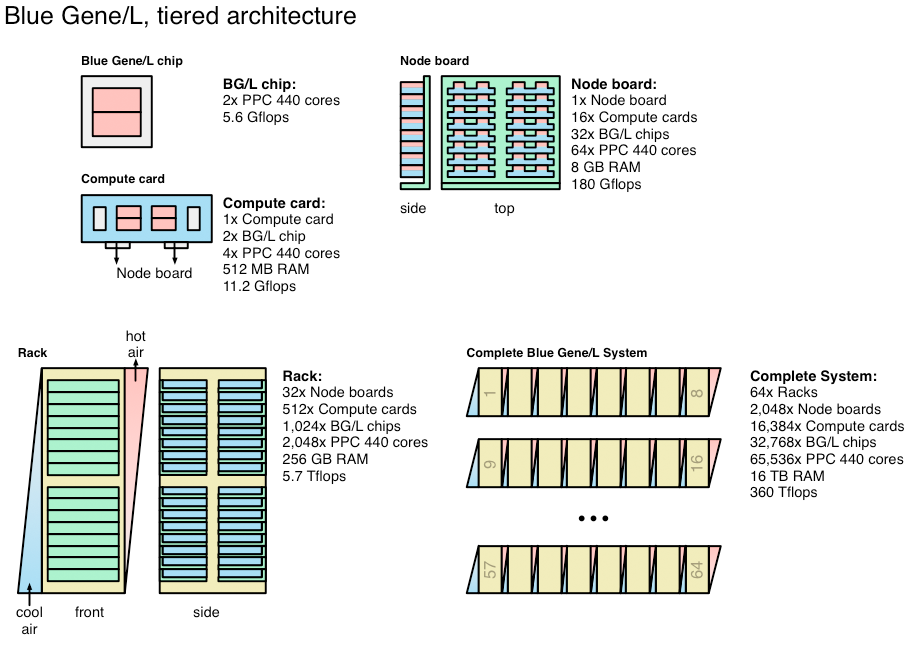

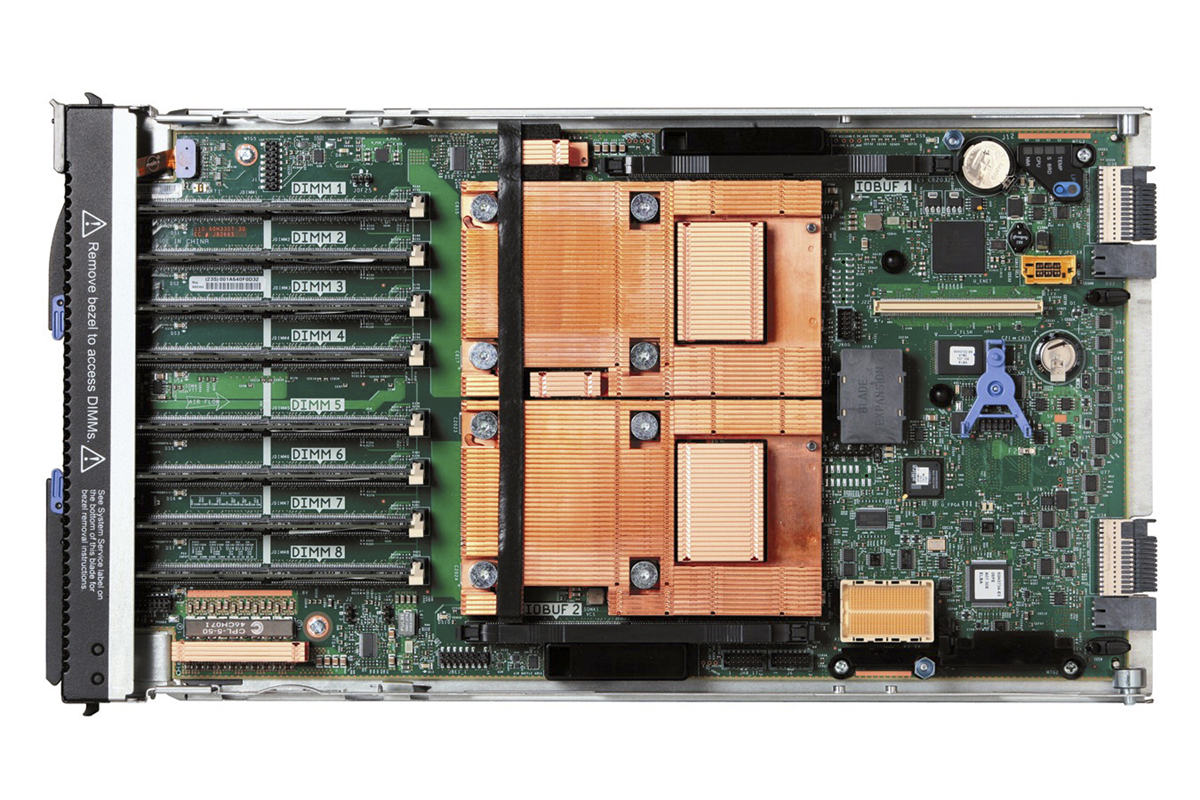

November 2004: Blue Gene/L

In September 2004, the Earth Simulator was finally defeated by IBM's BlueGene/L. It reached 36 TFlops while still under construction. When it was completed in November 2004, it amounted to 70.7 TFlops of processing power, twice that of the Earth Simulator. In June 2005, the BlueGene/L was extended and reached an exceptional 136.8 TFlops in Linpack, almost four times more than the Earth Simulator. The BlueGene/L was then the first supercomputer to pass the 100 TFlops bar.

To achieve this record, IBM employed 65,536 PowerPC 440 processors clocked at 700 MHz. The processors were not considered particularly powerful, but they were compact and consumed relatively little power, which allowed IBM to install two of them together on a small card (above) that plugged into a motherboard inside a rack. The BlueGene/L also proved very efficient, reaching 75 percent of its theoretical power under Linpack.
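The 75 percent figure can be sanity-checked from the processor count and clock speed. The sketch below assumes each PowerPC 440 core can retire four floating-point operations per cycle (our assumption, not stated in the article) and uses the June 2005 Linpack result of 136.8 TFlops quoted earlier. The function name is illustrative.

```python
def theoretical_peak_tflops(processors: int, clock_ghz: float, flops_per_cycle: int) -> float:
    """Peak = processors x clock (GHz) x flops per cycle, converted from GFlops to TFlops."""
    return processors * clock_ghz * flops_per_cycle / 1000.0


FLOPS_PER_CYCLE = 4  # assumption: four floating-point operations per core per cycle

peak = theoretical_peak_tflops(65_536, 0.7, FLOPS_PER_CYCLE)
efficiency = 136.8 / peak  # Linpack result quoted for June 2005
print(f"peak = {peak:.1f} TFlops, Linpack efficiency = {efficiency:.0%}")
```

Under that assumption the peak comes out at about 183.5 TFlops, which puts the measured result at roughly three-quarters of the theoretical figure.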

June 2006: BlueGene/L 2.0

In late 2005, the Blue Gene/L at Lawrence Livermore National Laboratory doubled its processor count to 131,072. As a result, the BlueGene/L 2.0 easily held the number one spot in the TOP500, recording 280.6 TFlops under Linpack. Thanks to IBM's use of small, energy-efficient chips, this configuration of the BlueGene/L consumed only 1.2 MW of power.

At the time, the BlueGene/L was the only supercomputer to exceed 100 TFlops; the runner-up on the TOP500 peaked at 91.3 TFlops. Also in June 2006, the French Tera 10 supercomputer ranked 6th with 42.9 TFlops in Linpack.

June 2007: Jaguar

The Blue Gene/L stayed on top as the fastest supercomputer for another two years. Although no other system could match its performance, other supercomputers did edge closer. In June 2007, both the Jaguar (No. 2) and the Red Storm (No. 3) surpassed the 100 TFlops mark. The Jaguar, which had been upgraded continually since 2005, was composed of Cray XT3 and XT4 servers. It marked the entry of AMD into the big league, as these systems used dual-core Opteron processors clocked at 2.6 GHz. In total, Jaguar at the time contained 23,016 cores and reached 101.7 TFlops in Linpack.

June 2008: Roadrunner

Beep! Beep! In June 2008, IBM succeeded the BlueGene/L with the IBM Roadrunner. The supercomputer did its nickname justice, as it was the first supercomputer in history to exceed the petaflop threshold. It was also a technological breakthrough: the first hybrid supercomputer, taking advantage of two significantly different processor architectures.

The Roadrunner contained a total of 122,400 cores split between IBM and AMD processors. The 6,562 dual-core AMD64 Opteron processors operated at 1.8 GHz and were capable of handling traditional x86 software. Each Opteron core was paired with one PowerXCell 8i processor clocked at 3.2 GHz, which comprises one PPE and eight SPEs. These IBM processors are related to the ones used inside the Xbox 360 and PlayStation 3. In this configuration, the PowerXCell 8i processors were used as coprocessors, which the Opteron cores could leverage for additional processing power. The cumulative theoretical power of the Roadrunner was 1.38 PFlops. Its performance under Linpack reached 1.03 PFlops, placing it on top of the TOP500.

One of the advantages of the hybrid architecture was greater energy efficiency. Roadrunner consumed only 2.35 MW of power, and thus was capable of 437 MFlops/W. The system weighed 227 tons and occupied an area of 483 m² in the Los Alamos laboratory.

June 2009: Roadrunner

Just like ASCI Red and BlueGene/L before it, the Roadrunner retained leadership of the TOP500 for several months, and experienced updates to push its computational power higher. In November 2008, the total number of computing cores increased to 129,600, and performance under Linpack jumped to 1.1 PFlops.

This slight increase was just enough for Roadrunner to remain the fastest supercomputer in the world. The runner-up, the updated Jaguar using Cray XT5 servers in place of the older XT3 and XT4 systems, achieved 1.059 PFlops under Linpack. Jaguar and Roadrunner were the only two supercomputers with computational power in excess of one petaflop.

June 2010: Jaguar 3.0

In November 2009, Jaguar finally managed to dislodge the Roadrunner and become the fastest supercomputer in the world. It was composed of two "partitions" of Cray servers. The old section comprised 7,832 Cray XT4 servers, each containing a quad-core Opteron 1354 Budapest processor clocked at 2.1 GHz. The new section was made up of 18,868 Cray XT5 servers, each containing two six-core Opteron 2435 Istanbul processors clocked at 2.6 GHz.

Its theoretical power was estimated at 2.33 PFlops, which translated into 1.76 PFlops under Linpack. Unlike the Roadrunner, Jaguar was not particularly energy efficient: it consumed about 7 MW of power (253 MFlops/W).

2010: China Enters The Race With GPU Power

In 2010, China entered the race with two supercomputers competing to be the fastest in the world. In June 2010, the Nebulae had the highest theoretical power of all the TOP500 supercomputers, estimated at 2.98 PFlops, but its real-world performance under Linpack remained below that of the Jaguar. Then, in November 2010, the Tianhe-1A displaced both the Jaguar and Nebulae, taking the lead in both theoretical power and Linpack performance.

This system was theoretically capable of 4.7 PFlops, but only reached 2.57 PFlops under Linpack.

Both the Tianhe-1A and Nebulae drew much of their processing power from GPUs used for general-purpose processing. Like the Roadrunner, these systems are considered hybrid supercomputers, as they combined x86 Intel Xeon X5600 processors (X5650 in Nebulae, X5670 in Tianhe-1A) with NVIDIA Tesla GPUs (C2050 for Nebulae, M2050 for Tianhe-1A). Their success brought GPGPU widespread recognition.

As a result of this hybrid configuration, these Chinese supercomputers displayed excellent efficiency. The Tianhe-1A consumed only 4 MW, and thus achieved 640 MFlops of performance per watt.
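The efficiency figures quoted for these machines are simply Linpack performance divided by power draw. Here is a rough Python check using the rounded numbers from the article (the helper name is ours, for illustration only):

```python
def mflops_per_watt(linpack_pflops: float, power_mw: float) -> float:
    """Convert a Linpack result in PFlops and a power draw in MW to MFlops per watt."""
    if power_mw <= 0:
        raise ValueError("power draw must be positive")
    mflops = linpack_pflops * 1e9   # 1 PFlops = 1e9 MFlops
    watts = power_mw * 1e6          # 1 MW = 1e6 W
    return mflops / watts


# (Linpack PFlops, power in MW) as quoted in the article.
systems = {
    "Roadrunner": (1.03, 2.35),
    "Jaguar": (1.76, 7.0),
    "Tianhe-1A": (2.57, 4.0),
}

for name, (pflops, mw) in systems.items():
    print(f"{name}: {mflops_per_watt(pflops, mw):.0f} MFlops/W")
```

With these rounded inputs, the results land within a few MFlops/W of the quoted 437, 253, and 640; the small gaps presumably come from rounding in the published figures.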

June 2011: K Computer

In June 2011, Japan took the performance crown with the Fujitsu K Computer, installed at the RIKEN Advanced Institute for Computational Science.

The Fujitsu K Computer was one of the few machines to demonstrate real-world performance relatively close to its theoretical power. The system comprised 68,544 eight-core SPARC64 VIIIfx processors, adding up to a total of 548,352 cores. Unlike the Tianhe-1A, it did not rely on GPUs. It delivered 8.16 PFlops under Linpack.

Although the K Computer was considerably faster than the Tianhe-1A, it also consumed significantly more power: 9,899 kW compared to the Tianhe-1A's 4,000 kW. Measured against its 8.16 PFlops, however, that works out to roughly 824 MFlops per watt, which is actually ahead of the Tianhe-1A's 640. Power draw climbed further when Fujitsu added cores, bringing the K Computer to 705,024 cores and a consumption of more than 12,650 kW.

June 2011 marked another significant event in the TOP500, as for the first time, the top ten supercomputers in the world possessed computational power in excess of one petaflop.

-

adamovera Archived comments are found here: http://www.tomshardware.com/forum/id-2844227/years-supercomputer-evolution.html

-

bit_user SW26010 is basically a rip-off of the Cell architecture that everyone hated programming so much that it never had a successor.

It might get fast Linpack benchies, but I don't know how much else will run fast on it. I'd be surprised if they didn't have a whole team of programmers just to optimize Linpack for it.

I suspect Sunway TaihuLight was done mostly for bragging rights, as opposed to maximizing usable performance. On the bright side, I'm glad they put emphasis on power savings and efficiency.

-

bit_user BTW, nowhere in the article you linked does it support your claim that "the U.S. government restricted the sale of server-grade Intel processors in China in an attempt to give the U.S. time to build a new supercomputer capable of surpassing the Tianhe-2."

-

bit_user aldaia only downvotes because I'm right. If I'm wrong, prove it.

Look, we all know China will eventually dominate all things. I'm just saying this thing doesn't pwn quite as the top line numbers would suggest. It's a lot of progress, nonetheless.

BTW, China's progress would be more impressive, if it weren't tainted by the untold amounts of industrial espionage. That makes it seem like they can only get ahead by cheating, even though I don't believe that's true.

And if they want to avoid future embargoes by the US, EU, and others, I'd recommend against such things as massive DDOS attacks on sites like github.

-

g00ey

18201362 said: SW26010 is basically a rip-off of the Cell architecture that everyone hated programming so much that it never had a successor.

It might get fast Linpack benchies, but I don't know how much else will run fast on it. I'd be surprised if they didn't have a whole team of programmers just to optimize Linpack for it.

I suspect Sunway TaihuLight was done mostly for bragging rights, as opposed to maximizing usable performance. On the bright side, I'm glad they put emphasis on power savings and efficiency.

I'd assume that what "everyone" hated was to have to maintain software (i.e. games) for very different architectures. Maintaining a game for PS3, Xbox 360 and PC that all have their own architecture apparently is more of a hurdle than if they all were Intel-based or whatever architecture you have. At least the Xbox 360 had DirectX...

In the heydays of PowerPC, developers liked it better than the Intel architecture, particularly assembler developers. Today, it may not have that "fancy" stuff such as AVX, SSE etc but it probably is quite capable for computations. Benchmarks should be able to give some indications...

-

bit_user

18208196 said: I'd assume that what "everyone" hated was to have to maintain software (i.e. games) for very different architectures.

I'm curious how you got from developers hating Cell programming, to game companies preferring not to support different platforms, and why they would then single out the PS3 for criticism, when this problem was hardly new. This requires several conceptual leaps from my original statement, as well as assuming I have even more ignorance of the matter than you've demonstrated. I could say I'm insulted, but really I'm just annoyed.

No, you're way off base. Cell was painful to program, because the real horsepower is in the vector cores (so-called SPEs), but they don't have random access to memory. Instead, they have only a tiny bit of scratch pad RAM, and must DMA everything back and forth from main memory (or their neighbors). This means virtually all software for it must be effectively written from scratch and tuned to queue up work efficiently, so that the vector cores don't waste loads of time doing nothing while data is being copied around. Worse yet, many algorithms inherently depend on random access and perform poorly on such an architecture.
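The staging pattern described above can be sketched in a few lines of Python. This is a toy model, not real Cell code: `dma_get`, `dma_put`, and the four-item `LOCAL_STORE_ITEMS` capacity are made-up stand-ins, and the copies run synchronously, whereas tuned code would try to overlap transfers with computation.

```python
LOCAL_STORE_ITEMS = 4  # hypothetical scratch-pad capacity, tiny on purpose


def dma_get(main_memory, start, count):
    """Stand-in for a DMA transfer: copy a slice of main memory into local store."""
    return list(main_memory[start:start + count])


def dma_put(main_memory, start, local_buffer):
    """Stand-in for a DMA transfer: copy local results back to main memory."""
    main_memory[start:start + len(local_buffer)] = local_buffer


def scale_in_chunks(main_memory, factor):
    """Scale every element, touching main memory only through explicit copies."""
    for start in range(0, len(main_memory), LOCAL_STORE_ITEMS):
        local = dma_get(main_memory, start, LOCAL_STORE_ITEMS)  # stage data in
        local = [x * factor for x in local]                     # compute on local data only
        dma_put(main_memory, start, local)                      # stage results out


data = list(range(10))
scale_in_chunks(data, 3)
print(data)
```

Even for a trivial operation, the chunking and copying has to be spelled out by the programmer. An algorithm that jumps around memory unpredictably has no natural chunk to stage, which is where this model gets painful.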

In terms of programming difficulty, the gap in complexity between it and multi-threaded programming is at least as big as that separating single-threaded and multi-threaded programming. And that assumes you're starting from a blank slate - not trying to port existing software to it. I think it's safe to say it's even harder than GPU programming, once you account for performance tuning.

Architectures like this are good at DSP, dense linear algebra, and not a whole lot else. The main reason they were able to make it work in a games console is because most game engines really aren't that different from each other and share common, underlying libraries. And as game engines and libraries became better tuned for it, the quality of PS3 games improved noticeably. But HPC is a different beast, which is probably why IBM never tried to follow it with any successors.

18208196 said: In the heydays of PowerPC, developers liked it better than the Intel architecture, particularly assembler developers. Today, it may not have that "fancy" stuff such as AVX, SSE etc but it probably is quite capable for computations. Benchmarks should be able to give some indications...

I'm not even sure what you're talking about, but I'd just point out that both Cell and the Xbox 360's CPUs were derived from PowerPC. And PPC did have AltiVec, which had some advantages over MMX & SSE.

-

alidan

ah the Cell... listening to devs talk about it and an MIT lecture, the main problems with it were these:

1) sony refused to give out proper documentation, they wanted their games to get progressively better as the console aged, performance wise and graphically, so what better way than to kneecap devs

2) from what i understand about the architecture, and im not going to say this right, you had one core devoted to the os/drm, then you had the rest devoted to games and one core disabled on each to keep yields (something from the early day of the ps3) then you had to program the games while thinking of what core the crap executed on, all in all, a nightmare to work with.

if a game was made ps3 first it would port fairly good across consoles, but most games were made xbox first, and porting to ps3 was a nightmare.

-

bit_user

18224039 said: 2) from what i understand about the architecture, and im not going to say this right, you had one core devoted to the os/drm, then you had the rest devoted to games and one core disabled on each to keep yields (something from the early day of the ps3)

It's slightly annoying that they took an 8 + 1 core CPU and turned it into a 6 + 1 core CPU, but I doubt anyone was too bothered about that.

I think PS4 launched with only 4 of the 8 cores available for games (or maybe it was 5/8?). Recently, they unlocked one more. I wonder how many the PS4 Neo will allow.

18224039 said: you had to program the games while thinking of what core the crap executed on, all in all, a nightmare to work with.

if a game was made ps3 first it would port fairly good across consoles, but most games were made xbox first, and porting to ps3 was a nightmare.

This is part of what I was saying. Again, the reason why it mattered which core was that the memory model was so restrictive. Each SPE plays in its own sandbox, and has to schedule any copies to/from other cores or main memory. Most multi-core CPUs don't work this way, as it's too much burden to place on software, with the biggest problem being that it prevents one from using any libraries that weren't written to work this way.

Now, if you write your software that way, you can port it to Xbox 360/PC/etc. by simply taking the code that'd run on the SPEs and putting it in a normal userspace thread. The DMA operations can be replaced with memcpy's (and, with a bit more care, you could even avoid some copying).

Putting it in more abstract terms, the Cell strictly enforces a high degree of data locality. Taking code written under that constraint and porting it to a less constrained architecture is easy. Going the other way is hard.
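As a rough illustration of that porting direction, the Python sketch below takes a kernel that only ever touches its own local buffer and runs it in ordinary threads, with plain slice copies standing in for the DMA transfers (or `memcpy` calls in C). All names here are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def kernel(local_in):
    """Work written Cell-style: it only ever sees its own local buffer."""
    return [x * x for x in local_in]


def run_chunk(shared, start, stop):
    """Process one chunk of shared memory in a normal thread."""
    local_in = shared[start:stop]    # was a DMA "get"; now a plain copy
    local_out = kernel(local_in)
    shared[start:stop] = local_out   # was a DMA "put"; now a plain copy


def run_on_threads(shared, chunk_size, workers=4):
    """Split the data into non-overlapping chunks and run each in a thread."""
    ranges = [(s, min(s + chunk_size, len(shared)))
              for s in range(0, len(shared), chunk_size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = [pool.submit(run_chunk, shared, s, e) for s, e in ranges]
        for future in futures:
            future.result()  # re-raise any exception from a worker


values = list(range(8))
run_on_threads(values, chunk_size=3)
print(values)
```

The kernel itself needs no changes, because it never assumed it could reach outside its own buffer. Going the other way would mean finding every place the code reaches into shared memory and restructuring it around explicit transfers.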

-

alidan

as for turning cores off, that's largely to do with yields. later on in the console's cycle they unlocked cores so some systems that weren't bad chips got slightly better performance in some games than other consoles. at least on ps3, that meant all of nothing as it had a powerful cpu but the gpu was bottlenecking it, opposite of the 360 where the cpu was the bottleneck, at least if i remember the systems right.