Ryzen Versus Core i7 In 11 Popular Games

Introduction

Pre-release Cinebench and Blender benchmarks showing Ryzen ahead of Core i7-6900K gave enthusiasts hope they'd have a cheaper alternative to Intel's brawny Broadwell-E-based CPUs. And while it's fair to say the Ryzen launch went well for AMD in comparisons of pricing and professional application performance, gaming didn't paint the processor in a very good light at all.

We are always willing to make some concessions in the name of value, so Ryzen doesn't have to beat Intel's offerings across the board. It just needs to be competitive. Where that line exists for you is completely subjective. But for many, Ryzen’s frame rates are too low, even in light of its attractive pricing. And if gaming is the primary purpose for your PC, it's hard to ignore faster and cheaper Kaby Lake-based Core i7s and i5s that serve up better results in many popular games.

Theories abound as to why Ryzen processors are struggling in gaming metrics, but some of the disparity no doubt comes from an IPC and clock rate deficit compared to Intel's Kaby Lake design. The issue also appears to stem from AMD’s Zen architecture and how applications navigate its cache hierarchy.

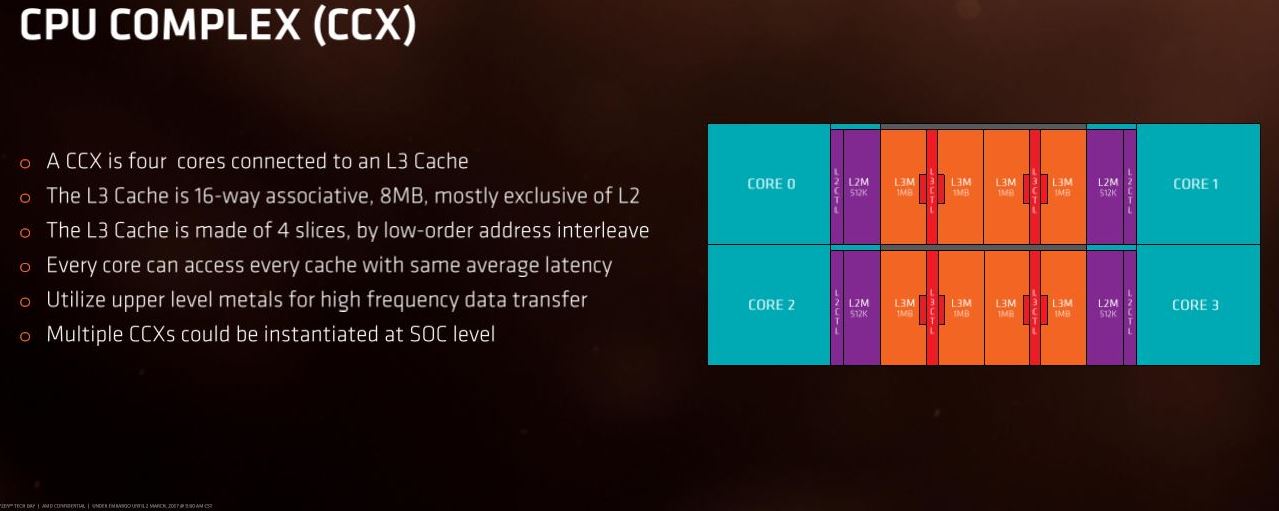

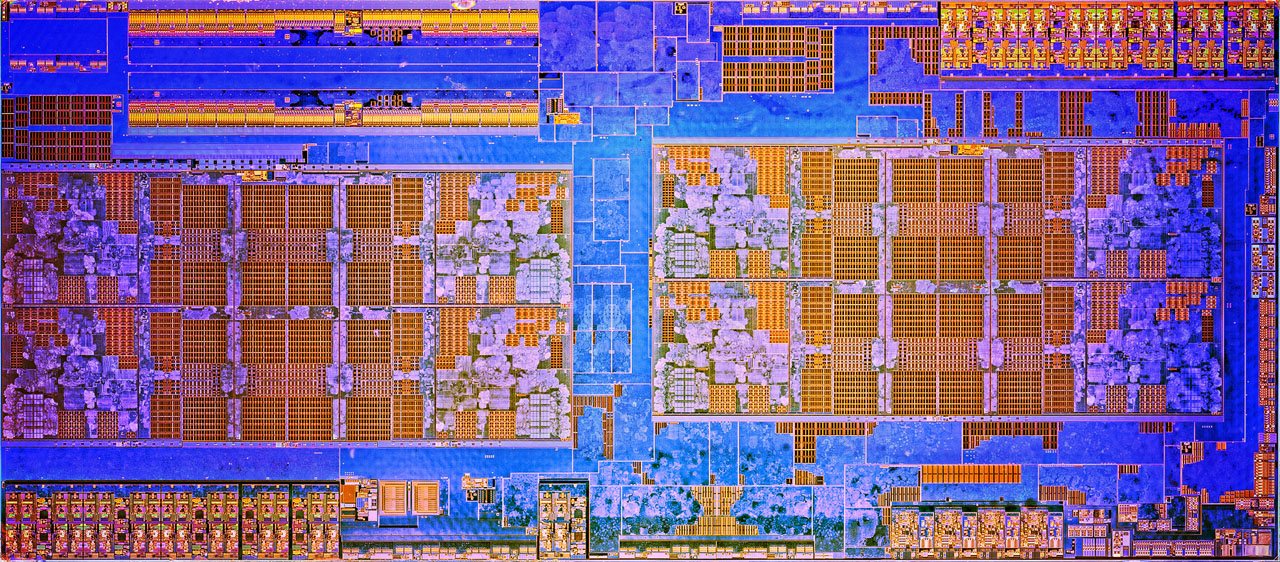

The Zen architecture employs a four-core CCX (CPU Complex) building block. AMD outfits each CCX with a 16-way associative 8MB L3 cache split into four slices; each core in the CCX accesses this L3 with the same average latency. Two CCXes come together to create an eight-core Ryzen 7 processor (image below), and they communicate via AMD’s Infinity Fabric interconnect. Data that traverses the void between CCXes incurs increased latency, so it's ideal to avoid the trip altogether if possible.

Unfortunately, threads migrate between the CPU Complexes, thus suffering cache misses on the local CCX's L3. Threads might also communicate with other threads (and their data) running on the CCX next door, again adding latency and chipping away at overall performance.
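To make the two-CCX layout more concrete, here is a minimal Python sketch that maps logical CPUs to a CCX and builds an affinity mask for keeping a process on one complex. The constants and the helper names (`ccx_of`, `ccx_cpus`, `affinity_mask`, `crosses_ccx`) are hypothetical, and the logical CPU numbering is an assumption: SMT siblings are taken to be adjacent, with cores 0 through 3 in the first CCX. Real enumeration varies by operating system and firmware, so check the topology your system reports before relying on it.

```python
# Hypothetical model of an eight-core, 16-thread Ryzen 7 part.
# ASSUMPTION: logical CPUs 0,1 are core 0; 2,3 are core 1; and so on,
# with cores 0-3 in CCX 0 and cores 4-7 in CCX 1.
CORES_PER_CCX = 4
THREADS_PER_CORE = 2


def ccx_of(logical_cpu):
    """Return the index of the CCX that owns a logical CPU."""
    core = logical_cpu // THREADS_PER_CORE
    return core // CORES_PER_CCX


def ccx_cpus(ccx, smt=True):
    """Logical CPUs in one CCX; with smt=False, one thread per core."""
    per_ccx = CORES_PER_CCX * THREADS_PER_CORE
    first = ccx * per_ccx
    step = 1 if smt else THREADS_PER_CORE
    return set(range(first, first + per_ccx, step))


def affinity_mask(cpus):
    """Fold a set of logical CPU numbers into a bitmask."""
    mask = 0
    for cpu in cpus:
        mask |= 1 << cpu
    return mask


def crosses_ccx(cpu_a, cpu_b):
    """True if two threads on these CPUs would talk across the fabric."""
    return ccx_of(cpu_a) != ccx_of(cpu_b)


if __name__ == "__main__":
    print(hex(affinity_mask(ccx_cpus(0))))  # 0xff
```

Under these assumptions, a mask of `0xff` confines a process to the first CCX, so its threads share one L3 and never cross the Infinity Fabric to reach each other's data. On Linux, the set returned by `ccx_cpus(0)` could be passed to `os.sched_setaffinity(0, ...)`. Whether pinning helps a given game is a separate question, since it also halves the cores available to it.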

AMD noted in a recent blog post that most games aren’t optimized for its implementation of simultaneous multi-threading, which is particularly painful given Ryzen’s core-count advantage. In fact, we’ve found that disabling SMT actually improves the chip's performance in games like Ashes of the Singularity, Arma 3, Battlefield 1, and The Division.

Ryzen represents AMD's first attempt at an SMT technology, so teething pains on the application side are understandable. Two game developers have come forward and voiced their intention to support AMD’s implementation in future updates, and AMD says it seeded the industry with 300 developer kits to jump-start the optimization effort. There are thousands of games, though. While many existing titles probably won't receive patches written with AMD in mind, we do hope that newer titles incorporate the code needed to run more smoothly.


According to AMD, this problem doesn't relate to the Windows scheduler. Normally we'd say that's a good thing, since the fix wouldn't depend on Microsoft. But if the issue were tied to the operating system, a single update could optimize for AMD's processors, similar to what we saw with Bulldozer in the Windows 8 days. Instead, we have to look out for improvements one application at a time.

AMD also points out that Ryzen is more competitive at 3840x2160 than at lower resolutions, such as 1920x1080. Obviously, gaming at higher resolutions shifts the bottleneck over to your GPU. So while AMD's observation is true, it isn't indicative of better processor performance, but rather the architecture's weakness hidden behind a slammed GPU. Many of us use our CPUs for several years, and as we swap out for faster graphics cards, the bottleneck will start swinging back to host processing. In many ways, today’s 4K is tomorrow's QHD.
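The bottleneck argument can be illustrated with a deliberately simplified model in which each frame takes as long as the slower of the CPU and the GPU. The Python sketch below is only that, a sketch: the `fps` helper is hypothetical, the per-frame costs are made-up illustrative numbers rather than measurements, and real engines overlap CPU and GPU work less cleanly than a plain `max()` suggests.

```python
def fps(cpu_ms, gpu_ms):
    """Frames per second when the slower component sets the pace."""
    return 1000.0 / max(cpu_ms, gpu_ms)


# Hypothetical per-frame costs in milliseconds (illustrative, not measured).
CPU_MS = {"faster CPU": 6.0, "slower CPU": 8.0}
GPU_MS = {"1920x1080": 5.0, "3840x2160": 20.0}

for resolution, gpu_ms in GPU_MS.items():
    for name, cpu_ms in CPU_MS.items():
        rate = fps(cpu_ms, gpu_ms)
        print(f"{resolution:>10}  {name}: {rate:.1f} FPS")
```

With these numbers, the two CPUs are clearly separated at 1920x1080, where the GPU finishes its work first, and indistinguishable at 3840x2160, where the GPU's 20ms per frame dominates. Swap in a faster graphics card (a smaller `gpu_ms`) and the gap between the CPUs reappears, which is the point about today's 4K being tomorrow's QHD.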

The Socket AM4 ecosystem holds great promise, but our experience with the top motherboard manufacturers (and indeed their experience with AMD's chipsets) has been less than ideal. We've received a flurry of updates in the days leading up to and following Ryzen 7's launch. In some cases, new firmware improves performance. In others, the fix shines light on the underlying issues. General platform instability aside, we get the sense there wasn't enough preparation pre-launch, and AMD's partners are scrambling now as a result. But there's hope that things will get better. AMD recently announced it's working on an updated power profile to better accommodate normal desktop usage patterns (more on that in a bit).

In the meantime, we want to better understand the state of gaming with a Ryzen CPU. Today's feature includes a number of popular titles and all three Ryzen CPUs. Though we're still working on our reviews of the Ryzen 7 1700X and 1700, digging deeper on gaming, specifically, was our top priority for follow-up after publishing the AMD Ryzen 7 1800X CPU Review.


Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.


Overall good CPU but compared to Intel it sucks. You are better with Kaby Lake. AMD CPU needs this, that or that...same story as with their video cards. Wait for performance increase which happens but by that time competitor has newer generation product. Look at difference between Nvidia and AMD high end offering.

Almost forgot...to me a real upgrade is going to be Intel 2066 socket.

Sakkura Most of these performance differences are not that relevant. I mean if you have a 60Hz monitor, practically all these tests max that out.

So Ryzen is definitely better value for money than Broadwell-E even for gaming. Neither of those can currently match Kaby Lake, but they're not supposed to anyway. Ryzen 3/5 will compete with Kaby Lake by being cheaper and presumably only a little slower, and thus better value for money.

Paul Alcorn 19423857 said: Were all the test cpus at stock clocks?

Yes, we tested at stock clocks.

Rookie_MIB Well, what I gather from this round up is that Ryzen 7 series is a workstation CPU which can game decently well. So - if you use your computer for productivity (video processing, VMs, compiling etc) in addition to gaming, it's the processor to buy. It's vastly less expensive than Broadwell-E, and performs as well (if not better) in some regards.

If your computer is used for gaming first with some secondary workstation uses, you're better off with Kaby Lake. The almost 5GHz clock speeds rule for gaming where it's not highly optimized for higher thread counts.

My Ryzen 7 1700 arrives today BTW. :D :D :D

I am definitely curious to see how the APUs which are coming fare. An actual decent x86 architecture with a really good IGP? If they could stick a 2GB hunk of HBM on it.... lordy that would be fast.

BulkZerker Anyone remember when disabling hyperthreading got you an fps boost in video games (ffs that was an issue in battlefield 3)? It's that, all over again.

mitch074 To be expected - most current games are developed to make use of 4 threads on Intel CPUs, no more no less, once compiled on PC.

As for "it should have been finalized before release", yeah right - even consoles need firmware updates once out to fix non-optimal settings. And if the situation under Linux is any indication, even Intel isn't exempt - they had to rewrite a whole new power management scheme to make use of Sandy Bridge, and even then you may end up with a frozen system now and again if you don't disable power management. We're talking SERVERS here, people! the kind of machine that runs 24/7 and thus working power management means real MONEY!

So to me, a ground-up, brand-new CPU architecture (something Intel hasn't done in more than 5 years) that works reliably out of the box and can beat the established champion in several benchmarks and real-world tasks for half the price is a GREAT accomplishment. And if Deus Ex and Shadow of Mordor are any indication, Ryzen can indeed kick Kaby Lake in the butt when properly used.

What can be understood from this article is that, CURRENTLY, AMD's Ryzen isn't the best gamer CPU out there as games aren't geared towards it yet. You can game properly with it though, and it more than likely will get faster with time. If you need to build a gaming rig today, go Kaby Lake; if you're building a workstation, go Ryzen - knowing you can game on it too. If you can wait a few months though, all bets are off.

As for AMD's performance in the GPU market, look at how many GameWorks games are out there, and how fast AMD's performance climbs up after game release (from a couple of weeks to a few months) - while it took almost a full year for Nvidia to catch up on DX12 performance!