Ryzen Versus Core i7 In 11 Popular Games

Project CARS, Rise of the Tomb Raider, And The Division

Project CARS

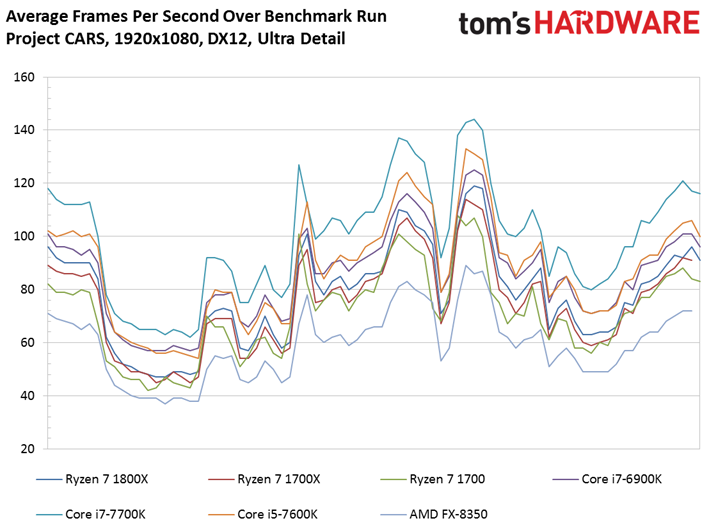

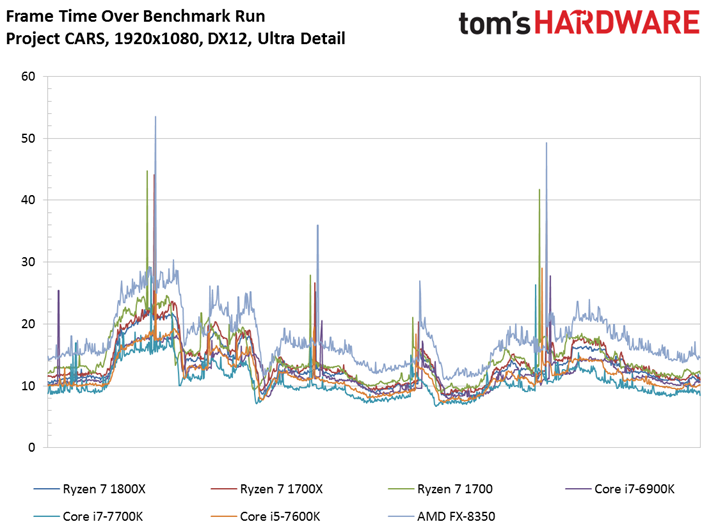

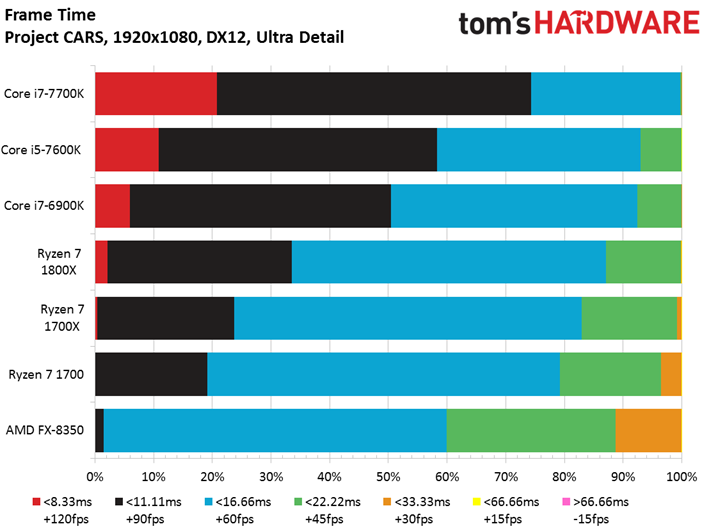

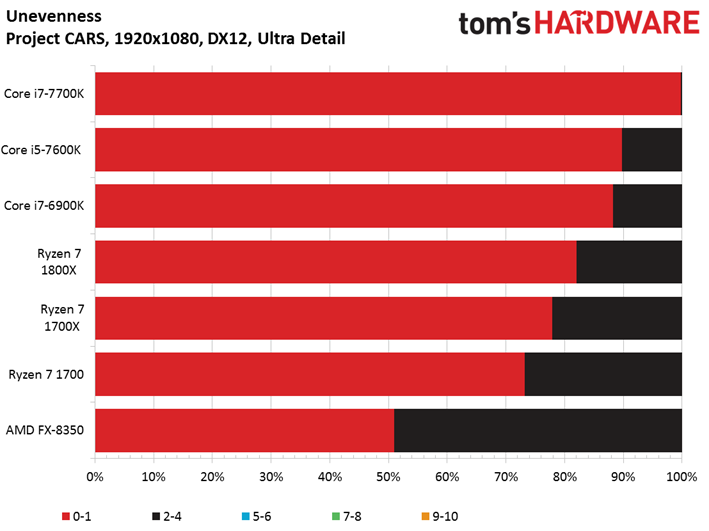

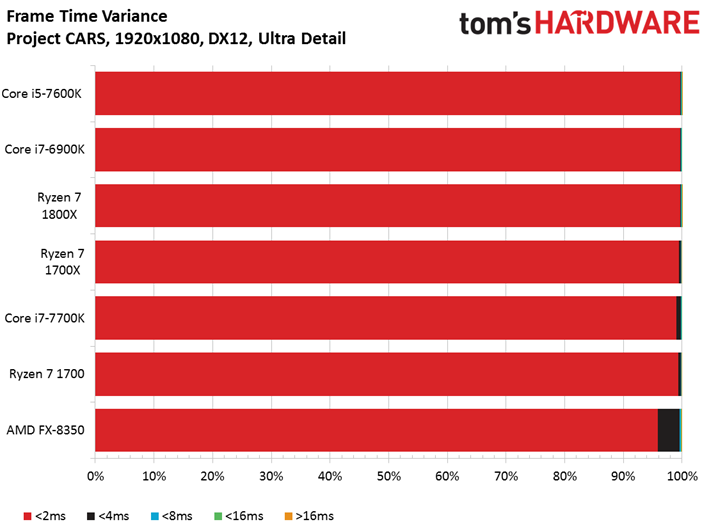

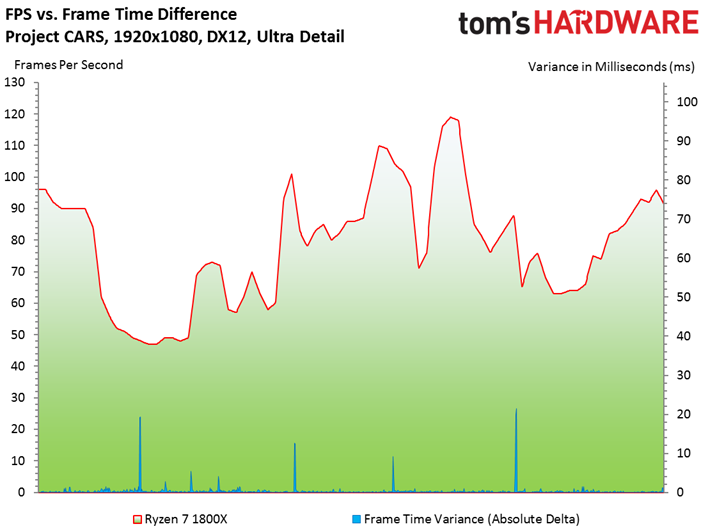

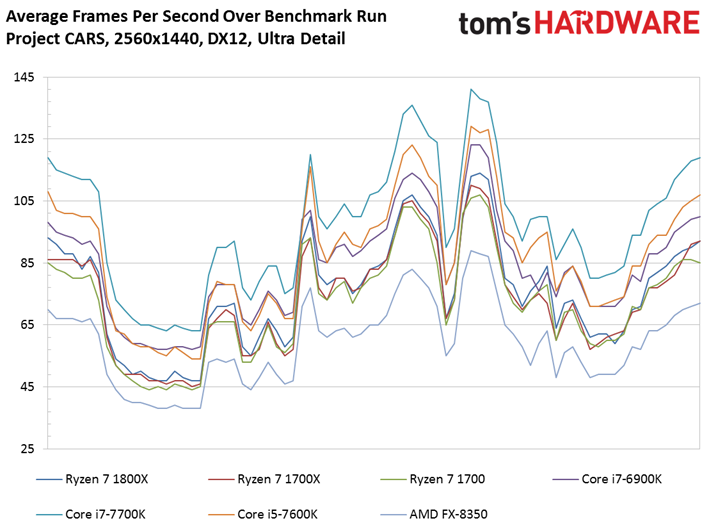

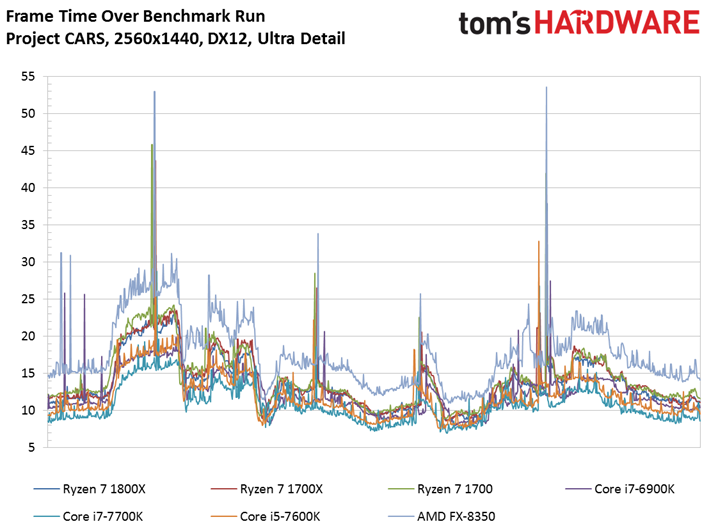

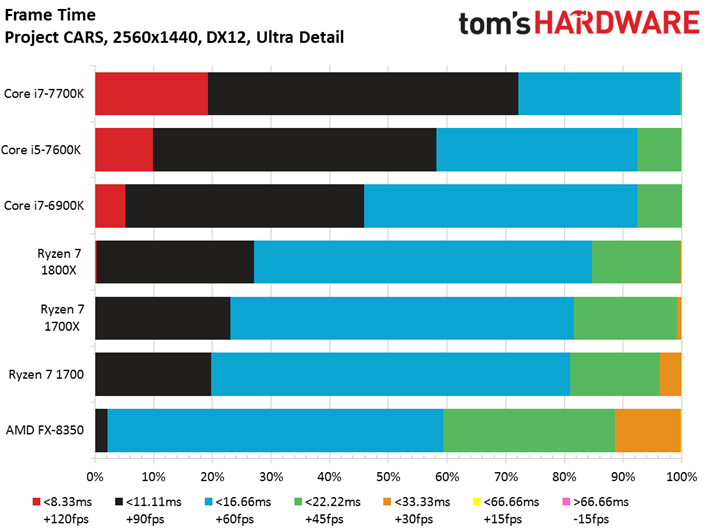

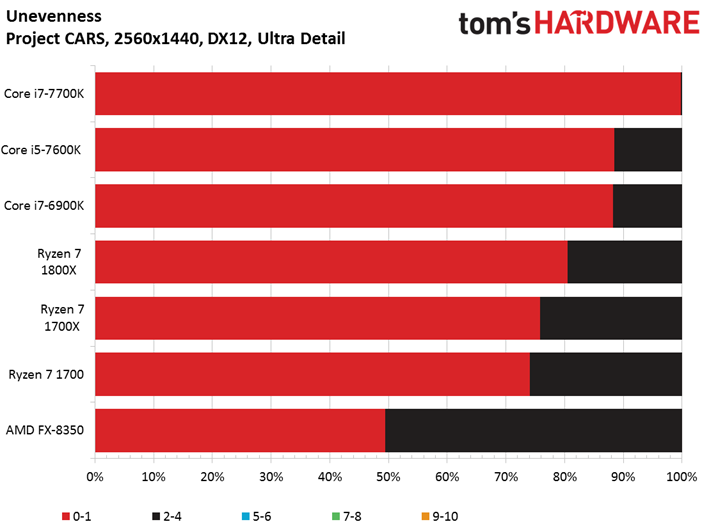

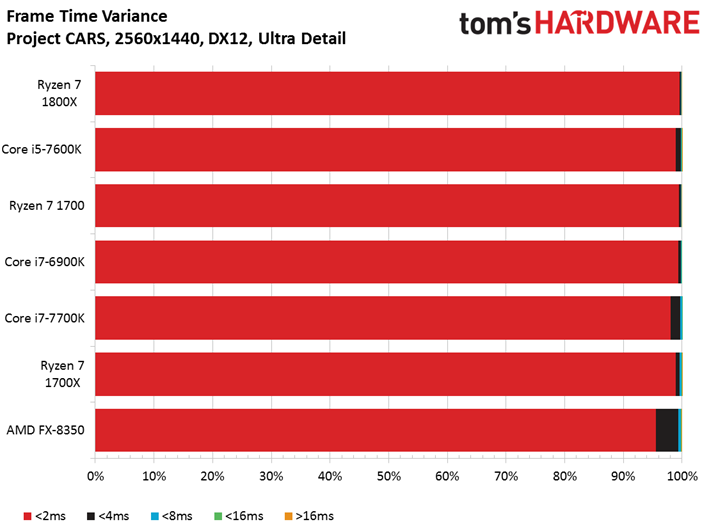

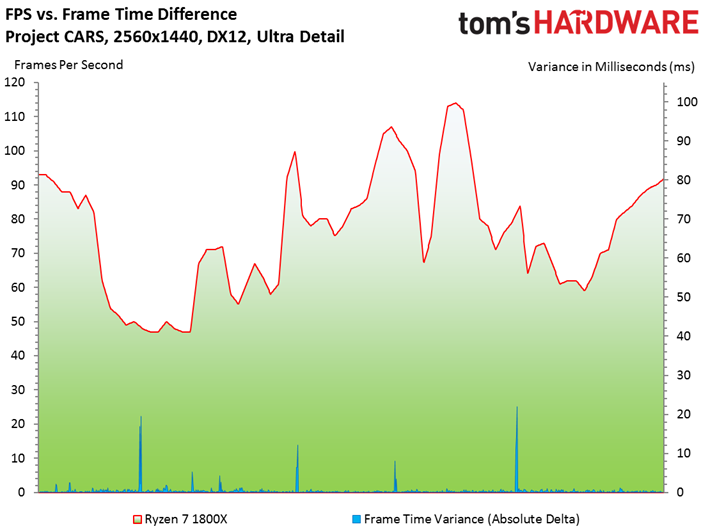

Slightly Mad Studios designed the Project CARS game engine specifically to promote parallelism by breaking tasks down into smaller chunks across available resources. The end result is a sophisticated engine that scales well with additional CPU cores and higher clock rates.
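To make the idea of breaking work into smaller chunks concrete, here is a minimal Python sketch. It is not Slightly Mad Studios' code: the function names (`split_into_chunks`, `update_chunk`, `parallel_update`) and the squaring workload are hypothetical stand-ins, and CPython threads will not deliver true CPU parallelism because of the global interpreter lock. The point is only the decomposition pattern: split a batch of work into near-equal pieces, one per available core, and hand each piece to a worker.

```python
import os
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(items, n_chunks):
    """Split items into at most n_chunks contiguous, near-equal slices."""
    n_chunks = max(1, min(n_chunks, len(items)))
    size, extra = divmod(len(items), n_chunks)
    chunks, start = [], 0
    for i in range(n_chunks):
        # The first `extra` chunks take one additional item each.
        end = start + size + (1 if i < extra else 0)
        chunks.append(items[start:end])
        start = end
    return chunks


def update_chunk(chunk):
    """Hypothetical per-chunk workload (stand-in for physics, AI, etc.)."""
    return [x * x for x in chunk]


def parallel_update(items, workers=None):
    """Process items chunk by chunk on a pool sized to the available cores."""
    workers = workers or os.cpu_count() or 1
    chunks = split_into_chunks(items, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map preserves chunk order, so results line up with the input.
        results = pool.map(update_chunk, chunks)
    return [y for part in results for y in part]
```

With more workers available, each chunk gets smaller, which is the general reason an engine built this way can scale with core count.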

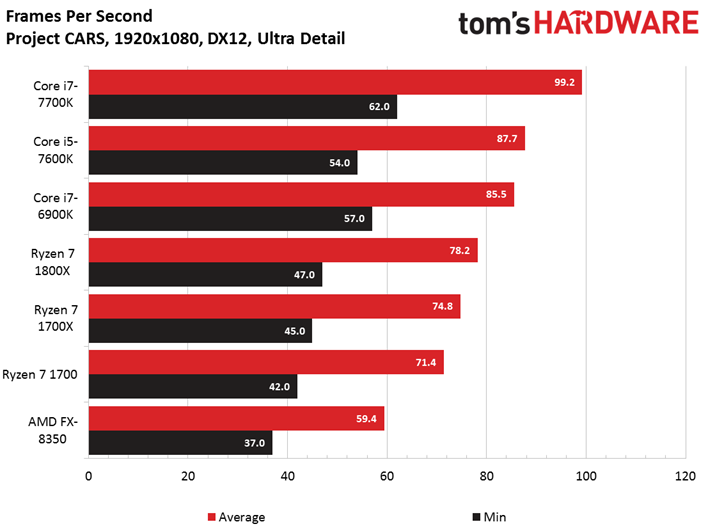

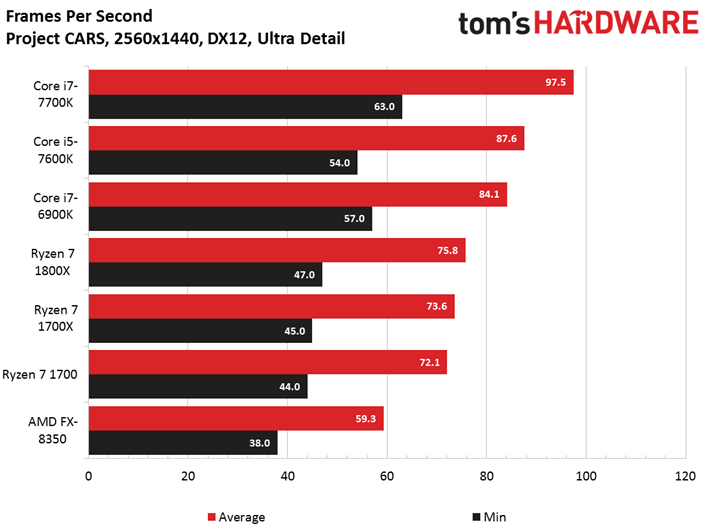

The prevalence of Kaby Lake at the top of this chart tells us that the game responds well to high IPC throughput and clock rates. After all, even the Core i5-7600K's four physical cores outpace Core i7-6900K's 8C/16T configuration (despite dropping to a lower minimum frame rate).

AMD's Ryzen CPUs line up predictably, given their frequencies.

As we've come to expect from Project CARS, we don't notice much of a performance drop as we shift to 2560x1440. This serves to underline the game's CPU-bound nature.
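The reasoning behind that conclusion can be sketched as a simple heuristic. 2560x1440 has roughly 78% more pixels than 1920x1080, so if the average frame rate barely moves between the two, the graphics card is probably not the limiting factor. The helper names and the 10% threshold below are assumptions chosen for illustration, not part of the test methodology.

```python
def resolution_scaling_drop(fps_low_res, fps_high_res):
    """Fractional drop in average frame rate when moving to the higher resolution."""
    if fps_low_res <= 0:
        raise ValueError("fps_low_res must be positive")
    return (fps_low_res - fps_high_res) / fps_low_res


def likely_bottleneck(fps_low_res, fps_high_res, threshold=0.10):
    """Classify a result as CPU-bound or GPU-bound.

    threshold is an assumed cutoff: a drop smaller than this fraction
    is treated as a sign that the CPU, not the GPU, limits performance.
    """
    drop = resolution_scaling_drop(fps_low_res, fps_high_res)
    return "CPU-bound" if drop < threshold else "GPU-bound"


# Hypothetical numbers, not taken from the charts on this page.
print(likely_bottleneck(120.0, 115.0))
print(likely_bottleneck(100.0, 70.0))
```

A real analysis would also look at minimum frame rates and frame-time variance rather than averages alone.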

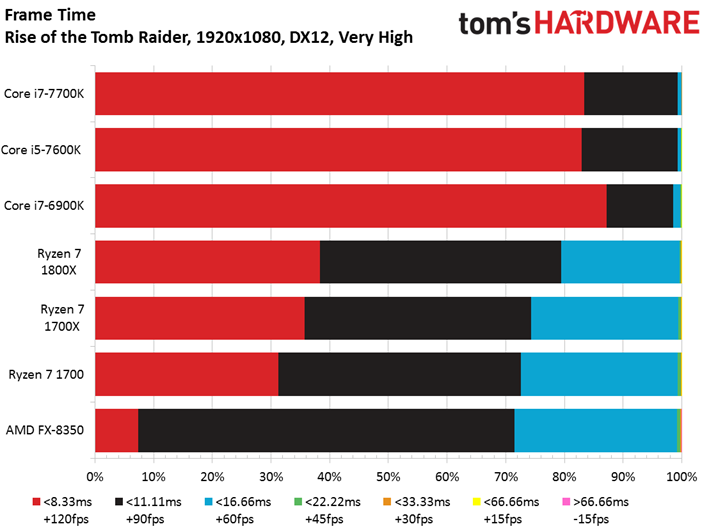

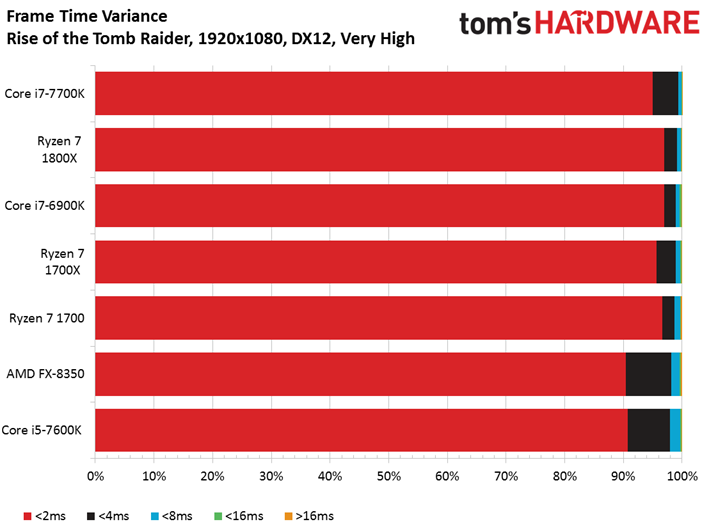

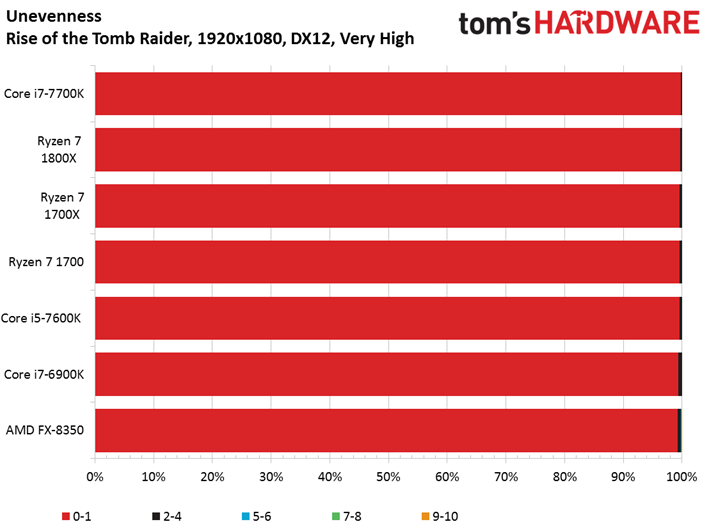

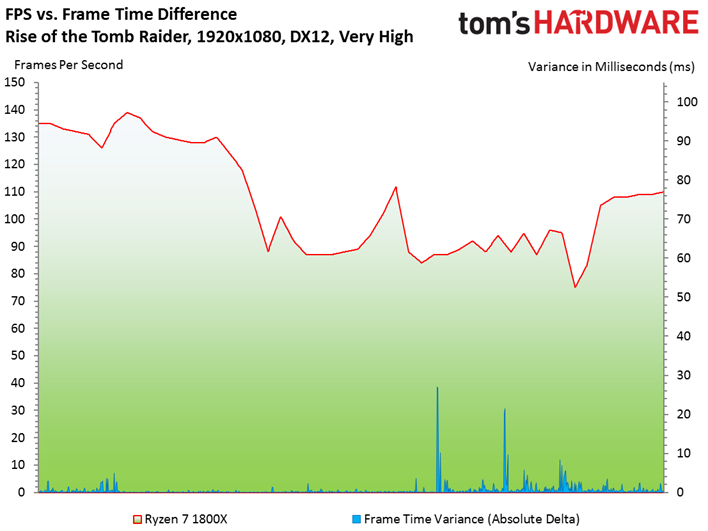

Rise of the Tomb Raider

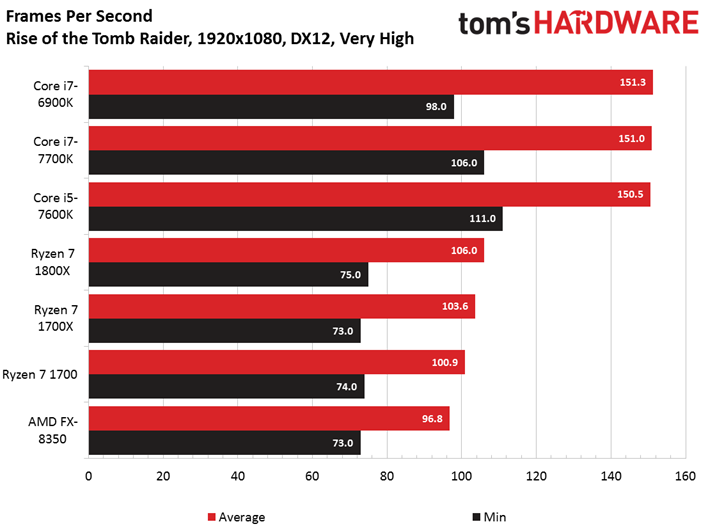

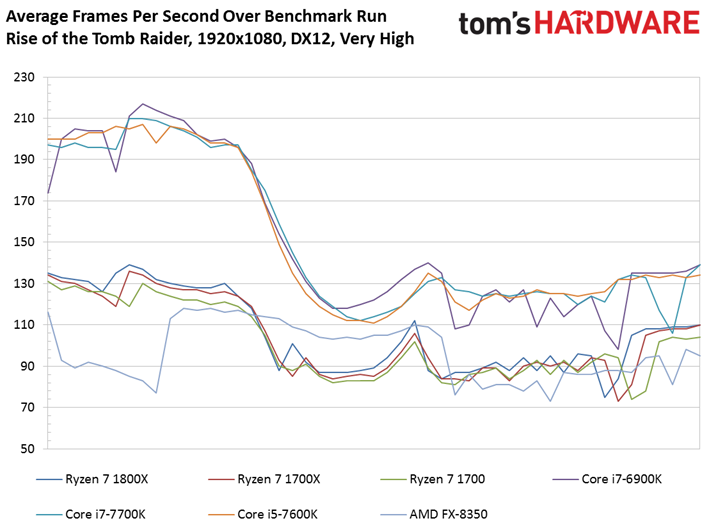

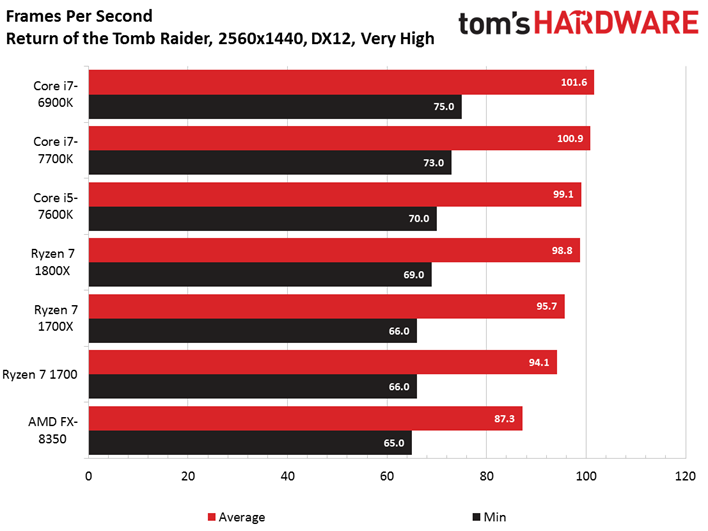

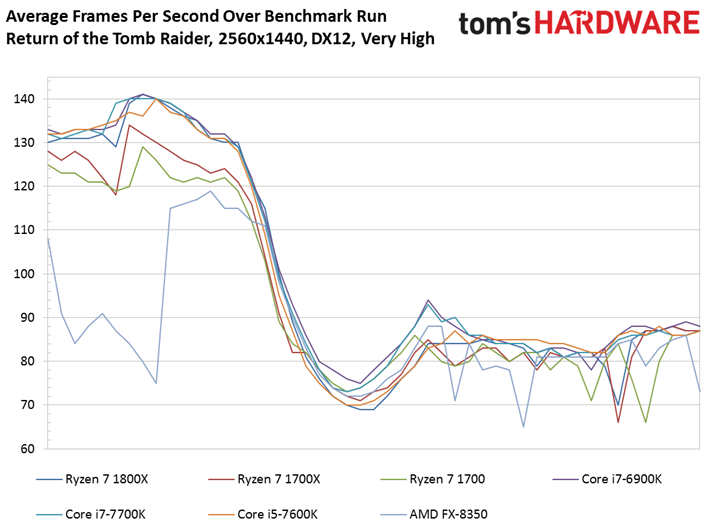

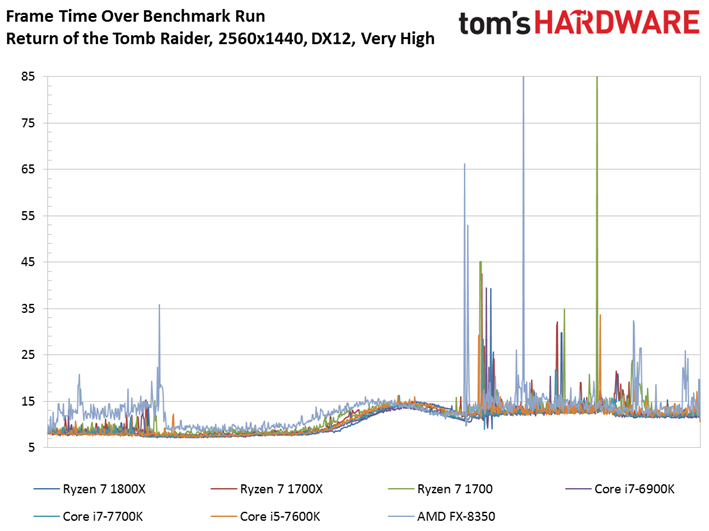

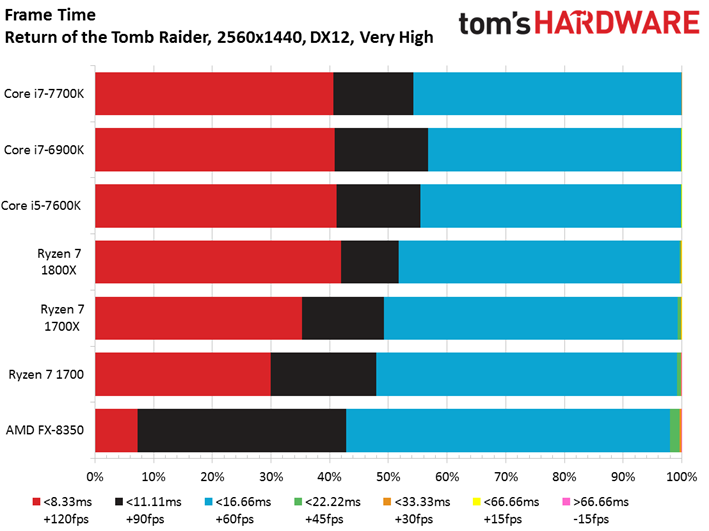

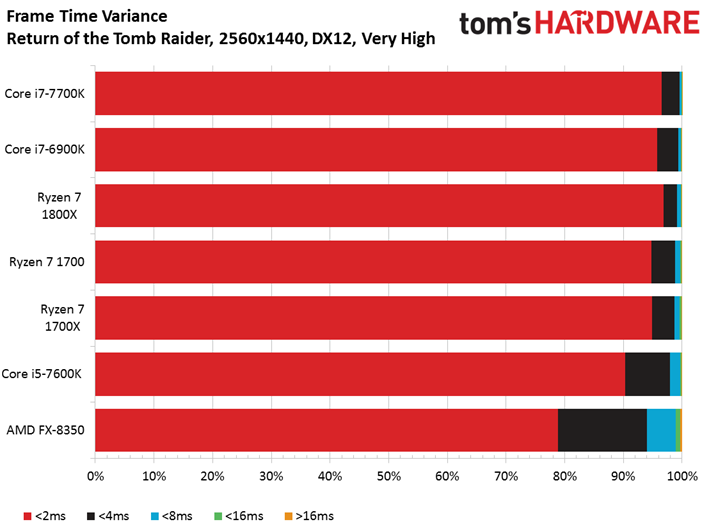

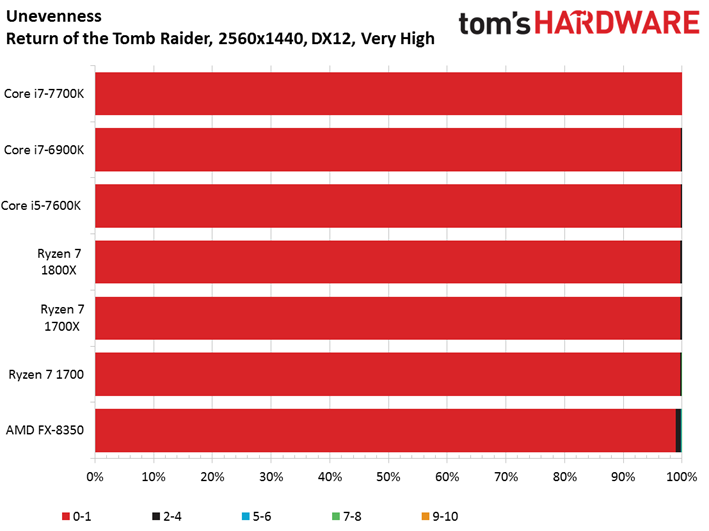

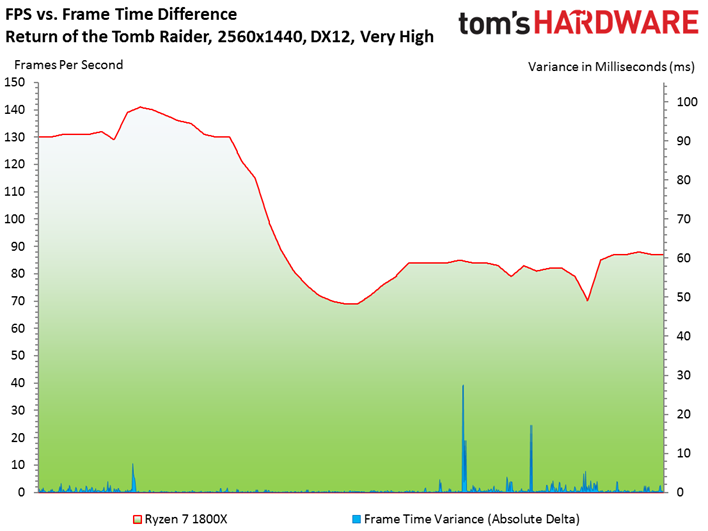

Just as we saw Deus Ex split into two distinct performance groups, so too does Rise of the Tomb Raider draw attention to the different architectures being tested. This time, however, it's Kaby Lake/Broadwell-E in the lead. Clearly, there's work to be done optimizing for Ryzen.

Ryzen 7 closes the gap at 2560x1440, suggesting a more graphics-bound scenario.

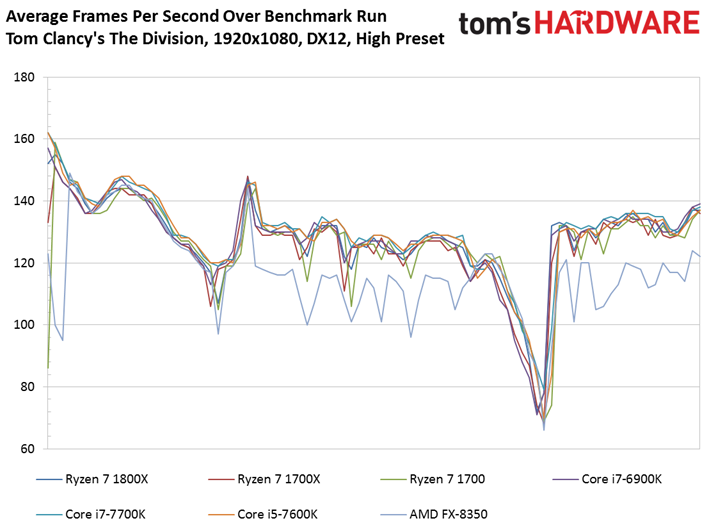

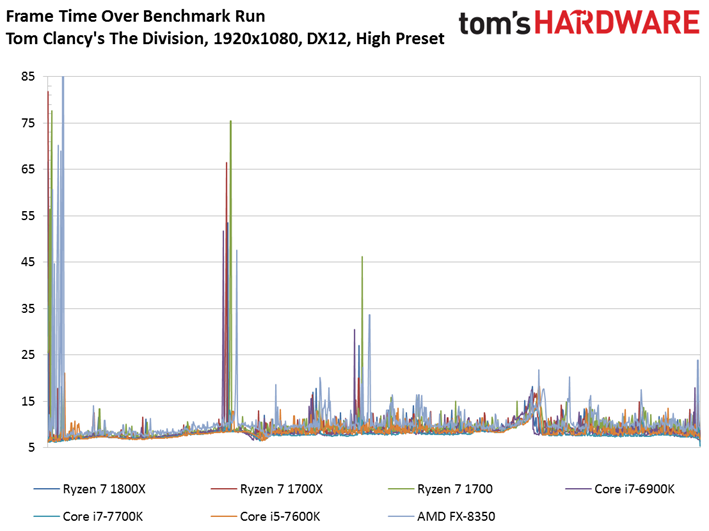

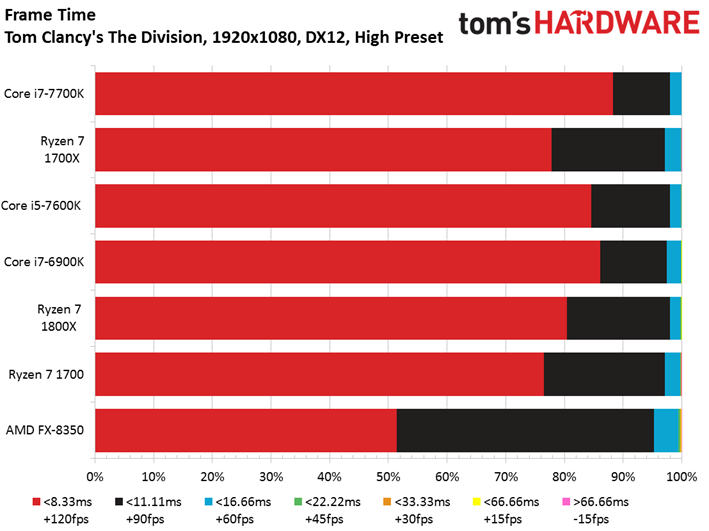

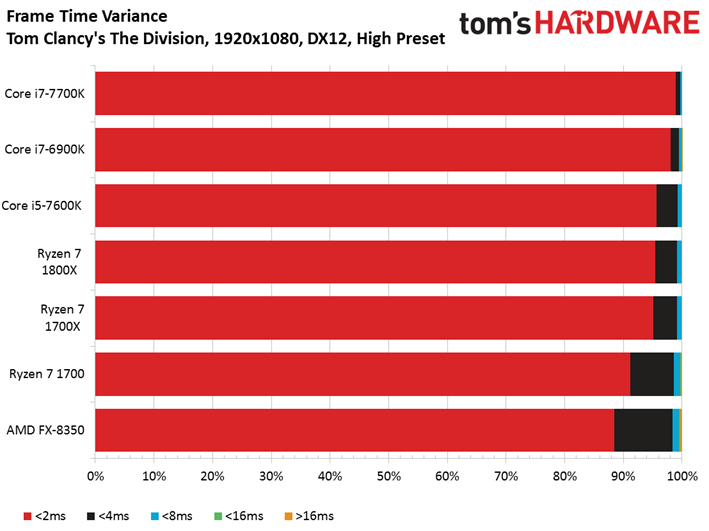

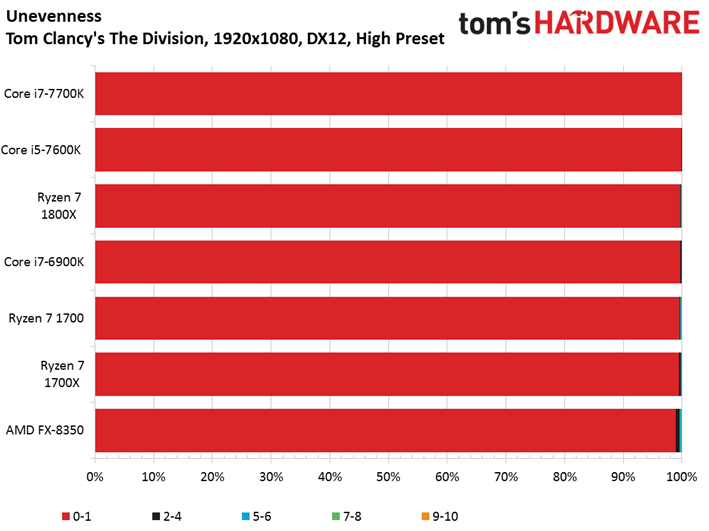

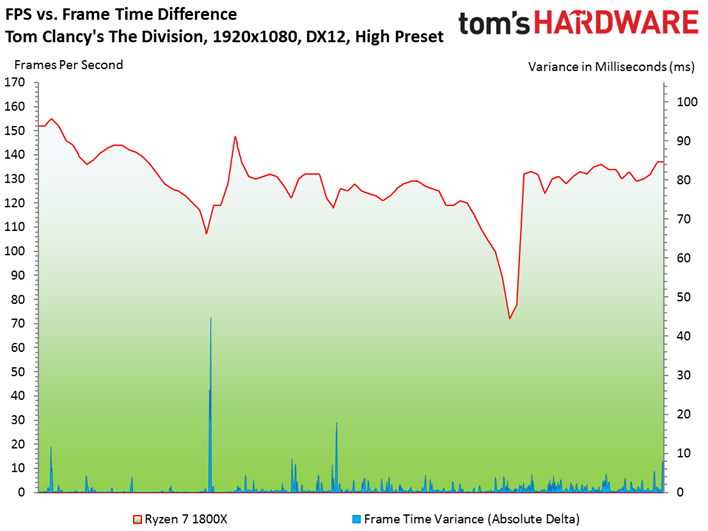

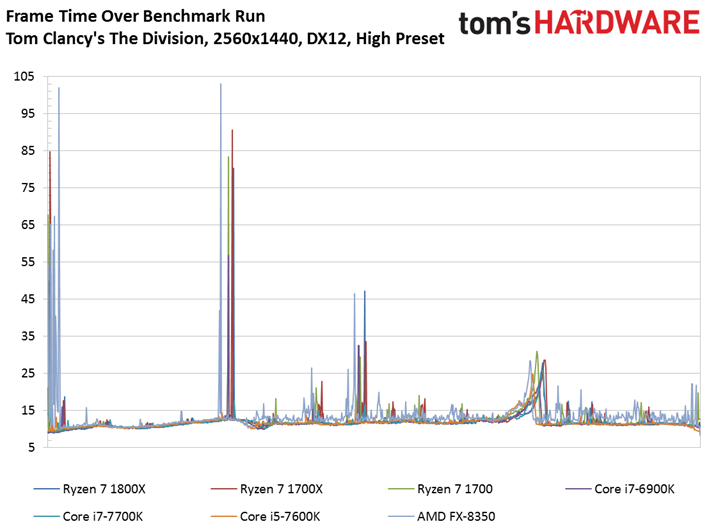

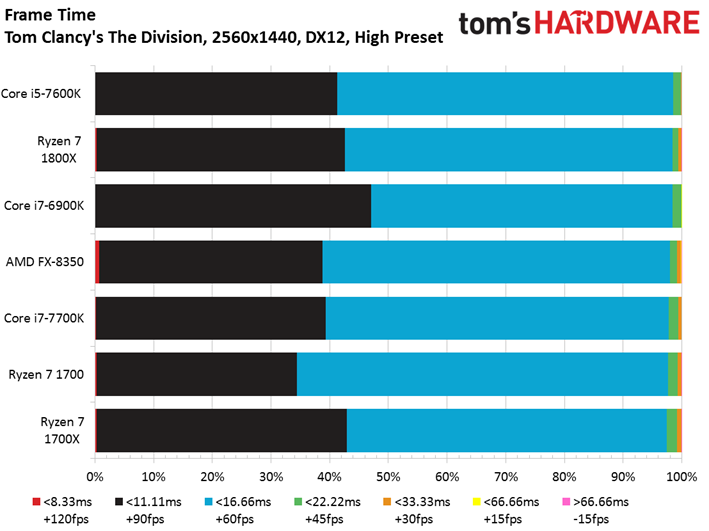

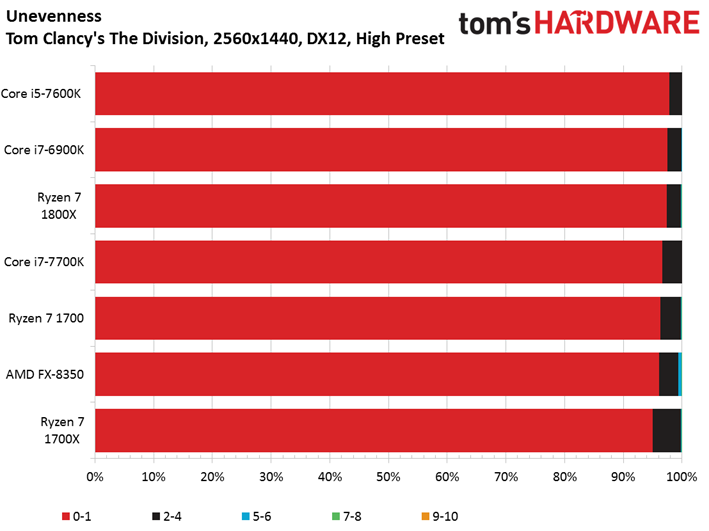

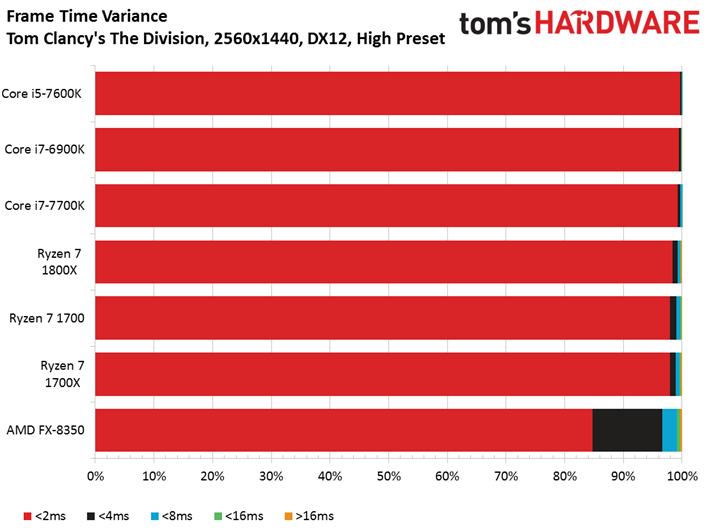

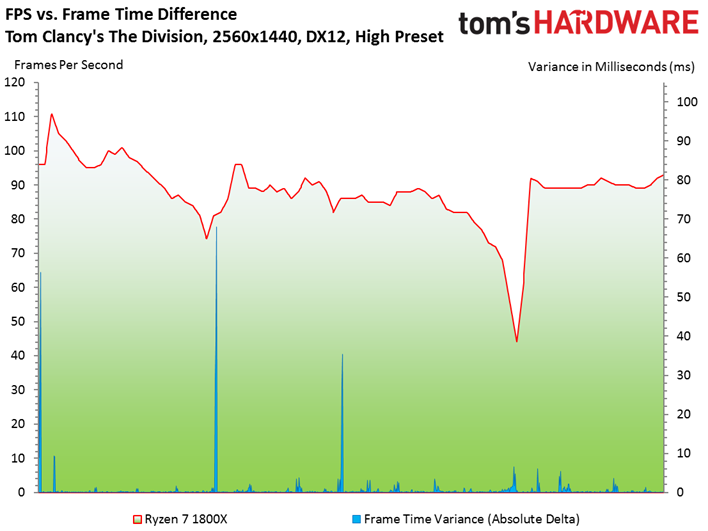

The Division

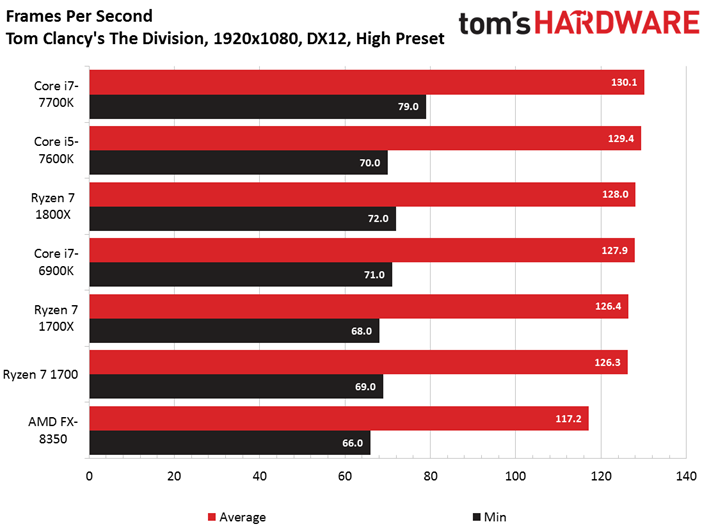

Tom Clancy's The Division is graphically demanding all the way down to 1920x1080, allowing Ryzen 7 1800X to climb in ahead of Core i7-6900K. Both Kaby Lake-based CPUs land in first and second place, but their performance advantage is imperceptible. The variable we cannot ignore here is price: the Core i5-7600K, especially, is a much more affordable solution if you're gaming-focused.


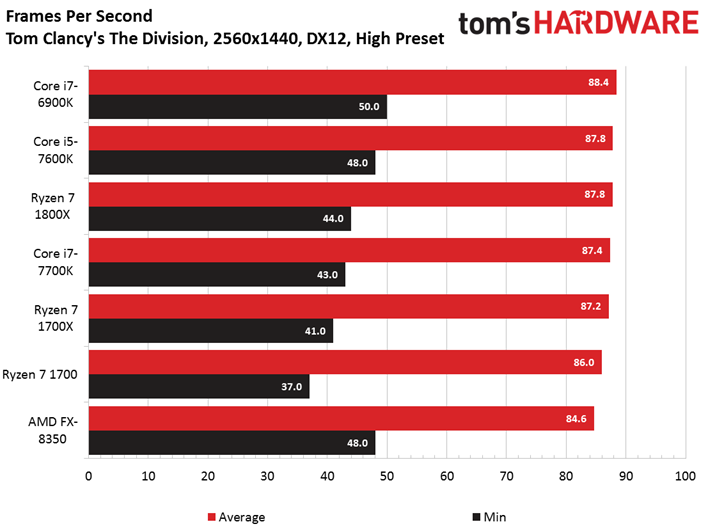

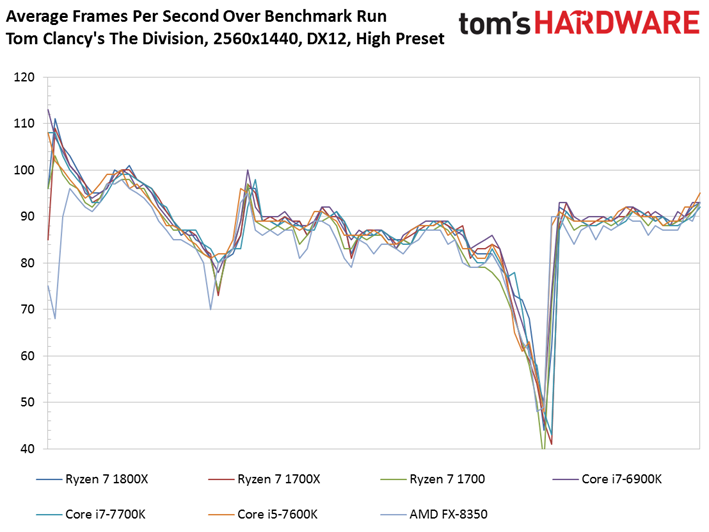

The Core i7-6900K lands back in the lead as we jump to 2560x1440. But at what cost? Curiously, Intel's Core i7-7700K tumbles three positions. We tested this condition several times to verify the result, but it does stand out as a possible outlier. The Ryzen 7 1800X matches the Core i5-7600K, though it does suffer a lower minimum frame rate during the test.


Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.

-

Overall good CPU but compared to Intel it sucks. You are better with Kaby Lake. AMD CPU needs this, that or that...same story as with their video cards. Wait for performance increase which happens but by that time competitor has newer generation product. Look at difference between Nvidia and AMD high end offering.

Almost forgot...to me a real upgrade is going to be Intel 2066 socket. -

Sakkura Most of these performance differences are not that relevant. I mean if you have a 60Hz monitor, practically all these tests max that out.

So Ryzen is definitely better value for money than Broadwell-E even for gaming. Neither of those can currently match Kaby Lake, but they're not supposed to anyway. Ryzen 3/5 will compete with Kaby Lake by being cheaper and presumably only a little slower, and thus better value for money. -

Paul Alcorn 19423857 said: Were all the test cpus at stock clocks?

Yes, we tested at stock clocks. -

Rookie_MIB Well, what I gather from this round up is that Ryzen 7 series is a workstation CPU which can game decently well. So - if you use your computer for productivity (video processing, VMs, compiling etc) in addition to gaming, it's the processor to buy. It's vastly less expensive than Broadwell-E, and performs as well (if not better) in some regards.

If your computer is used for gaming first with some secondary workstation uses, you're better off with Kaby Lake. The almost 5ghz clock speeds rule for gaming where it's not highly optimized for higher thread counts.

My Ryzen 7 1700 arrives today BTW. :D :D :D

I am definitely curious to see how the APU's which are coming fare. An actual decent x86 architecture with a really good IGP? If they could stick a 2GB hunk of HBM on it.... lordy that would be fast. -

BulkZerker Anyone remember when disabling hyperthreading got you an fps boost in video games (ffs that was an issue in battlefield 3)? It's that, all over again. -

mitch074 To be expected - most current games are developed to make use of 4 threads on Intel CPUs, no more no less, once compiled on PC.

As for "it should have been finalized before release", yeah right - even consoles need firmware updates once out to fix non-optimal settings. And if the situation under Linux is any indication, even Intel isn't exempt - they had to rewrite a whole new power management scheme to make use of Sandy Bridge, and even then you may end up with a frozen system now and again if you don't disable power management. We're talking SERVERS here, people! the kind of machine that runs 24/7 and thus working power management means real MONEY!

So to me, a ground-up brand new CPU architecture (something Intel hasn't done in more than 5 years) that works reliably out of the box and can beat the established champion in several benchmarks and real-world tasks for half the price is a GREAT accomplishment. And if Deus Ex and Shadow of Mordor are any indication, Ryzen can indeed kick Kaby Lake in the butt when properly used.

What can be understood from this article is that, CURRENTLY, AMD's Ryzen isn't the best gamer CPU out there as games aren't geared towards it yet. You can game properly with it though, and it more than likely will get faster with time. If you need to build a gaming rig today, go Kaby Lake; if you're building a workstation, go Ryzen - knowing you can game on it too. If you can wait a few months though, all bets are off.

As for AMD's performance in the GPU market, look at how many GameWorks games are out there, and how fast AMD's performance climbs up after game release (from a couple of weeks to a few months) - while it took almost a full year for Nvidia to catch up on DX12 performance!