Reader's Voice: Building Your Own File Server

Build Your Own

There is another solution that is cheaper and offers significantly more flexibility: building your own file server. And there is no reason you can't build your own, just as enthusiasts regularly build their own computers instead of buying pre-built machines.

Of course, there are many decisions to make when building your file server. A few of the most important are how much data you want to store, how much redundancy you require, and how many disks you plan to use. If you want to house lots of information, I recommend minimizing cost per gigabyte rather than buying the biggest drives available. Today, the best value falls around the 1 TB range. I personally like RAID 5 because it can tolerate the failure of a single hard drive while giving up only one drive's worth of capacity to parity. If you are going to use more than eight to 10 drives, you should probably either build multiple RAID 5 arrays with four or five hard drives each or use RAID 6, which shields against the failure of two disks at once.
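To make the trade-off concrete, here is a minimal sketch of the arithmetic behind those choices. The function names and the drive prices are hypothetical, chosen only for illustration; the capacity rule (RAID 5 spends one drive on parity, RAID 6 spends two) is the standard one.

```python
def usable_tb(num_drives: int, drive_tb: float, parity_drives: int) -> float:
    """Usable capacity of one parity array.

    RAID 5 spends one drive's worth of space on parity, RAID 6 spends two.
    """
    if num_drives <= parity_drives:
        raise ValueError("an array needs more drives than it spends on parity")
    return (num_drives - parity_drives) * drive_tb


def cost_per_gb(price_usd: float, drive_tb: float) -> float:
    """Price per gigabyte, counting 1 TB as 1000 GB the way drive vendors do."""
    return price_usd / (drive_tb * 1000)


# Ten 1 TB drives arranged three different ways.
single_raid5 = usable_tb(10, 1.0, parity_drives=1)    # one big RAID 5 array
two_raid5 = 2 * usable_tb(5, 1.0, parity_drives=1)    # two five-drive RAID 5 arrays
single_raid6 = usable_tb(10, 1.0, parity_drives=2)    # one big RAID 6 array

print(f"One RAID 5 array:  {single_raid5} TB usable, survives 1 failure")
print(f"Two RAID 5 arrays: {two_raid5} TB usable, survives 1 failure per array")
print(f"One RAID 6 array:  {single_raid6} TB usable, survives any 2 failures")

# Hypothetical prices, for illustration only.
print(f"1 TB drive at $90:    ${cost_per_gb(90, 1.0):.3f} per GB")
print(f"1.5 TB drive at $150: ${cost_per_gb(150, 1.5):.3f} per GB")
```

With ten drives, splitting into two RAID 5 arrays and building one RAID 6 array both cost two drives of capacity. The difference is in which failures they survive: RAID 6 tolerates any two drives failing, while the split RAID 5 layout only tolerates two failures if they land in different arrays.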

Enclosure And Storing Disks

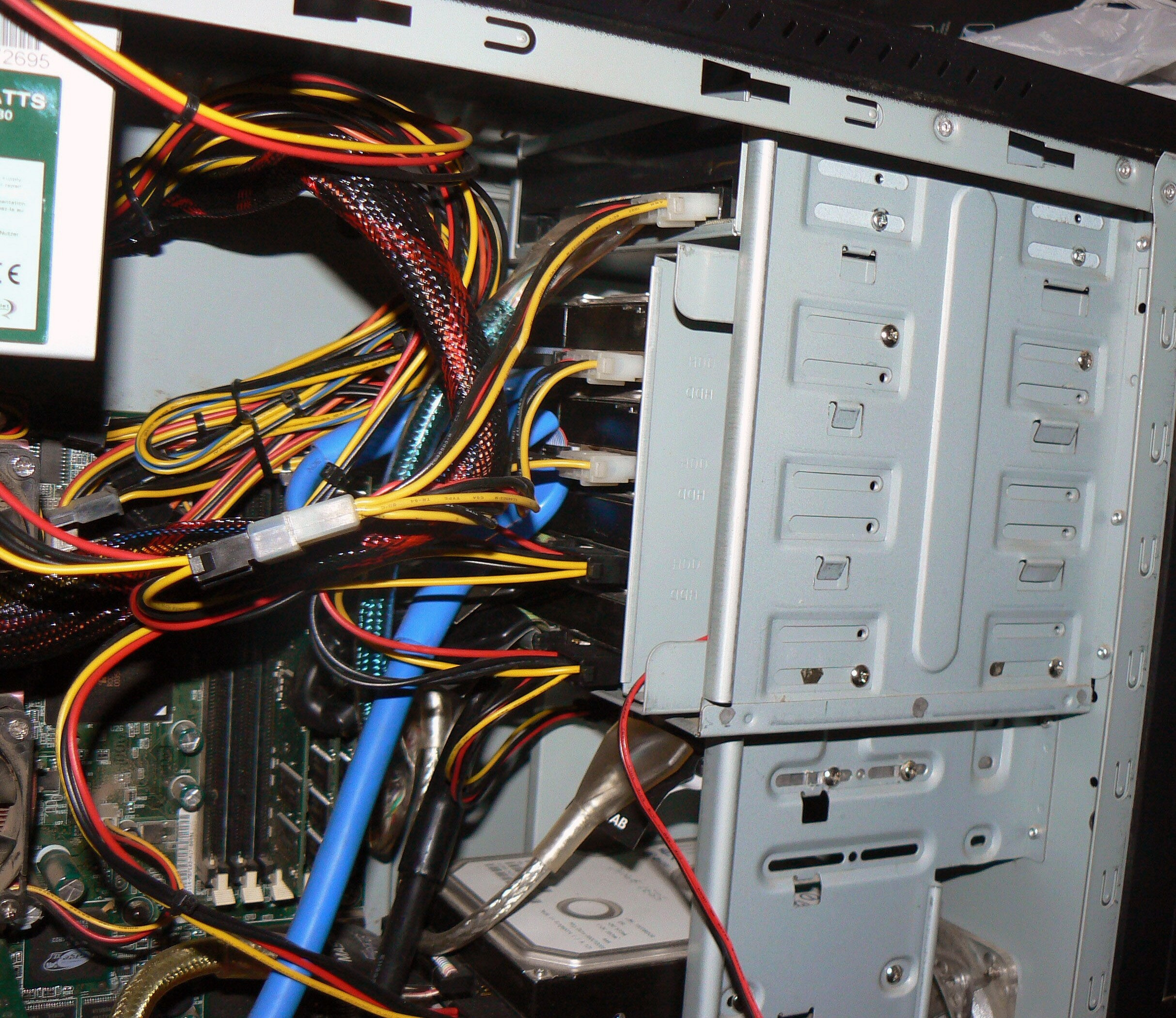

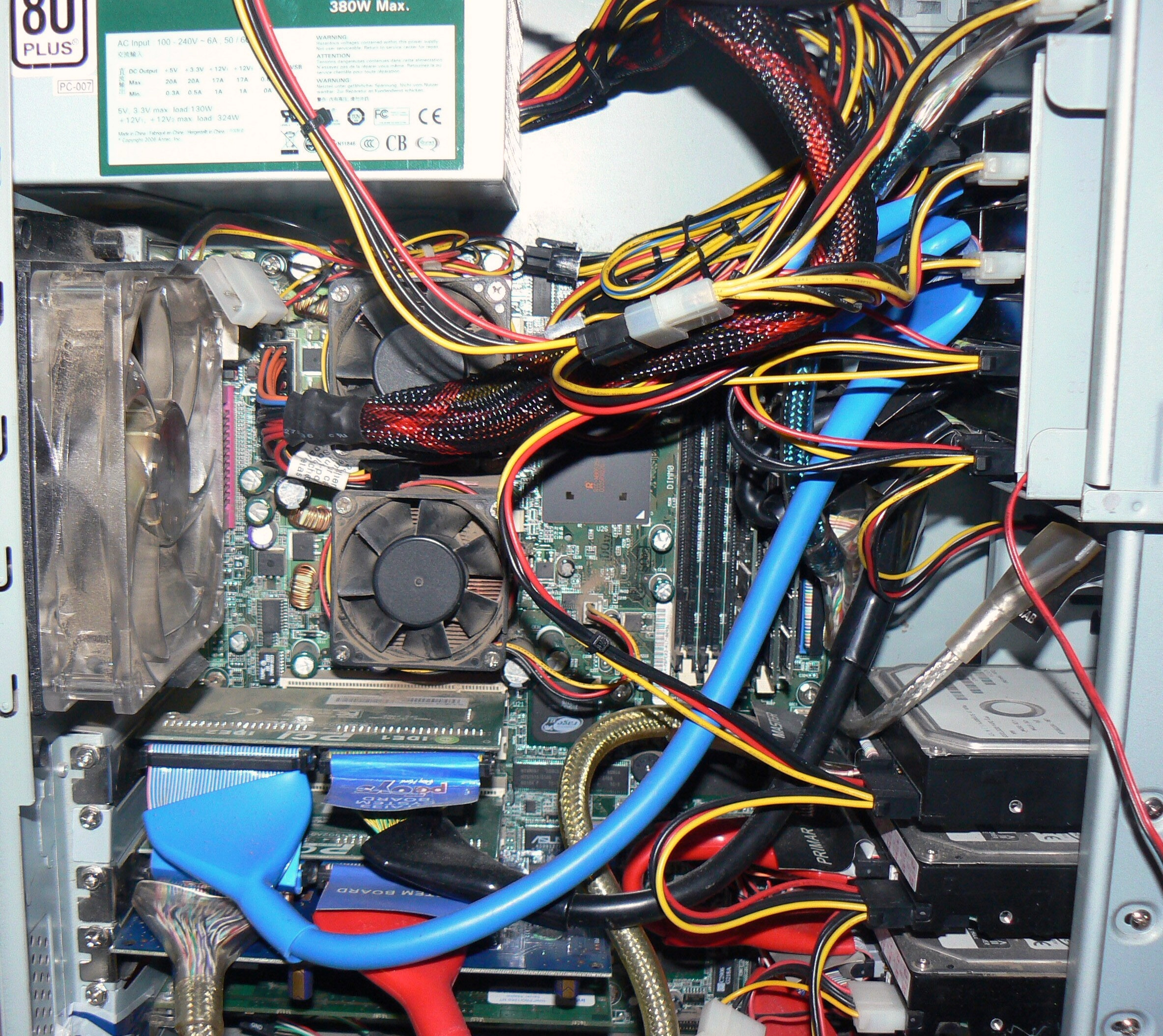

You will have to buy an enclosure big enough to house all of your hard drives. If you buy an enclosure that is too small, you can always switch to a larger one later on.

The enclosure will need to keep all the hard drives reasonably cool. There are many enclosures that offer decent cooling. For my first file server, I used a generic no-name case. Its best feature was a 120 mm fan in front of the hard drive rack and a 120 mm exhaust fan. I added a Cooler Master 4-in-3 module with a dedicated 120 mm fan to cool the hard drives. This is a great way to hold hard drives. The only downside is you have to remove the whole module to change a single disk.

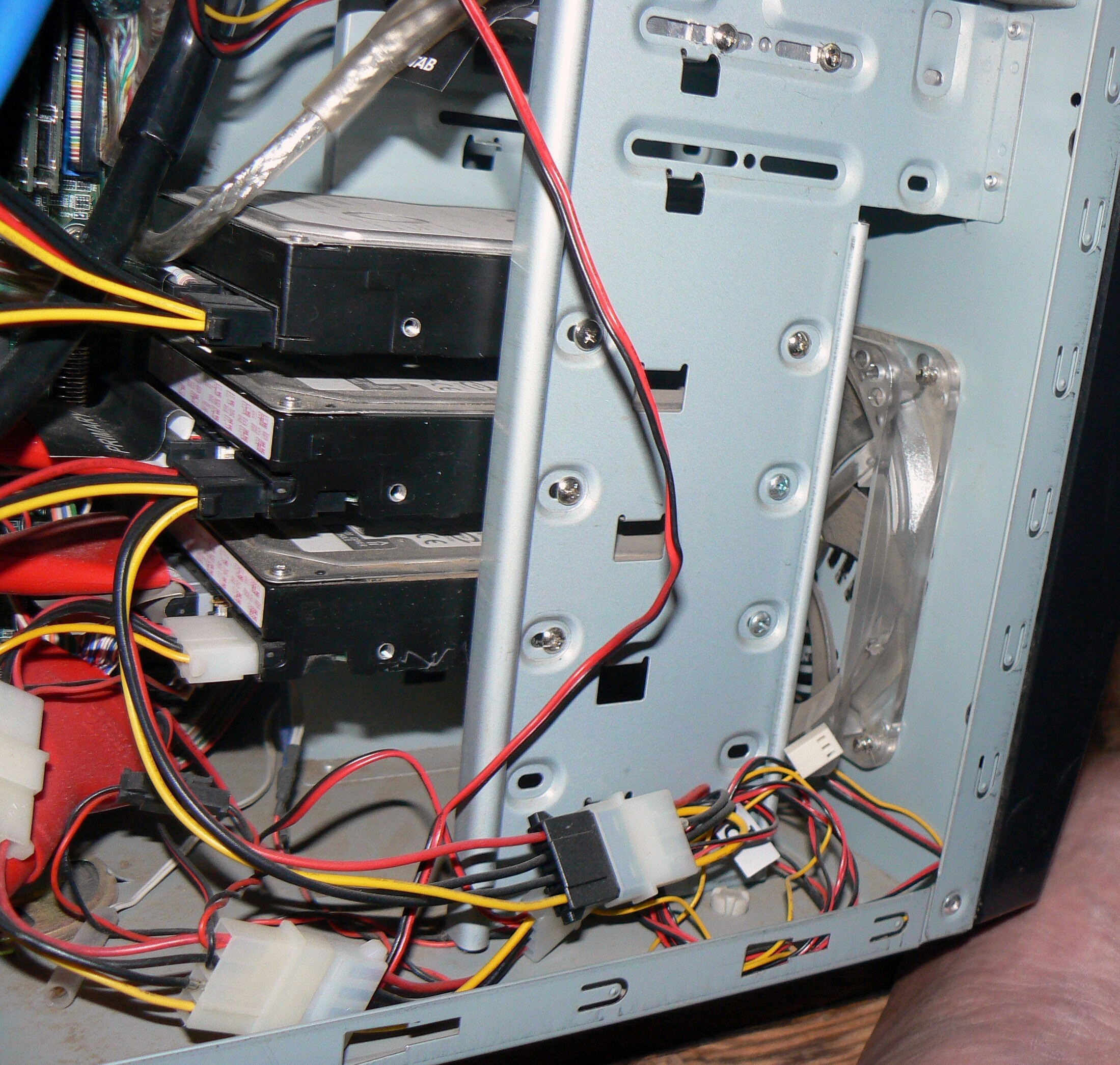

For my second file server, I selected two Supermicro hot-swap SATA hard drive racks, each holding five hard drives. They were much more expensive than the Cooler Master drive holder, but also have more features. The second enclosure uses a very loud 92 mm fan (which I slowed down with a fan controller), sounds an alarm if the fan fails or the temperature gets too high, and shows disk access for each drive. Best of all, you can change hard drives without opening the case, and if the operating system supports it, without even turning off the computer.

Network Interface


You will want a Gigabit Ethernet interface for your file server to help speed up data transfer rates. You will likely use jumbo frames, so make sure your Ethernet switch and onboard controller both support them (most new devices do).

Ethernet was originally specified with a maximum payload size of 1,500 bytes. This was plenty when the technology ran at 10 Mb/s. When Gigabit Ethernet was introduced, the overhead associated with small packets became significant. The industry reached a de facto agreement to support larger payloads, and 9,000 bytes is the size that was picked. This means you can transmit the same amount of data with one-sixth as many packets, and therefore about one-sixth of the per-packet overhead.
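The six-fold figure is easy to check. The sketch below counts frames for a hypothetical 900 MB transfer and compares how much of each frame's time on the wire is payload. It assumes the standard fixed Ethernet cost per frame (preamble and start delimiter, header, frame check sequence, inter-frame gap) and ignores the IP and TCP headers carried inside the payload, so treat the percentages as approximate.

```python
# Fixed per-frame cost on the wire, in bytes:
# preamble + start delimiter (8), Ethernet header (14),
# frame check sequence (4), inter-frame gap (12).
WIRE_OVERHEAD_BYTES = 8 + 14 + 4 + 12


def frames_needed(data_bytes: int, payload_bytes: int) -> int:
    """Number of frames required to carry data_bytes (integer ceiling division)."""
    return -(-data_bytes // payload_bytes)


def wire_efficiency(payload_bytes: int) -> float:
    """Fraction of on-the-wire bytes that are payload for a full-sized frame."""
    return payload_bytes / (payload_bytes + WIRE_OVERHEAD_BYTES)


data = 900_000_000  # hypothetical 900 MB file

standard_frames = frames_needed(data, 1500)
jumbo_frames = frames_needed(data, 9000)

print(f"Standard frames: {standard_frames}")
print(f"Jumbo frames:    {jumbo_frames}")
print(f"Ratio:           {standard_frames / jumbo_frames:.0f}x fewer frames")
print(f"Wire efficiency: {wire_efficiency(1500):.1%} vs {wire_efficiency(9000):.1%}")
```

The gain in raw wire efficiency is only a couple of percentage points. The larger benefit is that the server and clients handle one-sixth as many frames, which is where the CPU savings come from.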

In practice, you will save CPU cycles and see increased throughput using jumbo frames if the network is the limiting factor when transferring data. A switch that doesn't support jumbo frames might get confused and not pass the packets, so disable the feature on your server if your switch can't handle it.
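One way to check whether every device on the path really passes 9,000-byte frames is to send a ping that is exactly that large and forbid fragmentation. The sketch below only builds the command; it assumes the Linux iputils `ping` (where `-M do` prohibits fragmentation and `-s` sets the ICMP payload size), and the host address is a placeholder. Other operating systems use different flags.

```python
IPV4_HEADER_BYTES = 20  # IPv4 header without options
ICMP_HEADER_BYTES = 8   # ICMP echo request header


def ping_payload_for_mtu(mtu: int) -> int:
    """Largest ICMP payload that fits in a single IPv4 packet of size mtu."""
    return mtu - IPV4_HEADER_BYTES - ICMP_HEADER_BYTES


def jumbo_ping_command(host: str, mtu: int = 9000) -> list[str]:
    """Build a Linux iputils ping command that tests the path without fragmenting."""
    payload = ping_payload_for_mtu(mtu)
    return ["ping", "-M", "do", "-c", "3", "-s", str(payload), host]


# 192.168.1.10 is a placeholder for another jumbo-enabled machine on your network.
print(" ".join(jumbo_ping_command("192.168.1.10")))
```

If the replies come back, the path handles jumbo frames end to end. If the pings time out or report that the message is too long while a normal ping works, some device in between is still limited to standard frames.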

You can get an eight-port switch for about $40. Most modern motherboards have Gigabit Ethernet connectivity onboard, but on the off chance that your motherboard does not support it, get a PCI-X or PCI Express (PCIe) card instead of a 32-bit PCI card. I have had good success with Intel’s and Broadcom’s PCI-X Ethernet cards.

-

wuzy Yet again why is this article written so unprofessionally? (by an author I've never heard of) Any given facts or numbers are just so vague! It's vague because the author has no real technical knowledge behind this article and is basing it mainly on experience instead. That is not good journalism for tech sites.

What I meant by experience, I didn't mean self-learning. I meant developing your own ideas and not doing extensive research on every technical aspect for the specific purpose. -

wuzy And even if this is just a "Reader's Voice" I'd expect a minimum standard to be set by BoM on articles they publish to their website.

Most IT professionals I have come to recognise in the Storage forum (including myself) can write a far higher caliber article than this. -

motionridr8 FreeNAS? Runs FreeBSD. Supports RAID. Includes tons of other features that yes, you can get working in a Linux build, but these all work with just the click of a box in the sleek web interface. Features include iTunes DAAP server, SMB shares, AFP shares, FTP, SSH, UPnP server, Rsync, Power Daemon, just to name some. Installs on a 64 MB USB stick. Mine has been running 24/7 for over a year with not a single problem. Designed to work with legacy or new hardware. I can't recommend anything else. www.freenas.org -

bravesirrobin I've been thinking on and off about building my own NAS for around a year now. While this article is a decent overview of how Jeff builds his NAS's, I also find it dancing with vagueness as I'm trying to narrow my parts search. Are you really suggesting we use PCI-X server motherboards? Why? (Besides the fact that their bandwidth is separate from normal PCI lanes.) PCI Express has that same upside, and is much more available in a common motherboard.

You explain the basic difference between fakeRAID and "real RAID" adequately, but why should I purchase a controller card at all? Motherboards have about six SATA ports, which is enough for your rig on page five. Since your builds are dual-CPU server machines to handle parity and RAID building, am I to assume you're not using a "real RAID" card that does the XOR calculations sans CPU? (HBA = Host Bus Adapter?)

Also, why must your RAID cards support JBOD? You seem to prefer a RAID 5/6 setup. You lost me COMPLETELY there, unless you want to JBOD your OS disk and have the rest in a RAID? In that case, can't you just plug your OS disk into a motherboard SATA port and the rest of the drives into the controller?

And about the CPU: do I really need two of them? You advise "a slow, cheap Phenom II", yet the entire story praises a board hosting two CPUs. Do I need one or two of these Phenoms -- isn't a nice quad core better than two separate dual core chips in terms of price and heat? What if I used a real RAID card to offload the calculations? Then I could use just one dual core chip, right? Or even a nice Conroe-L or Athlon single core?

Finally, no mention of the FreeNAS operating system? I've heard about installing that on a CF reader so I wouldn't need an extra hard drive to store the OS. Is that better/worse than using "any recent Linux" distro? I'm no Linux genius so I was hoping an OS that's tailored to hosting a NAS would help me out instead of learning how to bend a full blown Linux OS to serve my NAS needs. This article didn't really answer any of my first-build NAS questions. :(

Thanks for the tip about ECC memory, though. I'll do some price comparisons with those modules. -

ionoxx I find that there is really no need for dual core processors in a file server. As long as you have a RAID card capable of making its own XOR calculations for the parity, all you need is the most energy efficient processor available. My file server at home is running a single core Intel Celeron 420 and I have 5 WD7500AAKS drives plugged into a HighPoint RocketRAID 2320. I copy over my gigabit network at speeds of up to 65 MB/s. Idle, my power consumption is 105 W and I can't imagine load being much higher. Though I have to say, my Celeron barely makes the cut. The CPU usage goes up to 70% while there are network transfers, and my switch doesn't support jumbo frames. -

raptor550 Ummm... I appreciate the article but it might be more useful if it were written by someone with more practical and technical knowledge, no offense. I agree with wuzy and brave.

Seriously, what is this talk about PCI-X and ECC? PCI-X is rare and outdated and ECC is useless and expensive. And dual CPU is not an option; remember, electricity gets expensive when you're talking 24x7. Get a cheap low power CPU with a full featured board and 6 HDDs and you're good to go for much cheaper.

Also your servers are embarrassing. -

icepick314 Has anyone tried NAS software such as FreeNAS?

And I'm worried about RAID 5/6 becoming obsolete because the size of hard drive is becoming so large that error correction is almost impossible to recover when one of the hard drive dies, especially 1 TB sized ones...

I've heard RAID 10 is a must in times of 1-2 TB hard drives are becoming more frequent...

also can you write pro vs con on the slower 1.5-2 TB eco-friendly hard drives that are becoming popular due to low power consumption and heat generation?

Thanks for the great beginner's guide to building your own file server... -

icepick314 Also what is the pro vs con in using motherboard's own RAID controller and using dedicated RAID controller card in single or multi-core processors or even multiple CPU?

Most decent motherboards have RAID support built in but I think most are just RAID 5, 6 or JBOD.... -

Lans I like the fact the topic is being brought up and discussed but I seriously think the article needs to be expanded and cover a lot more details/alternative setup.

For a long time I had a hardware RAID 5 with 4 disks (PCI-X) on dual Athlon MP 1.2 GHz with 2 GB of ECC RAM (Tyan board, forgot the exact model). With hardware RAID 5, I don't think you need such powerful CPUs. If I recall, the RAID controller cost about as much as 4x pretty cheap drives (smaller drives since I was doing RAID and didn't need THAT much space, it was at most 50% full for the life of the server, also wanted to limit cost a bit).

Then I decided all I really needed was a Pentium 3 with just 1 large disk (less reliable but good enough for what I needed).

For the past year or so, I have not had a file server up, but I am planning to rebuild a very low powered one. I was eyeing the Sheeva Plug kind of thing. Or maybe even a wireless router with USB storage support (Asus has a few models like that).

Just to show how wide this topic is... :-)