In Theory: How Does Lynnfield's On-Die PCI Express Affect Gaming?


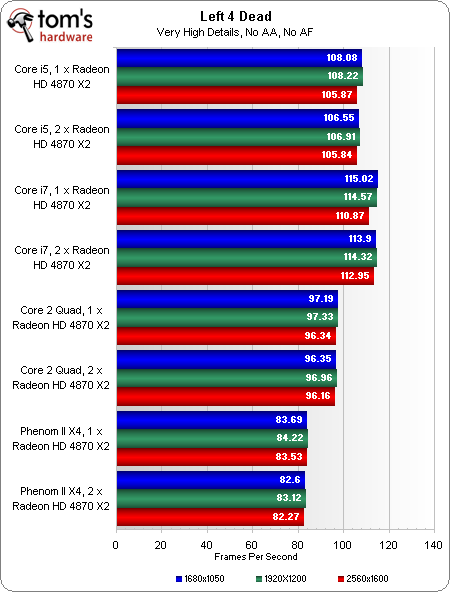

Benchmark Results: Left 4 Dead

Even more so than Far Cry 2, Left 4 Dead is very platform/CPU-dependent. In this case, the extreme dependency yields a fairly interesting chart when AA/AF aren’t being employed.

First of all, it’s interesting to note that Core i7 holds onto that slight advantage, even with a single Radeon HD 4870 X2 in play (and all platforms enjoying a full x16 link to the graphics subsystem). Core i5 falls just slightly behind, followed by the Core 2 Quad system and Phenom II setup. It looks like the theoretical advantage i5 held in 3DMark Vantage isn't going to pan out in actual gaming.

Tossing a second card into the mix utilizing CrossFire technology does absolutely nothing for performance. If anything, there’s a one or two frame penalty at the lower resolutions, which the Core i7 suffers as well (so it’s probably a result of overhead rather than a consequence of dividing 16 lanes into two eight-lane links for three of the four platforms).
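For a rough sense of what dividing 16 lanes into two eight-lane links means in raw bandwidth, here is a minimal sketch. It assumes PCI Express 2.0 signaling (5 GT/s per lane with 8b/10b encoding), which the text above does not state, and the function name `link_bandwidth_gb_s` is purely illustrative. The figures are theoretical per-direction peaks, not measured throughput.

```python
# Assumption: PCI Express 2.0 signaling, 5 GT/s per lane, 8b/10b encoding.
TRANSFERS_PER_SECOND = 5.0e9      # raw bit transfers per lane per second
ENCODING_EFFICIENCY = 8 / 10      # 8 payload bits carried per 10 bits on the wire


def link_bandwidth_gb_s(lanes: int) -> float:
    """Theoretical peak bandwidth of one link, per direction, in GB/s."""
    payload_bits_per_second = lanes * TRANSFERS_PER_SECOND * ENCODING_EFFICIENCY
    return payload_bits_per_second / 8 / 1e9  # bits -> bytes -> gigabytes


if __name__ == "__main__":
    for lanes in (16, 8):
        print(f"x{lanes} link: {link_bandwidth_gb_s(lanes):.1f} GB/s per direction")
```

Under those assumptions, each x8 link offers half the peak bandwidth of an x16 link. Whether that halving matters depends on how much traffic the game actually pushes across the bus, which is what the benchmarks here try to show.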

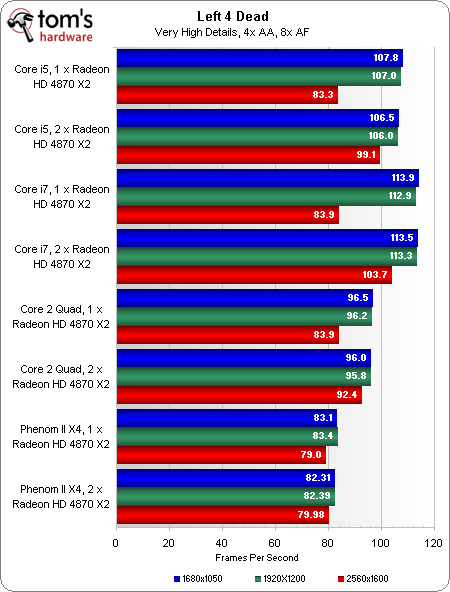

Because these configurations are so massively processor-bound, adding 4x anti-aliasing and 8x anisotropic filtering doesn’t do anything to hit performance at 1680x1050 or 1920x1200. There is an impact at 2560x1600, though, which makes this one setting an interesting point of comparison between the four different architectures. Again, we’re looking at the red bars only.

Core i7, Core i5, and Core 2 Quad achieve the same results at 2560 with one 4870 X2. The i7’s twin x16 links help it get the most out of a second card, while i5’s architecture leads to it placing second at the same resolution when another 4870 X2 is added. The Core 2 Quad’s speed-up is the smallest, amounting to just 8.5 frames.


bucifer: I do not agree with the choices made in this article. You don't buy 2*4870x2 and then slam in an X4 920. The choices do not make sense.

You should have used the best CPU (e.g. an i7 920 OC'd to 4GHz) to try to eliminate all bottlenecks and truly emphasize the limitations of x8/x16 PCIe lanes.

The rest of the testing was done to include the new i5, which is not bad but not relevant for the bottleneck. I know many people would like to see how i5+P55 handles the GPU power, but it's a highly unlikely scenario that someone would actually buy such powerful and expensive cards and pair them with a cheaper CPU and a limited platform.

I just think you should have tested things separately in different articles.

radnor: I know you used a 2.8GHz Deneb for clock-per-clock comparisons. Makes sense. But a 2.8GHz Deneb is really not an unlocked part. Usually unlocked versions go to 3.5GHz on stock VID; non-BE parts can reach 3.3GHz safely.

A 2.8 Deneb/Lynnfield/Bloomfield have completely different prices. You are comparing an R6 vs an R1. i7 is the Busa trouting everybody else. Of course the prices are very different.

cangelini: Gents, if you want to see the non-academic comparisons, I have the 965 BE compared in two other pieces for more real-world comparisons!

http://www.tomshardware.com/reviews/intel-core-i5,2410.html

and

http://www.tomshardware.com/reviews/core-i5-gaming,2403.html

Thanks for the feedback notes!

bounty: "Will Core i5 handicap you right out of the gate with multi-card configurations? The aforementioned gains evaporated in real-world games, where Core i7’s trended slightly higher, perhaps as a result of Hyper-Threading or its additional memory channel"

Well, you answered whether i5 will handicap you without Hyper-Threading, with x8 by x8 and dual channel. It will, by 5-10%. If you wanted to narrow it down to memory channels, Hyper-Threading, or the x8 by x8, you could have picked the game with the biggest spread and enabled each of those options selectively. Would have been kinda interesting to see which had the biggest impact.

Shnur: Great article! But then again... I don't see why a 955 wasn't used in this scenario... since the 920 is a thing that nobody uses. We already know that i7 is superior to the AMD flagship in multi-GPU configurations, and you're taking a crappy AMD CPU. Buying a 790GX doesn't mean you're going to cut back on the chip... and you're talking about who's performing better with 8x lanes... From my point of view it's a bad comparison, and there should have been a chip that would actually be able to show a difference between one card and two, and then between 16x and 8x.

And thanks for the other linked reviews, but I'm not talking about comparing the chips themselves. I'm trying to figure out whether 8x is still good enough or if I need to pay more for 16x.

cangelini: Shnur,

Thanks much for the feedback--again, this wasn't meant to be about the CPUs, but the PCI Express links. If you want to know about the processors themselves at retail clocks, check out the gaming story, which does reflect x16/x16 and x8/x8 in the LGA 1366 and LGA 1156 configs.

Hope that helps!

Chris

Shadow703793: megabuster wrote: "AMD better have something up its sleeves or it's instakill." lol! Do you mean instagib?

Joking aside, AMD needs something to counter this.