But Can It Run Crysis? 10 Years Later

For Crysis' 10th anniversary, we tested all of the flagship AMD and Nvidia GPUs released over the last decade at 1080p, 1440p, and 4K.


Final Analysis

Our benchmark analysis makes it seem like we’re dealing with a modern game challenging the latest graphics cards. There’s just one GPU able to average more than 60 FPS at 3840x2160? Seriously?

Yeah, that’s Crysis for you. Talk about a load of cool data, though.

On the AMD side, it was interesting to track the evolution from TeraScale 1 (Radeon HD 3870 and 4870) to TeraScale 2 (Radeon HD 5870), TeraScale 3 (Radeon HD 6970), and ultimately the various iterations of Graphics Core Next. Specifically, the jump from a VLIW-based architecture to a scalar SIMT one showed through in every one of our benchmark charts. AMD’s Southern Islands ISA whitepaper from 2012 made it clear that GCN set forth to improve resource utilization, calling out stable and predictable performance in particular. The scaling we observed from Radeon HD 7970 and up bears this out.

Nvidia’s architectural evolution appears better-paced. From Tesla (GeForce 8, 9, and 200) to Fermi (GeForce 400 and 500), Kepler (GeForce 600 and 700), Maxwell (GeForce 900), and Pascal (GeForce 10), the gains are fairly consistent. Further, while high-end AMD and Nvidia cards are similarly bottlenecked at 1920x1080, the GeForce boards enjoy a roughly 10% higher ceiling than the Radeons. Whether this is due to Crytek’s CryEngine 2, a lack of driver optimizations for 10-year-old games, or some other platform constraint isn’t clear.

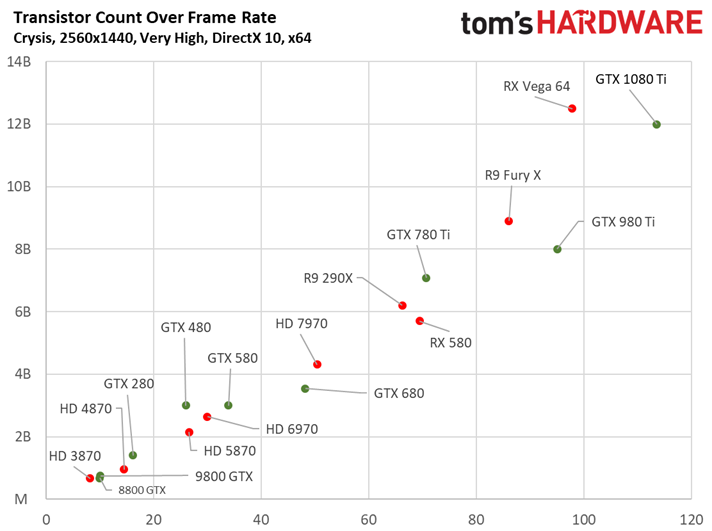

We don’t often get the opportunity to chart several generations of graphics flagships against each other, so we came up with the idea to plot GPU transistor count over frame rate.

By tracking from left to right (frame rate), it’s easy to compare competing generations of graphics hardware. For instance, we can see how Radeon HD 3870 landed behind GeForce 8800 GTX (true to what we observed 10 years ago). Similarly, Radeon HD 4870 debuted at a disadvantage to GeForce GTX 280, but it used a less complex processor, too. In 2010, Nvidia introduced GeForce GTX 480 with performance that actually trailed Radeon HD 5870, which was doubly problematic since the 250W card’s GF100 GPU incorporated ~39% more transistors. Nvidia ironed out its issues with GeForce GTX 580, and AMD’s answer, Radeon HD 6970, underwhelmed. The following two generations saw AMD and Nvidia trading blows. More recently, in 2015, AMD’s Radeon R9 Fury X came pretty close to matching GeForce GTX 980 Ti’s performance in our launch story. But older games tend to favor Nvidia’s architecture, which is why you see GM200 and its eight billion transistors so far ahead of the more complex Fiji chip. It’s the last data point that hurts most, though: Vega 64 adds a ton of transistors, but lands quite a way behind GeForce GTX 1080 Ti.
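For readers who want to reproduce the arithmetic behind a comparison like the ~39% figure, here is a minimal Python sketch. The transistor counts it uses (about 3.0 billion for GF100 and about 2.15 billion for the Radeon HD 5870's Cypress GPU) are commonly cited vendor figures that we are assuming here; they are not stated in the text above. The helper names `percent_more` and `fps_per_billion_transistors` are hypothetical, chosen only for illustration.

```python
def percent_more(larger: float, smaller: float) -> float:
    """Return how much bigger `larger` is than `smaller`, as a percentage."""
    if smaller <= 0:
        raise ValueError("smaller must be positive")
    return (larger / smaller - 1.0) * 100.0


def fps_per_billion_transistors(avg_fps: float, transistors_billions: float) -> float:
    """Rough efficiency metric: average frames per second per billion transistors."""
    if transistors_billions <= 0:
        raise ValueError("transistors_billions must be positive")
    return avg_fps / transistors_billions


# Assumed transistor counts in billions (commonly cited, not from this article).
GF100_TRANSISTORS_B = 3.0     # GeForce GTX 480
CYPRESS_TRANSISTORS_B = 2.15  # Radeon HD 5870

gap = percent_more(GF100_TRANSISTORS_B, CYPRESS_TRANSISTORS_B)
print(f"GF100 has about {gap:.1f}% more transistors than Cypress")
```

Feeding an average frame rate from the charts into `fps_per_billion_transistors` gives a single number per card, which is one way to read the same relationship the scatter plot shows visually.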

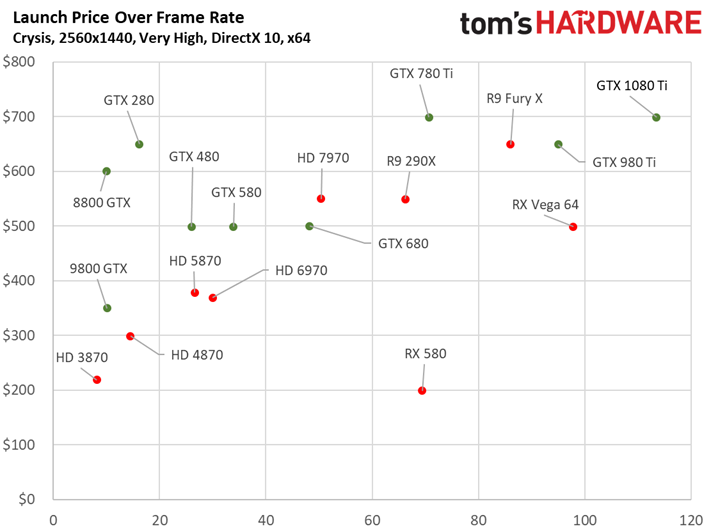

Assigning a launch price to each card yields a very different picture when we plot them over performance. In this context, Radeon RX Vega 64 doesn’t look all that bad in Crysis. And while you cannot find Radeon RX 580s for the $200 AMD originally advertised, it’s kind of cool to see Polaris serving up better performance than Radeon R9 290X for half the price just four years later.


We hope you enjoyed our little jaunt back in time with a game that, 10 years later, still looks amazing as it hammers modern graphics cards at high resolutions. If you still have a copy of Crysis, it may be time to dust it off and take it for another spin.



lun471k Quite interesting. I wonder how the rest of this generation's GPUs would compete in a Crysis benchmark!

Luis XFX This is awesome! Good work! I've always wondered how today's most powerful GPUs would handle Crysis. Crazy to see that even the GTX 1080ti still barely breaks 60fps at 4K. This game is a beast.

envy14tpe Wawawow. Love this comparison. Never seen anyone do this... seriously. Once again Tomshardware is the best.

wh3resmycar 10 years ago i debated someone in the forums who said Crysis was crap since his supposed rig ran Unreal 3 games and COD4 just fine. i wonder if that guy is still here. i mean, put Crysis side by side with COD4 circa 2017 and you'd see and probably understand why the former was so far ahead of its time. The map scale and the physics, too.

gasaraki So the question "But can it run Crysis?" still applies. Only one card can run Crysis at 4K above 60fps.

Kridian What would really be interesting is if they redid Crysis with Chris Roberts' updated engine (Star Engine/Lumberyard). What kind of performance gains would we see from a software standpoint?

hdmark can anyone give me a clean cut answer as to why crysis was/is so demanding? Is it that they took all of the new graphics technologies at the time and put them into one game? and then over time those technologies matured/were optimized and now we can see games that are better for less?

was it just poorly optimized?

jonajohnson3 Right now I'm playing games like Gmod and TF2 quite well with an 11 year old pc! Yes, they might be really old titles too, but they still run well. So for me, yes, I can run an 11 year old pc.

DataMeister @HDMARK, I think the game engine for the original Crysis was just poorly optimized, because Crysis 2 ran better on the same hardware.

therickmu25 There will never be another Crysis. Imagine a developer releasing a game where a 1080ti couldn't run it on the highest settings in 2017. 1. Optimization played a factor I know, but 2. Because it looked 20x better than any game available.

It was a product of the times where developers were still trying to push the envelope for cutting edge graphical techniques. Pretty cool