Opinion: AMD, Intel, And Nvidia In The Next Ten Years

A Lesson In History: The Death Of The Sound Card

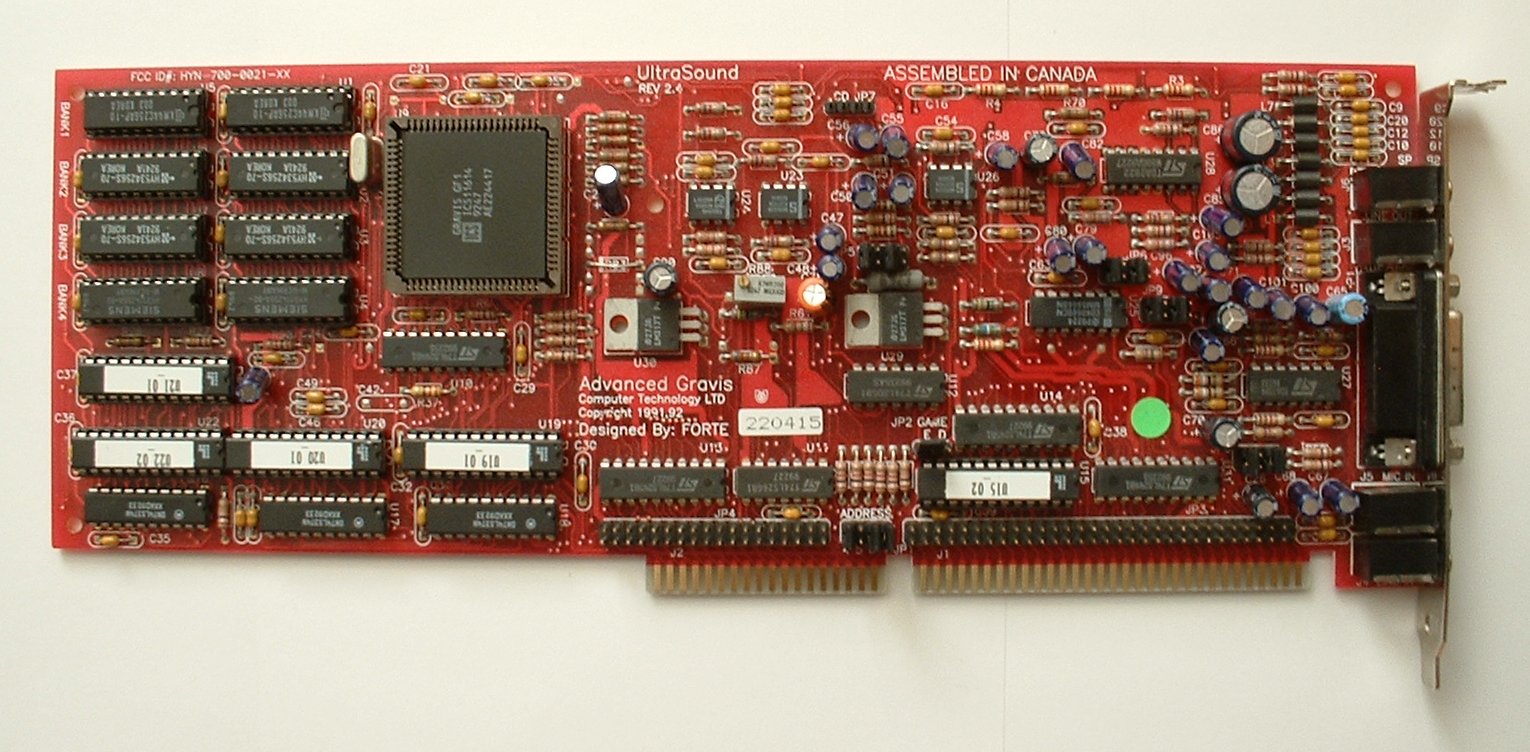

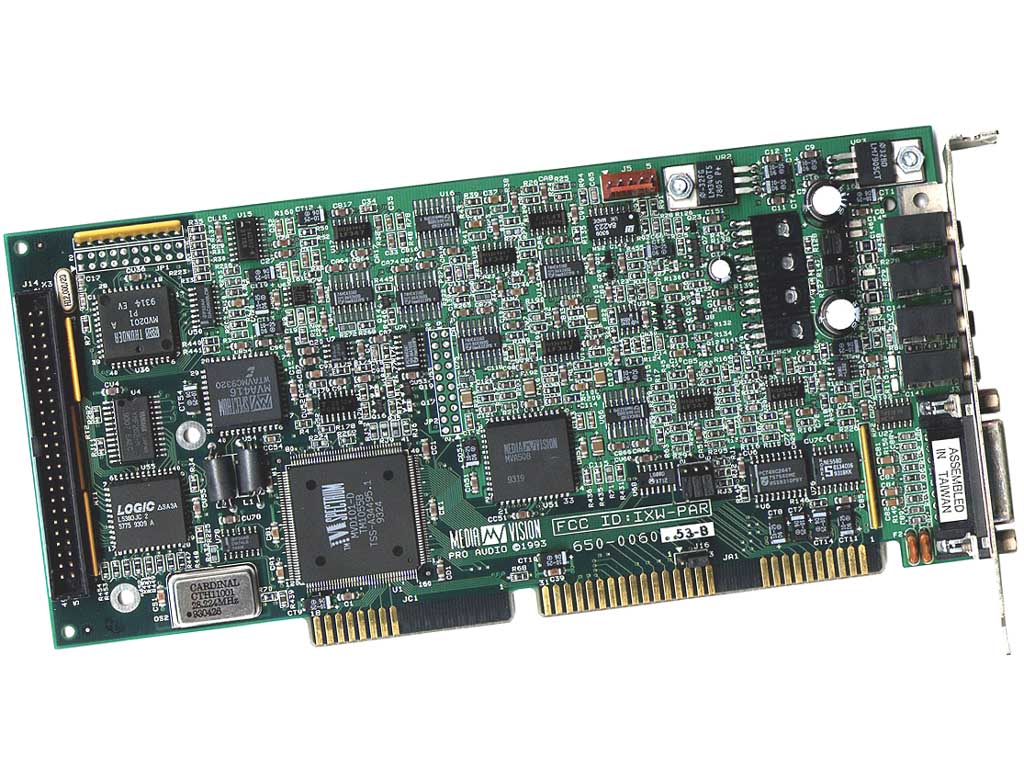

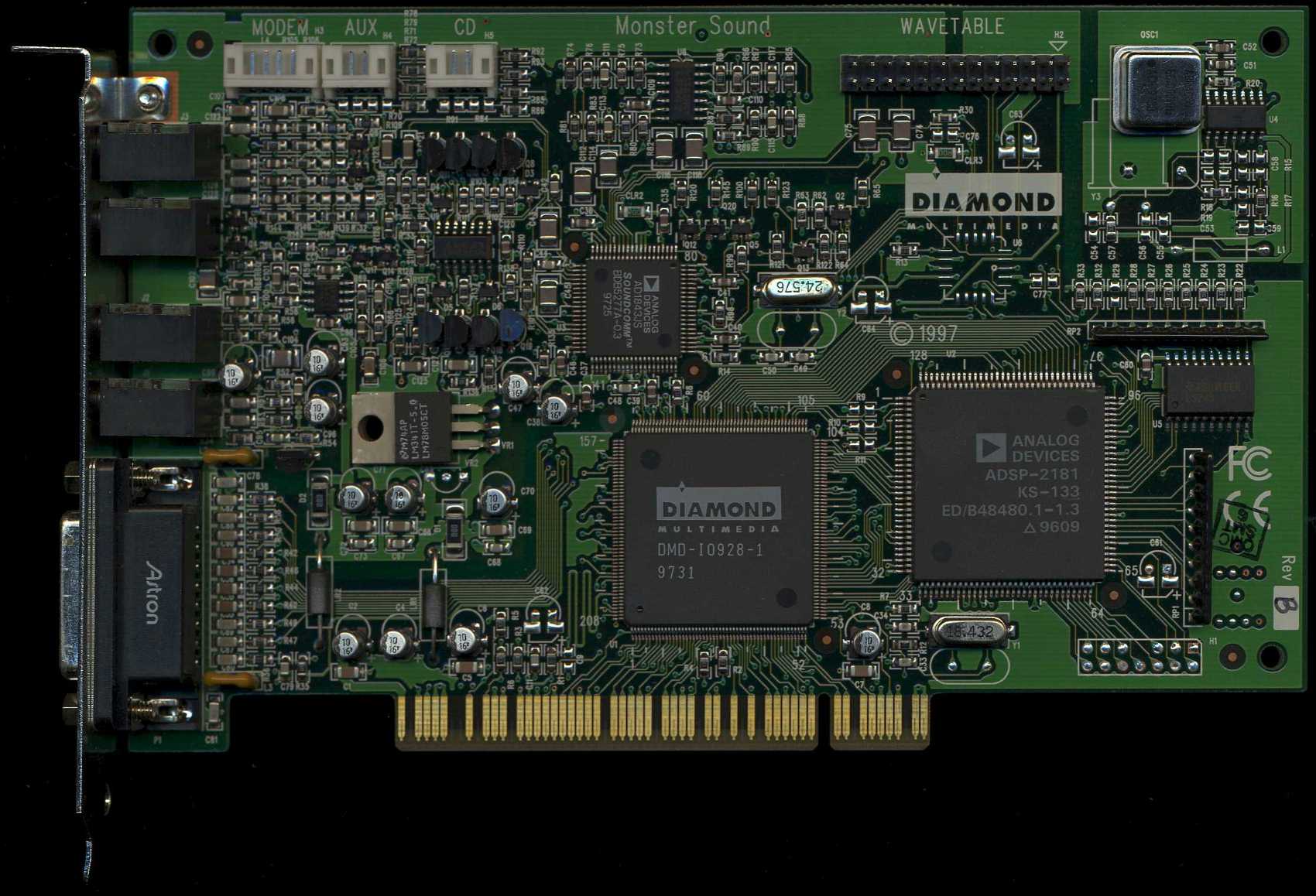

You can trace the evolution of the gaming sound card from the PC speaker to the Tandy 1000, Disney Sound Source, Sound Blaster, Sound Blaster Pro, Pro Audio Spectrum 16, Gravis Ultrasound, Monster Sound PCI, the whole Aureal/Sensaura/EAX era, and finally today, where the majority of gamers do well enough relying on integrated motherboard audio.

Early on, the PC speaker was only capable of very simple beeps and tones in-game (except for the rare title with speech synthesis produced purely via the CPU). Creative's Sound Blaster brought us into the modern era with 8-bit, 22 kHz, monaural audio. Next came the SB Pro with stereo audio, and then came the PAS16, which brought CD-quality audio (and a beefy amplifier, actually) to the mainstream PC.

It was finally the Gravis Ultrasound that brought wavetable MIDI synthesis to the masses. With each product generation, new technology brought measurable and noticeable benefits to consumers. Things sounded better. By the end of the DOS era, though, you were no longer seeing the dramatic quality improvements of earlier years.

The introduction of 3D and surround audio in PCs brought a brief but intense era of competition where Aureal, Sensaura, and Creative Labs/EAX fought for dominance. But with today's powerful CPUs, offloading the audio workload is no longer a major issue.

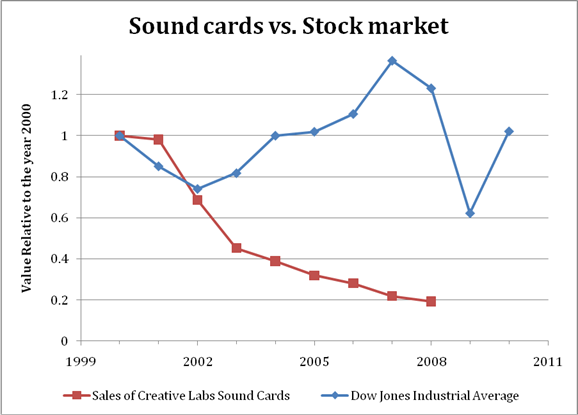

The percentage of total computational power required for audio processing is negligible, and when it comes to quality itself, most sound cards are good enough. Though there is a small market for ASIO-capable professional recording devices, the facts speak for themselves. Sales of Creative Labs sound cards in 2008 were 12% lower than those in 2007, sales in 2007 were 22% lower than those in 2006, sales in 2006 were 12% lower than 2005, sales in 2005 were 18% lower than 2004, sales in 2004 were 14% lower than those in 2003, sales in 2003 were 34% lower than 2002, and sales in 2002 were 30% lower than 2001, which itself was 2% lower than 2000.

Do the math and you realize that from 2000 to 2007, the sound card market collapsed by roughly 80% in just seven years. The recent stock market crash? That was only a 53% drop during the worst of it, and a 25% drop at current values.
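The compounding behind that figure can be checked with a short calculation. The sketch below assumes the year-over-year percentages quoted above all describe the same sales measure, and it normalizes sales in 2000 to 1.0. The names `yoy_decline` and `cumulative_decline` are illustrative, not taken from any source.

```python
# Year-over-year declines in Creative Labs sound card sales, as quoted in the
# text above. Each value is the fractional drop relative to the previous year.
yoy_decline = {
    2001: 0.02,
    2002: 0.30,
    2003: 0.34,
    2004: 0.14,
    2005: 0.18,
    2006: 0.12,
    2007: 0.22,
    2008: 0.12,
}


def cumulative_decline(declines, start_year, end_year):
    """Return the total fractional drop from start_year to end_year.

    Sales in start_year are normalized to 1.0; each later year's sales are
    the previous year's sales times (1 - that year's decline).
    """
    remaining = 1.0
    for year in range(start_year + 1, end_year + 1):
        remaining *= 1.0 - declines[year]
    return 1.0 - remaining


for end in (2007, 2008):
    drop = cumulative_decline(yoy_decline, 2000, end)
    print(f"2000 to {end}: {drop:.1%} cumulative decline")
```

Under those assumptions, the drop works out to about 78% through 2007 and about 81% through 2008, so "80%" is a fair round figure either way.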

Audiophiles can argue over the subtle nuances that one DAC has over another in terms of musicality or noise floor, and point to the increasing popularity of HDD-based music servers as proof that PC audio has the potential to offer reference-quality audio. While I don't disagree, the majority of games and consumer applications already sound great with today's sound cards because the software isn't designed to take advantage of high-resolution audio.

No game developer is going to take the time and money to properly record, mix, master, and distribute a soundtrack in 24-bit/96 kHz. In fact, many Hollywood films today are done at 24-bit/48 kHz. For the software developer, the money is better spent on adding new levels or artwork to a game, and the computational horsepower better put into graphics or physics.

Gamers who want better audio from their computer are better off spending an extra $100 on speakers than on a sound card. This is because speaker technology advances more slowly than the rest of the technology industry. My DeLorean-era Magnepan MG-IIIs, powered by a pair of Adcom GFA-555 IIs, are still commandingly better than anything you can buy at Best Buy (including what’s available in Magnolia AV mini-stores).

When it comes to PC audio, my recommendation really hasn't changed since 1999: get a Klipsch ProMedia setup. Those speakers paired with integrated motherboard audio give you the sweet spot in performance-per-dollar, whether you're talking games or music. If you're looking for something better than that, skip the computer audio market altogether and go with a dedicated receiver and home theater/hi-fi speakers.

The sound card market died once:

- the technology reached the point of diminishing returns for both the consumer and content creator

- advances in semiconductor technology allowed that level of "inflection point" performance to reach a negligible commodity cost

anamaniac, quoting Alan Dang: "And games will look pretty sweet, too. At least, that’s the way I see it."

After several pages of technology mumbo jumbo jargon, that was a perfect closing statement. =)

Wicked article Alan. Sounds like you've had an interesting last decade indeed.

I'm hoping we all get to see another decade of constant change and improvement to technology as we know it.

Also interesting is that, although you almost seemed to be attacking every company, you still managed to remain neutral.

Everyone has benefits and flaws, nice to see you mentioned them both for everybody.

Here's to another 10 years of success, everyone!

-

"Simply put, software development has not been moving as fast as hardware growth. While hardware manufacturers have to make faster and faster products to stay in business, software developers have to sell more and more games."

Hardware is moving so fast and game developers just can't keep pace with it.

-

Ikke_Niels: What I miss in the article is the following (well, it's partly told):

I have long suspected that video cards are going to surpass CPUs.

You already see it at the moment: video cards get cheaper, while CPUs keep getting pricier for the relative performance.

In the past, I had a problem when upgrading my video card: it pushed my CPU to the limit, so I couldn't use the full potential of the video card.

In my view we're at that point again: you buy a system, and if you upgrade your video card after a year or a year and a half, you're most likely pushing your CPU to the limit, at least in the high-end part of the market.

Of course, in the lower regions these problems are smaller, but still, it "might" happen sooner than we think, especially if the Nvidia design is as astonishing as they say and, at the same time, the major development of CPUs slowly breaks up.

-

lashton: One of the most interesting and informative articles from Tom's Hardware. What about another story about the smaller players, like Intel Atom and VLIW chips and so on?

-

JeanLuc: Out of all three companies, Nvidia is the one that's facing the most threats. It may have a lead in the GPGPU arena, but that's rather a niche market compared to consumer entertainment, wouldn't you say? Nvidia is also facing problems at the low end of the market, with Intel now supplying integrated video on its CPUs, which makes low-end video cards practically redundant, and no doubt AMD will be supplying a similar product with Fusion at some point in the near future.

-

jontseng, quoting the article: "This means that we haven’t reached the plateau in 'subjective experience' either. Newer and more powerful GPUs will continue to be produced as software titles with more complex graphics are created. Only when this plateau is reached will sales of dedicated graphics chips begin to decline."

I'm surprised that you've completely missed the console factor.

The reason why devs are not coding newer and more powerful games is nothing to do with budgetary constraints or lack thereof. It is because they are coding for an XBox360 / PS3 baseline hardware spec that is stuck somewhere in the GeForce 7800 era. Remember only 13% of COD:MW2 units were PC (and probably less as a % sales given PC ASPs are lower).

So your logic is flawed, or rather you have the wrong end of the stick. Because software titles with more complex graphics are not being created (because of the console baseline), newer and more powerful GPUs will not continue to be produced.

Or to put it in more practical terms, because the most graphically demanding title you can possibly get is now three years old (Crysis), then NVidia has been happy to churn out G92 respins based on a 2006 spec.

Until the next generation of consoles comes through, there is zero commercial incentive for a developer to build a AAA title which exploits the 13% of the market that has PCs (or the even smaller bit of that which has a modern graphics card). Which means you don't get phat new GPUs, QED.

And the problem is the console cycle seems to be elongating...

J

-

Swindez95: I agree with jontseng above ^. I've already made a point of this a couple of times. We will not see an increase in graphics intensity until the next generation of consoles comes out, simply because consoles are where the majority of game sales are. And as stated above, developers are simply coding games and graphics for much older and less powerful hardware than the PC currently has available, because these last-generation consoles are still the most popular venue for consumers.