Graphics Overclocking: Getting The Most From Your GPU


Graphics Chips And Our Test Setup

MSI’s GeForce GTX 260 Lightning is factory-overclocked, with higher clock speeds for both the GPU and the shaders. Where Nvidia’s reference design calls for 576 MHz (GPU) and 1242 MHz (shaders), this card runs at 655 MHz and 1404 MHz, respectively. With 1792 MB of memory, it also offers twice the capacity of standard GTX 260 cards. Because the factory-overclocked settings are stored in the card’s BIOS, we treated them as the “standard” settings during testing. Any overclocking achieved here is therefore a further improvement over these already elevated clock speeds.
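To put the factory overclock in perspective, the increase over Nvidia's reference clocks is (factory - reference) / reference. The short Python sketch below uses only the clock figures quoted above; the helper name `overclock_percent` is our own, chosen for illustration.

```python
def overclock_percent(reference_mhz: float, actual_mhz: float) -> float:
    """Return how far actual_mhz exceeds reference_mhz, in percent."""
    return (actual_mhz - reference_mhz) / reference_mhz * 100.0


# (Nvidia reference clock, MSI factory clock) in MHz, taken from the text above
clocks = {
    "GPU": (576, 655),
    "Shaders": (1242, 1404),
}

for domain, (reference, factory) in clocks.items():
    gain = overclock_percent(reference, factory)
    print(f"{domain}: {factory} MHz vs. {reference} MHz = +{gain:.1f}%")
```

Run as-is, it reports about +13.7% for the GPU and +13.0% for the shaders, which is the head start the card has before any manual overclocking.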

| Product Name | Chip | GPU Frequency | Memory Size | Memory Type | Memory Frequency |
|---|---|---|---|---|---|
| MSI N260GTX Lightning | GTX 260 | 655 MHz | 1792 MB | GDDR3 | 2 x 999 MHz |
| MSI R4870-MD1G | HD 4870 | 750 MHz | 1024 MB | GDDR5 | 4 x 900 MHz |
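The Memory Frequency column uses an "N x clock" notation. Assuming N is the number of data transfers per memory clock (two for GDDR3, and four as quoted here for GDDR5), the effective data rate is simply the product. A minimal sketch with illustrative names follows; note that it gives the data rate only, since peak bandwidth also depends on the memory bus width, which the table does not list.

```python
# Memory clocks as listed in the table: (transfers per clock, clock in MHz).
# Assumption: the "N x" prefix means N data transfers per memory clock.
memory = {
    "MSI N260GTX Lightning (GDDR3)": (2, 999),
    "MSI R4870-MD1G (GDDR5)": (4, 900),
}


def effective_rate_mts(transfers_per_clock: int, clock_mhz: float) -> float:
    """Effective data rate in megatransfers per second (MT/s)."""
    return transfers_per_clock * clock_mhz


for card, (multiplier, clock) in memory.items():
    rate = effective_rate_mts(multiplier, clock)
    print(f"{card}: {multiplier} x {clock} MHz = {rate:.0f} MT/s")
```

This yields 1998 MT/s for the GTX 260 card and 3600 MT/s for the HD 4870 card.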

| Nvidia and ATI Graphics Cards | |

|---|---|

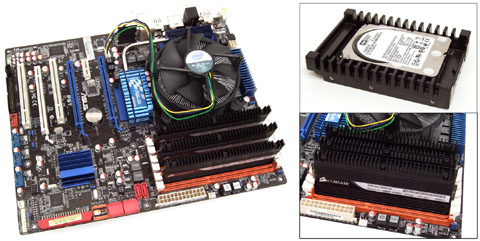

| CPU | Intel Core i7-920 @ 3.8 GHz (20x190), BIOS 1.2625 Volt, 45 nm, Socket 1366 LGA |

| Motherboard | Asus P6T, PCIe 2.0, ICH10R, 3-Way SLI |

| Chipset | Intel X58 |

| Memory | Corsair, 3 x 2 GB DDR3, TR3X6G1600C8D, 2x570 MHz 8-8-8-20 |

| Audio | Realtek ALC1200 |

| LAN | Realtek RTL8111C |

| HDDs | SATA, Western Digital, Raptor WD300HLFS, WD5000AAKS |

| DVD | Gigabyte GO-D1600C |

| Power Supply | Cooler Master RS-850-EMBA 850 Watts |

| Drivers & Configuration | |

| Graphics Drivers | MSI Driver 182.06, Nvidia GeForce 186.18, MSI Driver 8.542, ATI Catalyst 9.6 |

| Operating System | Windows Vista Ultimate 32 Bit, SP1 |

| DirectX | 9, 10 and 10.1 |

| Platform Driver | Intel 9.1.0.1007 |
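The CPU and memory entries above follow from the platform's base clock (BCLK): the CPU runs at multiplier x BCLK (20 x 190 MHz), and the DDR3 listed as "2 x 570 MHz" transfers twice per clock. The sketch below reproduces those figures; the variable names are ours, and the memory-multiplier line reflects our assumption that the X58 derives the DDR3 data rate from BCLK times a memory multiplier.

```python
BCLK_MHZ = 190        # base clock, from the CPU row "20 x 190 MHz"
CPU_MULTIPLIER = 20   # CPU multiplier, from the same row

cpu_clock_mhz = CPU_MULTIPLIER * BCLK_MHZ
print(f"CPU clock: {cpu_clock_mhz} MHz")  # 3800 MHz = 3.8 GHz

# Memory row "2 x 570 MHz": DDR3 moves two transfers per clock.
MEM_CLOCK_MHZ = 570
mem_rate_mts = 2 * MEM_CLOCK_MHZ

# Assumption: the DDR3 data rate is BCLK times a memory multiplier.
mem_multiplier = mem_rate_mts / BCLK_MHZ
print(f"Memory: {mem_rate_mts} MT/s = {mem_multiplier:g} x BCLK")
```

The result, 1140 MT/s, shows the DDR3-1600 kit running below its rated speed at this base clock.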

Power consumption is measured in Watts at the wall socket and reflects the entire system (without the monitor).


dingumf What the hell? I thought this was a guide to overclocking the GPU, as the title reads "Graphics Overclocking: Getting The Most From Your GPU".

Then at the end, Tom's Hardware screws me over and writes "Conclusion: It’s A Tie".

Isn't this a tutorial?

They tell you how to overclock using CCC, RivaTuner, or EVGA Precision. They also tell you that overclocking means more performance at the cost of more power. What else do you want?

dingumf, quoting joeman42:

"What is really needed is a 'continuous' OC utility that can detect artifacts during actual use and adjust accordingly. I've noticed that my max OC tends to change each time I test and depending on the tool I test with (e.g., ATITool, GPUTool, RivaTuner, and my favorite, ATI Tray Tools). Some games, L4D in particular, crash at the slightest error. Others, such as COD and Dead Space, are somewhat tolerant. Games like Far Cry 2 and F.E.A.R. 2 don't seem to care at all. It would be nice if the utility could take this into account.

As for the tools themselves, ATI Tray Tools has far and away the best fan speed adjuster; the dual-ladder temp/speed control is a model of simplicity. Plus, it can automatically detect a game and overclock just for the duration. Nothing like this exists on the Nvidia side (you must explicitly specify each .exe). Unfortunately, I am on an Nvidia card now, and RivaTuner is pretty much the only game in town for serious tweaking. IT IS A DESIGN DISASTER! Random design with no discernible structure. A help file that consists solely of the author bragging about his creation, without explaining where each feature is implemented or how to use it. And no, scattered tooltips are not an acceptable alternative. It took forever to figure out that I needed to create a fan profile, then a macro, and then a rule to fire the macro containing the fan profile, just to set one(!) fan speed/temperature point (and repeat as needed). Sorry for the rant, but I really hate RivaTuner!"

Oh hello. That's what OCCT is for.

nitrium RivaTuner works just fine with the latest drivers (including 190.38). Just open the Power User tab and, under System, set Force Driver Version to 19038 (or, in the article's case, 18618), with no decimal point. Make sure the hexadecimal display option at the bottom is unchecked. All of RivaTuner's usual features can then be accessed.
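The value nitrium describes is just the driver version with its decimal point removed. The Python sketch below is illustrative only: `force_driver_version` is a hypothetical helper, not part of RivaTuner, and it assumes the usual "NNN.NN" ForceWare version format. It also prints the hexadecimal form of each value, on our assumption that the unchecked hexadecimal display is what makes the field read the entry as a decimal number.

```python
def force_driver_version(driver_version: str) -> int:
    """Turn a ForceWare version such as '190.38' into the number typed into
    RivaTuner's Force Driver Version field: same digits, no decimal point.
    Hypothetical helper; assumes the 'NNN.NN' version format."""
    major, minor = driver_version.split(".")
    return int(major + minor)


for version in ("190.38", "186.18"):
    value = force_driver_version(version)
    # Assumption: if the field were in hexadecimal mode, typing these digits
    # would denote a different number, so the decimal value is what matters.
    print(f"{version} -> type {value} (decimal); in hex that is 0x{value:X}")
```

For the two versions mentioned, this gives 19038 (0x4A5E) and 18618 (0x48BA).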

masterjaw I don't think this is intended to be an in-depth tutorial, as dingumf perceives it. It's just meant to show people that they can still get more out of their GPUs using these tools.

On the other hand, I don't like the sound of "It's a tie." It looks like it was said just to show neutrality. ATI or Nvidia? It doesn't matter, as long as you're satisfied with it.

quantumrand I must say, the HD 2900 is a great card. I picked up the 2900 Pro for $250 back in 2007 and flashed it with a modified XT BIOS with slightly higher clocks (850/1000). The memory is only GDDR3, but with the 512-bit interface, it really does rival the bandwidth of the 4870. I can run Crysis at Very High, 1440x900, with moderately playable frame rates (about 25 fps, but the motion blur makes it seem quite smooth). Really quite amazing for any 2007 card, let alone one for $250.
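The bandwidth claim can be checked with the usual peak-bandwidth arithmetic: bus width in bytes times effective transfers per second. The sketch below assumes GDDR3 at 1000 MHz moves two transfers per clock, takes the HD 4870's 4 x 900 MHz GDDR5 figure from the article's table, and uses the HD 4870's 256-bit bus, which is a published spec rather than something stated on this page. The helper name is ours.

```python
def bandwidth_gb_s(bus_width_bits: int, data_rate_mts: float) -> float:
    """Peak memory bandwidth in GB/s: bytes per transfer x megatransfers/s / 1000."""
    return bus_width_bits / 8 * data_rate_mts / 1000


# HD 2900 Pro on the modified XT BIOS: 512-bit bus, GDDR3 at 1000 MHz (2 transfers/clock)
hd2900 = bandwidth_gb_s(512, 2 * 1000)

# HD 4870: GDDR5 at 4 x 900 MHz (from the table); 256-bit bus (published spec)
hd4870 = bandwidth_gb_s(256, 4 * 900)

print(f"HD 2900 @ 1000 MHz: {hd2900:.1f} GB/s")
print(f"HD 4870:            {hd4870:.1f} GB/s")
```

Under these assumptions, the flashed 2900 comes out at 128.0 GB/s versus 115.2 GB/s for the 4870, which supports the comment: the wider bus makes up for the slower GDDR3.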

quantumrand Just a bit of extra info on the 2900 Pro...

The Pros were basically binned XTs. Once ATI realized the card was too difficult to manufacture cheaply (something about the high layer count it takes to make a 512-bit PCB), they clocked their excess cores lower and branded them as Pros in order to sell them. They also changed the heatsink specs, adding an extra heatpipe. Because of this, the Pros could often overclock higher than the XTs, making them essentially the best deal on the market (assuming you got a decent core).

Ramar Overclocking a GPU generally isn't worth it, IMO, but sometimes it can give you that extra push past a 60 fps average. Or it can make you feel better about a purchase, as it did for me: one week after I bought a 9800 GTX, they came out with the GTX+. A little tweaking in EVGA Precision brought an impressive 10% overclock, up to GTX+ levels, and left me satisfied.

manitoublack I run two Palit GTX 295s and have had great success with Palit's "Vtune" overclocking software. I believe it works with cards from other vendors as well. Easy to use and driver-independent.

Cheers for the great article.

Jordan

KT_WASP, quoting the article: "Conclusion: It’s A Tie"

I didn't know they were in competition until I read that... I too thought this was about overclocking a GPU in general, not about which card you should buy. Once again, Tom's throws in that little barb at the end to stoke the fires.

I think they do this constantly to get more website hits. If they can get a good ol' fanboy war going on every article, people will keep coming back over and over again to add fuel to the fire. After all, the more hits they get, the more they get paid by their sponsors, who, BTW, seem to be taking up more real estate than actual content on this site these days.