Is Server Virtualization The New Clustering?

Local Clusters

Clustering isn't only for Internet-based disaster recovery (DR). It can happen within the confines of a data center as well. This second method relies on the portability of virtual machines and the ability to replicate them and, for example, bring up a new instance of Windows Server 2008 in a few minutes. That is useful when you need to provide additional capacity for an overloaded server or to take over from a server that is about to go offline for a planned upgrade. For these situations, the cloud isn't necessary (although you still might want to have a backup virtual server hosted off premises).

This more local form of clustering can be useful if you have a server farm with a dozen machines all delivering a Web application. If an enterprise has designed things for peak load performance, then there are going to be plenty of other times when many of these machines are doing little or no work. The ideal solution allows you to spin up new instances of application servers, or spin down existing ones, when these loads change, letting you match a particular service delivery metric and keep the costs of power and cooling to a minimum as well.
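As a rough illustration of that spin-up and spin-down decision, the sketch below works out how many application-server instances a given load calls for. It is not taken from any product mentioned in this article. The function name, the per-instance capacity figure, the 25 percent headroom, and the cap of 12 instances (matching the dozen-machine farm above) are all assumptions made for the example.

```python
import math


def instances_needed(current_load, capacity_per_instance,
                     min_instances=1, max_instances=12, headroom=0.25):
    """Return how many application-server instances should be running.

    All parameters are hypothetical: current_load and capacity_per_instance
    share one unit (for example, requests per second), and headroom is the
    spare capacity kept in reserve as a fraction of the current load.
    """
    if capacity_per_instance <= 0:
        raise ValueError("capacity_per_instance must be positive")

    # Size for the current load plus the reserve, then round up to whole servers.
    target_load = current_load * (1 + headroom)
    wanted = math.ceil(target_load / capacity_per_instance)

    # Never drop below the minimum or exceed the size of the farm.
    return max(min_instances, min(max_instances, wanted))


if __name__ == "__main__":
    # Quiet period versus peak period, assuming 100 requests/s per instance.
    for load in (50, 400, 5000):
        print(load, "->", instances_needed(load, 100), "instances")
```

A management tool would compare this number with the count of running virtual machines and start or stop instances to close the gap. Powering down the idle physical hosts is where the power and cooling savings come from.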

One of the issues with earlier custom clustering solutions is that they required identical hardware and operating system versions for each physical machine that was part of the cluster. Virtualized servers are more forgiving and flexible, not to mention less expensive. Microsoft's Hyper-V R2, for example, now supports the ability to migrate a running virtual server to a new physical host, even one that has a different processor version from the same vendor, such as moving between two generations of Intel-based servers. Moving a running virtual server between an Intel-based host and an AMD-based host is not supported.

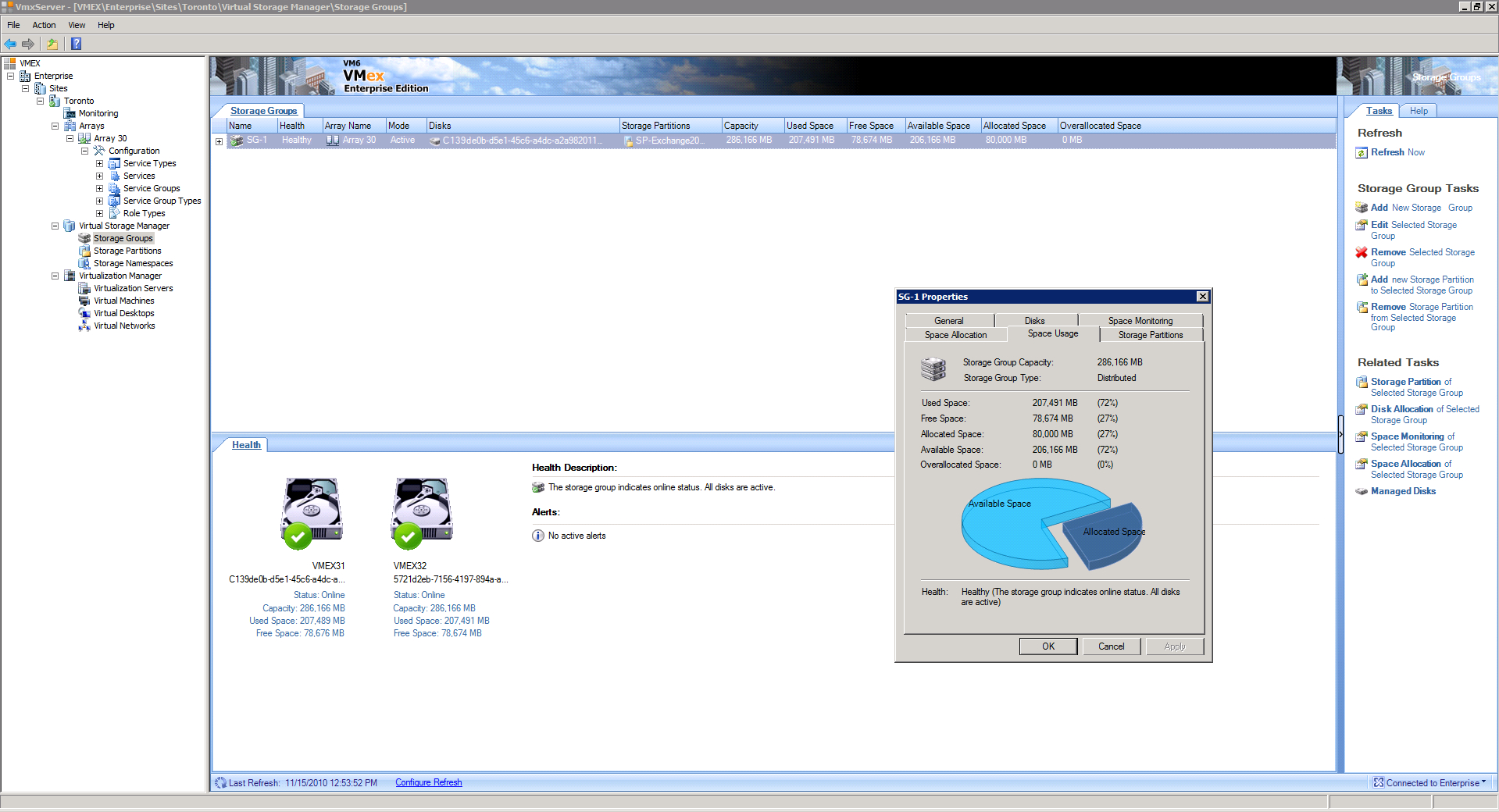

Another issue is that many of the older-style clusters required very high-speed links to tie the members of the cluster together. Virtualized solutions are less demanding on connection bandwidth and can make do with higher-latency connections, even across typical Internet lines. VM6 Software has a high-availability solution called VMex that runs on Hyper-V. It can turn two PCs into a high-availability cluster without the need for specialized hardware, even using ordinary Ethernet connections for less demanding applications. When the first Hyper-V machine fails, all the virtual machines are brought up on the second machine automatically.
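The two-machine failover described above can be sketched in a few lines. This is a simplified model and not VMex's actual mechanism, which the article does not detail. The `Host` class, the `fail_over` function, and the three-missed-heartbeats threshold are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Host:
    """A physical machine and the virtual machines it runs (hypothetical model)."""
    name: str
    vms: list = field(default_factory=list)
    alive: bool = True


def fail_over(primary, standby, missed_heartbeats, threshold=3):
    """Restart the primary's VMs on the standby after too many missed heartbeats.

    Returns the names of the VMs brought up on the standby, or an empty
    list if no failover took place.
    """
    # Do nothing while the primary still looks healthy or the standby is down.
    if missed_heartbeats < threshold or not standby.alive:
        return []

    # Declare the primary failed and bring every VM up on the standby.
    primary.alive = False
    moved = list(primary.vms)
    standby.vms.extend(moved)
    primary.vms.clear()
    return moved


if __name__ == "__main__":
    first = Host("hyperv-1", ["mail", "intranet"])
    second = Host("hyperv-2")
    print(fail_over(first, second, missed_heartbeats=3))
    print(second.vms)
```

In this model the virtual machines are restarted on the second host, not moved while running, so any work held only in memory on the failed host is gone.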

These solutions aren't appropriate for transaction processing applications where immediate failover is required to handle things like online payment processing or airline reservations. "There are still times when you need more precise clustering, such as when you can't afford to lose a single transaction and have to restart this transaction on the new machine after a failover," says Carl Drisko, an executive and data center evangelist at Novell. "If your virtual machine goes down, anything that is being processed in memory is going to be lost."

"If you are using some sort of transaction processing system where you need to preserve the state of the server, then you are going to need some special purpose clustering solution. But the majority of the applications in the data center don't need this level of granularity, and you can get by with what we call the poor man's high availability solution," says Ken Oestreich, director of product management at Cassatt, which makes automation tools for managing virtualized sessions. High-availability virtualization can still work well for these less demanding applications, such as enterprise email servers.


David Strom is the former editor-in-chief at Tom's Hardware and the founding editor-in-chief of Network Computing magazine. He has written thousands of articles for dozens of technical publications and websites, and written two books on computer networking.