Red Dead Redemption 2: How it Plays on Different Graphics Cards

Tested on everything from integrated graphics to high-end graphics cards.

A bit over a year after the original release, Rockstar surprised the PC gaming community by announcing and almost immediately releasing a PC port of Red Dead Redemption 2, the company's popular open-world western.

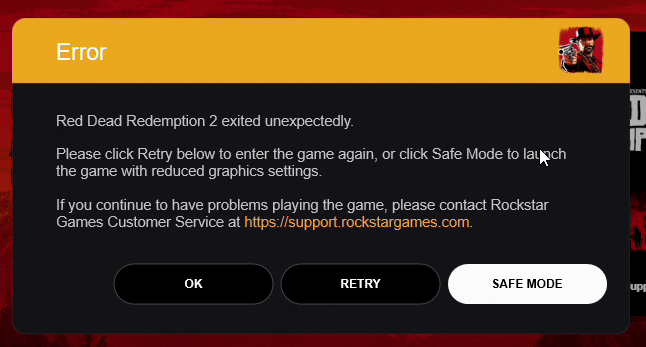

While the year-long development gap had many expecting a smooth PC experience, in practice the launch has seen mixed results, with plenty of users experiencing constant crashes and some others, myself included, unable to boot the game at all due to launcher errors. After days of troubleshooting, a patch from Rockstar, a BIOS update, a driver update and disabling cloud saves, I was able to get into Red Dead Redemption 2 to answer the question: How well does the game scale? What can you expect if you own one of the better integrated graphics solutions or a still-popular GPU from Nvidia's previous 10 series?

| Setup | Settings | Result |
|---|---|---|
| Ryzen 3200G / Vega 11 iGPU / 8 GB RAM | Lowest possible: 1080p/70% (1344x756) | 30 fps |
| Ryzen 3200G / GTX 1050 2 GB / 8 GB RAM | "Balanced" (GTX 1050 preset): 1080p | 30 fps |
| Ryzen 3200G / GTX 1050 2 GB / 8 GB RAM | "Highest Performance" (GTX 1050 preset): 1080p/50% (960x540) | 60 fps |
| Ryzen 3200G / GTX 1060 6 GB / 8 GB RAM | "Highest Performance" (GTX 1060 preset) + Ultra Textures: 1080p | 60 fps |
| Ryzen 3200G / GTX 1080 8 GB / 8 GB RAM | "Balanced" (GTX 1080 preset): 1080p | 60 fps |
| Ryzen 3200G / GTX 1080 8 GB / 8 GB RAM | "Balanced" (GTX 1080 preset) with lowest LOD distance, disabled long shadows, low water quality: 1080p/150% (2880x1620) | 55-60 fps |
| Ryzen 3200G / GTX 1080 8 GB / 8 GB RAM | "Highest Performance" (GTX 1080 preset): 3840x2160 | 46 fps |

Settings Screen

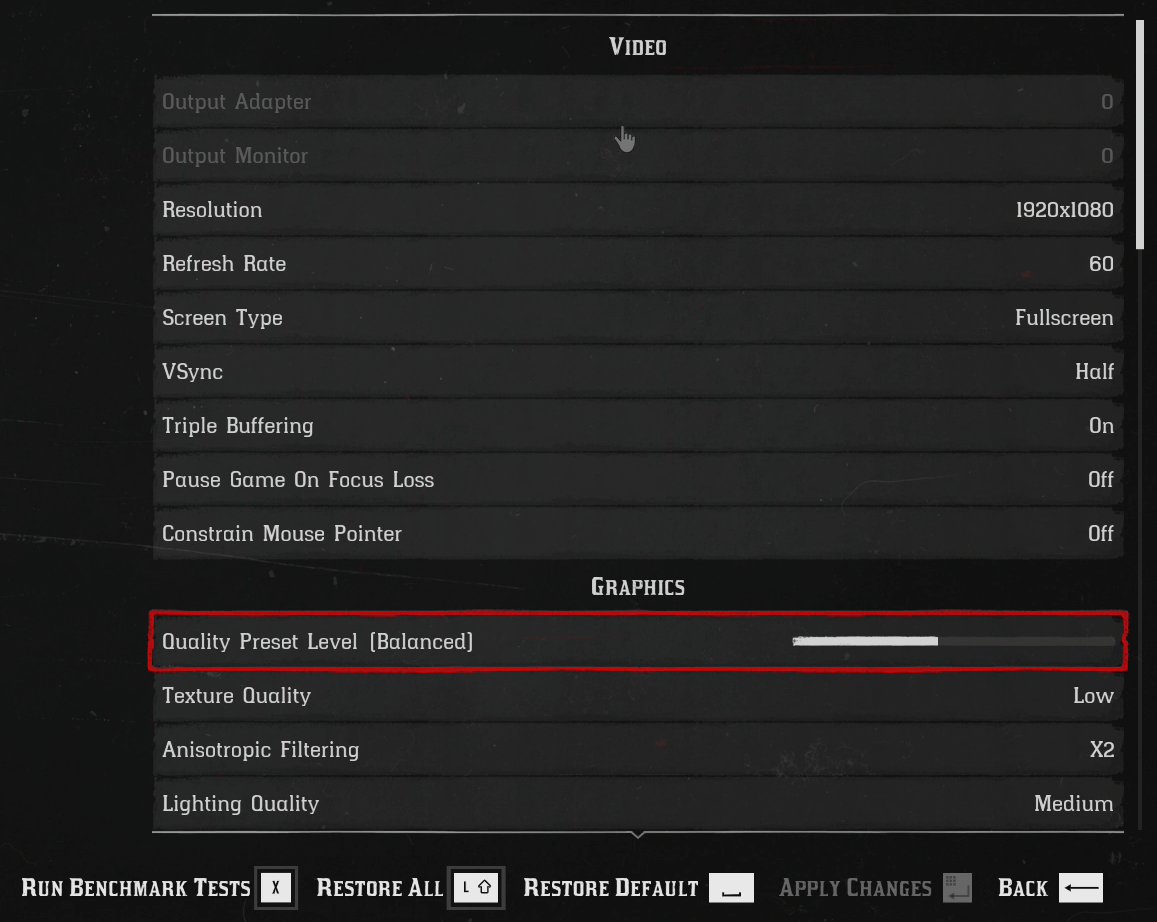

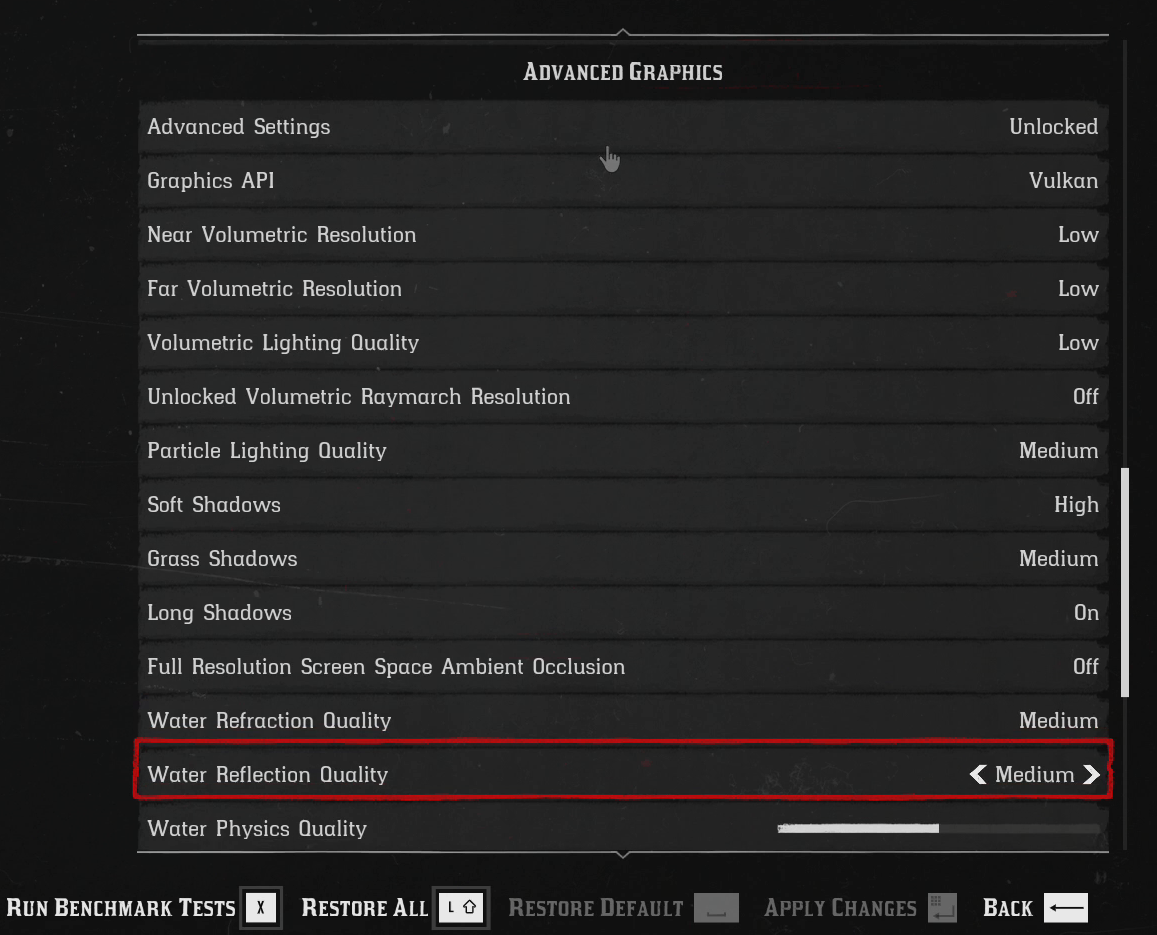

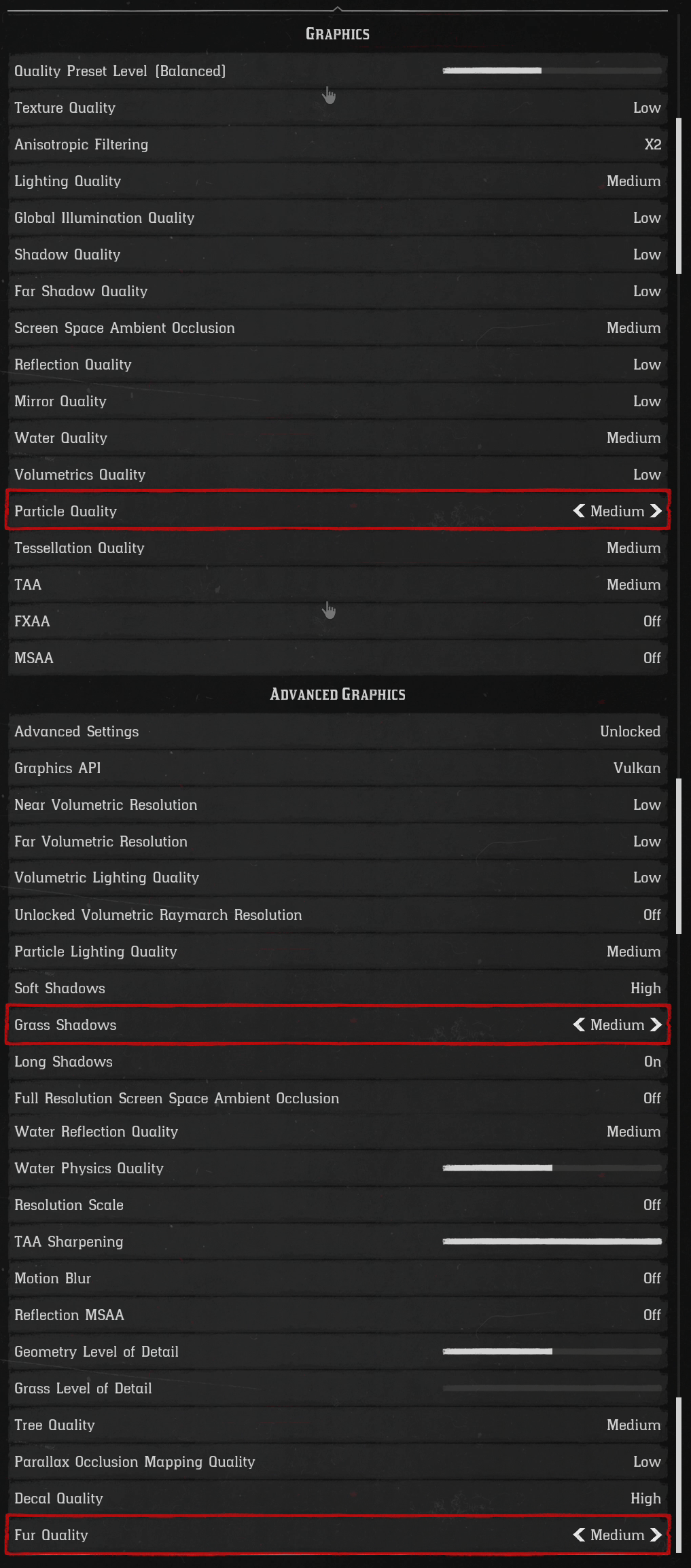

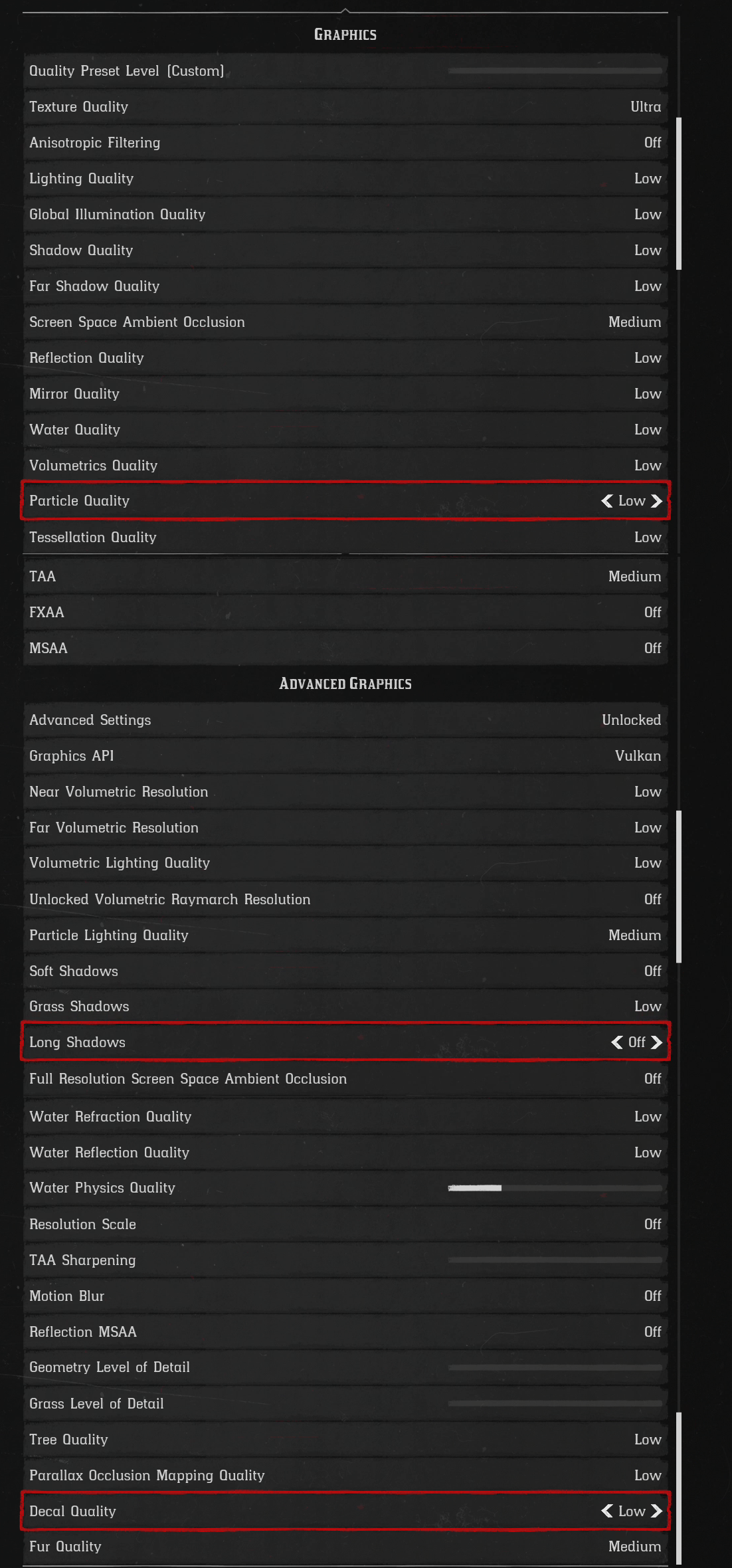

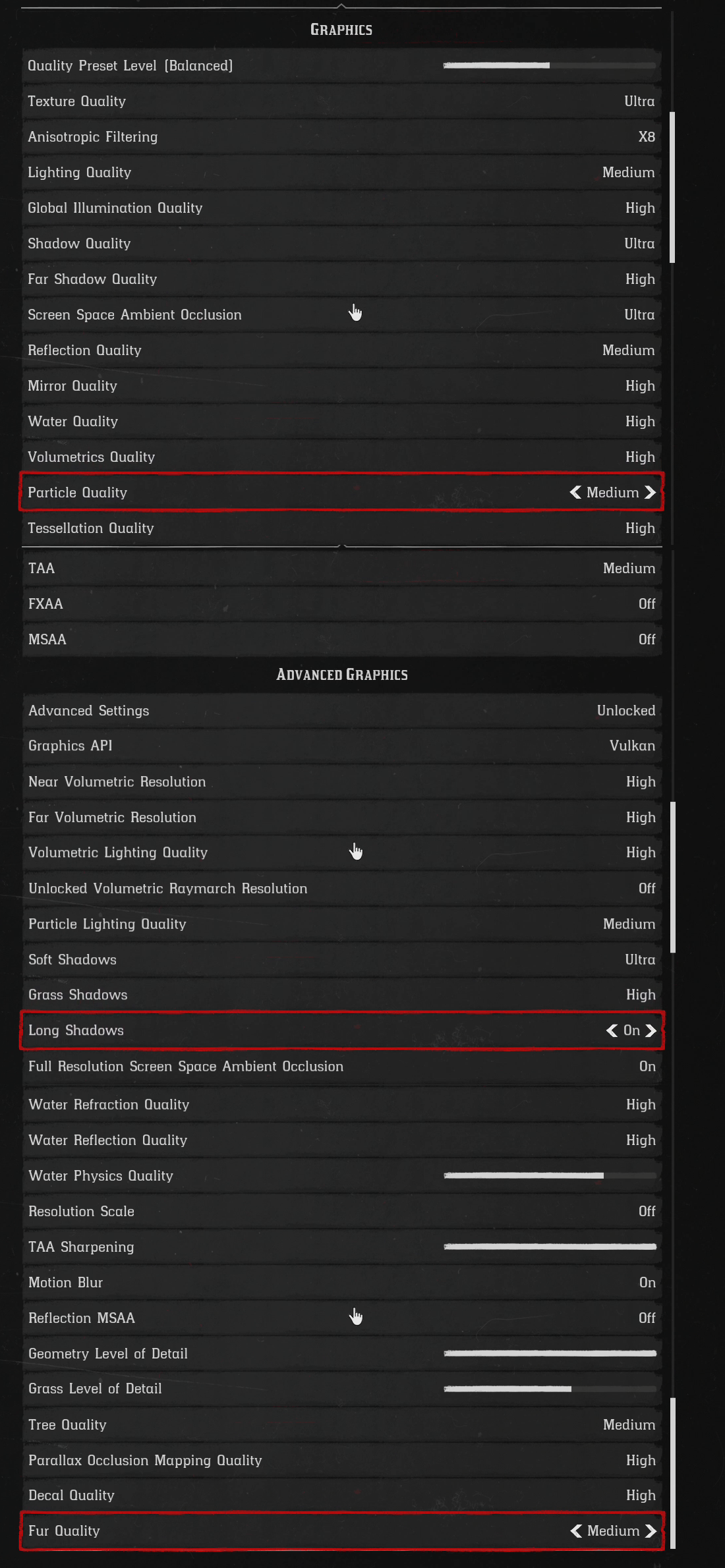

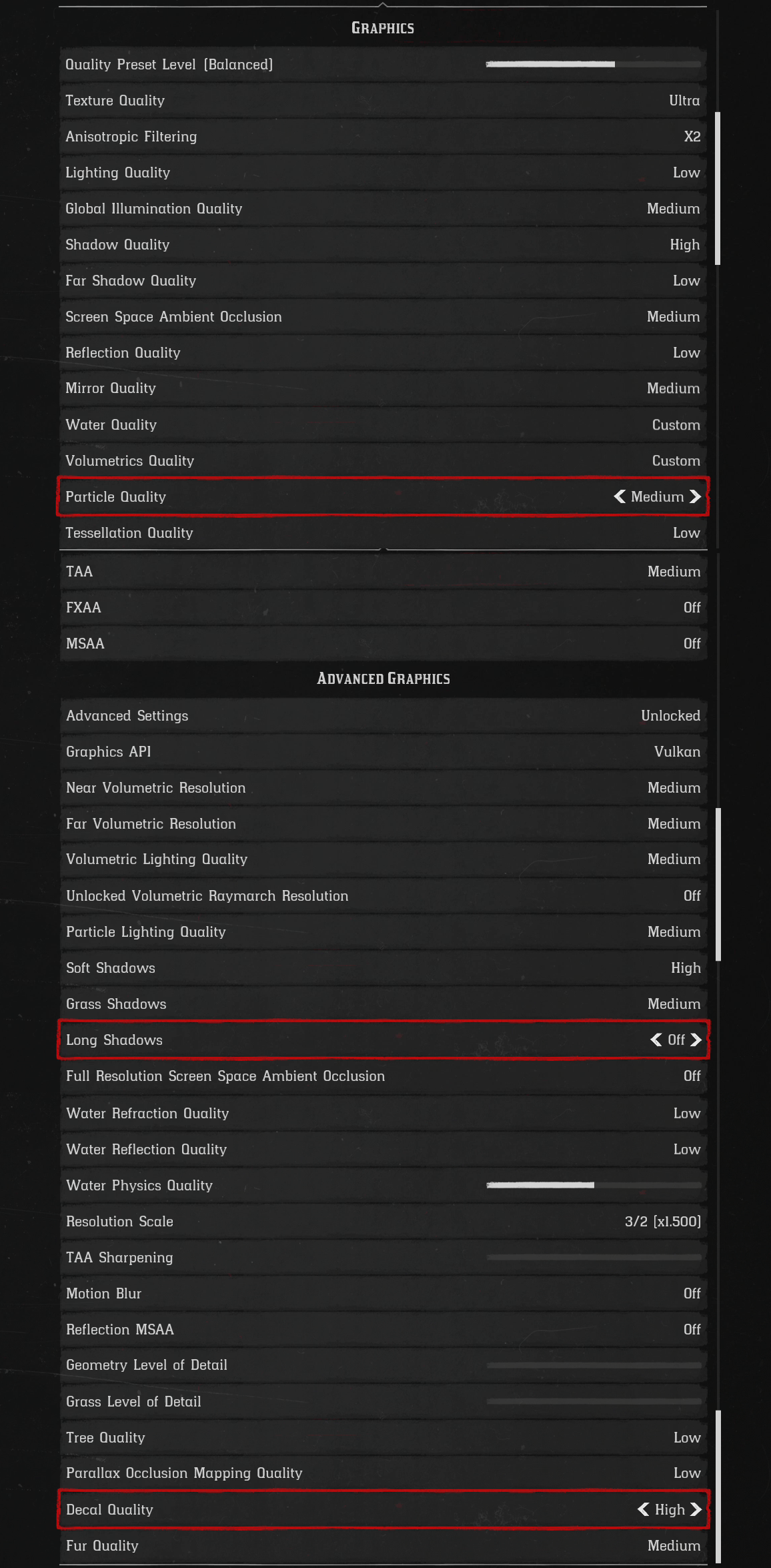

Red Dead Redemption 2 has one of the most extensive settings screens I have ever seen, with rows and rows of settings and toggles. Going into detail on each one is nearly impossible, but thankfully most of them are easy to read, either clearly labelled from low to ultra or presented as sliders.

Rather than traditional low, medium and high presets, this game has a slider that goes from favoring performance to favoring fidelity and automatically toggles the settings, often in unpredictable and non-obvious ways, to achieve the desired level.

The weird thing is that these presets are not the same for every GPU. Red Dead Redemption 2 seems to be pulling GPU-specific presets from somewhere and adjusting the game based on that. While this makes benchmarking different GPUs a bit of a nightmare, the feature works rather well if your GPU is supported and all you want to do is play at the best settings your GPU can handle without hassle. The bad news is that if your GPU is not supported, the results can be extremely counterproductive. More on that in a moment.

In the advanced options, you can select Vulkan or DirectX 12 as the rendering API; Vulkan seemed to provide the most stable results across the board. Also here is a "Resolution Scale" setting that renders the 3D elements at a fraction or a multiple of the full resolution and then upscales or downsamples the result back to the window resolution, so the UI stays at full resolution at all times.
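As a rough illustration of the resolution scale arithmetic, the sketch below computes the internal render resolution for a given window size and scale factor. The function name `render_resolution` is hypothetical, and rounding to the nearest pixel is an assumption; the game may round differently.

```python
def render_resolution(width: int, height: int, scale: float) -> tuple[int, int]:
    """Return the internal 3D render size for a window size and scale factor.

    Rounding to the nearest whole pixel is an assumption, not confirmed
    behaviour of the game.
    """
    return round(width * scale), round(height * scale)


# Scale factors used in this article, applied to a 1920 x 1080 window.
for scale in (0.5, 0.7, 1.5):
    w, h = render_resolution(1920, 1080, scale)
    print(f"{scale:.0%} of 1080p -> {w} x {h}")
```

Only the 3D scene is rendered at this size; the UI is still drawn at the window resolution.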

Built-in Benchmark

The game includes a pretty complete benchmark that runs the game through several scenarios, including a demanding snow section and a gameplay slice set in one of the game's heaviest towns. This seems to approximate actual game performance rather well, so I used this tool to measure and compare what each setup was able to achieve.

RDR2 on Integrated Vega 11 Graphics

We start our experiment with a Ryzen 3400G and its integrated Vega 11 graphics, paired with 8 GB of 3000 MHz DDR4 RAM. This is marketed as the most powerful integrated graphics solution currently on the PC market and, surprisingly, it can run Red Dead Redemption 2, but with some caveats.

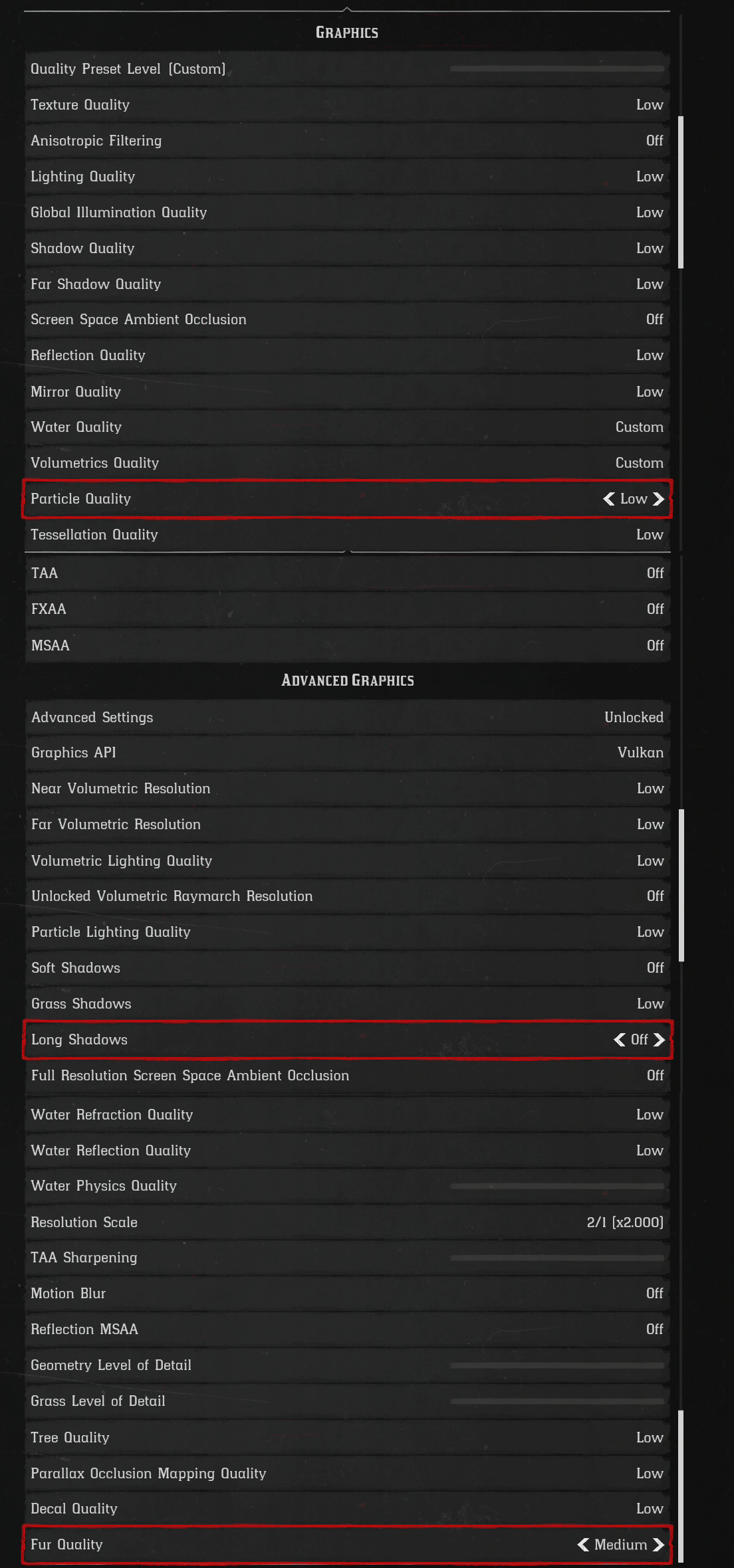

The first is that all settings have to be manually set to the lowest. The Vega 11 does not seem to be included in the GPU list for auto presets, so the game automatically sets everything to high or ultra, which is definitely beyond the capabilities of this device.

The second caveat is that, while 60 fps is not in the cards for this setup, a 30 fps lock is theoretically possible. This is usually achieved using the half vsync option in the menu at a 60 Hz refresh rate, but for some reason, the game fails to lock the fps for the Vega 11. All other GPUs I tried this on worked fine.
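The relationship between refresh rate and the half vsync cap can be sketched as simple arithmetic. This is a minimal illustration, assuming half vsync presents one new frame every second display refresh; the function names are hypothetical and not taken from the game.

```python
def vsync_cap_fps(refresh_hz: float, sync_interval: int) -> float:
    """Frame-rate cap when a new frame is shown every `sync_interval` refreshes."""
    return refresh_hz / sync_interval


def frame_budget_ms(fps: float) -> float:
    """Time available to render one frame, in milliseconds."""
    return 1000.0 / fps


cap = vsync_cap_fps(60, 2)  # half vsync on a 60 Hz display
print(f"cap: {cap:.0f} fps, budget: {frame_budget_ms(cap):.1f} ms per frame")
```

At a 30 fps cap the GPU has roughly twice as long to finish each frame as it does at 60 fps, which is why the lock is attractive for slower hardware.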

The third caveat is that using an integrated GPU means that some of the RAM will be used as VRAM, which effectively reduces the quantity of RAM available to the game. Red Dead Redemption 2 is designed for a minimum of 8GB and so our setup is prone to very occasional crashes when the game runs out of memory. Thankfully this is not frequent, but it is worth keeping an eye on.

With all settings manually reduced to the minimum and a 70% resolution scale from 1080p (1344 x 756), the game can do 30 fps. The lowest settings are not as drastic as in other games, with only the low-resolution textures being truly noticeable. Otherwise, given the circumstances, the game is quite tolerable and playable.

RDR2 on GTX 1050 Ti

Upgrading our RAM to 16 GB and adding a GTX 1050 Ti GPU improves matters a bit, but not as much as you would expect.

Full 1080p resolution is now possible as long as you lock to a console-like 30 fps. In terms of settings, the automatic tool keeps the same minimum settings for all presets labelled "performance," but the "balanced" preset is still sufficient for 30 fps, except in a couple of areas of the benchmark. In practice, this is a mixture of low and medium settings, with some specific ones such as soft shadows and decal quality on high.

This is visually acceptable but not impressive. It gets the job done if all you can access is an entry-level GPU.

60 fps regrettably requires a big sacrifice, dropping the render resolution to 50% (960 x 540) at the same graphical preset.

Red Dead Redemption 2 was not truly designed for sub-720p resolutions, and with anti-aliasing enabled the world becomes a difficult-to-read blur. At this level, I highly recommend sticking to 30 fps.

RDR2 on GTX 1060

Interestingly enough, the GTX 1060 6GB is Rockstar Games' recommended GPU for Red Dead Redemption 2. While there is always a great degree of interpretation as to what a publisher is aiming for as "recommended," in this case it seems to be the bare minimum needed to play 1080p/60 fps at performance-oriented settings.

Getting 1080p / 60 fps on a GTX 1060 card is only possible on the highest performance / lowest graphics preset of the automatic tool, which still maintains TAA anti-aliasing, Ambient Occlusion, Water Quality and decent shadows for this GPU. The good news is that the 6GB of VRAM allows for a manual adjustment of the Textures to Ultra.

At this preset, the game looks pretty decent, with the lowest settings only noticeable on very close inspection, such as in the details of far-away objects. This is one of those instances when the GPU-specific presets work pretty well at 1080p.

RDR2 on GTX 1080

This game seems to be designed with the future in mind, as even current-generation high-end GPUs have trouble maxing out its settings, so don't expect an ultra-high preset on a GTX 1080.

That said, with the middle "Balanced" preset, which assigns a mixture of Ultra and High settings with some strategic settings at medium, the GPU is perfectly able to do 60 fps at 1080p while looking stunning, with high-quality lighting (especially in the darker scenes) and detail at every distance. While not maxed, it does not disappoint visually.

I tried raising the resolution scale to 1.5 (2880 x 1620), which is a bit higher than 1440p. This is achievable by taking the same preset and removing long shadows, lowering the LOD scalers to the lowest and lowering water reflection and refraction quality, so basically sacrificing detail in the distance and in water.

The result is pretty good. There were still some drops toward 53 fps during the most intense scenes, but for the most part, the GTX 1080 can maintain a high level of graphical fidelity while working at higher resolutions in Red Dead Redemption 2.

You probably don't want to go to full 4K (3840 x 2160) with a GTX 1080 card. I manually dropped every setting to the lowest possible, which resulted in an average of 46 fps. Going back to the lowest graphics possible is quite a shock and not worth it when 60 fps is not even an achievable target. So consider going to 4K in Red Dead Redemption 2 only if you have one of the more powerful current-gen cards.
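To put these resolutions in perspective, the sketch below compares raw pixel counts against 1080p. Pixel count is only a rough proxy for GPU load, and the 4K figure assumes the 3840 x 2160 resolution listed in the results table; the names used here are illustrative.

```python
BASE = (1920, 1080)  # 1080p

# Render resolutions discussed for the GTX 1080.
targets = {
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
    "1080p at 150% scale": (2880, 1620),
    "4K UHD": (3840, 2160),
}


def pixel_ratio(res: tuple[int, int], base: tuple[int, int] = BASE) -> float:
    """How many times more pixels `res` has than `base`."""
    return (res[0] * res[1]) / (base[0] * base[1])


for name, res in targets.items():
    print(f"{name}: {res[0] * res[1]:,} pixels, {pixel_ratio(res):.2f}x 1080p")
```

The 150% scale pushes 2.25 times the pixels of 1080p, while 4K pushes four times as many, which is consistent with 4K being out of reach for this card even at the lowest settings.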

Bottom Line

Red Dead Redemption 2 is a game that offers an impressive array of graphics options. If you are the type of user that just wants to play the game, without spending hours experimenting with individual settings, the automatic preset tools work well, as long as your GPU is supported.

A GTX 1060 is the bare minimum for 1080p/60 fps in Red Dead Redemption 2, and the auto performance settings are decent-looking at that level. A GTX 1080 gets you a significant increase in visual fidelity and 60 fps at 1440p, but it is not powerful enough for 4K play.

At the entry level, a GTX 1050 can achieve 30 fps, but the game doesn't look spectacular. An integrated Vega 11 can do roughly 720p/30 fps on the lowest possible settings. This GPU is not supported, so it requires manual setting tweaking, as well as dealing with minor technical issues.

rolli59: Everything from IGP to 1080? Looks to me very few examples and single sided! Quality here is going down.

Blytz: Solid testing on all 4 of the only cards in existence, apparently amd don't make any discrete cards any more...

AndrewJacksonZA: Again, the same thing I said in my comment on the "How Call of Duty: Modern Warfare 2019 Plays on Different Graphics Cards" article:

I had higher hopes for this article than your previous one, Alex, but was disappointed. Just like last time, I opened the article to see how this would run on my RX470.

Please change the title to something like "How RDR2 Plays on Different Nvidia Graphics Cards, and on AMD APUs" as you most certainly did not "test on everything from integrated graphics to GTX 1080"

Another thumbs down.

Edit: Formatting.

Leafdrop: Yeah, there is the OnChip Gpu manufacturer and the other one who makes proper cards yeah? nvidia bribes everyone! No Rx 570 580 590 vega 56 vega 64 radeon 7 benchmarks? really? Swap the name to: "Nvidia Owns Tom and His hardware" Asap!

mitch074: Great - Tom's determines the RX570 as the best budget card - and it's nowhere to be found on an article that tests a high profile game on "every card". I won't even mention the rest of the slant here, many have done it before me.

Peter Maessen: any Ideas on what settings for two gtx 1080ti's?