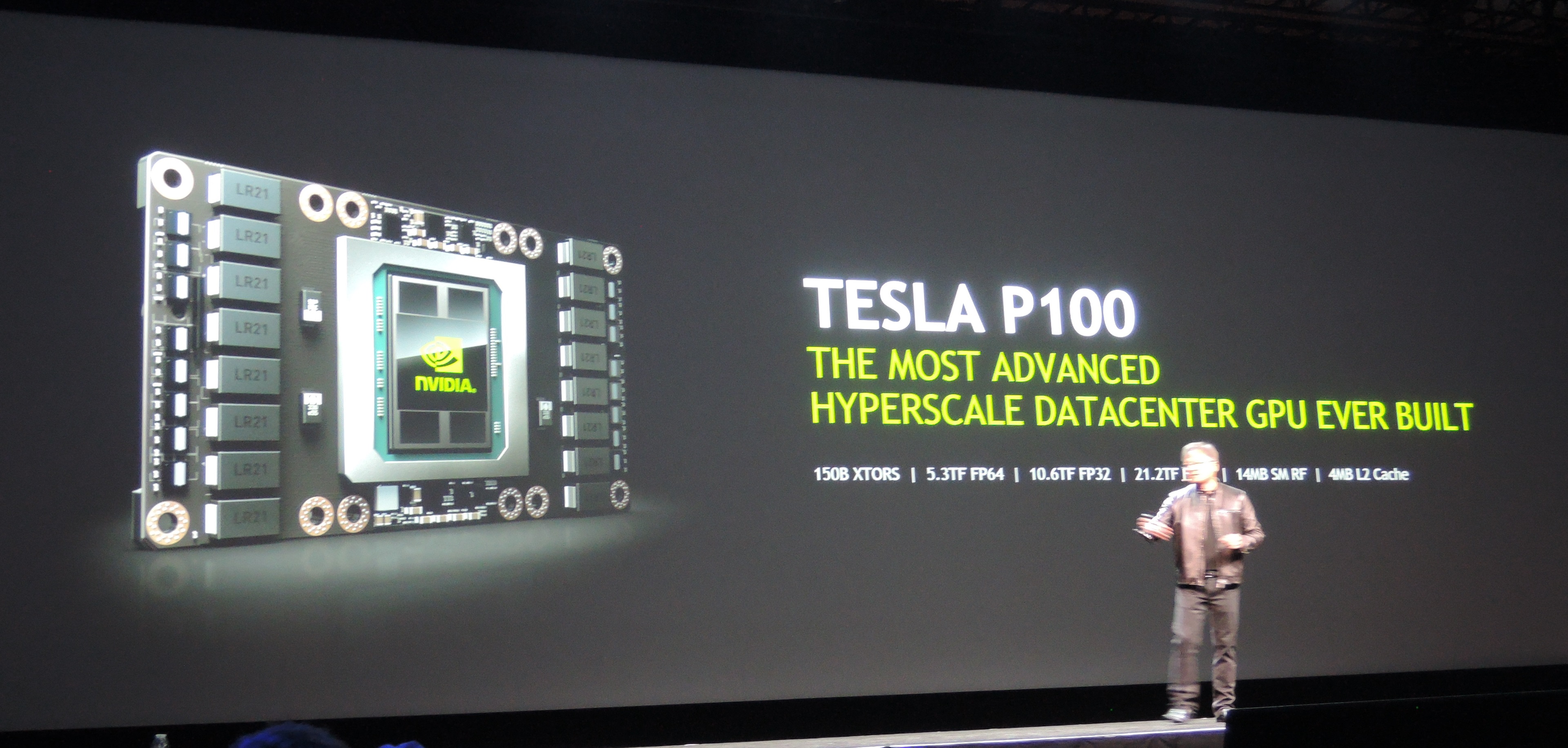

Nvidia Announces Pascal-Based Tesla P100 GPU With HBM2

Nvidia's new Pascal GPU is aimed at supercomputing, packing 15.3 billion transistors, 16nm FinFET technology and HBM2 memory. Meet the Tesla P100.

During its Tuesday keynote at GTC 2016, Nvidia announced the Tesla P100 GPU. Based on the new Pascal architecture and built on a 16nm FinFET process, it packs in 15.3 billion transistors, uses HBM2 memory, and delivers a substantial step up in performance. The die measures 600 mm² and connects to the memory over 4,000 wires (Maxwell has 384).

It also includes NVLink, a new interconnect capable of 160 GB/s. NVLink is what Nvidia will use to connect the graphics card to the system instead of PCI-Express, which would not offer enough bandwidth. Nvidia claimed a 5x performance improvement with its new interconnect.

The Tesla P100 has 16 GB of CoWoS (Chip on Wafer on Substrate) 3D-stacked HBM2 memory, with an enormous 720 GB/s memory bandwidth. It is a new unified memory architecture. The chip has 14 MB of shared memory register files with an aggregate throughput of 80 TB/s, along with 4 MB of L2 cache.

At FP64, the Tesla P100 pulls off 5.3 TF of performance, jumping to 10.6 TF at FP32. At FP16, that number becomes a staggering 21.2 TF.
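The peak-throughput figures quoted above (5.3, 10.6, and 21.2 TF) scale in a simple pattern as precision drops. A minimal Python sketch of that arithmetic follows; the dictionary and function names are illustrative only, and the numbers are just the ones from the announcement, not measured results.

```python
# Peak throughput figures quoted in the article, in teraflops (TF).
peak_tf = {"FP64": 5.3, "FP32": 10.6, "FP16": 21.2}


def ratios_to_fp64(figures):
    """Express each precision's peak throughput as a multiple of the FP64 figure."""
    base = figures["FP64"]
    return {name: round(value / base, 2) for name, value in figures.items()}


ratios = ratios_to_fp64(peak_tf)
for name, ratio in ratios.items():
    print(f"{name}: {peak_tf[name]} TF ({ratio}x the FP64 rate)")
```

On these figures, each halving of precision doubles the quoted peak rate.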

The Tesla P100 is in volume production today and will ship "soon." Jen-Hsun Huang said that really means it will show up in the cloud first, and ship via OEMs in Q1 2017.

Of course, as a consumer, you can't have this chip yet. That's no surprise. In the past, Nvidia has also introduced its new architectures and GPUs in scientific supercomputing before bringing them to the consumer market. Nvidia did not announce when we would see a consumer-oriented Pascal chip.

Follow Niels Broekhuijsen @NBroekhuijsen. Follow us @tomshardware, on Facebook and on Google+.

Niels Broekhuijsen is a Contributing Writer for Tom's Hardware US. He reviews cases, water cooling and pc builds.

gadgety Mindblowing numbers there. Finally MadVR will have a hardware platform to match its capabilities?

turkey3_scratch Is this like a Quadro replacement? I'm not so up-to-info on workstation/data center cards.

Snipergod87 Tesla P100.

All they need to do is add a "D" on the end, then you will have presumably the next Model S variant, the P100D.

I doubt this is a coincidence.

Matt_550 ReplyNo HBM 2 until Fall. No units until Q1 2017 (March?). Is nVidia really paper launching a card that is a year off?! Are they THAT desperate!?

Also:

FirePro S9300 X2 = 13.9 TFLOPS Single Precision @ 28nm + HBM 1 & 300w TDP

NVIDIA Tesla Pascal = 10.6TFLOPS Single Precision @ 14nm + HBM 2 & 300w TDP

I guess Pascal really is Maxwell v1.1.

That's a dual GPU card, I'd really hope it would beat a single GPU card. Though not by much at all and after it's single precision the S9300 X2 is a joke. Double precision of 868GFLOPS, embarrassing. -

ragenalien ReplyNo HBM 2 until Fall. No units until Q1 2017 (March?). Is nVidia really paper launching a card that is a year off?! Are they THAT desperate!?

Also:

FirePro S9300 X2 = 13.9 TFLOPS Single Precision @ 28nm + HBM 1 & 300w TDP

NVIDIA Tesla Pascal = 10.6TFLOPS Single Precision @ 14nm + HBM 2 & 300w TDP

I guess Pascal really is Maxwell v1.1.

For one please note that the TDP for the new pascal card was not revealed and pascal is on 16nm FinFets not 14nm. Secondly, and this is probably more of something you should try and pay attention to in the beginning, the FirePro S9300 X2 is two Fiji GPU's on one board. Since their clock speed is roughly the same as their singular counterparts that gives a single one 6.95 TFLOPS of single precision performance. Given that 10.6 is SIGNIFICANTLY faster than that I think this should be a wonderful card. -

viewtyjoe ReplyThat's a dual GPU card, I'd really hope it would beat a single GPU card. Though not by much at all and after it's single precision the S9300 X2 is a joke. Double precision of 868GFLOPS, embarrassing.

I doubt that the S9300 X2 was built with double precision loads in mind. Why waste design time/space on FP64 performance when your target audience only cares about FP32 performance?

This is a shot across the bow at Intel's compute cards more than anything else, since Intel's products were more aimed at a balanced FP32/FP64 performance. -

SpAwNtoHell So no pascal in my pc this year but maybe next year Q3 if am optimistic? Where is that credit card to order myself a 980ti as is the best i am going to get for another year or 2...:( sad

FritzEiv ReplySo no pascal in my pc this year but maybe next year Q3 if am optimistic? Where is that credit card to order myself a 980ti as is the best i am going to get for another year or 2...:( sad

I don't think that's what you should take away from this. GTC, and Nvidia's push around GTC, is about more complex workloads, happening at scale, and so this (Tesla P100) is just the first glimpse of Pascal that we are seeing for now. -

danbfree What about using current, decent efficiency architecture in the new 16nm process as a solid stop gap? I'm assuming the delay is due to new architecture and interface required. At 16nm, current architecture could see significant clock boosts, right?

This is a platform to get and play games on DX11 under Windows 7 with more visual details faster than Microsoft AMD/Windows 10/DX12 exclusivity bullshit.