Deep Instinct: A New Way to Prevent Malware, With Deep Learning (Updated)

Malware has proven increasingly difficult to detect with signature-based or heuristic methods, which means most antivirus (AV) programs are woefully ineffective against mutating malware, and especially against Advanced Persistent Threats (APTs). Typical malware consists of about 10,000 lines of code. Changing only 1% of that code renders most AV ineffective.
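To illustrate why a small change defeats exact-match signatures, here is a minimal Python sketch. It assumes a signature is simply a SHA-256 hash of the file, which is a simplification of what real AV products do; the `signature` and `mutate` helpers and the placeholder "sample" are hypothetical and exist only for this example.

```python
import hashlib


def signature(sample: bytes) -> str:
    """Return a SHA-256 digest, used here as a stand-in for an exact-match signature."""
    return hashlib.sha256(sample).hexdigest()


def mutate(sample: bytes, fraction: float = 0.01) -> bytes:
    """Flip the low bit of evenly spaced bytes so roughly `fraction` of the bytes change."""
    if not sample or fraction <= 0:
        return sample
    step = max(1, round(1 / fraction))
    out = bytearray(sample)
    for i in range(0, len(out), step):
        out[i] ^= 0x01  # XOR with 1 always changes the byte
    return bytes(out)


# Placeholder data standing in for a malware sample (10,240 bytes, not real code).
original = bytes(range(256)) * 40
variant = mutate(original, 0.01)

# A signature database that only knows the original sample.
known_bad = {signature(original)}

changed = sum(a != b for a, b in zip(original, variant))
print(f"bytes changed: {changed} of {len(original)}")
print("original flagged:", signature(original) in known_bad)
print("variant flagged: ", signature(variant) in known_bad)
```

Only about 1% of the bytes differ, yet the variant's hash no longer matches anything in the database, so an exact-match lookup misses it.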

Machine learning began to be applied five to six years ago to non-linear problems such as facial recognition and malware analysis, including the question of which features must be extracted to uniquely identify such programs. Other techniques, such as sandboxing and machine-based techniques, are neither as fast nor as accurate as Deep Learning.

Deep Instinct, founded by Guy Caspi and Eli David, both Israel Defense Forces cybersecurity veterans, applies Deep Learning algorithms to detect structures and program functions that are indicative of malware. Deep Instinct is able to both detect and prevent “first-seen” malicious activities across all of an organization's assets. Most of Deep Instinct's personnel have advanced mathematics degrees, and the company has offices in Tel Aviv and Silicon Valley.

To implement Deep Learning, Deep Instinct constructed a large neural network in a laboratory and trained it against a very large set of malware samples. The training is done on databases of tens of millions of malicious and legitimate files. The output of this continuous training is a prediction model that can be sent to the protected device, enabling detection and prevention in real time.

The idea was to train the software to detect combinations of programmatic calls and small operations indicative of malware. Deep Instinct's learning method breaks the malware samples into a very large number of small pieces so that malware can be mapped much like a genome, in the way genomic sequences are assembled from tens of thousands of smaller sequences. These torn-apart samples, which are on the order of binary bit strings, are then fed to the artificial neural network for training in pattern recognition. This neural network, which performs many millions of calculations, runs on a GPU cluster, and the end result is a static neural network that can be pushed to endpoints.
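Deep Instinct has not published how it splits samples or what it feeds the network, so the following Python sketch only illustrates the general idea of tearing a binary into small pieces. It assumes overlapping byte n-grams bucketed into a fixed-length vector, a common technique in the literature; the function names, the window size `n`, and the vector length `dims` are all hypothetical.

```python
from collections import Counter
from typing import Iterator, List


def byte_ngrams(data: bytes, n: int = 4) -> Iterator[bytes]:
    """Yield every overlapping n-byte window of the sample."""
    for i in range(len(data) - n + 1):
        yield data[i:i + n]


def feature_vector(data: bytes, n: int = 4, dims: int = 64) -> List[float]:
    """Bucket n-gram counts into a fixed-length vector of relative frequencies."""
    # Map each n-gram to a bucket deterministically (integer value modulo dims).
    counts = Counter(int.from_bytes(g, "big") % dims for g in byte_ngrams(data, n))
    total = sum(counts.values()) or 1  # avoid dividing by zero for tiny inputs
    return [counts.get(i, 0) / total for i in range(dims)]


# Placeholder bytes standing in for a file; not a real sample.
sample = b"\x4d\x5a\x90\x00\x03\x00\x00\x00\x04\x00\x00\x00\xff\xff"
vec = feature_vector(sample)
print(len(vec), "features;", sum(1 for v in vec if v > 0), "non-zero buckets")
```

A fixed-length vector like this is the kind of input a neural network can train on regardless of file size. The actual representation Deep Instinct uses may differ substantially.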

Because this solution (the neural network instance) is static and cannot itself be updated on the device, it runs very fast, in real time, and uses little computing power. The recognition built in from all the training is what allows Deep Instinct to claim a very low false positive rate and a very high detection rate. New neural network instances may be pushed out, however: Deep Instinct supplies the updates, and the network administrator decides on the update interval based on the threat ecosystem.

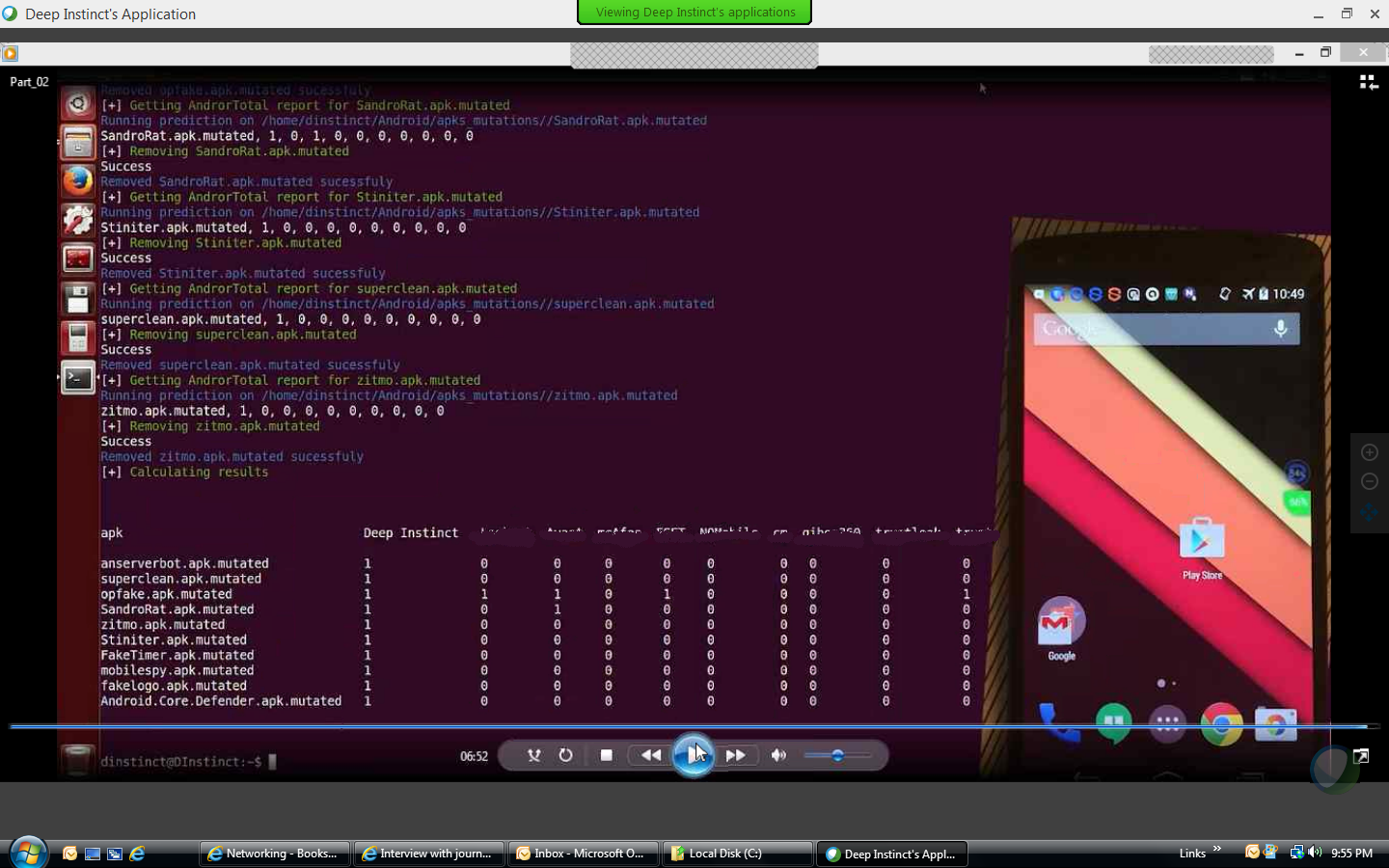

In tests of recognition against 16,000 malware samples conducted at the University of Göttingen and Siemens CERT, Bitdefender, McAfee, Trend, AVG, Kaspersky, Sophos, and others scored an average recognition rate of 61%, whereas Deep Instinct averaged 98.86%. The test used the German-developed DREBIN dataset. Some of the malware samples were automatically mutated, but in such a way that functionality wasn't affected. On PDF malware, the detection rate was 99.7%, and on point executables it was 99.2%. To be fair, Deep Instinct was trained on a subset of about 8,000 of these samples, but that is still an impressive result.
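Because roughly half of the test samples were also used for training, it helps to see how a detection rate and a false positive rate are computed, and how excluding training samples can change the picture. The Python sketch below uses entirely made-up records, not data from the test described above; the helper names are hypothetical.

```python
def detection_and_fp_rate(labels, predictions):
    """Return (detection rate, false positive rate).

    labels and predictions use True for malicious and False for benign.
    """
    pairs = list(zip(labels, predictions))
    tp = sum(1 for y, p in pairs if y and p)          # malware caught
    fn = sum(1 for y, p in pairs if y and not p)      # malware missed
    fp = sum(1 for y, p in pairs if not y and p)      # benign file flagged
    tn = sum(1 for y, p in pairs if not y and not p)  # benign file passed
    detection = tp / (tp + fn) if (tp + fn) else 0.0
    false_positive = fp / (fp + tn) if (fp + tn) else 0.0
    return detection, false_positive


def held_out(records, training_ids):
    """Keep only records whose id was not used for training."""
    return [r for r in records if r[0] not in training_ids]


# Made-up records: (sample id, is_malicious, flagged_by_detector)
records = [
    ("a", True, True), ("b", True, True), ("c", True, False), ("d", True, True),
    ("e", False, False), ("f", False, True), ("g", False, False), ("h", False, False),
]
training_ids = {"a", "b"}  # samples the detector has already seen

all_rates = detection_and_fp_rate([r[1] for r in records], [r[2] for r in records])
unseen = held_out(records, training_ids)
unseen_rates = detection_and_fp_rate([r[1] for r in unseen], [r[2] for r in unseen])

print("all samples:    detection=%.2f, false positives=%.2f" % all_rates)
print("unseen samples: detection=%.2f, false positives=%.2f" % unseen_rates)
```

In this toy data the detection rate drops once the training samples are removed, which is why results on unseen samples, reported alongside a false positive rate, are the more informative figures.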


Both Cylance and FireEye use machine learning and make some of the more theoretically advanced detection software now available. According to Deep Instinct, FireEye uses sandboxing, and neither company does real-time detection with a very low false positive rate.

In Q2 of this year, Deep Instinct hopes to have a traffic module to detect malware and APTs that it claims could replace a firewall, although it would more likely serve as a useful adjunct. How do Deep Instinct’s methods compare to those of notable competitors, particularly some of the emerging companies using advanced techniques? One such company, UK-based Darktrace, uses threat indicators for traffic and changed its detection method to use machine learning. Cybereason developed a different detection approach: It analyzes other threat patterns, such as external indicators, different domains, and threat intelligence.

According to Guy Caspi, the cyber attack from North Korea against Sony used a new, slight modification of existing malware that was not very sophisticated. The problem of malware detection is increasingly difficult for many companies to solve as the organization perimeter moves. Mobile access and the emergence of new threat actors have moved most organizations' cyber perimeters.

Some big companies may be investing in Deep Instinct, including Samsung, Qualcomm and Nvidia. There is a fee for the Deep Instinct appliance, and each endpoint will likely be priced at approximately $50-75 per instance, depending on volume. The mobile solution will be priced slightly higher. This pricing is competitive with other players in this space.

Signature-based malware detection is becoming increasingly inaccurate and was never scalable. By breaking up malware into tiny “bits” and analyzing them via neural networks, Deep Instinct may have discovered an inherent characteristic of malware, one that can’t be changed by mutation if that malware is to retain its functionality. If true, Deep Instinct’s product is a game changer in the detection marketplace.

Update, 4/4/16, 2:25pm PT: Added clarification on the number of malware samples used. Added clarification on neural network updates. Added update to FireEye sandboxing.

Osama68 That sounds very similar to what Cylance does. Cylance does not use a sandbox as the article suggests.

michaelzehr As alluded to, the results would be more impressive if they didn't include the training samples. Additionally, while the article mentions low false positives, it doesn't mention a rate. It sounds like it's still based on analyzing the code, not the behavior, which means it can still be beaten. (Though the network analysis one sounds like it will be behavior-based.) But until we see more results, we don't know if it's a neural net that recognizes malware or a neural net that recognizes applications that do a lot of network activity.

gibbousmoon100 I'll never be impressed by a high success rate that isn't accompanied by a concrete and unconcealed low false positive rate. The necessity for both is common sense, imo. Why isn't it talked about more in this article?

gibbousmoon100 Accidentally upvoted my own comment, and now it won't let me downvote it. But why are we allowed to upvote our own comments to begin with?

Urzu1000 Like others have stated, they really need to include false positive statistics to make this worthwhile.

HSelden They did include some very low false positive rates, but I couldn't publish them. At least in a uniform test, it beat all other competitors. If you blow up the image you can see the mobile test "false positives."

I agree, the proof is real-world testing, and what Deep Instinct would do with evolving malware. Could be very interesting; time will tell. Is there an inherent structure or activity to malware that is part of it being malware, and so cannot be readily disguised? Did Deep Instinct find this? If malware didn't have these behaviors or elements, would it still be (malicious) malware? How could this be detected?