Nvidia's CUDA: The End of the CPU?

Hardware Point of View, Continued

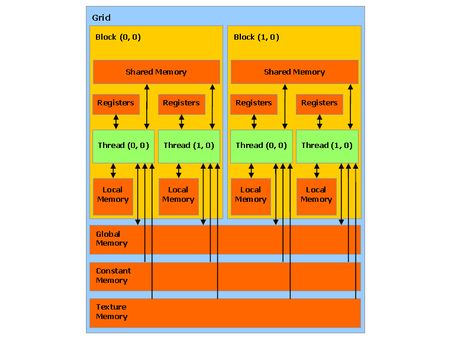

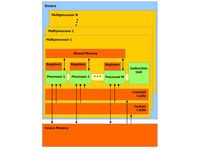

This memory area provides a way for threads in the same block to communicate. It’s important to stress the restriction: all the threads in a given block are guaranteed to be executed by the same multiprocessor. Conversely, the assignment of blocks to the different multiprocessors is completely undefined, meaning that two threads from different blocks can’t communicate during their execution. That makes this memory complicated to use. But it can also be worthwhile: except when several threads try to access the same memory bank, which causes a conflict, access to shared memory is as fast as access to the registers.
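To make the idea of a bank conflict concrete, here is a minimal sketch that models how a group of threads maps onto memory banks. The figures used (16 banks, 32-bit words interleaved across banks, requests served 16 threads at a time) are assumptions about G80-class hardware and do not come from this article; the function name `conflict_degree` is purely illustrative.

```python
# Assumed G80-class parameters; none of these figures come from the article.
NUM_BANKS = 16   # assumed number of shared-memory banks
HALF_WARP = 16   # assumed number of threads served by one shared-memory request


def conflict_degree(stride_words: int, first_word: int = 0) -> int:
    """Return the largest number of threads that land on the same bank.

    Thread t reads the 32-bit word at index first_word + t * stride_words,
    and consecutive words are assumed to live in consecutive banks.
    A result of 1 means conflict-free; n means the request is split into
    n serialized passes. The model ignores the special case where every
    thread reads the very same word, so use a stride of at least 1.
    """
    hits = [0] * NUM_BANKS
    for t in range(HALF_WARP):
        bank = (first_word + t * stride_words) % NUM_BANKS
        hits[bank] += 1
    return max(hits)


print(conflict_degree(1))   # 1: each thread hits its own bank
print(conflict_degree(2))   # 2: threads pair up on the even banks
print(conflict_degree(16))  # 16: every thread hits bank 0
```

Under these assumptions, an odd stride such as 3 is also conflict-free, which is why padding an array by one word is a common way to sidestep conflicts.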

The shared memory is not the only memory the multiprocessors can access. Obviously they can use the video memory, but it has lower bandwidth and higher latency. Consequently, to limit too-frequent access to this memory, Nvidia has also provided its multiprocessors with a cache (approximately 8 KB per multiprocessor) for access to constants and textures.

The multiprocessors also have 8,192 registers that are shared among all the threads of all the blocks active on that multiprocessor. The number of active blocks per multiprocessor can’t exceed eight, and the number of active warps is limited to 24 (768 threads). So, an 8800GTX, with its 16 multiprocessors, can have up to 12,288 threads being processed at a given instant. It’s worth mentioning all these limits because they help in sizing the algorithm to fit the available resources.
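The limits quoted above can be checked with a little arithmetic. This is only a sketch: the count of 16 multiprocessors is the value implied by 12,288 / 768 rather than a figure taken from this paragraph, and the warp size of 32 follows from 768 threads / 24 warps.

```python
WARP_SIZE = 32             # implied by 768 threads / 24 warps
MAX_WARPS_PER_MP = 24      # active warps per multiprocessor
REGISTERS_PER_MP = 8192    # registers shared by all active threads
NUM_MULTIPROCESSORS = 16   # assumption: implied by 12,288 / 768

# Threads that one multiprocessor can keep active at once.
max_threads_per_mp = WARP_SIZE * MAX_WARPS_PER_MP

# Threads in flight across the whole GPU.
max_threads_on_gpu = max_threads_per_mp * NUM_MULTIPROCESSORS

# Whole registers left per thread when a multiprocessor is fully loaded.
regs_per_thread_at_full_load = REGISTERS_PER_MP // max_threads_per_mp

print(max_threads_per_mp)            # 768
print(max_threads_on_gpu)            # 12288
print(regs_per_thread_at_full_load)  # 10
```

The last figure is the practical consequence: a kernel that needs more than about 10 registers per thread cannot keep all 768 thread slots of a multiprocessor busy.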

Optimizing a CUDA program, then, essentially consists of striking the optimum balance between the number of blocks and their size: more threads per block help mask the latency of memory operations, but at the same time the number of registers available per thread is reduced. What’s more, a block of 512 threads would be particularly inefficient, since only one such block could be active on a multiprocessor, potentially leaving 256 of its 768 thread slots unused. So, Nvidia advises using blocks of 128 to 256 threads, which offers the best compromise between masking latency and the number of registers needed for most kernels.
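The trade-off can be sketched as a small calculation using only the per-multiprocessor limits given in this section (768 threads, 8 blocks, 8,192 registers). The function name `active_threads` is hypothetical, and the model is deliberately simplified: it ignores shared-memory usage and any allocation granularity the hardware may apply, and it assumes block sizes that are multiples of the warp size.

```python
MAX_THREADS_PER_MP = 768   # 24 warps of 32 threads
MAX_BLOCKS_PER_MP = 8      # active blocks per multiprocessor
REGISTERS_PER_MP = 8192    # registers shared by all active threads


def active_threads(threads_per_block: int, regs_per_thread: int) -> int:
    """Estimate how many threads one multiprocessor can keep active.

    The number of resident blocks is bounded by three limits at once:
    the block count, the thread count, and the register file.
    """
    by_threads = MAX_THREADS_PER_MP // threads_per_block
    by_registers = REGISTERS_PER_MP // (threads_per_block * regs_per_thread)
    blocks = min(MAX_BLOCKS_PER_MP, by_threads, by_registers)
    return blocks * threads_per_block


print(active_threads(512, 10))  # 512: one block fits, 256 slots stay idle
print(active_threads(256, 10))  # 768: three blocks fill the multiprocessor
print(active_threads(128, 10))  # 768: six blocks fill the multiprocessor
print(active_threads(64, 10))   # 512: capped by the eight-block limit
print(active_threads(256, 16))  # 512: capped by the register file
```

In this simplified model, blocks of 128 or 256 threads are the sizes that reach the full 768 threads, which matches the range Nvidia recommends; very small and very large blocks both fall short, for different reasons.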


CUDA software enables GPUs to do tasks normally reserved for CPUs. We look at how it works and its real and potential performance advantages.


Well, if the technology was used just to play games, yes, it would be crap tech. Spending billions just so we can play Quake doesn't make much sense ;)


dariushro: The best thing that could happen is for M$ to release an API similar to DirectX for developers. That way both ATI and Nvidia can support the API.

dmuir: And no mention of OpenCL? I guess there aren't a lot of details about it yet, but I find it surprising that you look to M$ for a unified API (who have no plans to do so that we know of), when Apple has already announced that they'll be releasing one next year. (unless I've totally misunderstood things...)

neodude007: I'm not gonna bother reading this article. I just thought the title was funny, seeing as how Nvidia claims CUDA in NO way replaces the CPU and that is simply not their goal.

LazyGarfield: I'd like it better if DirectX weren't used.

Anyways, NV wants to sell CUDA, so why would they change to DX ;-)

I think the best way to go for MS is to announce support for OpenCL, like Apple. That would make things a lot easier for developers, and it would make MS look good for supporting the open standard.


Shadow703793, quoting Mr Roboto:

> Very interesting. I'm anxiously awaiting the RapiHD video encoder. Everyone knows how long it takes to encode a standard definition video, let alone an HD or multiple HD videos. If a 10x speedup can materialize from the CUDA API, let's just say it's more than welcome.
>
> I understand from the launch of the GTX280 and GTX260 that Nvidia has a broader outlook for the use of these GPUs. However, I don't buy it fully, especially when they cost so much to manufacture and use so much power. The GTX 280 has been reported as using upwards of 300w. That doesn't translate to that much money in electrical bills over a span of a year, but nevertheless it's still moving backwards. Also, don't expect the GTX series to come down in price anytime soon. The 8800GTX and its 384-bit bus is a prime example of how much these devices cost to make. Unless CUDA becomes standardized, it's just another niche product fighting against other niche products from ATI and Intel.
>
> On the other hand though, I was reading on AnandTech that Nvidia is sticking 4 of these cards (each with 4GB RAM) in a 1U form factor using CUDA to create ultra cheap supercomputers. For the scientific community this may be just what they're looking for. Maybe I was misled into believing that these cards were for gaming and anything else would be an added benefit. With the price and power consumption this makes much more sense now.

Agreed. Also, I predict in a few years we will have a Linux distro that will run mostly on a GPU.