Nvidia's CUDA: The End of the CPU?

A Few Definitions

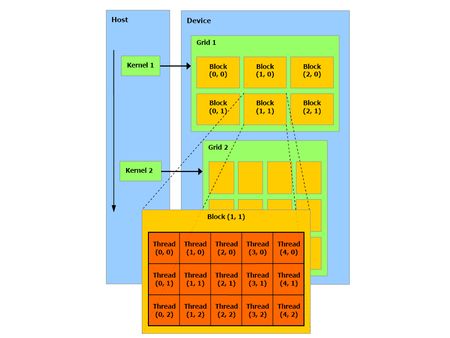

Before we dive into CUDA, let’s define a few terms that are sprinkled throughout Nvidia’s documentation. The company has chosen a rather particular terminology that can be hard to grasp. First we need to define what a thread is in CUDA, because the term doesn’t have quite the same meaning as a “CPU thread,” nor is it the equivalent of what we call "threads" in our GPU articles. A thread on the GPU is a basic element of the data to be processed. Unlike CPU threads, CUDA threads are extremely “lightweight,” meaning that a context switch between two threads is not a costly operation.

The second term frequently encountered in the CUDA documentation is warp. No confusion possible this time (unless you think the term might have something to do with Star Trek or Warhammer). No, the term is taken from the terminology of weaving, where it designates “threads arranged lengthwise on a loom and crossed by the woof.” A warp in CUDA, then, is a group of 32 threads, which is the minimum size of the data processed in SIMD fashion by a CUDA multiprocessor.

But that granularity is not always sufficient to be easily usable by a programmer, and so in CUDA, instead of manipulating warps directly, you work with blocks that can contain 64 to 512 threads.
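To make those numbers concrete, here is a minimal sketch in plain Python (not CUDA code) of how a block divides into warps. It assumes that consecutive thread indices within a block fall into the same warp. The function names and the term "lane" for a thread's position inside its warp are ours, chosen for illustration.

```python
WARP_SIZE = 32  # threads per warp, as stated in the article


def warps_in_block(threads_per_block):
    """Number of warps needed to hold one block (the last warp may be partly empty)."""
    if threads_per_block <= 0:
        raise ValueError("a block needs at least one thread")
    # Ceiling division: a block of 100 threads still occupies 4 warps.
    return (threads_per_block + WARP_SIZE - 1) // WARP_SIZE


def warp_and_lane(thread_index):
    """Split a thread's index within its block into (warp number, lane in that warp)."""
    return divmod(thread_index, WARP_SIZE)


# Block sizes at the limits quoted in the article, plus one in between.
for size in (64, 256, 512):
    print(size, "threads ->", warps_in_block(size), "warps")
```

Block sizes that are multiples of 32 fill every warp completely; any other size leaves the last warp partly unused in this model.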

Finally, these blocks are put together in grids. The advantage of the grouping is that the number of blocks processed simultaneously by the GPU is closely linked to hardware resources, as we’ll see later. The number of blocks in a grid makes it possible to abstract that constraint entirely and apply a kernel to a large quantity of threads in a single call, without worrying about fixed resources. The CUDA runtime takes care of breaking it all down for you. This means that the model is extremely extensible. If the hardware has few resources, it executes the blocks sequentially; if it has a very large number of processing units, it can process them in parallel. This in turn means that the same code can target entry-level GPUs, high-end ones and even future GPUs.
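The following plain-Python model (not the CUDA API) sketches why the grid abstraction lets the same kernel call run on small and large GPUs alike. The names `blocks_needed`, `scale_kernel` and `launch` are hypothetical, as is the `concurrent_blocks` parameter that stands in for hardware resources. A real GPU would run the threads of a batch in parallel rather than in a loop.

```python
def blocks_needed(n_elements, threads_per_block):
    """Size of the grid: enough blocks to give every data element its own thread."""
    return (n_elements + threads_per_block - 1) // threads_per_block


def scale_kernel(block_index, thread_index, threads_per_block, data, out, factor):
    """Work done by a single thread: process one element of the data."""
    i = block_index * threads_per_block + thread_index
    if i < len(data):  # the last block may contain threads with nothing to do
        out[i] = data[i] * factor


def launch(data, factor, threads_per_block=64, concurrent_blocks=1):
    """Model of one kernel call over a whole grid.

    concurrent_blocks is an assumption standing in for how many blocks
    the hardware can process at the same time.
    """
    out = [None] * len(data)
    n_blocks = blocks_needed(len(data), threads_per_block)
    for first in range(0, n_blocks, concurrent_blocks):
        last = min(first + concurrent_blocks, n_blocks)
        # Blocks in this batch would run side by side on real hardware.
        for block_index in range(first, last):
            for thread_index in range(threads_per_block):
                scale_kernel(block_index, thread_index, threads_per_block,
                             data, out, factor)
    return out


data = list(range(1000))
small_gpu = launch(data, 2, concurrent_blocks=1)   # blocks run one after another
large_gpu = launch(data, 2, concurrent_blocks=16)  # many blocks per batch
print(blocks_needed(len(data), 64), small_gpu == large_gpu)
```

The caller only chooses the block size and the amount of data. How many blocks actually run at once is left to the `launch` model, which is the role the article attributes to the CUDA runtime.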

The other terms you’ll run into frequently in the CUDA API are used to designate the CPU, which is called the host, and the GPU, which is referred to as the device. After that little introduction, which we hope hasn’t scared you away, it’s time to plunge in!


CUDA software enables GPUs to do tasks normally reserved for CPUs. We look at how it works and its real and potential performance advantages.


Well, if the technology was used just to play games, yes, it would be crap tech. Spending billions just so we can play Quake doesn't make much sense ;)

dariushro: The best thing that could happen is for M$ to release an API similar to DirectX for developers. That way both ATI and Nvidia can support the API.

dmuir: And no mention of OpenCL? I guess there aren't a lot of details about it yet, but I find it surprising that you look to M$ for a unified API (they have no plans for one that we know of) when Apple has already announced that they'll be releasing one next year. (Unless I've totally misunderstood things...)

neodude007: I'm not gonna bother reading this article. I just thought the title was funny, seeing as how Nvidia claims CUDA in NO way replaces the CPU and that is simply not their goal.

LazyGarfield: I'd like it better if DirectX weren't used.

Anyway, NV wants to sell CUDA, so why would they change to DX ;-)

I think the best way to go for MS is to announce support for OpenCL, like Apple. That way it will make things a lot easier for developers, and it makes MS look good for supporting the open standard.

Shadow703793, quoting Mr Roboto:

"Very interesting. I'm anxiously awaiting the RapiHD video encoder. Everyone knows how long it takes to encode a standard definition video, let alone an HD or multiple HD videos. If a 10x speedup can materialize from the CUDA API, let's just say it's more than welcome.

"I understand from the launch of the GTX280 and GTX260 that Nvidia has a broader outlook for the use of these GPUs. However, I don't buy it fully, especially when they cost so much to manufacture and use so much power. The GTX 280 has been reported as using upwards of 300W. That doesn't translate to that much money in electrical bills over a span of a year, but nevertheless it's still moving backwards. Also, don't expect the GTX series to come down in price anytime soon. The 8800GTX and its 384-bit bus is a prime example of how much these devices cost to make. Unless CUDA becomes standardized, it's just another niche product fighting against other niche products from ATI and Intel.

"On the other hand though, I was reading on AnandTech that Nvidia is sticking 4 of these cards (each with 4GB RAM) in a 1U form factor using CUDA to create ultra cheap supercomputers. For the scientific community this may be just what they're looking for. Maybe I was misled into believing that these cards were for gaming and anything else would be an added benefit. With the price and power consumption this makes much more sense now."

Agreed. Also, I predict that in a few years we will have a Linux distro that will run mostly on a GPU.