When Will Ray Tracing Replace Rasterization?

Limitations Of Ray Tracing

Now that we've made a point of deflating certain myths associated with ray tracing, let's look at the real issues that the technique involves.

We'll start with the major problem associated with the rendering algorithm: its slowness. Of course, there are those who'll say that that's not really a problem, since, after all, ray tracing is highly parallelizable and with the number of processor cores increasing each year, we should see nearly linear increases in ray tracing performance. And what's more, research on the optimizations that can be applied to ray tracing is still in its infancy. When you look back at the earliest 3D cards and compare them to what's available today, you might tend to be optimistic.

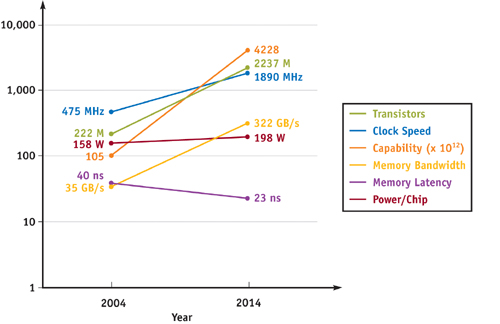

However, that point of view misses an essential point: the real interest of ray tracing lies in the secondary rays. In practice, visibility calculation using primary rays doesn't really represent any improvement in image quality over a classic Z-buffer algorithm. But the problem with these secondary rays is that they have absolutely no coherence. From one pixel to another, completely different data can be accessed, which cancels out all the usual caching techniques that are essential for good performance. That means that the calculation of secondary rays becomes extremely dependent on the memory subsystem, and in particular on latency. This is the worst possible scenario, because of all memory characteristics, latency is the one that has made the least progress in recent years, and there's no indication that's likely to change any time soon. It's easy enough to increase bandwidth by using several chips in parallel, whereas latency is inherent in the way memory functions.
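To make the caching argument concrete, here is a minimal sketch, not a model of any real GPU or CPU. It simulates a small LRU cache and compares a coherent access pattern (neighboring pixels touching neighboring data, as in rasterization) with an incoherent one (each access landing at an arbitrary address, as with secondary rays). The function names, cache size, line size, and scene size are all hypothetical values chosen for illustration.

```python
import random
from collections import OrderedDict


def hit_rate(addresses, cache_lines=64, line_size=16):
    """Fraction of accesses served by a tiny LRU cache (illustrative only)."""
    cache = OrderedDict()  # keys are cache-line indices, ordered by recency
    hits = 0
    for addr in addresses:
        line = addr // line_size
        if line in cache:
            hits += 1
            cache.move_to_end(line)  # mark as most recently used
        else:
            cache[line] = True
            if len(cache) > cache_lines:
                cache.popitem(last=False)  # evict least recently used line
    return hits / len(addresses)


def coherent_accesses(n):
    # Assumption: adjacent pixels read adjacent addresses.
    return list(range(n))


def incoherent_accesses(n, scene_size=1_000_000, seed=0):
    # Assumption: each secondary ray lands at an unrelated address.
    rng = random.Random(seed)
    return [rng.randrange(scene_size) for _ in range(n)]


coherent_rate = hit_rate(coherent_accesses(10_000))
incoherent_rate = hit_rate(incoherent_accesses(10_000))
print(f"coherent:   {coherent_rate:.2%}")
print(f"incoherent: {incoherent_rate:.2%}")
```

With these made-up parameters, the sequential pattern hits the cache on 15 of every 16 accesses, while the scattered pattern almost never does. In the second case nearly every access pays the full memory latency.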

The reason for the success of GPUs is that building hardware dedicated to rasterization was an extremely effective solution. With rasterization, memory access is coherent, regardless of whether it involves access to pixels, texels, or vertices. So, small caches coupled with massive bandwidth were ideal for achieving excellent performance. Bandwidth is expensive, but at least it's a feasible solution if the economics justify it. Conversely, there just aren't any solutions for accelerating memory access with secondary rays. That's one reason why ray tracing will never be as efficient as rasterization.

Another intrinsic problem with ray tracing has to do with anti-aliasing (AA). The rays being shot are in fact simple mathematical abstractions and have no actual size. Consequently, the test for intersection with a triangle returns a simple Boolean result, but it provides no details, such as "40% of the ray intersects this triangle." The direct consequence of that is aliasing.
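As an illustration of that Boolean result, here is a sketch of a ray-triangle test using the well-known Möller-Trumbore method. This is one common choice, not necessarily what any particular renderer uses. The helper names (`sub`, `dot`, `cross`, `ray_hits_triangle`) and the tolerance `eps` are assumptions made for this example.

```python
def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def ray_hits_triangle(origin, direction, v0, v1, v2, eps=1e-9):
    """Moller-Trumbore test: returns True or False, never a coverage fraction."""
    edge1 = sub(v1, v0)
    edge2 = sub(v2, v0)
    pvec = cross(direction, edge2)
    det = dot(edge1, pvec)
    if abs(det) < eps:           # ray is parallel to the triangle's plane
        return False
    inv_det = 1.0 / det
    tvec = sub(origin, v0)
    u = dot(tvec, pvec) * inv_det
    if u < 0.0 or u > 1.0:       # outside the first barycentric bound
        return False
    qvec = cross(tvec, edge1)
    v = dot(direction, qvec) * inv_det
    if v < 0.0 or u + v > 1.0:   # outside the second barycentric bound
        return False
    t = dot(edge2, qvec) * inv_det
    return t > eps               # hit only if it lies in front of the origin


tri = ((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0))
inside = ray_hits_triangle((0.25, 0.25, -1.0), (0.0, 0.0, 1.0), *tri)
outside = ray_hits_triangle((0.9, 0.9, -1.0), (0.0, 0.0, 1.0), *tri)
print(inside, outside)
```

The function can only answer yes or no for an infinitely thin ray. A pixel that straddles the triangle's edge gets whichever answer its single ray happens to produce.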

Whereas with rasterization it is possible to dissociate shader frequency from sampling frequency, it's not that simple with ray tracing. Several techniques have been studied to try to solve this problem, such as beam tracing and cone tracing, which give the rays thickness, but their complexity has held them back. So the only technique that can get good results is to shoot more rays than there are pixels, which amounts to supersampling (rendering at a higher resolution). Needless to say, that technique is much more computationally expensive than the multisampling used by current GPUs.
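The cost of supersampling can be sketched with a toy example. Below, a single pixel is treated as the unit square, and a hypothetical predicate `edge_hit` stands in for a full ray cast against geometry that covers the left 40% of the pixel. Both `pixel_coverage` and `edge_hit` are illustrative names, and the regular sub-pixel grid is just one possible sampling pattern.

```python
def pixel_coverage(hit, samples_per_axis):
    """Estimate pixel coverage by shooting an n x n grid of rays through it."""
    n = samples_per_axis
    hits = 0
    for j in range(n):
        for i in range(n):
            # Sample at the center of each sub-pixel cell.
            x = (i + 0.5) / n
            y = (j + 0.5) / n
            if hit(x, y):
                hits += 1
    return hits / (n * n)


def edge_hit(x, y):
    # Stand-in for a ray cast: geometry covers the left 40% of the pixel.
    return x < 0.4


one_ray = pixel_coverage(edge_hit, 1)        # 1 ray per pixel
sixteen_rays = pixel_coverage(edge_hit, 4)   # 16 rays per pixel
hundred_rays = pixel_coverage(edge_hit, 10)  # 100 rays per pixel
print(one_ray, sixteen_rays, hundred_rays)
```

With one ray the pixel is reported as entirely uncovered (0.0). Sixteen rays give 0.5, and it takes a hundred rays on this grid to land on 0.4. Every extra sample is a full ray with its own intersection and shading work, which is why this approach costs so much more than multisampling.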


IzzyCraft: Greed? You give an inch they take a mile? Very pessimistic conclusion although it helps drive the industry so hard to really complain. ;)

Ramar: I'm definitely the kind of person that would prefer to lose some performance in exchange for elegance and perfection. The eye can tell when something is done cheaply in a render. I've made this argument that quite often we find computationally cheap methods of doing something in a game, and after time it seems to me that we've got a 400 horsepower muscle car that, on close inspection, is held together with duct tape and dreams. I'd much rather have a V6 sedan that's spotless and responds properly.

Okay, well in real life, the Half Life 2 buggy would be a lot cooler to drive around than a Jetta, but you get the analogy.

zodiacfml: i still like the simplicity of ray tracing and how close it is to physics/science. it is just how it works, bounce light to everything.

there are a lot of diminishing returns i can see in the future. for example, how complex can rasterization get? what are the diminishing returns for image resolution, especially on the desktop/living room?

ray tracing has a lot of room for optimization.

for years to come, indeed, raster is good for what is possible in hardware. look further ahead, more than 5 years, we'll have hardware fast enough and efficient algorithms for ray tracing. not to mention the big cpu companies, amd & intel, who will push this and earn everyone's money.

stray_gator: aargh. start typing, then sign in to find your first words posted.

Anyway, what I liked about this article is its being under the hood, but not related to a new product, announcement or such.

"deep tech" articles accompanying product launches tend inevitably to follow the lines of press kits, PR slides, etc.

Articles like this, while they take longer to research, are exactly that - they are researched rather than detailing "company X implemented techniques Y and Z in their new product, which works this way, benefits performance that way and is really cool." It gives an independent, comprehensive view of the subject, and gives the reader real understanding in the field.

enewmen: The ray-tracing code on the business card was way cool. I was hoping (real-time) ray-tracing and photo-realistic rendering will come with DX11 and GPGPU offloading - this seems completely unrealistic.

I still never read of any dedicated ray-tracing hardware, at any price. It seems the better we understand ray-tracing and its limitations, the more cloudy the future becomes.

LORD_ORION: Ray tracing will inevitably replace rasterization. It will just flat out look better to the human perception, when in motion, than pure rasterization, and that is all that is required.

Heh... this article brought to you by Nvidia.

annymmo: Hopefully GPGPU (OpenCL) will make raytracing possible.

(Together with a huge number of processing cores per graphic card and an advanced raytracing algorithm.)


Inneandar: nice article.

I wouldn't mind having just a little bit more technical depth, but I'd be glad to see more like this on Tom's.