AMD vs Nvidia: Which GPUs Are Best for Ray Tracing?

We tested the latest ray tracing-capable GPUs from AMD and Nvidia to find out how they stack up, including DLSS Quality mode testing.

The best graphics cards should let you play your favorite games with stunning visual effects, including life-like reflections and shadows. Sure, ray tracing may not radically improve the look of some games, but you should be the one to decide whether or not to enable it. Getting locked out just because it doesn't run well is no fun. But which graphics cards perform best in ray tracing, and what sort of performance should you expect from Nvidia and AMD? To find out, we tested all the ray tracing-capable GPUs from the two major graphics brands.

PC:

Intel Core i9-9900K

MSI MEG Z390 Ace

Corsair 2x16GB DDR4-3200 CL16

XPG SX8200 Pro 2TB

Seasonic Focus 850 Platinum

Corsair Hydro H150i Pro RGB

Phanteks Enthoo Pro M

GPUs:

Nvidia RTX 3090 FE

Nvidia RTX 3080 FE

Nvidia RTX 3070 FE

Nvidia RTX 3060 Ti FE

EVGA RTX 3060 12GB

Nvidia RTX 2080 Ti FE

Nvidia RTX 2060 FE

AMD RX 6900 XT

AMD RX 6800 XT

AMD RX 6800

AMD RX 6700 XT

We've gathered all of the latest AMD RDNA2 and Nvidia Ampere GPUs into one place and commenced benchmarking. We've also included the fastest and slowest Nvidia Turing RTX GPUs from the previous generation, to show the full spectrum of performance. The complete list of GPUs we benchmarked, along with the specs for our test PC, is shown above; the PC uses a Core i9-9900K paired with 32GB of DDR4-3600 memory. All of the graphics cards are reference models from AMD and Nvidia, with the exception of the RTX 3060 12GB: Nvidia doesn't make a reference card, but the EVGA card we used does run reference clocks.

The premise sounds simple enough: Run a bunch of ray tracing benchmarks on all the GPUs. Things aren't quite so simple, however, as not every ray tracing-enabled game will run on every GPU. Most will, but Wolfenstein Youngblood unfortunately uses pre-VulkanRT extensions to the Vulkan API and thus requires an Nvidia RTX card. Maybe the game will get a patch to VulkanRT at some point, but probably not. There are likely other games, released for RTX cards before AMD's RX 6000 series launched, that don't work properly, but most of the games we've tried now run okay.

We've selected ten of the current DirectX Raytracing (DXR) games that work on both AMD and Nvidia GPUs for these ray tracing benchmarks. Given Nvidia's pole position in the RT hardware world — its RTX 20-series cards launched in the fall of 2018, over two years before AMD's RX 6000-series parts — it's no surprise that most DXR games were focused on Nvidia hardware. However, we did select two of the current four AMD-promoted games with DXR, just to see how things might change. Targeted developer optimizations are certainly possible.

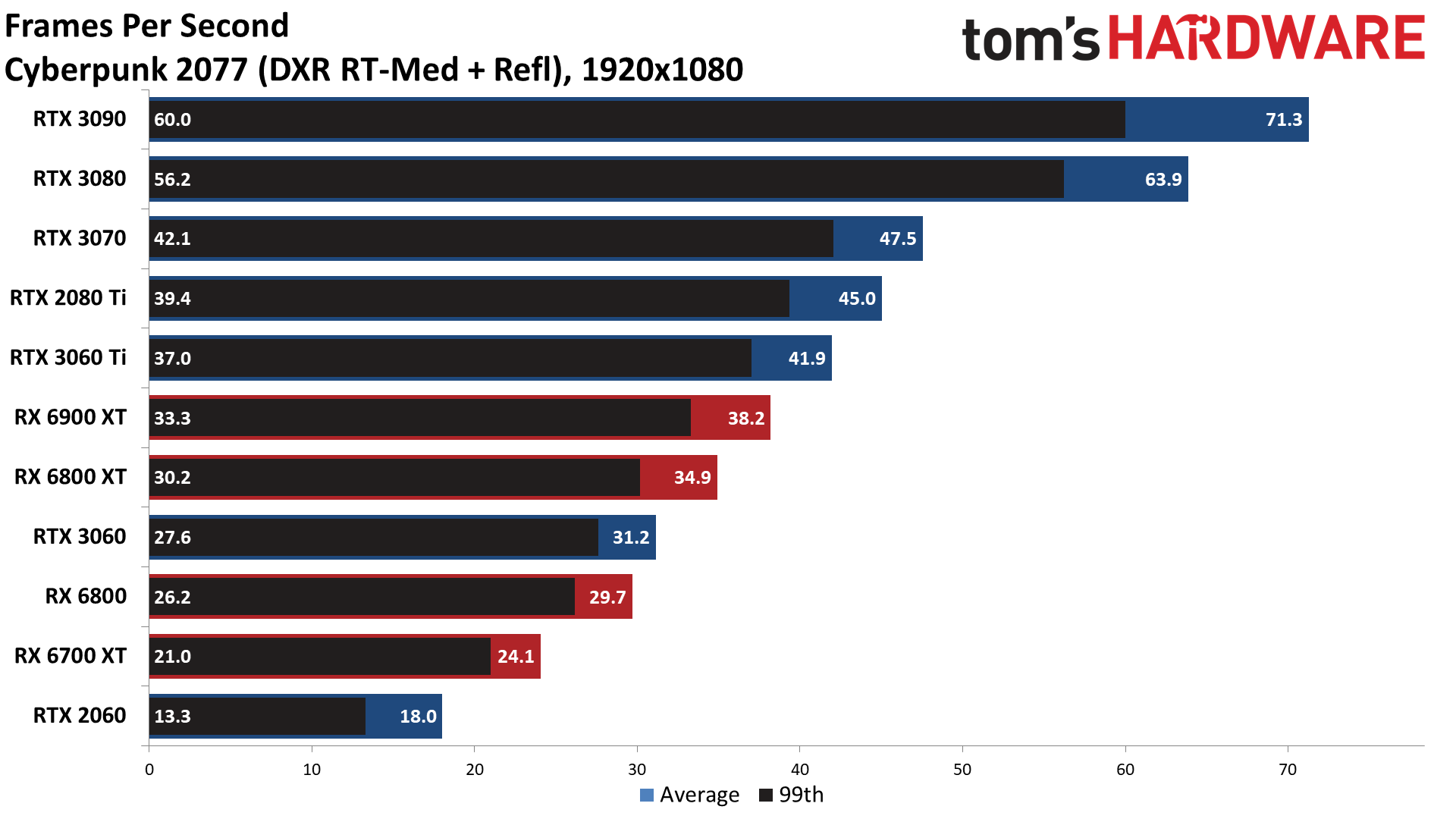

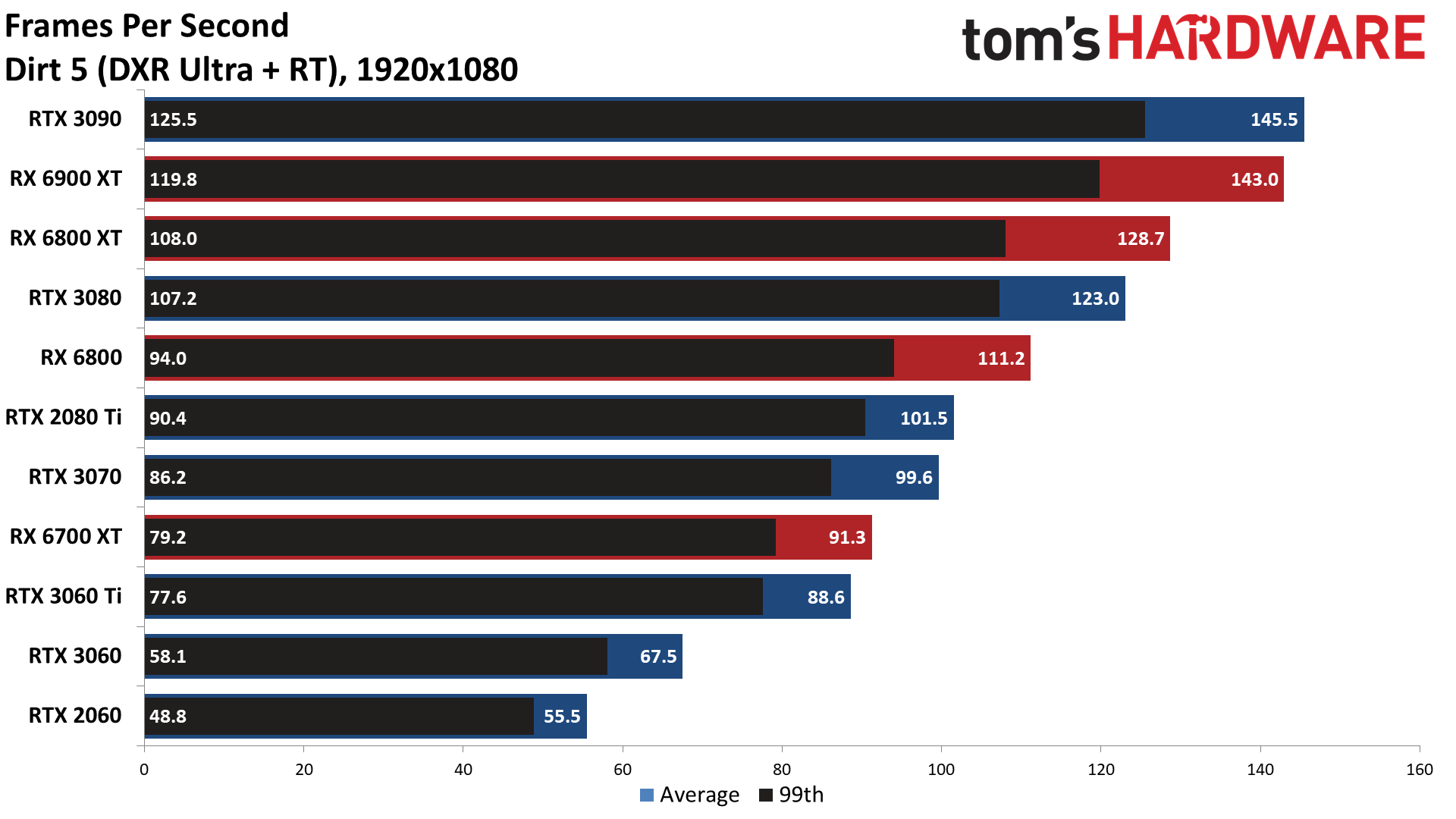

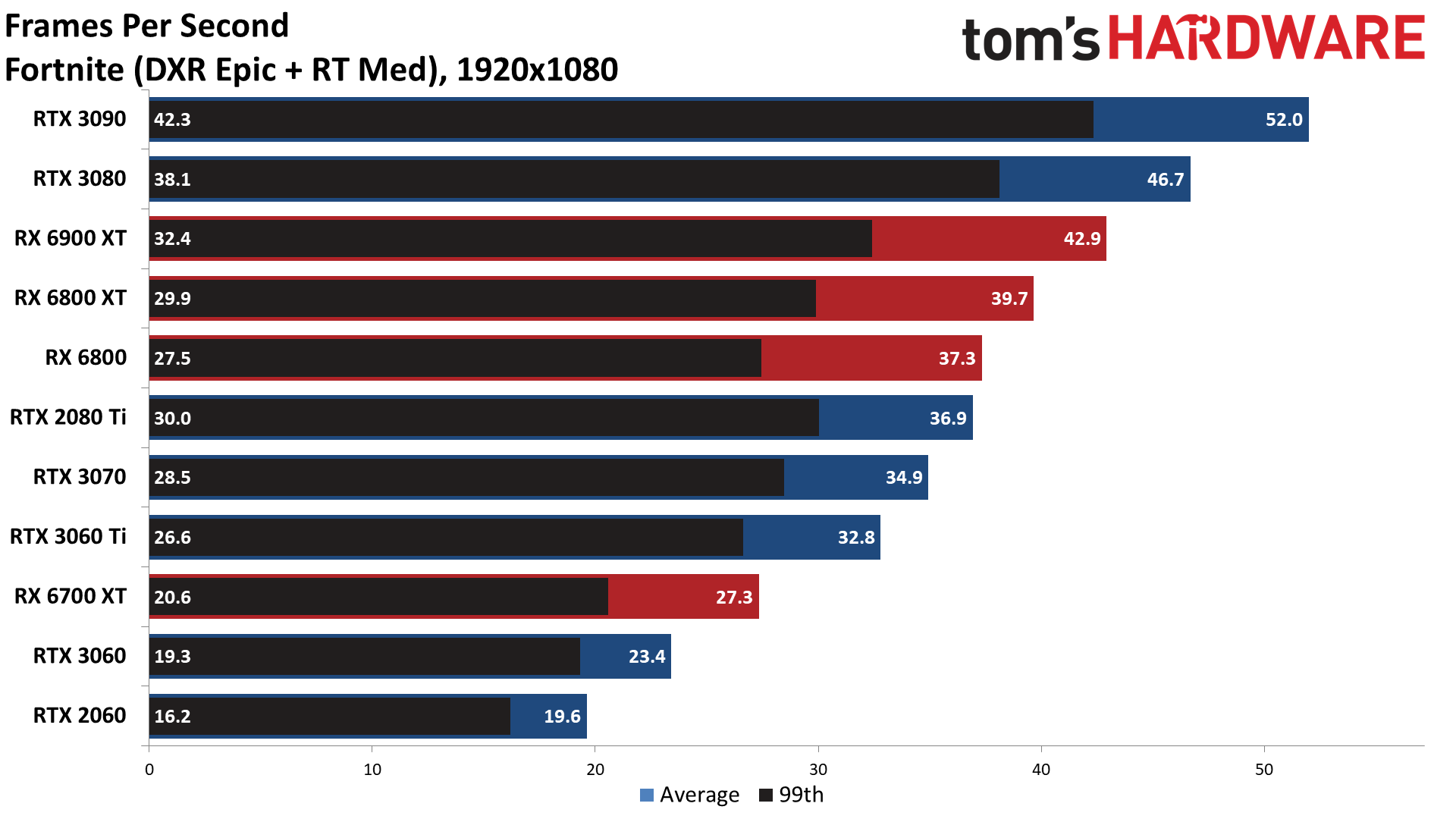

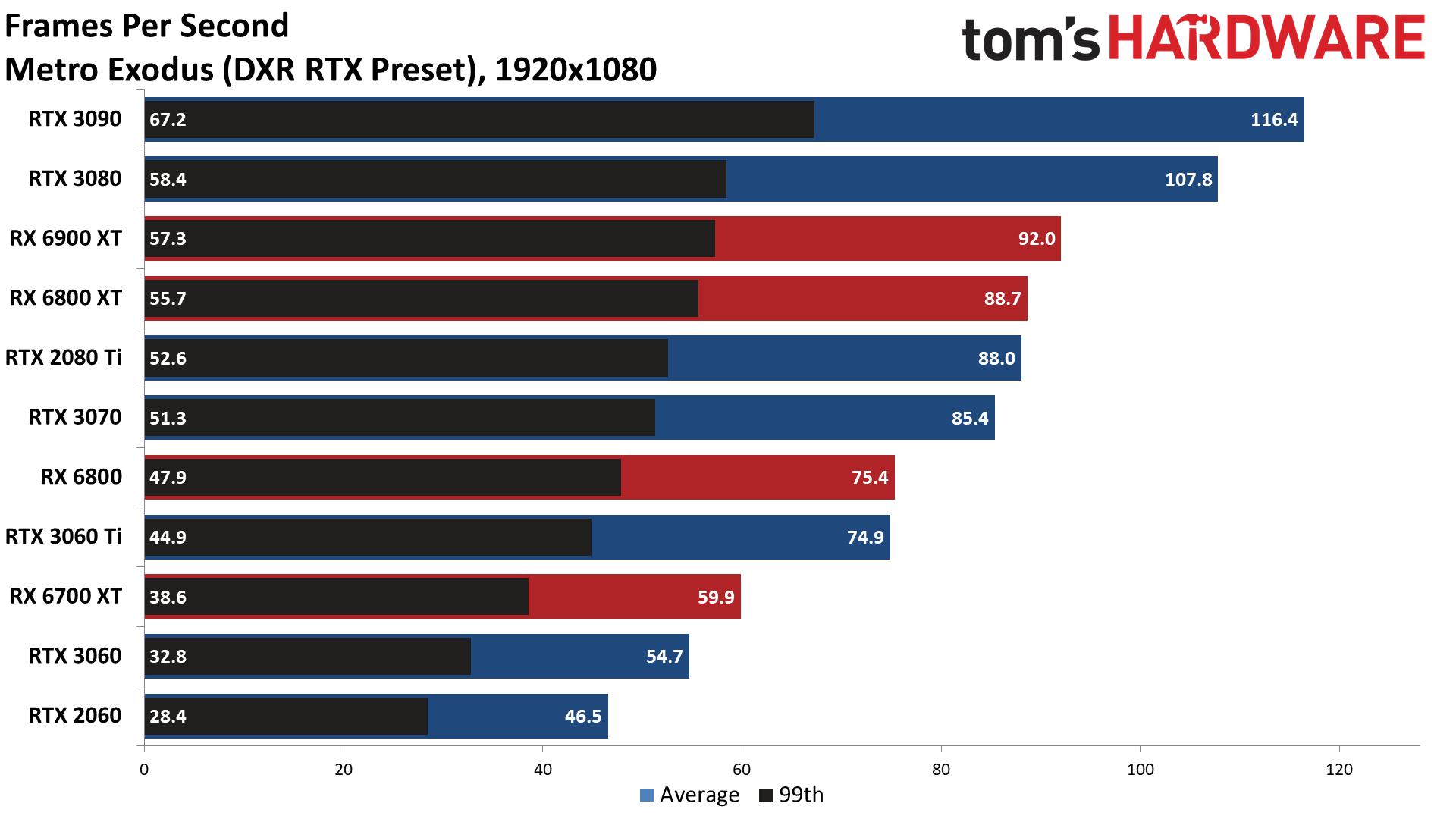

The ten games are: Bright Memory Infinite, Control, Cyberpunk 2077, Dirt 5, Fortnite, Godfall, Metro Exodus, Minecraft, Shadow of the Tomb Raider, and Watch Dogs Legion. Dirt 5 and Godfall are the AMD-promoted games, while most of the others are Nvidia-promoted, the exception being Bright Memory Infinite — it's currently a standalone benchmark of the upcoming expanded version of Bright Memory. We've tested at 'reasonable' quality levels for ray tracing, which mostly means maxed out settings, though we did step down a notch or two in Cyberpunk 2077 and Fortnite.

Besides DXR, eight of the games also support Nvidia's DLSS (Deep Learning Super Sampling) technology, which uses a trained neural network to upscale and anti-alias frames, boosting performance while delivering similar image quality. DLSS has proven to be a critical factor in Nvidia's ray tracing push, as rendering at a lower resolution and then upscaling can result in far better framerates. Metro Exodus and Shadow of the Tomb Raider currently use DLSS 1.0, which didn't look quite as nice and had some other oddities (Metro is slated to get a DLSS 2.0 update in the near future). We've therefore confined our DLSS testing to the six remaining games that implement DLSS 2.0/2.1, and we've tested all of them in DLSS Quality mode. That's the best image quality mode, rendering at two-thirds of the output resolution on each axis (2.25X upscaling by pixel count), and it tends to deliver image fidelity similar to native rendering with temporal AA.
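To put the Quality-mode upscaling factor in numbers, here is a minimal Python sketch of the internal render resolution. It assumes the commonly cited Quality-mode scale of two-thirds of the output resolution on each axis; the function names are illustrative helpers, not part of any Nvidia API, and individual games may round the internal resolution slightly differently.

```python
from fractions import Fraction

# Assumption: DLSS 2.x Quality mode renders at 2/3 of the output
# resolution on each axis (the commonly cited ~66.7% scale).
QUALITY_AXIS_SCALE = Fraction(2, 3)


def internal_resolution(width: int, height: int) -> tuple[int, int]:
    """Approximate render resolution before DLSS upscales to width x height."""
    return round(width * QUALITY_AXIS_SCALE), round(height * QUALITY_AXIS_SCALE)


def pixel_ratio(width: int, height: int) -> float:
    """Output pixels per rendered pixel, i.e. the upscaling factor by area."""
    w, h = internal_resolution(width, height)
    return (width * height) / (w * h)


for out_w, out_h in [(1920, 1080), (2560, 1440)]:
    in_w, in_h = internal_resolution(out_w, out_h)
    print(f"{out_w}x{out_h}: renders ~{in_w}x{in_h}, "
          f"{pixel_ratio(out_w, out_h):.2f}x pixels per frame upscaled")
```

Under that assumption, 1080p output corresponds to a 1280x720 render and 1440p output to a render 960 pixels tall, which is where the extra performance headroom comes from.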

Because ray tracing tends to be extremely demanding, we've opted to stick with testing at only 1080p and 1440p. Nvidia's cards may be able to manage playable framerates at 4K with DLSS in some cases, but most of the cards simply aren't cut out to handle games at 4K native with DXR. We'll start with the native benchmarks at each resolution and then move on to DLSS 2.0 Quality testing.


Ray Tracing Benchmarks at 1080p

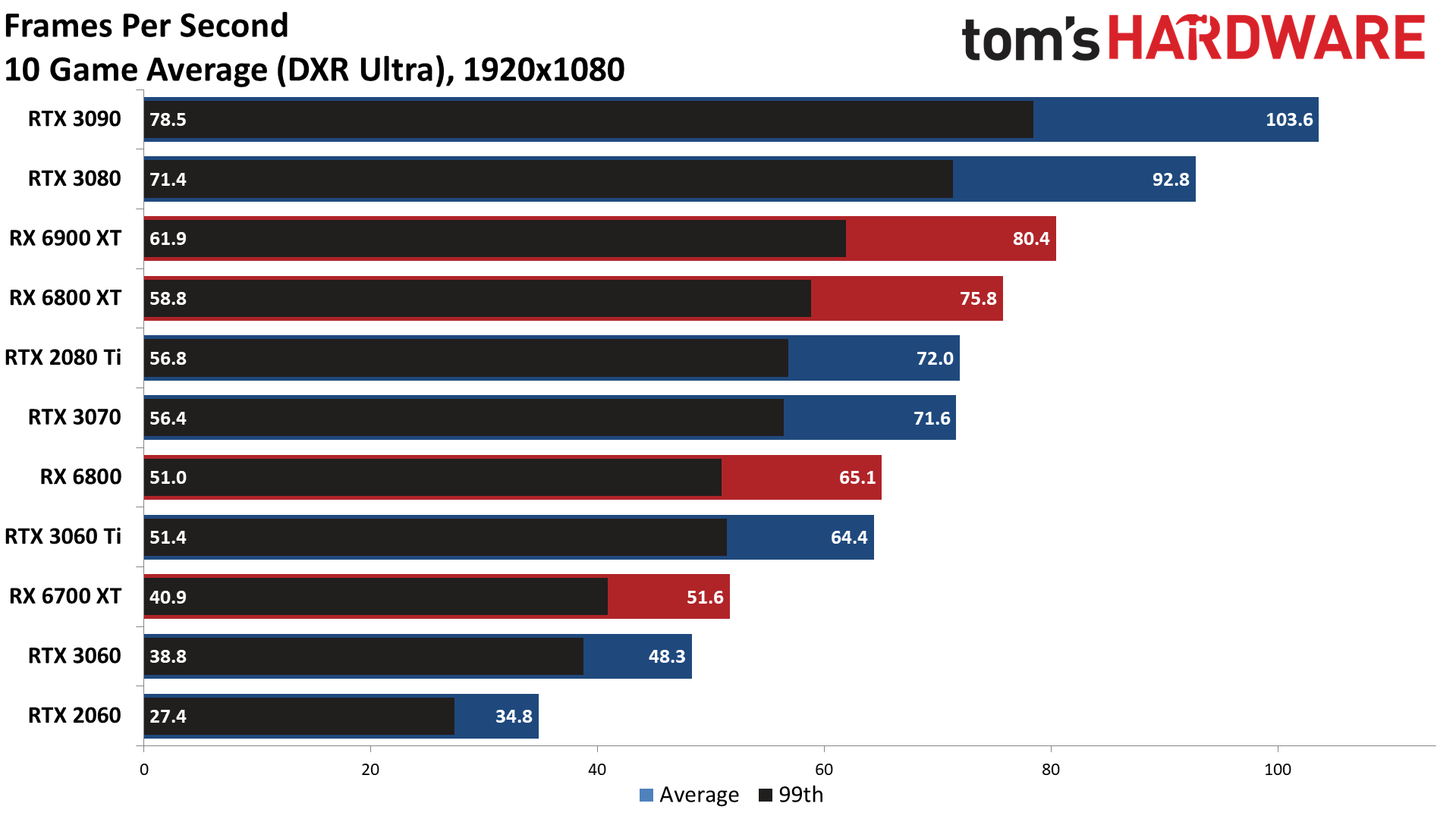

Running 1080p ultra with DXR enabled already pushes several of the cards well below a steady 60 fps. Only the RTX 3060 Ti (which is about the same performance as an RTX 2080 Super) and the RX 6800 and above average more than 60 fps across our test suite. Even then, there are games where performance dips well below that mark.
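The text doesn't say how the suite-wide average is computed. As a hedged illustration only, this Python sketch compares an arithmetic mean with a geometric mean (a common choice for benchmark suites, assumed here rather than confirmed for these charts) over three made-up frame rates; the numbers are hypothetical and are not results from these tests.

```python
import math
from statistics import fmean

# Hypothetical fps for one GPU across three games; NOT data from this article.
fps = [45.0, 60.0, 80.0]


def geometric_mean(values: list[float]) -> float:
    """nth root of the product, computed in log space to avoid overflow."""
    return math.exp(sum(math.log(v) for v in values) / len(values))


arith = fmean(fps)
geo = geometric_mean(fps)
print(f"arithmetic mean: {arith:.1f} fps")
print(f"geometric mean:  {geo:.1f} fps")
```

With these invented numbers the arithmetic mean lands a bit above 60 fps while the geometric mean sits right at 60 fps, so whether a borderline card "averages more than 60 fps" can depend on the averaging method.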

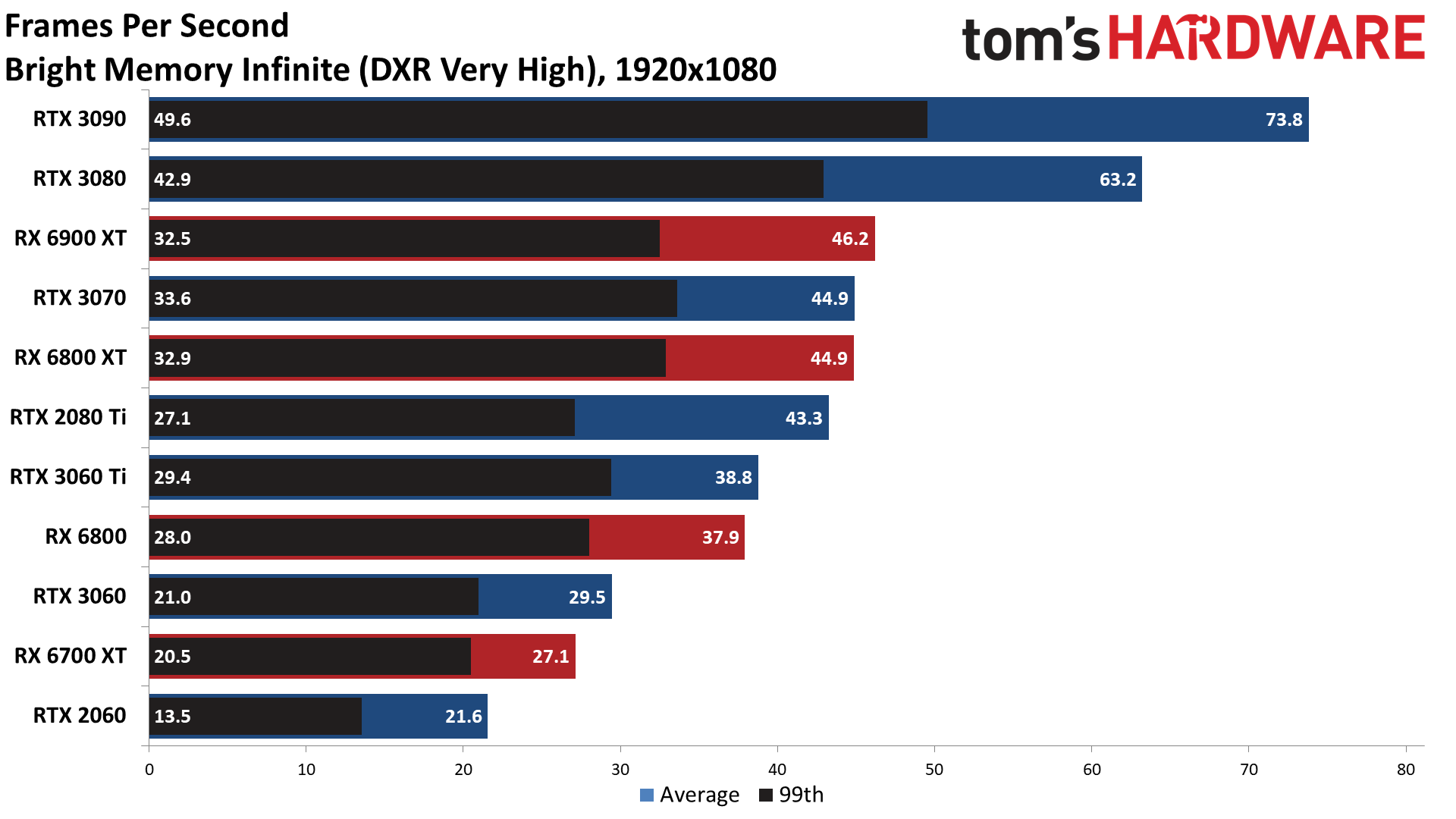

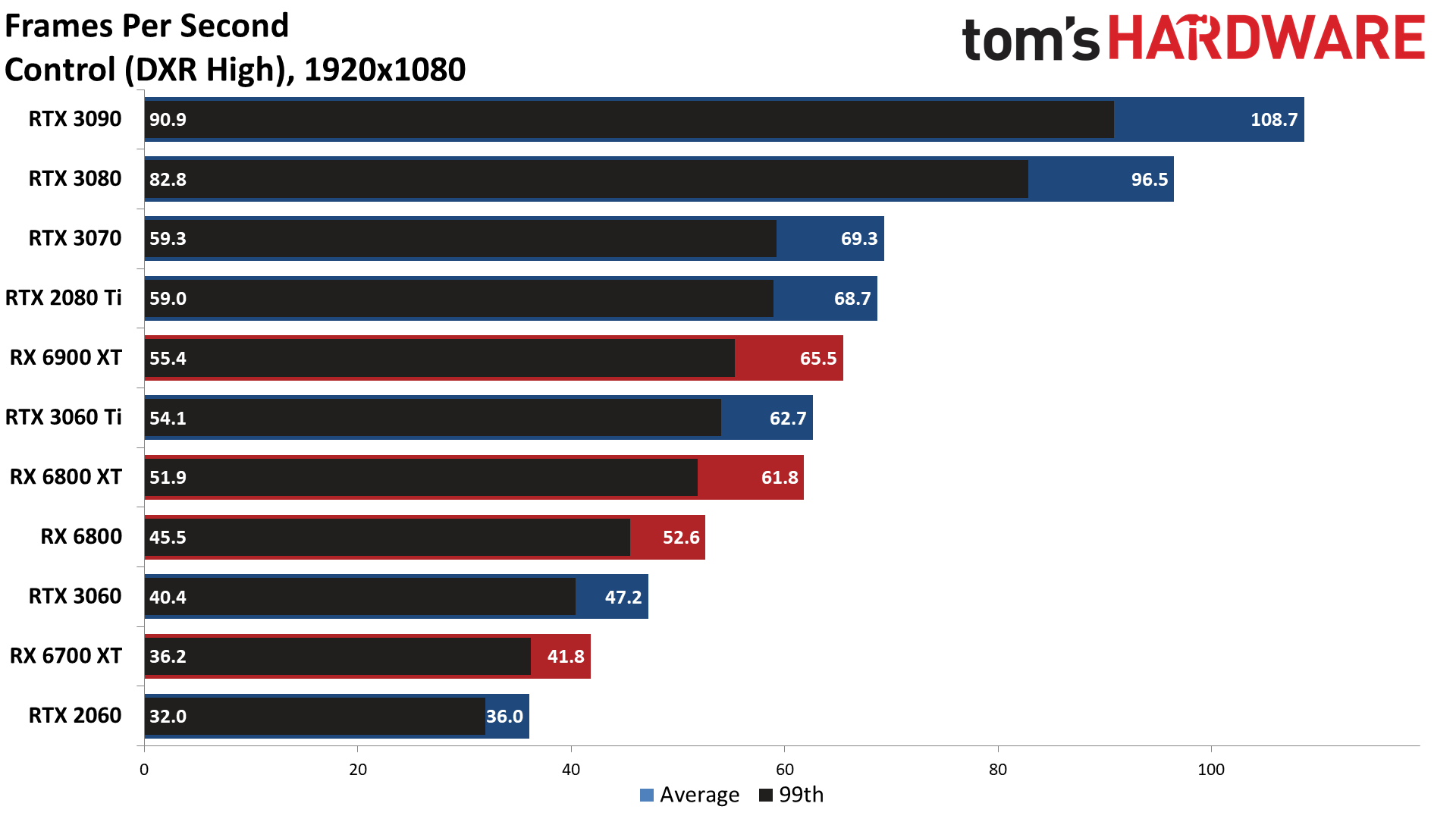

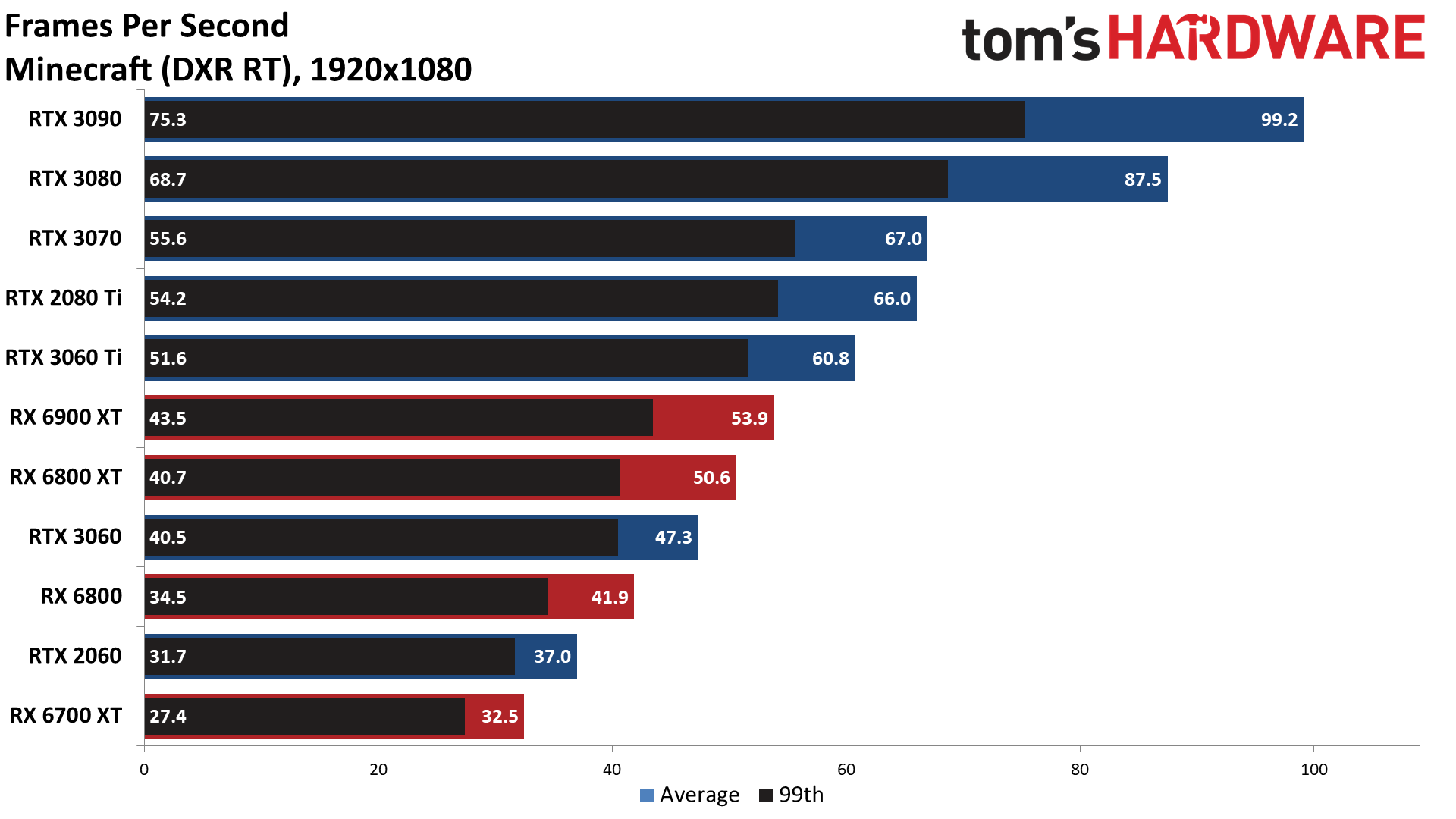

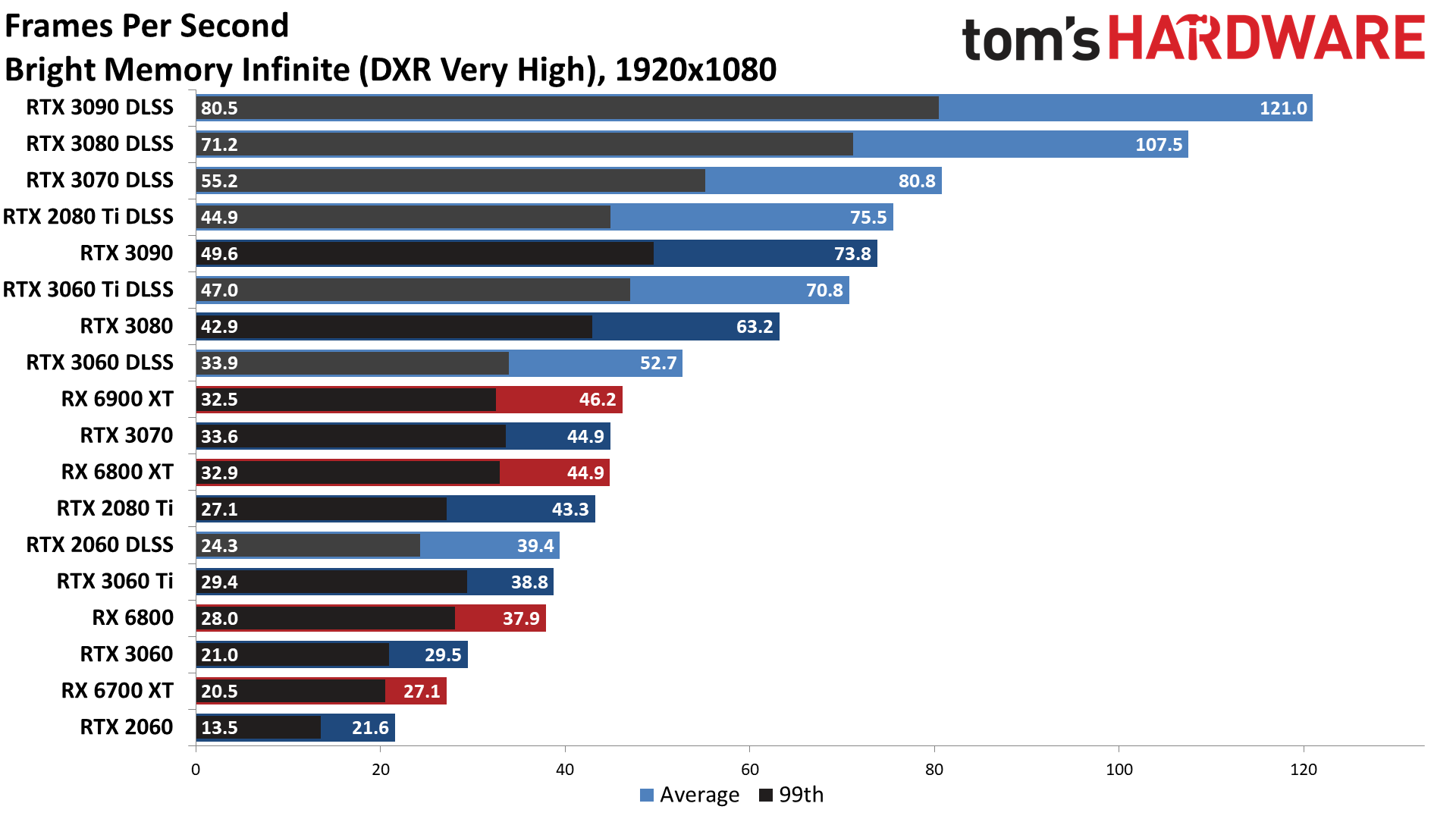

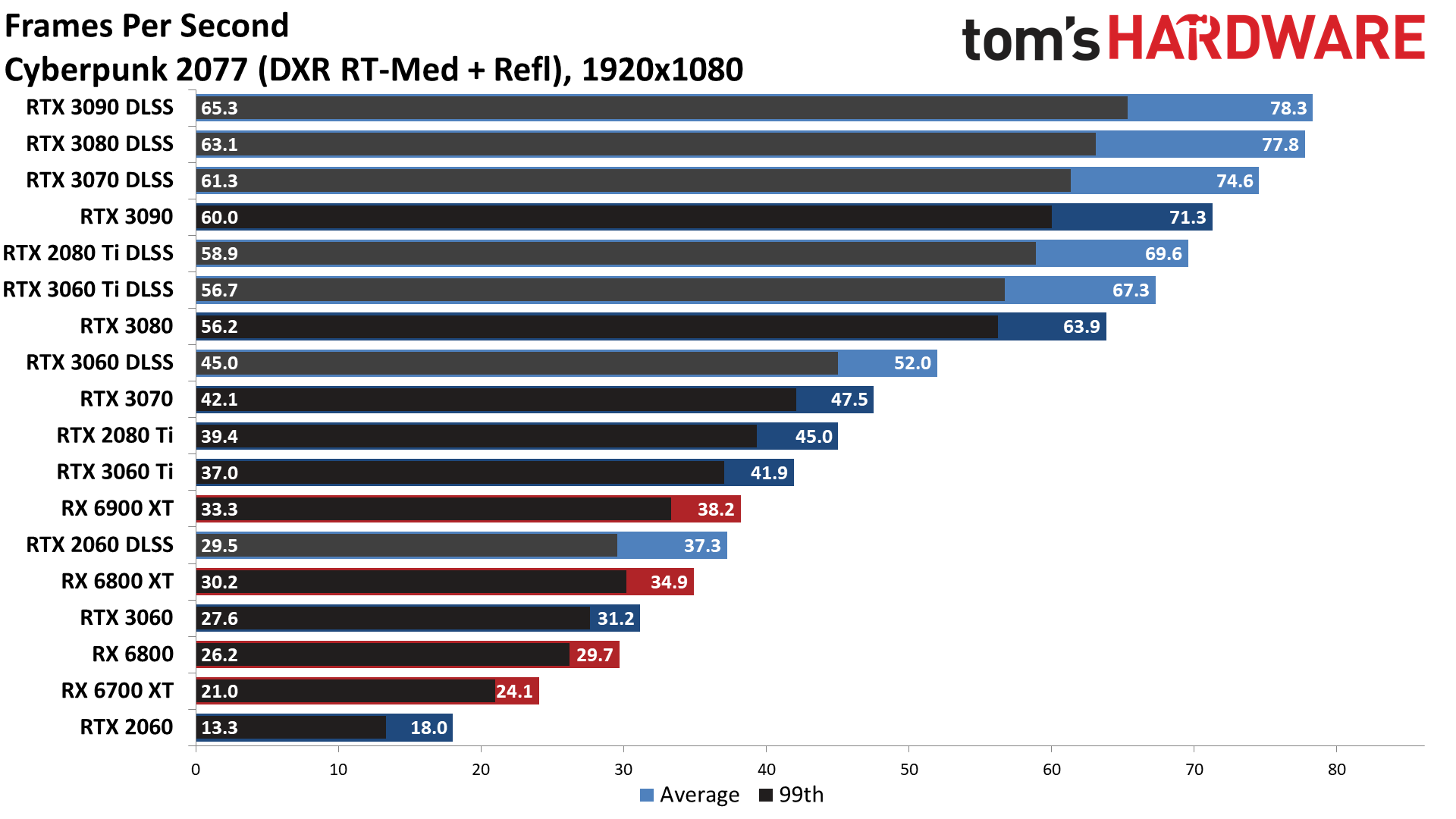

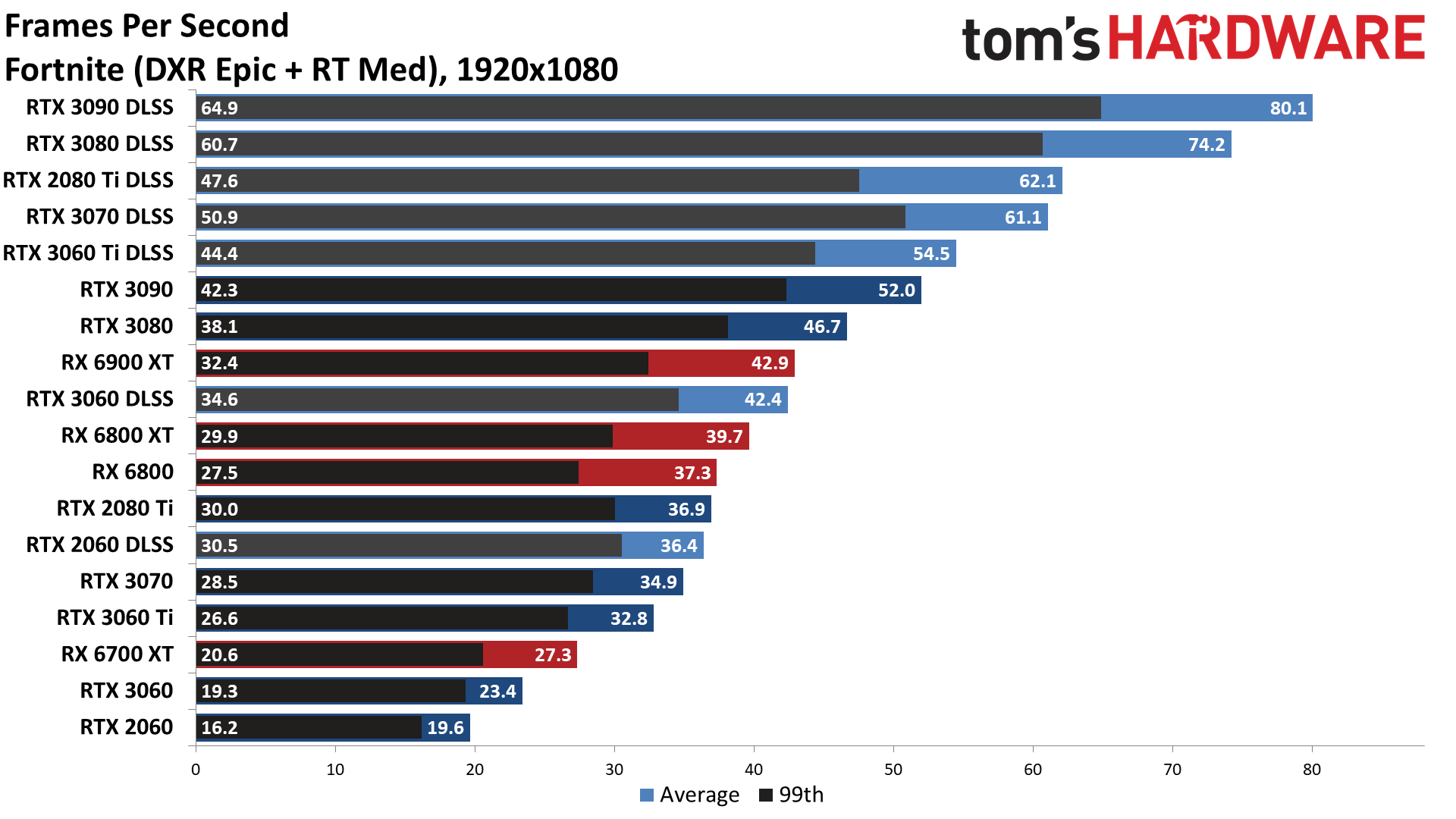

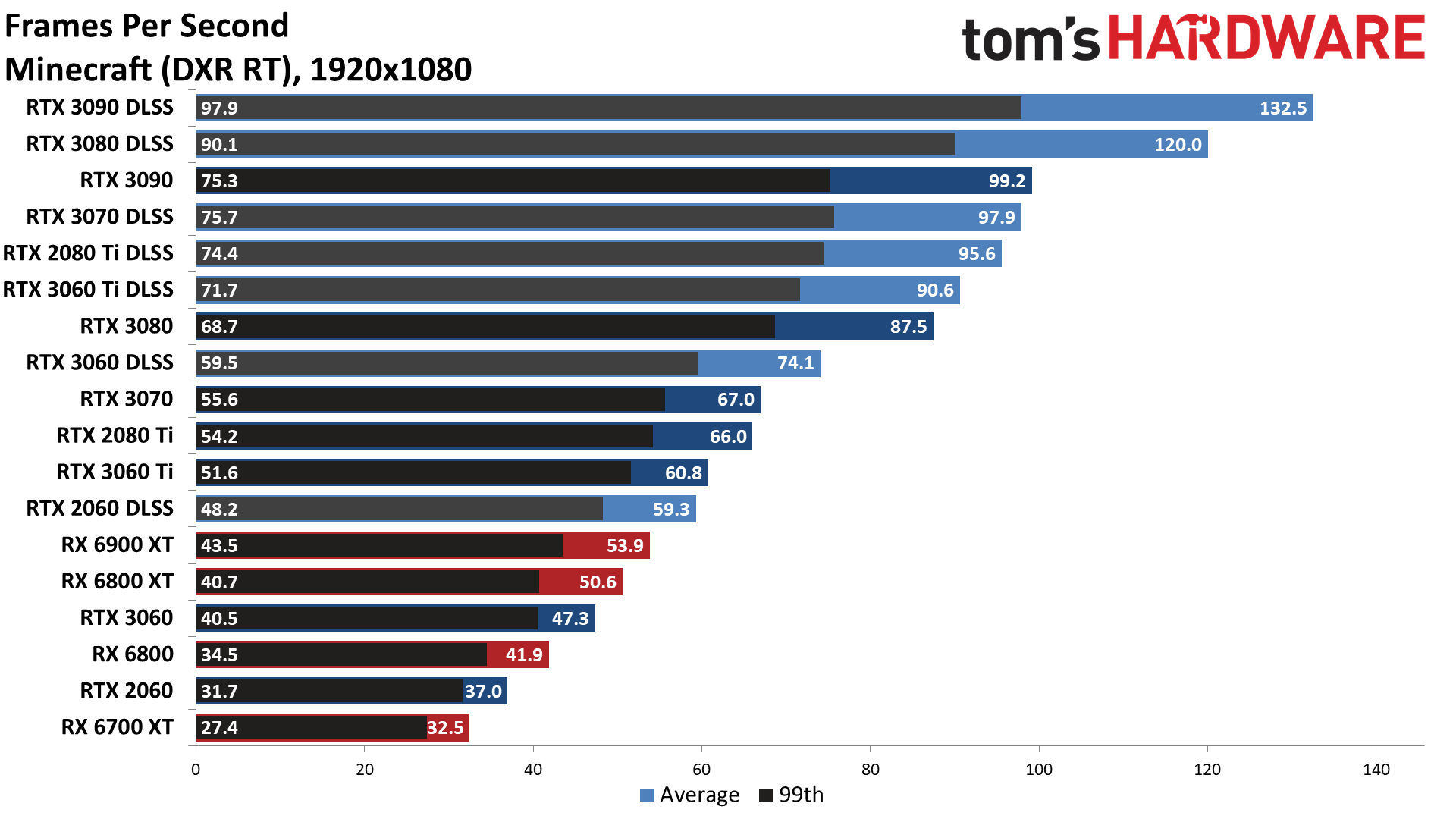

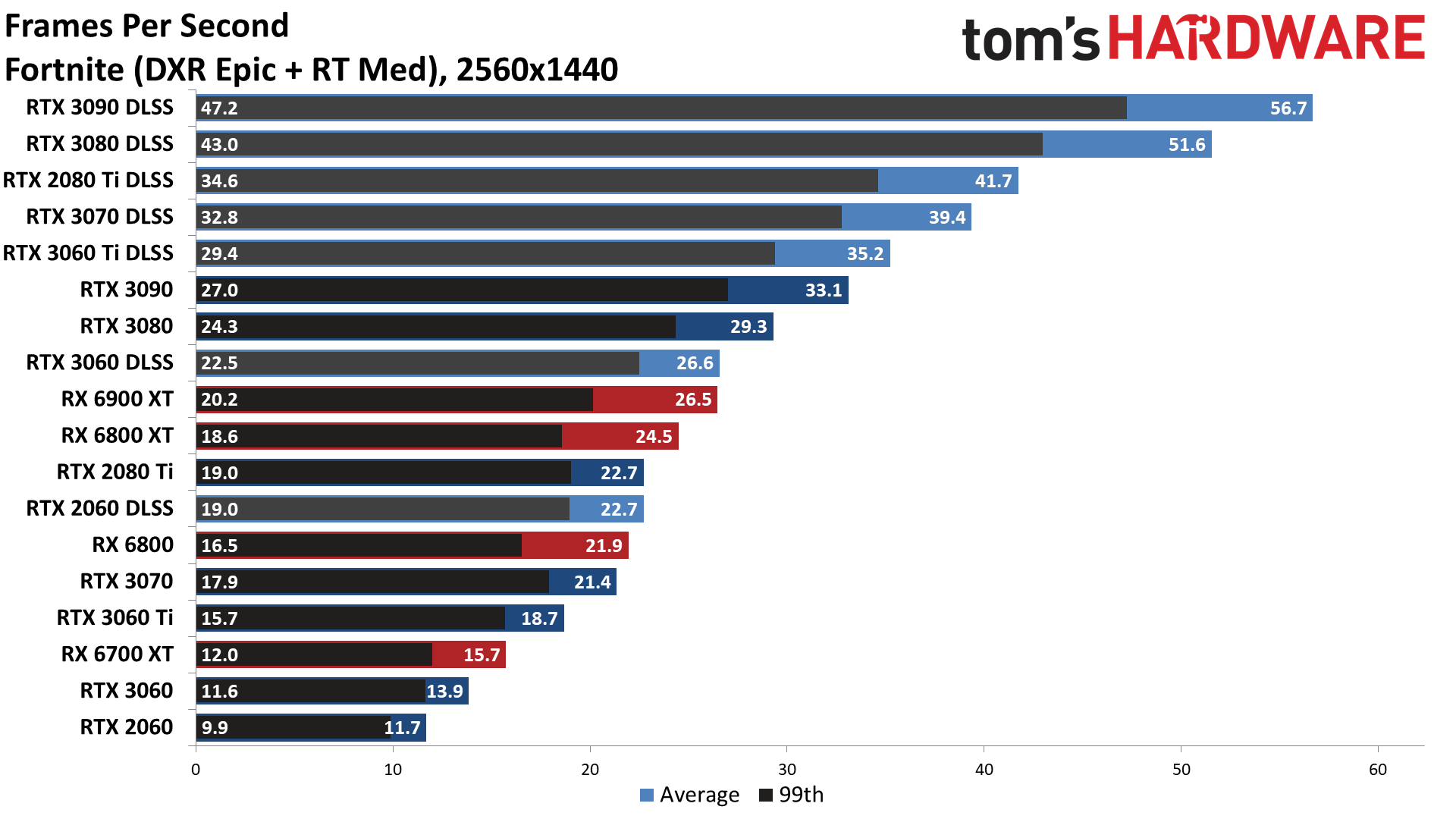

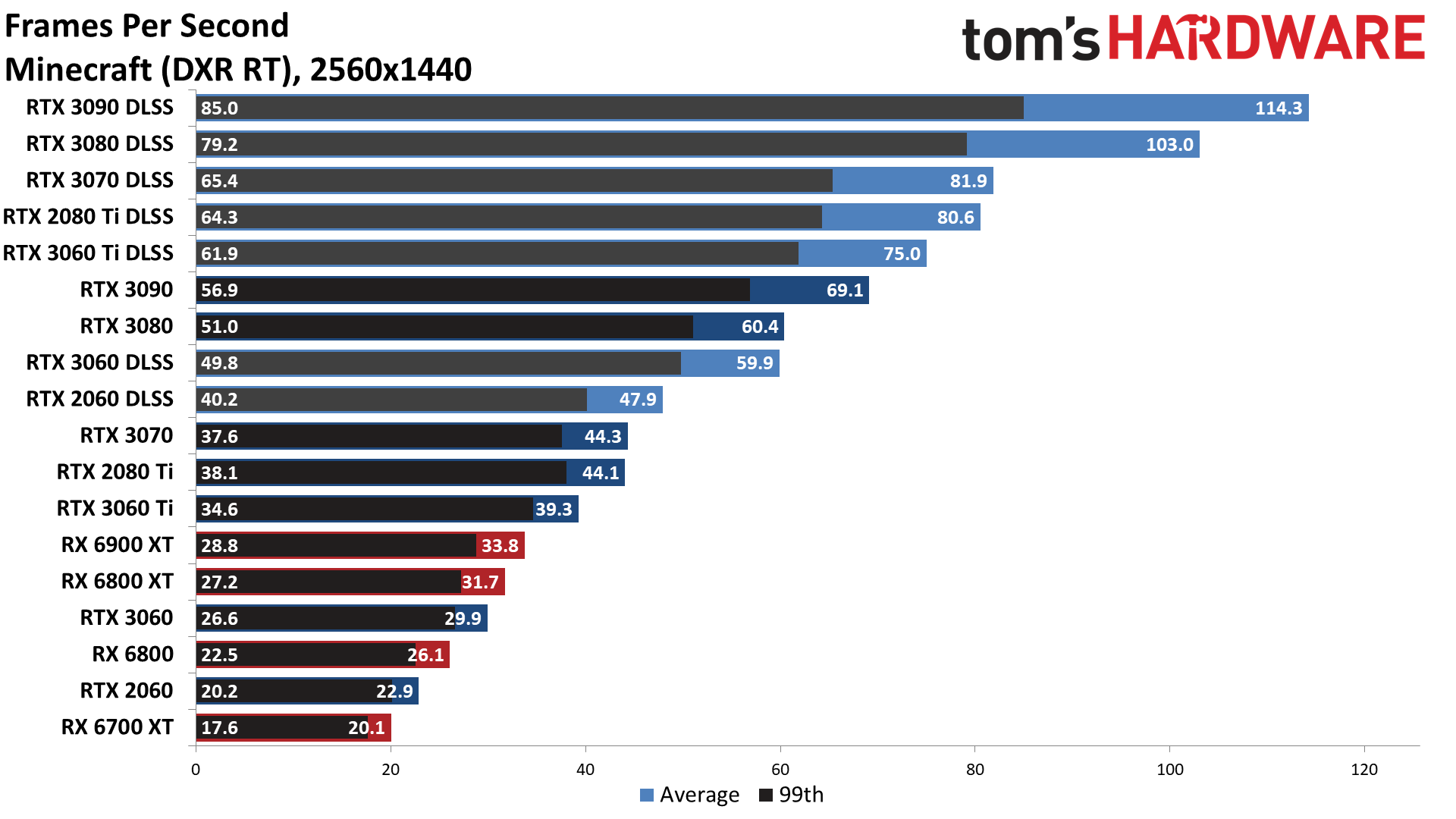

Fortnite ends up as the most demanding ray tracing game right now, followed closely by Cyberpunk 2077 and Bright Memory Infinite. All of those use DXR for multiple effects, including shadows, lighting, reflections, and more, which is why they're so demanding. Control and Minecraft also use plenty of ray tracing effects, and Minecraft actually implements what Nvidia calls "full path tracing" — the simple block graphics make it easier to do more ray tracing calculations.

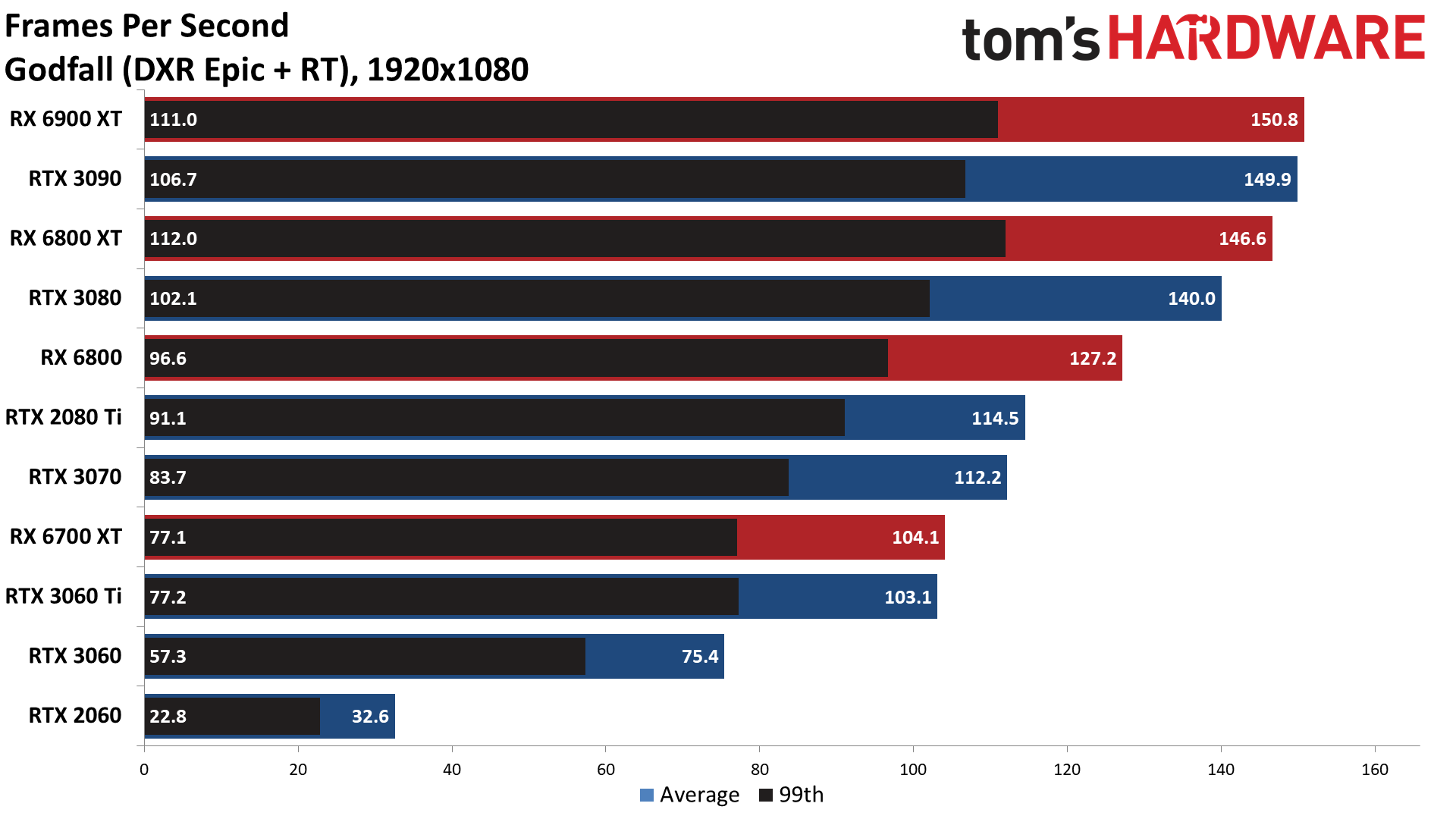

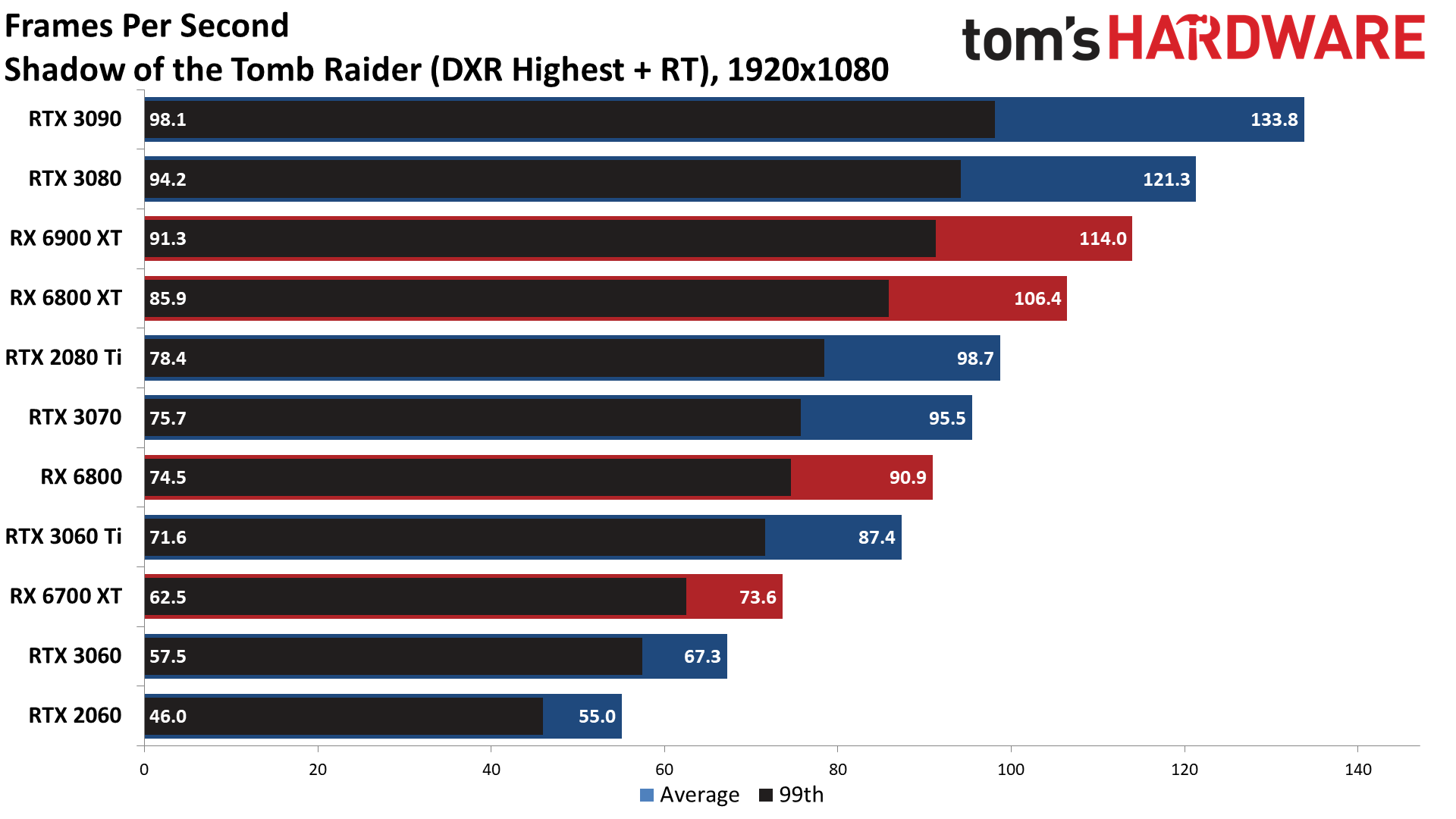

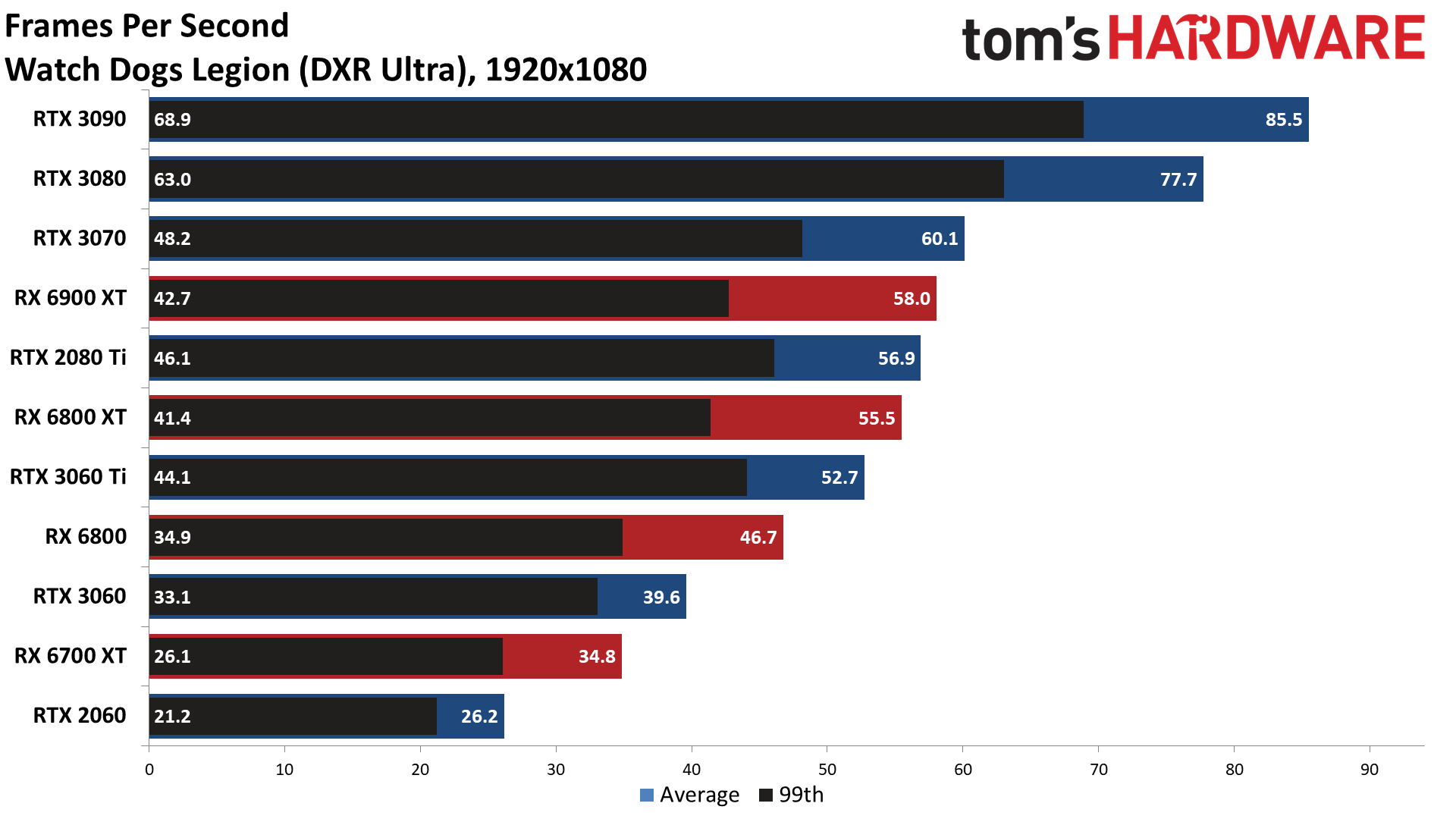

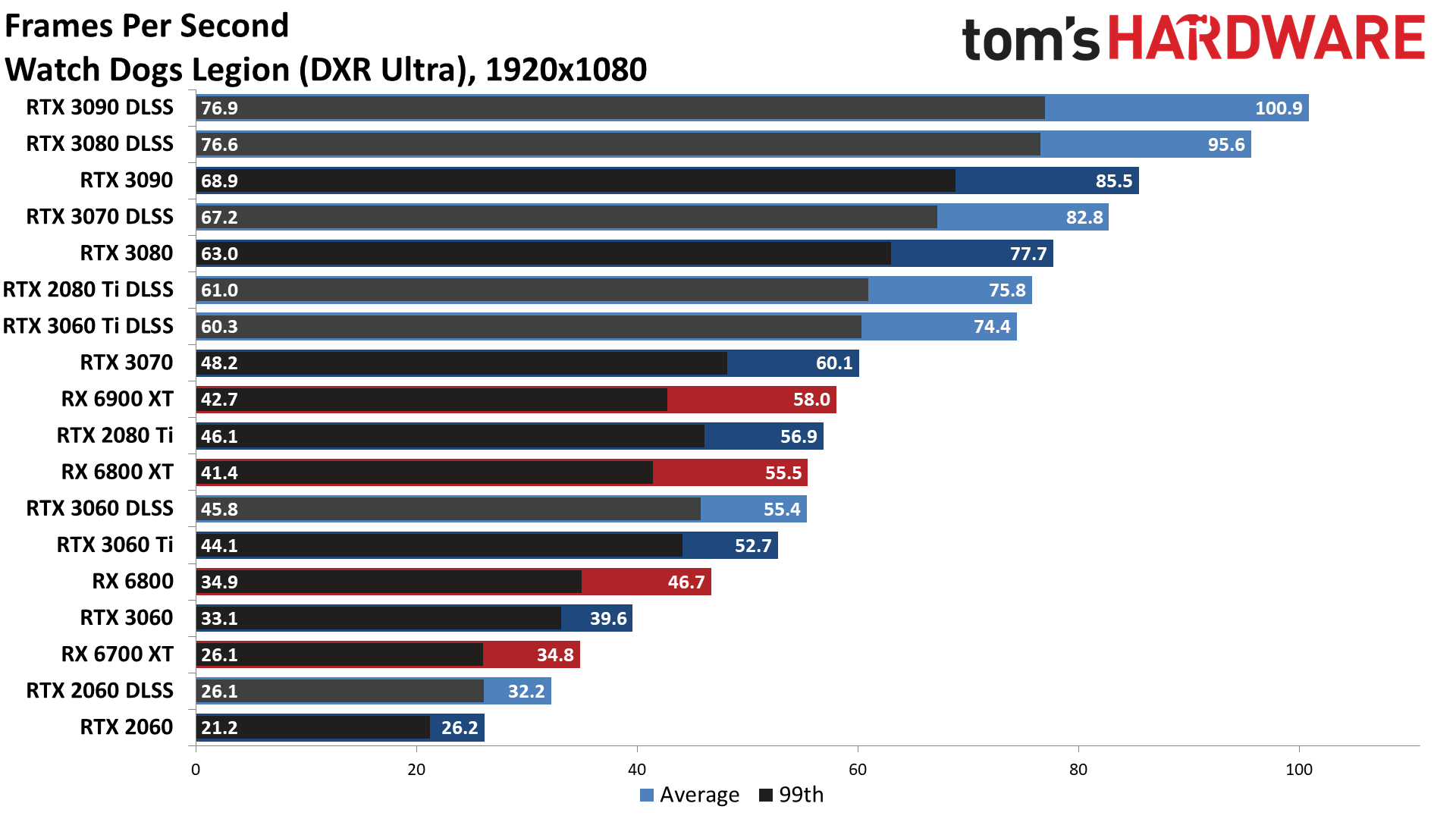

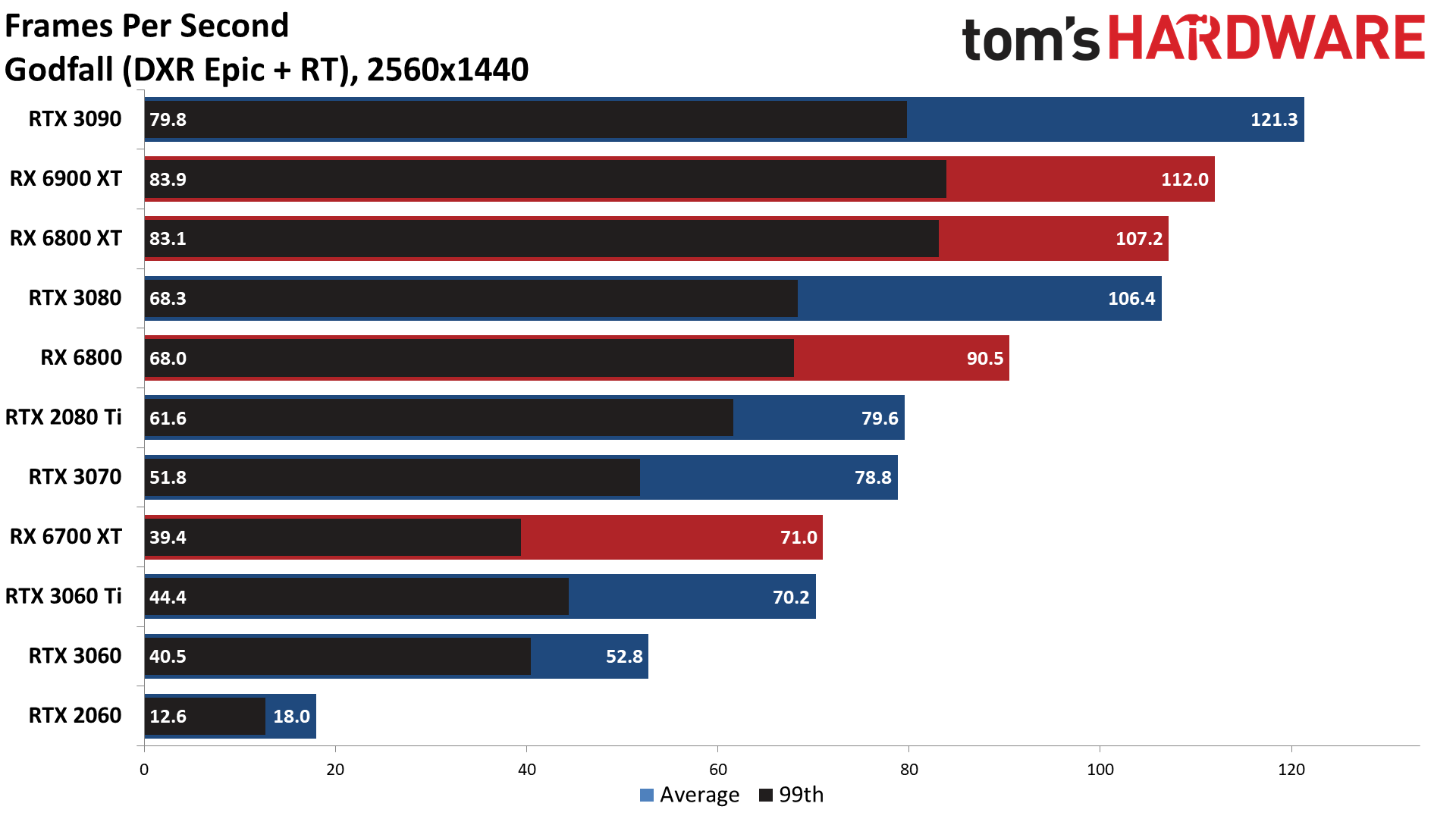

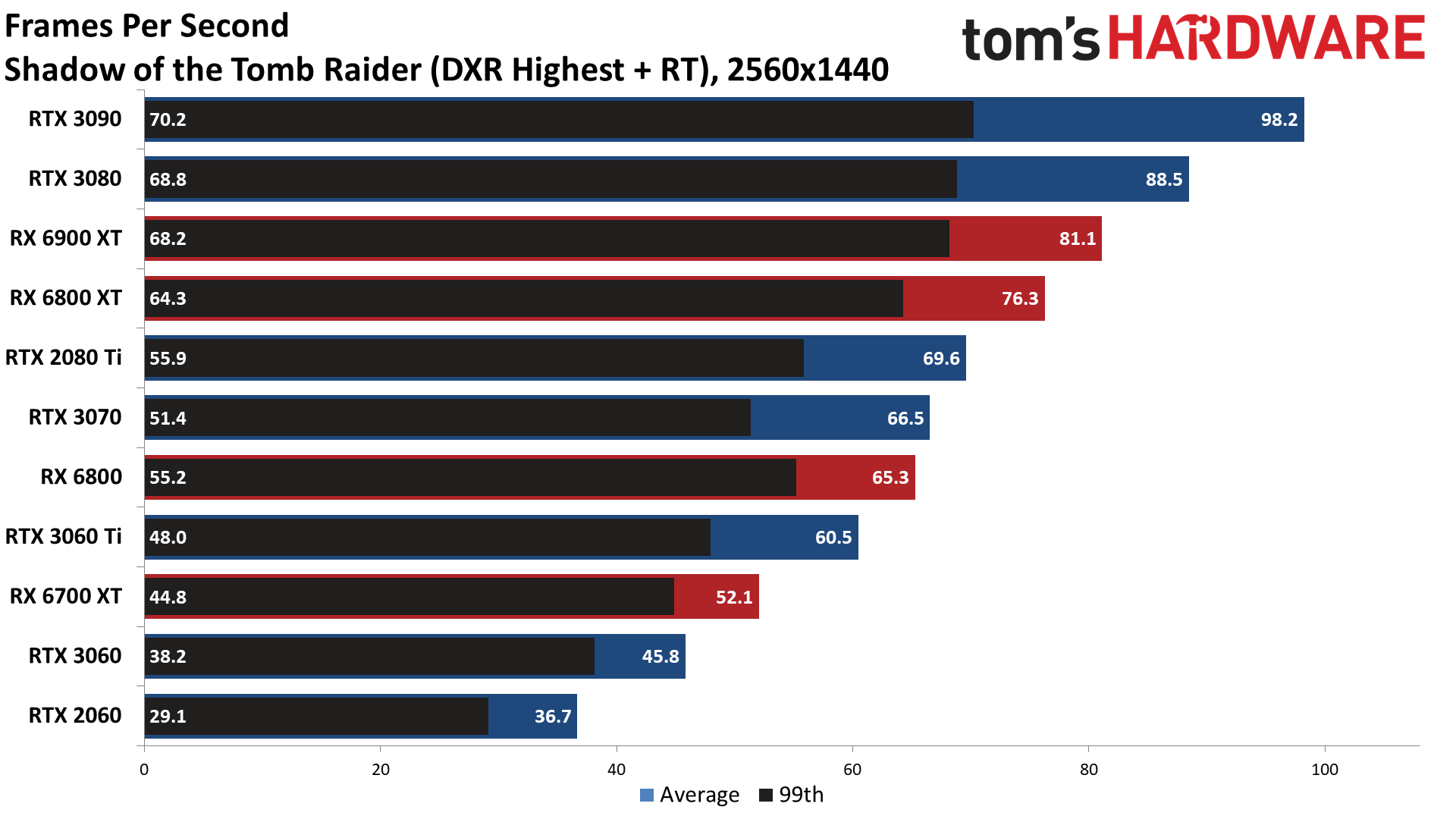

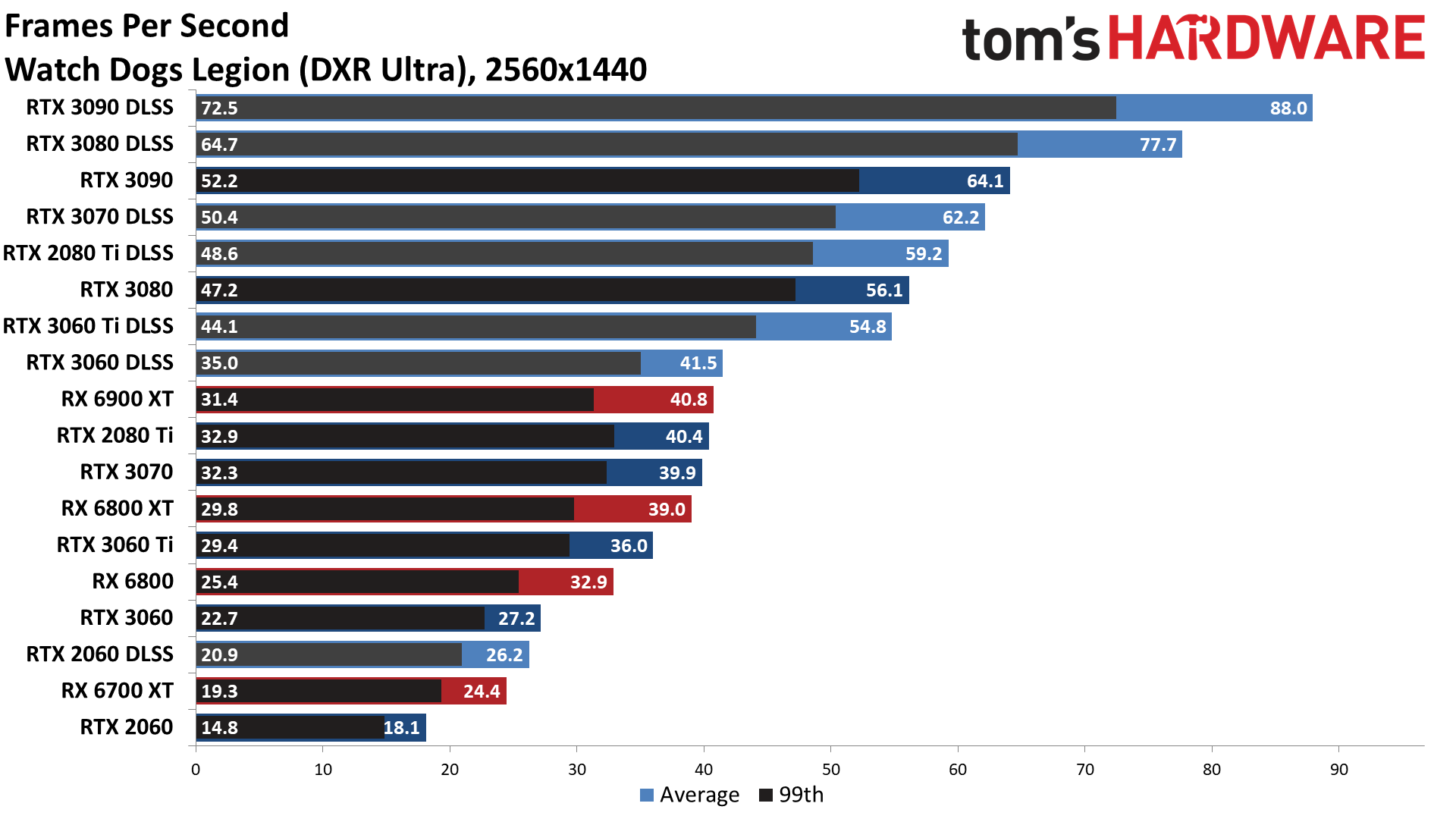

Most of the games that implement only one ray tracing effect perform better: Dirt 5, Godfall, and Shadow of the Tomb Raider use RT only for shadows, Metro uses it for global illumination, and Watch Dogs Legion uses it for reflections. Even so, the RTX 2060 still struggles to hit 30 fps in several games. Godfall is an interesting case as well: not only is it AMD-promoted, but it also appears to use more VRAM, which at times can tank performance on cards with less than 12GB of VRAM.

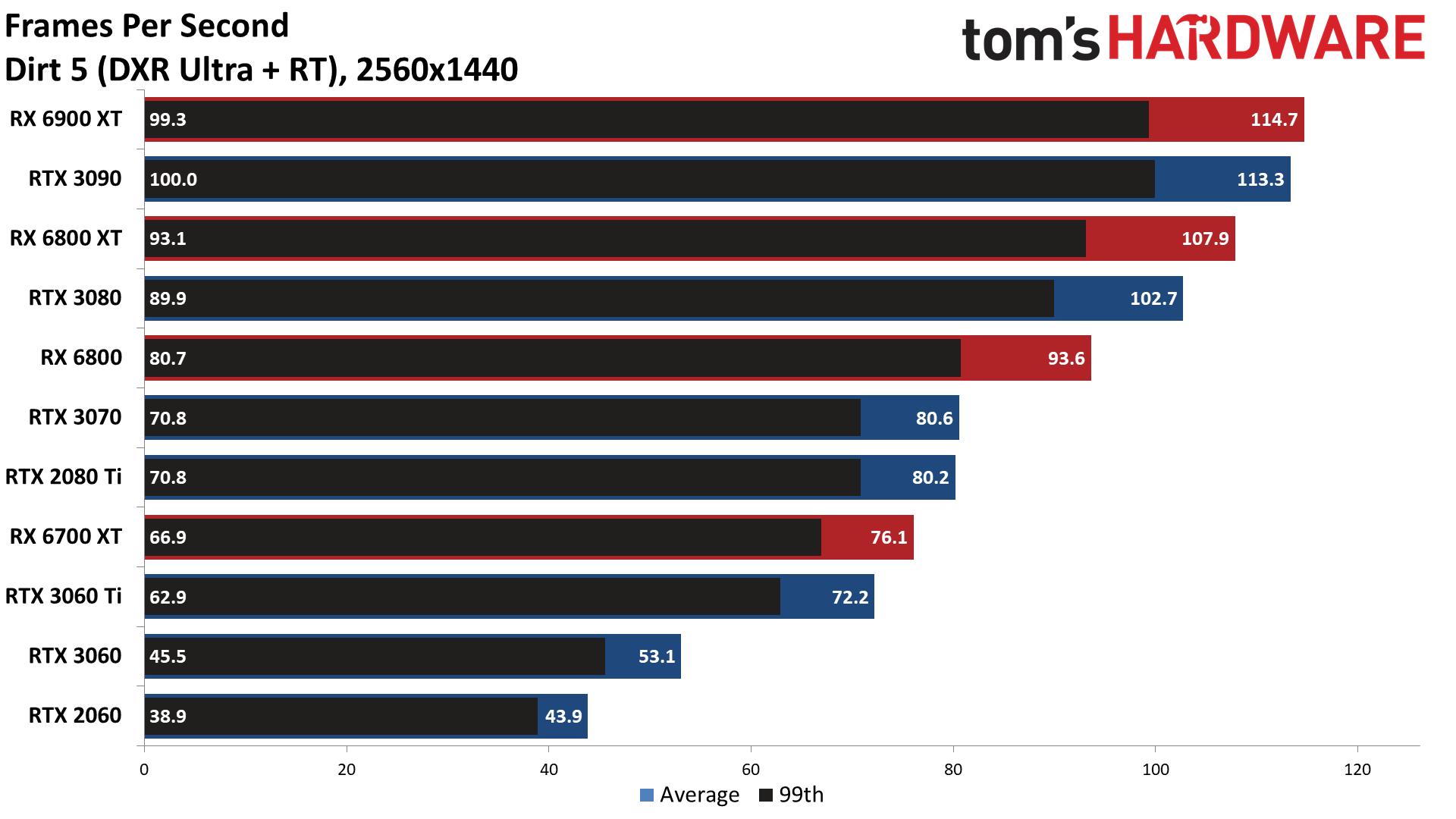

Overall, the 3090 and 3080 take top honors, followed by the RX 6900 XT and RX 6800 XT. The RTX 2080 Ti and RTX 3070 are effectively tied, as are the RX 6800 and RTX 3060 Ti, with the RX 6700 XT and RTX 3060 12GB also landing close together. Only the RTX 2060 really falls off the pace set by the other cards. Without the two AMD-promoted games, the RX 6900 XT would have ended up closer to the RTX 3070, though it's still interesting to see how performance varies by game — AMD's GPUs did reasonably well in Dirt 5, Fortnite, Godfall, Metro Exodus, and Shadow of the Tomb Raider.

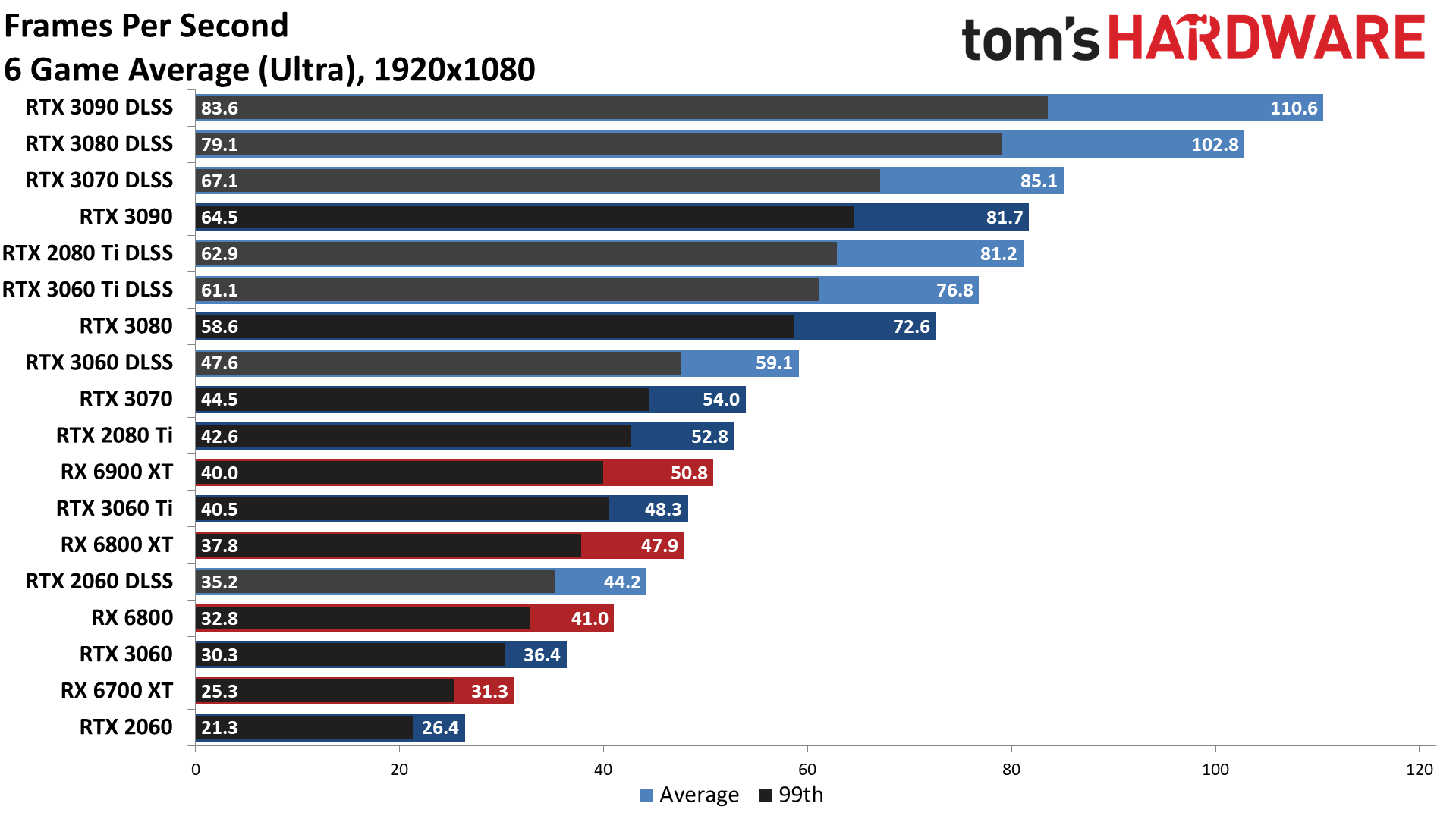

As you'd expect, enabling DLSS in Quality mode changes the rankings a lot. Once we restrict the benchmarks to the six games with DLSS 2.0 support, AMD's best card only ranks at about the same level as the RTX 3060 Ti and RTX 3070, and that's before turning on DLSS! With DLSS Quality mode enabled, only the RTX 2060 falls behind AMD's RX 6900 XT and RX 6800 XT in the overall rankings. Of course, as noted earlier, all of these games are inherently more Nvidia-promoted, though the level of promotion varies quite a bit.

It's also interesting to see that the RTX 2080 Ti falls a bit further behind the RTX 3070 now. That makes sense, as Ampere's Tensor cores have up to four times the throughput of Turing's Tensor cores (2X for raw throughput, and another 2X with sparsity). Even with more memory and memory bandwidth, the 2080 Ti is only moderately faster than the RTX 3060 Ti.

Looking at the individual charts, even at 1080p (a resolution that tends to be more CPU-limited), there's still plenty of differentiation between the various GPUs. Enabling DLSS also yields impressive performance improvements, even at the top of the product stack with the RTX 3090 and 3080. Cyberpunk 2077 looks to be the most CPU-limited, topping out at just under 80 fps regardless of settings on our test system, and Watch Dogs Legion also appears to run into a bit of a CPU bottleneck. Both have lots of NPCs roaming around, which helps explain why they hit the CPU harder.
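The CPU ceiling described above can be illustrated with a deliberately simplified model in which each frame takes as long as the slower of the CPU and GPU work. Real engines overlap CPU and GPU work across frames, so treat this Python sketch as a toy; the 12.5 ms CPU cost is a hypothetical value picked to give a ceiling of roughly 80 fps, not a measurement.

```python
def effective_fps(cpu_ms: float, gpu_ms: float) -> float:
    """Toy model: a frame finishes only when the slower of CPU and GPU is done.

    Real engines pipeline CPU and GPU work across frames; this ignores that.
    """
    return 1000.0 / max(cpu_ms, gpu_ms)


# Hypothetical CPU cost per frame (~12.5 ms, i.e. a ceiling of about 80 fps).
CPU_MS = 12.5

# Faster GPUs (or DLSS) shrink GPU time, but fps stops rising at the CPU ceiling.
for gpu_ms in (25.0, 16.0, 12.5, 8.0):
    print(f"GPU {gpu_ms:5.1f} ms/frame -> {effective_fps(CPU_MS, gpu_ms):5.1f} fps")
```

Once GPU time drops below the CPU's per-frame cost, further GPU speedups (from a faster card or from DLSS) no longer raise the frame rate in this model, which matches the flat top end described for Cyberpunk 2077.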

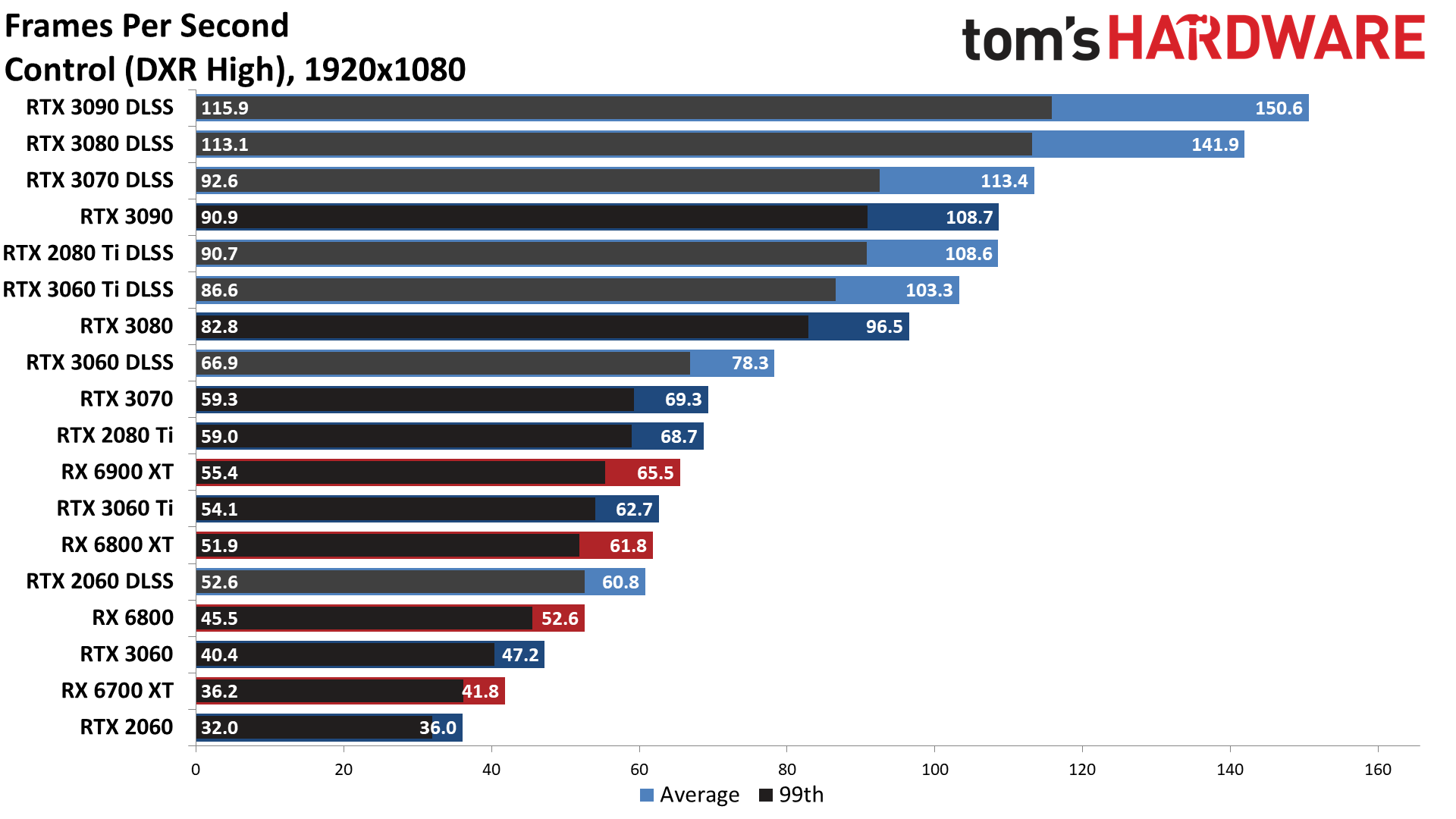

Bright Memory Infinite and Fortnite end up as the two biggest beneficiaries of DLSS Quality mode. With DLSS, the RTX 3060 Ti nearly matches the RTX 3090 at native resolution in Bright Memory Infinite, while in Fortnite the 3060 Ti and every faster card with DLSS beat the 3090 at native. Control also shows some significant performance gains, and even the RTX 2060 now manages to clear 60 fps.
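One way to reason about why some games gain far more from DLSS than others is an Amdahl's-law-style estimate: only the share of frame time that scales with pixel count gets cheaper when the GPU renders fewer pixels. This Python sketch assumes a 2.25X pixel reduction (two-thirds scale per axis) and ignores the cost of running the DLSS network itself, so real gains would be somewhat lower; the fractions are hypothetical.

```python
def dlss_speedup(pixel_bound_fraction: float, pixel_ratio: float = 2.25) -> float:
    """Amdahl-style upper estimate of the speedup from rendering fewer pixels.

    pixel_bound_fraction: share of frame time that scales with resolution (0..1).
    pixel_ratio: output pixels per rendered pixel (assumed 2.25 for Quality mode).
    The DLSS pass's own per-frame cost is ignored here.
    """
    p = pixel_bound_fraction
    return 1.0 / ((1.0 - p) + p / pixel_ratio)


# Hypothetical workloads, from fully resolution-bound to mostly fixed-cost bound.
for p in (1.0, 0.8, 0.5, 0.2):
    print(f"{p:.0%} resolution-bound -> up to {dlss_speedup(p):.2f}x faster")
```

Under this model, games whose frame time is dominated by per-pixel work (as heavy multi-effect ray tracing tends to be) stand to gain the most, which is consistent with, though not proof of, the large gains in Bright Memory Infinite and Fortnite.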

Despite having only 6GB of VRAM, the RTX 2060 with DLSS actually turns in better overall performance than the RX 6700 XT and RX 6800. It may not be significantly faster than the 6800, but the fact that it's even mentioned in the same breath shows just how much DLSS 2.0 helps, and how badly AMD needs to get its FidelityFX Super Resolution (FSR) into the hands of game developers.

There are now over 30 shipping games with DLSS 2.0 support, and you don't need to enable ray tracing to see performance benefits from DLSS. In fact, significantly more games currently support DLSS than ray tracing: sixteen shipping games support DLSS 2.0/2.1 without using ray tracing at all, for example. Plus, Unreal Engine and Unity both have built-in DLSS 2.0 support, meaning developers using either engine can easily enable DLSS in their games.

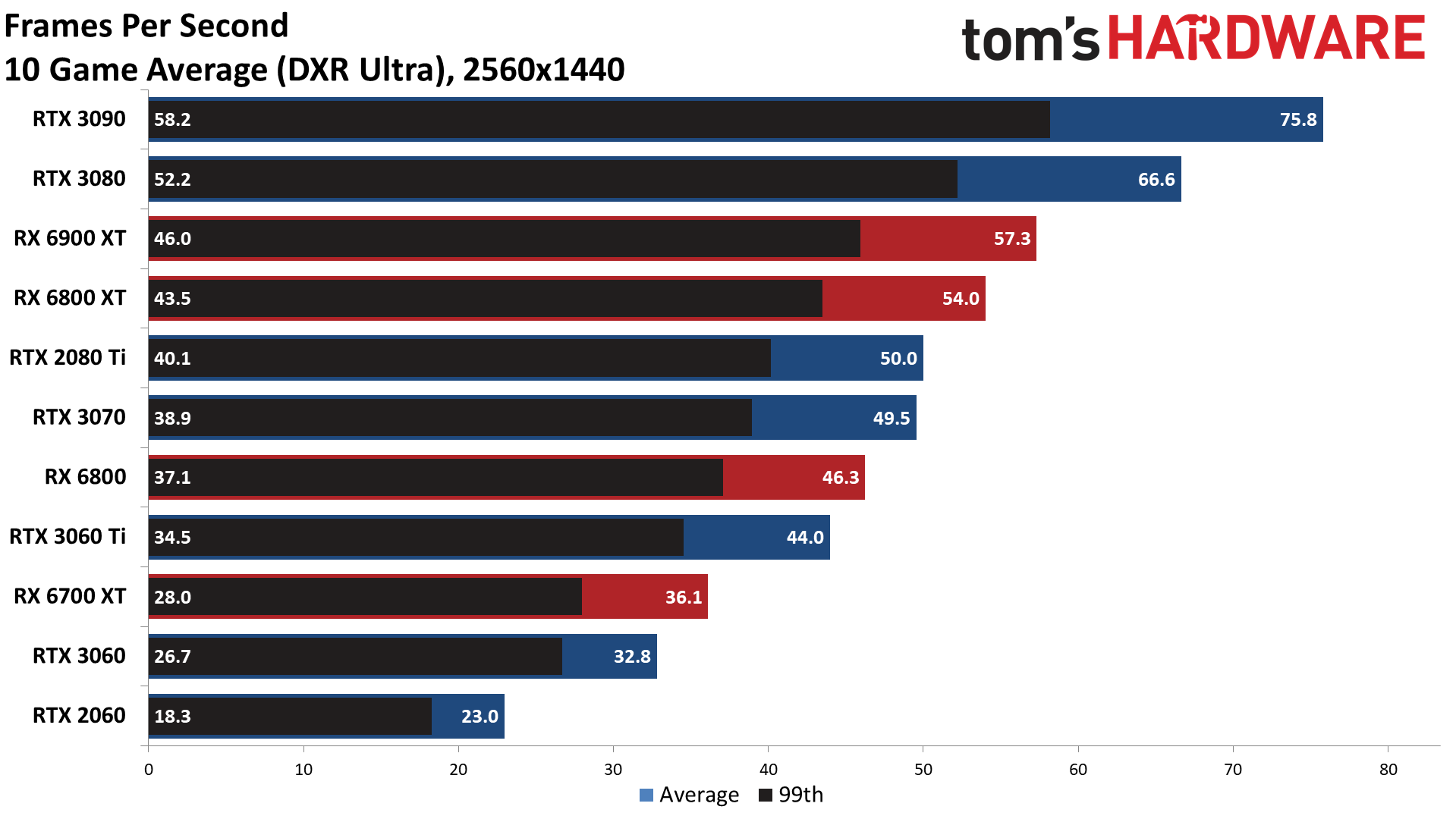

Ray Tracing Benchmarks at 1440p

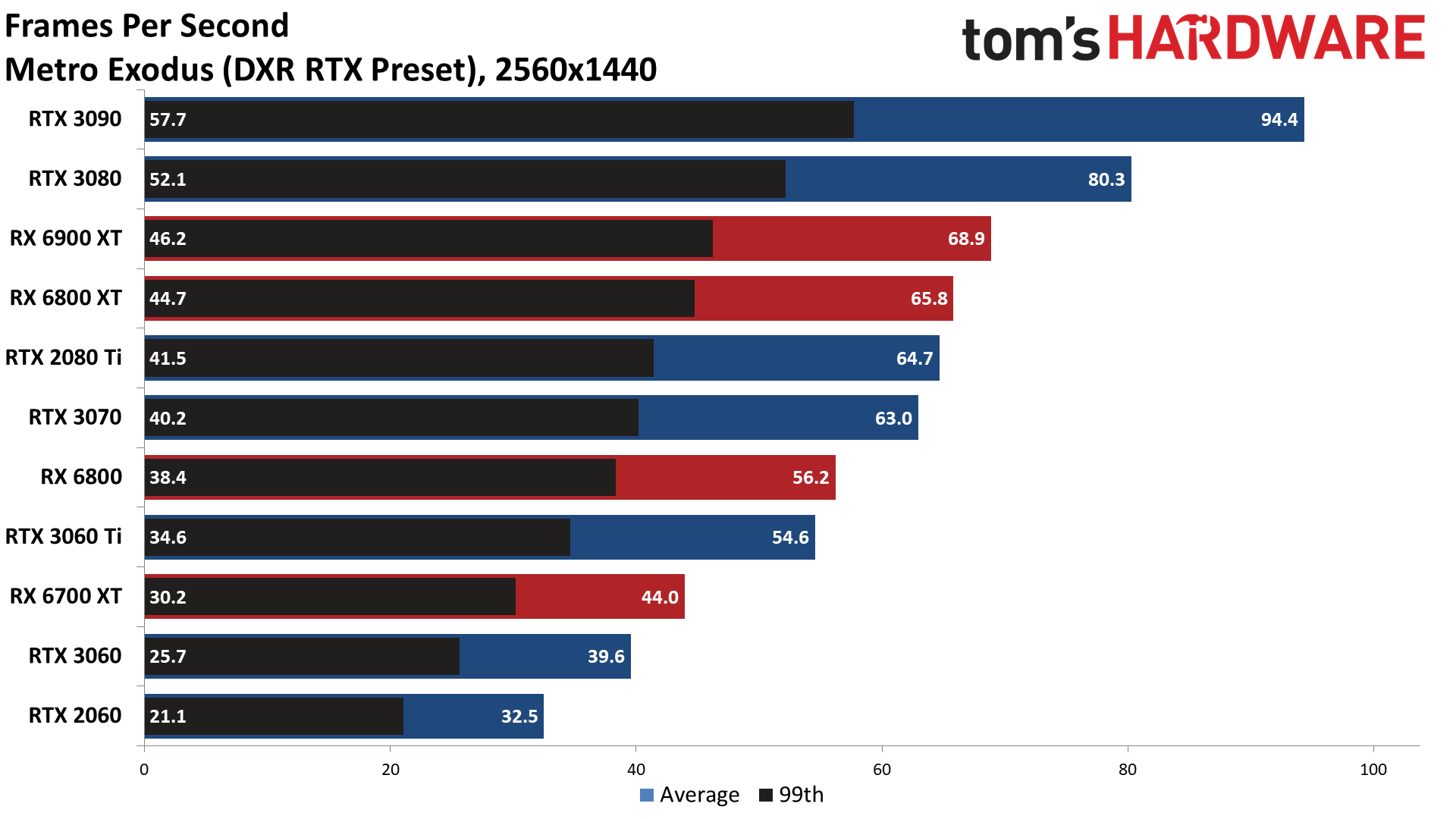

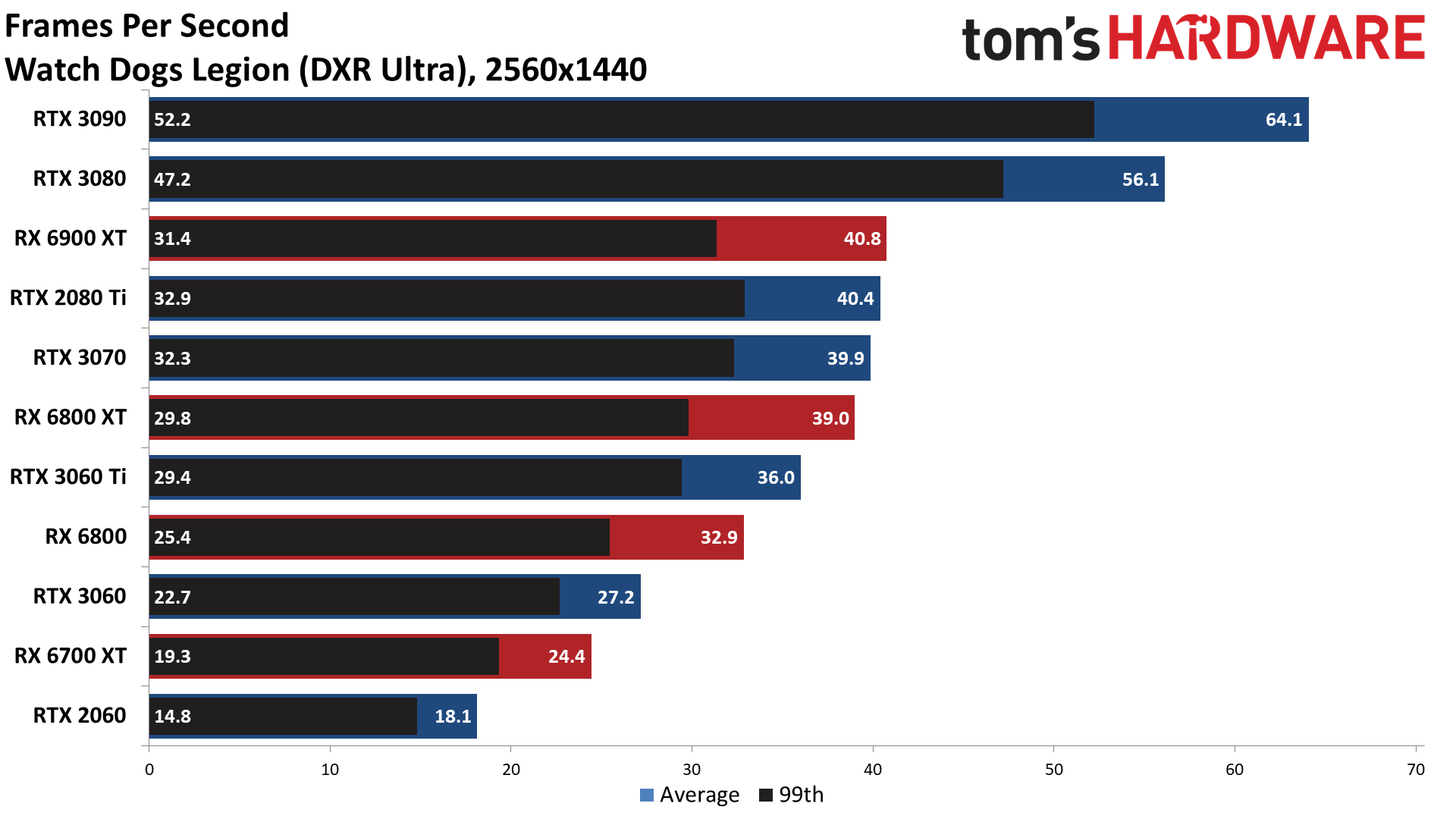

Bumping the resolution up to 1440p doesn't change the overall rankings at all at native resolution, though the margin of victory does increase quite a bit in some cases. The RTX 3080 and 3090 are the main beneficiaries of the higher resolution, while the RTX 2060 takes a pretty hard hit to performance — it's the only GPU that couldn't average 30 fps or more across our test suite.

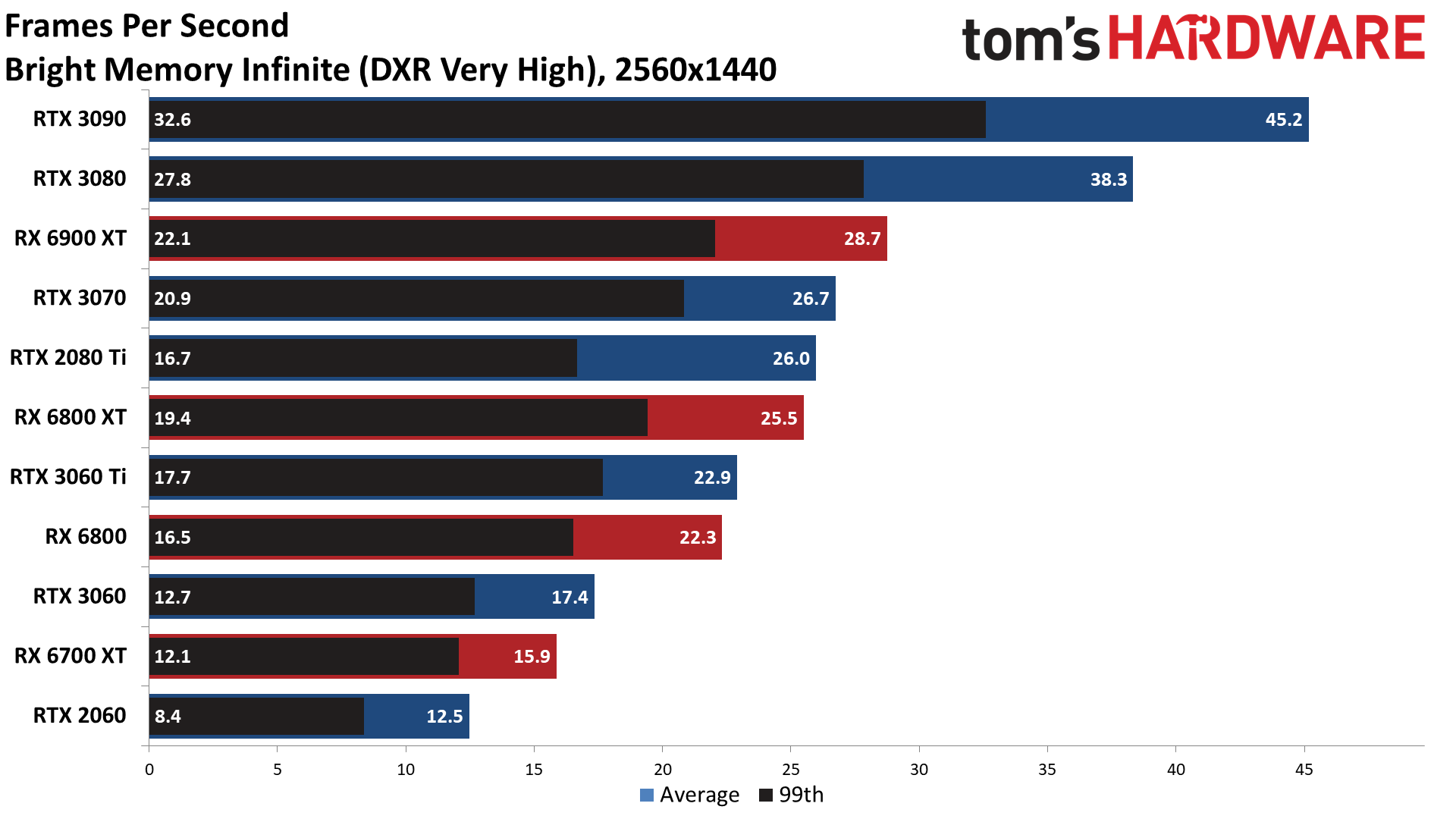

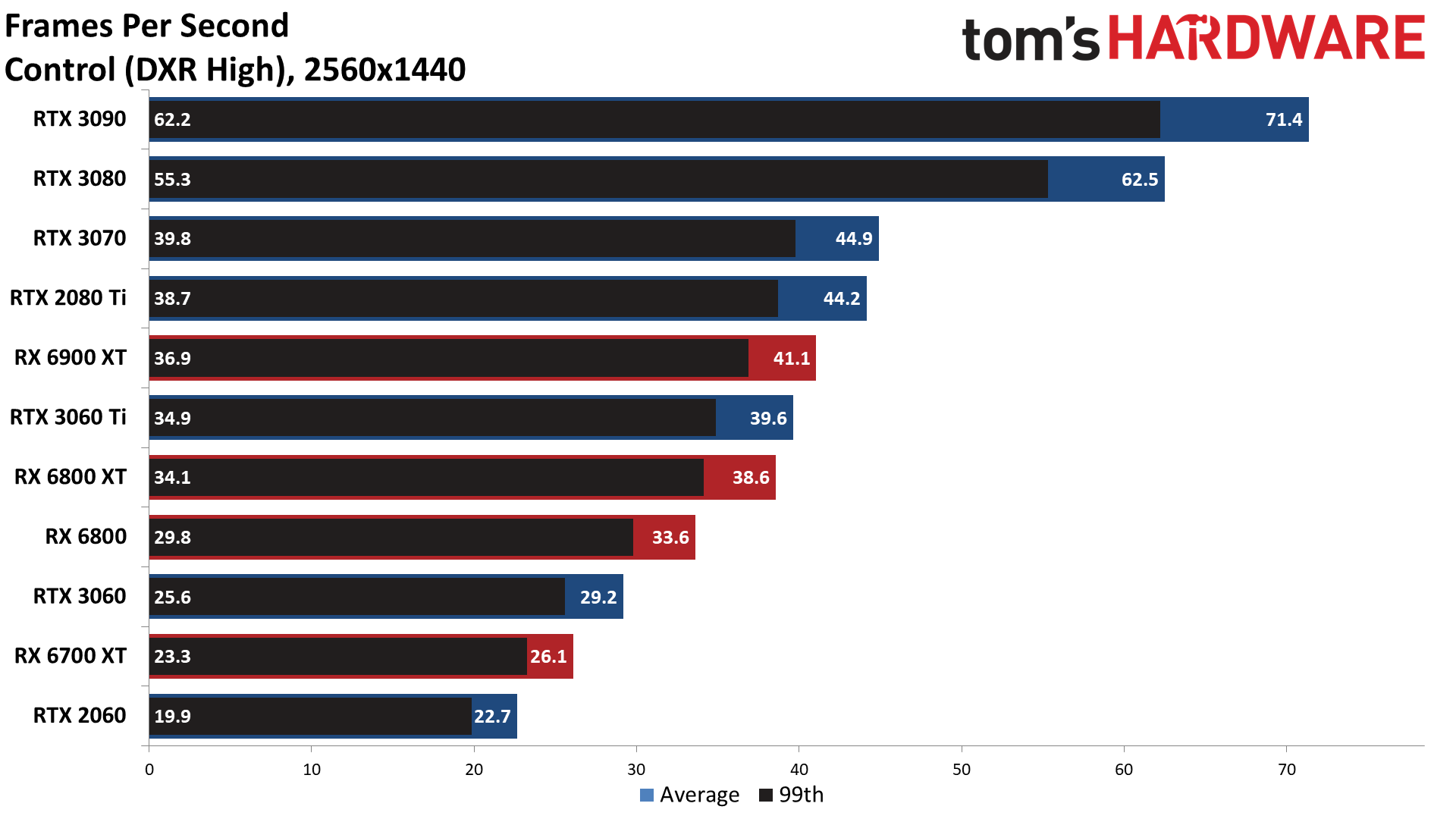

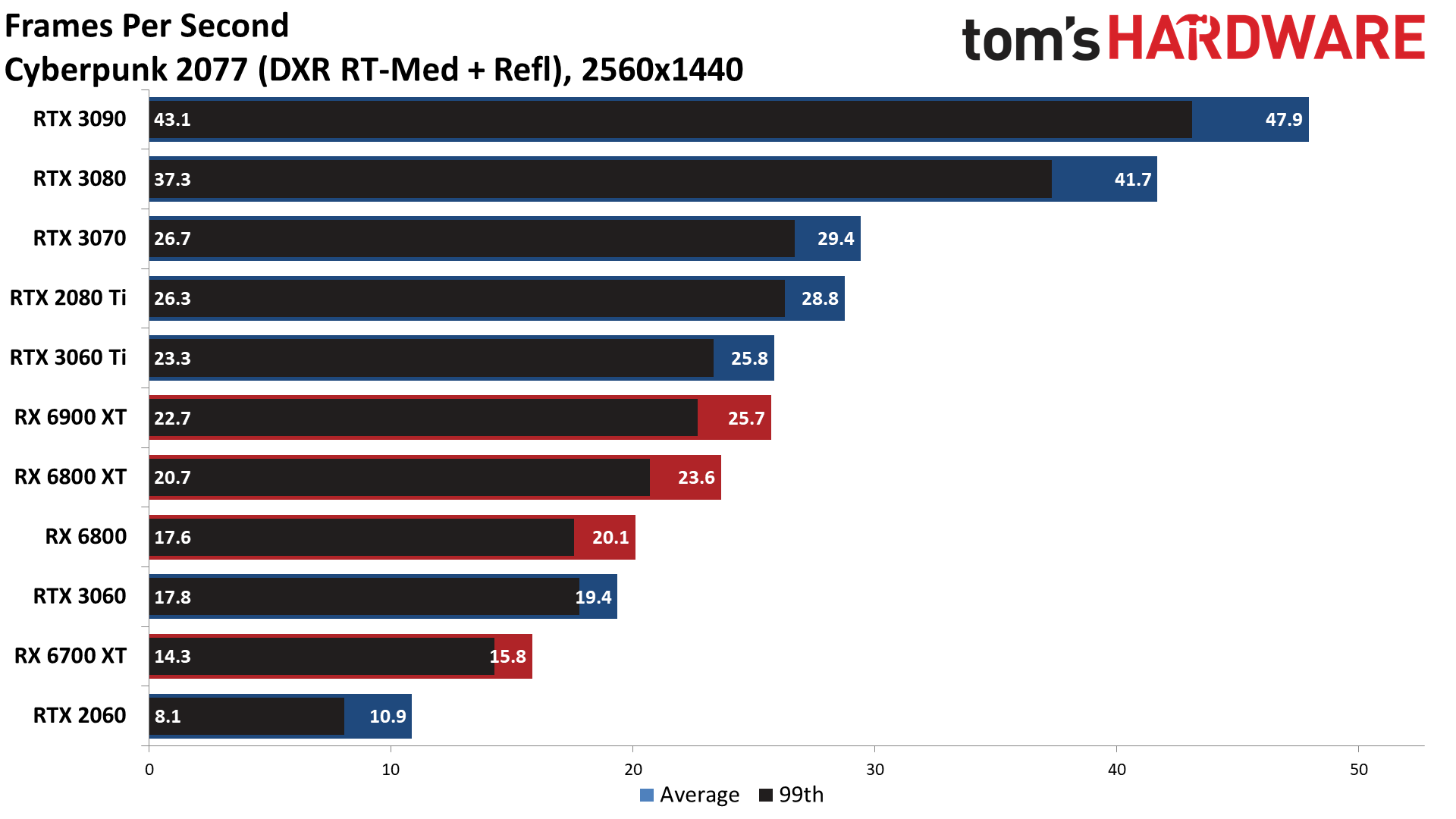

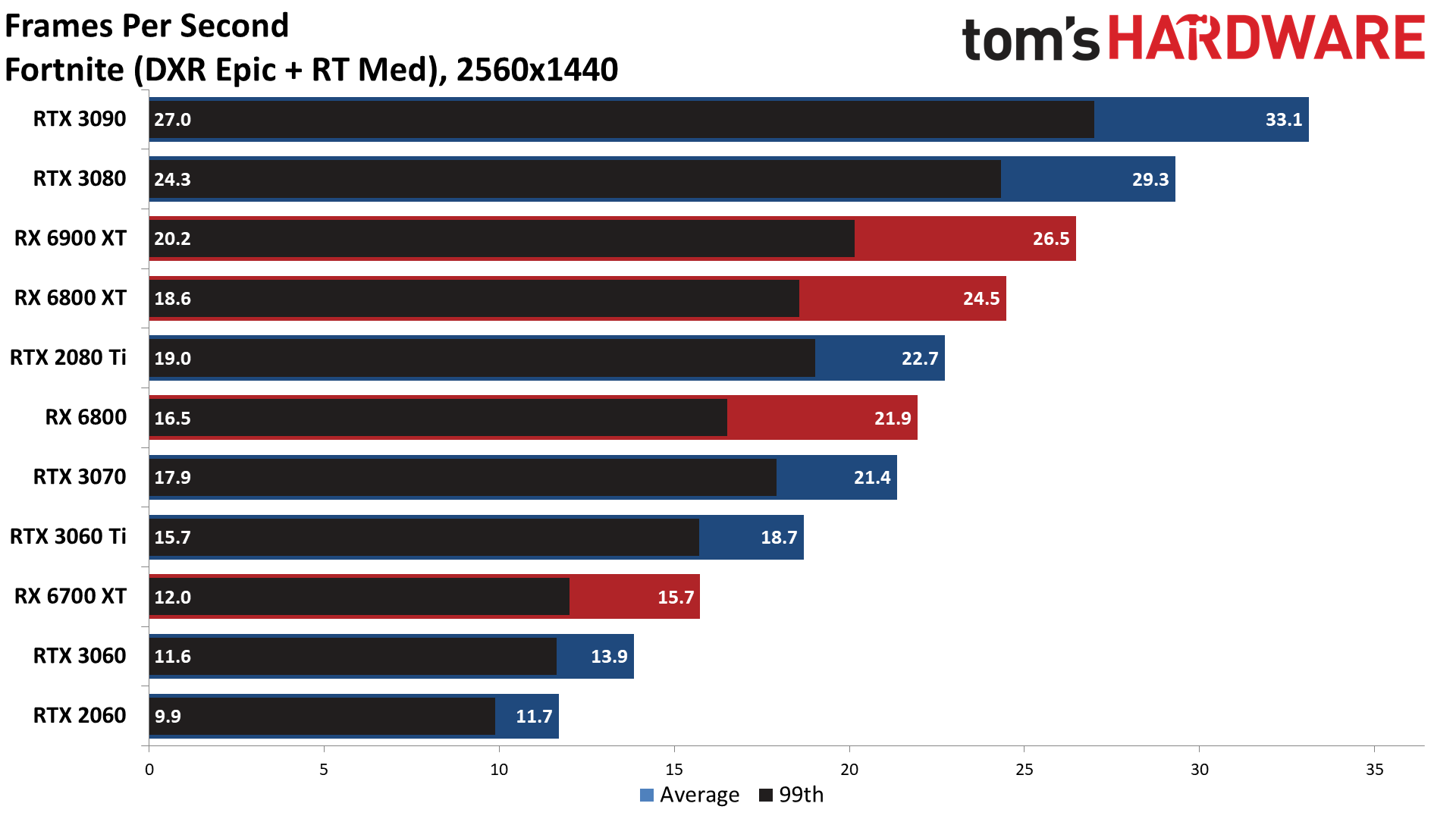

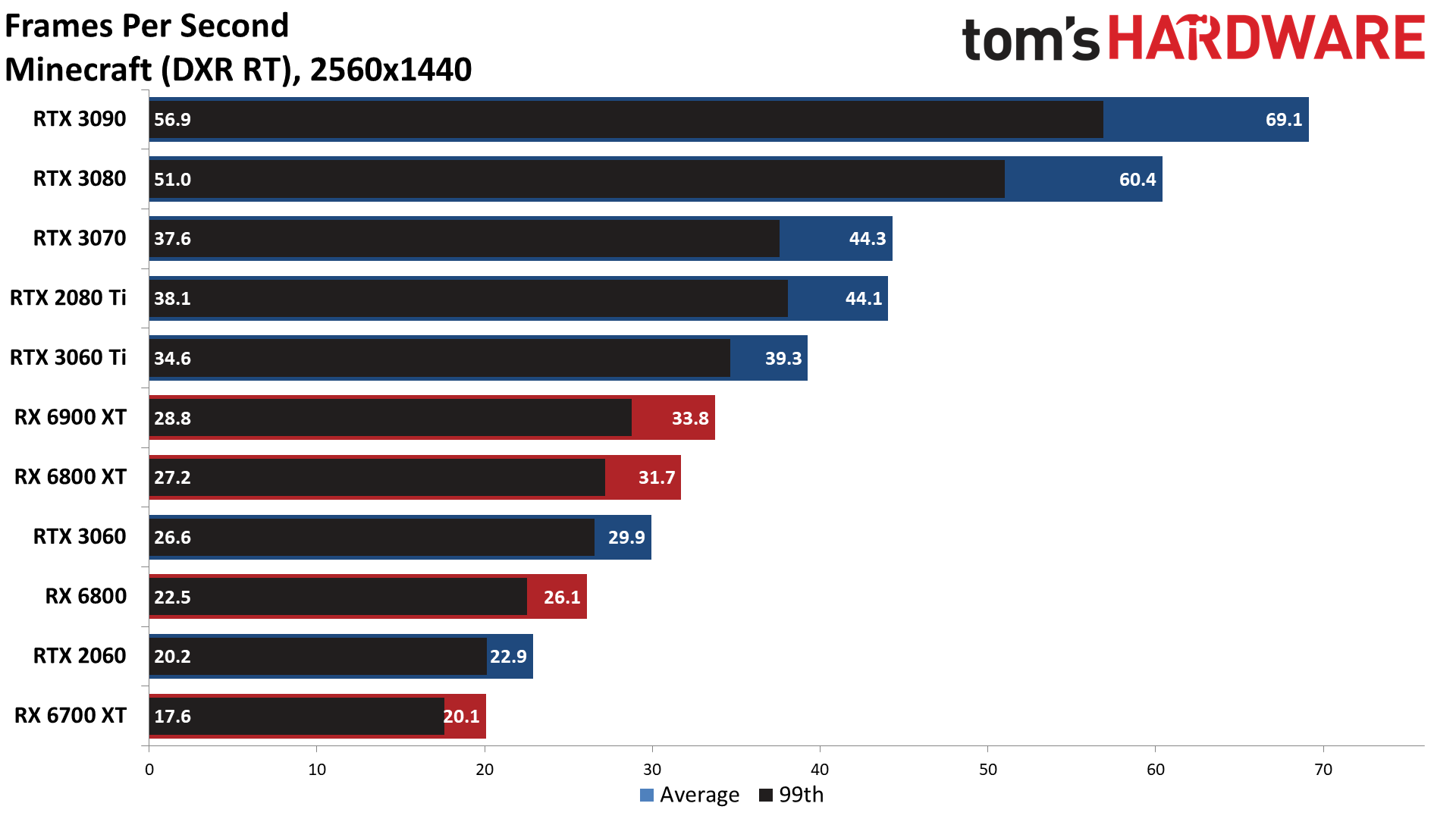

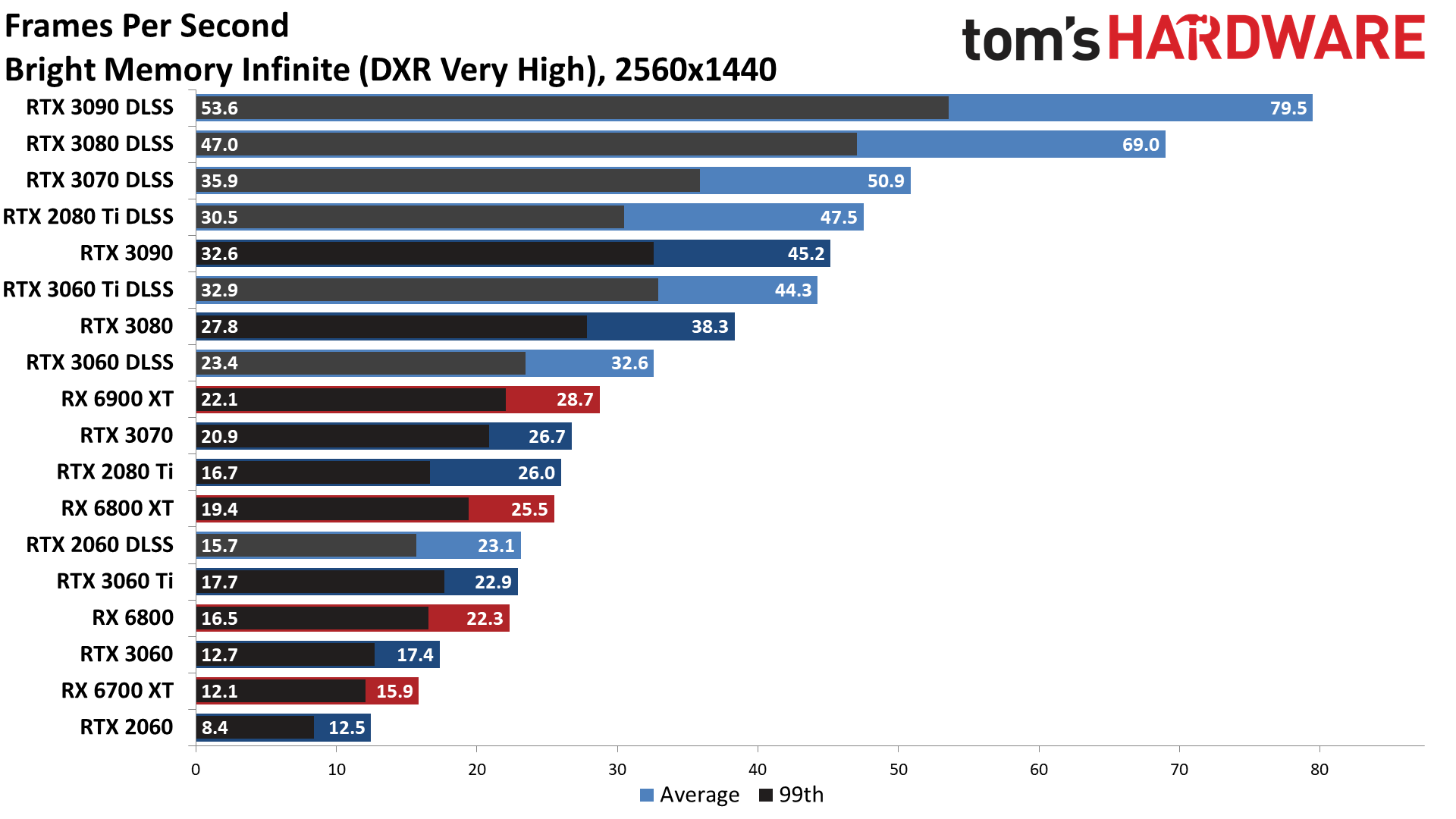

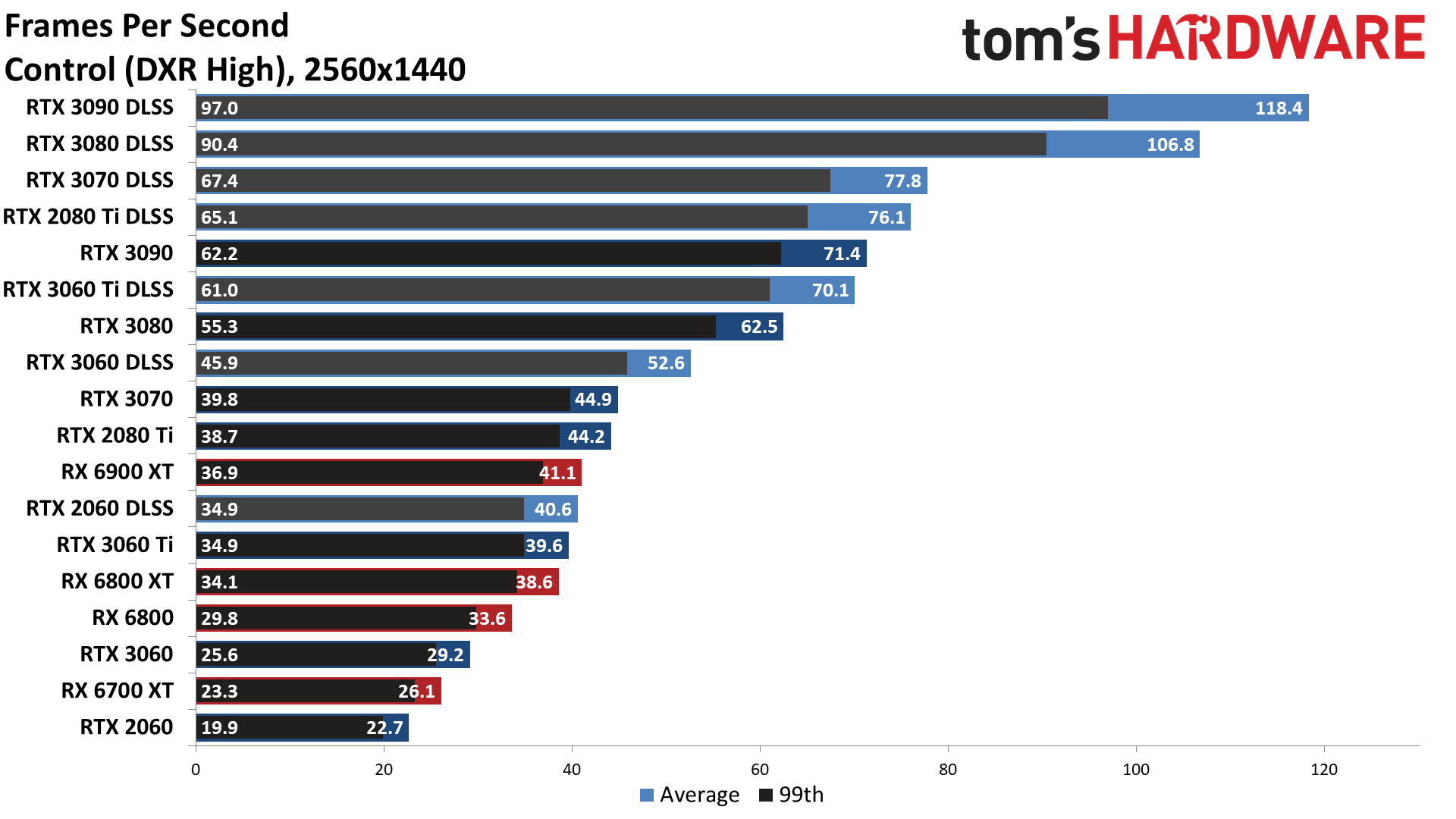

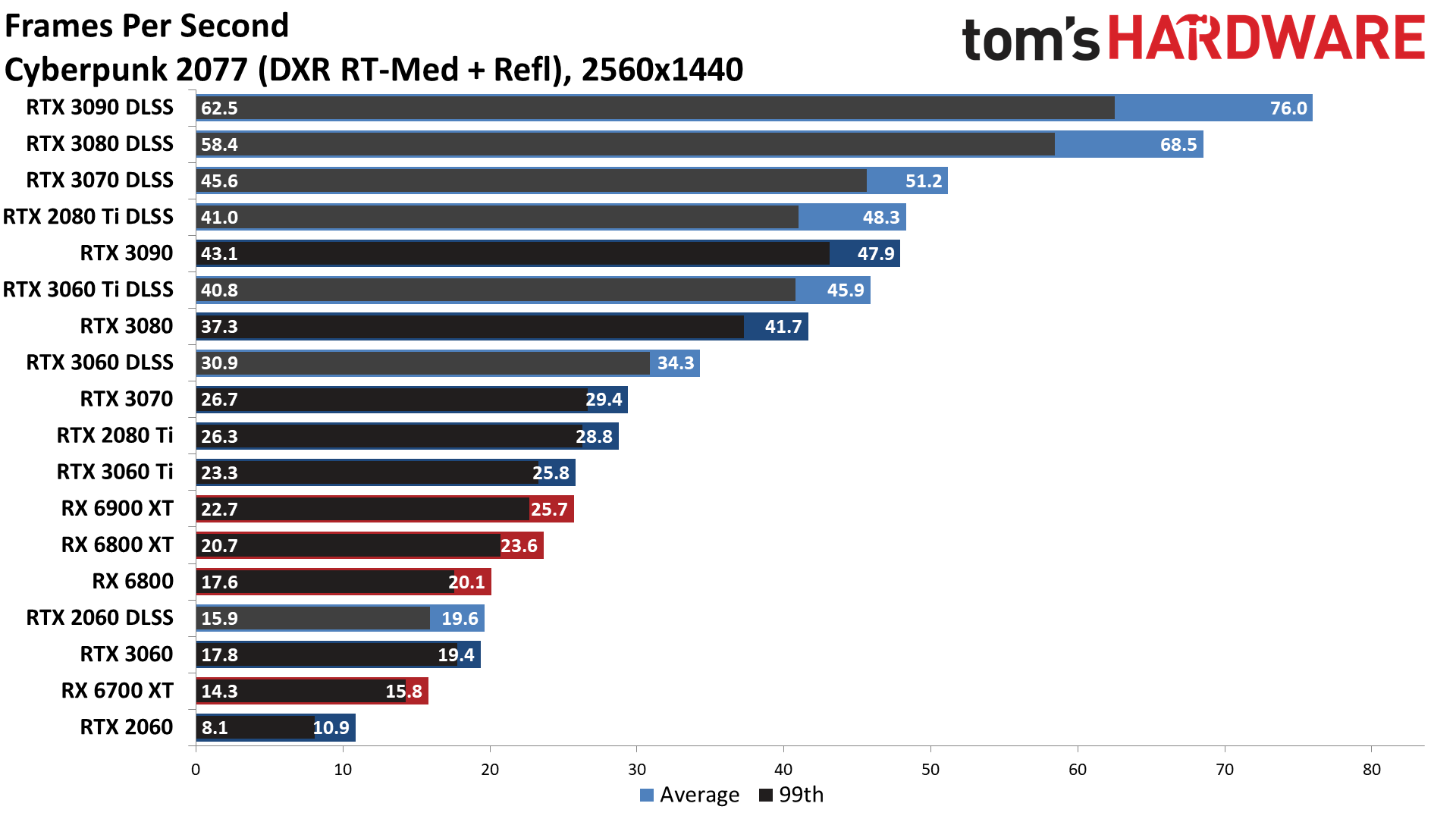

Not surprisingly, several games fall below 30 fps on multiple GPUs at 1440p. Only the 3080 and 3090 break 30 fps in Bright Memory Infinite and Cyberpunk 2077, and only the 3090 manages to do so in Fortnite. Godfall, meanwhile, clearly punishes the 2060's limited VRAM, with the 2060 delivering only about one third of the RTX 3060's performance. Several GPUs also struggled in Control, Minecraft, and Watch Dogs Legion.

It should be pretty obvious that, of the potential ray tracing effects, reflections tend to be the most demanding, with shadows being the least demanding. Not coincidentally, RT reflections often have the most noticeable effect on image fidelity. Ray traced shadows can be nice, but the various shadow mapping techniques have gotten quite good at 'faking' it.

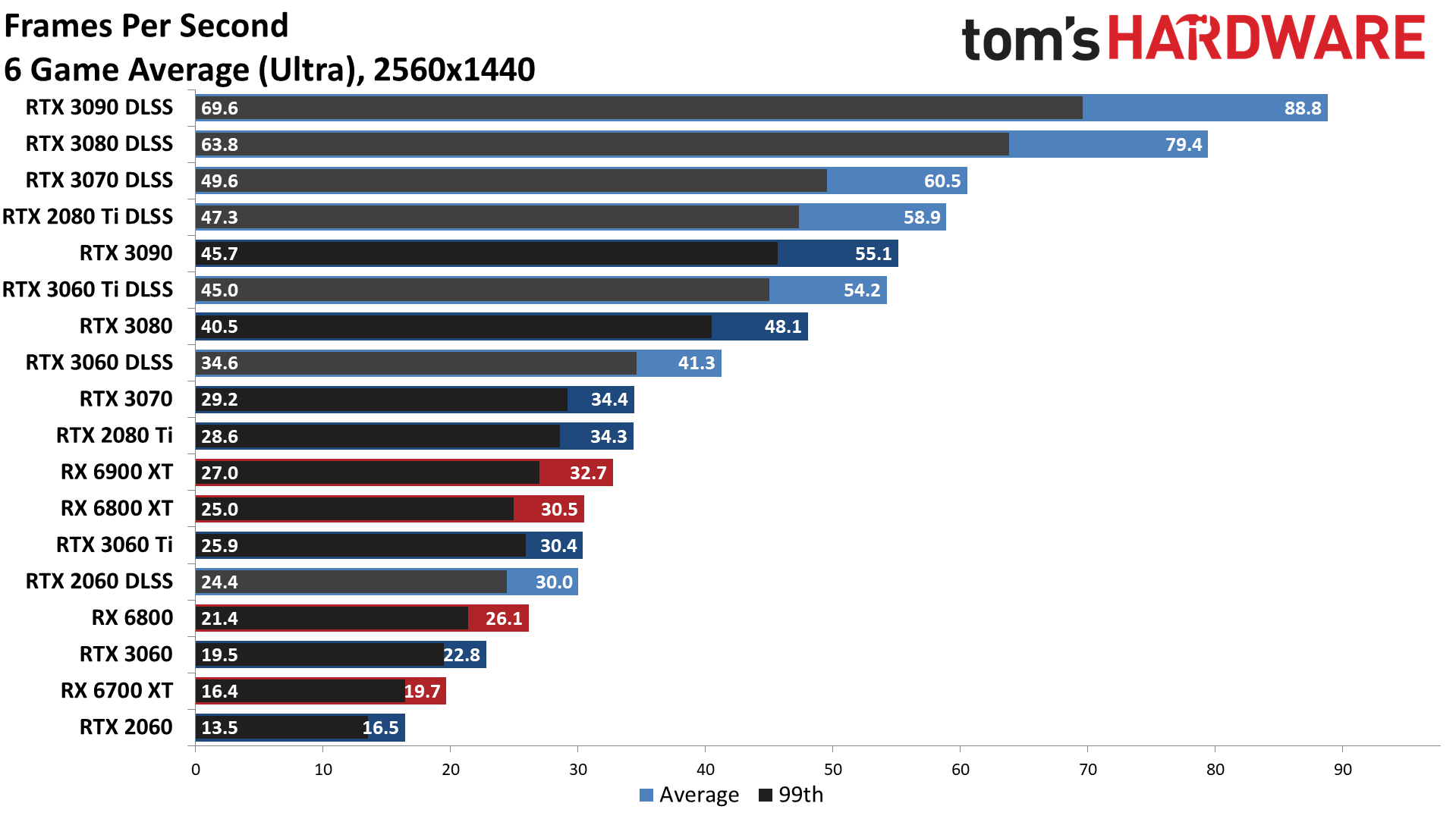

Where DLSS was a nice extra at 1080p, it's almost required to get good performance on most GPUs at 1440p. Without DLSS, none of the GPUs we tested can break 60 fps in the overall average performance chart, but with DLSS even the RTX 3070 (barely) gets there. Memory bandwidth clearly becomes a differentiating factor as well, with the 3080 and 3090 really pulling ahead in the most demanding titles, which is actually all six games in our DLSS test suite.

Again, Nvidia dominates the performance charts once DLSS Quality mode gets turned on. Only the RTX 2060 fails to beat the RX 6900 XT in our overall results, and it's basically tied with the RX 6800 XT. That applies to the individual results as well, though the RX 6900 XT does tie the RTX 3060 in Fortnite. In Minecraft, meanwhile, even the RTX 2060 comes out ahead of the RX 6900 XT — along with the non-DLSS 3070 and below.

There's only so much DLSS can accomplish, of course. Bright Memory Infinite, Cyberpunk 2077, Fortnite, and Watch Dogs Legion still fail to break 30 fps with the RTX 2060. That's probably the main reason why we're not seeing an RTX 3060 6GB card — though it exists on laptops and may eventually show up on desktops. (Sigh.) If you care about image quality enough to want ray tracing, you'd be well advised to get a card with more VRAM rather than less. It's too bad that Nvidia's cards (outside of the 3060 and 3090) generally aren't as generous with VRAM as AMD's cards.

Ray Tracing Winner: Nvidia, by a lot

Considering Nvidia was the first company to begin shipping ray tracing capable GPUs, over two years ago, it's not too surprising that it comes out ahead in the ray tracing benchmarks. Technologies like DLSS prove Nvidia wasn't just whipping something up as quickly as possible, either. It knew how demanding ray tracing would be, and looked at how movie studios were optimizing performance for inspiration. Denoising of path traced images, which is at least somewhat similar to upscaling via DLSS, can dramatically improve performance.

Today, Nvidia has second-generation ray tracing hardware and third-generation Tensor cores in the RTX 30-series GPUs. AMD, meanwhile, has first-generation ray accelerators and no direct equivalent of Nvidia's Tensor cores or DLSS. Perhaps AMD's FSR will eventually show up and prove that Tensor cores aren't strictly necessary, but nearly six months after first hearing about FidelityFX Super Resolution, we're becoming increasingly skeptical.

As it stands now, even without DLSS, Nvidia clearly leads in the majority of games that use DirectX Raytracing. Look at our rasterization-only GPU benchmarks and you'll find the RX 6900 XT and RX 6800 XT in spots two and three, with the RX 6800 in fifth place. With DXR, the 3080 goes from being just barely behind the 6800 XT to leading by over 30%. The same goes for the RTX 3070 and RX 6800: Without DXR, the 6800 is about 12% faster than the 3070; with DXR, the 3070 turns the tables and leads by 15%.
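Percentage leads depend on which card is the baseline, which matters when comparing swings like the ones above. This small Python helper is a sketch, not the tooling behind these charts; it takes the text's "about 12%" and "15%" figures at face value and normalizes the RTX 3070's rasterization result to 100.

```python
def lead_pct(a: float, b: float) -> float:
    """Percent by which result a exceeds result b (b is the baseline)."""
    return (a / b - 1.0) * 100.0


# Without DXR: the RX 6800 is ~12% faster than the RTX 3070 (3070 normalized to 100).
rx6800_raster, rtx3070_raster = 112.0, 100.0
print(f"{lead_pct(rx6800_raster, rtx3070_raster):.1f}")  # 12.0
print(f"{lead_pct(rtx3070_raster, rx6800_raster):.1f}")  # -10.7: same gap, other baseline

# With DXR: the RTX 3070 leads by ~15%, so the 3070's standing relative to
# the 6800 improves by a factor of 1.15 * 1.12.
swing = 1.15 * (rx6800_raster / rtx3070_raster)
print(f"{(swing - 1.0) * 100.0:.1f}")  # 28.8
```

Because ratios multiply rather than add, going from a 12% deficit to a 15% lead is roughly a 29% relative swing in the 3070's favor, not 27%.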

Turn on DLSS Quality mode and things go from bad to worse for Team Red. The RTX 3080 more than doubles the performance of the RX 6900 XT, never mind the 6800 XT. The RTX 3070 also more than doubles the performance of the RX 6800. Heck, even the RTX 3060 12GB beats the 6900 XT by 16% at 1080p and 23% at 1440p. Bottom line: AMD needs FSR, and it really should have had a working solution before the RDNA2 GPUs and consoles even launched. Better late than never, hopefully.

Of course there's still a bigger question of how much ray tracing really benefits the player in most games. The best ray tracing games like Control, Cyberpunk 2077, Fortnite, and Minecraft show substantial visual improvements with ray tracing, to the point where we'd much rather have it on than off. (Okay, not in Fortnite where fps matters more than visuals, though it can be nice in creative mode.) But for each of those games, there are at least five other games where ray tracing merely tanks performance without a major visual benefit.

It took half a decade or more for programmable shaders to really make a difference in the way games looked, and games of the future will eventually reach that point with ray tracing. But we're not there yet. Bottom line: Nvidia reigns as the king of ray tracing GPUs for games. Now we just need more games where the visual benefits are worth the performance hit.

Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.

Comments

Phaaze88: Oh, Radeon isn't that bad - before DLSS, then it's, "OH."

DLSS has gained more traction than ray tracing... nice /S

BeedooX: I'd be more interested if you wrote some low-level API to access and test the hardware with.

Everyone knows what the current landscape looks like. Few know what the actual potential of both cards looks like.

jimmysmitty (replying to Phaaze88): I mean, Nvidia-specific gaming features do tend to get more adoption than AMD's, but that's because Nvidia works more with game developers. AMD is picking up, but I still think Nvidia has a bit of a head start with the hardware side of ray tracing. It could easily switch, as we've seen before when ATI used to come out swinging like a giant, but it may not.

What I hope is that Intel can flood the market with mid-range GPUs, eventually get to the high-end market, and we can end the duopoly in the GPU market. Hell, it would be nice to see duopolies all around disappear, but odds are most won't. I mean, it's insane to see that a 3080, even minus the price gouging, is $800 for a decent one.

jhatfie: I'd be interested to see how AMD does with CAS enabled and RT. While I have a 3090 FE now, I was running a 6900 XT for a couple of months, and the image quality with CAS enabled and 80% resolution scale generally looked as good as native and was a solid performance boost. Not as good a performance boost as DLSS, but decent, and honestly, even DLSS 2.0 below the Quality setting generally starts to degrade the image to the point I do not want to use it.

carocuore (quoting the article: "Most will, but Wolfenstein Youngblood unfortunately uses pre-VulkanRT extensions"): Has someone unironically played that game?

Anyway, here we are, stuck without any more cards that don't pack ultra-super-wet-floors HW rendering engines that add $500 to the cards' MSRP.

RIP, AMD.

Krotow: Seems for 1080p and above resolutions, DLSS 2.0 makes more sense than RT. And that is where, until now, Nvidia has gotten much further than AMD. Though I believe AMD will get there soon too.

Intel indeed would advance their iGPUs to make AAA games playable on budget laptops with acceptable FPS (60+). Then they would get back on track pretty soon.

JarredWaltonGPU (replying to carocuore: "Has someone unironically played that game?"): I actually finished it -- and I didn't finish the previous two Wolfenstein games. It wasn't awesome, but it wasn't all bad either. Writing was not the greatest, but the gunplay was good.

Mithrandir95: Seems with the exception of Minecraft the AMD cards aren't doing terribly in Ray Tracing at native 1440p. Yes, with DLSS it's a different story, but that's not an apples to apples comparison, although I thought AMD would be offering their apple (CAS) more reliably by now.

hotaru.hino (replying to Mithrandir95): Contrast Adaptive Sharpening isn't their answer to DLSS, and I find it silly they advertise it as something similar.