AMD Radeon RX 6800 XT and RX 6800 Review: Nipping at Ampere's Heels

AMD's best GPUs to date are strong in rasterization but fall behind in ray tracing.

The AMD Radeon RX 6800 XT and Radeon RX 6800 have arrived, joining the ranks of the best graphics cards and making some headway into the top positions in our GPU benchmarks hierarchy. Nvidia has had a virtual stranglehold on the GPU market for cards priced $500 or more, going back to at least the GTX 700-series in 2013. That's left AMD to mostly compete in the high-end, mid-range, and budget GPU markets. "No longer!" says Team Red.

Big Navi, aka Navi 21, aka RDNA2, has arrived, bringing some impressive performance gains. AMD also finally joins the ray tracing fray, both with its PC desktop graphics cards and the next-gen PlayStation 5 and Xbox Series X consoles. How do AMD's latest GPUs stack up to the competition, and could this be AMD's GPU equivalent of the Ryzen debut of 2017? That's what we're here to find out.

We've previously discussed many aspects of today's launch, including details of the RDNA2 architecture, the GPU specifications, features, and more. Now, it's time to take all the theoretical aspects and lay some rubber on the track. If you want to know more about the finer details of RDNA2, we'll cover that as well. If you're just here for the benchmarks, skip down a few screens because, hell yeah, do we have some benchmarks. We've got our standard testbed using an 'ancient' Core i9-9900K CPU, but we wanted something a bit more for the fastest graphics cards on the planet. We've added more benchmarks on both Core i9-10900K and Ryzen 9 5900X. With the arrival of Ryzen 5000, running AMD GPUs with AMD CPUs finally means no compromises.

Update: We've added additional results to the CPU scaling charts. This review was originally published on November 18, 2020, but we'll continue to update related details as needed.

AMD Radeon RX 6800 Series: Specifications and Architecture

Let's start with a quick look at the specifications, which have been mostly known for at least a month. We've also included the previous generation RX 5700 XT as a reference point.

| Graphics Card | RX 6800 XT | RX 6800 | RX 5700 XT |
|---|---|---|---|
| GPU | Navi 21 (XT) | Navi 21 (XL) | Navi 10 (XT) |
| Process (nm) | 7 | 7 | 7 |
| Transistors (billion) | 26.8 | 26.8 | 10.3 |
| Die size (mm²) | 519 | 519 | 251 |
| CUs | 72 | 60 | 40 |
| GPU cores | 4608 | 3840 | 2560 |
| Ray Accelerators | 72 | 60 | N/A |
| Game Clock (MHz) | 2015 | 1815 | 1755 |
| Boost Clock (MHz) | 2250 | 2105 | 1905 |
| VRAM Speed (MT/s) | 16000 | 16000 | 14000 |
| VRAM (GB) | 16 | 16 | 8 |
| Bus width (bits) | 256 | 256 | 256 |
| Infinity Cache (MB) | 128 | 128 | N/A |
| ROPs | 128 | 96 | 64 |
| TMUs | 288 | 240 | 160 |
| TFLOPS (boost) | 20.7 | 16.2 | 9.7 |
| Bandwidth (GB/s) | 512 | 512 | 448 |
| TBP (watts) | 300 | 250 | 225 |
| Launch Date | Nov. 2020 | Nov. 2020 | July 2019 |
| Launch Price | $649 | $579 | $399 |

When AMD fans started talking about "Big Navi" as far back as last year, this is pretty much what they hoped to see. AMD has roughly doubled every important aspect of its architecture, while also adding a huge L3 cache and Ray Accelerators to handle the ray/triangle intersection calculations used in ray tracing. Clock speeds are also higher, and (spoiler alert!) the 6800 series cards actually exceed the Game Clock and can even go past the Boost Clock in some cases. Memory capacity has doubled, ROPs have doubled, TFLOPS have more than doubled, and the die is more than twice as large.
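The TFLOPS and bandwidth rows in the table follow from simple arithmetic on the other rows. This back-of-the-envelope check assumes the usual convention of two FP32 operations (one fused multiply-add) per shader per clock at the boost clock. It is a sanity check, not AMD's official formula.

```python
# Spec-sheet figures copied from the table above.
specs = {
    "RX 6800 XT": {"shaders": 4608, "boost_mhz": 2250, "mts": 16000, "bus_bits": 256},
    "RX 6800":    {"shaders": 3840, "boost_mhz": 2105, "mts": 16000, "bus_bits": 256},
    "RX 5700 XT": {"shaders": 2560, "boost_mhz": 1905, "mts": 14000, "bus_bits": 256},
}


def peak_tflops(shaders: int, boost_mhz: int) -> float:
    """Peak FP32 rate, assuming one FMA (2 FLOPs) per shader per clock."""
    return shaders * 2 * boost_mhz * 1e6 / 1e12


def raw_bandwidth_gbs(mts: int, bus_bits: int) -> float:
    """Transfers per second times bytes moved per transfer across the bus."""
    return mts * 1e6 * (bus_bits / 8) / 1e9


for name, s in specs.items():
    tf = peak_tflops(s["shaders"], s["boost_mhz"])
    bw = raw_bandwidth_gbs(s["mts"], s["bus_bits"])
    print(f"{name}: {tf:.2f} TFLOPS, {bw:.0f} GB/s")
```

This reproduces the table's 20.7 and 16.2 TFLOPS and its 512 and 448 GB/s figures. The RX 5700 XT works out to about 9.75 TFLOPS (listed as 9.7), so the 6800 XT's compute rate is roughly 2.1x that of its predecessor.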

Support for ray tracing is probably the most visible new feature, but RDNA2 also supports Variable Rate Shading (VRS), mesh shaders, and everything else that's part of the DirectX 12 Ultimate spec. There are other tweaks to the architecture, like support for 8K AV1 decode and 8K HEVC encode. But a lot of the underlying changes don't show up as an easily digestible number.


For example, AMD says it reworked much of the architecture to focus on a high-speed design. That's where the greater-than-2GHz clocks come from, but those aren't just fantasy numbers. Playing around with overclocking a bit (third-party tools like MSI Afterburner don't support the cards yet, so we had to stick with AMD's built-in overclocking tools), we actually hit clocks of over 2.5GHz. Yeah. I saw the supposed leaks before the launch claiming 2.4GHz and 2.5GHz and thought, "There's no way." I was wrong.

AMD's cache hierarchy is arguably one of the biggest changes. Besides a shared 1MB L1 cache for each cluster of 20 dual-CUs, there's a 4MB L2 cache and a whopping 128MB L3 cache that AMD calls the Infinity Cache. It also ties into the Infinity Fabric, but fundamentally, it helps optimize memory access latency and improve the effective bandwidth. Thanks to the 128MB cache, the framebuffer mostly ends up being cached, which drastically cuts down memory access. AMD says the effective bandwidth of the GDDR6 memory ends up being 119 percent higher than what the raw bandwidth would suggest.
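AMD hasn't detailed how it arrives at its effective-bandwidth figure. A deliberately simple model helps show why a big cache matters. This is our assumption rather than AMD's method: every cache hit is traffic that never reaches GDDR6, so a DRAM-bound workload's usable bandwidth scales by 1 / (1 - hit rate). The model ignores the cache's own bandwidth limit and any latency effects.

```python
def effective_bandwidth(raw_gbs: float, hit_rate: float) -> float:
    """Toy model: hits are served on-die, so only (1 - hit_rate) of the
    traffic consumes DRAM bandwidth. Ignores cache bandwidth and latency."""
    if not 0.0 <= hit_rate < 1.0:
        raise ValueError("hit_rate must be in [0, 1)")
    return raw_gbs / (1.0 - hit_rate)


def hit_rate_for_multiplier(multiplier: float) -> float:
    """Invert the toy model: which hit rate yields a given bandwidth multiplier?"""
    return 1.0 - 1.0 / multiplier


raw = 512.0                # GB/s, RX 6800 XT raw GDDR6 bandwidth from the spec table
claimed_multiplier = 2.19  # "119 percent higher" than raw, per AMD's claim

print(f"implied hit rate: {hit_rate_for_multiplier(claimed_multiplier):.2f}")
for hit in (0.3, 0.5, 0.6):
    print(f"hit rate {hit:.0%}: ~{effective_bandwidth(raw, hit):.0f} GB/s")
```

Under this toy model, AMD's 119 percent figure implies a hit rate of roughly 54 percent. It also shows how sensitive the benefit is: a larger working set that lowers the hit rate shrinks the multiplier quickly.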

The large cache also helps to reduce power consumption, which all ties into AMD's targeted 50 percent performance per Watt improvements. This doesn't mean power requirements stayed the same — RX 6800 has a slightly higher TBP (Total Board Power) than the RX 5700 XT, and the 6800 XT and upcoming 6900 XT are back at 300W (like the Vega 64). However, AMD still comes in at a lower power level than Nvidia's competing GPUs, which is a bit of a change of pace from previous generation architectures.

It's not entirely clear how AMD's Ray Accelerators stack up against Nvidia's RT cores. Much like Nvidia, AMD is putting one Ray Accelerator into each CU. (It seems we're missing an acronym. Should we call the ray accelerators RA? The sun god, casting down rays! Sorry, been up all night, getting a bit loopy here...) The thing is, Nvidia is on its second-gen RT cores that are supposed to be around 1.7X as fast as its first-gen RT cores. AMD's Ray Accelerators are supposedly 10 times as fast as doing the RT calculations via shader hardware, which is similar to what Nvidia said with its Turing RT cores. In practice, it looks as though Nvidia will maintain a lead in ray tracing performance.
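AMD hasn't published how its Ray Accelerators implement the intersection test. The sketch below uses the textbook Möller-Trumbore ray/triangle algorithm, which is our choice for illustration and not AMD's hardware design. It shows the kind of per-ray arithmetic that dedicated RT hardware offloads from the shaders. A real renderer also traverses a bounding volume hierarchy (BVH) with ray/box tests before reaching triangles, which this sketch omits.

```python
from typing import Optional, Tuple

Vec = Tuple[float, float, float]


def sub(a: Vec, b: Vec) -> Vec:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec, b: Vec) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a: Vec, b: Vec) -> Vec:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def ray_triangle(origin: Vec, direction: Vec, v0: Vec, v1: Vec, v2: Vec,
                 eps: float = 1e-9) -> Optional[float]:
    """Möller-Trumbore test: return the distance t along the ray, or None on a miss."""
    e1, e2 = sub(v1, v0), sub(v2, v0)
    p = cross(direction, e2)
    det = dot(e1, p)
    if abs(det) < eps:               # ray is parallel to the triangle's plane
        return None
    inv_det = 1.0 / det
    s = sub(origin, v0)
    u = dot(s, p) * inv_det          # first barycentric coordinate
    if u < 0.0 or u > 1.0:
        return None
    q = cross(s, e1)
    v = dot(direction, q) * inv_det  # second barycentric coordinate
    if v < 0.0 or u + v > 1.0:
        return None
    t = dot(e2, q) * inv_det         # distance from origin to the hit point
    return t if t > eps else None    # ignore hits behind the ray origin
```

For example, a ray fired from (0.25, 0.25, -1) along +z hits the unit triangle (0,0,0), (1,0,0), (0,1,0) at t = 1.0, while the same ray starting from (2, 2, -1) misses. A game can issue millions of these tests per frame, which is why moving them into fixed-function hardware pays off.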

That doesn't even get into the whole DLSS and Tensor core discussion. AMD's RDNA2 chips can do FP16 via shaders, but they're still a far cry from the computational throughput of Tensor cores. That may or may not matter, as perhaps the FP16 throughput is enough for real-time inference to do something akin to DLSS. AMD has talked about FidelityFX Super Resolution, which it's working on with Microsoft, but it's not available yet, and of course, no games are shipping with it yet either. Meanwhile, DLSS is in a couple of dozen games now, and it's also in Unreal Engine, which means uptake of DLSS could explode over the coming year.

Anyway, that's enough of the architectural talk for now. Let's meet the actual cards.

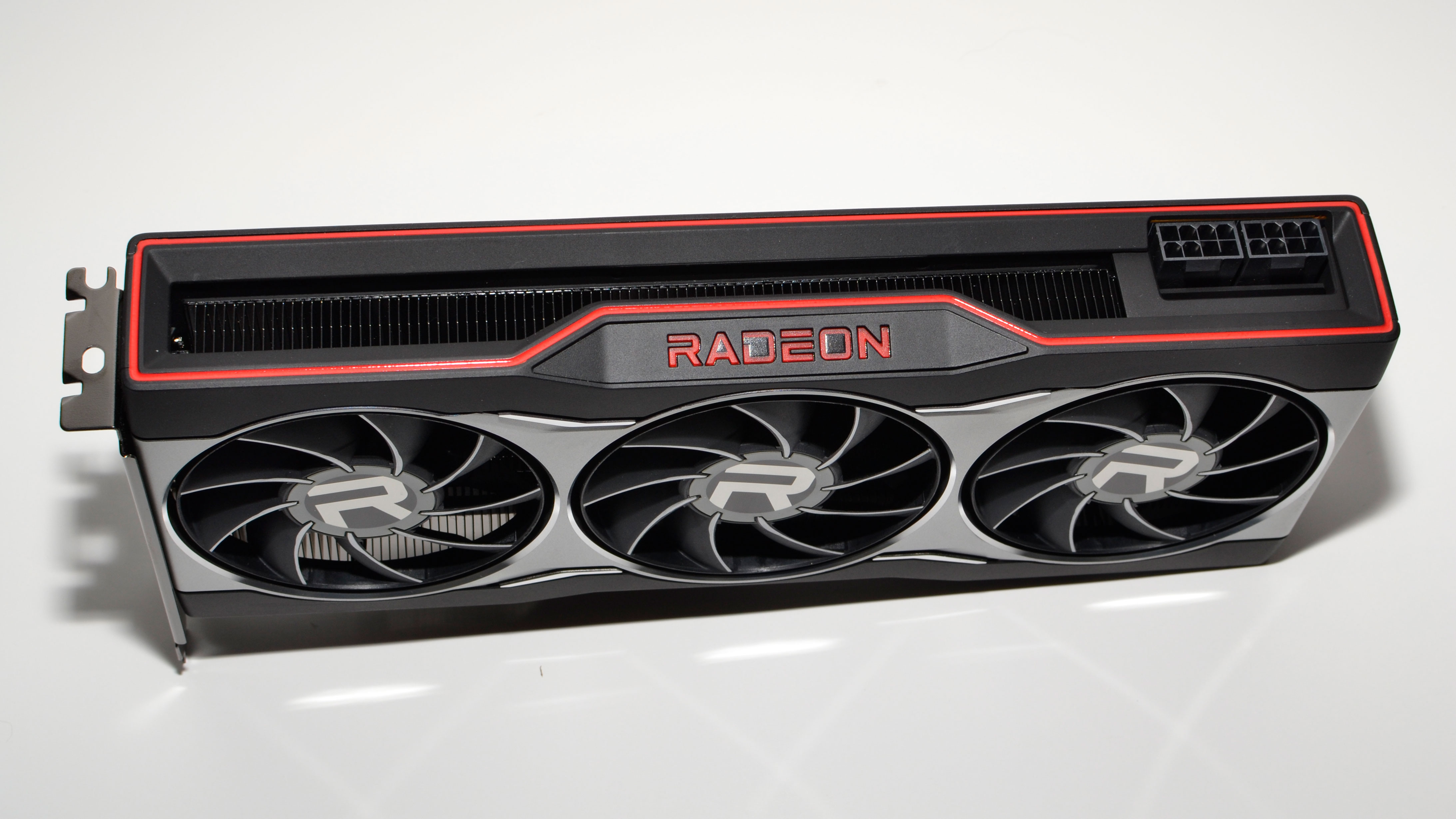

Meet the Radeon RX 6800 XT and RX 6800 Reference Cards

We've already posted an unboxing of the RX 6800 cards, which you can see in the above video. The design is pretty traditional, building on previous cards like the Radeon VII. There's no blower this round, which is probably for the best if you're worried about noise levels. Otherwise, you get a similar industrial design and aesthetic with both the reference 6800 and 6800 XT. The only real change is that the 6800 XT has a fatter heatsink and weighs 115g more, which helps it cope with the higher TBP.

Both cards are triple fan designs, using custom 77mm fans that have an integrated rim. We saw the same style of fan on many of the RTX 30-series GPUs, and it looks like the engineers have discovered a better way to direct airflow. Both cards have a Radeon logo that lights up in red, but it looks like the 6800 XT might have an RGB logo — it's not exposed in software yet, but maybe that will come.

Otherwise, you get dual 8-pin PEG power connections, which might seem a bit overkill on the 6800. It's a 250W card, after all, so why should it need the potential for up to 375W of power? But we'll get into the power stuff later. If you're into collecting hardware boxes, the 6800 XT box is also larger and a bit nicer, but there's no real benefit otherwise.
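The 375W figure comes from adding the commonly cited PCIe power ratings: 75W from the x16 slot plus 150W per 8-pin PEG connector. Here is a quick sketch of that arithmetic, with those spec ratings as the stated assumptions:

```python
# Commonly cited PCI Express power ratings, in watts.
SLOT_W = 75          # x16 slot
EIGHT_PIN_W = 150    # per 8-pin PEG connector
SIX_PIN_W = 75       # per 6-pin PEG connector


def board_power_ceiling(eight_pins: int, six_pins: int = 0) -> int:
    """In-spec power available to a card from the slot plus its PEG connectors."""
    return SLOT_W + eight_pins * EIGHT_PIN_W + six_pins * SIX_PIN_W


ceiling = board_power_ceiling(eight_pins=2)
for card, tbp in (("RX 6800", 250), ("RX 6800 XT", 300)):
    print(f"{card}: {tbp} W TBP, {ceiling - tbp} W of headroom out of {ceiling} W")
```

With two 8-pin connectors, the RX 6800 has 125W of in-spec headroom over its TBP and the 6800 XT has 75W, which leaves room for overclocking and short power excursions.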

The one potential concern with AMD's reference design is the video ports. There are two DisplayPort outputs, a single HDMI 2.1 connector, and a USB Type-C port. It's possible to use four displays with the cards, but the most popular gaming displays still use DisplayPort, and very few options exist for the Type-C connector. There also aren't any HDMI 2.1 monitors that I'm aware of, unless you want to use a TV for your monitor. But those will eventually come. Anyway, if you want a different port selection, keep an eye on the third party cards, as I'm sure they'll cover other configurations.

And now, on to the benchmarks.

Radeon RX 6800 Series Test Systems

It seems AMD is having a microprocessor renaissance of sorts right now. First, it has Zen 3 coming out and basically demolishing Intel in every meaningful way in the CPU realm. Sure, Intel can compete on a per-core basis … but only up to 10-core chips without moving into HEDT territory. The new RX 6800 cards might just be the equivalent of AMD's Ryzen CPU launch. This time, AMD isn't making any apologies. It intends to go up against Nvidia's best. And of course, if we're going to test the best GPUs, maybe we ought to look at the best CPUs as well?

For this launch, we have three test systems. First is our old and reliable Core i9-9900K setup, which we still use as the baseline and for power testing. We're adding both AMD Ryzen 9 5900X and Intel Core i9-10900K builds to flesh things out. In retrospect, trying to do two new testbeds may have been a bit too ambitious, as we have to test each GPU on each testbed. We had to cut a bunch of previous-gen cards from our testing, and the hardware varies a bit among the PCs.

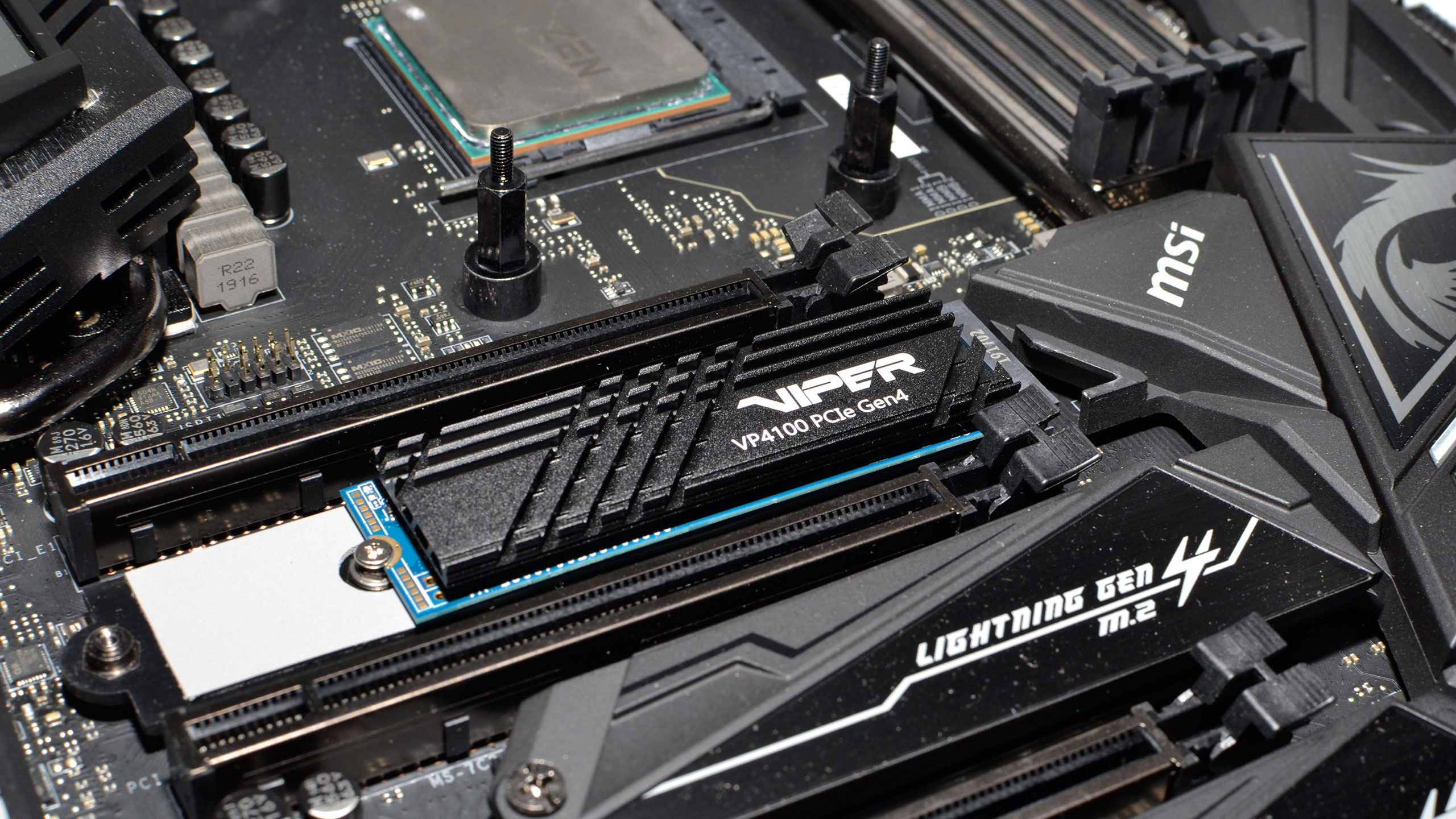

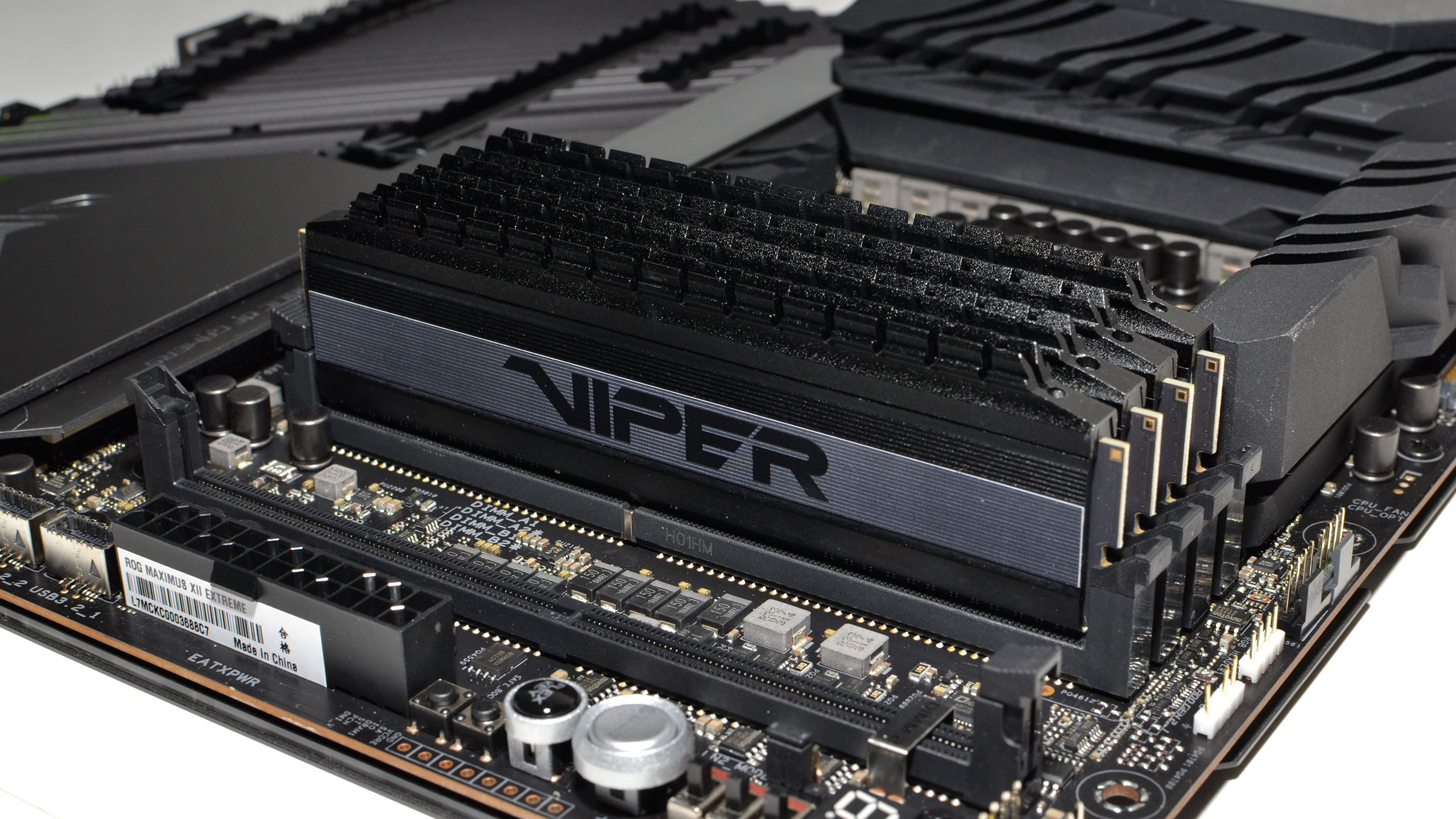

For the AMD build, we've got an MSI X570 Godlike motherboard, which is one of only a handful that supports AMD's new Smart Access Memory technology. Patriot supplied us with two kits of single-rank DDR4-4000 memory, which means we have 4x8GB instead of our normal 2x16GB configuration. We also have the Patriot Viper VP4100 2TB SSD holding all of our games. Remember when 1TB used to feel like a huge amount of SSD storage? And then Call of Duty: Modern Warfare (2019) happened, sucking down over 200GB. Which is why we need 2TB drives.

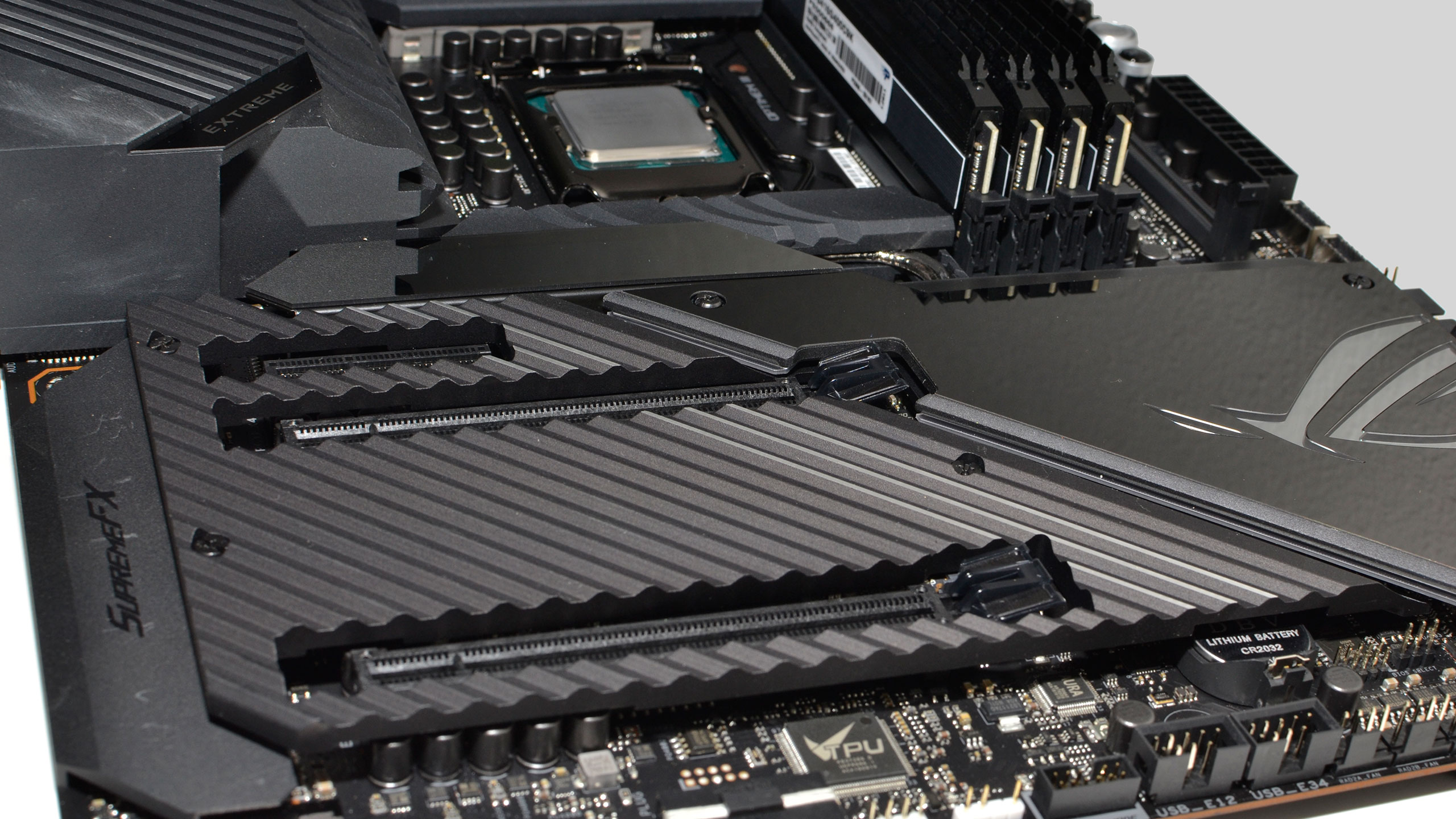

Meanwhile, the Intel LGA1200 PC has an Asus Maximus XII Extreme motherboard, 2x16GB DDR4-3600 HyperX memory, and a 2TB XPG SX8200 Pro SSD. (I'm not sure if it's the old 'fast' version or the revised 'slow' variant, but it shouldn't matter for these GPU tests.) Full specs are in the table below.

Anyway, the slightly slower RAM might be a bit of a handicap on the Intel PCs, but this isn't a CPU review — we just wanted to use the two fastest CPUs, and time constraints and lack of duplicate hardware prevented us from going full apples-to-apples. The internal comparisons among GPUs on each testbed will still be consistent. Frankly, there's not a huge difference between the CPUs when it comes to gaming performance, especially at 1440p and 4K.

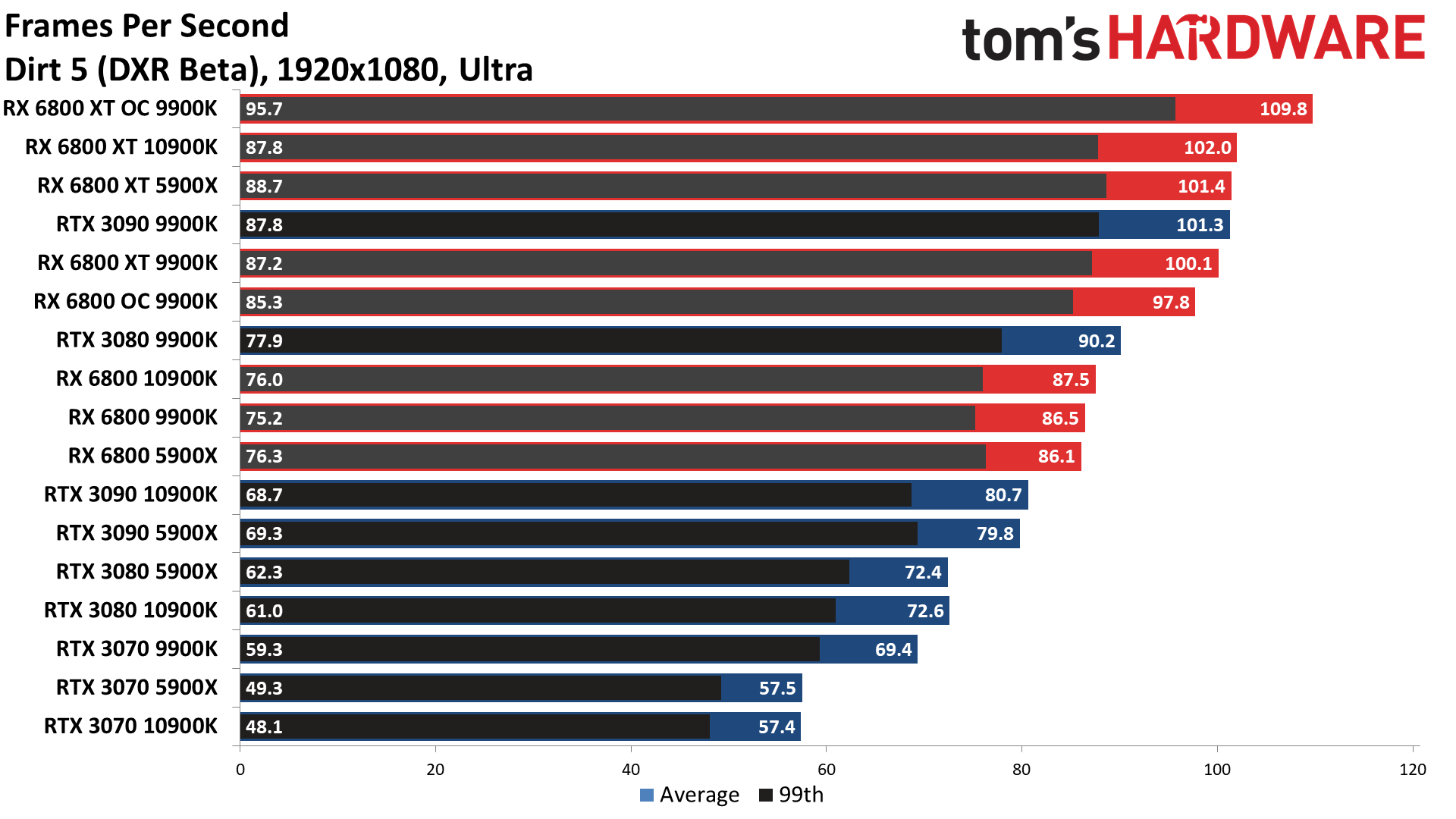

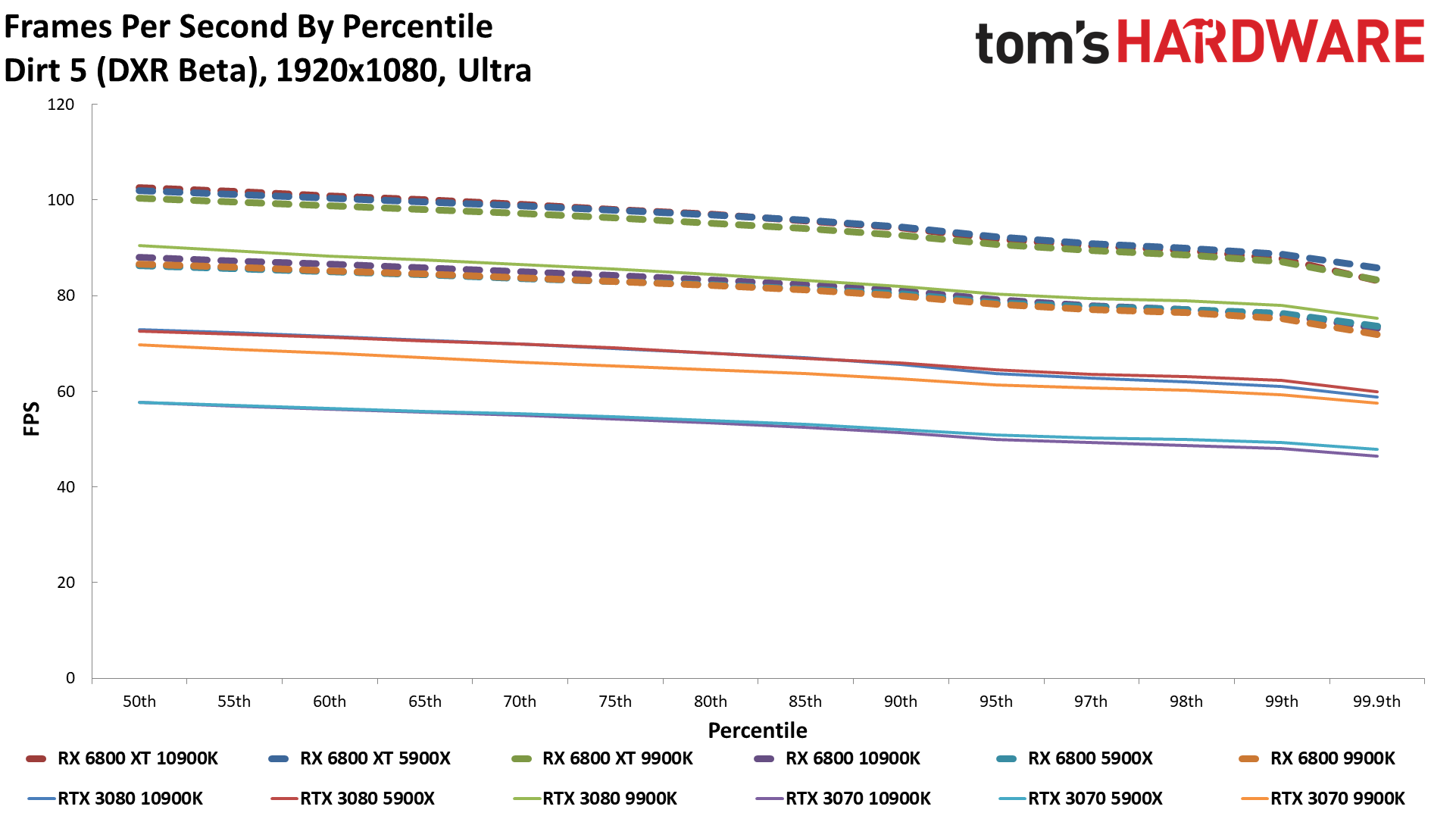

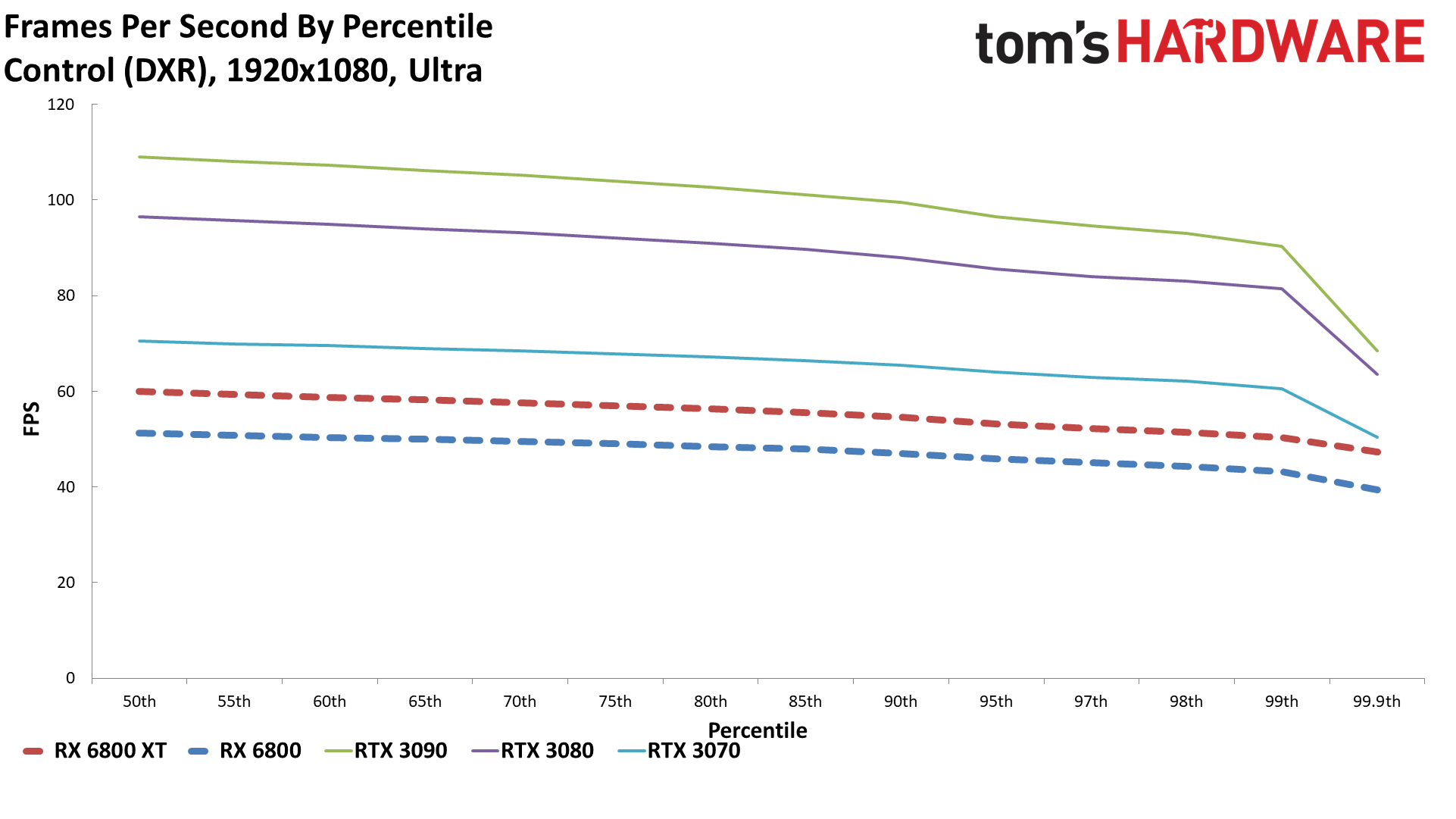

Besides the testbeds, I've also got a bunch of additional gaming tests. First is the suite of nine games we've used on recent GPU reviews like the RTX 30-series launch. We've done some 'bonus' tests on each of the Founders Edition reviews, but we're shifting gears this round. We're adding four new/recent games that will be tested on each of the CPU testbeds: Assassin's Creed Valhalla, Dirt 5, Horizon Zero Dawn, and Watch Dogs Legion — and we've enabled DirectX Raytracing (DXR) on Dirt 5 and Watch Dogs Legion.

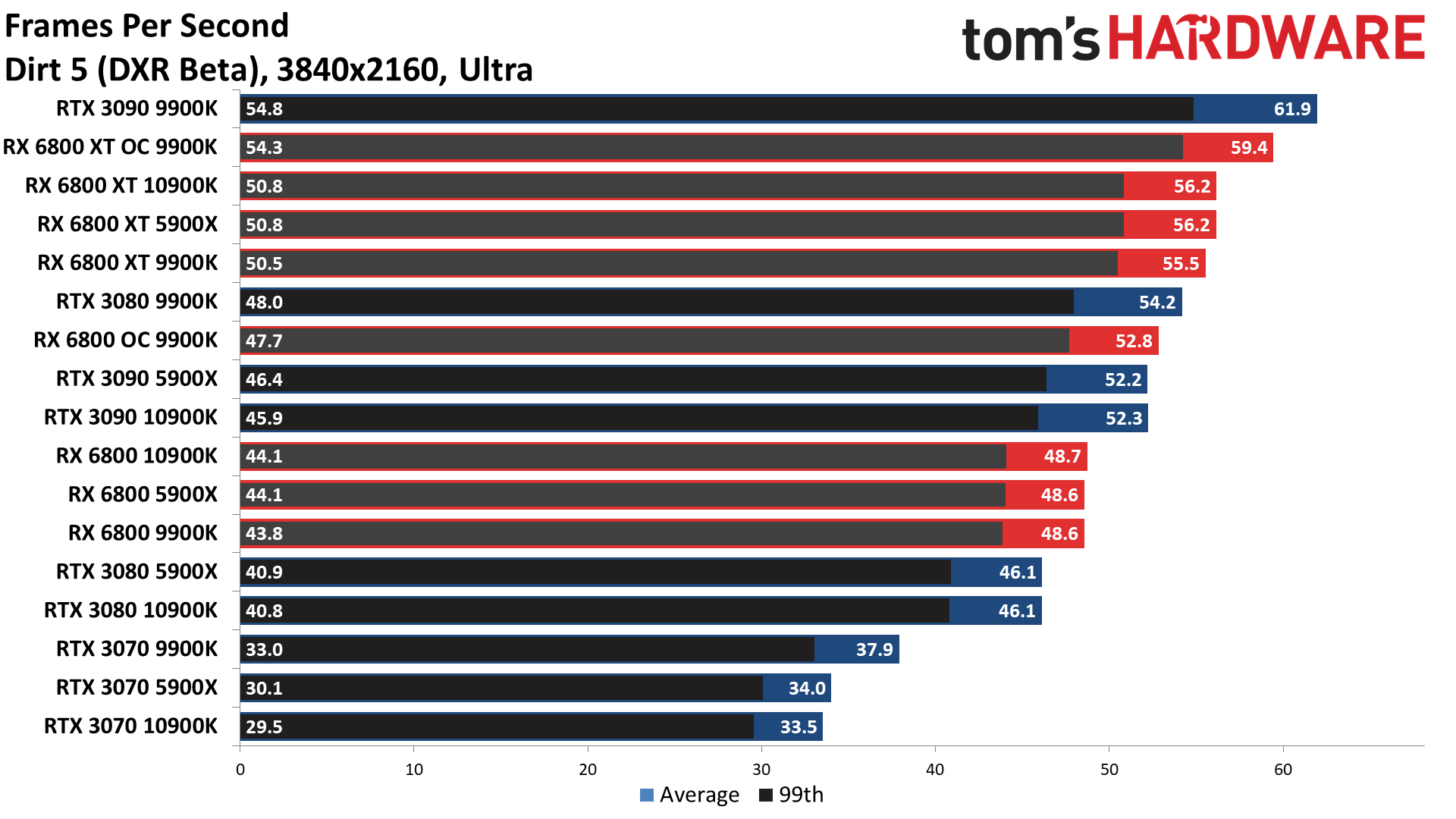

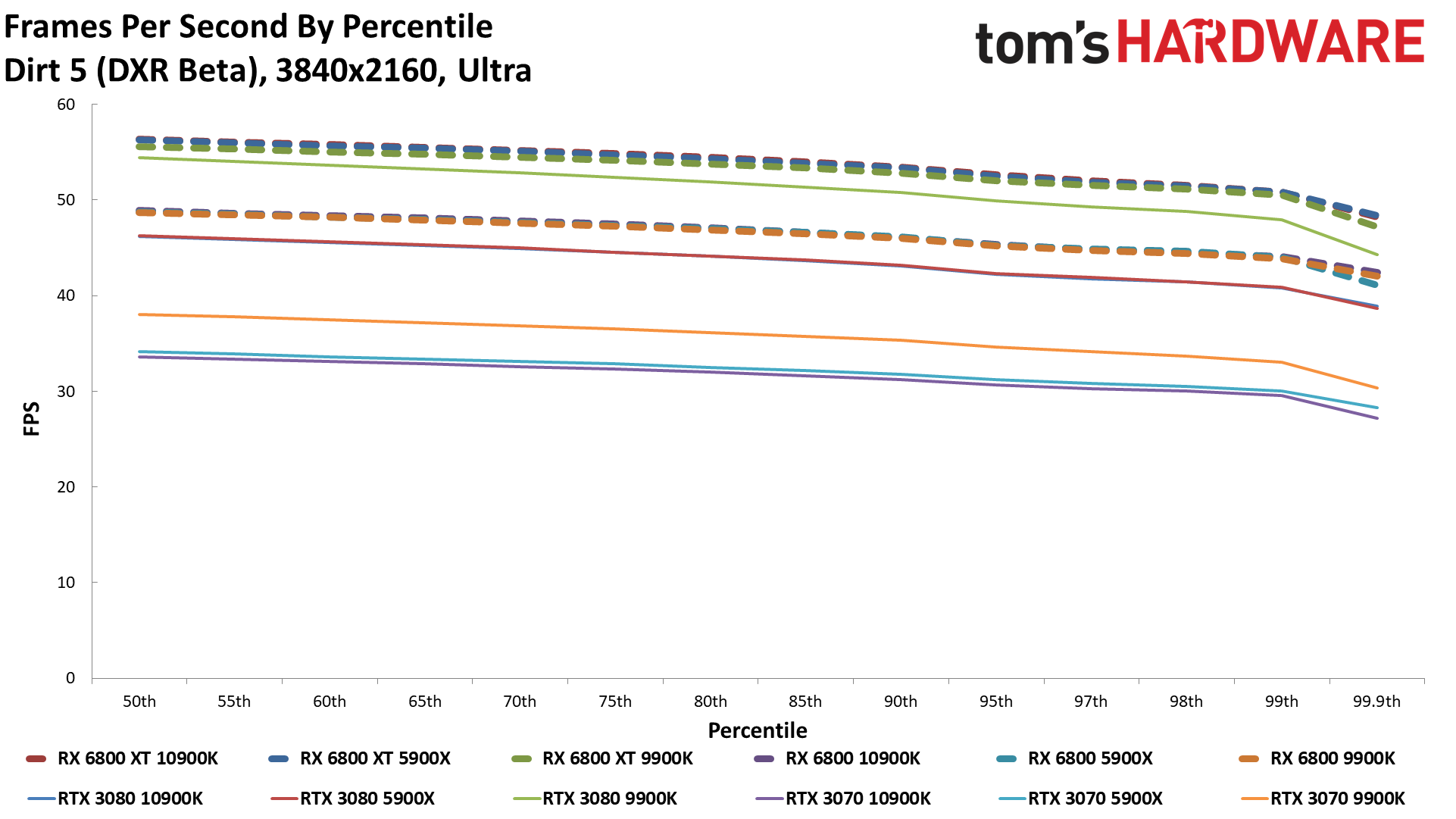

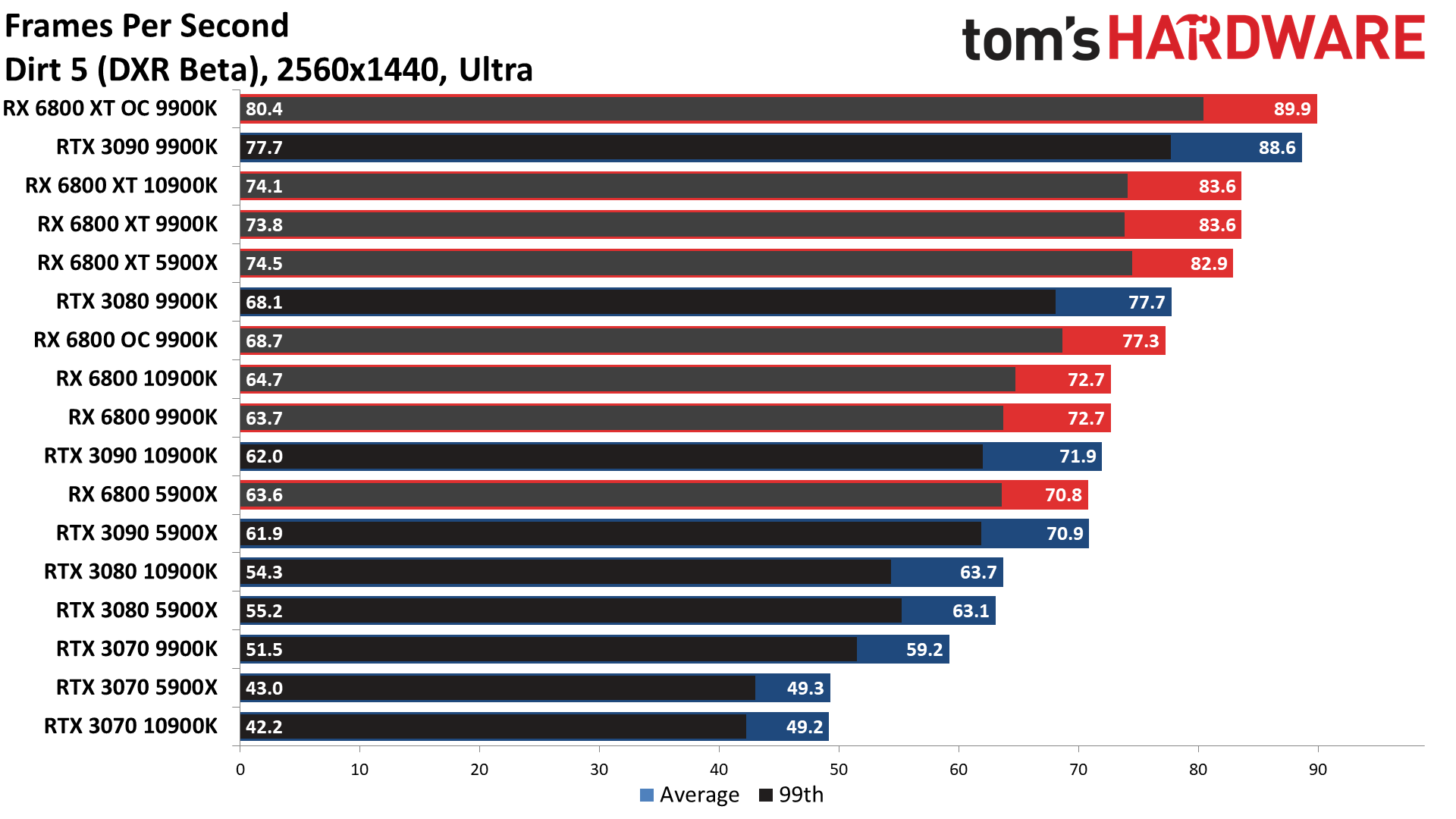

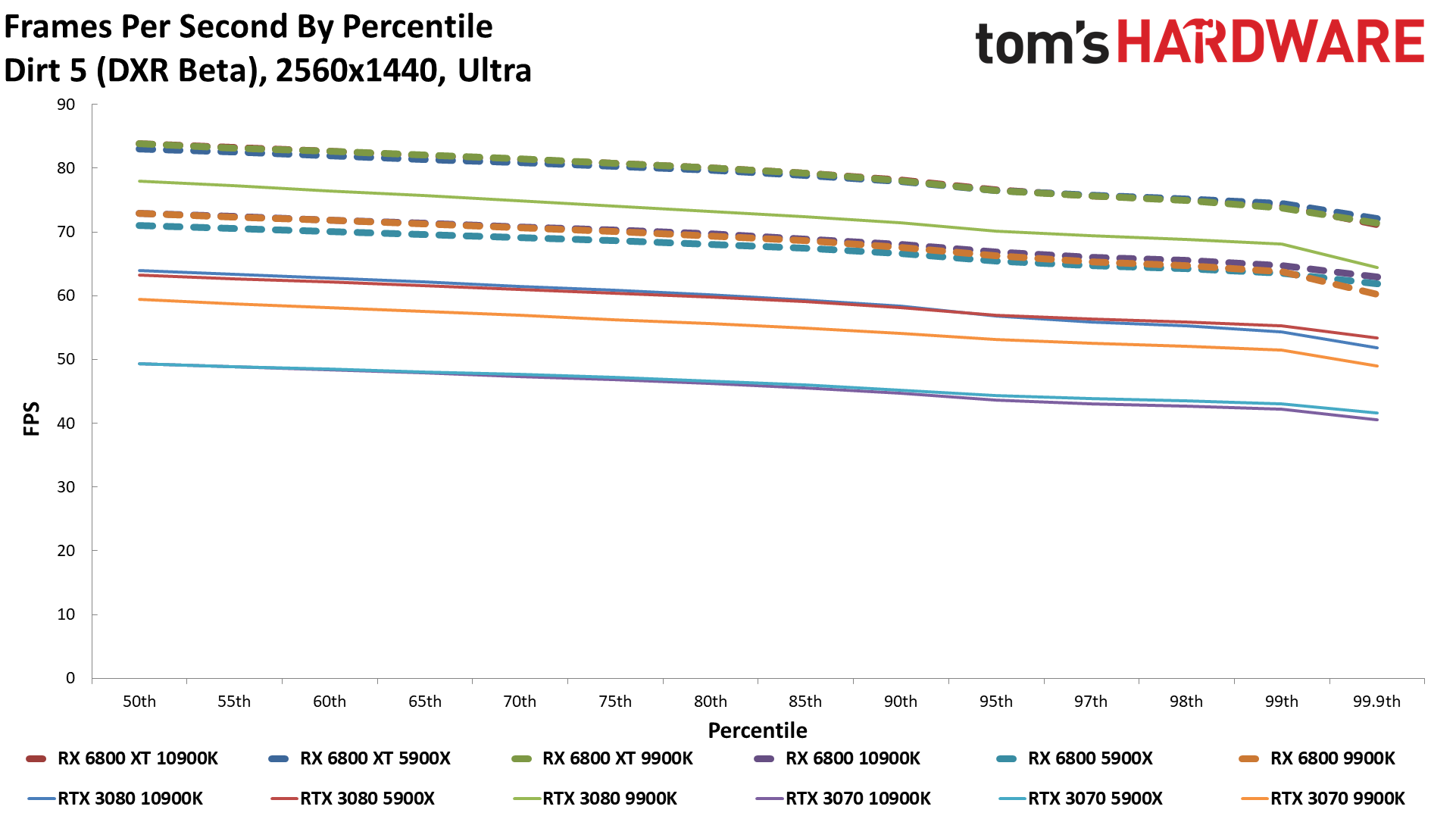

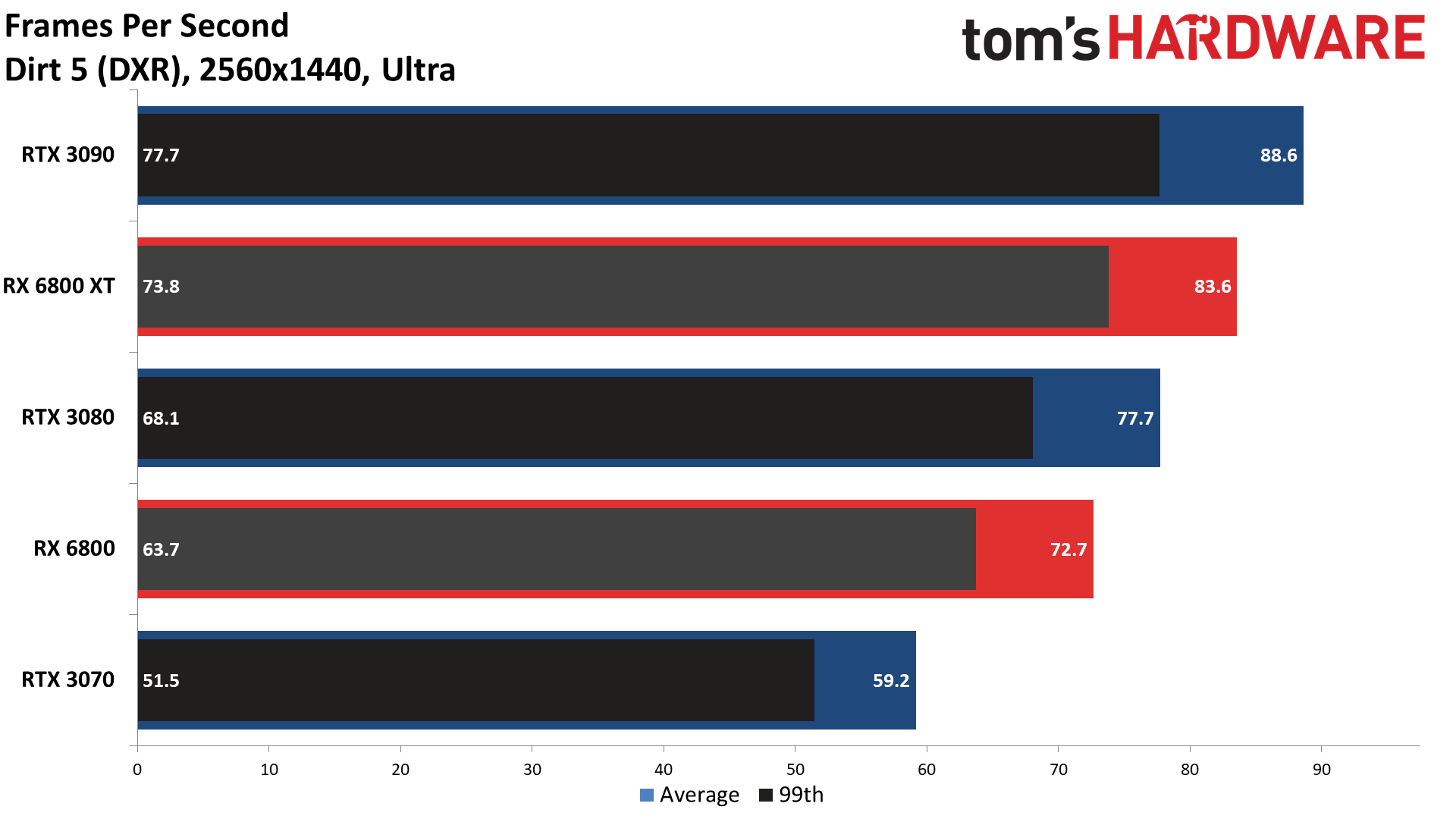

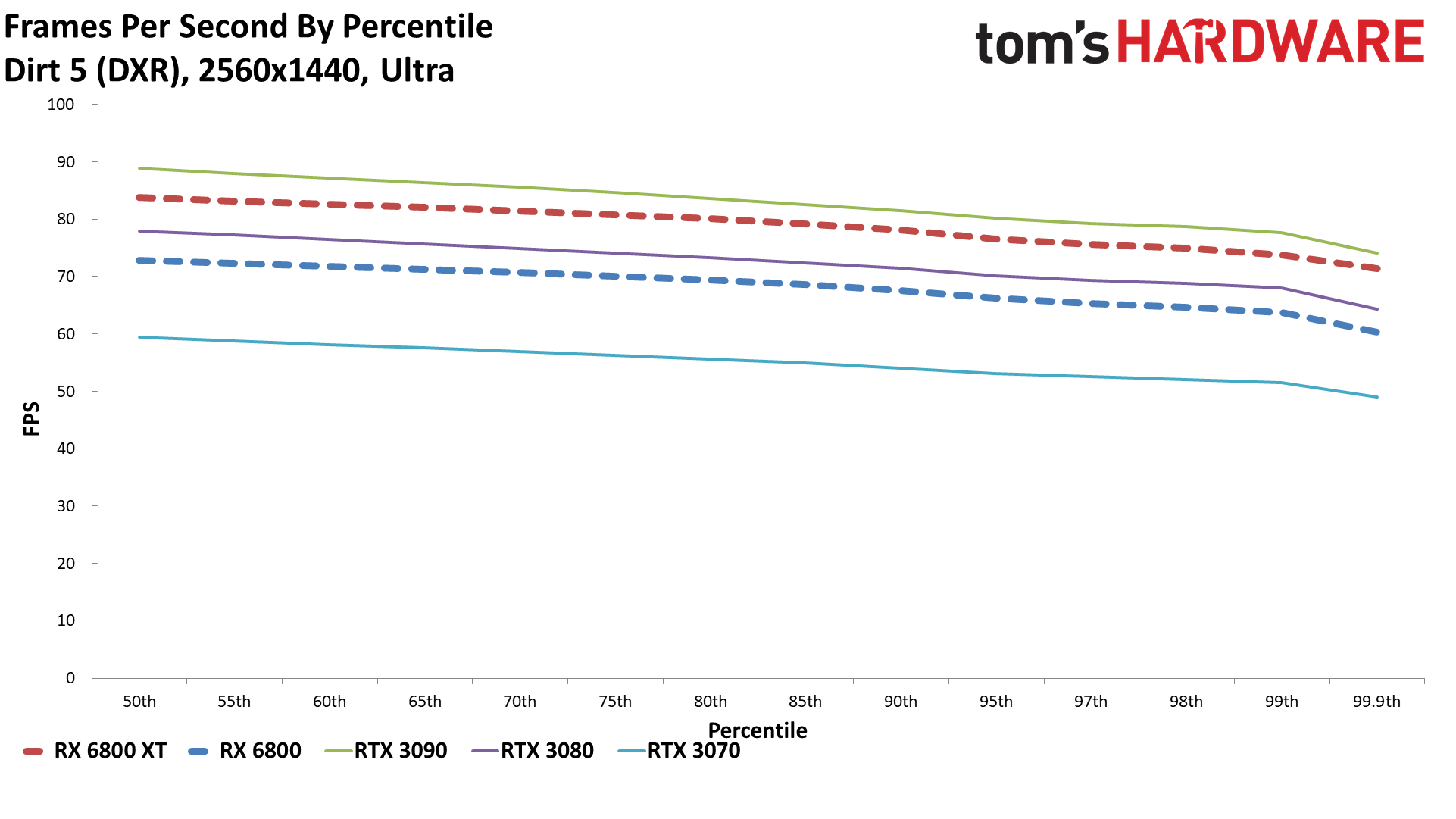

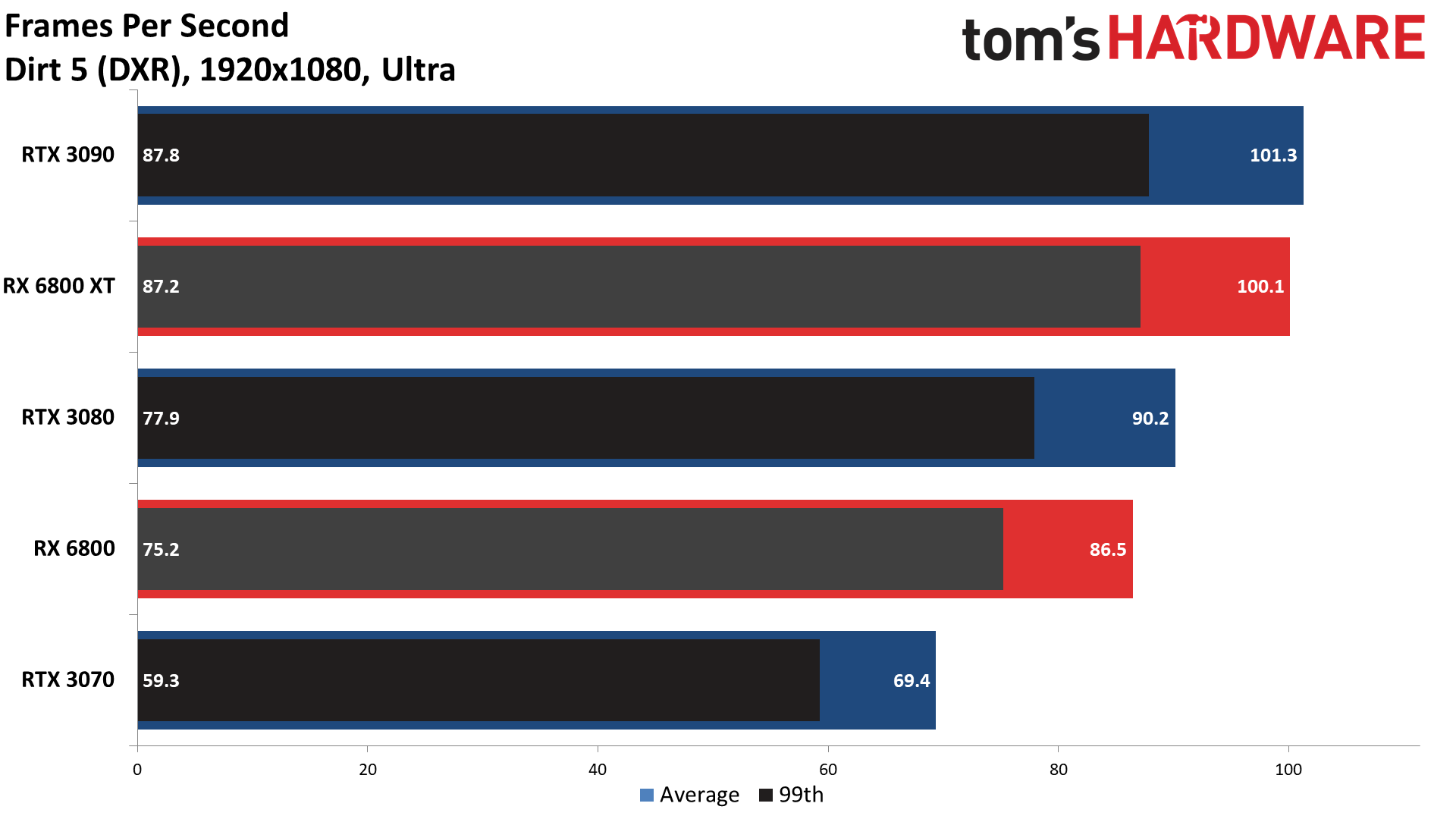

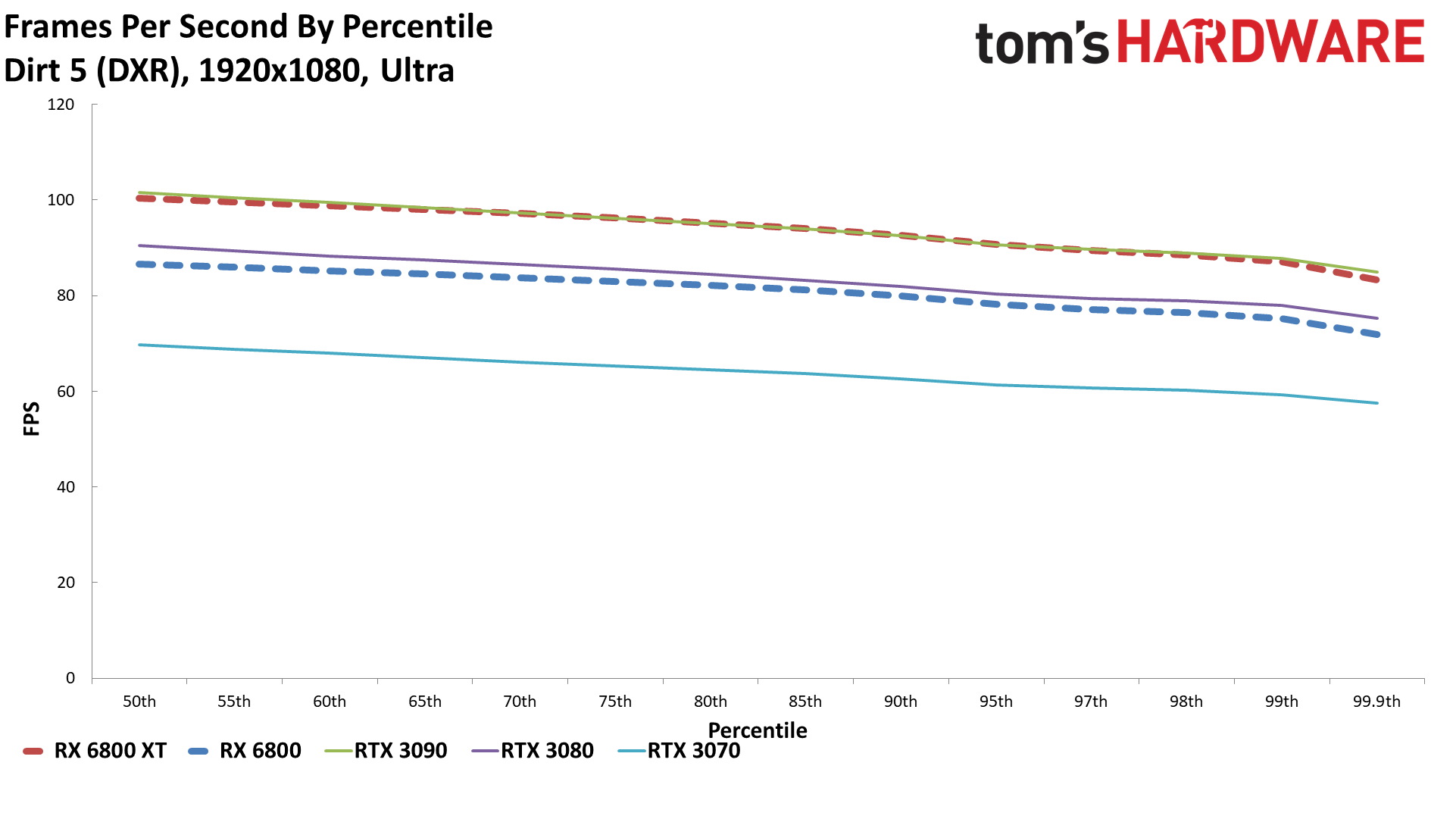

There are some definite caveats, however. First, the beta DXR support in Dirt 5 doesn't look all that different from the regular mode, and it's an AMD-promoted game. Coincidence? Maybe, but it's more likely that AMD is working with Codemasters to ensure it runs suitably on the RX 6800 cards. The other problem is probably just a bug, but AMD's RX 6800 cards seem to render the reflections in Watch Dogs Legion with a bit less fidelity.

Besides the above, we have a third suite of ray tracing tests: nine games (or benchmarks of future games) and 3DMark Port Royal. Of note, Wolfenstein Youngblood with ray tracing (which uses Nvidia's own Vulkan ray tracing extensions that predate the standard Vulkan RT extensions) wouldn't work on the AMD cards, and neither would the Bright Memory Infinite benchmark. Also, Crysis Remastered had some rendering errors with ray tracing enabled (on the nanosuits). Again, that's a known bug.

Radeon RX 6800 Gaming Performance

We've retested all of the RTX 30-series cards on our Core i9-9900K testbed … but we didn't have time to retest the RTX 20-series or RX 5700 series GPUs. The system has been updated with the latest 457.30 Nvidia drivers and AMD's pre-launch RX 6800 drivers, as well as Windows 10 20H2 (the October 2020 update to Windows). It looks like the combination of drivers and/or Windows updates may have dropped performance by about 1-2 percent overall, though there are other variables in play. Anyway, the older GPUs are included mostly as a point of reference.

We have 1080p, 1440p, and 4K ultra results for each of the games, as well as the combined average of the nine titles. We're going to dispense with the commentary for individual games right now (because of a time crunch), but we'll discuss the overall trends below.
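The text doesn't spell out how the nine-game average is calculated. A geometric mean is one common choice for GPU suites because it weights each game's relative result equally, whereas an arithmetic mean lets high-fps titles dominate. Here is a minimal sketch with hypothetical numbers, not our measured data:

```python
import math


def geometric_mean(values):
    """nth root of the product, computed via logs to avoid overflow."""
    if not values or any(v <= 0 for v in values):
        raise ValueError("need a non-empty list of positive values")
    return math.exp(sum(math.log(v) for v in values) / len(values))


# Hypothetical fps for two cards in the same three games (illustrative only).
card_a = [60.0, 120.0, 240.0]
card_b = [66.0, 114.0, 228.0]   # +10%, -5%, -5% versus card A

geo_a, geo_b = geometric_mean(card_a), geometric_mean(card_b)
ari_a, ari_b = sum(card_a) / len(card_a), sum(card_b) / len(card_b)
print(f"geometric: B vs A {geo_b / geo_a - 1:+.1%}")
print(f"arithmetic: B vs A {ari_b / ari_a - 1:+.1%}")
```

Card B wins the slow game by 10 percent and loses the two fast ones by 5 percent each. The geometric mean calls that nearly a tie (about -0.3 percent), while the arithmetic mean (about -2.9 percent) is dragged down mostly by the 240 fps title.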

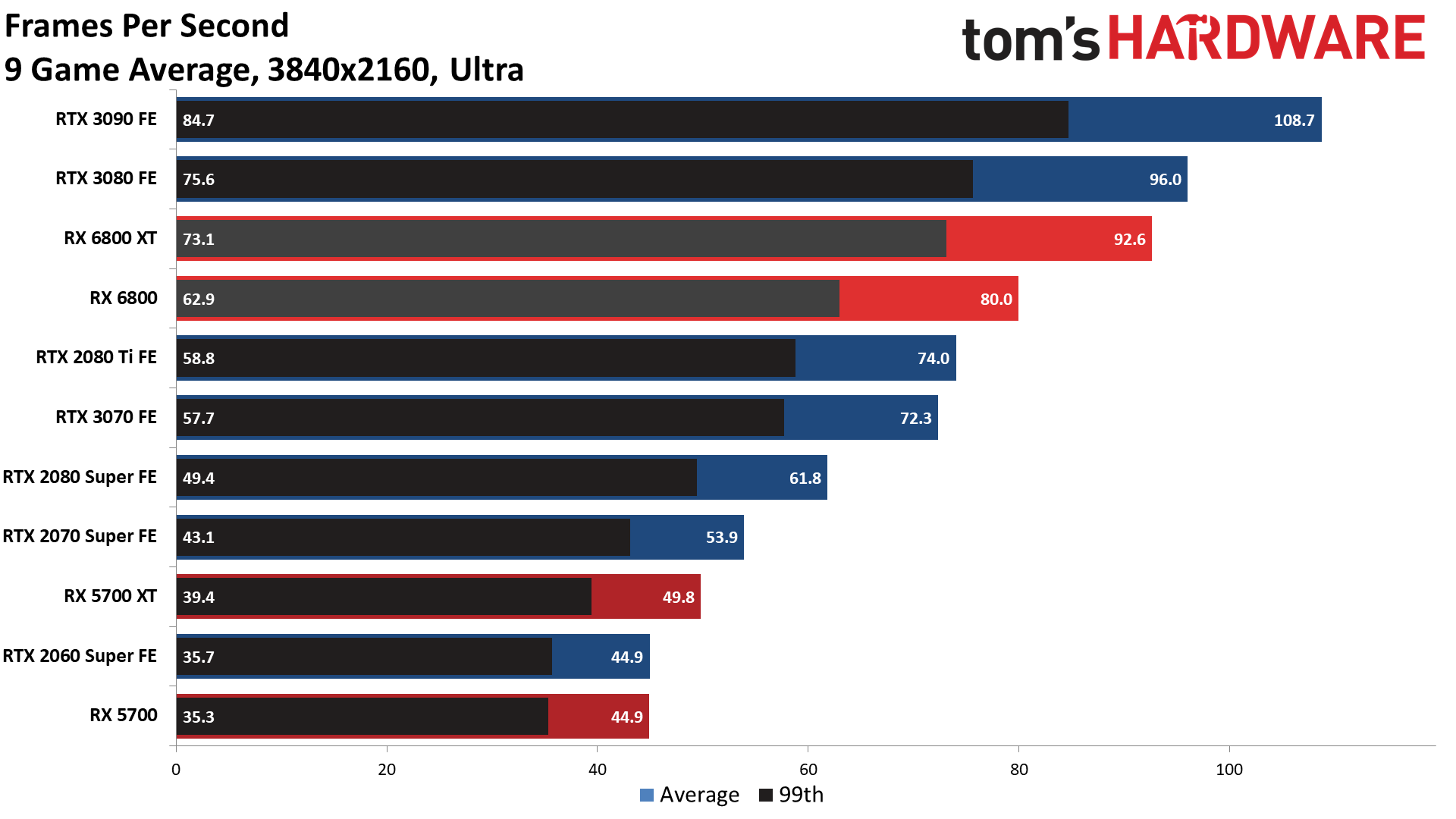

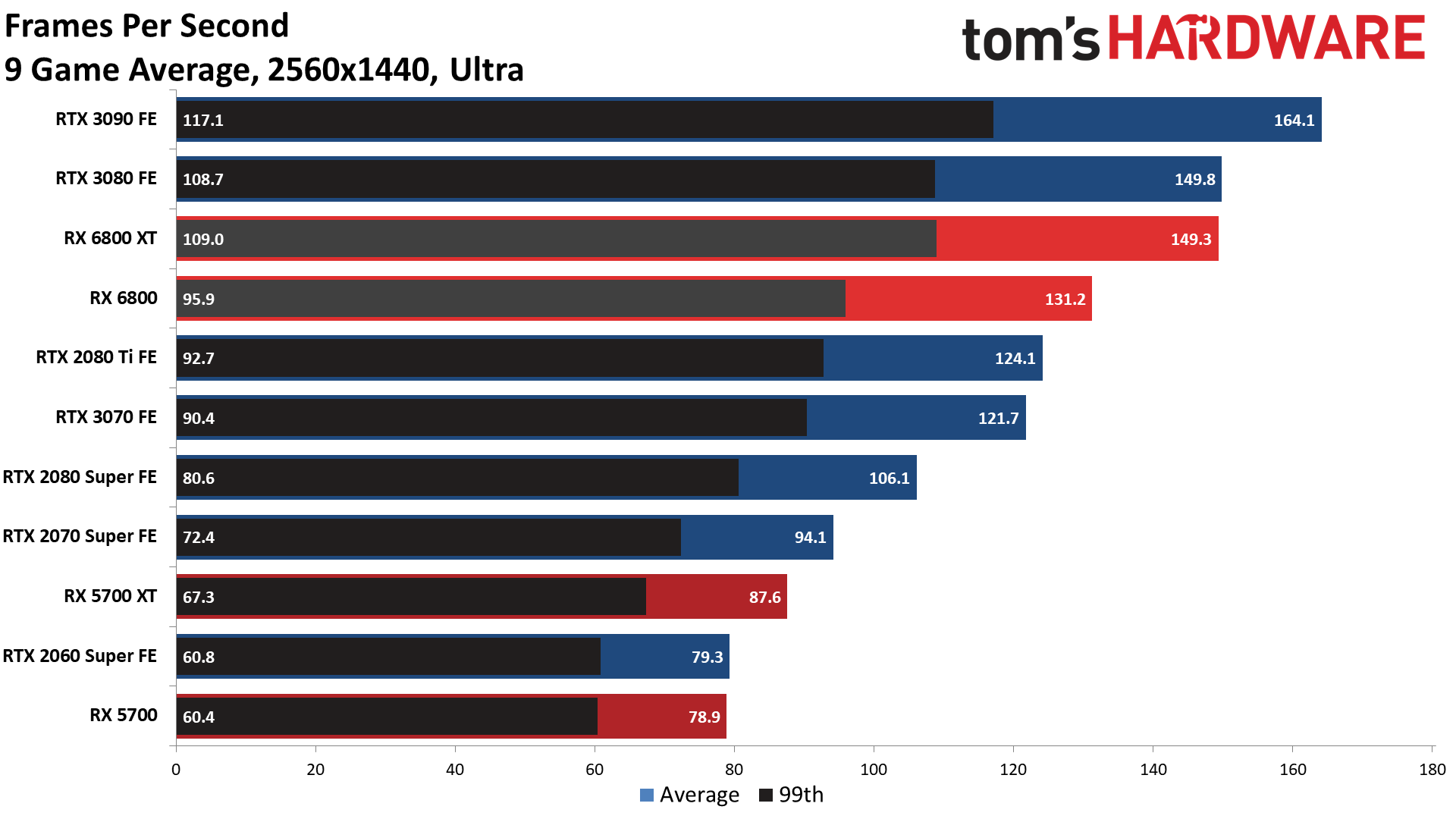

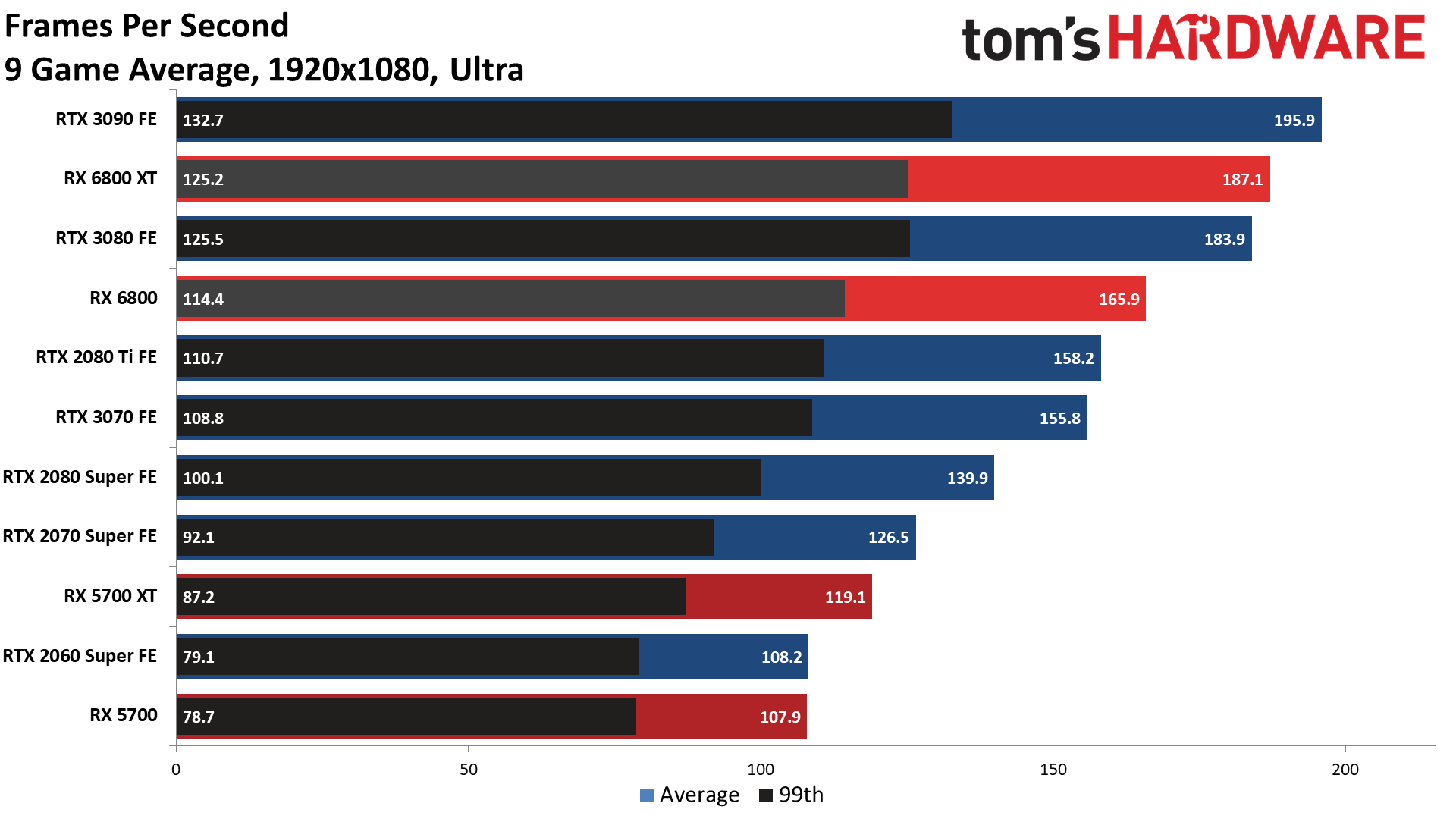

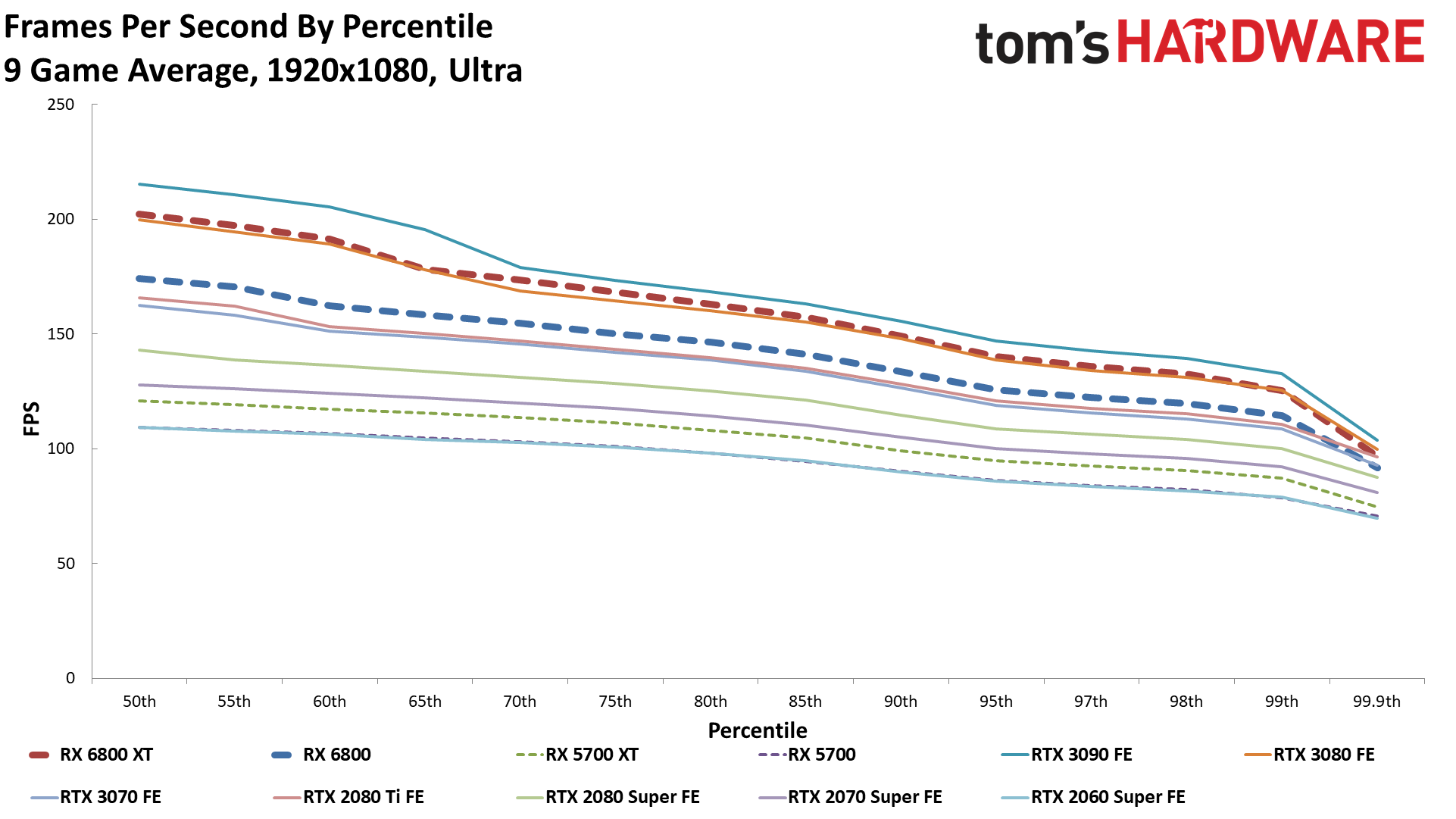

9-Game Average

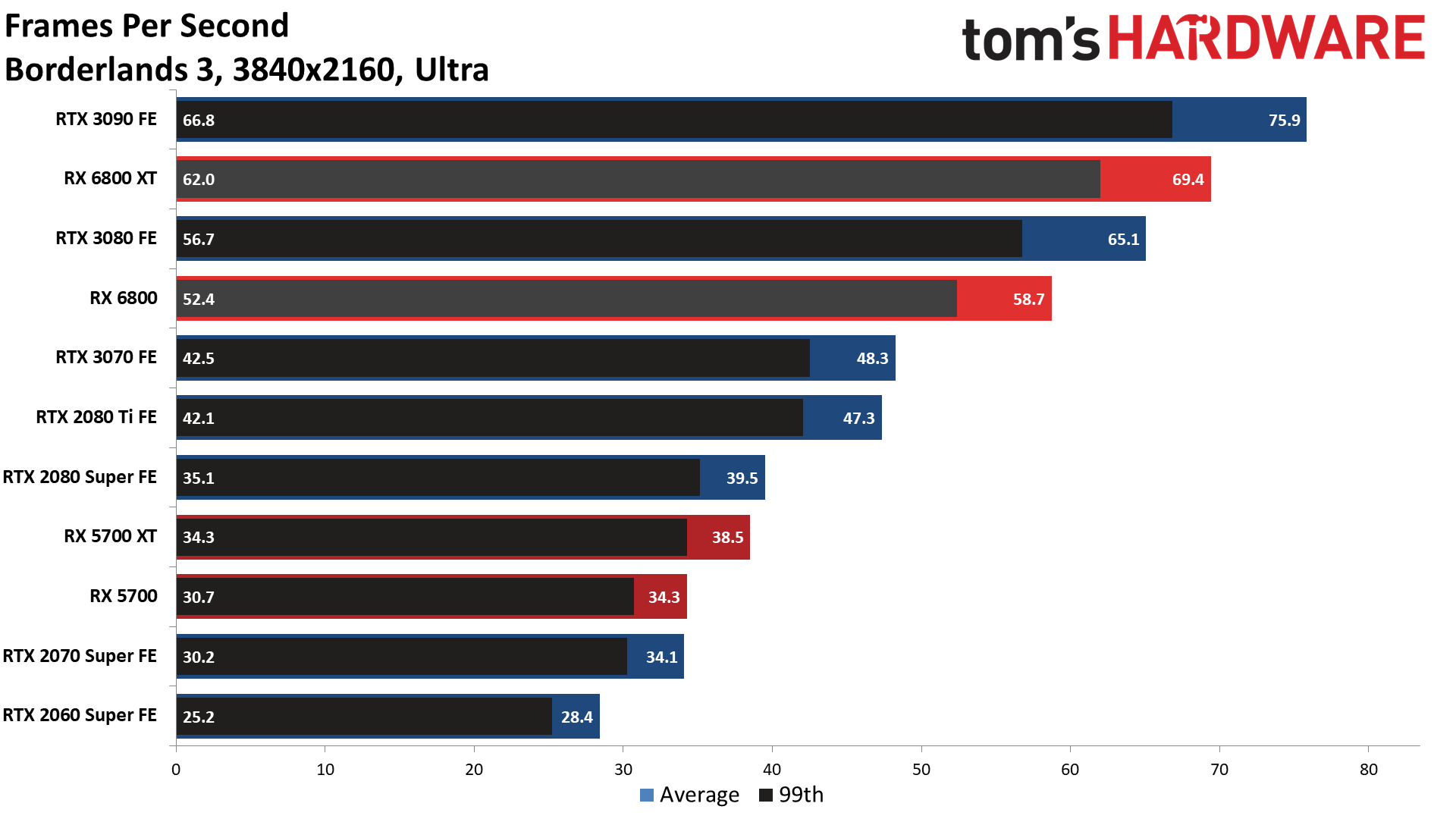

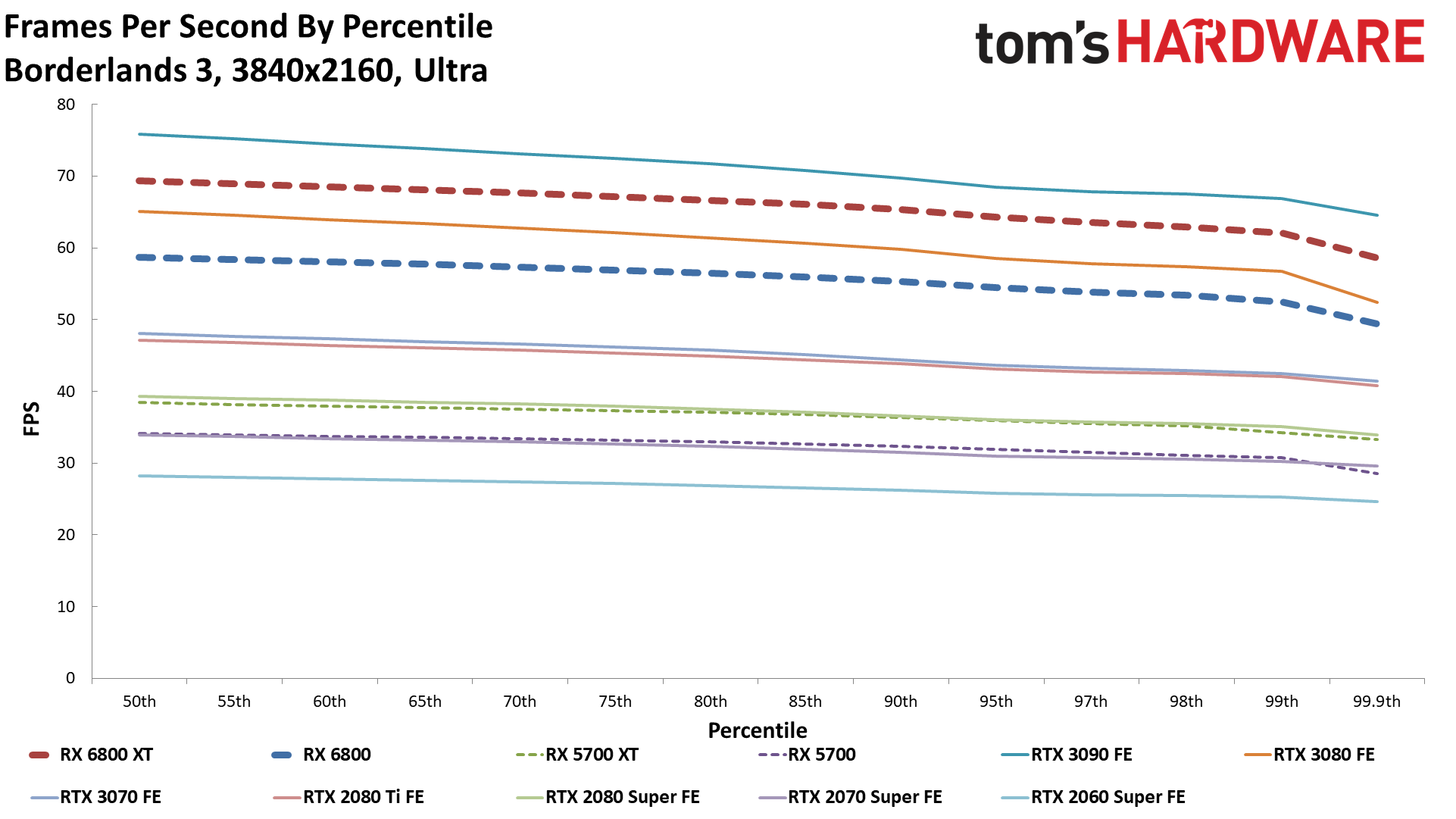

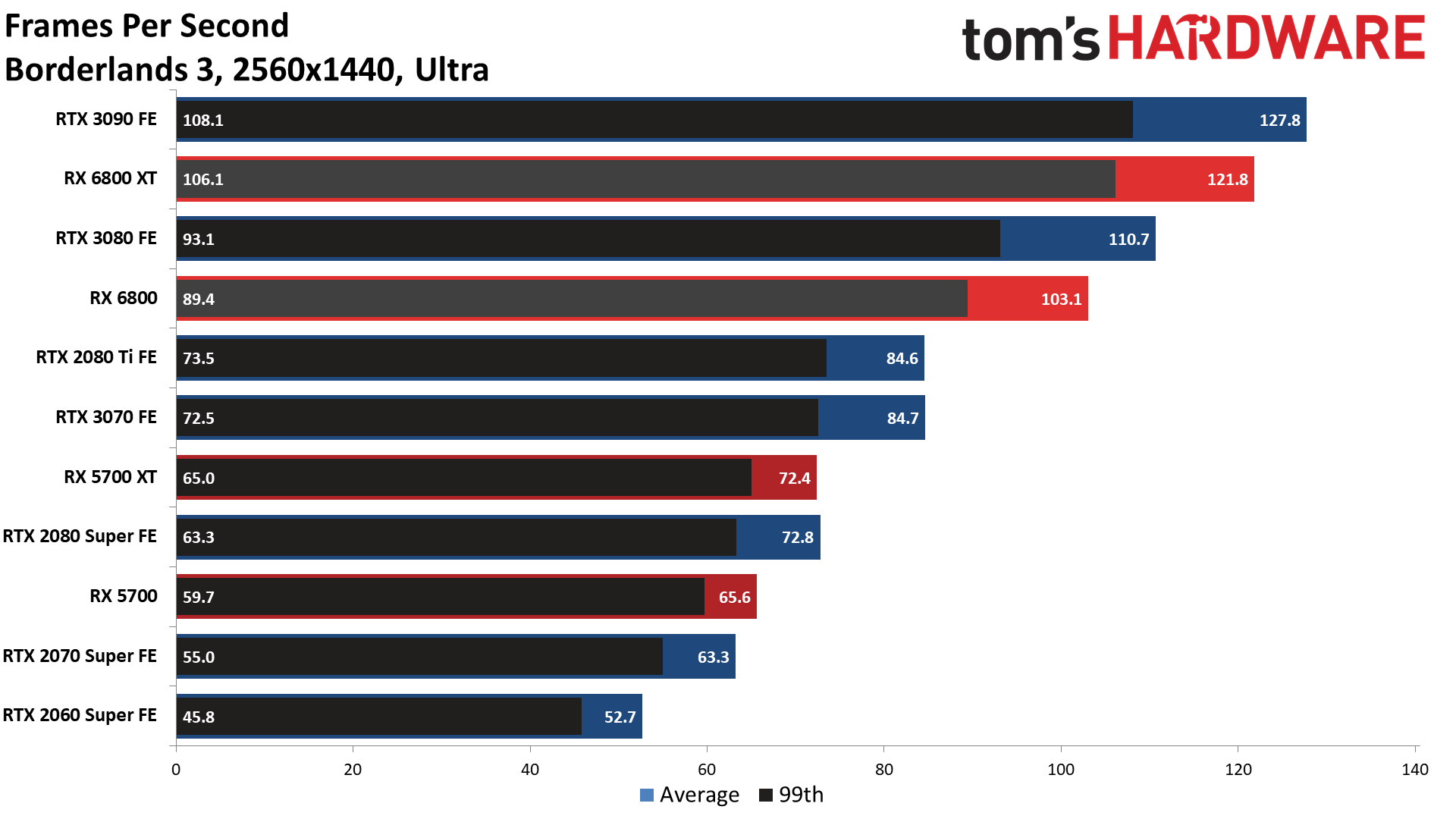

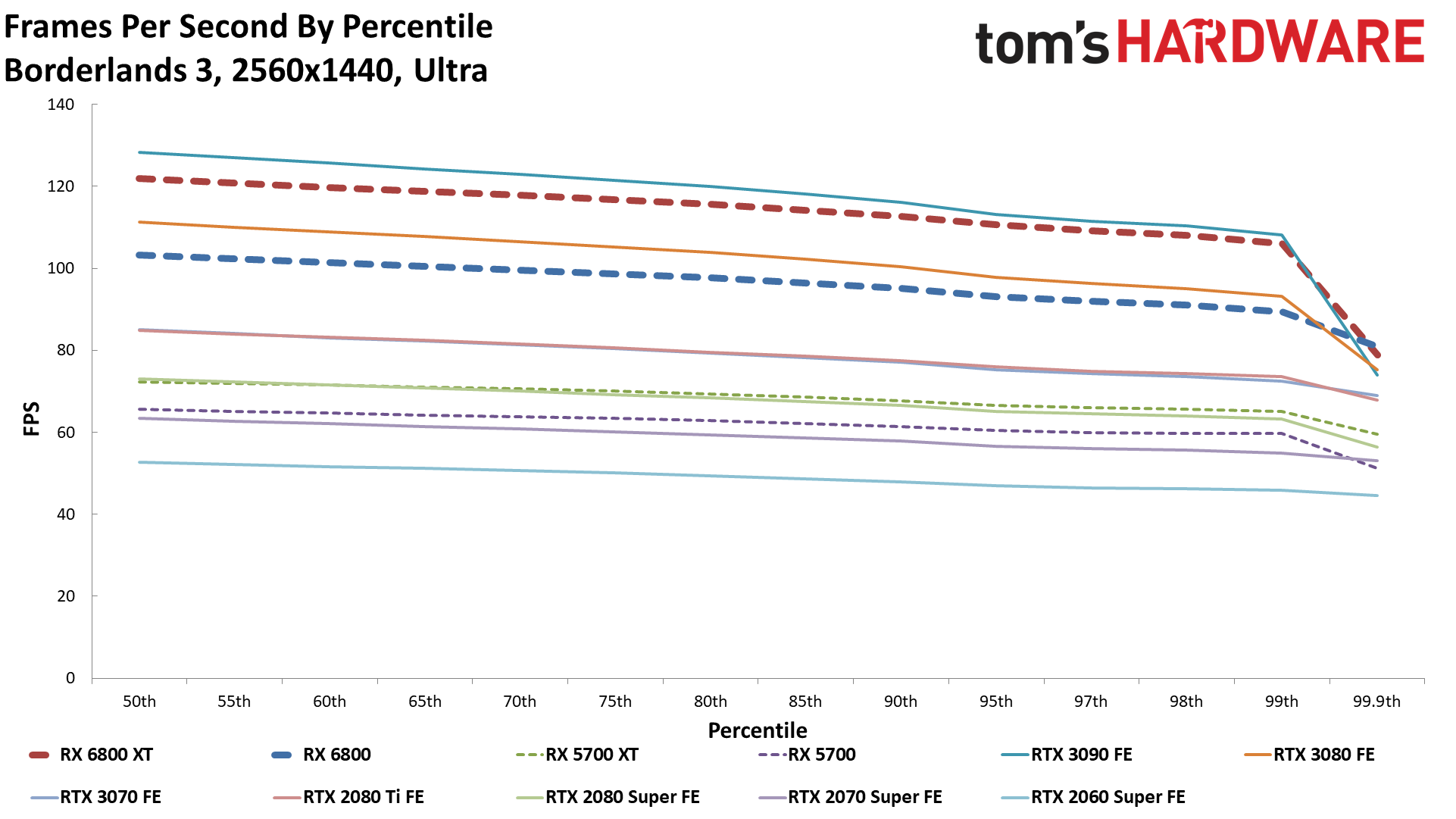

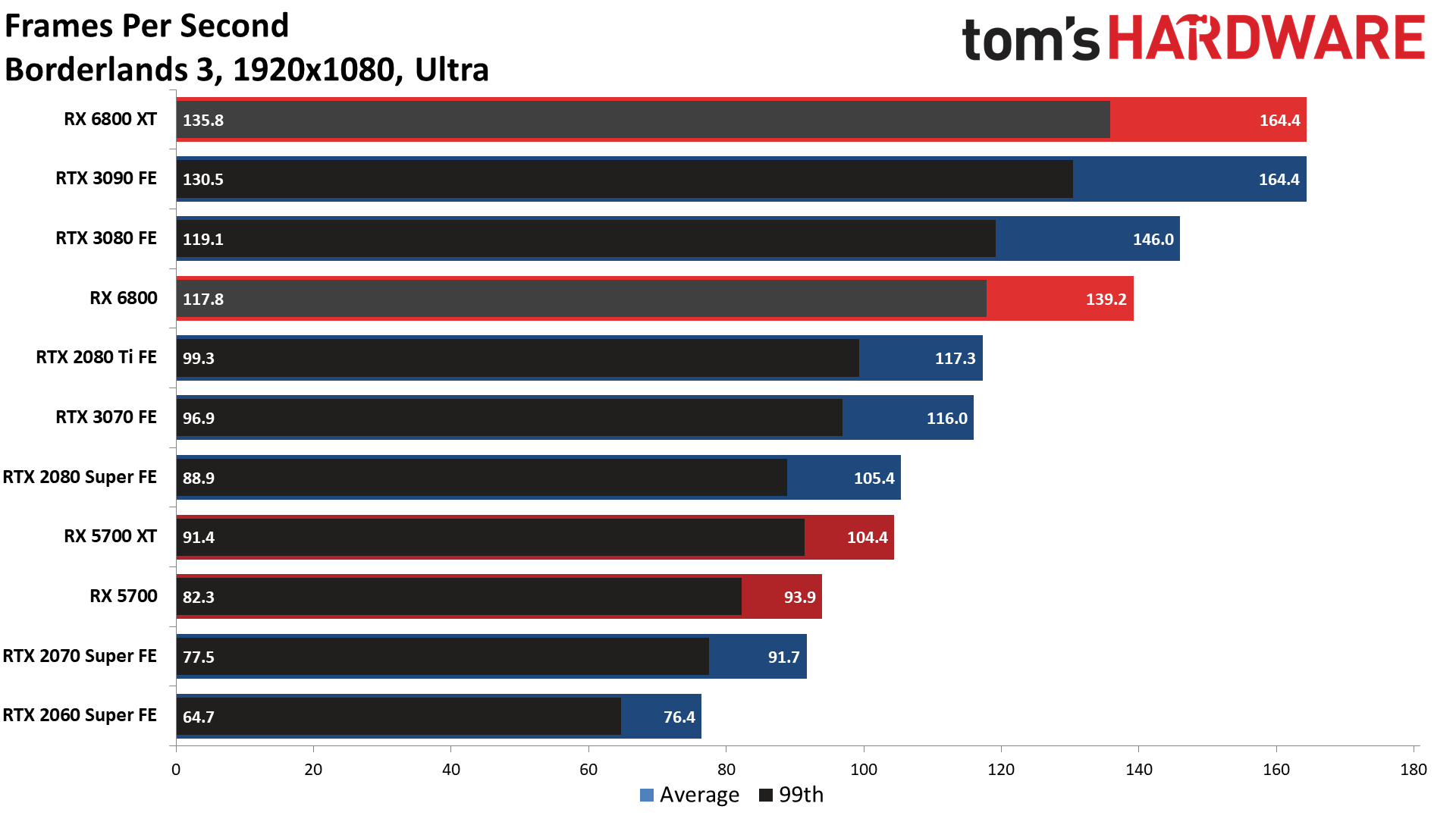

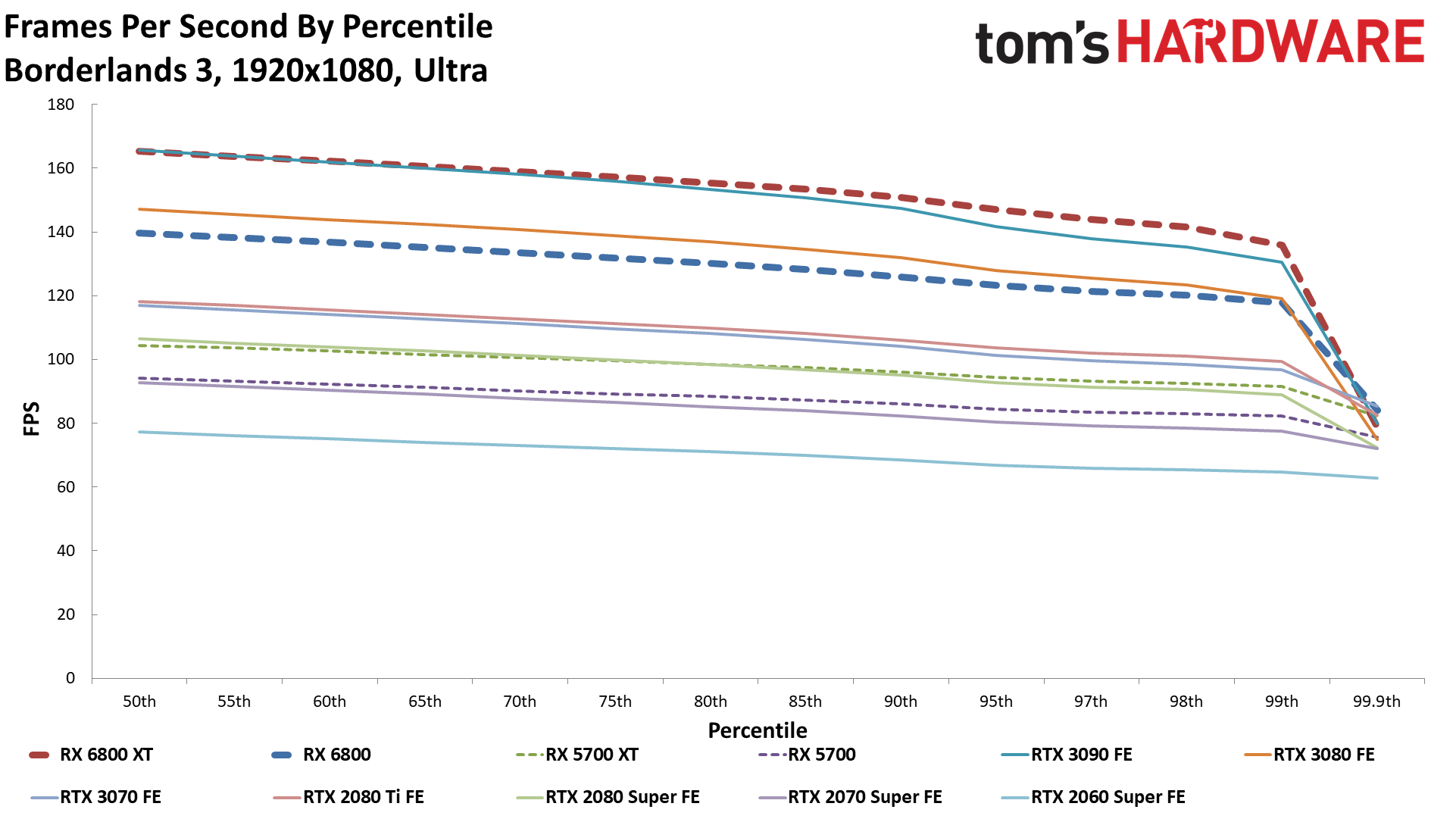

Borderlands 3

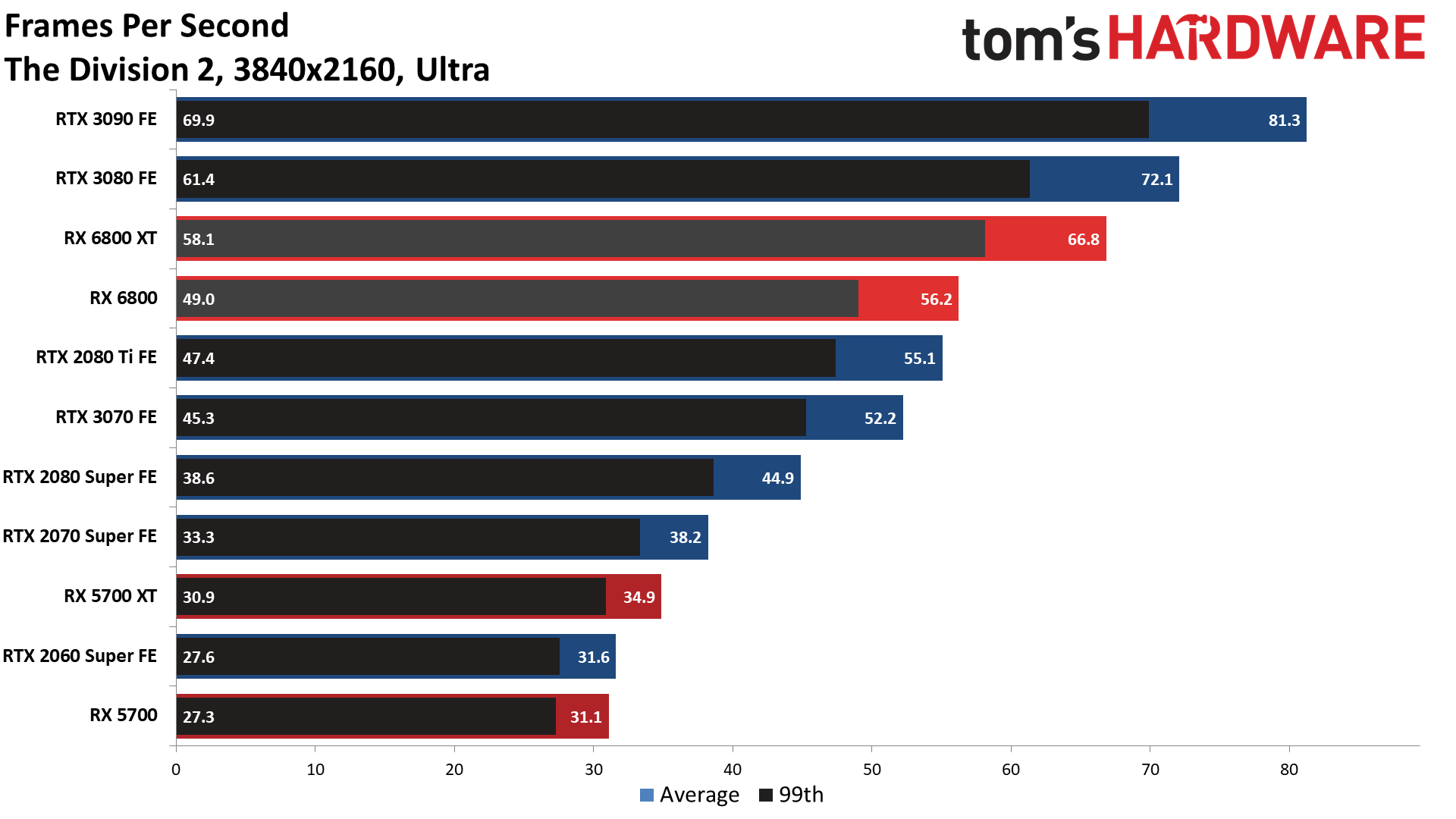

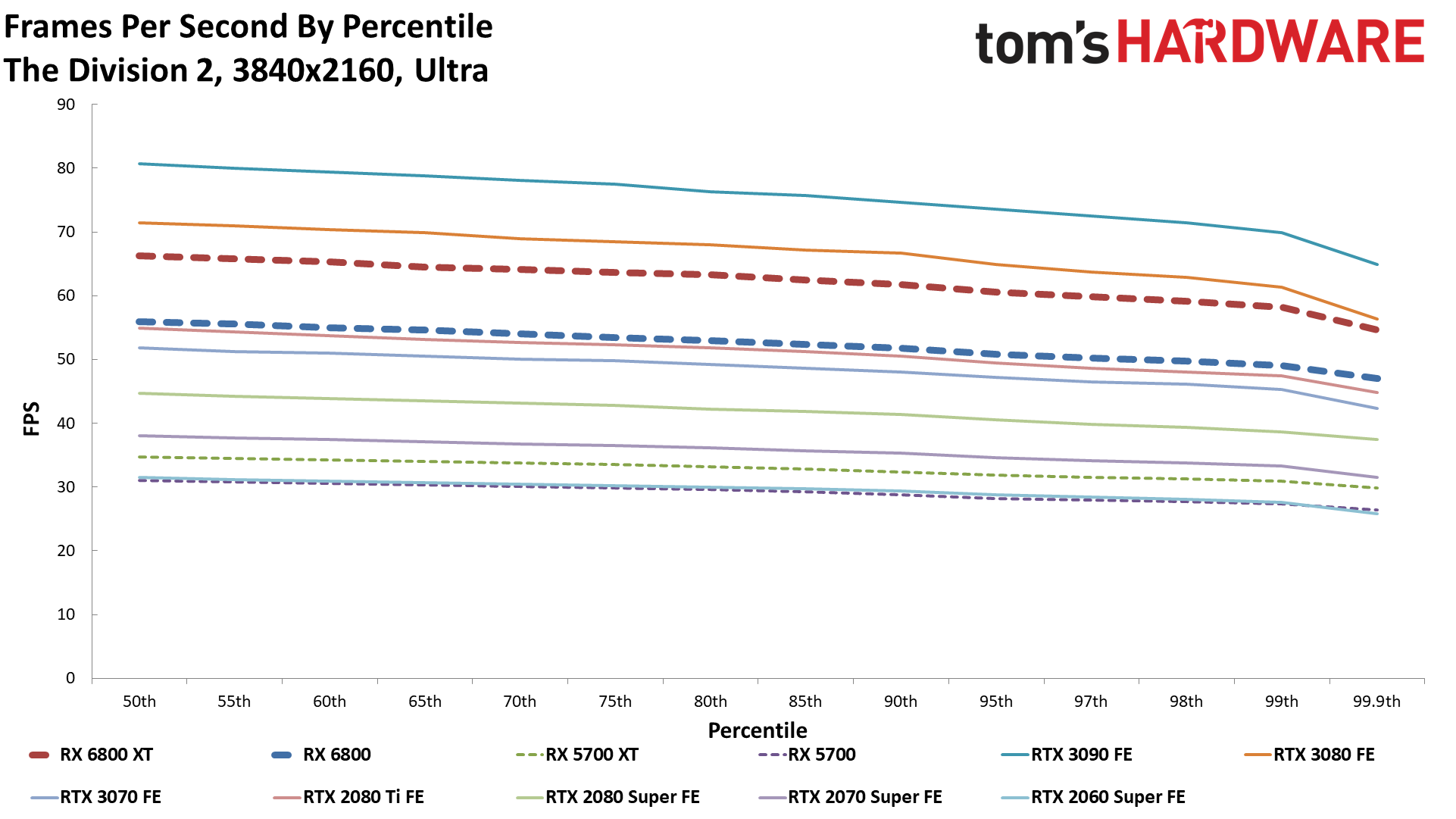

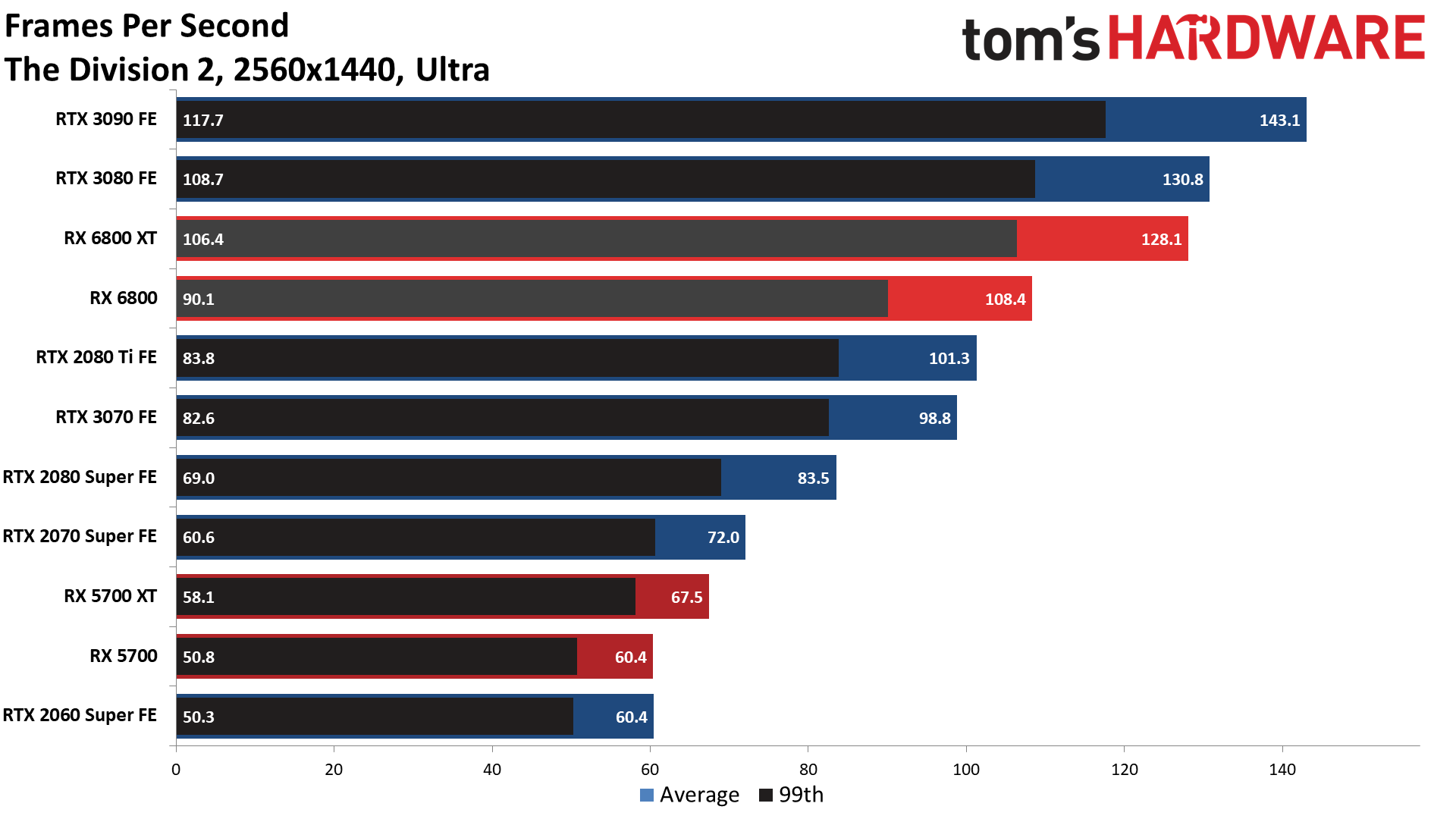

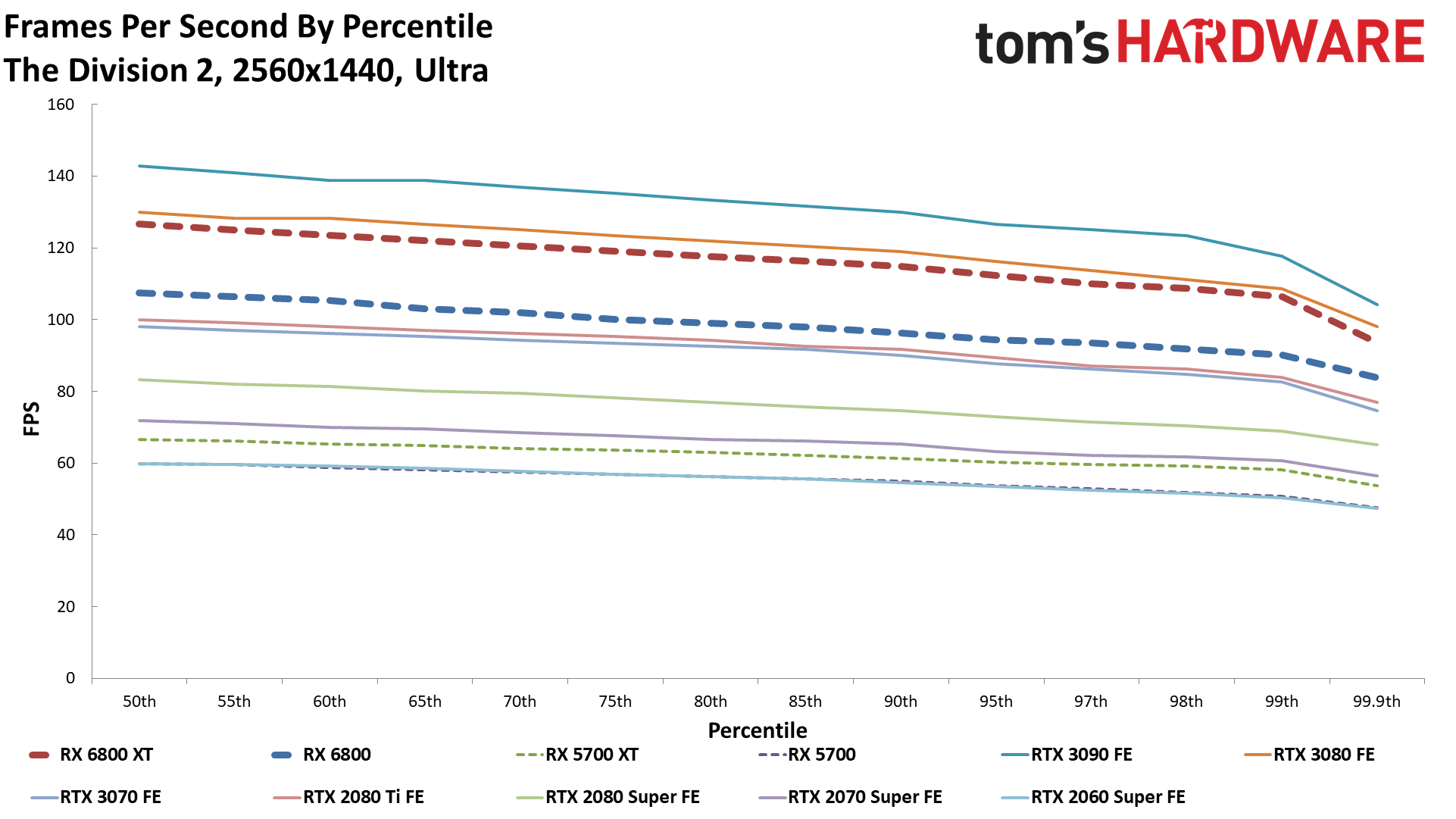

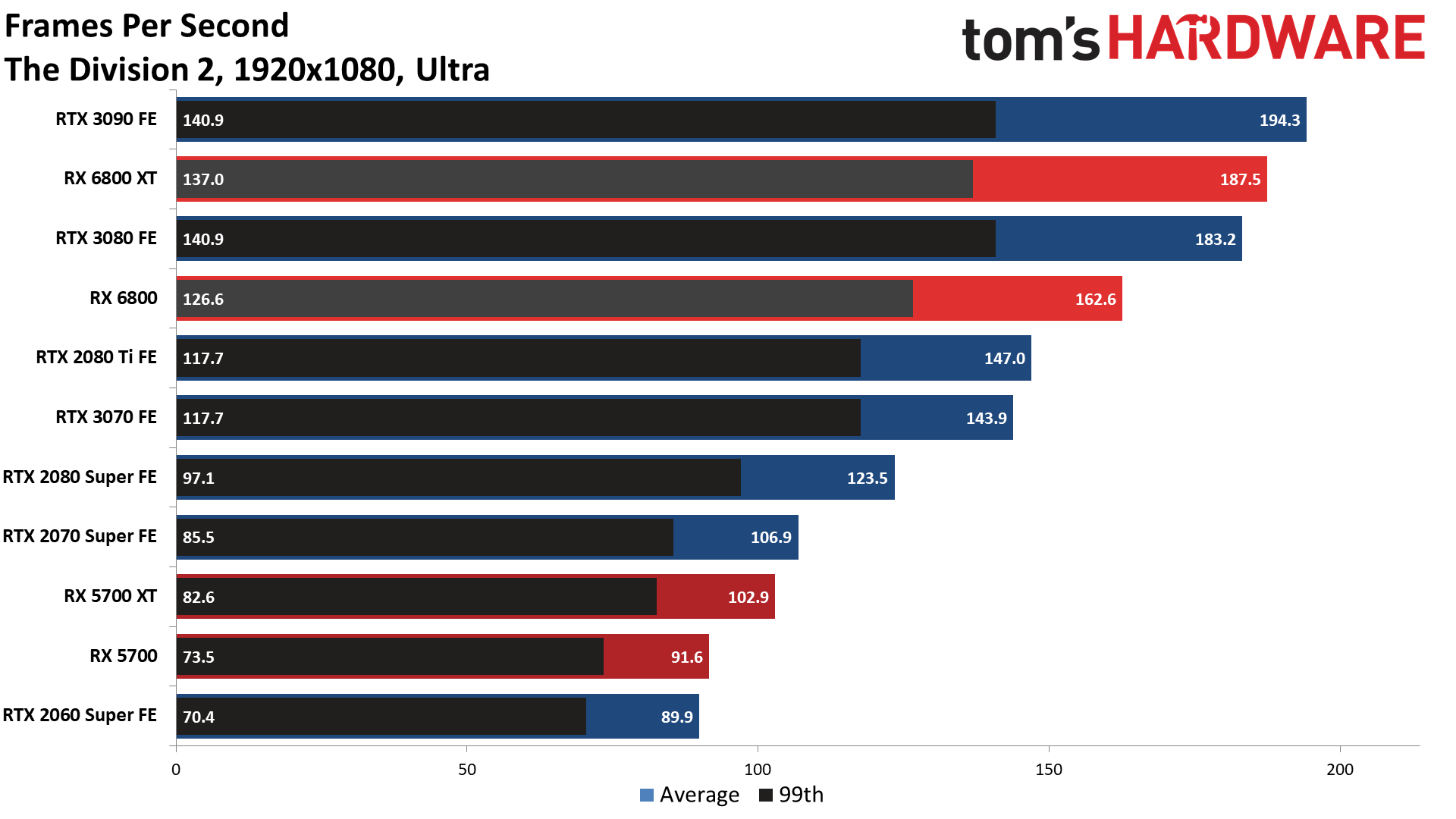

The Division 2

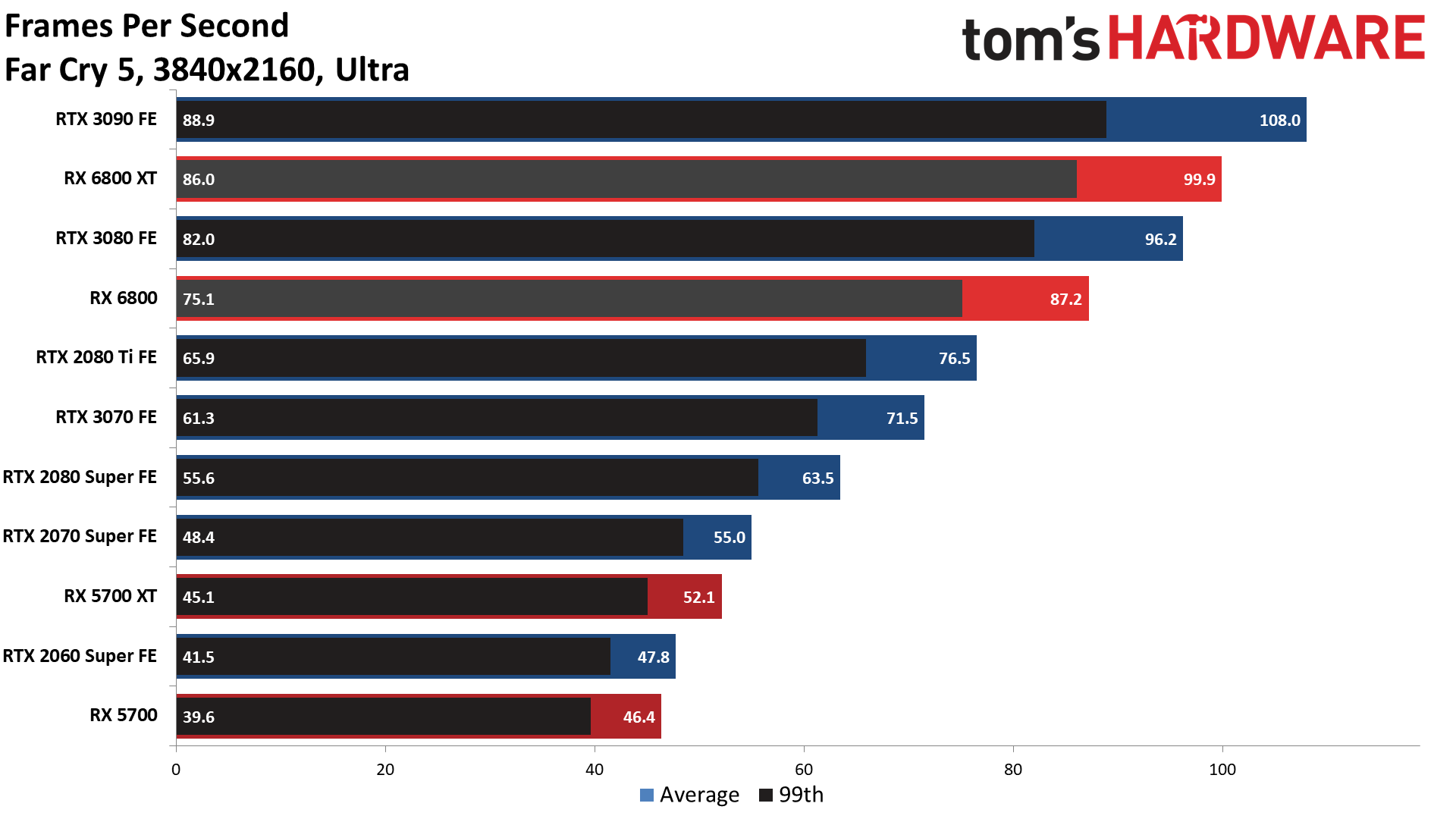

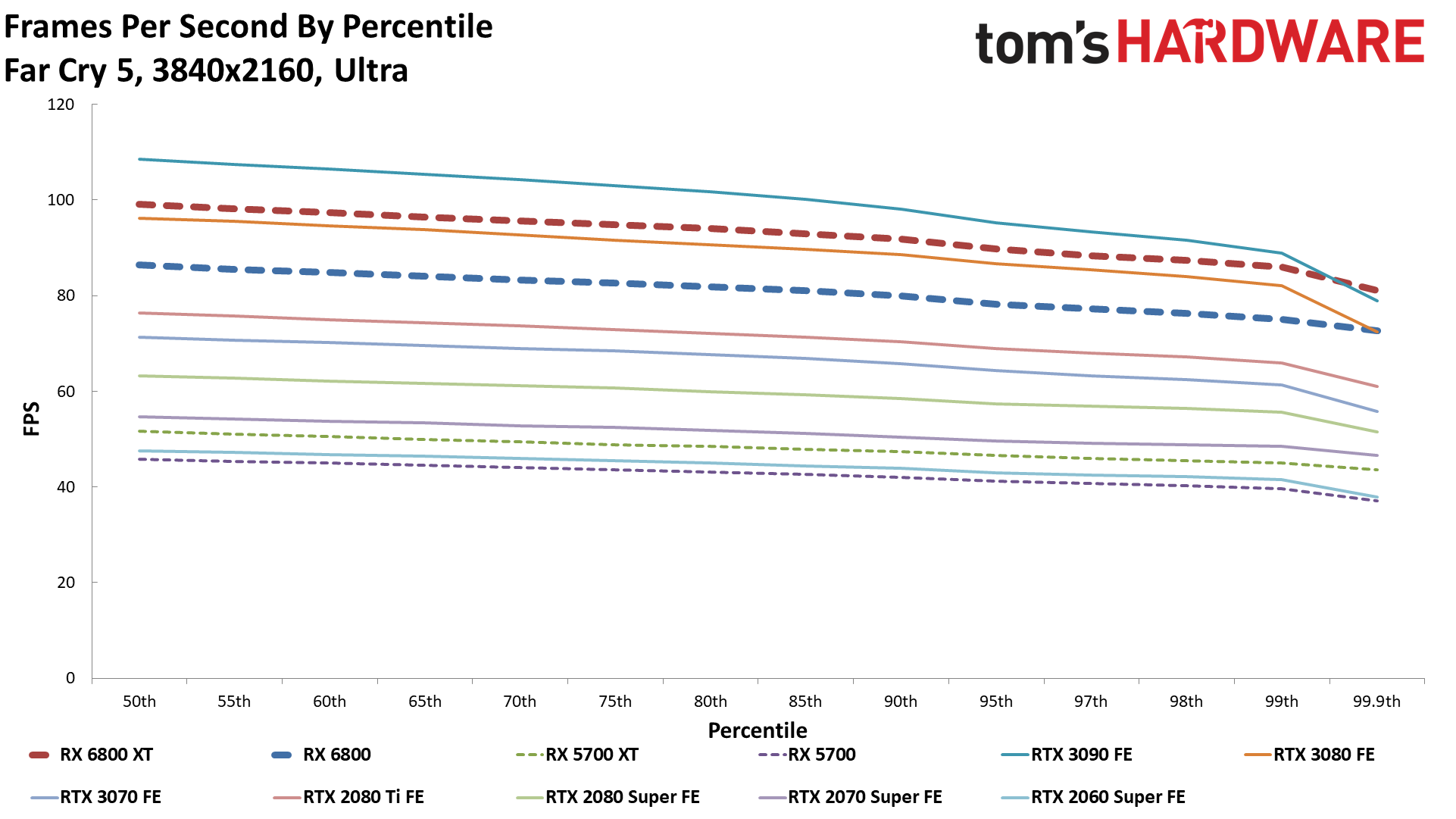

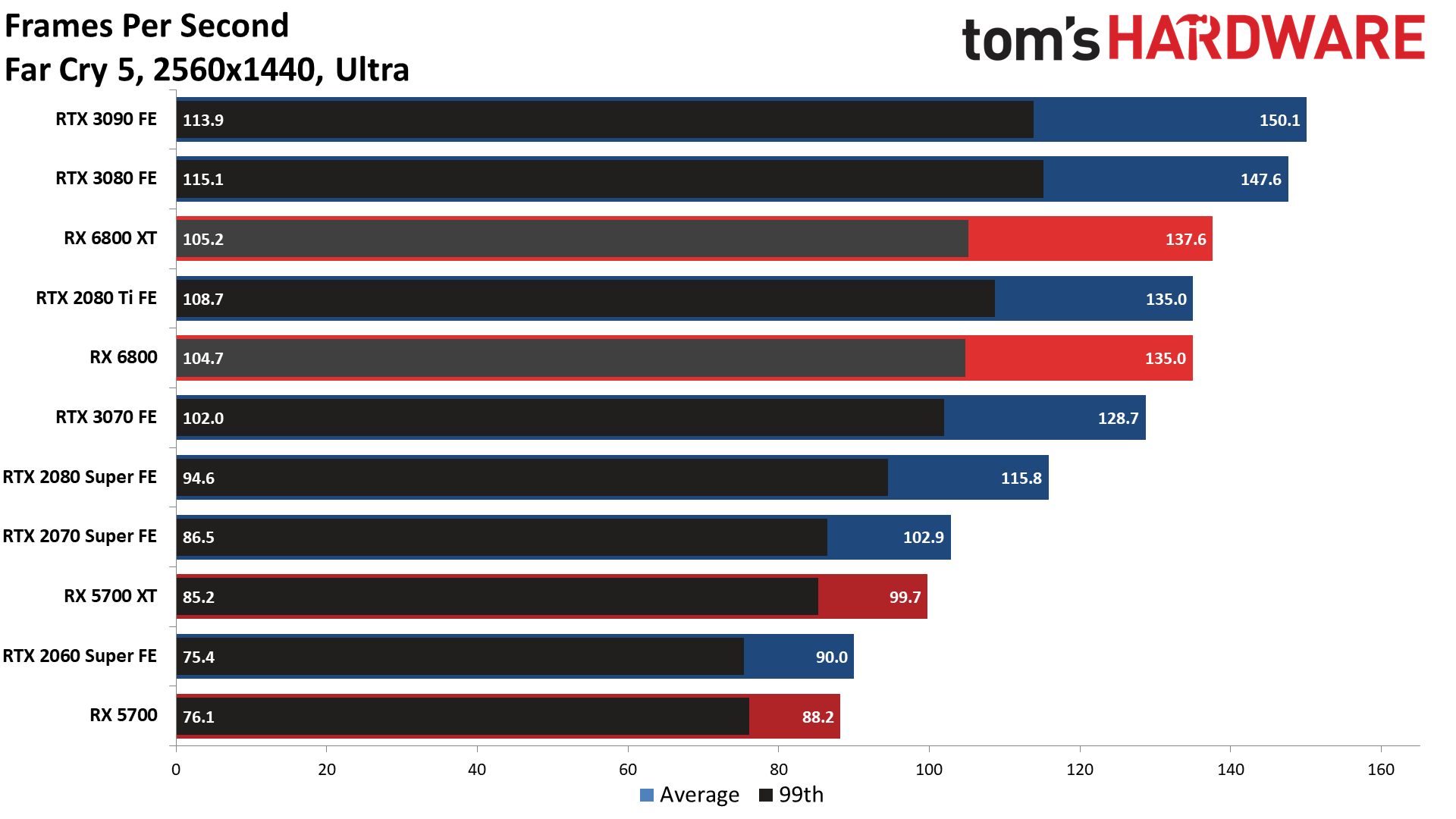

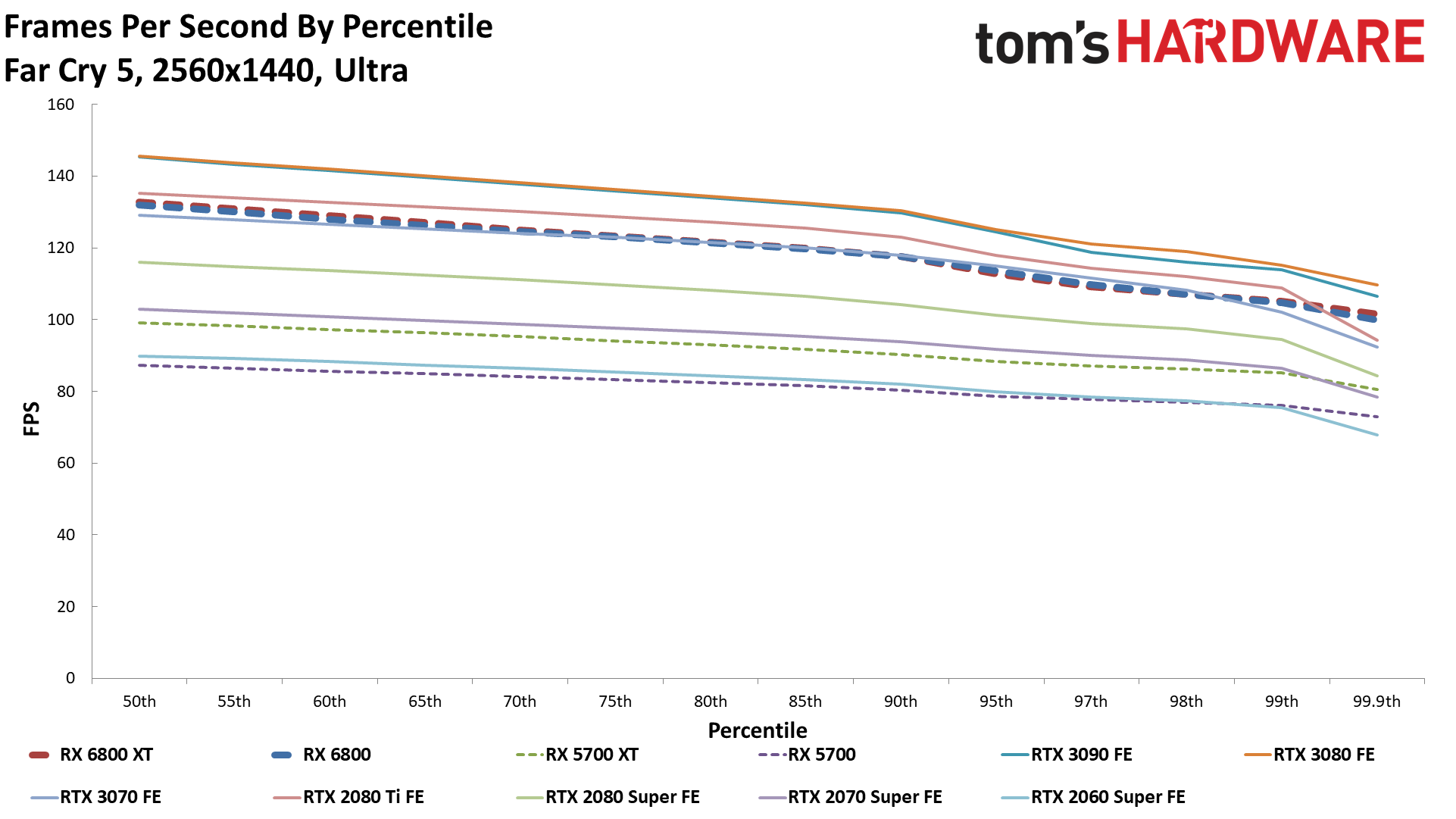

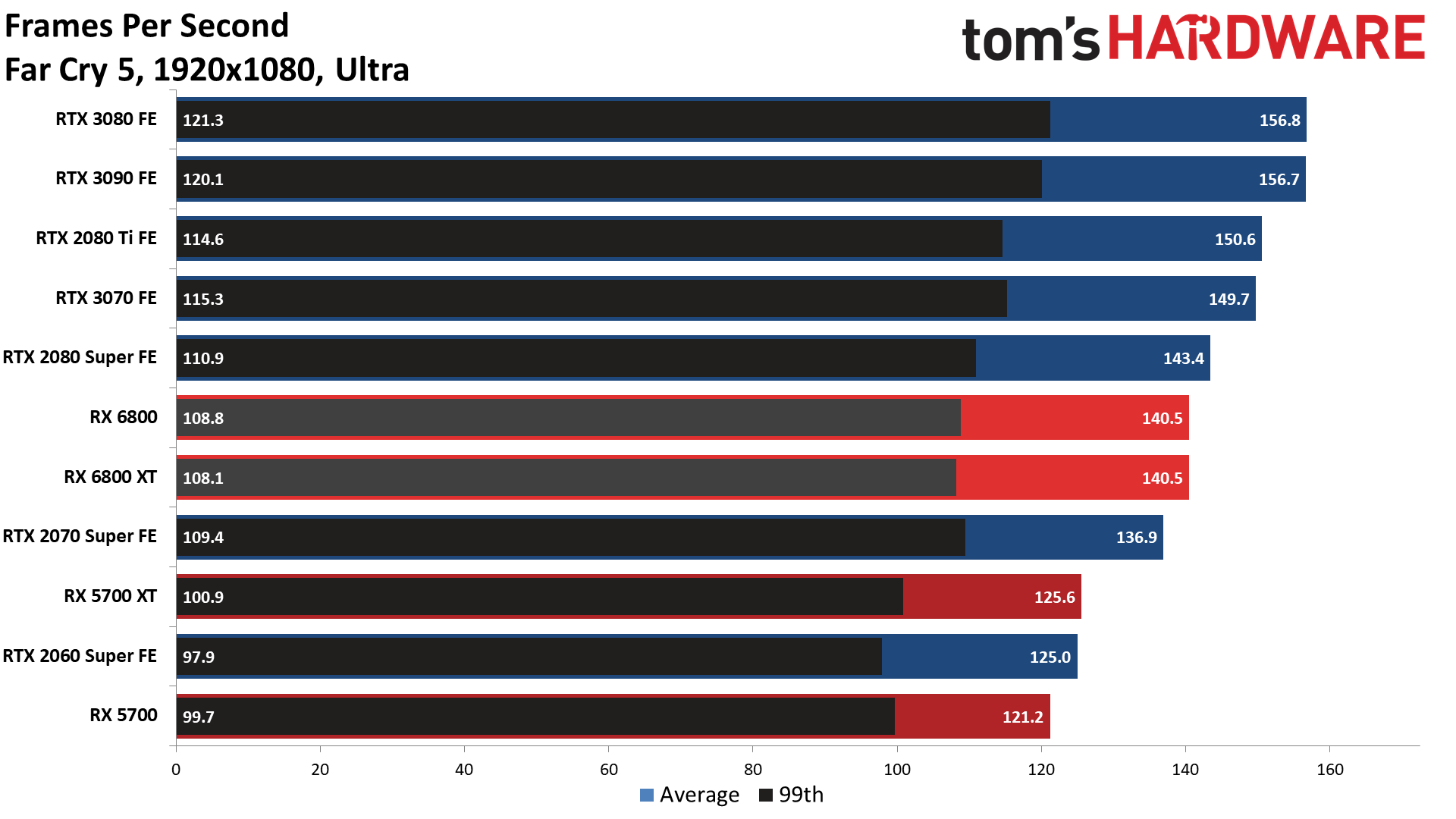

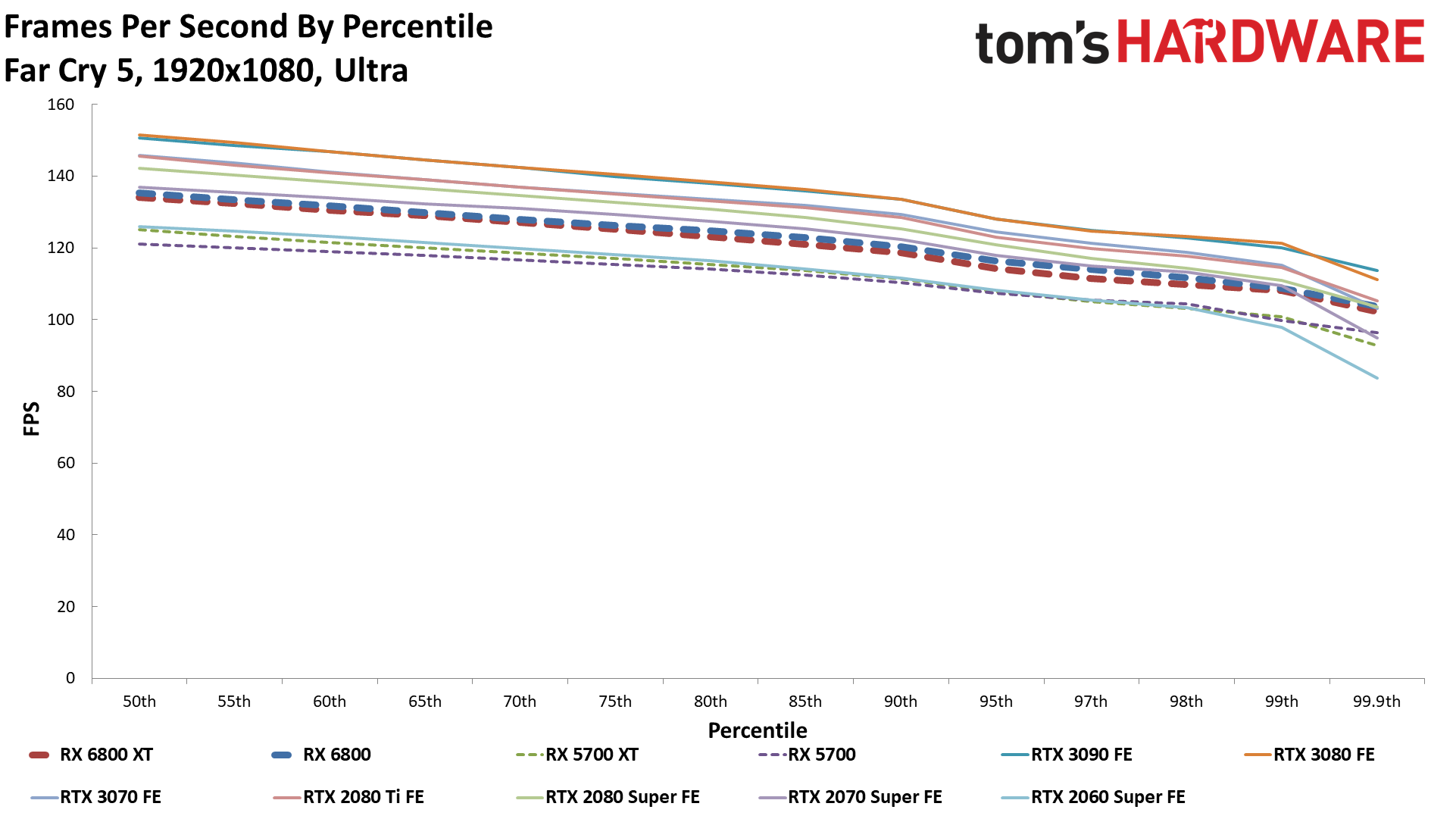

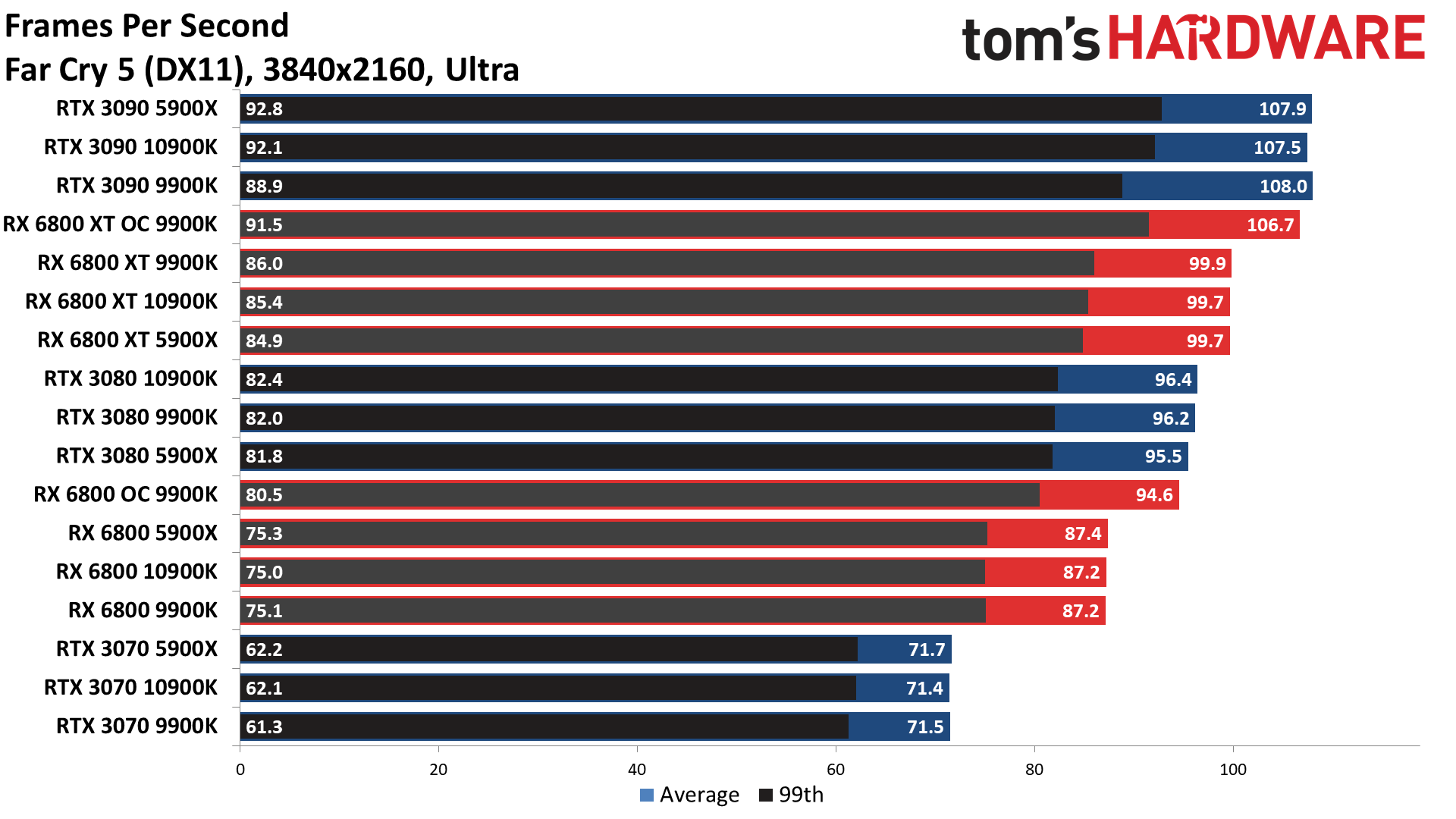

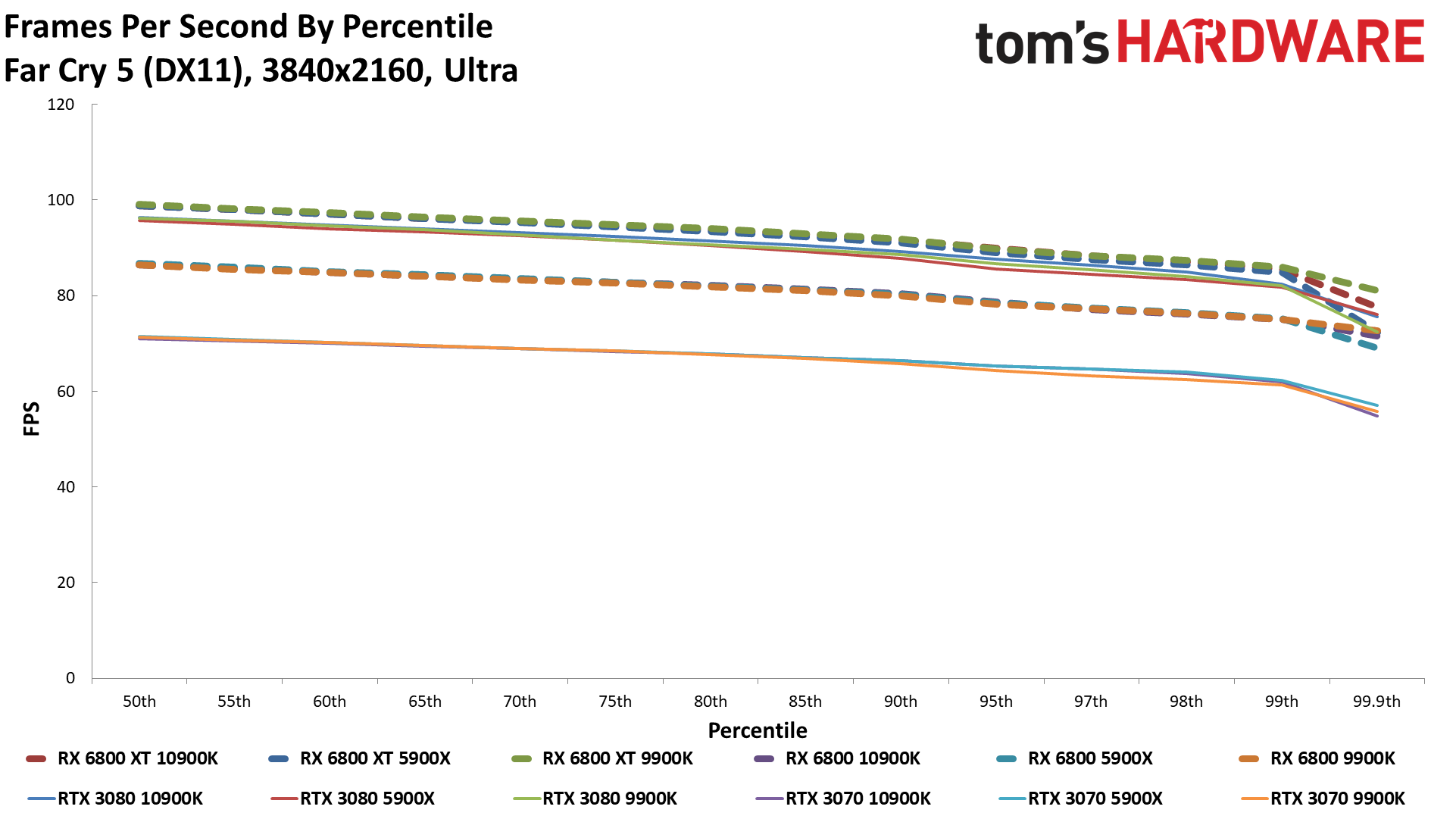

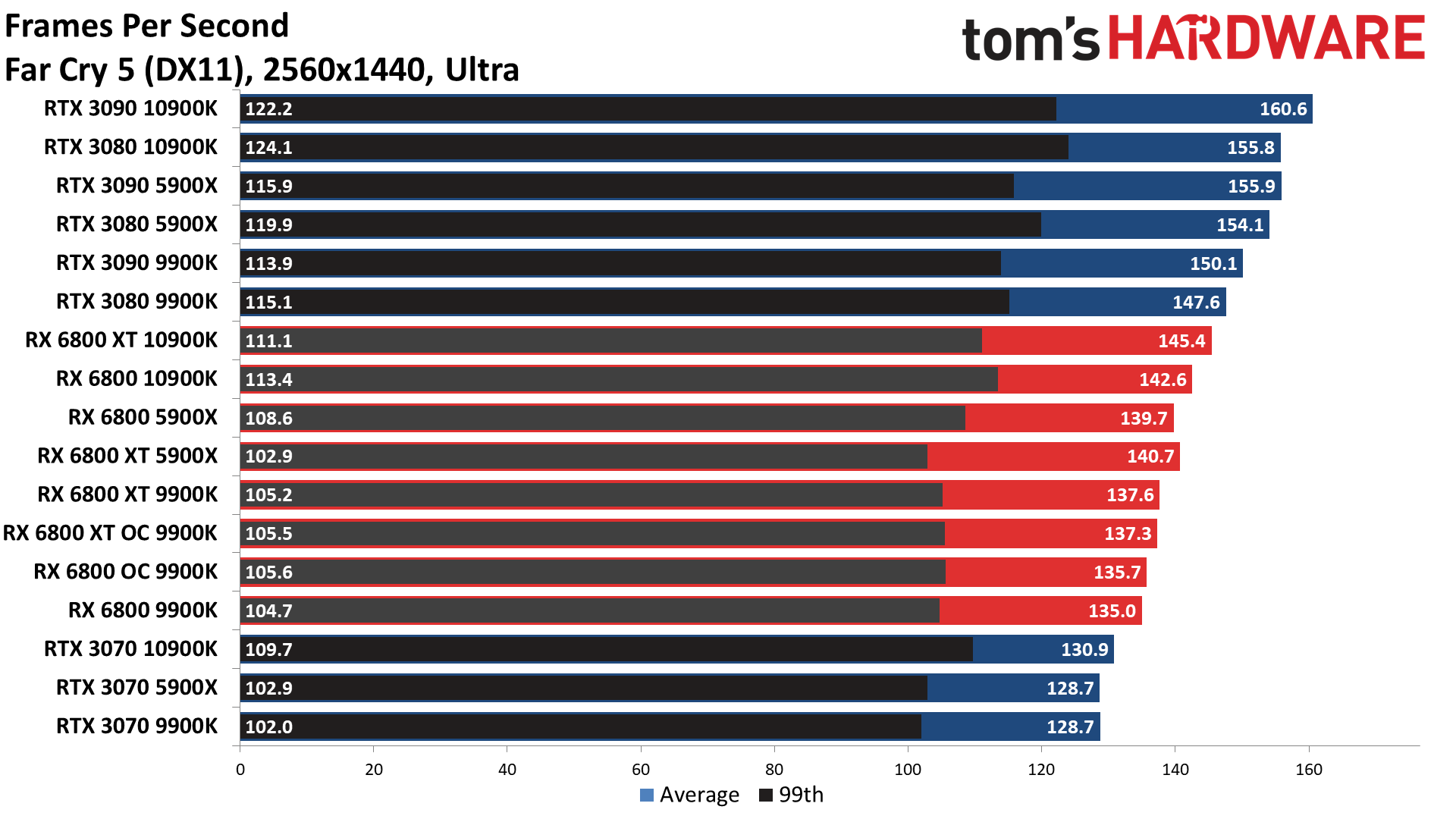

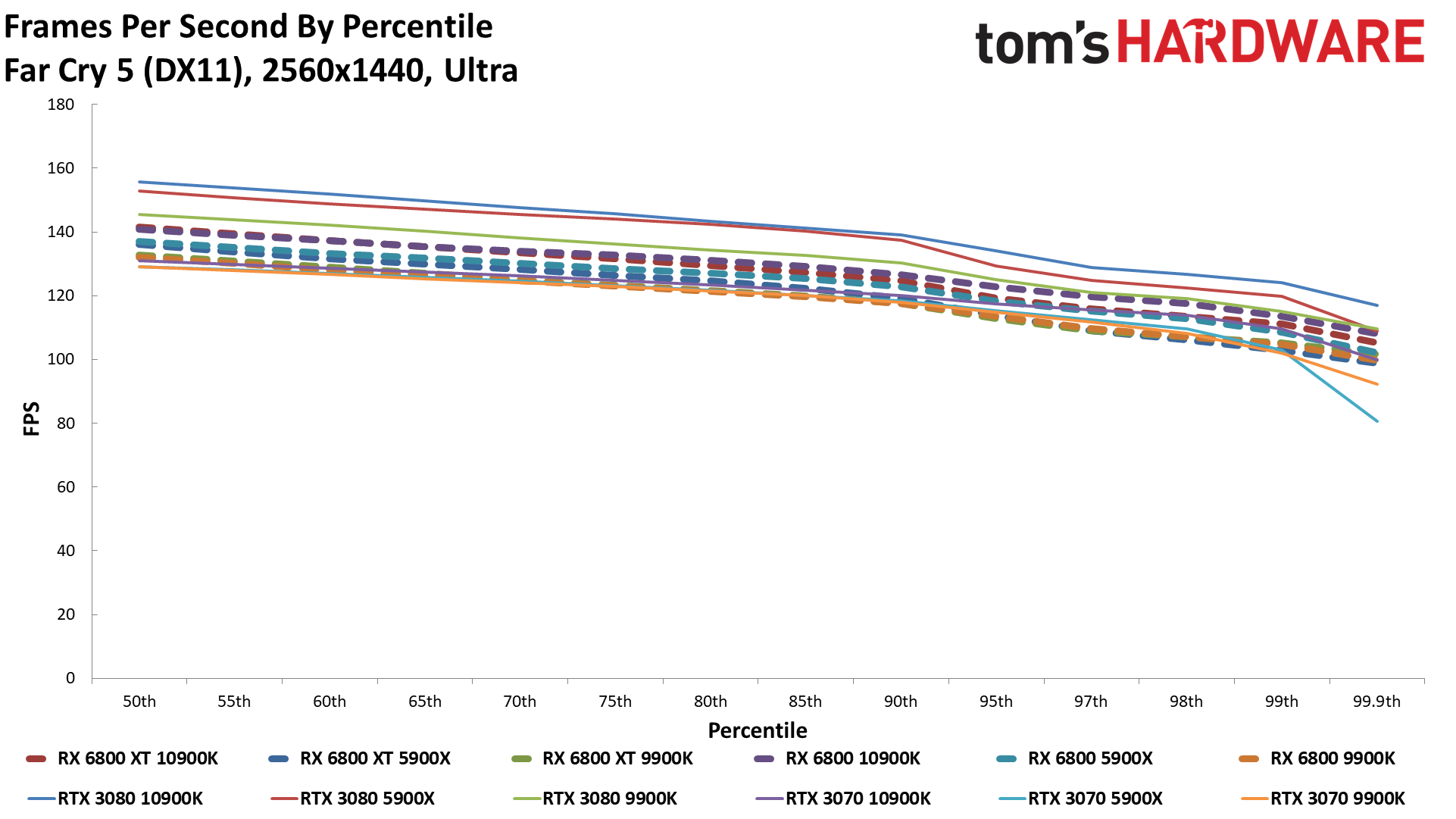

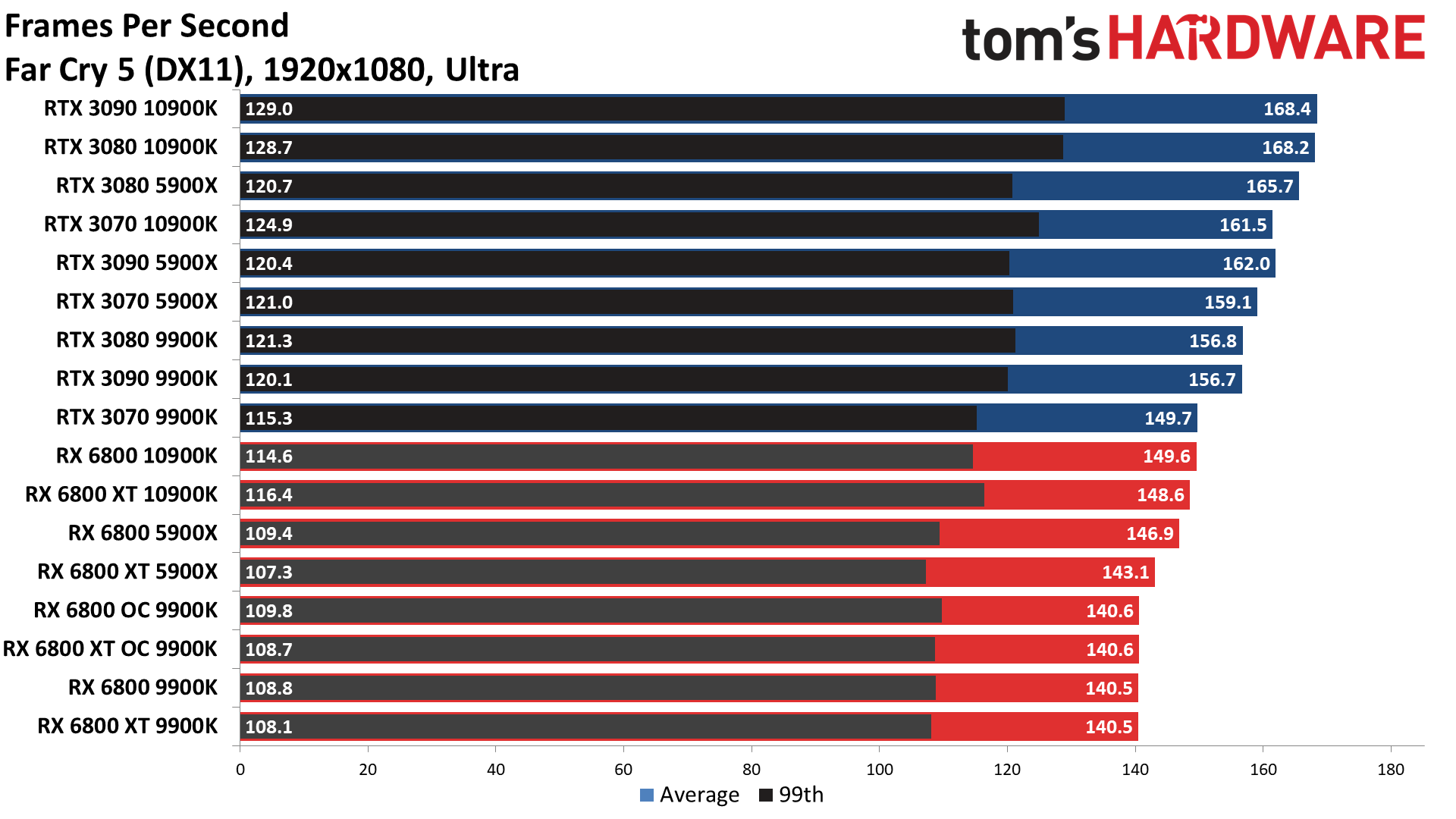

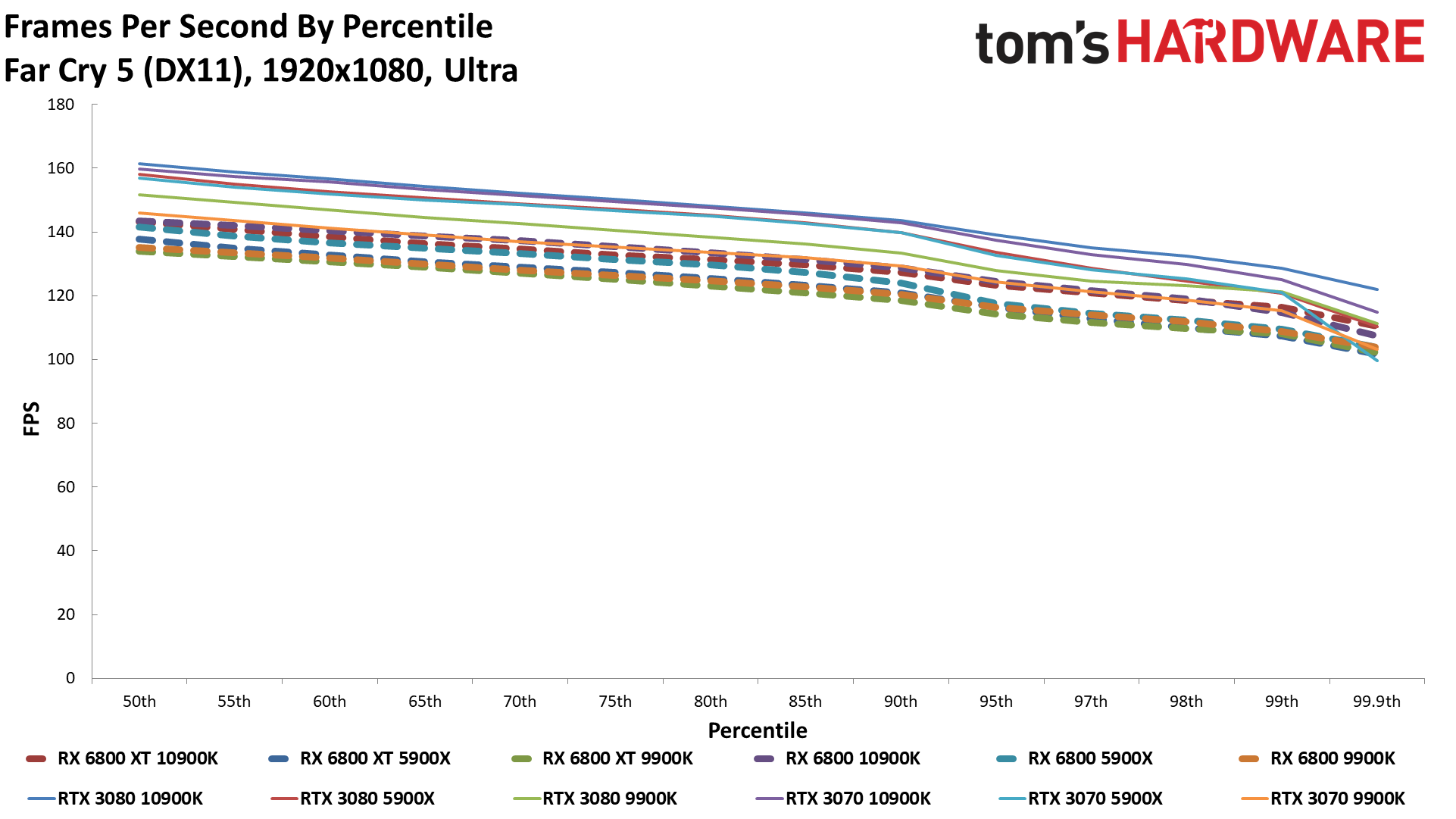

Far Cry 5

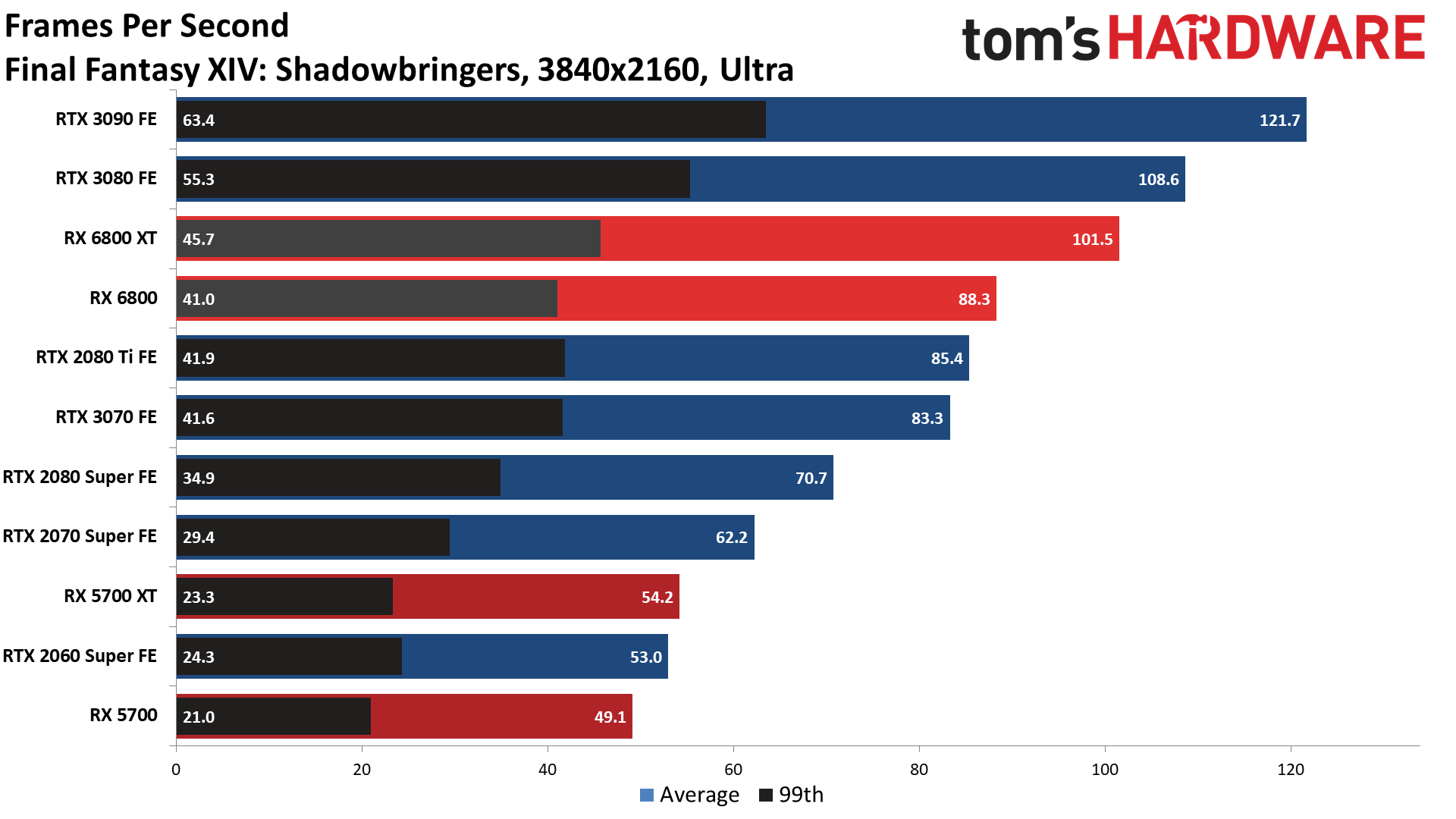

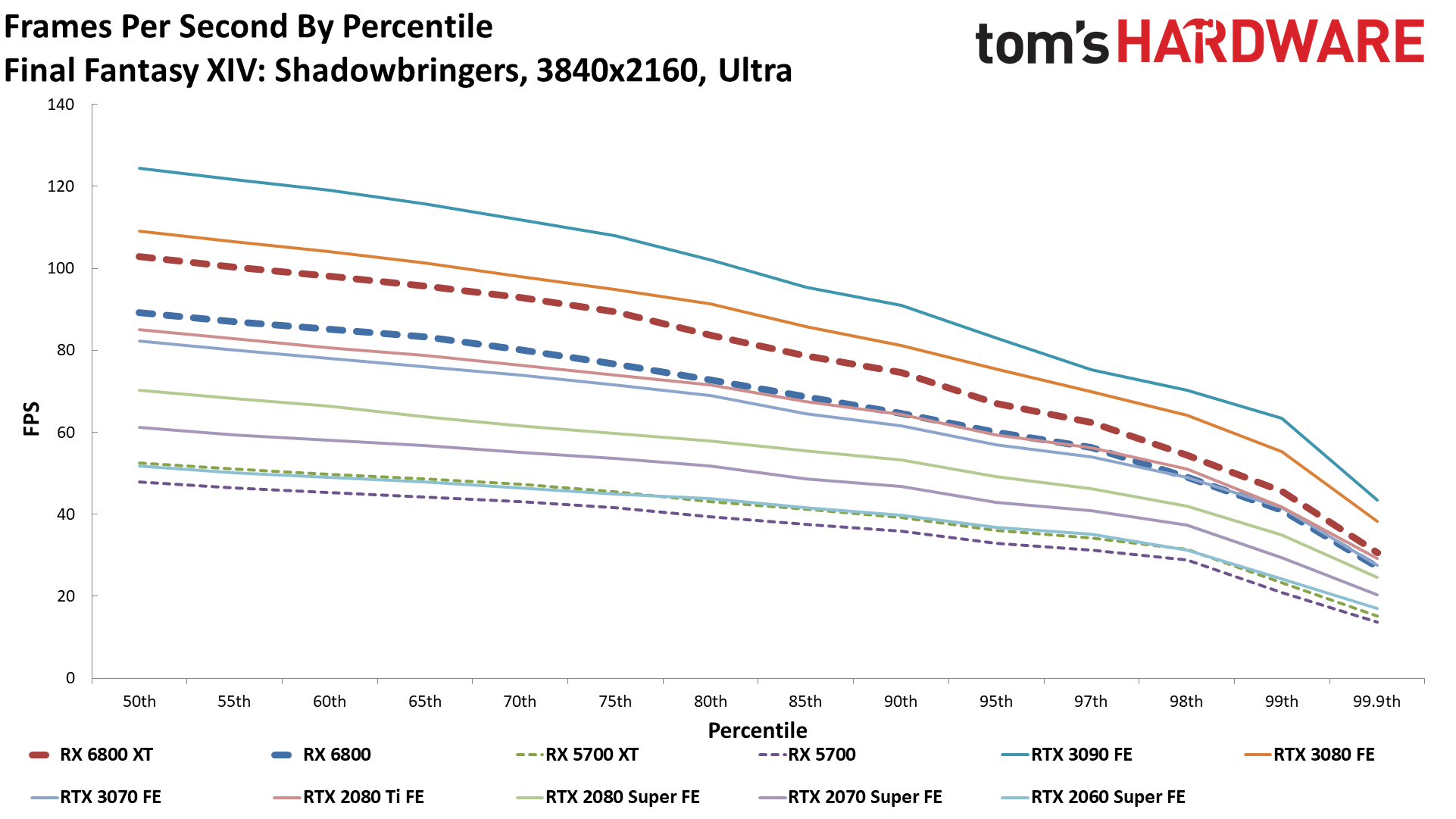

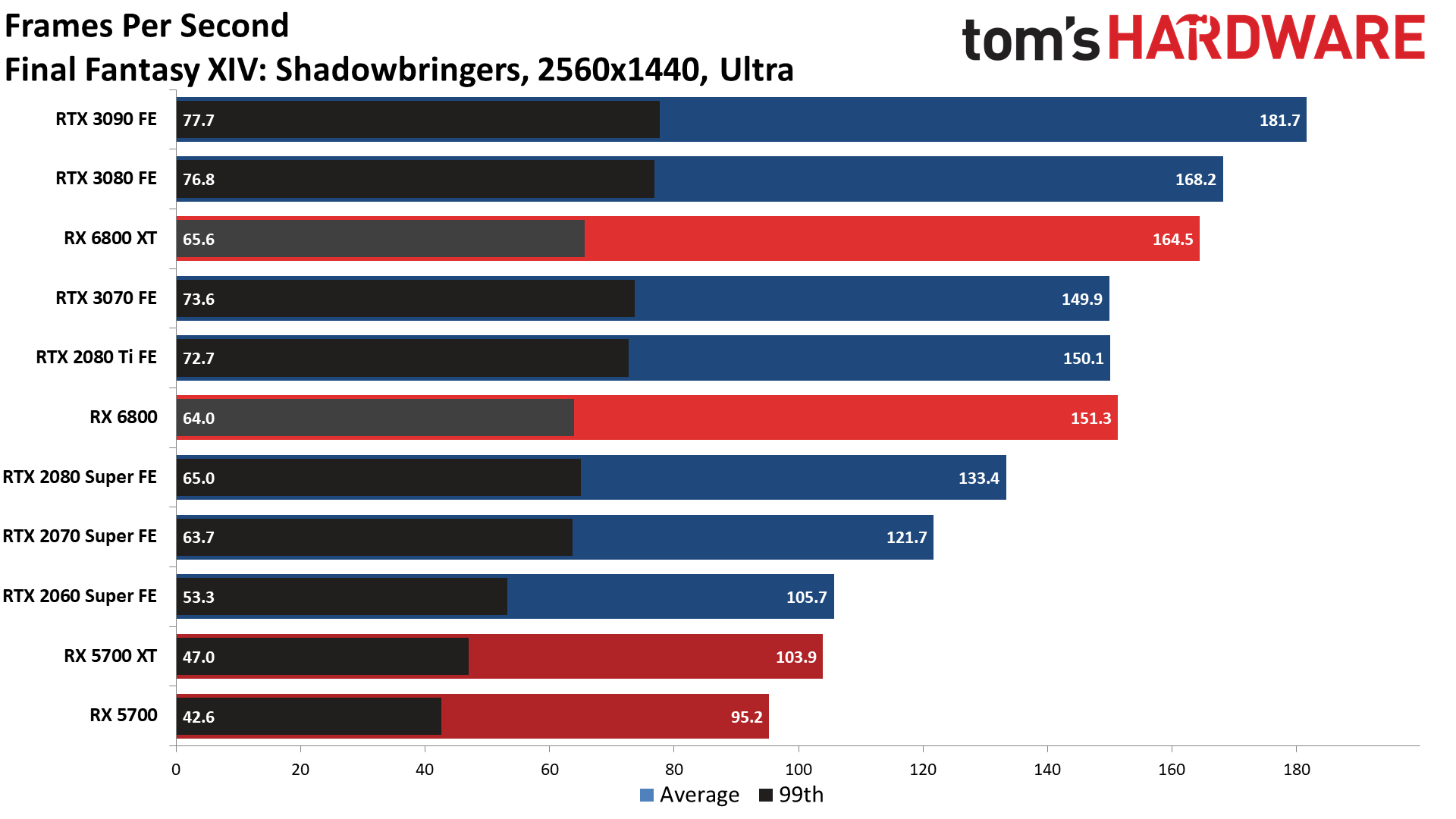

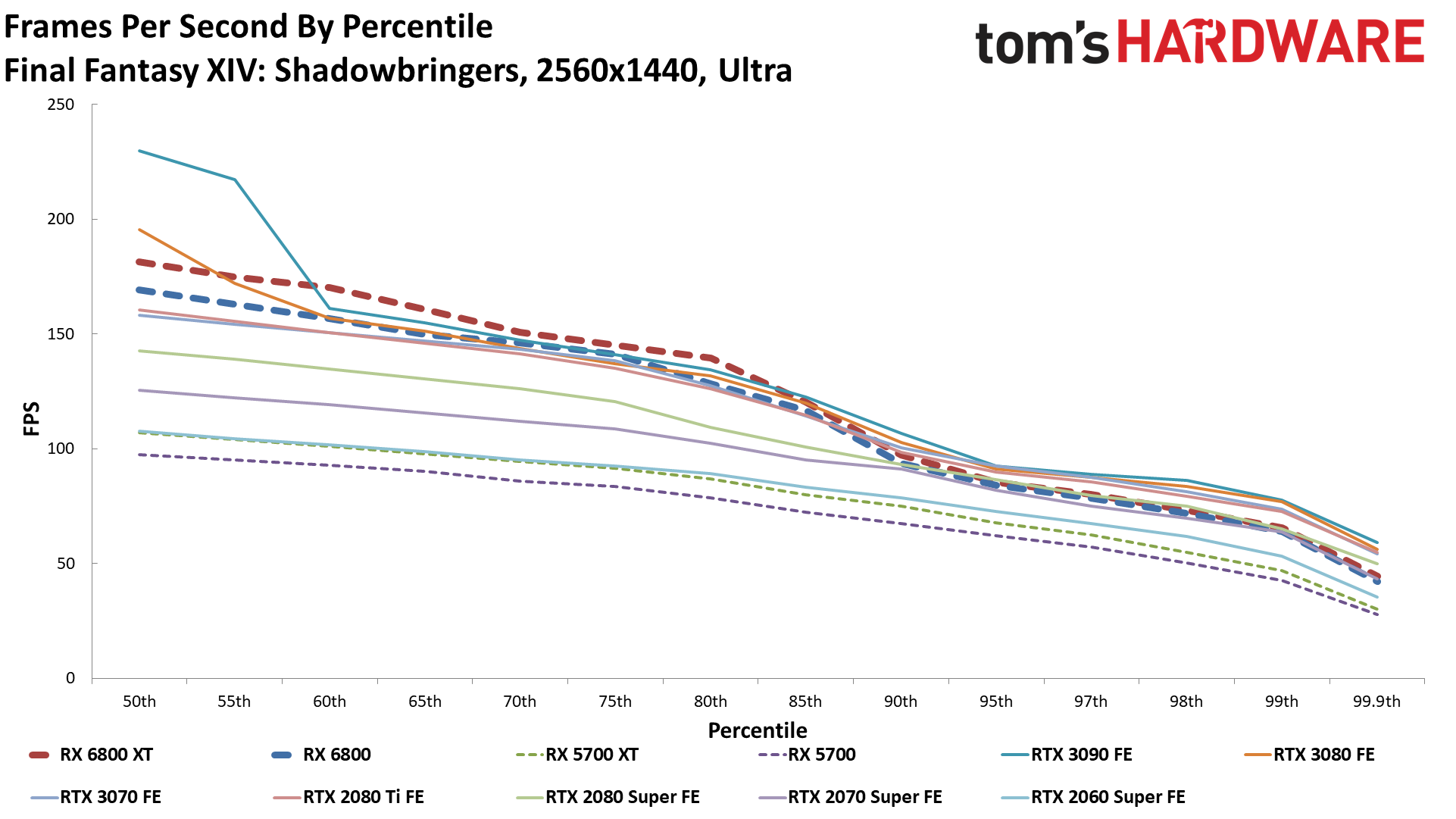

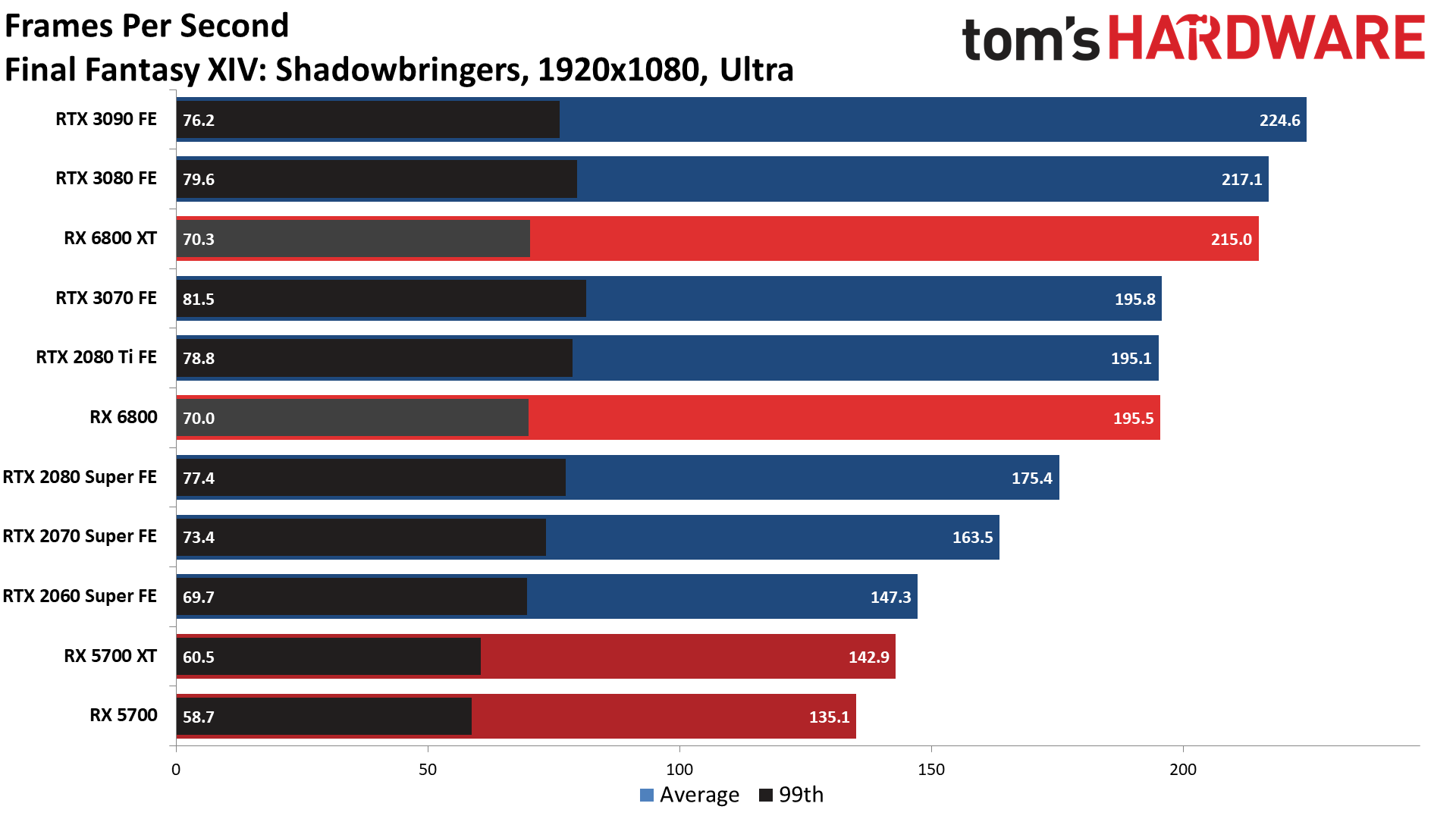

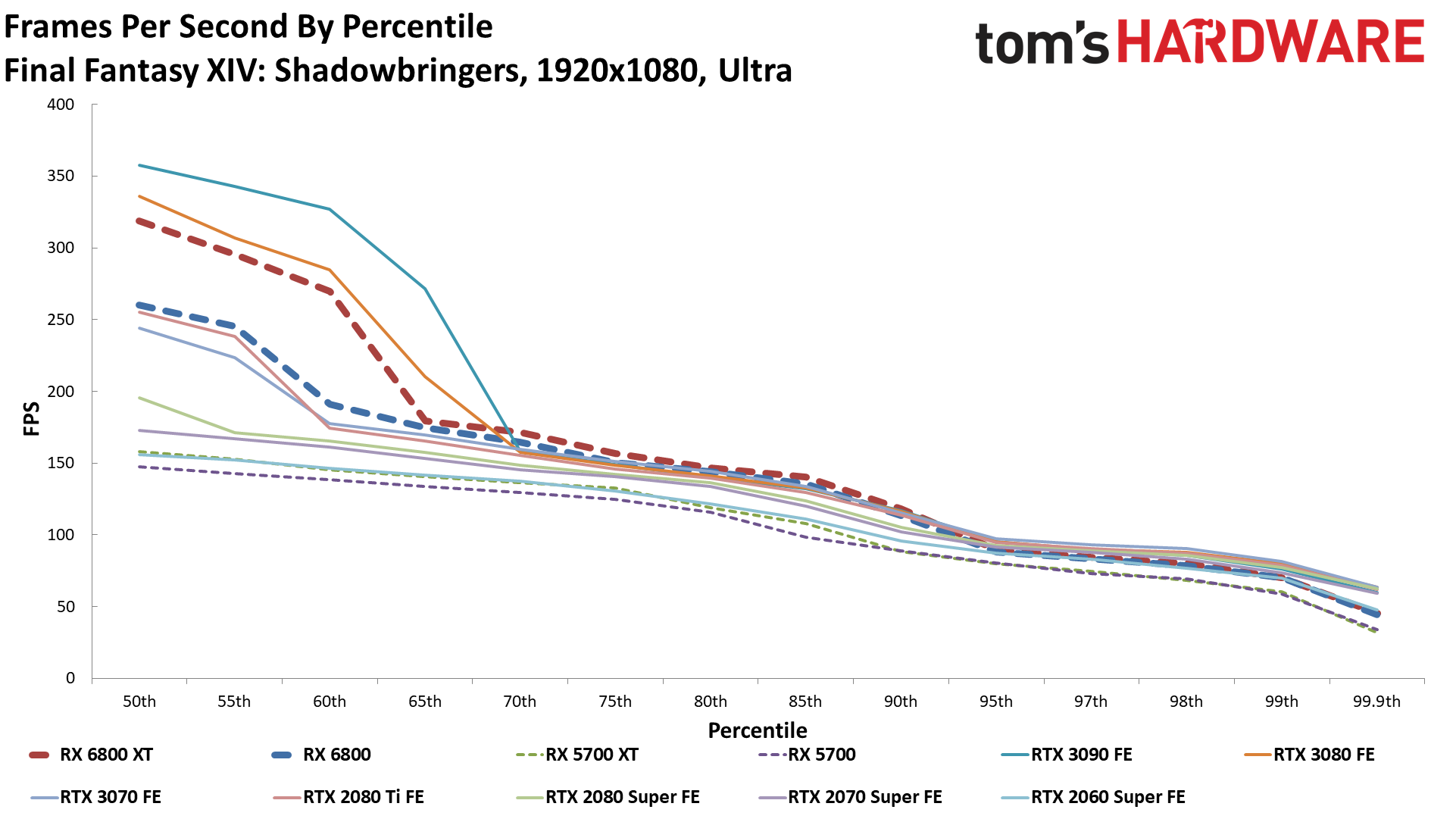

Final Fantasy XIV

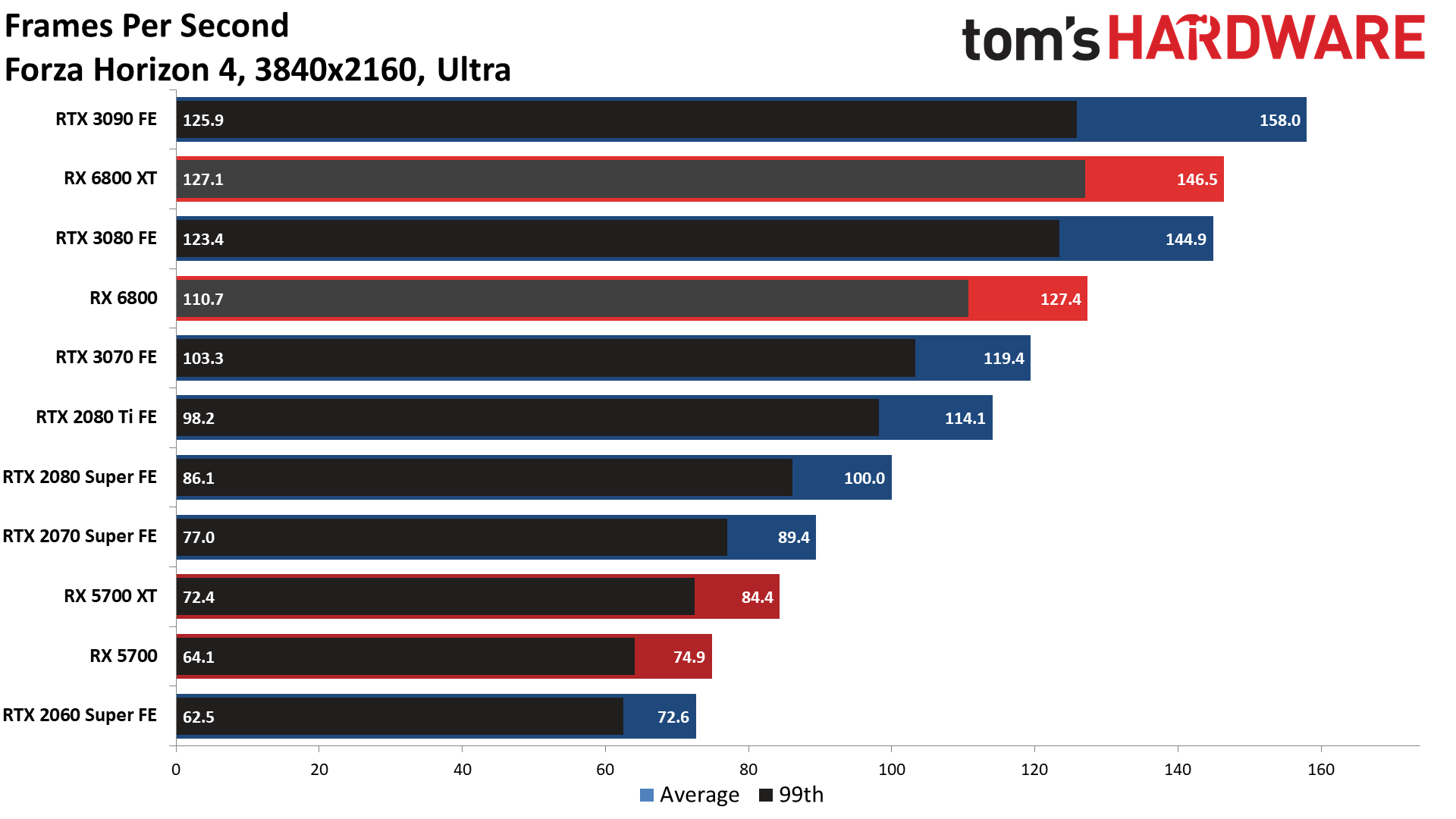

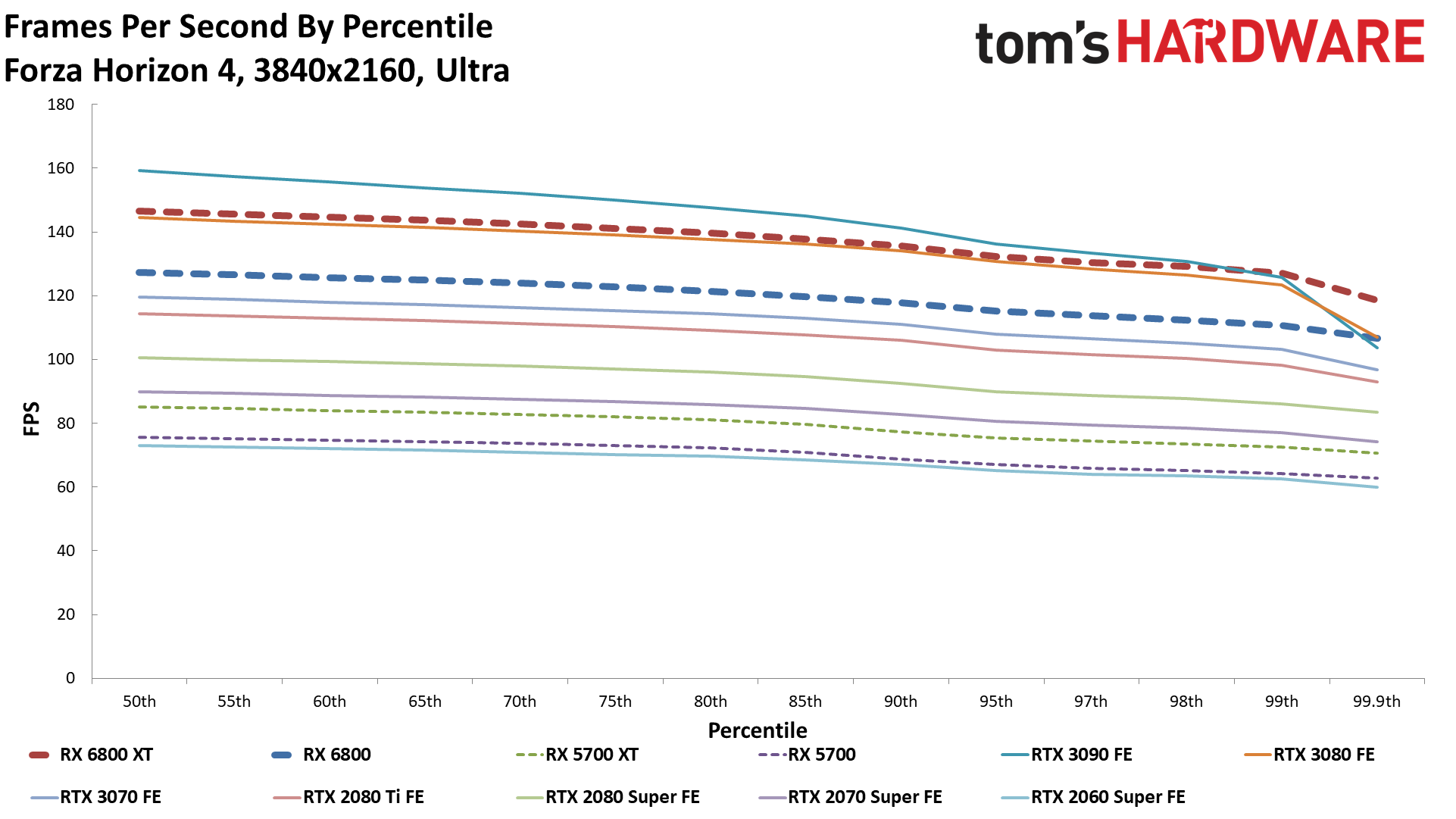

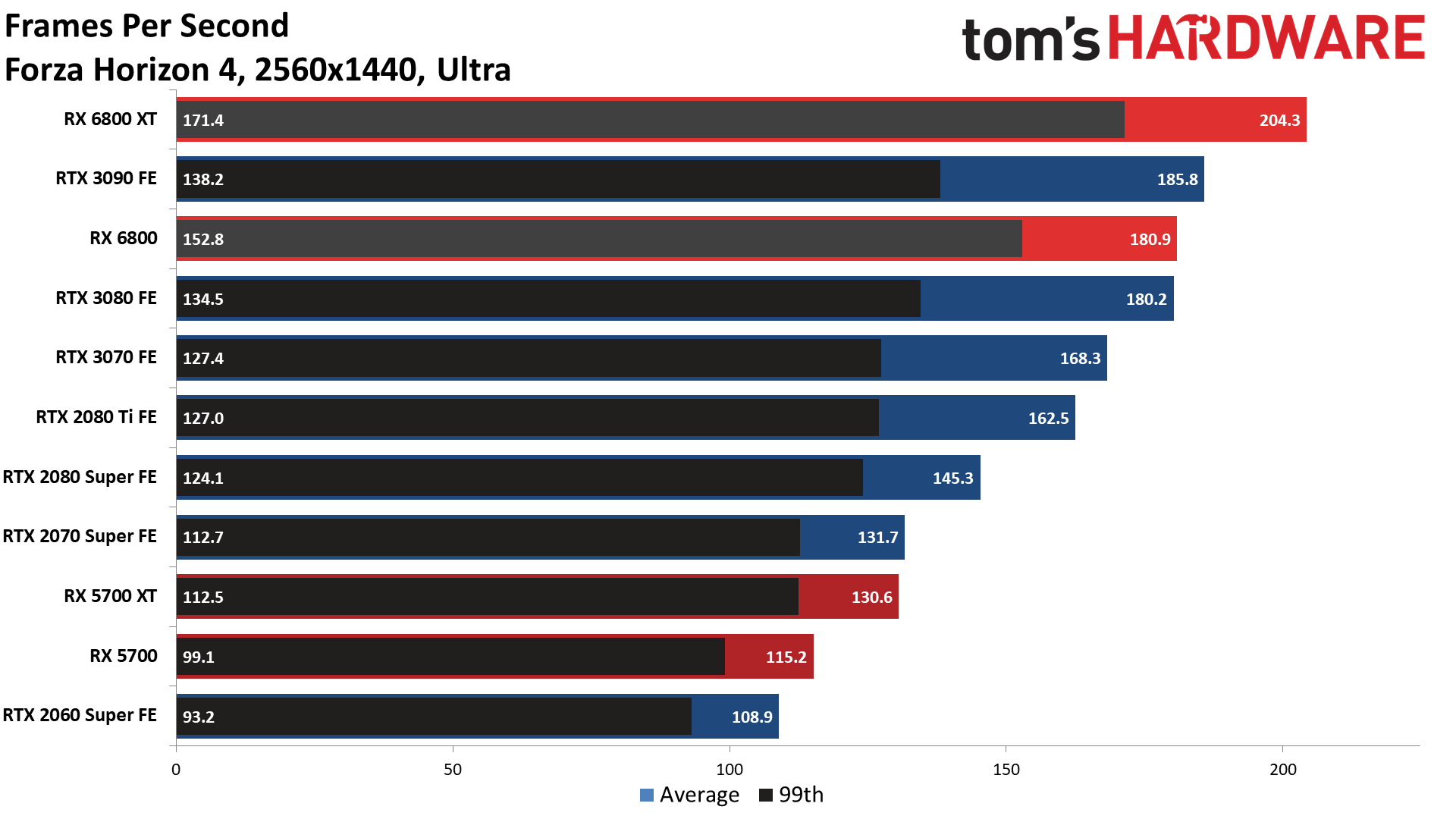

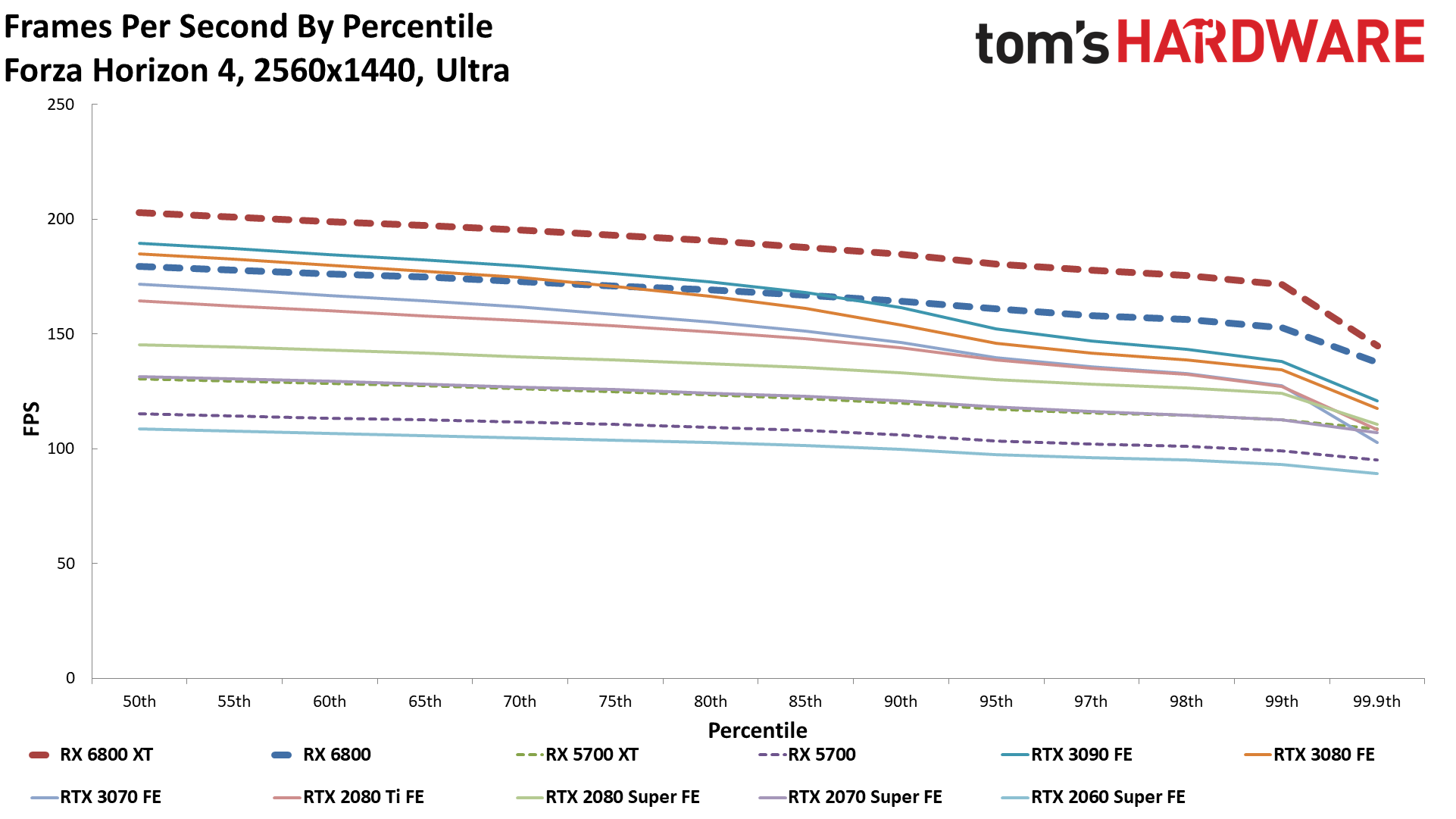

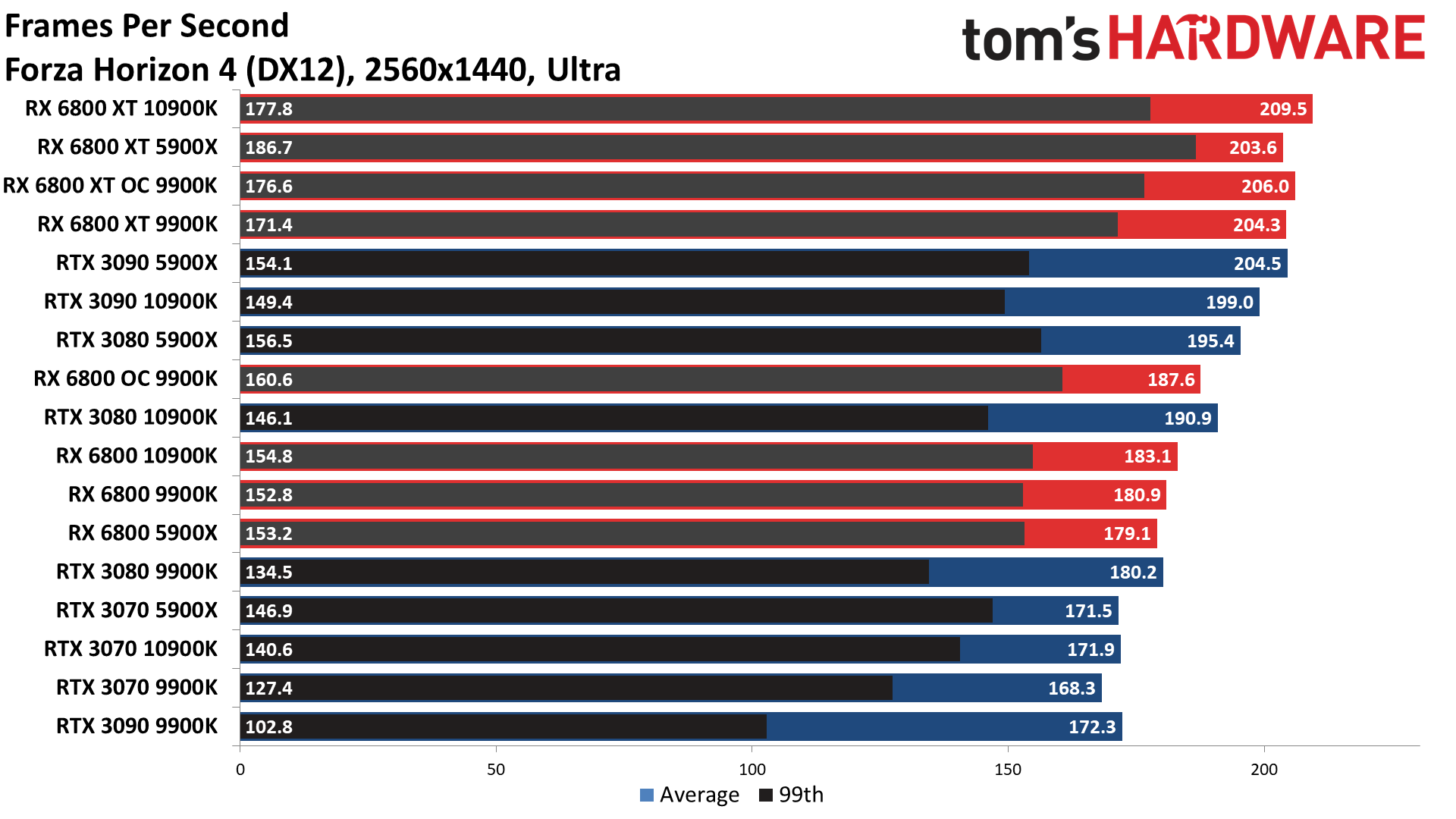

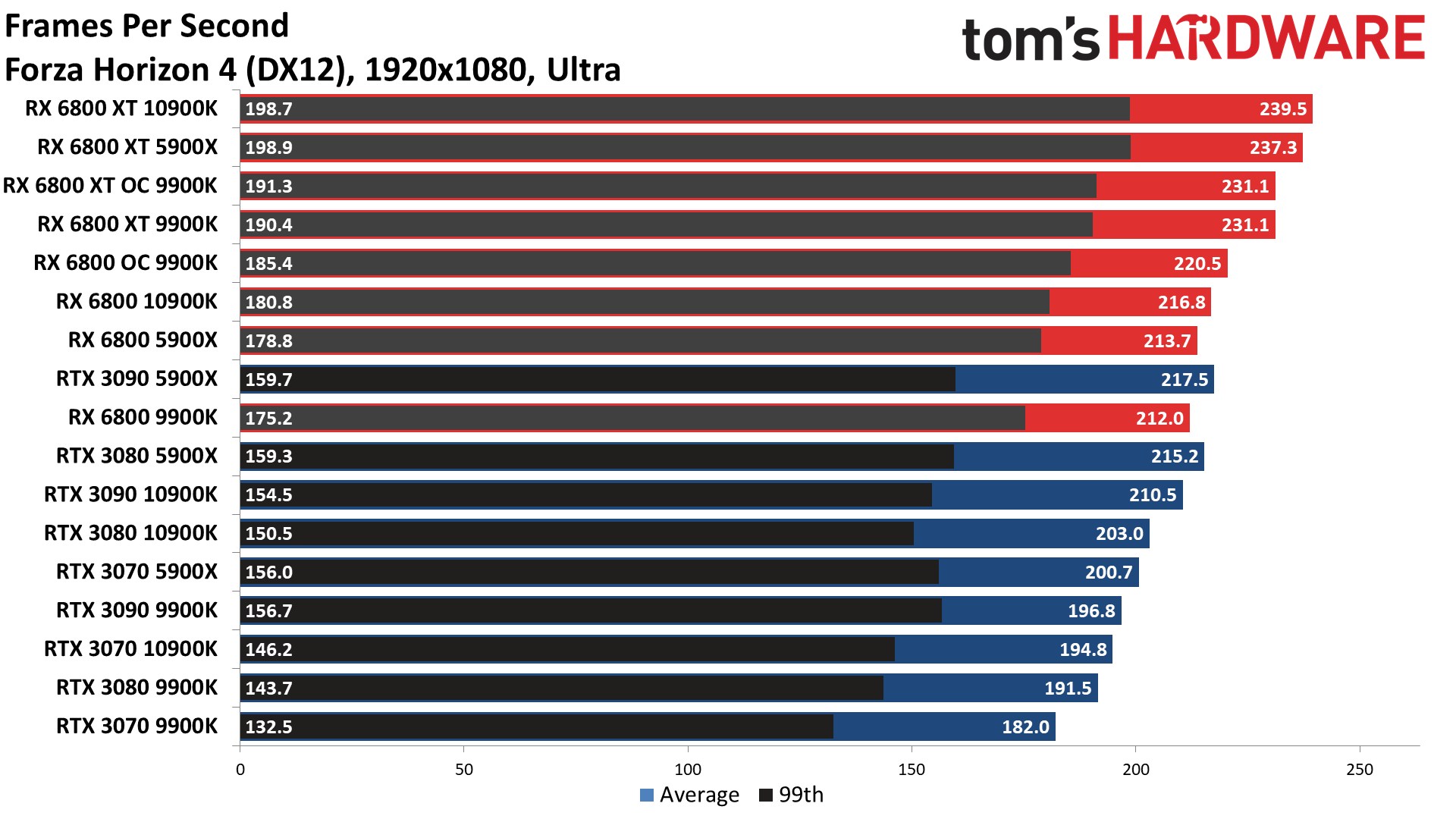

Forza Horizon 4

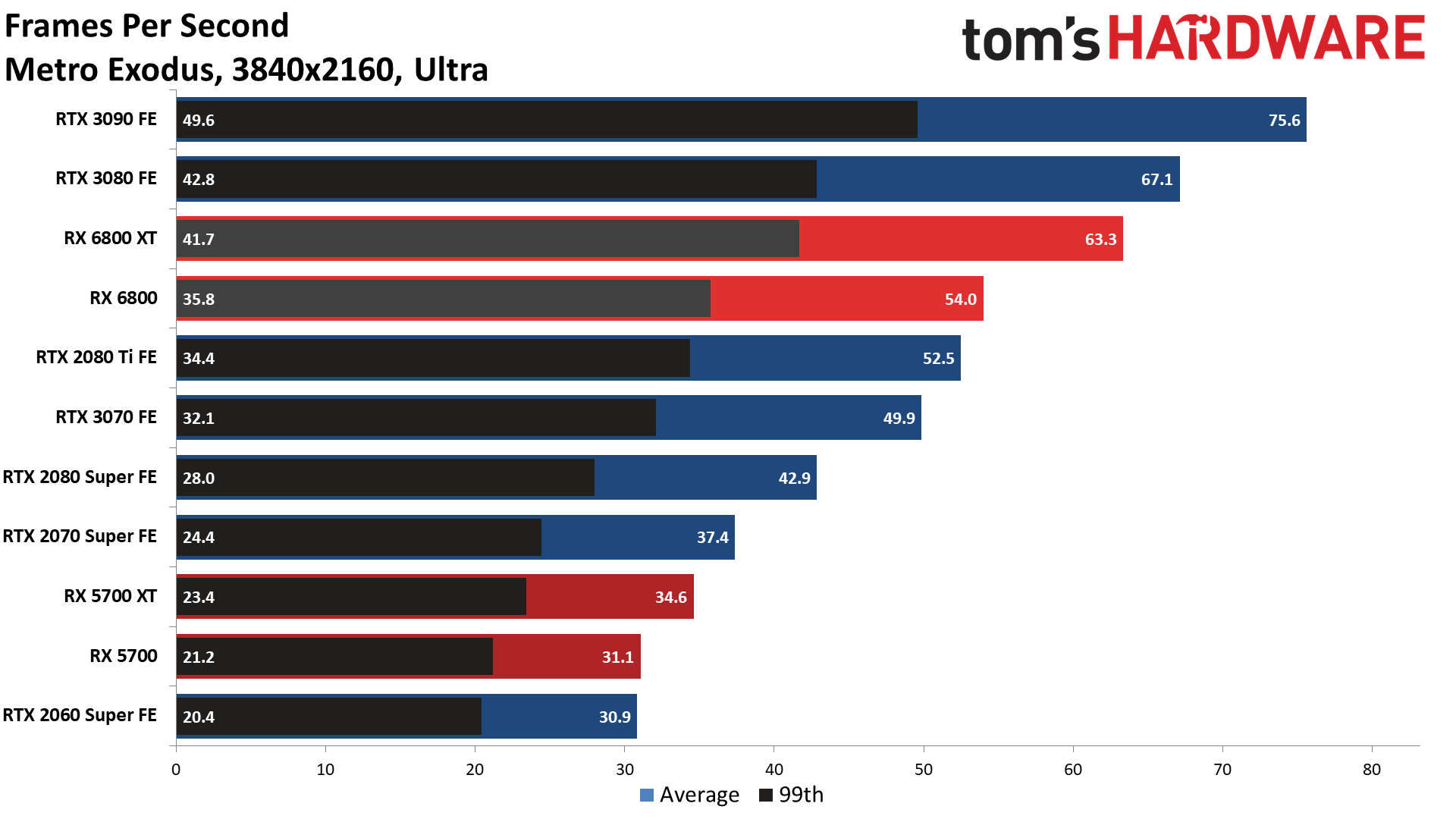

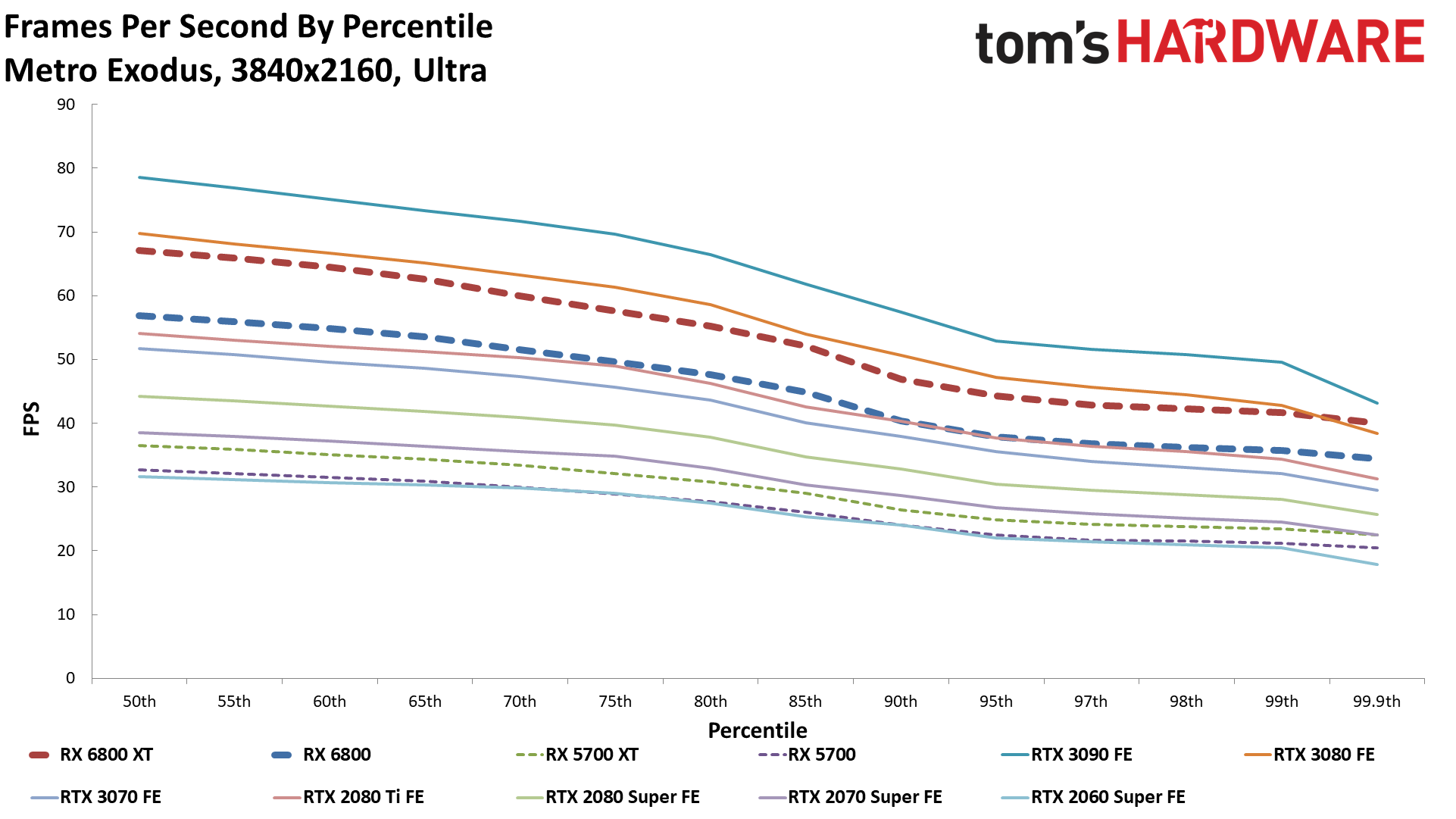

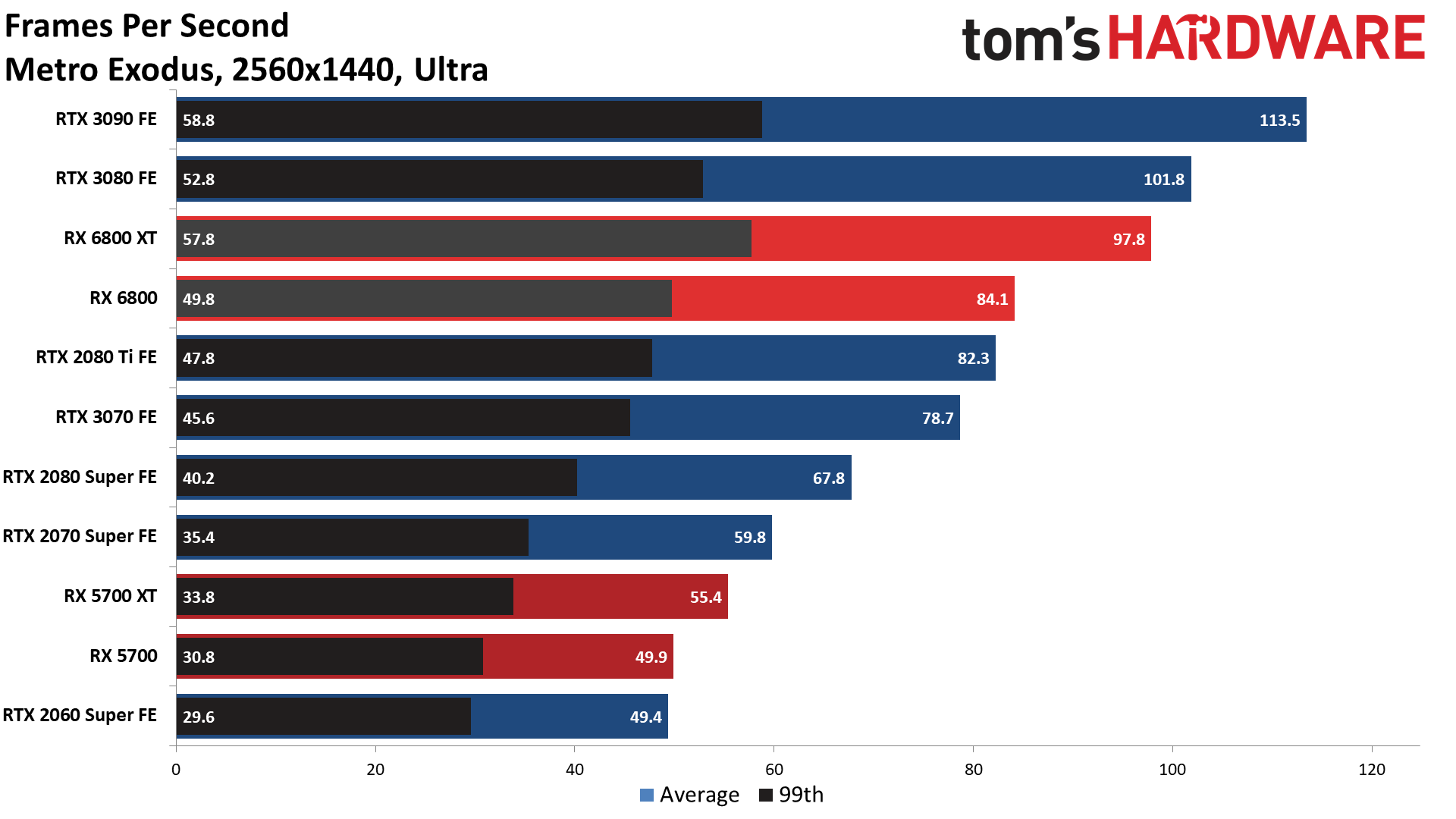

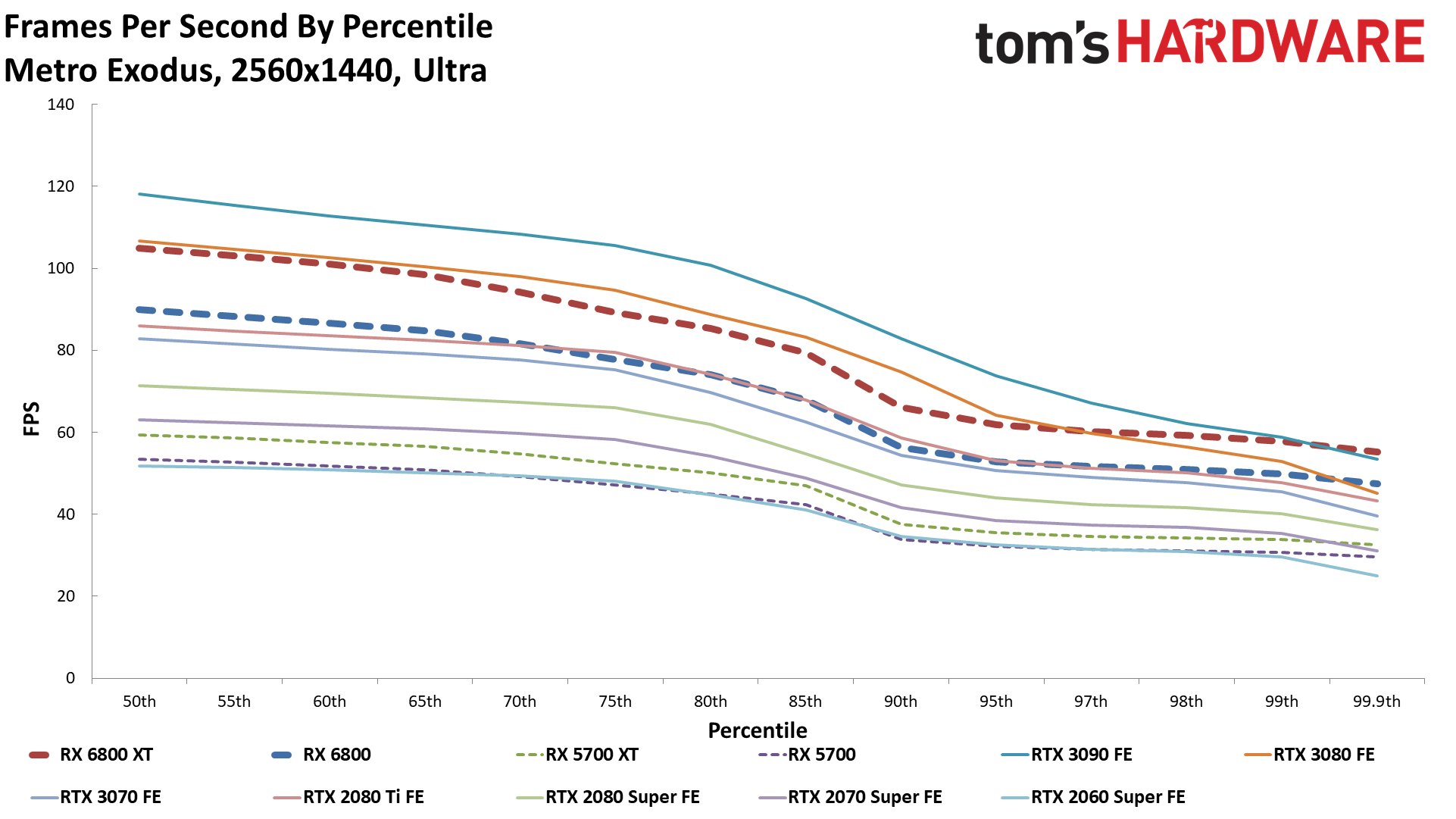

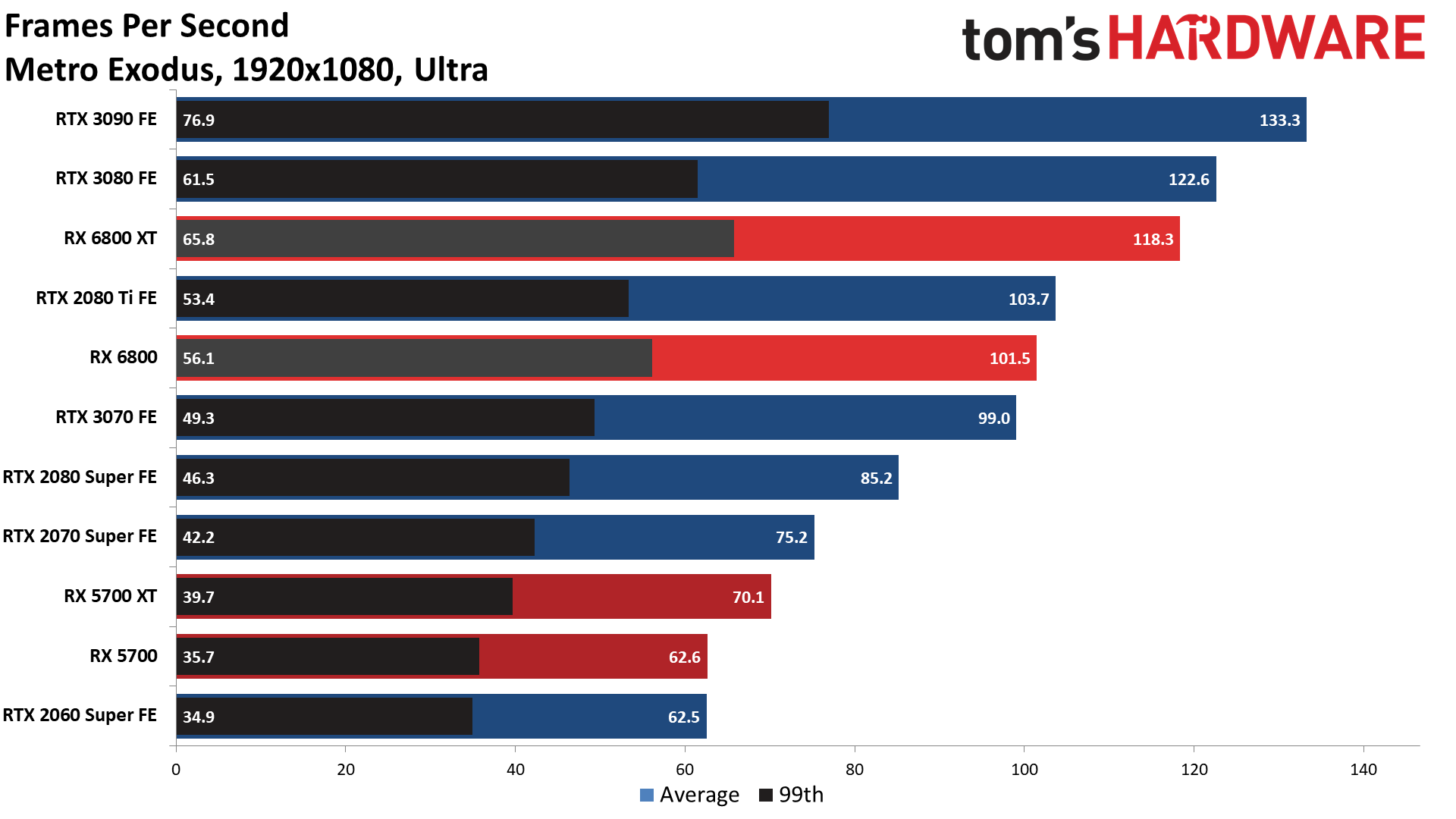

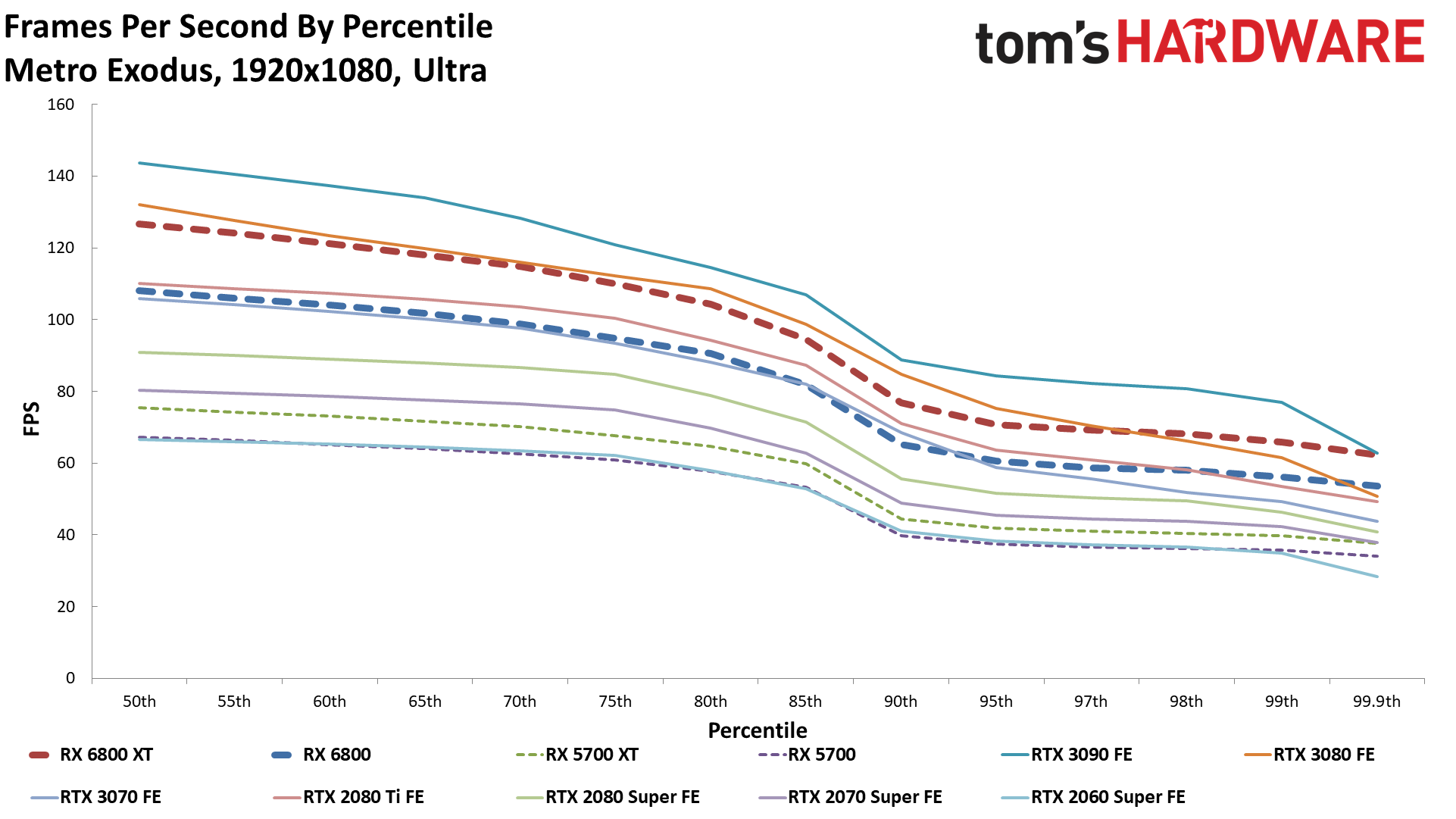

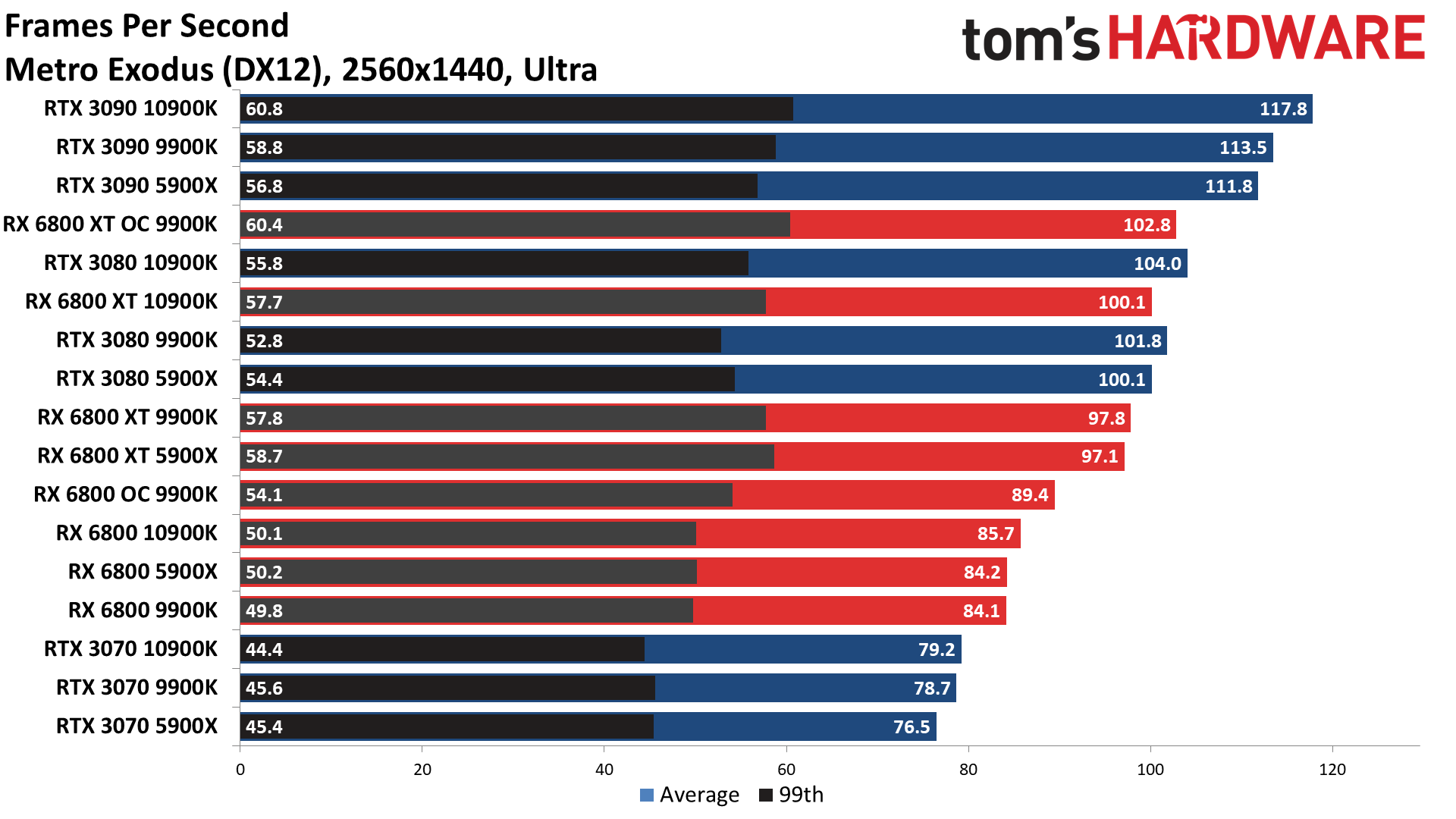

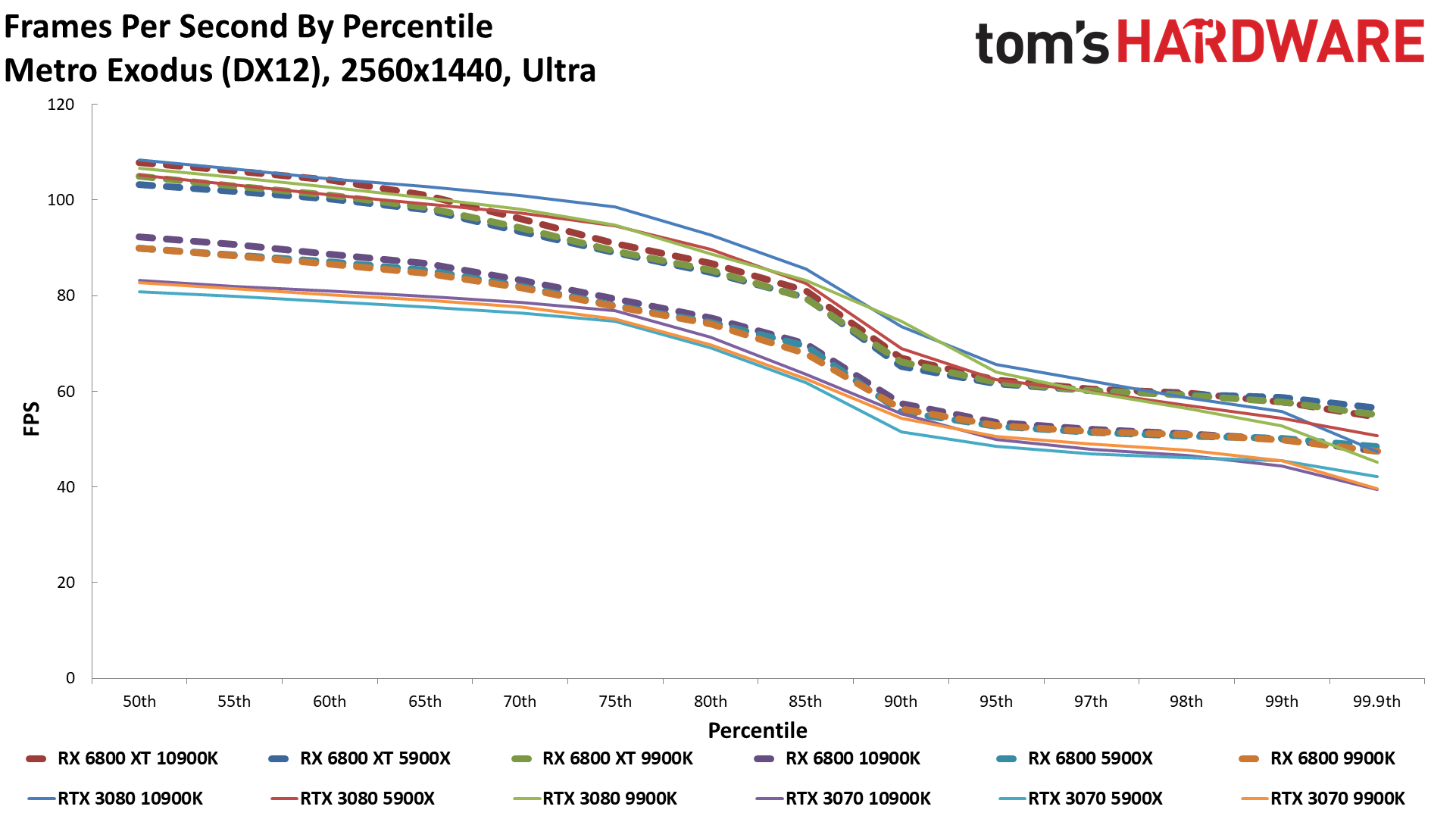

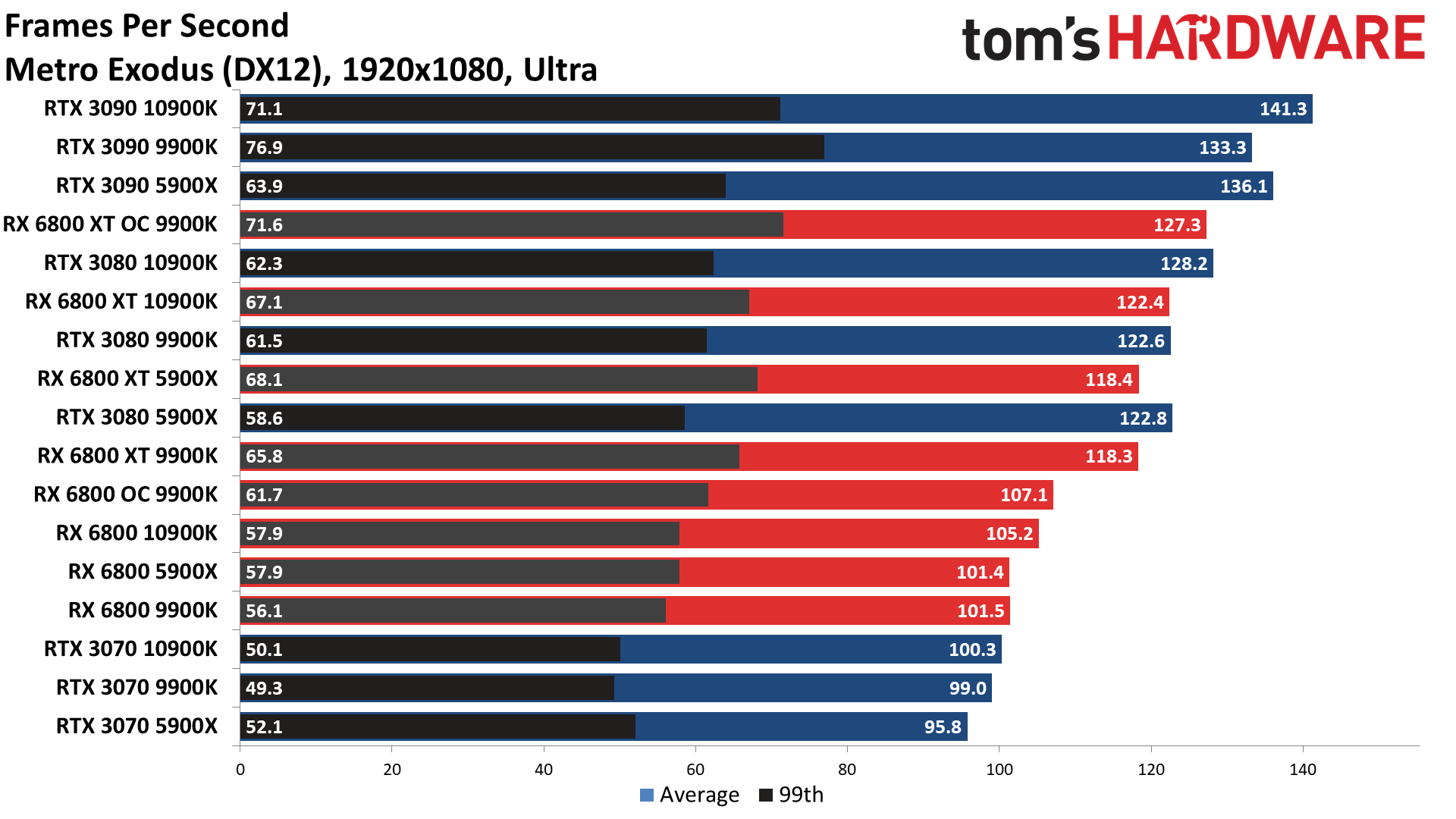

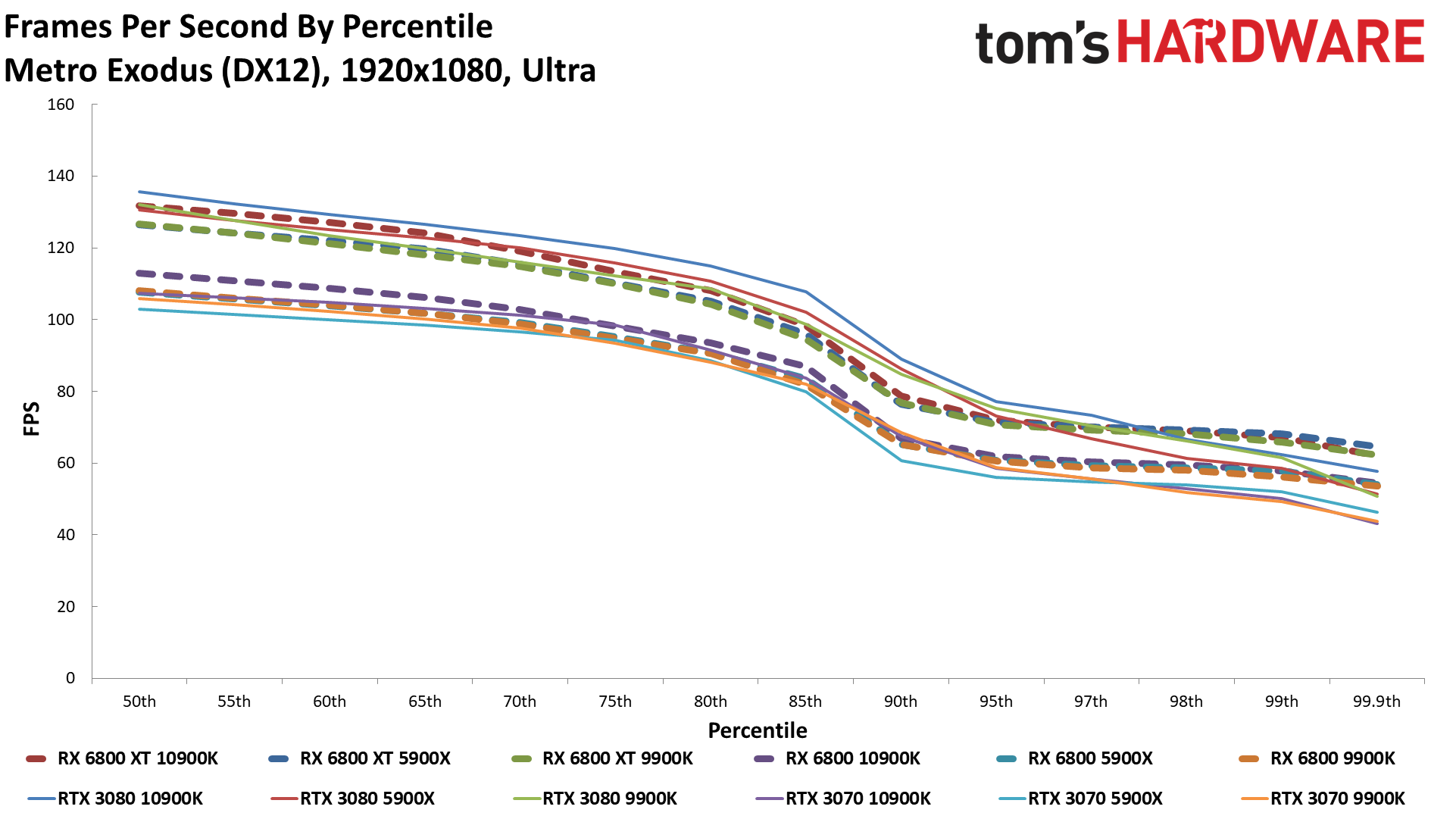

Metro Exodus

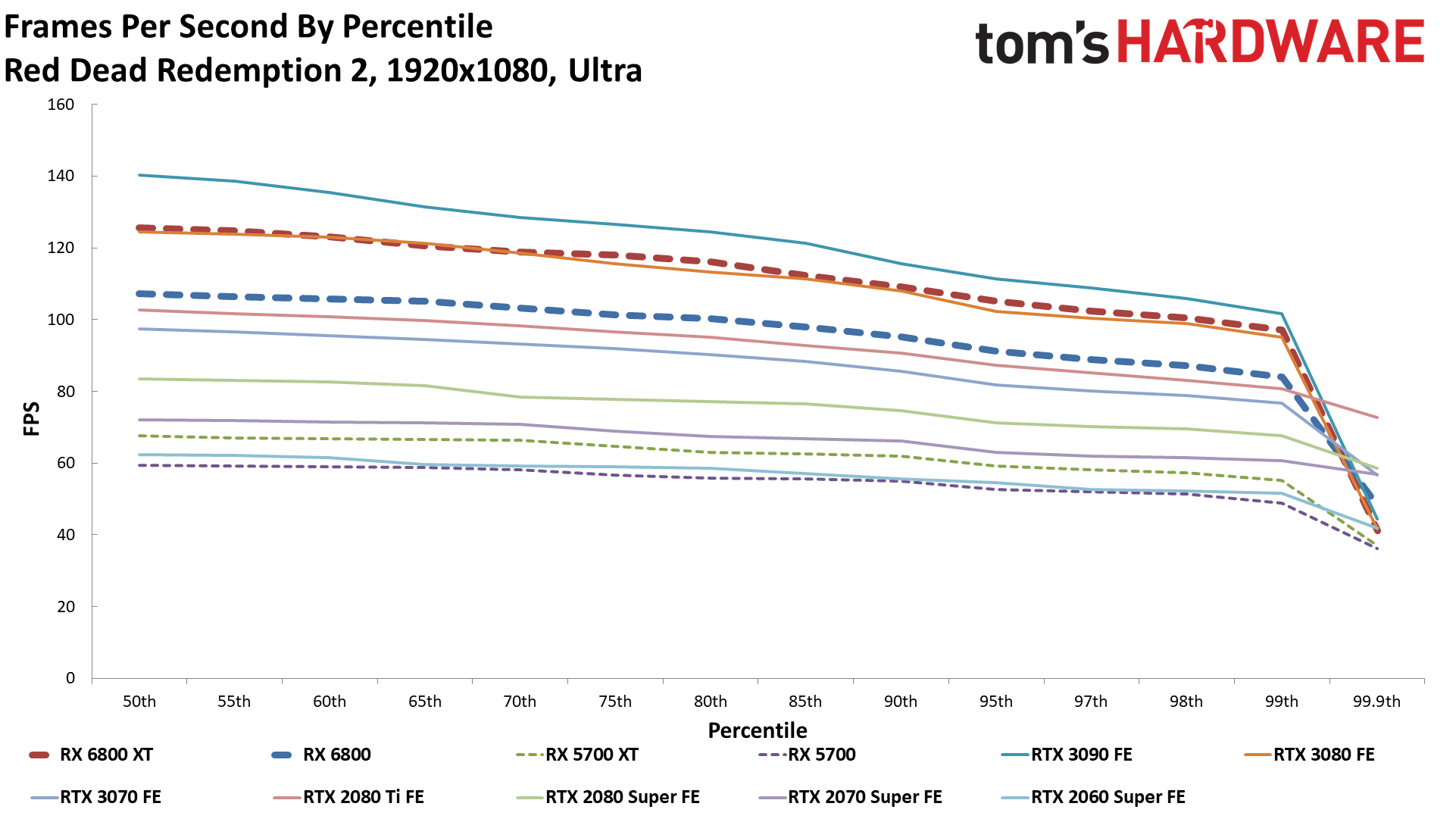

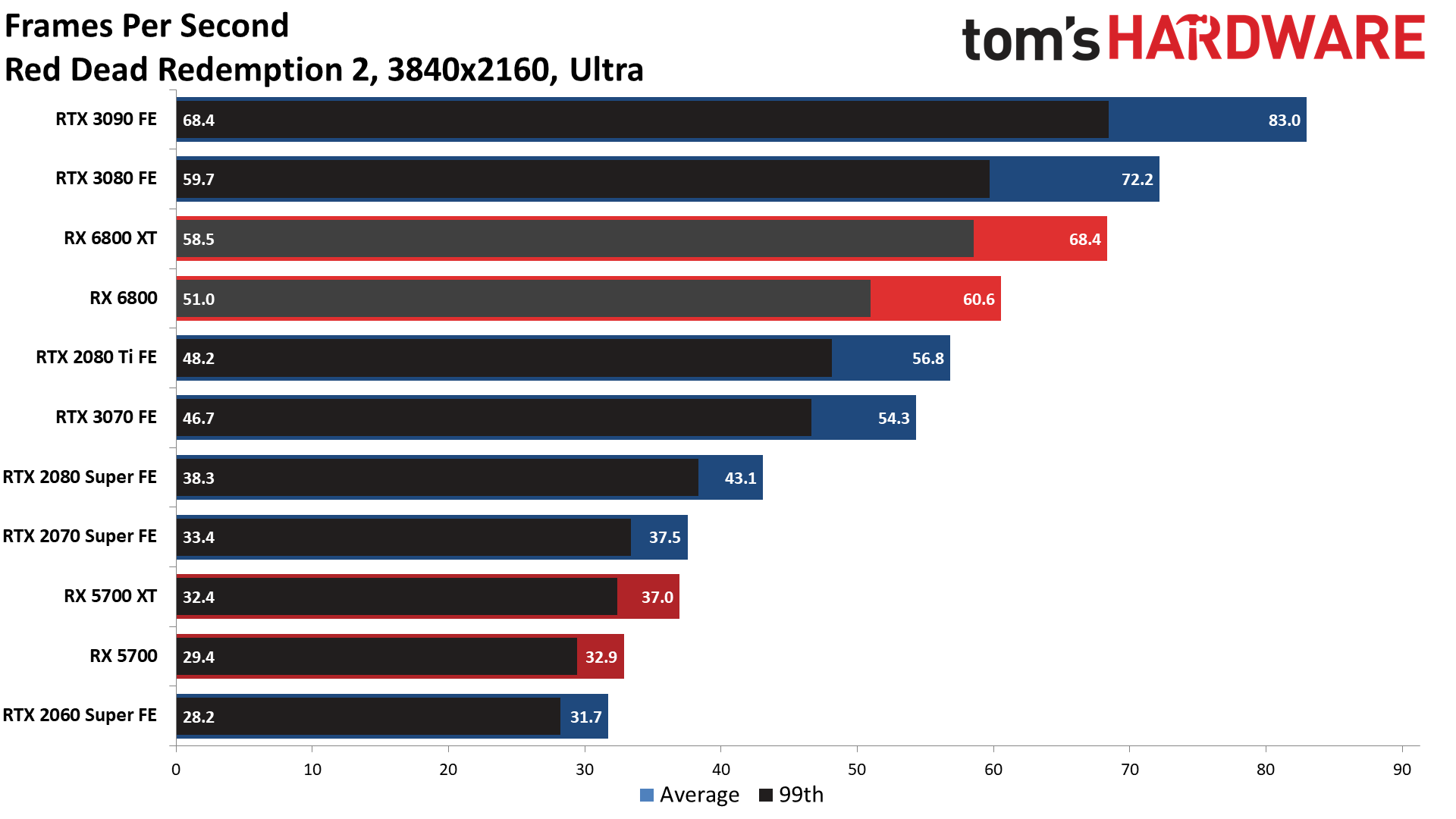

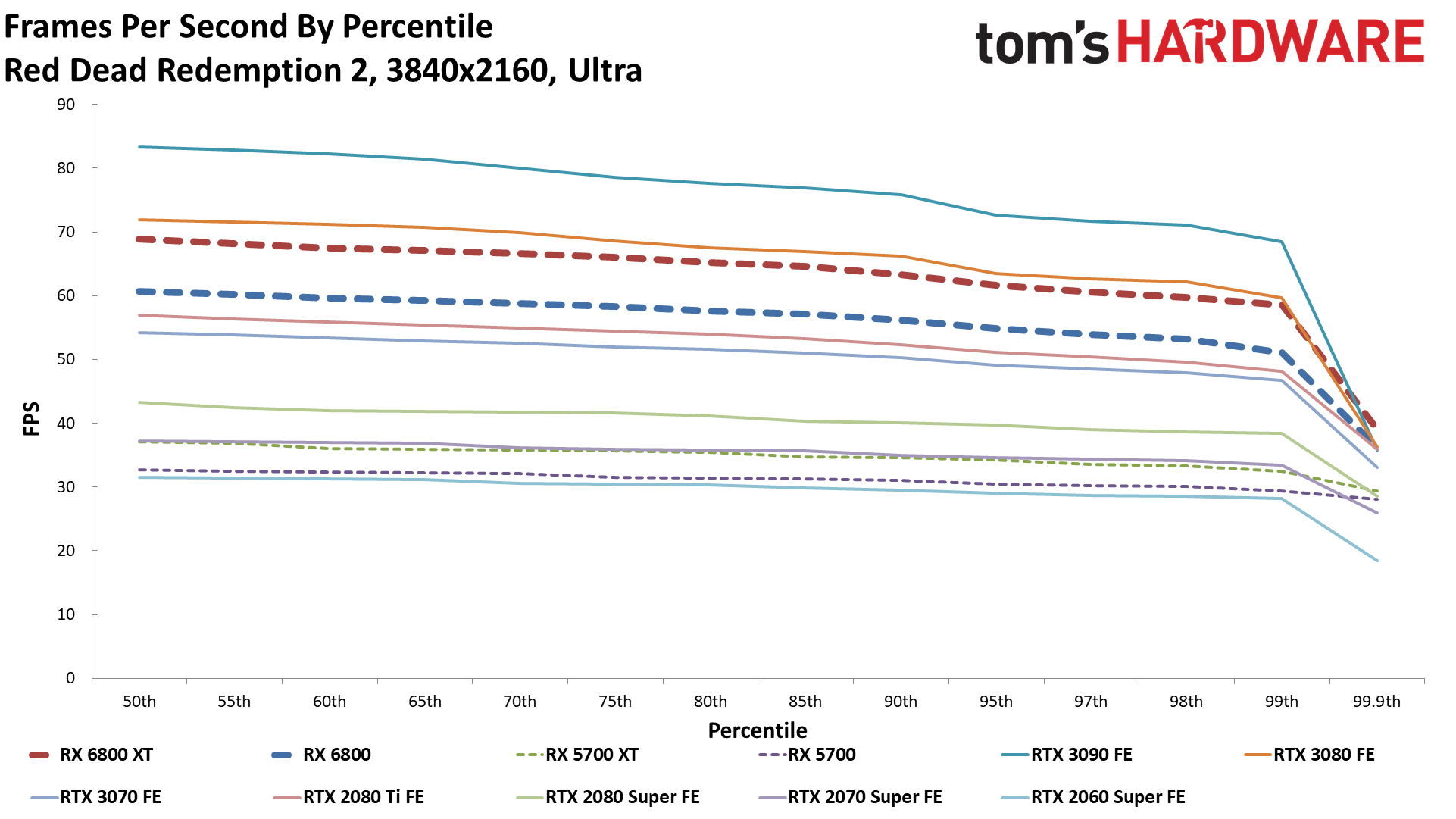

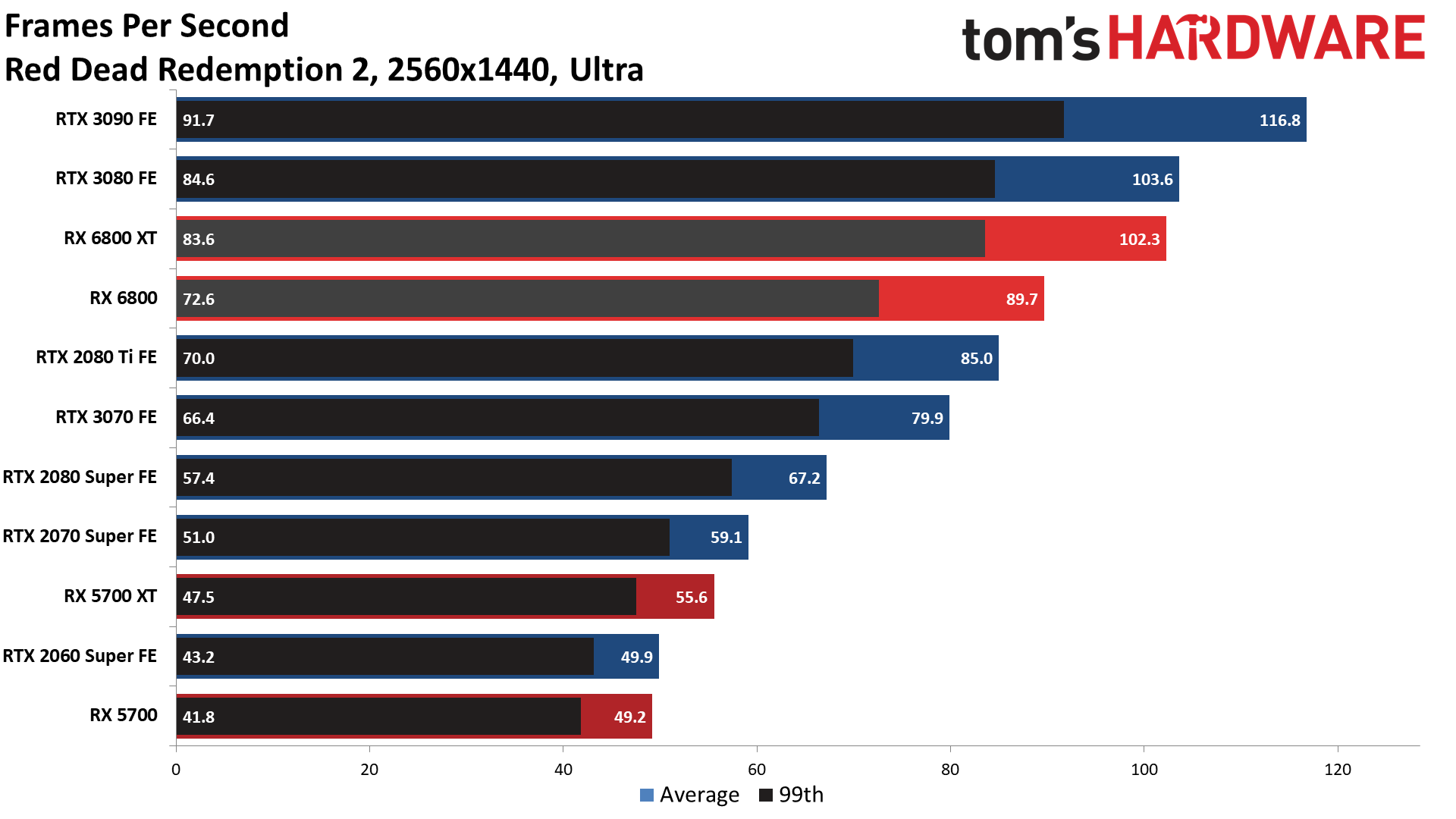

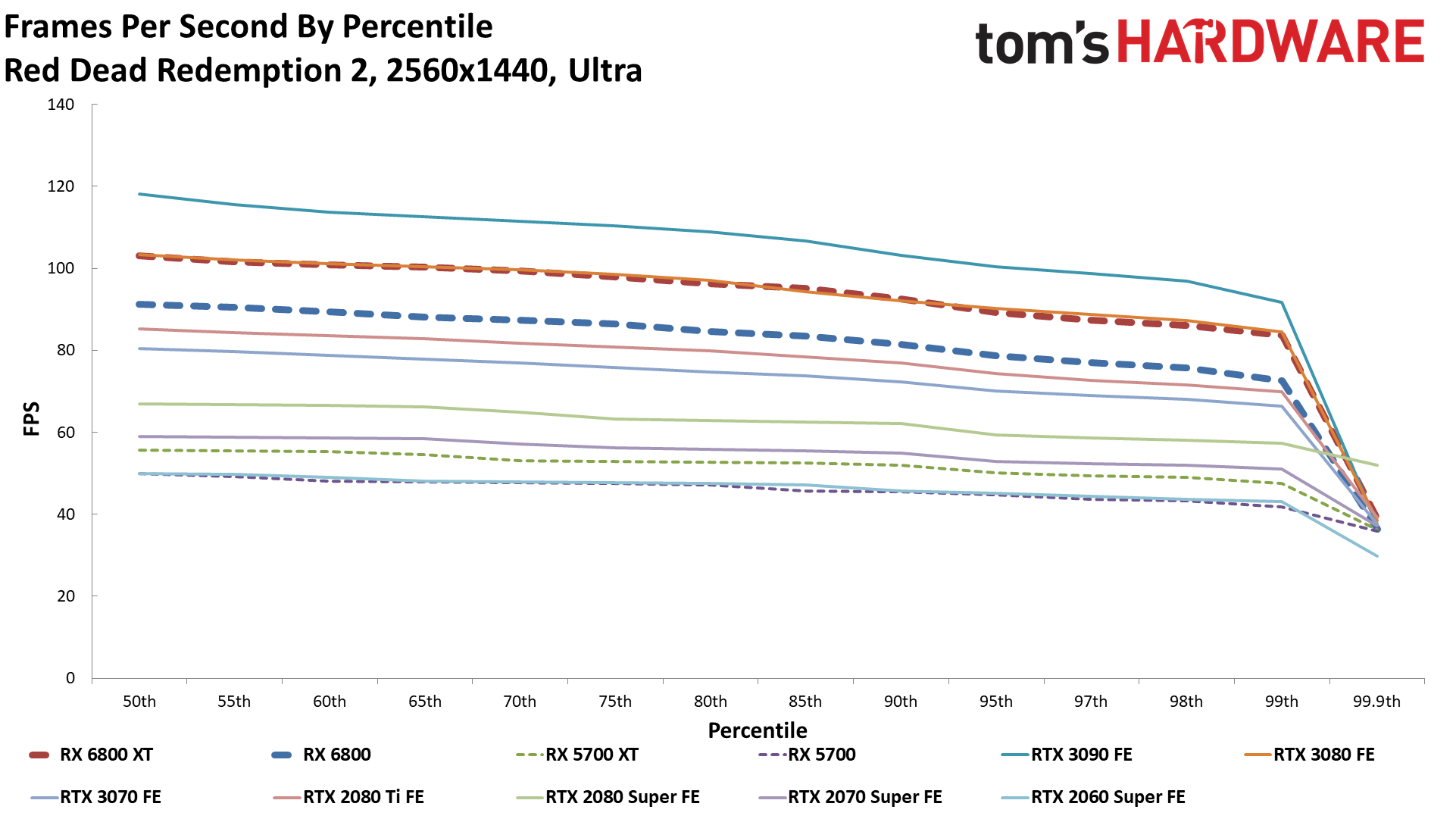

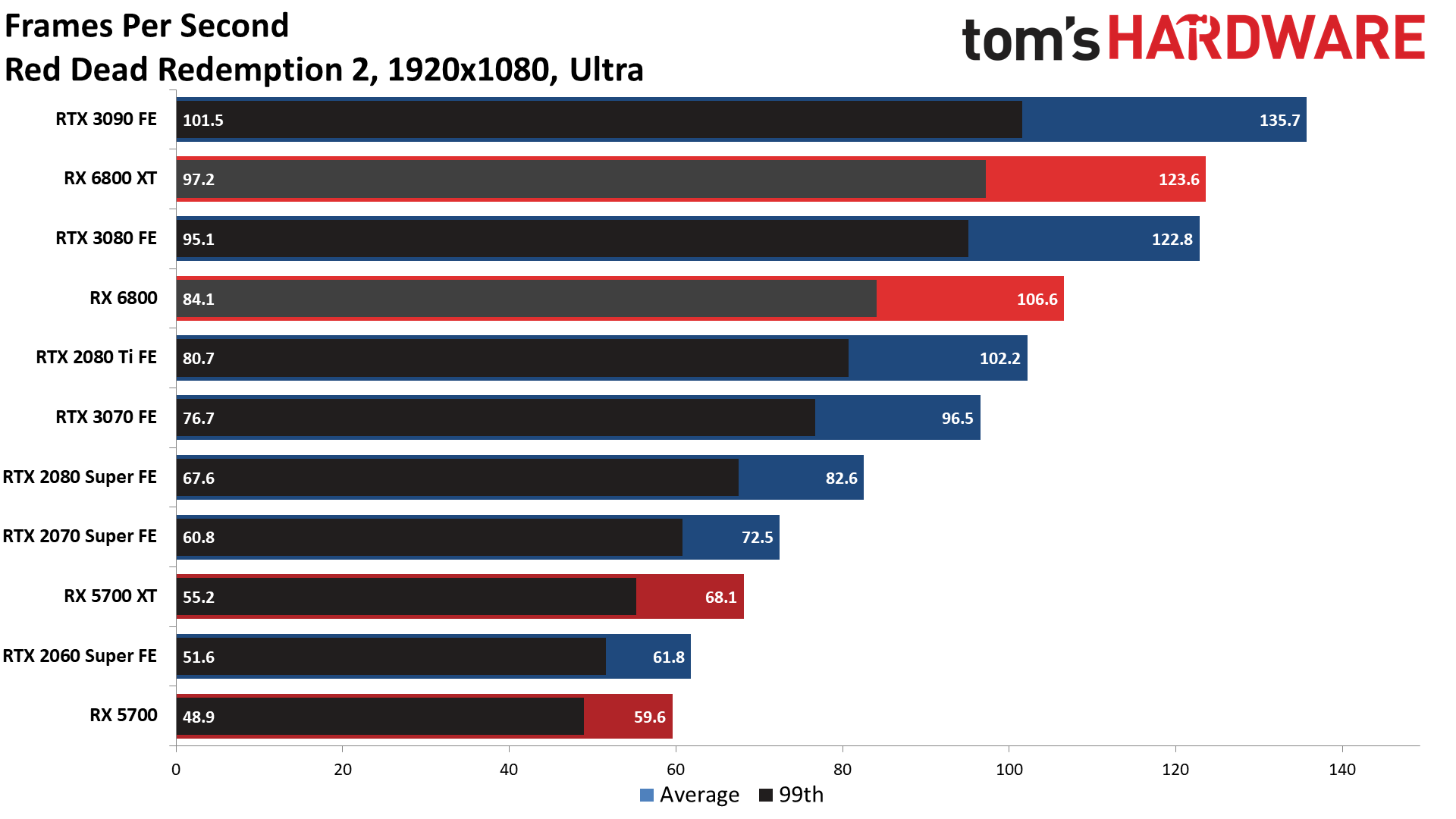

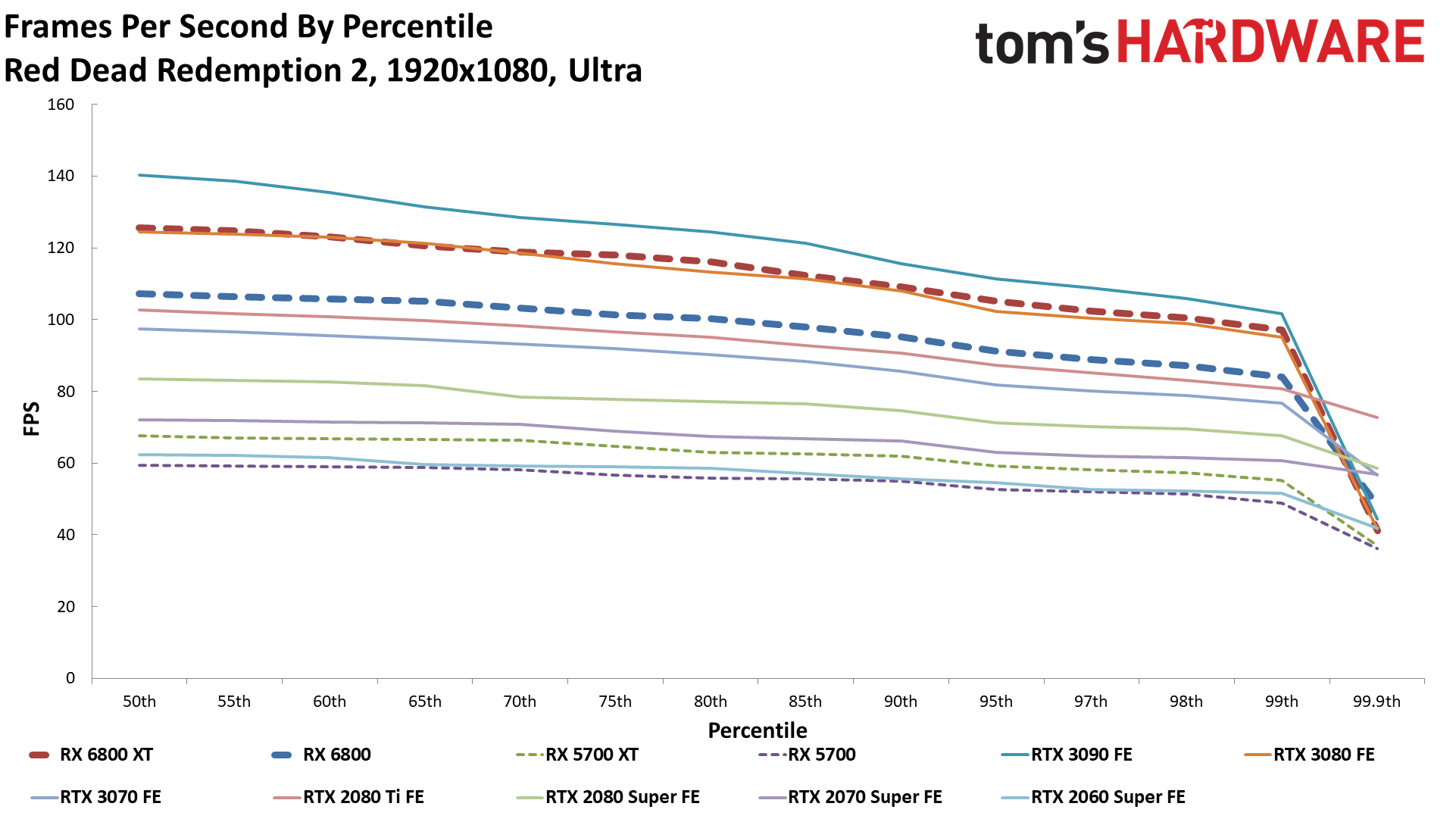

Red Dead Redemption 2

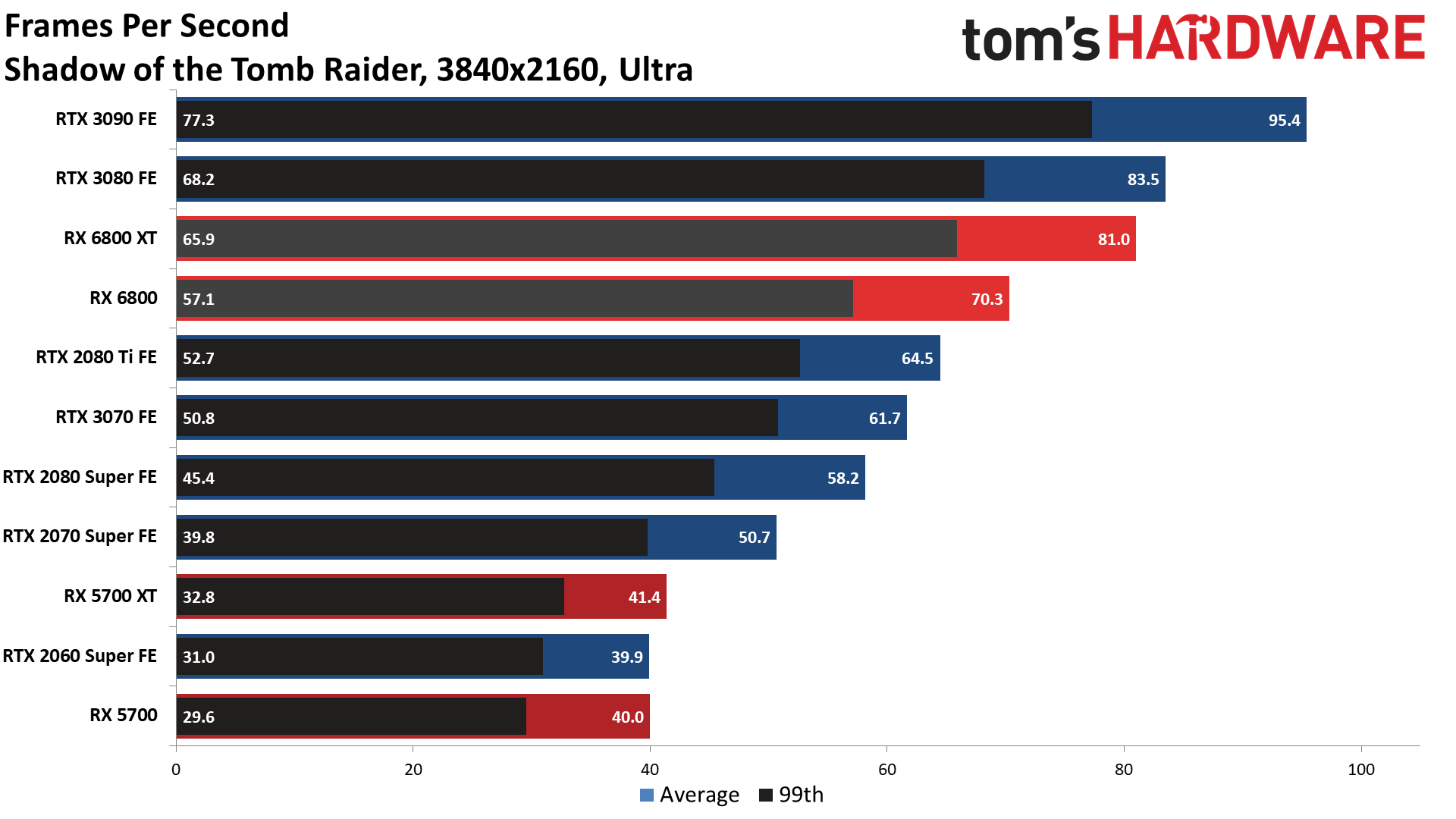

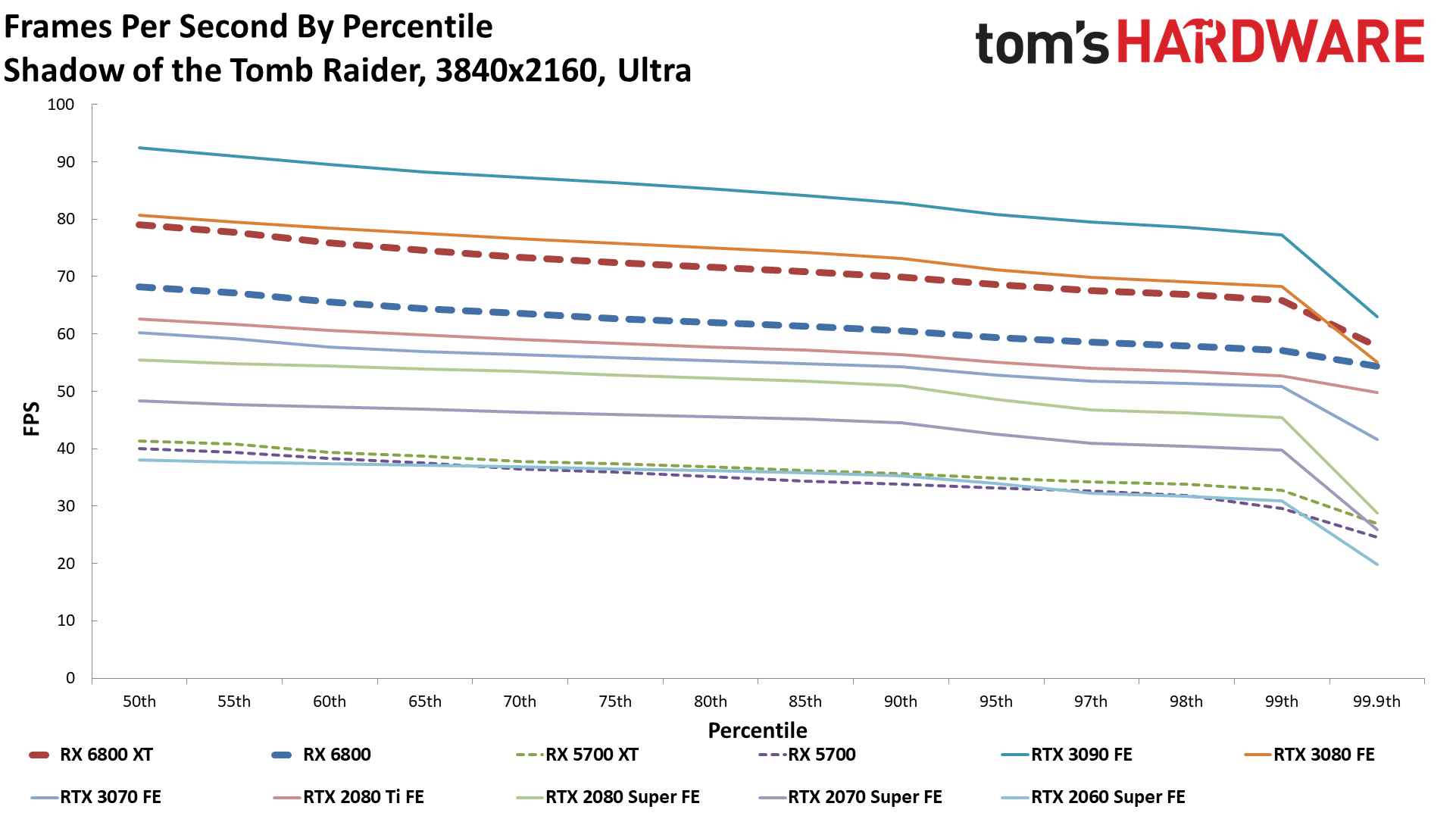

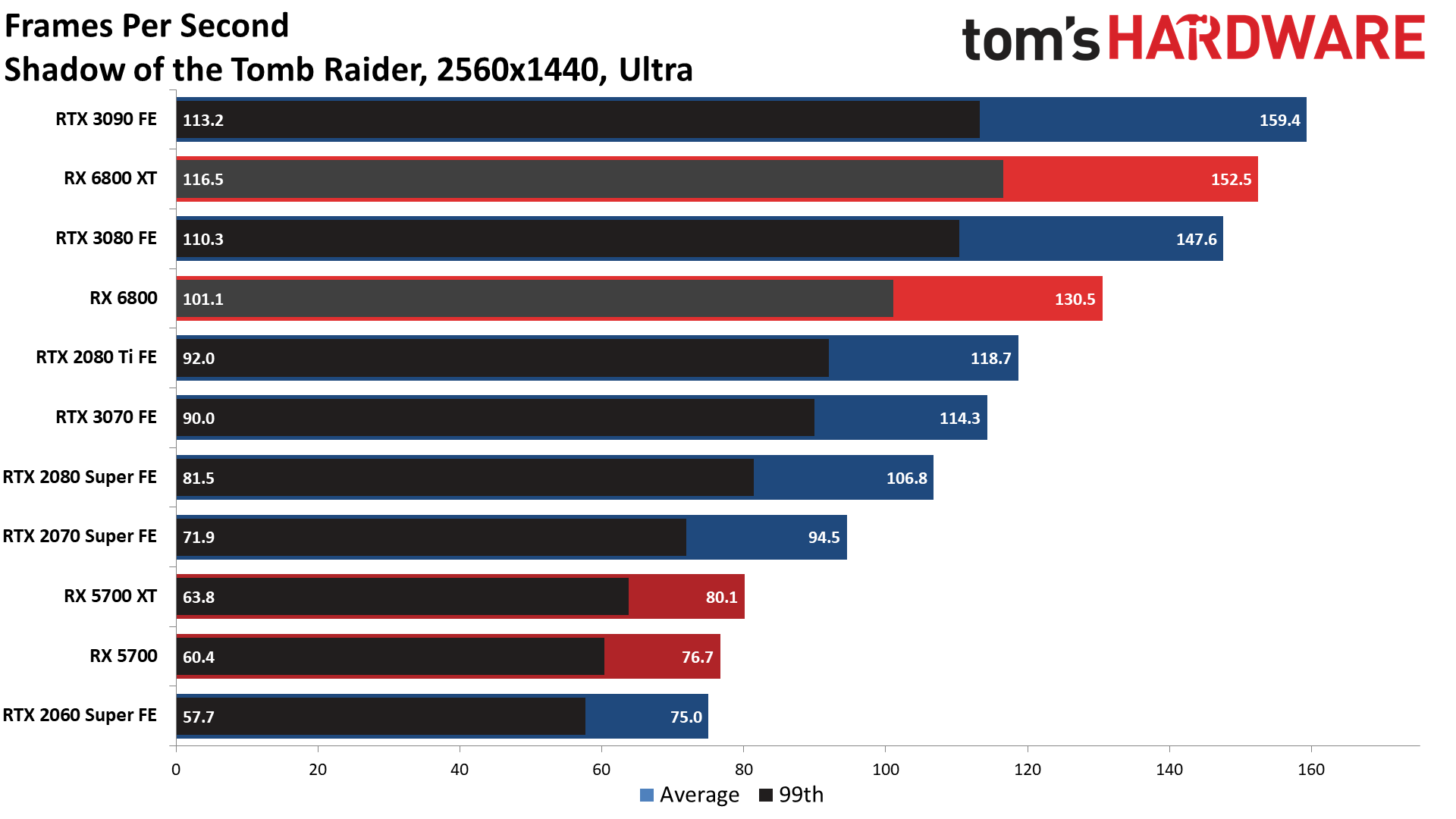

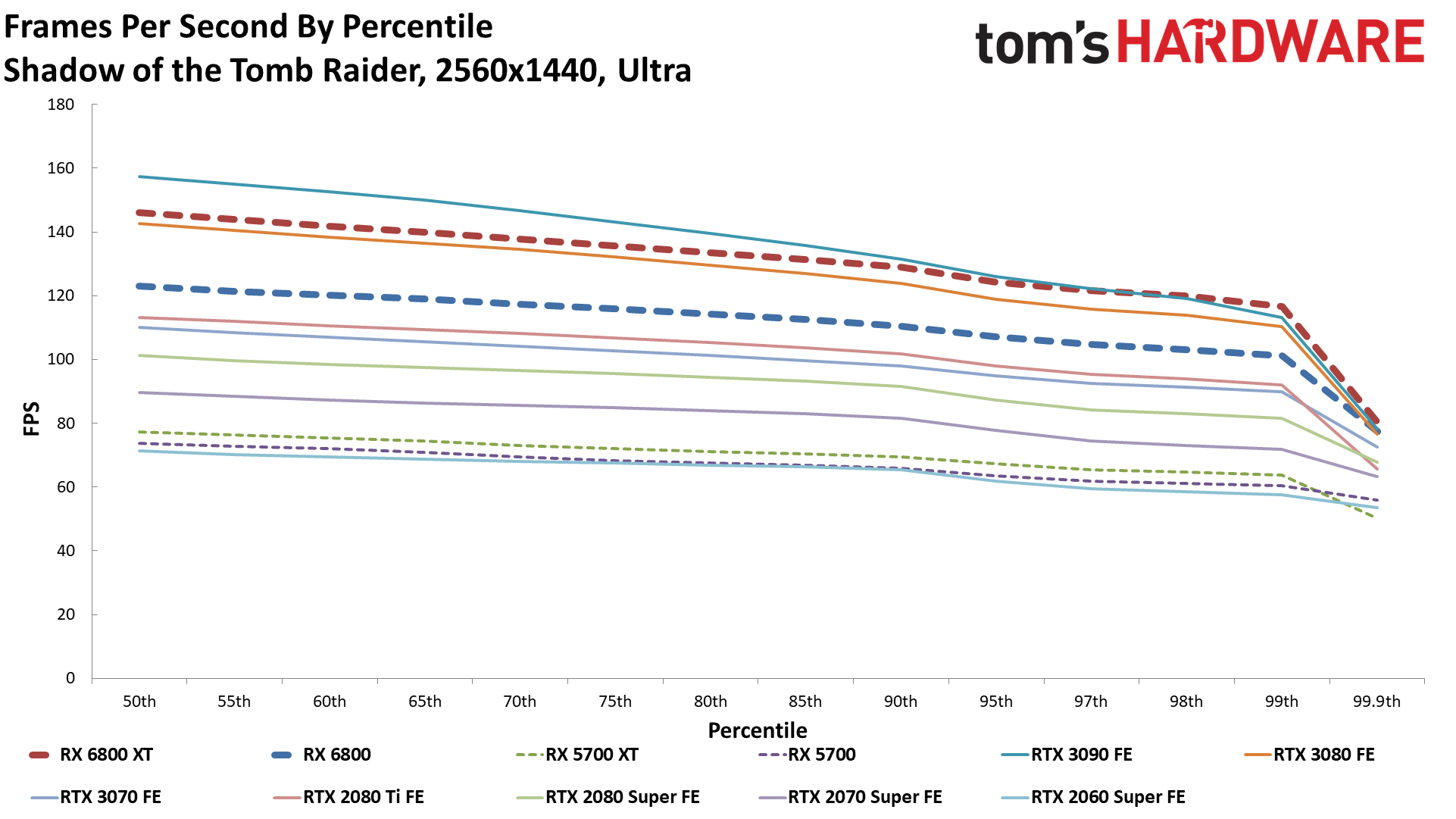

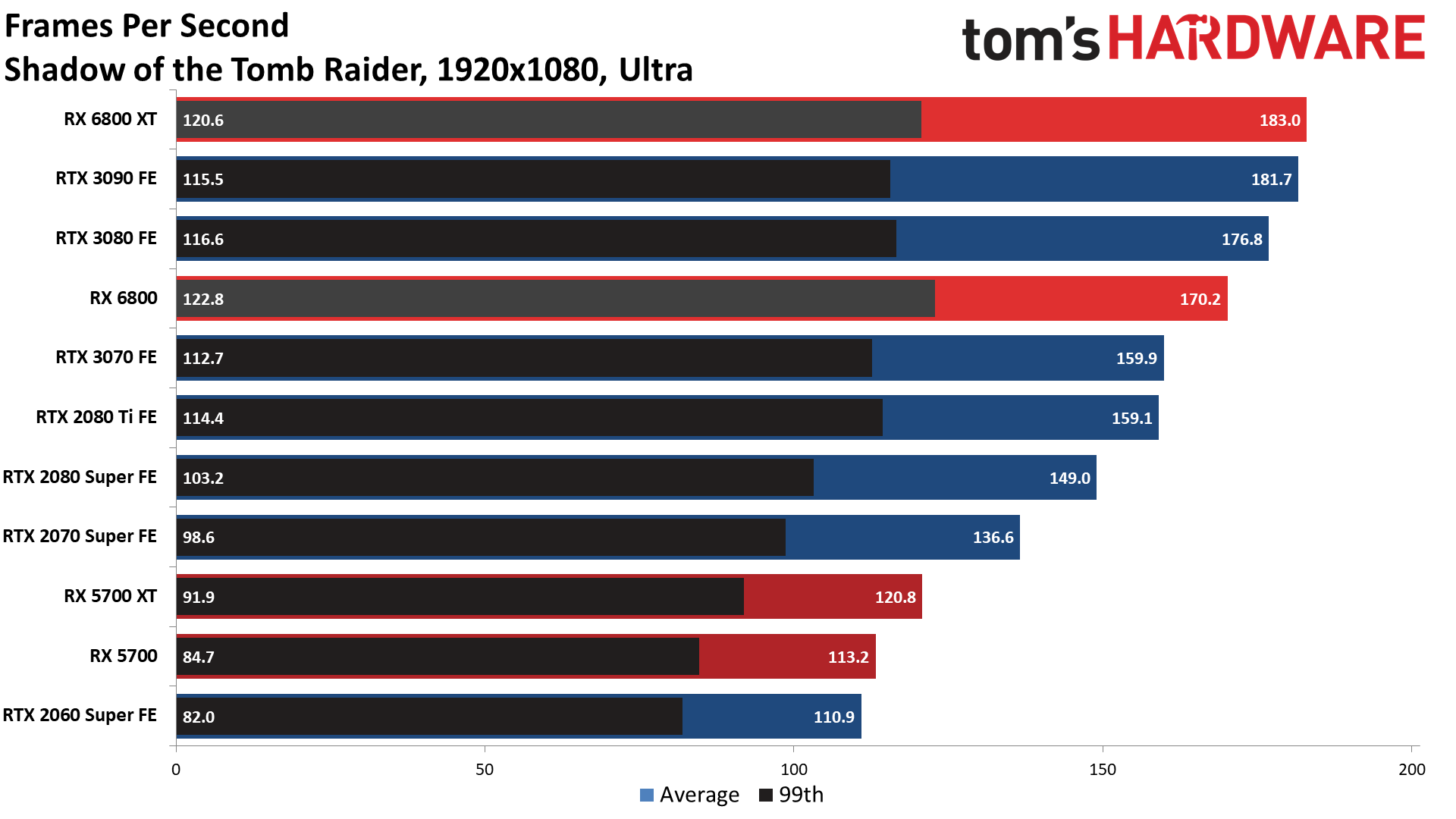

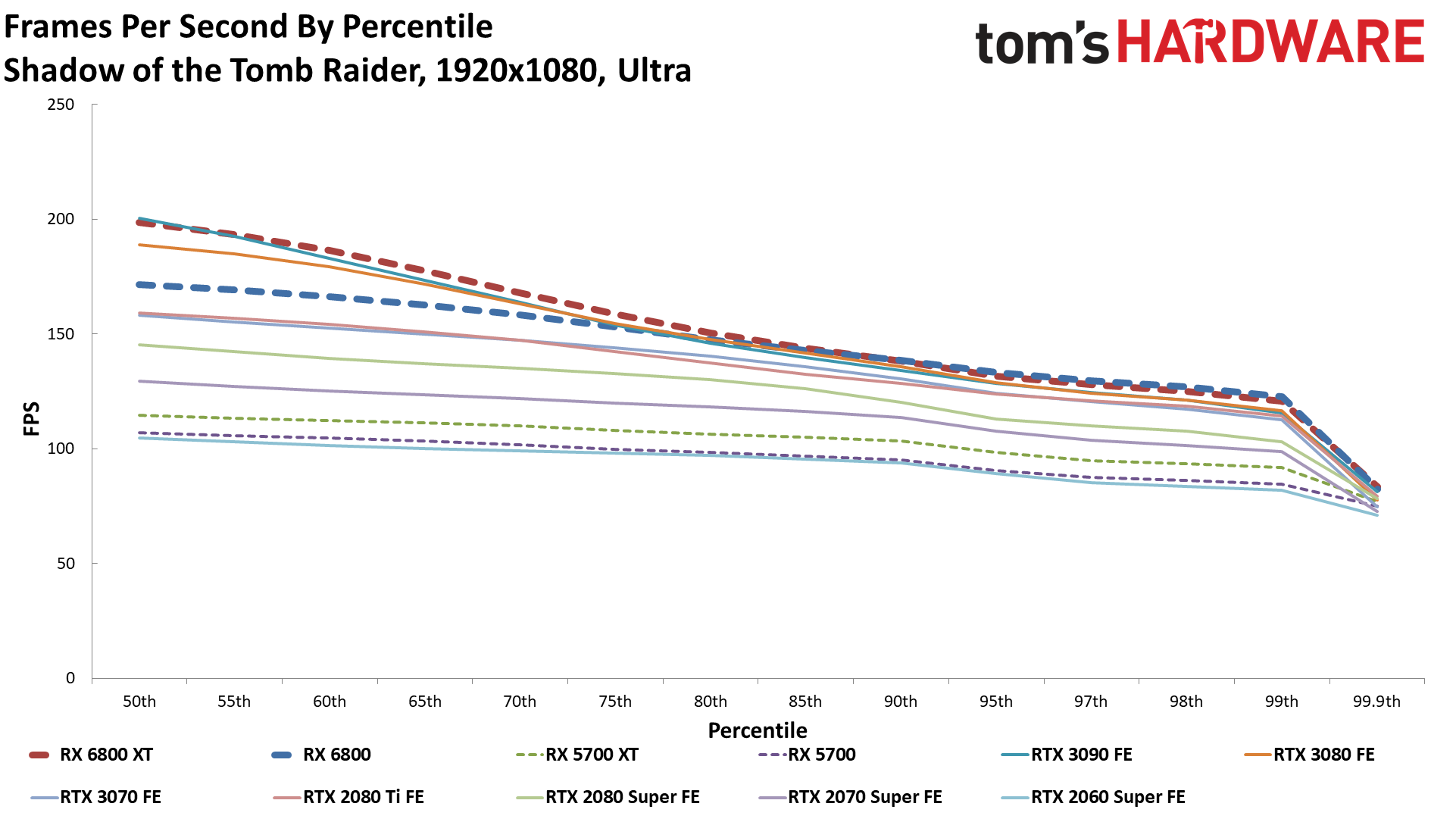

Shadow of the Tomb Raider

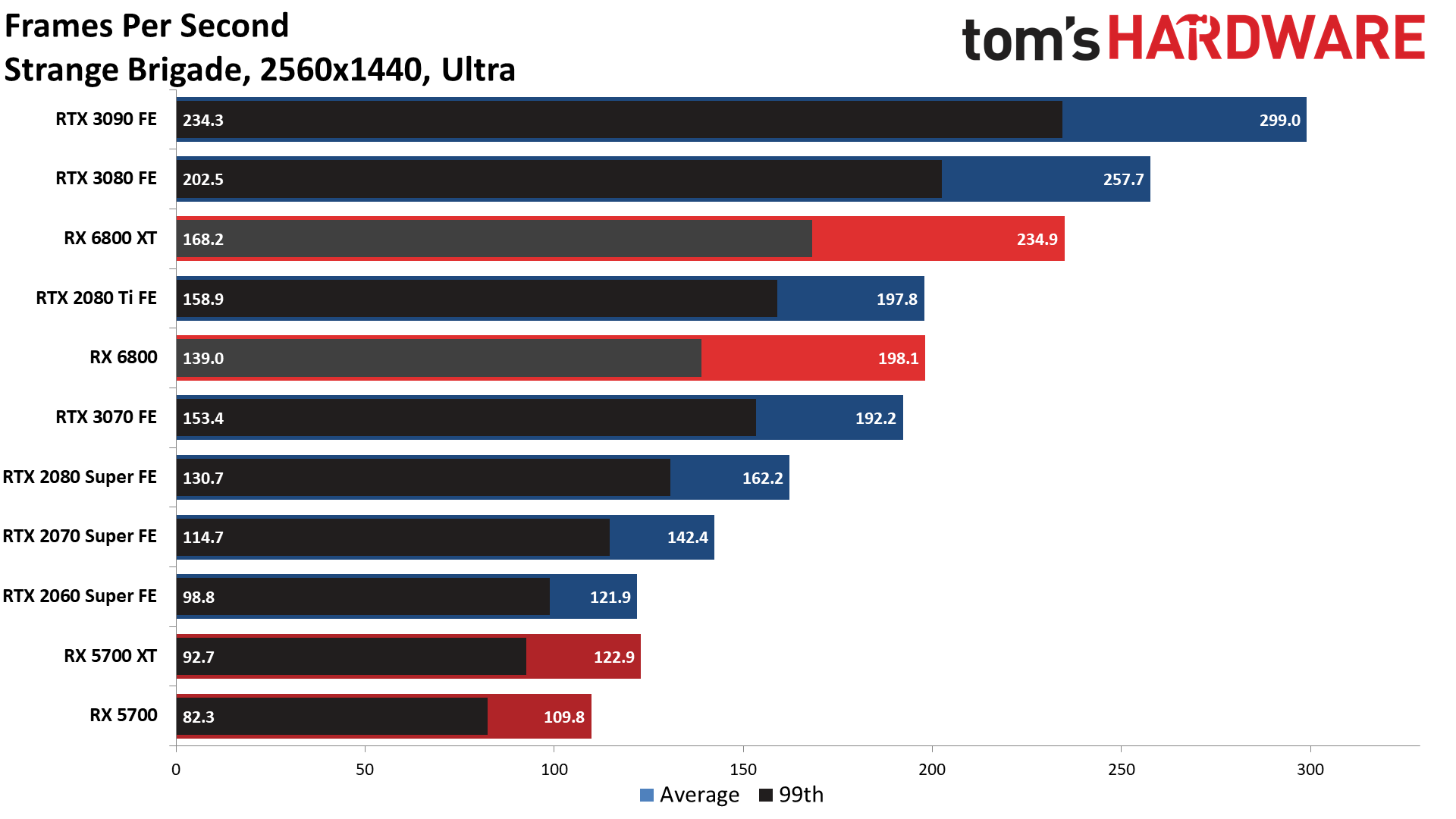

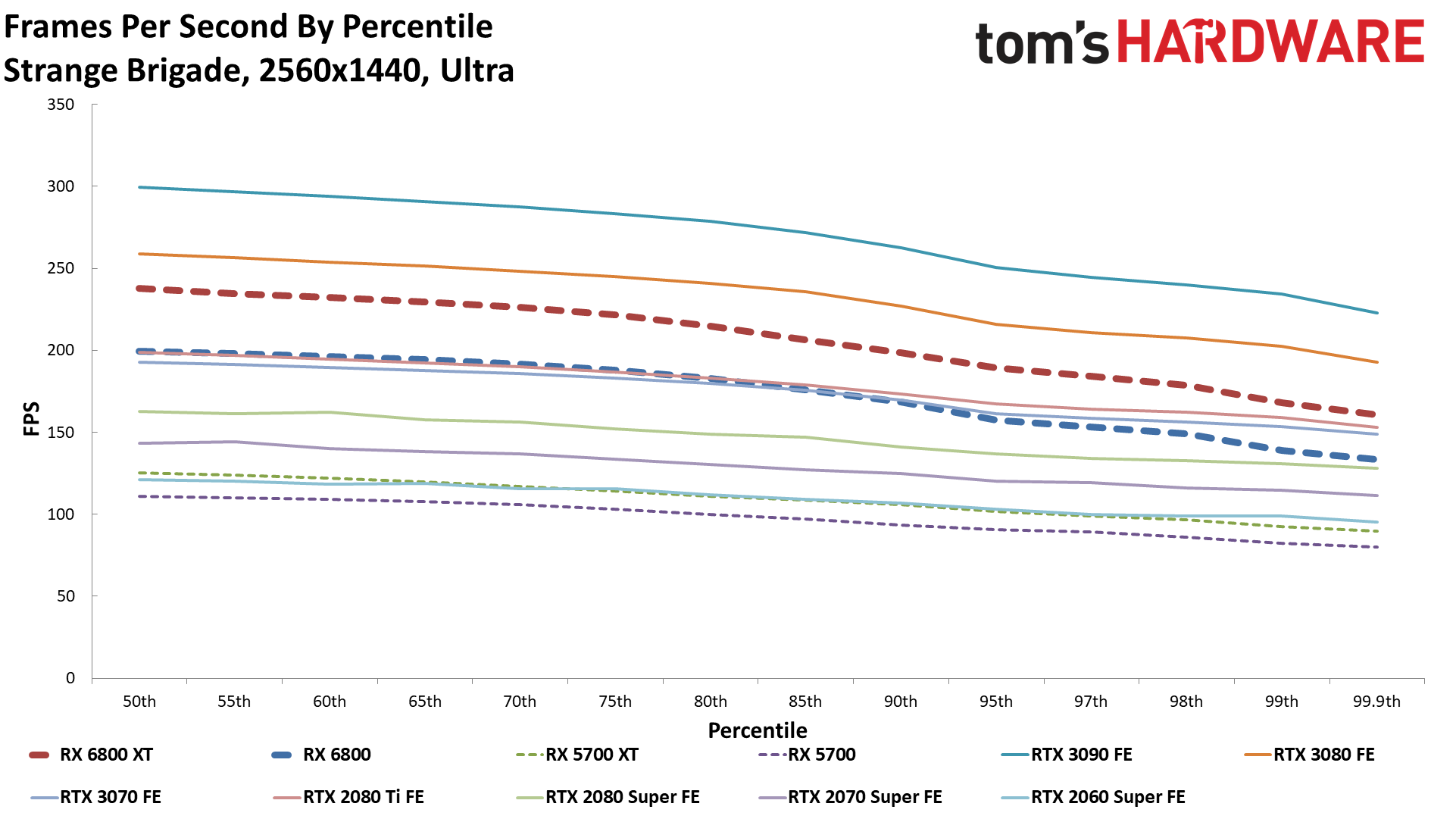

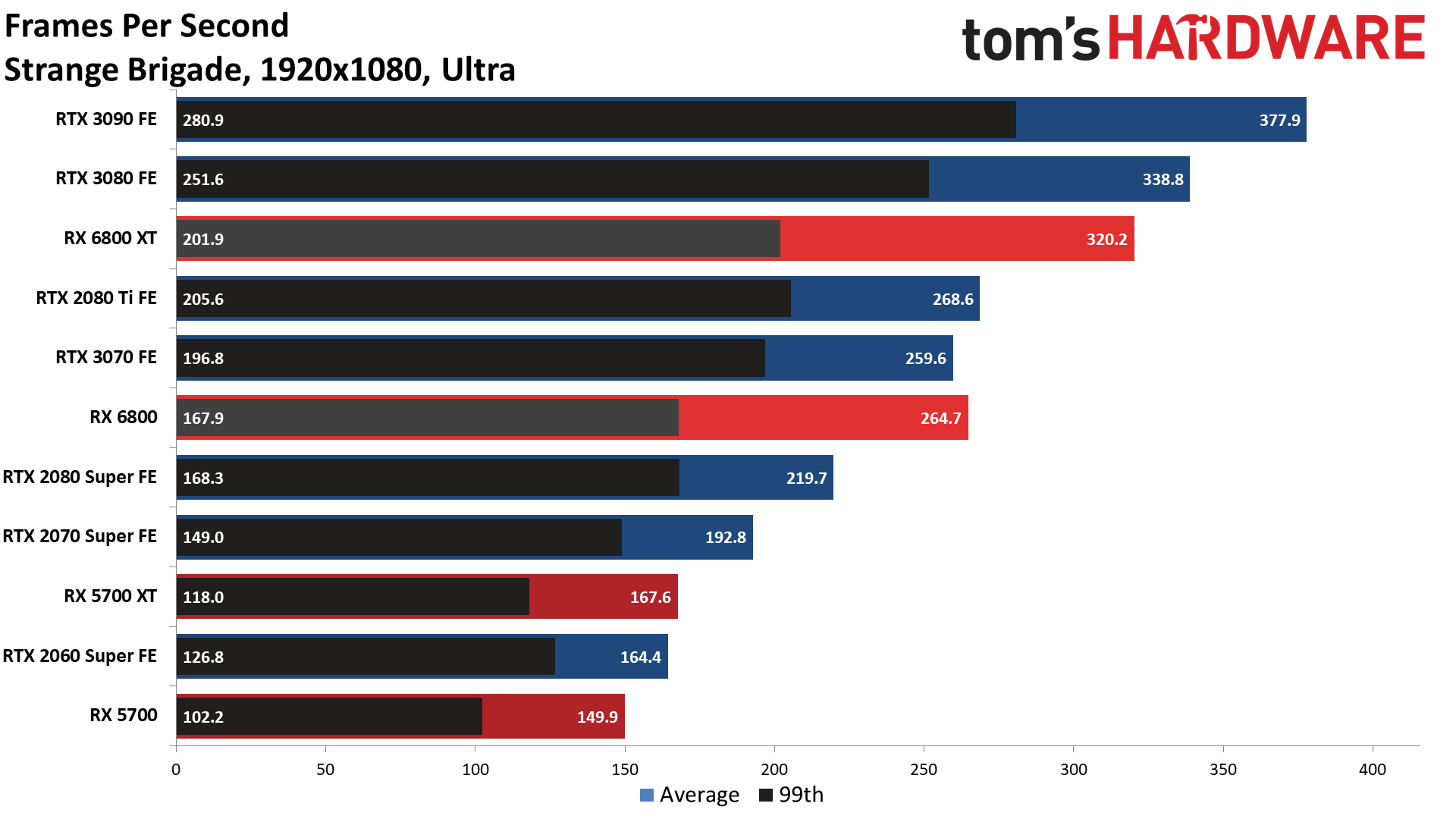

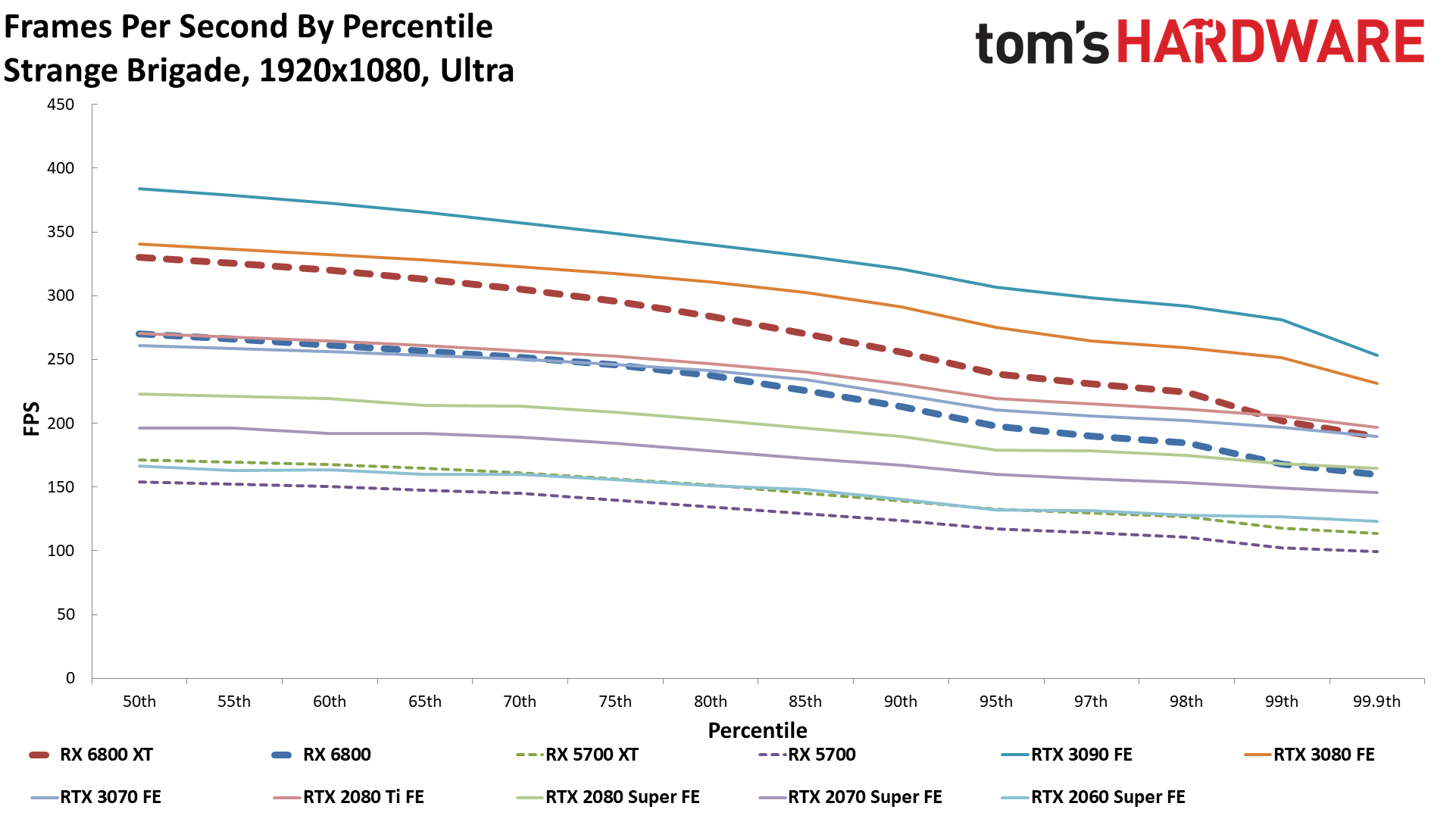

Strange Brigade

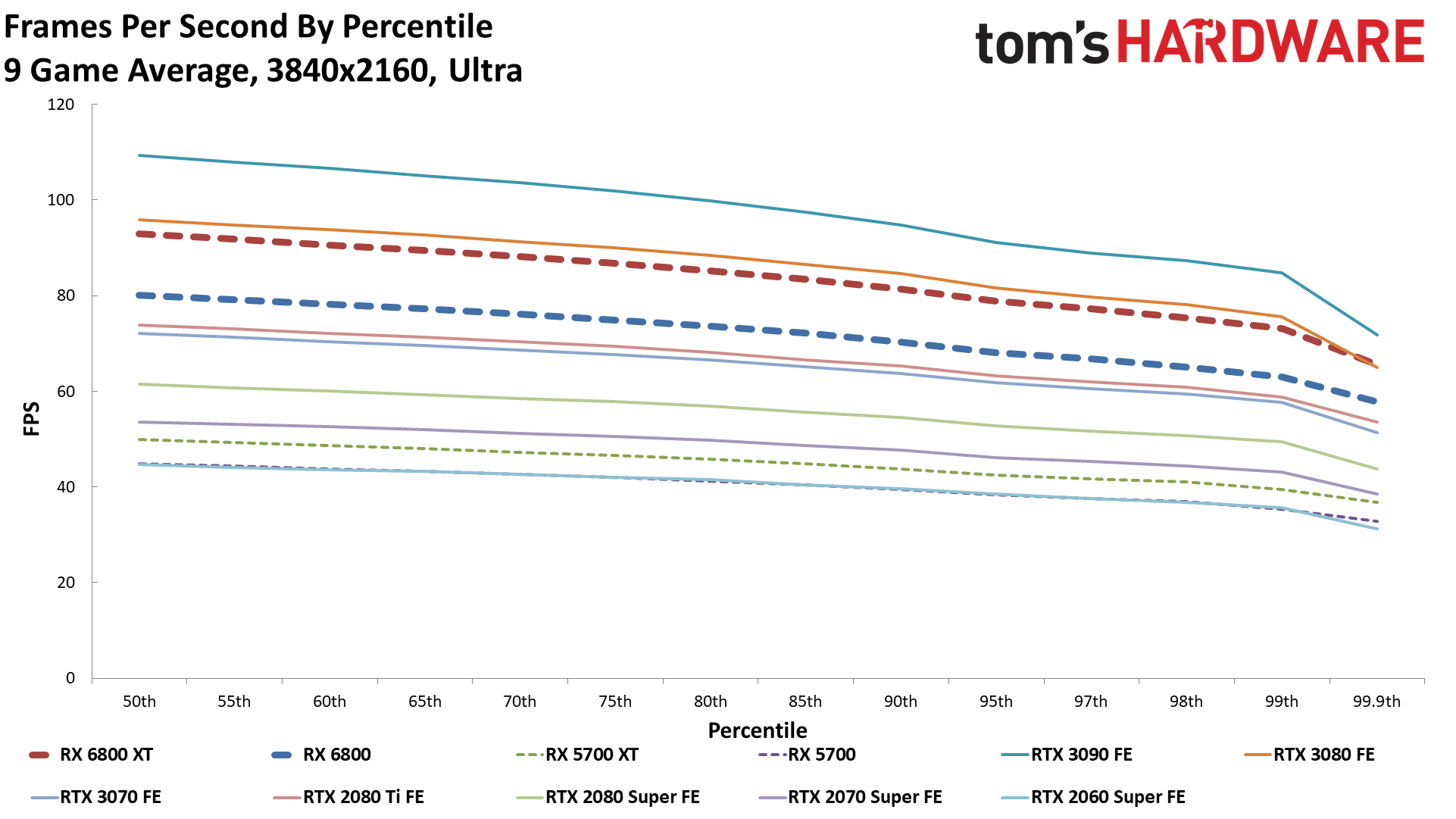

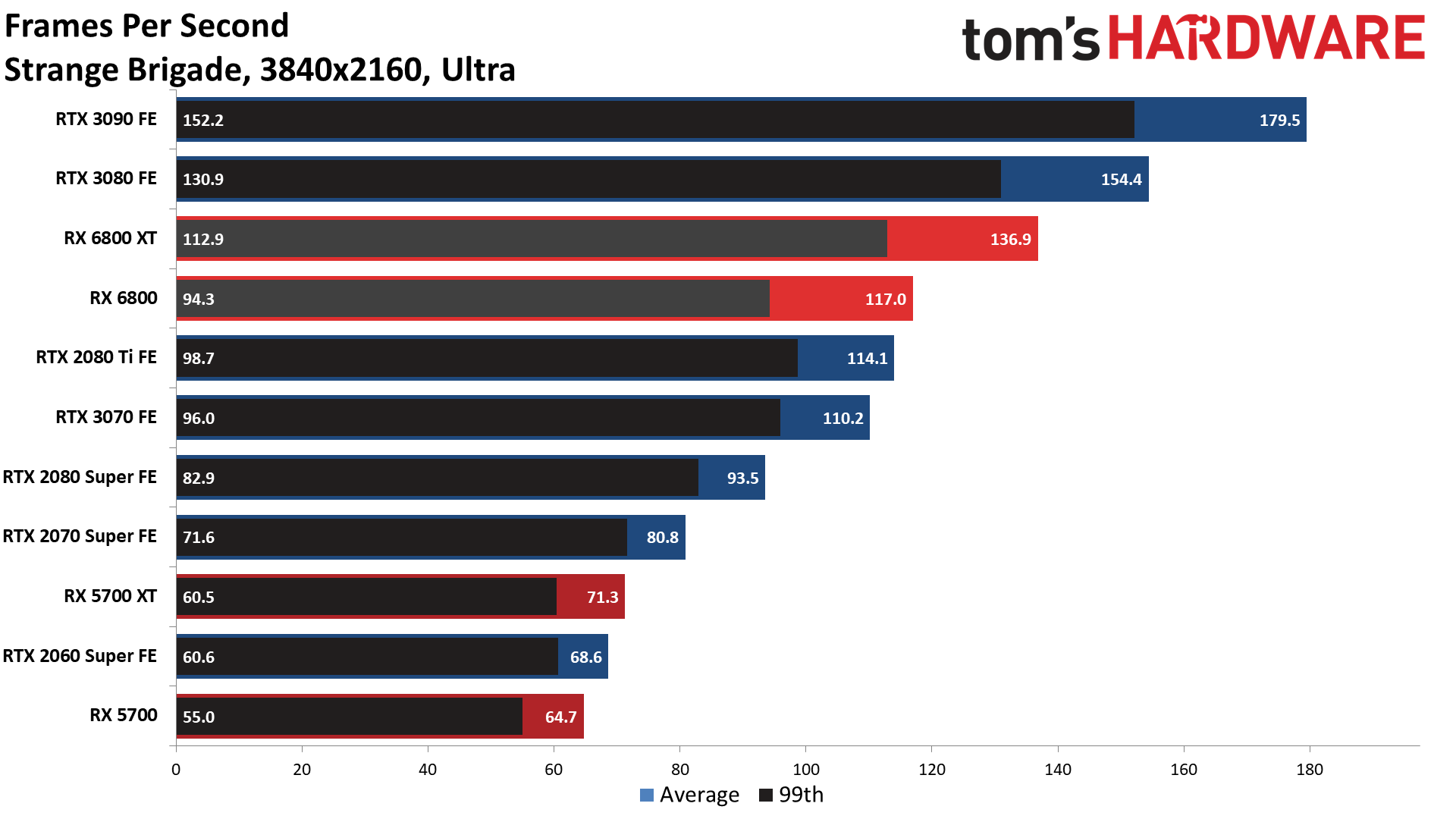

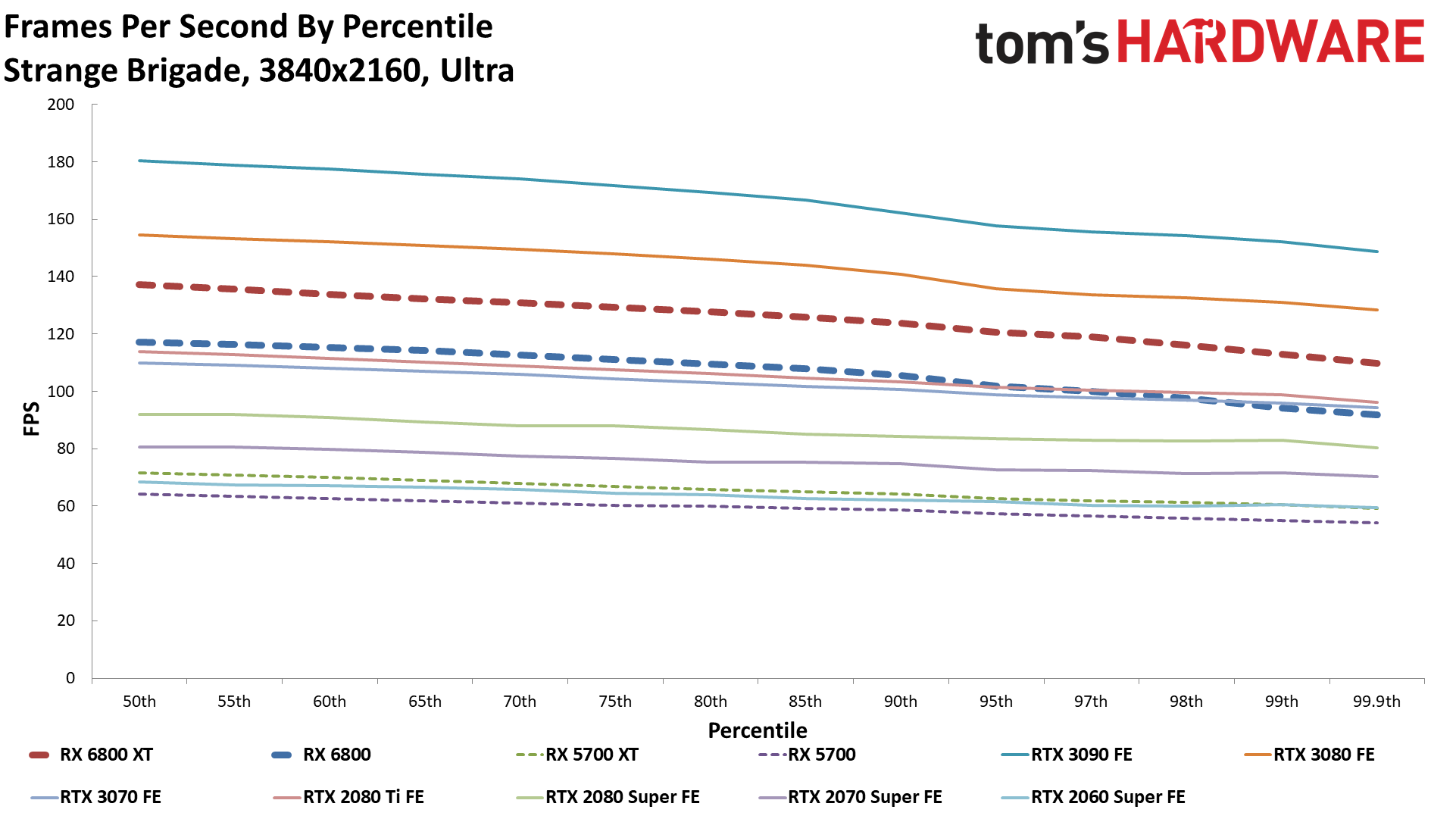

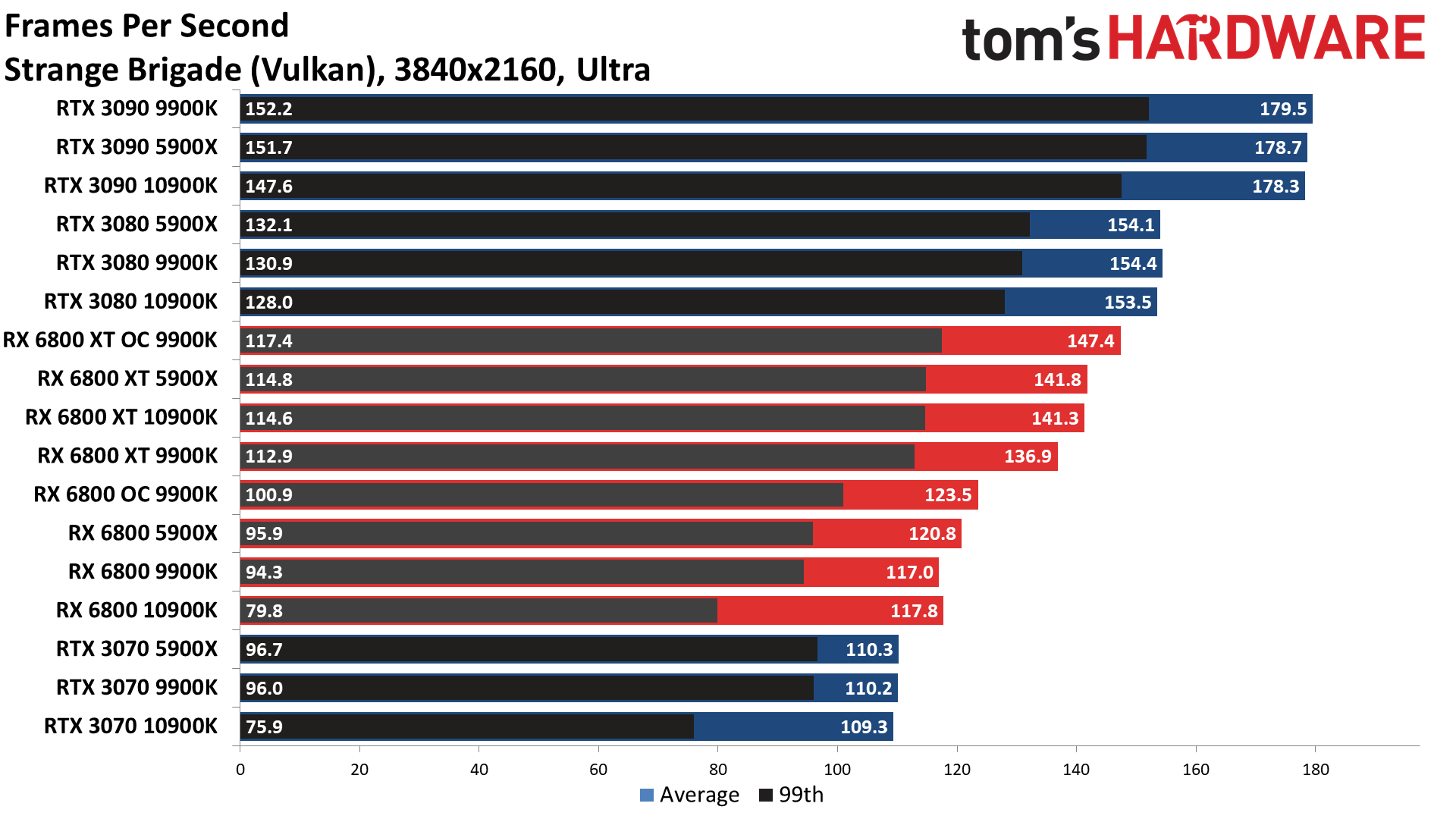

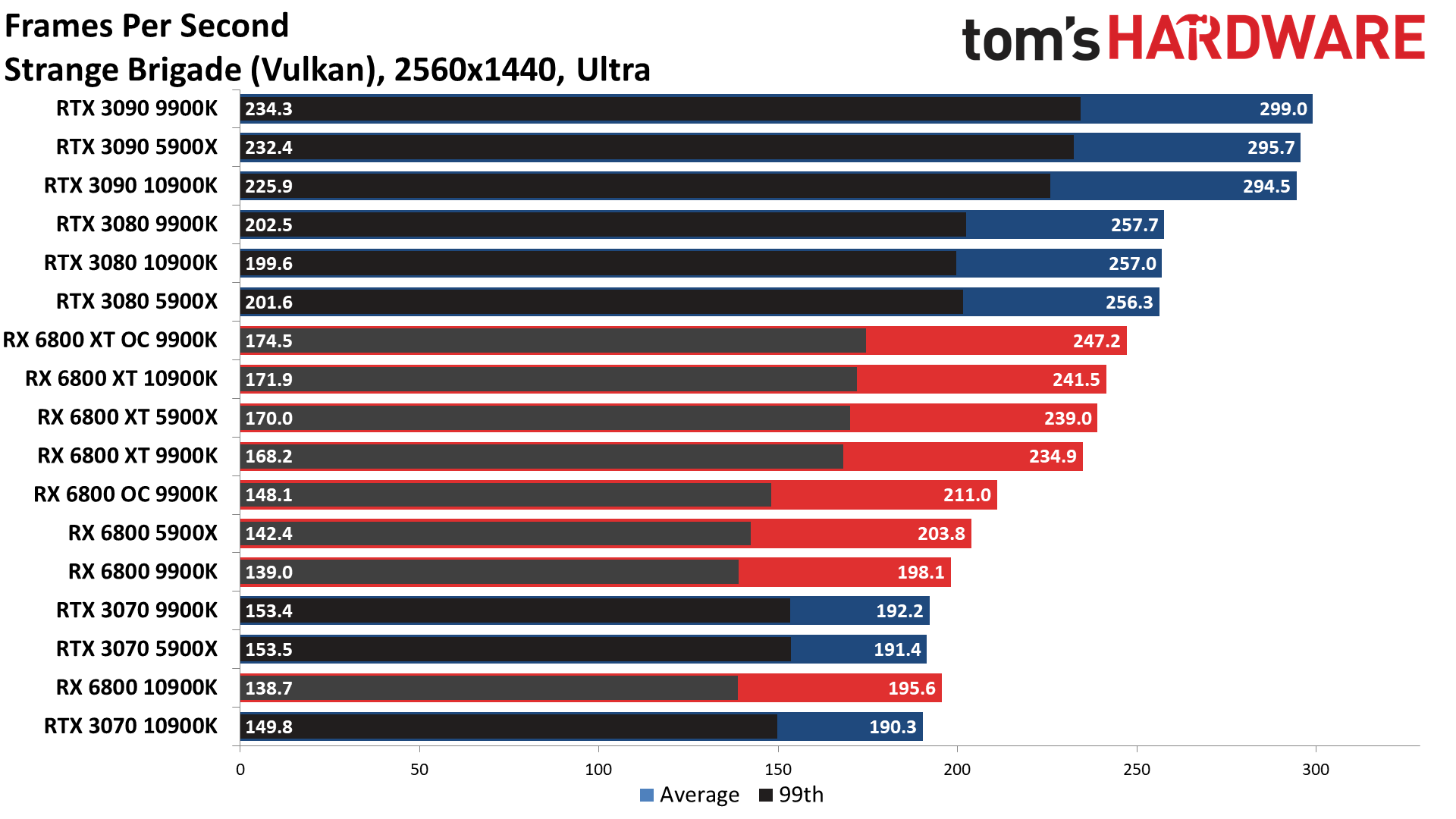

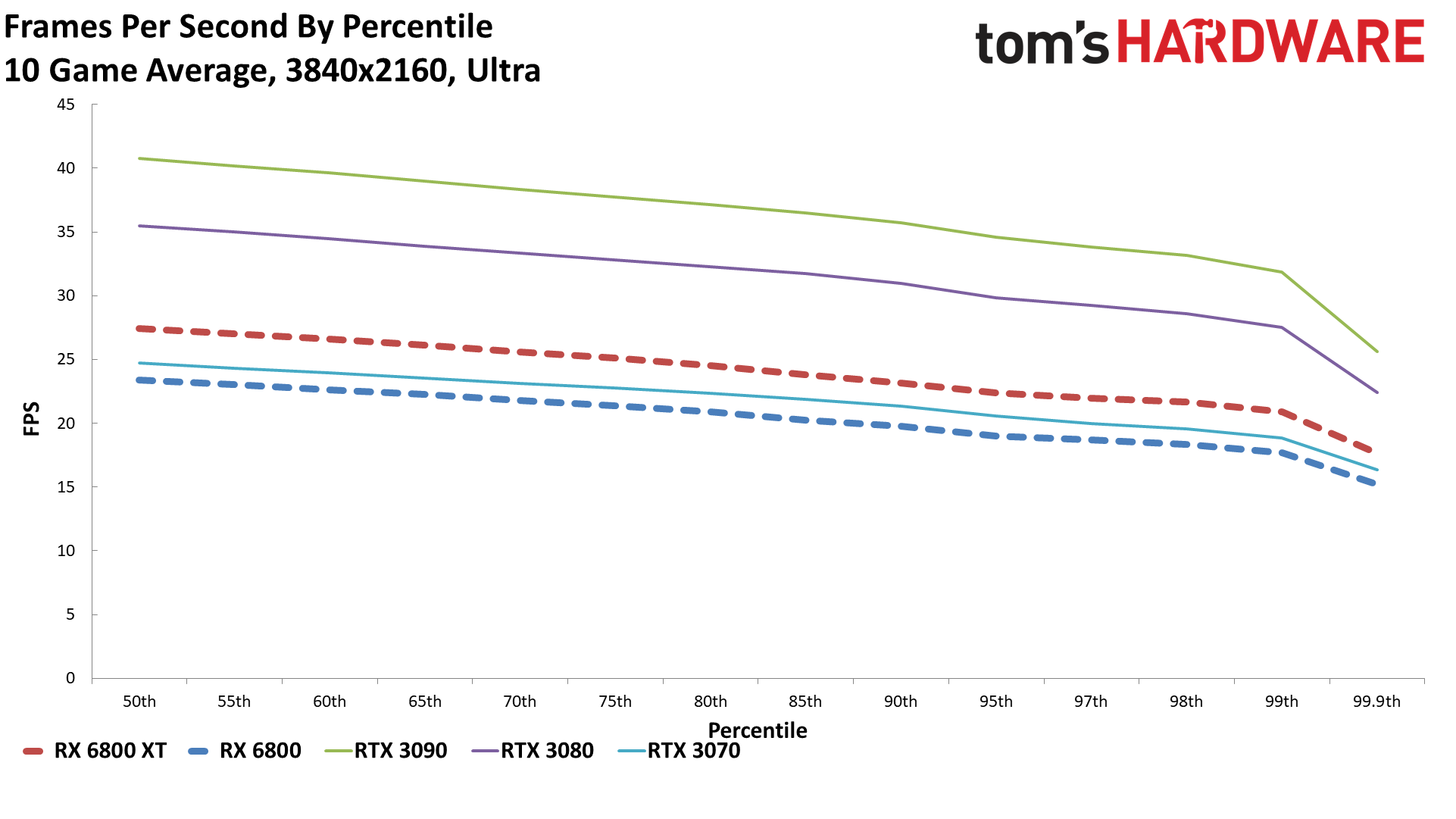

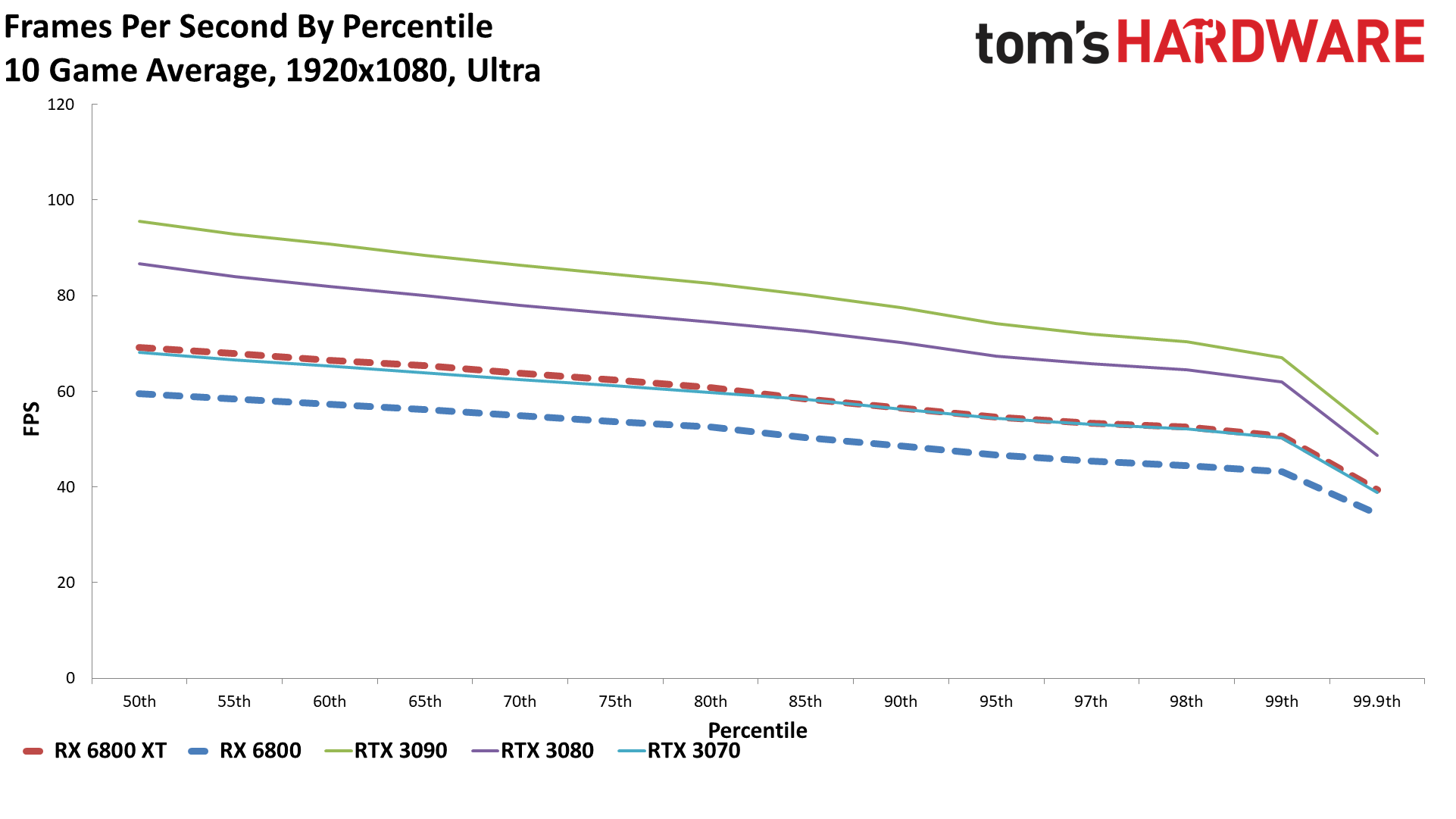

AMD's new GPUs definitely make a good showing in traditional rasterization games. At 4K, Nvidia's RTX 3080 leads the 6800 XT by three percent, but it's not a clean sweep: AMD comes out on top in Borderlands 3, Far Cry 5, and Forza Horizon 4. Meanwhile, Nvidia gets modest wins in The Division 2, Final Fantasy XIV, Metro Exodus, Red Dead Redemption 2, and Shadow of the Tomb Raider, with its largest lead in Strange Brigade. But that's only at the highest resolution, where AMD's Infinity Cache may not be quite as effective.

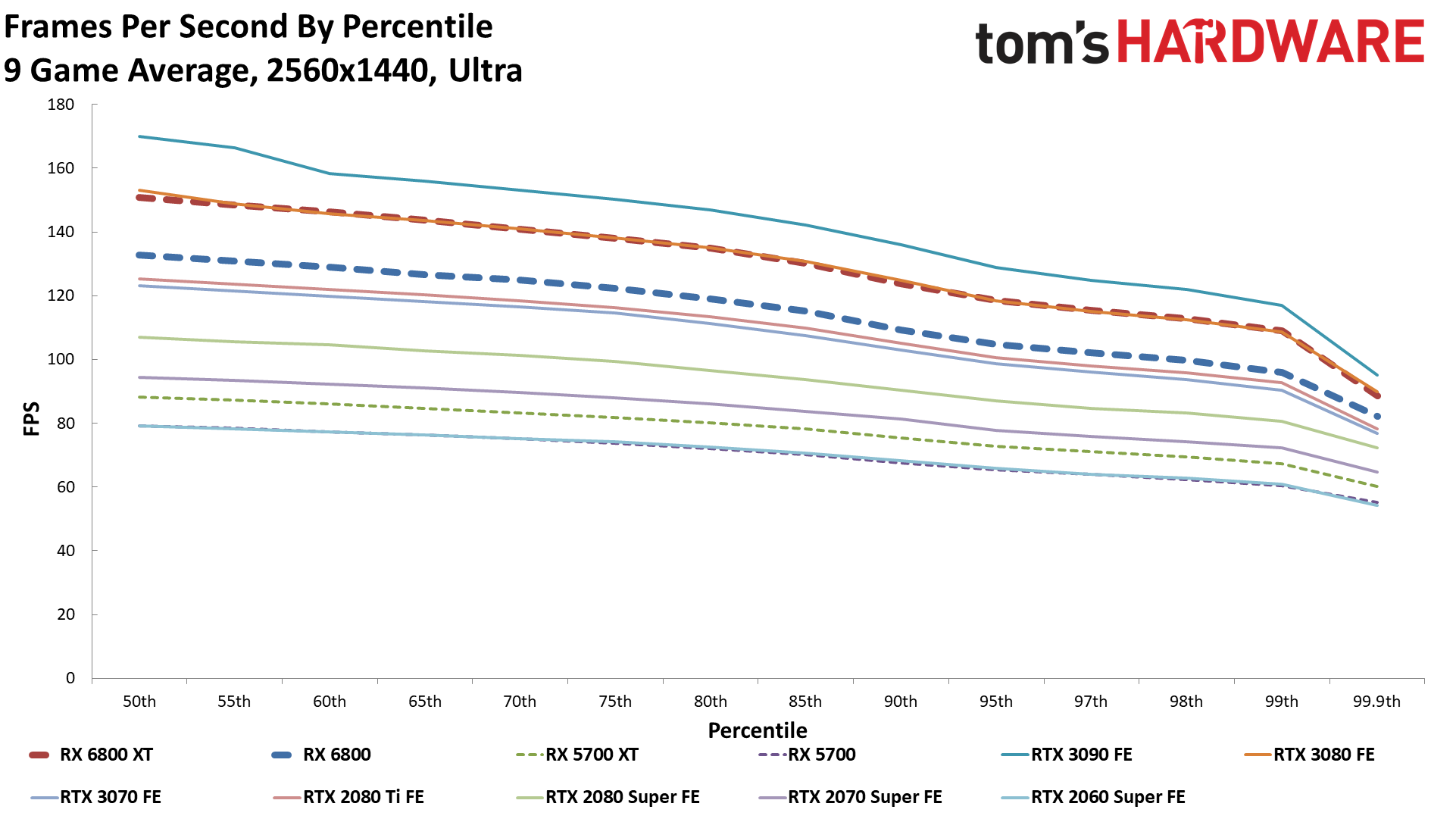

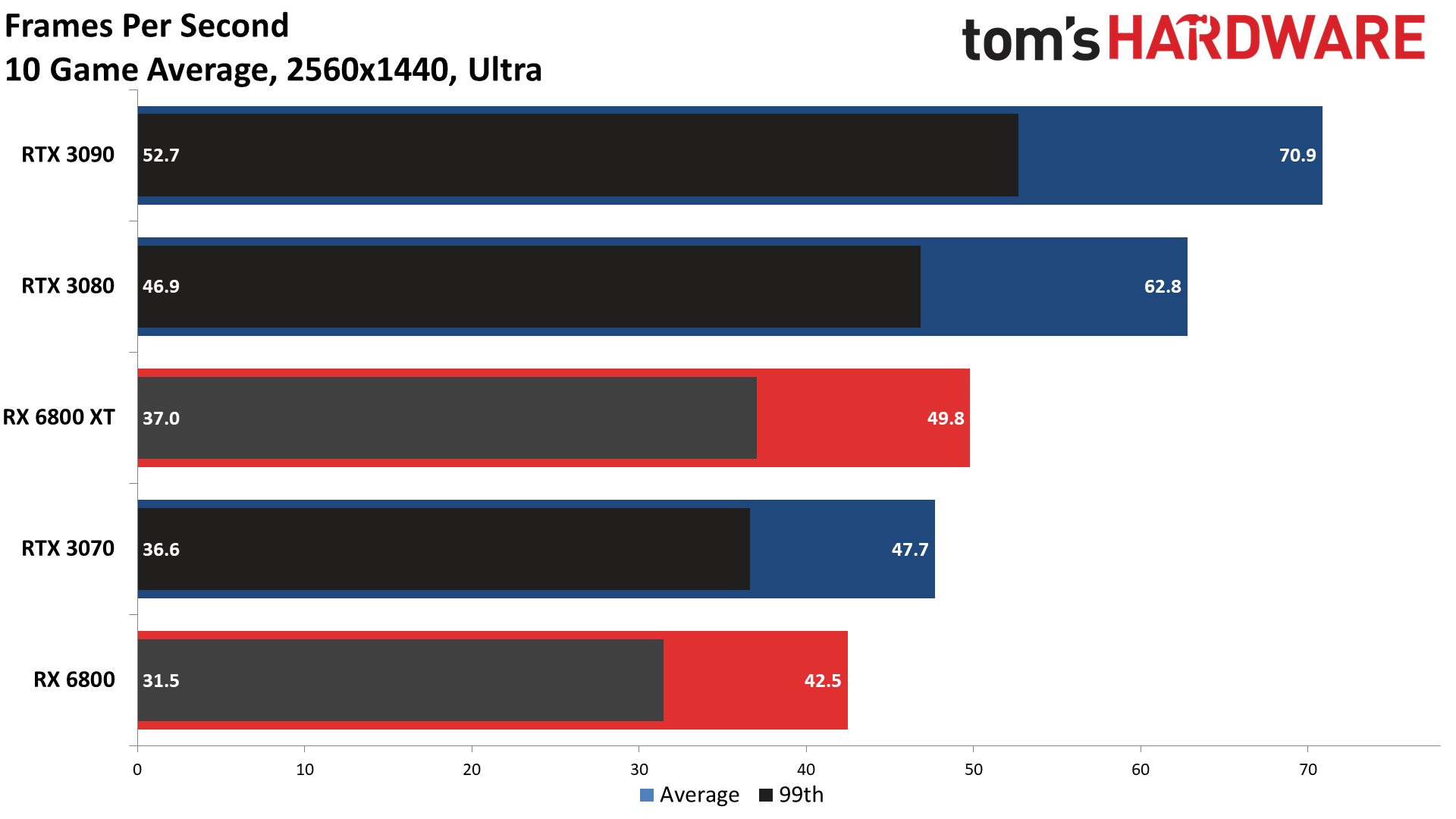

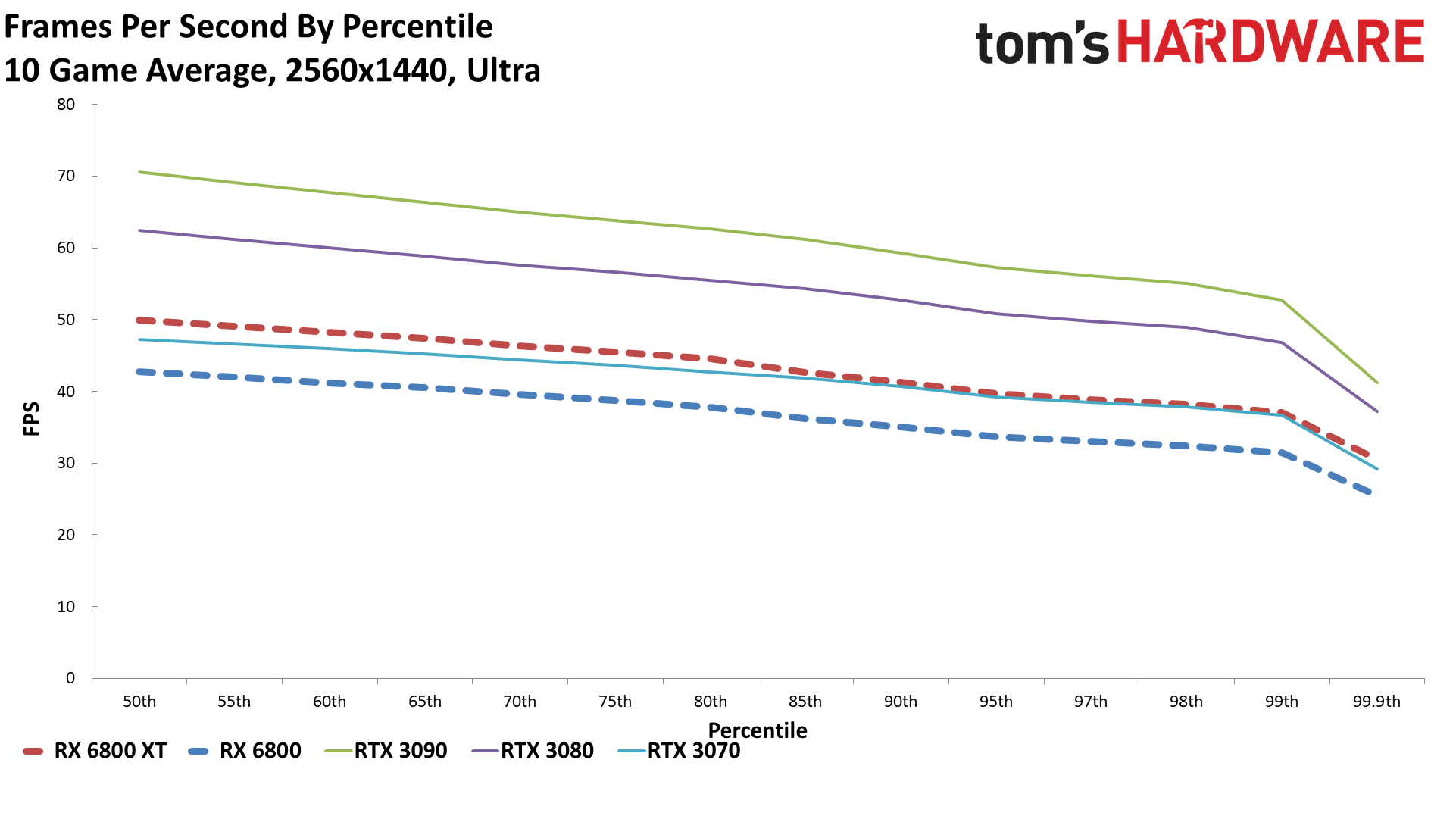

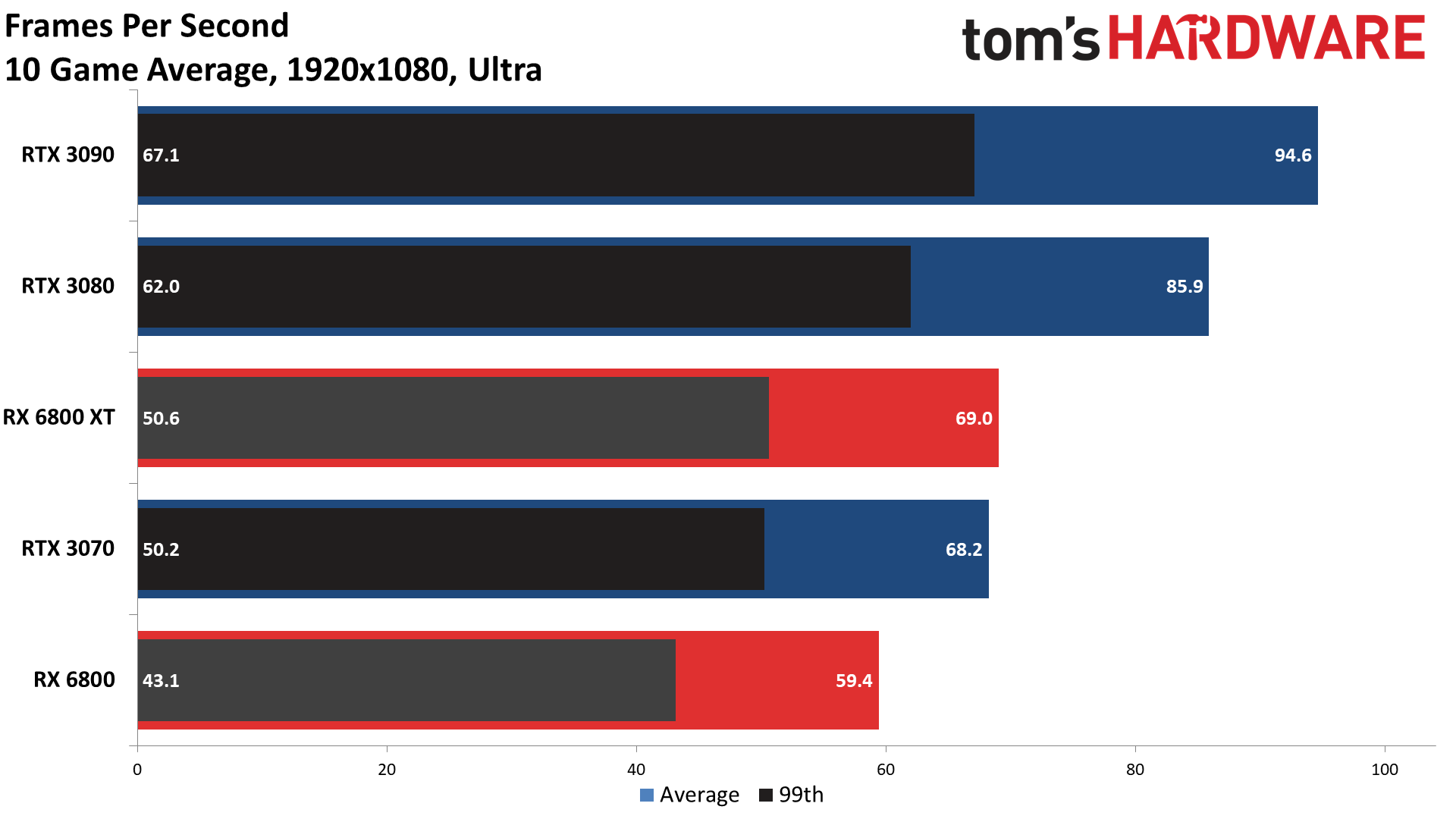

Dropping to 1440p, the RTX 3080 and 6800 XT are effectively tied — again, AMD wins several games, Nvidia wins others, but the average performance is the same. At 1080p, AMD even pulls ahead by two percent overall. Not that we really expect most gamers forking over $650 or $700 or more on a graphics card to stick with a 1080p display, unless it's a 240Hz or 360Hz model.

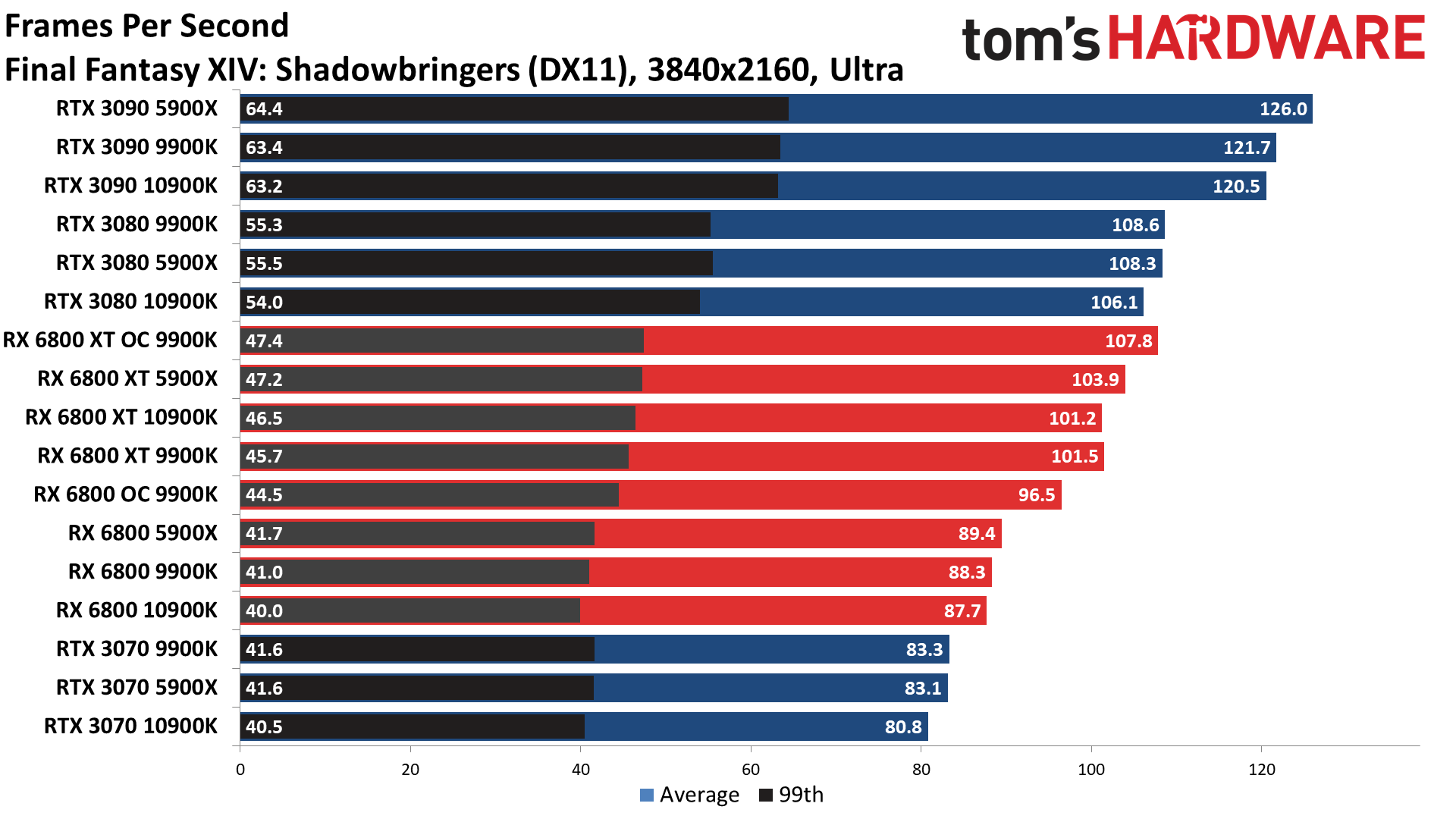

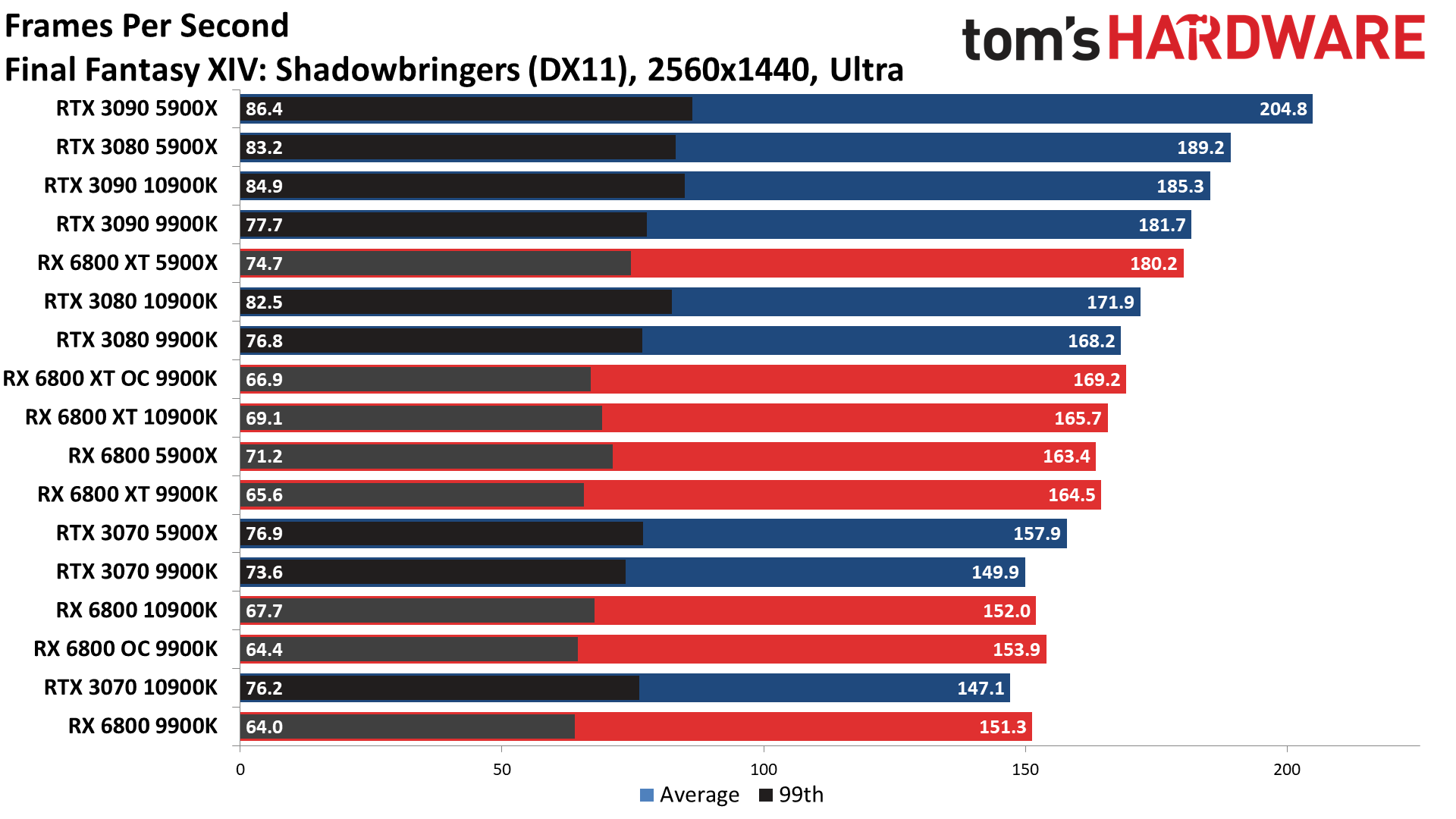

Flipping over to the vanilla RX 6800 and the RTX 3070, AMD does even better. On average, the RX 6800 leads by 11 percent at 4K ultra, nine percent at 1440p ultra, and seven percent at 1080p ultra. Here the 8GB of GDDR6 memory on the RTX 3070 simply can't keep pace with the 16GB of higher clocked memory — and the Infinity Cache — that AMD brings to the party. The best Nvidia can do is one or two minor wins (e.g., Far Cry 5 at 1080p, where the GPUs are more CPU limited) and slightly higher minimum fps in FFXIV and Strange Brigade.

But as good as the RX 6800 looks against the RTX 3070, we prefer the RX 6800 XT from AMD. It only costs $70 more, which is basically the cost of one game and a fast food lunch. Or put another way, it's 12 percent more money, for 12 percent more performance at 1080p, 14 percent more performance at 1440p, and 16 percent better 4K performance. You also get AMD's Rage Mode pseudo-overclocking (really just increased power limits).
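The value argument above is simple ratio math. This sketch uses the launch prices from the spec table and the average performance gaps quoted in the preceding paragraph. "Performance per dollar" here is just the performance ratio divided by the price ratio.

```python
def pct_change(new: float, old: float) -> float:
    """Percentage difference of new relative to old."""
    return (new / old - 1.0) * 100.0


price_6800, price_6800xt = 579, 649   # launch prices from the spec table
price_ratio = price_6800xt / price_6800
price_delta = pct_change(price_6800xt, price_6800)

# Average RX 6800 XT lead over the RX 6800, as quoted in the text.
perf_uplift = {"1080p": 12, "1440p": 14, "4K": 16}

print(f"price: +${price_6800xt - price_6800} (+{price_delta:.1f}%)")
for res, gain in perf_uplift.items():
    perf_per_dollar = (1 + gain / 100) / price_ratio
    print(f"{res}: +{gain}% performance, relative perf per dollar x{perf_per_dollar:.3f}")
```

At 1080p the two cards deliver essentially the same performance per dollar. At 4K the 6800 XT comes out about 3.5 percent ahead, which supports recommending the XT for high-resolution gaming.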

Radeon RX 6800 CPU Scaling and Overclocking

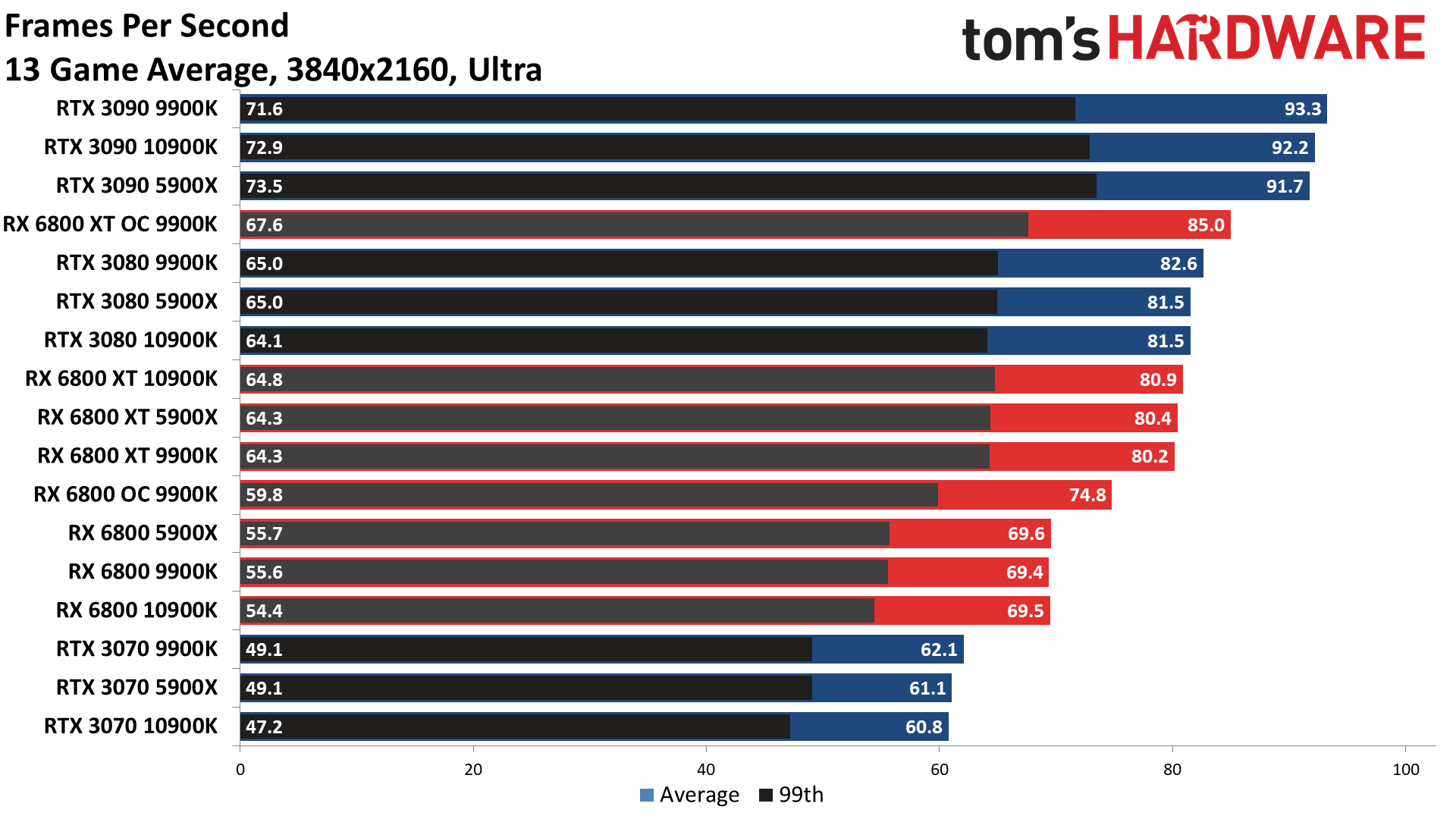

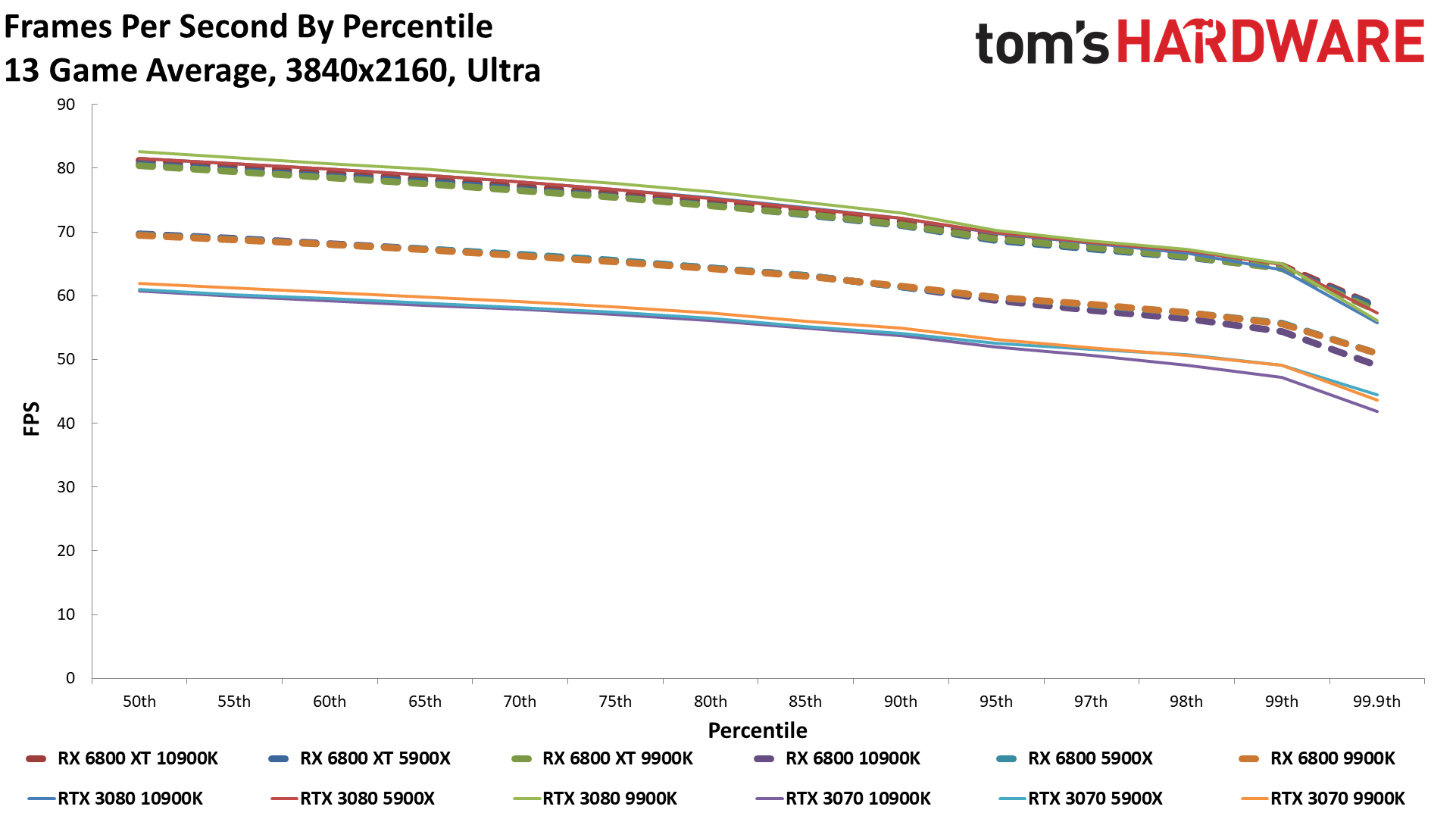

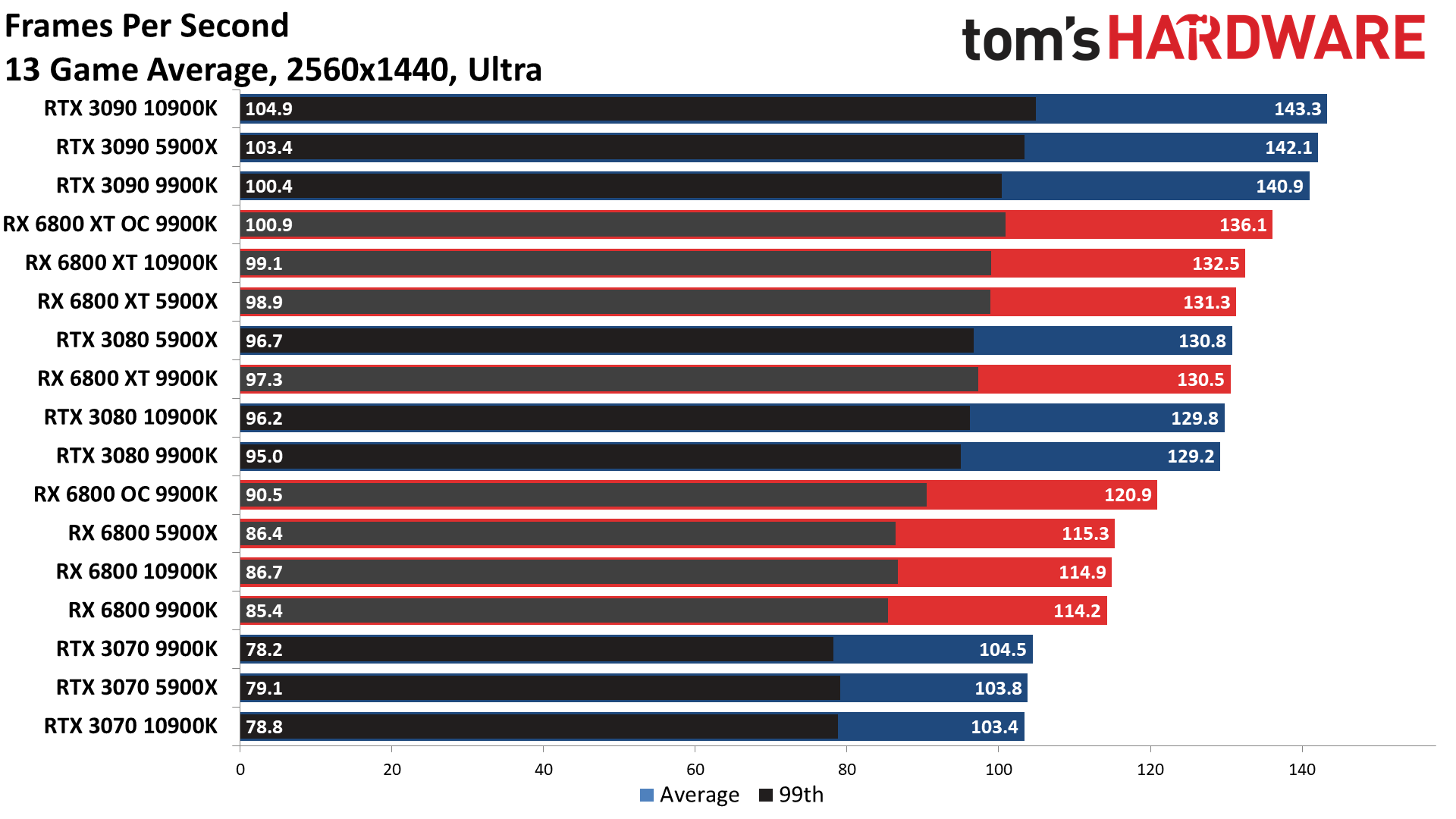

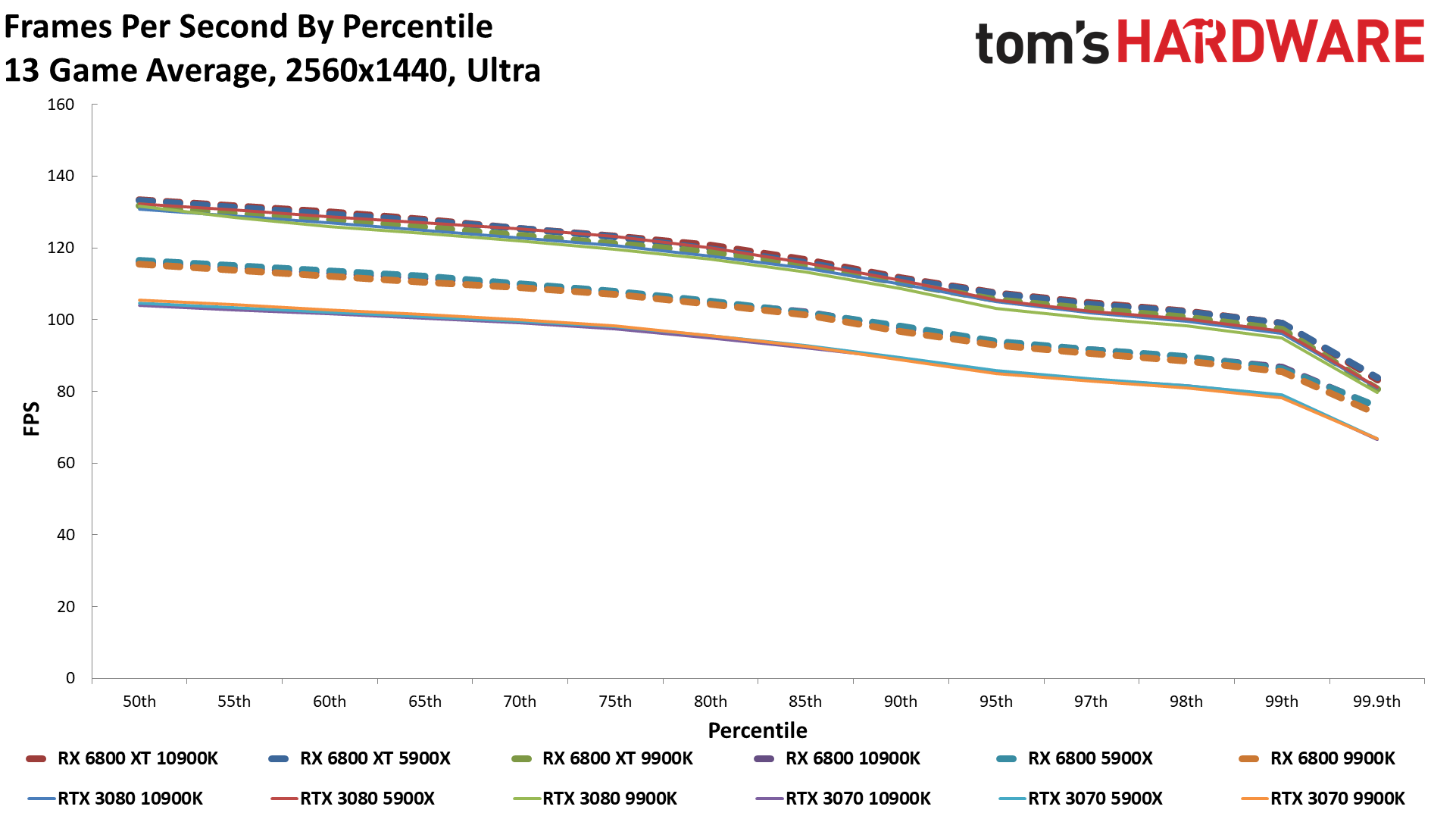

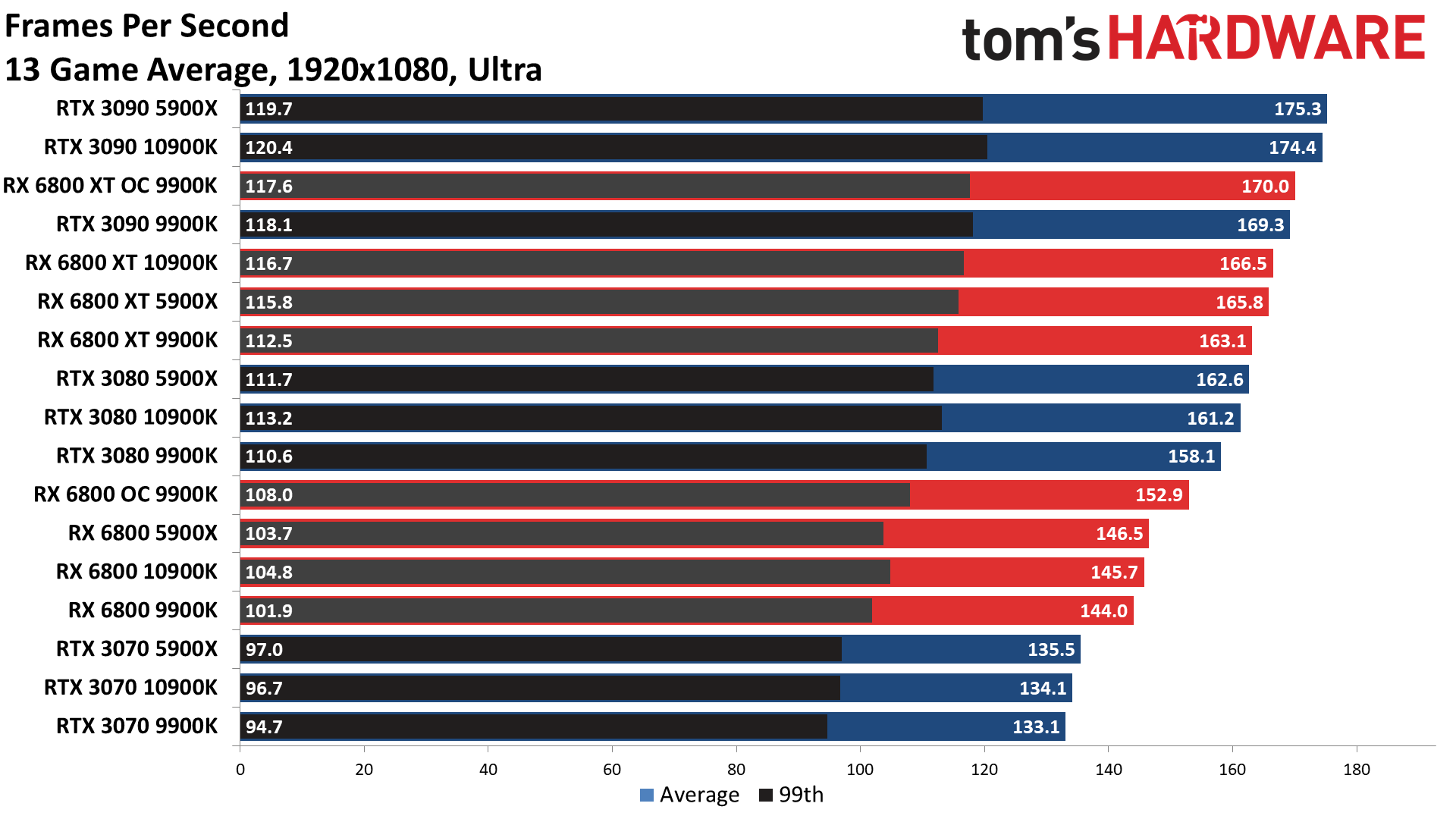

Our traditional gaming suite is due for retirement, but we didn't want to toss it out at the same time as a major GPU launch — it might look suspicious. We didn't have time to do a full suite of CPU scaling tests, but we did run 13 games on the five most recent high-end/extreme GPUs on our three test PCs. Here's the next series of charts, again with commentary below.

13-Game Average

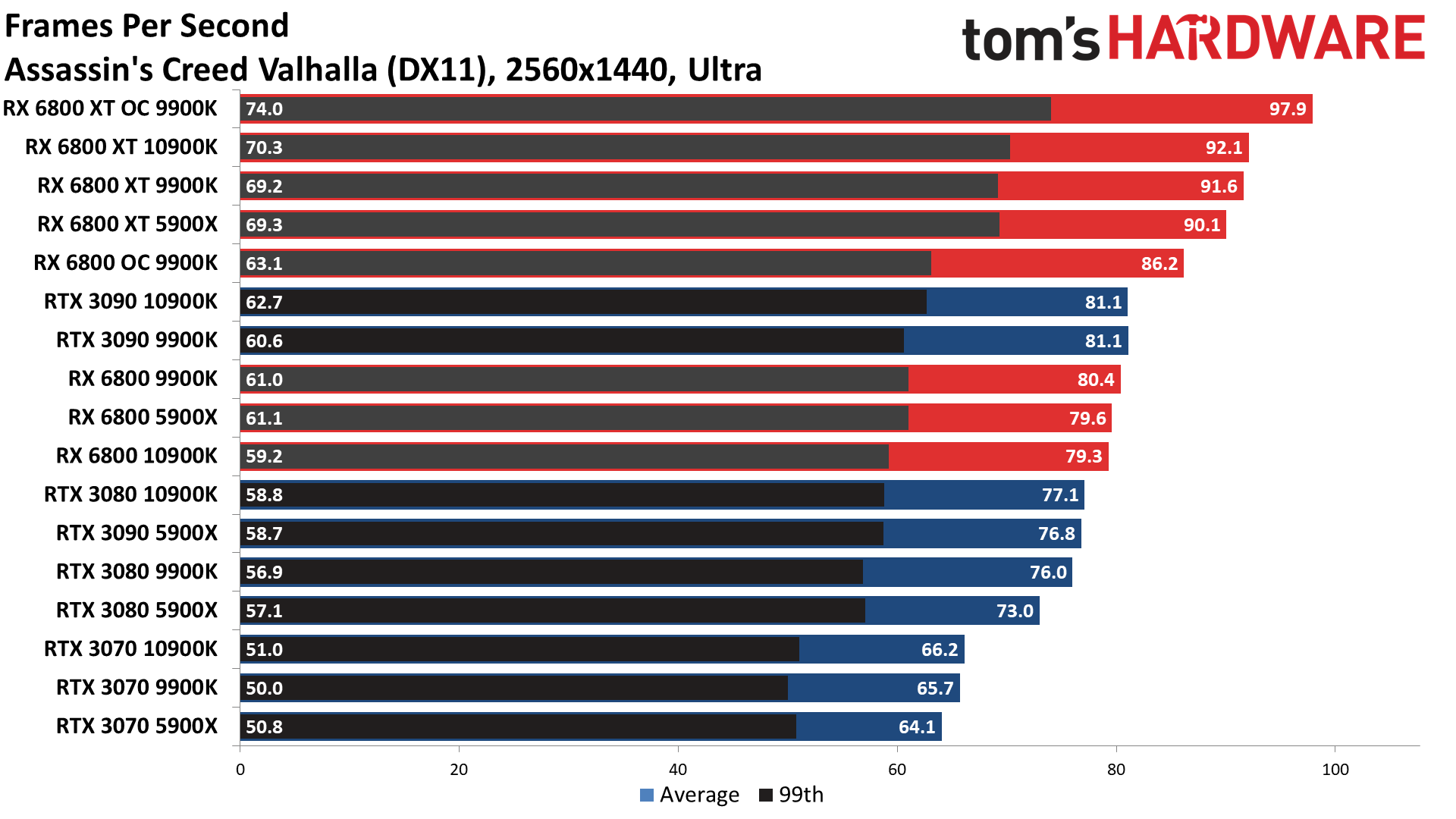

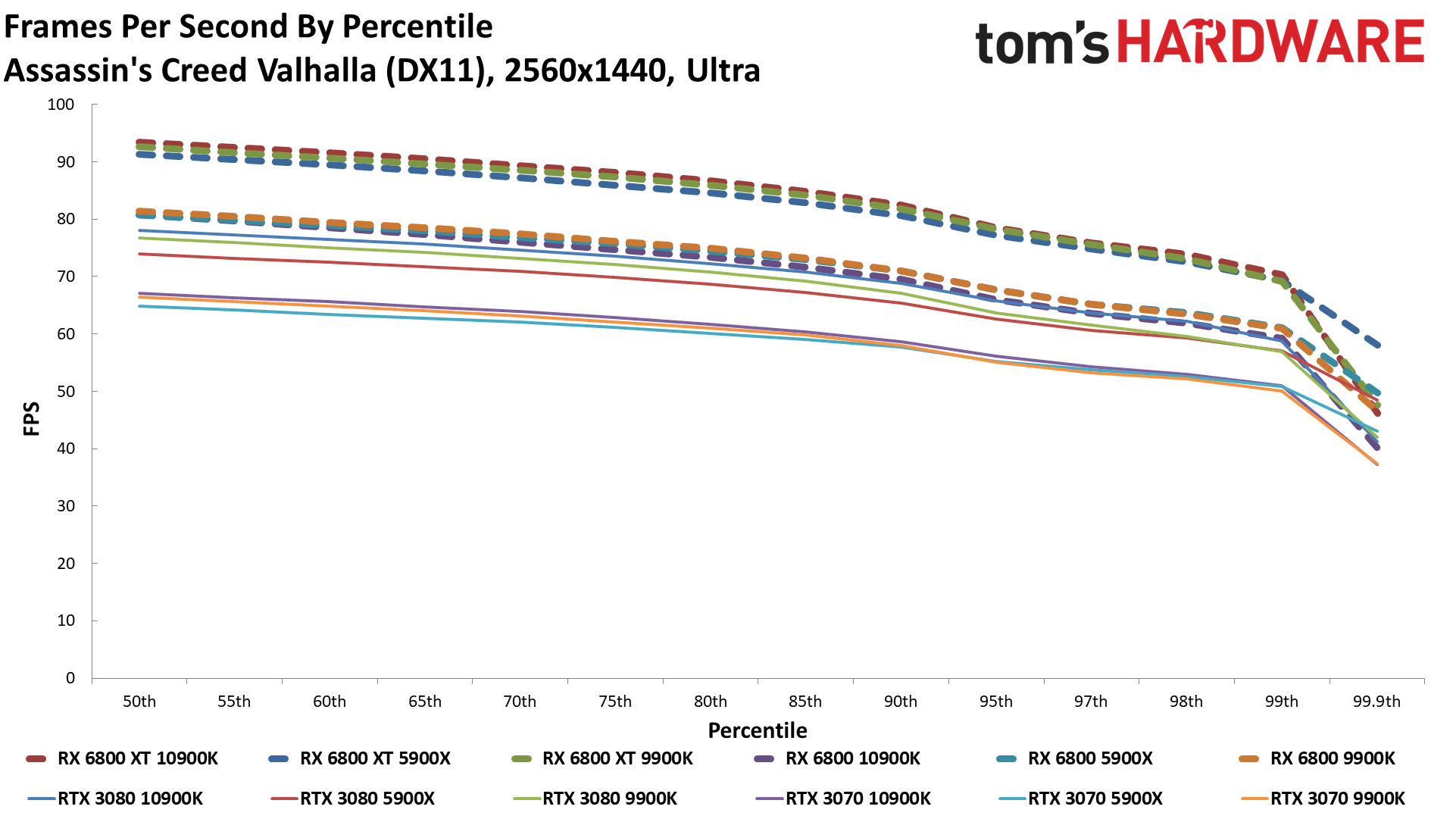

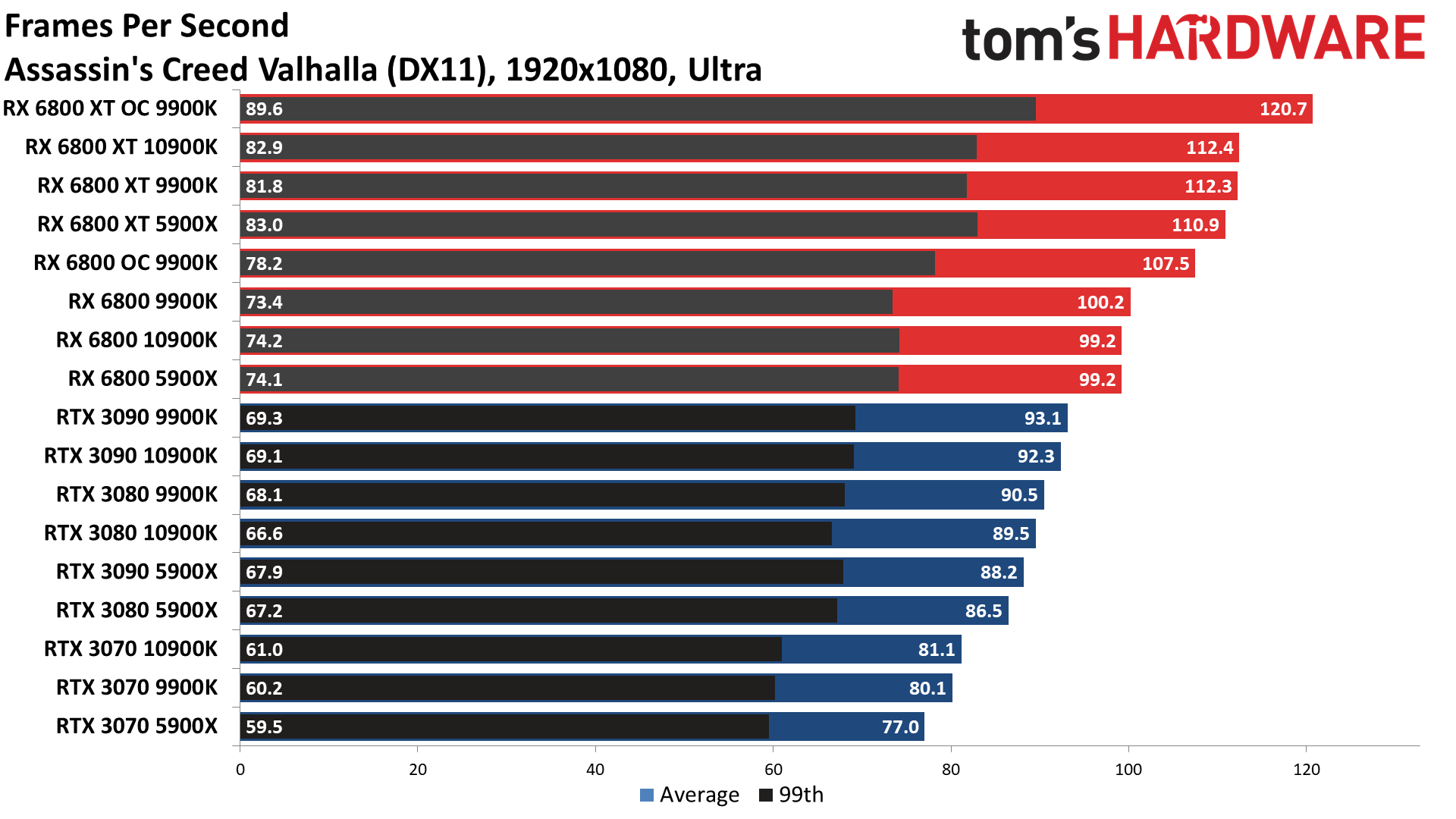

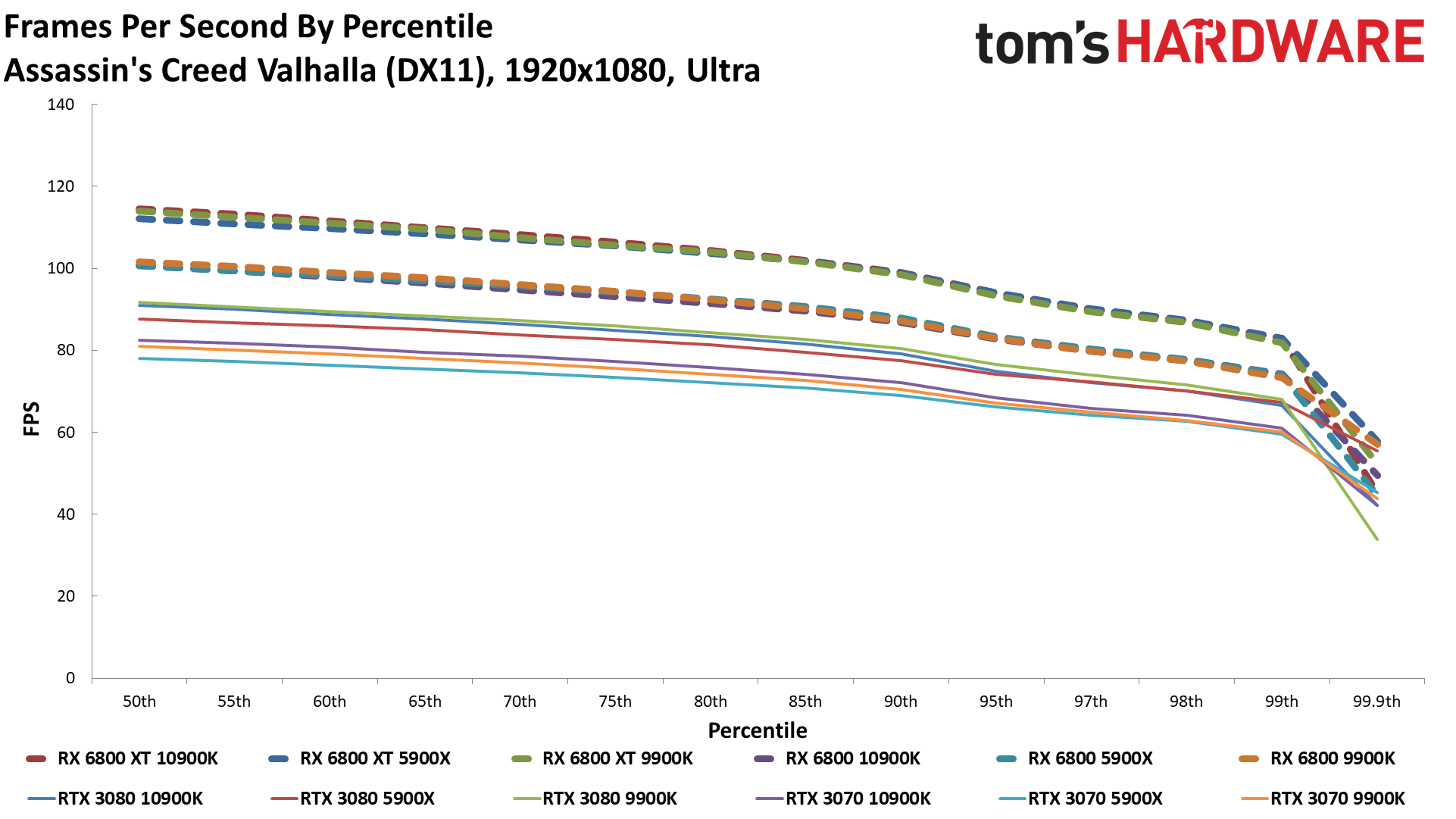

Assassin's Creed Valhalla

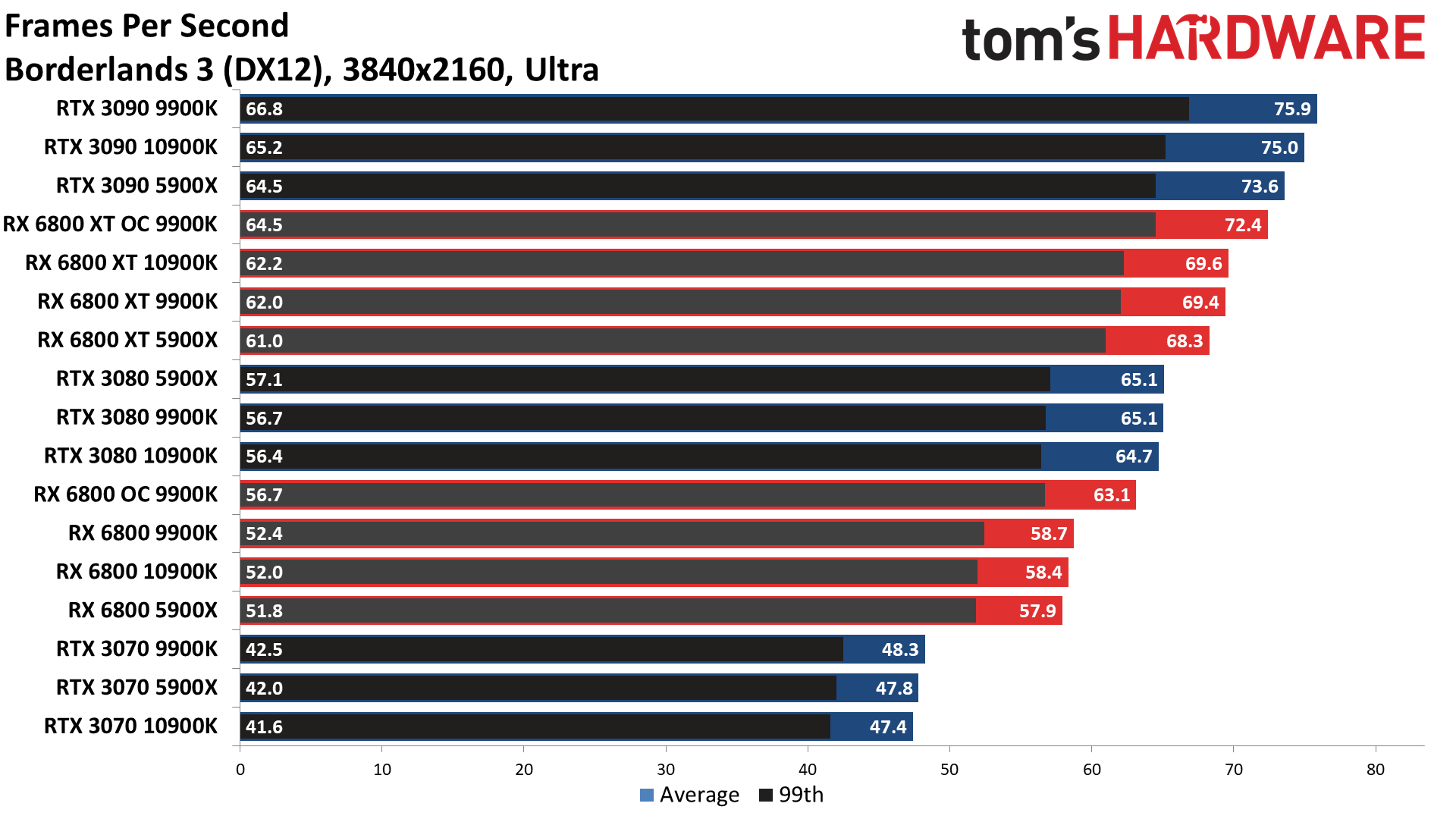

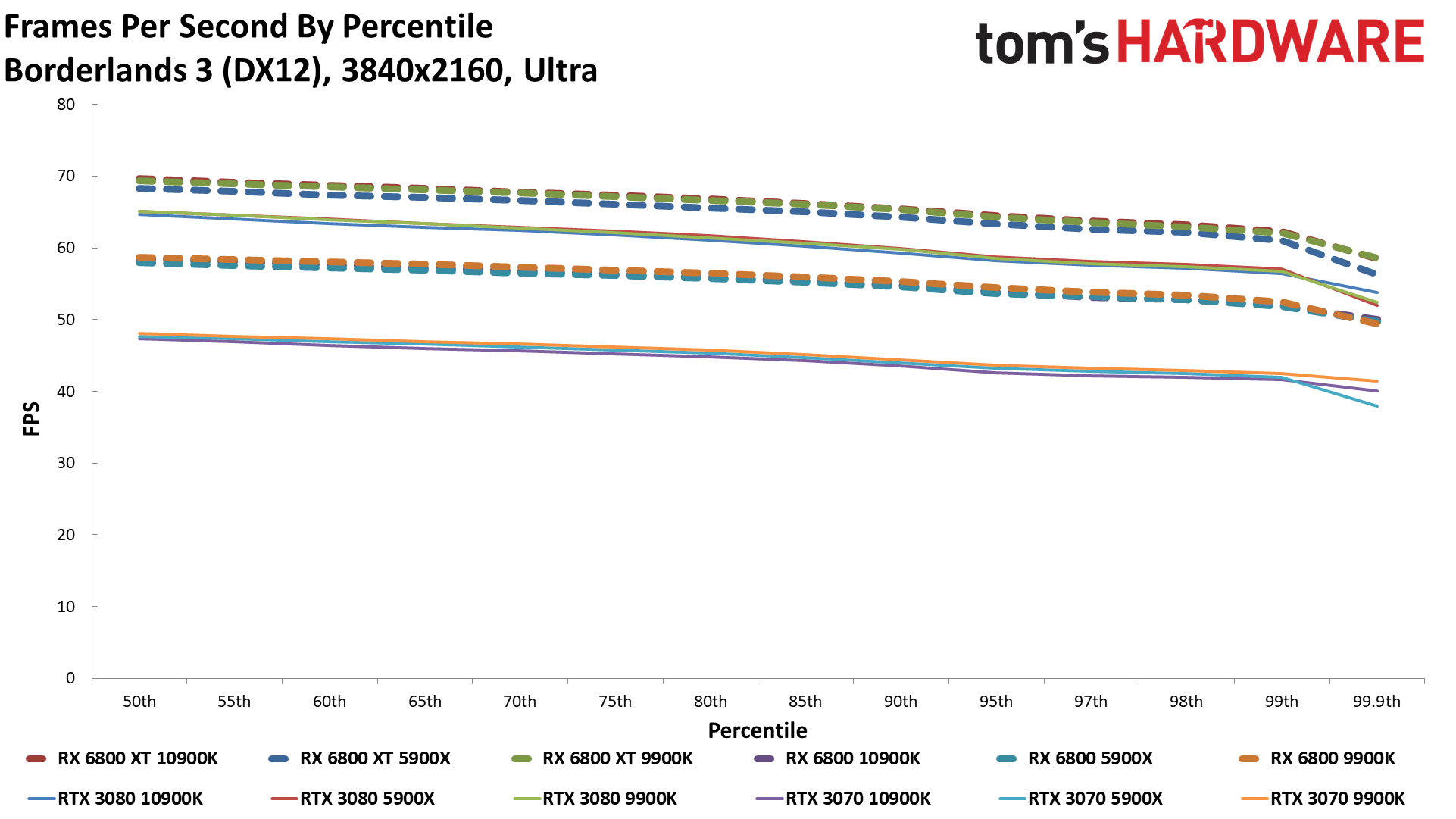

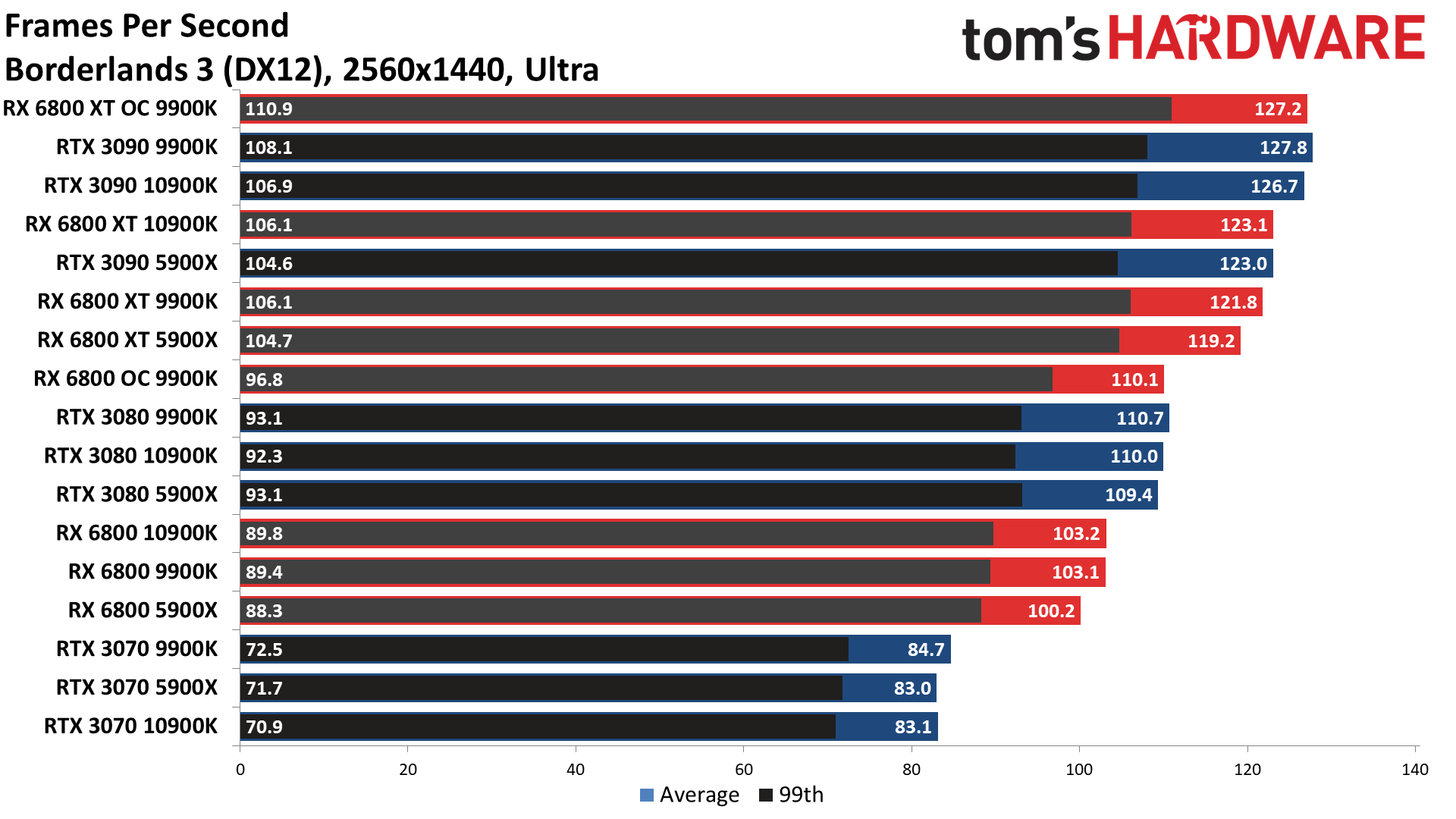

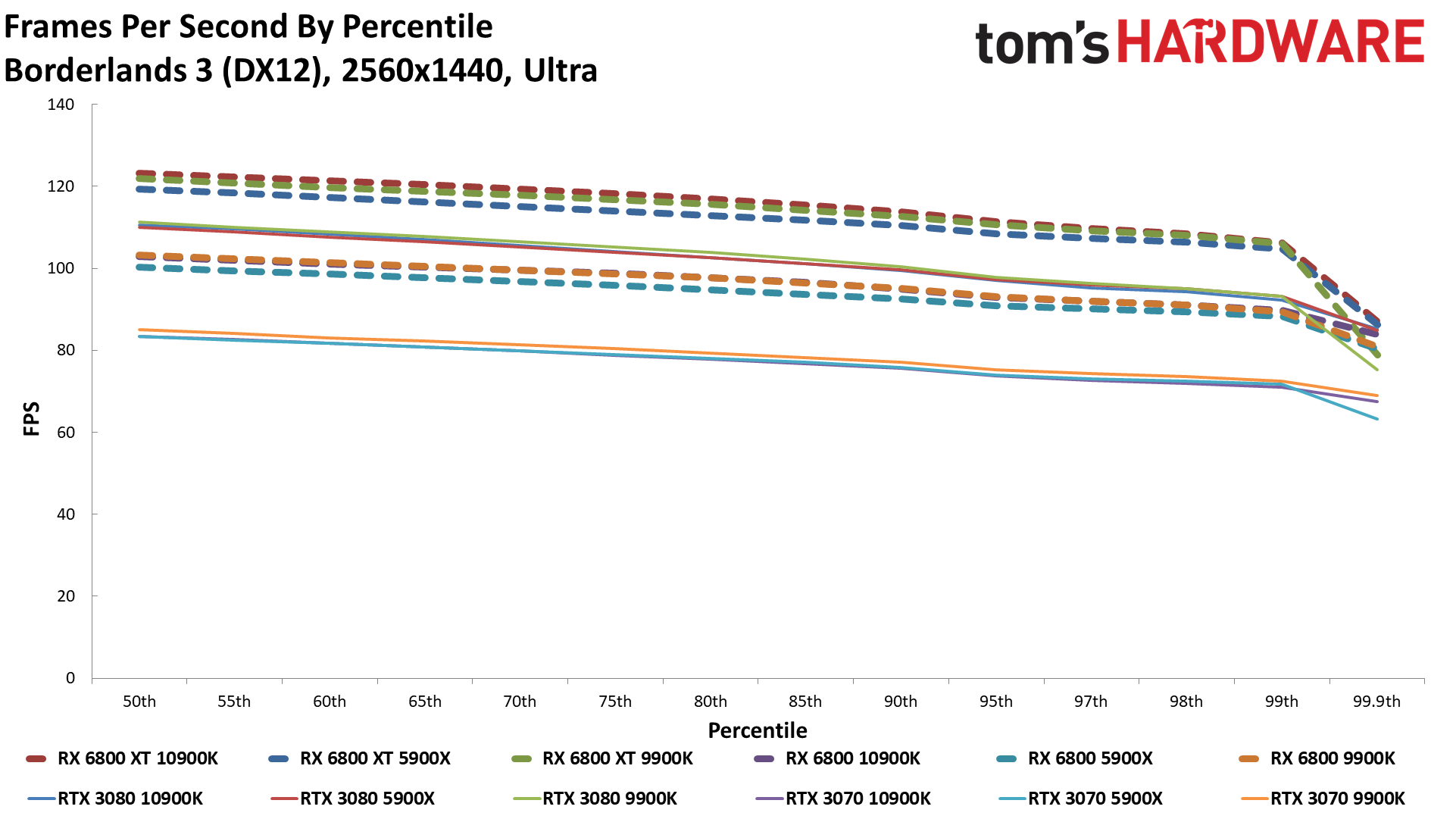

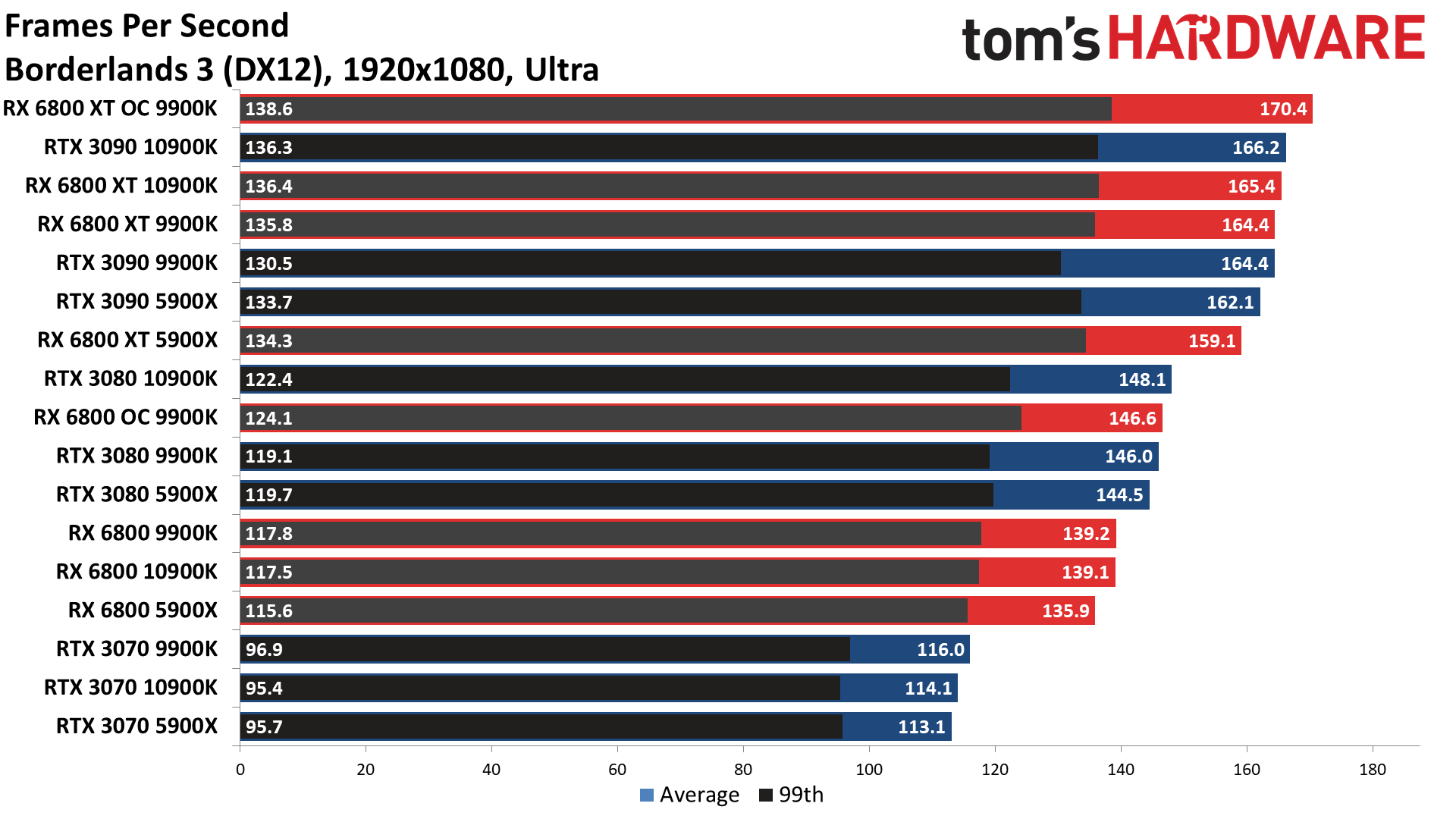

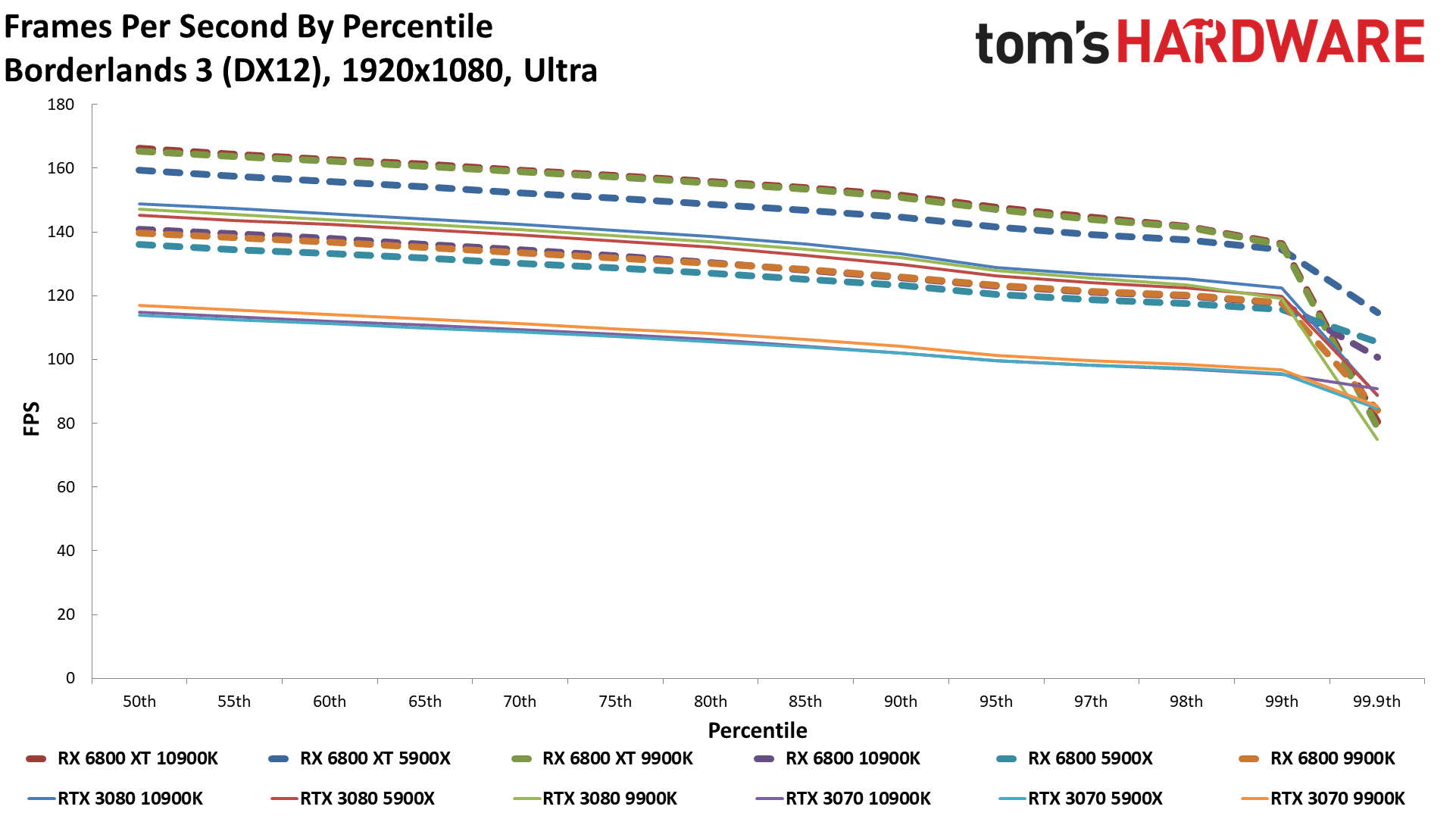

Borderlands 3

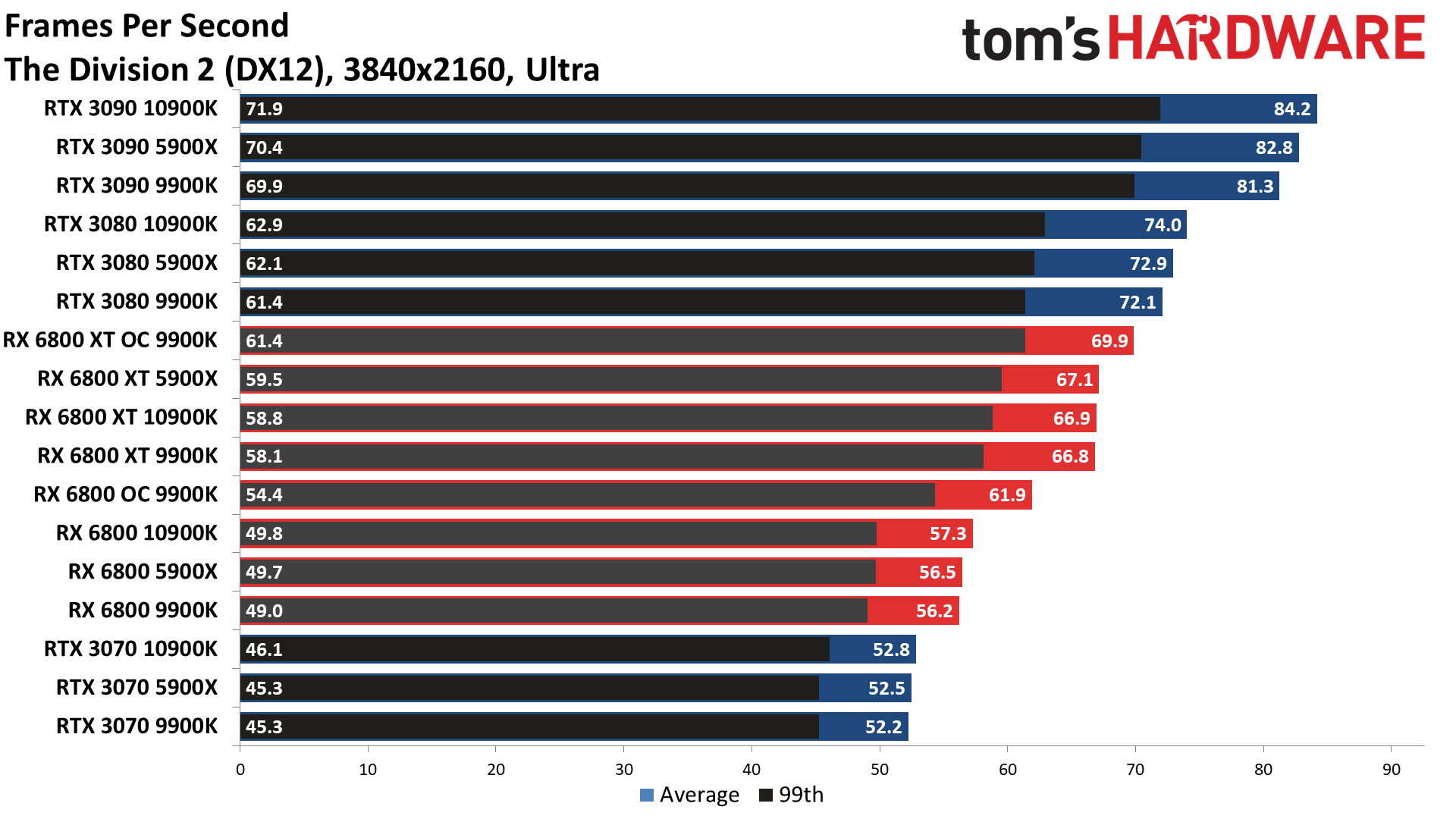

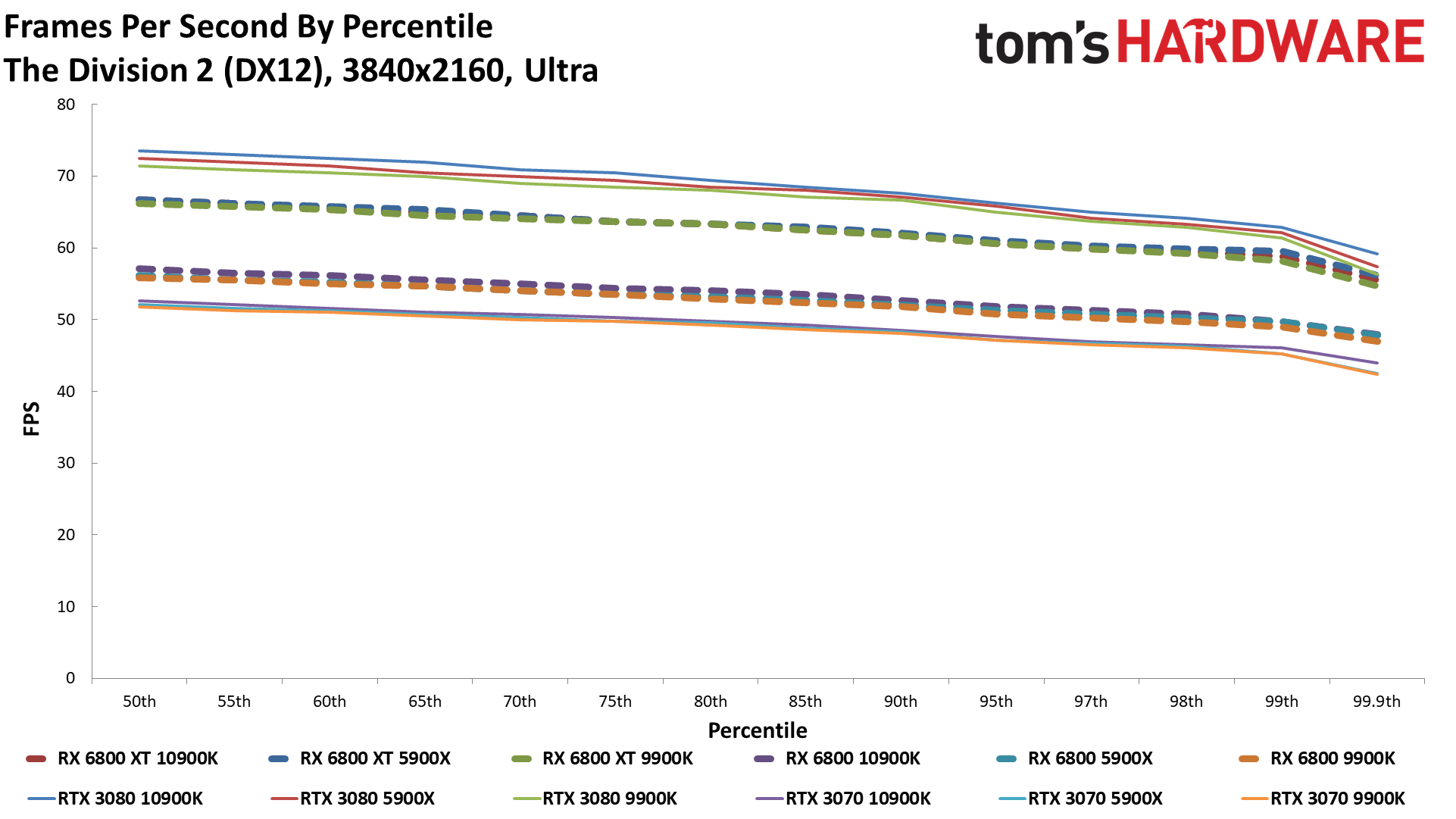

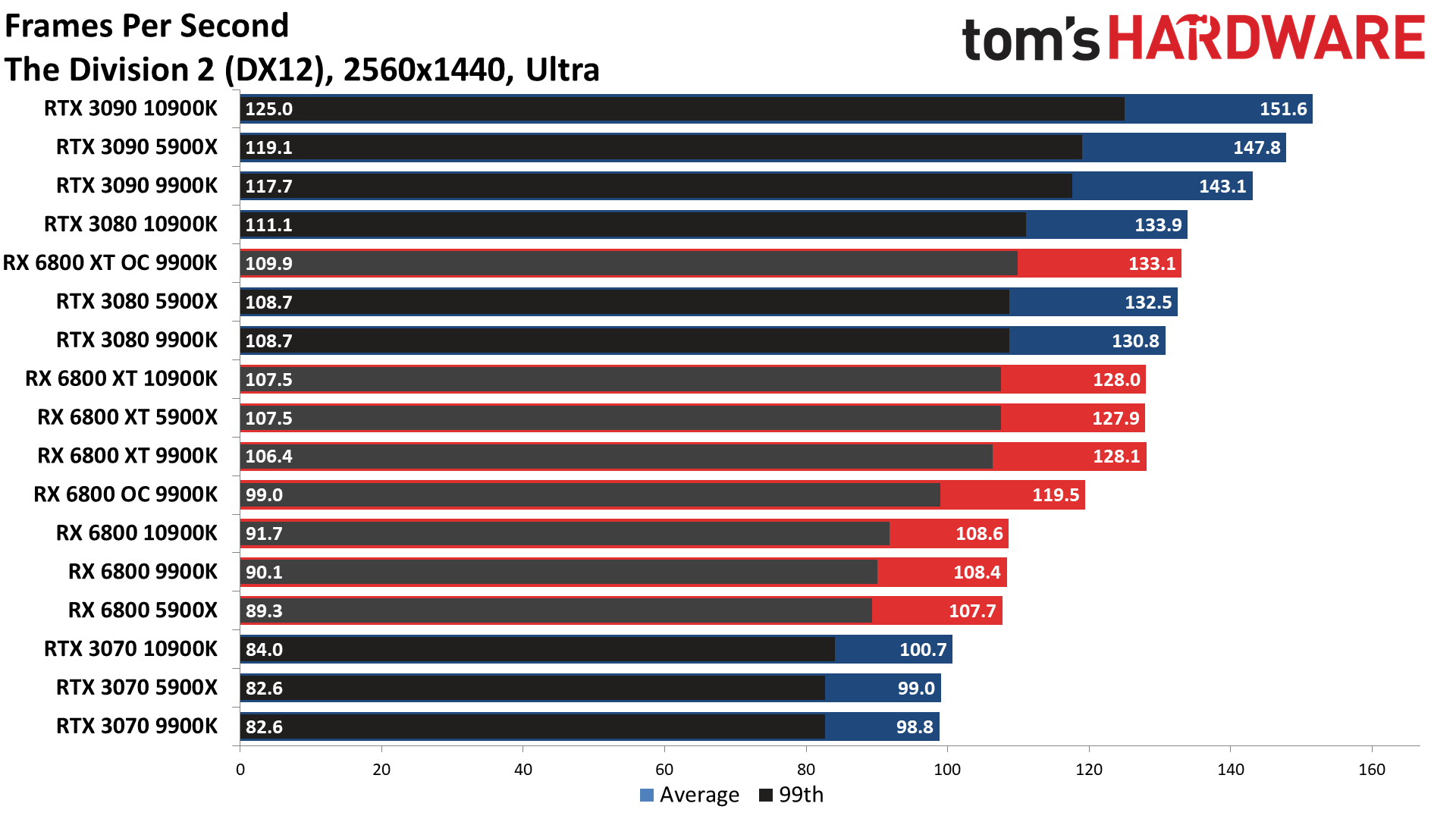

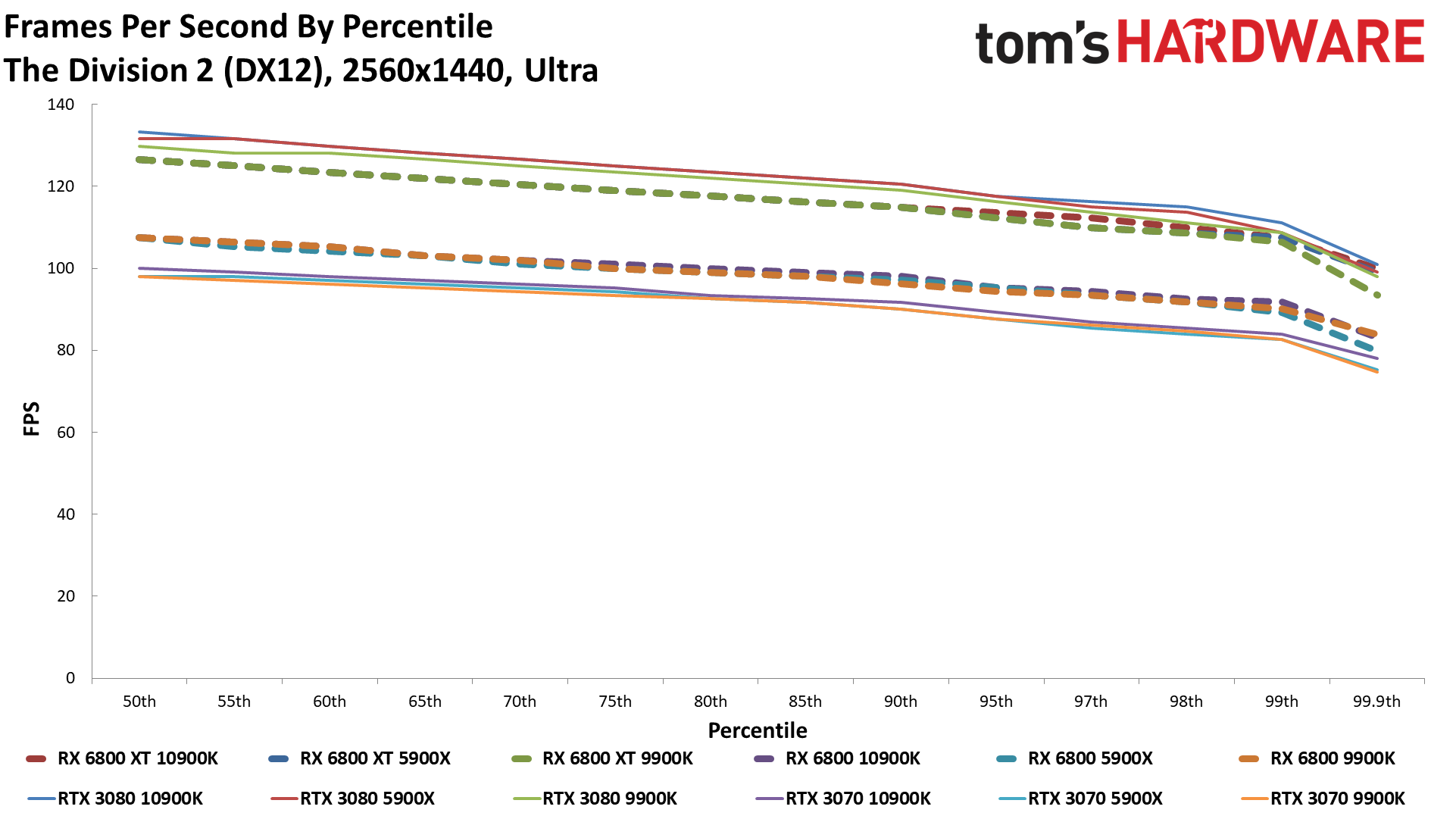

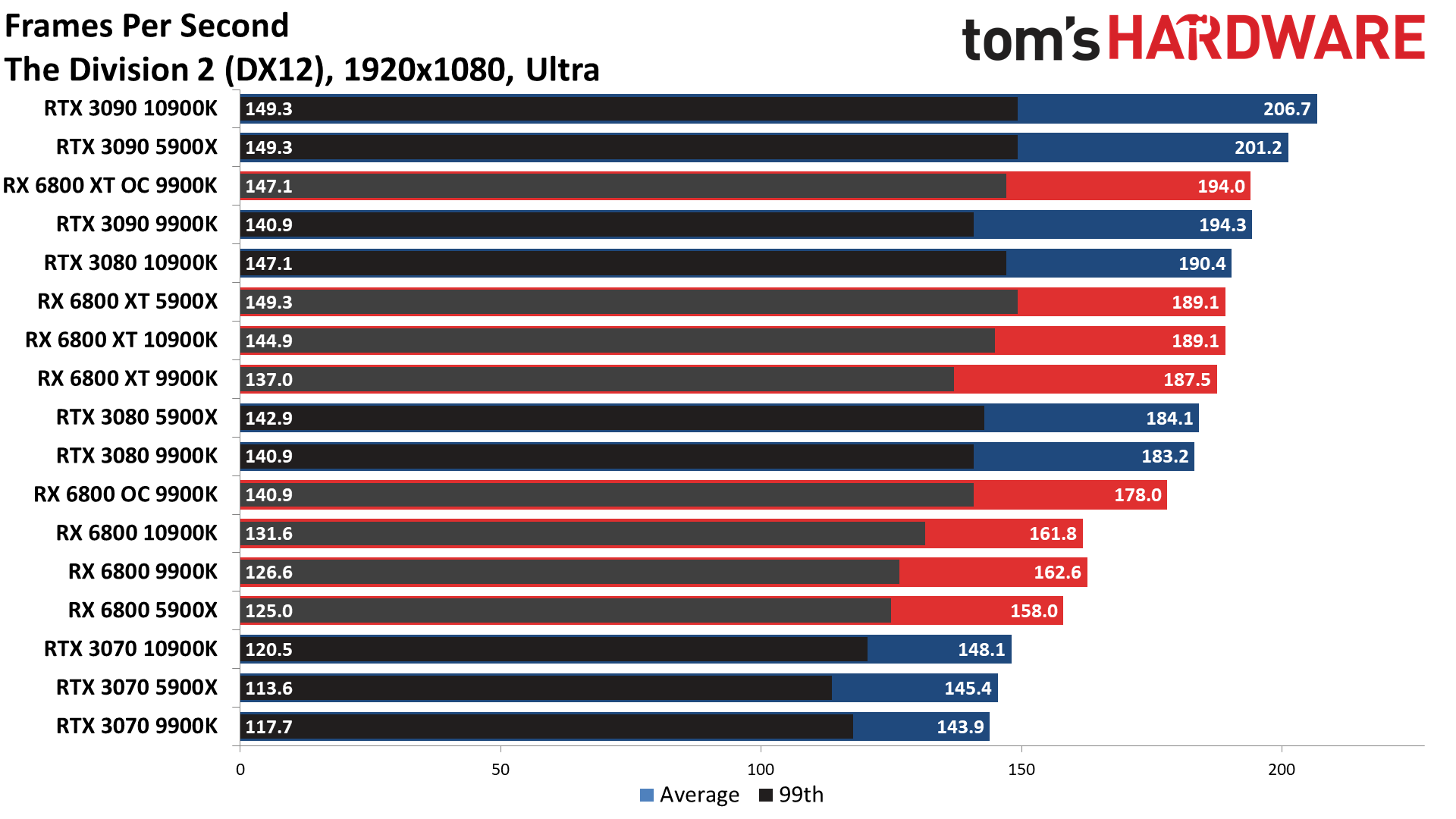

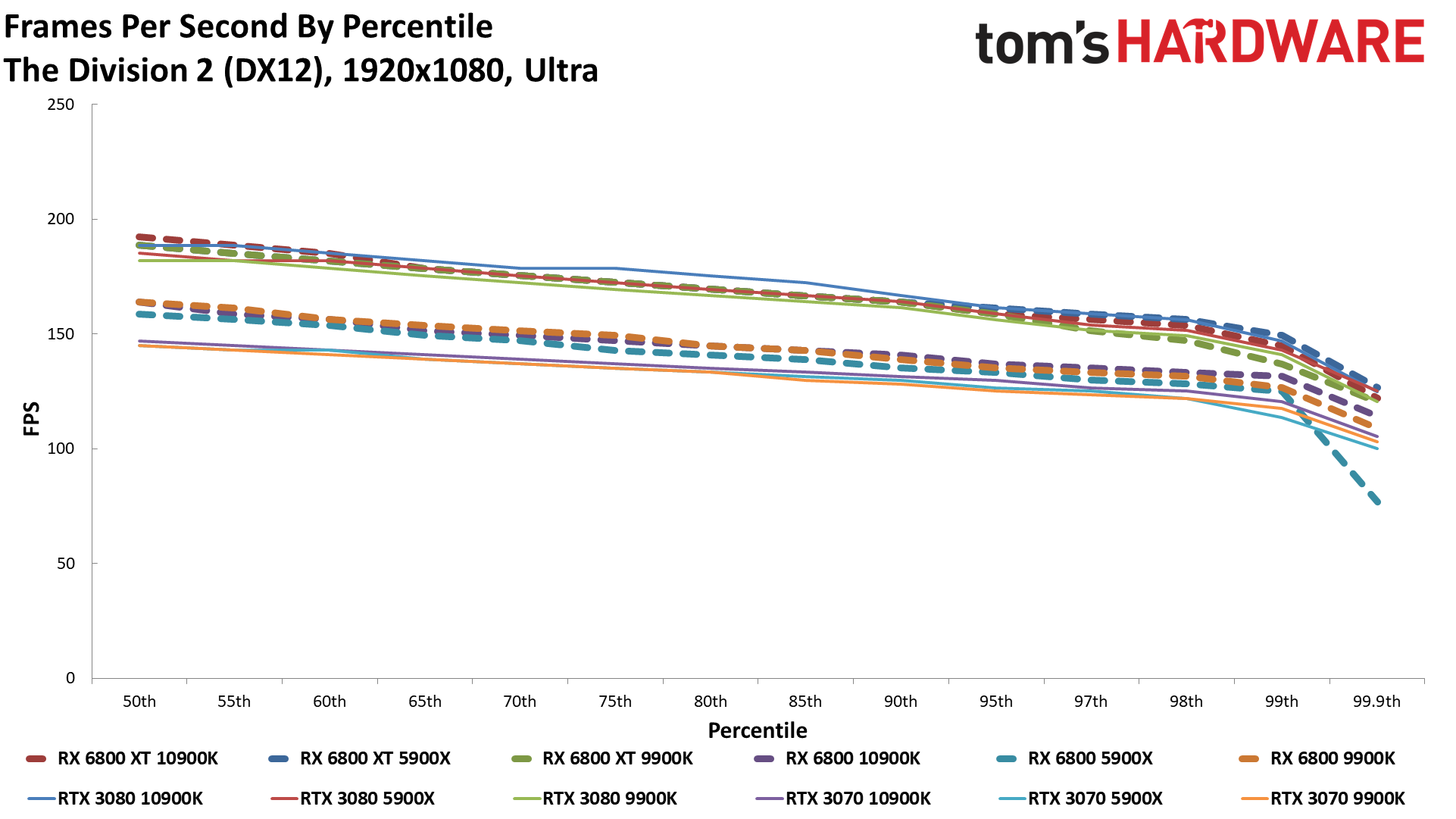

The Division 2

Dirt 5

Far Cry 5

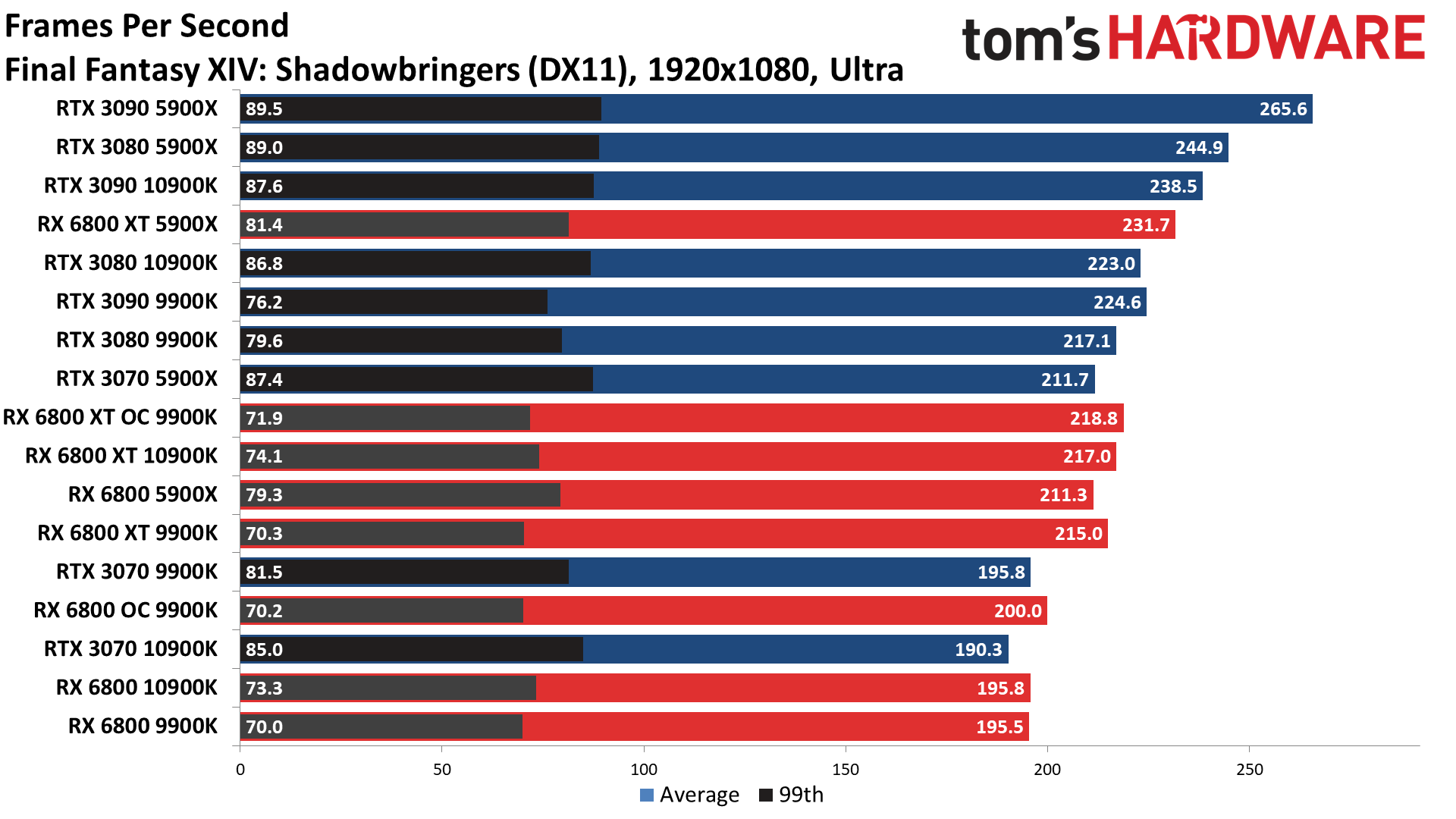

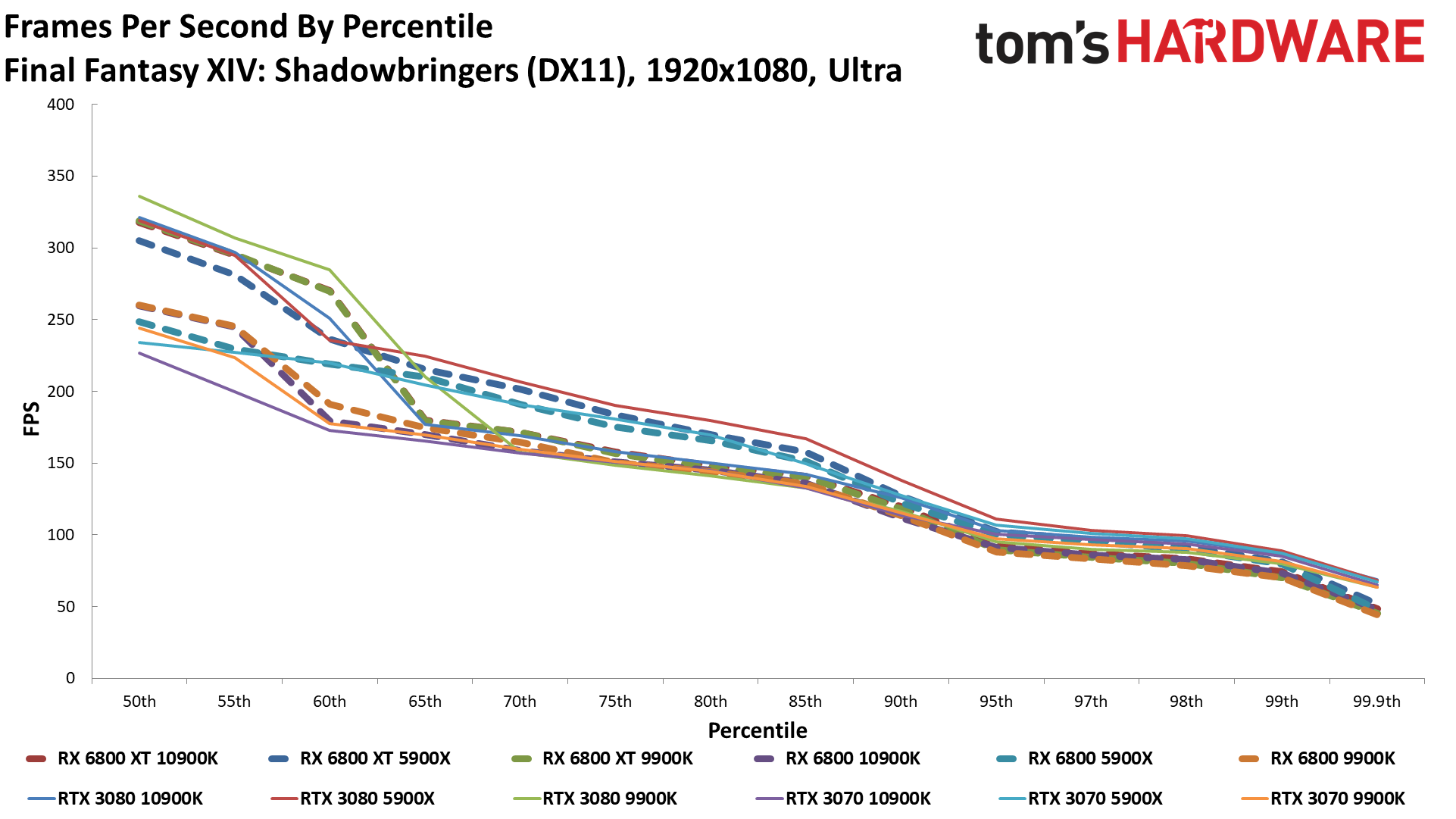

Final Fantasy XIV

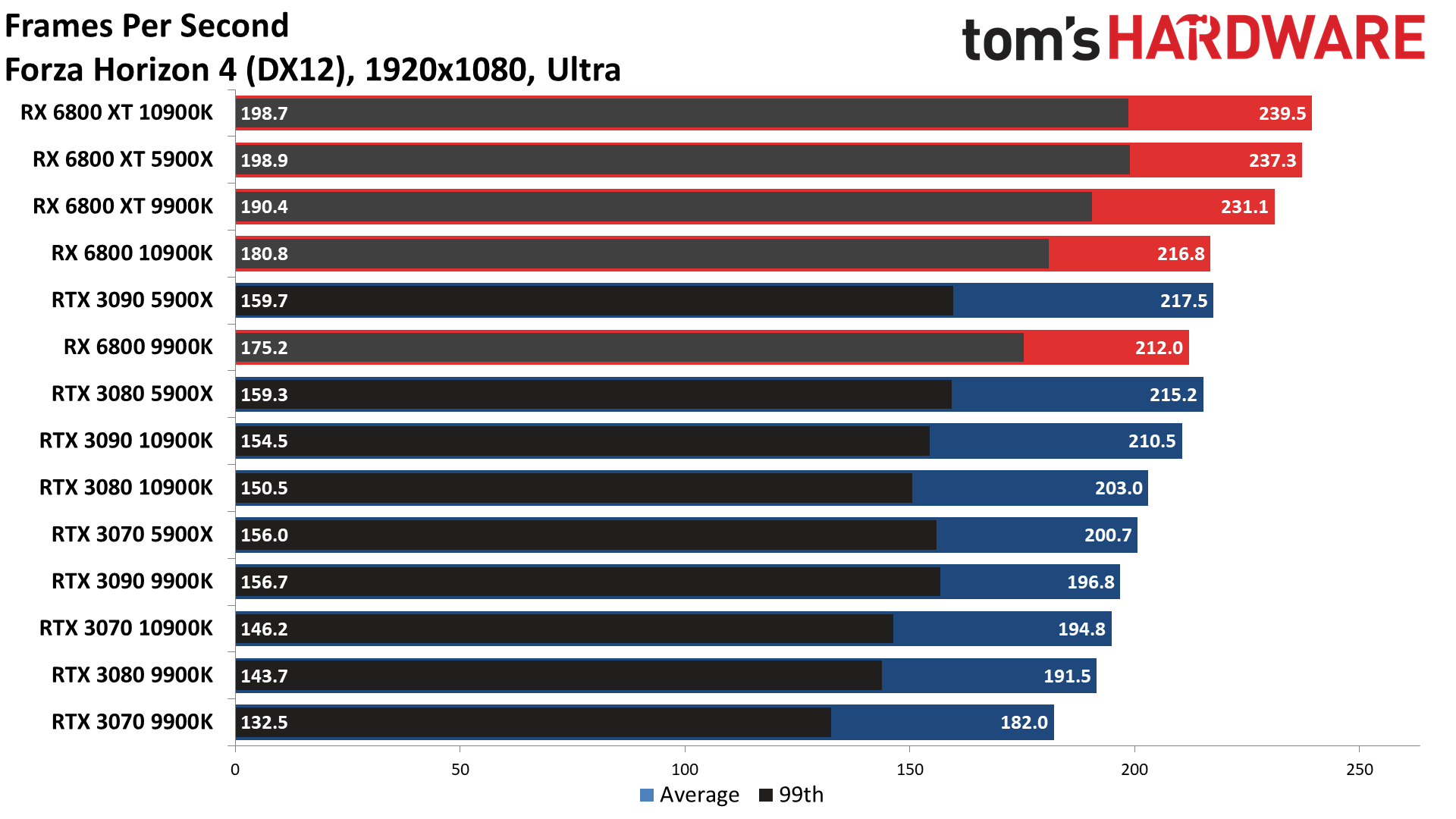

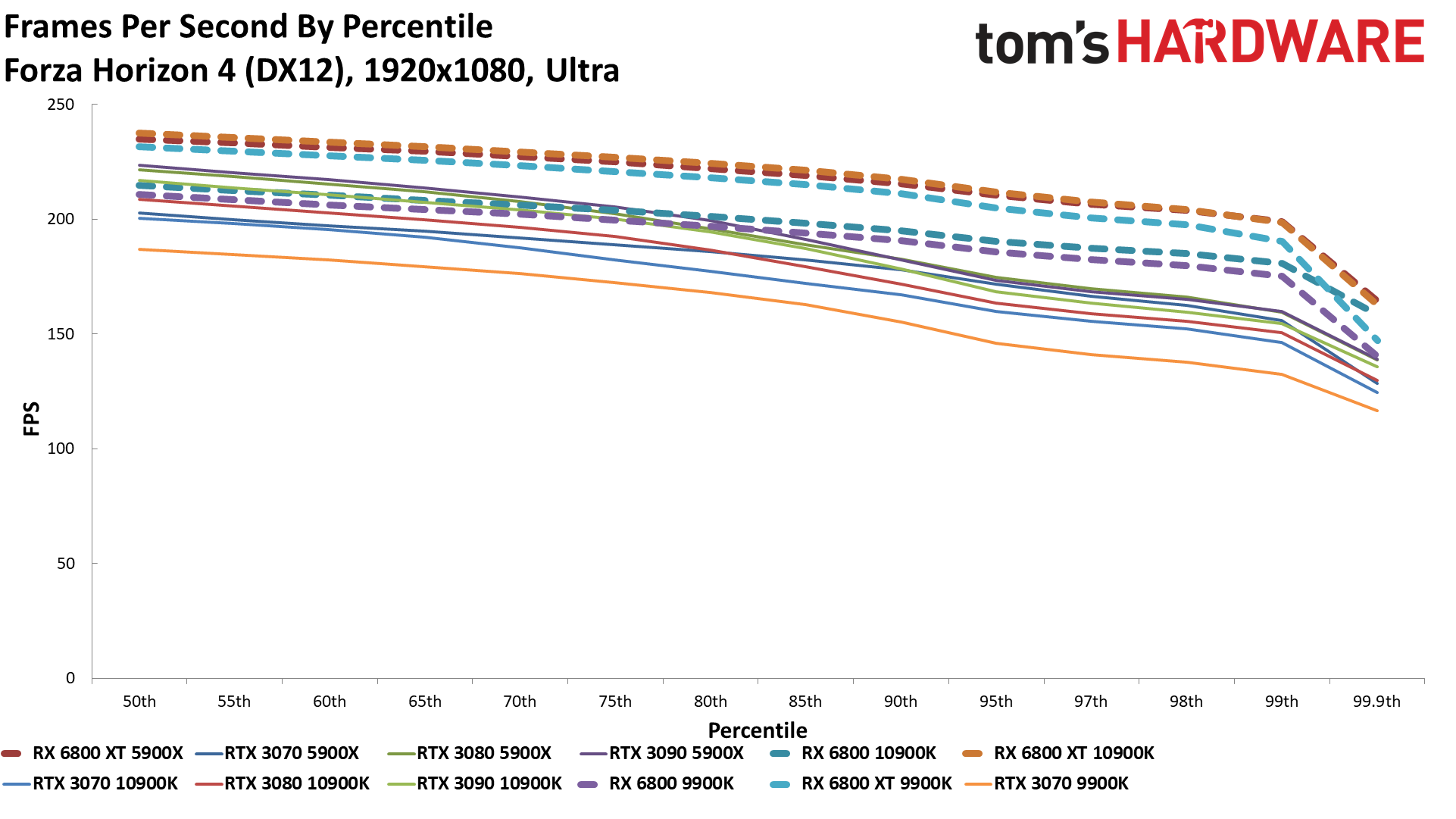

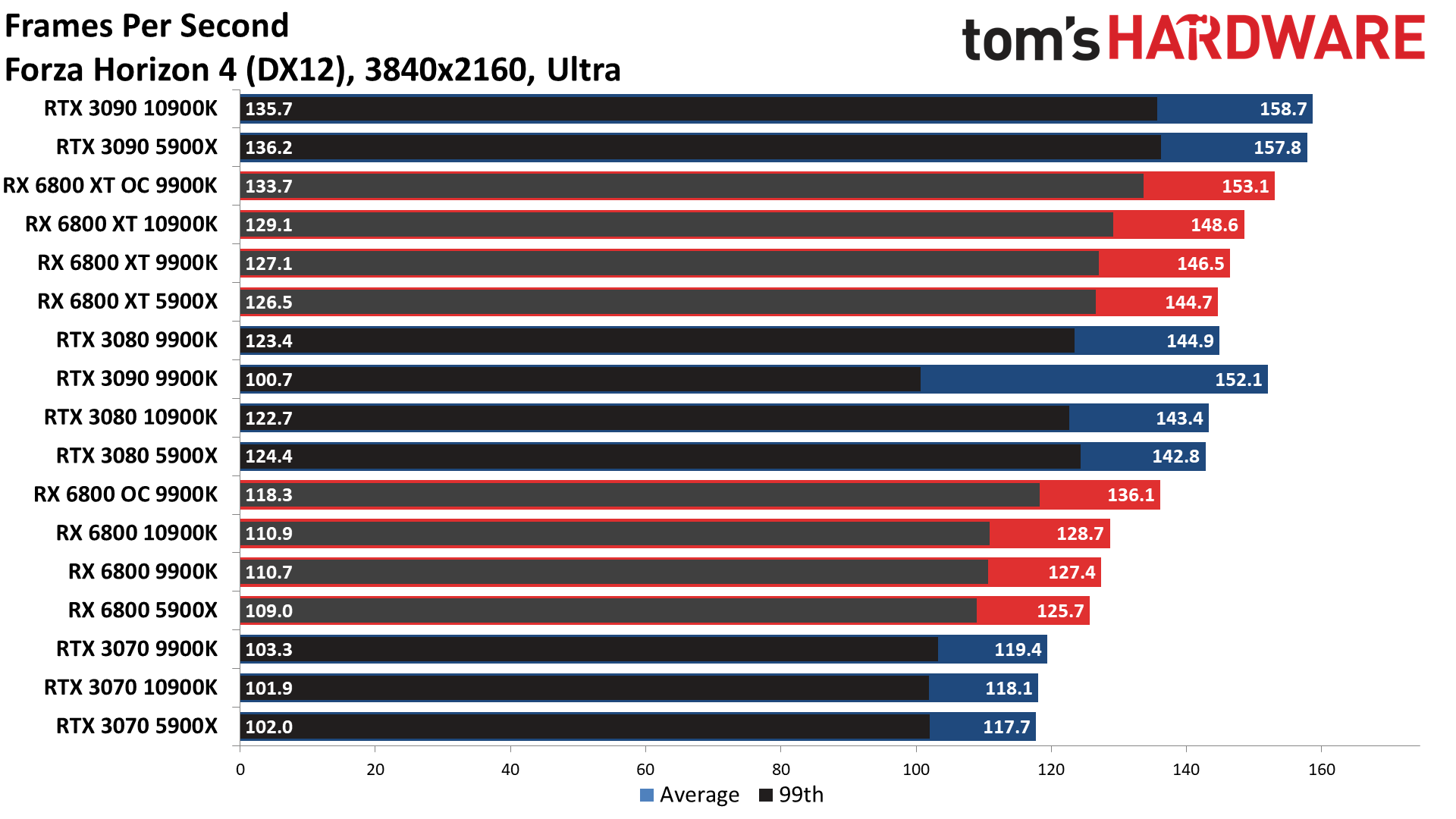

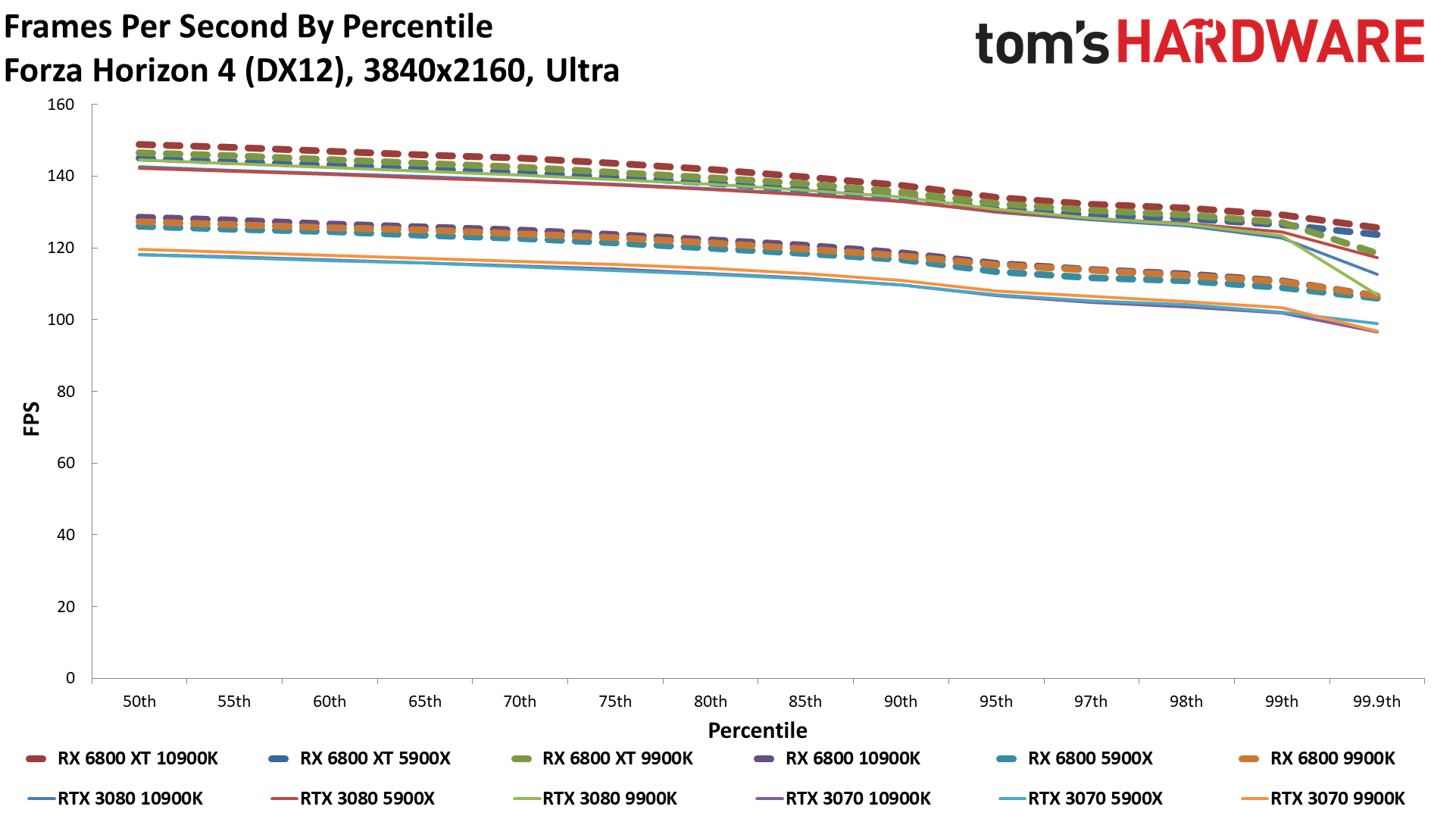

Forza Horizon 4

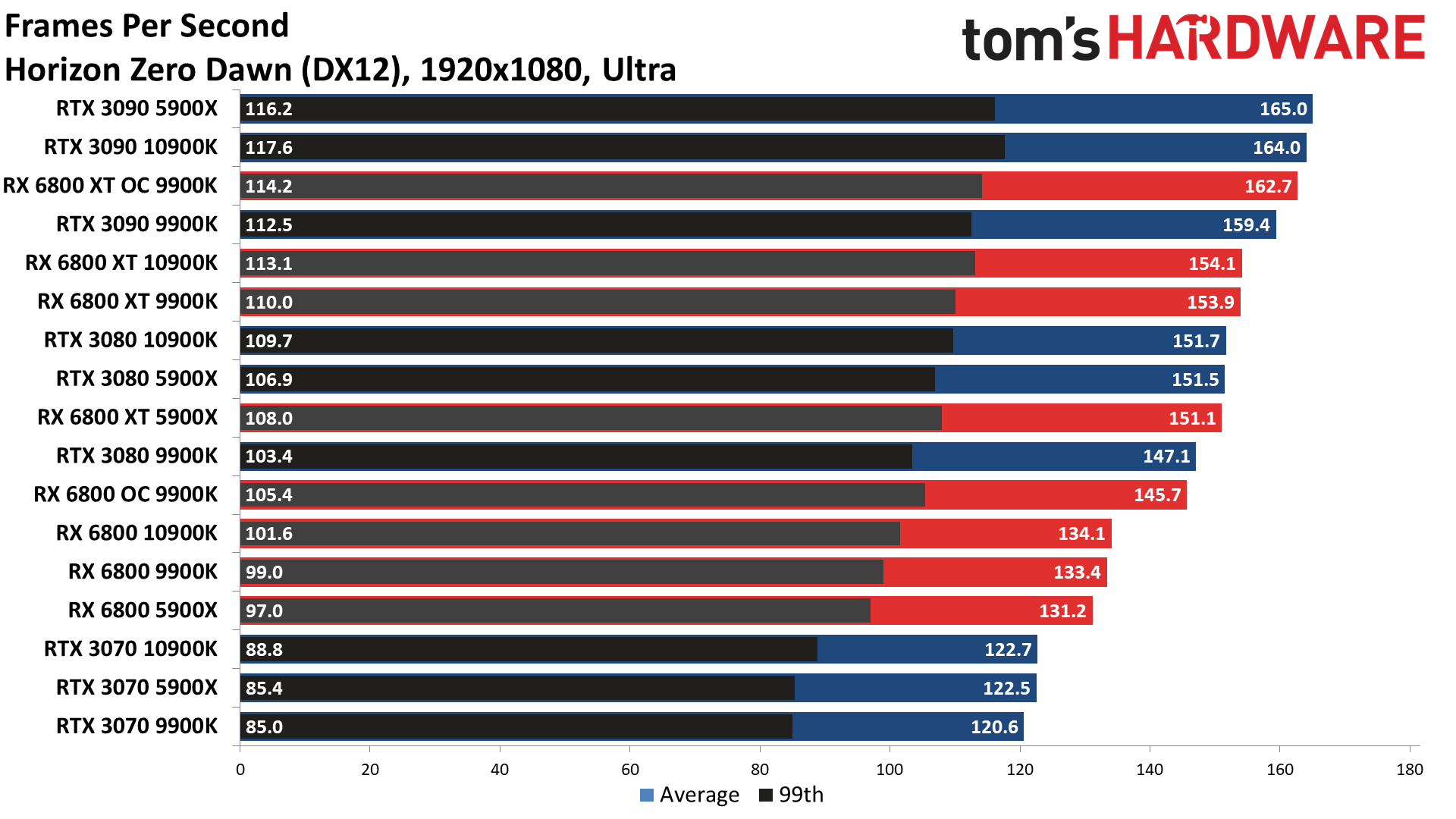

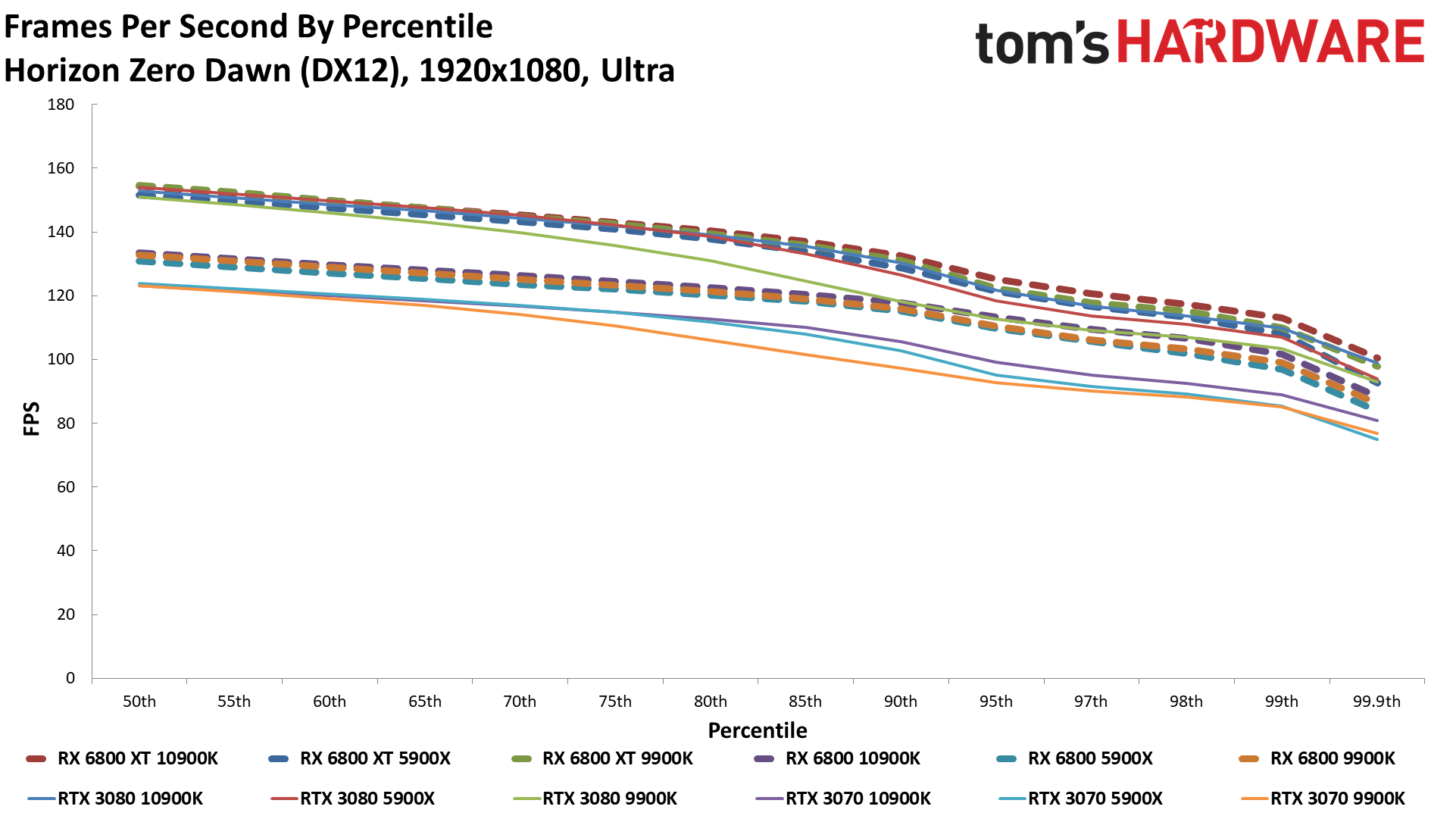

Horizon Zero Dawn

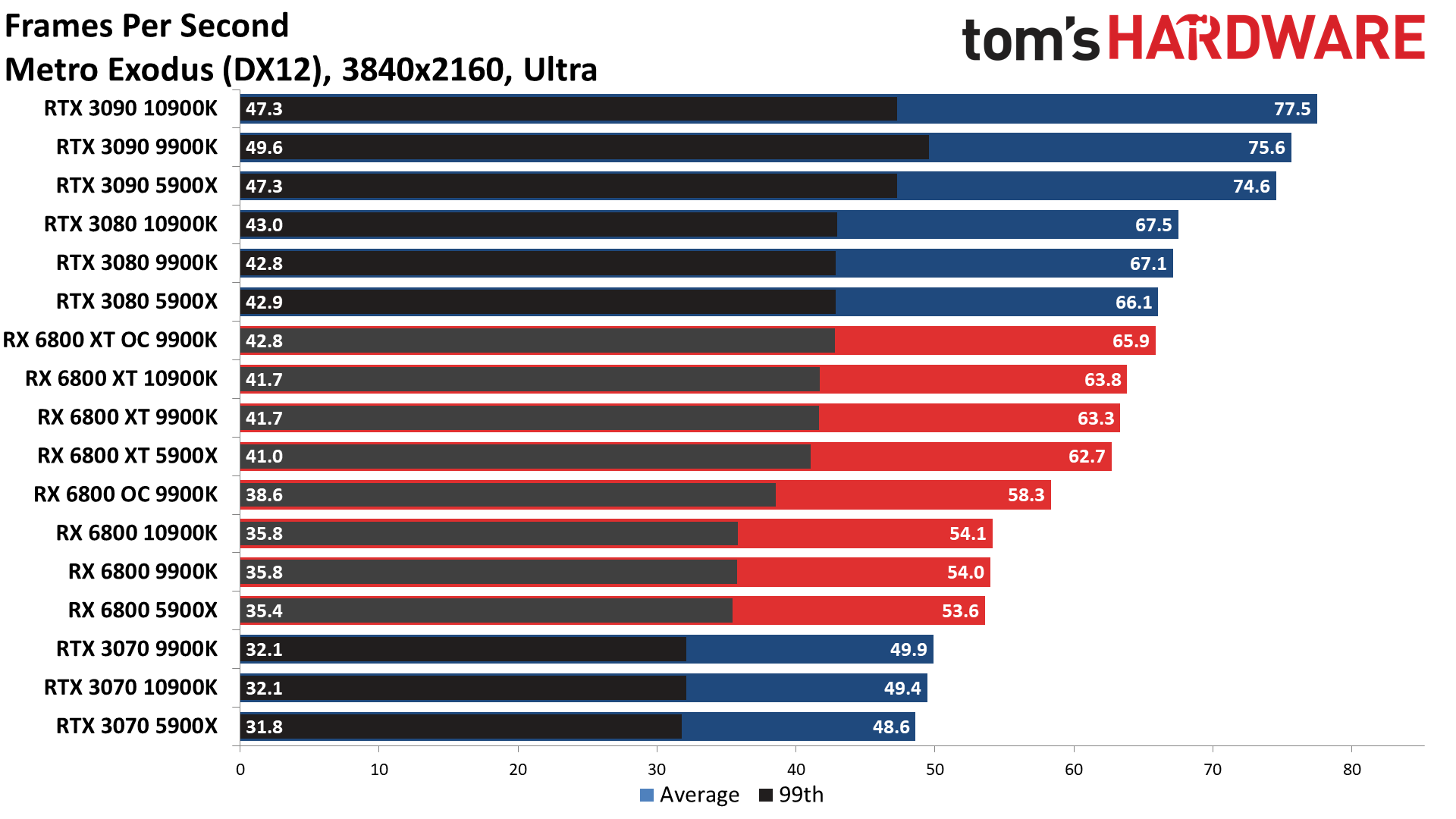

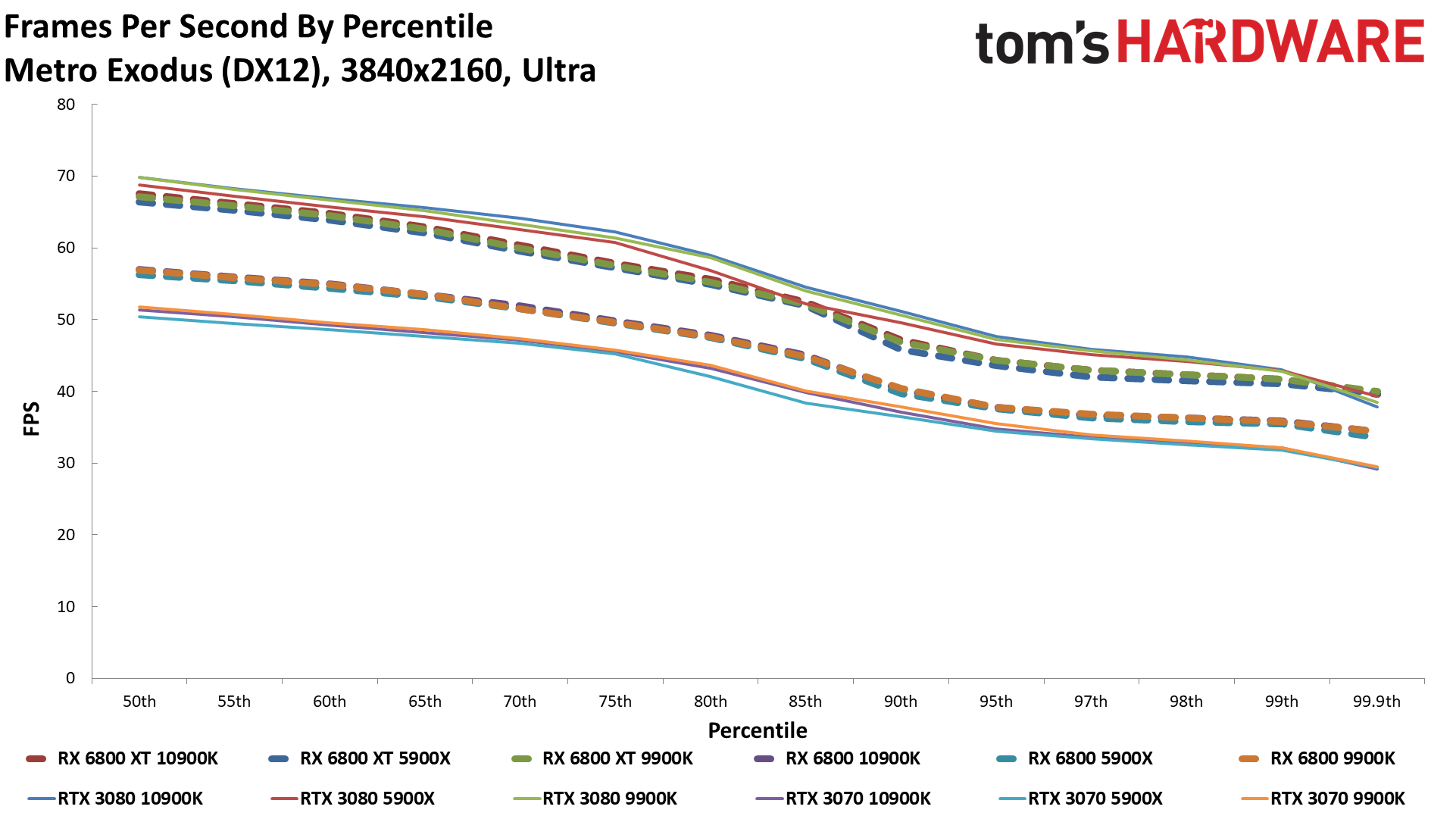

Metro Exodus

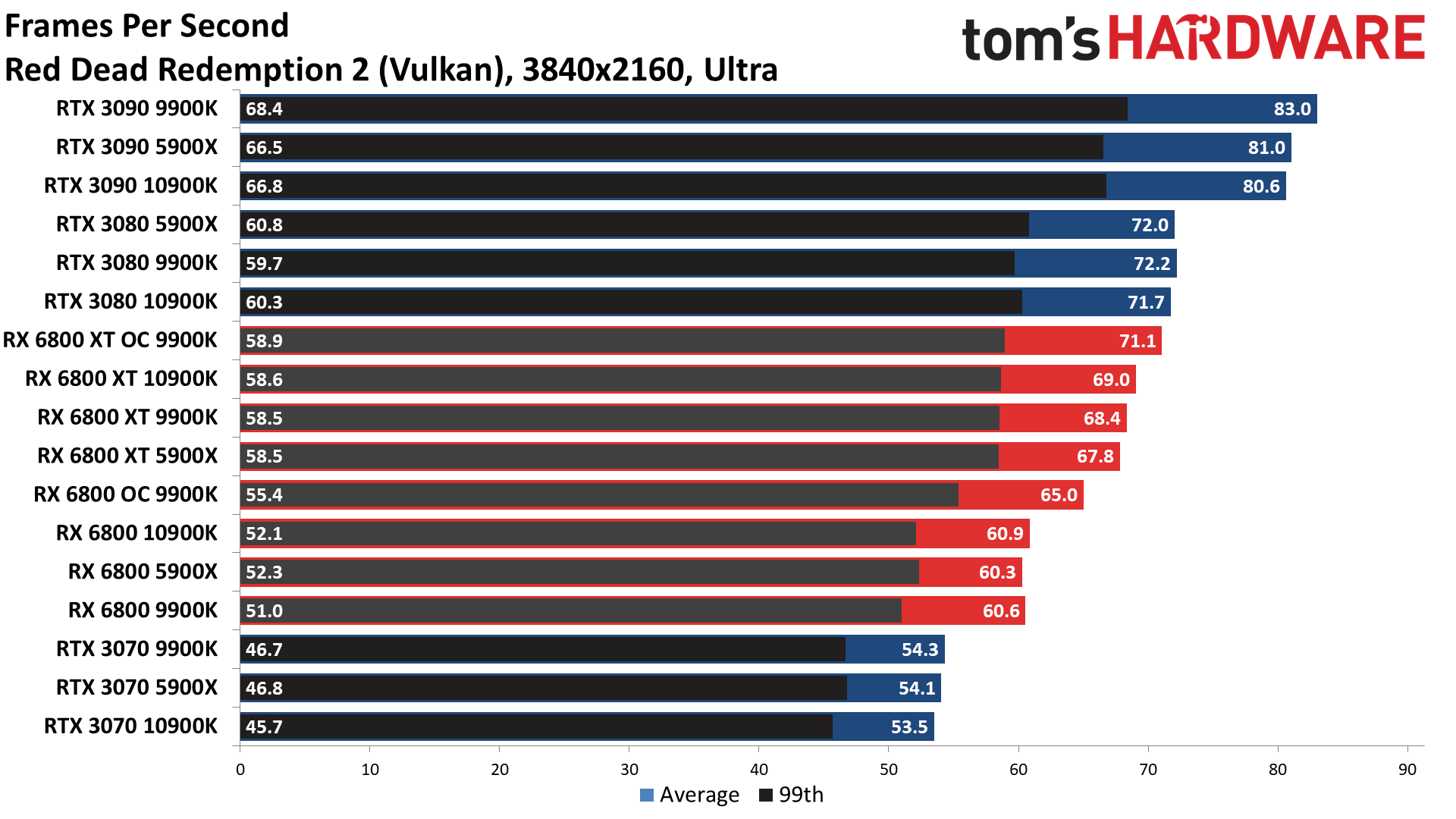

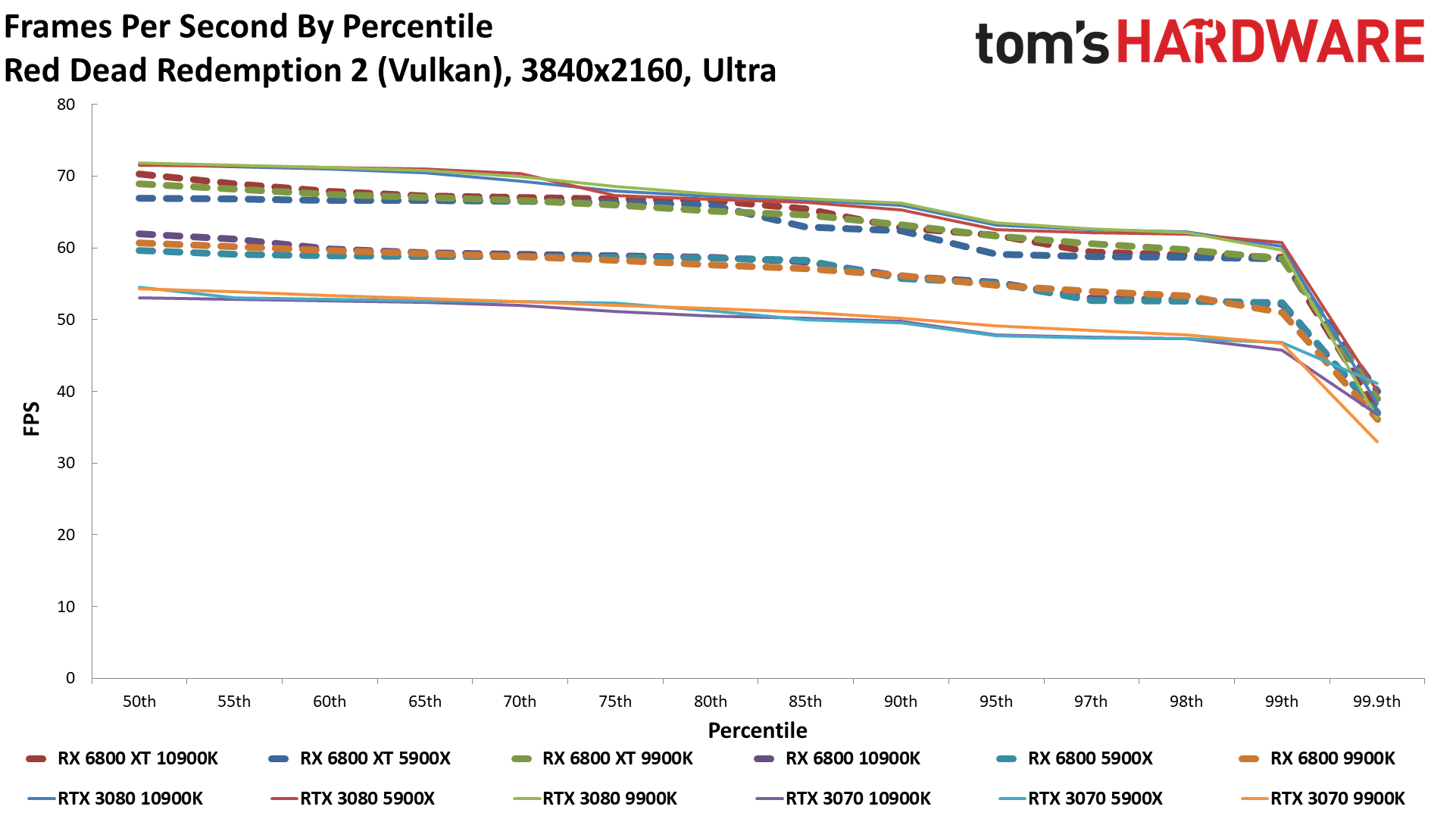

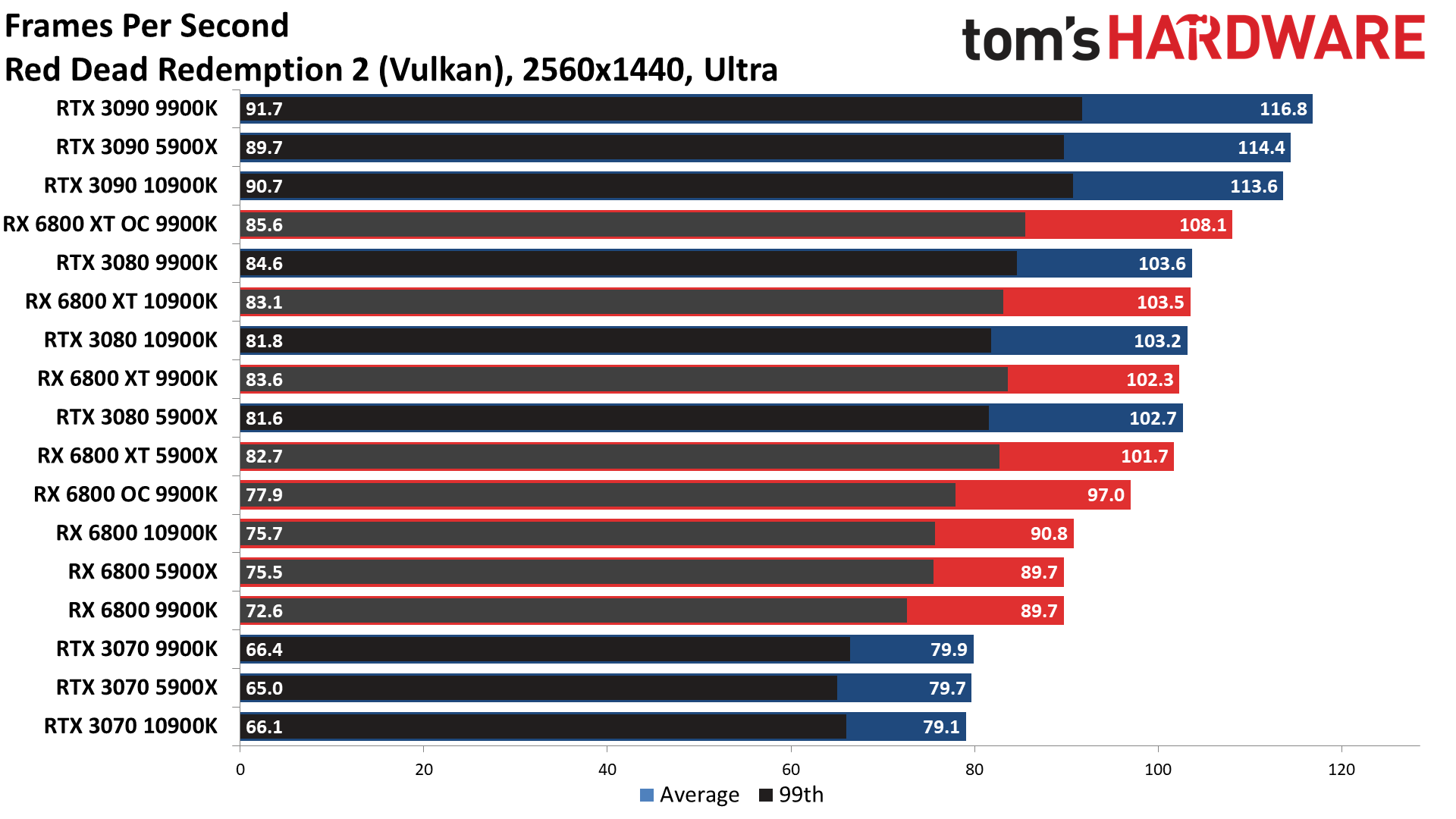

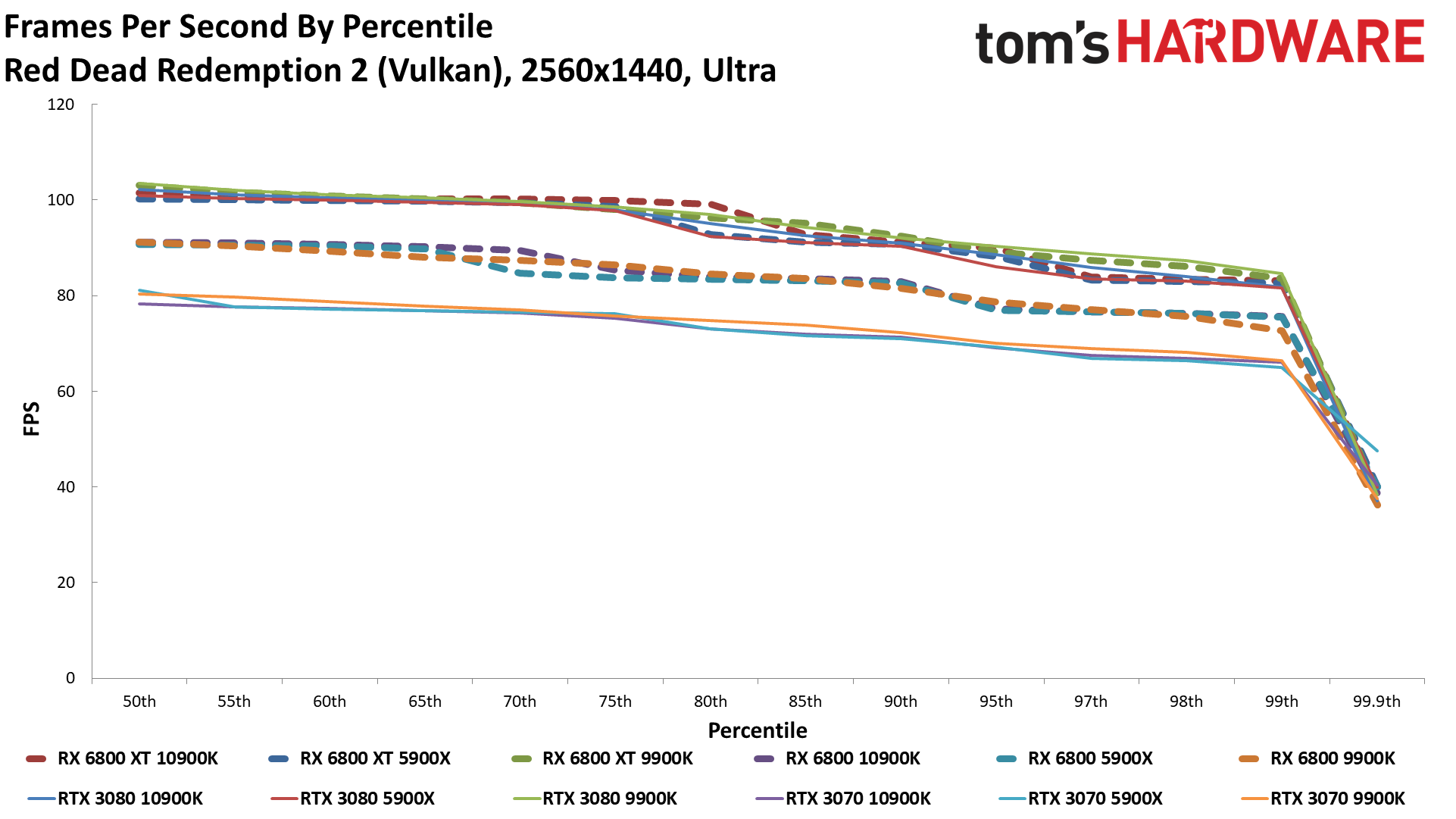

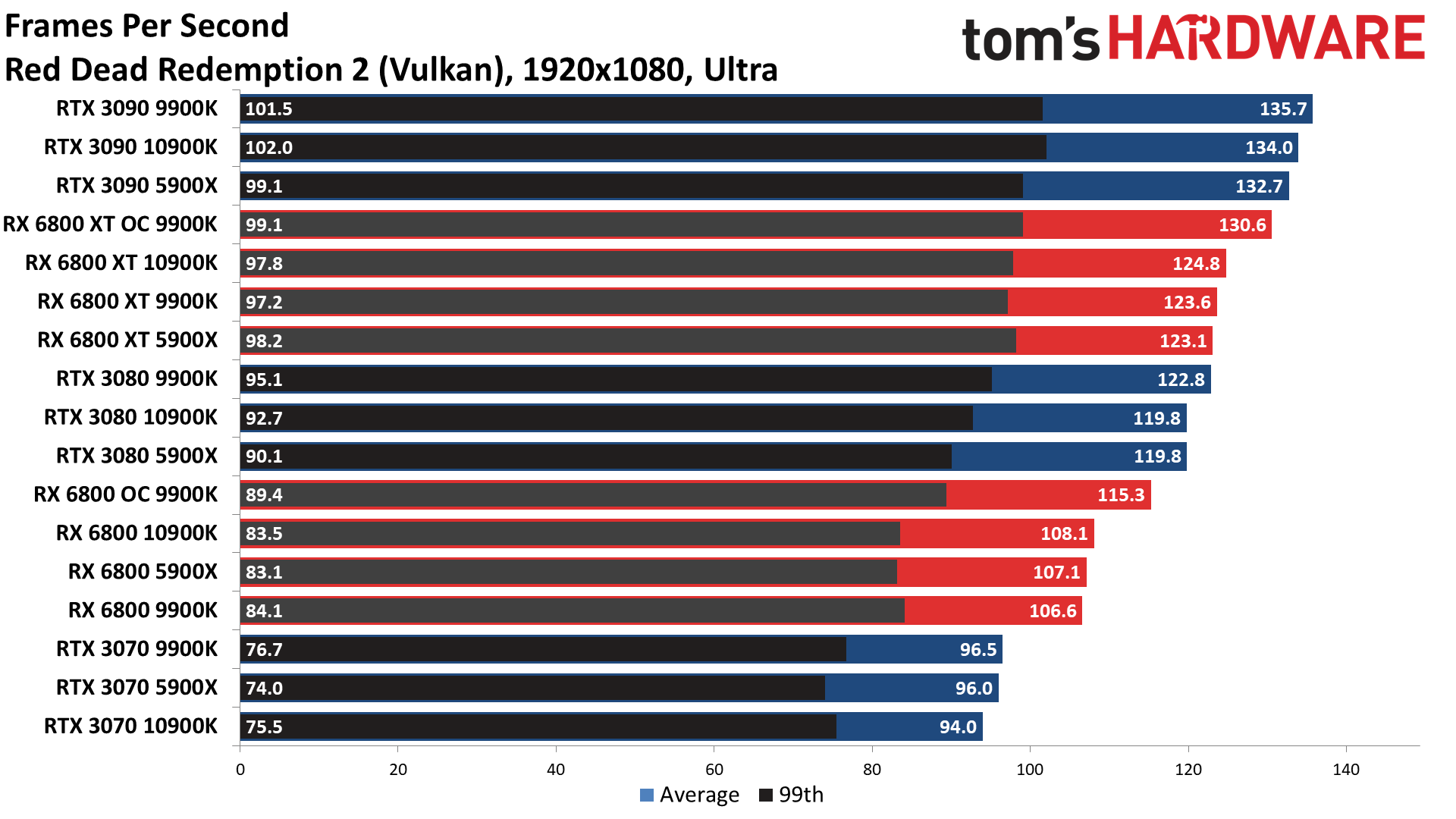

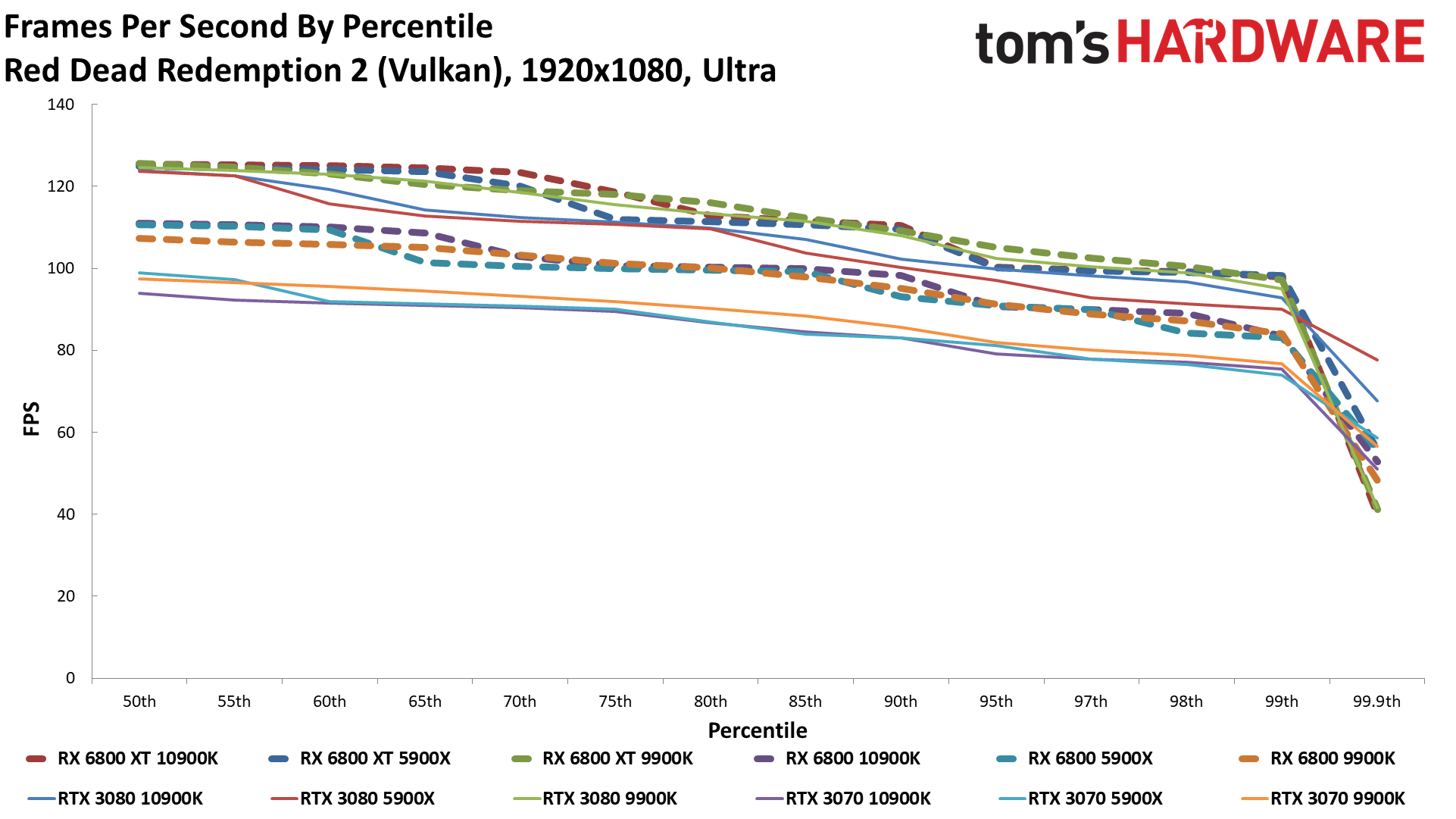

Red Dead Redemption 2

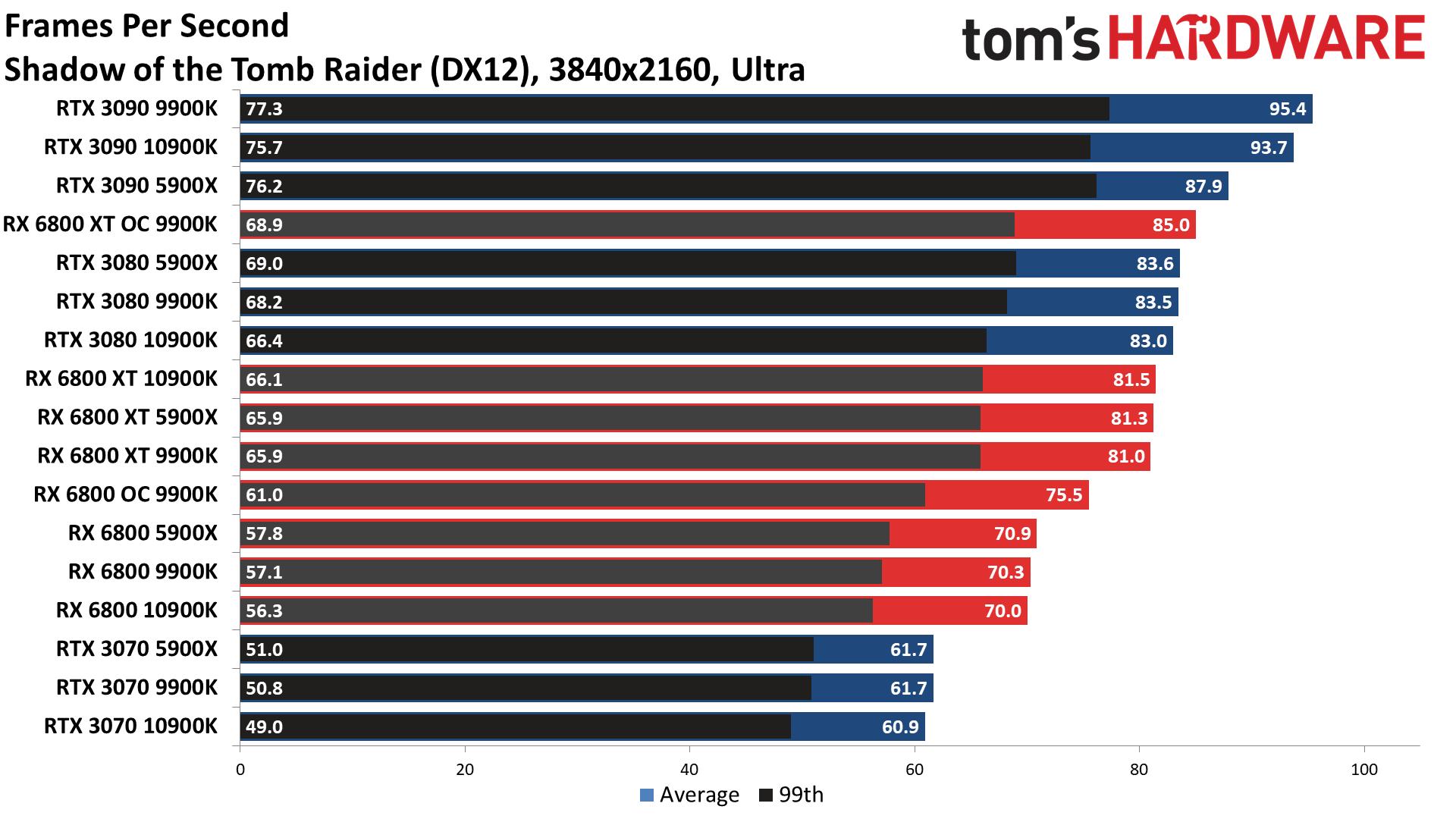

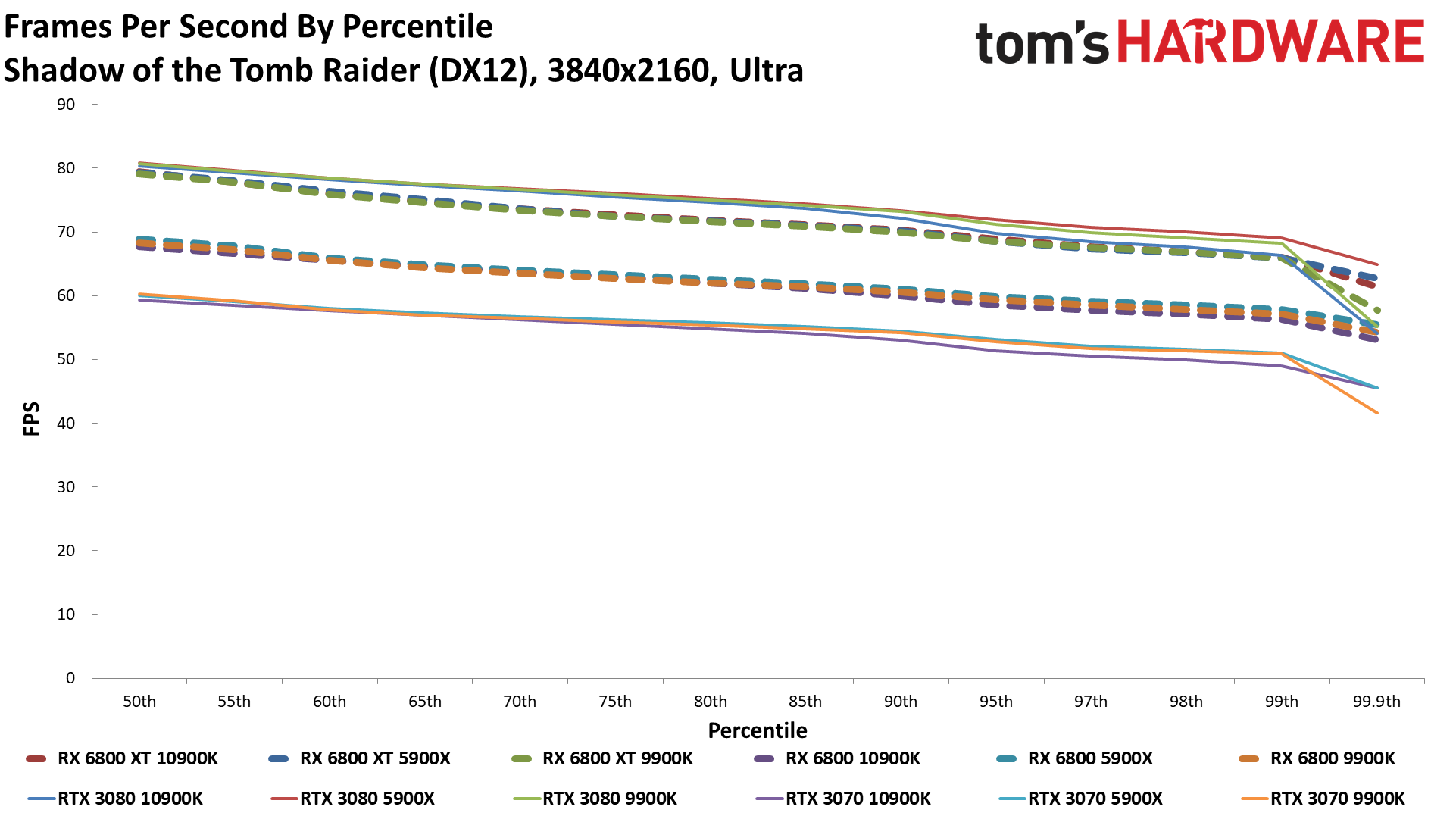

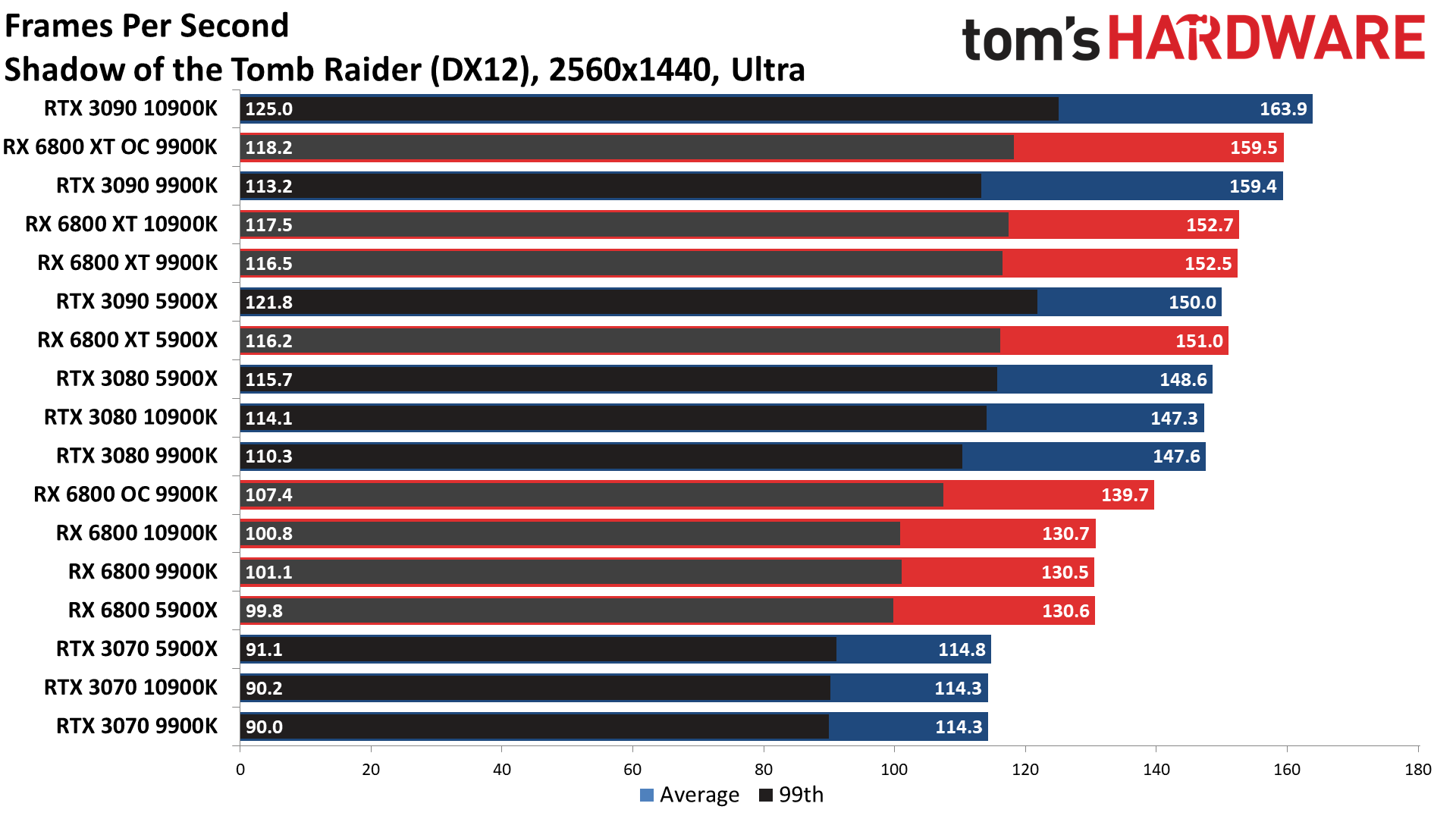

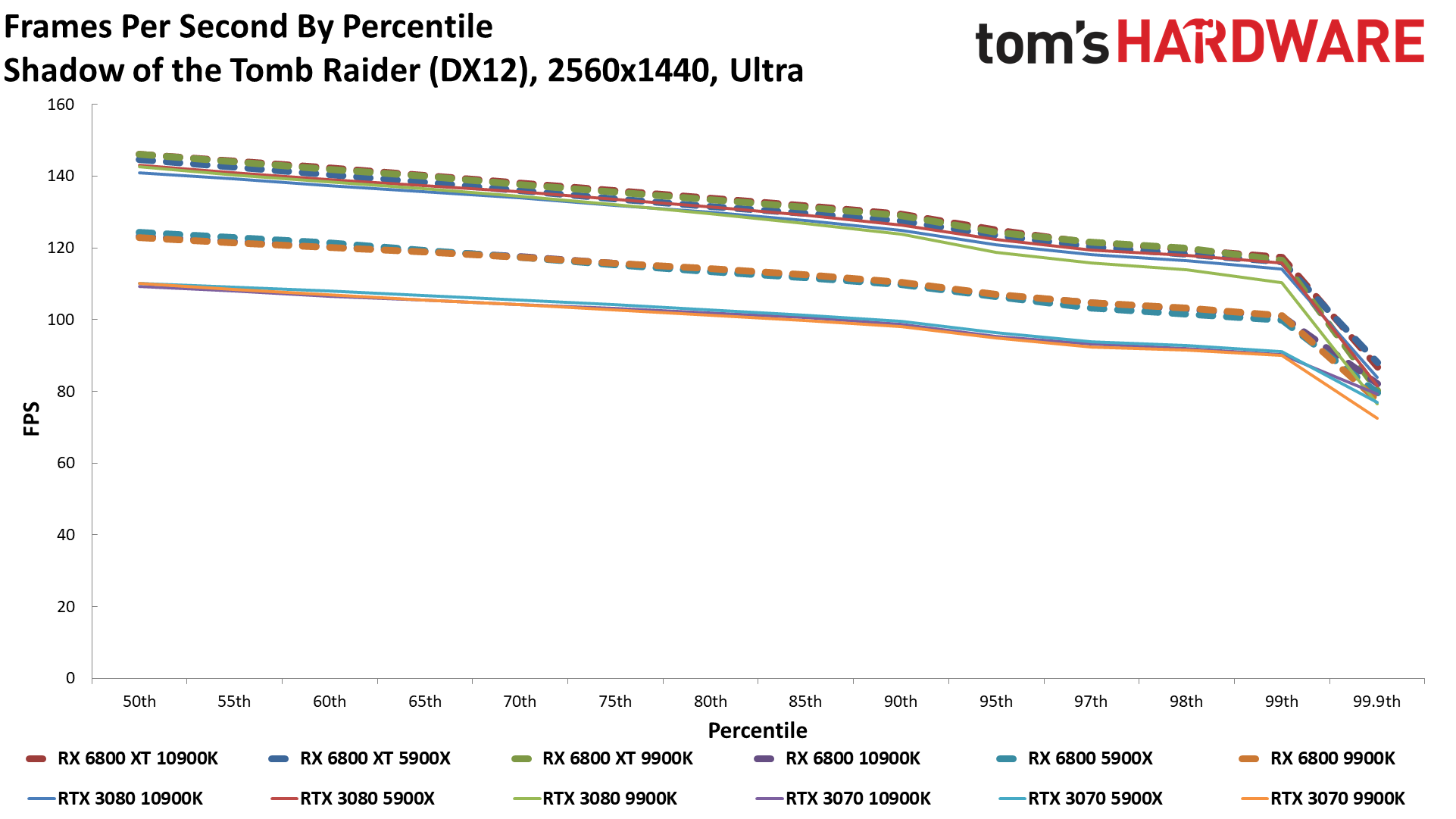

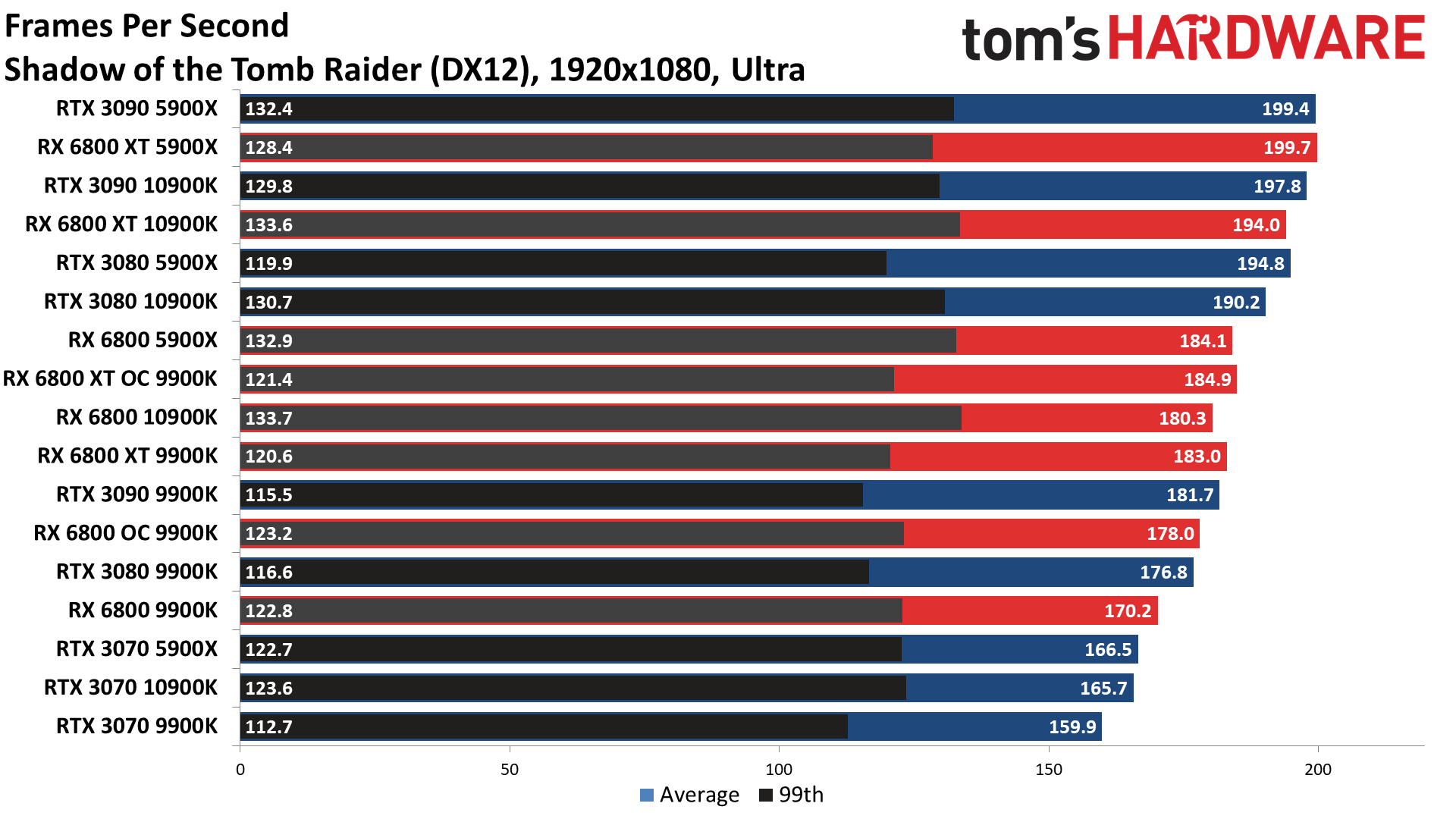

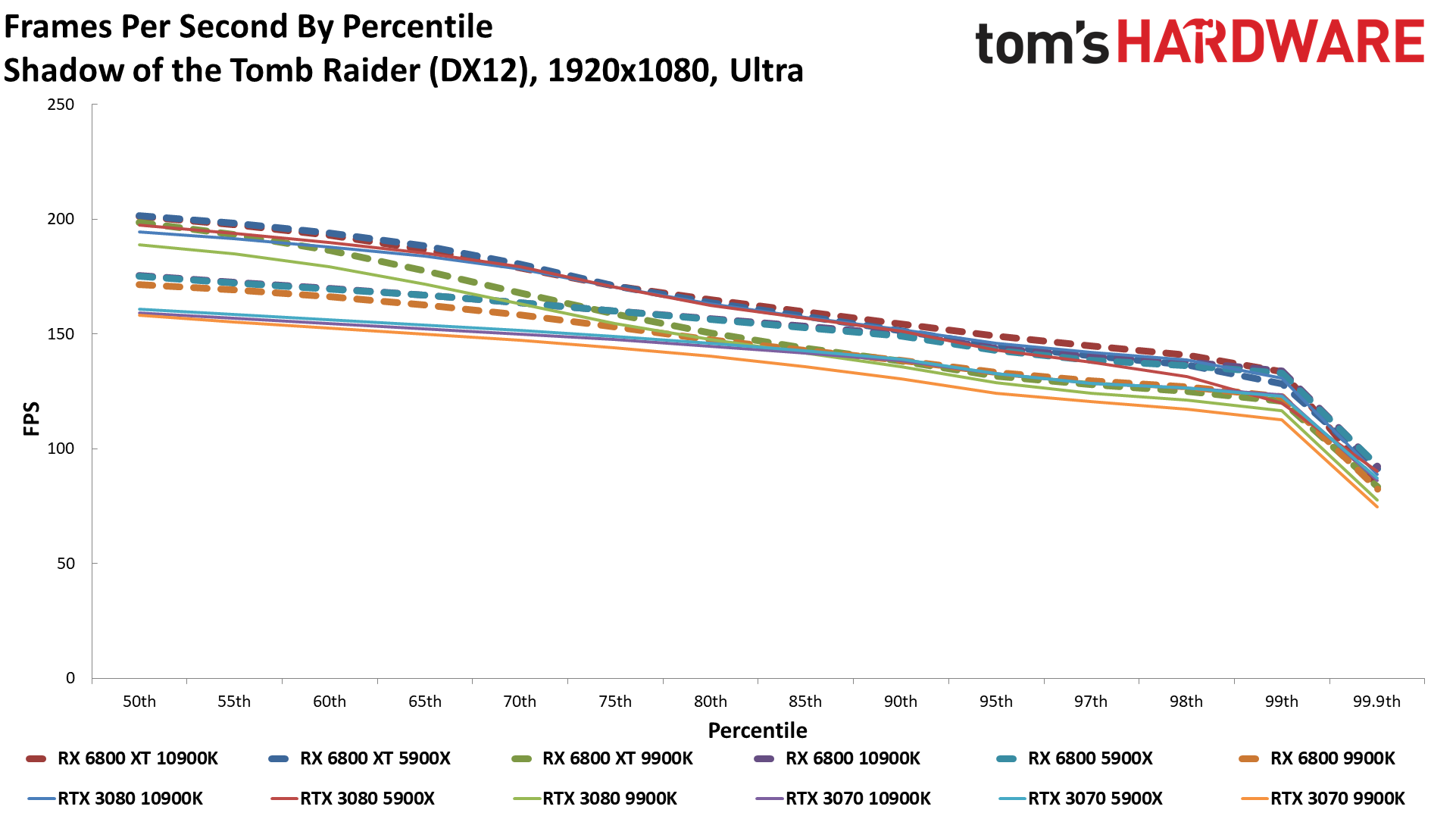

Shadow of the Tomb Raider

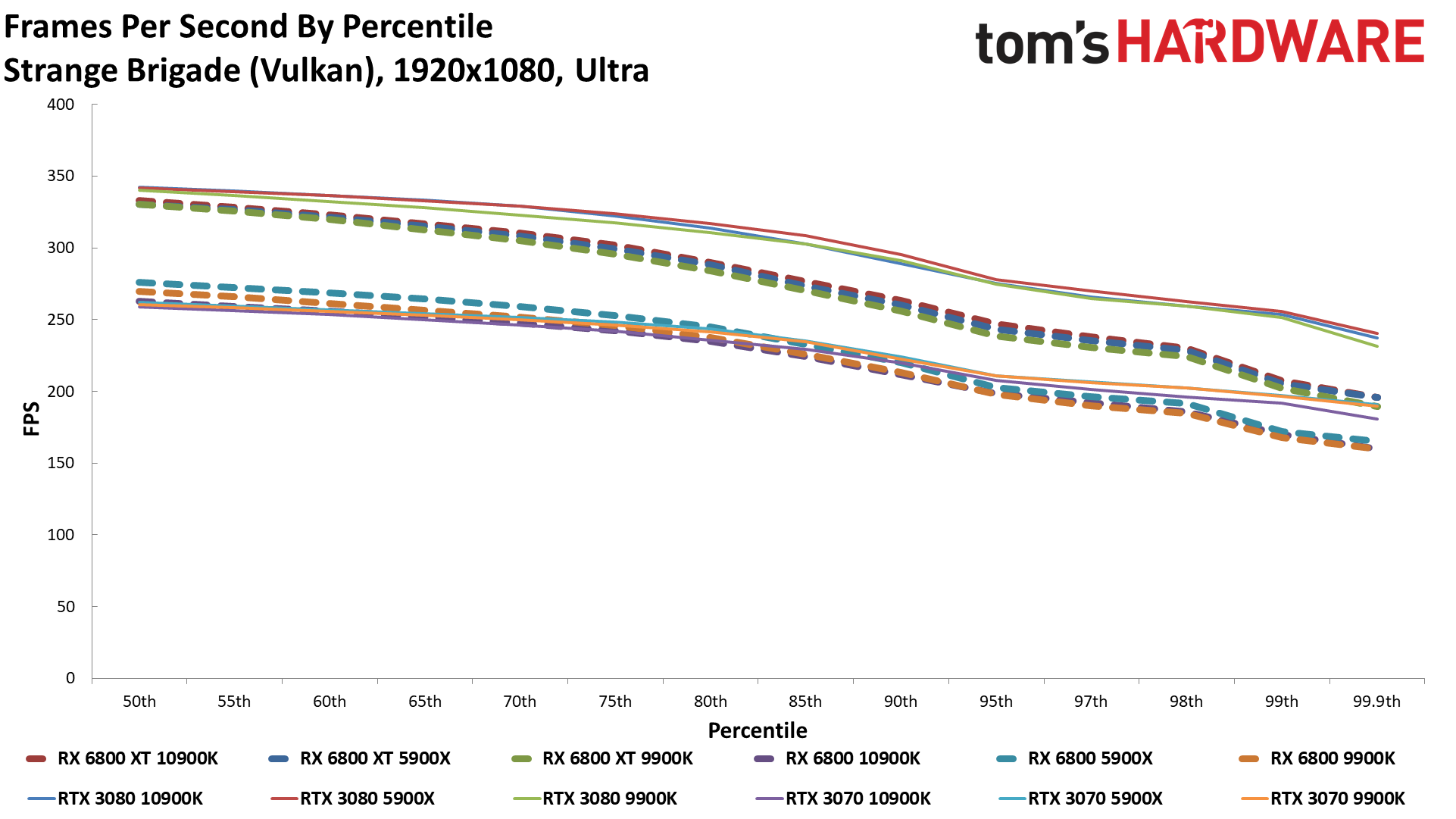

Strange Brigade

Watch Dogs Legion

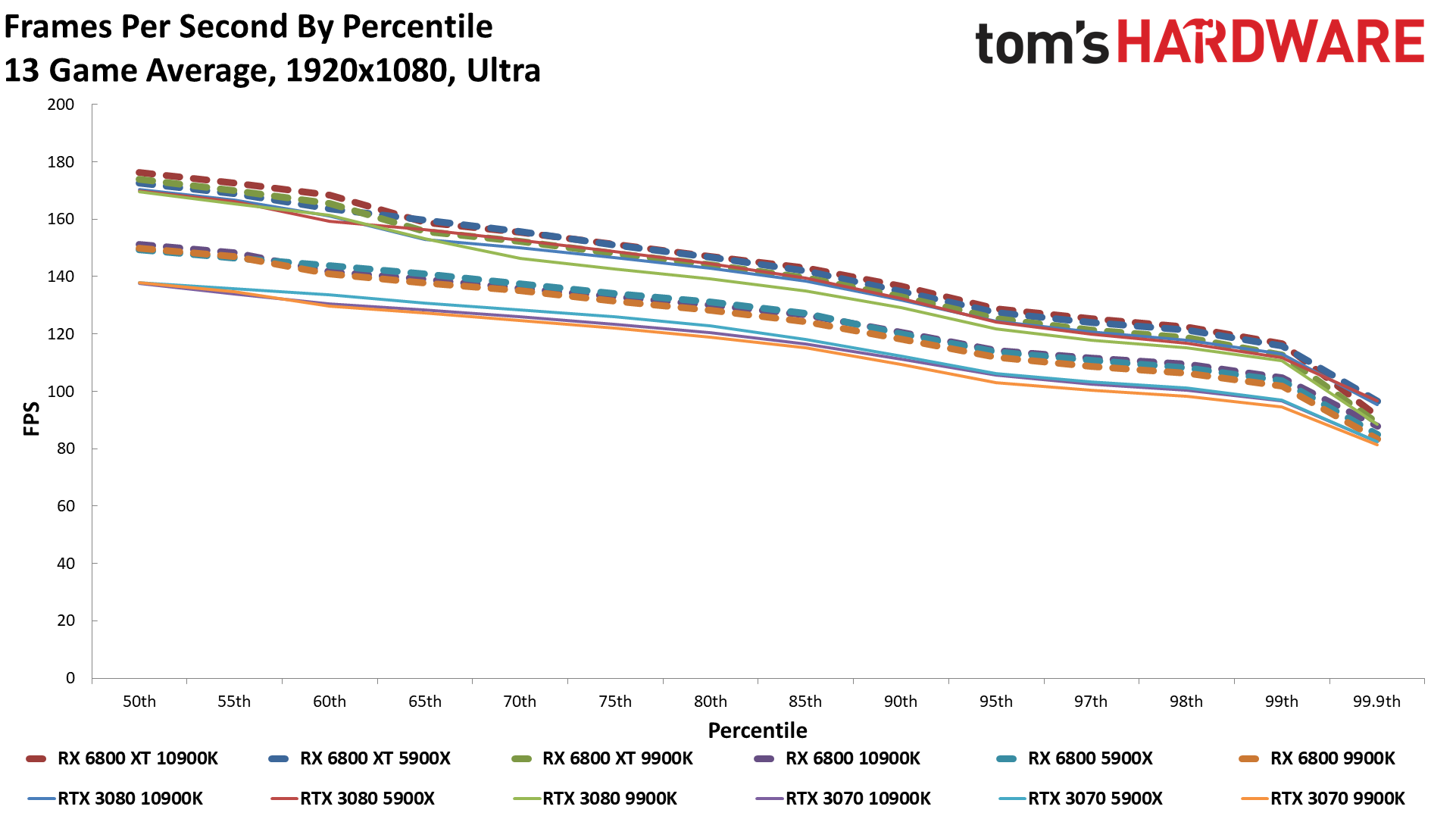

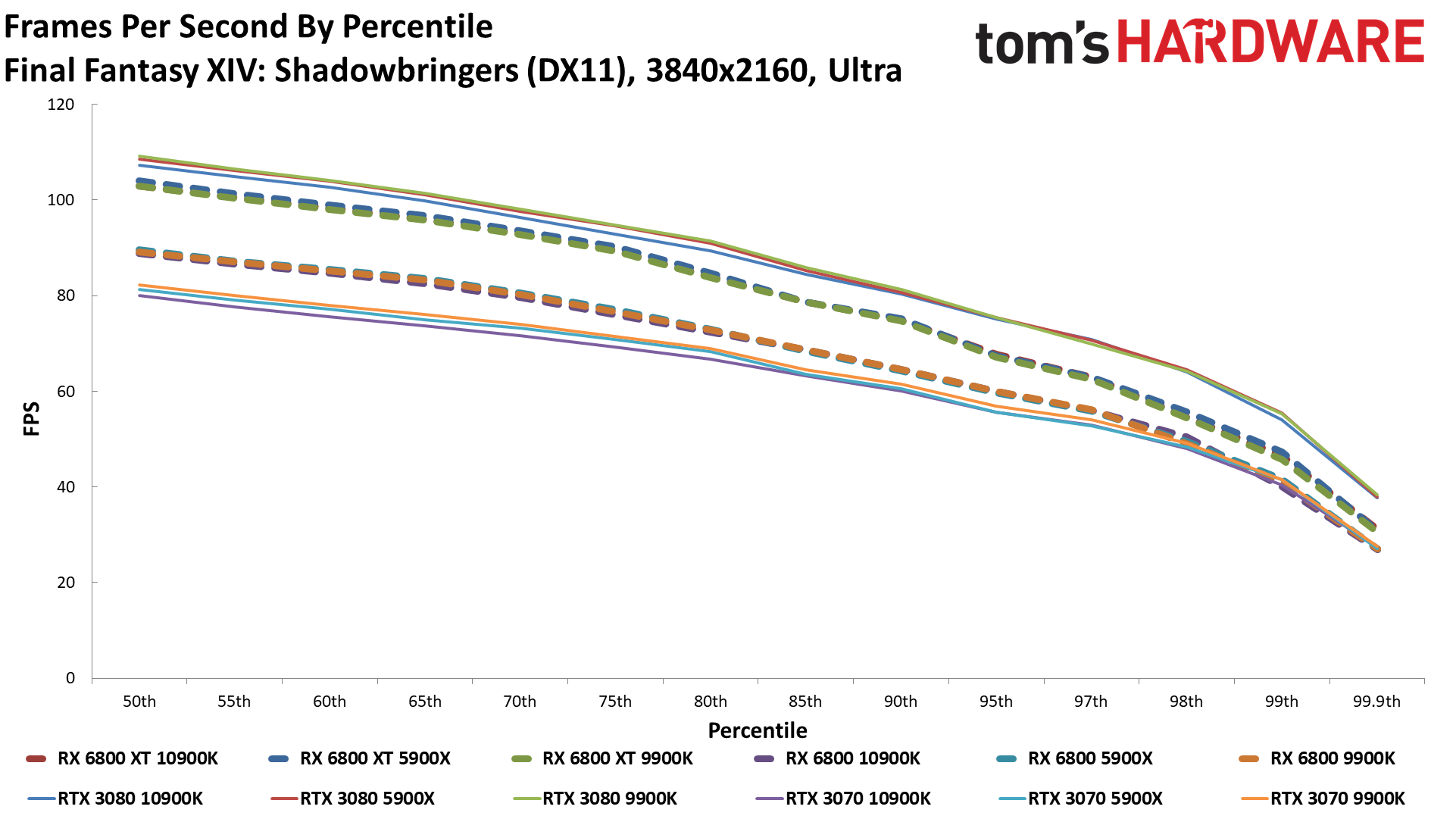

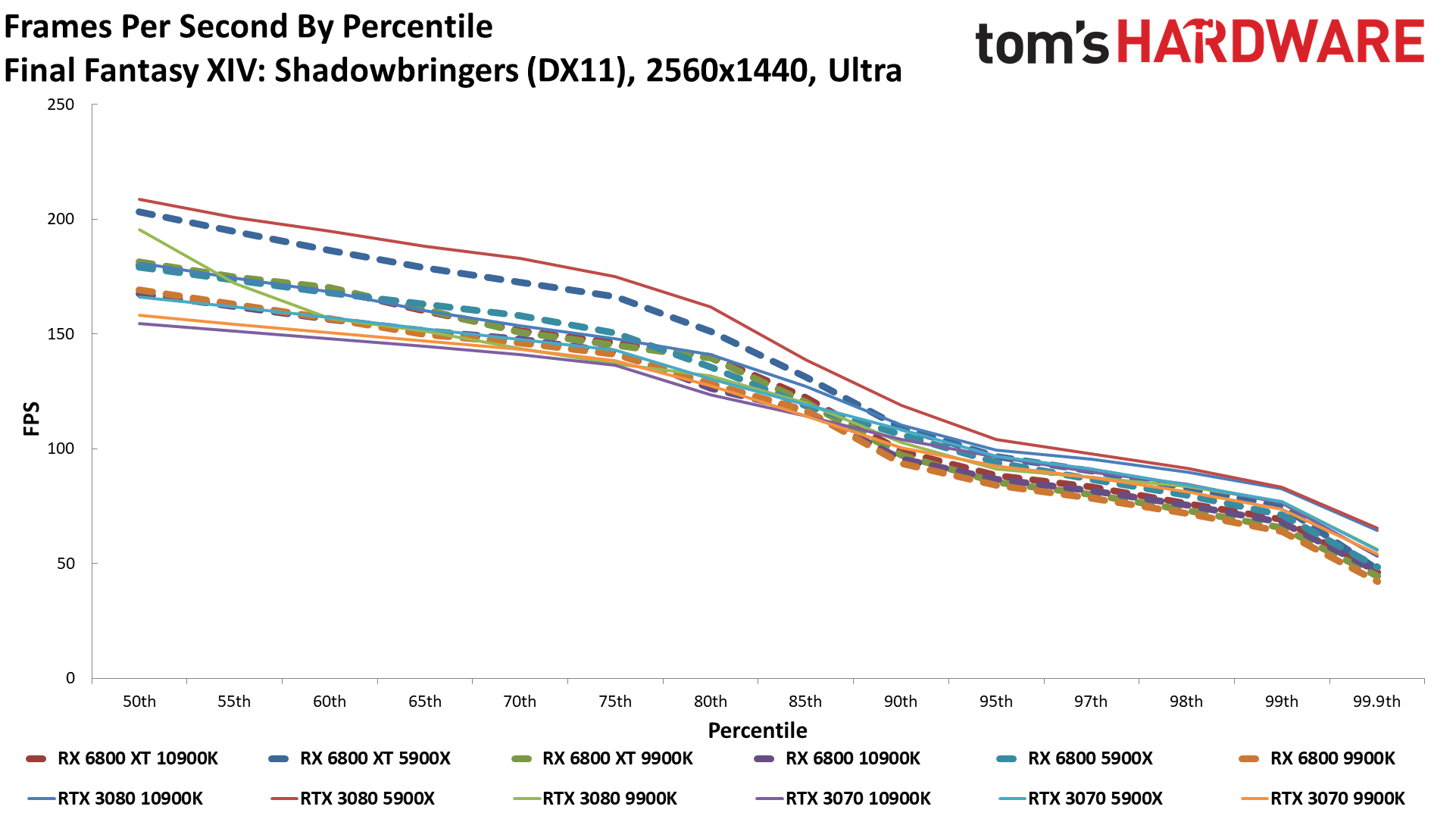

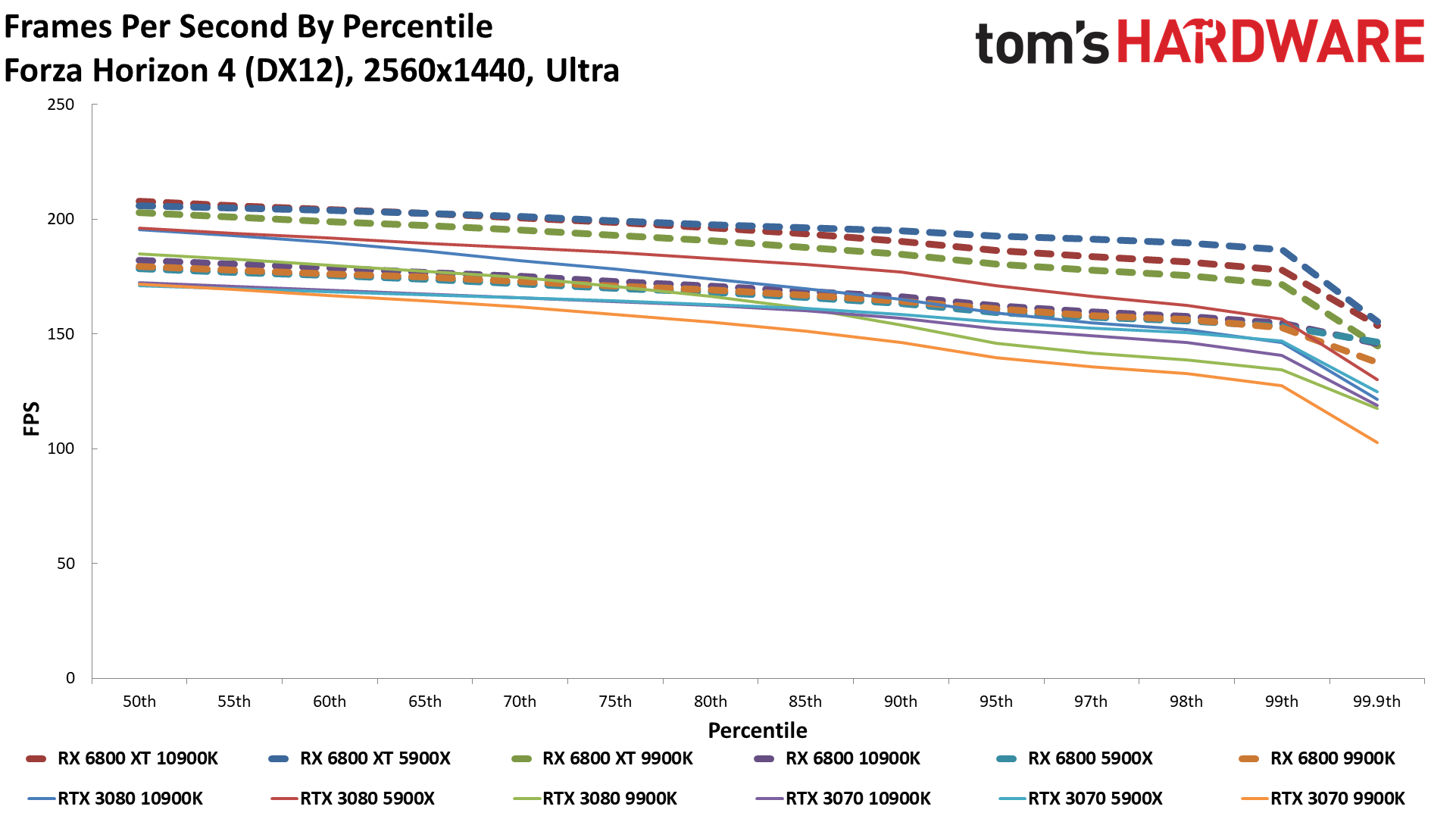

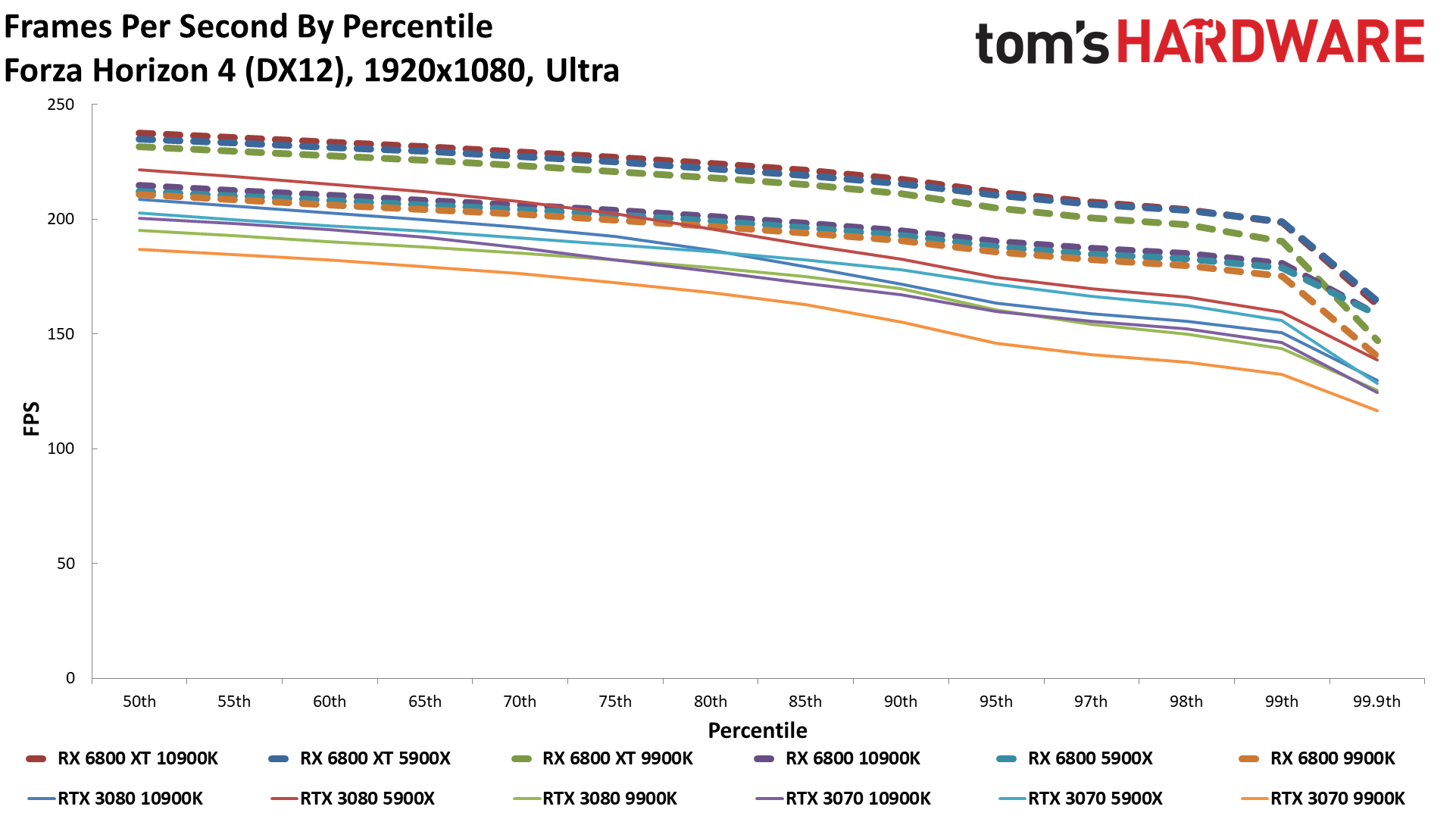

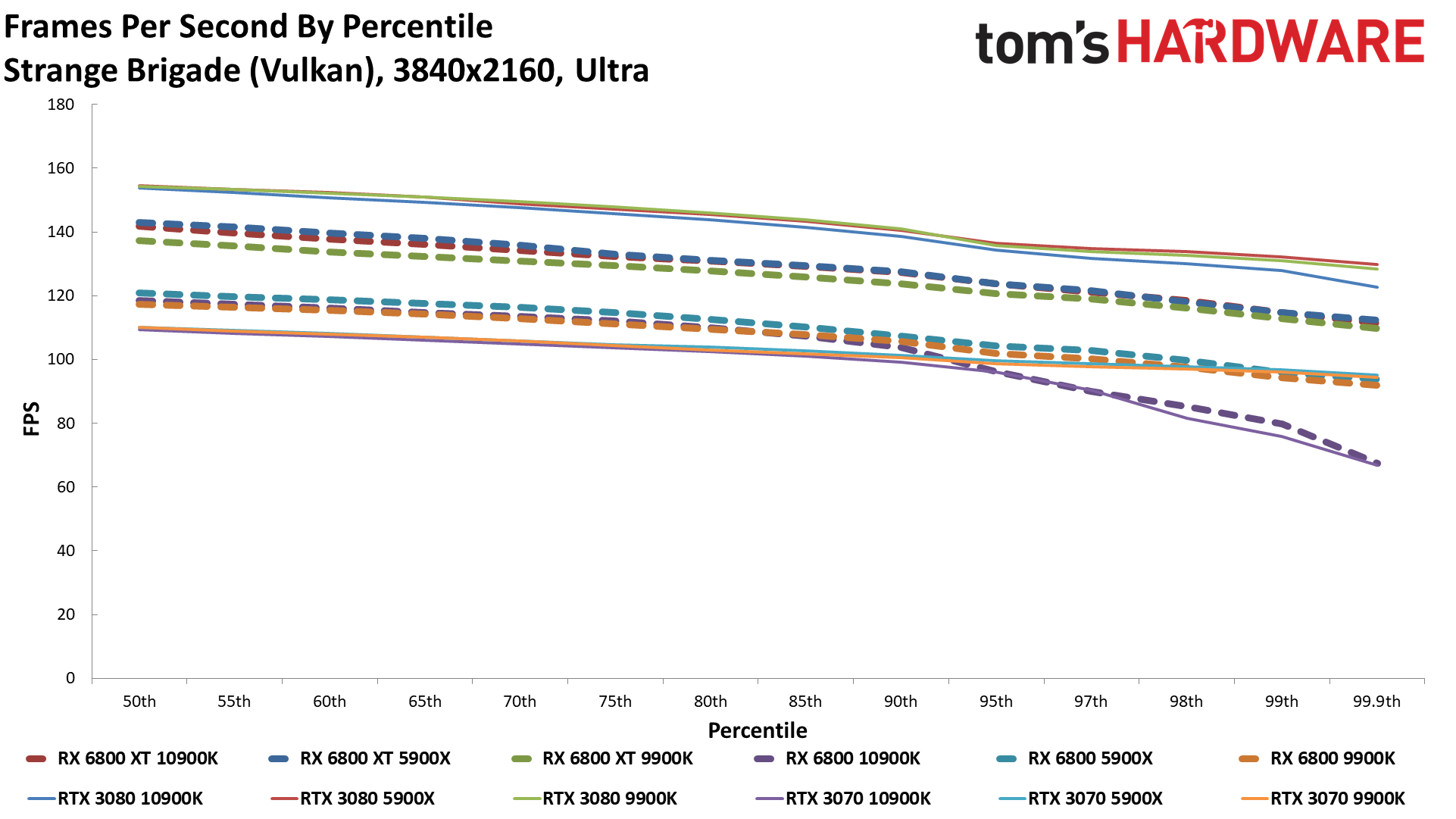

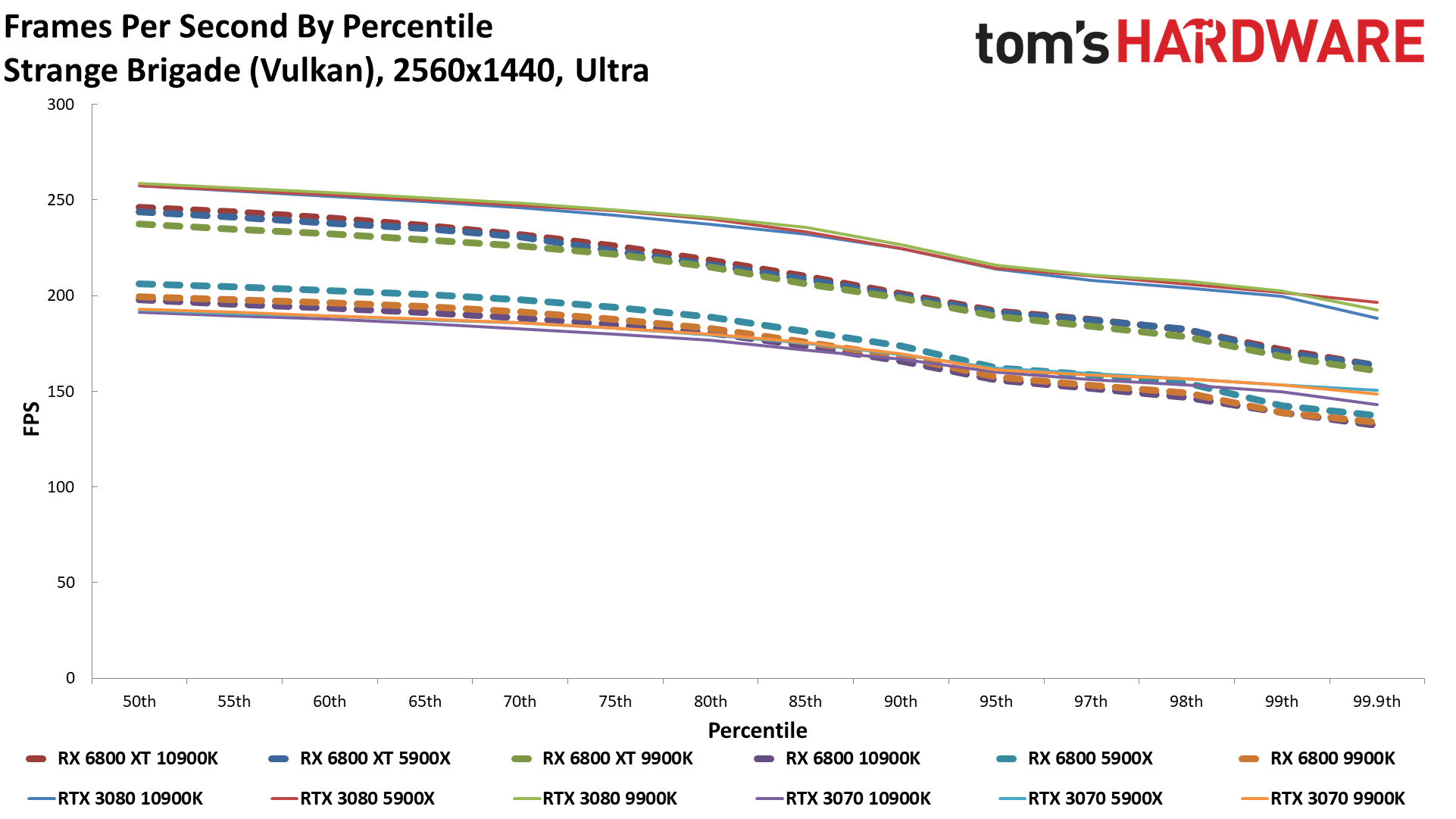

These charts are a bit busy, perhaps, with five GPUs and three CPUs each, plus overclocking. Take your time. We won't judge. Nine of the games are from the existing suite, and the trends noted earlier basically continue.

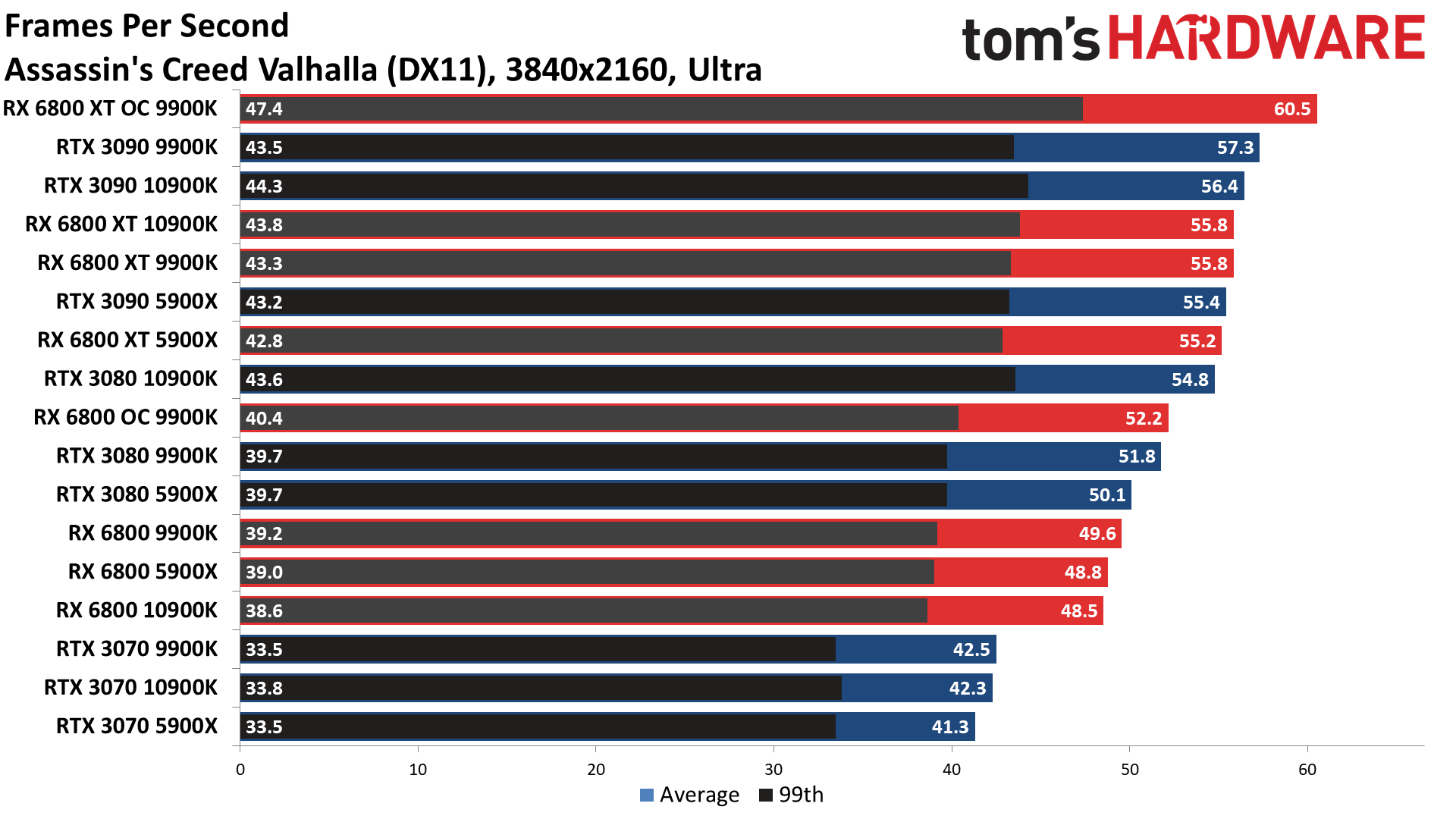

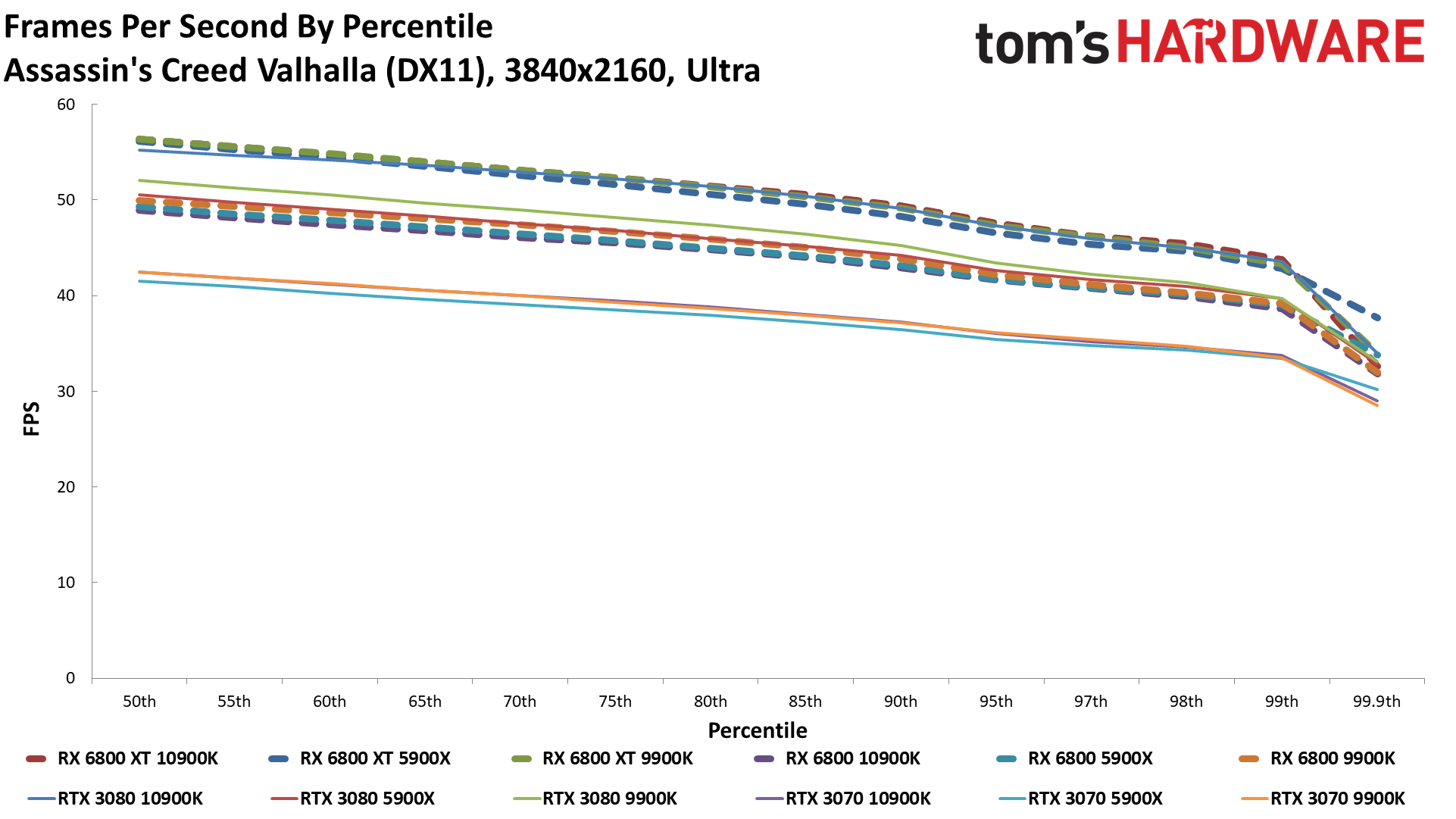

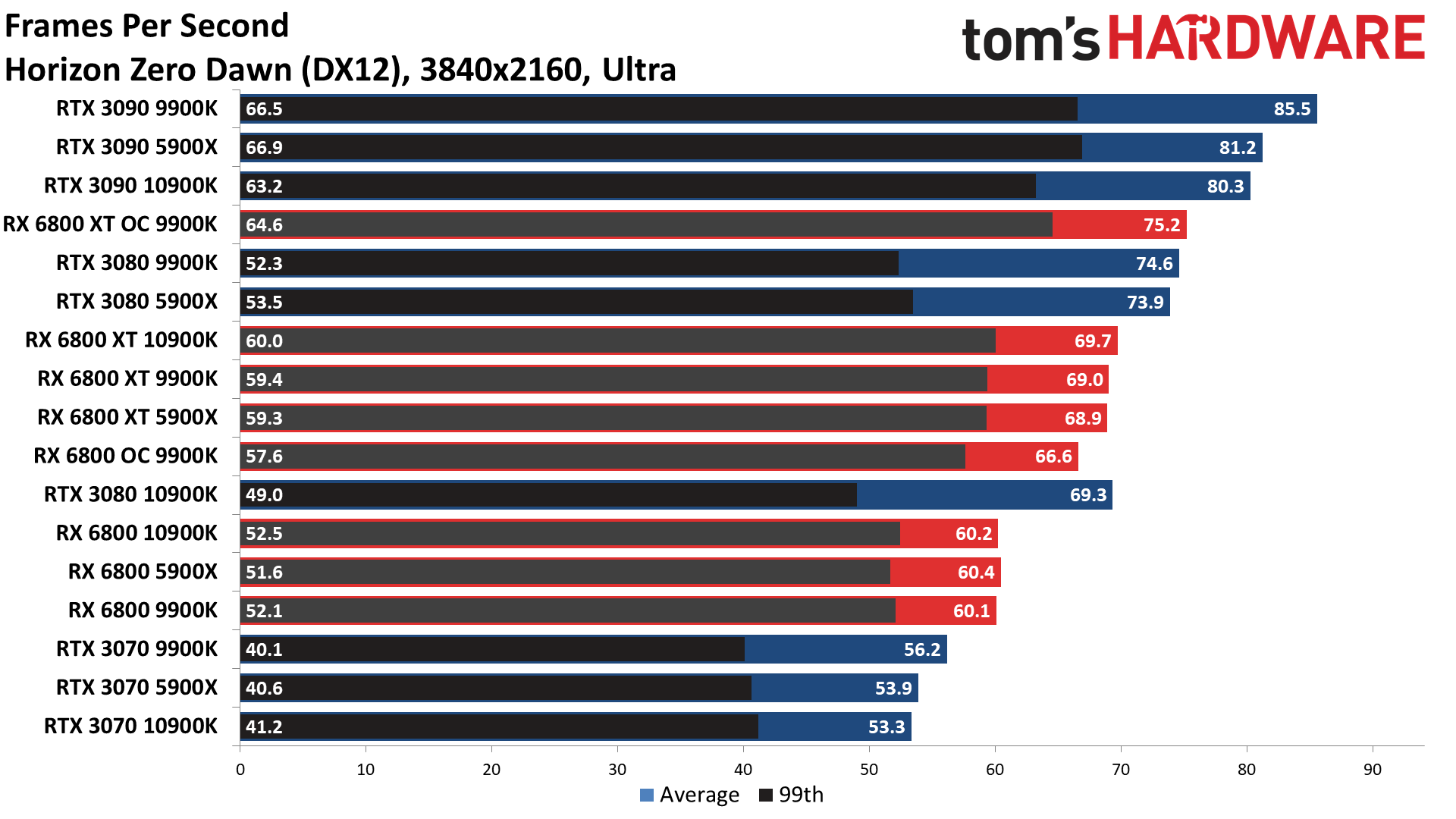

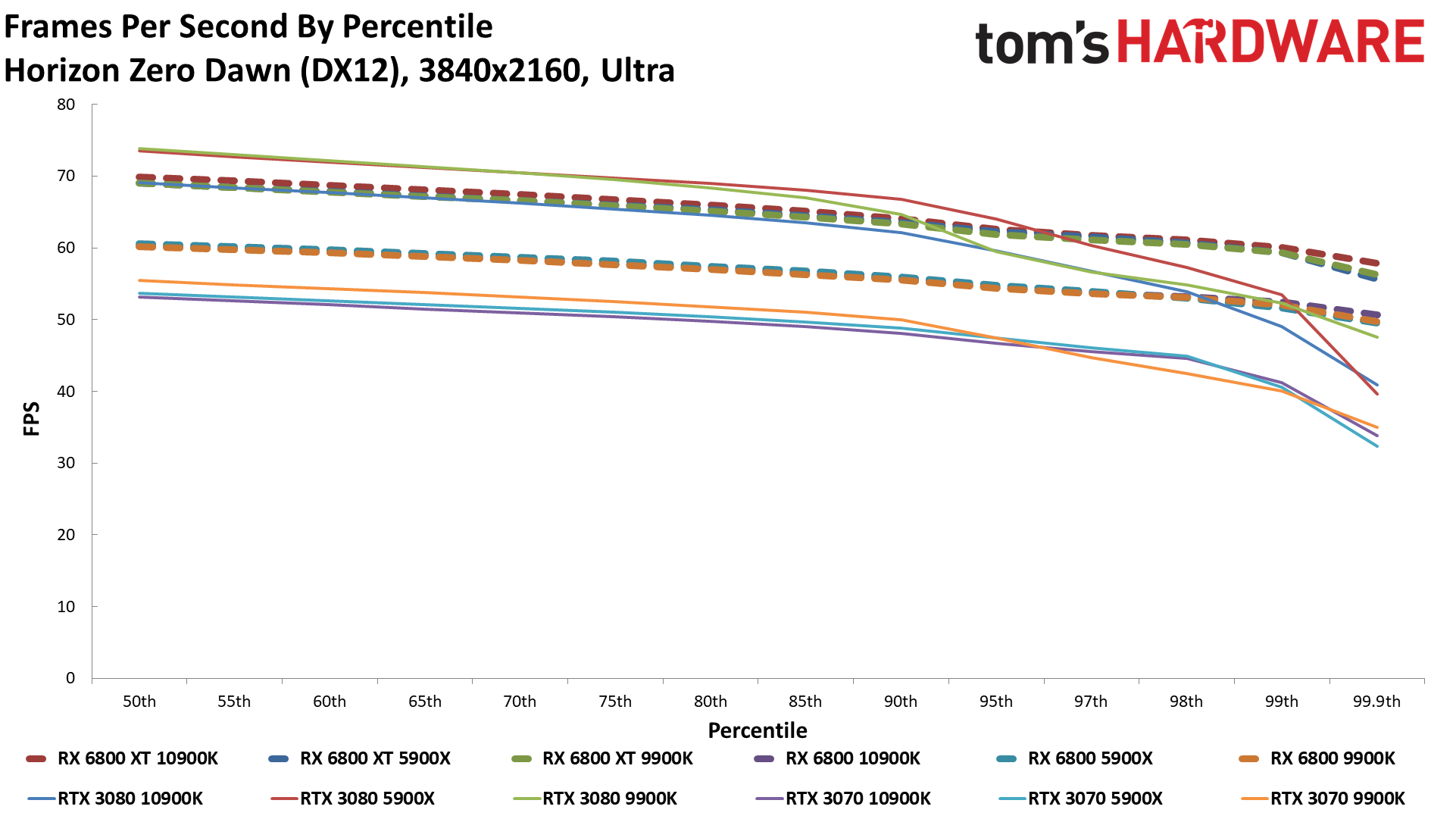

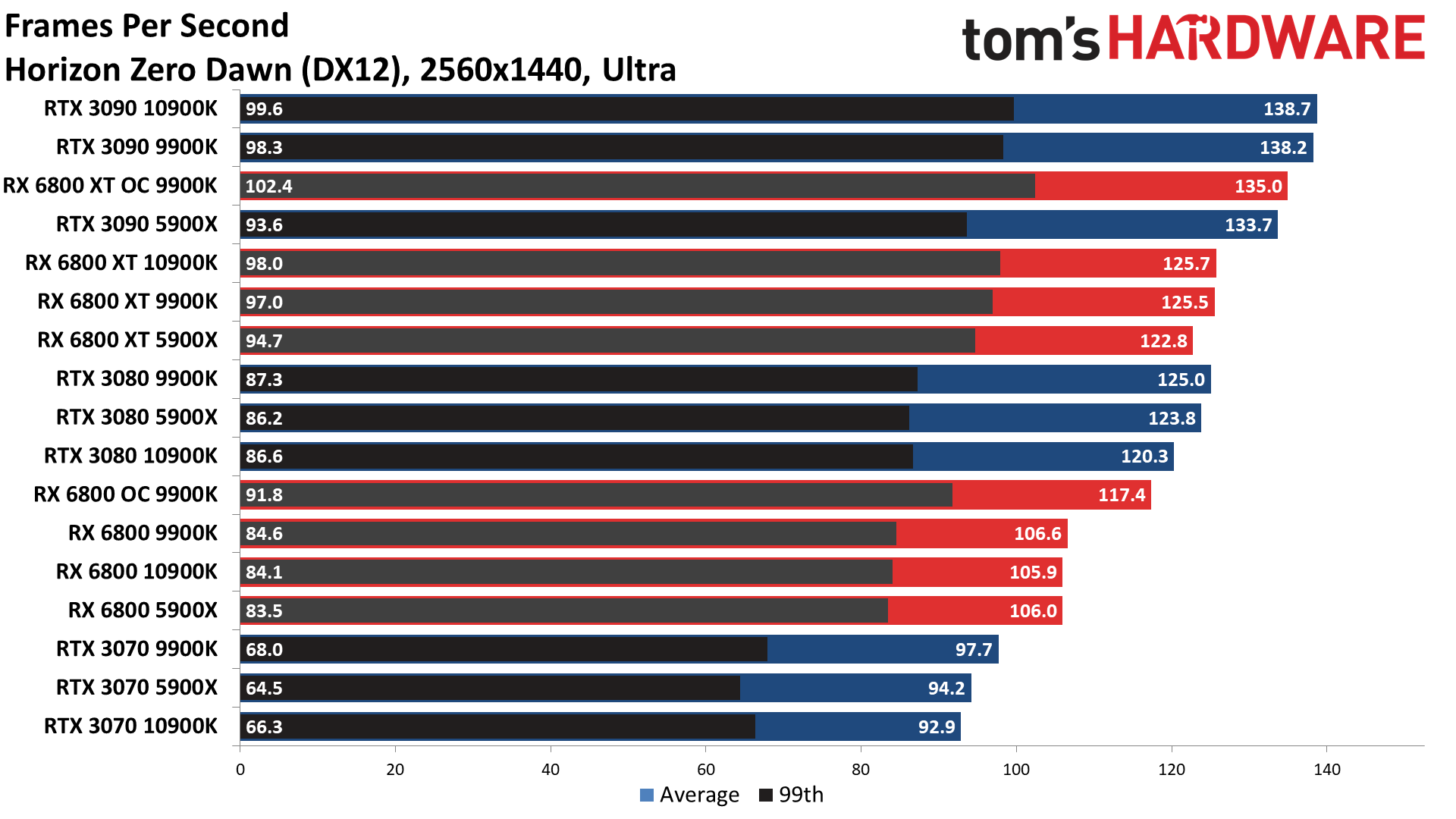

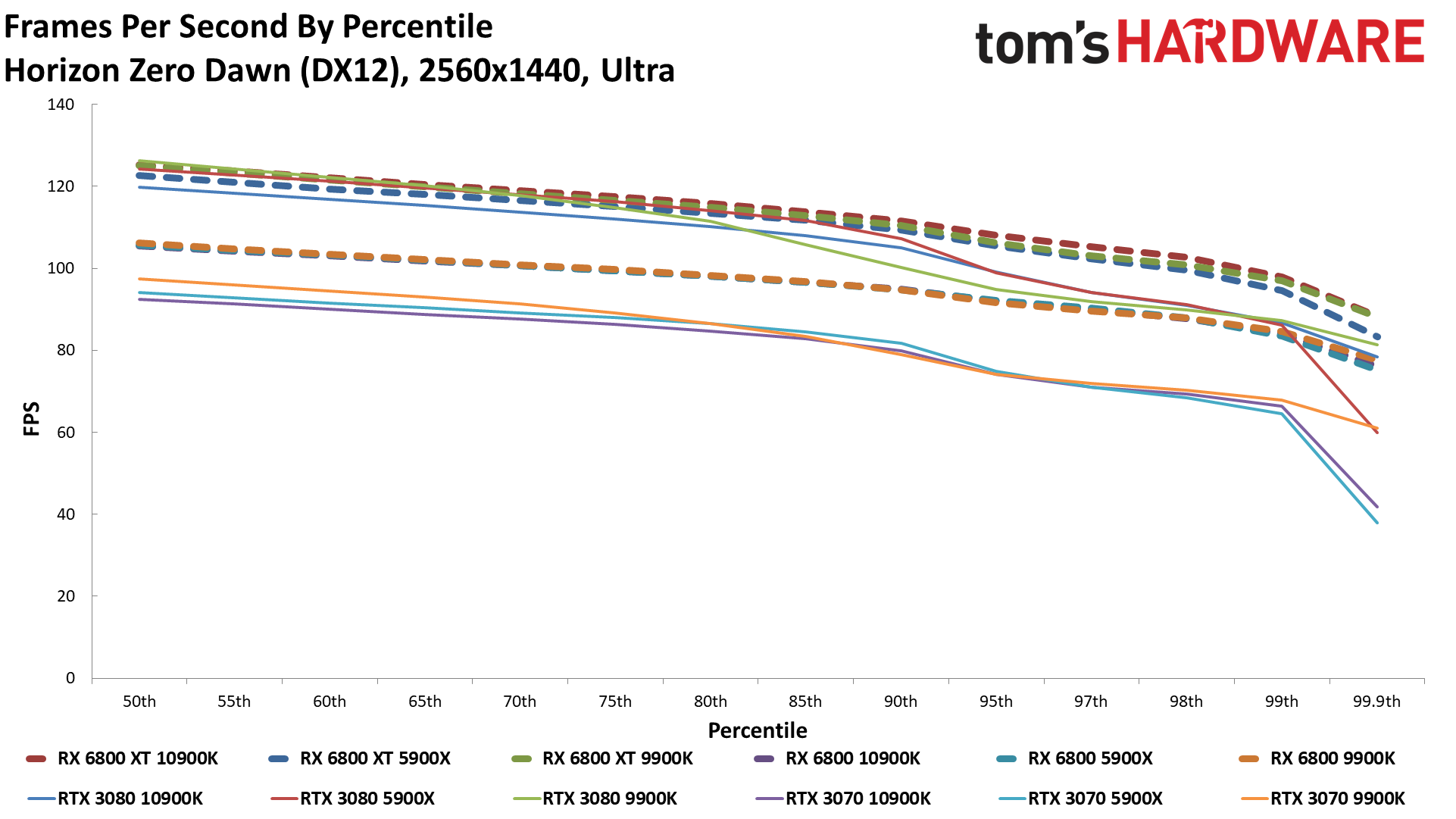

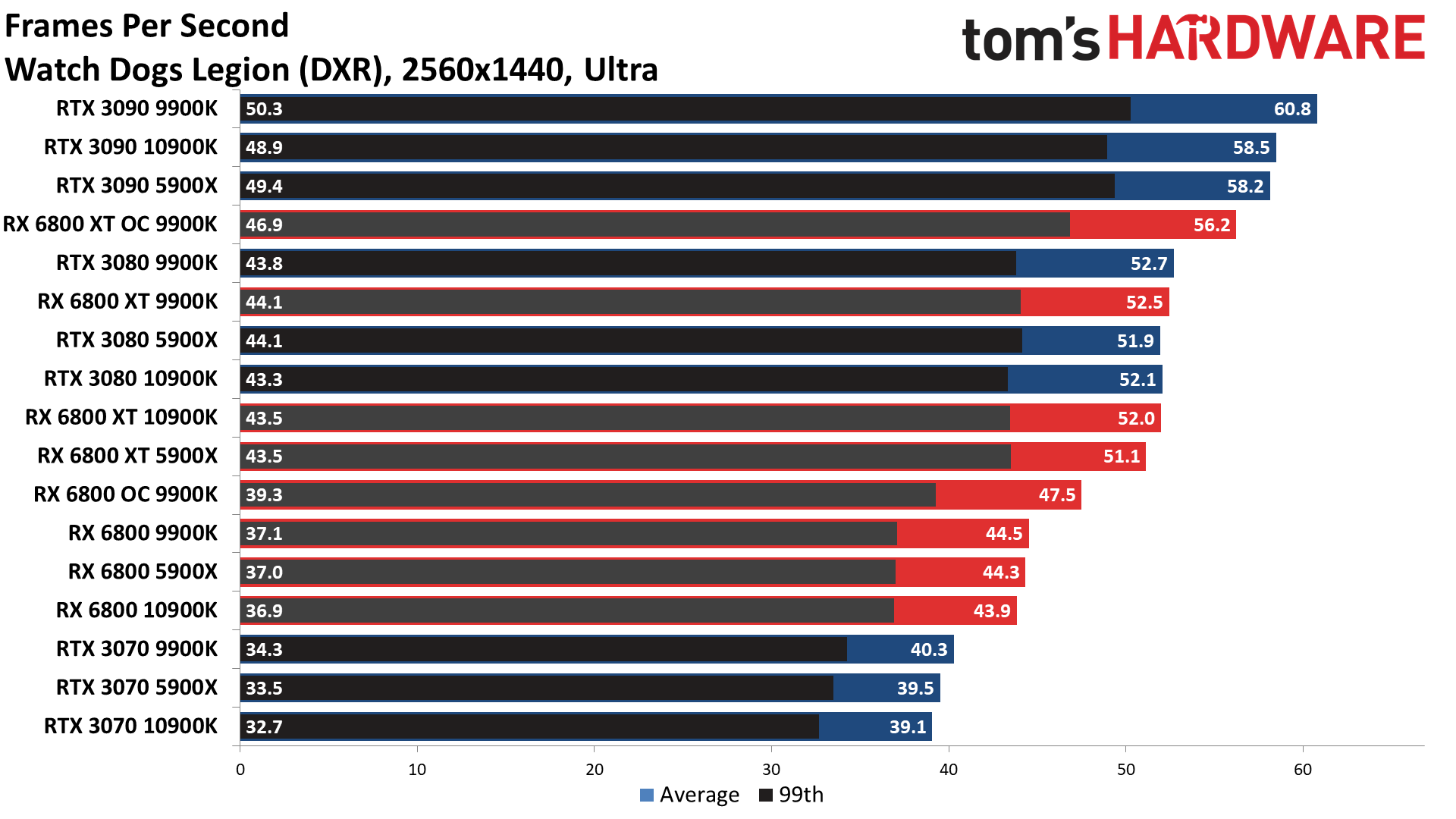

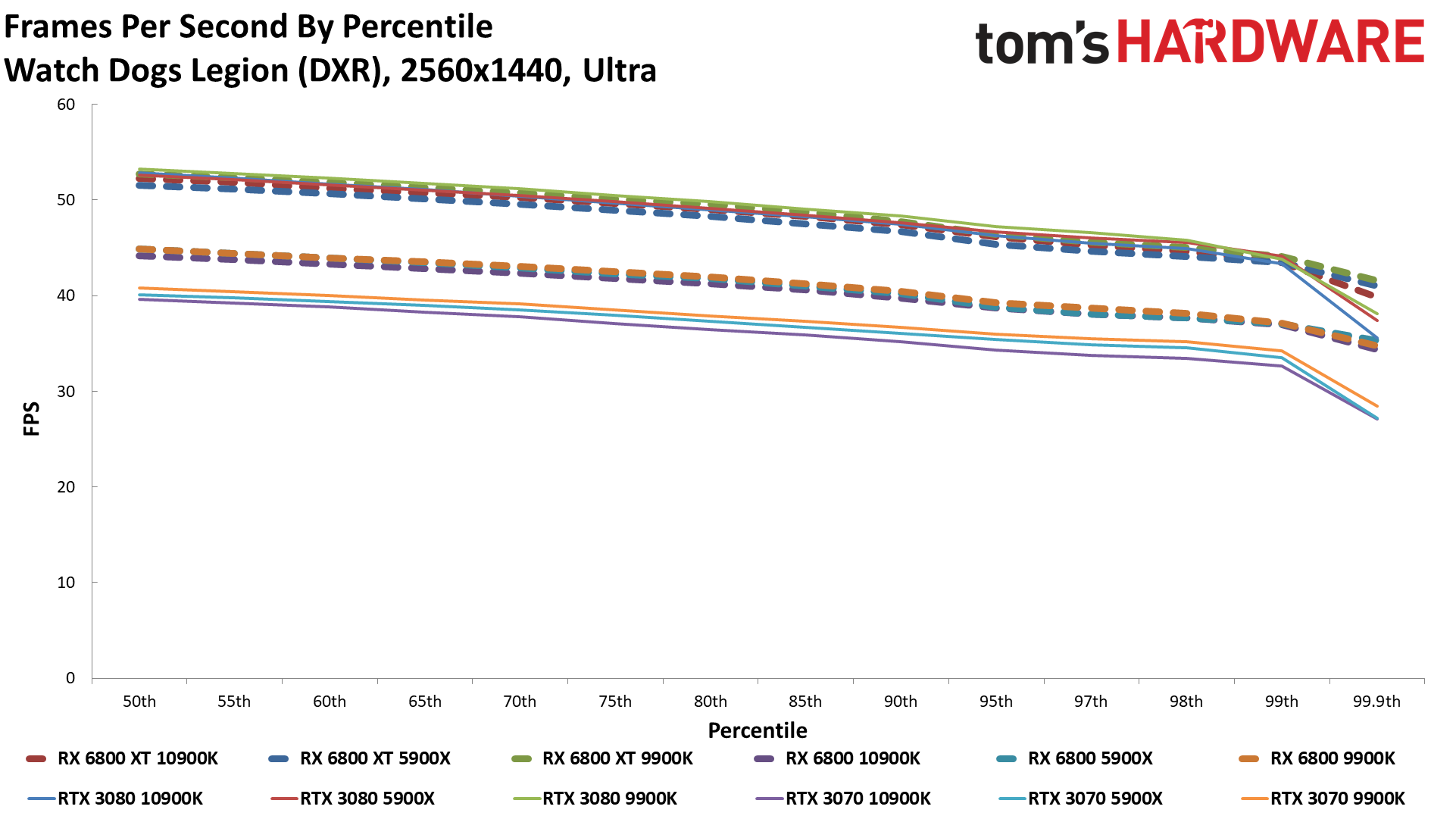

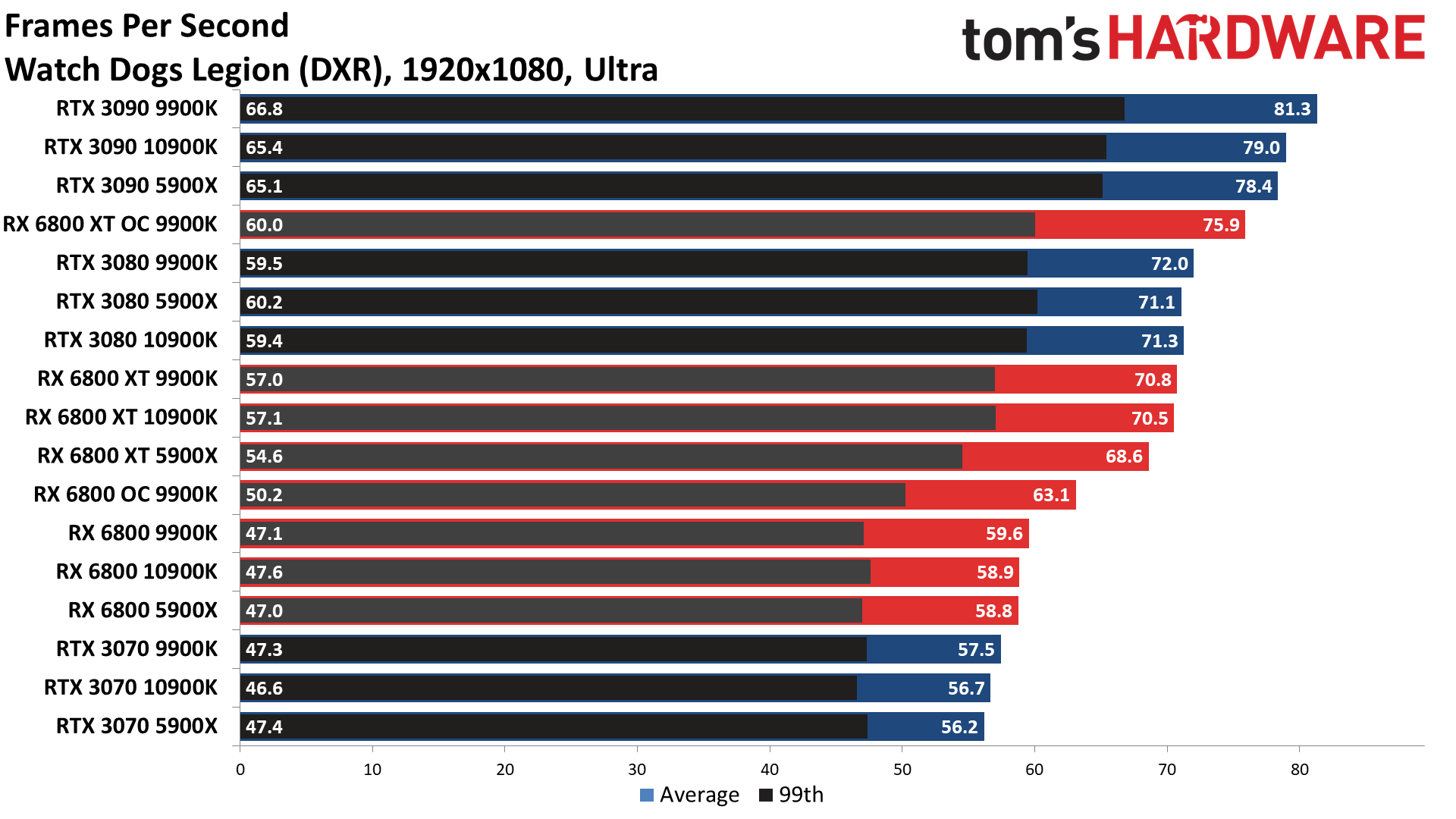

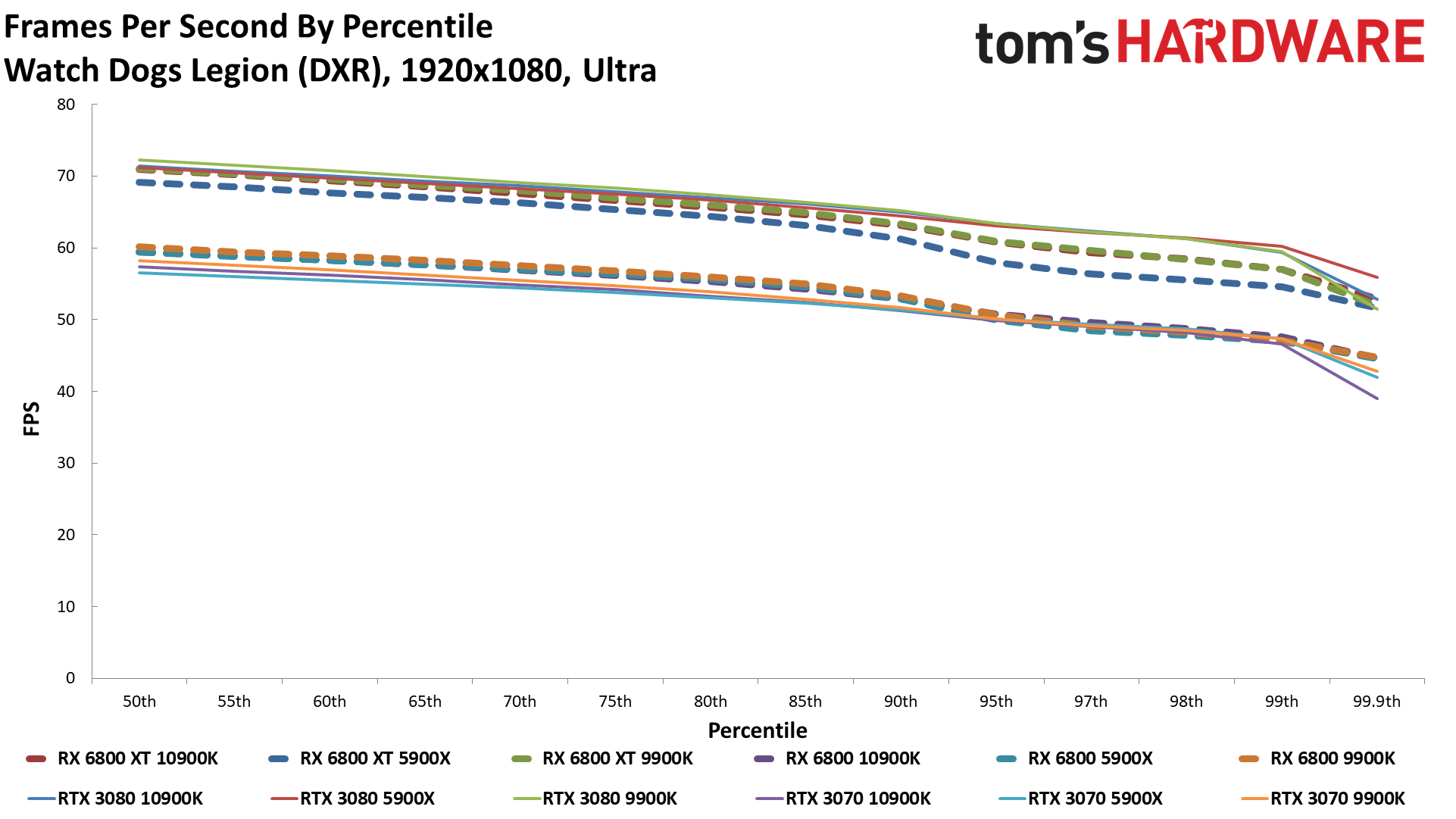

Looking just at the four new games, AMD gets a big win in Assassin's Creed Valhalla (it's an AMD promotional title, so future updates may change the standings). Dirt 5 is also a bit of an odd duck for Nvidia, with the RTX 3090 actually doing quite badly on the Ryzen 9 5900X and Core i9-10900K for some reason. Horizon Zero Dawn ends up favoring Nvidia quite a bit (but not the 3070), and lastly, we have Watch Dogs Legion, which favors Nvidia a bit (more at 4K), but it might have some bugs that are currently helping AMD's performance.

Overall, the 3090 still maintains its (gold-plated) crown, which you'd sort of expect from a $1,500 graphics card that you can't even buy right now. The RX 6800 XT mixes it up with the RTX 3080, coming out slightly ahead overall at 1080p and 1440p but barely trailing at 4K. Meanwhile, the RX 6800 easily outperforms the RTX 3070 across the suite, though a few games and/or lower resolutions do go the other way.

Oddly, my test systems ended up with the Core i9-10900K and even the Core i9-9900K often leading the Ryzen 9 5900X. The 3090 did best with the 5900X at 1080p, but then went to the 10900K at 1440p and both the 9900K and 10900K at 4K. The other GPUs also swap places, though usually the difference between CPUs is pretty negligible (and a few results just look a bit buggy).

It may be that the beta BIOS for the MSI X570 board (which enables Smart Access Memory) still needs more tuning, or that the differences in memory came into play. I also didn't have time to check performance with the large PCIe BAR feature disabled. But these are mostly very small differences, and any of the three CPUs tested here is sufficient for gaming.

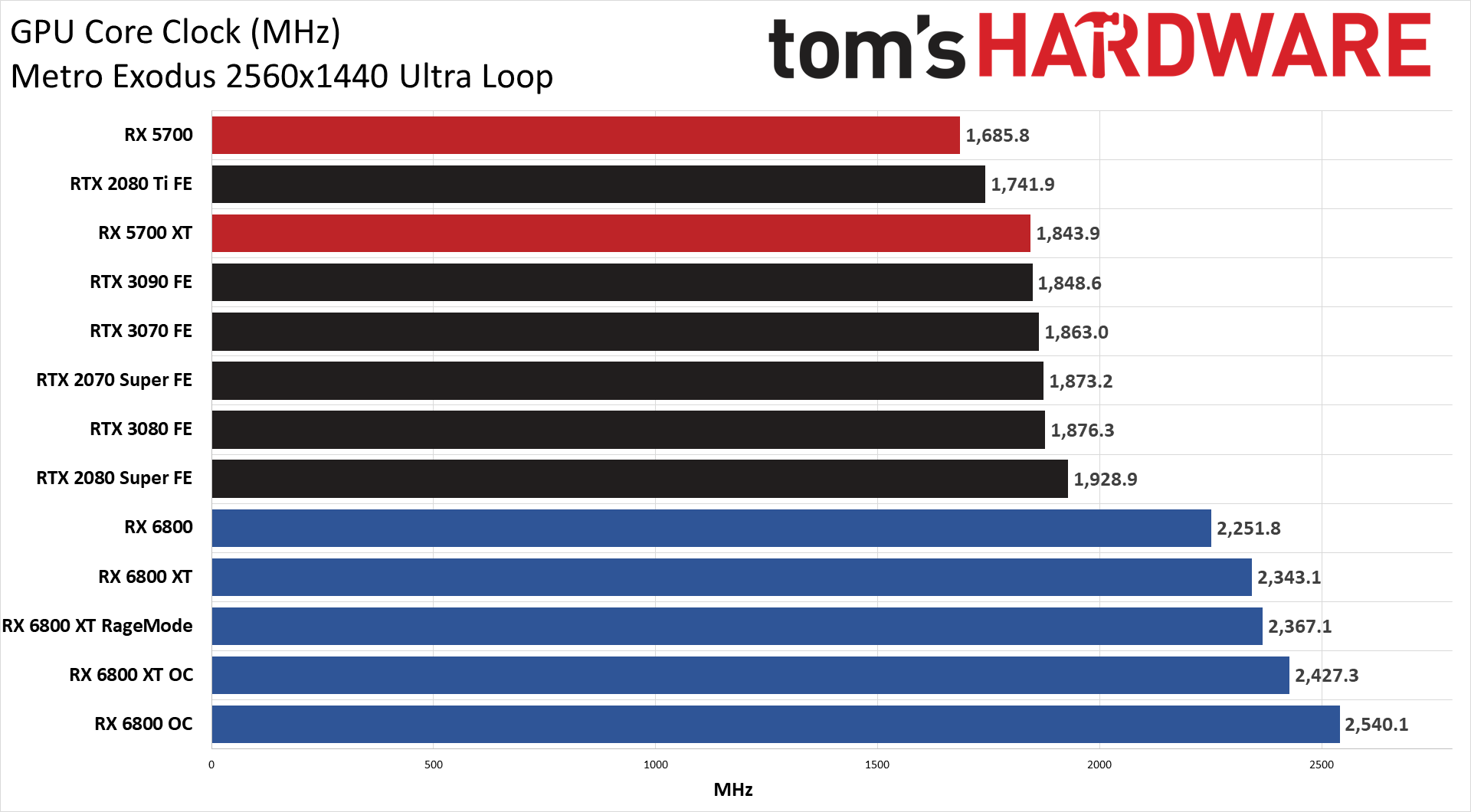

As for overclocking, it's pretty much what you'd expect. Increase the power limit, GPU core clocks, and GDDR6 clocks and you get more performance. It's not a huge improvement, though. Overall, the RX 6800 XT was 4-6 percent faster when overclocked (the higher results were at 4K). The RX 6800 did slightly better, improving by 6 percent at 1080p and 1440p, and 8 percent at 4K. GPU clocks were also above 2.5GHz for most of the testing of the RX 6800, and its lower default boost clock gave it a bit more room for improvement.

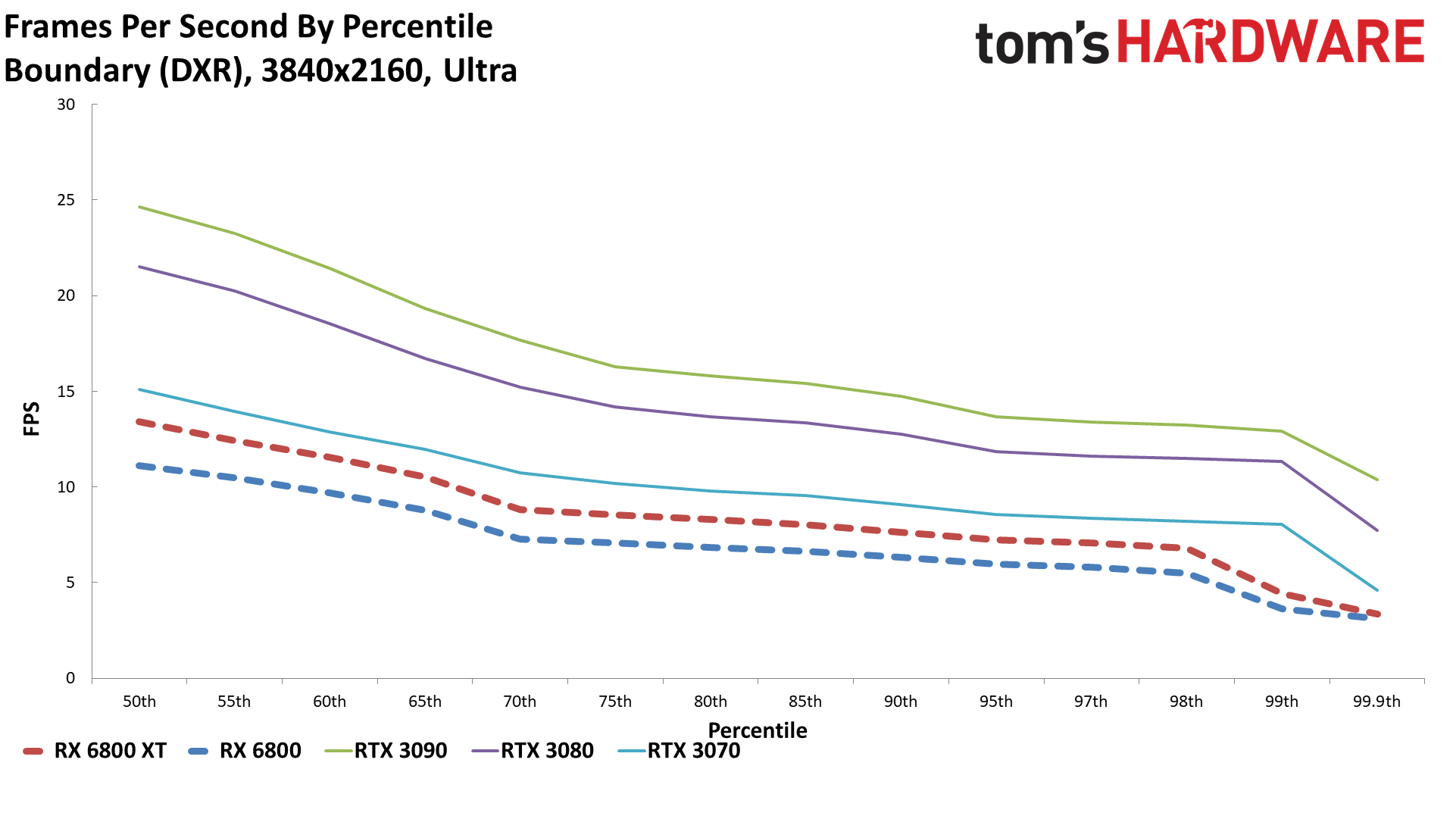

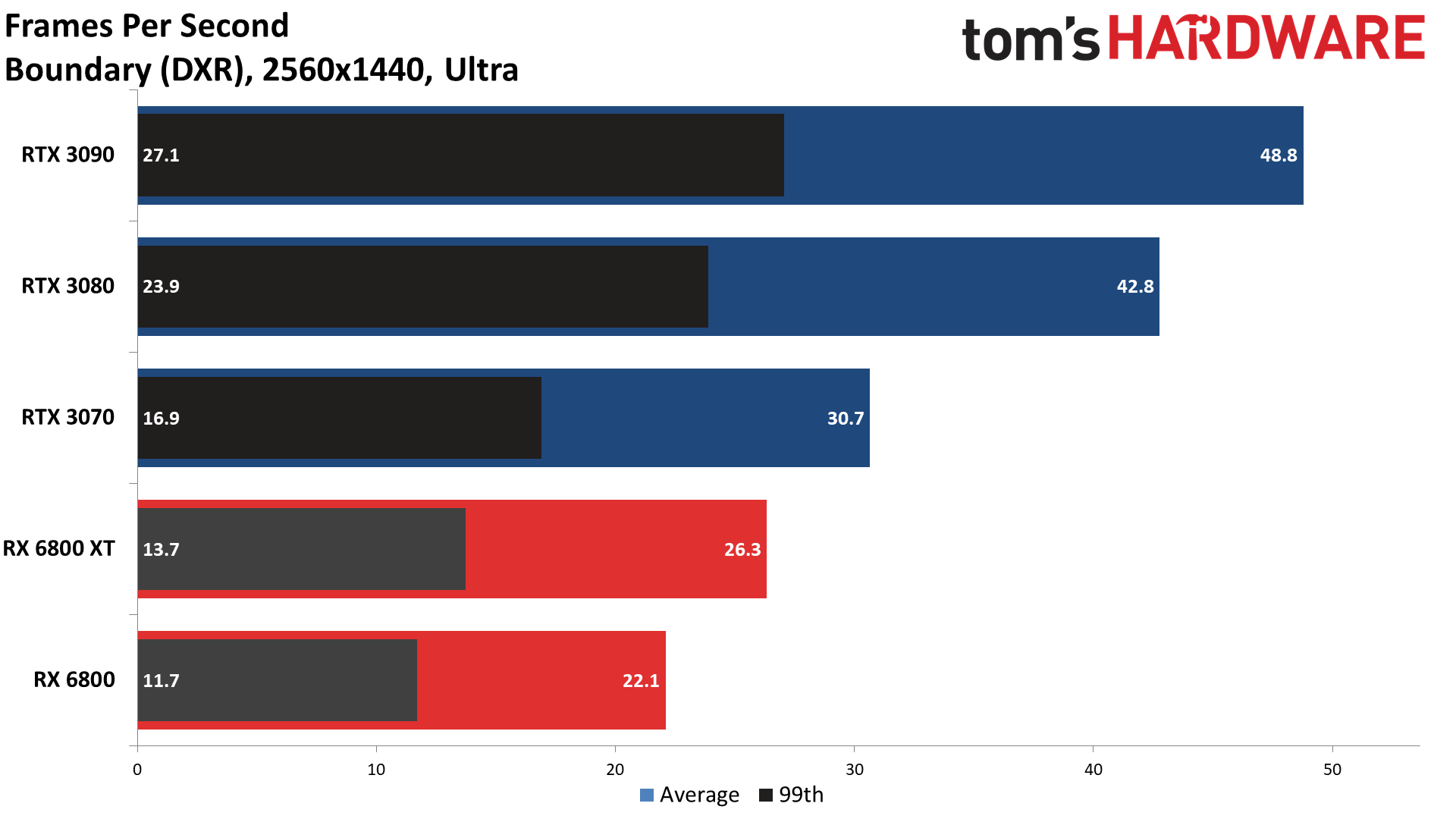

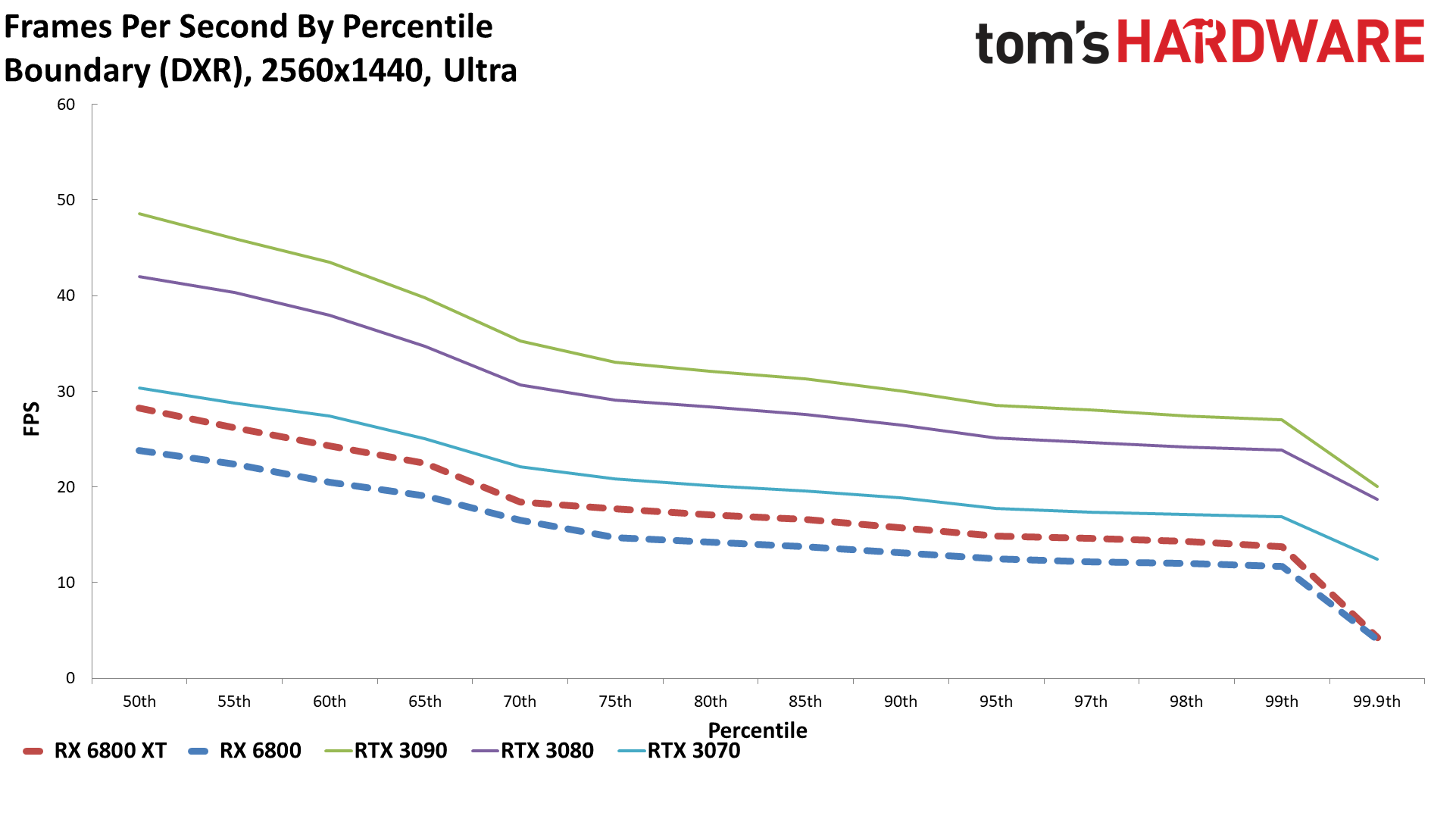

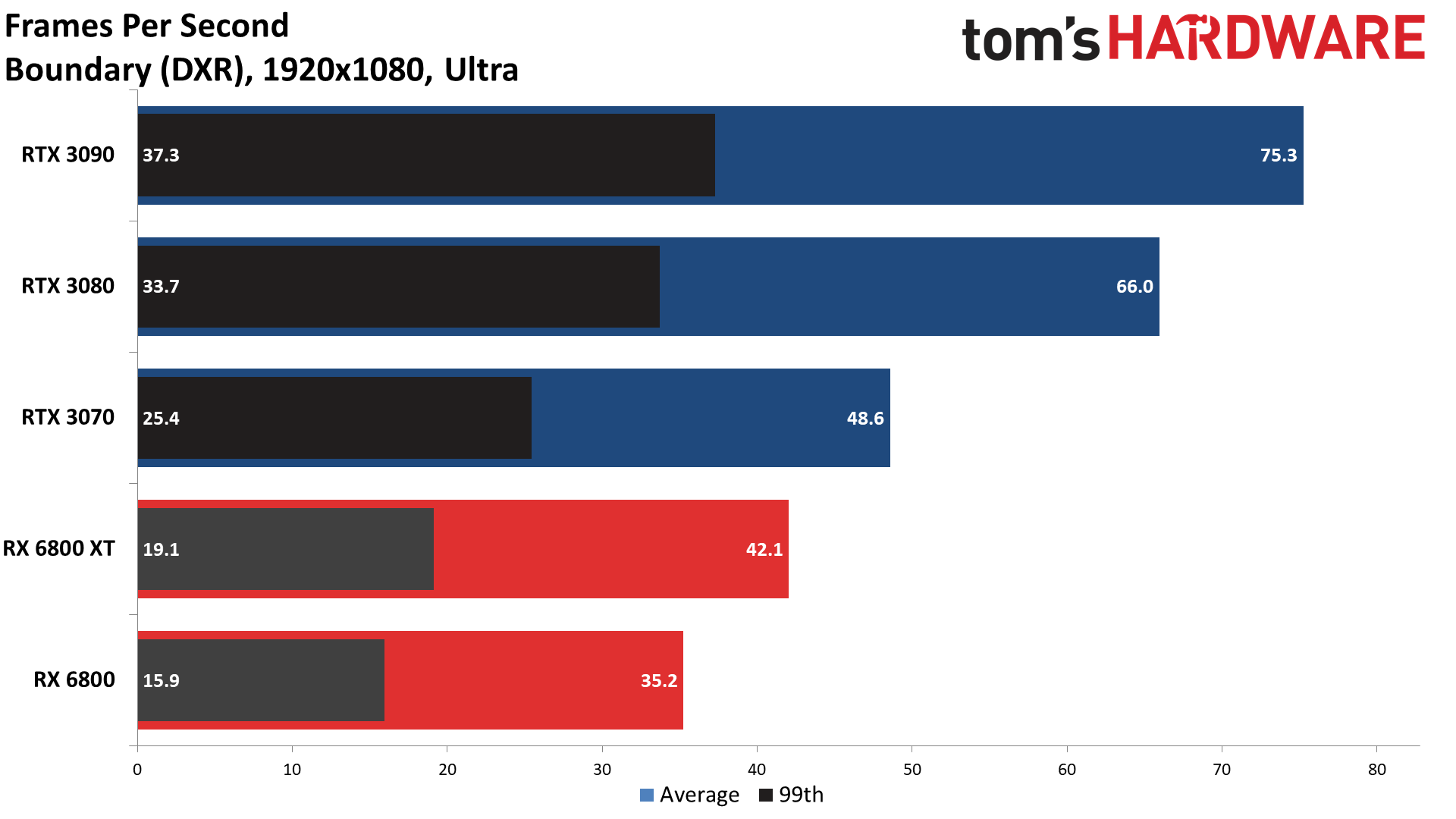

Radeon RX 6800 Series Ray Tracing Performance

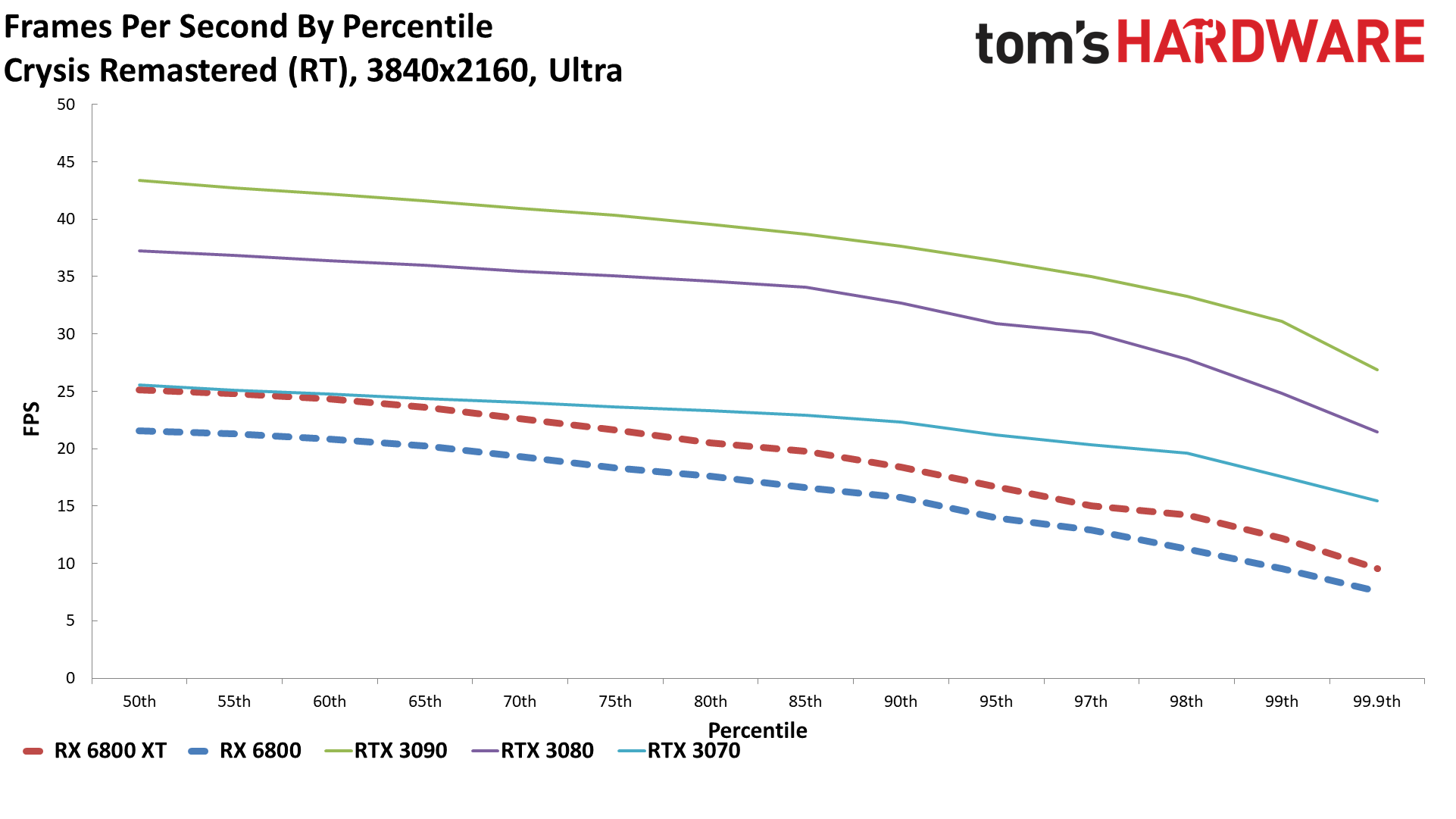

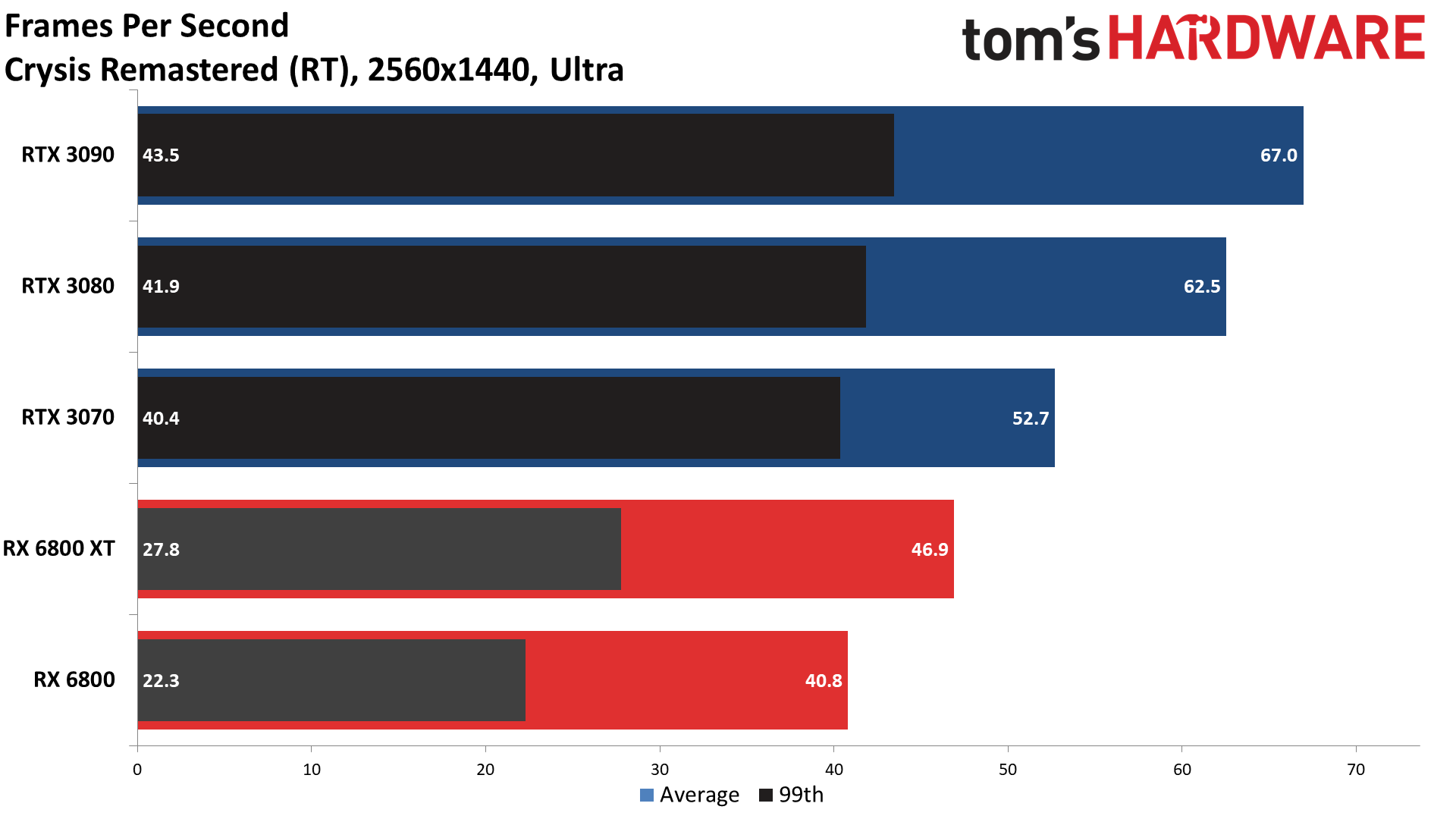

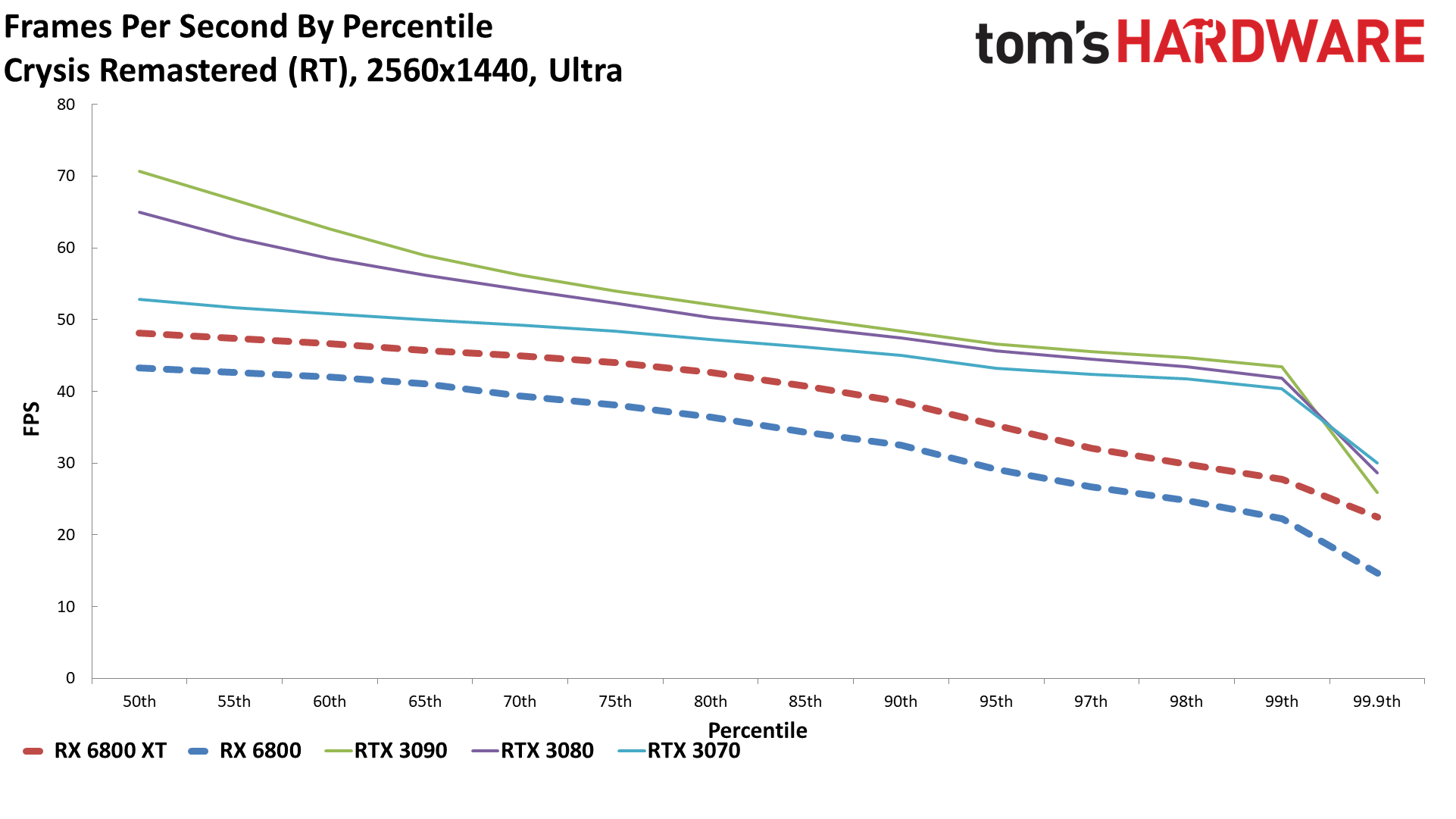

So far, most of the games haven't had ray tracing enabled. But that's the big new feature for RDNA2 and the Radeon RX 6000 series, so we definitely wanted to look into ray tracing performance more. Here's where things take a turn for the worse because ray tracing is very demanding, and Nvidia has DLSS to help overcome some of the difficulty by doing AI-enhanced upscaling. AMD can't do DLSS since it's Nvidia proprietary tech, which means to do apples-to-apples comparisons, we have to turn off DLSS on the Nvidia cards.

That's not really fair because DLSS 2.0 and later actually look quite nice, particularly when using the Balanced or Quality modes. What's more, native 4K gaming with ray tracing enabled is going to be a stretch for just about any current GPU, including the RTX 3090 — unless you're playing a lighter game like Pumpkin Jack. Anyway, we've looked at ray tracing performance with DLSS in a bunch of these games, and performance improves by anywhere from 20 percent to as much as 80 percent (or more) in some cases. DLSS may not always look better, but a slight drop in visual fidelity for a big boost in framerates is usually hard to pass up.
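Much of DLSS's speedup comes from rendering fewer pixels internally and then upscaling. The sketch below uses the per-axis render scales commonly cited for DLSS 2.x modes. Treat those factors as assumptions: individual games can differ, and Ultra Performance mode is mainly aimed at 8K output.

```python
from typing import Tuple

# Per-axis render scale commonly cited for DLSS 2.x modes (assumed values).
DLSS_SCALE = {
    "Quality": 2 / 3,
    "Balanced": 0.58,
    "Performance": 0.5,
    "Ultra Performance": 1 / 3,
}


def internal_resolution(width: int, height: int, scale: float) -> Tuple[int, int]:
    """Resolution the game renders at before DLSS upscales to width x height."""
    return round(width * scale), round(height * scale)


out_w, out_h = 3840, 2160   # 4K output
for mode, s in DLSS_SCALE.items():
    w, h = internal_resolution(out_w, out_h, s)
    share = (w * h) / (out_w * out_h)
    print(f"{mode:>17}: renders {w}x{h} ({share:.0%} of the output pixels)")
```

Quality mode at 4K renders at 2560x1440, which is only about 44 percent of the output pixels. Frame rates don't scale linearly with pixel count, though. The upscaling pass has its own cost, and some work (geometry, CPU-side logic) doesn't shrink with resolution, which helps explain why real-world gains land in the 20 to 80 percent range rather than 2x or more.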

We'll have to see if AMD's FidelityFX Super Resolution can match DLSS in the future, and how many developers make use of it. Considering AMD's RDNA2 GPUs are also in the PlayStation 5 and Xbox Series S/X, we wouldn't count AMD out, but for now, Nvidia has the technology lead. Which brings us to native ray tracing performance.

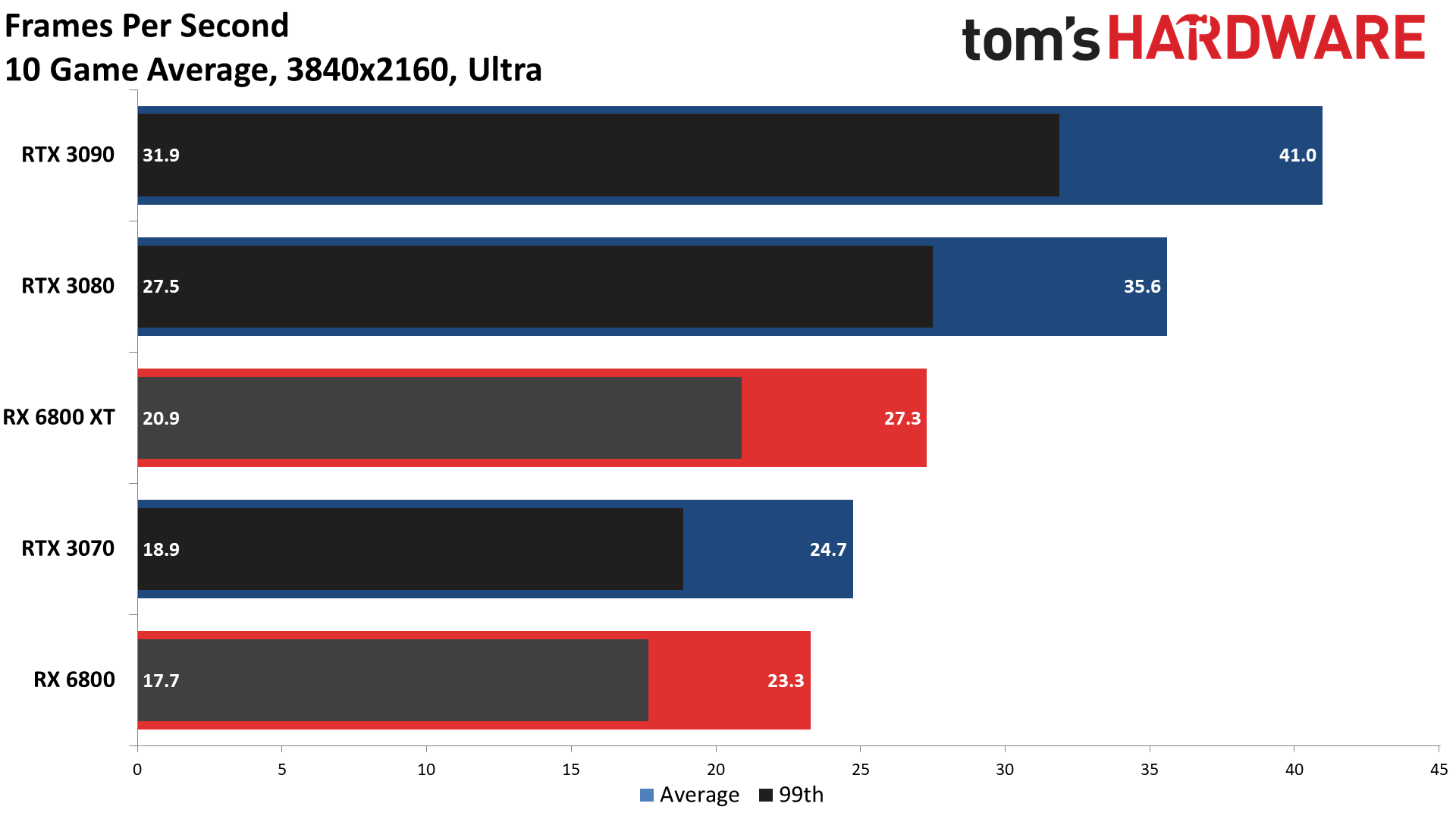

10-Game DXR Average

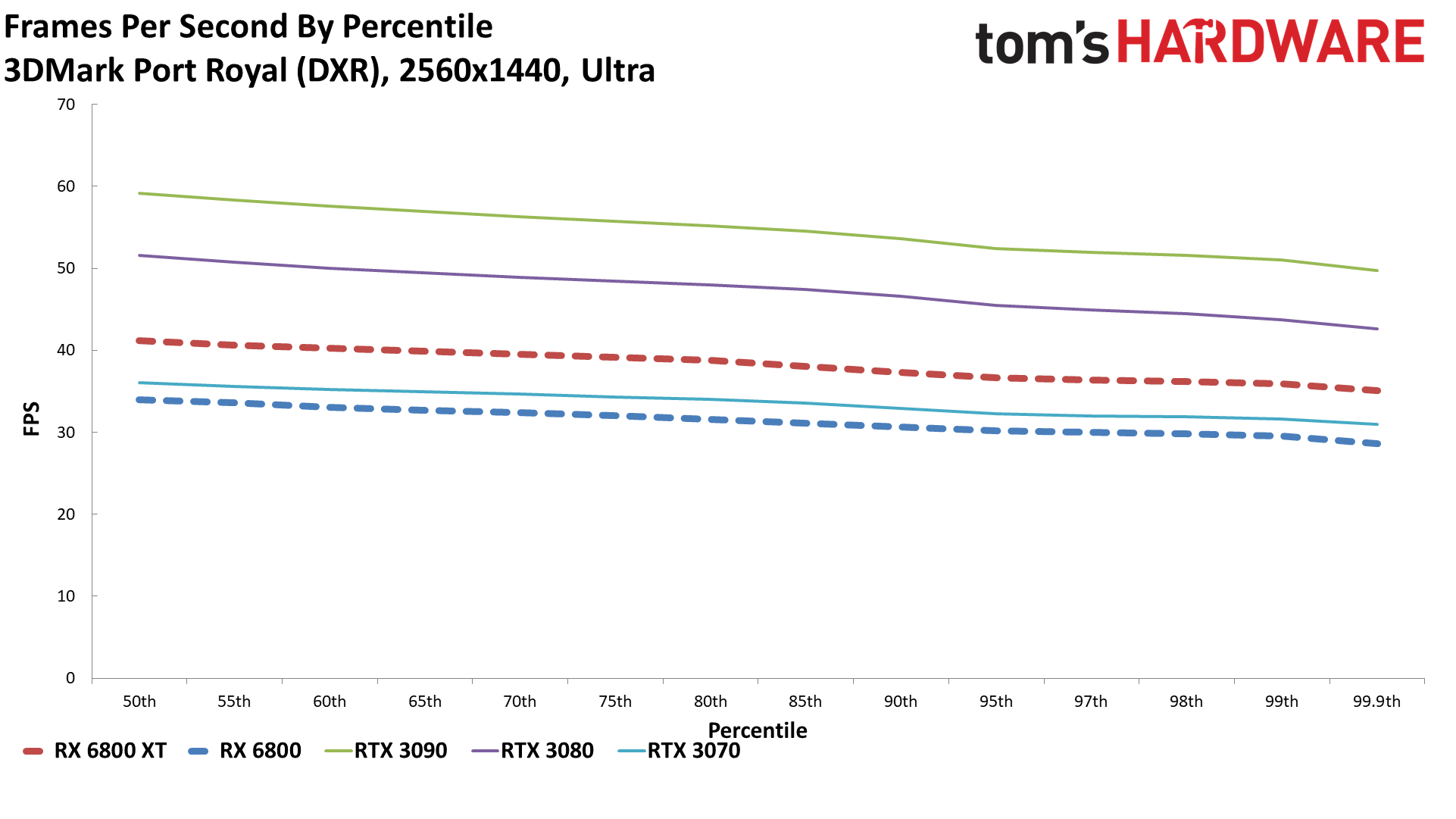

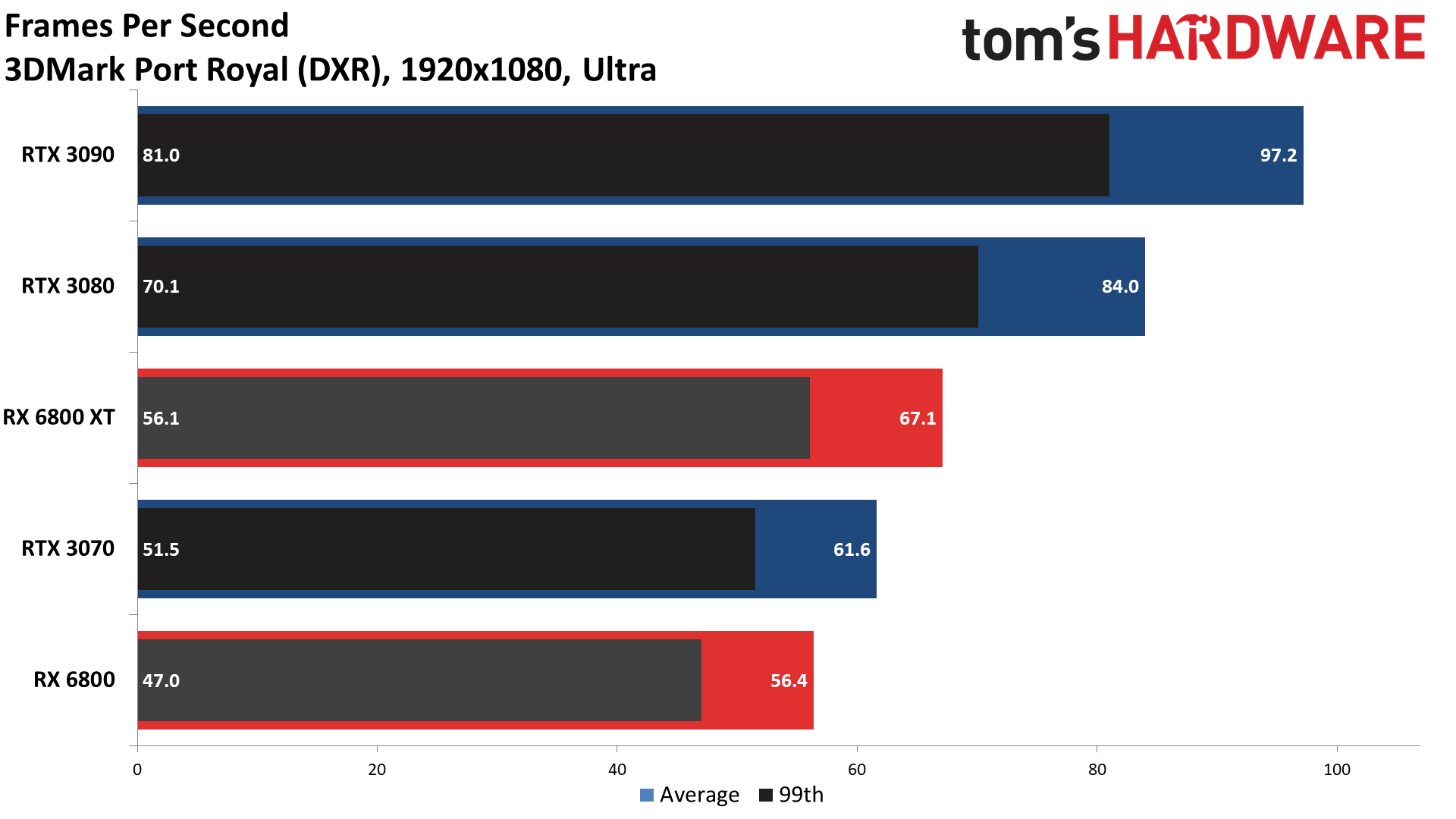

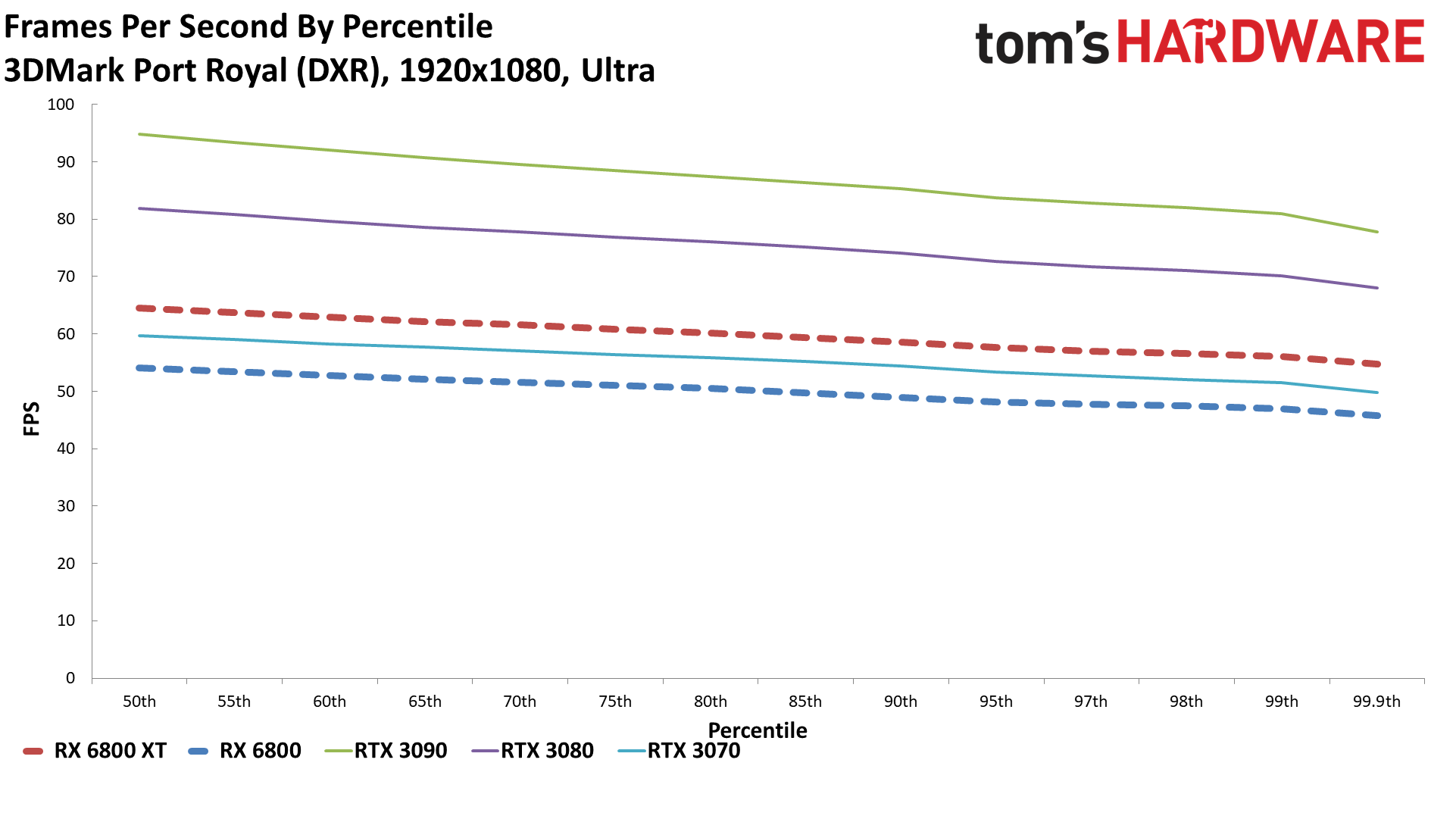

3DMark Port Royal

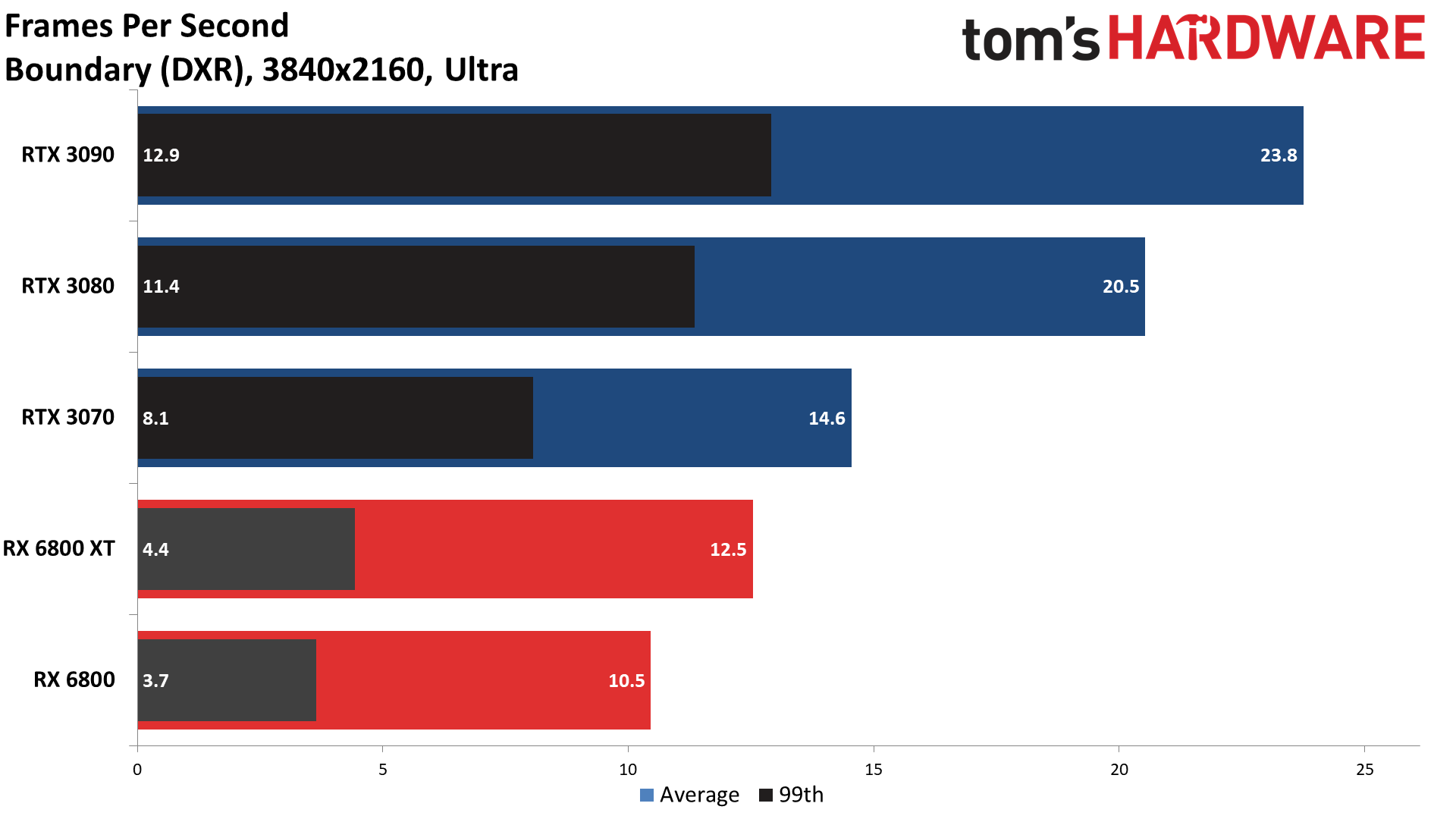

Boundary Benchmark

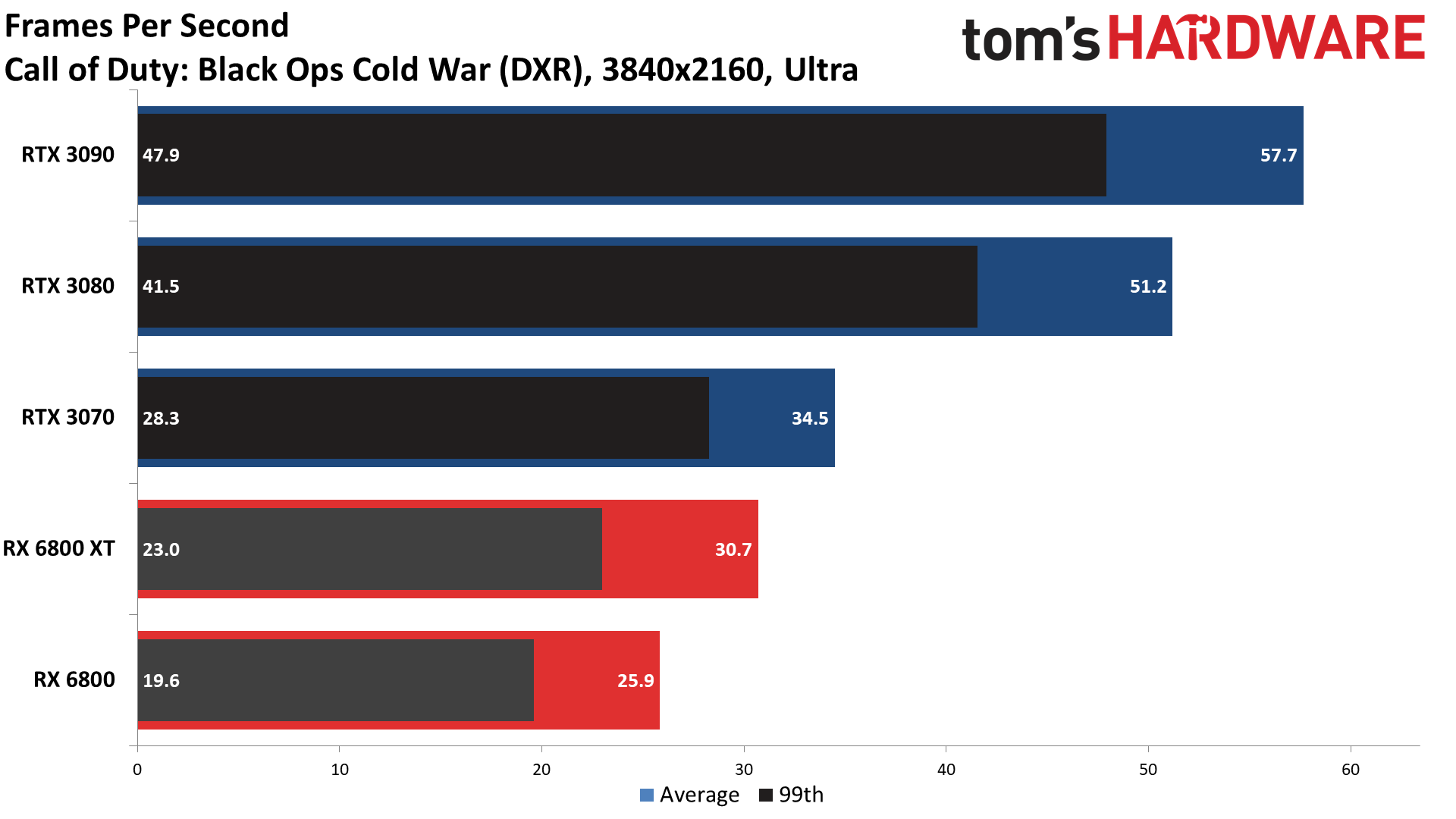

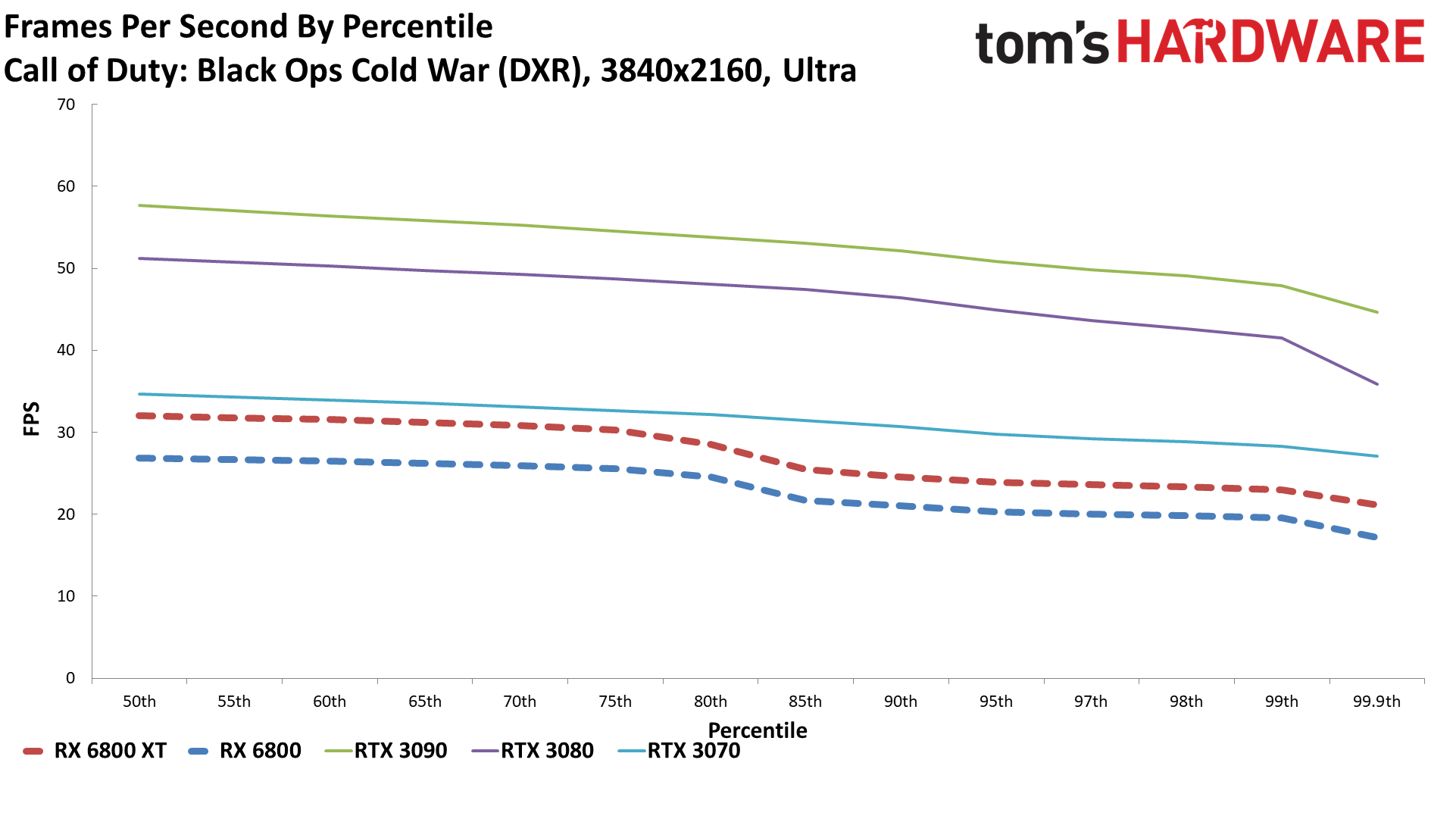

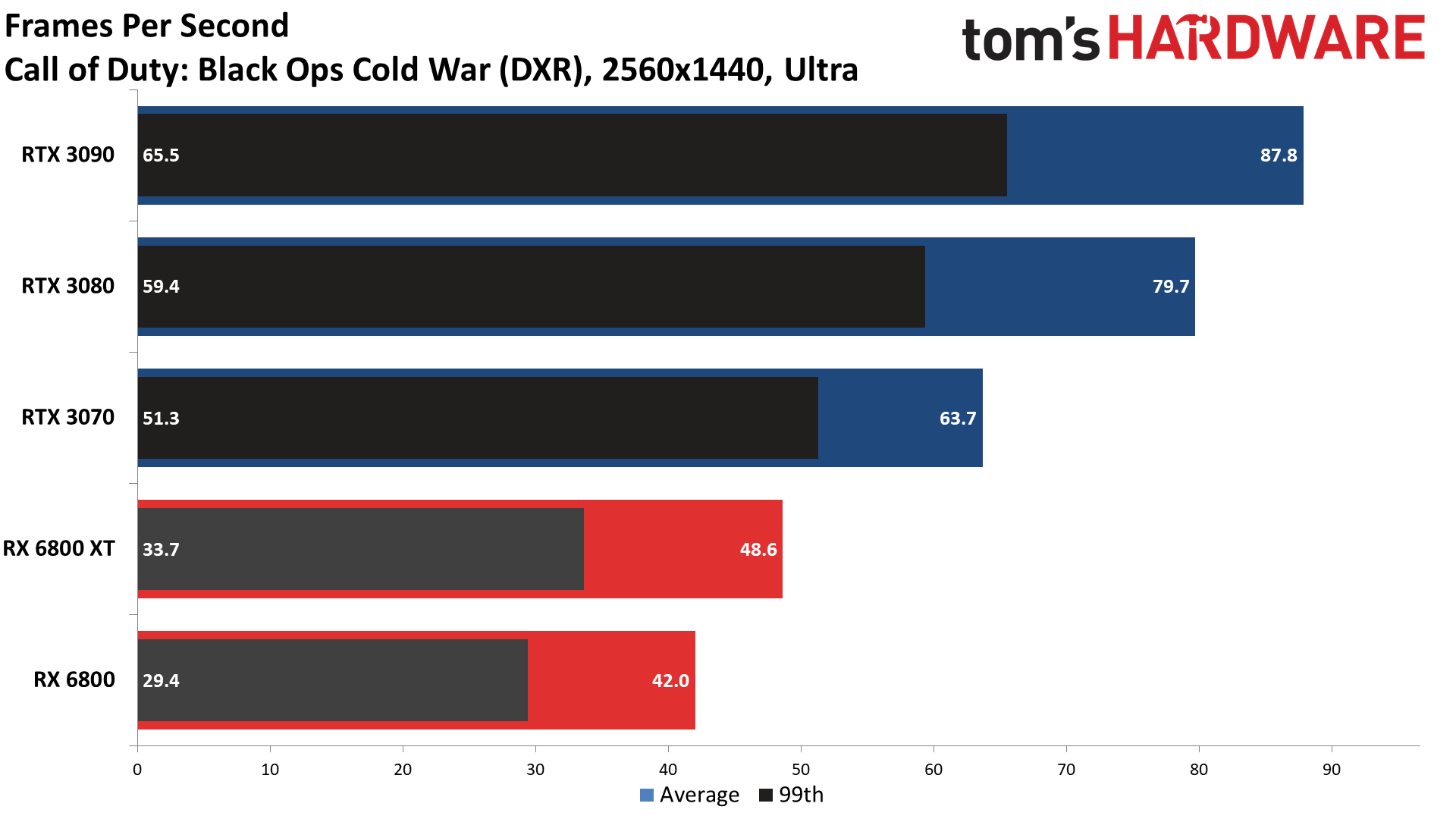

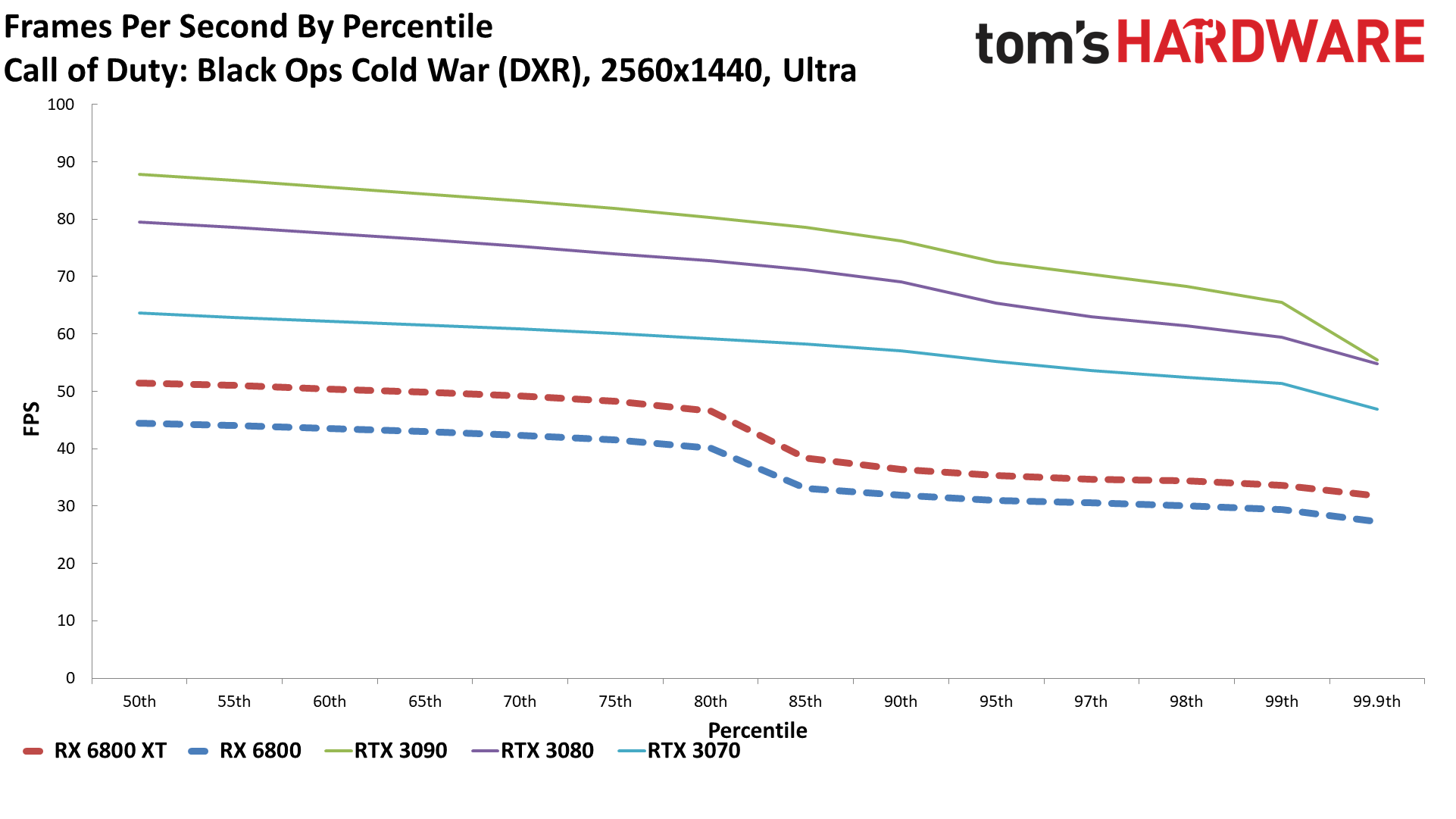

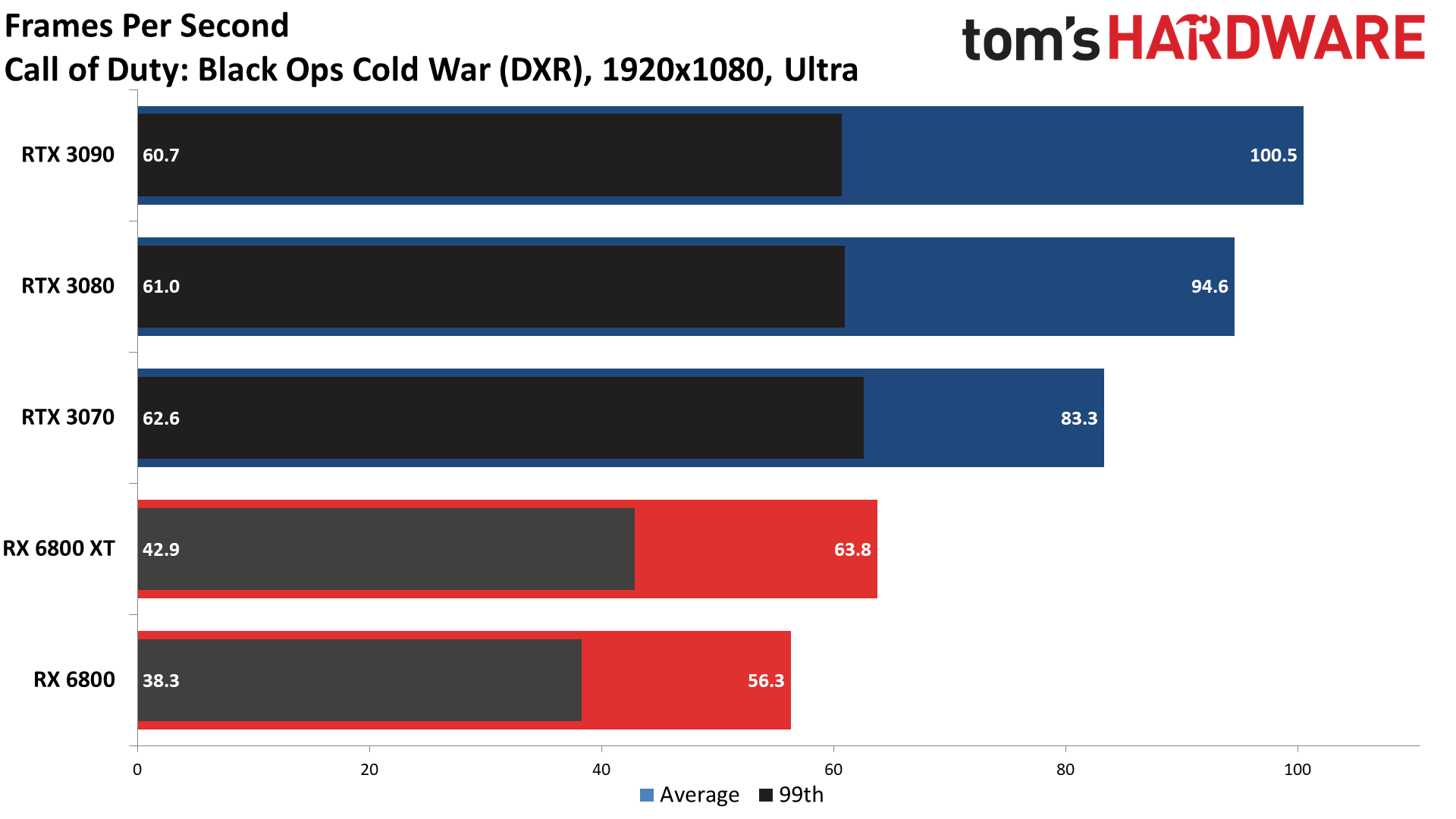

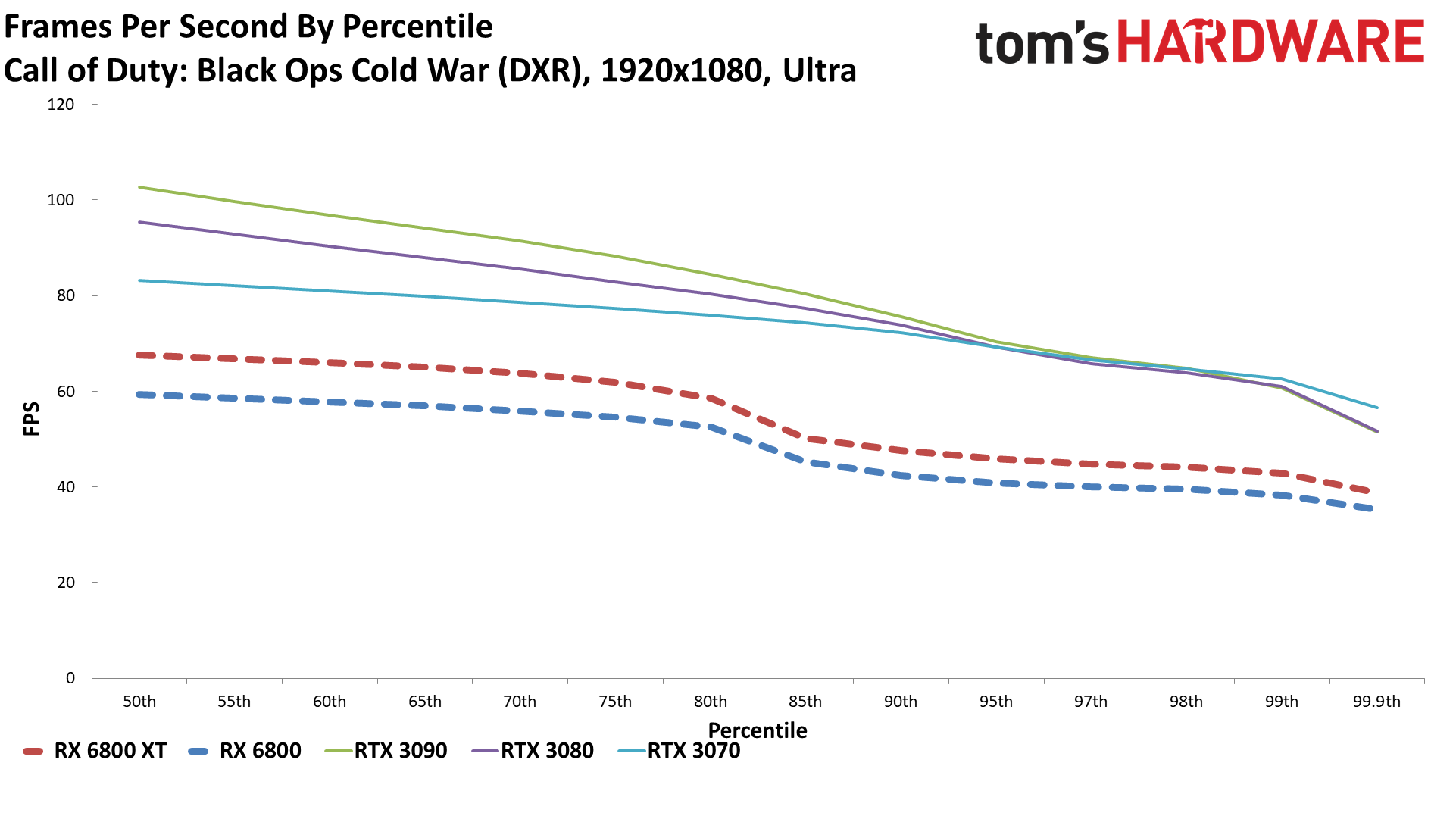

Call of Duty Black Ops Cold War

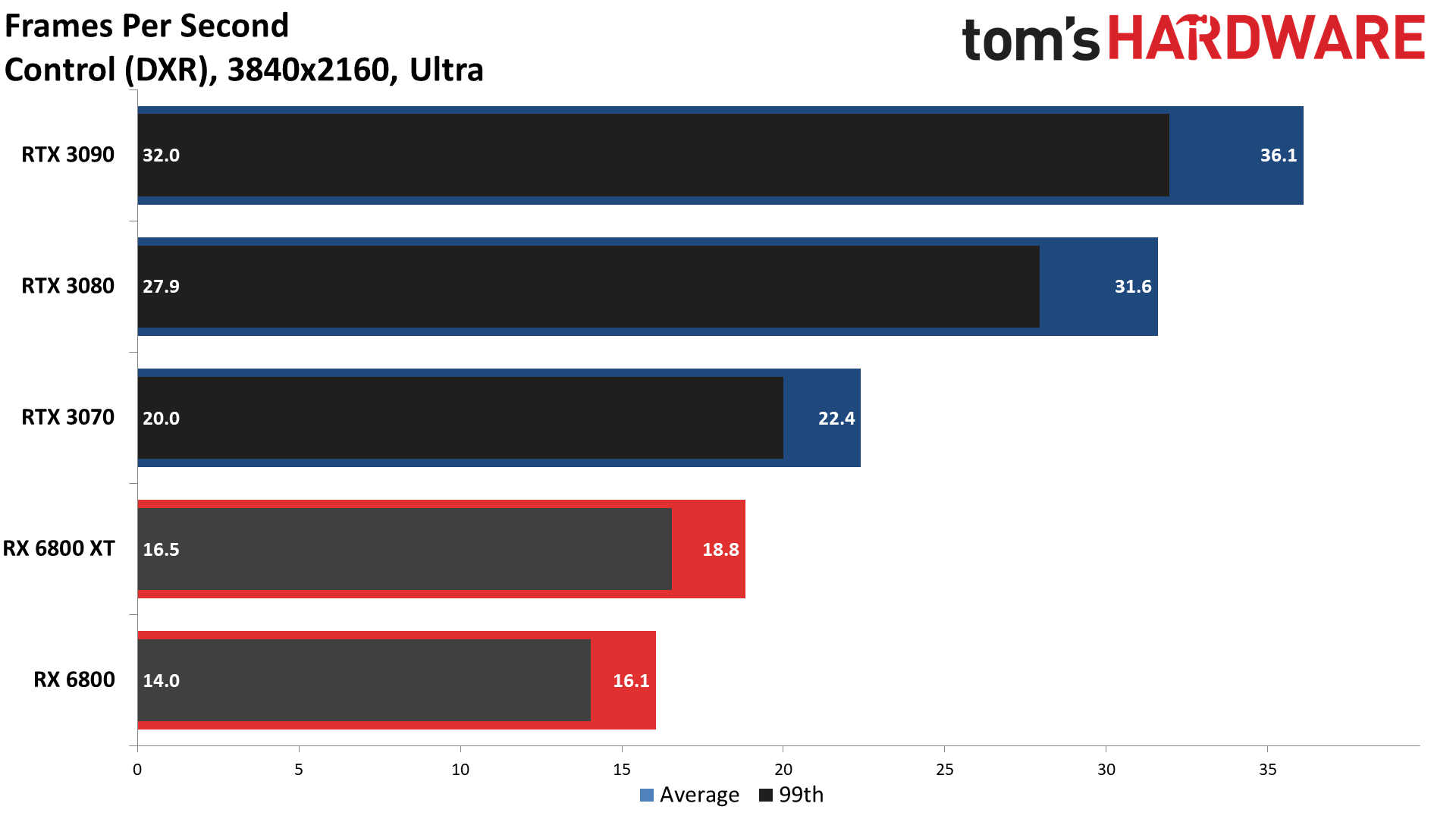

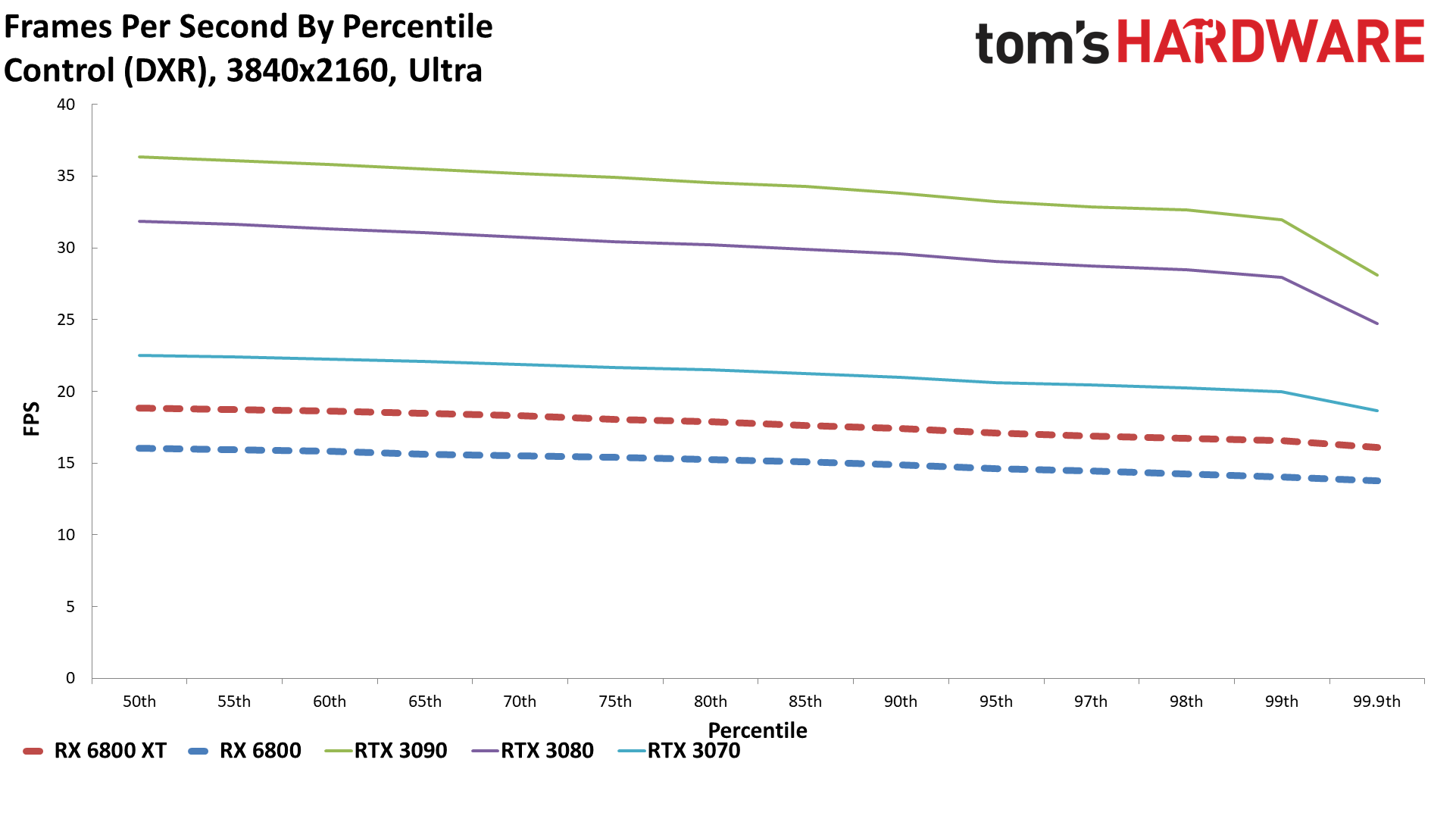

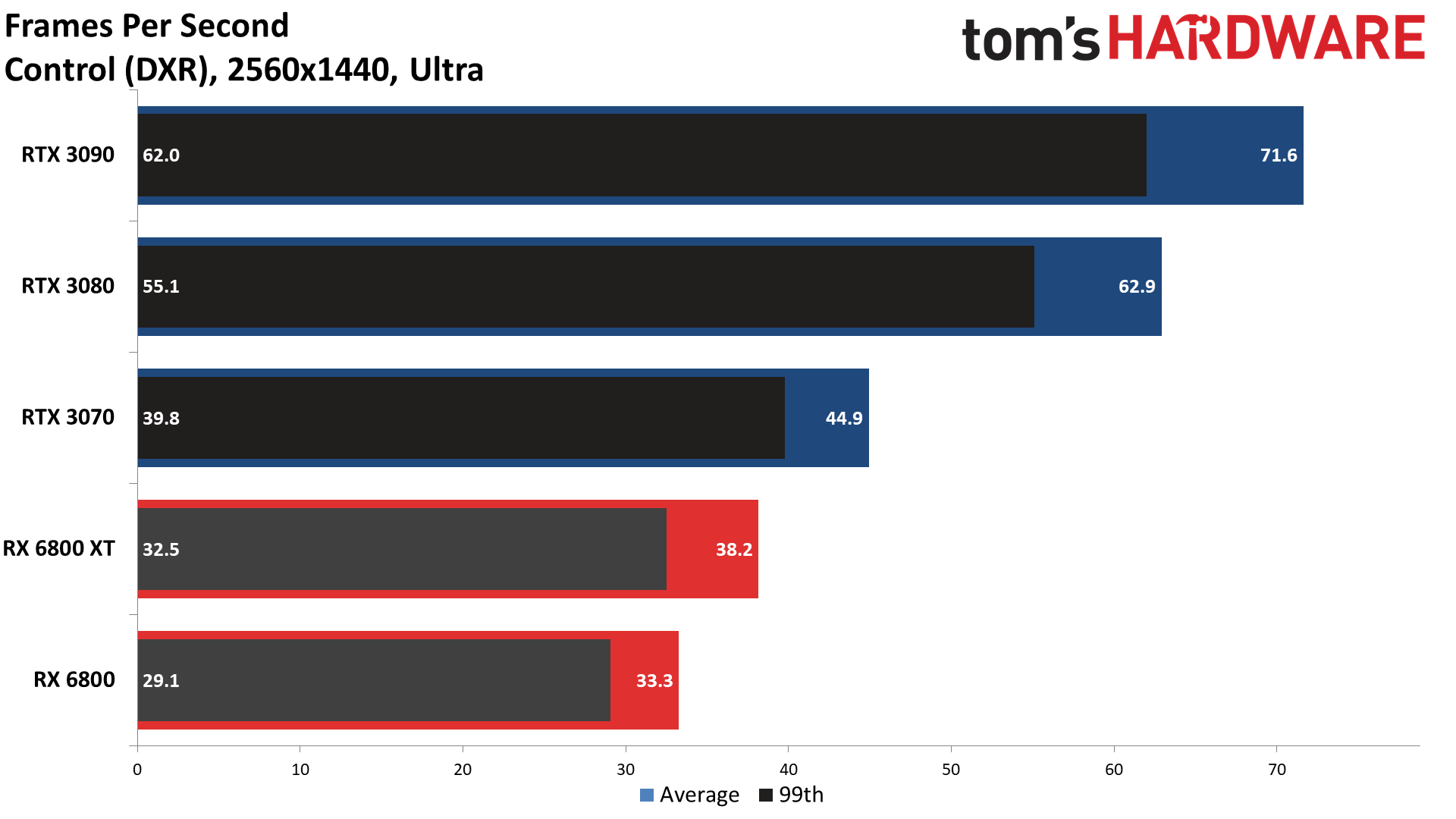

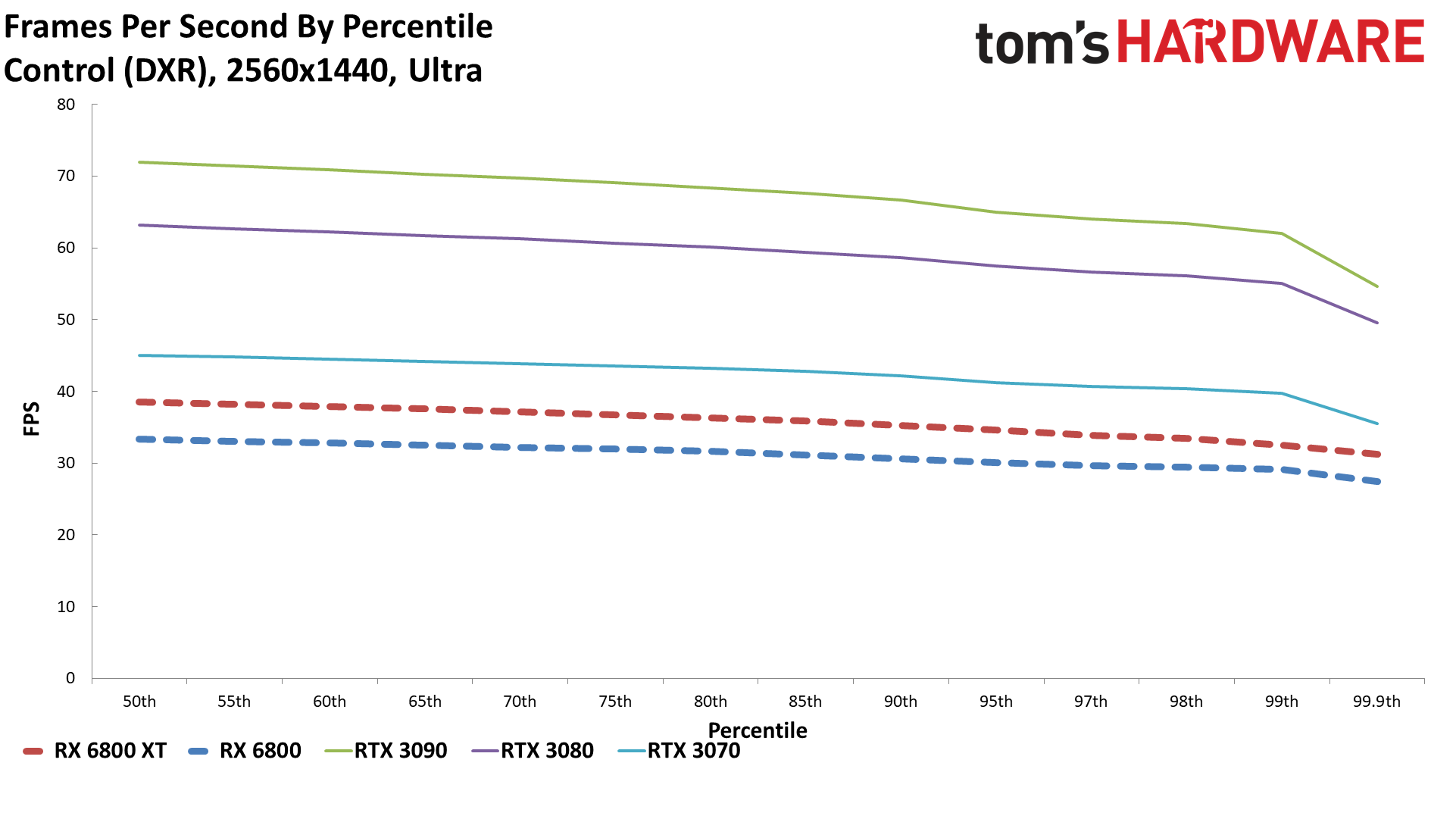

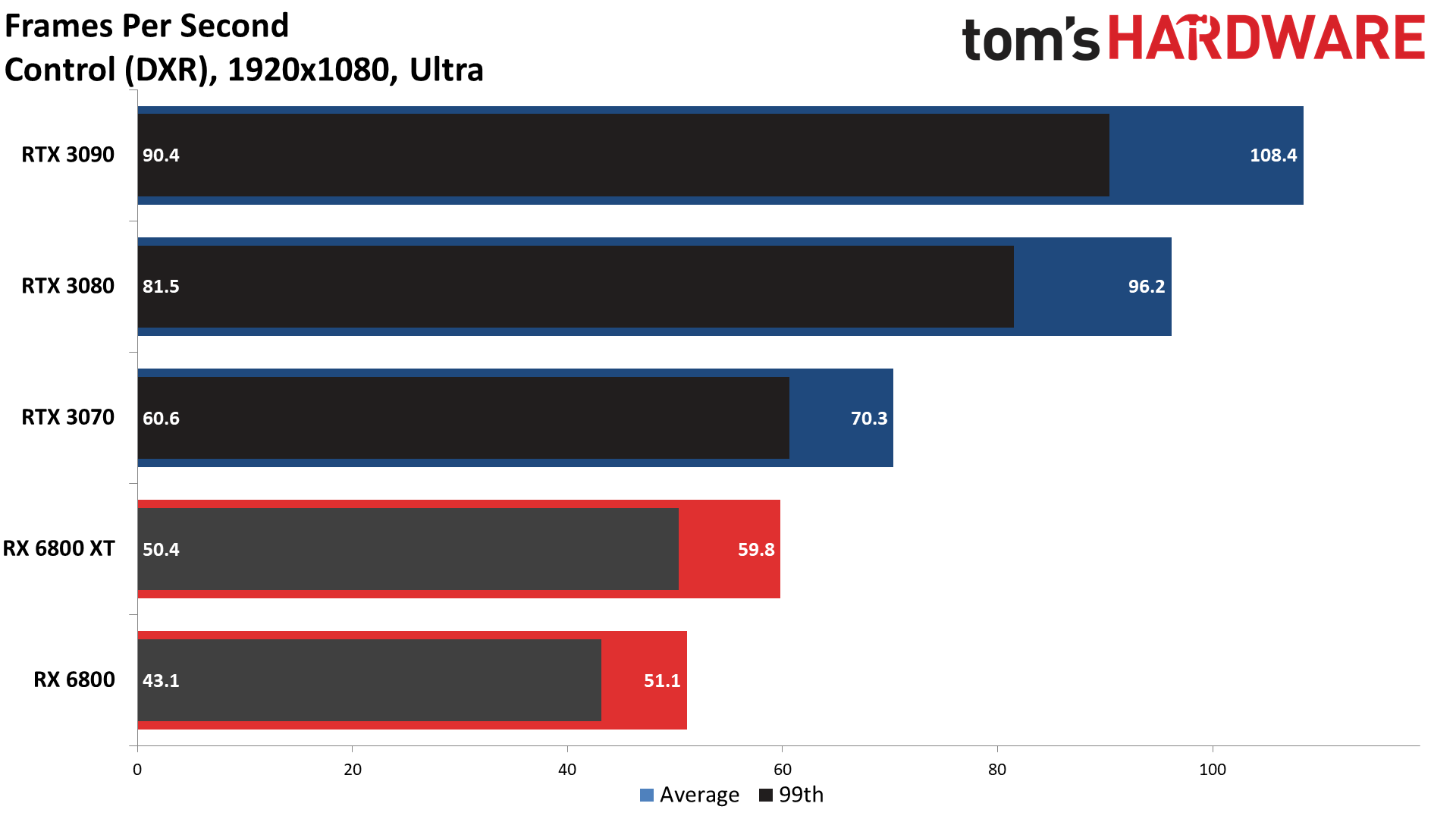

Control

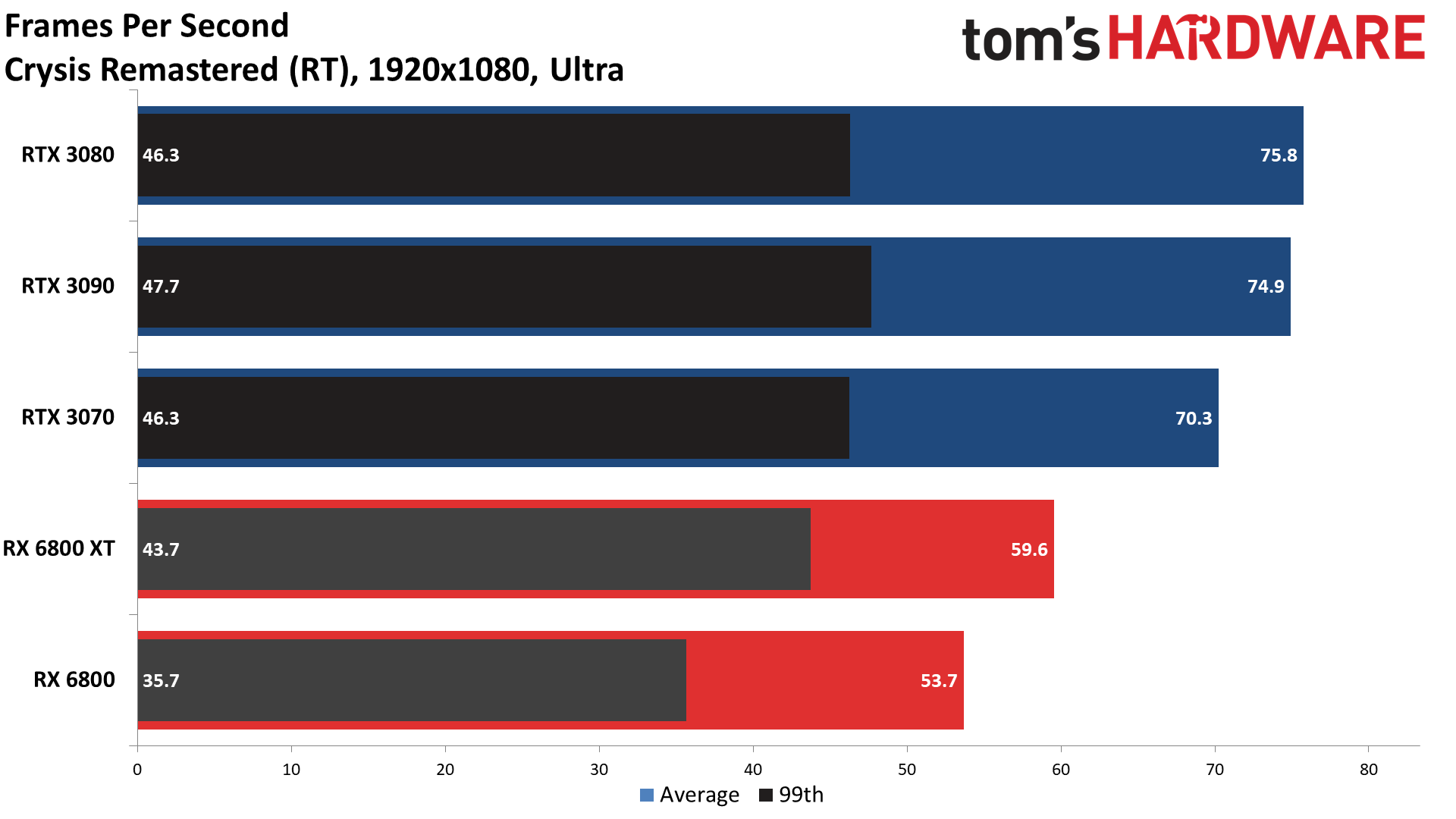

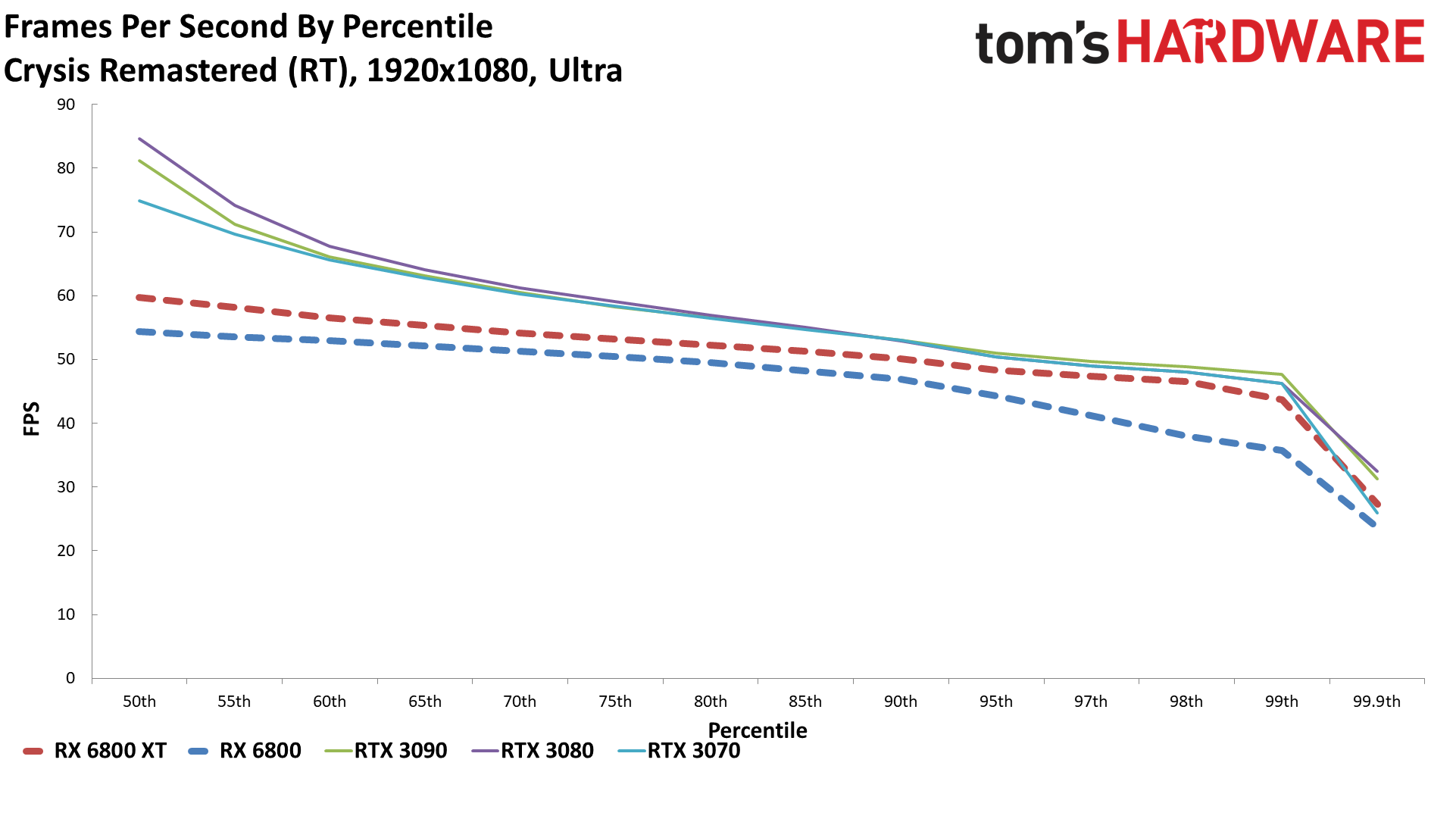

Crysis Remastered

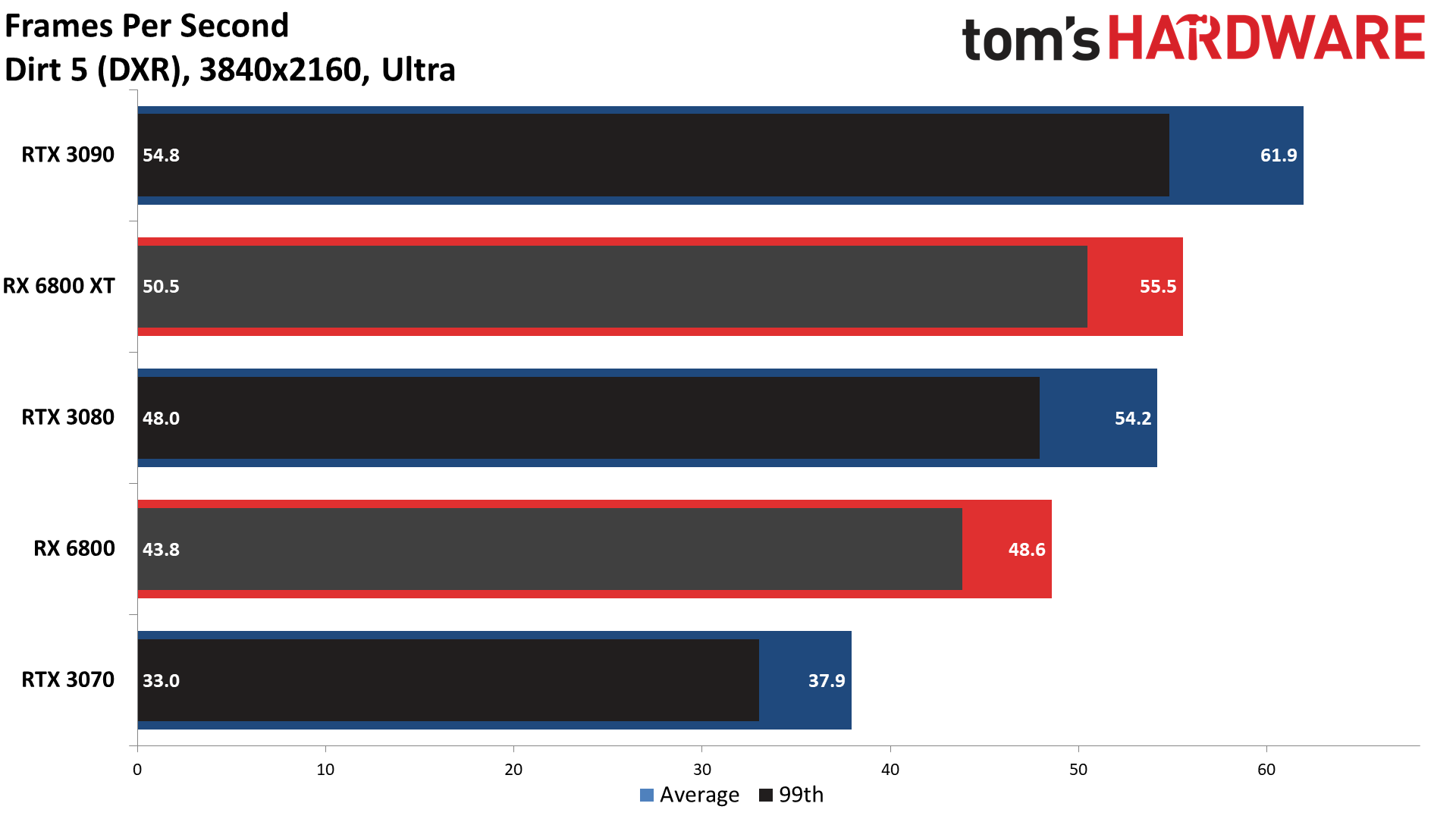

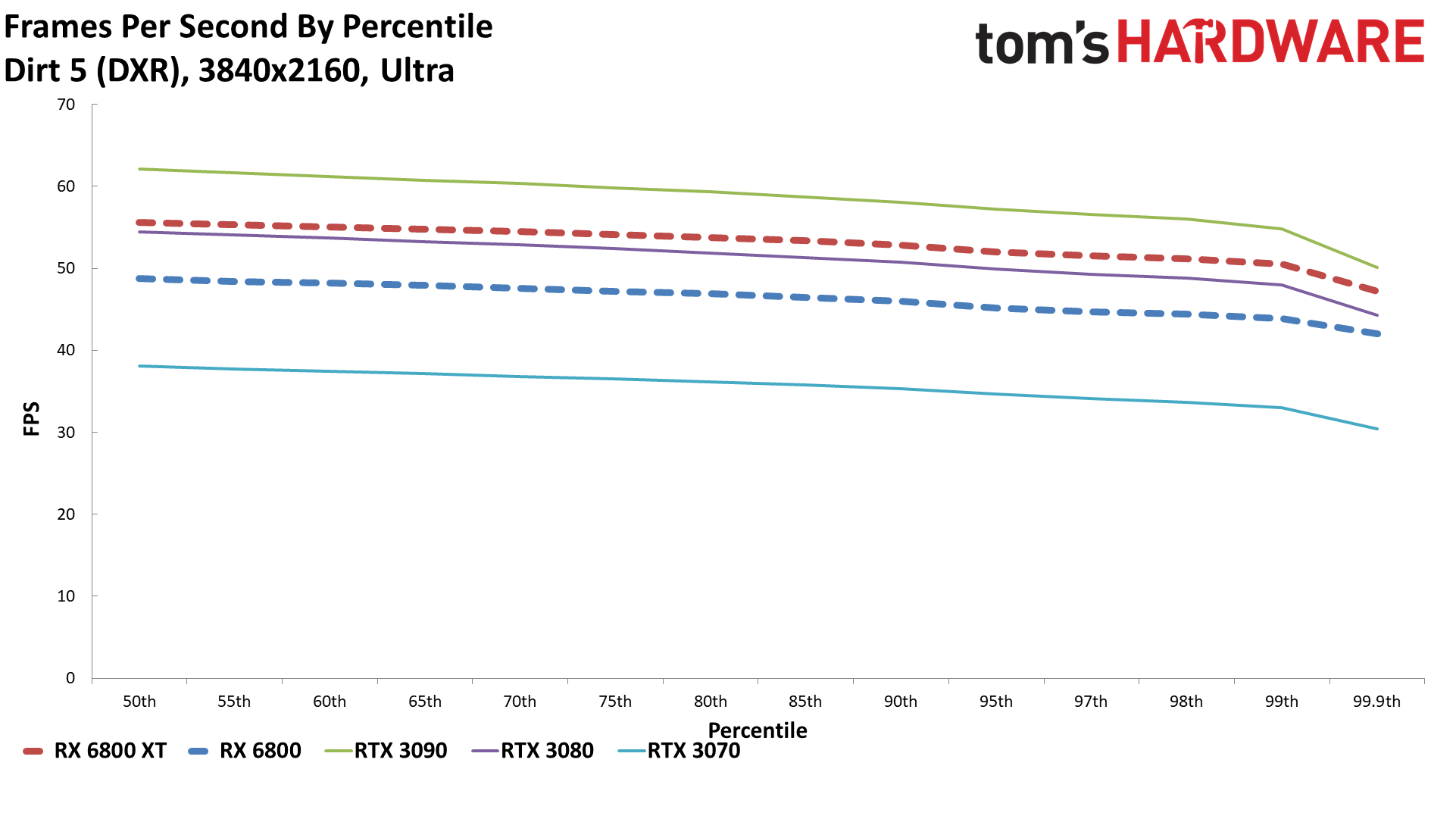

Dirt 5

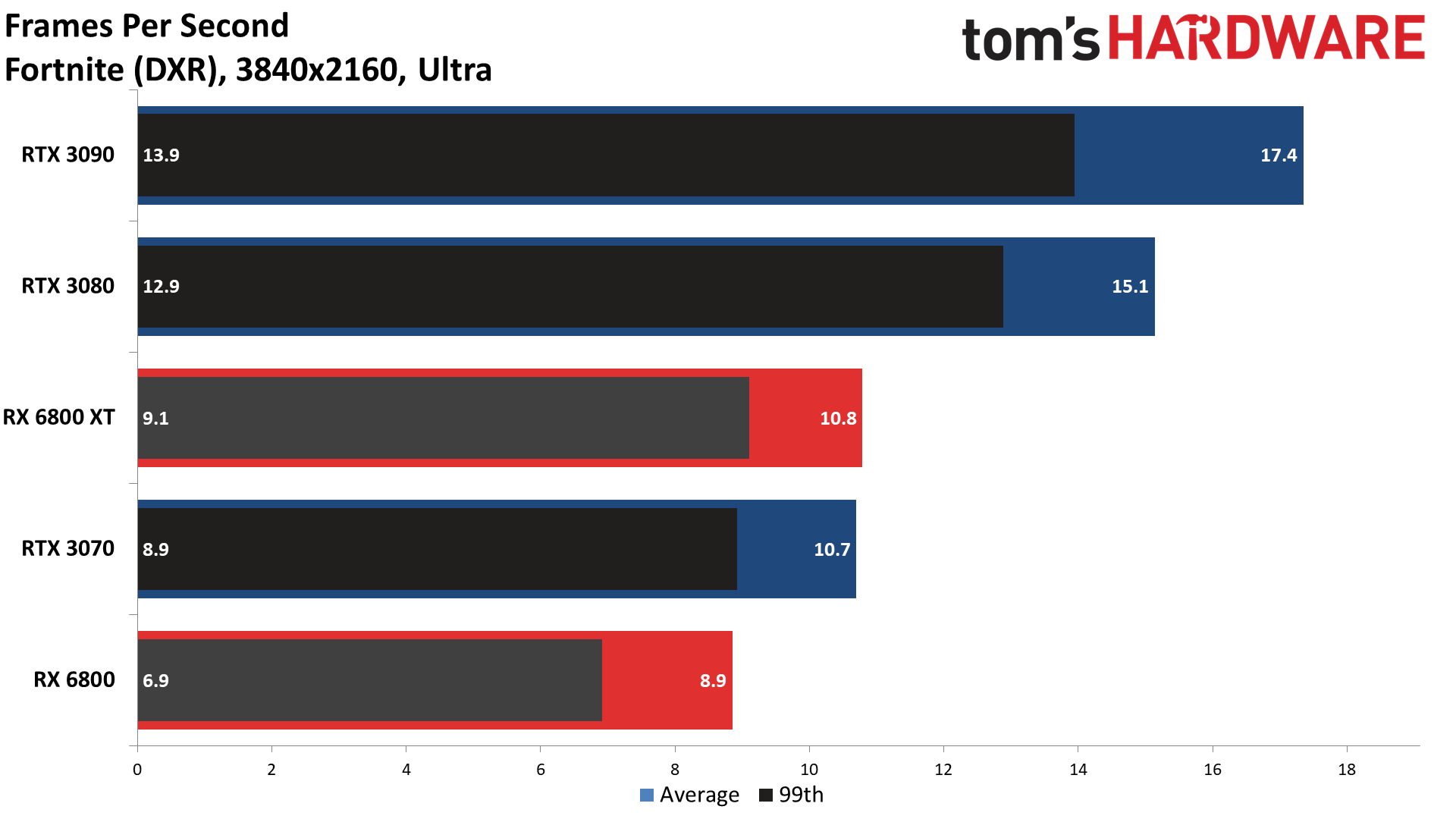

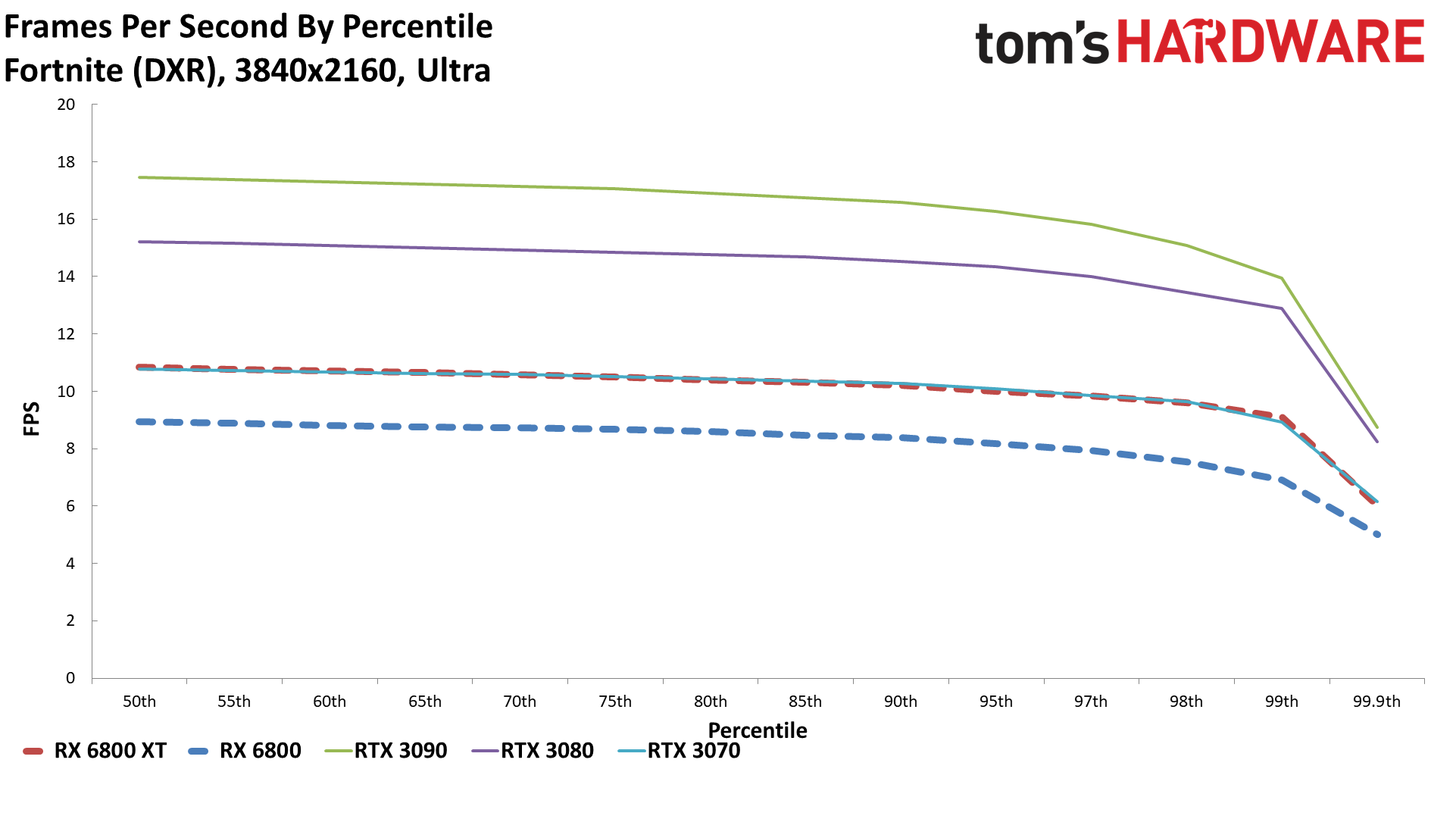

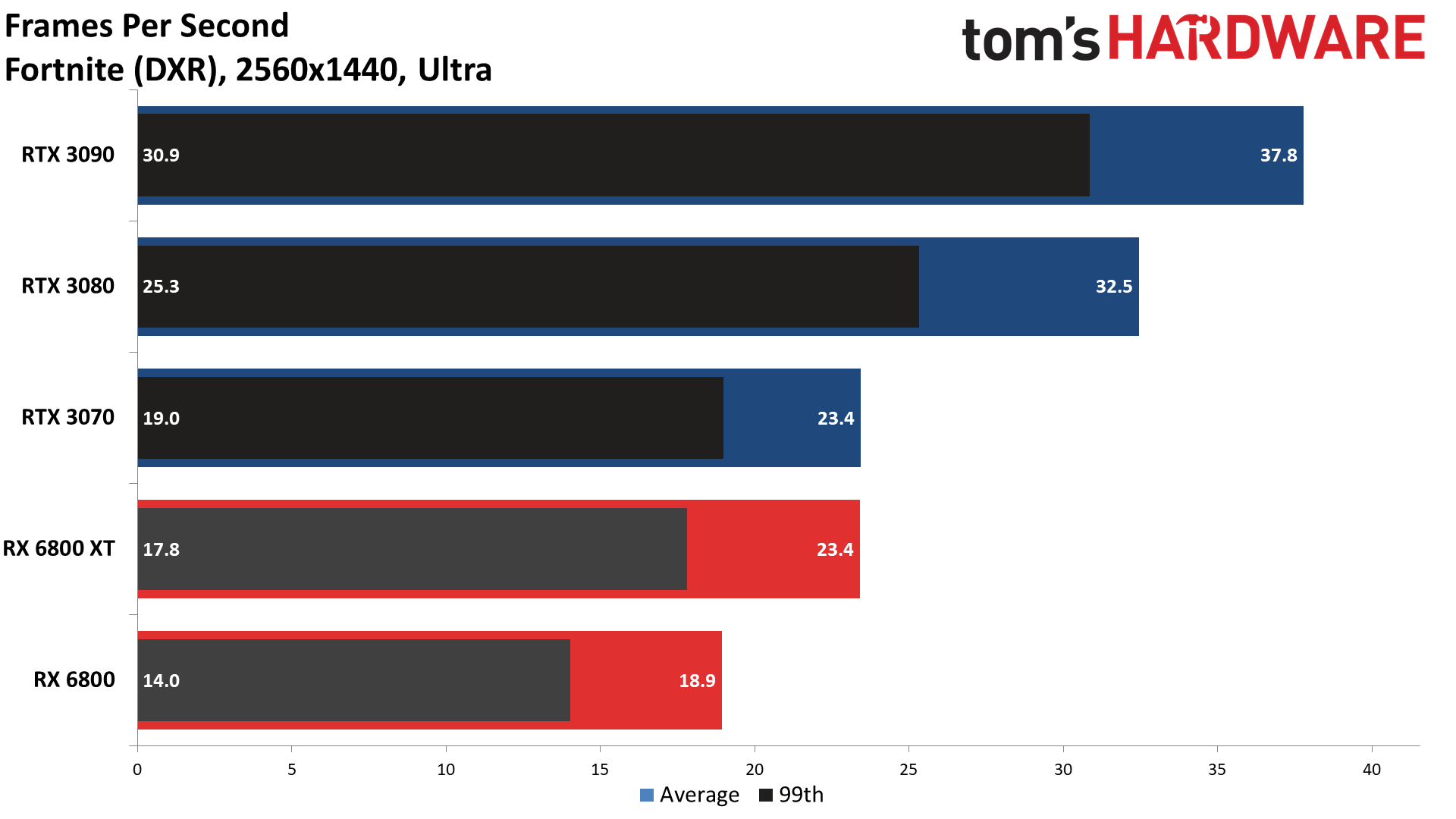

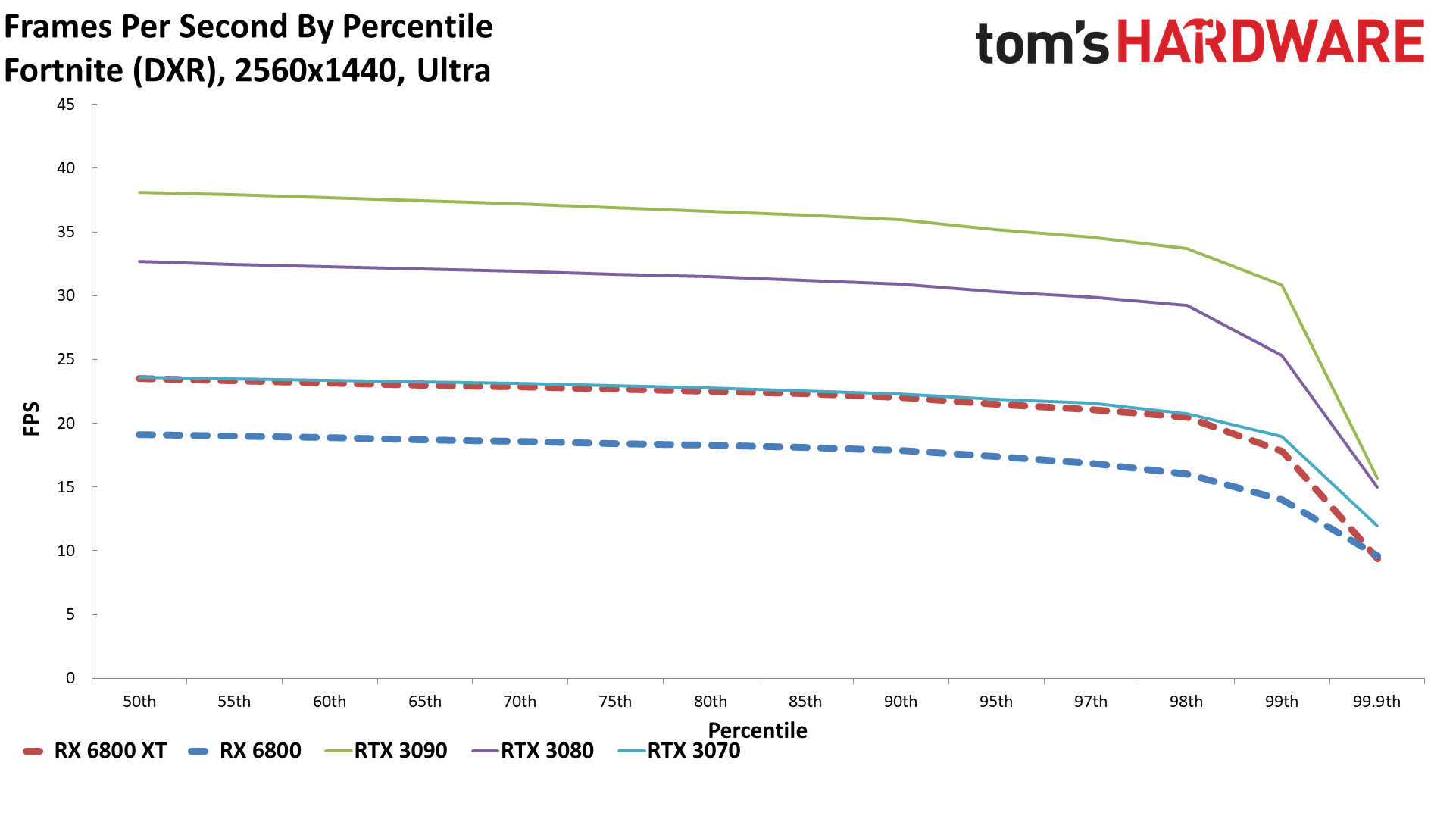

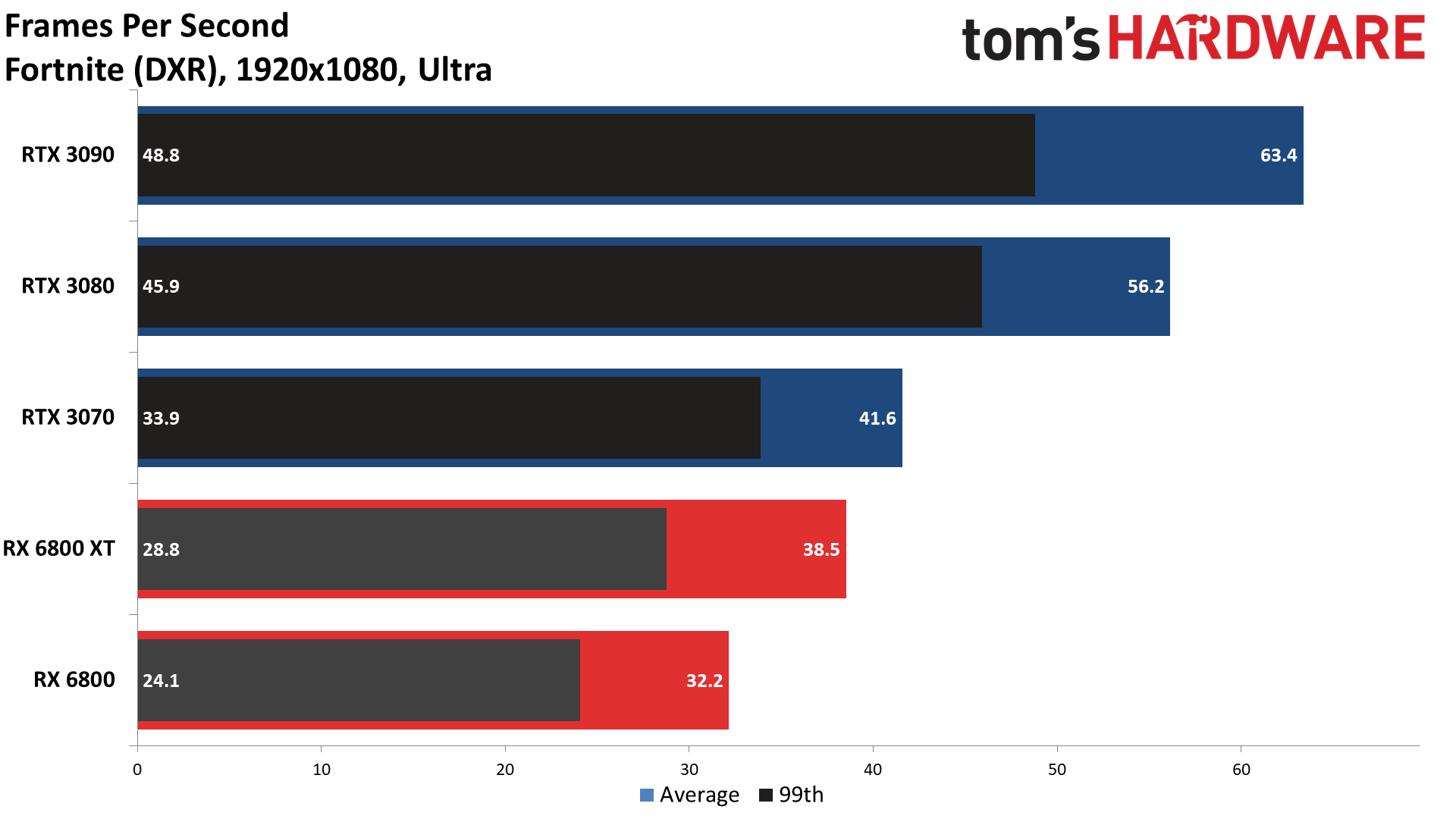

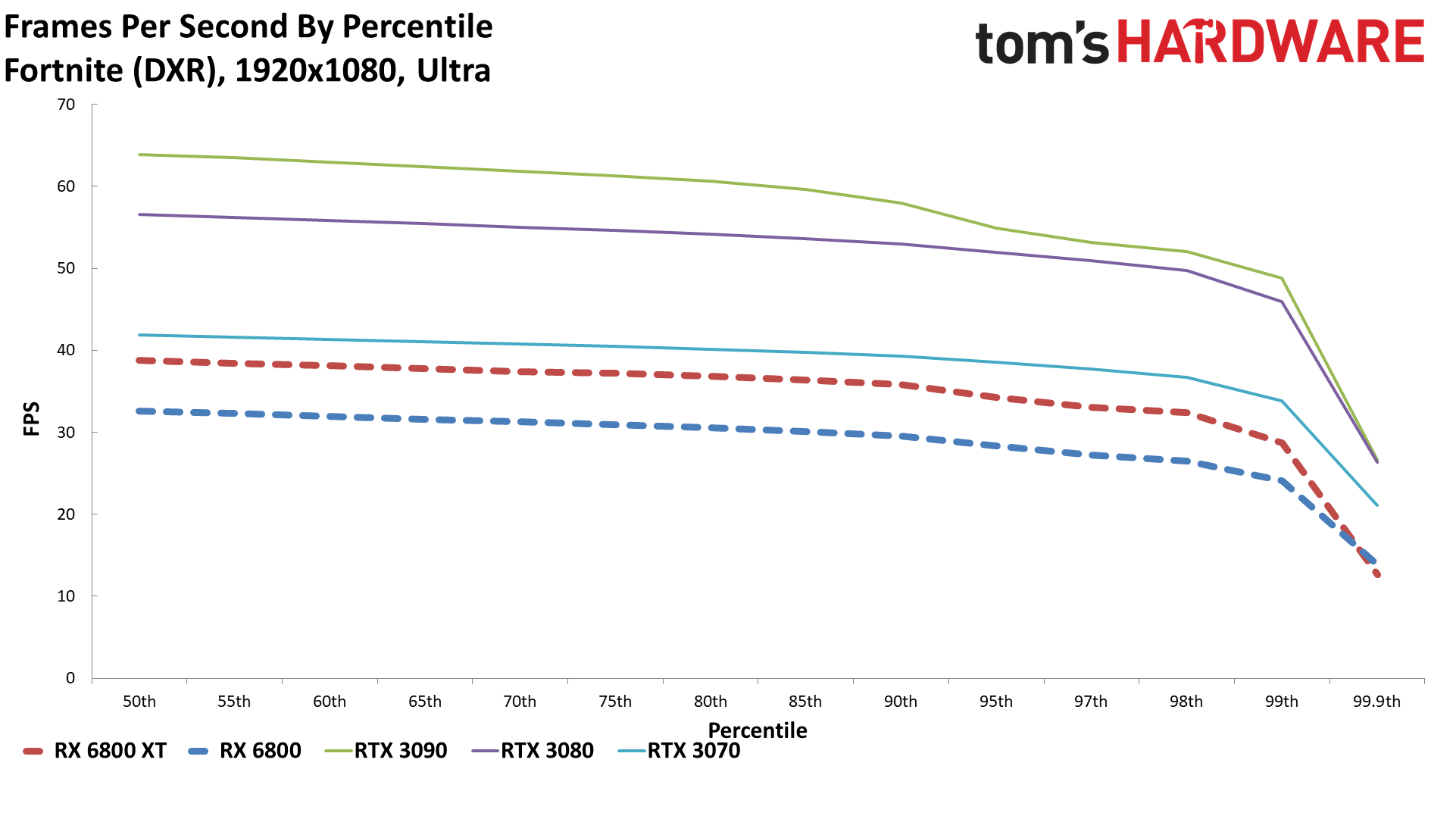

Fortnite

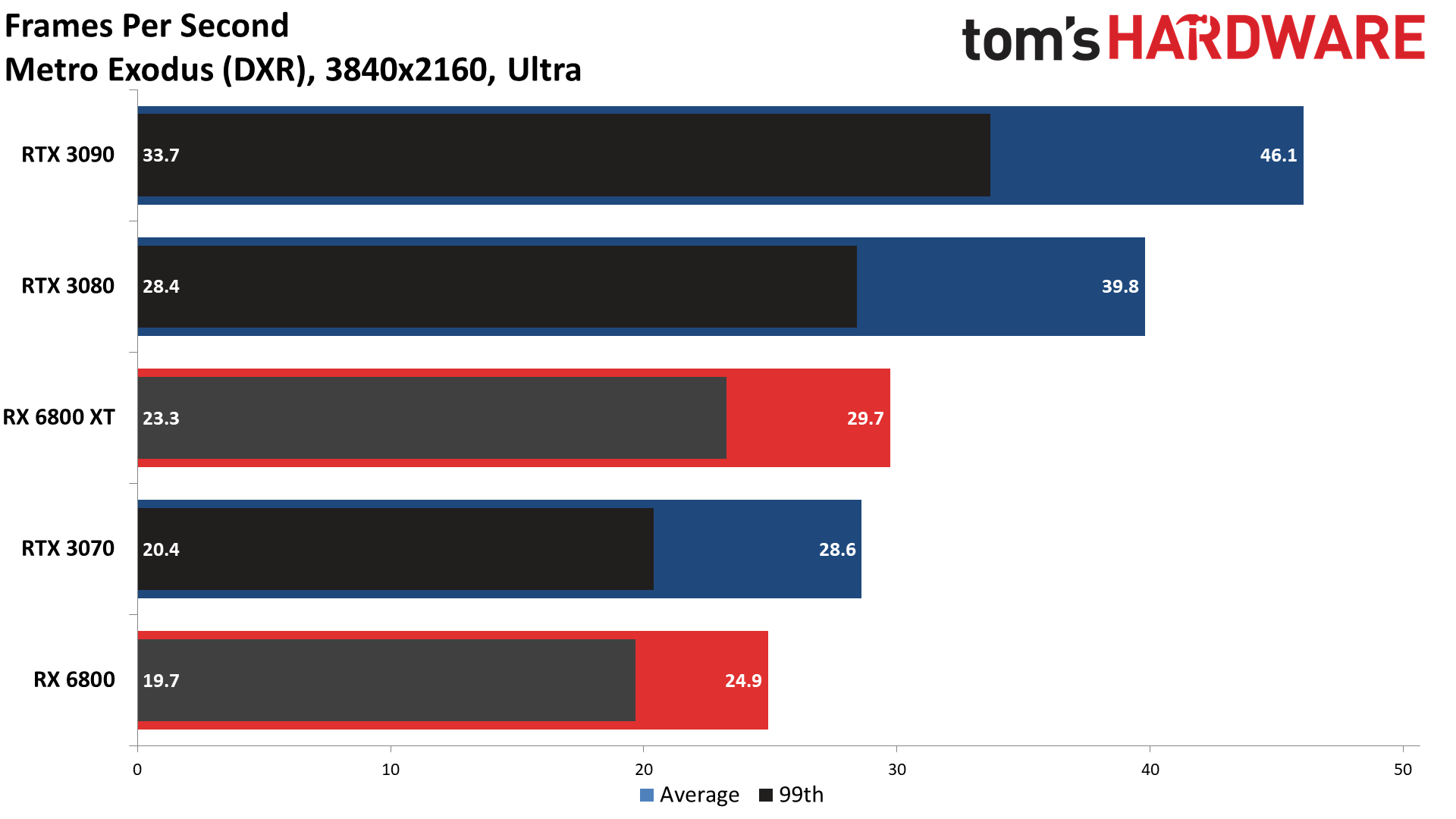

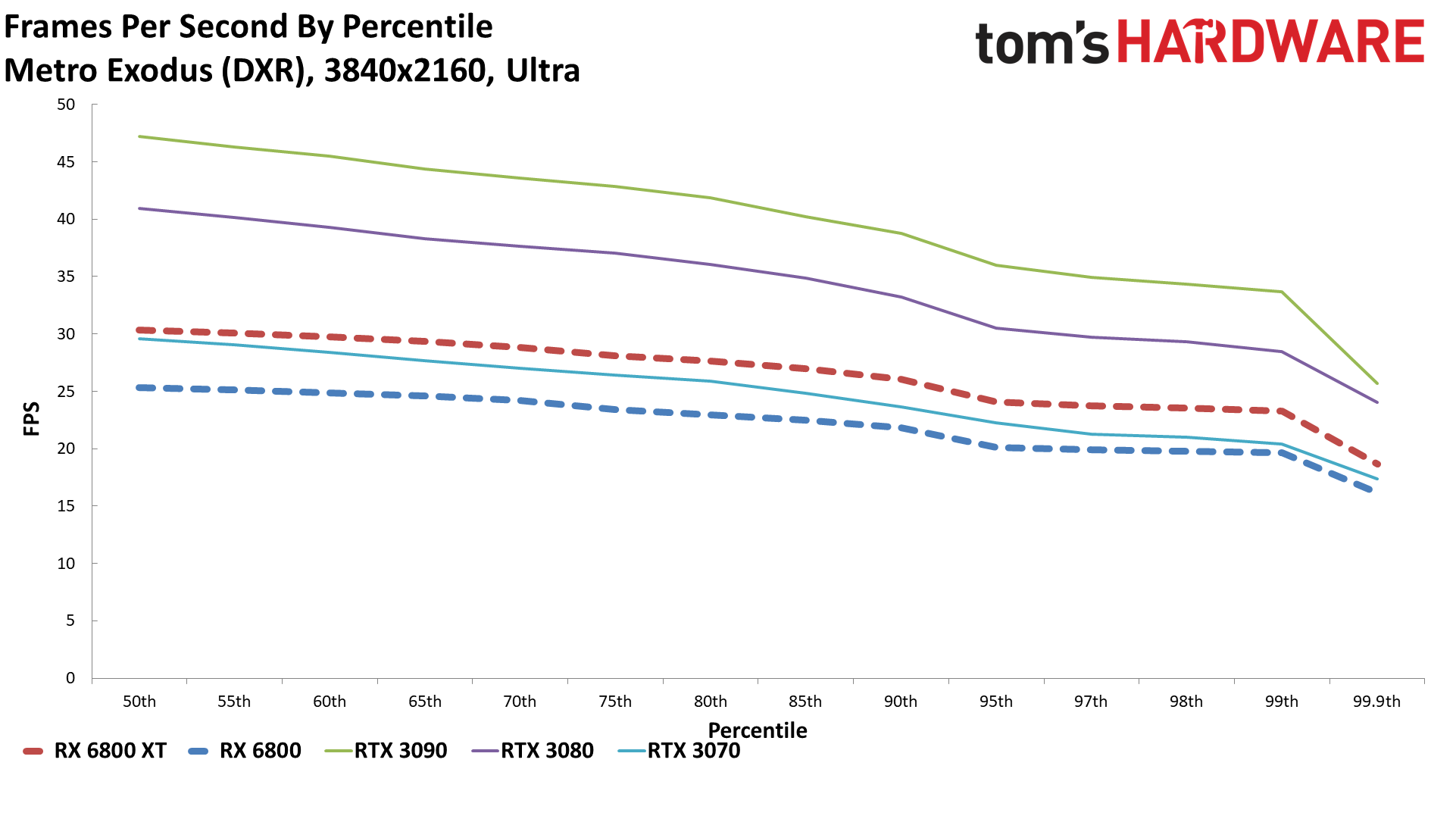

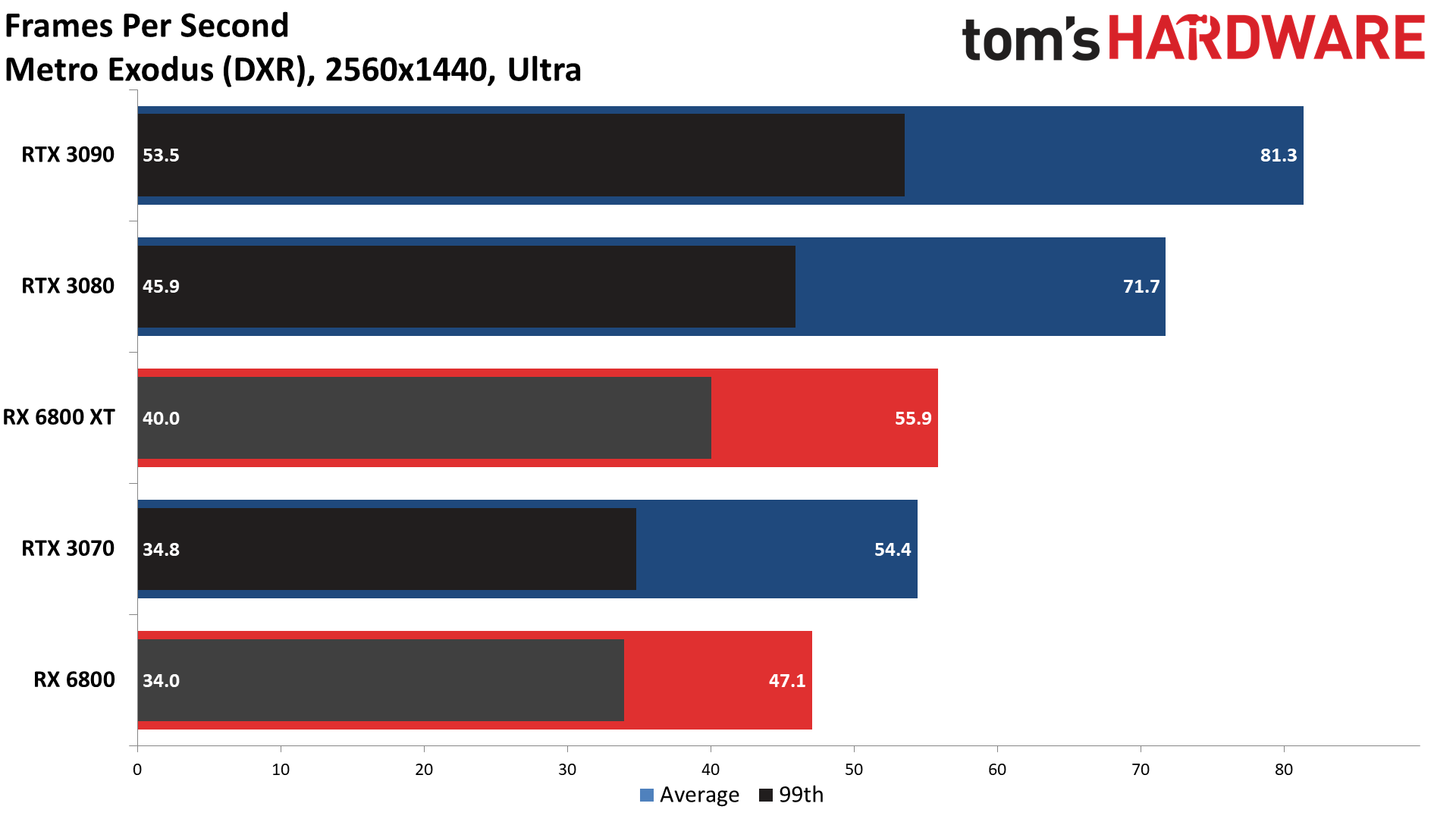

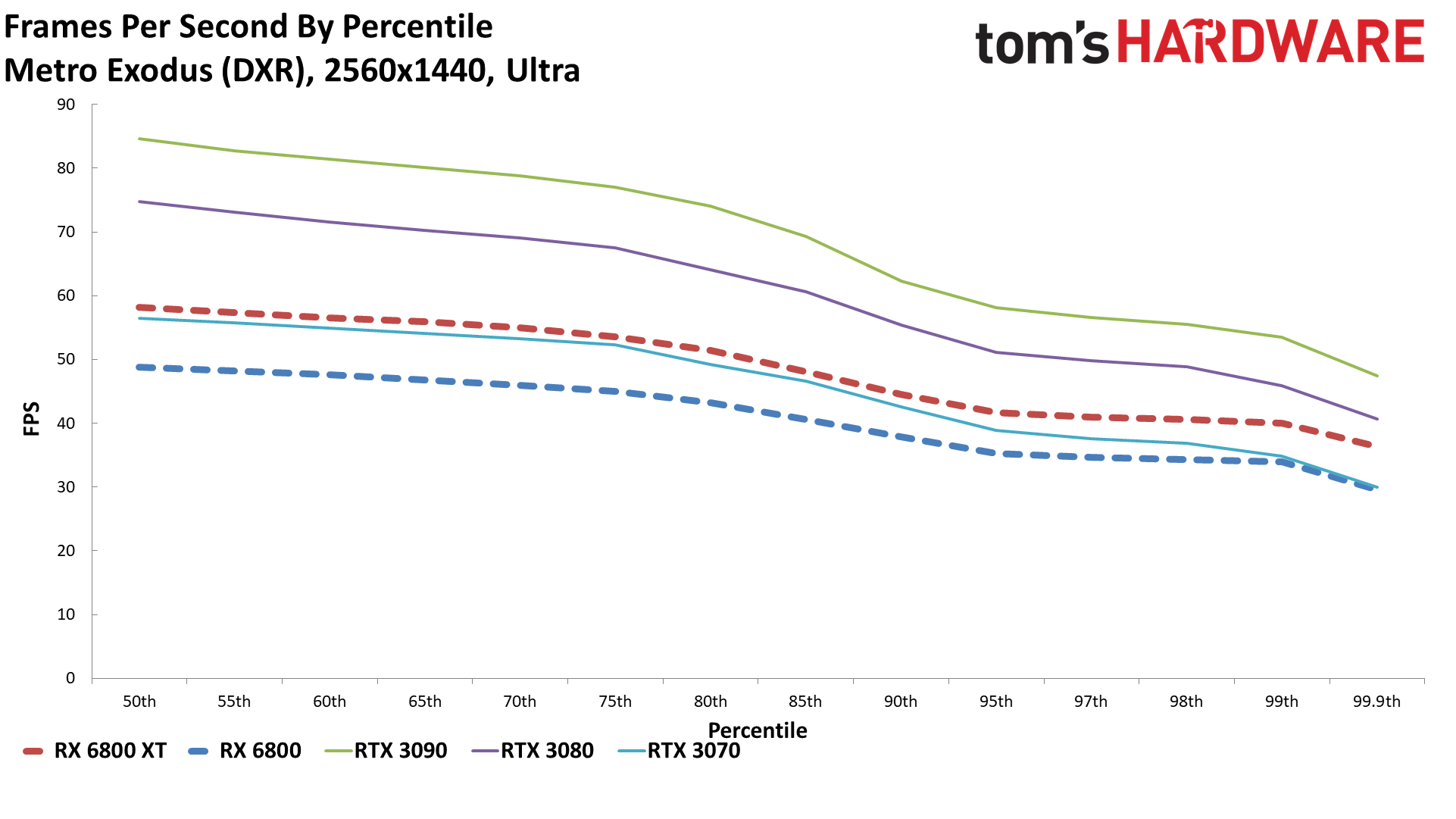

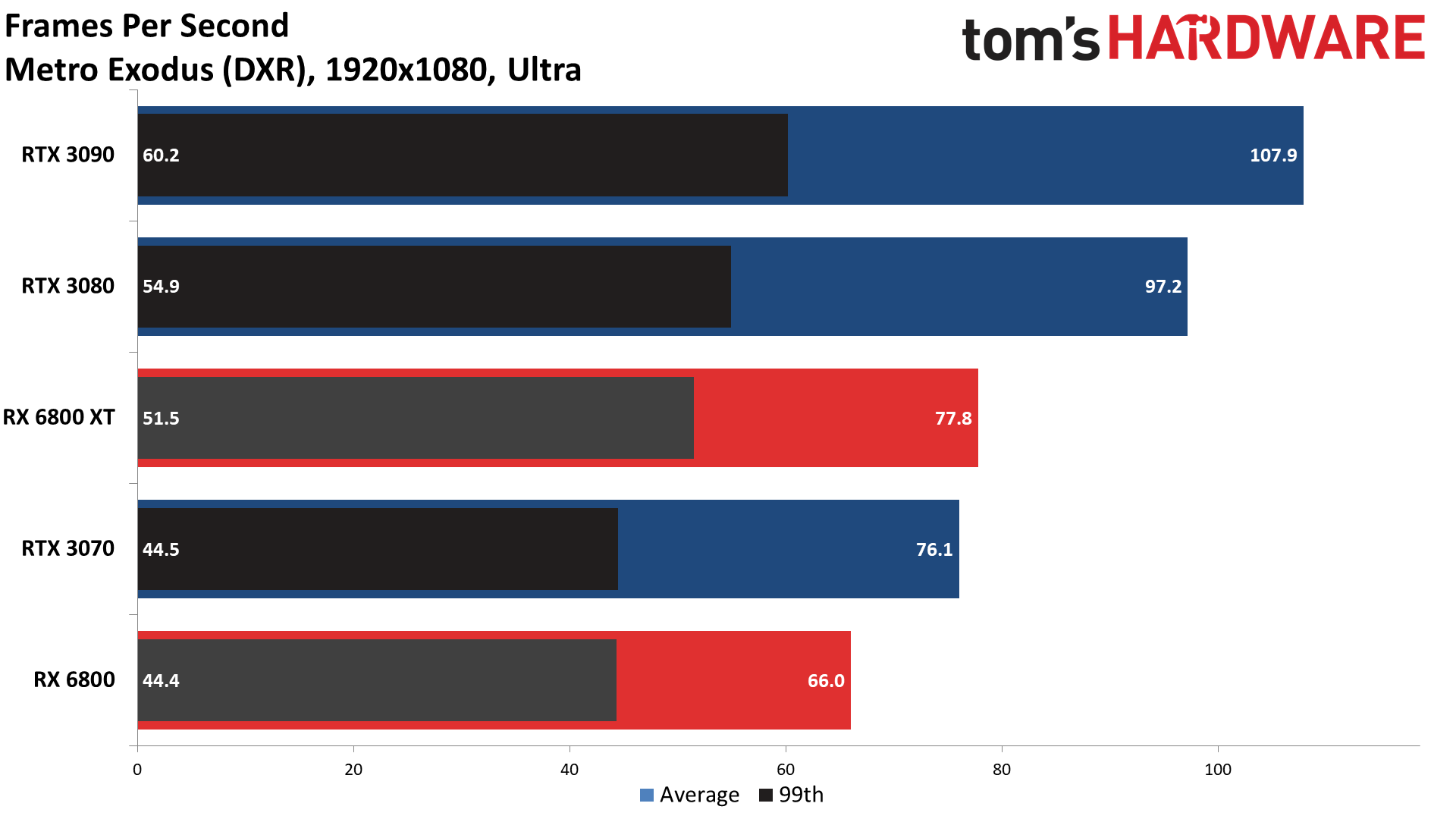

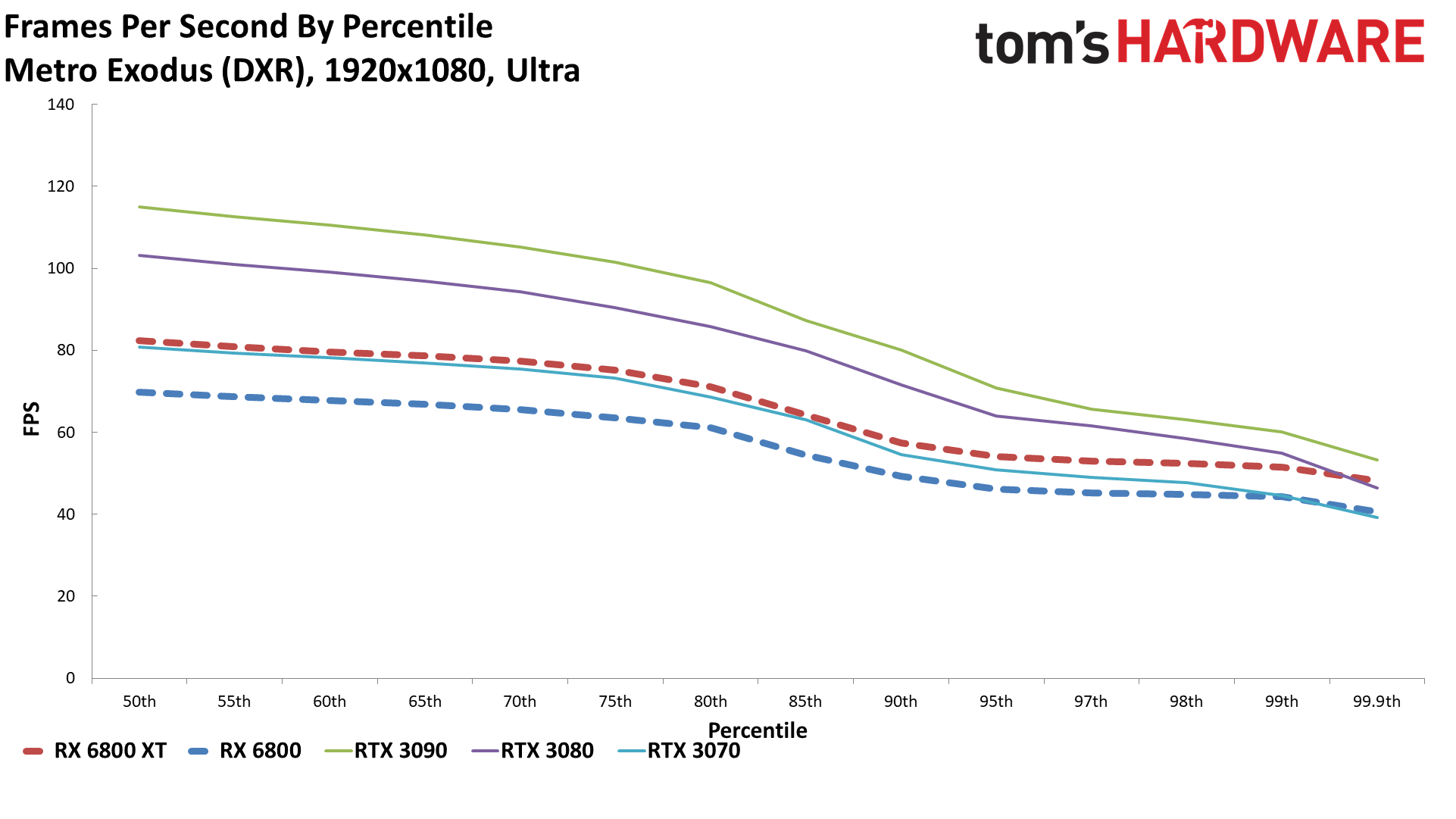

Metro Exodus

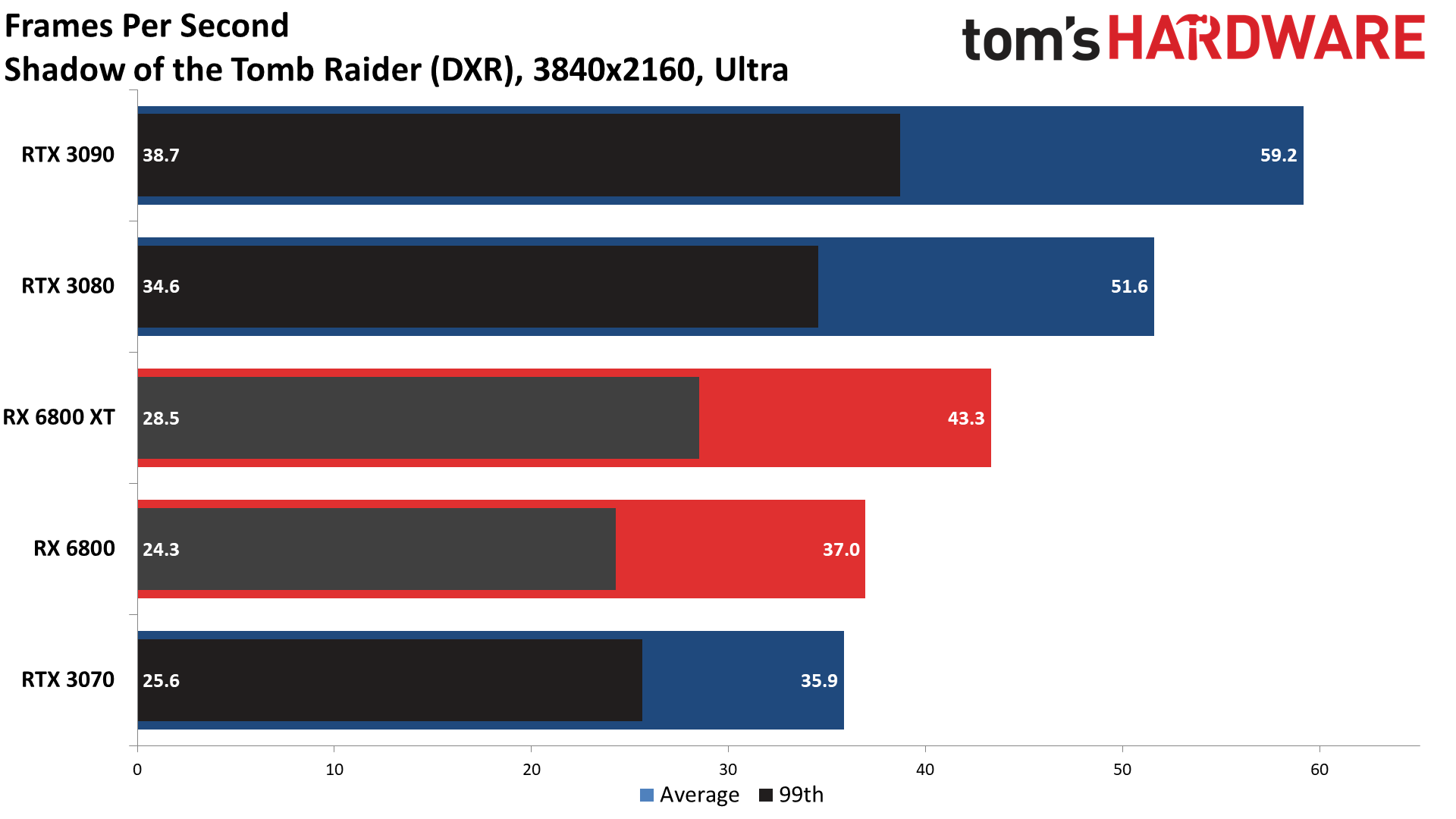

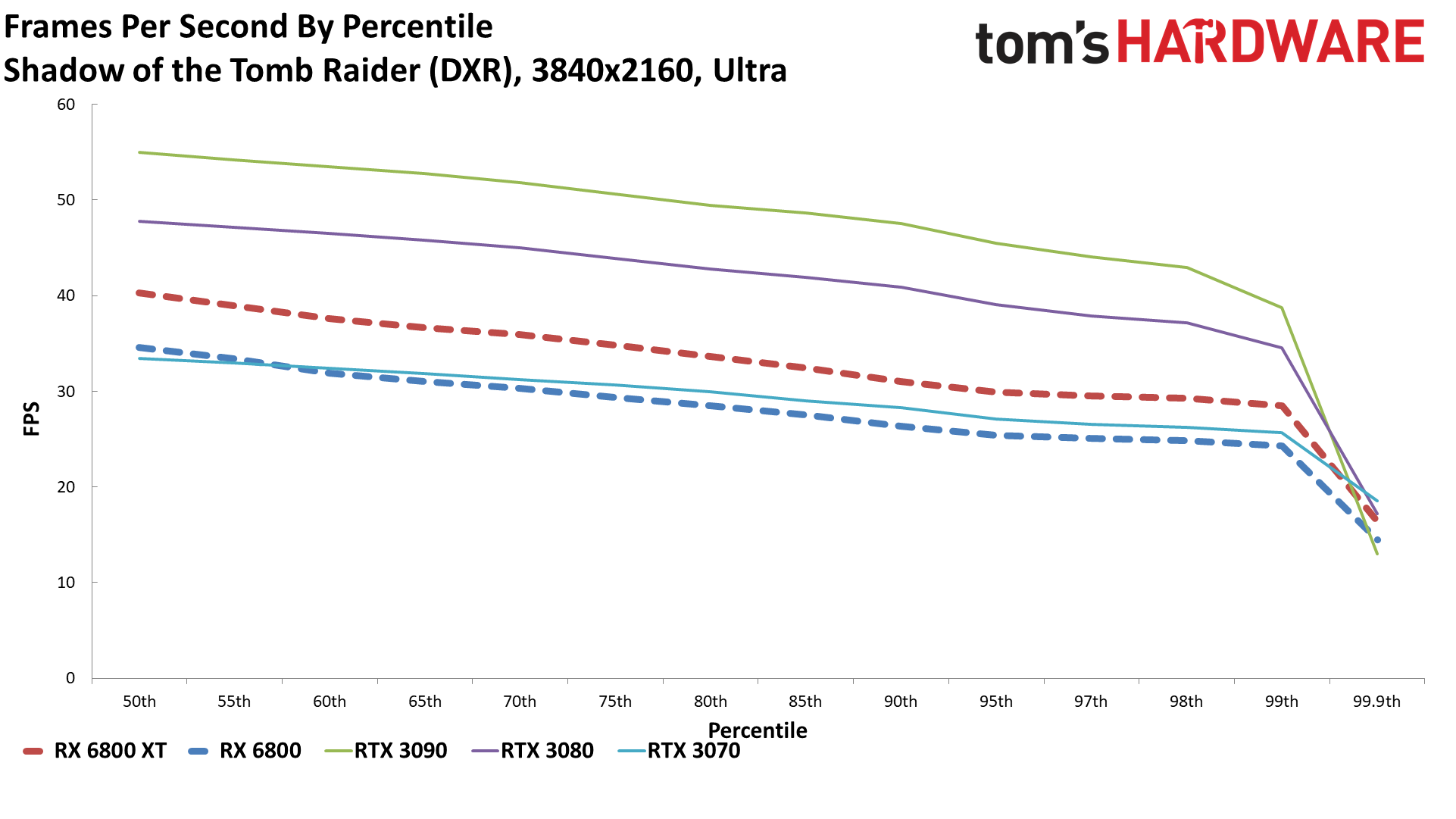

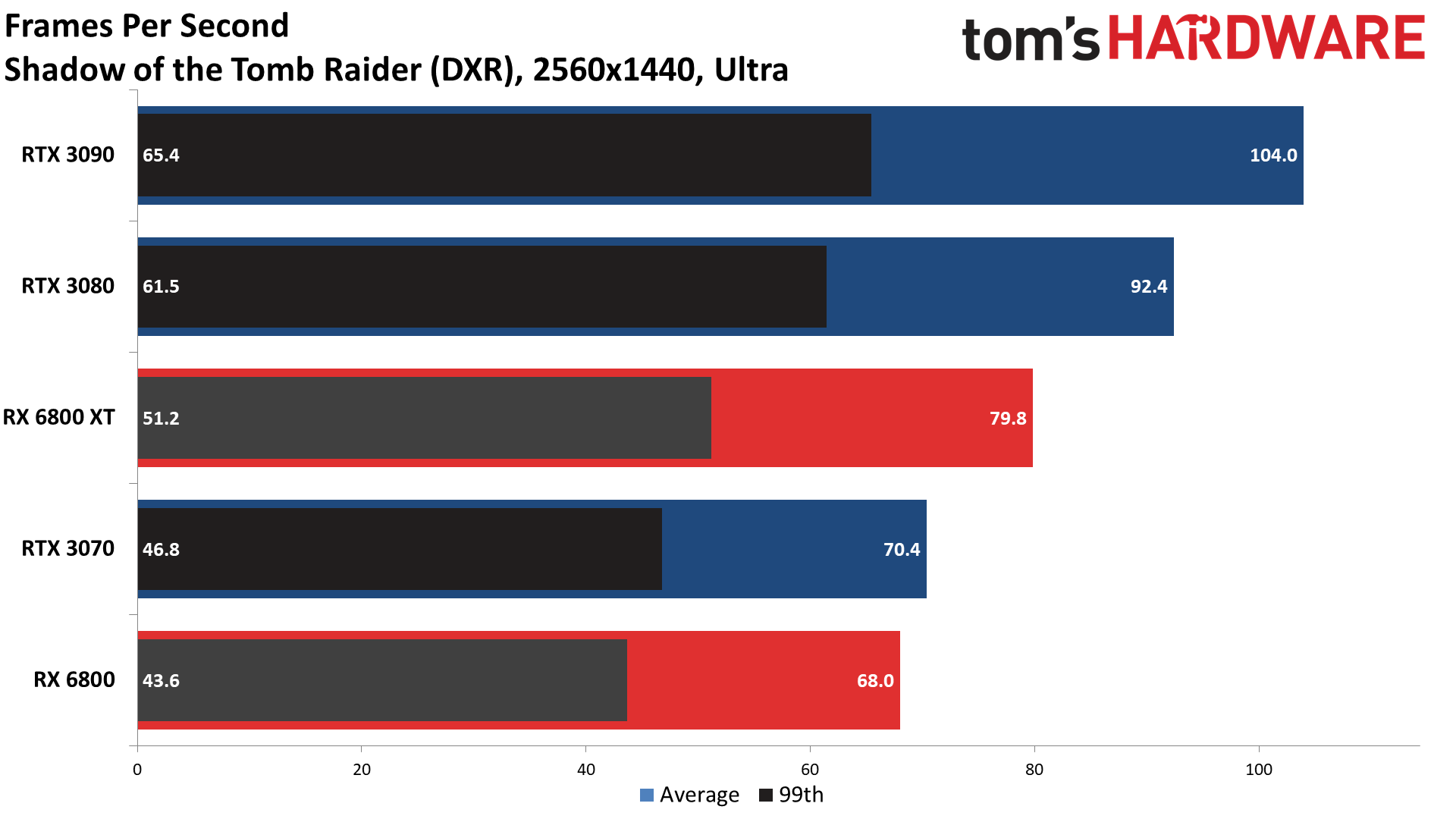

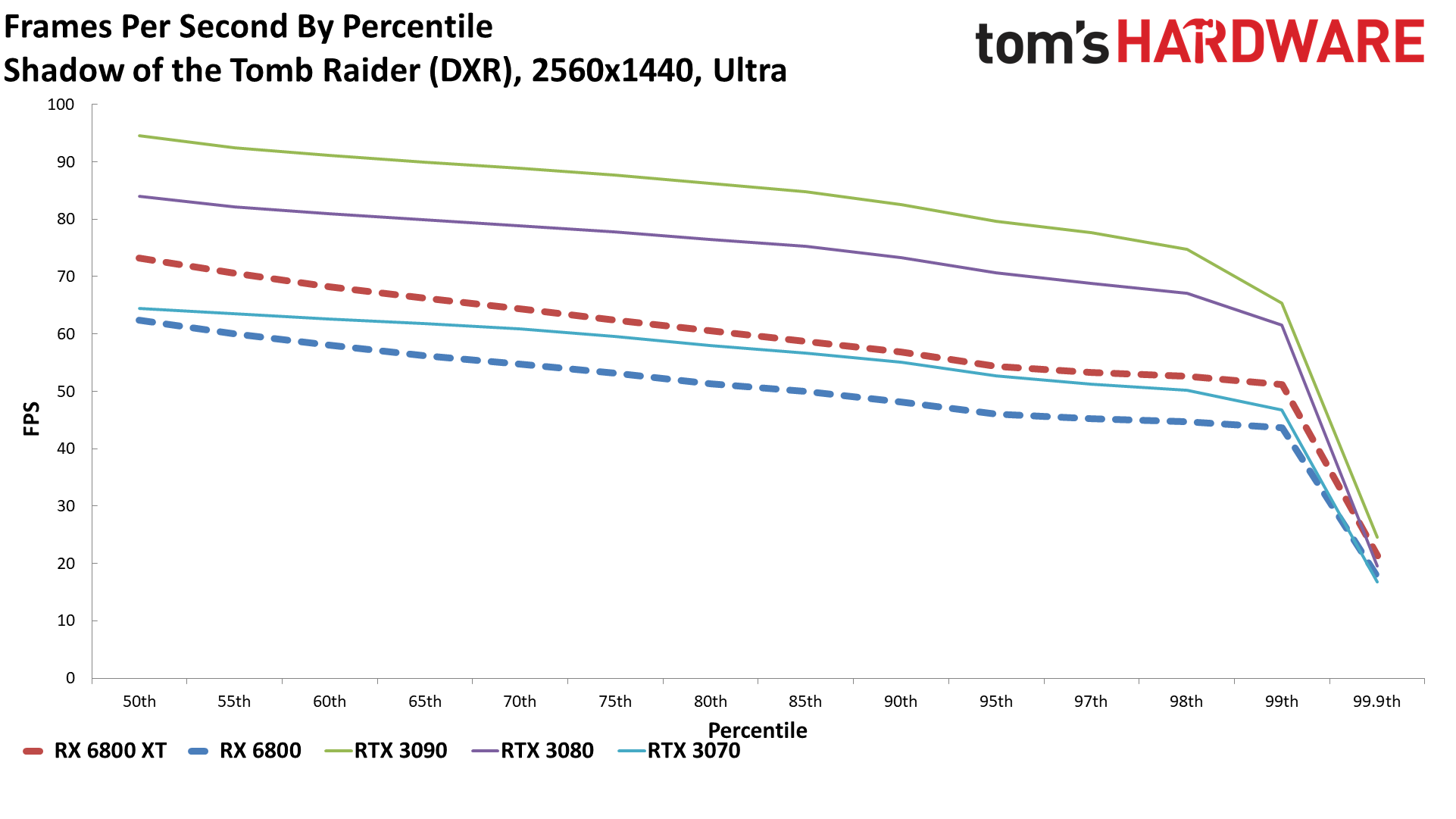

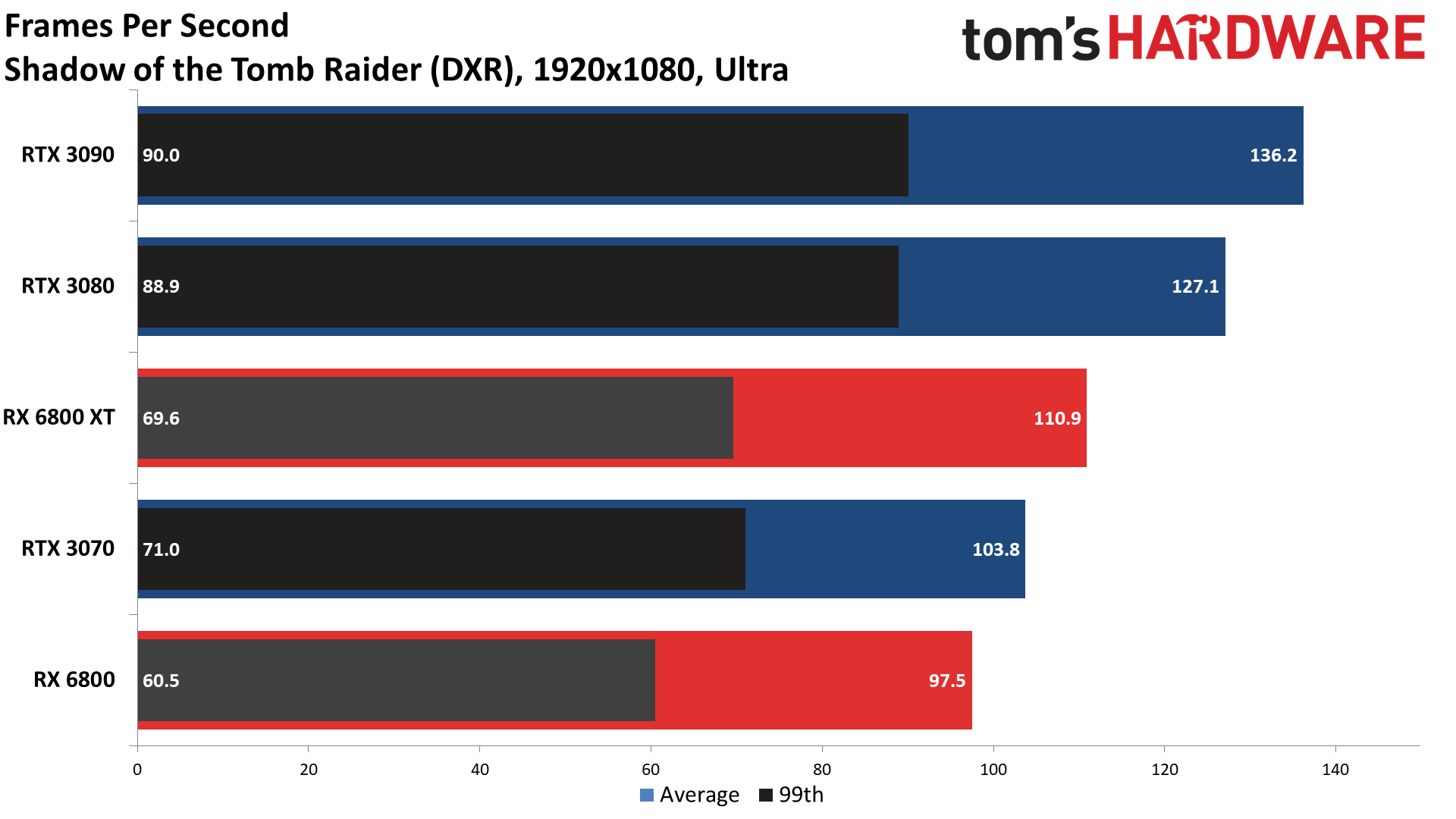

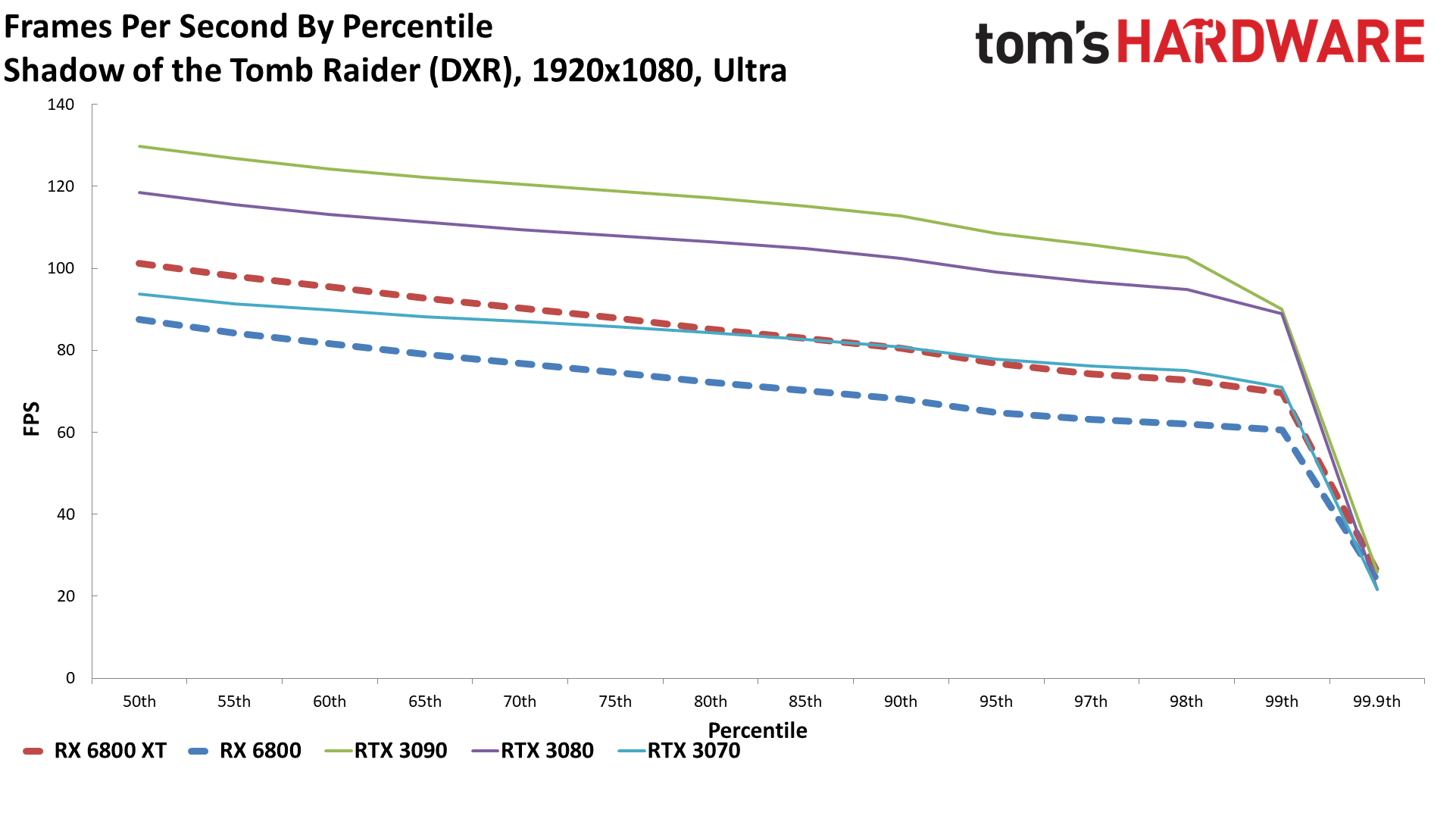

Shadow of the Tomb Raider

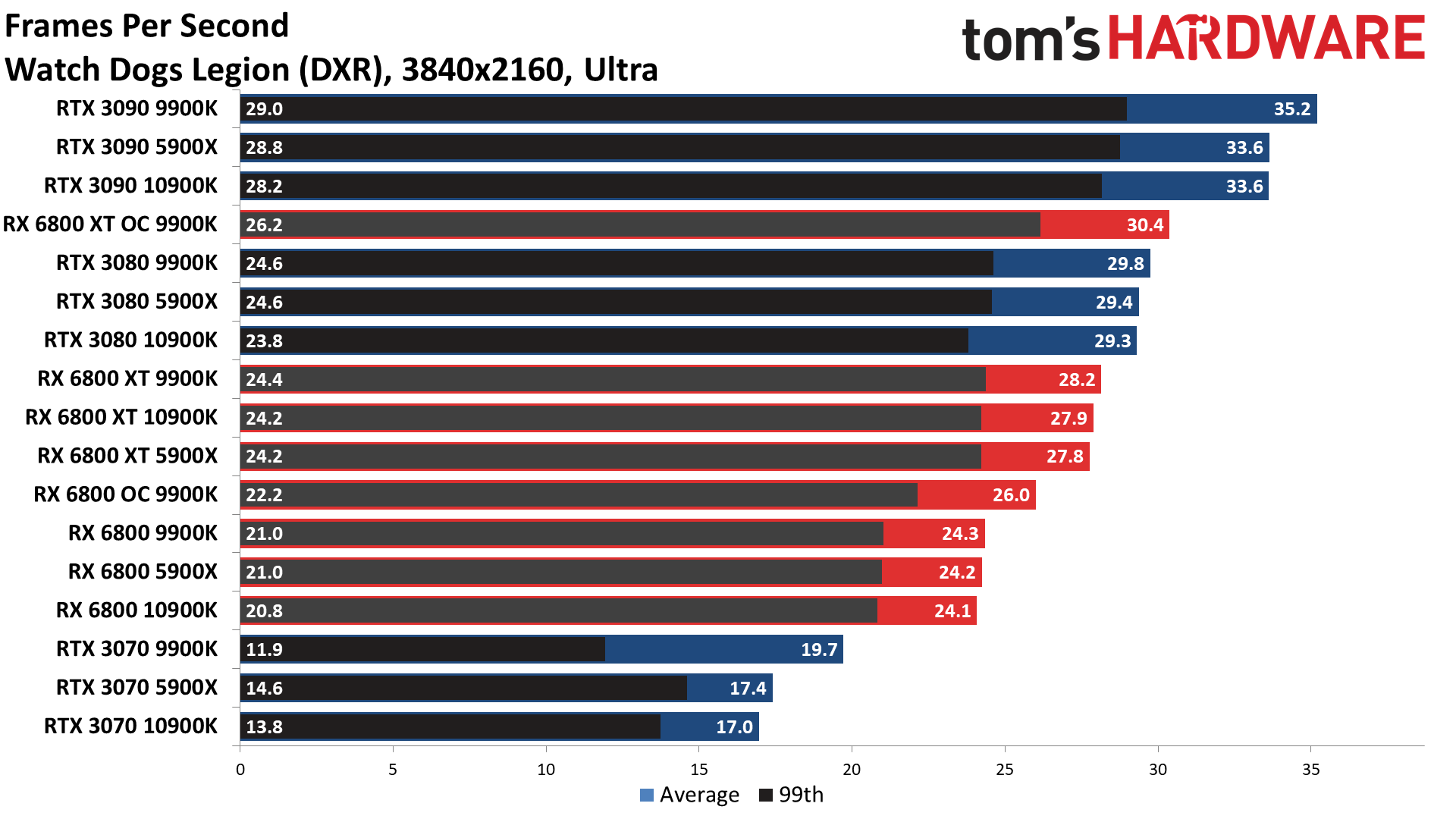

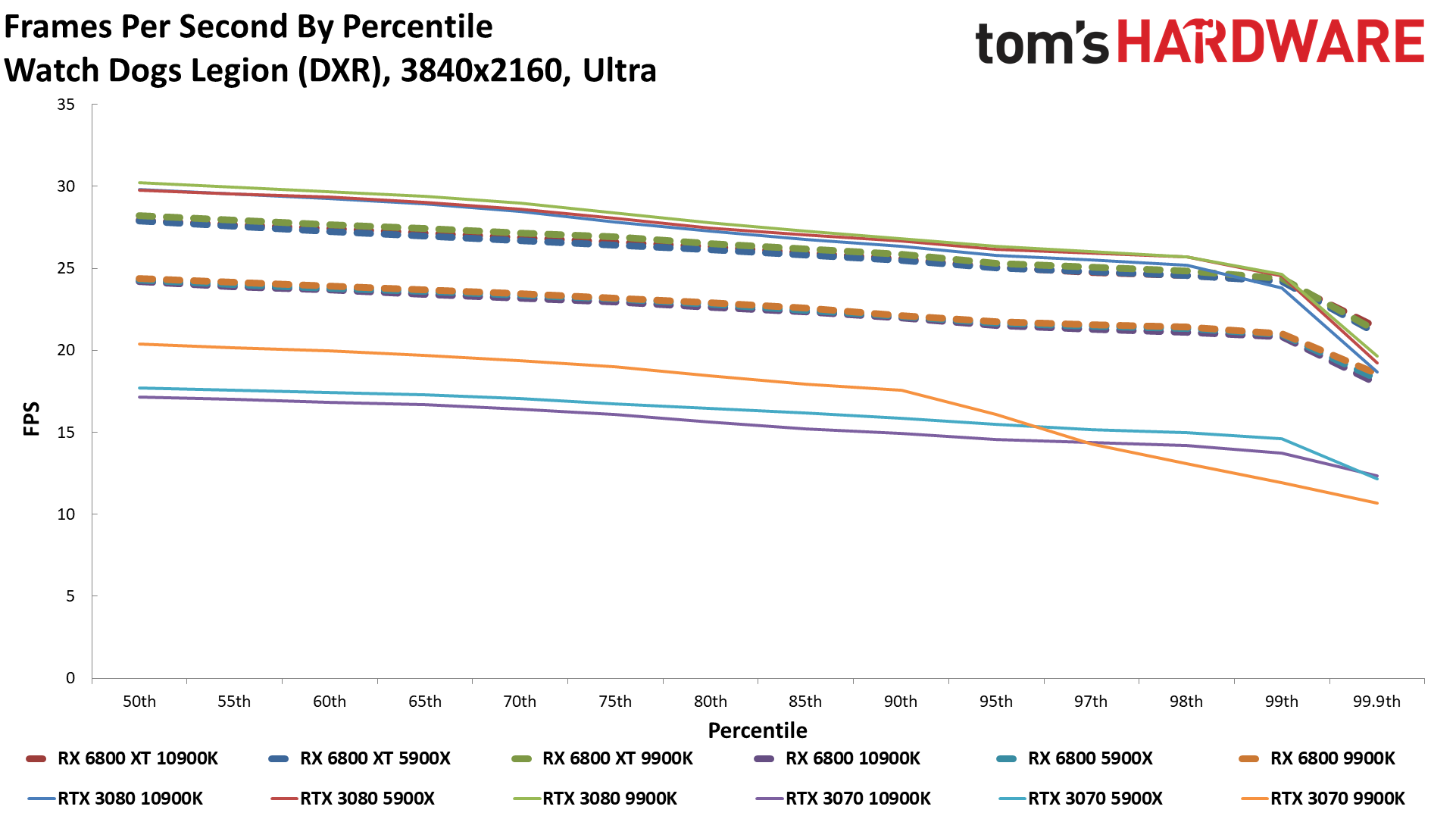

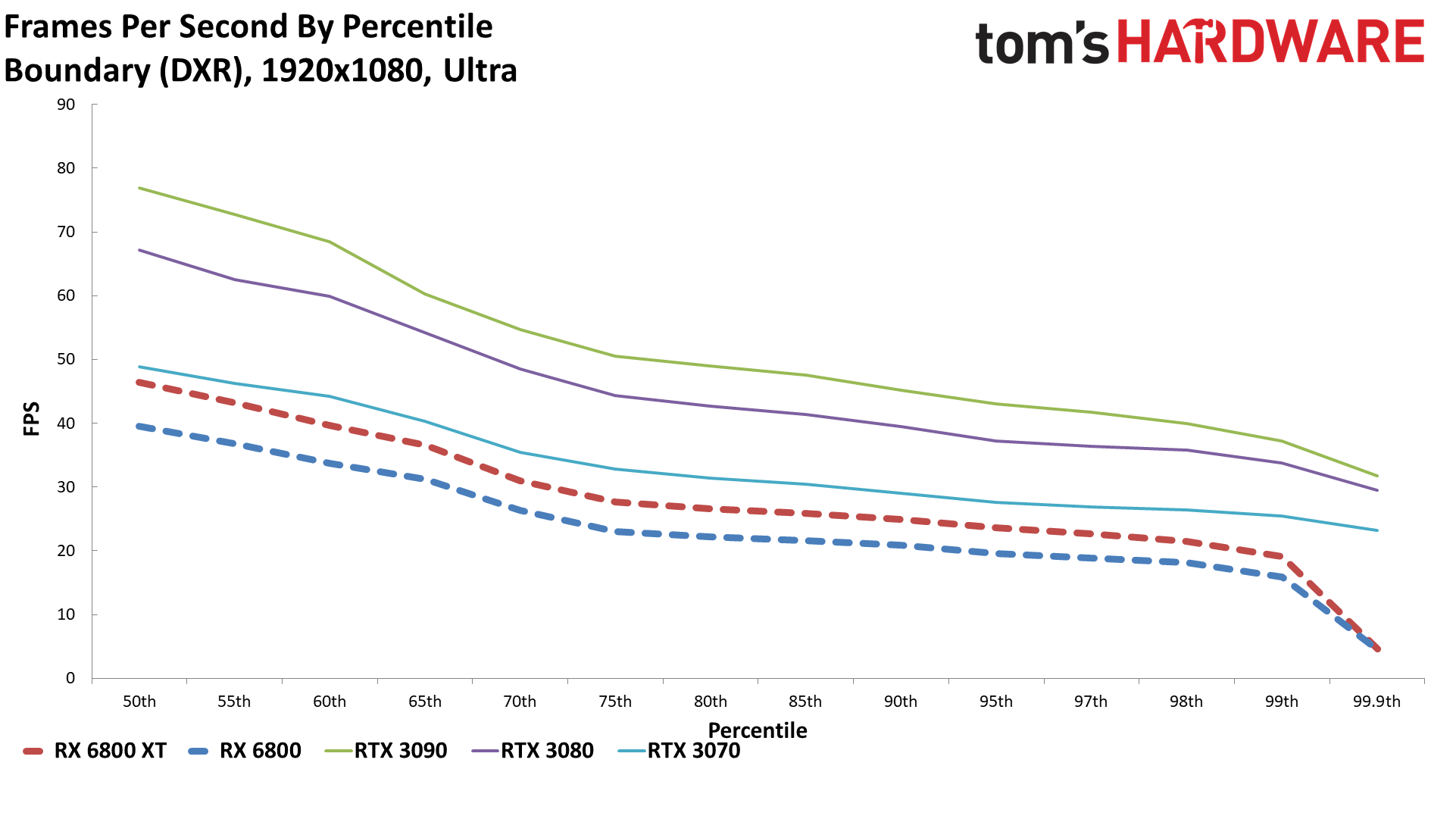

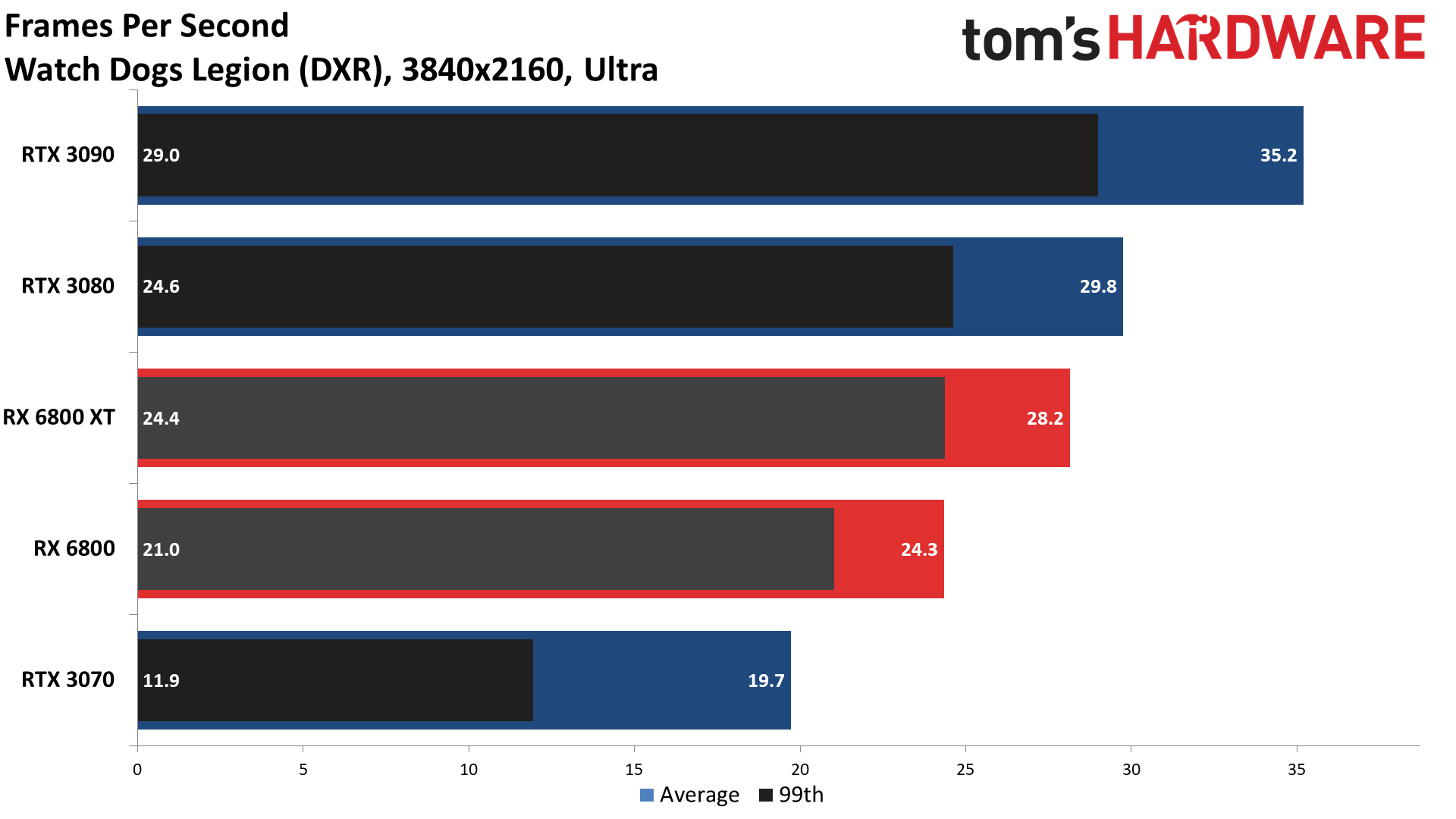

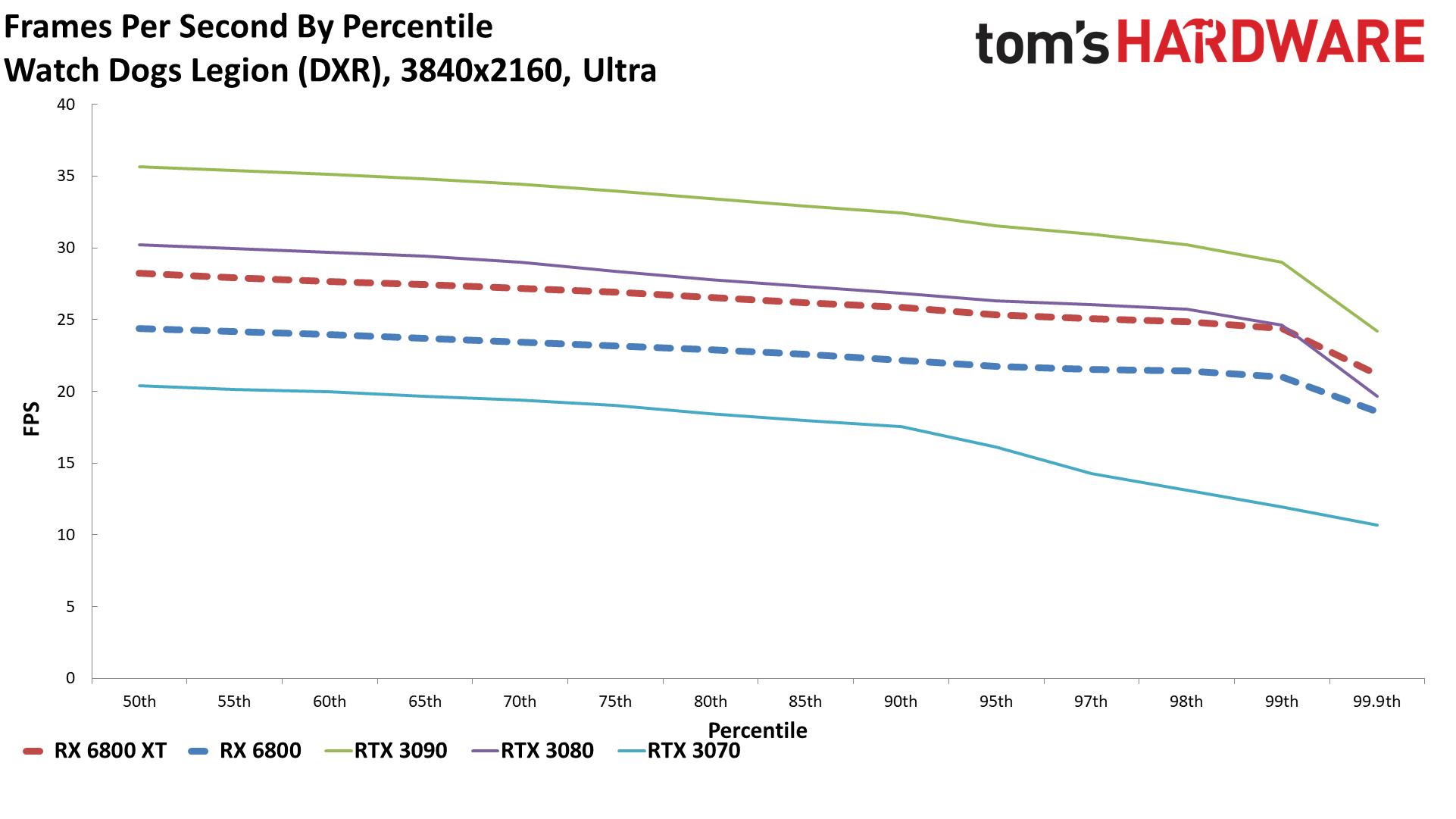

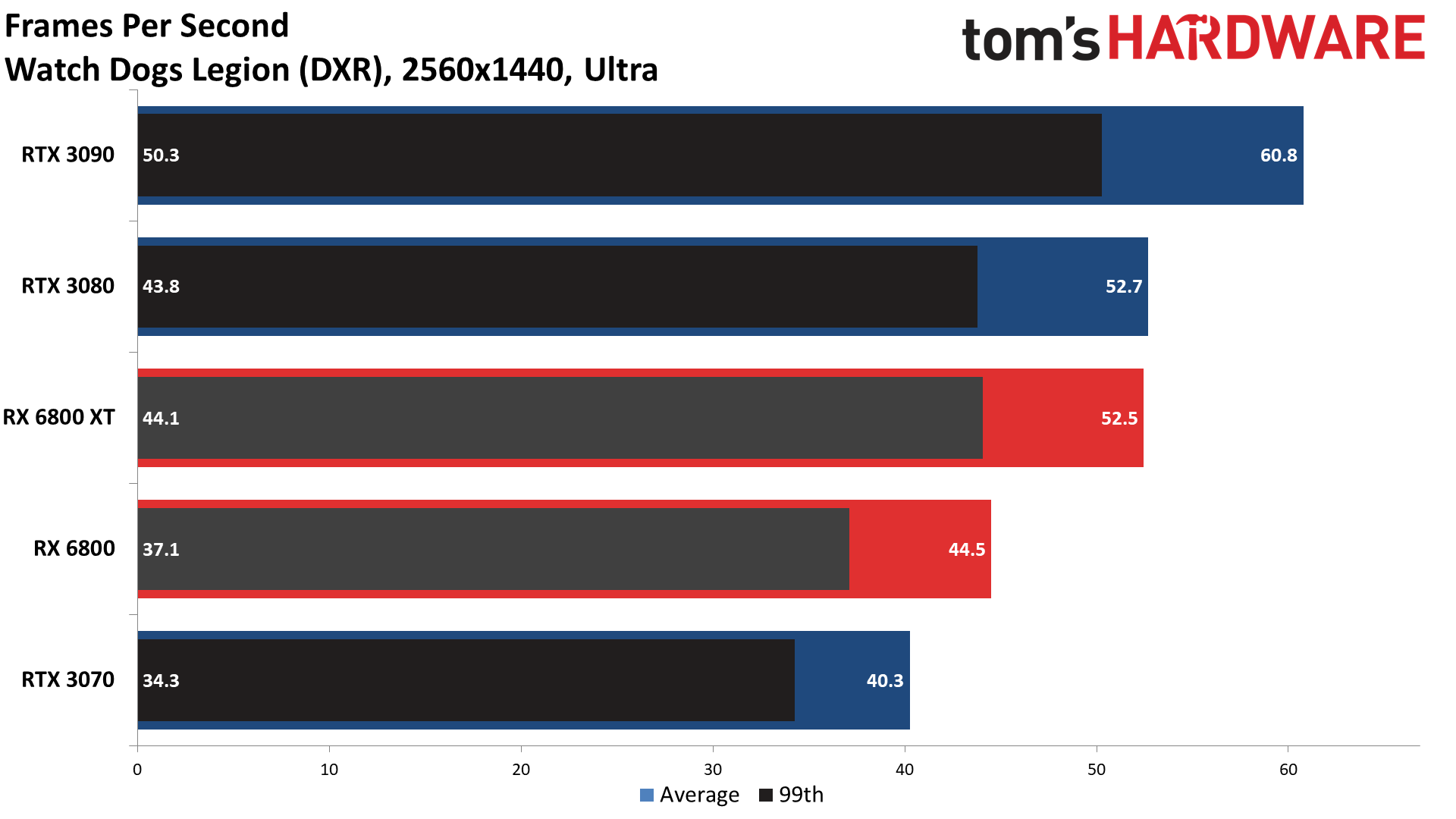

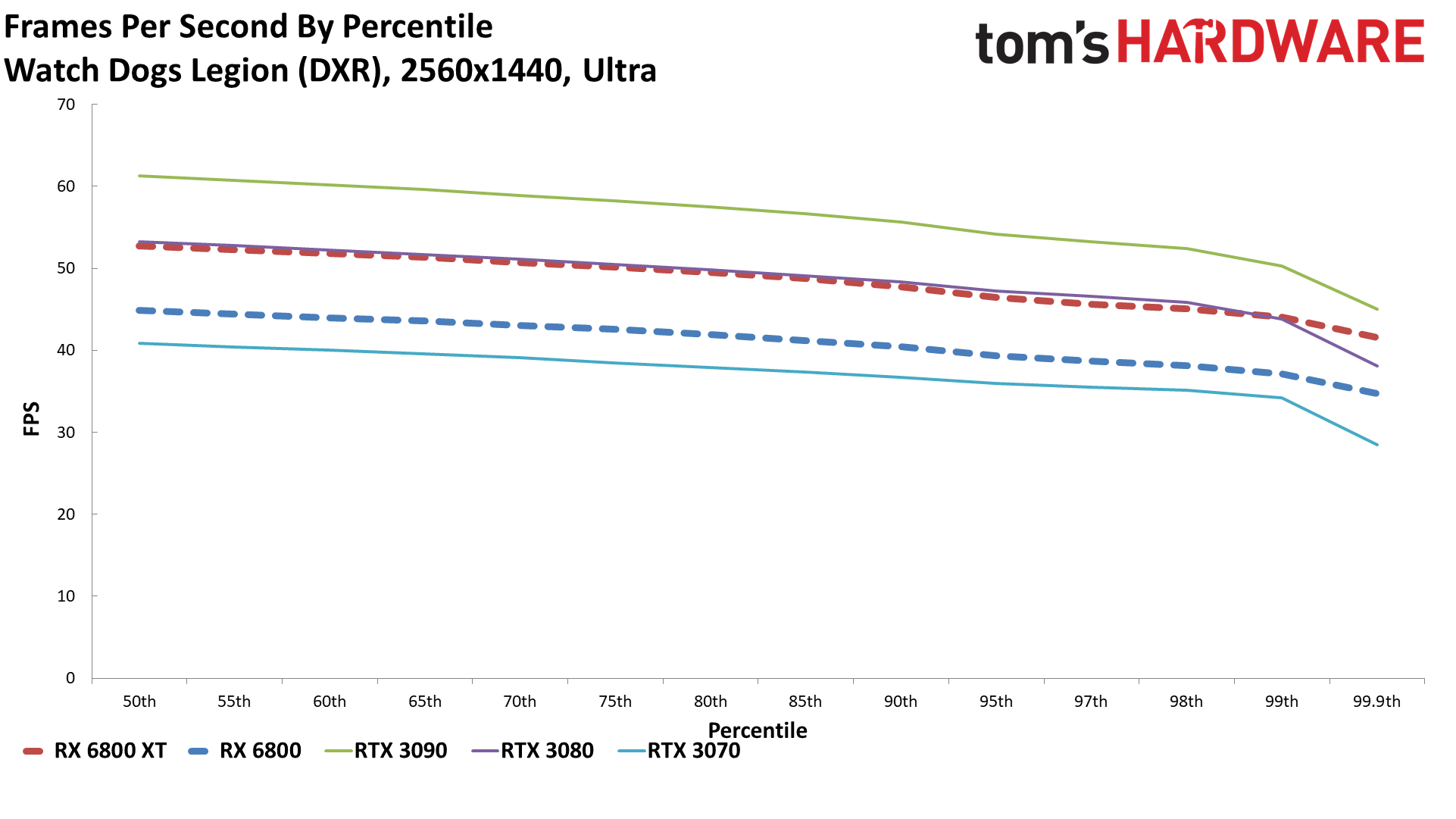

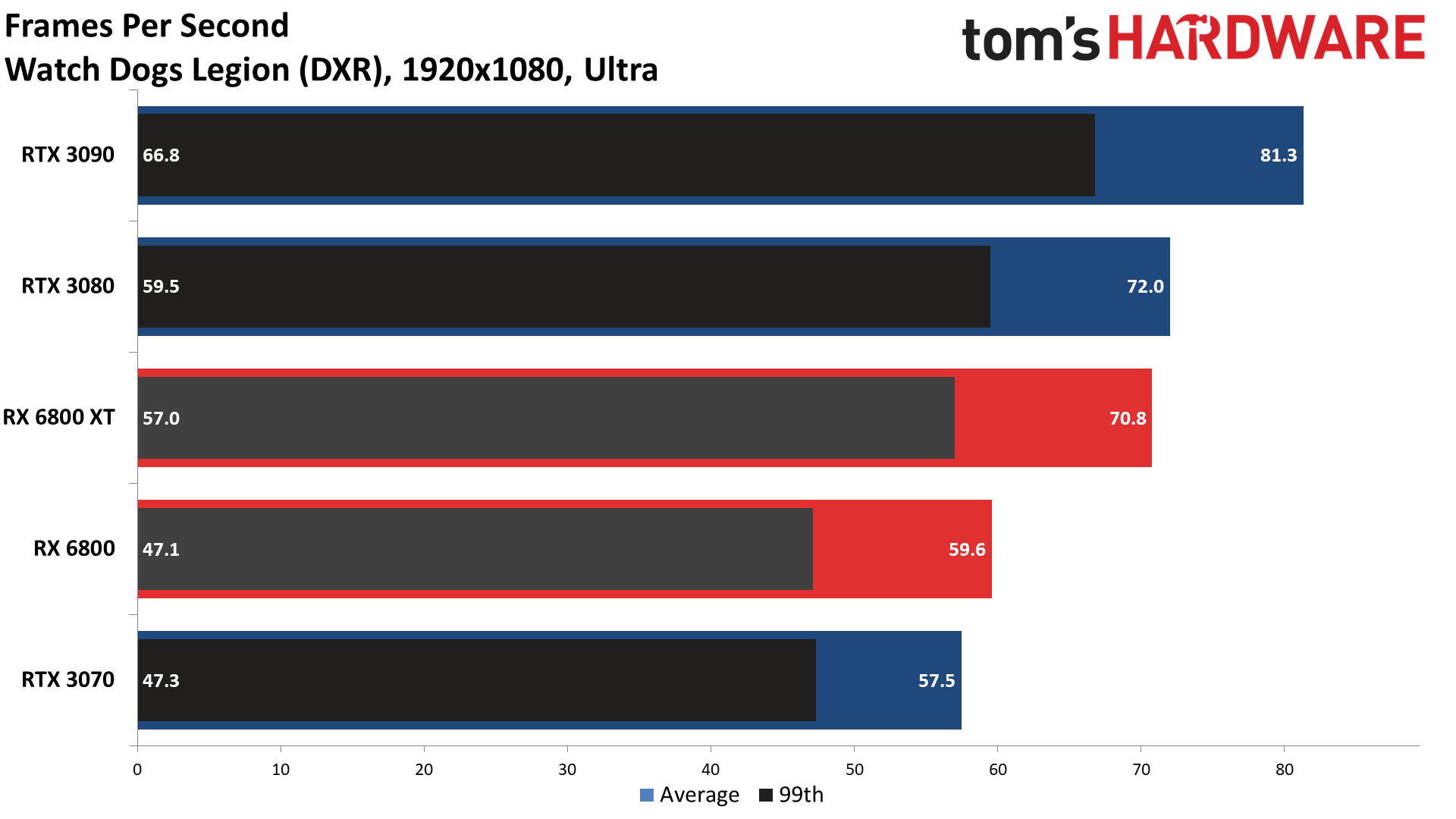

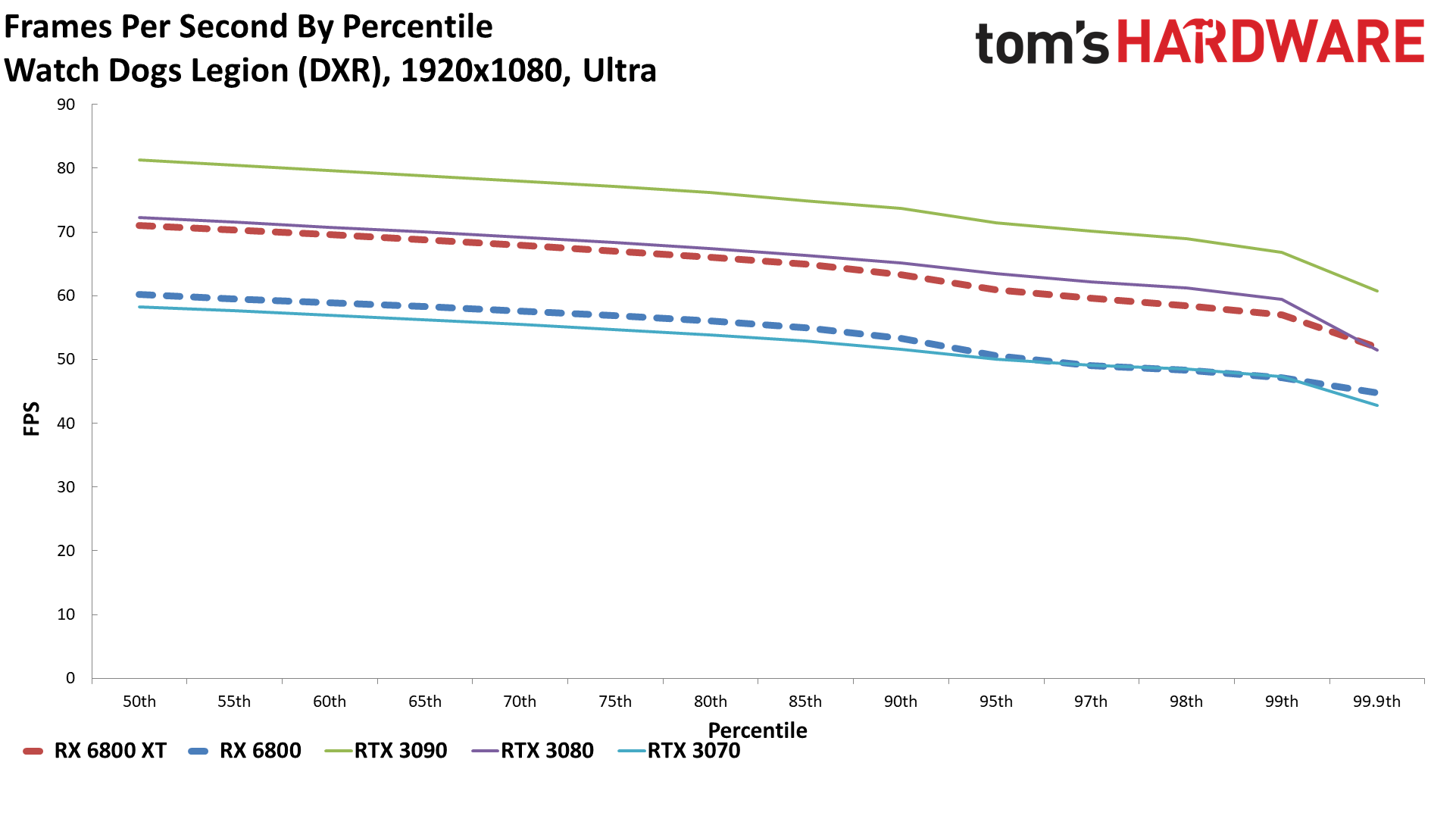

Watch Dogs Legion

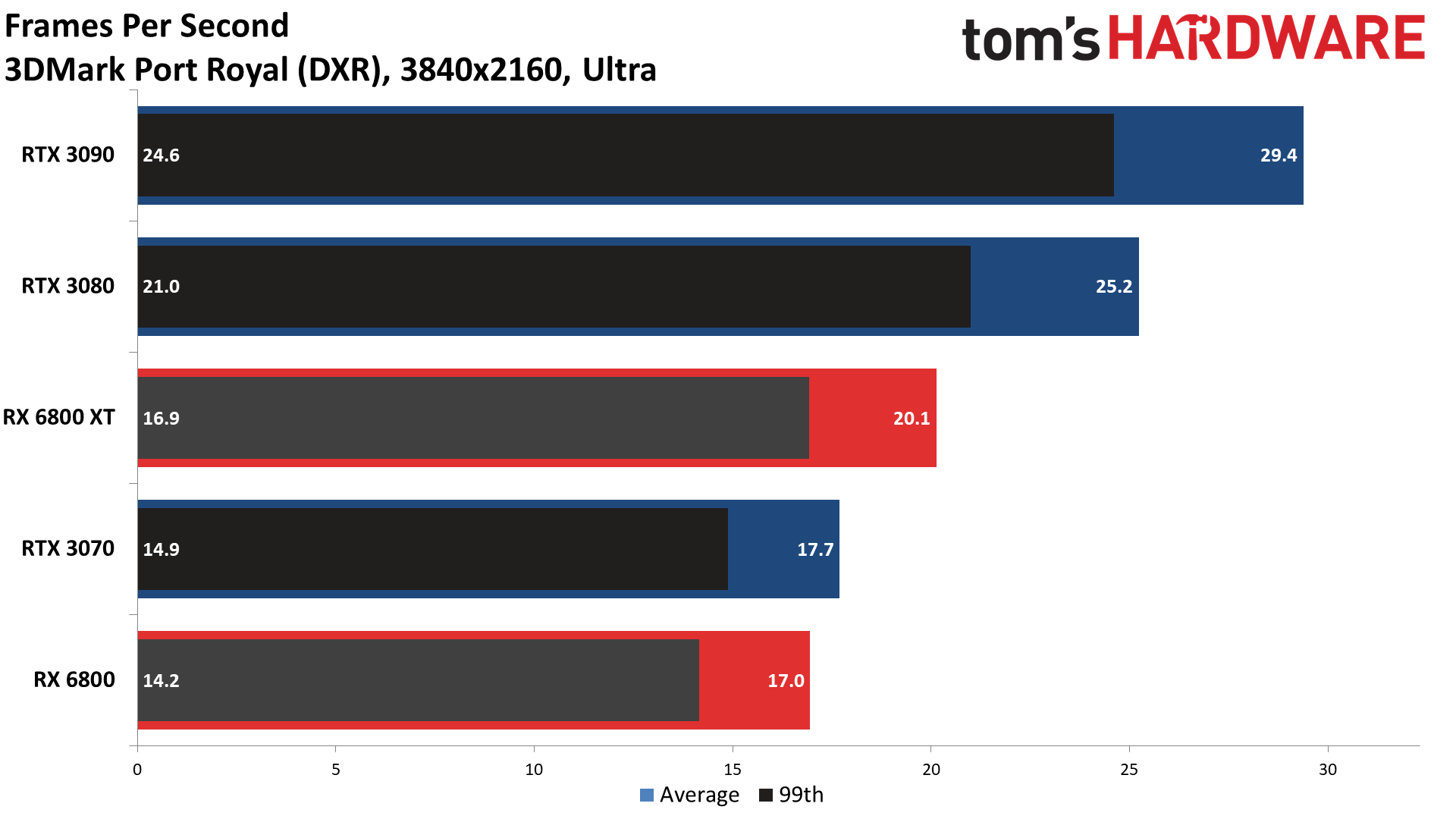

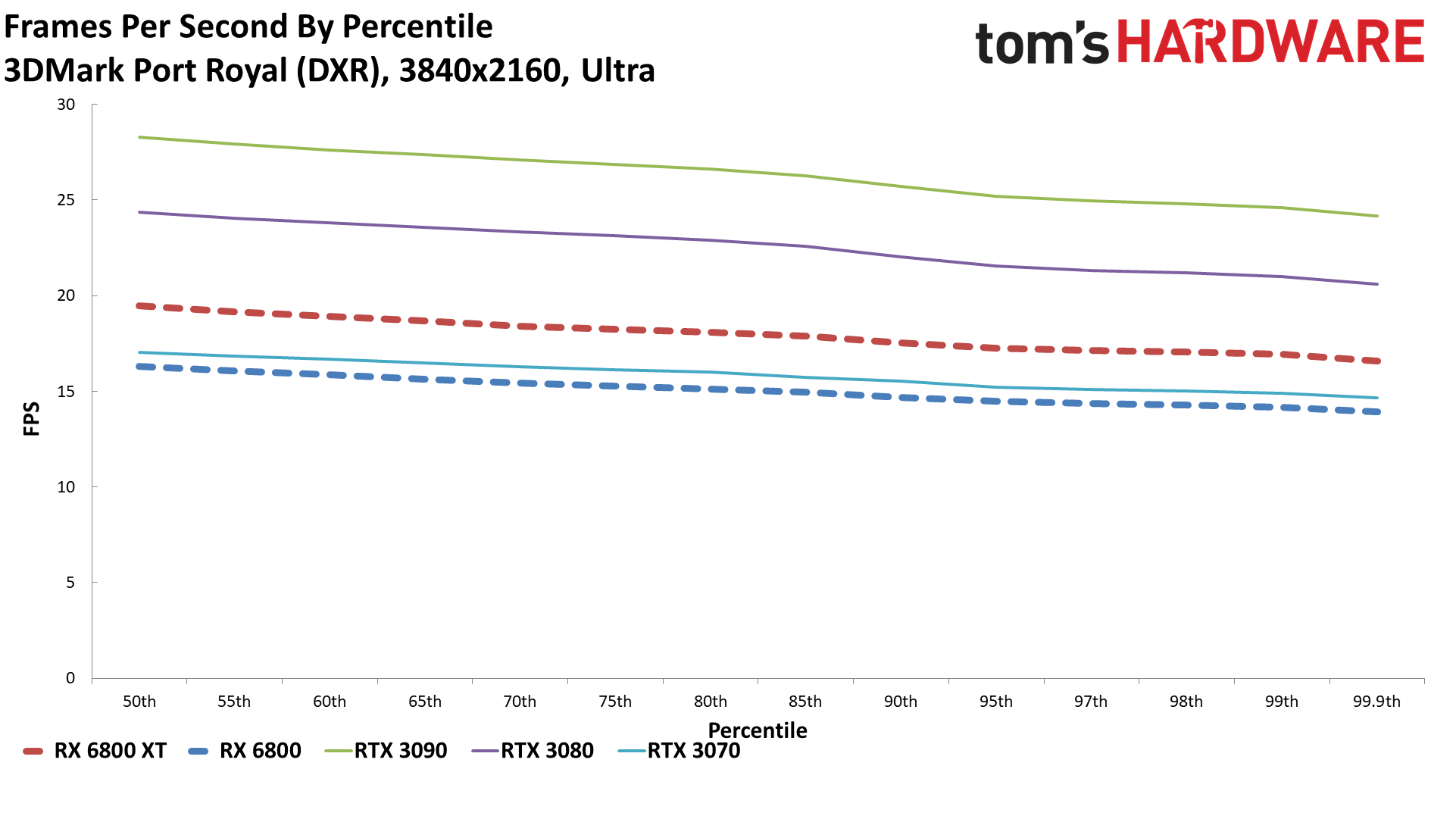

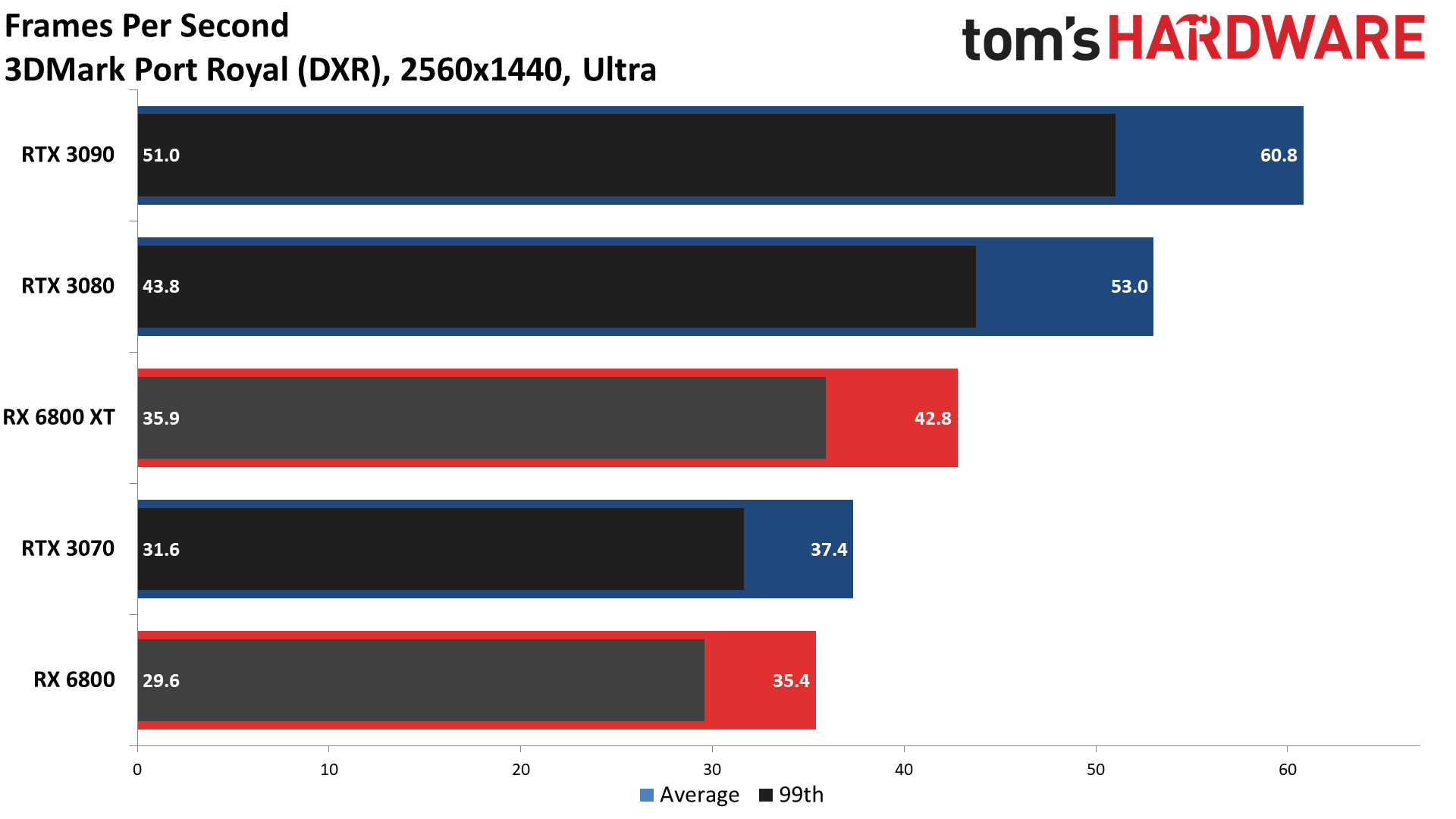

Well. So much for AMD's comparable performance. AMD's RX 6800 series can definitely hold its own against Nvidia's RTX 30-series GPUs in traditional rasterization modes. Turn on ray tracing, even without DLSS, and things can get ugly. AMD's RX 6800 XT does tend to come out ahead of the RTX 3070, but then it should — it costs more, and it has twice the VRAM. But again, DLSS (which is supported in seven of the ten games/tests we used) would turn the tables, and even the DLSS quality mode usually improves performance by 20-40 percent (provided the game isn't bottlenecked elsewhere).

Ignoring the often-too-low framerates, overall, the RTX 3080 is nearly 25 percent faster than the RX 6800 XT at 1080p, and that lead only grows at 1440p (26 percent) and 4K (30 percent). The RTX 3090 is another 10-15 percent ahead of the 3080, which is very much out of AMD's reach if you care at all about ray tracing performance — ignoring price, of course.

The RTX 3070 comes out with a 10-15 percent lead over the RX 6800, but individual games can behave quite differently. Take the new Call of Duty: Black Ops Cold War. It supports multiple ray tracing effects, and even the RTX 3070 holds a significant 30 percent lead over the 6800 XT at 1080p and 1440p. Boundary, Control, Crysis Remastered, and (to a lesser extent) Fortnite also have the 3070 leading the AMD cards. But Dirt 5, Metro Exodus, Shadow of the Tomb Raider, and Watch Dogs Legion have the 3070 falling behind the 6800 XT at least, and sometimes the RX 6800 as well.

There is a real question about whether the GPUs are doing the same work, though. We haven't had time to really dig into the image quality, but Watch Dogs Legion for sure doesn't look the same on AMD compared to Nvidia with ray tracing enabled. Check out these comparisons:

Apparently Ubisoft knows about the problem. In a statement to us, it said, "We are aware of the issue and are working to address it in a patch in December." But right now, there's a good chance that AMD's performance in Watch Dogs Legion at least is higher than it should be with ray tracing enabled.

Overall, AMD's ray tracing performance looks more like Nvidia's RTX 20-series GPUs than the new Ampere GPUs, which was sort of what we expected. This is first gen ray tracing for AMD, after all, while Nvidia is on round two. Frankly, looking at games like Fortnite, where ray traced shadows, reflections, global illumination, and ambient occlusion are available, we probably need fourth gen ray tracing hardware before we'll be hitting playable framerates with all the bells and whistles. And we'll likely still need DLSS, or AMD's Super Resolution, to hit acceptable frame rates at 4K.

Radeon RX 6800 Series: Power, Temps, Clocks, and Fans

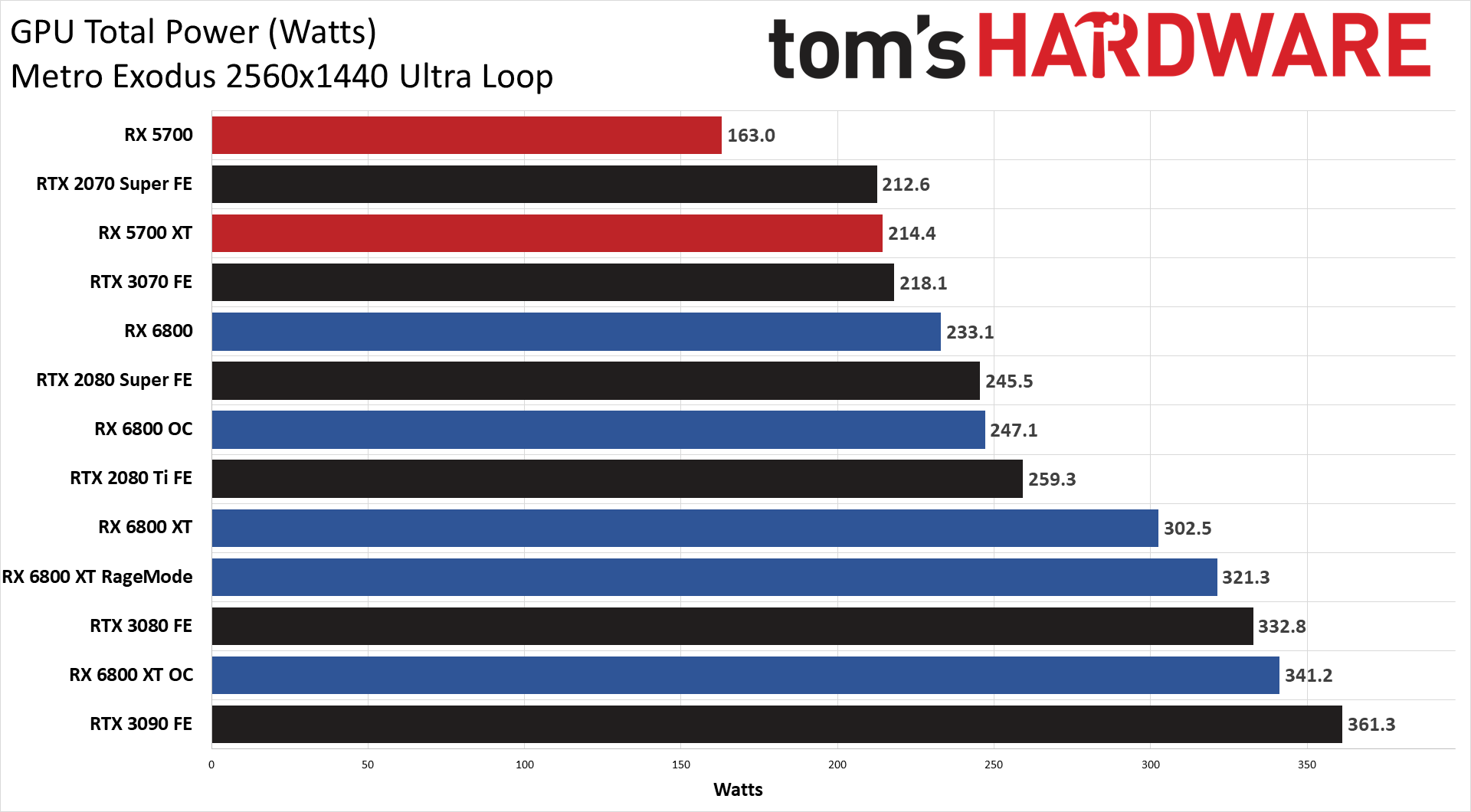

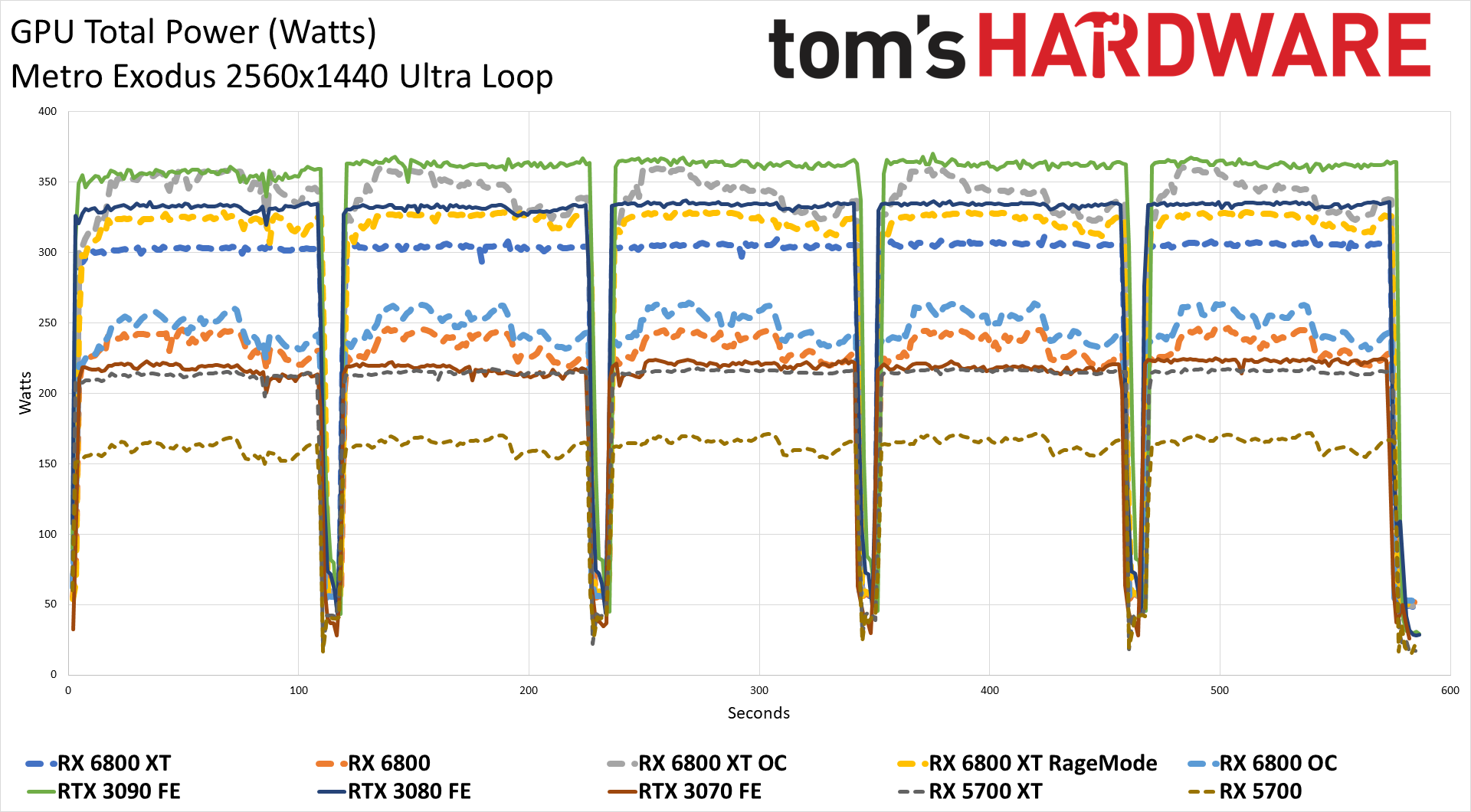

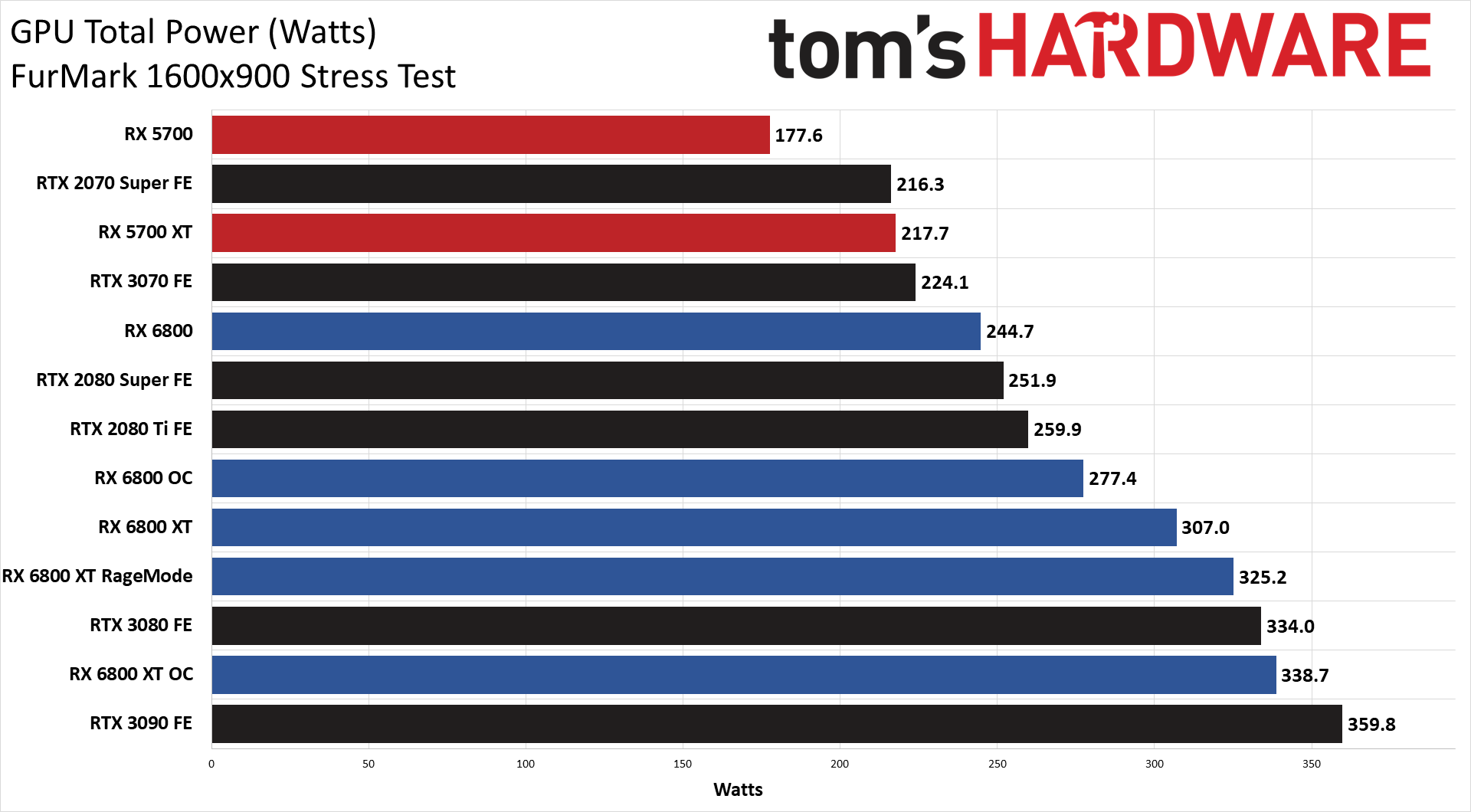

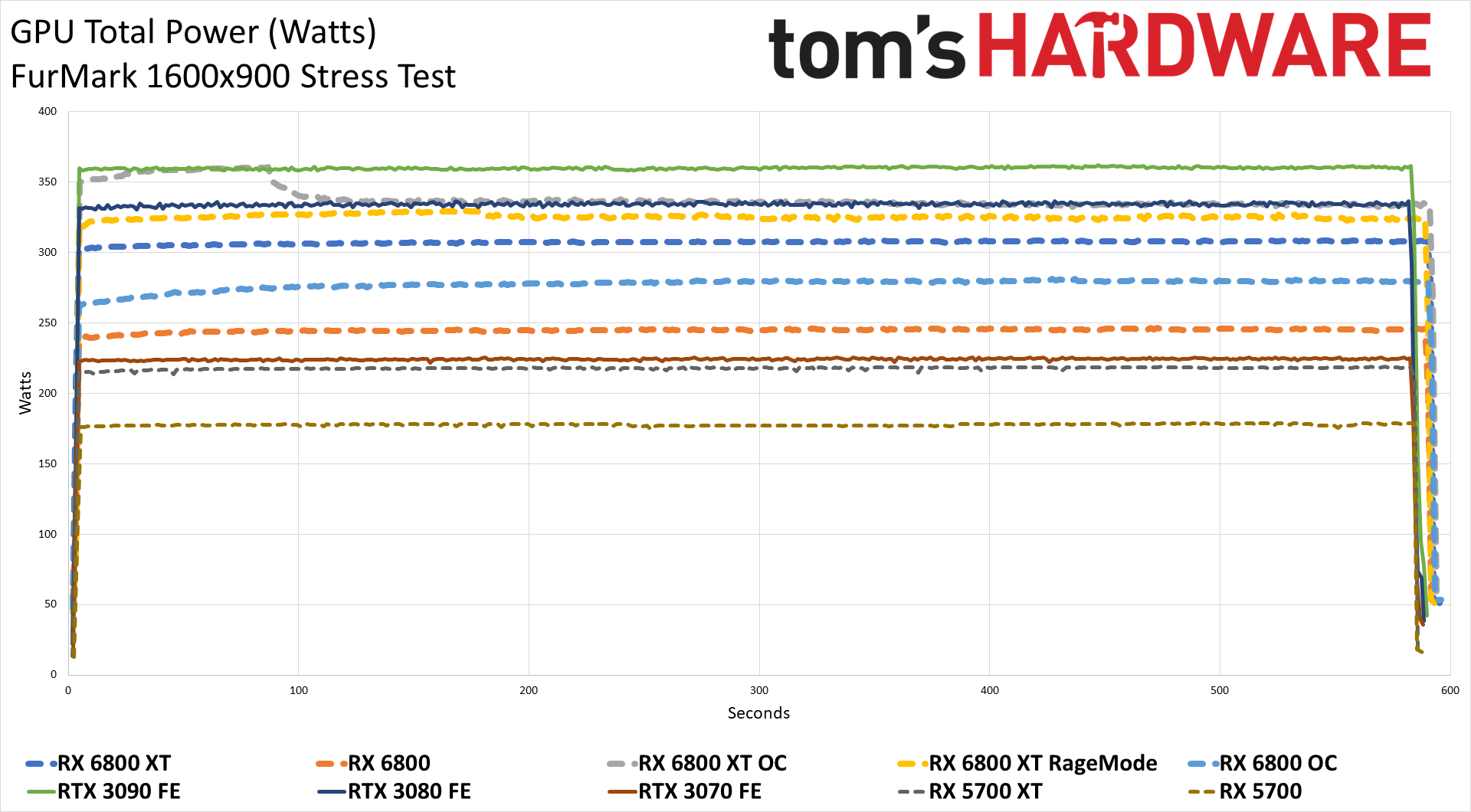

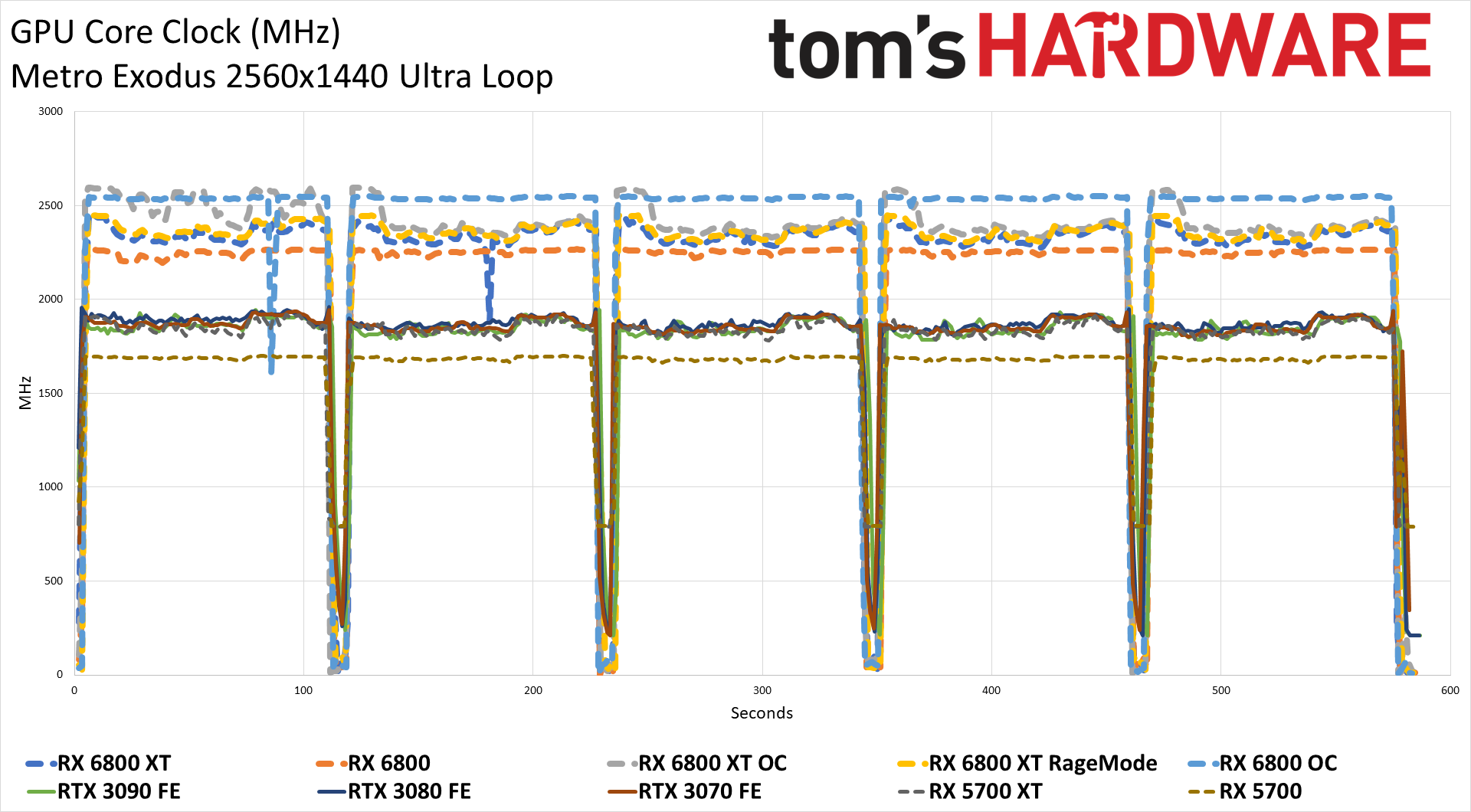

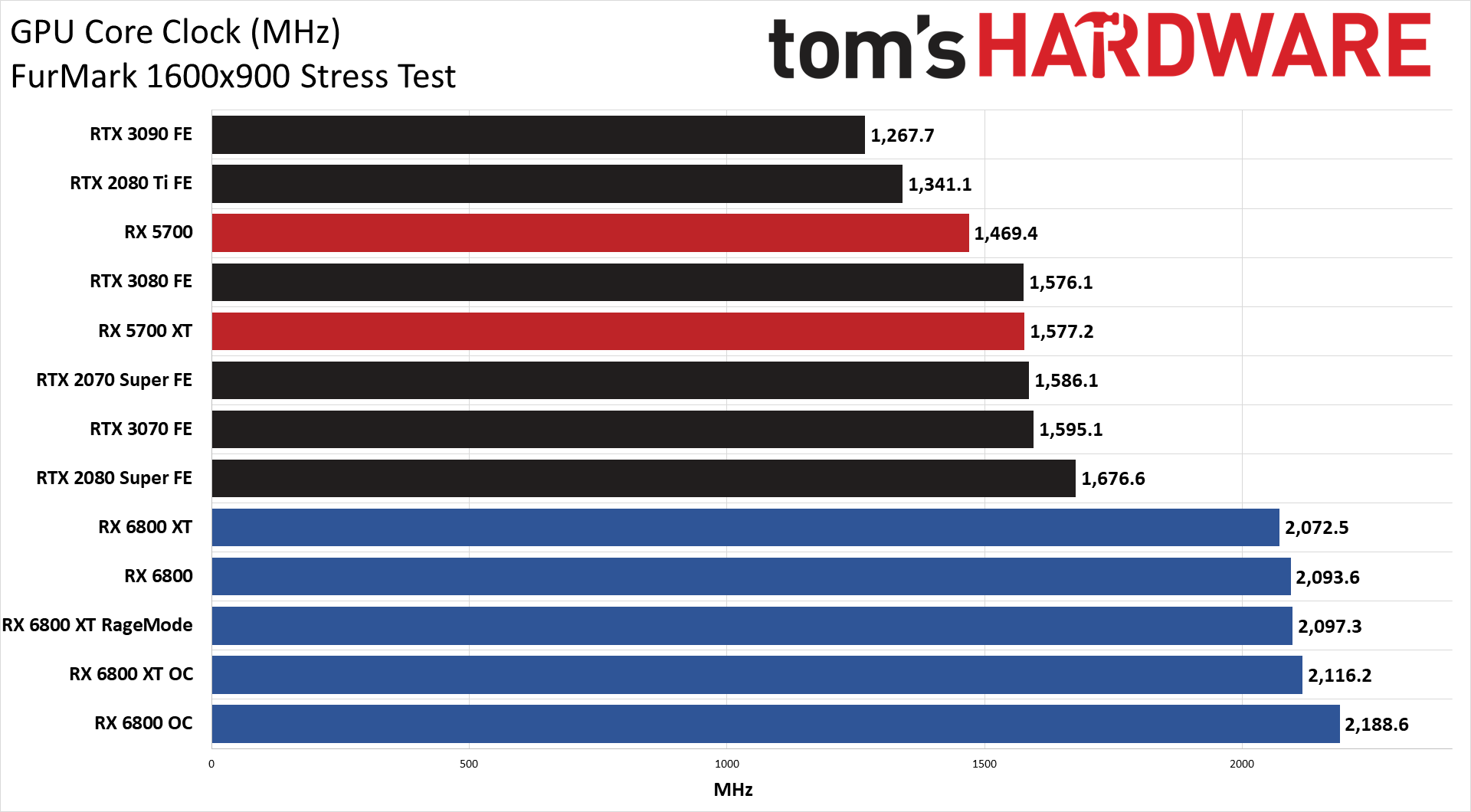

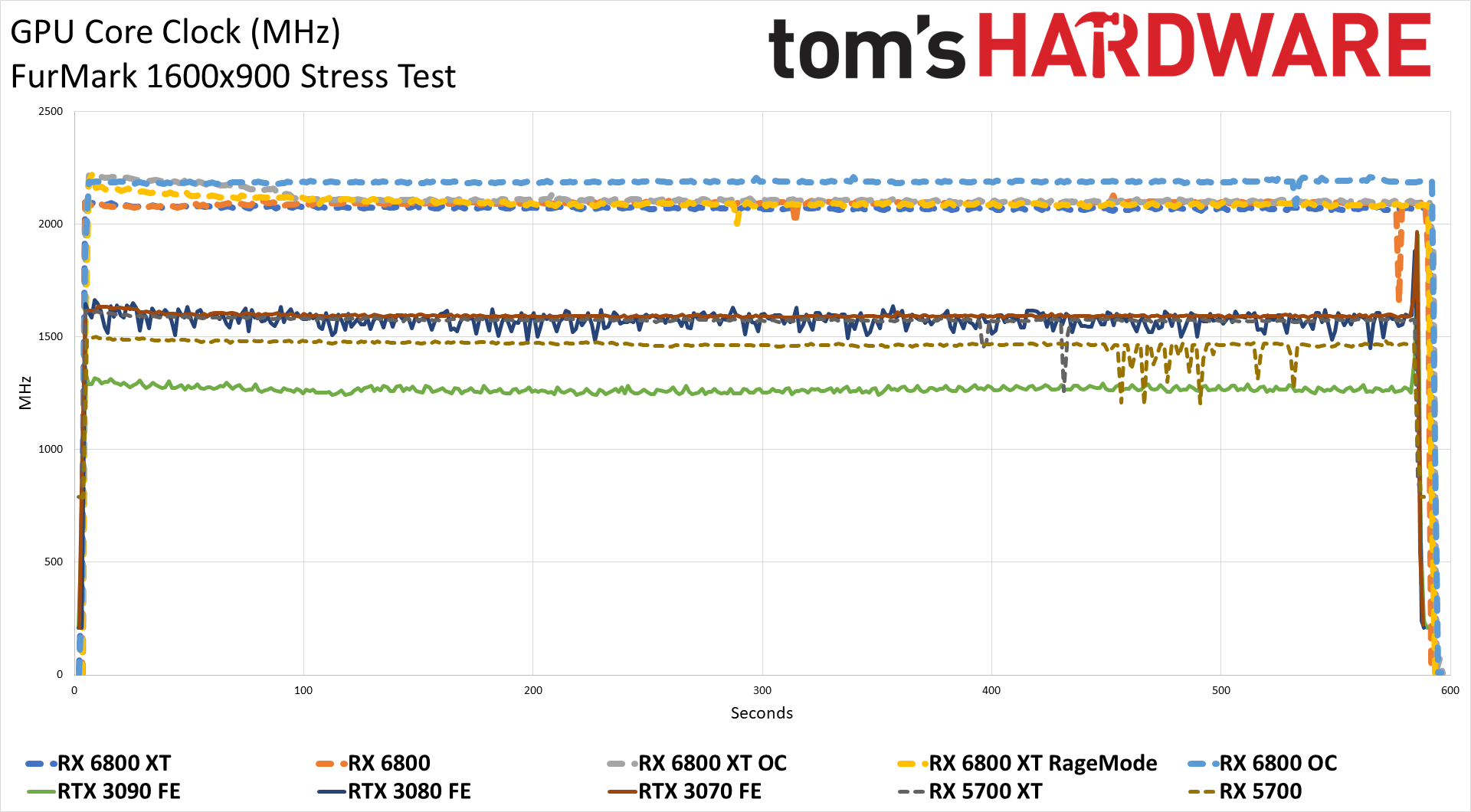

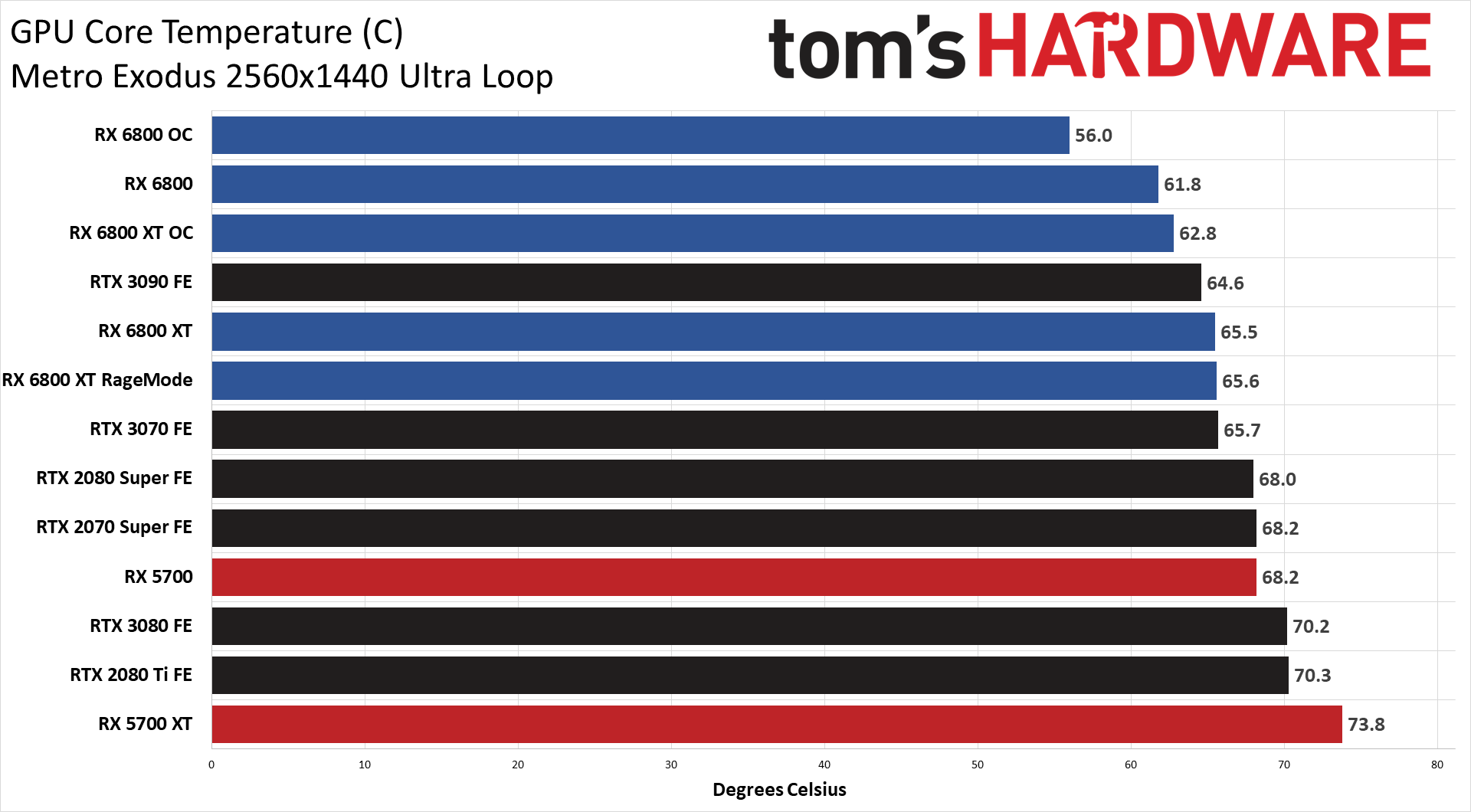

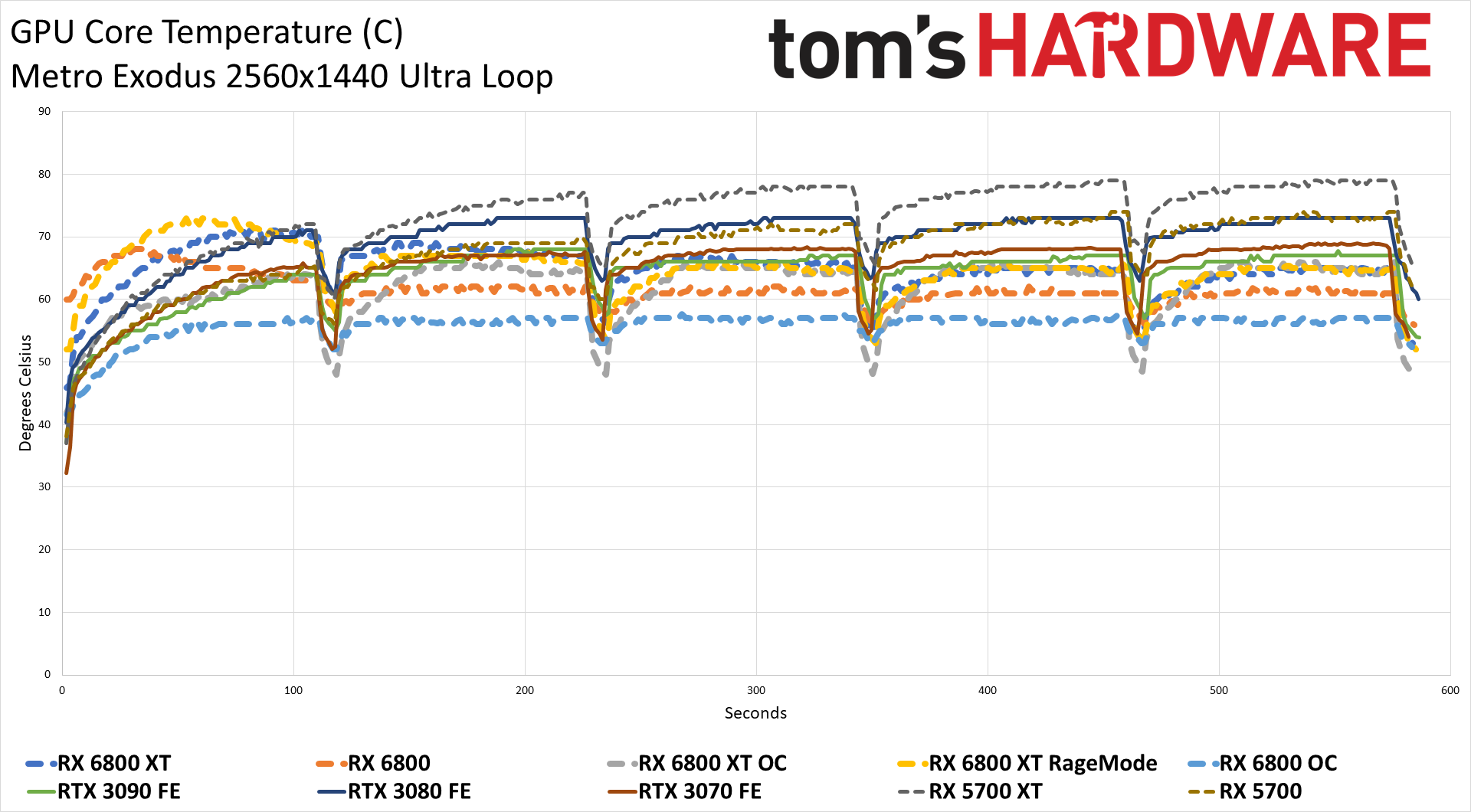

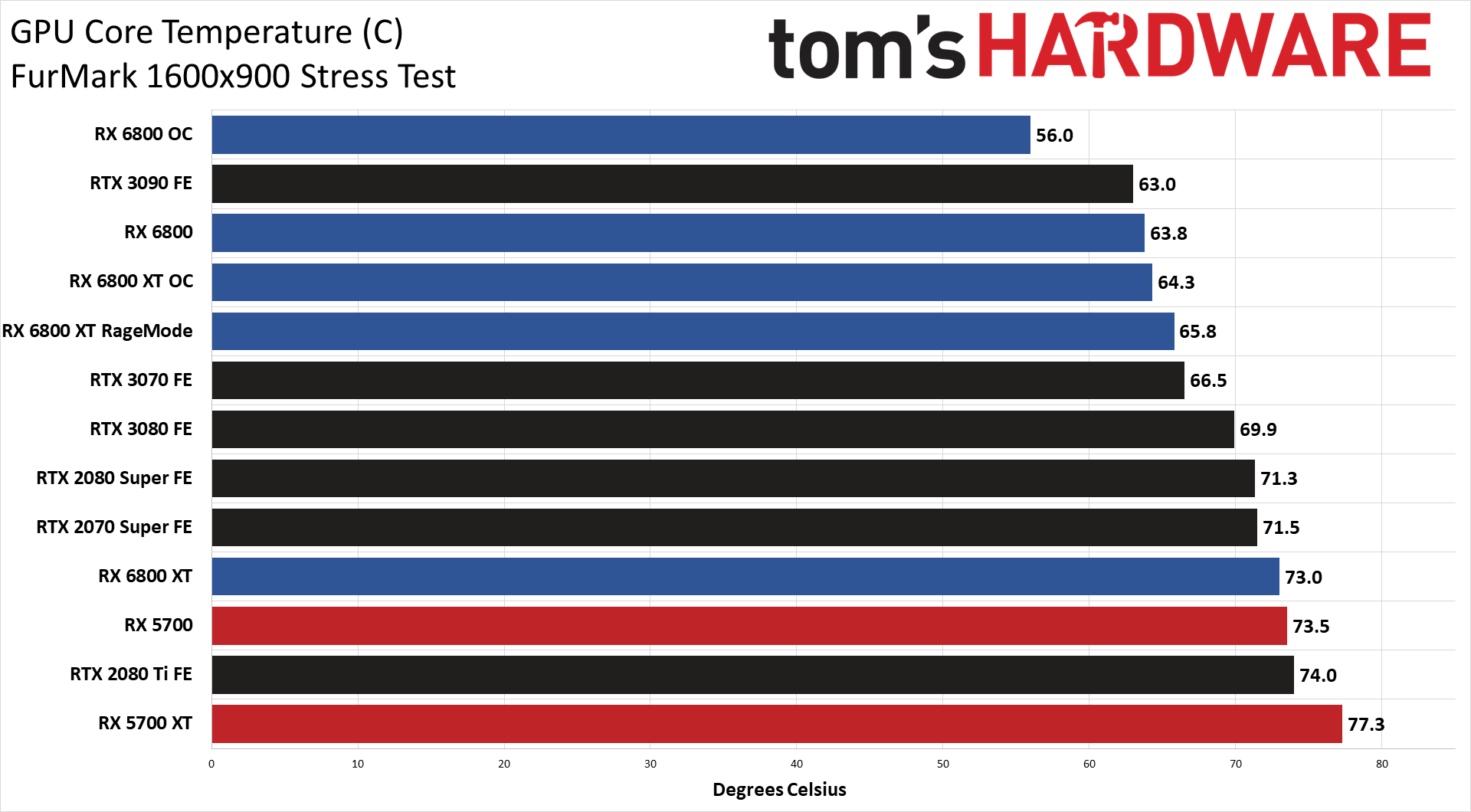

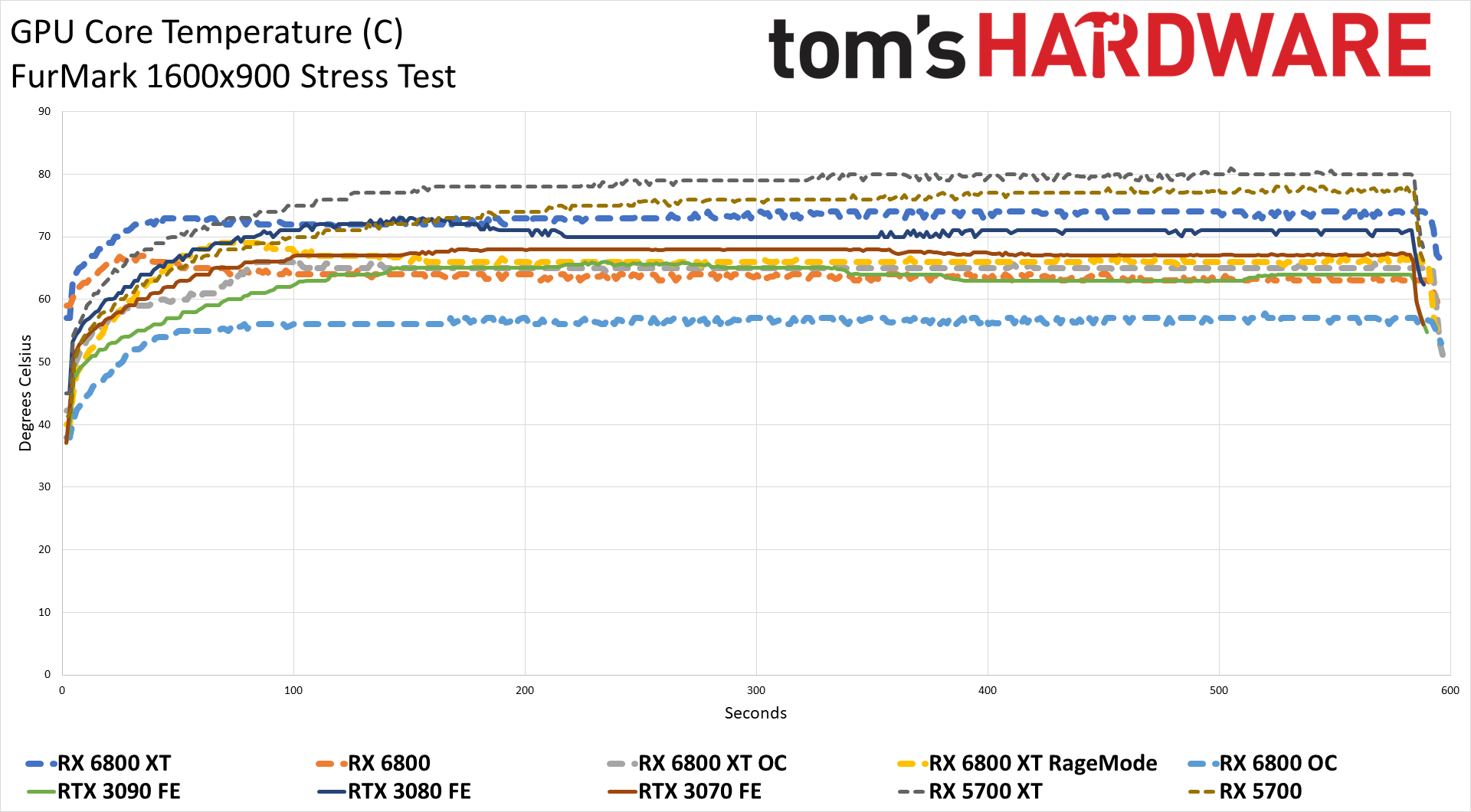

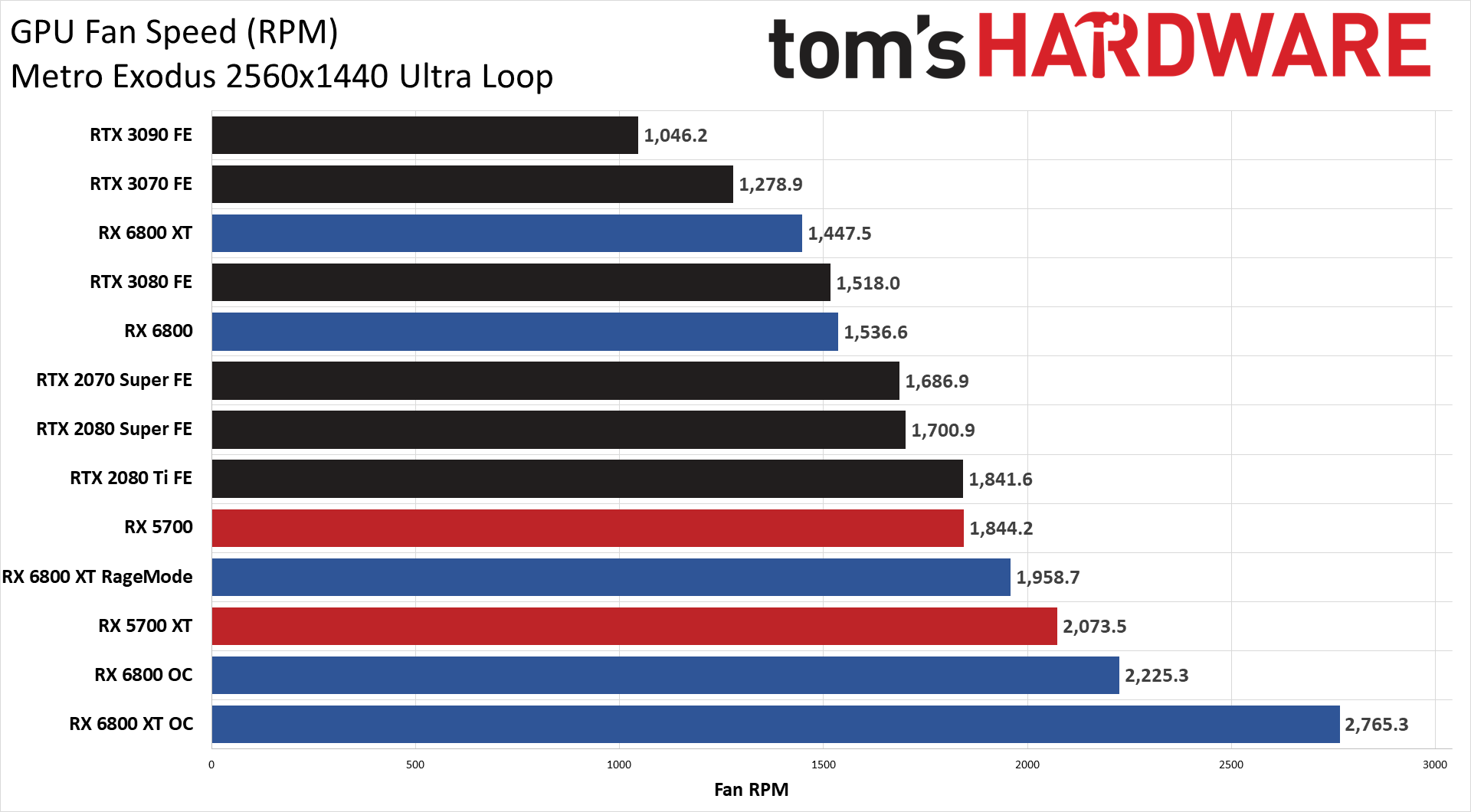

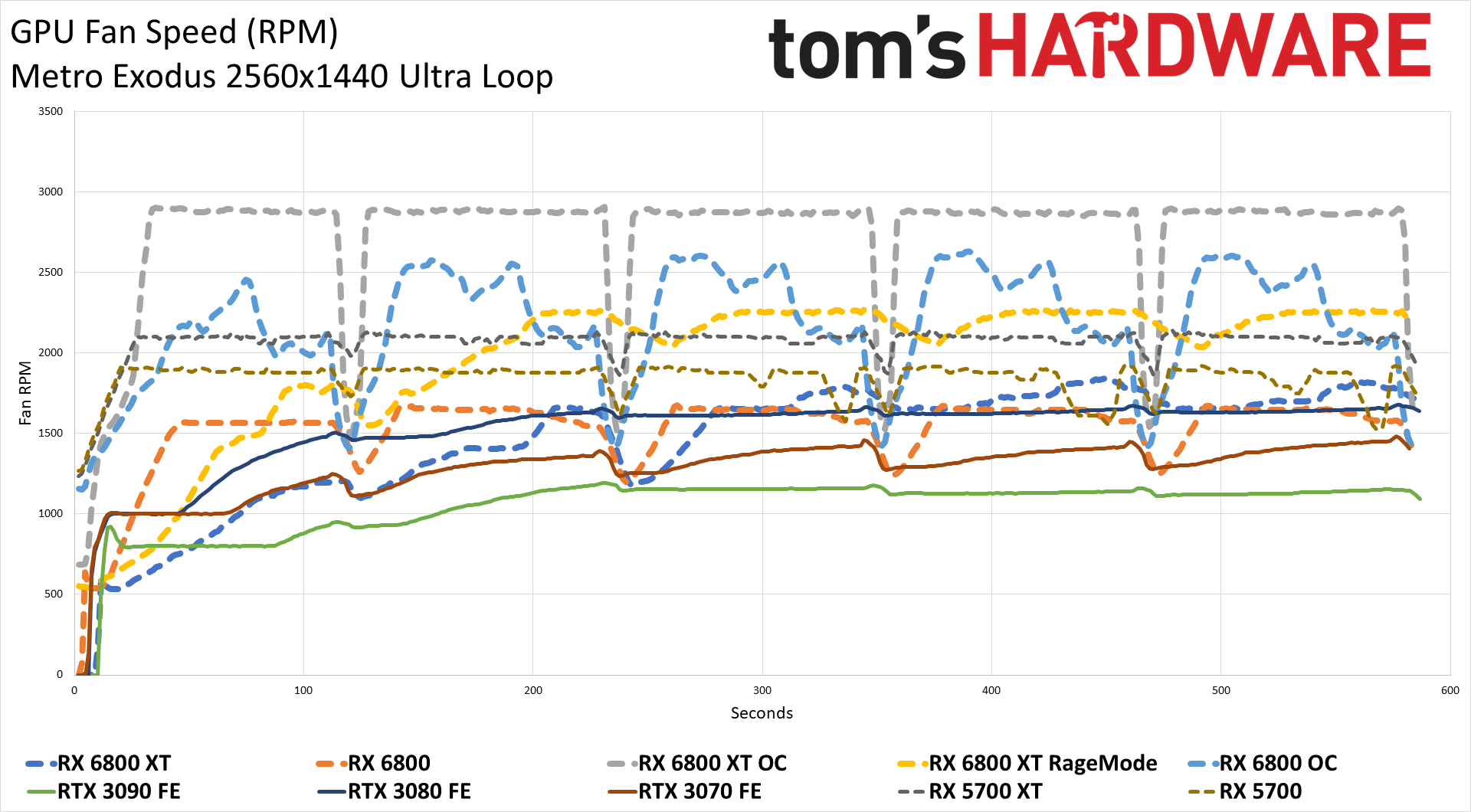

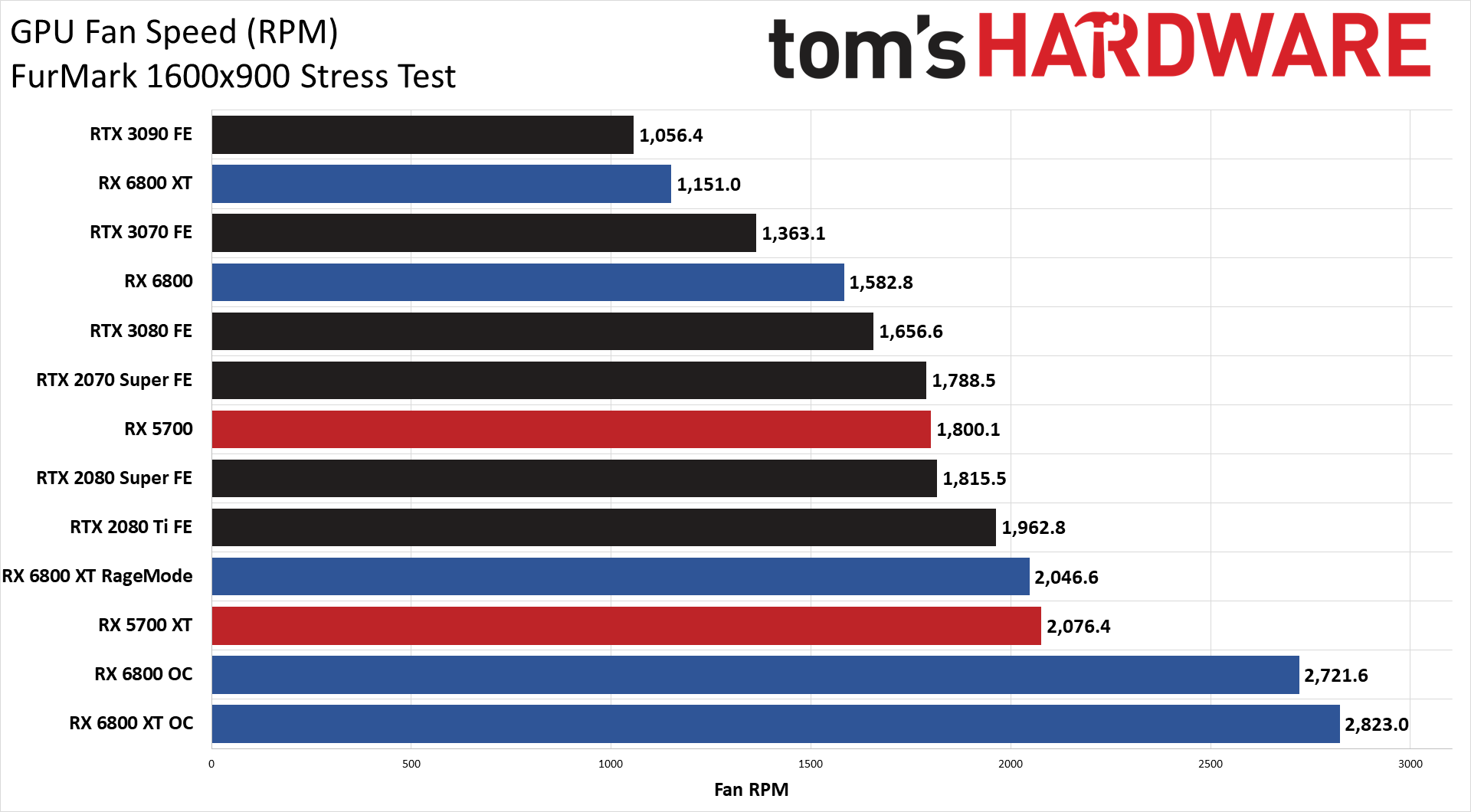

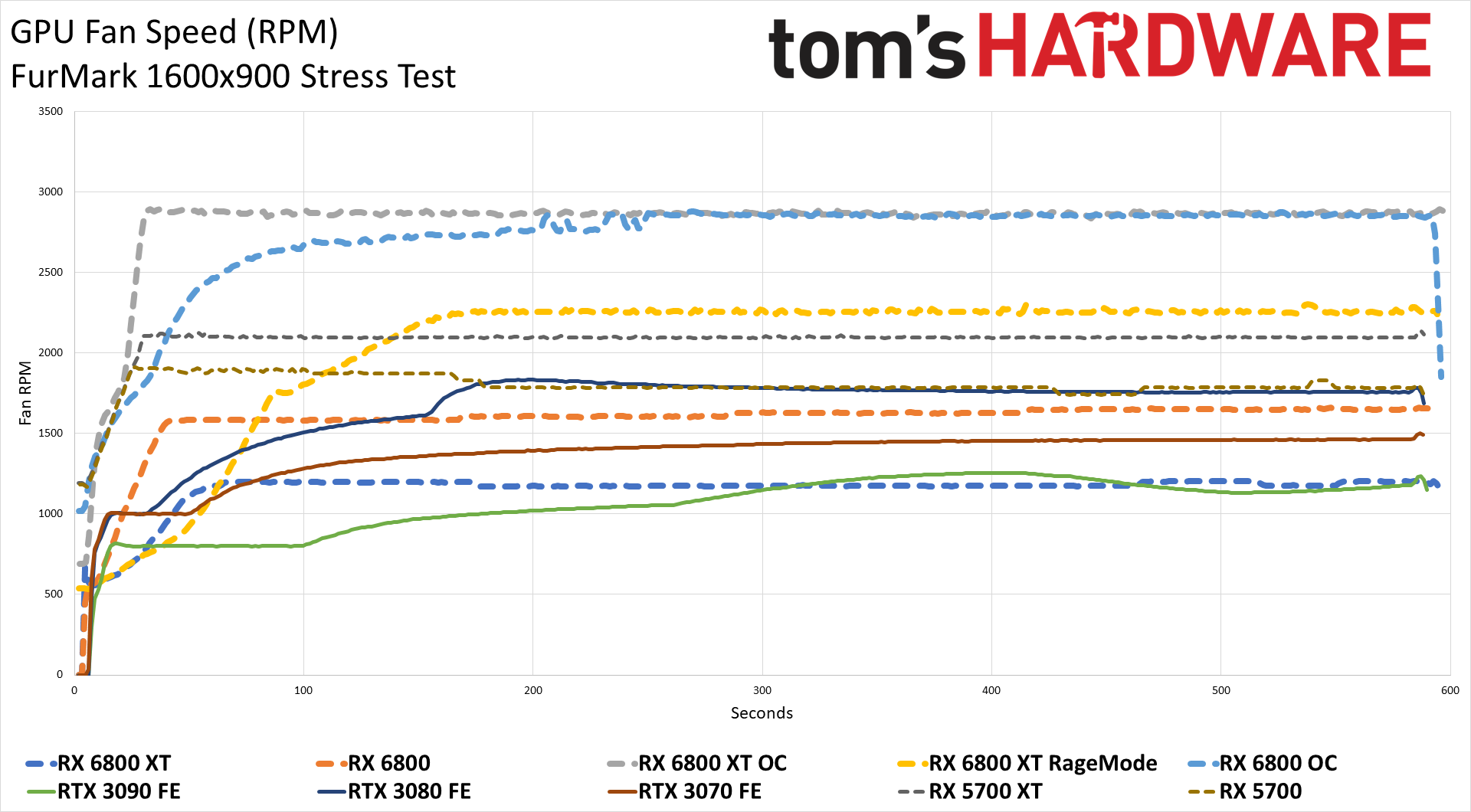

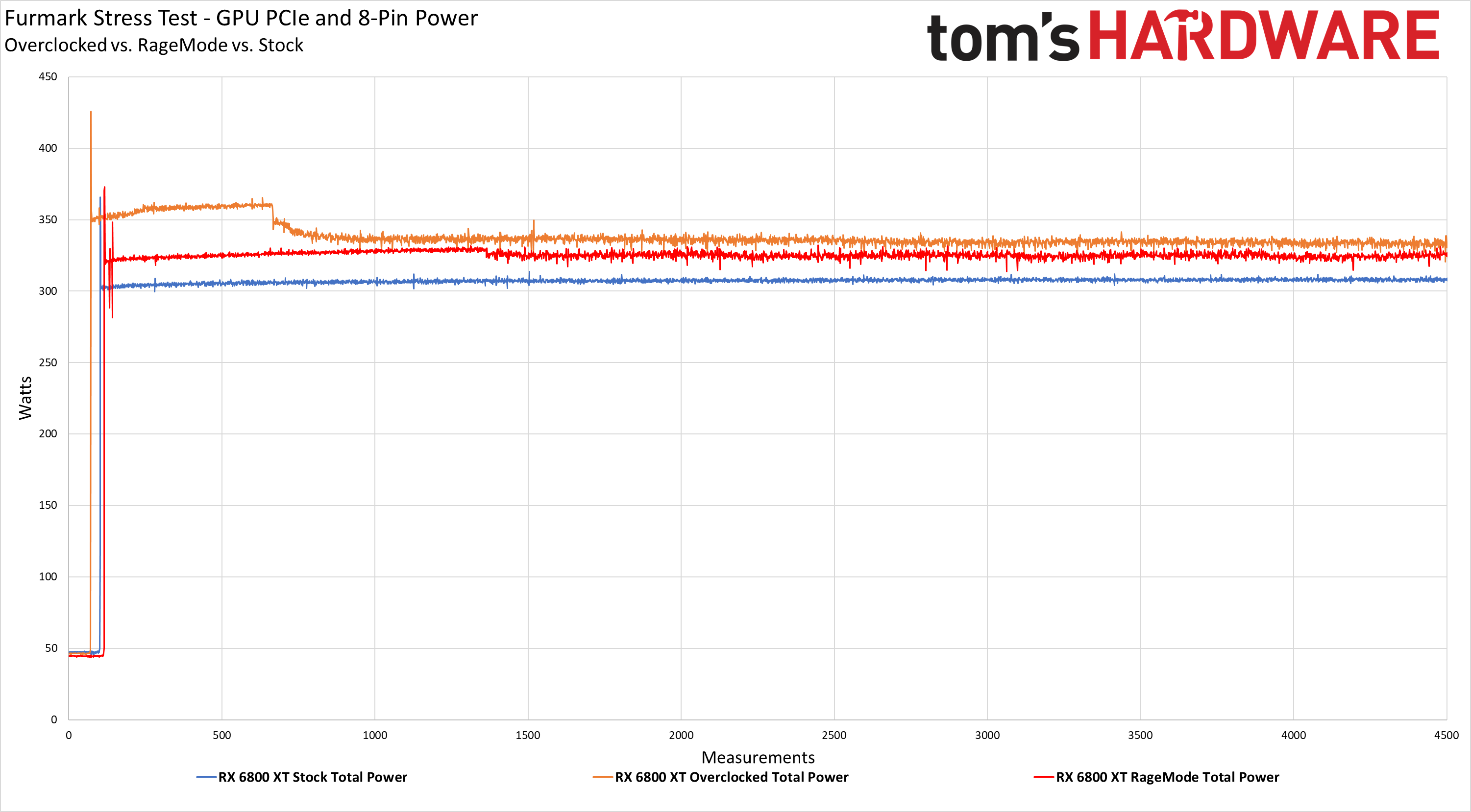

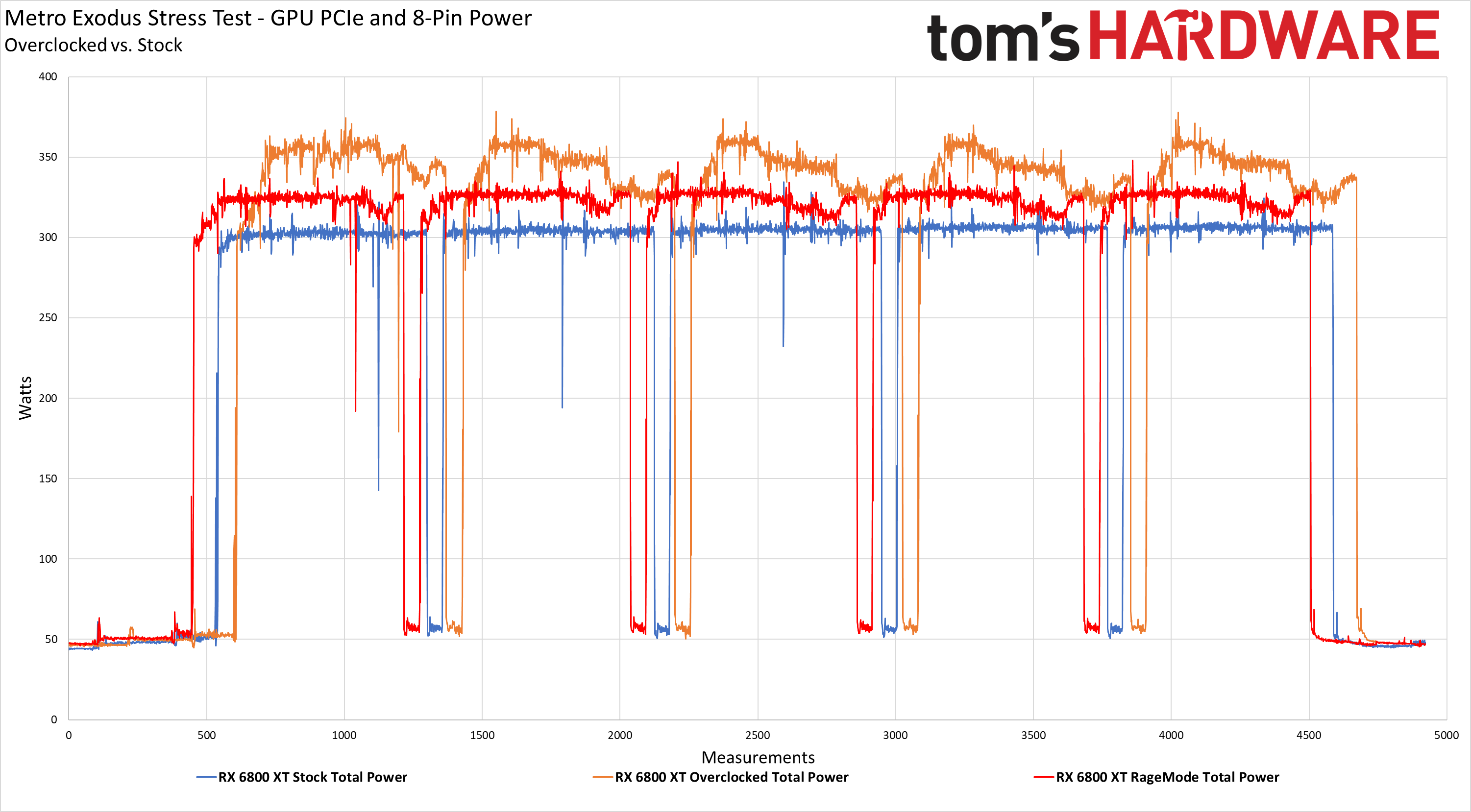

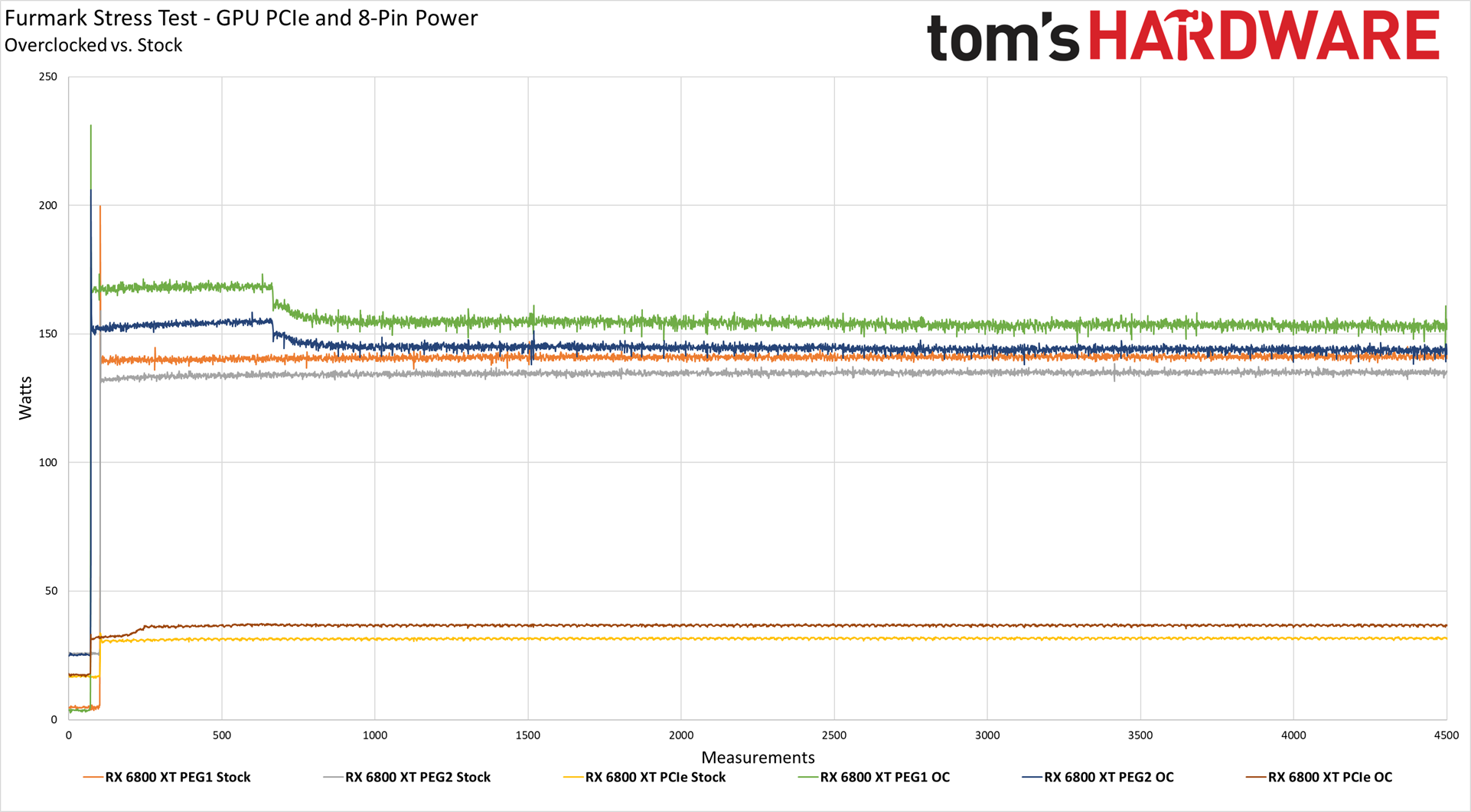

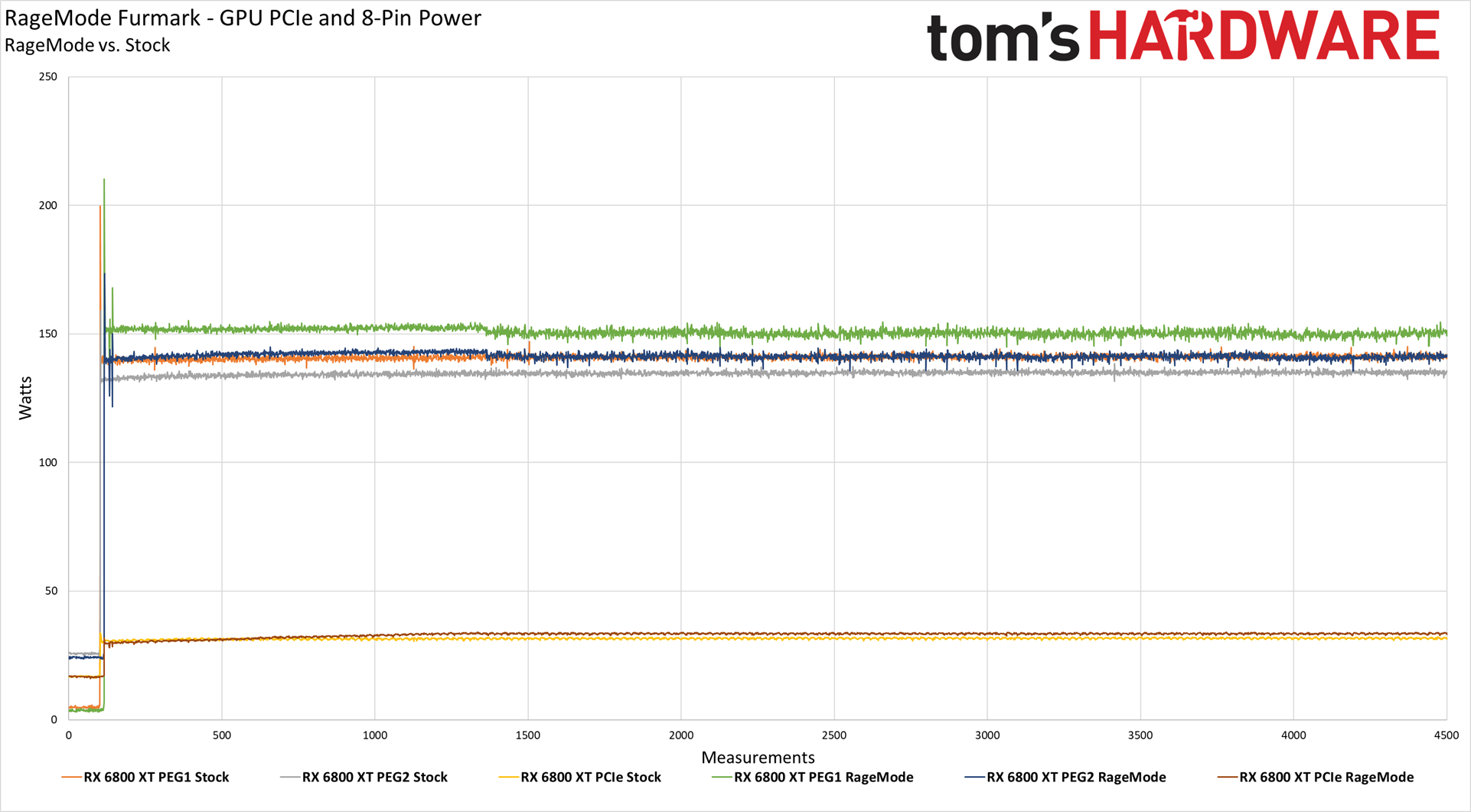

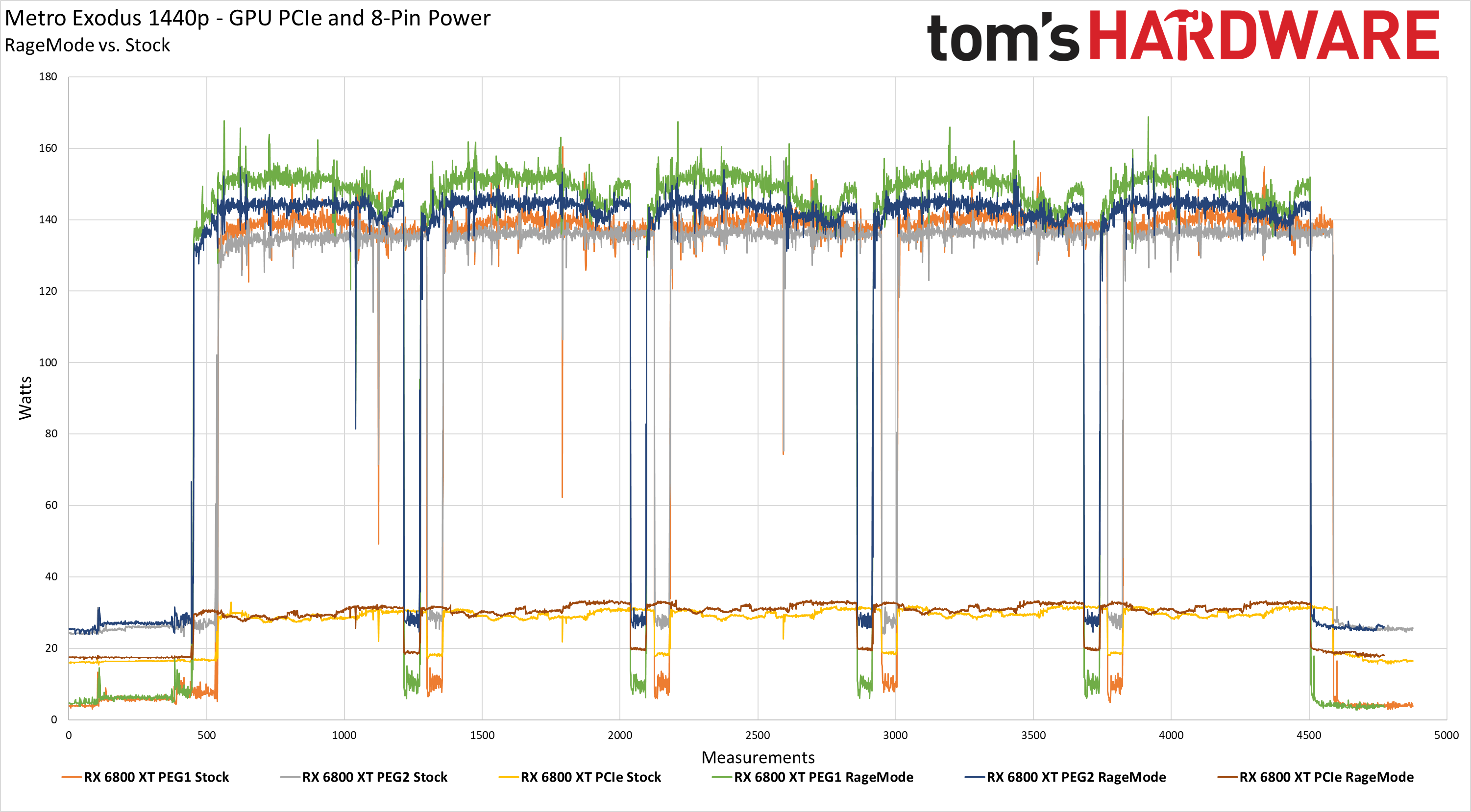

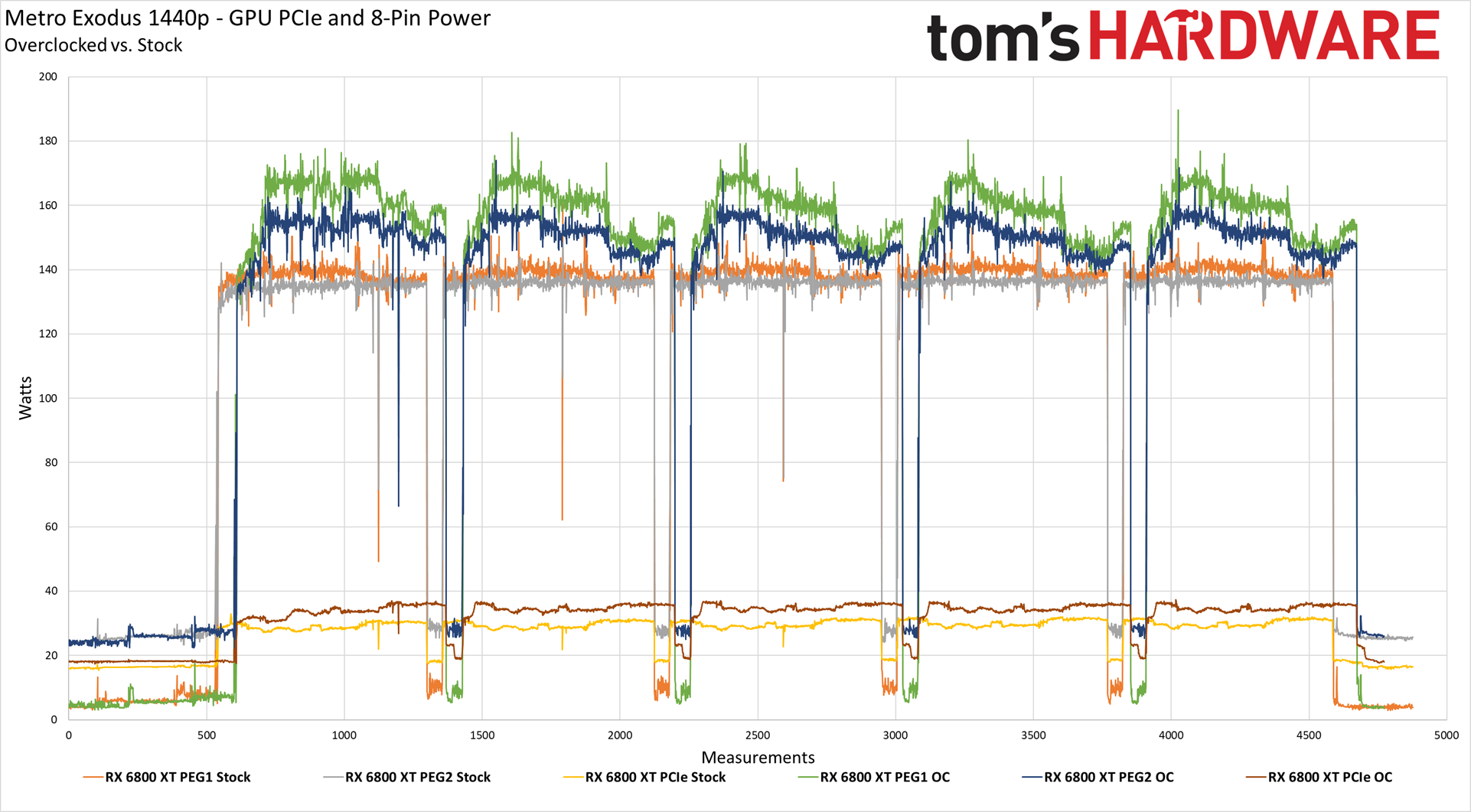

We've got our usual collection of power, temperature, clock speed, and fan speed testing using Metro Exodus running at 1440p, and FurMark running at 1600x900 in stress test mode. While Metro is generally indicative of how other games behave, we loop the benchmark five times, and there are dips where the test restarts and the GPU gets to rest for a few seconds. FurMark, on the other hand, is basically a worst-case scenario for power and thermals. We collect the power data using Powenetics software and hardware, which uses GPU-Z to monitor GPU temperatures, clocks, and fan speeds.

GPU Total Power

AMD basically sticks to the advertised 300W TBP on the 6800 XT with Metro Exodus, and even comes in slightly below the 250W TBP on the RX 6800. Enabling Rage Mode on the 6800 XT obviously changes things, and you can also see our power figures for the manual overclocks. Basically, Big Navi can match the RTX 3080's power consumption if you increase the power limits.

FurMark pushes power on both cards a bit higher, which is pretty typical. If you check the line graphs, you can see our 6800 XT OC starts off at nearly 360W in FurMark before it throttles down a bit and ends up at closer to 350W. There are some transient power spikes that can go a bit higher as well, which we'll discuss more later.

GPU Core Clocks

Looking at the GPU clocks, AMD is pushing some serious MHz for a change. This is now easily the highest clocked GPU we've ever seen, and when we manually overclocked the RX 6800, we were able to hit a relatively stable 2550 MHz. That's pretty damn impressive, especially considering power use isn't higher than Nvidia's GPUs. Both cards also clear their respective Game Clocks and Boost Clocks, which is a nice change of pace.

GPU Core Temp

GPU Fan Speed

Temperatures and fan speeds are directly related to each other. Ramp up fan speed (which we did for the overclocked 6800 cards) and you can get lower temperatures, at the cost of higher noise levels. We're still investigating overclocking as well, as there's a bit of odd behavior so far. The cards will run fine for a while, and then suddenly drop into a weak performance mode where performance might be half the normal level, or even worse. That's probably due to the lack of overclocking support in MSI Afterburner for the time being. By default, though, the cards strike a good balance between cooling and noise. We'll get exact SPL readings later (still benchmarking a few other bits), but it's interesting that all of the new GPUs (RTX 30-series and RX 6000) have lower fan speeds than the previous generation.

We observed some larger-than-expected transient power spikes with the RX 6800 XT, but to be absolutely clear, these transient power spikes shouldn’t be an issue — particularly if you don’t plan on overclocking. However, it is important to keep these peak power measurements in mind when you spec out your power supply.

Transient power spikes are common but are usually of such short duration (in the millisecond range) that our power measurement gear, which records measurements at roughly a 100ms granularity, can’t catch them. Typically you’d need a quality oscilloscope to measure transient power spikes accurately, but we did record several spikes even with our comparatively relaxed polling.
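As a rough illustration of why ~100ms logging usually misses millisecond-scale transients, the sketch below averages a synthetic power trace into 100ms windows. It assumes the logger reports a per-window average (real gear may sample instead), and the 1ms trace resolution, 300W baseline, and single 5ms spike to 450W are hypothetical values, not measurements from our testing.

```python
from typing import List


def window_averages(trace_w: List[float], window_ms: int) -> List[float]:
    """Average a 1 ms-resolution power trace into fixed windows of window_ms samples."""
    averages = []
    for start in range(0, len(trace_w), window_ms):
        chunk = trace_w[start:start + window_ms]
        averages.append(sum(chunk) / len(chunk))
    return averages


# Hypothetical 300 ms trace at 1 ms resolution: a steady 300 W load...
trace = [300.0] * 300
# ...with one 5 ms transient spike to 450 W starting at t = 120 ms.
for t in range(120, 125):
    trace[t] = 450.0

averages = window_averages(trace, window_ms=100)
print("true peak (W):", max(trace))
print("logged 100 ms averages (W):", averages)
```

Under these assumptions, a 150W excursion shows up as only a 7.5W bump (307.5W) in the logged data, which is why an oscilloscope is the right tool for measuring transients accurately.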

The charts above show total power consumption of the RX 6800 XT at stock settings, overclocked, and with Rage Mode enabled. In terms of transient power spikes, we don’t see any issues at all with Metro Exodus, but we see brief peaks during FurMark of 425W with the manually overclocked config, 373W with Rage Mode, and 366W with the stock setup. Again, each of these peaks showed up within a single 100ms polling cycle, which means they could certainly trip a PSU's over-power protection (OPP) if you're running close to its maximum power delivery.

To drill down on the topic, we split out our power measurements from each power source, which you’ll see above. The RX 6800 XT draws power from the PCIe slot and two eight-pin PCIe connectors (PEG1/PEG2).

Power consumption over the PCIe slot is well managed during all the tests (as a general rule of thumb, this value shouldn’t exceed 71W, and the 6800 XT is well below that mark). We also didn’t catch any notable transient spikes during our real-world Metro Exodus gaming test at either stock or overclocked settings.

However, during our FurMark test at stock settings, we see a power consumption spike to 206W on one of the PCIe cables for a very brief period (we picked up a single measurement of the spike during each run). After overclocking, we measured simultaneous spikes of 231W on one cable and 206W on the other, again captured in a single sample at our 100ms polling rate. Naturally, those same spikes are much less pronounced with Rage Mode overclocking, measuring only 210W and 173W. A PCIe cable can easily deliver ~225W safely (even with 18AWG wire), so these transient power spikes aren’t going to melt connectors or wires, or harm the GPU in any way; they would need to last much longer to have that type of impact.
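To put the per-connector numbers in context, this sketch compares each FurMark spike quoted above against two thresholds: the 150W rating the PCIe specification assigns to a single 8-pin connector, and the ~225W practical cable figure cited in the text. The wattages come from the paragraph above; the three-way `classify` helper is a hypothetical illustration, not a safety rule.

```python
# Per-connector FurMark spikes quoted in the review (watts, single 100 ms samples).
SPIKES_W = {
    "stock": [206.0],
    "manual OC": [231.0, 206.0],
    "Rage Mode": [210.0, 173.0],
}

SPEC_8PIN_W = 150.0        # PCIe specification rating for one 8-pin connector
PRACTICAL_CABLE_W = 225.0  # the text's "~225W safely" figure for one cable


def classify(watts: float) -> str:
    """Label a spike relative to the nominal rating and the practical cable figure."""
    if watts <= SPEC_8PIN_W:
        return "within 8-pin rating"
    if watts <= PRACTICAL_CABLE_W:
        return "over rating, under practical cable figure"
    return "over practical cable figure"


for config, values in SPIKES_W.items():
    for w in values:
        print(f"{config:>9}: {w:5.0f} W -> {classify(w)}")
```

Every spike exceeds the 8-pin connector's nominal rating, and the 231W overclocked spike edges past even the ~225W figure, which is why the very short duration of these spikes is what keeps them harmless.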

But the transient spikes are noteworthy because some CPUs, like the Intel Core i9-9900K and i9-10900K, can consume more than 300W, adding to the total system power draw. If you plan on overclocking, it's best to factor the RX 6800 XT's transient power consumption into your total system power budget.
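One way to fold the transients into a PSU budget is a simple worst-case sum plus headroom, sketched below. The 425W GPU figure is our manual-overclock FurMark peak and the 300W CPU figure comes from the paragraph above; the 75W rest-of-system allowance and the 20% headroom are hypothetical placeholders to replace with your own numbers. This is a back-of-the-envelope estimate, not a PSU recommendation.

```python
import math


def psu_budget_w(gpu_peak_w: float, cpu_peak_w: float,
                 rest_of_system_w: float, headroom_pct: int) -> int:
    """Worst-case component sum plus a percentage of headroom, rounded up to whole watts."""
    total = gpu_peak_w + cpu_peak_w + rest_of_system_w
    return math.ceil(total * (100 + headroom_pct) / 100)


budget = psu_budget_w(
    gpu_peak_w=425.0,       # manual-OC FurMark transient from the text
    cpu_peak_w=300.0,       # "more than 300W" CPU figure from the text
    rest_of_system_w=75.0,  # hypothetical allowance: board, RAM, storage, fans
    headroom_pct=20,        # hypothetical safety margin
)
print(f"estimated PSU budget: {budget} W")
```

With these inputs the estimate lands at 960W. Stacking a FurMark GPU peak on top of a maxed-out CPU is deliberately pessimistic, since the two components may not peak at the same instant in real workloads.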

Power spikes of 5-10ms can trip the overcurrent protection (OCP) on some multi-rail power supplies, because those units tend to have relatively low per-rail OCP thresholds. As usual, a PSU with a single 12V rail tends to be the best solution, because such units have much better OCP mechanisms, and you’re also better off using a dedicated PCIe cable for each 8-pin connector.
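Since OCP trips on current rather than watts, it helps to convert the spikes to amps on the 12V rail (I = P / 12V). The sketch below uses the overclocked 231W and 206W cable spikes from the text and a hypothetical 30A per-rail OCP limit, and it assumes each dedicated cable maps to its own rail on a multi-rail unit. Real thresholds and rail layouts vary by PSU model, and the sketch ignores both the PCIe slot's share of the load and the spike duration.

```python
RAIL_VOLTAGE_V = 12.0
HYPOTHETICAL_RAIL_OCP_A = 30.0  # assumption: real per-rail limits vary by PSU model


def amps(watts: float) -> float:
    """Current drawn from the 12V rail for a given load (I = P / V)."""
    return watts / RAIL_VOLTAGE_V


def trips_ocp(load_w: float, ocp_limit_a: float = HYPOTHETICAL_RAIL_OCP_A) -> bool:
    """True if the load's current exceeds the rail's OCP threshold."""
    return amps(load_w) > ocp_limit_a


cable_spikes_w = [231.0, 206.0]  # simultaneous overclocked spikes from the text

# Case 1: each 8-pin cable on its own rail (assumed for dedicated cables).
for w in cable_spikes_w:
    print(f"own rail: {w:.0f} W = {amps(w):.1f} A, trips OCP: {trips_ocp(w)}")

# Case 2: both connectors fed from one rail.
shared_w = sum(cable_spikes_w)
print(f"shared rail: {shared_w:.0f} W = {amps(shared_w):.1f} A, trips OCP: {trips_ocp(shared_w)}")
```

Under those assumptions, each cable on its own rail stays under the limit (about 19.3A and 17.2A), while both connectors on one rail draw about 36.4A and would trip it, which illustrates why spreading the load or using a single-rail unit reduces the risk.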

Radeon RX 6800 Series: Prioritizing Rasterization Over Ray Tracing

It's been a long time since AMD had a legitimate contender for the GPU throne. The last time AMD was this close … well, maybe Hawaii (Radeon R9 290X) was competitive in performance at least, while using quite a bit more power. That's sort of been the standard disclaimer for AMD GPUs for quite a few years. Yes, AMD has some fast GPUs, but they tend to use a lot of power. The other alternative was best illustrated by one of the best budget GPUs of the past couple of years: AMD isn't the fastest, but dang, look how cheap the RX 570 is! With the Radeon RX 6800 series, AMD is mostly able to put questions of power and performance behind it. Mostly.

The RX 6800 XT ends up just a bit slower than the RTX 3080 overall in traditional rendering, but it costs less, and it uses a bit less power (unless you kick on Rage Mode, in which case it's a tie). There are enough games where AMD comes out ahead that you can make a legitimate case for AMD having the better card. Plus, 16GB of VRAM is definitely helpful in a few of the games we tested — or at least, 8GB isn't enough in some cases. The RX 6800 does even better against the RTX 3070, generally winning most benchmarks by a decent margin. Of course, it costs more, but if you have to pick between the 6800 and 3070, we'd spend the extra $80.

The problem is, that's a slippery slope. At that point, we'd also spend an extra $70 to go to the RX 6800 XT … and $50 more for the RTX 3080, with its superior ray tracing and support for DLSS, is easy enough to justify. Now we're looking at a $700 graphics card instead of a $500 graphics card, but at least it's a decent jump in performance.
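The slippery slope is easy to see by laying the four cards out by price. The figures below are the US launch MSRPs ($499, $579, $649, $699), which match the dollar gaps quoted in the text; `step_costs` is just an illustrative helper.

```python
from typing import List, Tuple

# US launch MSRPs in dollars, ordered by price.
LADDER: List[Tuple[str, int]] = [
    ("RTX 3070", 499),
    ("RX 6800", 579),
    ("RX 6800 XT", 649),
    ("RTX 3080", 699),
]


def step_costs(prices: List[Tuple[str, int]]) -> List[Tuple[str, str, int]]:
    """Return (lower card, next card, extra dollars) for each step up the ladder."""
    return [(lo[0], hi[0], hi[1] - lo[1]) for lo, hi in zip(prices, prices[1:])]


for lower, higher, extra in step_costs(LADDER):
    print(f"{lower} -> {higher}: +${extra}")
print(f"total climb: +${LADDER[-1][1] - LADDER[0][1]}")
```

Each individual step is $80 or less, but the full climb from the RTX 3070 to the RTX 3080 adds $200.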

Of course, you can't buy any of the Nvidia RTX 30-series GPUs right now. Well, you can, if you get lucky. It's not that Nvidia isn't producing cards; it's just not producing enough cards to satisfy the demand. And, let's be real for a moment: There's not a chance in hell AMD's RX 6800 series are going to do any better. Sorry to be the bearer of bad news, but these cards are going to sell out. You know, just like every other high-end GPU and CPU launched in the past couple of months. (Update: Yup, every RX 6800 series GPU sold out within minutes.)

What's more, AMD is better off producing more Ryzen 5000 series CPUs than Radeon RX 6000 GPUs. Just look at the chip sizes and other components. A Ryzen 9 5900X has two 80 mm² compute dies with a 12nm IO die in a relatively compact package, and AMD is currently selling every single one of those CPUs for $550 (or $800 for the 5950X). The Navi 21 GPU, by comparison, is made on the same TSMC N7 wafers, but it measures 519 mm², plus it needs GDDR6 memory, a beefy cooler and fan, and all sorts of other components. And it still only sells for roughly the same price as the 5900X.
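A back-of-the-envelope way to quantify the silicon argument is the common first-order dies-per-wafer approximation, DPW ≈ πr²/A − πd/√(2A), for a 300mm wafer. The die areas are the ~80 mm² compute die and 519 mm² Navi 21 figures from the text. The formula ignores defect yield, scribe lines, and edge exclusion, and the 5900X's 12nm IO die is made separately, so it isn't counted against N7 capacity.

```python
import math

WAFER_DIAMETER_MM = 300.0


def gross_dies_per_wafer(die_area_mm2: float,
                         wafer_diameter_mm: float = WAFER_DIAMETER_MM) -> int:
    """First-order gross die estimate: wafer area / die area minus an edge-loss term.

    Ignores defect yield, scribe lines, and edge exclusion.
    """
    radius = wafer_diameter_mm / 2.0
    wafer_area = math.pi * radius ** 2
    edge_loss = math.pi * wafer_diameter_mm / math.sqrt(2.0 * die_area_mm2)
    return math.floor(wafer_area / die_area_mm2 - edge_loss)


ccd = gross_dies_per_wafer(80.0)      # ~80 mm^2 compute die (text)
navi21 = gross_dies_per_wafer(519.0)  # 519 mm^2 Navi 21 (text)
print(f"compute dies per wafer: {ccd} -> about {ccd // 2} Ryzen 9 5900X CPUs")
print(f"Navi 21 dies per wafer: {navi21}")
```

Under those simplifications, one N7 wafer yields roughly 809 compute dies (about 404 Ryzen 9 5900X CPUs) versus roughly 106 Navi 21 GPUs, and larger dies typically lose a bigger share to defects, which only widens the gap.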

Which isn't to say you shouldn't want to buy an RX 6800 card. It's really going to come down to personal opinions on how important ray tracing will become in the coming years. The consoles now support the technology, but even the Xbox Series X can't keep up with an RX 6800, never mind an RTX 3080. Plus, while some games like Control make great use of ray tracing effects, in many other games, the ray tracing could be disabled, and most people wouldn't really miss it. We're still quite a ways off from anything approaching Hollywood levels of fidelity rendered in real time.

In terms of features, Nvidia still comes out ahead. Faster ray tracing, plus DLSS — and whatever else those Tensor cores might be used for in the future — seems like the safer bet long term. But there are still a lot of games forgoing ray tracing effects, or games where ray tracing doesn't make a lot of sense considering how it causes frame rates to plummet. Fortnite in creative mode might be fine for ray tracing, but I can't imagine many competitive players being willing to tank performance just for some eye candy. The same goes for Call of Duty. But then there's Cyberpunk 2077 looming, which could be the killer game that ray tracing hardware needs.

We asked earlier if Big Navi, aka RDNA2, was AMD's Ryzen moment for its GPUs. In a lot of ways, it's exactly that. The first generation Ryzen CPUs brought 8-core CPUs to mainstream platforms, with aggressive prices that Intel had avoided. But the first generation Zen CPUs and motherboards were raw and had some issues, and it wasn't until Zen 2 that AMD really started winning key matchups, and Zen 3 finally has AMD in the lead. Perhaps it's better to say that Navi, in general, is AMD trying to repeat what it did on the CPU side of things.

The RX 6800 series (Navi 21) is literally a bigger, enhanced version of last year's Navi 10 GPU. It has up to twice the CUs and twice the memory, and it's at least a big step closer to feature parity with Nvidia now. If you can find a Radeon RX 6800 or RX 6800 XT in stock any time before 2021, it's definitely worth considering. The RX 6800 series isn't priced particularly aggressively, but the cards slot in nicely just above and below Nvidia's competing RTX 3070 and 3080.
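To put "up to twice the CUs" in throughput terms, here is the usual peak FP32 calculation for RDNA-family GPUs: CUs × 64 shaders per CU × 2 FLOPs per fused multiply-add × clock. The CU counts and boost clocks used (40 CUs at 1905 MHz for the RX 5700 XT, 60 at 2105 MHz for the RX 6800, 72 at 2250 MHz for the RX 6800 XT) are AMD's official specs; these are theoretical peaks, not predictions of game performance.

```python
def fp32_tflops(compute_units: int, boost_mhz: float, shaders_per_cu: int = 64) -> float:
    """Peak FP32 TFLOPS: CUs x shaders per CU x 2 FLOPs per FMA x clock."""
    flops = compute_units * shaders_per_cu * 2 * boost_mhz * 1e6
    return flops / 1e12


CARDS = {
    "RX 5700 XT (Navi 10)": (40, 1905),
    "RX 6800 (Navi 21)": (60, 2105),
    "RX 6800 XT (Navi 21)": (72, 2250),
}

for name, (cus, mhz) in CARDS.items():
    print(f"{name}: {fp32_tflops(cus, mhz):.2f} TFLOPS")
```

That works out to roughly 9.75, 16.17, and 20.74 TFLOPS respectively, so the RX 6800 XT offers a bit over twice the 5700 XT's peak FP32 throughput.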

Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.


giorgiog As someone with an EVGA 3080 FTW... who gives a <Mod Edit> about raytracing? I turn it off in CoD CW to get way better framerates at 4k. Raytracing isn't worth it. Yes it's beautiful, but hardly anything more than a marketing gimmick at this point. If you can get a 3080 or a 6800, enjoy your awesome video card!

dmoros78v After watching the Digital Foundry video comparing Watch Dogs Legion's ray tracing on the Xbox Series X with the PC version, I already suspected what was found in this review. Digital Foundry found that an RTX 2060 Super with the console's ray tracing settings offered the same (and sometimes better) performance as the console version... and that's a "mere" 7.2 TFLOPS GPU, so rasterizing performance surely was not the culprit; ray tracing performance had to be, and this review just confirmed it. And that was without DLSS! Enter DLSS and the 2060 Super beats the console every time.

digitalgriffin Ray tracing uses a random point sampling denoising feature between frames to reduce the number of samples necessary. The fewer samples you take, the blurrier the image looks. Looks like AMD is really reducing this sampling in an effort to speed up ray tracing. I think they reduced the size of the bounding boxes as well to reduce collisions. Some light reflections that show up in NVIDIA's RT fail to show up on AMD's.

One way to test whether they are using temporal AA is to look at scenes with sudden lighting changes. "After glows" appear when lights suddenly flip off, causing temporary "ghost images."

The quality is obviously suffering.

digitalgriffin (replying to giorgiog):

Plenty of people do, including Sony and Microsoft, who both promote it as the "next big thing" on their consoles.

Not all games are trigger-happy twitch fests. Some are more experiential, where quality matters.

JarredWaltonGPU (replying to digitalgriffin):

This should be up to the game dev, though, not the GPU drivers or whatever. DXR generally says, "trace these rays" and expects an answer. Not "trace some of these rays and give me your best guess."

dmoros78v (replying to giorgiog):

That's the thing: that's your opinion, which is mostly driven by what you value more (fps), but not everyone thinks the same. For CoD or any other first-person shooter I agree, but for other games like Control, Watch Dogs, Tomb Raider, or most "cinematic" third-person games, I will prefer eye candy over fps every time. I'm more than happy with 30 fps in those types of games with maximum eye candy, also because 30 fps looks more "cinematic." (Do you remember all the criticism Peter Jackson got for shooting The Hobbit at 48 fps, and how it looked like a "soap opera" and not like a big-budget movie because of it? Well, I think most people agree, as the experiment has not been repeated, and we are still watching movies at 24 fps.)

sizzling (replying to giorgiog):

At 1440p 144Hz using a 3080, I like having RT on in COD MW while getting over 120-130 fps, often over 140 fps depending on the map. If you're really bothered about very high fps, why would you choose to game at 4K?

Phaaze88 Ohh SNAP!

A) Nvidia changing FP32 compute on RTX 30 gave AMD an edge at 1080p... but I'm still on the fence about spending that kind of money on that puny little resolution.

Sorry to 1080p gamers, but that's not a cost-effective investment.

B) AMD also has longer-lived driver support (sans Radeon 7, coughcough) compared to Nvidia.

So for people who tend to hold on to GPUs for a while, the RX 6800/XT should age a bit better than the 3080.

C) Well, look at that... AMD isn't the power hog here either, LOL.

D) A shame about their reference cooler, though.

E) Ray tracing is something I can live without on both ends. Higher resolution > RT, from my perspective.

RodroX I'm guessing there's still a lot of work to do on the developer side, and probably on the driver side. But it looks pretty impressive compared to the RX 5000 series.

I think RT is worth it; on the other hand, I can live without it, for now.

Time will tell if AMD can adjust the software to bring at least the same quality as Nvidia, but if the performance remains close to what this review shows, then I guess we will have to wait for the RX 7000 series. Maybe then RT on AMD will be worth our time and money.

jeremyj_83 @JarredWaltonGPU While the graphs show the fan speed, how was the noise on your test bench overall? Was it akin to the old reference designs, or actually quiet for once?