Abrash Predicts The Future Of VR

As is his tradition at Oculus Connect, Michael Abrash, Oculus' Chief Scientist, closed out the opening keynote of the event with his thoughts on the near and distant future of VR. If anyone in the industry can give us an educated guess as to where VR is headed, it's Abrash.

Throughout the nearly four-year development cycle of the Rift, Oculus continuously refined the hardware and improved its form and function along the way. Now that the Rift is a shipping product, Oculus is looking to the future and exploring what virtual reality hardware could become in the next few years.

Earlier in the Oculus Connect 3 opening keynote, Facebook CEO Mark Zuckerberg revealed a sneak peek at Project Santa Cruz, a tether-free Rift that features inside-out tracking and is powered by a mobile SoC. Inside-out tracking and tether-free VR are obvious “next steps” for VR hardware development, but Abrash expounded on some of the less obvious features that next generation VR could and should have.

“VR five years from now will make VR from today look like something out of prehistory.”

Today’s top-tier virtual reality hardware is nothing short of incredible. The Oculus Rift and the HTC Vive are capable of tricking your mind into believing that you’re in a whole new world if you let them, but if you’re determined, it’s not hard to find subtle cues (such as imperfect visuals) that pull you back to reality. The VR industry has a long way to go before what we see in our HMDs becomes indistinguishable from the real world.

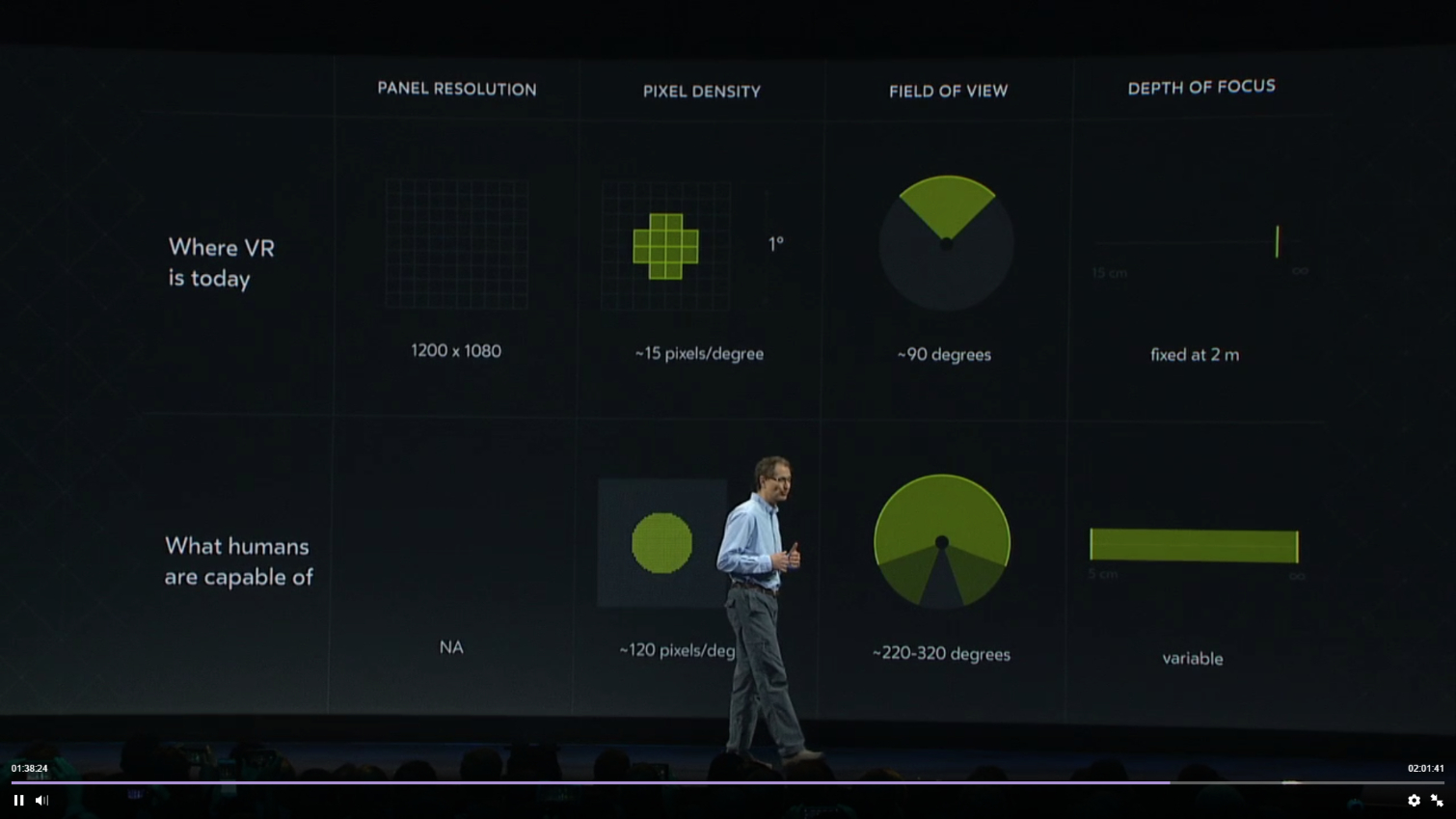

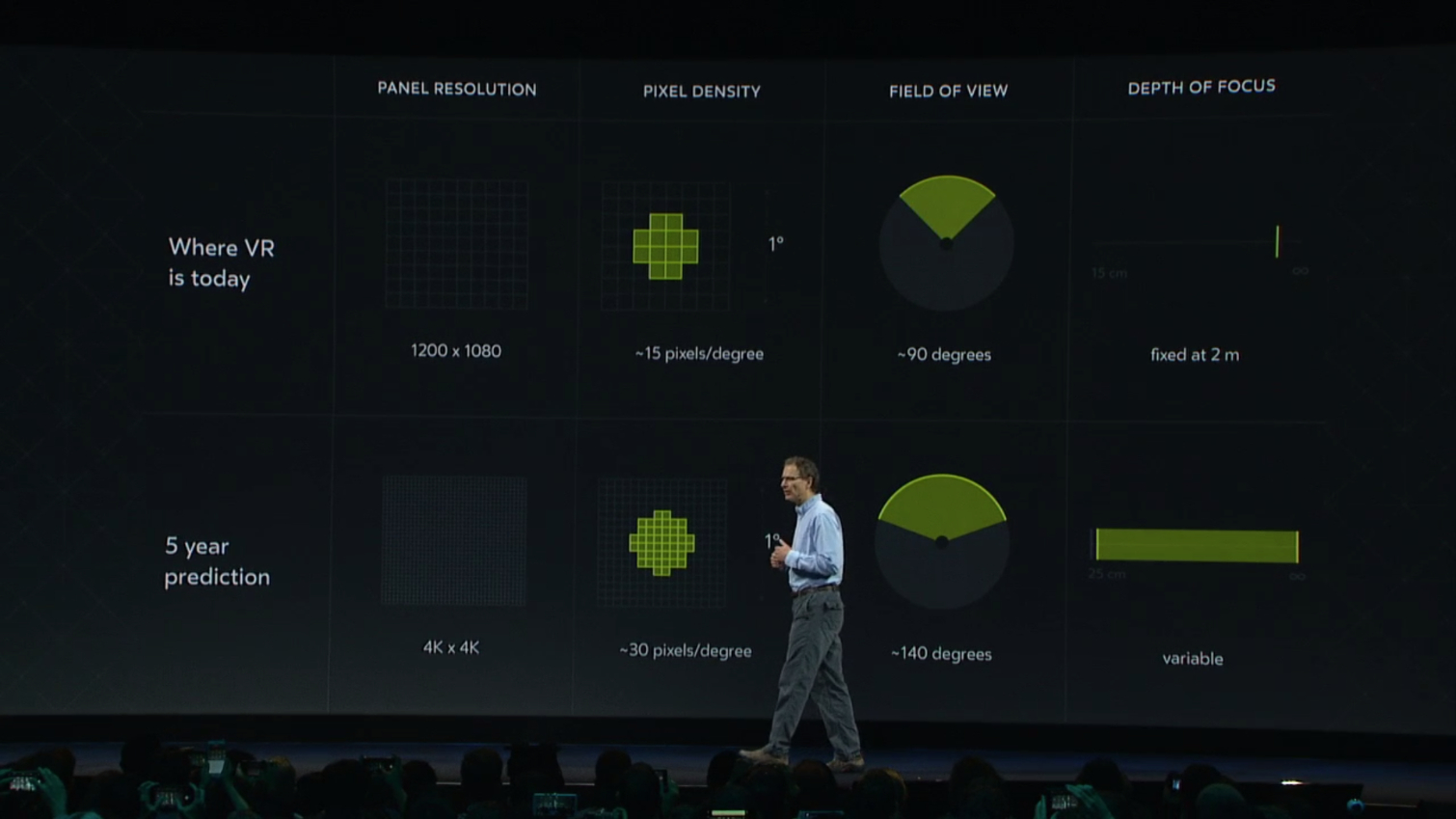

According to Abrash, the human visual system is capable of perceiving 120 pixels/degree over the span of at least 220 degrees. The current Rift hardware features two 1080x1200 panels, which provide roughly 15 pixels/degree over a roughly 90-degree field of view (FOV). The current Rift, then, has miles to go before it can max out the human visual system. Abrash believes that even in five years’ time, VR will still be a long way from reproducing the equivalent of 20/20 human vision.

Abrash expects that by 2021, the technology will be available to build an HMD that offers 4K x 4K per eye, which would bring the pixel density up to 30 pixels/degree. But that's still a far cry from what the human eye is capable of perceiving. Abrash expects that a wider FOV will make for a more compelling experience than simply increasing the pixel density, and he suggested that 140 degrees is a realistic expectation. Abrash noted that he doesn’t believe that it’s possible to adapt Fresnel lenses to work well beyond a 100-degree FOV, so he expects that someone will develop a new lens technology to get past that problem.
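To put those figures side by side, here is a rough back-of-the-envelope sketch. It assumes pixels are spread evenly across the FOV, which real lenses don't do, so the results are averages and won't exactly match the quoted numbers. The function names are ours, and we're assuming "4K" means 4,096 horizontal pixels and that the Rift has 1,080 horizontal pixels per eye.

```python
def pixels_per_degree(horizontal_pixels: int, fov_degrees: float) -> float:
    """Average angular pixel density, assuming an even spread across the FOV."""
    return horizontal_pixels / fov_degrees


def pixels_needed(target_ppd: float, fov_degrees: float) -> int:
    """Horizontal pixels required to hit a target density over a given FOV."""
    return round(target_ppd * fov_degrees)


# Current Rift: 1,080 horizontal pixels per eye over roughly 90 degrees (assumed).
rift_ppd = pixels_per_degree(1080, 90)

# Abrash's 2021 prediction: 4K per eye over 140 degrees (4,096 pixels assumed).
future_ppd = pixels_per_degree(4096, 140)

# Human vision per Abrash: 120 pixels/degree over 220 degrees.
human_width = pixels_needed(120, 220)

print(f"Rift average:   {rift_ppd:.1f} pixels/degree")
print(f"2021 estimate:  {future_ppd:.1f} pixels/degree")
print(f"Human-level:    {human_width} pixels across")
```

Even under these simplified assumptions, matching the human visual system would take tens of thousands of pixels across the full field of view, which helps explain why Abrash doesn't expect to get there in five years.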

We have to imagine that Starbreeze would likely disagree with Abrash about the lenses, because the StarVR HMD offers a 210-degree FOV with custom Fresnel lenses.

“It may be necessary to redesign the entire graphics rendering pipeline.”

Today, VR demands the most out of your computer. Your graphics card must push the scene at 90fps to provide a smooth experience. Most current GPUs can handle the workload, but nothing available today is capable of rendering VR content at 4K x 4K per eye at 90fps. Graphics processing capabilities must increase by an order of magnitude so that VR hardware can advance. Foveated rendering will likely play an integral role in rendering VR content on such high-resolution displays, but Abrash speculated that GPU manufacturers might have to go back to the drawing board and come up with a new approach to the graphics pipeline--especially if they hope to make the HMD wireless.
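The "order of magnitude" claim is easy to sanity-check with raw pixel counts. The sketch below is only that: it counts displayed pixels per second and ignores render-target scaling, overdraw, and everything else a real GPU workload involves. The function name is ours, and we're again assuming 4K means 4,096 pixels on a side and 1,080 x 1,200 per eye for the current Rift.

```python
def pixels_per_second(width: int, height: int, eyes: int = 2, fps: int = 90) -> int:
    """Displayed pixels per second across both eyes at a given refresh rate."""
    return width * height * eyes * fps


# Current Rift: 1,080 x 1,200 per eye at 90fps (assumed panel layout).
current = pixels_per_second(1080, 1200)

# Predicted 2021 HMD: 4,096 x 4,096 per eye at 90fps (assumed meaning of "4K x 4K").
future = pixels_per_second(4096, 4096)

ratio = future / current

print(f"Current: {current:,} pixels/s")
print(f"Future:  {future:,} pixels/s")
print(f"Ratio:   {ratio:.1f}x")
```

By this count, a 4K x 4K per eye display needs roughly 13 times the pixel throughput of today's Rift, which lines up with the order-of-magnitude jump described above.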


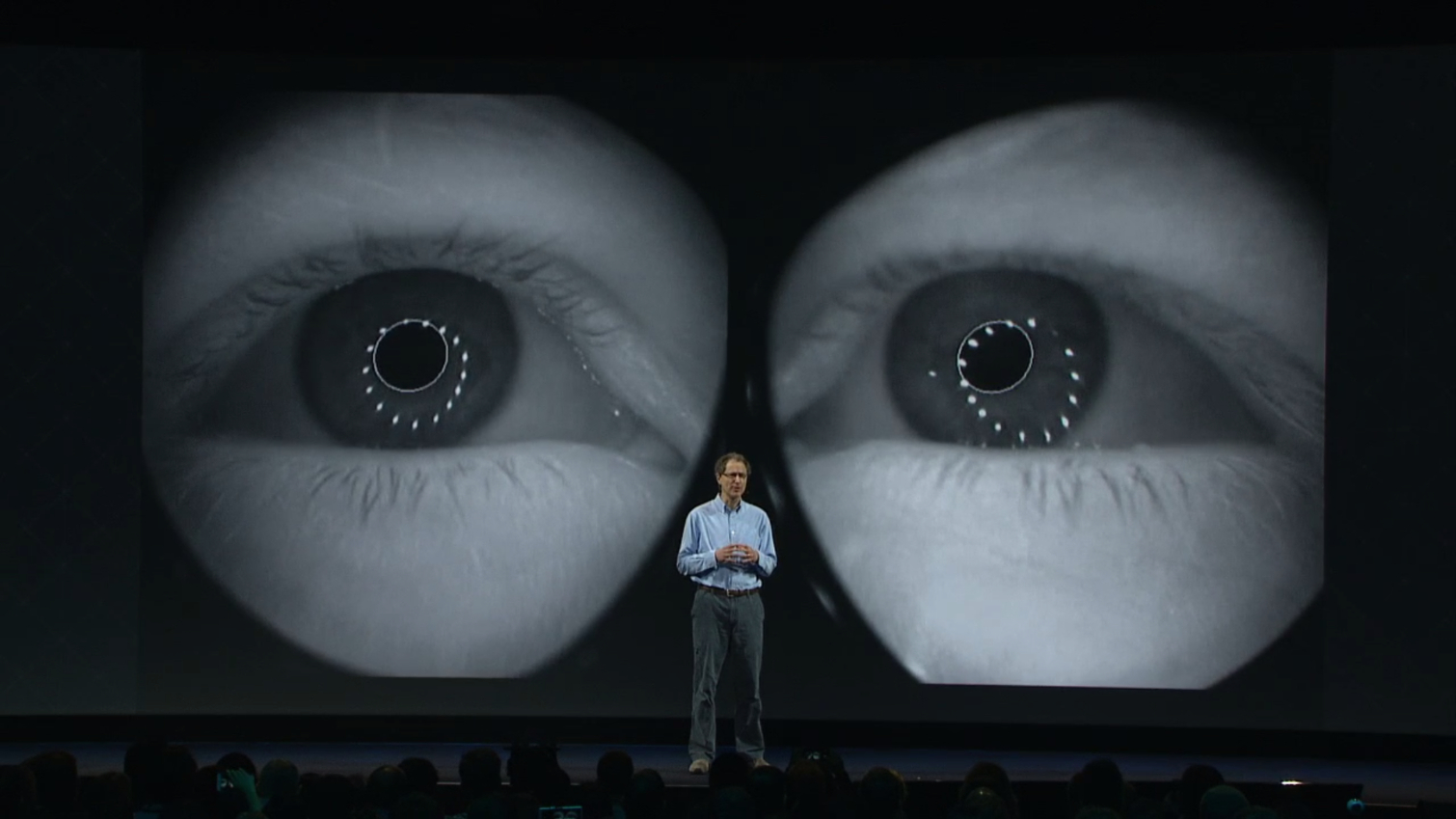

Abrash said that he once thought eye tracking simply required some “solid engineering,” but he now understands that it’s a bigger problem than he imagined. Eye tracking technology is capable of tracking pupils reliably enough to add life to an avatar, but “tracking required for foveated rendering is not a solved problem at all.”

Foveated rendering relies on extremely precise pupil tracking. Anything less than perfect tracking could result in massive degradation of visual quality, because only the portion of your screen that should be visible gets rendered clearly. Abrash said that current eye tracking technology isn’t capable of tracking your pupil accurately enough to properly implement foveated rendering, primarily because of the vast variety of pupil sizes, the fact that your pupils change in shape and size, and because your pupil’s shape changes when the position of your eye changes.
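To illustrate why tracking accuracy matters so much, here is a toy sketch of the idea. It is not how any shipping system works. The region sizes, quality levels, and function names are all hypothetical values we picked for illustration, and angles are treated as flat 2D offsets for simplicity.

```python
import math


def eccentricity_deg(point_deg, gaze_deg):
    """Angular distance (in degrees) between a screen point and the gaze point."""
    return math.hypot(point_deg[0] - gaze_deg[0], point_deg[1] - gaze_deg[1])


def shading_scale(ecc_deg, fovea_radius_deg=5.0, mid_radius_deg=15.0):
    """Hypothetical quality tiers: full detail near the gaze, less further out."""
    if ecc_deg <= fovea_radius_deg:
        return 1.0   # full resolution
    if ecc_deg <= mid_radius_deg:
        return 0.5   # half resolution
    return 0.25      # quarter resolution


# Where the user is really looking, and where an imperfect tracker thinks they are.
true_gaze = (0.0, 0.0)
tracked_gaze = (8.0, 0.0)   # a hypothetical 8-degree tracking error

# Quality actually delivered at the spot the user is looking at.
perfect = shading_scale(eccentricity_deg(true_gaze, true_gaze))
with_error = shading_scale(eccentricity_deg(true_gaze, tracked_gaze))

print(f"Perfect tracking:  {perfect:.2f}")
print(f"With 8-degree err: {with_error:.2f}")
```

In this toy model, a tracking error larger than the sharp region means the spot you're actually looking at gets rendered at reduced quality, which is the kind of degradation Abrash is worried about.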

Abrash is unsure what it will take to clear the hurdles in eye tracking, but he feels that the problems will be solved within the next five years--and they must be, because “great eye tracking is essential for VR” in the future, he said. He did concede, though, that foveated rendering is the least likely of all of his predictions to come true.

Some Things Won’t Change

VR HMDs will change dramatically in the next five years, but some things probably won’t change. Oculus Touch is just around the corner; pre-orders for the hand controllers open on October 10, and Oculus will start shipping the hardware on December 6. Abrash expects that Touch will remain the “state of the art” input device for VR for years to come. He postulated that it “could become the mouse of VR” and be around for the next 40 years.

It’s Abrash’s belief that your real hands are the only suitable replacement for Touch, but we’re a long way from being able to replicate haptic and kinematic feedback without a hand-held device. That sort of technology isn’t even being worked on yet and is likely a problem for a future generation to solve.

Don’t expect to be using your bare hands as your primary input device, but you can expect to use your hands for basic actions. Abrash believes that your hands will be tracked and rendered along with your avatar. You’ll be able to use hand gestures to interact with simple applications, but complex games will require a physical input device.

Further Refinement

Oculus spent a great deal of time and money on the design of the Rift HMD to ensure that it's as comfortable as possible, but that's not stopping Oculus from making the hardware even better. Abrash said that you can expect future Rift hardware to be lighter and feature better weight distribution than the current generation. He also expects that it will have a solution for prescription lenses.

Abrash shied away from speculating about the software side of the VR industry, though he did share one software idea that he "expects to be using" in five years. He called the concept "Augmented VR," which is a version of mixed reality that lets you pick and choose what you want to see. You'd be able to bring any element from the real world into the digital world and vice versa. And each person would be represented by a virtual human avatar.

Abrash isn't planning to build the software himself, but he threw the idea out there in hopes that someone will make it.

“These Are The Good Old Days”

The world of technology is changing rapidly, whether you like it or not. You may believe that virtual reality is just a passing craze, but the truth is, we’re just at the early stages of this revolutionary technology. The first round of hardware is incredible, but it's by no means perfect. If you’re not satisfied with today’s VR hardware, sit back and wait a few years. These early years will be looked back upon as "the good old days," but rest assured, the best is yet to come.

Kevin Carbotte is a contributing writer for Tom's Hardware who primarily covers VR and AR hardware. He has been writing for us for more than four years.

-

wifiburger

must buy some VR stocks, not !

the future is so bright it's going to burn your retina ! that should be their slogan,

-

Spazzy

VR is interesting as an occasional diversion. I cannot see this as a replacement for consuming content. Human interaction is already on the decline, can you imagine what will happen if VR takes over? We really will be in a world of our own!

It is already known that too much time staring at a computer screen is bad for your eyes. Now we are going to put them within inches of our eyes with no buffering light source. Might be time to invest in your local optometrist.

-

Jim90

Since "the human visual system is capable of perceiving 120 pixels/degree" and we're currently at "15 pixels/degree" then we have a very, very long way to go till monitor/viewports catch up.

Since it's only the eye's fovea which renders in high resolution to the brain, then it would seem highly illogical to dismiss any system employing or research into foveated rendering as anything but hugely significant.

-

bit_user

Abrash started publishing books and articles on 3D graphics and assembly language optimization like 30 years ago. This man helped develop the original rendering engine in Quake, which was a huge leap ahead of anything that existed, at the time. Even today, I'm sure he's still one of the foremost experts in realtime 3D graphics.

18711765 said: Since it's only the eye's fovea which renders in high resolution to the brain, then it would seem highly illogical to dismiss any system employing or research into foveated rendering as anything but hugely significant.

He obviously understands the potential, but his point is not to underestimate the challenges in doing it well enough. He's also clearly saying that the brute-force approach isn't an option.

I agree with him - we need like VR contact lenses, or something. The rendering pipeline needs to hack the human visual system at a more fundamental level.

What you'd ideally like to do is render one pixel for each receptor on the retina. If we do that, we can afford to spend a lot more effort rendering each one. The problem is that the eye moves, and there's this deformable lens, in the way. But it's got to be cracked, and I think the refresh rate will need to be closer to 200 Hz.

18711885 said: VR is to 2016 as the Atari 2600 was to 1980?

Not that far back. I'd say more like 8-bit Nintendo or maybe 16-bit (Super Nintendo).

-

bit_user

"Abrash expects that Touch will remain the “state of the art” input device for VR for years to come."

Not this generation of it, I'm sure. Current VR input devices are like 1980's era mice. But he has a point that you can get tactile feedback by holding something. And I still prefer a mouse over touchscreen (if I'm seated at a desk).

-

bit_user

18721686 said: I just can't see myself having a family AND one of these.

So, ditch the family!

-

Memhorder

18721686 said: I just can't see myself having a family AND one of these.

18721714 said: So, ditch the family!

Maybe.....I'll get one of those rubber Japanese play toys and Baby Robots to go along with the VR headset. :)