Intel Lists Overclockable Core i7-8809G With Vega Graphics

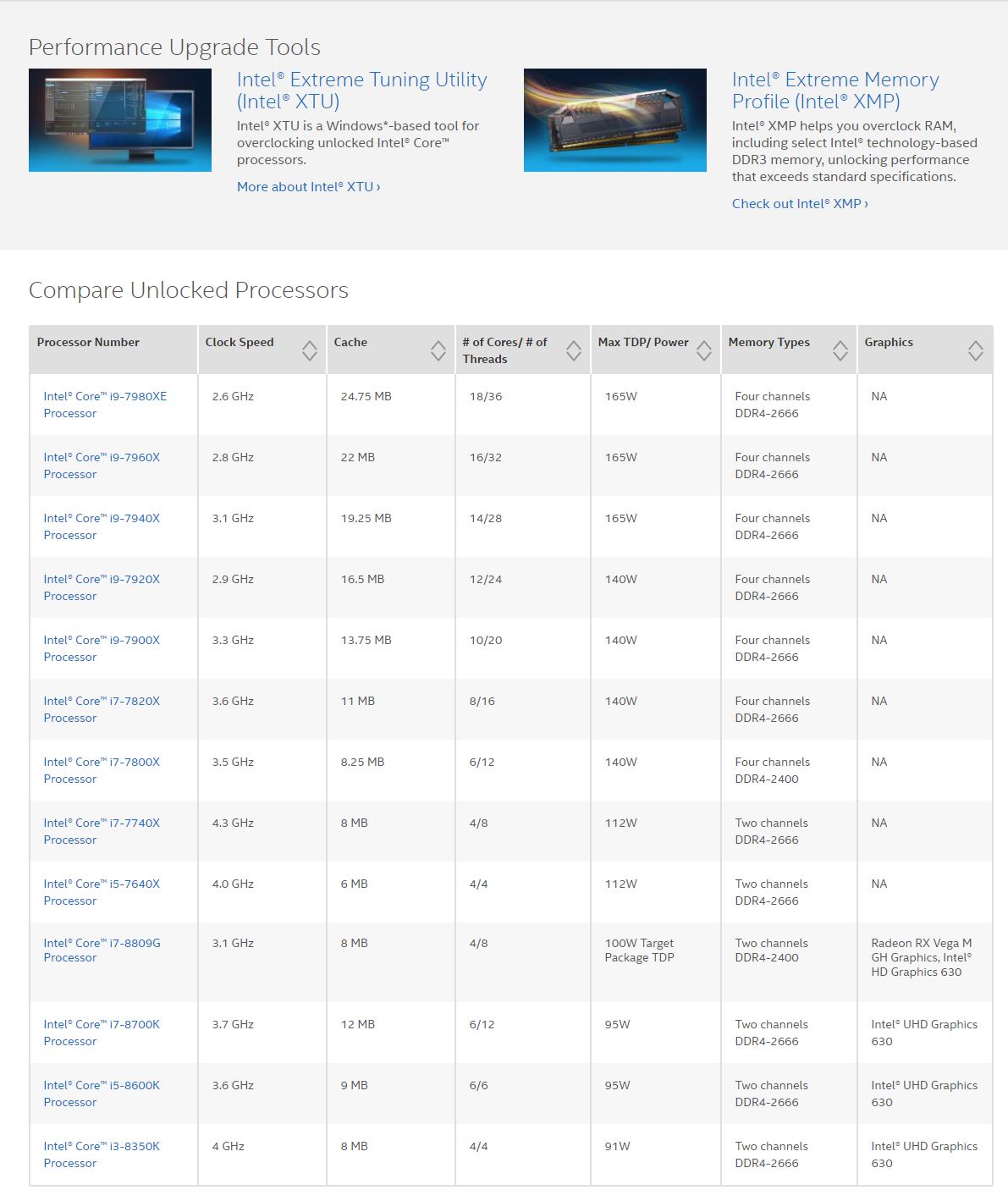

Intel is ringing in the new year with Vega graphics. Intel listed the Core i7-8809G with both Radeon RX Vega M GH graphics and Intel HD Graphics 630 on its India site. The new processor appeared on a page for overclockable processors. Intel has since removed the listing, but we snagged a few screenshots.

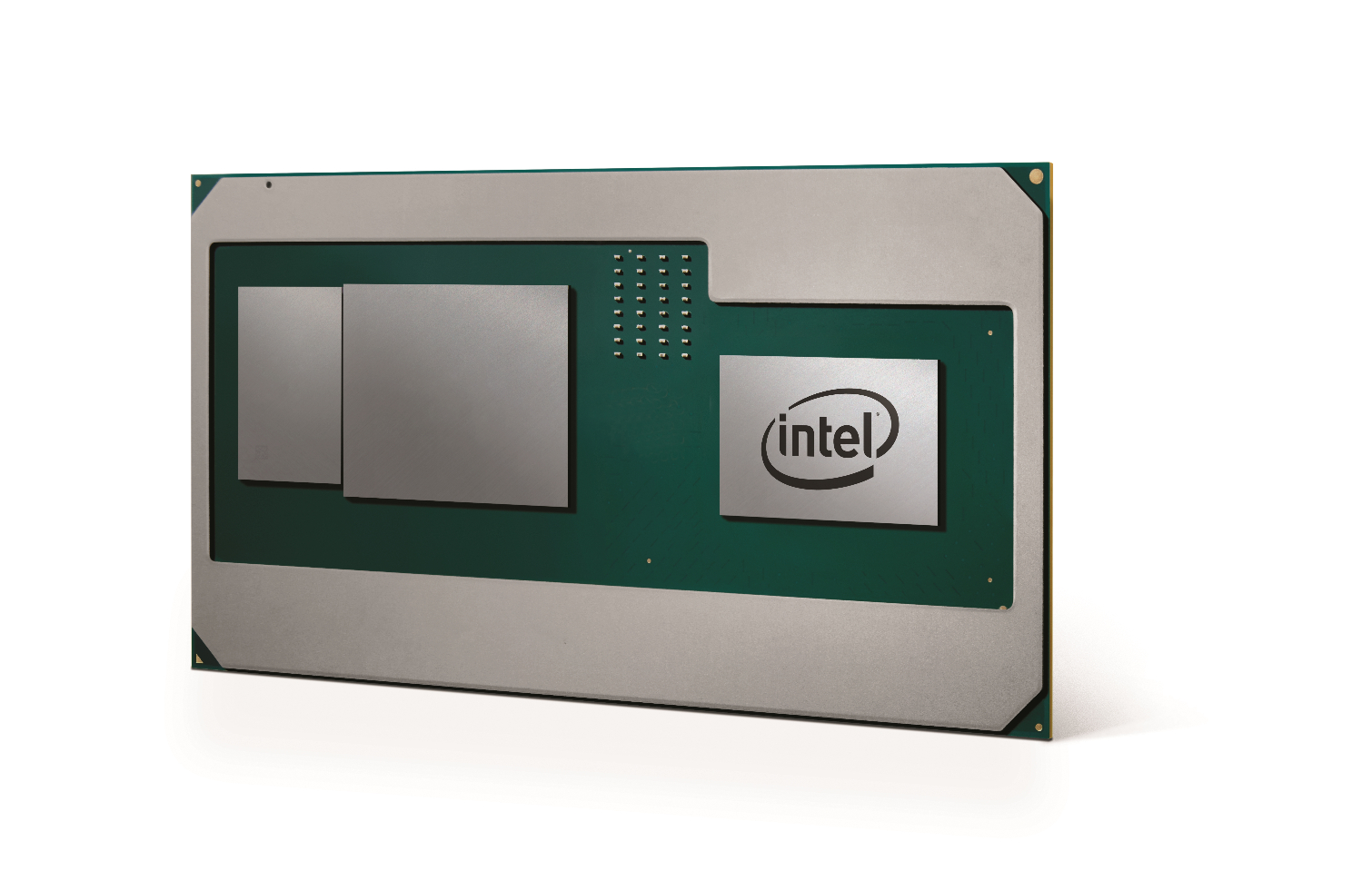

Intel shocked the industry when it announced in November 2017 that it was purchasing custom AMD graphics chips for a new MCP (Multi-Chip Package). As we can see below, the new MCP features a processor (right), AMD Vega graphics (center), and HBM2 (left). Intel connects the Vega graphics to the HBM2 via Intel's new EMIB technology. Intel has confirmed it connects the processor to the graphics via a standard PCIe connection.

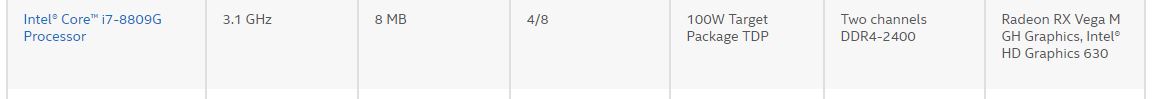

The four-core eight-thread processor has a 3.1GHz base frequency and comes with 8MB of L3 cache. It supports dual-channel DDR4-2400 memory.

Originally, Intel and AMD did not disclose the accompanying graphics architecture, but many predicted it would be Polaris-based. Several clues, such as HBM2 support and a unique power-sharing design that debuted with AMD's Vega-equipped Ryzen Mobile processors, led us to believe it would use Vega graphics.

Intel is pairing Vega with H-Series processors that are traditionally 25-45W. That suggests the processors are headed into high-performance laptops, but Intel's listing points to a 100W target package TDP. For this model, Intel likely allocates the remaining 55-75W power budget to the Vega graphics cores and HBM2 stacks. The 100W TDP also implies this processor will slot into a standard motherboard. The unlocked multiplier is yet another sign that this version of the MCP isn't destined for the mobility segment. Unfortunately, we don't know any details about the Vega graphics, such as the number of CUs or the HBM2 capacity.
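The power-budget arithmetic can be sketched in a few lines of Python. This is only an illustration: the function name `remaining_budget` is hypothetical, and the fixed split is an assumption, since a power-sharing design would shift watts between the CPU and graphics dynamically rather than reserve a static share for each.

```python
def remaining_budget(package_tdp_w, cpu_tdp_w):
    """Return the watts left for graphics and HBM2 after the CPU's share.

    Assumes a simple static split; real power sharing is dynamic.
    """
    if cpu_tdp_w > package_tdp_w:
        raise ValueError("CPU TDP exceeds package TDP")
    return package_tdp_w - cpu_tdp_w


PACKAGE_TDP_W = 100             # "target package TDP" from Intel's listing
H_SERIES_CPU_TDP_W = (25, 45)   # traditional H-Series range cited in the article

for cpu_w in H_SERIES_CPU_TDP_W:
    left_w = remaining_budget(PACKAGE_TDP_W, cpu_w)
    print(f"CPU at {cpu_w}W leaves {left_w}W for Vega + HBM2")
```

A 25W CPU share leaves 75W for the graphics and memory, while a 45W share leaves 55W.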

The Core i7-8809G may be destined for a desktop near you, but several Core i7 processors with Vega graphics have also appeared in recent 3DMark submissions, where they are listed in HP Spectre x360 convertibles. That means Intel's Vega-based processors will probably be part of a larger family that spans multiple types of devices. Leaked roadmaps have also pointed to an Intel NUC with a 100W processor, which would make for a powerful NUC with beefier integrated graphics. We expect more news around the new NUCs will surface at CES next week.

Intel lists both Vega and HD Graphics 630, so the integrated graphics on the processor are intact and functional. This will allow the processor to use its own graphics and power gate the Vega and HBM2 during lighter graphics workloads, which saves power.

The integrated Intel HD Graphics 630, which was rebranded as "UHD Graphics 630" with Coffee Lake, is a sign that Intel is using a Kaby Lake processor. The company could still throw a curve ball with a Coffee Lake processor. It is noteworthy that the Coffee Lake Core i3 models are also quad-cores that support dual-channel DDR4-2400 memory. Intel is including the 8-series branding with this processor, so we'll have to wait for confirmation.

The mistaken listing on Intel's website is interesting. Intel tends to list concrete specifications on product pages instead of a fuzzy metric like a "Target TDP," which implies the product is still under development. CES is next week, so this teaser may be a fortuitous mistake that helps generate some excitement headed into the show. We've reached out to Intel for comment and the company responded that it will provide more information on the processor soon. Stay tuned.

Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.

razamatraz Interesting. 100 watts puts this above the typical mainstream desktop processors of today even... although perfectly reasonable for discrete-like GPU performance (assuming it actually has that).

bit_user This is such a weird product. The only case I can see for bundling them together would be to share power management (which they do). Perhaps the socket/board will be a little simpler, as well, but bundling them will probably just move cost from the motherboard to the CPU/GPU package, and make the whole thing harder to cool than if they were two separate packages.

I'm more puzzled than ever. The only way I can truly make sense of it is if it's just a stunt to show off their EMIB integration platform.

wifiburger This product will never reach final production. 100 watts for one die to cool on a laptop requires a bigger investment (cooling) than the savings they are pitching!

bit_user Does anyone have TDP specs on the GPUs that typically appear in high-end gaming laptops and "mobile workstations"?

The CPU specs seem to be a match for the i7-7920HQ:

https://ark.intel.com/products/97462/Intel-Core-i7-7920HQ-Processor-8M-Cache-up-to-4_10-GHz

If it's configured down to 35 W TDP, then that would leave at least 65 W for GPU + HBM2. Or more, when the CPU isn't cranking so hard. Assuming we're talking about running on AC power, of course.

BTW, if the HD Graphics aren't being used, then you'd easily lop about 10 W off the CPU die's TDP. That means limiting it to 35 W wouldn't even entail much sacrifice, putting a turbo of 4.1 GHz still possibly within reach.

alextheblue

bit_user said: This is such a weird product. The only case I can see for bundling them together would be to share power management (which they do). Perhaps the socket/board will be a little simpler, as well, but bundling them will probably just move cost from the motherboard to the CPU/GPU package, and make the whole thing harder to cool than if they were two separate packages.

It would allow OEMs to build something more compact than a comparable system with an MXM GPU. I imagine that there's some net TDP savings too. If they're serious about building it, I would bet they're seeing some demand for such a design. Maybe Apple or other OEMs requested a high-power GPU on-package. Either way this is a stopgap until their own nascent high-performance graphics efforts bear fruit.

As for cooling... it's 100W but with a fairly large surface area, there's room for more than enough heatpipes. They don't all need to end up in the same pile of fins, either. If you want to you can divide the heat. Don't get me wrong, it isn't going to end up in true ultrathins. But it doesn't need to be a DTR, either. Check out the ROG GL502VS:

https://www.asus.com/us/Laptops/ROG-GL502VS/gallery/

That's a 15.6", not a massive DTR and it has a 1070 and a 45W HQ CPU. The mobile variant of the 1070 has a 115W TDP by itself. The combined potential heat load with that HQ CPU is ~160W. Now imagine cutting your cooling requirement to just the 1070. So the cooling setup they're using for the 1070 would be sufficient by itself, and as I mentioned before you could even split it up if your design would benefit. I think it's pretty neat, even if you won't be able to cram it in an ultrathin.

What's going to hurt adoption is cost. Not only of the APU itself (hah it's got AMD graphics onboard I can call it an APU!), but I'd imagine it requires a different socket, which means a new board design exclusive to these chips. Correct me if I'm wrong, but I don't think their current mobile sockets provide enough current.

Also, does anyone remember NexGen's PF100 using IBM's MCM technology before it was cool? Heh...

bit_user

alextheblue said: It would allow OEMs to build something more compact than a comparable system with an MXM GPU. I imagine that there's some net TDP savings too.

Once you add enough cooling, I don't see the space savings. I also don't get where the TDP savings would come from, unless you're assuming their power-management protocol can only function within the package.

alextheblue said: The mobile variant of the 1070 has a 115W TDP by itself.

That's got to be a maximal figure, and not supported by all implementations. I'm skeptical that laptop really dissipates 160 W without more serious fans. I know the power brick is rated for 180 W of output, but a good chunk of that should be for battery charging.

Anyway, I'm not saying the idea of a 100 W laptop is ridiculous. On AC power, it seems reasonable for a mobile workstation or gaming laptop. I'm just saying I can't see a good reason why they should be in the same package. Even if we accept it delivers some small amount of space savings in board area, you're not going to use such a powerful setup with a tiny screen, so probably board area is not a limiting factor.

I'm sticking to the idea that Intel waved a wad of cash in the face of cash-poor AMD (remember, this project probably had its inception ~1 year ago), wanting to use it as a stunt to sell the industry on using EMIB.

papality So like the criminally underrated 5775c. How powerful could Vega be at that low of TDP?

ElMojoMikeo Could it be a games console package? Just a stab in the dark. It would start to make more sense.

Lucky_SLS Are Intel HD 630 and Vega M sharing resources cuz of Intel's EMIB? If so, how far does it go when both iGPU and dGPU work together?

gggplaya The only place I see this working is with people that don't care about having a beefy and hot-running laptop. Or in an Intel NUC-style HTPC, portable gaming computer, or even as a console to replace the current Xbox or PlayStation.