Goodbye Transistor? New Optical Switches Offer up to 1,000x Better Performance

'Optical Accelerators' ditch electricity, favoring light as an exchange medium.

A collaboration between IBM and Russian researchers has resulted in the development of optical switches that can outperform traditional transistor-based switches by operating up to 1,000 times faster. The research, published in Nature, is one of the necessary steps towards light-based computing, one of the current candidates for achieving much faster - and much more energy-efficient - computing systems.

The principle is easy enough to understand: light is fast. Nothing travels faster, actually. These optical switches thus use light, not electricity, as their operational input and output. While traditional electronic transistors represent either of the binary values - 1 or 0 - by "switching" between these states once a strong enough voltage forces them to, the optical switch described by the researchers can switch states with the smallest possible amount of light: a single photon. This leads to dozens of times better energy efficiency per switching operation than that of electronic transistors.

"The most surprising finding was that we could trigger the optical switch with the smallest amount of light, a single photon," says study senior author Pavlos Lagoudakis, a physicist at the Skolkovo Institute of Science and Technology in Moscow. This naturally has implications for both energy efficiency and operating temperatures, which could enable optical systems to replace transistor-based ones not only when faster speeds are required, but also when a system's deployment is constrained by cooling, energy or electronic noise. If that last sentence just made you think that optical switches would therefore be great for quantum computers, you'd be right. In fact, it's expected that quantum computing solutions will require the parallel development of optical signaling devices that reduce external interference and allow for faster, more stable communication between scalable quantum systems. So this development matters beyond traditional, Turing-based computing systems - although even there, it could lead to operational speedups.

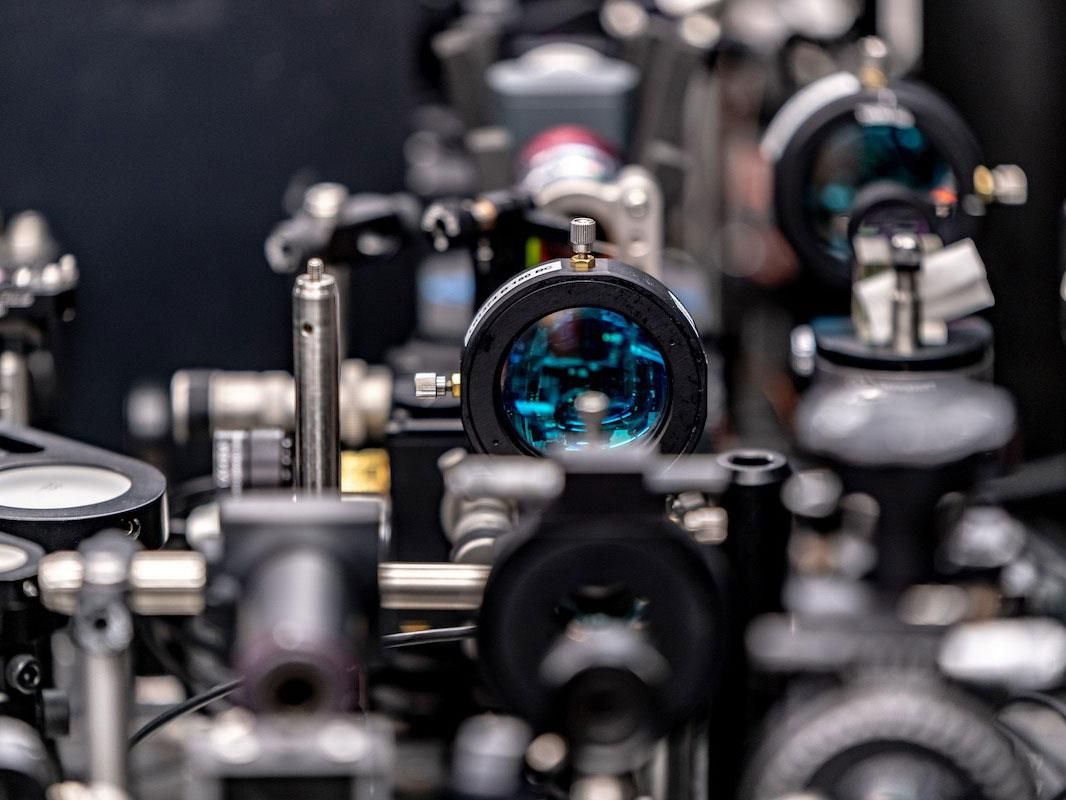

But how does the switch actually work? Lasers and mirrors. The scientists developed a 35-nanometer-wide organic semiconductor polymer film, which they then sandwiched between two highly reflective mirrors (the researchers call this a microcavity). The mirrors act as a cage for the two lasers that hit the polymer film, keeping their light trapped inside the sandwich, where it bounces back and forth between the mirrors many times over and so covers as much of the polymer's surface as possible. The two mirror layers thus passively extend the lasers' reach, which results in much lower power consumption than if the lasers had to be guided across the entire surface.

The two lasers required for the optical switch to operate take the form of a bright pump laser and a weak seed laser. The pump laser interacts with the microcavity, its high-power photons coupling with excitons (bound pairs of an electron and an electron hole) to form clusters of exciton-polaritons. These clusters, which are essentially collections of particles, show exotic behavior in that they can and do act collectively like a single atom. When they exhibit this behavior, they're called Bose-Einstein condensates. And this is where the seed laser enters the equation: it interacts with these Bose-Einstein condensates, allowing them to switch between two measurable states, which act as the binary 1 and 0 from classical computing.

Pavlos Lagoudakis does say that while their results are extremely positive, actual light-based switching and computing systems are still far away from mainstream deployment. "It took 40 years for the first electronic transistor to enter a personal computer and the investment of many governments and companies and thousands of researchers and engineers," he says. "It is often misunderstood how long before a discovery in fundamental physics research takes to enter the market." However, the road seems to be opening up for yet another incredible jump in performance for both classical and quantum-based computing systems - one photon at a time.

Francisco Pires is a freelance news writer for Tom's Hardware with a soft side for quantum computing.

jkflipflop98

Yeah yeah yeah we hear this every few years "optical transistors coming soon!". Show me an actual working part running optically and we'll talk.

kyzarvs

jkflipflop98 said: Yeah yeah yeah we hear this every few years "optical transistors coming soon!". Show me an actual working part running optically and we'll talk.

From the last paragraph of the article, maybe you didn't get that far:

"It took 40 years for the first electronic transistor to enter a personal computer and the investment of many governments and companies and thousands of researchers and engineers," he says.

"It is often misunderstood how long before a discovery in fundamental physics research takes to enter the market."

TerryLaze

kyzarvs said: "It took 40 years for the first electronic transistor to enter a personal computer and the investment of many governments and companies and thousands of researchers and engineers," he says.

Yeah because the first transistor was made in 1907 and computers weren't even a dream yet back then.

Computers became very important around 1940-45... 40 years later...because of the war and yes all the governments suddenly needed computers and pumped money into them like crazy because it was the only way to keep up.

Today the only reason we don't get any computers with optical switches is because either they aren't ready yet for the same amount of performance and abuse (how long before failure) or either they are way too expensive for anybody to consider them.

jkflipflop98

Transistors weren't invented until 1947 bruh. Computers ran on vacuum tubes until the 70's. The only functional "computers" during WWII were all of the mechanical nature - such as the Enigma Machine.

hotaru.hino

kyzarvs said: From the last paragraph of the article, maybe you didn't get that far:

"It took 40 years for the first electronic transistor to enter a personal computer and the investment of many governments and companies and thousands of researchers and engineers," he says.

"It is often misunderstood how long before a discovery in fundamental physics research takes to enter the market."

I mean there is a point though. Until there's an actual working product (show me an optical transistor computer that's running Linux or BSD), what's the point in saying "goodbye!" to currently existing technology?

TerryLaze

jkflipflop98 said: Transistors weren't invented until 1947 bruh. Computers ran on vacuum tubes until the 70's. The only functional "computers" during WWII were all of the mechanical nature - such as the Enigma Machine.

Sure bruh...

https://ftp.arl.army.mil/~mike/comphist/eniac-story.html

As in many other first along the road of technological progress, the stimulus which initiated and sustained the effort that produced the ENIAC (electronic numerical integrator and computer)--the world's first electronic digital computer--was provided by the extraordinary demand of war to find the solution to a task of surpassing importance. To understand this achievement, which literally ushered in an entirely new era in this century of startling scientific accomplishments, it is necessary to go back to 1939.

By today's standards for electronic computers the ENIAC was a grotesque monster. Its thirty separate units, plus power supply and forced-air cooling, weighed over thirty tons. Its 19,000 vacuum tubes, 1,500 relays, and hundreds of thousands of resistors, capacitors, and inductors consumed almost 200 kilowatts of electrical power.