Extreme SSD Card With 28 GBps Speeds Now Available

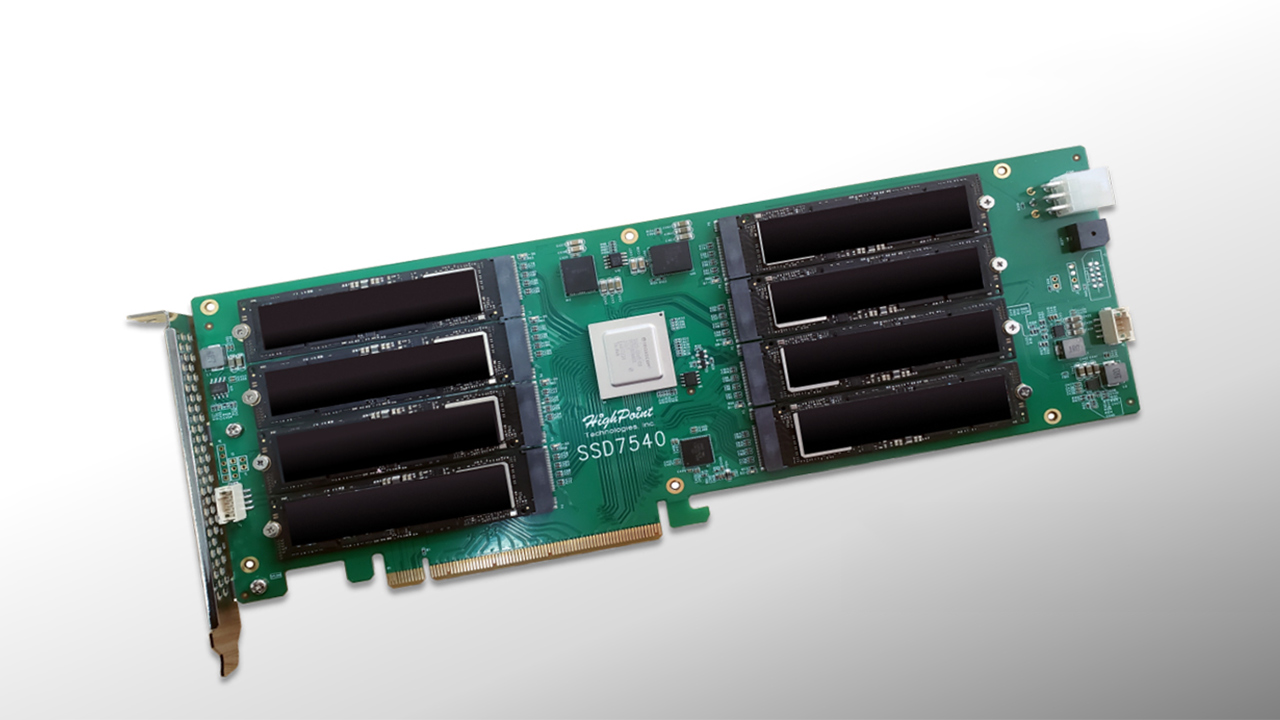

HighPoint starts selling the SSD7540 RAID card for eight M.2-2280 drives.

Latest-generation SSDs with a PCIe 4.0 x4 interface for client PCs are already very fast, but there are applications that can take advantage of even higher throughput. Advanced motherboards tend to have multiple M.2 slots and support RAID, but for some people even this is not enough. For those who demand an extremely high-performance storage subsystem, HighPoint developed an SSD RAID card that can carry eight PCIe 4.0 x4 drives.

The HighPoint SSD7540 card is based on the company's own chipset, which acts as a PCIe Gen 4 switch and supports the company's RAID technology. The card has eight M.2-2280 slots for up to eight NVMe drives, which can operate independently or be combined into one or more RAID 0, 1, or 10 (1/0) arrays, trading off redundancy and speed as needed. HighPoint says that, when fully populated, the board can offer up to 64TB of storage and read/write speeds of up to 28GB/s.
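The headline figures can be sanity-checked with a little arithmetic. The sketch below uses the published PCIe 4.0 signaling rate (16 GT/s per lane with 128b/130b encoding) to estimate raw link bandwidth. It assumes the card connects to the host over a PCIe 4.0 x16 slot, which the article does not state, and it ignores protocol overhead, so the results are upper bounds rather than expected real-world speeds.

```python
# Rough PCIe 4.0 bandwidth arithmetic (upper bounds, protocol overhead ignored).
PCIE4_TRANSFERS_PER_S = 16e9      # 16 GT/s per lane
ENCODING_EFFICIENCY = 128 / 130   # 128b/130b line encoding

def link_gb_per_s(lanes: int) -> float:
    """Raw one-direction bandwidth of a PCIe 4.0 link in GB/s."""
    bits_per_s = PCIE4_TRANSFERS_PER_S * ENCODING_EFFICIENCY * lanes
    return bits_per_s / 8 / 1e9

DRIVES = 8
TOTAL_CAPACITY_TB = 64            # HighPoint's quoted maximum
HOST_LANES = 16                   # ASSUMPTION: x16 host slot, not stated in the article

per_drive_tb = TOTAL_CAPACITY_TB / DRIVES
drive_side = DRIVES * link_gb_per_s(4)   # eight x4 drives behind the switch
host_side = link_gb_per_s(HOST_LANES)    # single uplink to the host

print(f"Capacity per drive for 64TB total: {per_drive_tb:.0f} TB")
print(f"Aggregate drive-side bandwidth:    {drive_side:.1f} GB/s")
print(f"Host-side bandwidth (x16 assumed): {host_side:.1f} GB/s")
```

Under that assumption, the host link rather than the drives would be the limit, and the quoted 28GB/s sits just under the raw x16 figure.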

To ensure that the drives get enough power, the HighPoint SSD7540 has a six-pin auxiliary power connector. Meanwhile, to ensure proper cooling for the SSDs, it is equipped with a massive heatsink with two fans.

The HighPoint SSD7540 adapter is now available in Japan for ¥139,500 ($1,330) without tax, according to PC Watch. While the price is definitely not low, eight SSDs also cost a lot of money. Meanwhile, those who actually need a storage subsystem with 28GB/s of throughput are rarely on a tight budget.


Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.


pooflinger1: According to their site, the card is not actually RAID 1+0 (10), but rather 0+1. They still call it RAID 10, but it's not. They describe it as mirroring the stripes, not striping the mirrors. Capacity and performance are the same for both implementations, but there is a huge difference in fault tolerance and how a failure/rebuild affects the array.
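The fault-tolerance difference described in this comment can be illustrated by brute force. The sketch below is a simplified model, not a description of HighPoint's implementation: it assumes eight drives, arranged either as four mirrored pairs that are striped (1+0) or as two four-drive stripes that are mirrored (0+1), and it assumes a stripe set goes entirely offline as soon as any one of its members fails. The drive numbering and function names are made up for illustration.

```python
from itertools import combinations

DRIVES = range(8)
# RAID 1+0: stripe across four mirrored pairs.
MIRROR_PAIRS = [(0, 1), (2, 3), (4, 5), (6, 7)]
# RAID 0+1: mirror two four-drive stripe sets.
STRIPE_SETS = [(0, 1, 2, 3), (4, 5, 6, 7)]

def raid10_survives(failed: set) -> bool:
    """1+0 survives while every mirrored pair keeps at least one drive."""
    return all(any(d not in failed for d in pair) for pair in MIRROR_PAIRS)

def raid01_survives(failed: set) -> bool:
    """0+1 survives while at least one stripe set is fully intact (model assumption)."""
    return any(all(d not in failed for d in s) for s in STRIPE_SETS)

def survivable(check, n_failed: int) -> int:
    """Count the n_failed-drive failure combinations the array survives."""
    return sum(check(set(c)) for c in combinations(DRIVES, n_failed))

total = len(list(combinations(DRIVES, 2)))
print(f"Two-drive failures survived by RAID 1+0: {survivable(raid10_survives, 2)} of {total}")
print(f"Two-drive failures survived by RAID 0+1: {survivable(raid01_survives, 2)} of {total}")
```

In this model both layouts survive any single failure, but 1+0 survives 24 of the 28 possible two-drive failures while 0+1 survives only 12.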

jasonkaler:

pooflinger1 said: According to their site, the card is not actually RAID 1+0 (10), but rather 0+1. They still call it RAID 10, but it's not. They describe it as mirroring the stripes, not striping the mirrors. Capacity and performance are the same for both implementations, but there is a huge difference in fault tolerance and how a failure/rebuild affects the array.

I thought RAID 0+1 was something they included in the textbooks as an example of what not to do. I didn't think anyone would actually go and implement it.

It would be so much better to use RAID 5: you would get almost double the capacity at about the same reliability. Or better yet, use two spindles (if you can still call them that) of RAID 5 with 3+1 drives each.
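The capacity side of this comparison is simple arithmetic. The sketch below assumes eight 8TB drives (the article's 64TB maximum divided evenly) and uses the standard usable-capacity rules: half the drives for RAID 10, and all but one drive per array for RAID 5. RAID 5 is not among the modes the article lists for this card, so treat this as a hypothetical comparison; it says nothing about reliability.

```python
DRIVE_TB = 8     # ASSUMPTION: 64TB maximum spread over eight drives
DRIVES = 8

def raid10_usable_tb(n_drives: int, drive_tb: int) -> int:
    """Mirroring halves the raw capacity."""
    return (n_drives // 2) * drive_tb

def raid5_usable_tb(n_drives: int, drive_tb: int) -> int:
    """One drive's worth of capacity per array goes to parity."""
    return (n_drives - 1) * drive_tb

raid10 = raid10_usable_tb(DRIVES, DRIVE_TB)
raid5_single = raid5_usable_tb(DRIVES, DRIVE_TB)    # one 7+1 array
raid5_pair = 2 * raid5_usable_tb(4, DRIVE_TB)       # two 3+1 arrays

print(f"RAID 10:          {raid10} TB")
print(f"RAID 5 (7+1):     {raid5_single} TB ({raid5_single / raid10:.2f}x RAID 10)")
print(f"RAID 5 (2 x 3+1): {raid5_pair} TB ({raid5_pair / raid10:.2f}x RAID 10)")
```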