Rivals in Arms: Nvidia's $199,000 Ampere System Taps AMD Epyc CPUs

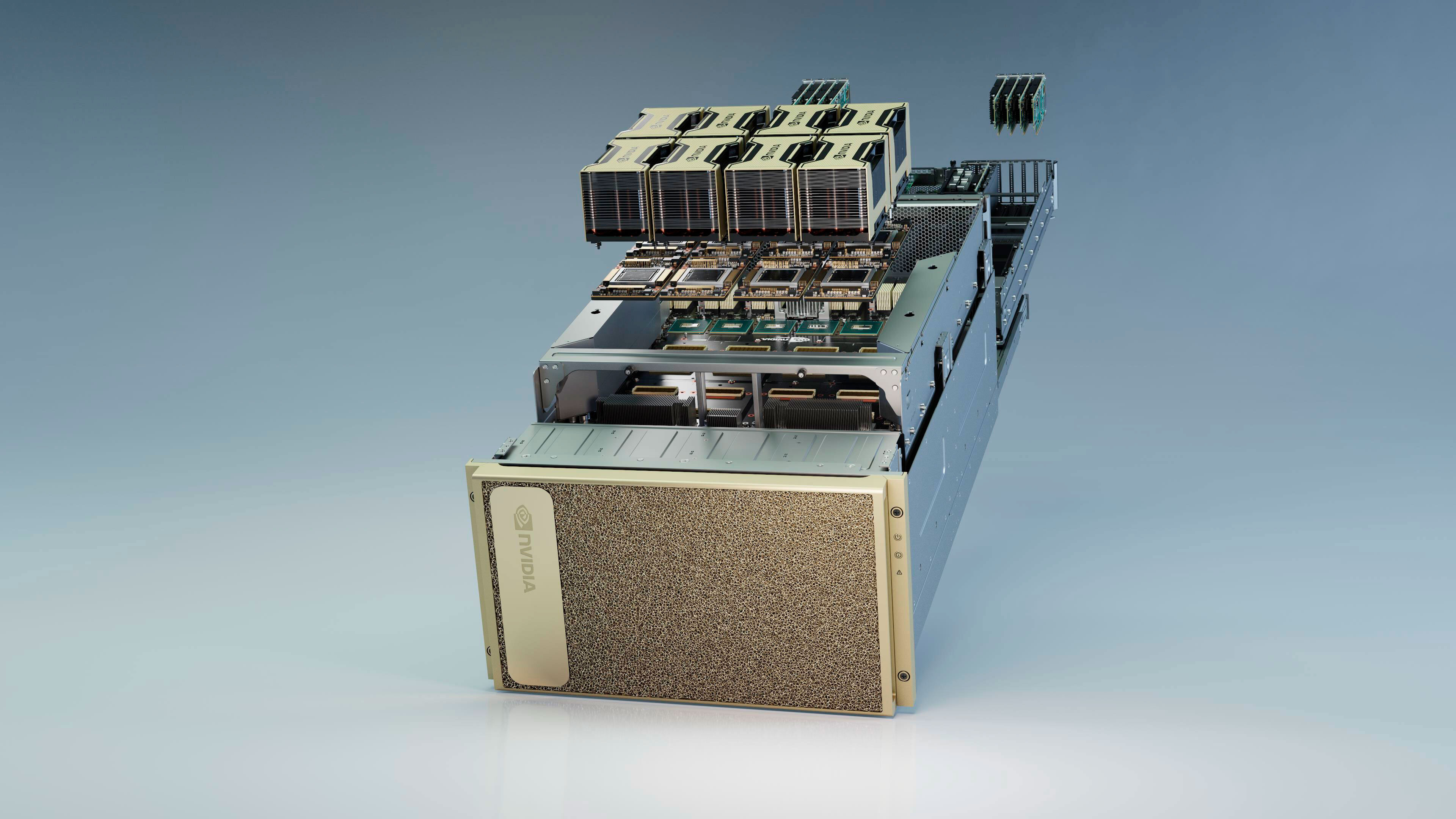

The DGX A100 is an ultra-powerful system that has a lot of Nvidia markings on the outside, but there's some AMD inside as well. A pair of core-heavy AMD Epyc 7742 (codenamed Rome) processors are at the heart of Nvidia's new $199,000 creation.

The DGX A100 employs up to eight Ampere-powered A100 data center GPUs, offering up to 320GB of total GPU memory and delivering around 5 petaflops of AI performance. The A100 might be doing most of the heavy lifting, but the team still needs a leader. However, Intel doesn't fit the bill.
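As a rough sanity check on those headline numbers, the sketch below divides the system-wide figures across the eight GPUs. The even split is an assumption made for illustration, and the variable and function names are hypothetical; only the totals come from the figures quoted above.

```python
# System-wide figures as quoted in the article.
GPU_COUNT = 8
TOTAL_GPU_MEMORY_GB = 320
TOTAL_AI_PETAFLOPS = 5.0  # the article says "around 5 petaflops"


def per_gpu_share(total, gpu_count=GPU_COUNT):
    """Divide a system-wide figure evenly across the GPUs (assumed even split)."""
    return total / gpu_count


memory_per_gpu_gb = per_gpu_share(TOTAL_GPU_MEMORY_GB)
# 1 petaflop = 1,000 teraflops
ai_tflops_per_gpu = per_gpu_share(TOTAL_AI_PETAFLOPS) * 1000

print(f"{memory_per_gpu_gb:.0f} GB of memory per GPU")
print(f"~{ai_tflops_per_gpu:.0f} teraflops of AI performance per GPU")
```

Under that assumption, each A100 contributes 40GB of memory and a little over 600 teraflops of the quoted AI performance.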

The A100 leverages PCIe 4.0, but Intel doesn't currently have any processors that support the interface. AMD, on the other hand, has openly embraced the PCIe 4.0 standard on the majority of its modern CPUs. Nvidia ultimately found comfort in AMD's arms, more specifically the Red Team's second-generation Epyc offerings.

The DGX A100 features two 7nm Epyc 7742 processors. Each Zen 2 processor comes with 64 cores and 128 threads that run with a 2.25 GHz base clock and 3.4 GHz boost clock. The Epyc 7742 duo accounts for 128 cores and 256 threads on the DGX A100.

The Epyc 7742 isn't just generous with cores; it's also heavy on cache, supplying up to 256MB of L3. More importantly, the 64-core part puts 128 high-speed PCIe 4.0 lanes at Nvidia's disposal.

A typical DGX A100 system also comes with 1TB of memory (upgradeable to 2TB), two 1.92TB NVMe M.2 SSDs in a RAID 1 array for the operating system (Ubuntu Linux) and up to four 3.84TB PCIe 4.0 NVMe U.2 drives in a RAID 0 array for secondary storage. Nvidia also offers the option to add four additional SSDs to bump the RAID 0 volume from 15TB up to 30TB.
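The storage figures follow from how the two RAID levels treat capacity: RAID 1 mirrors, so the volume is the size of one drive, while RAID 0 stripes, so capacities add up. A minimal sketch, using hypothetical helper names and raw capacities that ignore filesystem overhead:

```python
def raid1_capacity_tb(drive_tb, drives):
    """RAID 1 mirrors every drive, so usable space equals one drive."""
    return drive_tb


def raid0_capacity_tb(drive_tb, drives):
    """RAID 0 stripes across all drives, so usable space is the sum."""
    return drive_tb * drives


os_volume_tb = raid1_capacity_tb(1.92, 2)        # two M.2 SSDs for the OS
data_volume_tb = raid0_capacity_tb(3.84, 4)      # four U.2 drives
expanded_volume_tb = raid0_capacity_tb(3.84, 8)  # with four additional SSDs

print(f"OS volume (RAID 1):       {os_volume_tb:.2f} TB")
print(f"Data volume (RAID 0):     {data_volume_tb:.2f} TB")
print(f"Expanded volume (RAID 0): {expanded_volume_tb:.2f} TB")
```

The raw totals come out to 15.36TB and 30.72TB, which round down to the 15TB and 30TB figures quoted above.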

The DGX A100 is designed with state-of-the-art networking. Nvidia recently acquired Mellanox Technologies in a whopping $6.9 billion deal, and it's already paying off. The DGX A100 packs eight single-port Mellanox ConnectX-6 VPI HDR InfiniBand adapters for clustering and one dual-port ConnectX-6 VPI Ethernet adapter for storage and networking usage. The aforementioned adapters have the capability to deliver up to 200 Gbps of throughput.
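For a sense of scale, the sketch below adds up the peak throughput of the eight clustering adapters. It assumes the 200 Gbps figure applies to each port and that every port could reach that peak at the same time, neither of which the article states outright; the names are hypothetical.

```python
PORT_GBPS = 200     # peak per port, per the article's "up to 200 Gbps" (assumed per port)
CLUSTER_PORTS = 8   # eight single-port InfiniBand adapters


def aggregate_gbps(ports, per_port_gbps=PORT_GBPS):
    """Theoretical peak if every port runs at full rate simultaneously."""
    return ports * per_port_gbps


def gbps_to_gigabytes_per_second(gbps):
    """Convert gigabits per second to gigabytes per second (8 bits per byte)."""
    return gbps / 8


cluster_gbps = aggregate_gbps(CLUSTER_PORTS)
cluster_gigabytes_per_second = gbps_to_gigabytes_per_second(cluster_gbps)

print(f"Clustering peak: {cluster_gbps} Gbps ({cluster_gigabytes_per_second:.0f} GB/s)")
```

This is a theoretical ceiling only; real-world throughput depends on the fabric, the workload and protocol overhead.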


Zhiye Liu is a news editor, memory reviewer, and SSD tester at Tom’s Hardware. Although he loves everything that’s hardware, he has a soft spot for CPUs, GPUs, and RAM.


spongiemaster

> mikewinddale said: But can it run Crysis?

Probably really poorly. Whatever barebones onboard video AMD has on their server platforms isn't going to be great for gaming.

bit_user

Wow, between AMD and Intel, I guess this shows who Nvidia is more concerned about, as a competitive threat.

I wonder how much leeway AMD has to refuse to work with someone, though. Could they have shut this down, if they really wanted to?

Deicidium369

> bit_user said: Wow, between AMD and Intel, I guess this shows who Nvidia is more concerned about, as a competitive threat.
>
> I wonder how much leeway AMD has to refuse to work with someone, though. Could they have shut this down, if they really wanted to?

You beat me to it - yeah looks like Nvidia sees a friendlier port with AMD rather than Intel. With Intel getting into the GPU market - has Nvidia thinking strategically. AMD would not turn down any business - they need every single $$ that they can get - they cannot afford to be choosy.

Deicidium369

> spongiemaster said: Probably really poorly. Whatever barebones onboard video AMD has on their server platforms isn't going to be great for gaming.

If it's the AST25xx series then it can barely run Notepad, much less Crysis.

domih

5 petaFLOPS AI and 10 petaOPS INT8, damn!

I'll buy two of them to manage my cooking recipes and Surprizamals collection.

Comforting thought: these things will end up on eBay at ~$1,999 in 15+, 20+ or 25+ years. By that time your hyper-quantum smart phone might be more powerful though.

Murissokah

> bit_user said: Wow, between AMD and Intel, I guess this shows who Nvidia is more concerned about, as a competitive threat.
>
> I wonder how much leeway AMD has to refuse to work with someone, though. Could they have shut this down, if they really wanted to?

The way I see it, Intel had nothing to do with Nvidia's decision. It was a choice between using an AMD product in their platform or not having a platform at all, since PCIe 4.0 was definitely needed for the amount of bandwidth required. What this tells us is that Nvidia will not hurt its own business to avoid supporting AMD.

I'm sure we'll see Intel variants of this platform as soon as PCIe 4.0 capable processors are available from them.

As to shutting it down, I don't think it's feasible, nor is it a good idea. The added market share for EPYC CPUs is very welcome and so is the marketing fodder from Nvidia using their tech.

gg83

I mean Intel used an AMD GPU for that big SoC thing they made, right? Don't tech companies license/collaborate with each other all the time?

poodie13

Bit_User, Decidium ...

Did either of you RTFArticle ??

It clearly states Intel doesn't support PCIe4.0 but AMD does.

Made CPU choice clear cut.

Why would AMD CPU division let GPU competition undermine product placement in prestigious server racks?

JayNor

> domih said: 5 petaFLOPS AI and 10 petaOPS INT8, damn!

I don't know where the 5 petaFlops comes from that you quote, but this article shows 9.7 TFlops DP, while Intel's Ponte Vecchio estimate was 66 TFlop DP ... which would be important for HPC.

https://www.anandtech.com/show/15801/nvidia-announces-ampere-architecture-and-a100-products

Double Precision: 9.7 TFLOPs (1/2 FP32 rate)