AMD and Nvidia to Power Four ExaFLOPS Supercomputer

Perlmutter brings AMD and Nvidia together

The National Energy Research Scientific Computing Center (NERSC) at Lawrence Berkeley National Laboratory (Berkeley Lab) this week announced its new supercomputer, which will combine deep learning and simulation computing capabilities. The Perlmutter system will use AMD's top-of-the-range 64-core EPYC 7763 processors as well as Nvidia's A100 compute GPUs to push out up to 180 PetaFLOPS of 'standard' performance and up to four ExaFLOPS of AI performance. Once fully deployed, that would make it the second-fastest supercomputer in the world, behind Japan's Fugaku.

"Perlmutter will enable a larger range of applications than previous NERSC systems and is the first NERSC supercomputer designed from the start to meet the needs of both simulation and data analysis," said Sudip Dosanjh, director of NERSC.

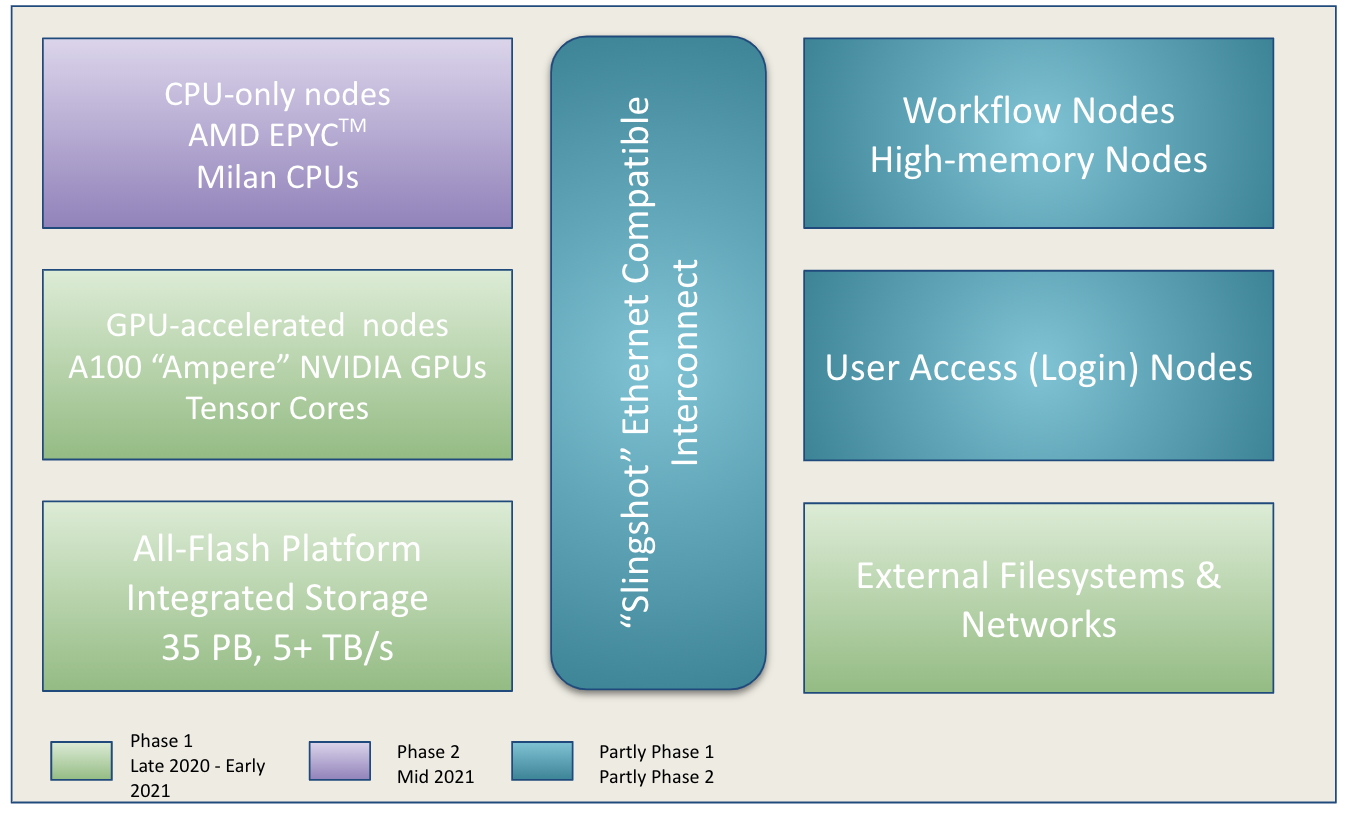

NERSC's Perlmutter supercomputer relies on HPE's heterogeneous Cray Shasta architecture and is set to be delivered in two phases:

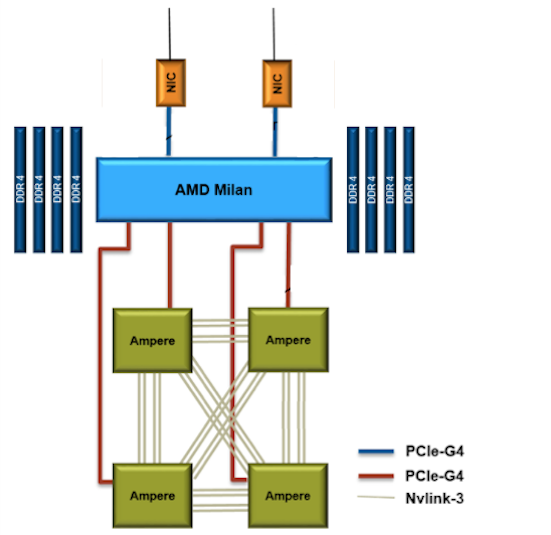

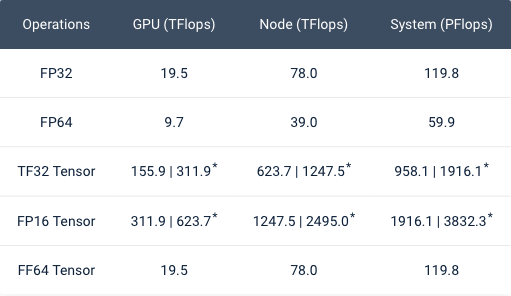

Phase 1 features 12 heterogeneous cabinets comprising 1,536 nodes. Each node packs one 64-core AMD EPYC 7763 'Milan' CPU with 256GB of DDR4 SDRAM and four Nvidia A100 40GB GPUs connected via NVLink. The system uses a 35PB all-flash storage subsystem with 5TB/s of throughput.

The first phase of NERSC's Perlmutter can deliver 60 FP64 PetaFLOPS of performance for simulations, and 3.823 FP16 ExaFLOPS of performance (with sparsity) for analysis and deep learning. The system was installed earlier this year and is now being deployed.
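The Phase 1 figures can be roughly reproduced from the node count. The following is a minimal sketch, assuming Nvidia's published per-GPU peak rates for the A100 (9.7 TFLOPS for FP64 without tensor cores, and 624 TFLOPS for FP16 tensor throughput with sparsity). Those per-GPU rates are not part of the announcement, and the constant and function names are purely illustrative.

```python
# Figures taken from the article
NODES_PHASE1 = 1536
GPUS_PER_NODE = 4

# Assumptions: Nvidia's published A100 peak rates (not stated in the article)
A100_FP64_TFLOPS = 9.7            # FP64, non-tensor-core
A100_FP16_SPARSE_TFLOPS = 624.0   # FP16 tensor core, with sparsity


def phase1_peaks(nodes=NODES_PHASE1, gpus_per_node=GPUS_PER_NODE):
    """Return (GPU count, FP64 PFLOPS, FP16 EFLOPS) as theoretical peaks."""
    gpus = nodes * gpus_per_node
    fp64_pflops = gpus * A100_FP64_TFLOPS / 1e3          # TFLOPS -> PFLOPS
    fp16_eflops = gpus * A100_FP16_SPARSE_TFLOPS / 1e6   # TFLOPS -> EFLOPS
    return gpus, fp64_pflops, fp16_eflops


gpus, fp64_pflops, fp16_eflops = phase1_peaks()
print(f"{gpus} GPUs, {fp64_pflops:.1f} FP64 PFLOPS, {fp16_eflops:.2f} FP16 EFLOPS")
# prints "6144 GPUs, 59.6 FP64 PFLOPS, 3.83 FP16 EFLOPS"
```

Under these assumptions the FP64 result rounds to the quoted 60 PetaFLOPS, while the FP16 result lands slightly above the quoted 3.823 ExaFLOPS. The announcement does not explain that small gap, so the per-GPU rate behind the official figure may differ from the one assumed here.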

While 60 FP64 PetaFLOPS puts Perlmutter into the Top 10 list of the world's most powerful supercomputers, the system does not stop there.

Phase 2 will add 3,072 AMD EPYC 7763-based CPU-only nodes with 512GB of memory per node that will be dedicated to simulation. FP64 performance for the second phase will be around 120 PFLOPS.

When the second phase of Perlmutter is deployed later this year, the combined FP64 performance of the supercomputer will total 180 PFLOPS, which will put it ahead of Summit, the world's second most powerful supercomputer. However, it will still trail Japan's Fugaku, which weighs in at 442 PFLOPS. Meanwhile, in addition to formidable throughput for simulations, Perlmutter will offer nearly four ExaFLOPS of FP16 throughput for AI applications.
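As a quick check on the totals, the sketch below adds the two phases and compares the result with the Fugaku figure. All three inputs come from the article; the variable names are illustrative, and the comparison assumes the figures are measured on a comparable basis, which the article does not establish.

```python
# Figures taken from the article (FP64 PFLOPS)
PHASE1_FP64_PFLOPS = 60
PHASE2_FP64_PFLOPS = 120
FUGAKU_PFLOPS = 442

combined_pflops = PHASE1_FP64_PFLOPS + PHASE2_FP64_PFLOPS
share_of_fugaku = combined_pflops / FUGAKU_PFLOPS

print(f"Combined: {combined_pflops} PFLOPS, {share_of_fugaku:.0%} of Fugaku")
# prints "Combined: 180 PFLOPS, 41% of Fugaku"
```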


Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.


velocityg4: Wonder what its hashrate will be? As of right now, NiceHash says those 6,144 A100 GPUs in Phase 1 will bring in $71,636.42 per day. That's after figuring in $0.10 per kWh.

spongiemaster (replying to velocityg4): A100s cost about $10,000 at retail. Given a bulk discount, say they pay $50 million for those cards; it's going to be 2 years before they break even. If you factor in the cost of the rest of the parts in those servers, plus rack space and the necessary air conditioning, you're never going to break even within the service life of those cards, even while making $71k a day.

escksu: OK, but I wonder what all these power-guzzling computers have done for society? Do they generate income? At the very least, crypto mining can generate income for the user. If these computers suck so much power but do nothing, then they are even worse than crypto mining and should be banned.