Watch All Nine Parts of the Nvidia GTC 2020 Keynote

Jensen lays it out

The Nvidia A100 GPU, based on the Ampere architecture, has arrived, and Nvidia CEO Jensen Huang talks about the technology, applications, and other advancements in his nine-part digital keynote. The A100 is the highlight of the keynote, but Nvidia has its fingers in a lot of other pies. Here are the nine (one was missing earlier) different parts with a short summary of each:

Kicking things off, we have Jensen in the kitchen cooking up some server hardware. This one is pretty light on details, just getting things started for what would have been a 90-minute live keynote in the past. There's a new "I am AI" video included as well, showing the possibilities of machine learning.

While this segment touches on RTX and ray tracing, the main point of the video is to discuss Tensor cores and DLSS (Deep Learning Super Sampling). It's still not clear whether the A100 includes RT cores, but DLSS has been enhanced since its initial release to deliver a high-performance, high-quality real-time upscaling and anti-aliasing solution. Besides showing off what DLSS 2.0 can do, Jensen also introduces the Nvidia Omniverse, a cloud-based system for software development and collaboration. Also shown is Marbles RTX, basically a modern take on Marble Madness, now enhanced with ray tracing effects.

Did you know Nvidia recently finalized its Mellanox acquisition? As a provider of high-performance networking interconnects between data center systems, Mellanox is an important addition to Nvidia's portfolio. Also, Nvidia's GPUs will now accelerate Apache Spark 3.0, which could be a huge benefit to many data processing workflows.

There's a lot of content and information out there, more than any of us could ever hope to grok. Recommender systems are a way to sift through all that data via AI, pulling out the golden nuggets that are most interesting. Nvidia's recommender solution is called Merlin.

There's no Iron Man here, but Jarvis shows up to power conversational AI in the form of a floating water droplet. It looks like Nvidia wants to compete with Siri and Hey Google, with more natural language constructs. Also, watch a voice-driven animated gray face lip-sync to some Nvidia rap.

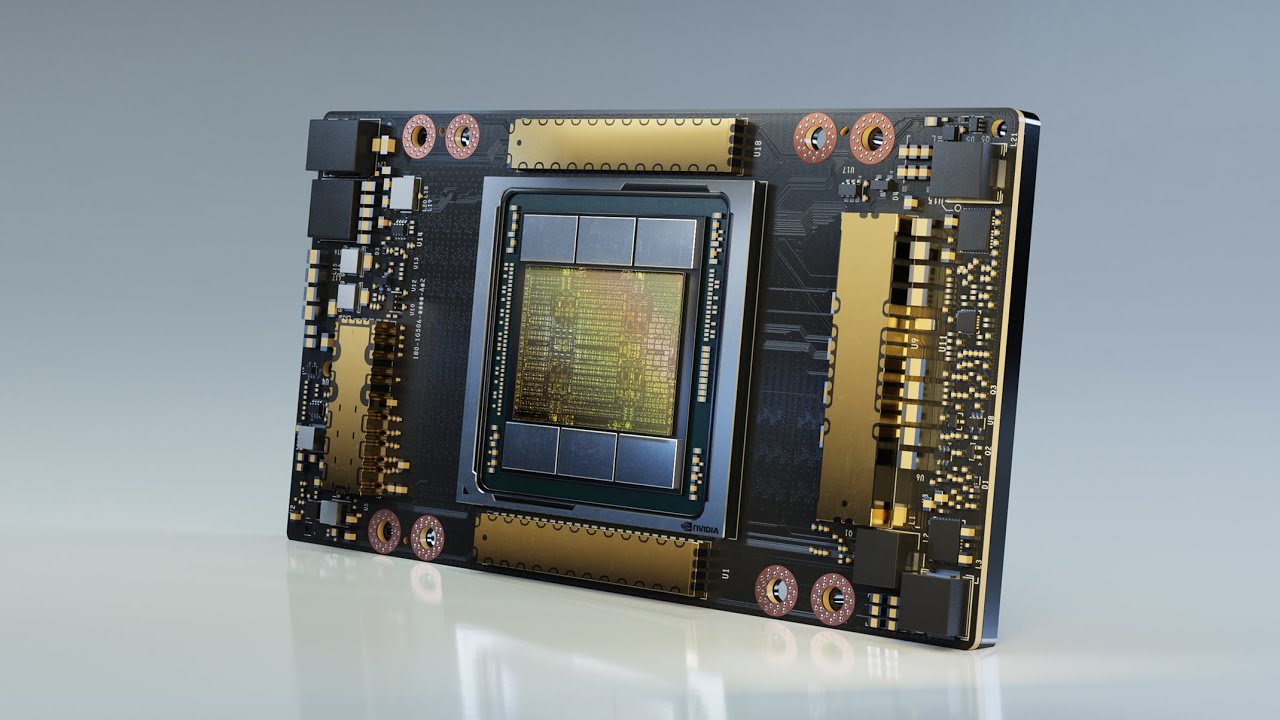

You're probably here to learn more about Ampere and the A100, and this is where the real meat of the keynote lies. Not surprisingly, this video is also twice as long as the other segments. Jensen digs into some of the details of the A100, comparing its performance to the previous-generation V100 data center processor. Depending on the workload, the A100 is anywhere from 2.5X to 20X faster than the V100. It also packs 54 billion transistors into an 826 mm² die, making it the largest GPU ever created.


Link eight A100s together via 600 GBps NVSwitch links and each DGX A100 system can function as an even larger GPU, sort of. Jensen says DGX A100 systems can potentially replace hundreds of older data center servers: "The more you buy, the more you save." Only $199,000 each. I'll take two, please!

The A100 is also going into other devices, like the EGX A100 converged accelerator that can be used for edge computing and inferencing. EGX A100 also powers the next iteration of the Isaac robotics platform, providing a massive boost in performance.

Last but not least, autonomous cars are still a major target for many companies. Nvidia is working with many of them, and Orin is basically the next iteration of Nvidia's Drive platform. It can be used for a range of autonomous vehicles, from ADAS to autopilots and even fully autonomous solutions.

And that's a wrap. This concluding video just recaps everything that Jensen discussed in the other videos. There's also a behind-the-scenes segment on the "I am AI" video from the introduction.

Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.