Editor's Corner: Getting Benchmarks Right

It's All In The Numbers

In Monday's first look at AMD’s Socket AM3 interface, we observed some interesting gaming results on Intel’s Core i7 920 versus the new Phenom IIs (and subsequently got called out on them). This came, of course, after we overclocked both micro-architectures in a previous story and compared their respective performance.

Of course, in that earlier piece, we used AMD Radeon HD 4870 X2 graphics cards, and in this one, we employed a pair of GeForce GTX 280s. It turns out that graphics makes all of the difference in gaming. Who would have guessed?

Moreover, there were other sites that published their own evaluations of the AM3 platform, yielding a second round of comparisons.

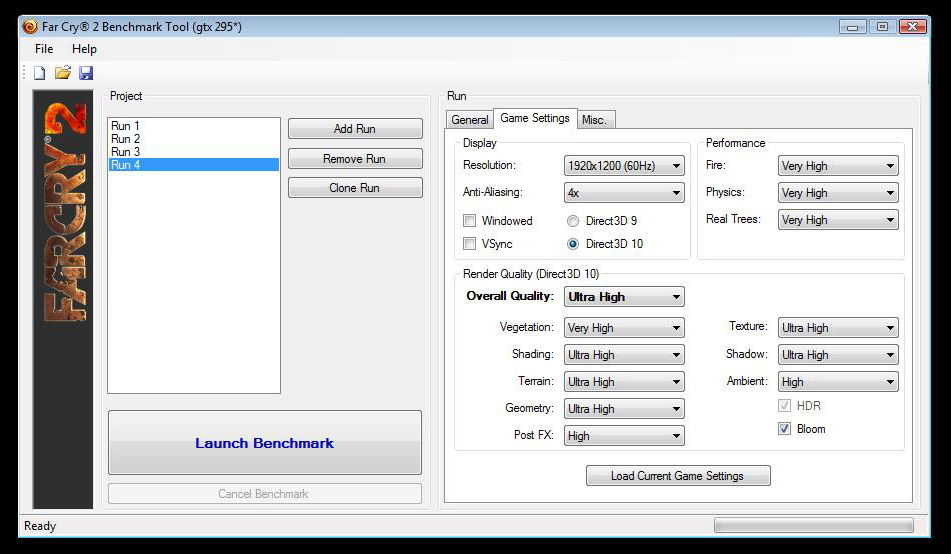

Curious as to why, exactly, we were seeing different results from some of the other publications out there and in response to requests for more data from our readers, I dedicated the past two days to hypothesizing possible causes and re-running our gaming tests, using Far Cry 2 as my indicator of choice. A sincere thank you to the folks who posited helpful information and constructive suggestions in comparing data. I tried to replicate as many of the other test scenarios from Monday's round of reviews as possible here.

Without further ado, let’s get into some troubleshooting, benchmarking, and hypothesizing.

UPDATE: At the suggestion of several readers, I've re-run some of these tests (still in Far Cry 2) with Hyper-Threading disabled and then again with the Threading Optimizations in Nvidia's driver disabled completely. The results are as follows:

| Far Cry 2 | 1920x1200, no AA | 2560x1600, no AA |
|---|---|---|
| Core i7 920 @ 2.66 GHz (Hyper-Threading Enabled, 8 Threads) | 53.23 | 41.83 |
| Core i7 920 @ 2.66 GHz (Hyper-Threading Disabled, 4 Threads) | 56.24 | 44.09 |
| Core i7 920 @ 2.66 GHz (Hyper-Threading Disabled, Thread Optimization Disabled) | 56.12 | 44.11 |

Though there is some difference here, just like in the case of the fresh installation of Windows, our performance questions remain unanswered for the most part. Hopefully, these serve to cross one more possibility off of the list of explanations as to why the GeForce GTX 280 is under-performing when paired to a Core i7 processor. Now, the editorial as it originally appeared:

Possibility #1: Benchmarking with power-saving features enabled was causing performance problems with Core i7.

| Far Cry 2 | 1920x1200, no AA | 2560x1600, no AA |
|---|---|---|
| All Power-Saving Features Enabled (Numbers From The Launch) | 53.23 | 41.83 |
| All Power-Saving Features Disabled (New Results) | 56.51 | 44.30 |

After disabling EIST (and hence turning off Turbo mode), C1E, and the thermal monitoring function that could throttle the processor if it broke past its pre-programmed 100A/130W limits, it was clear that this combination of features affected performance, but just slightly. Certainly, they weren't to blame.
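To put "just slightly" into numbers, here is a minimal sketch that computes the percentage change between the two rows of the table above. The helper name `percent_change` is ours, and we are assuming the table values are average frame rates.

```python
def percent_change(baseline, new):
    """Relative change from baseline to new, in percent."""
    return (new - baseline) / baseline * 100.0

# Values copied from the Possibility #1 table (assumed to be average frame rates).
launch = {"1920x1200": 53.23, "2560x1600": 41.83}
power_saving_off = {"1920x1200": 56.51, "2560x1600": 44.30}

gains = {res: percent_change(launch[res], power_saving_off[res]) for res in launch}

for res, gain in gains.items():
    print(f"{res}: {gain:+.1f}%")
```

Both resolutions move by roughly six percent, which is a measurable change but nowhere near enough to explain the gap we were chasing.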

Possibility #2: At DDR3-1066 (the max ratio of Intel’s engineering samples), we were starving our platform for memory bandwidth.

| Far Cry 2 | 1920x1200, no AA | 2560x1600, no AA |
|---|---|---|
| i7 920 @ 2.66 GHz/DDR3-1066 and GeForce GTX 280 (Numbers From The Launch) | 53.23 | 41.83 |
| i7 920 @ 3.8 GHz/DDR3-1523 and GeForce GTX 280 (New Results) | 60.33 | 46.43 |

Rather than simply clocking the memory bus up to 1,600 MHz by upping the Bclk, we cranked the reference setting up to 190 MHz, yielding a 3.8 GHz clock speed and 1,523 MHz memory bus. The boost helped a tad at 1920x1200 and a little less at 2560x1600, but in no way made up the difference versus a Phenom II at its stock settings. No go there, either, which is incredibly strange. Graphics bottleneck, anyone?
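A rough way to see how little of the overclock reached the frame rate is to divide the frame-rate gain by the clock gain. The sketch below uses only the figures quoted above; the multiplier is simply what those figures imply, the variable names are ours, and the memory bus changed at the same time, so this is not a clean CPU-only measurement.

```python
# Clock figures from the text; frame rates from the Possibility #2 table
# (assumed to be average frame rates).
STOCK_MHZ = 2660
OVERCLOCK_MHZ = 3800
BCLK_MHZ = 190

# CPU multiplier implied by the quoted numbers (3800 / 190).
implied_multiplier = OVERCLOCK_MHZ / BCLK_MHZ

# Fractional increase in core clock.
clock_gain = OVERCLOCK_MHZ / STOCK_MHZ - 1.0

results = {
    "1920x1200": (53.23, 60.33),
    "2560x1600": (41.83, 46.43),
}

# Share of the clock increase that shows up as extra frame rate
# (1.0 would mean perfect scaling with clock speed).
scaling = {}
for res, (stock_fps, oc_fps) in results.items():
    fps_gain = oc_fps / stock_fps - 1.0
    scaling[res] = fps_gain / clock_gain
    print(f"{res}: clock +{clock_gain:.1%}, frame rate +{fps_gain:.1%}, "
          f"scaling {scaling[res]:.2f}")
```

A clock increase of about 43 percent buys only 11 to 13 percent more frames on the GeForce GTX 280, which is consistent with something other than the processor holding the result back.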

Possibility #3: The driver install was bad, and the GeForce GTX 280 was actually at fault.

| Far Cry 2 | 1920x1200, no AA | 2560x1600, no AA |
|---|---|---|
| Original Installation (Numbers From The Launch) | 53.23 | 41.83 |
| Fresh Operating System/Driver Installation (New Results) | 56.12 | 44.02 |

Starting clean with a fresh copy of Vista x64, we reinstalled Nvidia’s GeForce 181.22 build, the latest available and the one we used in our story earlier in the week. The i7 setup scored a bit higher, but still failed to pass any of the Phenom II X4s, which is what it would have needed to do to show Core i7 in the lead here. Still, no go.

Possibility #4: Our results are only representative of gaming on an Nvidia card, and those using AMD Radeon boards will see something different.

| Far Cry 2 | 1920x1200, no AA | 2560x1600, no AA |
|---|---|---|
| i7 920 @ 2.66 GHz and GeForce GTX 280 (Numbers From The Launch) | 53.23 | 41.83 |
| i7 920 @ 3.8 GHz and Radeon HD 4870 X2 (New Results) | 105.08 | 79.46 |

No kidding, right? Of course gaming on a Radeon is going to give you a different result, especially in a game. But we didn’t expect variance this extreme. Suddenly, we’re on to something. The i7 920’s results shoot up to levels that truly trounce the Phenom II X4 machine armed with the GeForce GTX 280 (as they should, given the faster processor and graphics card). We'll dive into a lot more depth on this point on the next page.

Possibility #5: Ok, turn down the CPU clock, silly. You’re still running at 3.8 GHz.

| Far Cry 2 | 1920x1200, no AA | 2560x1600, no AA |
|---|---|---|
| i7 920 @ 2.66 GHz and GeForce GTX 280 (Numbers From The Launch) | 53.23 | 41.83 |
| i7 920 @ 2.66 GHz and Radeon HD 4870 X2 (New Results) | 85.87 | 74.85 |

The results scale lower, most notably at 1920x1200, where we’d expect a CPU to have a more profound impact on gaming performance (versus 2560x1600, at least). But the 4870 X2 still leads the X4s and X3s by a commanding margin, even with the 920 running at its stock 2.66 GHz. And look how much more scaling there is coming from the overclocked configuration above.
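The resolution dependence is easy to check from the two Radeon HD 4870 X2 rows in the Possibility #4 and #5 tables. This is a minimal sketch with our own variable names, again assuming the figures are average frame rates.

```python
# Radeon HD 4870 X2 results at both CPU clocks, copied from the
# Possibility #4 (3.8 GHz) and Possibility #5 (2.66 GHz) tables.
radeon = {
    "1920x1200": {"2.66 GHz": 85.87, "3.8 GHz": 105.08},
    "2560x1600": {"2.66 GHz": 74.85, "3.8 GHz": 79.46},
}

# Percentage gained by going from the stock clock to the overclock.
gain_pct = {}
for res, fps in radeon.items():
    gain_pct[res] = (fps["3.8 GHz"] / fps["2.66 GHz"] - 1.0) * 100.0
    print(f"{res}: +{gain_pct[res]:.1f}% from the overclock")
```

The overclock is worth about 22 percent at 1920x1200 but only about 6 percent at 2560x1600, which fits the expectation that the processor matters more at the lower resolution.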

Possibility #6: Web site XYZ used a different driver version. Perhaps something happened between that version and the most recent update you used.

| Far Cry 2 | 1920x1200, no AA | 2560x1600, no AA |
|---|---|---|
| GeForce 181.22, Jan. 22, 2009 (Numbers From The Launch) | 53.23 | 41.83 |
| GeForce 180.43 Beta, Oct. 24, 2008 (New Results) | 50.81 | 39.68 |

Stepping all the way back to October of last year, we installed Nvidia’s GeForce 180.43 package to test the difference between then and now. And, if anything, the GeForce GTX 280 only picks up performance given the more recent driver update. That’s not the problem, either.

Possibility #7: Your card is hosed.

| Far Cry 2 | 1920x1200, no AA | 2560x1600, no AA |
|---|---|---|
| GeForce GTX 280 1 GB (Numbers From The Launch) | 53.23 | 41.83 |
| GeForce GTX 280 1 GB Replacement (New Results) | 50.73 | 40.72 |

Fair enough, we have more than enough cards here to at least try swapping that out. We didn’t suspect the processor, memory, or motherboard of being defective—after all, the Core i7 920 at 2.66 GHz served up compelling results in all of our audio/video encoding apps. It was only the gaming scores that looked funny.

But that isn’t the issue either. A new card ranged from the same to slightly worse in Far Cry 2, certainly within the margin of error.

Possibility #8: Maybe you guys use different settings that make more of an impact on the i7 920’s performance.

| Far Cry 2 | 1920x1200, no AA | 2560x1600, no AA |
|---|---|---|
| i7 920 @ 2.66 GHz, Ultra High Settings (Numbers From The Launch) | 53.23 | 41.83 |
| i7 920 @ 2.66 GHz, High Settings (New Results) | 64.74 | 48.77 |

Now it feels like we’re grasping at straws. Nevertheless, we were willing to try the same Far Cry 2 batch using High settings instead of the Ultra High DirectX 10 configuration used to test initially. And again, there’s a significant increase, but it isn’t so substantial that Intel is able to usurp the fastest X4 under the load of Very High settings.
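Pulling the 1920x1200 GeForce GTX 280 numbers from the tables above into one place shows how far each tweak falls short of the same Core i7 920 at stock clocks with the Radeon HD 4870 X2. The sketch below is only a convenience for reading the tables side by side; the labels are ours, and the High settings run is not a like-for-like comparison with the Ultra High results.

```python
# Core i7 920 @ 2.66 GHz with the Radeon HD 4870 X2, 1920x1200 (Possibility #5).
RADEON_STOCK_FPS = 85.87

# Core i7 920 with the GeForce GTX 280 at 1920x1200, one entry per table above.
geforce_runs = {
    "Launch baseline": 53.23,
    "Power-saving features disabled": 56.51,
    "3.8 GHz / DDR3-1523": 60.33,
    "Fresh OS and driver install": 56.12,
    "GeForce 180.43 beta driver": 50.81,
    "Replacement GTX 280": 50.73,
    "High settings": 64.74,
}

# Rank the runs from fastest to slowest.
ranked = sorted(geforce_runs.items(), key=lambda item: item[1], reverse=True)

# Distance from each GeForce run to the Radeon result.
shortfall = {name: RADEON_STOCK_FPS - fps for name, fps in ranked}

for name, fps in ranked:
    print(f"{name:32s} {fps:6.2f}  (short by {shortfall[name]:5.2f})")
```

Even the best GeForce result here, which comes from lowering the quality settings, trails the Radeon figure by more than 20 frames.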

Hamsterabed How very odd, when i saw the benches i immediately thought there was a problem. Glad you guys made an article to explain and backup you numbers and i hope we get some answers. don't have another driver fail Nvidia...

Tindytim Wow...

Just wow.

Right when I considering leaving this site forever for it's over Mac loving, Tom flashes me a glimmer of hope.

rdawise Thank you Chris for this follow-up article..now where is kknd to argue....

I am sorry but we all know that at lower resolutions the Core i7 will beat the P2, but as the article states, but real world the PII is hitting the high notes. Could this be a driver screw up from Nvidia...probably since you're elimnating everything else. Are there any other x-factors out there...oh yes plenty more. However I think people will get the wrong impression if they read this and think the PII is "more powerful" than the Core i7. Some one who reads this should come away thinking that the PII will give you almost as great gaming as some of the Core i7s can for less money. (Time for a price cut intel).

I do a question what if you tried using memory with different timings. I believe 8-8-8-24 was used last test, but how about 7-7-7-20? Just trying to help think of reasons. Either way it gives us something to look forward to in the CPU world. Good follow-up.

sohei "I believe 8-8-8-24 was used last test, but how about 7-7-7-20? Just trying to help think of reasons"

wow 7-7-7-20? this is the performance...indeed

P2 works with ddr2 great and you wary about timings

great article!!

just a thought: what about previous generation of nvidia cards? could be this is a GTX 260/285/280/... problem. maybe you could try with one of 9xxx series.

StupidRabbit awesome article.. only two pages long but it changes the way i look at the previous benchmarks. good to see you focus not only on the hardware itself but also on the benchmarks with a real sense of objectivity.. its what makes this site great.

cobra420 so it looks like a gpu issue . why not try a gtx 295 ? or is that why you set the video so low ? now you found the issue theirs no need to try a different card ? ati sure did a good job on there 4870 series . nice job toms

Maybe Farcry optimized it more on ATI, maybe Intel is throwing sticks at the wheels of nVidia at the hardware level, maybe, maybe ... :S

Why is Intel supporting multi-ATI config, but not multi-nVidia? Why doesn't Intel let nVidia use its Atom freely? Why, oh why?

There are so many factors. I think if you replace Farcry with a synthetic test, there will be less unknowns. Just maybe :)