Nvidia GeForce GTX 690 4 GB: Dual GK104, Announced

Did you spend some time this week wondering what Nvidia was planning for today's announcement? Everyone who guessed GeForce GTX 690, you're right. We have the scoop on Nvidia's soon-to-be flagship, powered by two GK104 graphics processors.

Inside Nvidia's GeForce GTX 690 4 GB

Update (5/3/2012): Nvidia's GeForce GTX 690 just launched. You can read all about the card and its performance in GeForce GTX 690 Review: Testing Nvidia's Sexiest Graphics Card.

I had my head so thoroughly buried in the Ivy Bridge launch that I completely missed Nvidia’s “Something is Coming” tease last week.

Well, now we know that “Something” is actually the company’s GeForce GTX 690. It’s not here yet; you’ll have to wait until next week for a comprehensive look at the card and its performance. But we do have all of the speeds and feeds, thanks to tonight’s webcast from Nvidia’s GeForce LAN 2012 in Shanghai, China.

Inside of GeForce GTX 690

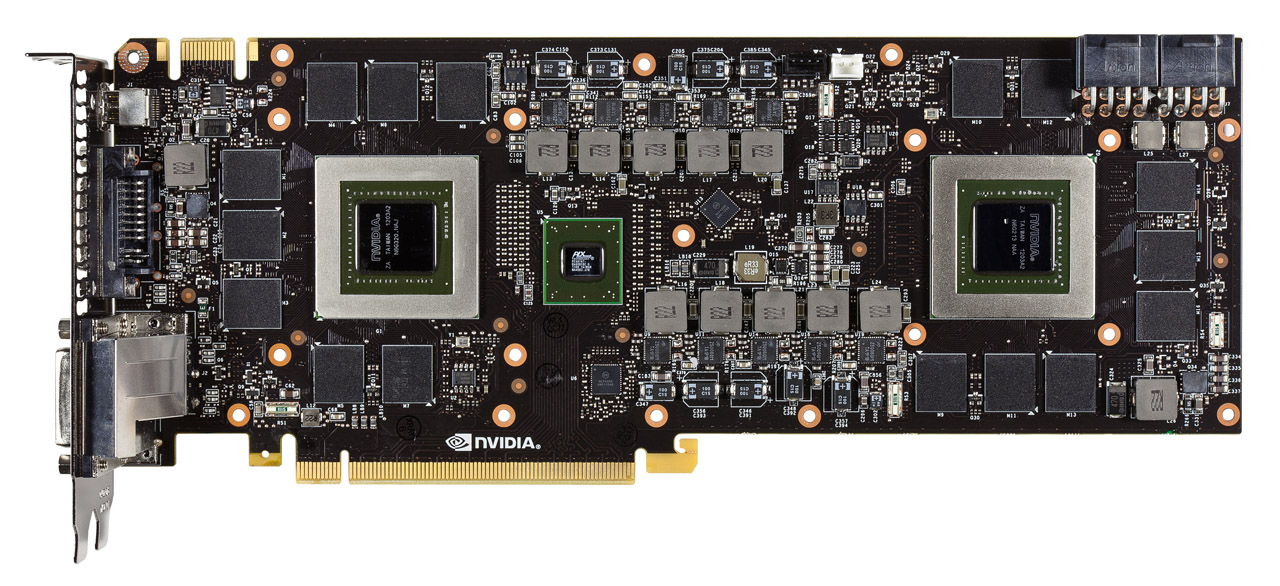

GeForce GTX 690 is a dual GK104 part. Its GPUs aren’t neutered in any way, so you end up with 3072 cumulative CUDA cores (1536 * 2), 256 combined texture units (128 * 2), and 64 full-color ROPs (32 * 2).

Gone is the NF200 bridge that previously linked the GF110 GPUs on Nvidia’s GeForce GTX 590. That component was limited to PCI Express 2.0, and these new graphics processors beg for a third-gen-capable connection. Rather than engineering its own solution, though, Nvidia turned to PLX for one of its PEX 874x switches. These PCIe 3.0-capable switches accommodate 48 lanes (16 to each GPU and 16 to the bus interface) and operate at low latencies (down to 126 ns). What about Broadcast and PW Short, the features NF200 incorporated to augment multi-GPU performance? According to Nvidia, those capabilities were folded into the PCIe 3.0 specification, so GeForce GTX 690 gets them through the PLX component.
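To put the PCIe 2.0 versus 3.0 distinction in numbers, here is a back-of-the-envelope sketch of usable one-direction bandwidth for an x16 link. It assumes the published signaling rates (5 GT/s for PCIe 2.0, 8 GT/s for PCIe 3.0) and their line encodings (8b/10b and 128b/130b), and it ignores packet and protocol overhead, so real throughput is somewhat lower. The function name is illustrative, not part of any Nvidia or PLX tool.

```python
def pcie_bandwidth_gbs(rate_gt_s: float, payload_bits: int, line_bits: int,
                       lanes: int = 16) -> float:
    """Approximate one-direction PCIe bandwidth in GB/s.

    Each lane moves one bit per transfer; the encoding ratio strips
    line-code overhead, and dividing by 8 converts bits to bytes.
    Protocol (packet header) overhead is ignored.
    """
    encoding_efficiency = payload_bits / line_bits
    return rate_gt_s * encoding_efficiency * lanes / 8


gen2_x16 = pcie_bandwidth_gbs(5.0, 8, 10)     # PCIe 2.0, 8b/10b encoding
gen3_x16 = pcie_bandwidth_gbs(8.0, 128, 130)  # PCIe 3.0, 128b/130b encoding

# The PLX switch's 48 lanes: an x16 link to each GPU plus x16 upstream.
switch_lanes = 16 * 2 + 16

print(f"PCIe 2.0 x16: {gen2_x16:.2f} GB/s")  # PCIe 2.0 x16: 8.00 GB/s
print(f"PCIe 3.0 x16: {gen3_x16:.2f} GB/s")  # PCIe 3.0 x16: 15.75 GB/s
print(f"Switch lanes: {switch_lanes}")        # Switch lanes: 48
```

The near doubling of per-lane throughput is what a PCIe 2.0-only bridge like NF200 would leave on the table.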

Each graphics processor has its own 256-bit memory bus, on which Nvidia drops 2 GB of GDDR5 memory. The company says it’s using the same 6 GT/s memory found on its GeForce GTX 680, able to push more than 192 GB/s of bandwidth per graphics processor. Core clocks on the GK104s drop a little, though, from a 1006 MHz base down to 915 MHz. Setting a lower guaranteed base clock is a concession needed to duck under a 300 W TDP. However, in cases where that power limit isn’t being hit, Nvidia rates GPU Boost at up to 1019 MHz, just slightly lower than a GeForce GTX 680’s 1058 MHz spec.
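The memory and clock figures above reduce to simple arithmetic. This sketch assumes a nominal 6 GT/s data rate (the "more than 192 GB/s" figure presumably reflects an effective rate slightly above that) and compares the GTX 690’s clocks against the GTX 680 figures quoted in this article; the helper names are illustrative.

```python
def memory_bandwidth_gbs(data_rate_gt_s: float, bus_width_bits: int) -> float:
    """Peak memory bandwidth in GB/s: transfers per second times bytes per transfer."""
    return data_rate_gt_s * bus_width_bits / 8


def percent_drop(reference_mhz: float, new_mhz: float) -> float:
    """How far new_mhz falls below reference_mhz, as a percentage."""
    return (reference_mhz - new_mhz) / reference_mhz * 100


per_gpu = memory_bandwidth_gbs(6.0, 256)  # 192.0 GB/s per GK104
board_total = 2 * per_gpu                 # 384.0 GB/s summed across both GPUs

base_drop = percent_drop(1006, 915)    # GTX 680 base -> GTX 690 base
boost_drop = percent_drop(1058, 1019)  # GTX 680 boost -> GTX 690 boost

print(f"Per GPU: {per_gpu:.0f} GB/s, board: {board_total:.0f} GB/s")
# Per GPU: 192 GB/s, board: 384 GB/s
print(f"Base clock: {base_drop:.1f}% lower, GPU Boost: {boost_drop:.1f}% lower")
# Base clock: 9.0% lower, GPU Boost: 3.7% lower
```

Note that the 384 GB/s board total is a sum across two separate pools: each GK104 reads only its own 2 GB. The clock math also shows why the boost deficit matters more in practice than the roughly 9% lower base clock.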


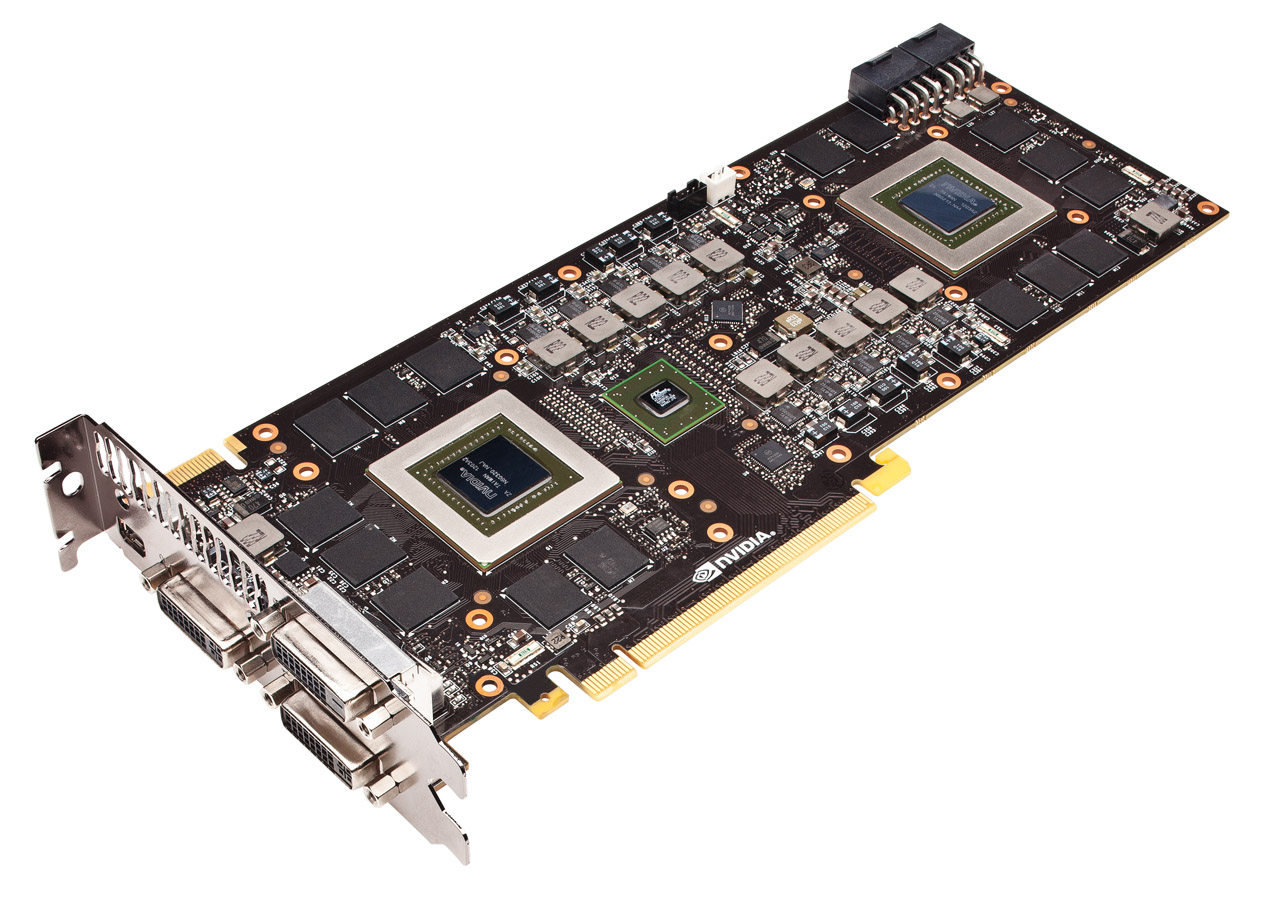

Power is actually an important part of the GeForce GTX 690’s story. Nvidia’s GeForce GTX 590 bore a maximum board power of 365 W, while its one 75 W slot and two 150 W eight-pin plugs could deliver 375 W, leaving the 590 mighty close to its ceiling. But because the Kepler architecture takes such a profound step forward in efficiency, Nvidia is able to tag its GTX 690 with a 300 W TDP. And yet the 690 still includes two eight-pin inputs able to deliver up to 375 W, including the slot.
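The power comparison is easiest to see as headroom between the connector budget and each board’s rating. This sketch uses the limits cited above (75 W from the slot, 150 W per eight-pin plug); the function name is illustrative. Like the text, it compares the GTX 590’s maximum board power with the GTX 690’s TDP, which are not identical metrics, so treat the gap as indicative.

```python
SLOT_W = 75        # PCIe x16 slot limit
EIGHT_PIN_W = 150  # per eight-pin auxiliary connector


def connector_budget_w(eight_pin_plugs: int) -> int:
    """Maximum in-spec power deliverable to a card: slot plus eight-pin plugs."""
    return SLOT_W + EIGHT_PIN_W * eight_pin_plugs


# Both cards use two eight-pin plugs, so they share the same budget.
budget = connector_budget_w(2)
ratings_w = {"GeForce GTX 590": 365, "GeForce GTX 690": 300}
headroom_w = {card: budget - rating for card, rating in ratings_w.items()}

for card, spare in headroom_w.items():
    print(f"{card}: {spare} W of {budget} W unused")
# GeForce GTX 590: 10 W of 375 W unused
# GeForce GTX 690: 75 W of 375 W unused
```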

Consequently, Nvidia representatives claim there’s significant headroom to push the GeForce GTX 690 beyond the performance of two GeForce GTX 680s. At stock power and clock settings, though, we’re expecting it to be a little slower than a pair of single-GPU GTX 680s in SLI. The good news is that one GeForce GTX 690 should use markedly less power and run more quietly than that pair. Nvidia says it is deliberately binning low-leakage parts to minimize heat, and its own measurements put a GeForce GTX 690 at 47 dB(A) under load, compared to 51 dB(A) for the two 680s.
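Because decibels are logarithmic, the 4 dB(A) gap in Nvidia’s numbers is larger than it looks. A minimal sketch, assuming the standard 10 × log10 definition for intensity levels and the common rule of thumb that +10 dB sounds roughly twice as loud (a psychoacoustic approximation, not an Nvidia figure); function names are illustrative.

```python
def intensity_ratio(louder_db: float, quieter_db: float) -> float:
    """Ratio of acoustic intensity implied by a difference in decibels."""
    return 10 ** ((louder_db - quieter_db) / 10)


def perceived_loudness_ratio(louder_db: float, quieter_db: float) -> float:
    """Rule-of-thumb loudness ratio: every +10 dB sounds about twice as loud."""
    return 2 ** ((louder_db - quieter_db) / 10)


sli_680_db, gtx_690_db = 51.0, 47.0  # Nvidia's own load measurements
intensity = intensity_ratio(sli_680_db, gtx_690_db)
loudness = perceived_loudness_ratio(sli_680_db, gtx_690_db)
print(f"GTX 680 SLI: {intensity:.1f}x the intensity, ~{loudness:.2f}x as loud")
# GTX 680 SLI: 2.5x the intensity, ~1.32x as loud
```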


aviral: I was also thinking that maybe they were going to launch the GTX 690, but I was more sure they would launch a lower model than the 680. Instead they launched a top-end model before the lower ones. Really :)

But it is really nice to know that the 690, a beast of a card, has launched. :D

nieur: I think they launched the GTX 690 before versions below the GTX 680 because of yield issues with TSMC’s 28 nm process.

It would be hard to satisfy demand for mid-budget cards given their sheer volume.

Anyway, I liked the card but can only dream of having it.

J_E_D_70: Great that they're cannibalizing chips needed to get 680s out the door to paying customers so they can produce this monstrosity. Grrrr... At least wait till AMD comes out with a card that needs to be trumped. My nerd rage against nVidia is growing.

dragonsqrrl: Ouch... great-looking card, but I wasn't expecting the $1000 price tag. Love the design and construction of the shroud, using metal instead of plastic. It certainly looks like a beast of a card, no doubt with specs and performance to match. It'll probably end up within 10% of GTX 680 SLI performance.

Hazbot: (quoting bee144) "I know! They're getting greedy!"

Just because they priced it high doesn't necessarily mean they're greedy; maybe they went overkill with the technology.