Intel SSD DC P3700 800GB and 1.6TB Review: The Future of Storage

With the introduction of its SSD DC P3700, P3600, and P3500, Intel is giving us our first taste of the PCIe-based NVMe specification. We take the flagship P3700 for a drive in its 800 GB and 1.6 TB incarnations. Just how fast is the future of storage?

Intel's SSD DC P3700: Up Close and Personal

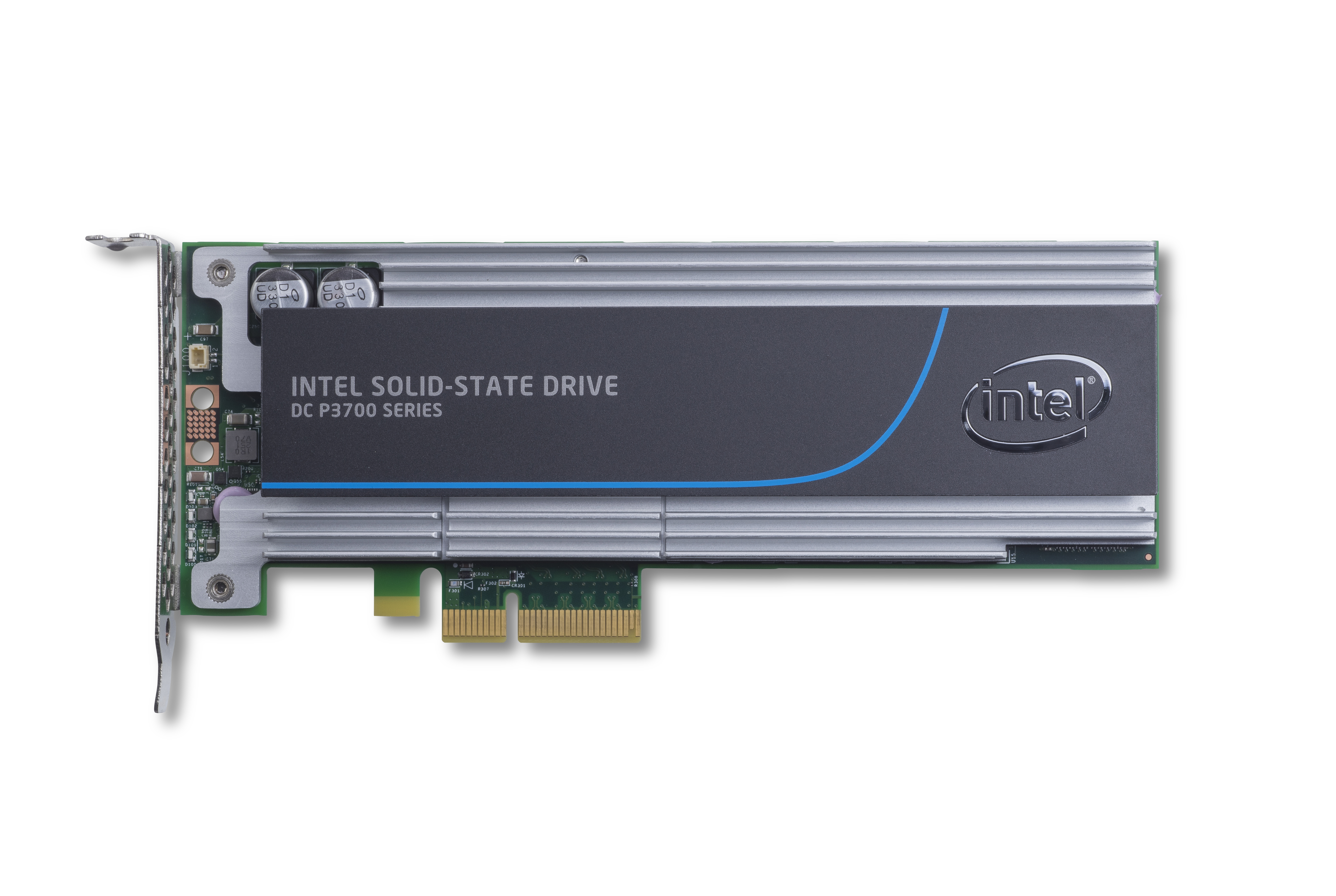

The SSD DC P3700 is an impressive-looking piece of hardware. The half-height, half-length PCIe x4 add-in card is dominated by a large heat sink. Intel doesn't employ any active cooling. It instead relies on airflow from chassis fans to keep this 25 W board under its thermal ceiling.

The drive isn't covered by just a dumb block of aluminum. Rather, what appears to be a decorative label actually conceals a plate that helps funnel air through the heat sink. It forms a channel that exploits the front-to-back cooling of most servers. Moreover, there's a second heat sink inside. It's dedicated to the controller and sits within the larger sink. The smaller heat sink extends beyond the bottom of the larger one and is held in place by an aluminum band. This allows for more consistent pressure on the processor, increasing the efficiency of heat transfer.

Why did Intel go through so much trouble designing this product's cooling? Like many PCIe-based SSDs and RAID cards, the SSD DC P3700 pulls the full 25 W allowed from a PCI Express slot. Thermal management is a priority though, and there are also lower-power modes that let you use this drive in systems not equipped with adequate cooling.

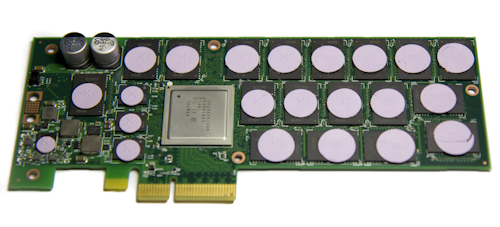

With the heat sink pulled off, you can see that the board is loaded with NAND packages (in total, our 800 GB model has 36). Each package hosts 20 nm Intel HET (High-Endurance Technology) MLC NAND. In the SSD DC P3700 series, this amount of raw flash adds up to about 25% spare area.
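To put that spare-area figure in perspective, here is a rough sketch of the arithmetic in Python. It is not based on Intel's documentation: the article doesn't say whether the 25% is measured against user capacity or against raw flash, so the hypothetical helper `raw_capacity_gb` below shows both conventions, and the resulting raw capacities are implied estimates rather than confirmed specifications.

```python
def raw_capacity_gb(user_gb: float, spare_fraction: float, relative_to: str = "user") -> float:
    """Estimate the raw flash implied by a user capacity and a spare-area fraction.

    relative_to="user": spare area is quoted as a fraction of user capacity.
    relative_to="raw":  spare area is quoted as a fraction of raw flash.
    Which convention applies to the P3700 is an assumption either way.
    """
    if relative_to == "user":
        return user_gb * (1 + spare_fraction)
    if relative_to == "raw":
        return user_gb / (1 - spare_fraction)
    raise ValueError("relative_to must be 'user' or 'raw'")


# 800 GB review unit with roughly 25% spare area, under both conventions.
for convention in ("user", "raw"):
    implied = raw_capacity_gb(800, 0.25, convention)
    print(f"spare relative to {convention} capacity: about {implied:.0f} GB of raw flash")
```

Either way, the drive carries a couple of hundred gigabytes of flash that is never exposed to the host.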

On the controller side of the PCB, all of the NAND and DRAM packages are topped with thermal gap pads that interface with the heat sink.

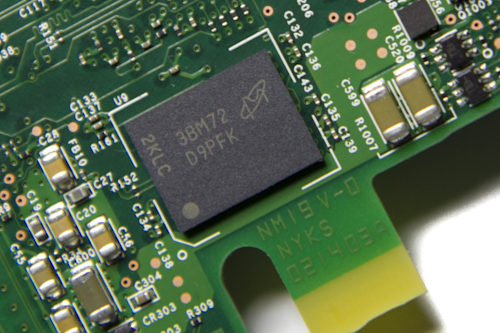

The SSD DC P3700 uses NAND we've seen on some of Intel's existing products. But the controller is all-new. A great many of the SATA-based SSDs we review employ an eight-channel design. The P3700's processor supports an astounding 18 channels and operates at 400 MHz. Naturally, you get a ton more parallelism, which plays to one of NVMe's strengths.

Our review unit also hosts 1.25 GB (256 MB x 5) of DDR3-1600 DRAM. The NAND and DRAM placement on both sides of the board is identical. They're almost mirror images of each other.

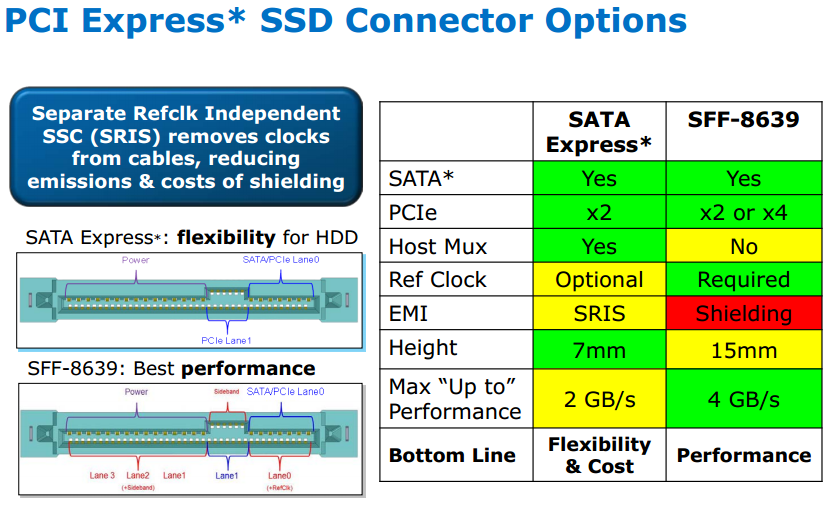

Intel's NVMe-based product line has one other trick up its sleeve. You can buy these drives to drop into a PCIe slot or in 2.5" enclosures. Now, you might be asking how to attach a 2.5" drive that communicates over PCIe, right? That's where the SFF-8639 connector specification comes in.

This enterprise connector specification is where the industry is heading. What might not be totally clear is that a single SFF-8639 connector supports current SATA and SAS drives and also carries PCIe signaling. The unused portion of the SATA/SAS connector exposes the PCI Express lanes, along with the required sideband signals and clocks. But while the connector supports multiple interfaces, it's up to the system manufacturer to expose the right hook-ups. You may see SFF-8639 drive bays limited to SATA/SAS or PCIe, for example. And don't expect this stuff on the desktop anytime soon. As of now, it's an enterprise-only specification.

We really like the table above because it also shows the specifics of SATA Express, which always comes up when we talk about NVMe and SFF-8639. Unlike SFF-8639, SATA Express requires a host mux in order to tell the system whether the drive is using SATA or PCIe for connectivity.

It's unfortunate that we don't have an SFF-8639-attached SSD DC P3700 to look at because we still have a few concerns. Although the form factor is rated at the same performance as an add-in card, its environmental specs are completely different. Intel says that the PCIe board can handle between 0 and 55 °C. The 2.5" models are only rated for 0 to 35 °C ambient. The add-in card needs the typical 200-300 linear feet per minute (LFM) of airflow to stay within its range. Staying within 35 °C imposes more serious requirements. Intel's 2 TB model purportedly needs 650 LFM across the drive, for example. That could prove challenging, since most servers put storage up in the front of their enclosures and use fans to pull air over the device's surface.
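For readers more used to metric airflow figures, the short Python sketch below converts the linear-feet-per-minute numbers quoted above into meters per second. The helper name `lfm_to_mps` is ours, and the only constant involved is the definition of the foot (0.3048 m).

```python
FEET_TO_METERS = 0.3048  # exact, by definition of the international foot


def lfm_to_mps(lfm: float) -> float:
    """Convert airflow velocity from linear feet per minute to meters per second."""
    return lfm * FEET_TO_METERS / 60.0


# Airflow figures quoted in the article.
figures = [
    ("add-in card, low end", 200),
    ("add-in card, high end", 300),
    ("2 TB 2.5-inch model", 650),
]
for label, lfm in figures:
    print(f"{label}: {lfm} LFM = {lfm_to_mps(lfm):.2f} m/s")
```

By this conversion, the 2 TB 2.5" model's 650 LFM works out to about 3.3 m/s, more than double the air velocity at the top of the add-in card's 200-300 LFM range.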

xback: In the 1st table on page 1, the "4k random write IOPS" are reversed :)

(3500 scores highest, while the 3700 scores lowest)

redgarl: OCZ already went there and even made their own connector for providing more bandwidth to SSDs... just a shame that now Intel tries to remove the carpet from beneath the feet of OCZ. Well, old tech is new tech.

By the way, the OCZ RevoDrive was priced similarly, and I don't see that big a fuss from Tom's here.

Nuckles_56: "Intel's 2 TB model purportedly needs 650 LFM across the drive"

What the hell is LFM?

JeanLuc: The active power consumption numbers in the first table are wrong (I hope!). 35,000 watts active?

Edit:

It's not actually wrong. It might just be the out-of-date browser I'm using in the office, but for me the numbers aren't lining up correctly.

pjmelect, in reply to Nuckles_56 ("What the hell is LFM?"):

Linear Feet per Minute of airflow

Nuckles_56, in reply to pjmelect ("Linear Feet per Minute of airflow"):

Ah that makes sense now