Sandy Bridge-E: Core i7-3960X Is Fast, But Is It Any More Efficient?

Ironically, when it comes to performance, Intel’s Core i7-3960X is the real Bulldozer. Since its power consumption levels are lower than the Gulftown-based Core i7, it should also deliver amazing performance per watt. Is that really the case?


Is Core i7-3960X An Efficiency Winner?

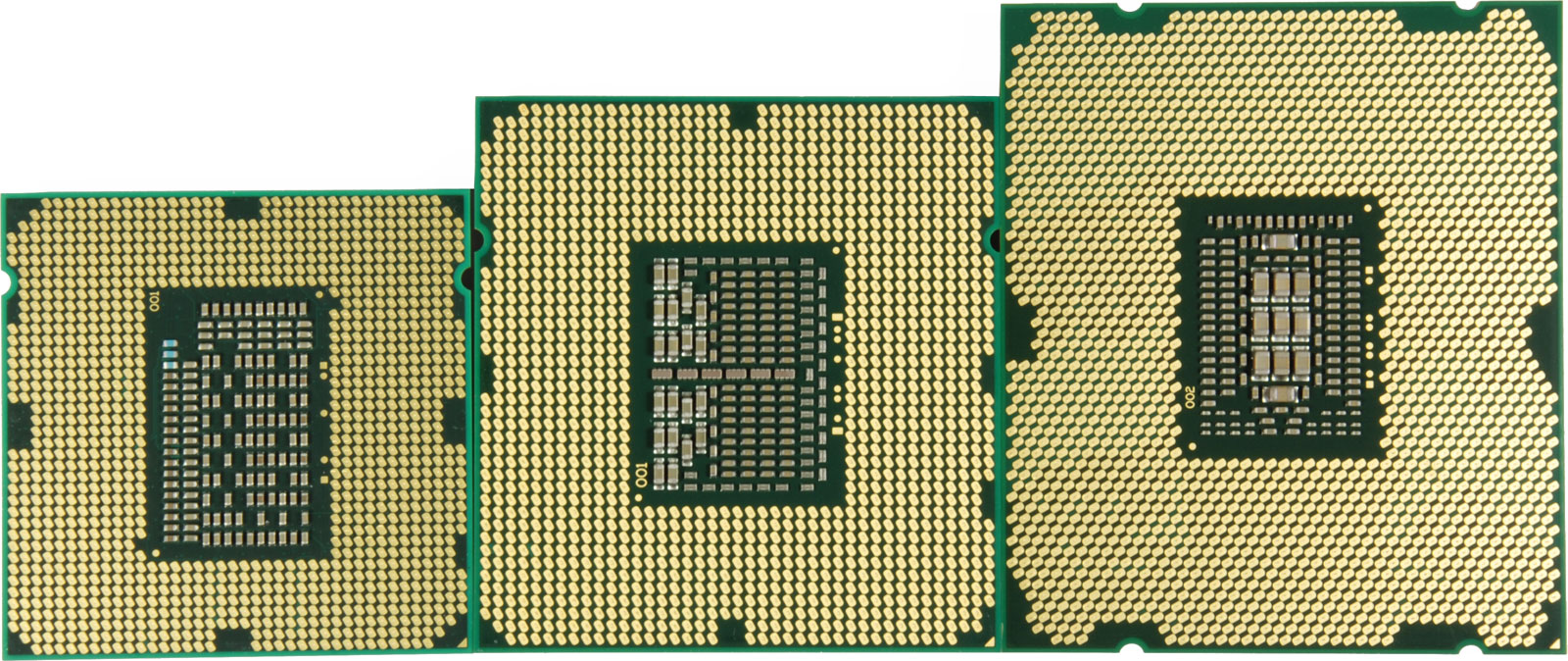

Because we just reviewed Core i7-3960X, most of the performance results in this article don’t come as a surprise. Intel’s latest desktop processor is a beast in that it delivers unmatched performance per clock with the added benefit of six cores. As a result of its architecture and scalable ring bus, the beefed-up Sandy Bridge-E enables an additional two cores on the desktop, and will soon facilitate up to two more on the Xeon E5 family. Although a quarter of its available shared L3 cache is disabled, access to 15 MB represents a substantial increase from Gulftown's 12 MB. And clock rates as high as 3.9 GHz with one or two cores active (by virtue of Turbo Boost) augment performance in less-optimized apps. Otherwise, the technology manages to push a 3.3 GHz base clock up to 3.6 GHz, even when the chip is fully loaded.

All of this is made possible at lower idle power use compared to last generation's hexa-core flagship. And, armed with a modest Radeon HD 6850, system power use drops as low as 62 W. Even a high-end GeForce GTX 580 doesn't push the machine beyond 90 W at idle. Considering that older systems often draw more than 120 W, it's clear that Intel's Sandy Bridge design pushed beyond simply increasing performance.
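To put those idle figures in perspective, here is a minimal sketch that converts a constant power draw into energy over a year. The 62 W and 120 W values come from the paragraph above; the function name and the hours-per-day parameter are assumptions for illustration, and a real machine does not sit at idle around the clock.

```python
def annual_energy_kwh(watts: float, hours_per_day: float = 24.0) -> float:
    """Energy in kWh used over 365 days at a constant draw (simplifying assumption)."""
    return watts * hours_per_day * 365 / 1000.0


# Idle draw quoted in the text: Sandy Bridge-E system with a Radeon HD 6850.
idle_sbe_kwh = annual_energy_kwh(62)
# Idle draw quoted in the text for older systems (the text says "more than" 120 W).
idle_old_kwh = annual_energy_kwh(120)

saved_kwh = idle_old_kwh - idle_sbe_kwh
print(f"Idle energy difference over a year: {saved_kwh:.1f} kWh")
```

Multiplying the result by a local electricity price gives a rough cost figure; passing a smaller `hours_per_day` models a machine that is only powered on part of the day.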

However, peak system power consumption goes up quite a bit. This is attributable to the large, complex die and the fact that it's pushed hard by technologies like Turbo Boost to maximize performance in every conceivable workload. Because we used a capable closed-loop liquid cooling system, the processor's maximum speeds were maintained for longer intervals than on average air-cooled solutions. We're not looking at an affordable quad-core LGA 1155-based chip here; expensive cooling is the price enthusiasts have to pay for the extra performance.

Sandy Bridge-E is the undisputed performance winner, and, for the first time, I'd label this high-end configuration reasonable with regard to idle power use. However, two additional cores and the extra cache on a very large die impose greater power requirements under load than they add to the performance charts. As a result, Intel's mainstream Sandy Bridge processors end up outshining Sandy Bridge-E in every measure of efficiency. Unless you really need a hexa-core platform for its raw performance (power be damned), existing Core i5 and Core i7 chips able to drop into LGA 1155 are more sensible solutions.
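The trade-off described here can be illustrated with a little arithmetic. One common way to express efficiency is the energy needed to finish a fixed workload: average power multiplied by run time. The sketch below uses hypothetical placeholder numbers, not measurements from this review, and the `Run` class and its field names are invented for illustration. It shows how a faster chip can still use more energy per task when its power draw rises more than its run time falls.

```python
from dataclasses import dataclass


@dataclass
class Run:
    name: str
    runtime_s: float    # time to finish the same fixed workload, in seconds
    avg_power_w: float  # average system power during the run, in watts

    @property
    def energy_wh(self) -> float:
        """Energy used for the whole run, in watt-hours."""
        return self.avg_power_w * self.runtime_s / 3600.0


# Hypothetical placeholder values, NOT results from this article.
quad = Run("quad-core (hypothetical)", runtime_s=1000.0, avg_power_w=120.0)
hexa = Run("hexa-core (hypothetical)", runtime_s=800.0, avg_power_w=180.0)

for run in (quad, hexa):
    print(f"{run.name}: {run.runtime_s:.0f} s, {run.energy_wh:.1f} Wh")
```

In this made-up case the six-core system finishes 1.25 times faster but draws 1.5 times the power, so it consumes more energy for the same job. That is the pattern the conclusion describes: more performance, but lower efficiency.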

For obvious reasons, efficiency does not scale linearly with core count, necessitating a revised answer to my initial question: Sandy Bridge-E delivers more efficiency than other six-core processors. However, it's hard to make that -E represent efficiency. If that's the metric you're looking to optimize, drop the -E suffix altogether, and save money now (on the hardware) and over time (on power) with Intel's year-old mainstream Sandy Bridge processors.


Patrick Schmid was the editor-in-chief for Tom's Hardware from 2005 to 2006. He wrote numerous articles on a wide range of hardware topics, including storage, CPUs, and system builds.


fstrthnu: Aand yet more evidence that most people looking for a high-end processor will be perfectly fine with the i5-2500K or the 2600K

sam_fisher (quoting fstrthnu): "Aand yet more evidence that most people looking for a high-end processor will be perfectly fine with the i5-2500K or the 2600K"

I guess it just depends on what you're doing. If you have a high end workstation and are using programs that are going to utilise all 12 threads, quad channel memory and 40 lanes of PCIe, and you need that processing power then it's probably not a bad investment. Whereas for most users the 2500K or the 2600K will do fine.

benikens (quoting the article): "Ironically, when it comes to performance, Intel’s Core i7-9360X is the real Bulldozer. Since its power consumption levels are lower than the Gulftown-based Core i7, it should also deliver amazing performance per watt as well. Is that really the case?"

It's i7-3960x, not i7-9360x

pwnorbpwnd: Correct me if I'm wrong but isn't the 6850 a Barts card? Unless I am wrong but I own a 6850.

one-shot: There is a small typo on Page 9

"Total power used drops again relative to Cor ei7-3960X's predecessor, the Core i7-980X (Gulftown)."

Shape (quoting the article): "Ironically, when it comes to performance, Intel’s Core i7-9360X is the real Bulldozer."

ROFL!!! Very well said!

Nice!

de5_Roy: another informative, in-depth article about efficiency. great work guys!

3960x might very well be the $1k cpu that's worth the (over)price unlike the older 980x.

sb-e shows that both single threaded and multi threaded performance as well as efficient power use can be achieved by a 32nm, 6 core, 130 tdp cpu (but you gotta pay a lot for that).

when you bring price into the equation, quad core sb i5 and i7(95w tdp) are the best way to go (i wonder how an i7 2700k fare if it was tested alongside these cpus).

agnickolov: And I was so hoping Visual C++ had made it into the regular benchmark set. Sadly, it's missing here...

giovanni86: Looking forward to seeing what type of Air/liquid cooled Overclocks can be achieved with these newly released processors.