Tigo T-One 240GB Low Cost SSD Review


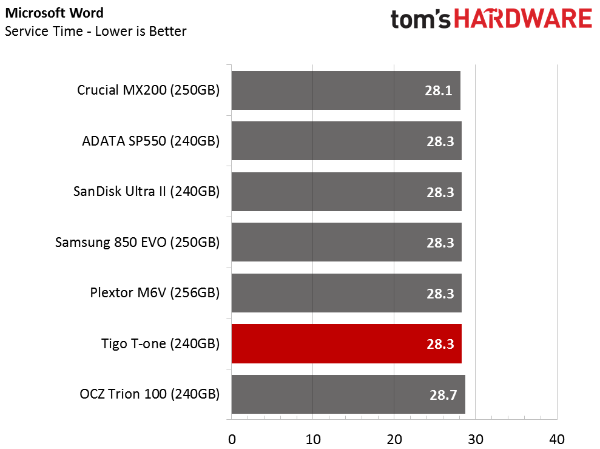

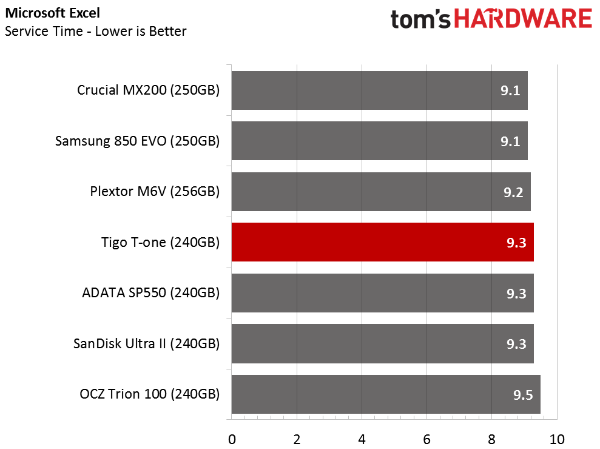

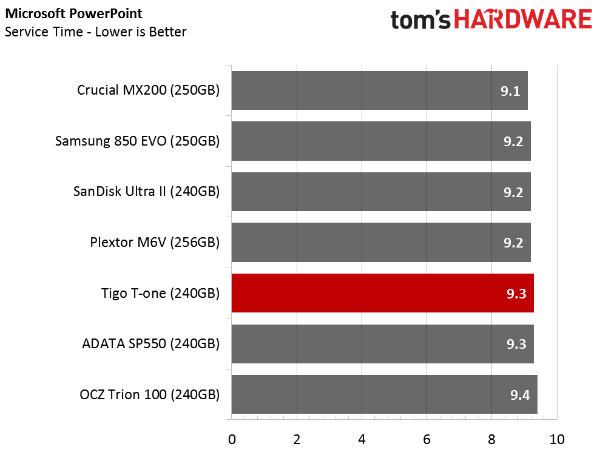

Real-World Software Performance

PCMark 8 Real-World Software Performance

For details on our real-world software performance testing, please click here.

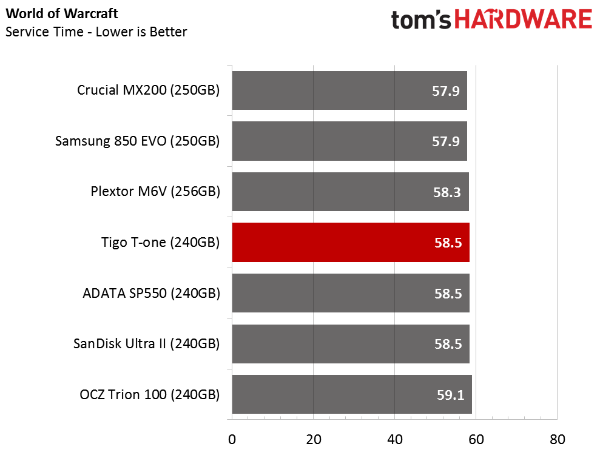

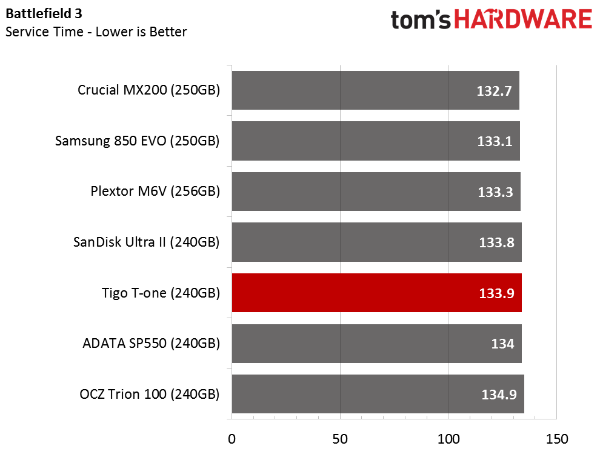

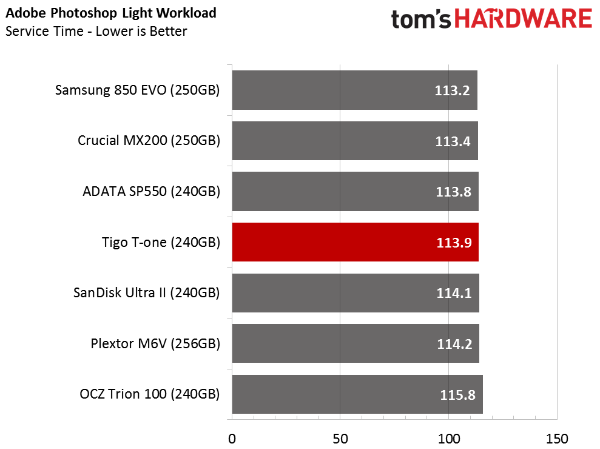

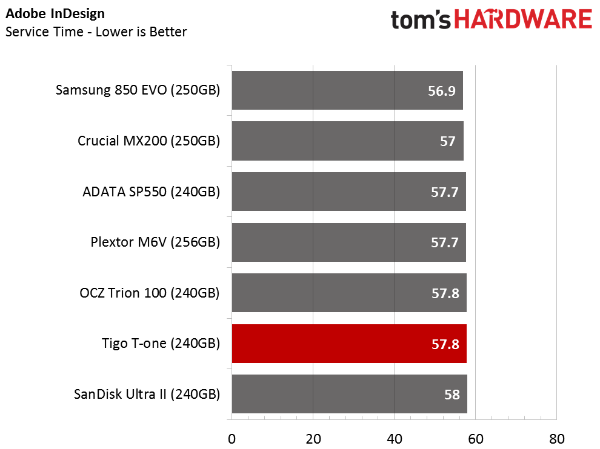

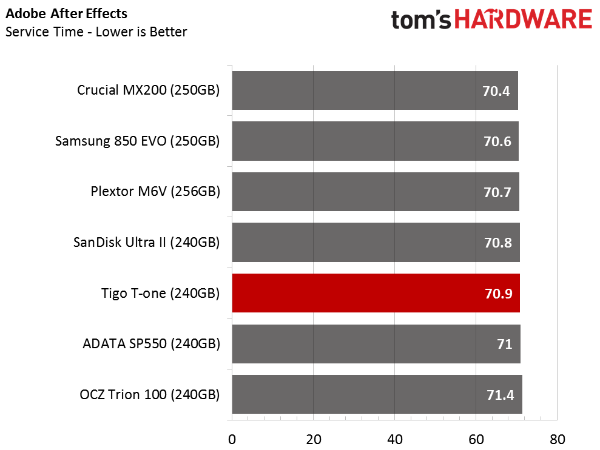

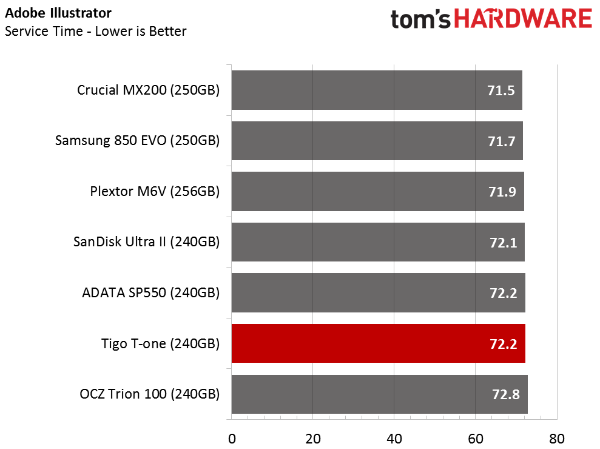

Once you move away from heavy workloads, the 240GB T-One performs well enough in the real world.

In this series of tests, Tigo's drive replaces the 256GB Mushkin Reactor. I was surprised to see how closely the two SSDs matched each other in nearly every test. As a reminder, the Reactor employs MLC flash and Silicon Motion's previous-gen controller.

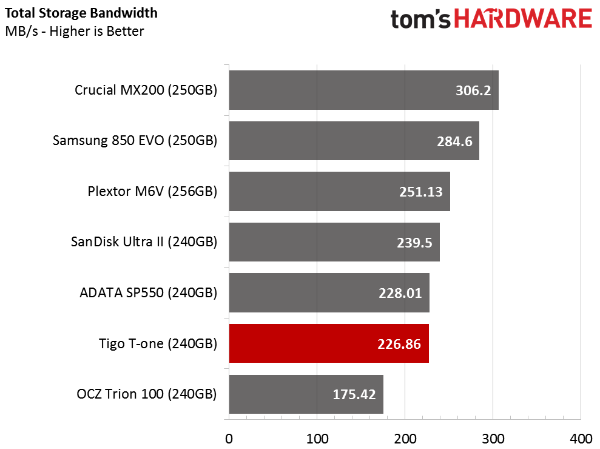

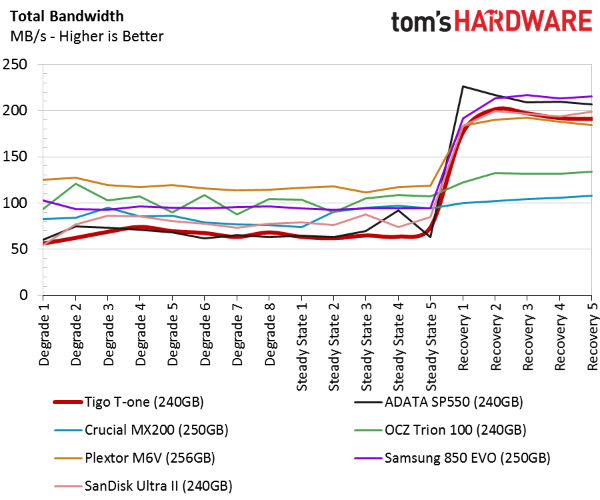

Total Storage Bandwidth

With our results averaged and converted to throughput, Tigo's T-One falls nearly to the bottom of our chart, outperforming just one other drive.
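As a rough illustration of what "averaged and converted to throughput" can mean, here is a minimal sketch. It assumes throughput is bytes transferred divided by the time the drive was busy, and that per-trace figures are then averaged; the function names and the sample numbers are hypothetical and are not taken from our test data or from PCMark 8 itself.

```python
from statistics import mean


def throughput_mb_s(bytes_transferred: int, busy_seconds: float) -> float:
    """Convert one trace result to MB/s (1 MB = 10**6 bytes)."""
    if busy_seconds <= 0:
        raise ValueError("busy_seconds must be positive")
    return bytes_transferred / busy_seconds / 1e6


def average_throughput(traces) -> float:
    """Average the per-trace throughput over (bytes, busy_seconds) pairs."""
    return mean(throughput_mb_s(b, s) for b, s in traces)


# Hypothetical trace results: (bytes transferred, seconds the drive was busy).
sample_traces = [
    (2_000_000_000, 10.0),
    (1_500_000_000, 5.0),
]

if __name__ == "__main__":
    print(f"{average_throughput(sample_traces):.1f} MB/s")
```

Averaging the per-trace figures weights every trace equally; dividing total bytes by total busy time would instead favor the longer traces, so the two approaches can rank drives slightly differently.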

PCMark 8 Advanced Workload Performance

To learn how we test advanced workload performance, please click here.

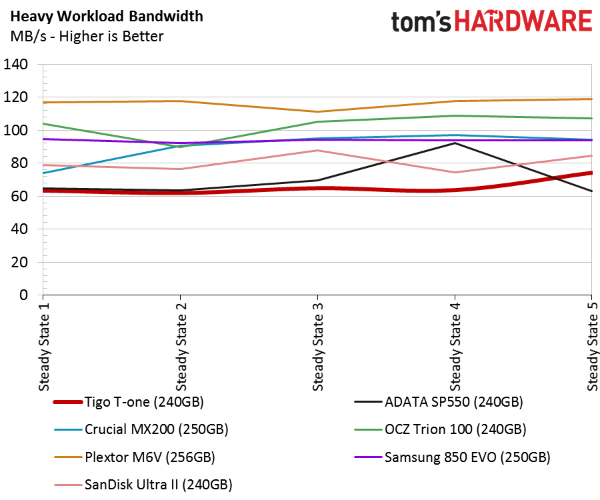

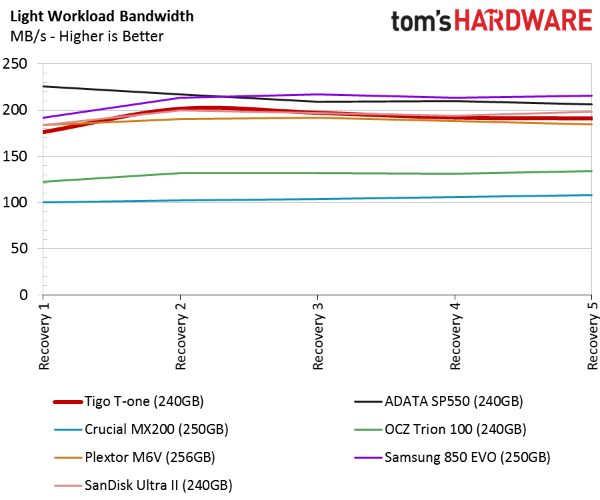

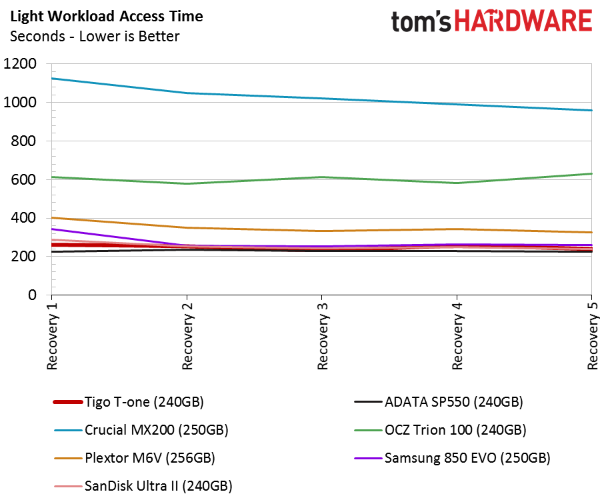

We only want to focus on lighter workloads, since that's what these low-cost SSDs were designed to address. Here, the T-One rises to the top when all of the drives are forced to operate with very little idle time between tests.

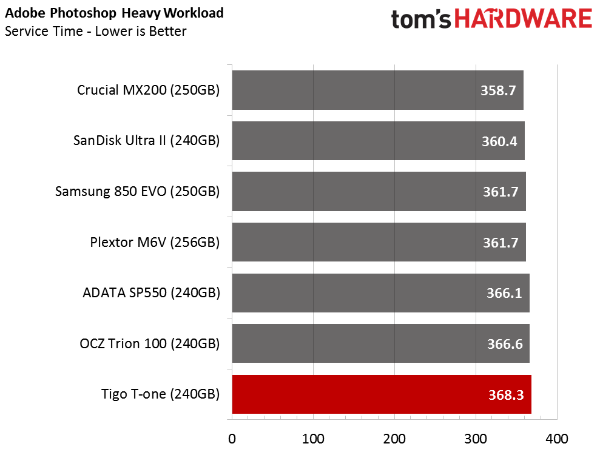

Of course, heavy workloads tell another story entirely...


Access Time Test

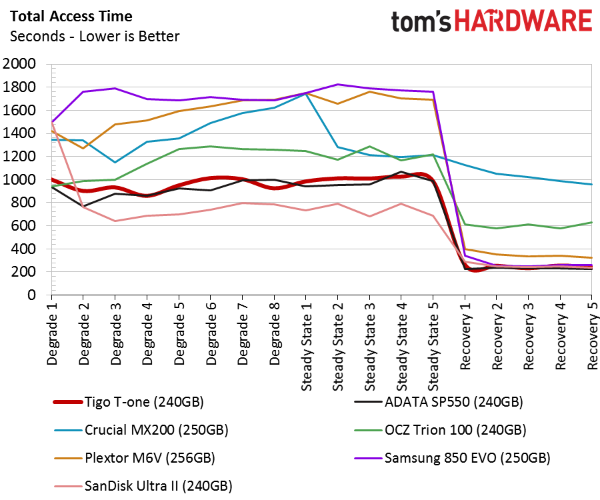

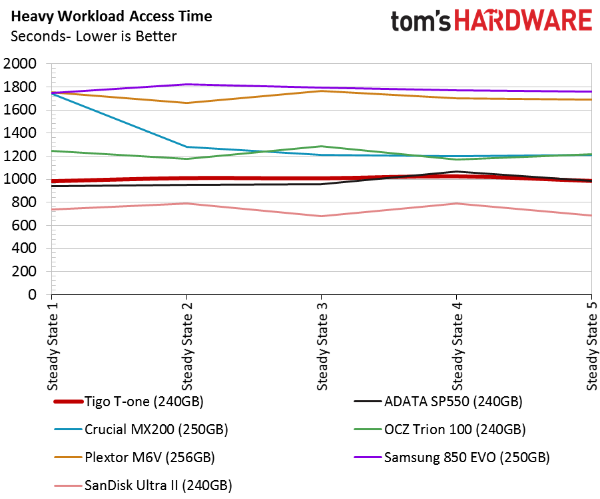

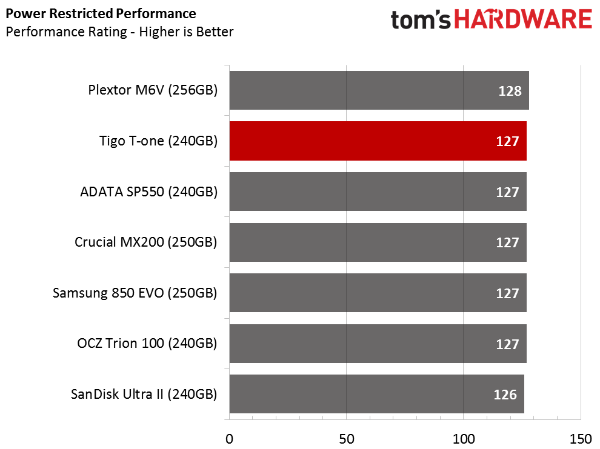

The T-One fares well when we look at access times from all of the tests combined. This is one of the most important metrics we run, though it's important to remember that premium SSDs report half the latency of the drives in this chart.

Notebook Battery Life

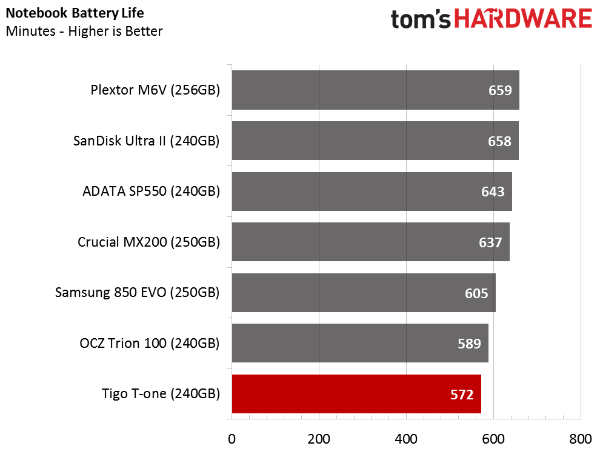

Of the tests we performed on Tigo's T-One, this was the most shocking. Companies spend a lot of time tuning firmware and building circuits to consume less power. Tigo claims this SSD supports the DevSlp low-power mode, but there is obviously a power consumption problem in play.


Chris Ramseyer was a senior contributing editor for Tom's Hardware. He tested and reviewed consumer storage.


James Mason Would have liked to see a price-to-performance chart comparing all the drives tested.

darcotech The price of the Samsung EVO 850 250GB is 88.67 USD, not 149.99, and it could probably be found even cheaper.

Buying a cheap SSD from a non-established company is a big no for me. My data is on it, and I want to feel safe (even if I have a backup).

joex444 Even if you wanted to spend just $61 on a 240GB SSD, there are other options. PNY, AData, and Kingston all offer 240GB SSDs *lower* than $61. These are all companies that have been around for a good amount of time whereas Tigo is a completely unknown company from China, which tends to be a source of lower quality parts compared to S. Korea, Japan, or Taiwan.

shrapnel_indie Tektronix is an old U.S. company that still exists. I hope the opening statements were about when Tigo started and not about Tektronix, which got its start in 1946.

http://www.tek.com/about-us

RobinEricsson > Tigo is a division of Tektronix

Tektronix, as in the electronics test and measurement tool company (http://www.tek.com/ )? Are you sure about that?

It doesn't seem that computer memory and oscilloscopes are closely related. Plus the website of Tektronix's parent company, Danaher, doesn't list Tigo or its worldwide brand Kimtigo as one of the companies in its portfolio (http://www.danaher.com/our-businesses/business-directory ).

captaincharisma It's like every computer-related company in the world is selling its own SSDs now.

mapesdhs I recently tested a Gloway SSD (a model eBay keeps pushing in its "other items like this" listings), easily one of the worst models I've ever come across, terrible write speeds. Definitely avoid.

rhysiam Your verdict seems a little scare-mongering and introduces a theme which (unless I've missed it) wasn't addressed anywhere else in the review: "you need to decide if losing data is worth the $20 you saved".

What are you basing that on? Have you got data or theory or at least personal experience to back that up?

That line made me go and read the article, assuming I'd find a story of multiple failed drives during your testing process, but I can't see a single sentence about reliability or data retention in the article (correct me if I'm wrong!). You shouldn't really advise your readers to avoid a drive because it'll lose their data if you haven't actually addressed or supported that assertion in the article itself.

CRamseyer Here is the deal with reliability. The big names in the industry do not cut corners. Samsung, Intel, Micron/Crucial, SK Hynix, Toshiba and SanDisk are the fab companies. They make the flash and they also get first pick from production. These companies also know which features in the memory to enable or disable to increase reliability, performance and so on. Most of the extra switches have to do with the ECC (linked to reliability).

I kill SSDs all of the time. It happens so often that I don't even think about it when it happens. Many of the companies send me early drives to test with pre-release firmware. I would estimate that 2 out of every 5 die during testing. Retail products have a much higher success rate. I may kill one in every 60 or so. My testing goes well beyond what anyone would consider normal use, so don't let the high percentage put you off buying a new SSD.

When a product review goes live, that isn't the end of my testing. At any time I'm developing 3 to 5 new tests for consideration in SSD or NAS reviews. Every once in a while you will see one of these tests in a regular review to show a corner case problem.

With that said, it's rare for a fab company SSD to fail. I'm not saying they never fail, just that the rate is much lower than for products from smaller companies. I have around 300 SSDs (maybe more) dating back to 2007, so we are not talking about a small sample size.

Last but not least, I've been in 4 test labs. Two of the companies were fab companies and two were not. The difference is night and day between what the big names in the industry do compared to what the smaller companies do.

That is not to say that I would never use SSDs from smaller companies. I do use them in my lab but when it comes to systems I keep valuable data on, I use fab company drives.

wh3resmycar ocz trion 100 goes for like $50 here in the philippines (240gb). i use my ssd for games mainly and considering i have a 100MB connection i can afford to have a broken ssd and redownload my whole library in a couple of hours.