Vista: Benchmarking or Benchmarketing?

Can Vista's Performance Indices Replace Benchmarking?

There is a lot of variety in the hardware market: hundreds of types of processors, motherboards, hard drives, graphics cards and other components to choose from. Consolidation has been going on for several years, leaving AMD/ATI, Intel and Nvidia as the main players alongside a few motherboard makers and storage giants, yet finding the right products can still be an exhausting task. This is where benchmarking comes into play: it measures a product's qualities and characteristics against a defined metric. Windows Vista now provides a built-in benchmarking solution to assess component and system performance, but does its Windows Experience Index correspond to what Tom's Hardware and other tech publications find on the test bench?

We will talk about benchmarking fundamentals on the following page, but I'd like to address the issue of synthetic vs. real-world benchmarks up front. So-called real-world benchmarks are typically considered the proper approach to technical benchmarking, because they use real applications to measure performance or assess capabilities. Although this creates a lot of work for reviewers, real-world software provides highly relevant results. A great example is our popular Interactive CPU Charts, which compile results from almost 30 different programs and benchmarks across most processors on the market. Simply select your application and you'll receive results that are relevant to you.

Yes, the CPU Charts also include synthetic benchmarks, which are designed to measure specific performance aspects of components. But a synthetic benchmark can be built with a particular focus in mind, producing test runs that may be less relevant, while real-world applications reflect what people actually use. The usual way to establish trust in a synthetic benchmark is to win industry-wide support for it, turning it into an industry-standard benchmark.

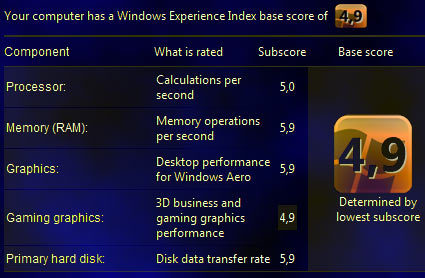

Microsoft is in an excellent position to create an industry-standard benchmark at the consumer level, because Vista will be the dominant operating system for new PCs by the middle of this year. A built-in benchmarking solution would not only allow users to assess and compare the performance of components and systems, but would also be a powerful marketing instrument for the whole industry. Microsoft developed a comprehensive but simple-to-understand rating scheme called the Windows Experience Index. It covers both performance and feature evaluation, expressed as a single number, and Microsoft would like to see performance indices printed on both hardware and software retail boxes. Users could then check whether their scores are good enough to run a software package they intend to buy, and compare possible hardware upgrades that would match or exceed the Experience Index the software requires.
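The retail-box check described above can be sketched in a few lines. This is a minimal sketch, assuming (as Microsoft describes for Vista) that the overall index is simply the lowest of the component subscores; the helper names, component scores and required index below are hypothetical values chosen for illustration, not measurements.

```python
from typing import Dict


def base_score(subscores: Dict[str, float]) -> float:
    """Overall index, assumed here to equal the lowest component subscore."""
    return min(subscores.values())


def weakest_component(subscores: Dict[str, float]) -> str:
    """Name of the component with the lowest subscore (the first upgrade candidate)."""
    return min(subscores, key=subscores.get)


def meets_requirement(subscores: Dict[str, float], required: float) -> bool:
    """True if the system's overall index reaches the index printed on a software box."""
    return base_score(subscores) >= required


# Hypothetical example system; these subscores are illustrative, not measured.
system = {
    "Processor": 4.8,
    "Memory": 4.5,
    "Graphics": 3.1,
    "Gaming graphics": 3.4,
    "Primary hard disk": 5.1,
}

print(base_score(system))               # 3.1
print(weakest_component(system))        # Graphics
print(meets_requirement(system, 3.0))   # True
print(meets_requirement(system, 4.0))   # False
```

Under this assumption, taking the minimum rather than an average means a single slow component caps the overall number, so upgrading the weakest part is the only way to raise it.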

The advantages of such an all-in-one benchmarking solution are pretty obvious, but how well does the Windows Experience Index really reflect real-life performance? As the name suggests, it is an experience index designed specifically for Windows Vista. We decided to check how the Vista results compare to our own in-depth benchmarking.

Patrick Schmid was the editor-in-chief for Tom's Hardware from 2005 to 2006. He wrote numerous articles on a wide range of hardware topics, including storage, CPUs, and system builds.