Conversations As A Platform: Microsoft’s Vision Of People, Bots And Digital Assistants

Among the many announcements here at Microsoft’s Build developer conference in San Francisco, the company spent a significant amount of time on a concept it called “conversation as platform,” which it believes will establish human language and machine intelligence as the next computing interface. Cortana lies at the heart of this expansive initiative, but it will also require artificial intelligence, machine learning, and bots that can appear in your everyday computing experiences, especially where your conversations happen.

To enable this, Microsoft is introducing a variety of tools, including the Microsoft Bot Framework and Skype Bot tools for developers, as well as Cognitive Services APIs. These are new additions to Microsoft's Cortana Intelligence Suite, which is a big data and machine learning initiative built on Microsoft's Azure cloud.

As part of this, and in more tangible terms, Microsoft is enhancing Cortana and making the digital assistant available in more places, including in Skype (available starting today). Microsoft also announced Skype for HoloLens. All of this, including the developer tools and client apps, is available starting today as previews, Microsoft said, although the Skype clients for all platforms are available now.

There’s much to digest here, and most of it is futuristic and depends heavily on the ecosystem of developers here at Build. We’ll try to break down just a few of the key facets and some examples. Also, Microsoft CEO Satya Nadella, who spoke frequently and at length about this concept, stressed the need for security and transparency in a world where personal digital assistants are interacting with bots and application processes (he speculated that bots would become the “new applications”) on your behalf. This context-aware assistance is based on an ever-deeper understanding of your behaviors and preferences, as well as context, both of the real world and of your specific conversations.

Nadella proclaimed that this didn’t have to be man versus machine, but rather man with machine.

Cortana Everywhere

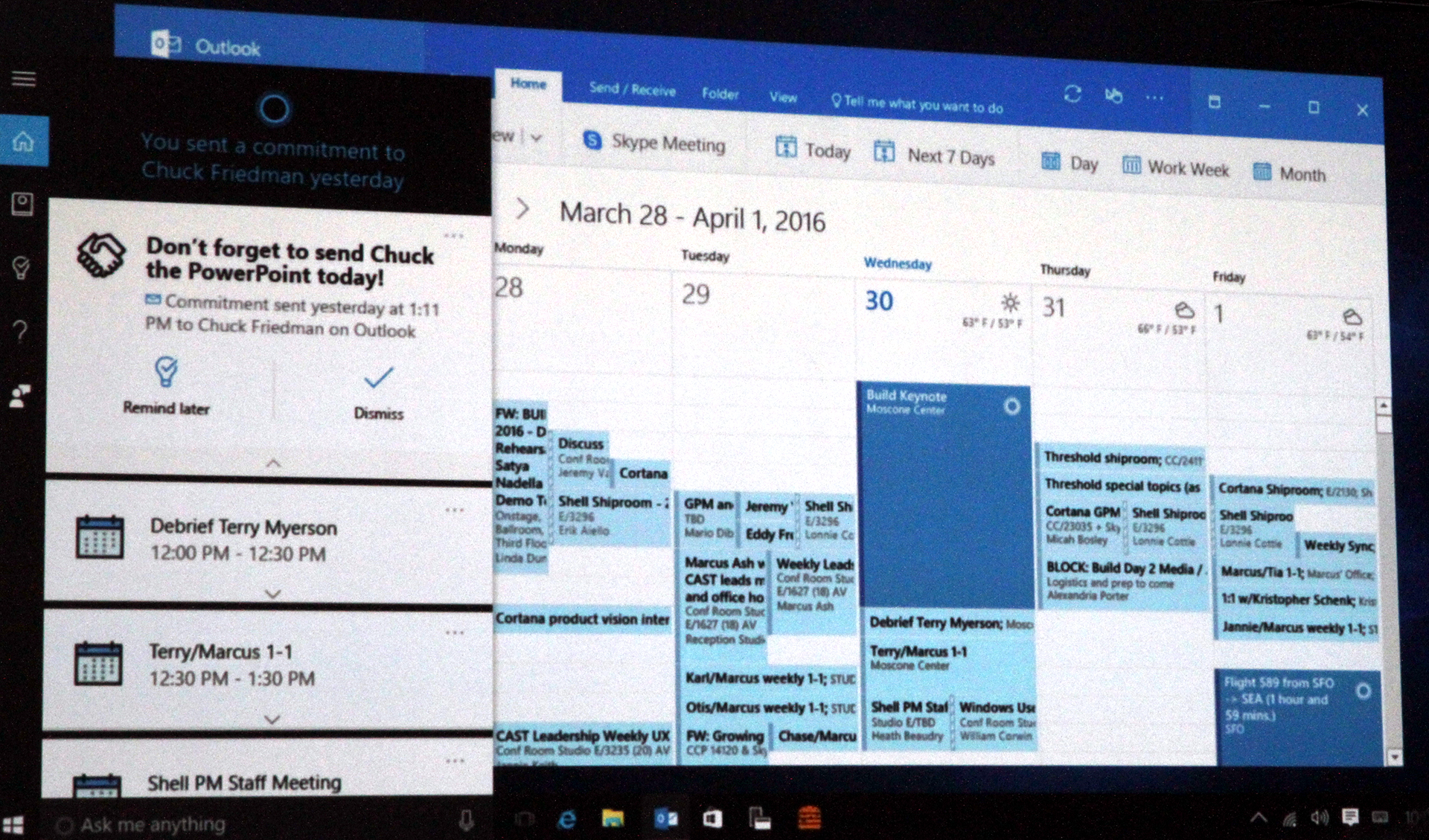

First, Cortana. Microsoft wants to infuse the personal assistant into all devices (it runs not just on Windows, of course) and make it part of many applications. For example, Cortana will now be a part of Outlook, able to look at your email and calendar (with your permission) and understand situational context in messages. Talk about a meeting, and Cortana can potentially schedule it for you. Talk about a flight, and Cortana can put it on the calendar. Talk about a task you promised to accomplish, and Cortana can get involved, finding and sending documents on your behalf. Get a taxi receipt via email, and Cortana can put it in your Microsoft Expense app.

And so on, including accomplishing some of these tasks on, or in conjunction with, Android and iOS devices running Cortana. As other apps also get Cortana integration, Cortana can begin to broker the interactions. Microsoft showed a couple of examples, one of which was Just Eat, a food pickup/delivery app that appeared as an option alongside a calendar appointment at lunchtime. (That is, Cortana can see that you're planning to lunch with a friend in a specific area and will offer up a suggestion for a restaurant to try.) These functions are called Proactive Actions, and developers are getting an invitation for a preview.

The Conversation Canvas

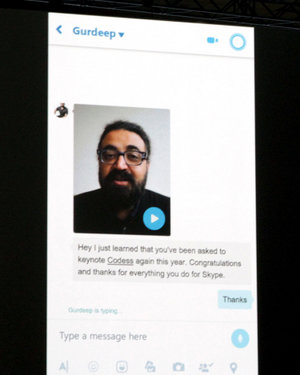

Microsoft sees many of these digital assistant and bot entities finding their way into our normal communication tools, like Skype, SMS, WeChat, Slack and email. Because Skype belongs to Microsoft, most of its demonstrations focused there. For example, in one Skype interaction, Microsoft demonstrated a video message (with a transcript of the video underneath it, created automatically using Skype Translator). As part of the dialog--a boss' congratulatory message--a bot from a local cupcake merchant kicked in, asking for permission to extract the user's location and make a delivery. It even offered an estimated delivery time.

There were more impressive examples, including interacting with bots to book a hotel room at a Westin in Ireland, but the point is that Microsoft envisions Skype and similar tools as a “conversation canvas,” and it envisions developers working with the Skype Bot SDK to make it happen.

As part of the Bot Framework tools, Microsoft also demonstrated how developers can use a built-in semantic dictionary to begin building natural language rules into their apps (er, bots). There will be a host of tools that allow developers to make their bots smarter through machine learning over time.
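To give a rough sense of what a "natural language rule" might look like at its very simplest, here is a toy sketch in Python. It does not use the Bot Framework, the Skype Bot SDK, or any Microsoft API; the intent names, the keyword lists, and the `match_intent` function are all hypothetical and invented for illustration. Real tools would presumably rely on learned language models rather than keyword overlap.

```python
import re
from typing import Optional

# Hypothetical intents and keyword sets, invented for this illustration only.
# They are not part of any Microsoft tool or dictionary.
INTENT_KEYWORDS = {
    "book_hotel": {"hotel", "room", "reservation", "book"},
    "order_food": {"cupcake", "cupcakes", "lunch", "delivery", "order"},
    "schedule_meeting": {"meeting", "schedule", "calendar"},
}


def match_intent(message: str) -> Optional[str]:
    """Return the intent whose keywords overlap the message most, or None."""
    # Lowercase the message and split it into simple word tokens.
    words = set(re.findall(r"[a-z']+", message.lower()))
    best_intent, best_score = None, 0
    for intent, keywords in INTENT_KEYWORDS.items():
        score = len(words & keywords)  # number of shared keywords
        if score > best_score:  # ties keep the earlier intent
            best_intent, best_score = intent, score
    return best_intent


if __name__ == "__main__":
    print(match_intent("Can you book a room at the Westin?"))
    print(match_intent("Hello there"))
```

A bot built this way would route a message to whichever handler matches the returned intent, and fall back to a clarifying question when nothing matches.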

Cognitive Services

Microsoft also announced a series of APIs (22 of them) as part of its Cognitive Services. These APIs are built around more general intelligence services (vision, speech, search, contextual knowledge and so on) that developers can build into their applications. Microsoft demonstrated some incredible early applications of this, such as the ability to take a picture and have it recognize the objects in it, but also to build information about the image using what it called CaptionBot.

In one demonstration, Microsoft showed off what it called CRIS, or custom recognition intelligence service, and compared a speech-to-text translation of a child speaking. Naturally, its analysis showed a much higher precision of interpretation based on its knowledge of child speech patterns.

Microsoft said that Cortana is involved in a million conversations each day. It's hard to know how many Cortana iOS or Android installs there have been, or how people are using it, but its growth, along with that of services like Google Now and Apple's Siri, shows some semblance of customer interest. Microsoft demonstrated today that it is trying to move beyond the neat parlor tricks of a voice-based search engine.

Fritz Nelson is the Editor-In-Chief of Tom's Hardware.

grimfox How much will all of these bots cost the users? Will you be billed per recommendation? More likely they will operate the same way Cortana does. They will sell your information to ad agencies, both specific and in aggregate, which will then target ads at you. You won't pay a dime for these "services," you'll pay in personal information. This is why Windows 10 is "free": they want as many people feeding information into Cortana as possible so they can sell that information. You aren't paying cash for Win10, you're paying in data.

hdmark I love the concept of Cortana but I wish it would reside fully on my desktop and not interact with Microsoft. It would be so convenient to have it going through all my stuff and helping me out, as long as it kept that information with me and didn't sell it.

Ilya__ (replying to grimfox) Looks like every company out there is switching to this profit model. Although, as far as I know, they do not really sell 'your' data; they sell stats they generated off your data.

warezme I'm not a jet-setting metropolitan hipster. 99% of these features are junk to me. I disable it all.

FritzEiv The questions/theories about what Microsoft is really up to are also on my mind. I've been trying to get some answers to questions around this. For example: What happens when/if there are thousands of Bots vying for my attention via Cortana (and Cortana in Skype and Cortana in Outlook and dozens of other Cortana-infused apps)? Will they (the Bot companies) eventually pay (like they do in a search query) for access to my eyeballs, now based on knowing a bunch of things about me that Cortana is tracking, seemingly for my own benefit but now for the benefit of paying advertisers (er, Bot makers)? Beyond the conspiracy theories, how can I arbitrate these services/Bots -- sure they are being served to me based on my favorite things, behavior, preferences, but do things start to get suggested to me? On the flip side, what about discovery? I may not want to do the things I've always done or use the things I've always used, but instead be offered some other ideas. (Microsoft did tell me they would have a "more" function that would let you do this.) Ultimately I think the lack of answers about this could just mean Microsoft hasn't figured it all out yet; on the other hand, they may have and don't want to talk about it. I don't necessarily want to stir the conspiracies here, but these are the questions I'm trying to get answered. Personally I'm excited about the intelligence this will bake into my experiences, but in light of our recent history of companies exploiting our personal data, I do start to wonder if I'll just end up opting out of this. Anyway, if folks want to submit (here) a list of questions for Microsoft, I'll keep working on this . . .

wifiburger Strange, the more articles coming from Microsoft, the more I ignore them. All this talk about visions, the future and their take on technology is pure nonsense. It's really annoying people and makes Microsoft look bad by ignoring current markets or throwing something in the market that doesn't sell.

grimfox IIRC the Cortana EULA states that your data can be used by Microsoft and by subsidiaries and can be sold to third parties. Here's an article that talks about Windows 10 privacy settings: http://thenextweb.com/microsoft/2015/07/29/wind-nos/#gref

The way everything is written, you can shut off those features and Microsoft won't have that information to sell, but if you activate them your data is not secured or limited to use by Microsoft. The text about what is collected is quite vague, and they make a note about it in the linked article.

I understand a lot of companies are switching to this model, and some are only using "aggregated data," but in this instance and probably many others that is not true. I'm saying it's wrong, potentially very dangerous, and we need to be aware of exactly what is going on. Privacy should be the default. If I want to release my data from Microsoft to Amazon or any other specific third party, I should have to explicitly agree for that to happen. It shouldn't be a catchall "I agree" check box on the EULA.