Computex 2007: Motherboard Mania

Gigabyte

All DQ6-type motherboards carry a 12-phase voltage regulator array. This decreases overall efficiency, but ensures a stable voltage supply at all times. We have found six phases to be a good number, but Gigabyte still goes for the maximum. Since its top motherboards regularly achieve good overclocking results, the strategy can't be wrong.

Gigabyte is showing off a very large number of products. The GA-X38T-DQ6 is its X38 sample motherboard that will support DDR3 memory. The interesting part is its voltage regulator array, which consists of 12 phases. The GA-M790-DQ6 uses the same 12-phase design and will be the new top model in the Socket AM2 product line for Athlon X2 and Phenom X2/X4 processors.

MSI: Back To Its Roots

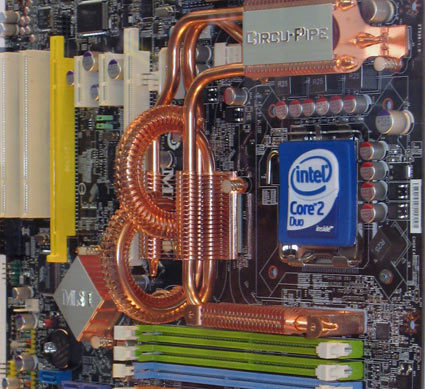

MSI's Circu-Pipe design offers a larger surface and works well according to our first test results. It looks funny, though...

After years of drought, MSI is finally doing better. The firm isn't losing money anymore, and it is focusing on what it does best: motherboards, graphics cards, notebooks and other components. My Style Inside may not disappear, but MSI has realized that reaching the brave new world of consumer electronics is more difficult than it had expected.

The Teraflop Flip: Graphics Go Super-Computing

Major changes are on the horizon for super-computing, involving AMD, Intel and Nvidia. However, how far these changes will reach is still under discussion, and the technology itself is still under development. AMD's Radeon HD 2900XT can easily calculate 475 billion floating point operations per second (475 GFLOPS). A CrossFire setup with a little overclocking has yielded over one trillion FLOPS, or 1 TeraFLOP (TFLOP); typically, this level can only be reached by expensive super-computers.
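As a rough sanity check on these figures, the sketch below reproduces the peak-throughput arithmetic. The 320 stream processors, the 742 MHz stock clock, the two FLOPs per multiply-add and the two-card CrossFire setup are assumptions brought in for illustration; the text above only quotes the 475 GFLOPS and 1 TFLOP totals. These are theoretical peaks, not measured results.

```python
# Assumed figures for illustration (not stated in the article).
STREAM_PROCESSORS = 320        # assumed shader unit count per card
FLOPS_PER_SP_PER_CLOCK = 2     # one multiply-add counted as two FLOPs
STOCK_CLOCK_MHZ = 742.0        # assumed stock core clock


def peak_gflops(clock_mhz, cards=1):
    """Theoretical peak throughput in GFLOPS for a given core clock."""
    flops_per_clock = cards * STREAM_PROCESSORS * FLOPS_PER_SP_PER_CLOCK
    return flops_per_clock * clock_mhz / 1000.0


def clock_for_target(target_gflops, cards):
    """Core clock in MHz needed to reach a theoretical peak target."""
    flops_per_clock = cards * STREAM_PROCESSORS * FLOPS_PER_SP_PER_CLOCK
    return target_gflops * 1000.0 / flops_per_clock


single = peak_gflops(STOCK_CLOCK_MHZ)            # one card at stock clock
dual = peak_gflops(STOCK_CLOCK_MHZ, cards=2)     # two cards at stock clock
needed_mhz = clock_for_target(1000.0, cards=2)   # clock for 1 TFLOP on two cards
overclock_pct = (needed_mhz / STOCK_CLOCK_MHZ - 1.0) * 100.0

print(f"single card: {single:.2f} GFLOPS")
print(f"two cards:   {dual:.2f} GFLOPS")
print(f"clock for 1 TFLOP: {needed_mhz:.2f} MHz (+{overclock_pct:.1f}%)")
```

Under these assumptions, two cards at stock speed fall just short of 1 TFLOP, which is consistent with the note that a little overclocking is needed to cross the line.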

Nvidia has a similar approach to super-computing using its G80 processor inside the GeForce 8800 series graphics cards. Deviating from traditional graphics, Nvidia is expected to announce a new use of this technology in combination with CUDA - the company's C compiling software - for combined GPU and CPU computing. Intel is also said to be working on its own approach, a special execution x86 architecture with graphics functionality, codenamed "Larrabee."

Neither vein of super-computing development is without its theoretical pitfalls. GPUs are not as "deep" as CPUs, meaning they cope less well with complex dynamic branching, so highly dynamic code will not run as quickly on a GPU as on a CPU. On the other hand, GPUs are very wide yet shallow calculators: if they have a lot of similar tasks to get done and don't require a lot of branching, a GPU will outperform a CPU. While we ponder the advantages and limitations, new hardware is currently being "taped out", which could move development in a whole new direction. We shall have to wait and see what happens.
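The "wide yet shallow" trade-off can be illustrated with a toy cost model. This is only a sketch under stated assumptions, not a description of how any particular GPU schedules work: the function name `simd_cycles`, the group width of 32 lanes and the per-branch costs are all hypothetical. The idea is that lanes in a group run in lockstep, so when they disagree on a branch the group has to execute both paths one after the other.

```python
def simd_cycles(lane_takes_branch, cost_if=4, cost_else=4, width=32):
    """Toy model: total cycles for lockstep groups of `width` lanes.

    Each entry of `lane_takes_branch` says whether that lane takes the
    `if` path. A group pays for every path that at least one lane needs.
    """
    cycles = 0
    for start in range(0, len(lane_takes_branch), width):
        group = lane_takes_branch[start:start + width]
        if any(group):          # at least one lane needs the `if` path
            cycles += cost_if
        if not all(group):      # at least one lane needs the `else` path
            cycles += cost_else
    return cycles


# 64 lanes that all take the same path: similar tasks, no divergence.
uniform = [True] * 64
# 64 lanes that alternate between paths: every group diverges.
divergent = [i % 2 == 0 for i in range(64)]

uniform_cost = simd_cycles(uniform)
divergent_cost = simd_cycles(divergent)
print(uniform_cost, divergent_cost)
```

In this model the divergent workload costs twice as much as the uniform one for the same number of lanes, which is the intuition behind GPUs doing best on large batches of similar, branch-light work.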
