PCI Express Battles PCI-X

Analysis

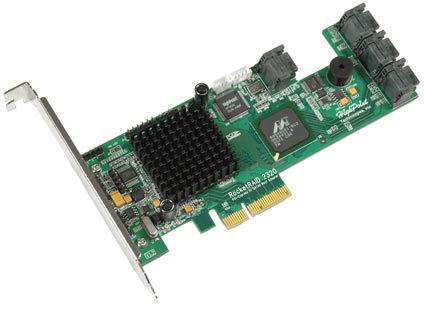

If you are looking to switch from PCI-X to PCI Express to boost your throughput numbers, you will be disappointed. The differences in transfer rate between two identical storage setups running the PCI-X and PCI Express versions of HighPoint's current controller cards are negligible. Although we provide only our RAID 5 benchmark results here, running a RAID 0 with eight drives raises all the numbers without changing the fact that there is hardly any difference in data transfer speed.

The story looks different, though, if you examine some I/O performance benchmarks. The database, fileserver and workstation benchmark patterns do benefit from the PCIe solution, although the benefit is mostly visible with deeper queues. At the same time, the web server benchmark does not benefit at all.

The reason is the distribution of read and write accesses: the IOmeter web server pattern does not write anything at all, while the other patterns do. The performance difference can therefore be attributed to the fact that PCI Express offers dedicated upstream and downstream bandwidth, thanks to its two signaling pairs, one per direction.
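To make the read/write argument concrete, here is a minimal back-of-the-envelope sketch in Python. It is not part of our benchmark suite, and every number in it is an assumption for illustration: a 64-bit/133 MHz PCI-X bus at a nominal 1066 MB/s shared between both directions, a first-generation PCI Express x4 link at a nominal 1000 MB/s per direction, and read shares of 100%, 80%, 67% and 80% for the web server, fileserver, database and workstation patterns. The function names are hypothetical. The results are theoretical interface ceilings, not predictions of measured performance.

```python
# Rough ceiling model: shared half-duplex bus vs. full-duplex link.
# All figures are nominal peak rates assumed for illustration only.

PCIX_SHARED_MB_S = 1066.0        # assumed: 64-bit, 133 MHz PCI-X, both directions share it
PCIE_PER_DIRECTION_MB_S = 1000.0 # assumed: PCIe 1.x x4, 250 MB/s per lane per direction


def shared_bus_ceiling(bus_mb_s: float, read_fraction: float) -> float:
    """Reads and writes take turns on one bus, so the mix does not change the ceiling."""
    if not 0.0 <= read_fraction <= 1.0:
        raise ValueError("read_fraction must be between 0 and 1")
    return bus_mb_s


def full_duplex_ceiling(per_direction_mb_s: float, read_fraction: float) -> float:
    """Each direction has its own bandwidth; the busier direction saturates first."""
    if not 0.0 <= read_fraction <= 1.0:
        raise ValueError("read_fraction must be between 0 and 1")
    busiest_share = max(read_fraction, 1.0 - read_fraction)
    return per_direction_mb_s / busiest_share


# Assumed read shares for the IOmeter patterns discussed above.
patterns = {"web server": 1.00, "fileserver": 0.80, "database": 0.67, "workstation": 0.80}

for name, read_fraction in patterns.items():
    pcix = shared_bus_ceiling(PCIX_SHARED_MB_S, read_fraction)
    pcie = full_duplex_ceiling(PCIE_PER_DIRECTION_MB_S, read_fraction)
    print(f"{name:12s} PCI-X ceiling {pcix:7.1f} MB/s   PCIe ceiling {pcie:7.1f} MB/s")
```

Under these assumptions, a read-only pattern gains nothing from the second direction, while any pattern that mixes reads and writes has a higher combined ceiling on the full-duplex link. That matches the direction of the benchmark results, although the drives and the controller, not the interface, set the actual numbers.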

Conclusion

The performance difference between PCI-X and PCI Express in real life is relatively small, since both options offer ample bandwidth to satisfy the requirements of RAID controllers with up to eight drives.

However, the more add-in cards you plan to use, and the more concurrent read/write operations that are going on, the more beneficial PCI Express components will be. Thanks to independent upstream/downstream bandwidth, and the fact that each device is allocated bandwidth for its own exclusive use, the interface of the future should be PCI Express even in the server space. Still, it is going to take many months, if not years, until PCI Express hardware has reached a level of maturity and market penetration that allows it to finally overcome the well-established presence of the parallel PCI-X bus.
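The point about exclusive per-device bandwidth can be sketched the same way. The short Python example below is an illustration under stated assumptions, not a measurement: it assumes several equally busy cards on one PCI-X bus split a nominal 1066 MB/s evenly, while each PCI Express card has its own link at a nominal 1000 MB/s per direction. The function names and the 400 MB/s per-card demand are hypothetical, and the model ignores any limit in the chipset behind the links.

```python
# Per-card bandwidth when several cards are active at once.
# Figures are assumed nominal peaks; chipset limits are ignored.


def shared_bus_per_card(bus_mb_s: float, active_cards: int, demand_mb_s: float) -> float:
    """Cards on one bus split its bandwidth; assume an even split among active cards."""
    if active_cards < 1:
        raise ValueError("active_cards must be at least 1")
    return min(demand_mb_s, bus_mb_s / active_cards)


def dedicated_link_per_card(link_mb_s: float, demand_mb_s: float) -> float:
    """Each card owns its link, so other cards do not reduce its share."""
    return min(demand_mb_s, link_mb_s)


demand = 400.0  # hypothetical per-card demand in MB/s
for cards in (1, 2, 4):
    shared = shared_bus_per_card(1066.0, cards, demand)
    dedicated = dedicated_link_per_card(1000.0, demand)
    print(f"{cards} card(s): shared bus {shared:6.1f} MB/s each, dedicated link {dedicated:6.1f} MB/s each")
```

With a single card both interfaces cover the assumed demand; the gap only opens as more cards compete for the shared bus.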



Patrick Schmid was the editor-in-chief for Tom's Hardware from 2005 to 2006. He wrote numerous articles on a wide range of hardware topics, including storage, CPUs, and system builds.


herb2021: Buyers need to be very aware of limited compatibility with many of today's motherboards. Will not work in many machines. HighPoint tech support is one of the worst I have worked with. No indication of any technical ability in those I have worked with.