Adata Premier Pro SP920 SSD: From 128 To 1024 GB, Reviewed

Adata shifts away from SandForce in its Premier Pro SP920 SSD family. With promises of incredible performance and spiffy features like DevSlp, Adata's latest employs the Marvell controller we saw in Crucial's M550. But the two share quite a bit more...

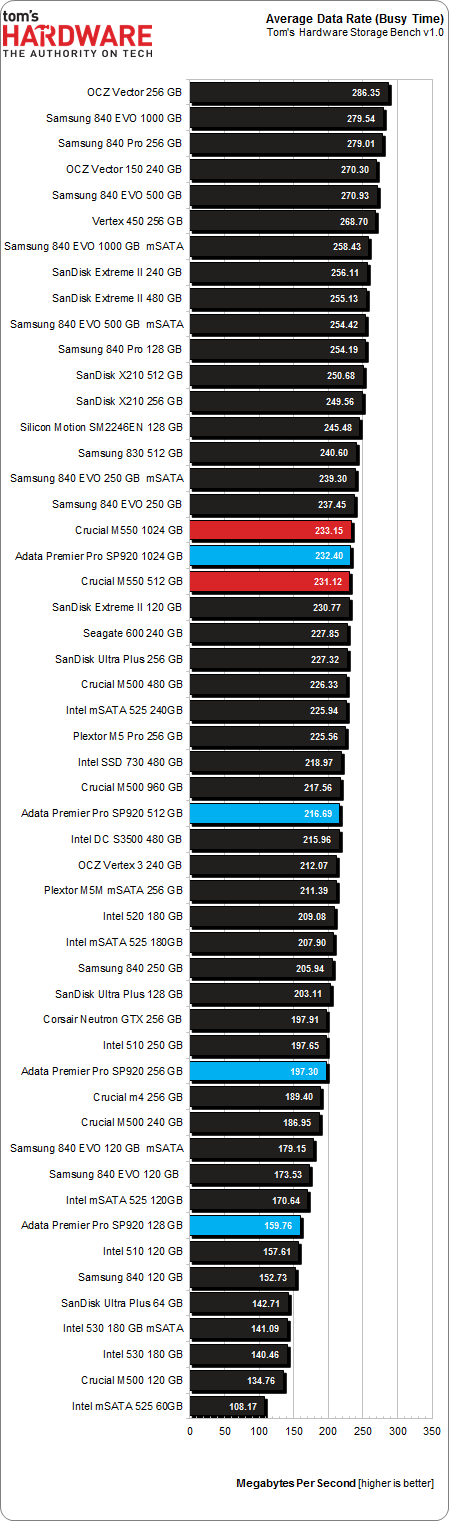

Results: Tom's Hardware Storage Bench v1.0

Storage Bench v1.0 (Background Info)

Our Storage Bench incorporates all of the I/O from a trace recorded over two weeks. Replaying this sequence to capture performance gives us a bunch of numbers that aren't really intuitive at first glance. Most idle time gets expunged, leaving only the time that each benchmarked drive is actually busy working on host commands. So, by dividing the amount of data exchanged during the trace by that busy time, we arrive at an average data rate (in MB/s) that we can use to compare drives.
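To make the arithmetic concrete, here is a minimal Python sketch of that calculation. The record layout (`IoRecord`), the sample values, and the choice of 1 MB = 10^6 bytes are all assumptions made for illustration, not the bench's actual format. It also assumes busy time is the simple sum of per-command service times, which ignores any overlap between queued commands.

```python
from dataclasses import dataclass


@dataclass
class IoRecord:
    """One replayed command (hypothetical layout, not the real trace format)."""
    bytes_transferred: int
    busy_seconds: float  # time the drive spent servicing this command


def average_data_rate_mb_s(records):
    """Total data moved divided by total busy time, in MB/s (1 MB = 10**6 bytes)."""
    total_bytes = sum(r.bytes_transferred for r in records)
    busy = sum(r.busy_seconds for r in records)
    if busy <= 0:
        raise ValueError("busy time must be positive")
    return total_bytes / 1e6 / busy


# Made-up sample: a 4 KB, a 1 MB, and a 128 KB transfer.
sample = [
    IoRecord(4096, 0.0001),
    IoRecord(1048576, 0.004),
    IoRecord(131072, 0.0009),
]
rate = average_data_rate_mb_s(sample)
print(f"{rate:.1f} MB/s")
```

Because idle time never enters the sum, two drives that move the same data are separated only by how long they spend busy.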

It's not quite a perfect system. The original trace captures the TRIM command in transit, but since the trace is played on a drive without a file system, TRIM wouldn't work even if it were sent during the trace replay (which, sadly, it isn't). Still, trace testing is a great way to capture periods of actual storage activity, a great companion to synthetic testing like Iometer.

Incompressible Data and Storage Bench v1.0

Also worth noting is the fact that our trace testing pushes incompressible data through the system's buffers to the drive getting benchmarked. So, when the trace replay plays back write activity, it's writing largely incompressible data. If we run our storage bench on a SandForce-based SSD, we can monitor the SMART attributes for a bit more insight.

| Mushkin Chronos Deluxe 120 GB SMART Attributes | RAW Value Increase |
|---|---|
| #242 Host Reads (in GB) | 84 GB |
| #241 Host Writes (in GB) | 142 GB |
| #233 Compressed NAND Writes (in GB) | 149 GB |

Host reads are greatly outstripped by host writes to be sure. That's all baked into the trace. But with SandForce's inline deduplication/compression, you'd expect that the amount of information written to flash would be less than the host writes (unless the data is mostly incompressible, of course). For every 1 GB the host asked to be written, Mushkin's drive is forced to write 1.05 GB.
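The 1.05 figure follows directly from the SMART values in the table. A short Python sketch of the division is below. The function and constant names are hypothetical, and only the two numbers come from the table.

```python
def nand_write_ratio(host_writes_gb, nand_writes_gb):
    """GB written to flash for every 1 GB the host asked to be written."""
    if host_writes_gb <= 0:
        raise ValueError("host writes must be positive")
    return nand_writes_gb / host_writes_gb


# RAW value increases reported for the Mushkin Chronos Deluxe 120 GB.
HOST_WRITES_GB = 142  # SMART attribute #241
NAND_WRITES_GB = 149  # SMART attribute #233

ratio = nand_write_ratio(HOST_WRITES_GB, NAND_WRITES_GB)
print(f"{ratio:.2f} GB written to NAND per 1 GB of host writes")
```

A ratio below 1.0 would indicate that the controller's compression was shrinking the workload. A result slightly above 1.0 is consistent with mostly incompressible data.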

If our trace replay were just writing easy-to-compress zeros out of the buffer, we'd see writes to NAND as a fraction of host writes. Using incompressible data instead puts the tested drives on a more equal footing, regardless of the controller's ability to compress data on the fly.

Average Data Rate


The Storage Bench trace generates more than 140 GB worth of writes during testing. This tends to penalize drives smaller than 180 GB and favor those with more than 256 GB of capacity. So, it's not wise to take a trace from a 240 GB SSD and wrap it around a drive of, say, only 40 GB. Using a small trace on a larger SSD is no problem, but going the other way results in different trace timing, affecting the results.

If you've followed us so far, you won't be surprised to see Crucial's M550s and the 1024 GB SP920 tripping over one another. Nor should the 128 and 256 GB models' outcomes require much explanation. But the 512 GB SP920? That doesn't end up where we might have guessed.

First, we tried running our trace a second time. Then we tried other systems, all of which spat back the same result. Really though, losing 15 MB/s doesn't mean much at the end of the day. But we do come away knowing that the 512 GB SP920 isn't exactly the same as the 1024 GB model. Nor is it identical to the M550s. Something is slightly different on what should be an identical drive. Perhaps a look at mean service times on the next page will shed some light...


cryan (quoting comment 13011395): "I prefer Sandisk, if you don't mind."

The X210 is pretty awesome, but newer Marvell implementations are built with Haswell-style power features in mind. If you're looking for a drive to use in mobile applications, mind the heat and power consumption stats.

Regards,

Christopher Ryan

rajangel: Awhile back I purchased a few different SSD's to test out (OCZ, Crucial, Patriot, Adata). The Adata is the only one still running and was always the quickest. I don't know how this one is built, but the last Adata was built tough. The OCZ was so flimsy it felt like paper. The Crucial and the Patriot were slightly better in build quality. Now that I'm in the market for a new drive I may consider this.

cryan (quoting comment 13012280): "Awhile back I purchased a few different SSD's to test out (OCZ, Crucial, Patriot, Adata). The Adata is the only one still running and was always the quickest. I don't know how this one is built, but the last Adata was built tough. The OCZ was so flimsy it felt like paper. The Crucial and the Patriot were slightly better in build quality. Now that I'm in the market for a new drive I may consider this."

I have to say, the plastic or metal chassis a drive comes in doesn't mean much. In the lab, I like a nice heavy metal SSD casing, but in a laptop? You probably want a flimsy plastic chassis. It's not conductive and doesn't add much weight.

Regards,

Christopher Ryan

rajangel: It's a matter of opinion. I like things that are built well, and have a quality appearance. I think build quality does affect performance (read reliability). Especially when connectors/etc are cheap in construction. However, just my opinion.

cryan (quoting comment 13012326): "It's a matter of opinion. I like things that are built well, and have a quality appearance. I think build quality does affect performance (read reliability). Especially when connectors/etc are cheap in construction. However, just my opinion."

I agree that a substantial chassis tends to reinforce the perception of a drive's build quality, but much of the time it's aesthetic. The component choice on the PCB speaks more to quality. I've seen some downright terrible drives in the fanciest of cases.

Regards,

Christopher Ryan


rajangel: I think there should be a restriction that prevents the article author from replying, unless there is a substantial mistake that was noted. I feel like tomshardware authors troll their own threads. This has become a problem lately. I'm at the point where I feel my business and time would be better spent on a real tech website. Tomshardware is like the Yahoo of tech sites lately.

iltamies: Typo on last page: "Adata gets a solid product able to soften the wait, and Micron (Crucial's parent company) gets to more more volume." should read "move more volume."

Wisecracker: Impressive ... power consumption is a bit high though, compared to the Samsung 120GB Evo (my current $80 fav)

Are 'microseconds' considered 'milliseconds' ??