Upgrade Advice: Does Your Fast SSD Really Need SATA 6Gb/s?

Storage Bench v1.0, In More Detail

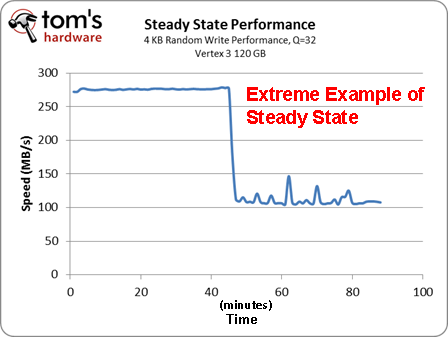

SSD manufacturers prefer that we benchmark drives the way they behave fresh out of the box because solid-state drives slow down once you start using them. If you give an SSD enough time, though, it will reach a steady-state performance level. At that point, its benchmark results reflect more consistent long-term use. In general, reads are a little faster, writes are slower, and erase cycles happen as slowly as you'll ever see from the drive.

We want to move away from benchmarking SSDs fresh out of the box whenever possible because you only really get that performance for a limited time. After that, you end up with steady-state performance until you perform a secure erase and start all over again. Now, we don't know about you, but we don't reformat our production workstations every week. So, while performance right out of the box is an interesting metric, it's not nearly as relevant in the grand scheme of things. Steady-state performance is what ultimately matters.

While this is a new move for us, IT professionals have long used this approach to evaluate SSDs. That's why the Storage Networking Industry Association (SNIA), a consortium of producers and consumers of storage products, recommends benchmarking steady-state performance. It's really the only way to examine the true performance of an SSD in a way that represents what you'll actually see over time.
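
To give a rough idea of what "steady state" can mean in practice, here is a minimal sketch of a steady-state check, loosely modeled on the kind of criterion SNIA's performance test specification describes: over a window of recent test rounds, throughput must neither swing much nor trend up or down. The function name, the five-round window, and the 20% and 10% thresholds are assumptions chosen for illustration, not values taken from this article or from Intel's tool.

```python
from typing import Sequence


def is_steady_state(
    samples: Sequence[float],
    window: int = 5,          # assumed number of recent rounds to inspect
    max_range: float = 0.20,  # assumed limit on (max - min) / mean
    max_slope: float = 0.10,  # assumed limit on trend-line drift / mean
) -> bool:
    """Return True if the last `window` throughput samples look stable."""
    if window < 2:
        raise ValueError("window must be at least 2")
    if len(samples) < window:
        return False  # not enough rounds measured yet

    y = list(samples[-window:])
    mean = sum(y) / window
    if mean <= 0:
        return False

    # Check 1: the spread between best and worst round stays small.
    if max(y) - min(y) > max_range * mean:
        return False

    # Check 2: the least-squares trend line is nearly flat.
    x_mean = (window - 1) / 2
    sxx = sum((i - x_mean) ** 2 for i in range(window))
    sxy = sum((i - x_mean) * (v - mean) for i, v in enumerate(y))
    slope = sxy / sxx
    drift = abs(slope) * (window - 1)  # change along the fitted line
    return drift <= max_slope * mean


if __name__ == "__main__":
    # Hypothetical write throughput (MB/s) per test round, made up for the demo.
    rounds = [500, 400, 300, 250, 240, 238, 241, 239, 240]
    print(is_steady_state(rounds))
```

A fresh drive would fail this check while its throughput is still falling round after round, and pass once the numbers level off, which is the point at which measurements start to reflect long-term use.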

There are multiple ways to get to an SSD's steady state, but we're going to use a proprietary storage benchmark from Intel. This is a trace-based benchmark, which means that we're using an I/O recording to measure relative performance. Our trace, which we're dubbing Storage Bench v1.0, comes from a two-week recording of my own personal machine, and it captures the level of I/O that you would see during the first two weeks of setting up a computer.

Installation includes:

- Games like Call of Duty: Modern Warfare 2, Crysis 2, and Civilization V

- Microsoft Office 2010 Professional Plus

- Firefox

- VMware

- Adobe Photoshop CS5

- Various Canon and HP Printer Utilities

- LCD Calibration Tools: ColorEyes, i1Match

- General Utility Software: WinZip, Adobe Acrobat Reader, WinRAR, Skype

- Development Tools: Android SDK, iOS SDK, and Bloodshed

- Multimedia Software: iTunes, VLC

The I/O workload is somewhat moderate. I read the news, browse the Web for information, read several white papers, occasionally compile code, run gaming benchmarks, and calibrate monitors. On a daily basis, I edit photos, upload them to our corporate server, write articles in Word, and perform research across multiple Firefox windows.

The following are stats on the two-week trace of my personal workstation:

| Statistics | Storage Bench v1.0 |
|---|---|
| Read Operations | 7,408,938 |
| Write Operations | 3,061,162 |
| Data Read | 84.27 GB |
| Data Written | 142.19 GB |
| Max Queue Depth | 452 |

According to the stats, I'm writing more data than I'm reading over the course of two weeks. However, this needs to be put into context. Remember that the trace includes the I/O activity of setting up the computer. A lot of this information is considered one-touch, since it isn't accessed repeatedly. If we exclude the first few hours of my trace, the amount of data written drops by over 50%. So on a day-to-day basis, my usage pattern evens out to a fairly balanced mix of reads and writes (~8-10 GB/day). That seems pretty typical for the average desktop user, though we'd expect the mix to tilt toward reads for folks who stream media more heavily and more often.
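
For anyone who wants to check the arithmetic, the short sketch below derives a few figures from the table: average transfer size per operation, the share of traffic that is writes, and the average daily volume across the full 14 days. It assumes decimal gigabytes (10^9 bytes), which the trace statistics don't specify, and the names `STATS` and `summarize` are ours. Under that assumption, reads come out on the order of 11 KB per operation and writes around 46 KB, with roughly 16 GB/day overall. That daily figure includes the setup-heavy first hours, which is why it sits well above the day-to-day estimate.

```python
GB = 10**9  # assumption: decimal gigabytes; the source table does not say

# Figures copied from the Storage Bench v1.0 table.
STATS = {
    "read_ops": 7_408_938,
    "write_ops": 3_061_162,
    "read_gb": 84.27,
    "written_gb": 142.19,
}
TRACE_DAYS = 14  # two-week recording


def summarize(stats: dict, days: int = TRACE_DAYS, gb: int = GB) -> dict:
    """Derive per-operation and per-day averages from the trace totals."""
    read_bytes = stats["read_gb"] * gb
    written_bytes = stats["written_gb"] * gb
    total_gb = stats["read_gb"] + stats["written_gb"]
    return {
        # Average transfer size per operation, in KB (1 KB = 1000 bytes).
        "avg_read_kb": read_bytes / stats["read_ops"] / 1000,
        "avg_write_kb": written_bytes / stats["write_ops"] / 1000,
        # Fraction of all bytes moved that were writes.
        "write_share": stats["written_gb"] / total_gb,
        # Combined read + write volume per day over the whole trace.
        "gb_per_day": total_gb / days,
    }


if __name__ == "__main__":
    for name, value in summarize(STATS).items():
        print(f"{name}: {value:.2f}")
```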

On a separate note, we specifically avoided creating a really big trace by installing multiple things over the course of a few hours, because that really doesn't capture real-world use. As Intel points out, traces of this nature are largely contrived because they don't take into account idle garbage collection, which has a tangible effect on performance (more on that later).
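
To make the idle-time point concrete, here is a minimal sketch of what replaying a trace can look like. It is not Intel's tool, whose trace format is proprietary. The record layout (`TraceRecord`) and the `replay` function are hypothetical, and a real benchmark would bypass the operating system's cache and issue requests at the recorded queue depth, both of which this sketch ignores. With `keep_idle=True` the replay sleeps through the gaps between recorded requests, giving a drive the same breathing room it had during the original recording. With `keep_idle=False` the requests are fired back to back, which is the compressed style of trace we wanted to avoid.

```python
import os
import tempfile
import time
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class TraceRecord:
    """One recorded I/O request (hypothetical format for illustration)."""
    timestamp: float  # seconds since the start of the recording
    op: str           # "R" for read, "W" for write
    offset: int       # byte offset into the target file
    length: int       # number of bytes transferred


def replay(
    records: Iterable[TraceRecord],
    path: str,
    keep_idle: bool = True,
    sleep: Callable[[float], None] = time.sleep,
) -> float:
    """Replay records against an existing file; return seconds spent in I/O."""
    busy = 0.0
    previous = None
    with open(path, "r+b") as target:
        for rec in records:
            # Optionally wait out the recorded gap since the previous request.
            if keep_idle and previous is not None:
                gap = rec.timestamp - previous
                if gap > 0:
                    sleep(gap)
            previous = rec.timestamp

            start = time.perf_counter()
            target.seek(rec.offset)
            if rec.op == "W":
                target.write(b"\0" * rec.length)
                target.flush()
                os.fsync(target.fileno())  # push the write down to the device
            elif rec.op == "R":
                target.read(rec.length)
            else:
                raise ValueError(f"unknown op: {rec.op!r}")
            busy += time.perf_counter() - start
    return busy


if __name__ == "__main__":
    # Tiny made-up trace with short gaps so the demo finishes quickly.
    demo = [
        TraceRecord(0.00, "W", 0, 4096),
        TraceRecord(0.01, "R", 0, 4096),
        TraceRecord(0.03, "W", 4096, 4096),
    ]
    with tempfile.TemporaryDirectory() as folder:
        scratch = os.path.join(folder, "scratch.bin")
        with open(scratch, "wb") as handle:
            handle.write(b"\0" * 8192)
        print(f"busy time: {replay(demo, scratch):.6f} s")
```

Only the time spent inside the I/O calls is counted, so the two modes can be compared directly: any difference between them comes from what the drive does with the idle time, not from the waiting itself.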

compton: Buying the best drive rather than the perceived fastest is good advice. I have fast drives and slow drives, but I prize the reliable ones. The good news is that there are drives which are both fast and reliable, so don't buy a drive just because of its Vantage score or simply because of the speed with which it handles 0-fill data.

compton: Which FW is the 830 using? The Test Setup and Benchmarks page lists it as CXM0. There are currently 3 FWs, CXM0[1,2,3]B1Q. The page simply lists CXM0.

phamhlam: Crucial m4, Samsung 830, and Intel 320 are all good drives. 128GB drives go for $180. They are the best value.

I find it interesting that SATA 3 doesn't make a difference in file copy. Most SATA 3 drives cost the same as a SATA 2 so no need to save a few dollars.

SteelCity1981: So basically what this is saying is even though SATA 3 looks impressive on paper, when it comes to actual real world results it's really not any faster than SATA 2 in performing everyday real world tasks.

dark_knight33: I think I wrote you an email asking for this article when I was looking to buy my SSD a few weeks/months ago. Even though your article came after I purchased mine, thanks for addressing it. I'm rocking a Vertex III 240GB on my SATA II X58 MB and I don't regret it one bit.

a4mula: I can say this. I'm running 2x OCZ Solid first gen SSDs off SATA 3Gb/s ICH10R. When new they benched at about 300/100 sequential read/write. Compared to current generation drives this is pretty slow. When researching my current build I asked a friend that just put together a rig with a 64GB M4 on Intel 6Gb/s if I could give it a spin. While his machine boots faster w/o a doubt, I attribute most of this to the RAID verification I face when I boot. Inside Win7 I couldn't tell a difference at all. While I'm sure his system is faster, it just wasn't obvious or noticeable in my opinion.

sincreator: What about quality? Is there any way to stress them till they start to fail? It just seems that if there isn't much difference in the drives in real world applications, then the next logical thing a buyer would want to know would be how much average data particular drives can read/write before a failure. Like actual stress testing in a controlled environment. Come on Tom's, don't you want to destroy a few perfectly good SSDs? lol. These are things I would like to know more than anything else so I could make a very informed decision before a purchase.

I asked before but no one answered. Anyway here goes... If SSDs are supposed to be more reliable than spinning drives, why are most warranties for 3 years instead of the usual 5 years on high end conventional spinning drives? It seems like the companies are not too confident in their products to me, and that's why I ask this question and the one that preceded it. It would be nice to get some honest answers...

compton, replying to sincreator:

Well, the warranties are mostly 3 years, but some drives like Intel's 320s and Plextor's M3S drives do have 5 years of coverage.

As for stress testing... well... some have taken this matter into their own hands to answer that very question. So far, it's far more than anyone could imagine. And for complex reasons, a drive only writing 10GB might not wear out its NAND in over a century. A drive's endurance is typically way underestimated. No one is going to wear out any 3xnm or 2xnm NAND in 5 years, except in the most extreme cases. Most drives die from firmware problems, or physical damage to the PCB or components, or some other unknown phenomenon. Only the factory could do a proper autopsy, and since the FW, FTL, controller, etc. are usually trade secrets or covered under NDA, no one in the know is going to volunteer.

There is an SSD endurance thread on the XtremeSystems forum:

http://www.xtremesystems.org/forums/showthread.php?271063-SSD-Write-Endurance-25nm-Vs-34nm/page1

heezdeadjim: You probably aren't going to see much of a difference in speed while on the desktop from one SSD to another. It's when loading programs and game levels that you might see a real difference.

I know when I first got my 1st gen OCZ Vertex nearly when it first came out, I was always the first person on the map for Counter Strike. While other players were still loading the level, I would rush in from the side and lob a grenade and take a few people out because they didn't think anyone could get there so fast (now with more people with SSD's, it's not quite so funny anymore).

I do appreciate being able to open PS CS5 in less than 2 seconds (for quick photo re-edits) and opening Premiere a lot faster too. Transferring large RAW photo folders (think 50+GBs total) to and from backup HDD's, I could use the extra MB's from these new 6Gb/s versions.

cmcghee358: I've read this article entirely too many times. Except this time it looks much better than the version I saw. Good job Mr. Angelini!