Gigabit Ethernet: Dude, Where's My Bandwidth?

First Test: How Fast Is Gigabit Supposed To Be, Anyway?

How fast is a gigabit? If you hear the prefix "giga" and assume 1,000 megabytes, you might also figure that a gigabit network should deliver 1,000 megabytes per second. If this sounds like a reasonable assumption to you, you’re not alone. But unfortunately, you’re going to be fairly disappointed.

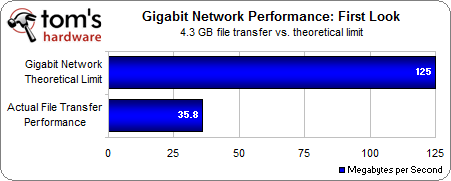

So what is a gigabit? It is 1,000 megabits, not 1,000 megabytes. There are eight bits in a single byte, so let’s do the math: 1,000,000,000 bits divided by 8 bits per byte = 125,000,000 bytes. There are about a million bytes in a megabyte, so a gigabit network should be capable of a theoretical maximum transfer rate of about 125 MB/s.

While 125 MB/s might not sound as impressive as the word gigabit, think about it: a network running at this speed should theoretically be able to transfer a gigabyte of data in a mere eight seconds. A 10 GB archive could be transferred in only a minute and 20 seconds. This speed is incredible, and if you need a reference point, just recall how long it took to move a gigabyte of data back before USB keys were as fast as they are today.
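The arithmetic above can be sketched in a few lines of Python. This is only an illustration: the function names are made up for this example, and it assumes decimal units (1 MB = 1,000,000 bytes, 1 GB = 1,000 MB) and ignores all protocol overhead, so the results are ceilings rather than predictions.

```python
BITS_PER_BYTE = 8

def link_rate_mb_per_s(bits_per_second: float) -> float:
    """Convert a raw link rate in bits/s to decimal megabytes/s."""
    return bits_per_second / BITS_PER_BYTE / 1_000_000

def transfer_seconds(size_gb: float, rate_mb_per_s: float) -> float:
    """Ideal time in seconds to move size_gb decimal gigabytes at rate_mb_per_s."""
    return size_gb * 1000 / rate_mb_per_s

gigabit = link_rate_mb_per_s(1_000_000_000)
print(gigabit)                        # 125.0
print(transfer_seconds(1, gigabit))   # 8.0
print(transfer_seconds(10, gigabit))  # 80.0
```

The 80 seconds for the 10 GB archive is the "minute and 20 seconds" quoted above.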

Armed with this expectation, I’ll move a file over my gigabit network and check the speed to see how close it comes to 125 MB/s. This is not a network of wonder machines, just a real-world home network with some older but decent technology.

Copying a 4.3 GB file from one of these PCs to another five different times resulted in a 35.8 MB/s average. This is only about 29% of a gigabit network’s theoretical ceiling of 125 MB/s.
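To put the measured number in context, here is a rough Python sketch comparing it with the theoretical ceiling. The helper name is hypothetical, and the calculation assumes the 4.3 GB figure is in decimal gigabytes and that the 35.8 MB/s average applies to the whole copy.

```python
THEORETICAL_MB_PER_S = 125.0  # gigabit ceiling from the math above

def compare_to_ceiling(file_gb: float, measured_mb_per_s: float):
    """Return (fraction of ceiling, measured seconds, ideal seconds) for one copy."""
    size_mb = file_gb * 1000
    fraction = measured_mb_per_s / THEORETICAL_MB_PER_S
    return fraction, size_mb / measured_mb_per_s, size_mb / THEORETICAL_MB_PER_S

fraction, measured_s, ideal_s = compare_to_ceiling(4.3, 35.8)
print(f"{fraction:.1%} of the ceiling")        # 28.6% of the ceiling
print(f"{measured_s:.1f} s measured per copy")  # 120.1 s measured per copy
print(f"{ideal_s:.1f} s at the ceiling")        # 34.4 s at the ceiling
```

In other words, each copy took roughly two minutes where the ceiling would allow about 34 seconds.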

What’s the problem?


Don Woligroski was a former senior hardware editor for Tom's Hardware. He has covered a wide range of PC hardware topics, including CPUs, GPUs, system building, and emerging technologies.


drtebi Interesting article, thank you. I wonder how a hardware-based RAID 5 would perform on a gigabit network compared to a RAID 1?

Hello

Thanks for the article. But I would like to ask how the transfer speed is measured. If it is just (size of the file)/(time needed for the transfer), you are probably consuming all the bandwidth, because you have to count in all the control parts of the data packet (Ethernet header, IP header, TCP header...).

Blake

jankee The article does not make any sense and was written by a rookie. Remember, you will not see a big difference when transferring a small amount of data, because of the transfer negotiation across the network. Try transferring an 8 GB file or folder and you will see the difference. It is the same concept as racing a Honda Civic against a Ferrari over a distance of just 20 feet.

Hope this clears it up.

spectrewind Don Woligroski has some incorrect information, which invalidates this whole article. He should be writing about hard drives and mainboard bus information transfers. This article is entirely misleading.

For example: "Cat 5e cables are only certified for 100 ft. lengths"

This is incorrect. 100 meters (or 328 feet) maximum official segment length.

Did I miss the section on MTU and data frame sizes? Segment? Jumbo frames? 1500 vs. 9000 for consumer devices? Fragmentation? TIA/EIA? These words and terms should have occurred in this article, but were omitted.

Worthless writing. THG *used* to be better than this.

IronRyan21 "There is a common misconception out there that gigabit networks require Category 5e class cable, but actually, even the older Cat 5 cable is gigabit-capable."

Really? I thought Cat 5 wasn't gigabit-capable? In fact, I thought Cat 6 was the only way to go gigabit.

cg0def Why didn't you test SSD performance? It's quite a hot topic and I'm sure a lot of people would like to know if it will in fact improve network performance. I can venture a guess but it'll be entirely theoretical.

flinxsl Do you have any engineers on your staff who understand how this stuff works?? When you transfer some bits of data over a network, you don't just shoot the bits directly; they are sent in something called packets. Each packet contains control bits as overhead, which count toward the 125 MB/s limit but don't count as data bits.

11% loss due to negotiation and overhead on a network link is about ballpark for a home test.

jankee After carefully reading the article, I believe this is not a tech review, just a concern from a newbie who does not understand much about all the external factors in data transfer. His simple thinking is that 1,000 Mb/s is ten times 100 Mb/s, so he expects it to be ten times faster.

Anyway, many different factors affect the transfer speed. The most accurate test would use a RAM drive and powerful machines to eliminate the machine bottleneck factor.