OCZ Octane 512 GB SSD Review: Meet Indilinx's Everest Controller

More Background On Our Benchmarks

4 KB Random

Our Storage Bench v1.0 mixes random and sequential operations. However, it's still important to isolate 4 KB random performance because that's such a large portion of what you're doing on a day-to-day basis. Right after Storage Bench v1.0, we subject the drives to Iometer to test random 4 KB performance. But why specifically 4 KB?

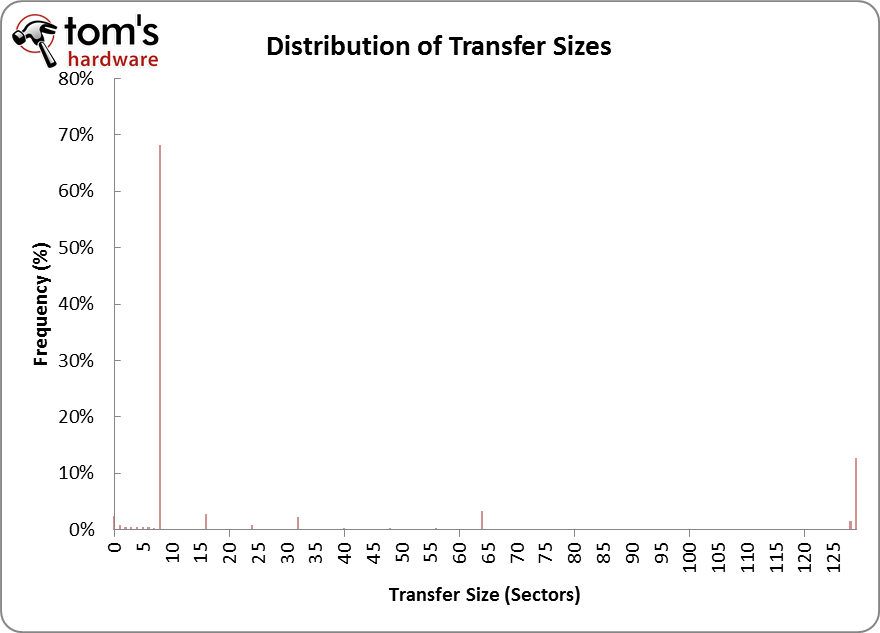

When you open Firefox, browse multiple Web pages, and write a few documents, you're mostly performing small random read and write operations. The chart above comes from analyzing Storage Bench v1.0, but it epitomizes what you'll see when you analyze any trace from a desktop computer. Notice that close to 70% of all of our accesses are eight sectors in size (512 bytes per sector, thus 4 KB).

We're restricting Iometer to test an LBA space of 16 GB because a fresh install of a 64-bit version of Windows 7 takes up nearly that amount of space. In a way, this examines the performance that you would see from accessing various scattered file dependencies, caches, and temporary files.

If you're a typical PC user, it's important to examine performance at a queue depth of one, because this is where the majority of your accesses are going to fall on a machine that isn't being hammered by I/O commands.

Before we get to the numbers, note that we're presenting random performance in MB/s instead of IOPS. There is a direct relationship between these two units: average transfer size × IOPS = throughput in MB/s. Most workloads tend to be a mixture of different transfer sizes, which is why the networking ninjas in IT prefer IOPS, a figure that reflects the number of transactions that occur per second. Since we're only testing with a single transfer size, it's more relevant to look at MB/s (it's also more intuitive for "the rest of us"). If you want to convert back to IOPS, just take the MB/s figure and divide by 0.004096 MB (remember your units), which is the 4 KB transfer size expressed in megabytes.
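As a rough sketch of that conversion, the snippet below turns a throughput figure into IOPS and back. The function names and the 20 MB/s input are hypothetical, chosen only for illustration; they are not results from this review. It assumes decimal megabytes (1 MB = 1,000,000 bytes), which is what the 0.004096 MB figure implies.

```python
# Assumption: decimal megabytes, so that 4096 bytes == 0.004096 MB.
BYTES_PER_MB = 1_000_000
SECTOR_BYTES = 512  # bytes per sector, as stated in the article


def transfer_size_bytes(sectors: int) -> int:
    """Size of one transfer, given its length in 512-byte sectors."""
    return sectors * SECTOR_BYTES


def mbps_to_iops(mb_per_s: float, transfer_bytes: int) -> float:
    """IOPS = throughput / average transfer size."""
    return mb_per_s * BYTES_PER_MB / transfer_bytes


def iops_to_mbps(iops: float, transfer_bytes: int) -> float:
    """MB/s = average transfer size * IOPS."""
    return iops * transfer_bytes / BYTES_PER_MB


four_kb = transfer_size_bytes(8)       # eight sectors -> 4096 bytes
example_mbps = 20.0                    # hypothetical figure, not a measured result
example_iops = mbps_to_iops(example_mbps, four_kb)
print(four_kb, example_iops)           # 4096 4882.8125
```

The conversion only holds this cleanly when every transfer is the same size, which is the case in a fixed 4 KB Iometer run but not in a mixed real-world trace.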

128 KB Sequential


SSD manufacturers often want to stress random performance because it's a clear case where they decimate conventional hard drives. Sequential performance is a little different, but still represents an important aspect of performance to examine.

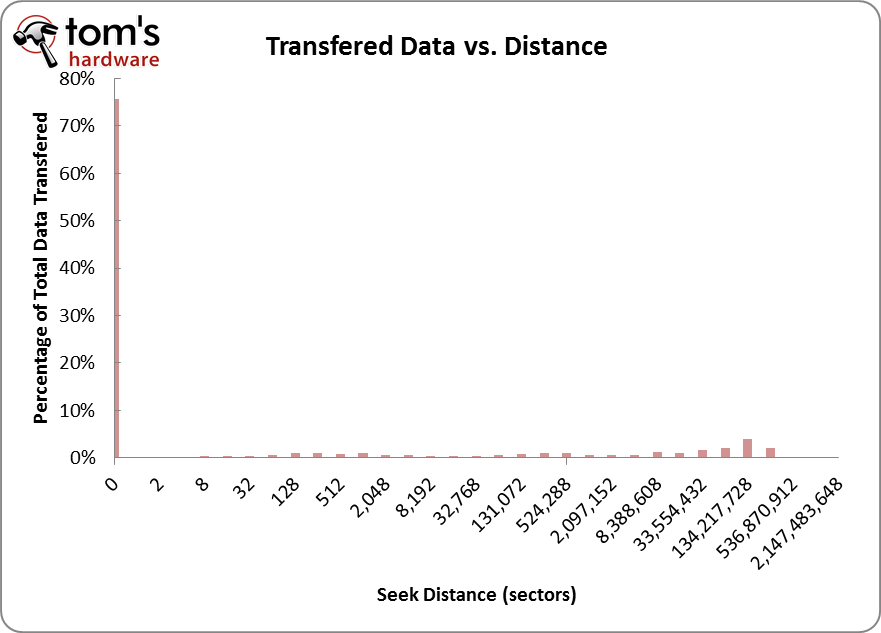

But how pervasive is sequential access for the average user? Take a look at the graph above; it shows the distribution of all the seek distances from one of our traces.

The first thing you'll notice is that there's a preponderance of activity zero sectors away, which means that our trace is made mostly of back-to-back requests, or sequential I/O. If the trace were 100% random, none of the accesses would be zero sectors away.
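To make the idea of seek distance concrete, here is a minimal sketch of how such a distribution could be computed. The trace format (a list of start LBA and length pairs, both in sectors) and the sample values are assumptions made for illustration; this is not the tooling or data behind the graph.

```python
from collections import Counter


def seek_distances(trace):
    """Distance in sectors from the end of each request to the start of the next.

    `trace` is a hypothetical list of (start_lba, length_in_sectors) tuples.
    A distance of 0 means the next request begins exactly where the
    previous one ended, i.e. the two requests are back-to-back.
    """
    distances = []
    for (prev_lba, prev_len), (next_lba, _next_len) in zip(trace, trace[1:]):
        distances.append(next_lba - (prev_lba + prev_len))
    return distances


def sequential_fraction(trace):
    """Share of requests that land zero sectors away from the previous one."""
    distances = seek_distances(trace)
    if not distances:
        return 0.0
    return sum(1 for d in distances if d == 0) / len(distances)


# Made-up trace: three back-to-back requests, then two random jumps.
sample_trace = [(0, 8), (8, 8), (16, 256), (272, 8), (5000, 8), (100, 8)]

histogram = Counter(seek_distances(sample_trace))
print(histogram[0], sequential_fraction(sample_trace))  # 3 0.6
```

In this toy trace, three of the five transitions have a seek distance of zero, so 60% of the accesses count as sequential.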

More and more of your data is becoming sequential in nature, especially if you're watching movies and listening to music. Consider that most webpages contain less than 1 MB worth of data and most emails less than 16 KB. Office productivity isn't particularly disk intensive, and that workload pales in comparison to multimedia, as a two-minute movie transfer can easily exceed 200 MB.

Of course, this doesn't even touch the subject of gaming. We've traced six games now and, except in the case of MMORPGs, we've found gameplay-related data to be mostly sequential. First-person shooters like Crysis 2 are particularly data heavy, as only 20 minutes of gameplay involves reading and writing over 1 GB of data.


ksampanna (quoting theuniquegamer: "I think in 2 to 3 years we can get an affordable and fast 1 TB SSD in the market"):

Fast yes, affordable no. My guess is at least 5 years for a 1 TB SSD to be under $100.

EDVINASM: Still comparing Crysis 2 to everything that moves? I had WD Blue in RAID 0 for quite a while and was relatively happy. Before Christmas, however, I replaced them with just a simple SATA 300 Intel 320 SSD 80 GB. Boy, what a difference! No more HDD scratchy sounds, no heat from them, no vibrations, no annoying ticks when idle, silent. Speed-wise, the PC boots up within 30 sec, and I am only running an Intel i3 2100 with no OC. To those who are holding onto an HDD I would say: unless capacity is the key, sell it off for an SSD. Especially now that HDD prices are skyrocketing, it is proving easier and easier to do the swap.

nebun (quoting ksampanna: "Fast yes, affordable no. My guess is at least 5 years for a 1 TB SSD to be under $100"):

it's so much fun to dream... don't expect prices to drop that much... that's what people said about CPUs a few years back, yet nothing has changed... another example is the mid and top end video cards... since manufacturing techniques have improved and have become more efficient, one would think that the products would be cheaper... that's not the case... it's called demand... people demand faster components and will pay a premium price for them, so why would manufacturers drop the prices?... they still have to make a profit

mayankleoboy1 (quoting theuniquegamer: "I think in 2 to 3 years we can get an affordable and fast 1 TB SSD in the market"):

yeah.

and in 2 to 3 years we can get a 20 core intel 9999 X edition for $50.

and gtx990X2 for just $100.

buzznut (quoting EDVINASM: "Still comparing Crysis 2 to everything that moves? I had WD Blue in RAID 0 for quite a while and was relatively happy. Before Christmas, however, I replaced them with just a simple SATA 300 Intel 320 SSD 80 GB. Boy, what a difference! No more HDD scratchy sounds, no heat from them, no vibrations, no annoying ticks when idle, silent. Speed-wise, the PC boots up within 30 sec, and I am only running an Intel i3 2100 with no OC. To those who are holding onto an HDD I would say: unless capacity is the key, sell it off for an SSD. Especially now that HDD prices are skyrocketing, it is proving easier and easier to do the swap."):

And I recommend folks hold onto their current hard drives and get a boot SSD. 80 GB may be enough for you, but a lot of us have bigger storage needs. It's gonna take about a year for the hard drive market to recover, so hang on to those mechanical drives.

drwho1 (quoting theuniquegamer: "I think in 2 to 3 years we can get an affordable and fast 1 TB SSD in the market" and mayankleoboy1: "yeah. and in 2 to 3 years we can get a 20 core intel 9999 X edition for $50. and gtx990X2 for just $100."):

I do believe that 3-5 years from now we will see a huge increase in performance accompanied by a huge drop in price (compared with today's prices and performance).

Then we will probably have SATA 4 on the market and the "right price/GB/TB" will be on SATA 3 SSDs.

With that in mind, I have always built my systems a generation "behind," which is always more than "a few" generations beyond whatever I had built last. I have always doubled or tripled my previous build's performance for around the same money invested in it (plus/minus a few new "tricks" that probably were not in the previous build and that could raise my budget by 200 dollars or so).

Is it possible to get a 1 TB SSD for around $100-$200 in 3-5 years?

I believe it will be.

Just don't expect it to also be the faster SATA 4; you will have to "compromise" by being a little "behind" in speed.

tetracycloide (quoting nebun: "that's what people said about CPUs a few years back, yet nothing has changed"):

AMD Athlon 64 4000+ San Diego 2.4GHz circa 2005 - $475.99, inflation adjusted to 2011 ~$548.22

Intel Core 2 Duo E6850 Conroe 3.0GHz circa 2007 - $279.99, inflation adjusted to 2011 ~$304.10

Intel Core i5-2500K Sandy Bridge 3.3GHz circa 2011 - $219.99

I'm sorry, you were saying?