Xeon Phi: Intel's Larrabee-Derived Card In TACC's Supercomputer

After eight years of development, Intel is finally ready to announce its Xeon Phi Coprocessor, which is derived from the company's work with Larrabee. Although the architecture came up short as a 3D graphics card, it shows more promise in the HPC space.

TACC's Stampede Supercomputer: Xeon Phi In The Field

Intel wanted to demonstrate that Xeon Phi isn't just a face-saving announcement to explain away the last eight years of research, which seemed to hit a wall with Larrabee. Rather than simply claiming that customers had cards and were working with them, the company invited us to an event at the Texas Advanced Computing Center, which is currently building a supercomputer based on Xeon Phi. When we visited, there were already more than 2000 cards installed.

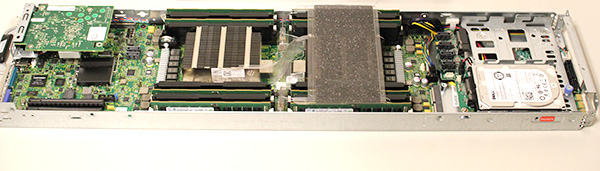

During the installation process, every card is placed into a PCIe riser chassis, and then dropped into a Dell server. Each PowerEdge C8220X "Zeus" node contains two Xeon E5-2680 processors and 32 GB of system memory. Here is what the server looks like:

That mezzanine card in the back is for InfiniBand. Dual LGA 2011 interfaces are covered by passive heat sinks and flanked by four DIMMs each. Equipped with 32 GB per node, that works out to eight 4 GB ECC-capable DIMMs per server. On the right, you see room for 2.5" storage. Stampede uses conventional hard drives. The supercomputer is not set up to be a Hadoop cluster; it's focused on compute performance.

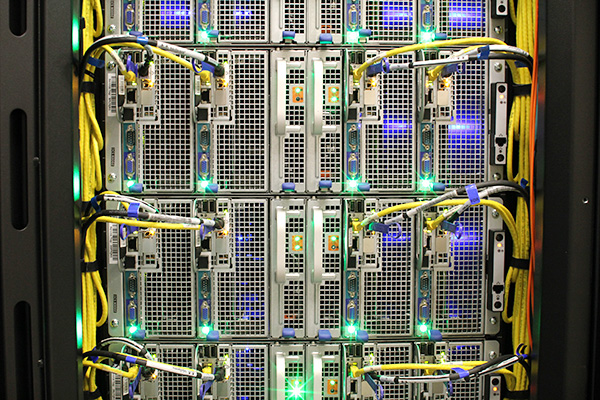

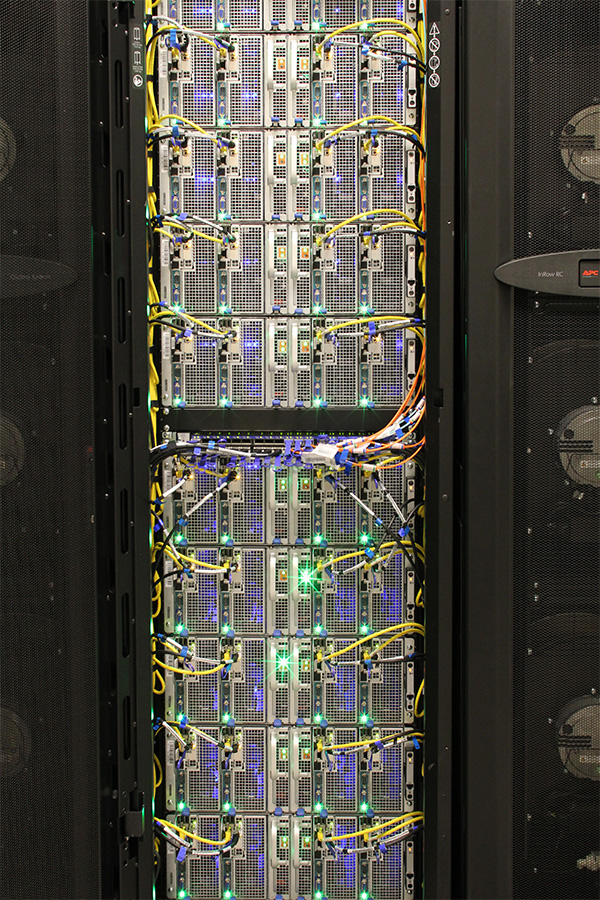

We were told that the blue lights you see inside some of the nodes are Xeon Phi cards already installed. Several of these Dell servers were then placed in racks and flanked by APC cooling and the necessary power conduits.

Xeon Phi coprocessors account for about seven of the supercomputer's 10 petaFLOPS of capacity.
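To put that split in perspective, here is a quick back-of-the-envelope sketch in Python. It uses only the two rounded figures quoted above (about 7 and 10 petaFLOPS); the variable names are ours, and the "remainder" is simply whatever is left over, not a measured figure for any specific component.

```python
# Rounded figures quoted in the article (approximate, not measured here).
phi_pflops = 7.0     # capacity attributed to the Xeon Phi cards
total_pflops = 10.0  # total capacity of the supercomputer

# Fraction of total capacity that comes from the Xeon Phi cards.
phi_share = phi_pflops / total_pflops

# Capacity left for everything else in the system (hypothetical label).
remainder_pflops = total_pflops - phi_pflops

print(f"Xeon Phi share: {phi_share:.0%}")
print(f"Remainder: {remainder_pflops:.1f} petaFLOPS")
```

In other words, going by these rounded numbers, roughly seven-tenths of the machine's peak capacity sits on the add-in cards rather than in the host processors.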

But Stampede is not just composed of thousands of Xeon E5 CPUs and Xeon Phi coprocessors. It also features 128 Nvidia Tesla K20s for remote visualization, along with 16 servers sharing 1 TB of memory and two GPUs for large data analysis. Truly, there's a lot more that goes into a supercomputer than just concessions for raw compute potential.


mocchan Articles like these are what make me more and more interested in servers and supercomputers... Time to read up and learn more!

wannabepro Highly interesting.

Great article.

I do hope they get these into the hands of students like myself though.

ddpruitt Intriguing idea....

These x86 cores have the oomph to run something a little more complex than what a GPGPU can. But is it worth it, and what kind of effort does it require? I'd have to disagree with Intel's assertion that you can get used to it by programming for an "i3". Anyone with a relatively modern graphics card can learn to program OpenCL or CUDA on their own system. But learning how to program 60 cores (or more) efficiently from an 8-core (optimistically) doesn't seem reasonable. And how much is one of these cards going to run? You might get more by stringing a few GPUs together for the same cost.

I wonder if this is going to turn into the same type of niche product that Intel's old math coprocessors did.

CaedenV man, I love these articles! Just the sheer amounts of stuffs that go into them... measuring ram in hundreds of TBs... HDD space in PBs... it is hard to wrap one's brain around!

I wonder what AMD is going to do... on the CPU side they have the cheaper (much cheaper) compute power for servers, but it is not slowing Intel sales down any. Then on the compute side Intel is making a big name for themselves with their new (but pricy) cards, and nVidia already has a handle on the 'budget' compute cards, while AMD does not have a product out yet to compete with PHI or Tesla.

On the processor side AMD really needs to look out for nVidia and their ARM chip prowess, which if focused on could very well eat into AMD's server chip market for the 'affordable' end of this professional market... It just seems like all the cards are stacked against AMD... rough times.

And then there is IBM. The company that has so much data center IP that they could stay comfortably afloat without having to make a single product. But the fact is that they have their own compelling products for this market, and when they get a client that needs Intel or Nvidia parts, they do not hesitate to build it for them. In some ways it amazes me that they are still around because you never hear about them... but they really are still the 'big boy' of the server world.

A Bad Day esrevermeh

*Looks at the current selection of desktops, laptops and tablets, including custom built PCs*

*Looks at the major data server or massively complex physics tasks that need to be accomplished*

*Runs such tasks on baby computers, including ones with an i7 clocked to 6 GHz and quad SLI/CF, then watches them crash or lock up*

ENTIRE SELECTION IS BABIES!

tacoslave i wonder if they can mod this to run games...

A four-core game that mainly relies on one or two cores, running on a thousand-core server. What are you thinking?

ThatsMyNameDude Holy shit. Someone tell me if this will work. Maybe, if we pair this thing up with enough xeons and enough quadros and teslas, we can connect it with a gaming system and we could use the xeons to render low load games like cod mw3 and tf2 and feed it to the gaming system.

mayankleoboy1 Main advantage of LRB over Tesla and AMD FirePro S10000:

A simple recompile is all that's needed to use PHI. Tesla/AMD needs a complete code rewrite, which is very, very expensive.

I see LRB being highly successful.

PudgyChicken It'd be pretty neat to use a supercomputer like this to play a game like Metro 2033 at 4K, fully ray-traced.

I'm having nerdgasms just thinking about it.